Windows Azure and Cloud Computing Posts for 5/14/2010+

| Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)


Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated in May 2010 for the January 4, 2010 commercial release.

Azure Blob, Drive, Table and Queue Services

Steve Marx explains Serving Your Website From a Windows Azure Drive in this 5/14/2010 post:

For this week’s episode of Cloud Cover, Ryan and I showed how to mount a Windows Azure drive and serve your website from it using my Hosted Web Core Worker Role. I’ve released a new Visual Studio 2010 solution called HWCWorker_Drive_source.zip that mounts a snapshot of a Windows Azure Drive and points Hosted Web Core at it. You can download it over on the Hosted Web Core Worker Role project on Code Gallery.

To use the project, you need to store your web content in a VHD, upload it to the cloud, take a snapshot of the VHD, and then configure the project to use that snapshot. To make changes, just upload the new version of the VHD, take a new snapshot, and change the configuration setting in the project.

In the rest of this post, I’ll walk through how the various steps work.

Steve continues with “Creating a VHD on Windows 7 or Windows Server,” “Uploading and Snapshotting the VHD,” “Mounting and Using the VHD,” “Using the Development Fabric” and “Known Issues” topics, and concludes:

More Information

If you’re interested in learning more about Windows Azure drives, I recommend reading the following blog posts on the Windows Azure storage team blog:

- Windows Azure Drive Demo at MIX 2010

- Using Windows Azure Page Blobs and How to Efficiently Upload and Download Page Blobs

- Windows Azure Storage Abstractions and their Scalability Targets

You should also read the Windows Azure Drives whitepaper.

Get the Code

You can download the HWCWorker_drive_source.zip package over on the Hosted Web Core Worker Role project on Code Gallery.
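The upload step of Steve’s workflow is where the storage team’s page-blob post pays off: a fixed-size VHD is mostly empty space, so there’s no need to send pages that contain only zeros. The following Python sketch assumes the page-blob rules that writes are aligned to 512-byte pages and that a single write covers at most 4 MB, and finds the non-empty ranges of a local image. The nonzero_ranges function is a hypothetical helper; actually sending each range is left to whatever storage client you use.

```python
from typing import List, Tuple

PAGE_SIZE = 512                 # page blobs are written in 512-byte pages
MAX_WRITE = 4 * 1024 * 1024     # assumed maximum bytes per page-write request
ZERO_PAGE = bytes(PAGE_SIZE)


def nonzero_ranges(image: bytes, max_write: int = MAX_WRITE) -> List[Tuple[int, int]]:
    """Return (offset, length) ranges covering every 512-byte page that holds data.

    Adjacent non-empty pages are merged, but no range is longer than max_write.
    Zero-filled pages are skipped because they don't need to be uploaded.
    """
    if len(image) % PAGE_SIZE:
        raise ValueError("image size must be a multiple of 512 bytes")
    if max_write < PAGE_SIZE or max_write % PAGE_SIZE:
        raise ValueError("max_write must be a positive multiple of 512 bytes")

    ranges: List[Tuple[int, int]] = []
    start = None  # offset where the current run of non-empty pages began
    for offset in range(0, len(image), PAGE_SIZE):
        if image[offset:offset + PAGE_SIZE] == ZERO_PAGE:
            if start is not None:          # an empty page ends the current run
                ranges.append((start, offset - start))
                start = None
        elif start is None:                # first non-empty page of a new run
            start = offset
        elif offset + PAGE_SIZE - start > max_write:
            ranges.append((start, offset - start))  # run hit the size cap
            start = offset
    if start is not None:                  # image ended inside a run
        ranges.append((start, len(image) - start))
    return ranges
```

For a real multi-gigabyte VHD you’d read the file in chunks rather than loading it whole, but the range logic stays the same.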

<Return to section navigation list>

SQL Azure Database, Codename “Dallas” and OData

Shannon Lowder offers a SQL Server Incompatibilities List in this 5/14/2010 post:

While 99% of what you do in SQL is supported in SQL Azure, there is a small list of things you'll have to redesign, or at least reconsider before implementing your code on SQL Azure. The following is a list of items that are well documented, but I want to create a more complete list. If you run into problems in your SQL Azure installation, let me know about them. By learning these incompatibilities before you go into a new project, you should be able to save yourself some time. You'll be able to go right to the problem, and create corrections, or workarounds to get the job done.

Without further ado, the list:

SQL Server 2008 Features Not Supported by SQL Azure

- Change Data Capture

- Data Auditing

- Data Compression

- Extended Events

- External Key Management / Extensible Key Management

- FILESTREAM Data

- Integrated Full-Text Search

- Large User-Defined Aggregates (UDAs)

- Large User-Defined Types (UDTs)

- Performance Data Collection (Data Collector)

- Policy-Based Management

- Resource Governor

- Sparse Columns

- Spatial data with GEOGRAPHY and GEOMETRY data types

- SQL Server Replication

- Transparent Data Encryption

SQL Server 2005 Features Not Supported by SQL Azure

- Common Language Runtime (CLR) and CLR User-Defined Types

- Database Mirroring

- Service Broker

- Table Partitioning

- Typed XML and XML indexing are not supported. The XML data type is supported by SQL Azure.

Other SQL Server Features Not Supported by SQL Azure

- Backup and Restore

- Replication

- Extended Stored Procedures

- WITH (NOLOCK), and possibly other locking hints

I'm continuing to build out a new web service on top of SQL Azure. During that process I'll continue to add to this list. I've already shared some problems I've run into with simply using the Import and Export wizard. My current step has been building an SSIS package to read in XML data from a published datasource and write the results to my SQL Azure instance. So far, the issues I've found have more to do with the XML than with SQL Azure.

If you're in the middle of a project that includes SQL Azure, what problems have you found? Send them in, and we can discuss them and develop workarounds together!
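Shannon’s list translates fairly directly into a pre-migration check. This is a rough Python sketch that assumes your schema and procedures are available as .sql script text. The patterns cover only a few of the listed features, work line by line, and are heuristic (they also match text inside comments and string literals), so treat each hit as a prompt for review rather than proof of an incompatibility. The scan_script function and pattern table are hypothetical, not part of any Microsoft tool.

```python
import re
from typing import List, Tuple

# Heuristic patterns for a few of the unsupported features listed above.
UNSUPPORTED_PATTERNS = {
    "FILESTREAM data": re.compile(r"\bFILESTREAM\b", re.IGNORECASE),
    "Sparse columns": re.compile(r"\bSPARSE\b", re.IGNORECASE),
    "Spatial data types": re.compile(r"\b(GEOGRAPHY|GEOMETRY)\b", re.IGNORECASE),
    "CLR objects": re.compile(r"\bCREATE\s+ASSEMBLY\b|\bEXTERNAL\s+NAME\b", re.IGNORECASE),
    "Full-text search": re.compile(
        r"\bCREATE\s+FULLTEXT\b|\b(CONTAINS|FREETEXT)(TABLE)?\s*\(", re.IGNORECASE),
    "Backup and restore": re.compile(r"\b(BACKUP|RESTORE)\s+(DATABASE|LOG)\b", re.IGNORECASE),
    "Extended stored procedures": re.compile(r"\bxp_\w+", re.IGNORECASE),
    "NOLOCK hint": re.compile(r"\bNOLOCK\b", re.IGNORECASE),
}


def scan_script(sql: str) -> List[Tuple[int, str]]:
    """Return (line_number, feature) pairs for every line that matches a pattern."""
    findings = []
    for lineno, line in enumerate(sql.splitlines(), start=1):
        for feature, pattern in UNSUPPORTED_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, feature))
    return findings
```

Because matching is per line, a hint split across two lines (WITH on one, NOLOCK on the next) still gets caught by the NOLOCK pattern, but multi-line constructs in general may slip through.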

Joe Francica’s Microsoft SQL Azure: Spatial database management in the cloud post of 5/14/2010 reports:

Azure is Microsoft's cloud computing platform and the instance of SQL Server that runs on Azure, i.e. SQL Azure, is geospatial-ready. SQL Azure is SQL in the cloud with a few restrictions. Microsoft provides a full replication of SQL Server in the cloud and the hassle of being the database administrator is completely removed, according to Ed Katibah, SQL Server Spatial's program manager.

To briefly review, SQL Server Spatial was designed for a developer to leverage geospatial data. There is no embedded mapping application. It has only two datatypes: "Geography" data type that deals with geodetic data, and the "Geometry" data type that deals with planar data. SQL Spatial is completely OGC compliant and supports simple feature specifications.

New for the next release of SQL Server is SQL Server Reporting Services access to SQL Server Spatial functionality, such that query and review of spatial data is fully supported. The Reporting Tool consists of executable functions and is designed simply to provide basic reporting, so don't expect it to be a mapping tool as well. SQL Azure with spatial functionality will be released this June. A migration tool for moving SQL Server to SQL Azure is available on Microsoft's CodePlex, but see Ed's blog (SpatialEd) to get more details. Katibah notes that one key feature coming in SQL 11 is a self-tuning capability, so "stay tuned."
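Ed’s distinction between geodetic and planar data is easy to see numerically. The Python sketch below assumes a spherical Earth with a mean radius of 6,371 km (SQL Server’s geography type actually uses an ellipsoidal model, WGS 84 by default) and compares a great-circle (haversine) distance with a naive planar distance taken directly on longitude/latitude degrees. Both functions are illustrations, not SQL Server APIs.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; a spherical approximation of the Earth


def haversine_km(lon1: float, lat1: float, lon2: float, lat2: float) -> float:
    """Great-circle distance between two points given in degrees (geodetic view)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def planar_distance(x1: float, y1: float, x2: float, y2: float) -> float:
    """Euclidean distance treating coordinates as flat x/y values (planar view)."""
    return math.hypot(x2 - x1, y2 - y1)
```

One degree of longitude along the equator comes out at roughly 111 km, but at 60°N it’s roughly 55.6 km, while the planar calculation reports 1.0 (degree) in both places. That discrepancy is why latitude/longitude data belongs in the geography type rather than the geometry type.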

Stacey Higginbotham claims Microsoft Wants to Build Its Business With Data in this 5/13/2010 post about Codename “Dallas” to the GigaOM blog:

Everyone likes to talk about big data, but few know how to make use of it. Thanks to cloud computing and the efforts of several companies, however, the ability to access and make sense of huge chunks of information is here. The question is whether there’s a business in providing intelligible data sets to information workers, application developers and analysts in a world where turn-by-turn directions and real-time financial quotes — which used to be expensive — are now free.

Microsoft is hoping there is, and to that end has built out a storefront for data sets that range from geolocation data to weather information that’s codenamed Project Dallas. The project, which will become commercially available in the second half of the year, aims to provide access to data from information providers like InfoUSA, Zillow and Navteq so that developers can use it to build applications and information services. Other potential users of the information are researchers, analysts and information workers — from buyers at retail stores to competitive intelligence officers at big companies. Microsoft will take a cut of the fee charged by the information providers, but Dallas isn’t about profiting from data brokerage so much as it’s about showcasing Microsoft’s Azure cloud and making its Office products more compelling.

“The indirect monetization is potentially bigger than the direct monetization,” said Moe Khosravy, general product manager of Project Dallas, in a conversation last week. “That will cover some bandwidth and compute and the credit card surcharges for the transactions, but the real opportunity is that more developers will use Azure and Office because we’ve made it easy and will build support for Dallas into Office.”

I explore Microsoft’s efforts as well as those of a startup called Infochimps, which is also building a data marketplace, in a research note over on GigaOM Pro (sub req’d) called Big Data Marketplaces Put a Price on Funding Patterns. In it, I lay out how the ability to host and process large data on compute clouds has changed the way people can access and profit off of data.

John Papa and Deepesh Mohnani deliver Silverlight TV 26: Exposing SOAP, OData, and JSON Endpoints for RIA Services as a 00:17:27 Channel9 Webcast posted 5/13/2010:

In this video, John meets with Deepesh Mohnani from the WCF RIA Services team. Deepesh demonstrates how to expose various endpoints from WCF RIA Services. This is a great explanation and walk through of how to open RIA Services domain services to clients, including:

- Silverlight clients (of course)

- Creating an OData endpoint and showing how Excel can use it

- Creating a SOAP endpoint to a domain service and using it from a Windows Phone 7 application

- Creating a JSON endpoint and having an AJAX application talk to it

Relevant links:

- John's Blog and on Twitter (@john_papa)

- Deepesh's Blog and on Twitter (@deepeshm)

- More about RIA Services

Follow us on Twitter @SilverlightTV or on the web at http://silverlight.tv/.

Scott Watermasysk’s OData post of 5/13/2010 reviews an early OData Roadshow:

Yesterday, I made the trip into NYC to [attend] the OData Roadshow.

For those who have not looked into OData:

The Open Data Protocol (OData) is a Web protocol for querying and updating data that provides a way to unlock your data and free it from silos that exist in applications today. OData does this by applying and building upon Web technologies such as HTTP, Atom Publishing Protocol (AtomPub) and JSON to provide access to information from a variety of applications, services, and stores.

I personally do not feel it is truly RESTful, but I am willing to give up some of the tenets of REST in order to gain consistency.

While the protocol specification states it is read and write, I would [estimate] 99% of implementations will be read only. In fact, in the 5 hours of content covered by Microsoft, there were zero examples of updates.

If you are using [LINQ as] “Your Data Access” technology, adding OData at a high level will be simple. If you are not using [LINQ], I expect this to be quite a bit of work.

Overall, I really like the concept. Simple conventions for querying and representing data. IMO, this is the kind of stuff Microsoft should be doing more of (instead of Silverlight/Windows Phone/etc). Coupled with their data market (Dallas), I would expect to see much more data becoming available via OData.

On a side note, if the OData Roadshow is coming through your town, I would highly recommend checking it out.

Finally, for a walk through on setting up your own OData service, check out Hanselman’s OData For StackOverflow post.
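Scott’s point about simple conventions is easiest to see in the URLs themselves: a client addresses an entity set and narrows it with system query options such as $filter, $orderby, $top, $skip and $select. Here’s a minimal Python sketch that composes such a query URL; the build_odata_query helper and the example.org Northwind service root are hypothetical placeholders, not a real endpoint or client library.

```python
from urllib.parse import quote


def build_odata_query(resource_url, *, filter_expr=None, orderby=None,
                      top=None, skip=None, select=None):
    """Append OData system query options to an entity-set URL."""
    options = [
        ("$filter", filter_expr),
        ("$orderby", orderby),
        ("$top", top),
        ("$skip", skip),
        ("$select", ",".join(select) if select else None),
    ]
    # Percent-encode each value (spaces become %20) but leave commas readable.
    parts = [f"{name}={quote(str(value), safe=',')}"
             for name, value in options if value is not None]
    return f"{resource_url}?{'&'.join(parts)}" if parts else resource_url


url = build_odata_query("http://example.org/Northwind.svc/Products",
                        filter_expr="UnitPrice gt 20", orderby="ProductName", top=5)
```

Here url is http://example.org/Northwind.svc/Products?$filter=UnitPrice%20gt%2020&$orderby=ProductName&$top=5, a plain GET that any HTTP client, browser or Excel-style consumer can issue.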

Doug Purdy’s OData and You: An Everyday Guide for Architects Featuring Douglas Purdy (@douglasp) presentation of 5/7/2010 is finally available for playback and download here (requires registration):

Join Doug Purdy as he talks about the importance of the Open Data Protocol (OData) to Architects. Doug will provide context regarding the rise of OData, and the challenges it seeks to solve. Then, through both demo and discussion, Doug will explain why knowledge of OData is as important – if not more so – for Architects as it is for Developers. To conclude, Doug will discuss the implications OData has to service orientation and what he sees as future trends.

Thanks to @WadeWegner for the heads-up.

<Return to section navigation list>

AppFabric: Access Control and Service Bus

Alex James says “To do #OData #Auth guidance you need to know all the possible hooks. So I'm reading http://bit.ly/dnPzWf to see how WCF and ASP.NET interact” in this 5/14/2010 tweet:

WCF Services and ASP.NET

This topic discusses hosting Windows Communication Foundation (WCF) services side-by-side with ASP.NET and hosting them in ASP.NET compatibility mode.

Hosting WCF Side-by-Side with ASP.NET

WCF services hosted in Internet Information Services (IIS) can be co-located with .ASPX pages and ASMX Web services inside of a single, common Application Domain. ASP.NET provides common infrastructure services such as AppDomain management and dynamic compilation for both WCF and the ASP.NET HTTP runtime. The default configuration for WCF is side-by-side with ASP.NET.

The ASP.NET HTTP runtime handles ASP.NET requests but does not participate in the processing of requests destined for WCF services, even though these services are hosted in the same AppDomain as is the ASP.NET content. Instead, the WCF Service Model intercepts messages addressed to WCF services and routes them through the WCF transport/channel stack.

The results of the side-by-side model are as follows:

- ASP.NET and WCF services can share AppDomain state. Because the two frameworks can coexist in the same AppDomain, WCF can also share AppDomain state with ASP.NET (including static variables, events, and so on).

- WCF services behave consistently, independent of hosting environment and transport. The ASP.NET HTTP runtime is intentionally coupled to the IIS/ASP.NET hosting environment and HTTP communication. Conversely, WCF is designed to behave consistently across hosting environments (WCF behaves consistently both inside and outside of IIS) and across transport (a service hosted in IIS 7.0 has consistent behavior across all endpoints it exposes, even if some of those endpoints use protocols other than HTTP).

- Within an AppDomain, features implemented by the HTTP runtime apply to ASP.NET content but not to WCF. Many HTTP-specific features of the ASP.NET application platform do not apply to WCF Services hosted inside of an AppDomain that contains ASP.NET content. Examples of these features include the following:

- HttpContext: HttpContext.Current is always null when accessed from within a WCF service. Use RequestContext instead.

- File-based authorization: The WCF security model does not take into account the access control list (ACL) applied to the .svc file of the service when deciding if a service request is authorized.

- Configuration-based URL Authorization: Similarly, the WCF security model does not adhere to any URL-based authorization rules specified in System.Web’s <authorization> configuration element. These settings are ignored for WCF requests if a service resides in a URL space secured by ASP.NET’s URL authorization rules.

- HttpModule extensibility: The WCF hosting infrastructure intercepts WCF requests when the PostAuthenticateRequest event is raised and does not return processing to the ASP.NET HTTP pipeline. Modules that are coded to intercept requests at later stages of the pipeline do not intercept WCF requests.

- ASP.NET impersonation: By default, WCF requests always run as the IIS process identity, even if ASP.NET is set to enable impersonation using System.Web’s <identity impersonate="true" /> configuration option.

These restrictions apply only to WCF services hosted in an IIS application. The behavior of ASP.NET content is not affected by the presence of WCF. …
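The HttpModule restriction can be pictured as a cut-off point in the HttpApplication event sequence. The Python model below is a simplification that assumes the documented behavior of WCF taking over side-by-side requests at PostAuthenticateRequest: a module handler on an earlier event still sees a WCF request, while one on that event or a later one in the list is treated as not seeing it. It lists only request-processing events up to PreRequestHandlerExecute, ignores handler ordering within PostAuthenticateRequest itself, and is not a WCF or ASP.NET API.

```python
# Abbreviated HttpApplication event order: request-processing events only.
# Later events (handler execution, state release, EndRequest, etc.) are omitted.
PIPELINE_EVENTS = [
    "BeginRequest",
    "AuthenticateRequest",
    "PostAuthenticateRequest",   # documented point where WCF takes the request
    "AuthorizeRequest",
    "PostAuthorizeRequest",
    "ResolveRequestCache",
    "PostResolveRequestCache",
    "PostMapRequestHandler",
    "AcquireRequestState",
    "PostAcquireRequestState",
    "PreRequestHandlerExecute",
]

WCF_INTERCEPT_EVENT = "PostAuthenticateRequest"


def module_sees_wcf_request(event: str) -> bool:
    """Simplified model: does a module handler on `event` see a side-by-side WCF request?

    Handlers on events before the interception point run; handlers on the
    interception event itself or later are treated as not seeing the request.
    """
    if event not in PIPELINE_EVENTS:
        raise ValueError(f"event not in this simplified model: {event}")
    return PIPELINE_EVENTS.index(event) < PIPELINE_EVENTS.index(WCF_INTERCEPT_EVENT)
```

By this model a custom module hooked on AuthorizeRequest never runs for a .svc request, which matches the URL-authorization bullet: in side-by-side mode, authorization for WCF endpoints has to come from WCF itself, or from running in the ASP.NET compatibility mode the same topic describes.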

Vittorio Bertocci’s The “Third Book” post of 5/14/2010 describes the Proceedings of ISSE 2009:

Back in August 2009 I wrote a post announcing the various book efforts I was involved in, and I mentioned a mysterious third book I was supposed to contribute one article to. Well, last week my esteemed colleague Tom Kohler walked into one of my EIC sessions in Munich and handed me a copy of that very book… which is no longer mysterious: it’s the highlights of the Information Security Solutions Europe 2009 Conference, which opens with my article on claims & cloud :-) nice!

Mary Mosquera reports "The [federal] plan to develop a strategy will focus on ways to improve identity management” of electronic health records in her White House cyber security plan to cite e-health post of 5/12/2010 to the Government Health IT blog:

The White House has begun developing a strategy for securing online transactions and stemming identity fraud that pays particular heed to the importance of building a trusted arena for electronic healthcare transactions.

Howard Schmidt, the nation’s cyber security coordinator, said this week that the administration wants to make online commerce more secure so that government, industry and consumers will feel comfortable doing more of their business on the Internet.

The plan to develop a strategy will focus on ways to improve identity management, Schmidt said at a May 11 conference on privacy and security sponsored by the Health and Human Services Department’s Office for Civil Rights and the National Institute of Standards and Technology.

As part of that effort, the administration will roll out a “trust framework” incorporating authentication technologies, standards, services and policies that government, industry and consumers could adopt. [Emphasis added.]

“The key issue is that we have to instill trust in the system,” Schmidt said. “If we don’t trust the system, we won’t use it and if we don’t use it, we lose its [potential] benefits.”

Schmidt cited new electronic medical devices used by or implanted in patients as among the factors increasing the urgency of his work. They include wireless pacemakers that can transmit data to a smart phone or computer system and directly into an electronic health record.

Identity management currently represents a diverse marketplace of vendor solutions and methods. But these approaches must also be interoperable, privacy-enhancing, voluntary, cost effective and easy-to-use, Schmidt said. That’s especially true in the healthcare arena, where the push is on to encourage small practices and individual providers to adopt electronic health records.

“One-person physician offices have to be part of this system,” Schmidt said. “They have to have the capacity to trust identity and to trust medical records and information because they don’t have infrastructure and they don’t have a CIO.”

The president is “concerned and very committed” to making sure that as healthcare goes electronic that “we also have the right controls for security and privacy,” Schmidt said.

Dave Kearns claims “At the heart of proposed federated directory is minimal disclosure and the ability to only release exactly the data that is needed” in a preface to his Use cases outlined for federated directory post of 5/11/2010 to NetworkWorld’s Security blog:

As I said in the last issue, one of the highlights of last week's European Identity Conference was Kim Cameron's presentation on his new project, federated directories. In the presentation he announced the imminent shipment (May 5) of Active Directory Federation Services (ADFS) version 2. With that, he said, claims-based transactions within the enterprise were a done deal, a foregone conclusion -- an integral part of the plumbing. There are a few small details to work out, but it's time to set our sights on moving this technology across security domains, even into the cloud platform that XaaS (X=everything as-a-service) has created.

Cloud-based computing demands a strong, secure identity structure. In short, according to Cameron, it needs a claims-based system. But more than that it needs a federated claims-based system.

What's needed is for the relevant data from my enterprise directory to merge with the cloud provider's directory in such a way that it appears to be one unified directory. But this has to happen for all of the clients of that cloud service provider. Yet the privacy of each constituent user, the security of each client's data and even the knowledge of who the provider's clients are has to be managed intrinsically. Minimal disclosure, the ability to only release exactly the data that is needed, is at the heart of the system Cameron describes.

He listed a large number of use cases in which a federated directory system could play:

- Cell phone to enterprise directory -- All employees and their phone numbers immediately available when a phone is unlocked (but no other information), and immediately delisted when the employee is terminated.

- Departmental directory to enterprise directory -- Information kept on a departmental basis (e.g., sales contacts) is federated to the enterprise level and available to those who need it wherever and whenever the need arises.

- Cell phone to cloud directory -- The cell phone address book is kept up to date not only by the corporate directory system but also by my federated (clients, vendors, family, friends, etc.) directory systems.

- Merger and acquisition -- A temporary federation of directories could occur within days -- even hours -- while a genuine merged system might take months. …

This last use case necessitates a schema transformation system. Not everyone uses the same vocabulary and lexicon for the attributes and values (or, as Cameron says, the "claims") within their directories. But building an engine that translates and/or transforms these terms would be relatively easy. That transformation engine is also a necessary part of the system.

There were many more use cases presented.

But this is a project still in its formative stages. It's well funded, but still needs determined effort to succeed. I wish Cameron lots of success with it and I hope you do, too.
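Two pieces of Cameron’s design lend themselves to a quick illustration: a transformation step that maps one organization’s claim vocabulary onto another’s (the merger case) and a release policy that hands each relying party only the claims it needs (minimal disclosure, as in the cell-phone case). The Python sketch below is hypothetical throughout; the claim names, mapping table, policy table and functions are illustrations, not part of ADFS v2 or any federated-directory product.

```python
from typing import Callable, Dict, Optional, Set, Tuple

Claims = Dict[str, str]
ClaimMap = Dict[str, Tuple[str, Optional[Callable[[str], str]]]]

# Hypothetical merger mapping: company A's claim -> (company B's claim, value transform).
A_TO_B: ClaimMap = {
    "surname": ("lastName", None),
    "givenName": ("firstName", None),
    "phone": ("telephoneNumber", None),
    "dept": ("department", str.upper),   # B stores department codes in upper case
}

# Hypothetical release policies: relying party -> claims it may receive.
RELEASE_POLICIES: Dict[str, Set[str]] = {
    "phone-directory": {"firstName", "lastName", "telephoneNumber"},
}


def transform_claims(claims: Claims, mapping: ClaimMap) -> Claims:
    """Rename (and optionally rewrite) claims; claims with no mapping are dropped."""
    result: Claims = {}
    for name, value in claims.items():
        if name in mapping:
            target, fn = mapping[name]
            result[target] = fn(value) if fn else value
    return result


def release_claims(claims: Claims, relying_party: str, active: bool = True) -> Claims:
    """Minimal disclosure: return only the claims the relying party's policy names.

    Unknown relying parties and inactive (e.g. terminated) users get nothing.
    """
    if not active:
        return {}
    allowed = RELEASE_POLICIES.get(relying_party, set())
    return {name: value for name, value in claims.items() if name in allowed}
```

Chaining the two means a salary attribute in company A’s directory is never mapped, and a department code is mapped but still withheld from the phone directory; setting active=False models the immediate delisting of a terminated employee.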

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Matias Woloski’s Windows Azure MMC v2 – Diagnostic Viewer Plugins post of 5/14/2010 begins:

We’ve been working during the last couple of months with Ryan Dunn and David Aiken on various things related to the Windows Azure management API. One of them, released yesterday, was the Windows Azure MMC v2 (read Ryan’s post about it). This version provides significantly more features than the first version.

Ryan covered most of the features in this 15-minute screencast, so I will focus on the extensibility of the Windows Azure MMC.

The Windows Azure MMC has the following extensibility points:

- Adding a new module (i.e. a new node somewhere in the tree)

- Adding a new diagnostic data viewer

- Adding a new table storage viewer

One of the pieces that we enjoyed building with Sebastian (aka Iaco) was the diagnostics data analysis. This functionality allows you to work with the data generated by the Windows Azure diagnostics infrastructure and it’s built using MEF and the MVVM pattern. If you want to create your own visualizer or viewer for diagnostic data, keep reading….

Matias continues with an illustrated, step-by-step “How to implement a diagnostics data viewer” tutorial and concludes with this Excel demonstration:

John O’Donnell reports that David Bankston of INgage Networks talks about social networking and Azure in this 00:05:42 Channel9 video interview of 5/14/2010:

Greg Oliver, MS Developer Evangelist, talks with David Bankston, CTO of ISV Gold partner INgage Networks, about their SaaS based enterprise social networking platform and moving to Azure.

David Linthicum claims “The DoD could run more effectively using cloud technologies -- and show other large organizations how it should be done” and explains How and why the military should adopt the cloud in this 5/14/2010 post to InfoWorld’s Cloud Computing blog:

At the Association for Enterprise Information (AFEI) 2010 DoD Enterprise Architecture Conference this week, I gave the keynote presentation on (what else?) cloud computing -- specifically, how it should work within the Department of Defense. The DoD is the poster child for organizations that should be using the cloud, but it must first overcome several issues -- and not just technical ones.

The challenge here is to present yet another new, hype-driven concept and sell it within the context of the existing billions of dollars of IT projects that are under way for the U.S. military. No doubt, everyone in DoD IT has heard of cloud computing, but few understand how it could work within their problem domains and support their missions.

The fact of the matter is that the focus has generally been on the mission first, and IT planning and architecture second. So what you get -- and what the DoD, like many large organizations, has -- are thousands of mission systems, with no real idea of how they all work and play together, supporting the larger mission. Moreover, the one-system-one-application approach to IT used by so many organizations such as the DoD means that inefficiencies are designed in from the start.

Cloud computing would bring real advantages to the DoD. For example, the DoD has been asked to tighten its belt, such as by consolidating data centers. The cloud can help there. Also, there's an understanding that agility is now an essential attribute needed to align quickly with mission changes; that's a very important concept, considering the events of the last 10 years. The cloud can help there, too. …

<Return to section navigation list>

Windows Azure Infrastructure

My Q&A with Microsoft about SharePoint 2010 OnLine with Access Services post of 5/12/2010 (copied from my Amazon Author Blog on 5/14/2010) provides the official reply of a Microsoft spokesman to my following two questions:

When will SharePoint 2010 be available online from Microsoft?

This is Clint from the Online team. It will be available to our larges[t] online customers this year, and we will continue rolling out the 2010 technology to our broad base of online customers, with updates coming every 90 days. You can expect to see a preview of these capabilities later this year.

Will SharePoint 2010 Online offer the Enterprise Edition or at least Access Services to support Web databases?

This is Clint from the Online team. We have not disclosed the specific features that will be available in SharePoint Online at this time, but you can expect most of the Enterprise Edition features to be available. Stay tuned for more details in the coming months.

Geva Perry concludes “[Hybrid cloud] is a model that won't scale. "Pure" cloud will win this war” in his Integration-as-a-Service: IBM+Cast Iron post of 5/14/2010:

In Integration-as-a-Service Heats Up I talked about the cloud integration space and mentioned three companies: SnapLogic, Cast Iron Systems and Boomi. Well, I guess I was right about it heating up because on May 3 IBM announced its plan to acquire Cast Iron.

It's important to note the difference between the three companies. Among the three, Boomi is the only one that has taken a pure cloud approach and provides its product solely as a web service. Cast Iron had started as an on-premise appliance play (virtual or physical) and recently added Cloud2, which they describe as a "multi-tenant integration-as-a-service cloud offering." SnapLogic is a downloadable product that needs to be installed on-premise or deployed by the customer in a public cloud environment such as Amazon Web Services.

[For more on application delivery models see Software Delivery Models in the Era of Cloud Computing].

Besides writing this post to say "I told you so" :-), I wanted to call attention to the debate about the appliance versus the pure cloud service approaches. In a blog post entitled Why Integration Appliances Won't Scale for Cloud Computing, Boomi CEO Bob Moul essentially slams Cast Iron's approach (although he is very gracious about the acquisition). The title of the post pretty much says it all, but you can read the details on their blog.

To me this is part of a bigger debate that is going on, and that is the fate of the notion of internal clouds (infrastructure-as-a-service and platform-as-a-service environments that run in the company's own data center).

Several of the large vendors, such as IBM -- as well as many startups such as Eucalyptus -- are pushing the notion of internal clouds. And they are gaining some traction. From the customer perspective the idea of internal clouds resonates because they want to provide their development teams with the flexibility of public clouds such as AWS, but without the huge headaches of governance, security, migration, lock-in and other problems associated today with external public clouds.

And then of course, there is the idea of a hybrid environment -- where some aspects of IT are run in-house and others in a public cloud or as software-as-a-service, which is where an offering such as Cast Iron's comes in.

But at the end of the day, Boomi's Moul has got it right. It is a model that won't scale. "Pure" cloud will win this war. Internal clouds will be a temporary stop-gap during the next 2-3 years, but will eventually settle as a small niche.

Reuven Cohen’s Exploring Differentiation Among Cloud Service Providers post of 5/14/2010 explores “the emerging cloud service provider segment:”

… One of the biggest transitions in the hosting space over the last decade has been that of Virtual Private Servers (VPS)-- a market controlled effectively by one company, Parallels. A critical problem with the Virtuozzo Containers product line and approach has been that there is effectively no difference between any VPS hosting company. The lack of differentiation among the various VPS hosting firms has meant that the only real way to set your service apart from that of the other guys is based purely on price. This price-centric approach to product/service differentiation creates a commodity market for all the providers. Basically their sales pitch is "We're cheaper". This means you're now competing based on a low margin, high volume business, not on any real value proposition. Effectively the VPS space has become a race to zero. So in this market you'll find that most VPS hosting companies are now charging roughly the same low price, a few dollars a month, with the same low margins for the same basic service. The only one making any real margin is Parallels, at the expense of their customers.

What's interesting about the emerging cloud service provider segment is the opportunity to differentiate based on the value you provide to your customers. It's not that you're cheaper than the other guy, but instead that you have an actual solution to a problem they have -- today. Maybe your platform can scale more easily, or you're in a specific region or city, or possibly you have a particular application deployment focus. In the case of Enomaly ECP when we developed our platform we focused on developing a cloud infrastructure with the capability to define various economic models that run the gamut from pure utility offering to quota or even tiered quality of service centric approaches. By doing so, we understand that there may still be multiple ECP deployments in a particular region, but from an end customer point of view (the customer of our customers) they can be significantly different from one another. This allows for competition based on business value, not purely on price. I'll choose ECP cloud provider A because they solve my particular pain point, even though they may be more expensive than cloud B, which doesn't.

My position has always been to create a shared success model, one that is mutually beneficial. The better our customers do, the better we do. It's a model that doesn't cannibalize our service provider customers' margins in return for higher margins for us. A shared success model also provides an incentive to buy more licenses as our customers grow. At the end of the day, City Cloud in Sweden's 500 customers have now effectively become my responsibility too. (The customer of my customer is my customer.) I win because my customers win, and they win because they're different, they're compelling and they have a service people need and, more importantly, want to buy. …
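To make the distinction between those economic models concrete, here is a minimal Python sketch of utility, quota and tiered quality-of-service pricing. It is not Enomaly ECP's API; the function names, tier names and every price are hypothetical assumptions chosen only to show how two providers on the same platform can end up with different offers.

```python
from typing import Callable, Dict

# A billing model maps one customer's monthly usage, in instance-hours,
# to a charge in USD. All names and prices here are hypothetical.
BillingModel = Callable[[float], float]

def utility(rate: float) -> BillingModel:
    """Pure utility pricing: every instance-hour is billed at a flat rate."""
    return lambda hours: hours * rate

def quota(fee: float, included: float, overage_rate: float) -> BillingModel:
    """Quota pricing: a flat fee covers `included` hours; extra hours are billed."""
    return lambda hours: fee + max(0.0, hours - included) * overage_rate

def tiered_qos(tier_rates: Dict[str, float], tier: str) -> BillingModel:
    """Tiered QoS pricing: the per-hour rate depends on the service tier bought."""
    rate = tier_rates[tier]
    return lambda hours: hours * rate

# Hypothetical offers from three providers running the same platform.
provider_a = quota(fee=50.0, included=500, overage_rate=0.0625)
provider_b = utility(rate=0.125)
provider_c = tiered_qos({"gold": 0.25, "silver": 0.125}, tier="gold")

for hours in (300, 800):
    print(hours, provider_a(hours), provider_b(hours), provider_c(hours))
```

Under these made-up prices, the utility offer is cheaper for a light user (300 hours) and the quota offer is cheaper for a heavy one (800 hours), so each provider can win a different kind of customer without simply undercutting the other on price.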

Alex Handy reports Cloud development ties code to dollars from the All About the Cloud Conference in this 5/14/2010 story for SD Times on the Web:

Writing applications for cloud environments is a different affair than writing for in-house hosting. At the All About the Cloud summit in San Francisco this week, the focus was on what changes developers need to make to their applications to enable their optimal use in the cloud. Perhaps the most interesting revelation offered during the event was that of the direct correlation between billing and the quality of source code.

Treb Ryan, CEO and founder of OpSource, said in a keynote talk that cloud hosting offers “the best margins I have seen in the hosting business.” He added that Amazon has largely played down the profitability of cloud hosting, and he suggested they have done so to scare off potential competition. OpSource has been offering hosting for software-as-a-service applications for more than five years and has relatively recently entered the cloud hosting business.

Ryan said that applications hosted in the cloud are under a performance microscope. If they send too many requests to the database, that will be reflected by a higher bill at the end of the month. He said that developers writing applications for deployment in the cloud need to realize that “bad code costs me twice as much as good code. I can cut my op costs in half” by optimizing the code.

Thus, said Ryan, developers can see a direct correlation between the code they've worked on all month and the reduction in their cloud hosting bill. That's something developers haven't really been able to do since the days of mainframes and time-sharing. …

Alex continues with details of “remaining problems of the cloud.”
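Ryan's "twice as much" claim follows directly from usage-based pricing: if the bill is linear in metered usage, halving the database requests and instance-hours halves the bill. The Python sketch below assumes a simple linear model with two metered items; the prices, the `MonthlyUsage` type and the usage figures are illustrative assumptions, not OpSource's or any other provider's actual rates.

```python
from dataclasses import dataclass

# Illustrative prices only; these are assumptions, not any provider's rates.
PRICE_PER_MILLION_DB_REQUESTS = 1.00  # USD
PRICE_PER_INSTANCE_HOUR = 0.125       # USD

@dataclass
class MonthlyUsage:
    db_requests: int       # database calls issued by the application
    instance_hours: float  # compute time consumed

def monthly_bill(usage: MonthlyUsage) -> float:
    """Linear usage-based bill: requests and compute are each metered."""
    requests_cost = usage.db_requests / 1_000_000 * PRICE_PER_MILLION_DB_REQUESTS
    compute_cost = usage.instance_hours * PRICE_PER_INSTANCE_HOUR
    return requests_cost + compute_cost

# Unbatched code, assumed here to issue twice as many queries and to keep a
# second instance busy all month (720 hours per instance).
chatty = MonthlyUsage(db_requests=200_000_000, instance_hours=2 * 720)
# Batched code: the same work with half the queries on a single instance.
batched = MonthlyUsage(db_requests=100_000_000, instance_hours=720)

print(monthly_bill(chatty), monthly_bill(batched))
```

Because every term in the bill scales with usage, the developer's optimization shows up one-for-one in the monthly invoice, which is the direct code-to-dollars link Ryan describes.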

Bruce Guptil’s Microsoft Office 2010 Shows Key Points in Cloud Strategy Research Alert for Saugatuck Technology of 5/13/2010 (site registration required) reports:

Microsoft Corp. took the wraps off of its Office 2010 software this week at a special launch event in New York City. The launch was subject to significant media and industry analyst coverage; Microsoft itself helped stoke the hyperbole. "It is a moment of fundamental change and there are a lot of reasons for this," Microsoft Business Division president Stephen Elop said, adding, "The 2010 products represent an epic release for Microsoft."

What is Happening?

The announcement of a new version of the ubiquitous, market-leading Office suite was less “epic” than the associated announcement of free, web-browser versions of four Office applications (Excel, Word, PowerPoint, and OneNote). Office Web Apps is due to be formally released in June 2010.

Saugatuck sees the move toward web versions of Office applications as critical to Microsoft’s future as a software provider. It is an indicator of CEO Steve Ballmer’s famous “all-in” and “moving at Cloud speed” pronouncements regarding Microsoft’s critical need to embrace a Cloud-based future (please see Research Alert, “Microsoft “All-in” Memo Coalesces Cloud IT Reality for Master Brands,” RA-712, published 10Mar2010).

However, Saugatuck sees the initial Office Web Apps effort as falling well short of what Microsoft needs to accomplish when it comes to Cloud-based business software. Analysts and reviewers far and wide are already taking Microsoft to task on what is seen as extremely limited (and limiting) functionality in the apps, especially as compared to similar offerings from Google, Zoho and others.

So, while it is making bets at the Cloud business applications table, Microsoft does not truly seem to be “all-in” just yet. And that could create more opportunity for its established Cloud-based competitors.

Bruce continues with “Why is it Happening” and “Market Impact” sections, but doesn’t address issues with SharePoint 2010, such as the current lack of an Online implementation. In fact, the term “SharePoint” doesn’t appear in the report. Perhaps Saugatuck is reserving SharePoint 2010 analysis for next week?


Cloud Security and Governance

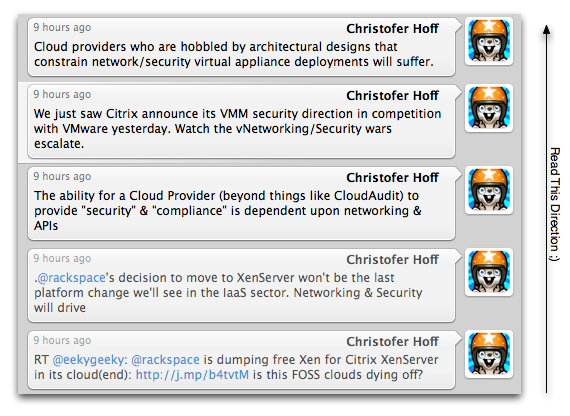

Chris Hoff (@Beaker) explores The Hypervisor Platform Shuffle: Pushing The Networking & Security Envelope in this 5/14/2010 post:

Last night we saw coverage by Carl Brooks [and] Jo Maitland (sorry, Jo) of an announcement from RackSpace that they were transitioning their IaaS Cloud offerings based on the FOSS Xen platform and moving to the commercially-supported Citrix XenServer instead:

Jaws dropped during the keynote sessions [at Citrix Synergy] when Lew Moorman, chief strategy officer and president of cloud services at Rackspace said his company was moving off Xen and over to XenServer, for better support. Rackspace is the second largest cloud provider after Amazon Web Services. AWS still runs on Xen.

People really shouldn’t be that surprised. What we’re playing witness to is the evolution of the next phase of provider platform selection in Cloud environments.

Many IaaS providers (read: the early-point market leaders) are re-evaluating their choices of primary virtualization platforms and some are actually adding support for multiple offerings in order to cast the widest net and meet specific requirements of their more evolved and demanding customers. Take Terremark, known for their VMware vCloud-based service, who is reportedly now offering services based on Citrix:

Hosting provider Terremark announced a cloud-based compliance service using Citrix technology. “Now we can provide our cloud computing customers even greater levels of compliance at a lower cost,” said Marvin Wheeler, chief strategy officer at Terremark, in a statement.

Demand for services will drive hypervisor-specific platform choices on the part of providers, with networking and security really driving many of those opportunities. IaaS Providers who offer bare-metal boot infrastructure that allows flexibility of multiple operating environments (read: hypervisors) will start to make in-roads. This isn’t a mass-market provider’s game, but it’s also not a niche if you consider the enterprise as a target market.

Specifically, the constraints associated with networking and security (via the hypervisor) limit the very flexibility and agility associated with what IaaS/PaaS clouds are designed to provide. What many developers, security and enterprise architects want is the ability to replicate more flexible enterprise virtualized networking (such as multiple interfaces/IPs) and security capabilities (such as APIs) in Public Cloud environments.

Support of specific virtualization platforms can enable these capabilities whether they are open or proprietary (think Open vSwitch versus Cisco Nexus 1000v, for instance). In fact, Citrix just announced a partnership with McAfee to address integrated security between the ecosystem and native hypervisor capabilities. See Simon Crosby’s announcement here titled “Taming the Four Horsemen of the Virtualization Security Apocalypse” (it’s got a nice title, too).

To that point, here are some comments I made on Twitter that describe these points at a high level:

I wrote about this in my post titled “Where Are the Network Virtual Appliances? Hobbled By the Virtual Network, That’s Where…” and what it means technically in my “Four Horsemen of the Virtualization Security Apocalypse” presentation. Funny how these things come back around into the spotlight.

I think we’ll see other major Cloud providers reconsider their platform architecture from the networking and security perspectives in the near term.

Lori MacVittie writes about Extending identity management into the cloud while warning F5 Friday: Never Outsource Control in this 5/14/2010 post:

The focus of several questions I was asked at Interop involved identity management and application access in a cloud computing environment.

This makes sense; not all applications that will be deployed in a public cloud environment are going to be “customer” or “market” focused. Some will certainly be departmental or business unit applications designed to be used by employees and thus require a certain amount of access control and integration with existing identity management stores, like Active Directory.

Interestingly, F5 isn’t the only one that thinks identity and access management needs to be addressed for cloud computing initiatives to succeed.

It's important to not reinvent the wheel when it comes to moving to the cloud, especially as it pertains to identity and access management. Brown [Timothy Brown, senior vice president and distinguished engineer of security management for CA] said that before moving to the cloud it's important that companies have a plan for managing identities, roles and relationships.

Users should extend existing identity management systems. The cloud, however, brings together complex systems and opens the door for more collaboration, meaning more control is necessary. Brown said simple role systems don't always work, dynamic ones are required. [emphasis added]

--“10 Things to Consider Before Moving to the Cloud”, CRN, 2010

Considering that “control” and “security”, both of which are closely tied to identity management, were the top two concerns of organizations in an InformationWeek Analytics Cloud Computing survey, this is simply good advice.

The problem is how do you do that? Replicate your Active Directory forest? Maybe just a branch or two? There are overarching systems that can handle that replication, of course, but do you really want your corporate directory residing in the cloud? Probably not. What you really want is to leverage your existing identity management systems where they reside – in the corporate data center – but use its authentication and authorization information to allow or deny access to cloud-based applications.

Lori continues her essay with an “EXTENDING IDENTITY to the CLOUD with F5” explanation.

Alex Williams asks Is IT Showing Insecurities in the Distrust of End Users? in this 5/14/2010 post to the ReadWriteCloud blog:

We just looked at the survey results from a CA sponsored report about IT's views on cloud computing and security.

Is it us or does IT seem a bit threatened by the overwhelming interest in cloud computing?

Or is IT prudent in its view that the trend to put everything in the cloud is a security nightmare waiting to happen?

The CA survey results were tallied from more than 600 IT professionals in the U.S. and more than 200 in Europe. The gist of the report states that end users are using cloud computing services without the okay from IT. End users are using cloud computing services with not enough attention paid to security and privacy. In essence, end users are running rogue and the consequences are dire.

End users are taking advantage of cloud computing services because they work. It's the business groups that in many respects are spurring innovation. These groups take advantage of web-oriented services as the alternative can often be an endless slog through a maze of IT.

Plus, many of the services are affordable. Business groups can expense the cost without needing to go through an IT budget cycle.

The report demonstrates IT's own insecurities about cloud computing. It reflects the general distrust that IT harbors for the people who are making their own choices about what services to use.

It seems the argument is more about consumer IT than anything else. IT considers it a security risk. But the concern also points to the uncertain climate that is enveloping the IT establishment.

SaaS services do not require the level of integration that on-premise systems do. Security can be dialed up or down, depending on the organization using the service. The result is a changing role for IT. Security is increasingly the responsibility of the SaaS company. In truth, there is tremendous opportunity in this.

The smart ones will learn the skills that come with managing public cloud infrastructures and SaaS services. For instance, the operations costs will be substantial for companies deploying cloud environments.

This is where IT should focus its effort. IT is needed to provide efficiencies and secure systems that enable the adoption of cloud computing so a trusted environment can blossom.

The issues about cloud security v. on-premise security are relative concerns. But it's nuts to argue that end users are irresponsible with cloud services and may be revealing company trade secrets or health information. We are sure it happens but similar types of breaches have a long history in the on-premise, IT world.

What's really at risk is IT as we know it. The IT department is in danger of becoming irrelevant. And this trend will continue if the issue is always about the dangers of the cloud.

Instead, the focus should be on learning and trust. Cloud computing points to an era of sophisticated IT networks, managed by smart, open people. If such an intelligent environment is not fostered then IT only has itself to blame if it becomes marginalized and relegated to a back room role.

See Mary Mosquera’s report that "The [federal] plan to develop a strategy will focus on ways to improve identity management” of electronic health records in her White House cyber security plan to cite e-health post of 5/12/2010 to the Government Health IT blog in the AppFabric: Access Control and Service Bus section above.


Cloud Computing Events

tbtechnet reminds developers of the Windows Azure Virtual Boot Camp IV May 17th - May 24th 2010 in this 5/14/2010 post to the Windows Azure Platform, Web Hosting and Web Services blog:

Almost 400 developers have done this and you can too…

Virtual Boot Camp IV: Learn Windows Azure at your own pace, in your own time and without travel headaches.

A Windows Azure one-week pass is provided so you can put Windows Azure and SQL Azure through their paces. NO credit card required.

You can start the Boot Camp any time between May 17th and May 24th and work at your own pace.

The Windows Azure virtual boot camp pass is valid from 5am USA PST May 17th through 6pm USA PST May 24th.

Follow these steps:

- Request a Windows Azure One Week Pass here – Passes will be given out starting Monday May 17th

- Sign in to the Windows Azure Developer Portal and use the pass to access your Windows Azure account.

- Please note: your Windows Azure application will automatically de-provision at the end of the virtual boot camp on May 24th

- Since you will have a local copy of your application, you will be able to publish your application package on to Windows Azure after the virtual boot camp should you decide to sign up for a regular Windows Azure account after the Boot Camp.

- For USA developers, no-cost phone and email support during and after the Windows Azure virtual boot camp with the Front Runner for Windows Azure program.

- For non-USA developers - sign up for Green Light https://www.isvappcompat.com/global

- Startups - get low cost development tools and production licenses with BizSpark - join here

- Get the Tools

- To get started on the boot camp, download and install these tools:

- Download Microsoft Web Platform Installer

- Download Windows Azure Tools for Microsoft Visual Studio

- Learn about Azure

- Learn how to put up a simple application on to Windows Azure

- Take the Windows Azure virtual lab

- View the series of Web seminars designed to quickly immerse you in the world of the Windows Azure Platform

- Why Windows Azure - learn why Azure is a great cloud computing platform with these fun videos

- Dig Deeper into Windows Azure

- Download the Windows Azure Platform Training Kit

- PHP on Windows Azure

Greg Willis will present Australians with a Windows Azure and the IT Pro Discussion as a TechNet Virtual Conference on 6/4/2010 at 1:00 PM Australia (East):

Event Overview

Do cloud services make the IT Pro obsolete? Join us in this informative session to discuss the role of IT Pros with regard to Windows Azure. Discover what’s changed and what hasn’t for the IT Professional including how to manage services in the new cloud environment and what tools are available to help manage the application lifecycle.

Speaker Details

Greg Willis is an Architect Evangelist within the Microsoft DPE team with over 18 years commercial experience in the IT, telecoms, digital media and finance industries. He joined Microsoft Australia in 2004. …

Conference ID: 841917

Registration Options

Event ID: 1032452886

Register with a Windows Live™ ID: Register Online


Other Cloud Computing Platforms and Services

Alexander Wolfe claims “Cloud vendors such as Google and Amazon don’t buy in bulk from Dell or HP. They roll their own, with highly efficient designs” in a preface to his Cloud computing revolution seeding unseen servers post of 5/14/2010 to InformationWeek’s Cloud Computing blog:

The rise of cloud computing is going to stoke demand for servers, according to a new forecast from IDC. For me, the critical point is that public cloud providers such as Google don’t buy servers, they build them. And the design decisions they make - constructing sparsely configured but powerful scale-out servers - will feed back into the enterprise market.

The raw numbers of which they speak are as follows: "Server revenue for public cloud computing will grow from USD 582 million in 2009 to USD 718 million in 2014. Server revenue for the much larger private cloud market will grow from USD 7.3 billion to USD 11.8 billion in the same time period."

When you consider that the overall server market was around USD 44 billion in 2009, the qualitative takeaway is that the public-cloud portion amounts to a spring rain shower. However, I believe the influence of this sector will be disproportionately large. Here's why:

The server market for public cloud vendors such as Google and Amazon is a hidden market. These guys don’t go out and buy in bulk from Dell or HP. They roll their own, and the own that they roll are highly, highly, highly efficient designs. They strip their blades of all extraneous components and outfit them with nothing but the bare necessities. So if taking out a USB port saves USD 5 and a couple of watts of power, that’s how the system is configured.

What's also not obvious to the public is that the Googles of the world exert influence on the server design arena right at the source. Namely, they go directly to Intel and AMD and tell them stuff they'd like to see in terms of TDP (processor power dissipation), power management, price/performance, etc. Since a Google can buy upwards of 10,000 of a processor SKU, Intel and AMD listen. …

Alexander continues with page 2 of his analysis.
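The IDC figures quoted above are easier to compare as compound annual growth rates and as a share of the overall market. The Python sketch below is a back-of-the-envelope check that uses only the numbers in the excerpt (in millions of USD, treating 2009 to 2014 as five years of growth); the `cagr` helper is our own, not IDC's method.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end / start) ** (1 / years) - 1

# Figures quoted from the IDC forecast, in millions of USD (2009 -> 2014).
public_2009, public_2014 = 582, 718
private_2009, private_2014 = 7_300, 11_800
total_server_market_2009 = 44_000  # "around USD 44 billion" in 2009

print(f"Public cloud CAGR:  {cagr(public_2009, public_2014, 5):.1%}")
print(f"Private cloud CAGR: {cagr(private_2009, private_2014, 5):.1%}")
print(f"Public cloud share of 2009 server market: "
      f"{public_2009 / total_server_market_2009:.1%}")
```

By this arithmetic, public-cloud server revenue grows only about 4% a year versus roughly 10% for private cloud, and it amounts to about 1.3% of the 2009 server market, which is consistent with Wolfe's "spring rain shower" characterization.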

J. Nicholas Hoover reports “The federal government hopes moving the stimulus-tracking Web site to Amazon EC2 will allow the recovery board to save money and refocus on its core mission” in a preface to his Recovery.gov Moved To Amazon Cloud post of 5/13/2010 to InformationWeek’s Government blog:

The federal government has moved Recovery.gov, the Web site people can use to track spending under last year's $787 billion economic stimulus package, to Amazon's Elastic Compute Cloud infrastructure-as-a-service platform, the Recovery Accountability and Transparency Board announced Thursday.

The move marks a milestone for the Obama administration's cloud computing initiative. Federal CIO Vivek Kundra said in a conference call with reporters it is the first government-wide system to move to a cloud computing infrastructure. It's also the first federal government production system to run on Amazon EC2, Kundra said.

Cloud computing has been one of Kundra's top priorities since becoming federal CIO in March 2009. In next year's IT budget requests, for example, federal agencies will have to discuss whether they've considered cloud computing as an alternative to investing in on-premises IT systems.

The recovery board expects to save about $750,000 over the next two years -- $334,000 this year and $420,000 in 2011 -- by running Recovery.gov on EC2. This represents about 10% of the total $7.5 million the board has spent overall on the site so far, including development costs. "Significantly" more savings are expected over the long term, according to the recovery board.
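The savings figures in the excerpt can be cross-checked with simple arithmetic. This Python sketch uses only the numbers quoted above; it shows that the two annual amounts add up to slightly more than the rounded "about $750,000" and that this is about 10% of the $7.5 million spent so far.

```python
# Figures quoted in the Recovery.gov item, in USD.
savings_2010 = 334_000
savings_2011 = 420_000
total_spent_so_far = 7_500_000

two_year_savings = savings_2010 + savings_2011
share_of_spend = two_year_savings / total_spent_so_far

print(two_year_savings)
print(f"{share_of_spend:.1%}")
```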

Jim Finkle describes “a rare interview” with Larry Ellison in his Special Report: Can That Guy in Ironman 2 Whip IBM in Real Life? story of 5/12/2010 for ABC News:

In the movie "Ironman 2," Larry Ellison makes a cameo appearance as a billionaire, playboy software magnate. It is a role he knows well. He is playing himself -- chief executive of Oracle Corp, one of Silicon Valley's most enduring, successful and flamboyant figures.

At age 65, he is undertaking one of the biggest challenges of his career, and it's not playing Hamlet on Broadway. Oracle, the company Ellison founded three decades ago and built into a dominant force in the software industry, is making a go at hardware with the acquisition of money-losing Sun Microsystems.

This is not entirely unlike MIT deciding to field a competitive football team, but Ellison being Ellison, he could not be less worried. "We have a wealth of technology to package into systems," said Ellison, who won the America's Cup in February. "I see no reason why we can't get this to where Sun under Oracle should be larger than Sun ever was."

In a rare interview he discussed his turnaround efforts at Sun so far, revealed plans to purchase additional hardware companies and detailed new products that will launch in the near future. And he did so with his usual in-your-face style -- heaping all manner of abuse, for example, on Sun's previous managers. …

Jim continues with a detailed, four-page interview.

Stacey Higginbotham reports Heroku Raises $10M for Its Ruby Platform in this 5/10/2010 post to the Gigaom blog:

Heroku, a platform provider built on top of Amazon’s EC2 compute infrastructure, has raised $10 million in its second round of funding, bringing its total investment to $15 million. In the last six months, the Heroku platform, which was developed for Ruby on Rails applications, has seen the number of apps it hosts rise to 60,000 from 40,000. The funding, led by Ignition Partners with participation by existing investors Redpoint Venture Partners, Baseline Ventures, and Harrison Metal Capital, will be used to develop a channel partnership strategy and to expand the marketing of the Heroku platform, which competes with other platforms such as Google’s AppEngine and Microsoft’s Azure.

“We don’t think the market is going to end up with a Ruby platform and a Java platform and a PHP platform,” Byron Sebastian, Heroku’s CEO, said to me in an interview. “People want to build enterprise apps, Twitter apps and to do what they want regardless of the language.”

Sebastian said he sees the round as a huge validation for the Ruby language as a way to build cloud-based applications, but doesn’t want to tie Heroku too closely to Ruby. “The solution is going to be a cloud app platform, rather than as a specific language as a service,” Sebastian said. In the last few weeks, Heroku announced that it would provide experimental support for node.js, but Sebastian stopped short of saying that Heroku was adding support or planning to add support for Java, PHP or anything else.

He did say that Heroku, while built to run efficiently on top of Amazon’s EC2 service, would eventually also be hosted on other clouds. That would be a way to differentiate Heroku from competitors that are closely tied to the infrastructure of one company, such as Microsoft’s Azure. His multi-infrastructure effort might be validated by VMware’s recent decision to host a SpringSource Java platform on the Salesforce.com infrastructure creating VMforce. [Emphasis added.]

“I think the genius of Microsoft was that it recognized that you should separate the operating system from the PC itself, so the hardware vendors can focus on the hardware, and we think that’s a reasonable way to think about this market going forward,” Sebastian said.

With $10 million, a planned channel partner strategy to deal with multiple software vendors that recommend the platform to their clients, and rapid growth, we’ll see if Heroku’s bets on separating itself from the infrastructure, and downplaying Ruby while emphasizing the ease of the platform in general, are the way to go. For more on what users want when it comes to choosing a cloud, check out our “Different Clouds, Different Purposes: A Taxonomy of Clouds” panel at our Structure 10 event June 23 and 24th in San Francisco.
