Windows Azure and Cloud Computing Posts for 11/12/2010+

A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles.

•• Update 11/14/2010: Articles marked ••

• Update 11/13/2010: Articles marked •

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- AppFabric: Access Control and Service Bus

- Windows Azure Virtual Network, Connect, and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA) and Hyper-V Cloud

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock).


Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now freely download by FTP and save the following two online-only PDF chapters of Cloud Computing with the Windows Azure Platform, which have been updated for SQL Azure’s January 4, 2010 commercial release:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available at no charge from the book's Code Download page.

Tip: If you encounter articles from MSDN or TechNet blogs that are missing screen shots or other images, click the empty frame to generate an HTTP 404 (Not Found) error, and then click the back button to load the image.

Azure Blob, Drive, Table and Queue Services

• Peter Kellner posted Building a Simple Azure Blob Tree Viewer With Azure StorageClient API on 11/12/2010:

Understanding how Azure Blob Storage can be used to simulate directory structures is a little tricky, to say the least. I’ve got a long forum thread on the Windows Azure Community site discussing the details. As always, Steve Marx has been a big help here with a bunch of code; Steve’s got a great blog where he provides lots of examples and insights. Neil Mackenzie has also helped us work toward the answer.

Just so we now have an example, I’ve put together a simple Windows Forms app that lets you set a few variables in your app.config to point at your Azure storage account and container, view the container’s contents as a tree, and see in code how it can be done. I have not commented the code much; I just thought it would be good to get it out there. The running application shows you the data as follows.

So, for the details, I’m pasting below the meat of the code. Basically, it does what you would expect, iterating through the directories recursively to build the list. Again, just set your parameters in your app.config as follows:

and you can run it for yourself and see how it goes: AzureBlobTreeViewer.zip
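Blob storage has no real folders: a container holds a flat list of blob names, and a delimiter such as "/" only simulates directories. The tree-building step can be sketched without any storage calls. The BlobTree and BlobTreeNode classes below are hypothetical (this is not Peter's code); they split each flat blob name on "/" and nest the segments, then render the result recursively.

```csharp
using System;
using System.Collections.Generic;
using System.Text;

// Hypothetical node: either a simulated "directory" or a blob (a leaf).
public class BlobTreeNode
{
    public string Name { get; private set; }
    public SortedDictionary<string, BlobTreeNode> Children { get; private set; }

    public BlobTreeNode(string name)
    {
        Name = name;
        Children = new SortedDictionary<string, BlobTreeNode>(StringComparer.Ordinal);
    }
}

public static class BlobTree
{
    // Builds a tree from flat names such as "photos/2010/a.jpg", treating each
    // delimiter-separated segment as one simulated directory level.
    public static BlobTreeNode Build(IEnumerable<string> blobNames, char delimiter = '/')
    {
        var root = new BlobTreeNode("");
        foreach (var name in blobNames)
        {
            var node = root;
            foreach (var segment in name.Split(new[] { delimiter }, StringSplitOptions.RemoveEmptyEntries))
            {
                BlobTreeNode child;
                if (!node.Children.TryGetValue(segment, out child))
                {
                    child = new BlobTreeNode(segment);
                    node.Children.Add(segment, child);
                }
                node = child;
            }
        }
        return root;
    }

    // Recursively renders the tree, indenting two spaces per level.
    public static string Render(BlobTreeNode node, int depth = 0)
    {
        var sb = new StringBuilder();
        foreach (var child in node.Children.Values)
        {
            sb.Append(' ', depth * 2).Append(child.Name).Append('\n');
            sb.Append(Render(child, depth + 1));
        }
        return sb.ToString();
    }
}
```

With the 1.x Storage Client Library, the flat list of names would presumably come from a flat container listing (BlobRequestOptions.UseFlatBlobListing); check that against the SDK version you use.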

• Joe Giardino of the Windows Azure Storage Team warned developers on 11/12/2010 that Windows Azure Storage Client Library: CloudBlob.DownloadToFile() may not entirely overwrite file contents:

Summary

There is an issue in the Windows Azure Storage Client Library that can lead to unexpected behavior when utilizing the CloudBlob.DownloadToFile() methods.

The current implementation of CloudBlob.DownloadToFile() does not erase or clear any preexisting data in the file. Therefore, if you download a blob which is smaller than the existing file, preexisting data at the tail end of the file will still exist.

Example: Let’s say I have a blob titled movieblob that contains all the movies I would like to watch in the future. I want to download this blob to a local file, moviesToWatch.txt, which currently contains a lot of romantic comedies that my wife recently watched. However, when I overwrite that file with the action movies I want to watch (which happens to be a shorter list), the existing text is not completely overwritten, which may lead to a somewhat random movie selection.

moviesToWatch.txt

You've Got Mail;P.S. I Love You.;Gone With The Wind;Sleepless in Seattle;Notting Hill;Pretty Woman;The Runaway Bride;The Holiday;Little Women;When Harry Met Sally

movieblob

The Dark Knight;The Matrix;Braveheart;The Core;Star Trek 2:The Wrath of Khan;The Dirty Dozen;

moviesToWatch.txt (updated)

The Dark Knight;The Matrix;Braveheart;The Core;Star Trek 2:The Wrath of Khan;The Dirty Dozen;Woman;The Runaway Bride;The Holiday;Little Women;When Harry Met Sally

As you can see in the updated local moviesToWatch.txt file, the last section of the previous movie data still exists on the tail end of the file.
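The underlying file behavior is easy to reproduce with plain file I/O, independent of the Storage Client Library. In this minimal sketch (the OverwriteDemo class is hypothetical and only mimics the symptom, it is not the library's code), opening an existing file without truncating it and writing fewer bytes leaves the old tail in place, whereas File.Create truncates the file first.

```csharp
using System.IO;
using System.Text;

public static class OverwriteDemo
{
    // Opens the file with FileMode.OpenOrCreate (no truncation) and writes from
    // offset 0; any old bytes beyond the new content remain in the file.
    public static string WriteWithoutTruncating(string path, string content)
    {
        byte[] bytes = Encoding.ASCII.GetBytes(content);
        using (var stream = new FileStream(path, FileMode.OpenOrCreate, FileAccess.Write))
        {
            stream.Write(bytes, 0, bytes.Length);
        }
        return File.ReadAllText(path);
    }

    // File.Create truncates an existing file to zero length before writing,
    // so only the new content remains.
    public static string WriteWithFileCreate(string path, string content)
    {
        byte[] bytes = Encoding.ASCII.GetBytes(content);
        using (var stream = File.Create(path))
        {
            stream.Write(bytes, 0, bytes.Length);
        }
        return File.ReadAllText(path);
    }
}
```

Applied to the example above, the 93-character action list overwrites the old contents up to the middle of "Pretty Woman;", which is why the updated file continues with "Woman;The Runaway Bride;...".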

This issue will be addressed in a forthcoming release of the Storage Client Library.

Workaround

To avoid this behavior, you can use the CloudBlob.DownloadToStream() method and pass in a stream for a file that you have opened with File.Create, as shown below.

using (var stream = File.Create("myFile.txt"))
{
    // File.Create truncates an existing file, so no stale bytes remain after the download.
    myBlob.DownloadToStream(stream);
}

To reiterate, this issue only affects scenarios where the file already exists and the downloaded blob contents are shorter than the previously existing file. If you are using CloudBlob.DownloadToFile() to write to a new file, you will be unaffected by this issue. Until the issue is resolved in a future release, we recommend that users follow the pattern above.

Rainer Stropek described a Custom SSIS Data Source For Loading Azure Tables Into SQL Server, with a 00:03:29 video segment and downloadable source code, in an 11/11/2010 post:

Yesterday the weather in Frankfurt was horrible, so my plane from Berlin was late. I missed my connecting flight to Linz and had to stay in a hotel in Frankfurt. That gave me some time, which I used to implement a little sample showing how you can use a custom SSIS data source to easily transfer data from Windows Azure Table Storage to SQL Server databases using the ETL tool "SQL Server Integration Services" (SSIS).

Here is the source code for download. Please remember:

- This is just a sample.

- The code has not been tested.

- If you want to use this stuff you have to compile and deploy it. Check out the post-build actions in the project to see which DLLs you have to copy to which folders in order to make them run.

Let's start by demonstrating how the resulting component works inside SSIS. For this I have created this very short video:

Now let's take a look at the source code.

Reading an Azure Table without a fixed class

The first problem that has to be solved is reading data from an Azure table without knowing its schema at compile time. There is an excellent post covering that in the Azure Community pages. I took the source code shown there and extended/modified it a little so that it fits what I needed.
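The core idea, reading property names and EDM types straight from the AtomPub payload instead of from a compile-time class, can be sketched in isolation with LINQ to XML. The AtomProperties class below is hypothetical and does not use the Storage Client Library; it assumes that a property without an m:type attribute is an Edm.String, which is how the table service sends string properties.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

public static class AtomProperties
{
    static readonly XNamespace Atom = "http://www.w3.org/2005/Atom";
    static readonly XNamespace Meta = "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata";

    // Returns (name, EDM type, text value) for each property in <content>/<m:properties>.
    public static List<Tuple<string, string, string>> Read(string entryXml)
    {
        XElement entry = XElement.Parse(entryXml);
        return entry
            .Element(Atom + "content")
            .Element(Meta + "properties")
            .Elements()
            .Select(p => Tuple.Create(
                p.Name.LocalName,
                // A missing m:type attribute is treated as Edm.String.
                (string)p.Attribute(Meta + "type") ?? "Edm.String",
                p.Value))
            .ToList();
    }
}
```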

First class is just a helper representing a column in the table store (Column.cs):

using System;
using System.Globalization;
using Microsoft.SqlServer.Dts.Runtime.Wrapper;

namespace TableStorageSsisSource
{
    public class Column
    {
        public Column(string columnName, string typeName, string valueAsString)
        {
            this.ColumnName = columnName;
            this.ClrType = Column.GetType(typeName);
            this.DtsType = Column.GetSsisType(typeName);
            this.Value = Column.GetValue(this.DtsType, valueAsString);
        }

        public string ColumnName { get; private set; }
        public Type ClrType { get; private set; }
        public DataType DtsType { get; private set; }
        public object Value { get; private set; }

        // Maps an EDM type name from the Atom payload to the matching CLR type.
        private static Type GetType(string type)
        {
            switch (type)
            {
                case "Edm.String": return typeof(string);
                case "Edm.Int32": return typeof(int);
                case "Edm.Int64": return typeof(long);
                case "Edm.Double": return typeof(double);
                case "Edm.Boolean": return typeof(bool);
                case "Edm.DateTime": return typeof(DateTime);
                case "Edm.Binary": return typeof(byte[]);
                case "Edm.Guid": return typeof(Guid);
                default: throw new NotSupportedException(string.Format("Unsupported data type {0}", type));
            }
        }

        // Maps an EDM type name to the SSIS pipeline type used for the output column.
        private static DataType GetSsisType(string type)
        {
            switch (type)
            {
                case "Edm.String": return DataType.DT_NTEXT;
                case "Edm.Binary": return DataType.DT_IMAGE;
                case "Edm.Int32": return DataType.DT_I4;
                case "Edm.Int64": return DataType.DT_I8;
                case "Edm.Boolean": return DataType.DT_BOOL;
                case "Edm.DateTime": return DataType.DT_DATE;
                case "Edm.Guid": return DataType.DT_GUID;
                case "Edm.Double": return DataType.DT_R8;
                default: throw new NotSupportedException(string.Format("Unsupported data type {0}", type));
            }
        }

        // Converts the property's text value into an object of the mapped type.
        // Atom payloads use culture-invariant number and date formats.
        private static object GetValue(DataType dtsType, string valueAsString)
        {
            switch (dtsType)
            {
                case DataType.DT_NTEXT: return valueAsString;
                case DataType.DT_IMAGE: return Convert.FromBase64String(valueAsString);
                case DataType.DT_BOOL: return bool.Parse(valueAsString);
                case DataType.DT_DATE: return DateTime.Parse(valueAsString, CultureInfo.InvariantCulture);
                case DataType.DT_GUID: return new Guid(valueAsString);
                // Edm.Int32 maps to DT_I4 and Edm.Int64 to DT_I8 (see GetSsisType).
                case DataType.DT_I4: return Int32.Parse(valueAsString, CultureInfo.InvariantCulture);
                case DataType.DT_I8: return Int64.Parse(valueAsString, CultureInfo.InvariantCulture);
                case DataType.DT_R8: return double.Parse(valueAsString, CultureInfo.InvariantCulture);
                default: throw new NotSupportedException(string.Format("Unsupported data type {0}", dtsType));
            }
        }
    }
}

Second class represents a row inside the table store (without strong schema; GenericEntity.cs):

using System.Collections.Generic;
using Microsoft.WindowsAzure.StorageClient;

namespace TableStorageSsisSource
{
    public class GenericEntity : TableServiceEntity
    {
        private Dictionary<string, Column> properties = new Dictionary<string, Column>();

        // Returns null when the entity has no property with the given name.
        public Column this[string key]
        {
            get
            {
                if (this.properties.ContainsKey(key))
                {
                    return this.properties[key];
                }
                else
                {
                    return null;
                }
            }
            set
            {
                this.properties[key] = value;
            }
        }

        public IEnumerable<Column> GetProperties()
        {
            return this.properties.Values;
        }

        public void SetProperties(IEnumerable<Column> properties)
        {
            foreach (var property in properties)
            {
                this[property.ColumnName] = property;
            }
        }
    }
}

Last but not least, we need a context class that interprets the AtomPub format and builds the generic entity objects (GenericTableContext.cs):

using System;
using System.Data.Services.Client;
using System.Linq;
using System.Xml.Linq;
using Microsoft.WindowsAzure;
using Microsoft.WindowsAzure.StorageClient;

namespace TableStorageSsisSource
{
    public class GenericTableContext : TableServiceContext
    {
        public GenericTableContext(string baseAddress, StorageCredentials credentials)
            : base(baseAddress, credentials)
        {
            this.IgnoreMissingProperties = true;
            this.ReadingEntity += new EventHandler<ReadingWritingEntityEventArgs>(GenericTableContext_ReadingEntity);
        }

        public GenericEntity GetFirstOrDefault(string tableName)
        {
            return this.CreateQuery<GenericEntity>(tableName).FirstOrDefault();
        }

        private static readonly XNamespace AtomNamespace = "http://www.w3.org/2005/Atom";
        private static readonly XNamespace AstoriaDataNamespace = "http://schemas.microsoft.com/ado/2007/08/dataservices";
        private static readonly XNamespace AstoriaMetadataNamespace = "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata";

        // Copies every property of the raw Atom entry into the GenericEntity being materialized.
        private void GenericTableContext_ReadingEntity(object sender, ReadingWritingEntityEventArgs e)
        {
            var entity = e.Entity as GenericEntity;
            if (entity != null)
            {
                e.Data
                    .Element(AtomNamespace + "content")
                    .Element(AstoriaMetadataNamespace + "properties")
                    .Elements()
                    .Select(p =>
                        new
                        {
                            Name = p.Name.LocalName,
                            IsNull = string.Equals("true", p.Attribute(AstoriaMetadataNamespace + "null") == null ? null : p.Attribute(AstoriaMetadataNamespace + "null").Value, StringComparison.OrdinalIgnoreCase),
                            // The table service omits m:type for string properties,
                            // so a missing attribute is treated as Edm.String.
                            TypeName = p.Attribute(AstoriaMetadataNamespace + "type") == null ? "Edm.String" : p.Attribute(AstoriaMetadataNamespace + "type").Value,
                            p.Value
                        })
                    .Select(dp => new Column(dp.Name, dp.TypeName, dp.Value.ToString()))
                    .ToList()
                    .ForEach(column => entity[column.ColumnName] = column);
            }
        }
    }
}

The Custom SSIS Data Source

The custom SSIS data source is quite simple (TableStorageSsisSource.cs):

using System.Collections.Generic;
using Microsoft.SqlServer.Dts.Pipeline;
using Microsoft.SqlServer.Dts.Pipeline.Wrapper;
using Microsoft.WindowsAzure;

namespace TableStorageSsisSource
{
    [DtsPipelineComponent(DisplayName = "Azure Table Storage Source", ComponentType = ComponentType.SourceAdapter)]
    public class TableStorageSsisSource : PipelineComponent
    {
        public override void ProvideComponentProperties()
        {
            // Reset the component.
            base.RemoveAllInputsOutputsAndCustomProperties();
            ComponentMetaData.RuntimeConnectionCollection.RemoveAll();

            // Add output
            IDTSOutput100 output = ComponentMetaData.OutputCollection.New();
            output.Name = "Output";

            // Properties
            var storageConnectionStringProperty = this.ComponentMetaData.CustomPropertyCollection.New();
            storageConnectionStringProperty.Name = "StorageConnectionString";
            storageConnectionStringProperty.Description = "Azure storage connection string";
            storageConnectionStringProperty.Value = "UseDevelopmentStorage=true";

            var tableNameProperty = this.ComponentMetaData.CustomPropertyCollection.New();
            tableNameProperty.Name = "TableName";
            tableNameProperty.Description = "Name of the source table";
            tableNameProperty.Value = string.Empty;
        }

        // Whenever a property changes, derive the output columns from the table's first row.
        public override IDTSCustomProperty100 SetComponentProperty(string propertyName, object propertyValue)
        {
            var resultingColumn = base.SetComponentProperty(propertyName, propertyValue);

            var storageConnectionString = (string)this.ComponentMetaData.CustomPropertyCollection["StorageConnectionString"].Value;
            var tableName = (string)this.ComponentMetaData.CustomPropertyCollection["TableName"].Value;
            if (!string.IsNullOrEmpty(storageConnectionString) && !string.IsNullOrEmpty(tableName))
            {
                var cloudStorageAccount = CloudStorageAccount.Parse(storageConnectionString);
                var context = new GenericTableContext(cloudStorageAccount.TableEndpoint.AbsoluteUri, cloudStorageAccount.Credentials);
                var firstRow = context.GetFirstOrDefault(tableName);
                if (firstRow != null)
                {
                    var output = this.ComponentMetaData.OutputCollection[0];

                    // Drop columns created by an earlier call so they are not added twice.
                    output.OutputColumnCollection.RemoveAll();
                    foreach (var column in firstRow.GetProperties())
                    {
                        var newOutputCol = output.OutputColumnCollection.New();
                        newOutputCol.Name = column.ColumnName;
                        newOutputCol.SetDataTypeProperties(column.DtsType, 0, 0, 0, 0);
                    }
                }
            }

            return resultingColumn;
        }

        private List<ColumnInfo> columnInformation;
        private GenericTableContext context;

        private struct ColumnInfo
        {
            public int BufferColumnIndex;
            public string ColumnName;
        }

        // Opens the table context and resolves each output column's buffer index.
        public override void PreExecute()
        {
            this.columnInformation = new List<ColumnInfo>();
            IDTSOutput100 output = ComponentMetaData.OutputCollection[0];

            var cloudStorageAccount = CloudStorageAccount.Parse((string)this.ComponentMetaData.CustomPropertyCollection["StorageConnectionString"].Value);
            context = new GenericTableContext(cloudStorageAccount.TableEndpoint.AbsoluteUri, cloudStorageAccount.Credentials);

            foreach (IDTSOutputColumn100 col in output.OutputColumnCollection)
            {
                ColumnInfo ci = new ColumnInfo();
                ci.BufferColumnIndex = BufferManager.FindColumnByLineageID(output.Buffer, col.LineageID);
                ci.ColumnName = col.Name;
                columnInformation.Add(ci);
            }
        }

        // Streams every entity of the table into the output buffer.
        public override void PrimeOutput(int outputs, int[] outputIDs, PipelineBuffer[] buffers)
        {
            IDTSOutput100 output = ComponentMetaData.OutputCollection[0];
            PipelineBuffer buffer = buffers[0];

            foreach (var item in this.context.CreateQuery<GenericEntity>((string)this.ComponentMetaData.CustomPropertyCollection["TableName"].Value))
            {
                buffer.AddRow();
                for (int x = 0; x < columnInformation.Count; x++)
                {
                    var ci = columnInformation[x];

                    // Rows in a schemaless table may lack a column that the first row had.
                    var column = item[ci.ColumnName];
                    var value = column == null ? null : column.Value;
                    if (value != null)
                    {
                        buffer[ci.BufferColumnIndex] = value;
                    }
                    else
                    {
                        buffer.SetNull(ci.BufferColumnIndex);
                    }
                }
            }

            buffer.SetEndOfRowset();
        }
    }
}


SQL Azure Database and Reporting

Steve Yi recommended on 11/12/2010 Julie Lerman’s (@julielerman) Using the Entity Framework to Reduce Network Latency to SQL Azure article in MSDN Magazine’s November 2010 issue:

Julie Lerman, writing for MSDN Magazine, has written an article titled “Using the Entity Framework to Reduce Network Latency to SQL Azure.” The article examines strategies for mitigating the network latency that on-premises applications encounter when connecting to SQL Azure Database, latency that can substantially affect overall application performance.

Fortunately, a good understanding of the effects of network latency leaves you in a powerful position to use the Entity Framework to reduce that impact in the context of SQL Azure Database.

These concerns are eliminated if the application is running on Windows Azure and is deployed to the same datacenter as your SQL Azure Database.

Read: Using the Entity Framework to Reduce Network Latency to SQL Azure
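Why latency matters so much can be shown with simple arithmetic. As a rough, hypothetical model (not taken from the article): loading N parent rows and then lazily loading each row's children costs N + 1 round trips, while a single eager-loading query, such as one built with the Entity Framework's Include, costs one. The 50 ms latency in the usage note is an assumed figure, not a measurement.

```csharp
using System;

public static class RoundTripEstimate
{
    // Lazy loading: one query for the parents plus one query per parent.
    public static int LazyRoundTrips(int parentCount)
    {
        return parentCount + 1;
    }

    // Eager loading: parents and children come back in a single query.
    public static int EagerRoundTrips(int parentCount)
    {
        return 1;
    }

    // Time spent only waiting on the network; server work and transfer time are ignored.
    public static TimeSpan LatencyCost(int roundTrips, TimeSpan latencyPerTrip)
    {
        return TimeSpan.FromTicks(latencyPerTrip.Ticks * roundTrips);
    }
}
```

With 100 parent rows and an assumed 50 ms per round trip, lazy loading spends 101 × 50 ms = 5.05 seconds waiting on the network, versus 50 ms for the eager query.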

Alyson Behr reported Microsoft's PASS Summit features new business and data cloud products for SD Times on the Web on 11/11/2010:

Microsoft made several announcements at the Professional Association for SQL Server (PASS) 2010 Summit this week, including the general availability of SQL Server 2008 R2 Parallel Data Warehouse, previously codenamed “Madison”; the CTP1 of SQL Server, codenamed “Denali”; and the release of a new cloud service, codenamed “Atlanta.”

According to the company, SQL Server 2008 R2 Parallel Data Warehouse is a scalable, high-performance appliance targeted toward large warehouses with hundreds of terabytes of data, and it is pre-architected to deliver simplicity and shorter deployment times. This release is the next-generation iteration of DATAllegro’s data warehouse appliance.

Ted Kummert, senior vice president of the Business Platform Division at Microsoft, said, “Enterprises today are facing challenges of increasing volumes of data from which they need to gain business insight rapidly.” He added: “SQL Server 2008 R2 Parallel Data Warehouse provides high-scale enterprise capabilities delivered as an appliance with choice and deployment simplicity.”

The product uses massively parallel processing (MPP) and runs on SQL Server 2008 R2, Windows Server 2008 and industry-standard hardware. Microsoft is currently partnered with HP and is looking to forge additional partnerships, including one with France-based cluster provider Bull. The MPP architecture helps enable better scalability, more predictable performance, reduced risk and a lower cost per terabyte.

In a symmetric multiprocessing (SMP) architecture, query processing occurs within one physical instance of a database, so the CPU, memory and storage impose physical limits on speed and scale. The new release partitions large tables across multiple physical nodes, each with its own dedicated CPU, memory and storage, and each running its own instance of SQL Server in a parallel, shared-nothing architecture.
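The partitioning idea can be illustrated with a toy model: rows are assigned to nodes by a distribution key, each node aggregates only its own rows, and a coordinator combines the partial results. The ToyMpp class below is a hypothetical sketch of hash (here, key modulo node count) distribution in general, not a description of how Parallel Data Warehouse is implemented.

```csharp
using System.Collections.Generic;
using System.Linq;

public static class ToyMpp
{
    // Assigns each (key, amount) row to a node using key modulo node count,
    // so a given key always lands on the same node.
    public static List<List<KeyValuePair<int, decimal>>> Distribute(
        IEnumerable<KeyValuePair<int, decimal>> rows, int nodeCount)
    {
        var nodes = Enumerable.Range(0, nodeCount)
            .Select(_ => new List<KeyValuePair<int, decimal>>())
            .ToList();
        foreach (var row in rows)
        {
            int node = (int)((uint)row.Key % (uint)nodeCount);
            nodes[node].Add(row);
        }
        return nodes;
    }

    // Each node sums only its own partition (in parallel here); the
    // coordinator then adds the partial sums together.
    public static decimal ParallelSum(List<List<KeyValuePair<int, decimal>>> nodes)
    {
        decimal[] partials = nodes.AsParallel()
            .Select(partition => partition.Sum(r => r.Value))
            .ToArray();
        return partials.Sum();
    }
}
```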

The warehousing appliance will be sold at a per-processor software price of US$38,255 in racks of 11 or 22 nodes. "The appliance is an emerging industry trend," said Microsoft General Manager Eugene Saburi. "Consumers are looking for simplicity in the way they consume technologies. We're trying to take some of that complexity out by shipping preconfigured and pre-optimized appliances."

Microsoft also unveiled the community technology preview (CTP) of SQL Server, codenamed “Denali.”

The new version will feature SQL Server AlwaysOn, designed to reduce downtime and lower total cost of ownership, and a project codenamed "Crescent," a new Web-based data visualization and reporting solution.

New capabilities for strengthened data management, performance and integration include Project Apollo, a new column-store database for increased query performance; Project Juneau, a new tool integrated into Visual Studio that unifies SQL Server and cloud SQL Azure development for database and application developers; and Data Quality Services, knowledge-driven tools that developers can use to create and maintain a Data Quality Knowledge Base. [Emphasis added.]

Also released is Atlanta, a new cloud service that oversees SQL Server configuration. The secure cloud service helps IT avoid configuration problems and resolve issues. The Atlanta agent and gateway require Microsoft .NET Framework 3.5 SP1 and Silverlight 4, and can be run on recent versions of either Firefox or Internet Explorer. Atlanta is supported by most business versions of the 32- and 64-bit editions of Windows Server 2008 and 2008 R2.


Marketplace DataMarket and OData

•• Shawn Wildermuth described working with OData on WP7 in his Architecting WP7 - Part 7 of 10: Data on the Wire(less) post of 11/13/2010:

In this (somewhat belated) part 7 of my Architecture for the Windows Phone 7 series, I want to talk about dealing with data across the wire (or lack of wire, I guess). This is at the heart of the idea that the phone is one of the screens in '3 screens and the cloud'. The use cases for data are varied, including:

- Consuming public data (e.g. displaying Netflix Queue or Amazon Catalog).

- Consuming private data (e.g. showing your company's private data).

- Data entry on the phone.

When coming from Silverlight or the web, the real challenge is to meet your needs while realizing you're working with limitations. When you are creating an app for the desktop (e.g. browser, plug-in based or desktop client) you can make assumptions about network bandwidth and machine memory. While most developers won't admit it, we will often (to help the project get done) just consume the data we need without regard to these limitations. For the most part on the desktop this works as we often have enough bandwidth and lots of memory. On the phone this is definitely different.

You have a number of choices for gathering data across the wire(less), but the real job in architecting a solution is to get just enough data from the cloud. The limitations of a 3G (or eventually 4G) connection aside, making smart decisions about what to bring over is crucial. You may think that 3G should be enough to just get the data you want, but don't forget that you need to consume that data too.

I recently updated my Training app for the Windows Phone 7 to optimize the experience. I found that over 3G (which is hard to test without a device) the experience was less than perfect. When I originally built the app, I just pulled down all the data for my public courses into the application, and as a result the start-up time was pretty awful. To address this, I tuned the experience to make sure that I loaded only the data that I really needed. At the time, that meant pulling down the information on the selected workshop only when the user requested it. In fact, I used some sleight-of-hand to load the outline and events of the workshop while the description of the workshop was shown to the user. For example:

void LoadWorkshopIntoView(int id)
{
    // Get the right workshop
    _workshop = App.CurrentApp.TheVM.Workshops
        .Where(w => w.WorkshopId == id)
        .FirstOrDefault();

    // Make the Data Bind
    DataContext = _workshop;

    // Only load the data if the data is needed
    if (_workshop != null && _workshop.WorkshopTopics.Count == 0)
    {
        // Load the data asynchronously while the user
        // reads the description
        App.CurrentApp.TheVM.LoadWorkshopAsync(_workshop);
    }
}

I released the application to the marketplace and the performance was acceptable... but acceptable is not good enough. Upon refactoring the code, I realized that I was loading the entire entity from the server (even though there were a lot of fields I never used). It became clear that if the payload were smaller, the performance could be much better.

This story does not depend on the nature of your data access. In my case I was using OData to consume the data. My original request looked like this:

var uri = string.Concat("/Events?$filter=Workshop/WorkshopId eq {0}",
                        "&$expand=EventLocation,Instructor,TrainingPartner");
var qryUri = string.Format(uri, workshop.WorkshopId);

By requesting the entire Workshop (and the related EventLocation, Instructor and TrainingPartner), I was retrieving a small number of very large objects. Viewing the requests in Fiddler showed me that these objects were pretty big:

HTTP/1.1 200 OK
Cache-Control: no-cache
Content-Length: 20033
Content-Type: application/atom+xml;charset=utf-8
Server: Microsoft-IIS/7.5
DataServiceVersion: 1.0;
X-AspNet-Version: 4.0.30319
X-Powered-By: ASP.NET
Date: Sat, 13 Nov 2010 21:17:38 GMT

By limiting the data to only the fields I needed, I thought I could see some small change. I decided to use the projection support in OData to retrieve only the fields I needed:

var uri = string.Concat("/Events?$filter=Workshop/WorkshopId eq {0}",
                        "&$expand=EventLocation,Instructor,TrainingPartner",
                        "&$select=EventId,EventDate,EventLocation/LocationName,",
                        "TrainingLanguage,Instructor/Name,TrainingPartner/Name,",
                        "TrainingPartner/InformationUrl");
var qryUri = string.Format(uri, workshop.WorkshopId);

Selecting these fields, which were the *only* ones I used, reduced the size to:

HTTP/1.1 200 OK
Cache-Control: no-cache
Content-Length: 8637
Content-Type: application/atom+xml;charset=utf-8
Server: Microsoft-IIS/7.5
DataServiceVersion: 1.0;
X-AspNet-Version: 4.0.30319
X-Powered-By: ASP.NET
Date: Sat, 13 Nov 2010 21:17:59 GMT

That's a savings of more than 55%! The savings weren't only in bandwidth: the memory needed to deserialize and hold the results, and the CPU cost, should be substantially lower too. I did the same thing to the Events list, with similar results: a savings of more than 55% there too.
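One way to keep projections like this maintainable is to build the query string from explicit field lists rather than one long literal. The ODataQuery.Build helper below is hypothetical: it only concatenates the $filter, $expand and $select system query options in the same order as the hand-written string above, and it does not URL-encode values or validate property paths.

```csharp
using System.Collections.Generic;

public static class ODataQuery
{
    // Builds "<entitySet>?$filter=...&$expand=...&$select=..." from its parts.
    // Empty options are omitted; no URL encoding is performed.
    public static string Build(string entitySet, string filter,
                               IEnumerable<string> expand, IEnumerable<string> select)
    {
        var options = new List<string>();
        if (!string.IsNullOrEmpty(filter))
            options.Add("$filter=" + filter);

        string expandList = string.Join(",", expand ?? new string[0]);
        if (expandList.Length > 0)
            options.Add("$expand=" + expandList);

        string selectList = string.Join(",", select ?? new string[0]);
        if (selectList.Length > 0)
            options.Add("$select=" + selectList);

        return options.Count == 0
            ? entitySet
            : entitySet + "?" + string.Join("&", options);
    }
}
```

Called with the Events entity set, the same filter, the three expanded navigation properties and the seven selected fields, it produces the same string as the string.Concat version above.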

While using OData's projection mechanism ($select) worked for my case, OData isn't special here. You could do the same when building your own web service or REST service. The only case where this kind of decision isn't possible is when you're building an app on top of a service you don't control. Since most REST and web services don't have a built-in mechanism to limit the result set, you could proxy the calls through your own servers and trim the oversized entities there.
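For a service you don't control, the proxy-side trimming described above might look like the following minimal sketch. It assumes the upstream entities have already been deserialized into dictionaries; the EntityTrimmer class and the whitelist approach are hypothetical, not part of any particular framework.

```csharp
using System.Collections.Generic;
using System.Linq;

public static class EntityTrimmer
{
    // Keeps only the whitelisted fields of each entity; all other fields are
    // dropped before the result is serialized and sent on to the device.
    public static List<Dictionary<string, object>> Trim(
        IEnumerable<IDictionary<string, object>> entities, ICollection<string> keep)
    {
        return entities
            .Select(e => e.Where(kv => keep.Contains(kv.Key))
                          .ToDictionary(kv => kv.Key, kv => kv.Value))
            .ToList();
    }
}
```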

The important thing to think about is that you're working with a different kind of platform than you have in the past (when working strictly on the desktop). You have to think about optimization the whole way through.

•• User20358 asked OData based WCF service or regular WCF service for Silverlight application? in StackOverflow on 11/13/2010:

Hi, I have just started evaluating whether I should be using OData-based WCF Data Services or a standard WCF service application as the primary data source for Silverlight applications. I would like your thoughts on which is the better choice, and under what circumstances: what is lighter over the wire, easier to maintain, etc.

What I have gathered so far is:

- There are no Wcf data service templates in VS2010 that I know of, and I will need to create an ASP.NET web project first and then add a WCF data service, so it's going to affect how I structure my projects.

- WCF Data Services expose actual table names over the service. I don't know yet of a way to alias them, and I'm not sure it's a good idea to let the world know my table structure.

- In a standard WCF service I will need to write LINQ queries against the EF or domain service classes on the service side, while with a data service I can move that processing logic to the client.

- At first glance, the classes exposed by WCF Data Services seem easier to read and understand than those exposed by the EF.

Do add your thoughts on this.

Marc Scheuner replied on the same date:

There are no Wcf data service templates in VS2010 that I know of,

Not a project template, just an item template (for use inside an ASP.NET web site or web app). WCF Data Services are very tightly coupled to HTTP, so they only make sense in a web site/app.

WCF Data services expose actual table names over the service.

NO! At least not necessarily. The whole point of EF is that you can decouple the actual physical structure of your database from the (conceptual) model that gets exposed. You can totally rename entities, map several entities onto a single table, split up an entity over several tables, leave out attributes: anything you like!

At first glance examining the classes exposed by the wcf data services seem easier to read and understand than those exposed by the EF

I doubt it - because by default, WCF Data Services will use a Linq-to-SQL or EF model as their basis, really. You can make that as simple or as complicated as you like.

Using a "regular" WCF service allows you to use the netTcpBinding for much faster performance (thanks to binary message encoding vs. textual messages for other bindings) when using your Silverlight 4 app in a company-internal network (this doesn't work for internet scenarios). That's not something you can do with WCF Data Services.

The main difference, in my opinion, is the SOAP vs. REST difference:

- SOAP (traditional WCF) is oriented towards methods: you think about and design your system in terms of methods, things you can do (GetCustomer, SaveOrder, etc.).

- REST (the WCF Data Services approach) is all about resources: you have your resources and collections of resources (e.g. Customers), and you expose those to the world with standard HTTP verbs (GET, POST, PUT, DELETE) instead of separate, specific methods that you define.

So both approaches have their pros and cons. I guess the most important question is: what kind of app are you creating, and what kind of user audience are you targeting?

Update:

- For intranet/internal apps, I would think the advantage of a netTcpBinding (binary encoding) would justify using a classic WCF service. Also, for data-intensive apps, I personally find a method-based approach (GetCustomer, SaveCustomer) easier to use and understand.

- For a public-facing app, using HTTP and being as interoperable as possible is probably your major concern, so in that scenario I'd probably favor the WCF Data Service: easy to use, with easy-to-understand URLs for the user.
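The method-versus-resource distinction in the answer above can be made concrete with a small, hypothetical sketch. ICustomerService shows the SOAP-style shape, one named operation per action (WCF attributes such as [ServiceContract] are omitted). RestStyle.ToRequestLine expresses the same intents as a standard HTTP verb applied to an OData-style resource URI such as /Customers or /Customers(5); it illustrates the convention only and is not WCF Data Services' dispatch code.

```csharp
using System;

// SOAP-style (method-oriented): each action is its own named operation.
public interface ICustomerService
{
    string GetCustomer(int id);
    void SaveCustomer(int id, string name);
}

public enum CrudAction { Read, Create, Update, Delete }

public static class RestStyle
{
    // REST-style (resource-oriented): the resource is addressed by URI and
    // the standard HTTP verb says what to do with it.
    public static string ToRequestLine(CrudAction action, string entitySet, int? key)
    {
        string collection = "/" + entitySet;
        string resource = key.HasValue
            ? string.Format("{0}({1})", collection, key.Value)
            : collection;
        switch (action)
        {
            case CrudAction.Read: return "GET " + resource;
            // New members are POSTed to the collection, not to a keyed URI.
            case CrudAction.Create: return "POST " + collection;
            case CrudAction.Update: return "PUT " + resource;
            case CrudAction.Delete: return "DELETE " + resource;
            default: throw new ArgumentOutOfRangeException("action");
        }
    }
}
```

In this sketch, GetCustomer(5) in the method-style contract corresponds to the request line "GET /Customers(5)".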

Nina (Ling) Hu posted a link to her Building Offline Applications using Sync Framework and SQL Azure PDC 2010 presentation on 11/13/2010. Notice the use of OData as the sync protocol:

Following is the demo summary:

The video presentation from the PDC 2010 archives is here.

• Jon Udell (@JonUdell) explained How to translate an OData feed into an iCalendar feed in an 11/12/2010 post to the O’Reilly Answers blog:

Introduction

In this week's companion article on the Radar blog, I bemoan the fact that content management systems typically produce events pages in HTML format without a corresponding iCalendar feed, I implore CMS vendors to make iCalendar feeds, and I point to a validator that can help make them right. Typically these content management systems store event data in a database, and flow the data through HTML templates to produce HTML views. In this installment I'll show one way that same data can be translated into an iCalendar feed.

The PDC 2010 schedule

The schedule for the 2010 Microsoft Professional Developers conference was, inadvertently, an example of a calendar made available as an HTML page but not also as a companion iCalendar feed. There was, however, an OData feed at http://odata.microso...ataSchedule.svc. When Jamie Thomson noticed that, he blogged:

Whoop-de-doo! Now we can, get this, view the PDC schedule as raw XML rather than on a web page or in Outlook or on our phone, how cool is THAT?

Seriously, I admire Microsoft's commitment to OData, both in their Creative Commons licensing of it and support of it in a myriad of products but advocating its use for things that it patently should not be used for is verging on irresponsible and using OData to publish schedule information is a classic example.

I both understood Jamie's frustration, and applauded the publication of a generic OData service that can be used in all sorts of ways. Here, for example, are some questions that you could ask and answer directly in your browser:

- Q: How many speakers? (http://odata.microso...Speakers/$count) A: 79

- Q: How many Scott Hanselman sessions? (http://odata.microso...tt+Hanselman%27) A: 1

- Q: How many cloud services sessions? (http://odata.microso...oud+Services%27) A: 31

General-purpose access to data is a wonderful thing. But Jamie was right: a special-purpose iCalendar feed ought to have been provided too. Why wasn't that done? It was partly just an oversight. But it's an all-too-common oversight because, although iCalendar is strongly analogous to RSS, that analogy isn't yet widely appreciated.
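The full query URLs aren't reproduced here, but they use two standard OData shapes: a `/$count` path segment that returns just a number, and a `$filter` query option holding an expression. The sketch below composes both against the PDC service root. The `CountUrl` and `FilterUrl` helpers are hypothetical, and filtering Sessions on `TrackId eq 'Cloud Services'` is an assumption about how the third question could be asked (TrackId values such as "Cloud Services" do appear in the Sessions feed).

```csharp
using System;

// Build "<root>/<entitySet>/$count", the OData URL that returns only the number of entries.
static string CountUrl(string serviceRoot, string entitySet) =>
    serviceRoot.TrimEnd('/') + "/" + entitySet + "/$count";

// Build "<root>/<entitySet>?$filter=<expression>", percent-encoding the expression
// so its spaces and quotes survive in the query string.
static string FilterUrl(string serviceRoot, string entitySet, string filter) =>
    serviceRoot.TrimEnd('/') + "/" + entitySet + "?$filter=" + Uri.EscapeDataString(filter);

const string root = "http://odata.microsoftpdc.com/ODataSchedule.svc/";
Console.WriteLine(CountUrl(root, "Speakers"));
// Assumed filter: property name and value are illustrative, not the original query.
Console.WriteLine(FilterUrl(root, "Sessions", "TrackId eq 'Cloud Services'"));
```

Pasting either printed URL into a browser is all it takes to ask the question, which is the point of a general-purpose feed.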

To satisfy Jamie's request, and to demonstrate one way to translate a general-purpose data feed into an iCalendar feed, I wrote a small program to do that translation. Let's explore how it works.

The PDC 2010 OData feed

If you hit the PDC's OData endpoint with your browser, you'll retrieve this Atom service document:

<service xml:base="http://odata.microsoftpdc.com/ODataSchedule.svc/" xmlns:atom="http://www.w3.org/2005/Atom" xmlns:app="http://www.w3.org/2007/app" xmlns="http://www.w3.org/2007/app">
  <workspace>
    <atom:title>Default</atom:title>
    <collection href="ScheduleOfEvents"><atom:title>ScheduleOfEvents</atom:title></collection>
    <collection href="Sessions"><atom:title>Sessions</atom:title></collection>
    <collection href="Tracks"><atom:title>Tracks</atom:title></collection>
    <collection href="TimeSlots"><atom:title>TimeSlots</atom:title></collection>
    <collection href="Speakers"><atom:title>Speakers</atom:title></collection>
    <collection href="Manifests"><atom:title>Manifests</atom:title></collection>
    <collection href="Presenters"><atom:title>Presenters</atom:title></collection>
    <collection href="Contents"><atom:title>Contents</atom:title></collection>
    <collection href="RelatedSessions"><atom:title>RelatedSessions</atom:title></collection>
  </workspace>
</service>

The service document tells you which collections exist, and how to form URLs to access them. If you form the URL http://odata.microso...le.svc/Sessions and hit it with your browser, you'll retrieve an Atom feed with entries like this:

<content type="application/xml">
  <m:properties>
    <d:SessionState>VOD</d:SessionState>
    <d:Tags>Windows Azure Platform</d:Tags>
    <d:SessionId m:type="Edm.Guid">1b08b109-c959-4470-961b-ebe8840eeb84</d:SessionId>
    <d:TrackId>Cloud Services</d:TrackId>
    <d:TimeSlotId m:type="Edm.Guid">bd676f93-2294-4f76-bf7f-60e355d8577b</d:TimeSlotId>
    <d:Code>CS01</d:Code>
    <d:TwitterHashtag>#azure #platform #pdc2010 #cs01</d:TwitterHashtag>
    <d:ThumbnailUrl>http://az8714.vo.mse...ads/matthew.png</d:ThumbnailUrl>
    <d:ShortUrl>http://bit.ly/9n4t9S</d:ShortUrl>
    <d:Room>McKinley</d:Room>
    <d:StartTime m:type="Edm.Int32">0</d:StartTime>
    <d:ShortTitle>Building High Performance Web Apps with Azure</d:ShortTitle>
    <d:ShortDescription xml:space="preserve"> Windows Azure Platform enables developers to build dynamically scalable web applications easily. Come and learn how forthcoming new application services in conjunction with services like the Windows </d:ShortDescription>
    <d:FullTitle>Building High Performance Web Applications with the Windows Azure Platform</d:FullTitle>
  </m:properties>
</content>

Representing this event in iCalendar doesn't require much information: a title, a description, a location, and the times. The mapping between the fields shown here and the properties of an iCalendar event can go like this:

| OData | iCalendar |
|---|---|
| ShortTitle | SUMMARY |
| ShortDescription | DESCRIPTION |
| Room | LOCATION |
| ? | DTSTART |
| ? | DTEND |

We're off to a good start, but where will DTSTART and DTEND come from? Let's check out the TimeSlots collection. It's an Atom feed with entries like this:

<entry>
  <content type="application/xml">
    <m:properties>
      <d:Duration>01:00:00</d:Duration>
      <d:Id m:type="Edm.Guid">bd676f93-2294-4f76-bf7f-60e355d8577b</d:Id>
      <d:Start m:type="Edm.DateTime">2010-10-29T15:15:00</d:Start>
      <d:End m:type="Edm.DateTime">2010-10-29T16:15:00</d:End>
    </m:properties>
  </content>
</entry>

Nothing about OData requires the sessions to be modeled this way. The start and end times could have been included in the Sessions table. But since they weren't, we'll access them indirectly by matching the TimeSlotId in the Sessions table to the Id in the TimeSlots table.
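The join itself is small. As a sketch, assuming the session and time slot entries have already been unpacked into string-keyed dictionaries (as the code in the next section does), here it is over hypothetical in-memory rows, including a test record whose all-zero TimeSlotId matches nothing. It indexes the time slots in a Dictionary keyed by Id instead of scanning the list once per session; that is a swapped-in choice, not the program's own approach.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical rows shaped like the dictionaries built from the two feeds.
var sessions = new List<Dictionary<string, object>>
{
    new Dictionary<string, object>
    {
        ["ShortTitle"] = "Building High Performance Web Apps with Azure",
        ["TimeSlotId"] = "bd676f93-2294-4f76-bf7f-60e355d8577b",
    },
    new Dictionary<string, object>   // test record with no matching time slot
    {
        ["ShortTitle"] = "Test record",
        ["TimeSlotId"] = "00000000-0000-0000-0000-000000000000",
    },
};
var timeslots = new List<Dictionary<string, object>>
{
    new Dictionary<string, object>
    {
        ["Id"] = "bd676f93-2294-4f76-bf7f-60e355d8577b",
        ["Start"] = new DateTime(2010, 10, 29, 15, 15, 0),
        ["End"] = new DateTime(2010, 10, 29, 16, 15, 0),
    },
};

// Index the time slots by Id once, instead of searching the list for every session.
var slotById = timeslots.ToDictionary(ts => (string)ts["Id"]);

// Inner join on TimeSlotId = Id: sessions without a matching slot are dropped.
var scheduled = sessions
    .Where(s => slotById.ContainsKey((string)s["TimeSlotId"]))
    .Select(s =>
    {
        var slot = slotById[(string)s["TimeSlotId"]];
        return (Title: (string)s["ShortTitle"], Start: (DateTime)slot["Start"], End: (DateTime)slot["End"]);
    })
    .ToList();

foreach (var e in scheduled)
    Console.WriteLine($"{e.Title}: {e.Start:HH:mm}-{e.End:HH:mm}");
```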

Reading the OData collections

OData collections are just Atom feeds, so you can read them using any XML parser. The elements in each entry are optionally typed, according to a convention defined by the OData specification. One way to represent the entries in a feed is as a list of dictionaries, where each dictionary has keys that are field names and values that are objects. Here's one way to convert a feed into that kind of list.

using System;
using System.Collections.Generic;
using System.Globalization;
using System.IO;
using System.Linq;
using System.Xml.Linq;

// parse an OData (Atom) feed into a list of property dictionaries, one per entry
static List<Dictionary<string, object>> GetODataDicts(byte[] bytes)
{
    XNamespace ns_odata_metadata = "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata";
    // load the raw feed bytes into an XDocument
    var xdoc = XDocument.Load(new MemoryStream(bytes));
    // each entry keeps its fields inside an <m:properties> element
    IEnumerable<XElement> propbags = from props in xdoc.Descendants(ns_odata_metadata + "properties") select props;
    return UnpackPropBags(propbags);
}

// walk and unpack an enumeration of elements
static List<Dictionary<string, object>> UnpackPropBags(IEnumerable<XElement> propbags)
{
    var dicts = new List<Dictionary<string, object>>();
    foreach (XElement propbag in propbags)
    {
        var dict = new Dictionary<string, object>();
        IEnumerable<XElement> xprops = from prop in propbag.Descendants() select prop;
        foreach (XElement xprop in xprops)
        {
            object value = xprop.Value;   // untyped properties stay strings
            var attrs = xprop.Attributes().ToList();
            var type_attr = attrs.Find(a => a.Name.LocalName == "type");   // m:type="Edm.*"
            if (type_attr != null)
            {
                switch (type_attr.Value)
                {
                    case "Edm.DateTime":
                        value = DateTime.Parse(xprop.Value, CultureInfo.InvariantCulture);
                        break;
                    case "Edm.Int32":
                        value = int.Parse(xprop.Value, CultureInfo.InvariantCulture);
                        break;
                    case "Edm.Float":
                        value = float.Parse(xprop.Value, CultureInfo.InvariantCulture);
                        break;
                    case "Edm.Boolean":
                        value = Convert.ToBoolean(xprop.Value);
                        break;
                }
            }
            dict.Add(xprop.Name.LocalName, value);
        }
        dicts.Add(dict);
    }
    return dicts;
}

In this C# example the XML parser is the one provided by System.Xml.Linq. The GetODataDicts method creates a System.Xml.Linq.XDocument in the variable xdoc, and then forms a LINQ expression to query the document for m:properties elements, which are in the namespace http://schemas.micro...vices/metadata. Then it hands the query to UnpackPropBags, which enumerates the elements and does the following for each:

- Creates an empty dictionary

- Enumerates the properties

- Saves each property's value in an object

- If the property is typed, converts the object to the indicated type

- Adds each property to the dictionary

- Adds the dictionary to the accumulating list
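To see the typed conversion on real input, here is a compact, self-contained variant applied to the TimeSlots entry shown earlier. It is a sketch, and it differs from the code above in three deliberate ways: it reads only direct child elements (Elements() rather than Descendants()), it maps m:null="true" to null, and it also converts Edm.Guid, which the original leaves as a string. If you adopted the Guid conversion, the Id/TimeSlotId match would have to compare Guid values rather than strings.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq;
using System.Xml.Linq;

XNamespace m = "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata";

// Convert one OData property element to a CLR value based on its m:type attribute.
object ConvertProperty(XElement prop)
{
    // m:null="true" marks a null value; return null rather than an empty string.
    if ((string)prop.Attribute(m + "null") == "true") return null;
    string text = prop.Value;
    // No m:type attribute means Edm.String, so the text is kept as-is.
    return ((string)prop.Attribute(m + "type")) switch
    {
        "Edm.DateTime" => (object)DateTime.Parse(text, CultureInfo.InvariantCulture),
        "Edm.Int32" => int.Parse(text, CultureInfo.InvariantCulture),
        "Edm.Boolean" => bool.Parse(text),
        "Edm.Guid" => Guid.Parse(text),
        _ => text,
    };
}

// The TimeSlots entry's <m:properties>, with the namespace declarations it needs added.
const string entry = @"<m:properties
    xmlns:m=""http://schemas.microsoft.com/ado/2007/08/dataservices/metadata""
    xmlns:d=""http://schemas.microsoft.com/ado/2007/08/dataservices"">
  <d:Duration>01:00:00</d:Duration>
  <d:Id m:type=""Edm.Guid"">bd676f93-2294-4f76-bf7f-60e355d8577b</d:Id>
  <d:Start m:type=""Edm.DateTime"">2010-10-29T15:15:00</d:Start>
  <d:End m:type=""Edm.DateTime"">2010-10-29T16:15:00</d:End>
</m:properties>";

// Direct children only, keyed by local name (Duration, Id, Start, End).
var dict = XElement.Parse(entry).Elements()
    .ToDictionary(e => e.Name.LocalName, e => ConvertProperty(e));

foreach (var kv in dict)
    Console.WriteLine($"{kv.Key} = {kv.Value} ({kv.Value?.GetType().Name})");
```

Duration has no m:type here, so it stays the string "01:00:00"; Start and End come back as DateTime values ready to hand to an iCalendar library.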

Writing the iCalendar feed

Now we can use GetODataDicts twice, once for sessions and again for timeslots. Then we can walk through the list of session dictionaries, translate each into a corresponding iCalendar object, and finally serialize the calendar as a text file.

using System;
using System.IO;
using System.Net;
using DDay.iCal;   // iCalDateTime; the other DDay types are fully qualified below

// load sessions (GetODataDicts is the method shown earlier)
var sessions_uri = new Uri("http://odata.microsoftpdc.com/ODataSchedule.svc/Sessions");
var xml_bytes = new WebClient().DownloadData(sessions_uri);
var session_dicts = GetODataDicts(xml_bytes);

// load timeslots
var timeslots_uri = new Uri("http://odata.microsoftpdc.com/ODataSchedule.svc/TimeSlots");
xml_bytes = new WebClient().DownloadData(timeslots_uri);
var timeslot_dicts = GetODataDicts(xml_bytes);

// create calendar object
var ical = new DDay.iCal.iCalendar();

// add VTIMEZONE built from the Windows time zone rules
var tzid = "Pacific Standard Time";
var tzinfo = System.TimeZoneInfo.FindSystemTimeZoneById(tzid);
var timezone = DDay.iCal.iCalTimeZone.FromSystemTimeZone(tzinfo);
ical.AddChild(timezone);

foreach (var session_dict in session_dicts)
{
    var url = "http://microsoftpdc.com";
    var summary = session_dict["ShortTitle"].ToString();
    var description = session_dict["ShortDescription"].ToString();
    var location = session_dict["Room"].ToString();
    // find the timeslot whose Id matches this session's TimeSlotId
    var timeslot_id = session_dict["TimeSlotId"];
    var timeslot_dict = timeslot_dicts.Find(ts => (string) ts["Id"] == timeslot_id.ToString());
    if (timeslot_dict != null) // because test record has id 00000000-0000-0000-0000-000000000000
    {
        var dtstart = (DateTime)timeslot_dict["Start"]; // local (Pacific) time
        var dtend = (DateTime)timeslot_dict["End"];
        var evt = new DDay.iCal.Event();
        evt.DTStart = new iCalDateTime(dtstart); // time object with zone
        evt.DTStart.TZID = tzid;                 // "Pacific Standard Time"
        evt.DTEnd = new iCalDateTime(dtend);
        evt.DTEnd.TZID = tzid;
        evt.Summary = summary;
        evt.Url = new Uri(url);
        if (location != null)
            evt.Location = location;
        if (description != null)
            evt.Description = description;
        ical.AddChild(evt);
    }
}

// serialize the calendar to a text file
var serializer = new DDay.iCal.Serialization.iCalendar.iCalendarSerializer(ical);
var ics_text = serializer.SerializeToString(ical);
File.WriteAllText("pdc.ics", ics_text);

In 2010 the PDC was held in Redmond, WA, and the times expressed in the OData feed were local to the Pacific time zone. But the event was available live worldwide. I was following it from my home in the Eastern time zone, for example, so I wanted PDC events starting at 9AM Pacific to show up at 12PM in my calendar. To accomplish that, the program that generates the iCalendar feed has to do two things:

1. Produce a VTIMEZONE component that defines the standard and daylight saving time offsets from GMT, and when daylight saving time starts and stops. On Windows, you acquire this ruleset by looking up a time zone id (e.g. "Pacific Standard Time") using System.TimeZoneInfo.FindSystemTimeZoneById. DDay.iCal can then apply the ruleset, using DDay.iCal.iCalTimeZone.FromSystemTimeZone, to produce the VTIMEZONE component shown below. On a non-Windows system, using some other iCalendar library, you'd do the same thing; in that case, though, the ruleset is defined in the Olson (aka Zoneinfo, aka tz) database. If you've never had the pleasure, you should read through this remarkable and entertaining document sometime; it's a classic work of historical scholarship!

2. Relate the local times for events to the specified timezone. Using DDay.iCal, I do that by assigning the same time zone id used in the VTIMEZONE component (i.e., "Pacific Standard Time") to each event's DTStart.TZID and DTEnd.TZID. If you don't do that, the dates and times come out like this:

DTSTART:20101029T090000

DTEND:20101029T100000

If my calendar program is set to Eastern time then I'll see these events at 9AM my time, when I should see them at noon.

If you do assign the time zone id to DTSTART and DTEND, then the dates and times come out like this:

DTSTART;TZID=Pacific Standard Time:20101029T090000

DTEND;TZID=Pacific Standard Time:20101029T100000

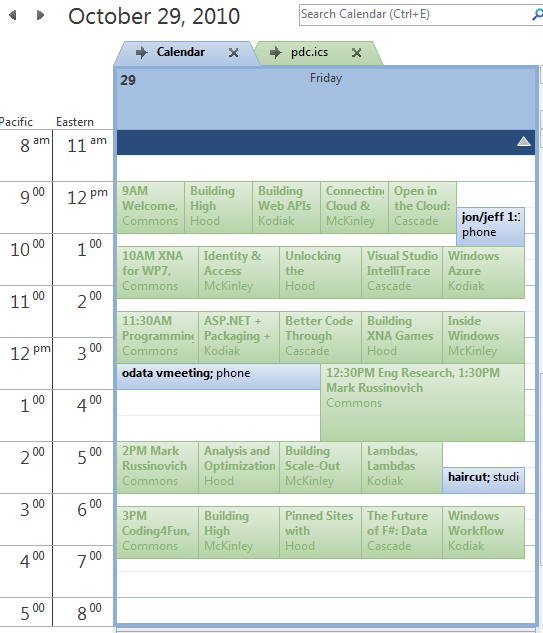

Now an event at 9AM Pacific shows up at noon on my Eastern calendar. Here's a picture of my personal calendar and the PDC calendar during the PDC:
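What the calendar program does with a TZID-qualified time is plain arithmetic once it has the zone rules. The sketch below hard-codes the US rules that DDay.iCal writes into the Pacific VTIMEZONE (daylight time from 02:00 on the second Sunday in March, BYDAY=2SU, until 02:00 on the first Sunday in November, BYDAY=1SU) and assumes the same rules for Eastern time, so it runs without any time zone database. The helper names are hypothetical, and this is an illustration of the 9AM Pacific to noon Eastern conversion, not a replacement for TimeZoneInfo or a real iCalendar library.

```csharp
using System;

// Date of the nth Sunday of a month (BYDAY=2SU in March, BYDAY=1SU in November).
static DateTime NthSunday(int year, int month, int n)
{
    var first = new DateTime(year, month, 1);
    int toSunday = ((int)DayOfWeek.Sunday - (int)first.DayOfWeek + 7) % 7;
    return first.AddDays(toSunday + 7 * (n - 1));
}

// UTC offset of a US zone at a local wall-clock time. Daylight time runs from 02:00 on
// the 2nd Sunday of March until 02:00 on the 1st Sunday of November. The repeated
// hour at the November transition is not disambiguated in this sketch.
static TimeSpan UsOffset(DateTime local, int standardHours)
{
    var dstStart = NthSunday(local.Year, 3, 2).AddHours(2);
    var dstEnd = NthSunday(local.Year, 11, 1).AddHours(2);
    bool daylight = local >= dstStart && local < dstEnd;
    return TimeSpan.FromHours(standardHours + (daylight ? 1 : 0));
}

// Pacific wall-clock time -> absolute instant -> Eastern wall-clock time.
static DateTime PacificToEastern(DateTime pacificLocal)
{
    var instant = new DateTimeOffset(pacificLocal, UsOffset(pacificLocal, -8));
    // Estimate Eastern local time with the standard offset, then apply the real rule.
    var easternGuess = instant.UtcDateTime.AddHours(-5);
    return instant.ToOffset(UsOffset(easternGuess, -5)).DateTime;
}

// DTSTART;TZID=Pacific Standard Time:20101029T090000
var eastern = PacificToEastern(new DateTime(2010, 10, 29, 9, 0, 0));
Console.WriteLine($"{eastern:yyyy-MM-dd HH:mm}");   // 2010-10-29 12:00
```

Without the TZID, a client would treat 09:00 as floating local time and show it at 9AM Eastern, which is exactly the failure described above.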

Examining the iCalendar feed

Here's a version of the output that I've stripped down to just one event.

BEGIN:VCALENDAR
VERSION:2.0
PRODID:-//ddaysoftware.com//NONSGML DDay.iCal 1.0//EN
BEGIN:VTIMEZONE
TZID:Pacific Standard Time
BEGIN:STANDARD
DTSTART;VALUE=DATE:20090101
RRULE:FREQ=YEARLY;BYDAY=1SU;BYHOUR=2;BYMINUTE=0;BYMONTH=11
TZNAME:Pacific Standard Time
TZOFFSETFROM:-0700
TZOFFSETTO:-0800
END:STANDARD
BEGIN:DAYLIGHT
DTSTART;VALUE=DATE:20090101
RRULE:FREQ=YEARLY;BYDAY=2SU;BYHOUR=2;BYMINUTE=0;BYMONTH=3
TZNAME:Pacific Daylight Time
TZOFFSETFROM:-0800
TZOFFSETTO:-0700
END:DAYLIGHT
END:VTIMEZONE
BEGIN:VEVENT
DESCRIPTION:The Windows Azure Platform is an open and interoperable platform which supports development using many programming languages and tools. In this session\, you will see how to build large-scale applications in the cloud using Java\, taking advantage of new Windows Azure Platform features. You will learn how to build Windows Azure applications using Java with Eclipse\, Apache Tomcat\, and the Windows Azure SDK for Java.
DTEND;TZID=Pacific Standard Time:20101029T100000
DTSTAMP:20101110T163629
DTSTART;TZID=Pacific Standard Time:20101029T090000
LOCATION:Cascade
SEQUENCE:0
SUMMARY:Open in the Cloud: Windows Azure and Java
UID:f3fbb0bc-4415-4883-ab72-796df7487c35
URL:http://microsoftpdc.com
END:VEVENT
END:VCALENDAR

As I discuss in this week's companion article on the Radar blog, this isn't rocket science. Back in the day, many of us whipped up basic RSS 0.9 feeds "by hand" without using libraries or toolkits. It's feasible to do the same with iCalendar, especially now that there's a validator to help you check your work.
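In that spirit, here is a hand-rolled sketch that writes a one-event calendar with no iCalendar library at all. It applies two RFC 5545 rules as I understand them: TEXT values escape backslash, semicolon, comma and newline, and content lines longer than 75 octets are folded with CRLF plus a single space. The helper names and the PRODID are hypothetical. Unlike the DDay.iCal program, it writes UTC times (9:00 AM PDT is 16:00 UTC), which sidesteps the VTIMEZONE component that TZID-qualified times require; treating the sample's DTSTAMP value as UTC is also an assumption.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Text;

// Escape a TEXT value: backslash first, then ';' and ',', then line breaks as "\n".
static string EscapeText(string s) =>
    s.Replace("\\", "\\\\").Replace(";", "\\;").Replace(",", "\\,")
     .Replace("\r\n", "\\n").Replace("\n", "\\n");

// Fold a content line: up to 75 characters on the first physical line, then CRLF plus
// one space before each continuation of up to 74 characters. Counts characters, so it
// assumes ASCII content (the RFC limit is 75 octets).
static string Fold(string line)
{
    var sb = new StringBuilder();
    int pos = 0, limit = 75;
    while (line.Length - pos > limit)
    {
        sb.Append(line, pos, limit).Append("\r\n ");
        pos += limit;
        limit = 74;
    }
    return sb.Append(line, pos, line.Length - pos).ToString();
}

// One-event calendar with UTC times ("Z" suffix), so no VTIMEZONE is needed.
static string BuildCalendar(string uid, string summary, string location, string description,
                            DateTime startUtc, DateTime endUtc, DateTime stampUtc)
{
    const string Utc = "yyyyMMdd'T'HHmmss'Z'";
    var lines = new List<string>
    {
        "BEGIN:VCALENDAR",
        "VERSION:2.0",
        "PRODID:-//example//hand-rolled iCalendar sketch//EN",   // hypothetical product id
        "BEGIN:VEVENT",
        "UID:" + uid,
        "DTSTAMP:" + stampUtc.ToString(Utc, CultureInfo.InvariantCulture),
        "DTSTART:" + startUtc.ToString(Utc, CultureInfo.InvariantCulture),
        "DTEND:" + endUtc.ToString(Utc, CultureInfo.InvariantCulture),
        "SUMMARY:" + EscapeText(summary),
        "LOCATION:" + EscapeText(location),
        "DESCRIPTION:" + EscapeText(description),
        "END:VEVENT",
        "END:VCALENDAR",
    };
    var sb = new StringBuilder();
    foreach (var line in lines)
        sb.Append(Fold(line)).Append("\r\n");   // every content line ends with CRLF
    return sb.ToString();
}

// 9:00-10:00 AM PDT on 29 Oct 2010 is 16:00-17:00 UTC.
var ics = BuildCalendar(
    "f3fbb0bc-4415-4883-ab72-796df7487c35",
    "Open in the Cloud: Windows Azure and Java",
    "Cascade",
    "You will learn how to build Windows Azure applications using Java with Eclipse, Apache Tomcat, and the Windows Azure SDK for Java.",
    new DateTime(2010, 10, 29, 16, 0, 0),
    new DateTime(2010, 10, 29, 17, 0, 0),
    new DateTime(2010, 11, 10, 16, 36, 29));
Console.Write(ics);
```

Running the output through the validator is the natural next step; escaping and folding are the two details hand-written feeds most often get wrong.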

• Clint Rutkas and Bob Tabor present Windows Phone 7 for Absolute Beginners, a series of Channel 9 videos with downloadable source code:

This video series will help aspiring Windows Phone 7 developers get started. We'll start off with the basics and work our way up so that in a few hours, you will know enough to build simple WP7 applications, such as a GPS-aware note-taking application.

We'll walk you through everything from getting the tools and learning what an if statement is, to using the GPS built into the phone, and much more!

• Michael Washington (@ADefWebserver) posted Windows Phone 7 Development for Absolute Beginners (The parts you care about) on 11/13/2010:

Microsoft has posted a great series Windows Phone 7 Development for Absolute Beginners. However, it starts with “how to program”. This might drive you crazy if you already know how to program. Also, if you are like me and simply want the links to download the videos and the code, this should help:

Isolated Storage, ListBox and DataTemplates

In a continuation of the previous video, Bob demonstrates how to read a list of file names saved to the phone's flash drive and display those names in a ListBox. Each file name is displayed in a DataTemplate that contains a HyperlinkButton. This results in one Hyperlink button for each file name. Bob then demonstrates how to pass the file name in the QueryString to a second Silverlight page that allows the user to view the contents of the file.

Michael continues with 20+ entries with links and abstracts. If you’re interested in Marketplace DataMarket and OData, you’ll probably want to write WP7 clients.

Alex James (@adjames) described Named Resource Streams in an 11/12/2010 post to the WCF Data Services blog:

One of the new WCF Data Services features in the October 2010 CTP is something called Named Resource Streams.

Background

Data Services already supports Media Link Entries (MLEs), which allow you to associate a single streamed blob with an entry. For example, you could have a Photo entry that lists the metadata about the photo and links directly to the photo itself.

But what happens if you have multiple versions of the photo?

Today you could model this with multiple MLEs, but doing so requires a separate copy of the metadata for each version of the stream. Clearly this is not desirable when you have multiple versions of essentially the same photo.

It turns out that this is a very common scenario, common enough that we thought it needed to be supported without forcing people to use multiple MLEs. So, with this release we’ve allowed an entry to have multiple streams associated with it such that you can now create services that do things such as expose a Photo entry with links to its print, web and thumbnail versions.

Let’s explore how to use this feature.

Client-Side

Once a producer exposes Named Resource Streams, you have two ways to manipulate them on the client. The first is via new overloads of GetReadStream(..) and SetSaveStream(..) that take a stream name:

// retrieve a person
Person fred = (from p in context.People
               where p.Name == "Fred"
               select p).Single();
// set custom headers etc. via args if needed
var args = new DataServiceRequestArgs();
args.Headers.Add(…);
// make the request to get the ‘Photo’ stream on the Fred entity
var response = context.GetReadStream(fred, "Photo", args);
Stream stream = response.Stream;

If you want to update the stream, you use SetSaveStream(..), something like this:

var fileStream = new FileStream("C:\\fred.jpeg", FileMode.Open);
context.SetSaveStream(fred, "Photo", fileStream, true, args);
context.SaveChanges();

The other option is to use the StreamDescriptor, which hangs off the entity’s EntityDescriptor and carries information about the SelfLink, EditLink (often they are the same, but sometimes you get and update the stream via different URLs), ETag and ContentType of the stream:

// Get the entity descriptor for Fred
var dscptor = context.GetEntityDescriptor(fred);
// Get the ‘Photo’ StreamDescriptor
var photoDscptor = dscptor.StreamDescriptors.Single(s => s.Name == "Photo");
Uri uriOfFredsPhoto = photoDscptor.SelfLink;

With the StreamDescriptor you can do low-level network activities, or perhaps use this Uri as the ‘src’ of an <img> tag when rendering a web page.

Server-Side

Adding Named Resource Streams to your Model

The first step to using Named Resource Streams is to add them into your model. Because data services supports 3 different types of data sources (Entity Framework, Reflection, Custom) there are three ways to add Named Resource Streams into your model.

Entity Framework

When using the Entity Framework, adding a Named Resource Stream to an EntityType is pretty straightforward: you simply add a structural annotation to your Entity Framework EDMX file, something like this:

<EntityType Name="Person">
  <Key>
    <PropertyRef Name="ID" />
  </Key>
  <Property Name="ID" Type="Edm.Int32" Nullable="false" />
  <Property Name="Name" Type="Edm.String" Nullable="true" />
  <m:NamedStreams>
    <m:NamedStream Name="PhotoThumbnail" />
    <m:NamedStream Name="Photo" />
  </m:NamedStreams>
</EntityType>

Here the m:NamedStreams element (xmlns:m="http://schemas.microsoft.com/ado/2007/08/dataservices/metadata") indicates that Person has two Named Resource Streams, Photo and PhotoThumbnail.

Reflection Provider

To add Named Resource Streams to an EntityType using the Reflection Provider, we added a new attribute called NamedStreamAttribute:

[NamedStream("PhotoThumbnail")]
[NamedStream("Photo")]
public class Person
{
    public int ID { get; set; }
    public string Name { get; set; }
}

This example is the Reflection provider equivalent of the Entity Framework example above.

Custom Provider

When you write a custom provider (see this series for more) you add the Named Resource Streams via your implementation of IDataServiceMetadataProvider.

To support this we added a new ResourceStreamInfo class which you can add to your ResourceType definitions something like this:

ResourceType person = …
person.AddNamedStream(new ResourceStreamInfo("PhotoThumbnail"));
person.AddNamedStream(new ResourceStreamInfo("Photo"));

$metadata

No matter how you tell Data Services about your Named Resource Streams, consumers always learn about them via $metadata, which is an EDMX file. So, as you might guess, we simply tell consumers about named streams using the same structural annotations we used in the Entity Framework provider example above.

Implementing Named Resource Streams

Next, you have to implement a new interface called IDataServiceStreamProvider2.

Implementing this interface is very similar to implementing IDataServiceStreamProvider which Glenn explains well in his two part series (Part1 & Part2).

IDataServiceStreamProvider2 extends IDataServiceStreamProvider by adding some overloads to work with Named Resource Streams.

The overloads always take a ResourceStreamInfo, from which you can learn which Named Resource Stream is targeted. When reading from or writing to the stream you also get the ETag for the stream and a flag indicating whether the ETag should be checked.

Summary

As you can see, Named Resource Streams add a significant new feature to Data Services, which is very useful if you have entities that contain metadata for anything with multiple representations, such as photos or videos.

Named Resource Streams are very easy to use client-side and build upon our existing Stream capabilities on the server side.

As always we are keen to hear your thoughts and feedback.

Alex James (@adjames) proposed Support for Any and All OData operators in an 11/11/2010 post to the OData blog and mailing list:

One thing that folks on this list and elsewhere have brought up is the need to express filters based on the contents of a collection, be that a set of related entities or a multi-valued property.

This is because currently there is no easy way to:

- find all movies starring a particular actor

- find all orders where at least one of the order-lines is for a particular product

- find all orders where every order-line has a value greater than $400

- find all movies tagged 'quirky' - where Tags is a multi-valued property.

- find all movies with at least one actor tagged as a favorite

This proposal addresses that by introducing two new operators, "any" and "all".

Relationships

For example it would be nice if this:

~/Movies/?$filter=any(Actors,Name eq 'John Belushi')

could be used to find any John Belushi movie. And this:

~/Orders/?$filter=any(OrderLines,Product/Name eq 'kinect')

would find orders that include one or more 'kinect' orderlines. And this:

~/Orders/?$filter=all(OrderLines, Cost gt 400.0m)

would find orders where all orderlines cost more than $400.

Multi-Valued properties

All of the above examples query relationships, but this also needs to work for multi-valued properties:

The key difference here, from a semantics perspective, is that a multi-valued property is often just a primitive rather than an entity, so you often need to refer to 'it' directly in comparisons, perhaps something like this:

~/Movies/?$filter=any(Tags, it eq 'quirky')

In this example Tags is a multi-valued property containing strings. And 'it' or 'this' or something similar is required to refer to the current tag.

Given the need to be able to refer to the 'current' item being tested in the predicate, perhaps we should force the use of 'it' even when the thing referred to is an entity?

Which would mean this:

~/Movies/?$filter=any(Actors, Name eq 'John Belushi')

Would need to become this:

~/Movies/?$filter=any(Actors, it/Name eq 'John Belushi')

Interestingly forcing this would conceptually allow for queries like this too:

~/Movies/?$filter=any(Actors, it eq Director)

Here we are looking for movies where an actor in the movie is also the movie's director.

Note: Today OData doesn't allow for entity to entity comparisons like above, so instead you'd have to compare keys like this:

~/Movies/?$filter=any(Actors, it/ID eq Director/ID)

Nested queries

Our design must also accommodate nesting:

~/Movies/?$filter=any(Actors,any(it/Tags, it eq 'Favorite'))

Here we are asking for movies where any of the actors are tagged as a favorite.

Notice though that 'it' is used twice and has a different meaning each time. First it refers to 'an actor' then it refers to 'a tag'.

Does this matter?

Absolutely, if you want to be able to refer to the outer variable inside an inner predicate, which is vital if you need to ask questions like:

- Find any movies where any of the actors is tagged as a favorite

- Find any movies where an actor has also directed another movie

Clearly these are useful queries.

LINQ handles this by forcing you to explicitly name your variable whenever you call Any/All.

from m in ctx.Movies
where m.Actors.Any(a => a.Tags.Any(t => t == "Favorite"))
select m;

from m in ctx.Movies
where m.Actors.Any(a => a.Movies.Any(am => am.Director.ID == a.ID))
select m;

If we did something similar it would look like this:

~/Movies/?$filter=any(Actors a, a/Name eq 'John Belushi')

This is a little less concise, but on the plus side you get something that is unambiguous and much more expressive:

~/Movies/?$filter=any(Actors a, any(a/Movies m, a/ID eq m/Director/ID))

Here we are looking for movies where any of the actors is also a director of at least one movie.
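Since the proposal leans on LINQ's semantics, one way to check what each proposed filter should return is to run the equivalent Any/All predicates with LINQ to Objects. The sketch below does that over hypothetical in-memory data (the titles, IDs and costs are made up). Each query is commented with the proposed URL syntax it mirrors; the `any(Tags t, t eq 'quirky')` form applies the explicit-alias style just shown to the earlier multi-valued example and is my extrapolation, not part of the proposal text.

```csharp
using System;
using System.Linq;

// Hypothetical sample data shaped like the Movies/Actors and Orders/OrderLines examples.
var movies = new[]
{
    (Title: "Movie A", DirectorId: 1, Tags: new[] { "comedy" },
     Actors: new[] { (ID: 1, Name: "John Belushi", Tags: new[] { "Favorite" }) }),
    (Title: "Movie B", DirectorId: 3, Tags: new[] { "quirky" },
     Actors: new[] { (ID: 2, Name: "Actor Two", Tags: Array.Empty<string>()) }),
};
var orders = new[]
{
    (ID: 1, LineCosts: new[] { 450.0m, 500.0m }),
    (ID: 2, LineCosts: new[] { 450.0m, 100.0m }),
    (ID: 3, LineCosts: Array.Empty<decimal>()),   // an order with no lines
};

// any(Actors a, a/Name eq 'John Belushi')
var belushi = movies.Where(mv => mv.Actors.Any(a => a.Name == "John Belushi")).Select(mv => mv.Title).ToList();

// any(Tags t, t eq 'quirky')  (multi-valued primitive property)
var quirky = movies.Where(mv => mv.Tags.Any(t => t == "quirky")).Select(mv => mv.Title).ToList();

// any(Actors a, any(a/Tags t, t eq 'Favorite'))  (nested, with distinct aliases)
var favorites = movies.Where(mv => mv.Actors.Any(a => a.Tags.Any(t => t == "Favorite"))).Select(mv => mv.Title).ToList();

// any(Actors a, a/ID eq Director/ID)  (the inner predicate also reads the outer movie)
var actorDirected = movies.Where(mv => mv.Actors.Any(a => a.ID == mv.DirectorId)).Select(mv => mv.Title).ToList();

// all(OrderLines line, line/Cost gt 400.0m)
var allOver400 = orders.Where(o => o.LineCosts.All(cost => cost > 400.0m)).Select(o => o.ID).ToList();

Console.WriteLine(string.Join(", ", allOver400));   // 1, 3
```

Note that LINQ's All returns true for an empty collection, so order 3 (no lines) passes the `all` filter; a protocol-level `all` operator would presumably need to define that case explicitly as well.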

Final Proposal

Given that it is nice to support nested scenarios like this, it is probably better to require explicit names.

Trying to be flexible for the sake of a little conciseness often leads to pain and confusion down the road, so let's not do that :)

That leaves us with a proposal where you have to provide an explicit alias any time you need Any or All.

Per the proposal here are some examples of valid queries:

~/Orders/?$filter=all(OrderLines line, line/Cost gt 400.0m)

~/Movies/?$filter=any(Actors a, a/Name eq 'John Belushi')

~/Movies/?$filter=any(Actors a, a/ID eq Director/ID)

~/Movies/?$filter=any(Actors a, any(a/Tags t, t eq 'Favorite'))

~/Movies/?$filter=any(Actors a, any(a/Movies m, m/Director/ID eq a/ID))

Summary

Adding Any / All support to the protocol will greatly improve the expressiveness of OData queries.

I think the above proposal is expressive, flexible and unambiguous, and importantly it also addresses the major limitations of MultiValue properties that a few of you were rightly concerned about.

As always I'm very keen to hear your thoughts…

Cumulux posted a SQL Azure OData Service overview on 11/11/2010:

OData is an emerging web protocol for querying and updating data over the Web. It frees data from the silos of individual applications, so that it can be accessed and updated piece by piece. OData is built upon technologies such as HTTP, REST, the Atom Publishing Protocol and JSON to provide access to information from a variety of applications, services, and stores. OData is implemented consistently with Internet standards, which enables data integration and interoperability across a broad range of clients, servers, services, and tools.

OData can be used to access and update data in variety of sources. The sources may be relational databases or file systems or content management systems or traditional websites. In addition, various clients, from ASP.NET, PHP, Java websites or Excel or mobile devices applications, can seamlessly access vast data stores through OData.

SQL Azure OData Service provides an OData interface to SQL Azure databases that is hosted by Microsoft. This feature is currently available only as part of SQL Azure Labs. Users with a Live ID can sign in to try the feature.

This integration will give SQL Azure an additional advantage, giving developers an efficient way to control and work with the data they need over the web. The end user will get a more optimized and quicker web service.

For a more detailed technical overview of the OData Service, refer to the Microsoft PDC 2010 session on OData Services.

Cumulux continued its Azure-related posts with Microsoft Azure Market Place on 11/11/2010:

In the announcement of Azure Market Place at the Microsoft Professional Developers Conference 2010, it is defined as “an online marketplace for developers to share, find, buy and sell building block components, training, service templates, premium data sets plus finished services and applications needed to build Windows Azure platform applications.” There are two sections in the Market Place: the Data Market and the App Market.

The Data Market, codenamed Project Dallas, contains data, imagery, and real-time web services from various leading data providers and authoritative public data sources. Users will have access to data points and statistics on demographics, the environment, financial markets, retail business, weather, sports, etc. Data Market uses visualizations and analytics to enable insight in addition to the data. This feature is commercially available. Users can visit the Microsoft Azure Market Place Data Market to shop for data.

The App Market will be launched as a beta by the end of 2010. It will include listings of building block components, training, services, and finished services/applications. These building blocks are designed to be incorporated by other developers into their Windows Azure platform applications. Other examples could include developer tools, administrative tools, components and plug-ins, and service templates.

This feature will redefine the way work is done and will give rise to innovative solutions in the cloud. This will define the landscape where companies which develop products on and for Microsoft Azure will compete, deliver and grow. With this feature in place, data and information will be made readily available to the developer and user once the respective services are subscribed to.

For a more detailed overview of Microsoft Azure Market Place, refer to the Microsoft PDC 2010 session on Azure Market Place.

<Return to section navigation list>

AppFabric: Access Control and Service Bus

• Igor Ladnik described Automating Windows Applications Using Azure AppFabric Service Bus in an 11/13/2010 post with demo and source code to the Code Project:

An unmanaged Windows GUI application with no exported program interface gets automated with injected code. A WCF-aware injected component allows clients located anywhere in the world to communicate with the automated application via Azure AppFabric Service Bus, despite firewalls and dynamic IP addresses.

Introduction

This article is the third in a series of my CodeProject articles on automation of unmanaged Windows GUI applications with no exported program interface ("black box" applications). These applications are referred to below as target applications. While using the same injection technique, the articles present different ways of communication between the injected code and the outside world.

Background

The suggested technique transforms the target application into an automation server, i.e., its clients (subscribers) running in separate processes are able to control the target application and get some of its services in either synchronous or asynchronous (by receiving event notifications) mode. In the previous articles [1] and [2], this transformation was described in detail with appropriate code samples, so only a brief overview is given here.

The course of actions for the transformation is as follows:

- A piece of foreign code is injected into target application using the remote thread technique [3];

- The injected code subclasses windows of target application adding desirable [pre]processing for Windows messages received by subclassed windows;

- A COM object is created in the injected code in context of target application process. This object is responsible for communication of automated target application with the outside world.

The above steps are common to all three articles. The main difference lies in the nature and composition of the COM object operating inside the target application. In the earliest article [1], the communication object was an ordinary COM object self-registered with the Running Object Table (ROT). It supports a direct interface for inbound calls and an outgoing (sink) interface for asynchronous notification of its subscribers. Such objects provide duplex communication with several subscriber applications (both managed and unmanaged) machine-wide, but cannot support communication with remote subscriber applications, since the ROT is available only locally.

The communication object of the next generation [2] constitutes a COM Callable Wrapper (CCW) around a managed WCF server component. This allows the automated target application to be connected to both local and remote subscribers, thus considerably expanding the access range of the newly formed automation server. But this advanced communication object still has a limitation: it supports only remote subscribers located in the same Local Area Network (LAN). Subscribers outside the LAN are shut out by the firewall and by the lack of a permanent IP address for the target application's machine. It means that if I automate a GUI application on my home desktop, then my daughter and my dog can utilize its services from their machines at home (within the same LAN), whereas a friend of mine is deprived of such pleasure in his/her home (outside my LAN).

Azure AppFabric Service Bus

This is where the Microsoft Azure platform [4] comes to the rescue. Among other useful things, it offers the AppFabric Service Bus. This technology and infrastructure connects WCF-aware servers and clients that have changeable IP addresses and sit behind their firewalls. Dynamic IP addresses and firewalls block inbound calls but are transparent to outbound calls. The Service Bus therefore acts as an Internet-based mediator to which both the client and the server make only outbound calls.
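The relay idea can be illustrated with a toy in-process model. This is not the Service Bus API. The class Relay, its methods listen and send, and the address used below are hypothetical. The point is only that the server registers with the relay and the client sends to the relay, so neither party ever accepts an inbound connection.

```python
from typing import Callable, Dict


class Relay:
    """Toy in-process model of an Internet relay such as the Service Bus.

    Both parties only ever call *into* the relay (outbound from their own
    network), so in this model firewalls and dynamic IP addresses on either
    side play no role.
    """

    def __init__(self) -> None:
        self._listeners: Dict[str, Callable[[str], str]] = {}

    def listen(self, address: str, handler: Callable[[str], str]) -> None:
        # Outbound call from the server: register a listener for an address.
        self._listeners[address] = handler

    def send(self, address: str, message: str) -> str:
        # Outbound call from the client: the relay forwards to the listener.
        handler = self._listeners.get(address)
        if handler is None:
            raise LookupError(f"no listener registered at {address}")
        return handler(message)


relay = Relay()
# Hypothetical address; a real one uses your own service namespace.
addr = "sb://example.servicebus.windows.net/Notepad"
relay.listen(addr, lambda msg: f"Notepad got: {msg}")
print(relay.send(addr, "copy text"))  # Notepad got: copy text
```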

It turned out that making the automated target application available worldwide with the Azure Service Bus is amazingly simple. Taking the software from article [2] as my starting point, I found that no code changes are required (I did change the code a little, but that change was not compulsory). Only three actions are needed:

- Open an account for the Windows Azure AppFabric Service Bus and create a service namespace (see MSDN or, e.g., [5], chapter 11),

- Install the Windows Azure SDK on both the target application and client machines, and

- Change the configuration files for both the target application and the client.

New configuration files are needed because using the Service Bus requires a special relay binding with appropriate security settings and a special endpoint behavior that enables the Service Bus connection. In our sample, netTcpRelayBinding is used. The endpoint behavior provides the issuerName and issuerSecret parameters for the Service Bus connection; both should be copied from your Azure AppFabric Service Bus account. The mandatory part of the WCF service base addresses should be copied from the same account:

sb://SERVICE-NAMESPACE.servicebus.windows.net

(the SERVICE-NAMESPACE placeholder should be replaced with your service namespace). Details of the configuration files can be seen in the sample.

Code Sample

As in the previous articles, the well-known Notepad text editor is taken as the target application for automation. The console application InjectorToNotepad.exe injects NotepadPlugin.dll into the target application with the remote thread technique, using the Injector.dll COM object. The injected NotepadPlugin subclasses both the frame and view windows of Notepad and creates the NotepadHandlerNET managed component in a COM Callable Wrapper (CCW). NotepadHandlerNET is responsible for communication with the outside world. This technique was explained in full detail in my previous article [2].

Build and Run Sample

The project output will be placed in the Release or Debug working directory, according to your build choice. The InjectorToNotepad application will copy Notepad.exe to the same directory, and a fancy configuration file, Notepad.exe.config, is created during the build. The InjectorToNotepad console application runs Notepad from the working directory and should report SUCCESS! However, it takes some time before the word [AUTOMATED] appears in the caption of the Notepad frame window. Then press the big button labeled "Bind to Automated Notepad via Azure Service Bus". Binding takes time (up to 30 seconds). On its completion, the big button becomes disabled and three smaller operation buttons become enabled. Please see the Test Sample section to perform the test.

- Run Visual Studio 2010 as administrator and open NotepadAuto.sln.

- Open the Solution Property Pages, select Multiple startup projects, set the Action attribute of the InjectorToNotepad and AutomationClientNET projects to Start, and press the OK button.

- In the App.config files of the NotepadHandlerNET and AutomationClientNET projects, replace the values of the issuerName and issuerSecret attributes in the <sharedSecret> tag, and the SERVICE-NAMESPACE placeholder, with the appropriate values from your Azure AppFabric Service Bus account.

- Build and run the solution.
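Filling in the account values can be scripted when several configuration files must be edited. The following is a minimal Python sketch, assuming the credentials sit in the issuerName and issuerSecret attributes of a <sharedSecret> element and the namespace placeholder appears in attribute values such as base addresses. The function name fill_service_bus_settings and the XML skeleton are hypothetical simplifications, not the sample's real App.config.

```python
import xml.etree.ElementTree as ET

PLACEHOLDER = "SERVICE-NAMESPACE"


def fill_service_bus_settings(config_xml: str, namespace: str,
                              issuer_name: str, issuer_secret: str) -> str:
    """Return config_xml with Service Bus credentials and namespace filled in."""
    root = ET.fromstring(config_xml)
    for element in root.iter():
        # Credentials live in the issuerName/issuerSecret attributes of <sharedSecret>.
        if element.tag == "sharedSecret":
            element.set("issuerName", issuer_name)
            element.set("issuerSecret", issuer_secret)
        # The namespace placeholder may appear in any attribute (e.g. base addresses).
        for attr, value in list(element.attrib.items()):
            if PLACEHOLDER in value:
                element.set(attr, value.replace(PLACEHOLDER, namespace))
    return ET.tostring(root, encoding="unicode")


# Simplified, hypothetical configuration skeleton.
sample = """<configuration>
  <add baseAddress="sb://SERVICE-NAMESPACE.servicebus.windows.net/Notepad" />
  <sharedSecret issuerName="ISSUER-NAME" issuerSecret="ISSUER-SECRET" />
</configuration>"""

print(fill_service_bus_settings(sample, "contoso", "owner", "c2VjcmV0"))
```

ElementTree drops XML comments and the XML declaration when it rewrites a document. For real configuration files, a plain text replacement may therefore be preferable.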

Run Demo

The demo contains two directories, Injector and Client. The Injector directory should be placed on the target application machine; it contains all the components required for injection into the Notepad target application. The Client directory should be placed on the client (subscriber) machines.

Before starting the sample, please install the Windows Azure SDK on both the target application and client machines. In the configuration files Notepad.exe.config and AutomationClientNET.exe.config, replace the values of the issuerName and issuerSecret attributes in the <sharedSecret> tag, and the SERVICE-NAMESPACE placeholder, with the appropriate values from your Azure AppFabric Service Bus account. Please note that the cmd and exe files (except for the target application, Notepad) should be run as administrator. Make sure that you have stopped any software that protects applications from injection, because such protective software treats any injection as a malicious action.

First, run the COMRegisteration.cmd command file. It registers the COM components WindowFinder.dll and Injector.dll with the regsvr32.exe utility, and the CCW for NotepadHandlerNET.dll with the regasm.exe utility. Then run the InjectorToNotepad.exe console application. It copies Notepad.exe from the system directory to the working directory and performs the injection. Notepad must run from the working directory for the injected COM object to operate successfully. After the injector prints the message SUCCESS!, it takes some time before Notepad shows the word [AUTOMATED] in its caption. The InjectorToNotepad.exe console application may then be closed. Our Notepad target application is now automated and ready to serve its clients.

From the Client directory (which can be placed on any machine worldwide), run the AutomationClientNET.exe client application and press its big "Bind to Automated Notepad via Azure Service Bus" button. It takes some time (approximately 30 seconds) before the client binds to the automated Notepad. If binding succeeds, the big button becomes disabled and the operation buttons become enabled.

Test Sample

Now the sample is ready for testing. By pressing the appropriate buttons of the client application, you can open Notepad's Find dialog and add a custom menu item. You can type some text in Notepad and copy it to the clients by pressing the client's "Copy Text" button. To test the asynchronous action, type some text in Notepad followed by a sentence-ending character (".", "?", or "!"). The typed text will be reproduced in an edit box of the client application.
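The asynchronous test relies on detecting the end of a sentence as the user types. The Python sketch below is hypothetical: the class SentenceWatcher and its on_char callback are not taken from the sample's source. It assumes characters arrive one at a time from the subclassed view window. The sketch buffers them and raises a notification whenever ".", "?", or "!" is typed.

```python
from typing import Callable, List

SENTENCE_ENDINGS = {".", "?", "!"}


class SentenceWatcher:
    """Hypothetical sketch: collect typed characters, notify on a sentence ending."""

    def __init__(self, notify: Callable[[str], None]) -> None:
        self._buffer: List[str] = []
        self._notify = notify

    def on_char(self, ch: str) -> None:
        # Called once per character typed into the edit (view) window.
        self._buffer.append(ch)
        if ch in SENTENCE_ENDINGS:
            sentence = "".join(self._buffer).strip()
            self._buffer.clear()
            self._notify(sentence)  # the real sample raises an asynchronous event


events: List[str] = []
watcher = SentenceWatcher(events.append)
for ch in "Hello world. How are you? Not done":
    watcher.on_char(ch)
print(events)  # ['Hello world.', 'How are you?']
```

Text that has not yet been concluded with ".", "?", or "!" stays buffered and produces no event.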

You can test the sample with several clients. To do so, make sure that the URI of each client is unique. In the AutomationClientNET.exe.config file of another AutomationClientNET.exe client application, change the suffix of its service base address from /Subscriber1 to, say, /Subscriber2. Then start this new client and bind it to the automated Notepad. All active clients receive the copied text, as well as the asynchronous events raised when text typed in Notepad ends with ".", "?", or "!".
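Keeping subscriber URIs unique is easy to automate. This Python sketch assumes that a subscriber's base address is the account's sb://SERVICE-NAMESPACE.servicebus.windows.net root followed directly by a /SubscriberN suffix. The sample's real addresses may contain additional path segments. The helper names and the namespace "contoso" are hypothetical.

```python
from typing import Set


def service_base_address(namespace: str, path: str) -> str:
    """Build sb://<namespace>.servicebus.windows.net/<path>."""
    if not namespace or any(c in namespace for c in "./:"):
        raise ValueError("expected a bare service namespace name")
    return f"sb://{namespace}.servicebus.windows.net/{path.strip('/')}"


def next_subscriber_address(namespace: str, in_use: Set[str]) -> str:
    """Return the first /SubscriberN address (N = 1, 2, ...) not already in use."""
    n = 1
    while True:
        address = service_base_address(namespace, f"Subscriber{n}")
        if address not in in_use:
            return address
        n += 1


used = {service_base_address("contoso", "/Subscriber1")}
print(next_subscriber_address("contoso", used))
# sb://contoso.servicebus.windows.net/Subscriber2
```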

Discussion

The described approach shows how useful the Azure AppFabric Service Bus is for automating desktop applications. The Service Bus allows an automated application to serve its clients worldwide, overcoming firewall and dynamic IP address limitations, and this is achieved without additional code. The Service Bus may be used as a mediator between any kind of automated application and its clients.

In this article's sample, the target application and its clients communicate only via the Service Bus. In real-world automation, different endpoints should be provided for local clients, for clients located in the same Local Area Network (LAN), and for "distant" remote clients. Service Bus endpoints should be of the hybrid type, which allows the most efficient means of communication with each client.
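Choosing an endpoint per client can be sketched as a simple decision on the client's location. This Python sketch is an assumption-laden illustration, not code from the sample. netNamedPipeBinding, netTcpBinding and netTcpRelayBinding are real WCF/AppFabric binding names, but the addresses, the port and the function choose_endpoint are hypothetical. As far as I know, netTcpRelayBinding also offers a Hybrid connection mode that starts relayed and tries to upgrade to a direct connection, which complements this kind of selection.

```python
import ipaddress
from typing import Dict, Tuple

# Hypothetical endpoints: binding names are standard, addresses are placeholders.
ENDPOINTS: Dict[str, Tuple[str, str]] = {
    "local": ("netNamedPipeBinding", "net.pipe://localhost/Notepad"),
    "lan": ("netTcpBinding", "net.tcp://notepad-host:8000/Notepad"),
    "remote": ("netTcpRelayBinding", "sb://SERVICE-NAMESPACE.servicebus.windows.net/Notepad"),
}


def choose_endpoint(client_ip: str, server_ip: str, lan_cidr: str) -> Tuple[str, Tuple[str, str]]:
    """Pick the most direct transport that can reach the automated application."""
    client = ipaddress.ip_address(client_ip)
    server = ipaddress.ip_address(server_ip)
    if client == server or client.is_loopback:
        kind = "local"     # same machine: named pipes, WCF's on-machine transport
    elif client in ipaddress.ip_network(lan_cidr):
        kind = "lan"       # same LAN: direct TCP, no firewall in between
    else:
        kind = "remote"    # outside the LAN: go through the Service Bus relay
    return kind, ENDPOINTS[kind]
```

For example, choose_endpoint("203.0.113.5", "192.168.1.10", "192.168.1.0/24") selects the relay endpoint, while a client at 192.168.1.20 gets the direct TCP endpoint.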

Conclusion

This article presents the evolution of a technique for automating Windows applications with injected objects. A WCF-equipped .NET component inside the unmanaged target process provides communication between the automated application and its clients via the Azure AppFabric Service Bus. This approach overcomes obstacles such as firewalls and dynamic IP addresses on both sides, making the automation server available worldwide.

References

[1] Igor Ladnik. Automating Windows Applications. CodeProject.

[2] Igor Ladnik. Automating Windows Applications Using the WCF Equipped Injected Component. CodeProject.

[3] Jeffrey Richter, Christophe Nasarre. Windows via C/C++. Fifth edition. Microsoft Press, 2008.

[4] Roger Jennings. Cloud Computing with the Windows Azure Platform. Wiley Publishing, 2009.

[5] Juval Lowy. Programming WCF Services. Third edition. O'Reilly, 2010.

History

- 12th November, 2010: Initial version

License

This article, along with any associated source code and files, is licensed under The Code Project Open License (CPOL)

• Alik Levin explained Windows Phone 7 and RESTful Services: Delegated Access Using Azure AppFabric Access Control Service (ACS) And OAuth in an 11/12/2010 post:

This post is a summary of the steps I took to run the Windows Phone 7 Sample that Caleb Baker recently published on the ACS Codeplex site. The sample demonstrates how to use the Azure AppFabric Access Control Service to allow end users to sign in to a RESTful service with their Windows Live ID, Yahoo!, Facebook, Google, or corporate accounts managed in Active Directory.
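In this scenario the RESTful service receives a token issued by ACS and must validate it. Step 2 selects SWT (Simple Web Token) as the token format. The Python sketch below follows the SWT layout as I understand it: form-encoded name/value pairs, with an HMACSHA256 pair last that signs the ASCII bytes before "&HMACSHA256=" using the base64 token signing key. It is not the DPE.OAuth API. It does not show how the token travels in the HTTP request, and sign_swt exists only to build test tokens. The function names are hypothetical, and the audience value reuses the realm http://ContosoContacts/ from Step 2.

```python
import base64
import hashlib
import hmac
import time
from typing import Dict, Optional
from urllib.parse import parse_qs, quote, unquote

SIG_MARKER = "&HMACSHA256="


def _hmac(body: str, key_b64: str) -> bytes:
    # The signing key is a base64 string; SWT signs the ASCII bytes of the body.
    return hmac.new(base64.b64decode(key_b64), body.encode("ascii"), hashlib.sha256).digest()


def sign_swt(claims: Dict[str, str], key_b64: str) -> str:
    """Create a test token: form-encoded claims, then &HMACSHA256=<signature>."""
    body = "&".join(f"{quote(k, safe='')}={quote(v, safe='')}" for k, v in claims.items())
    signature = base64.b64encode(_hmac(body, key_b64)).decode("ascii")
    return body + SIG_MARKER + quote(signature, safe="")


def validate_swt(token: str, key_b64: str, audience: str,
                 now: Optional[float] = None) -> Dict[str, str]:
    """Check signature, audience and expiry; return the decoded claims."""
    body, marker, sig = token.rpartition(SIG_MARKER)
    if not marker:
        raise ValueError("token has no HMACSHA256 signature")
    if not hmac.compare_digest(_hmac(body, key_b64), base64.b64decode(unquote(sig))):
        raise ValueError("signature mismatch")
    claims = {k: v[0] for k, v in parse_qs(body, keep_blank_values=True).items()}
    if claims.get("Audience") != audience:
        raise ValueError("unexpected audience")
    # ExpiresOn is seconds since 1970-01-01 UTC.
    if float(claims.get("ExpiresOn", "0")) <= (time.time() if now is None else now):
        raise ValueError("token expired")
    return claims
```

The signature is checked over the raw received bytes before any URL decoding. Differences in percent-encoding style between issuers therefore do not break validation.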

This sample answers the following question:

How can I externalize authentication when accessing RESTful services from Windows Phone 7 applications?


Summary of steps:

- Step 1 – Prepare development environment

- Step 2 – Create and configure ACS project, namespace, and Relying Party

- Step 3 – Create Windows Phone 7 Silverlight application (the sample code)

- Step 4 – Enhance Windows Phone 7 Silverlight application with OAuth capability

- Step 5 – Test your work

Step 1 – Prepare development environment

Several prerequisites need to be installed in the development environment:

- Visual Studio 2010 is installed.

- IIS7 is installed and running.

- Download and extract the Windows Phone 7 Sample code.

- Download and install Windows Phone Developer Tools RTW.

- Download and extract DPE.OAuth project from the FabrikamShipping Demo, available as part of the Source Code.

Step 2 – Create and configure ACS project, namespace, and Relying Party

- Log in to http://portal.appfabriclabs.com/

- If you have not created your project and a namespace, do so now.

- On the Project page, click the “Access Control” link next to your namespace in the bottom right corner.

- On the “Access Control Settings” page click on “Manage Access Control” link at the bottom.

- Sign in using any of the available accounts. I used Windows Live ID.

- On the “Access Control Service” page click on “Relying Party Applications” link.

- On the “Relying Party Applications” page click on “Add Relying Party Application”.

- Fill the fields as follows:

- Name: ContosoContacts

- Realm: http://ContosoContacts/

- Return URL: http://localhost:9000/Default.aspx

- Token format: SWT

- Token lifetime (secs): leave default, 600

- Identity providers: leave the default (I have Google and Windows Live ID)

- Rule groups: leave default “Create New Rule Group” checked

- Click the “Generate” button for the token signing key field. Copy the key to a safe place for later reuse.

- Click “Save” button.

- Click on “Access Control Service” link in the breadcrumb at the top.

- On the “Access Control Service” page click on the “Rule Groups” link.

- On the “Rule Groups” page click on the “Default Rule Group for ContosoContacts” link.

- On the “Edit Rule Group” page click the “Generate Rules” link at the bottom.

- On the “Generate Rules: Default Rule Group for ContosoContacts” page click on “Generate” button.

- On the “Edit Rule Group” page click “Save” button.

- Click on the “Access Control Service” link in the breadcrumb at the top.

- On the “Access Control Service” page click on “Application Integration” at the bottom.

- On the “Application Integration” page click on “Login Pages” link.