Windows Azure and Cloud Computing Posts for 8/18/2010+

A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA)

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services


Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)


Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now freely download by FTP and save the following two online-only PDF chapters of Cloud Computing with the Windows Azure Platform, which have been updated for SQL Azure’s January 4, 2010 commercial release:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available at no charge from the book's Code Download page.

Tip: If you encounter articles from MSDN or TechNet blogs that are missing screen shots or other images, click the empty frame to generate an HTTP 404 (Not Found) error, and then click the back button to load the image.

Azure Blob, Drive, Table and Queue Services

Neil MacKenzie provided Examples of the Windows Azure Storage Services REST API in this 8/18/2010 post:

In the Windows Azure MSDN Forum there are occasional questions about the Windows Azure Storage Services REST API, and I have occasionally responded to these with code examples showing how to use the API. I thought it would be useful to provide some examples of using the REST API for tables, blobs and queues, if only so I don’t have to dredge up examples when people ask how to use it. This post is not intended to provide a complete description of the REST API.

The REST API is comprehensively documented (other than the lack of working examples). Since the REST API is the definitive way to address Windows Azure Storage Services, I think people using the higher-level Storage Client API should understand the REST API at least well enough to follow its documentation. Understanding the REST API also explains why the Storage Client API behaves the way it does.

Fiddler

The Fiddler Web Debugging Proxy is an essential tool when developing using the REST (or Storage Client) API since it captures precisely what is sent over the wire to the Windows Azure Storage Services.

Authorization

Nearly every request to the Windows Azure Storage Services must be authenticated; the exception is access to blobs with public read access. The supported authentication schemes for blobs, queues and tables are described here. Each request must be accompanied by an Authorization header containing a hash-based message authentication code (HMAC) computed with SHA-256.

The following method computes the HMAC-SHA256 signature and builds the Authorization header:

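// Requires: using System; using System.Globalization;
//           using System.Security.Cryptography;
private String CreateAuthorizationHeader(String canonicalizedString)
{
    String signature = String.Empty;

    // AzureStorageConstants.Key must be a byte array: the Base64-decoded
    // storage account key.
    using (HMACSHA256 hmacSha256 = new HMACSHA256(AzureStorageConstants.Key))
    {
        // Sign the UTF-8 bytes of the canonicalized string, then Base64-encode the hash
        Byte[] dataToHmac = System.Text.Encoding.UTF8.GetBytes(canonicalizedString);
        signature = Convert.ToBase64String(hmacSha256.ComputeHash(dataToHmac));
    }

    // Header value format: "<scheme> <account>:<signature>"
    String authorizationHeader = String.Format(
        CultureInfo.InvariantCulture,
        "{0} {1}:{2}",
        AzureStorageConstants.SharedKeyAuthorizationScheme,
        AzureStorageConstants.Account,
        signature);
    return authorizationHeader;
}

This method is used in all the examples in this post.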

AzureStorageConstants is a helper class containing various constants. Key is the secret key for the Windows Azure Storage Services account specified by Account. In the examples given here, SharedKeyAuthorizationScheme is SharedKey.

The trickiest part of using the REST API successfully is getting the correct string to sign. Fortunately, in the event of an authentication failure, the Blob Service and Queue Service respond with the string to sign that they used, and this can be compared with the string used in generating the Authorization header. This has greatly simplified the use of the REST API.
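For comparison, here is a minimal Python sketch of the same signing step, together with the string to sign for a Table service request. It assumes the Table service Shared Key rules as I understand them for the 2009-09-19 API version: the verb, Content-MD5, Content-Type, date and canonicalized resource joined by newlines, with only a comp query parameter kept in the canonicalized resource. The function names and the sample account and key are illustrative; the Blob and Queue services use a longer string to sign that also includes the canonicalized x-ms- headers, which this sketch does not build.

```python
import base64
import hashlib
import hmac


def table_string_to_sign(verb, content_md5, content_type, date,
                         account, path, comp=None):
    """Build a Shared Key string to sign for a Table service request.

    Assumes the Table service rule that the query string is dropped from
    the canonicalized resource, except for an optional comp parameter.
    """
    canonicalized_resource = f"/{account}{path}"
    if comp is not None:
        canonicalized_resource += f"?comp={comp}"
    return "\n".join([verb, content_md5, content_type, date,
                      canonicalized_resource])


def shared_key_header(account, base64_key, string_to_sign):
    """Return the Authorization header value 'SharedKey <account>:<signature>'."""
    key = base64.b64decode(base64_key)  # account keys are distributed as Base64
    digest = hmac.new(key, string_to_sign.encode("utf-8"),
                      hashlib.sha256).digest()
    signature = base64.b64encode(digest).decode("ascii")
    return f"SharedKey {account}:{signature}"
```

Printing the result of table_string_to_sign next to the string the service reports (where it reports one) makes mismatches such as a missing empty line easy to spot.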

Table Service API

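The Table Service API supports the following table-level operations:

- Create Table

- Delete Table

- Query Tables

The Table Service API supports the following entity-level operations:

- Delete Entity

- Insert Entity

- Merge Entity

- Query Entities

- Update Entity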

These operations are implemented using the appropriate HTTP verb:

- DELETE - delete

- GET - query

- MERGE - merge

- POST - insert

- PUT - update
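As a rough illustration of how those verbs map onto table and entity addresses, the following Python sketch returns the method and URI for each operation. It assumes the public endpoint format https://<account>.table.core.windows.net and the OData key syntax Table(PartitionKey='<pk>',RowKey='<rk>'); the function name and operation labels are hypothetical, and the sketch neither percent-encodes keys nor builds headers and request bodies.

```python
def _quote_key(value):
    """OData string literal: wrap in single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def table_request(operation, account, table, partition_key=None, row_key=None):
    """Return (HTTP verb, URI) for a Table service operation (sketch only)."""
    root = f"https://{account}.table.core.windows.net"
    # Table-level operations address the Tables collection
    if operation == "query_tables":
        return ("GET", f"{root}/Tables")
    if operation == "create_table":
        return ("POST", f"{root}/Tables")
    if operation == "delete_table":
        return ("DELETE", f"{root}/Tables({_quote_key(table)})")
    # Entity-level operations address the table itself...
    if operation == "insert_entity":
        return ("POST", f"{root}/{table}")
    if operation == "query_entities":
        return ("GET", f"{root}/{table}()")
    # ...or a single entity identified by its two keys
    entity = (f"{root}/{table}(PartitionKey={_quote_key(partition_key)},"
              f"RowKey={_quote_key(row_key)})")
    verbs = {"get_entity": "GET", "update_entity": "PUT",
             "merge_entity": "MERGE", "delete_entity": "DELETE"}
    return (verbs[operation], entity)
```

For example, table_request("merge_entity", "acct", "Customers", "p1", "r1") yields a MERGE against https://acct.table.core.windows.net/Customers(PartitionKey='p1',RowKey='r1').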

Neil continues with sections that provide examples of the Insert Entity and Query Entities operations, Get Entity, Blob Service API, Put Blob, Lease Blob, Queue Service API, Put Message, and Get Messages.

Mike Amundsen did a great deal of early work with the REST API.

Tofuller posted Announcing the Perfmon-Friendly Azure Log Viewer Plug-In on 8/18/2010:

The Background

About 3 months ago, as some colleagues and I were working on the "Advanced Debugging Hands On Lab for Windows Azure" (for more info contact me via this blog), we identified an interesting opportunity within the Azure MMC. If you've worked with this tool, you may have seen that the default export option for the performance counters log is an Excel file, more specifically a CSV file. This is fine for getting a dump of event log data or native Azure infrastructure logs, but for performance counter data it is not ideal.

The Problem

The format of the data exported in the Azure MMC made it impossible to use our existing Performance Monitor tool on Windows (perfmon) or our Performance Analysis of Logs (PAL) tool from CodePlex. These tools are essential for us in support as we do initial problem analysis. Issues like managed or unmanaged memory leaks, high and low CPU hangs, and process restarts are just a few examples of issues that start with perfmon analysis. These issues do not go away in the cloud!

The Solution

The Azure MMC team made an outstanding design decision that allowed us to very quickly build a plug-in that could provide additional options for exporting those performance counters however we want. Because the team decided to take advantage of the Managed Extensibility Framework (aka MEF), it was easy to extend the capabilities of the MMC with our own functionality. Here are the steps that were required to code up the solution:

- Start from a new WPF User Control Library project.

- Next, you'll want to set your project to target .NET Framework 3.5 if you are using Visual Studio 2010, because the version of MEF that the Azure MMC uses predates .NET 4.0.

- Add references to the following assemblies, located in your %InstallRoot%\WindowsAzureMMC\release folder:

- Microsoft.Samples.WindowsAzureMmc.Model

- Microsoft.Samples.WindowsAzureMmc.ServiceManagement

- MicrosoftManagementConsole.Infrastructure

- System.ComponentModel.Composition

- WPFToolkit

- Next, the implementation decision made in the Azure MMC base classes meant we had to follow the MVVM pattern for WPF and create a ViewModel that would bind to the controls rendered as part of the dialog when the "Show" button is clicked. This ViewModel class must inherit from ViewerViewModelBase<T>, which is found in the Microsoft.Samples.WindowsAzureMmc.ServiceManagement DLL referenced above. T in this case will be your actual view with all of the XAML markup.

- The next part was easy: to get the perfmon counter data, we just needed to override the OnSearchAsync and OnSearchAsyncCompleted methods of the ViewerViewModelBase type, which takes care of making the calls to the Azure APIs and retrieving the data.

- Now for the fun part: we still had to get the data into the proper structure to be readable by perfmon and PAL. To do this we wrote an Export command (see Code Snippet 1) that is triggered from the pop-up in the same way as the export to Excel. The only difference is that in this case we take all the incoming performance counter data, group it first by tick count, and then map that back to the unique columns, which are created as "RoleName\RoleInstance\CounterID" (see Code Snippet 2).

- Now that we had all the data restructured, we needed a way to take the new CSV output and get it into the native BLG perfmon format. Big thanks to my colleague Greg Varveris for the design approach here. All we needed to do was use Process.Start to run a conversion of the CSV file to BLG using relog.exe and then launch the resulting file with perfmon directly!
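The regrouping and conversion steps above can be sketched in Python. This is not the plug-in's C# code: the input tuple shape, the "Time" column name and the timestamp format are assumptions, and relog may expect a stricter PDH-style CSV header than this sketch writes. The tick count is treated as .NET ticks (100-nanosecond intervals since 0001-01-01), and the relog arguments (-f bin for binary output, -o for the output file) are built but not executed.

```python
import csv
import io
from collections import defaultdict
from datetime import datetime, timedelta


def ticks_to_datetime(ticks):
    """Convert .NET ticks (100-ns intervals since 0001-01-01) to a datetime."""
    return datetime(1, 1, 1) + timedelta(microseconds=ticks // 10)


def pivot_counters(samples):
    """Group samples by tick count; one column per RoleName\\RoleInstance\\CounterID.

    samples: iterable of (tick_count, role_name, role_instance, counter_id, value).
    Returns (header, rows) with rows sorted by time.
    """
    by_tick = defaultdict(dict)
    columns = set()
    for ticks, role, instance, counter, value in samples:
        column = f"{role}\\{instance}\\{counter}"
        columns.add(column)
        by_tick[ticks][column] = value
    header = sorted(columns)
    rows = []
    for ticks in sorted(by_tick):
        stamp = ticks_to_datetime(ticks).strftime("%m/%d/%Y %H:%M:%S.%f")[:-3]
        # A counter with no sample at this tick is left blank
        rows.append([stamp] + [by_tick[ticks].get(c, "") for c in header])
    return ["Time"] + header, rows


def to_csv(header, rows):
    """Serialize the pivoted table as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buffer.getvalue()


def relog_command(csv_path, blg_path):
    """Argument list for relog.exe: convert a counter CSV to binary (.blg) format."""
    return ["relog", csv_path, "-f", "bin", "-o", blg_path]
```

On Windows, the list returned by relog_command could be passed to subprocess.run(..., check=True) and the resulting .blg file opened in perfmon, mirroring the Process.Start approach described above.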

The Result

Once this DLL is built and dropped into the WindowsAzureMMC\release folder, it is automatically picked up by the MMC and you will see a new option in the "Performance Counters Logs" drop-down. (Note: if you drop the DLL into the release folder while the Azure MMC is already running, you need to do a "refresh plugins" to get the new option to show up.)

Now when you click on "Show" there will be a new dialog that opens and allows you to open the data directly in perfmon.

Here's a quick snapshot of the perfmon data. Notice it shows multiple roles, multiple instances, and multiple counters. There is no limit on the amount of data you can pull down from the MMC and show in perfmon!

In addition to opening the data directly in perfmon, you will also find that the data is saved on disk, so you can collect and store it for later viewing or feed it into a tool like PAL to determine whether you have any bad resource usage trends. Just go to your C:\Users\%username%\AppData\Local\Temp folder and you will see the files there.

What Next?

We'd love to get feedback on this plug-in and on whether it was useful to you for reviewing your Azure application performance data. You can download it here: Windows Azure MMC Downloads Page

Tofuller continues with source code snippets.


SQL Azure Database, Codename “Dallas” and OData

Wayne Walter Berry (@WayneBerry) explains The Real Cost of [SQL Azure] Indexes in this 8/19/2010 post to the SQL Azure Team blog:

In a previous blog post I discussed creating covered indexes to increase performance and reduce I/O usage. Covered indexes are a type of non-clustered index that “covers” all the columns in your query, giving better performance than a table scan of all the data. An observant reader pointed out that there is a financial cost associated with creating covered indexes. He was right: SQL Azure charges for the space required to store the covered index on our servers. I thought it would be interesting to write a few Transact-SQL queries to determine the cost of my covered index; that is what I will be doing in this blog post.

Covered Indexes Consume Resources

There is no such thing as a free covered index; regardless of whether you are on SQL Server or SQL Azure, covered indexes consume resources. With SQL Server, you pay some of the costs up front when you purchase the machine to run SQL Server, by buying additional RAM and hard drive space for the index. There are other costs associated with an on-premises SQL Server, like warranty, backup, power, cooling, and maintenance. All of these are hard to calculate on a monthly basis: you need to depreciate the machines over time, and some expenses, like hard drive failures, are variable and unplanned. These are overall server operating costs; there is no way to drill down and determine the cost of a single covered index. If there were, wouldn’t it be nice to run a Transact-SQL statement to compute the monthly cost of the index? With SQL Azure you can do just that.

SQL Azure Pricing

Currently, SQL Azure charges $9.99 per gigabyte of data per month (official pricing can be found here). You pay for the range into which the actual size of your data falls, not for the cap size of the database. In other words, if you are storing just a few megabytes on a 1 GB Web edition database, the cost is $9.99 a month. The ranges are 1, 5, 10, 20, 30, 40, and 50 gigabytes; the closer your data is to the top of its range, the lower the cost per byte to store it. Here is a Transact-SQL statement that will calculate the cost per byte.

-- Total reserved space for the database (pages are 8 KB)
DECLARE @SizeInBytes bigint
SELECT @SizeInBytes = (SUM(reserved_page_count) * 8192)
FROM sys.dm_db_partition_stats

-- Price of the range the database falls into, divided by its actual size
-- (no FROM clause, so a single row is returned)
SELECT (CASE
        WHEN @SizeInBytes/1073741824.0 < 1 THEN 9.99
        WHEN @SizeInBytes/1073741824.0 < 5 THEN 49.95
        WHEN @SizeInBytes/1073741824.0 < 10 THEN 99.99
        WHEN @SizeInBytes/1073741824.0 < 20 THEN 199.98
        WHEN @SizeInBytes/1073741824.0 < 30 THEN 299.97
        WHEN @SizeInBytes/1073741824.0 < 40 THEN 399.96
        WHEN @SizeInBytes/1073741824.0 < 50 THEN 499.95
    END) / @SizeInBytes AS CostPerByte

The Cost of Covered Indexes

Now that we know our true cost per byte, let’s figure out what each covered index costs. Note that Transact-SQL can’t tell covered indexes apart from other non-clustered indexes; only the index creator knows why an index was created.

Here is some Transact-SQL to get our cost per month for each non-clustered index.

-- Total reserved space for the database (pages are 8 KB)
DECLARE @SizeInBytes bigint
SELECT @SizeInBytes = (SUM(reserved_page_count) * 8192)
FROM sys.dm_db_partition_stats

-- Cost per byte for the range the database falls into
DECLARE @CostPerByte float
SELECT @CostPerByte = (CASE
        WHEN @SizeInBytes/1073741824.0 < 1 THEN 9.99
        WHEN @SizeInBytes/1073741824.0 < 5 THEN 49.95
        WHEN @SizeInBytes/1073741824.0 < 10 THEN 99.99
        WHEN @SizeInBytes/1073741824.0 < 20 THEN 199.98
        WHEN @SizeInBytes/1073741824.0 < 30 THEN 299.97
        WHEN @SizeInBytes/1073741824.0 < 40 THEN 399.96
        WHEN @SizeInBytes/1073741824.0 < 50 THEN 499.95
    END) / @SizeInBytes

-- Size and monthly cost of each non-clustered index, most expensive first
SELECT idx.name,
    SUM(ps.reserved_page_count) * 8192 AS [bytes],
    (SUM(ps.reserved_page_count) * 8192) * @CostPerByte AS [cost]
FROM sys.dm_db_partition_stats AS ps
INNER JOIN sys.indexes AS idx
    ON idx.object_id = ps.object_id AND idx.index_id = ps.index_id
WHERE idx.type_desc = 'NONCLUSTERED'
GROUP BY idx.name
ORDER BY [cost] DESC

The results of my Adventure Works database look like this:

The most expensive index is IX_Address_AddressLine1_AddressLine2_City_StateProvince_PostalCode_CountryRegion, a covered index that speeds up lookups on the Address table. It costs me almost 50 cents per month. With this information, I can weigh that cost against the performance benefits of the covered index and decide whether to keep it.

Keeping It All in Perspective

If I delete my IX_Address_AddressLine1_AddressLine2_City_StateProvince_PostalCode_CountryRegion index, does it save me money? Not if it doesn’t change the range I fall into. Because the Adventure Works database is less than 1 gigabyte, I pay $9.99 per month; as long as I don’t go over the top of the range, creating another covered index doesn’t cost me any more. Seen that way, a covered index that doesn’t push your data past the top of its range is basically free. Once you are committed to spending the money for the range you need for your data, you should create as many covered indexes (that increase performance) as will fit inside the maximum size.
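The arithmetic behind both queries, and behind the point that dropping an index saves money only when it moves the database into a lower range, can be sketched in Python. The tier table copies the 2010 prices hard-coded in the T-SQL above and will go out of date; the function names are hypothetical, and unlike the T-SQL (which returns NULL past 50 GB) the sketch raises an error for oversized databases.

```python
GIB = 1073741824  # bytes per gigabyte, as in the T-SQL above

# (upper limit in GB, monthly price in USD), copied from the queries above
PRICE_TIERS = [(1, 9.99), (5, 49.95), (10, 99.99), (20, 199.98),
               (30, 299.97), (40, 399.96), (50, 499.95)]


def monthly_price(size_bytes):
    """Monthly charge for the range the database's actual size falls into."""
    size_gb = size_bytes / GIB
    for limit_gb, price in PRICE_TIERS:
        if size_gb < limit_gb:
            return price
    raise ValueError("larger than the 50 GB maximum")


def index_monthly_cost(index_bytes, database_bytes):
    """Cost-per-byte share of the monthly charge attributed to one index."""
    return index_bytes * monthly_price(database_bytes) / database_bytes


def savings_if_dropped(index_bytes, database_bytes):
    """Money actually saved per month by dropping the index (nonzero only if the range changes)."""
    return monthly_price(database_bytes) - monthly_price(database_bytes - index_bytes)
```

For example, a hypothetical 10 MB index in a 200 MB database is charged about $0.50 a month by the cost-per-byte formula, yet dropping it saves nothing because the database stays in the $9.99 range.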

This is basically the same as with an on-premises SQL Server: once you have committed to spending the money for the server, adding covered indexes within the machine’s resources doesn’t cost you any more.

Disclaimer

The prices for SQL Azure and the maximum database sizes can change in the future; check the current prices against those hard-coded in the queries to make sure your index costs are accurate.

Mary Jo Foley (@maryjofoley) asserted It takes a lot of syncing to build a Microsoft personal cloud in this 8/19/2010 post to her All About Microsoft blog for ZDNet:

When Microsoft officials talk about syncing devices and services, they can be referring to any number of different Microsoft sync technologies. There’s ActiveSync, Windows Live Sync, Sync Framework, Windows Sync, the synchronization provided via the Zune PC software client… and lots more.

Several of these sync technologies are essential to realizing what Microsoft marketing execs (and Forrester Research analysts) have started referring to as the “personal cloud.” Microsoft execs talked up the potential of the personal cloud earlier this summer at both the Worldwide Partner Conference and the Microsoft Financial Analyst Meeting (FAM).

Corporate Vice President of Windows Consumer Marketing Brad Brooks told Wall Street analysts and press they’d be hearing and seeing more from Microsoft about the personal cloud later this year. He said that Microsoft is one of four tech companies (with the others being Apple, Google and Facebook) who are set to deliver “multiple components of the emerging Personal Cloud user experience.”

“Speaking of cloud and Windows, we have a unique point of view on the cloud for consumers, and we call it the PC. Only in this case we call it the personal cloud,” Brooks told FAM attendees in late July. “And the personal cloud, well, it’s going to connect all the things that are important to you and make them available and ready for you to use wherever you’re at, whenever you need it.”

Windows Live Essentials, a suite of Microsoft’s Windows Live services that just this week got a “beta refresh,” is one element of Microsoft’s personal cloud experience. Brooks also included Windows 7, Bing, Xbox Live, Zune, Windows Phone 7 and “client virtualization technologies that so far are aimed mostly at IT managers.”

Microsoft’s personal cloud experience works like this: Your Windows (preferably Windows 7) PC is the hub. From there, you can connect and sync various devices, like your phone, your gaming console, etc. In some cases, you’ll be able to sync directly from the devices to the cloud. But the main goal, from Microsoft’s standpoint, is to keep the PC at the center of a user’s syncing existence.

This fall/winter — when Microsoft rolls out the final version of its Windows Live Essentials 2011 bundle and its phone partners start selling Windows Phone 7 devices — Microsoft execs will be touting how these products are enhanced by the personal cloud. That sounds a lot fancier than saying Windows 7/Vista and Windows Phone 7 users will be able to install and run the new Windows Live Messenger, Mail, Family Safety parental controls and Live Sync (which is what they really mean).

Speaking of Live Sync, Microsoft officials have conceded (and beta testers realize) that the coming version allows users to sync their Windows PCs and Macs. But it doesn’t support phones — not even Windows Phones. And the Zune PC client (codenamed “Dorado”), which will enable the personal cloud synchronization between Windows PCs and Windows Phone 7 devices is dedicated to syncing digital media content, not things like contacts or e-mail, as Windows SuperSite’s Paul Thurrott (who is writing a Windows Phone 7 book) noted in a recent blog post.

Microsoft execs tend to gloss over this reality in their demos. What’s enabling them to seamlessly sync their photos on their Windows Phone 7s with their Windows 7 PCs? It’s not the coming version of Windows Live Sync. And I don’t think it’s the Zune sync, either.

Maybe it’s Windows Sync? That seemed to be what Brooks was telling FAM attendees last month, according to the transcript of his remarks (unless Brooks was talking about Windows Live Sync, which I’m doubting, since the coming 2011 Essentials version doesn’t support phones). Brooks said:

“Well, now with the new Windows Sync feature, I can choose one folder or choose all my folders on a PC, and choose to share them and they automatically sync up in the background whenever I’m connected to the Internet. So, that means all my files and all my content across all these devices always keep in sync.”

Windows Sync is a feature built into Windows 7. According to Microsoft, “(u)sing Windows Sync, developers can write synchronization providers that keep data stores such as contacts, calendars, tasks, and notes on a computer or on a network synchronized with corresponding data stores in personal information managers (PIMs) and smart phones that support synchronization.”

After clicking around for a while on some of the Windows Sync links, I realized Windows Sync builds on Microsoft’s Sync Framework. What’s the Sync Framework? Microsoft’s description:

“Sync Framework [is] a comprehensive synchronization platform enabling collaboration and offline for applications, services and devices. Developers can build synchronization ecosystems that integrate any application, any data from any store using any protocol over any network. Sync Framework features technologies and tools that enable roaming, sharing, and taking data offline.”

(By the way, Microsoft just rolled out this week Version 2.1 of the Sync Framework, which adds SQL Azure synchronization as an option.) [See article below.]

I tried to get more clarity from company execs about Microsoft’s consumer sync strategy but had little luck, as a result of many Softies being on vacation (and the fact that Microsoft is still, no doubt, ironing out the details of its fall rollouts.)

Does any of this under-the-covers stuff really matter? I realize Microsoft (hopefully) will isolate consumers from sync programming interfaces and sync providers, but I’m wondering how seamless, and how complete, Microsoft’s personal cloud actually will be. “Version 1” of this personal cloud experience seems like it will be neither. Maybe that’s OK for a “V1.” I guess we’ll see soon….

LarenC announced Sync Framework 2.1 Available for Download in this 8/18/2010 post to the Sync Framework Team Blog:

Sync Framework 2.1 is available for download.

Sync Framework 2.1 includes all the great functionality of our 2.0 release, enhanced by several exciting new features and improvements. The most exciting of these lets you synchronize data stored in SQL Server or SQL Server Compact with SQL Azure in the cloud. We’ve added top customer requests like parameter-based filtering and the ability to remove synchronization scopes and templates from a database, and of course we’ve made many performance enhancements to make synchronization faster and easier. Read on for more detail or start downloading now!

SQL Azure Synchronization

With Sync Framework 2.1, you can leverage the Windows Azure Platform to extend the reach of your data to anyone that has an Internet connection, without making a significant investment in the infrastructure that is typically required. Specifically, Sync Framework 2.1 lets you extend your existing on-premises SQL Server database to the cloud and removes the need for customers and business partners to connect directly to your corporate network. After you configure your SQL Azure database for synchronization, users can take the data offline and store it in a client database, such as SQL Server Compact or SQL Server Express, so that your applications operate while disconnected and your customers can stay productive without the need for a reliable network connection. Changes made to data in the field can be synchronized back to the SQL Azure database and ultimately back to the on-premises SQL Server database. Sync Framework 2.1 also includes features to interact well with the shared environment of Windows Azure and SQL Azure. These features include performance enhancements, the ability to define the maximum size of a transaction to avoid throttling, and automatic retries of a transaction if it is throttled by Windows Azure. All of this is accomplished by using the same classes you use to synchronize a SQL Server database, such as SqlSyncProvider and SqlSyncScopeProvisioning, so you can use your existing knowledge of Sync Framework to easily synchronize with SQL Azure.

Bulk Application of Changes

Sync Framework 2.1 takes advantage of the table-valued parameter feature of SQL Server 2008 and SQL Azure to apply multiple inserts, updates, and deletes by using a single stored procedure call, instead of requiring a stored procedure call to apply each change. This greatly increases performance of these operations and reduces the number of round trips between client and server during change application. Bulk procedures are created by default when a SQL Server 2008 or SQL Azure database is provisioned.
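To see why batching matters, and how a transaction size cap and retry-on-throttle fit together, here is a generic Python sketch. It is not Sync Framework code: apply_batch stands in for one table-valued-parameter stored procedure call, and ThrottledError, the batch size and the backoff delay are hypothetical.

```python
import time


class ThrottledError(Exception):
    """Hypothetical error raised when the server throttles a transaction."""


def batches(changes, max_batch_size):
    """Split a list of changes into consecutive batches of at most max_batch_size."""
    for start in range(0, len(changes), max_batch_size):
        yield changes[start:start + max_batch_size]


def apply_changes(changes, apply_batch, max_batch_size, retries=3, delay=0.0):
    """Apply changes one batch (one round trip) at a time, retrying throttled batches.

    Returns the number of round trips made, including retries.
    """
    round_trips = 0
    for batch in batches(changes, max_batch_size):
        for attempt in range(retries + 1):
            round_trips += 1
            try:
                apply_batch(batch)
                break
            except ThrottledError:
                if attempt == retries:
                    raise  # give up after the last retry
                time.sleep(delay * (2 ** attempt))  # simple exponential backoff
    return round_trips
```

Applying 10 changes with a batch size of 4 takes 3 round trips instead of the 10 that one call per change would need; the size cap keeps each transaction small enough to reduce the risk of throttling.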

Parameter-based Filtering

Sync Framework 2.1 enables you to create parameter-based filters that control what data is synchronized. Parameter-based filters are particularly useful when users want to filter data based on a field that can have many different values, such as user ID or region, or a combination of two or more fields. Parameter-based filters are created in two steps. First, filter and scope templates are defined. Then, a filtered scope is created that has specific values for the filter parameters. This two-step process has the following advantages:

Easy to set up. A filter template is defined one time. Creating a filter template is the only action that requires permission to create stored procedures in the database server. This step is typically performed by a database administrator.

Easy to subscribe. Clients specify parameter values to create and subscribe to filtered scopes on an as-needed basis. This step requires only permission to insert rows in synchronization tables in the database server. This step can be performed by a user.

Easy to maintain. Even when several parameters are combined and lots of filtered scopes are created, maintenance is simple because a single, parameter-based procedure is used to enumerate changes.
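To make the two-step template-then-scope idea concrete, here is a small in-memory Python model. It is not the Sync Framework API, which is .NET and provisions SQL objects; FilterTemplate, FilteredScope, subscribe and the Region and UserId columns are hypothetical, chosen only to show that one parameterized enumeration routine serves every subscribed scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FilterTemplate:
    """Step 1, defined once: a name plus the columns the filter compares."""
    name: str
    parameter_columns: tuple


@dataclass(frozen=True)
class FilteredScope:
    """Step 2, created per subscriber: the template plus concrete parameter values."""
    template: FilterTemplate
    values: tuple


def subscribe(template, **values):
    """Create a filtered scope; this only records parameter values."""
    missing = [c for c in template.parameter_columns if c not in values]
    if missing:
        raise ValueError(f"missing parameter(s): {missing}")
    return FilteredScope(template,
                         tuple(values[c] for c in template.parameter_columns))


def enumerate_changes(changed_rows, scope):
    """The single shared enumeration routine: keep rows matching the scope's values."""
    columns = scope.template.parameter_columns
    return [row for row in changed_rows
            if tuple(row[c] for c in columns) == scope.values]
```

Adding a new subscriber means calling subscribe with new values; the template and enumerate_changes stay the same, which is the maintenance advantage described above.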

Removing Scopes and Templates

Sync Framework 2.1 adds the SqlSyncScopeDeprovisioning and SqlCeSyncScopeDeprovisioning classes to enable you to easily remove synchronization elements from databases that have been provisioned for synchronization. By using these classes you can remove scopes, filter templates, and the associated metadata tables, triggers, and stored procedures from your databases.

SQL Server Compact 3.5 SP2 Compatibility

The Sync Framework 2.1 SqlCeSyncProvider database provider object uses SQL Server Compact 3.5 SP2. Existing SQL Server Compact databases are automatically upgraded when Sync Framework connects to them. Among other new features, SQL Server Compact 3.5 SP2 makes available a change tracking API that provides the ability to configure, enable, and disable change tracking on a table, and to access the change tracking data for the table. SQL Server Compact 3.5 SP2 can be downloaded here.

For more information about Sync Framework 2.1, including feature comparisons, walkthroughs, how-to documents, and API reference, see the product documentation.

David Ramel recommended Try Your Own OData Feed In The Cloud--Or Not! in this 8/12/2010 post to Visual Studio Magazine’s Data Driver column:

So, being a good Data Driver, I was all pumped up to tackle a project exploring OData in the cloud and Microsoft's new PHP drivers for SQL Server, the latest embodiments of its "We Are The World" open-source technology sing-along.

I was going to throw some other things in, too, if I could, like the Pivot data visualization tool. I literally spent days boning up on the technologies and trying different tutorials (by the way, if someone finds on the Web a tutorial on anything that actually works, please let me know about it; you wouldn't believe all the outdated, broken crap out there--some of it even coming from good ol' Redmond).

So part of the project was going to use my very own OData feed in the cloud, hosted on SQL Azure. The boys at SQL Azure Labs worked up a portal that lets you turn your Azure-hosted database into an OData feed with a couple of clicks. It also lets you try out the Project Houston CTP 1 and SQL Azure Data Sync.

The https://www.sqlazurelabs.com/ portal states:

SQL Azure Labs provides a place where you can access incubations and early preview bits for products and enhancements to SQL Azure. The goal is to gather feedback to ensure we are providing the features you want to see in the product.

Well, I provided some feedback: It doesn't work.

Every time I clicked on the "SQL Azure OData Service" tab and checked the "Enable OData" checkbox, I got the error in Fig. 1.

Figure 1. Problems on my end when I try to enable the SQL Azure OData Service.

I figured the problem was on my end. It always is. I have an uncanny knack of failing where others succeed in anything technology-related. It's always some missed configuration or incorrect setting or wrong version or outdated software or … you get the idea. Sometimes the cause of my failure is unknown. Sometimes it's just the tech gods punishing me for something, it seems. Check out what just now happened as I was searching for some links for this blog. This is a screenshot of the search results:

Figure 2. Stephen Forte's blog can be difficult to read at times. But it's absolutely not his fault.

I mean, does this stuff happen to anyone else?

Basically, though, I'm just not that good. But hey, there are other tech dilettantes out there or beginners who like to muck around where they don't belong, so I keep trying in the hopes of learning and sharing knowledge. And, truthfully, 99 percent of the time, I persevere through sheer, stubborn, blunt force trial-and-error doggedness. But it usually takes insane amounts of time--you've no idea.

So I took my usual approach and started trying different things. Different databases to upload to Azure. Different scripts to upload these different databases. Different servers to host these different databases uploaded with different scripts. I tried combination after combination, and nothing worked. I combed forums and search engines. I found no help.

(By the way, try searching for that error message above. I can almost always find a solution to problems like this by Googling the error message. But in this case, nothing comes up except for one similar entry--but not an exact match. That is absolutely incredible. I didn't even think there were any combinations of words that didn't come up as hits in today's search engines.)

Usually, a call to tech support or a forum post is my last resort. They don't usually work, and worse, they often just serve to highlight how ignorant I am.

But, after days of trying different things, I sent a message to the Lab guys. That, after all, is the purpose for the Lab, as they stated above: feedback.

So things went something like this in my exchange with the Labs feedback machine:

Me: When I check box to enable Odata on any database, I get error:

SQL Azure Labs - OData Incubation Error Report

Error: Data at the root level is invalid. Line 1, position 1.

Page: https://www.sqlazurelabs.com:20000/ConfigOData.aspx

Time: 8/9/2010 2:56:16 PM

D., at SQL Azure Labs: Adding a couple others..

Anything change guys?

J., at SQL Azure Labs: None from me.

M., at SQL Azure Labs: I'm seeing the same error, though I haven't changed anything either.

J., can you look at the change log of the sources to see if anything changed?

D., have you published any changes in the past week?

J., at SQL Azure Labs: OK. Will have to wait until this afternoon, however.

M., at SQL Azure Labs: J.; might something have changed on the ACS side?

The error is coming from an xml reader somewhere, and occurs when the "Enable OData" checkbox is selected...

So that was a couple days ago and I'm still waiting for a fix. But at least I know it wasn't me, for a change. That's a blessed relief.

But that made me wonder: Just how many people are tinkering around with OData if this issue went unreported for some unknown amount of time, and hasn't been fixed for several days? Is it not catching on? Is no one besides me interested in this stuff? That's scary.

I'm not complaining, mind you. I was gratified to get a response, and a rapid one at that--not a common occurrence with big companies. I think Microsoft in general and the Lab guys specifically are doing some great stuff. I was enthusiastic about the possibilities of OData and open government/public/private data feeds being accessible to all. And the new ways coming out to visualize data and manipulate it are cool. And a few others out there agree with me, judging from various blog entries. But now I wonder. Maybe I'm in the tiny minority.

Anyway, today I found myself at Data Driver deadline with no OData project to write about, as I had planned to. So I had to come up with something, quick. Hmmm... what to write about?

<Return to section navigation list>

AppFabric: Access Control and Service Bus

Alex James (@adjames) posted a detailed OData and Authentication – Part 8 – OAuth WRAP tutorial to the WCF Data Services Blog on 8/19/2010:

OAuth WRAP is a claims-based authentication protocol supported by the AppFabric Access Control Service (ACS), which is part of Windows Azure.

But most importantly it is REST (and thus OData) friendly too.

The idea is that you authenticate against an ACS server and acquire a Simple Web Token or SWT – which contains signed claims about identity / roles / rights etc – and then embed the SWT in requests to a resource server that trusts the ACS server.

The resource server then looks for and verifies the SWT by checking it is correctly signed, before allowing access based on the claims made in the SWT.

If you want to learn more about OAuth WRAP itself here’s the spec.

Goal

Now that we know the principles behind OAuth WRAP, it’s time to map them onto the OData world.

Our goal is simple. We want an OData service that uses OAuth WRAP for authorization and a client to test it end to end.

Why OAuth WRAP?

You might be wondering why this post covers OAuth WRAP and not OAuth 2.0.

OAuth 2.0 essentially combines the best features of OAuth 1.0 and OAuth WRAP.

Unfortunately, OAuth 2.0 is not yet a ratified standard, so ACS doesn’t support it yet. On the other hand, OAuth 1.0 is cumbersome for RESTful protocols like OData. So that leaves OAuth WRAP.

However, once it is ratified, OAuth 2.0 will essentially deprecate OAuth WRAP, and ACS will rev to support it. When that happens you can expect to see a new post in this Authentication Series.

Strategy

First we’ll provision an ACS server to act as our identity server.

Next we’ll configure our identity server with appropriate roles, scopes and claim transformation rules etc.

Then we’ll create an HttpModule (see part 5) to intercept all requests to the server, which will crack open the SWT, convert it into an IPrincipal and store it in HttpContext.Current.User. This way it can be accessed later for authorization purposes inside the Data Service.

Then we’ll create a simple OData service using WCF Data Services and protect it with a custom HttpModule.

Finally we’ll write client code to authenticate against the ACS server and acquire a SWT token. We’ll use the techniques you saw in part 3 to send the SWT as part of every request to our OData services.

Step 1 – Provisioning an ACS server

First you’ll need a Windows Azure account and a running AppFabric namespace.

Once your namespace is running you also have a running ACS server.

Step 2 – Configuring the ACS server

To correctly configure the ACS server you’ll need to install the Windows Azure Platform AppFabric SDK, which you can find here.

ACM.exe is a command line tool that ships as part of the AppFabric SDK, and that allows you to create Issuers, TokenPolicies, Scopes and Rules.

For an introduction to ACM.exe and ACS look no further than this excellent guide by Keith Brown.

To simplify our acm commands you should edit your ACM.exe.config file to include information about your ACS like this:

```xml
<?xml version="1.0" encoding="utf-8" ?>
<configuration>
  <appSettings>
    <add key="host" value="accesscontrol.windows.net"/>
    <add key="service" value="{Your service namespace goes here}"/>
    <add key="mgmtkey" value="{Your Windows Azure Management Key goes here}"/>
  </appSettings>
</configuration>
```

Doing this saves you from having to re-enter this information every time you run ACM.

Very handy.

Claims Transformation

Before we start configuring our ACS we need to know a few principles…

Generally claims authentication is used to translate a set of input claims into a signed set of output claims.

Sometimes this extends to Federation, which allows trust relationships to be established between identity providers, so that a user on one system can gain access to resources on another system. However, in this blog post we are going to keep it simple and skip federation.

Don’t worry, though; we’ll add federation in the next post.

Issuers

In ACS terms an Issuer represents a security principal. And whether we want federation or not our first step is to create a new issuer like this:

```
> acm create issuer -name:partner -issuername:partner -autogeneratekey
```

This will generate a key which you can retrieve by issuing this command:

```
> acm getall issuer

Count: 1

id: iss_89f12a7ed023c3b7b0a85f32dff96fed2014ad0a
name: odata-issuer
issuername: odata-issuer
key: 9QKoZgtxxU4ABv8uiuvaR+k0cOmUxfEOE0qfPK2lCJY=
previouskey: 9QKoZgtxxU4ABv8uiuvaR+k0cOmUxfEOE0qfPK2lCJY=
algorithm: Symmetric256BitKey
```

Our clients are going to need to know this key, so make a note of it for later.

Token Policy

Next we need a token policy. Token Policies specify a timeout indicating how long a new Simple Web Token (or SWT) should be valid, or put another way, how long before the SWT expires.

When creating a token policy you need to balance security against ease of use and convenience. The shorter the timeout, the more likely the SWT is to reflect up-to-date identity and role information, but that comes at the cost of more frequent refreshes, which have performance and convenience implications.

For our purposes a timeout of 1 hour is probably about right. So we create a new policy like this:

```
> acm create tokenpolicy -name:odata-service-policy -timeout:3600 -autogeneratekey
```

Here 3600 is the number of seconds in an hour. To see what you created, issue this command:

```
> acm getall tokenpolicy

Count: 1

id: tp_aaf3fd9ca64d4471a5c7b5c572c087fb
name: odata-service-policy
timeout: 3600
key: WRwJkQ9PgbhnIUgKuuovw/6yVAo/Dh0qrb7rqQWnsBk=
```

We’ll need both the id and key later.

This key is what we share with our resource servers, so that they can check SWTs are correctly signed. We’ll come back to that later.
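The token policy’s timeout shows up in every SWT as an ExpiresOn claim (the sample token under Rules carries ExpiresOn%3d1282071821), which the SWT format defines as seconds since the Unix epoch. Below is a minimal sketch of checking that claim locally, for example so a client can refresh before a request fails. SwtExpiry is a hypothetical helper. It is not part of the AppFabric SDK or the TokenValidator sample, and it assumes .NET Framework 4.6 or later for DateTimeOffset.FromUnixTimeSeconds:

```csharp
using System;
using System.Globalization;

// Hypothetical helper for the SWT "ExpiresOn" claim, assumed to hold
// whole seconds since 1970-01-01T00:00:00Z.
public static class SwtExpiry
{
    // Converts the raw claim value (e.g. "1282071821") to an absolute UTC time.
    public static DateTimeOffset ParseExpiresOn(string expiresOn)
    {
        long seconds = long.Parse(expiresOn, NumberStyles.None, CultureInfo.InvariantCulture);
        return DateTimeOffset.FromUnixTimeSeconds(seconds);
    }

    // True once 'now' has reached the expiry time. A client could pass a
    // slightly later 'now' to refresh a little before the token runs out.
    public static bool IsExpired(string expiresOn, DateTimeOffset now)
    {
        return now >= ParseExpiresOn(expiresOn);
    }
}
```

With the one-hour policy above, ExpiresOn should land roughly 3600 seconds after ACS issued the token.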

Scope

A service may have multiple ‘scopes’, each with a different set of access rules and rights.

Each scope is linked to a token policy, which tells ACS how long SWTs should remain valid and how to sign them. Each scope also contains a set of rules that tell ACS how to translate incoming claims into claims embedded in the SWT.

When requesting a SWT a client must include an ‘applies_to’ parameter, which tells ACS for which scope they need a SWT, and consequently which token policy and rules should apply when constructing the SWT.

Here are just some of the reasons you might need multiple scopes:

- A multi-tenant resource server would probably need different rules per tenant.

- A single-tenant resource server with distinct sets of independently protected resources.

But for our purposes one scope is enough.

```
> acm create scope -name:odata-service-scope -appliesto:http://odata.mydomain.com -tokenpolicyid:tp_aaf3fd9ca64d4471a5c7b5c572c087fb
```

For ‘appliesto’ I chose the URL for our planned OData service. Notice too that we bind the scope to the token policy we just created via its id.

You can retrieve this scope by executing this:

```
> acm getall scope

Count: 1

id: scp_c028015be790fb5d3ead59307bb3e537d586eac0
name: odata-service
appliesto: http://odata.mydomain.com
tokenpolicyid: tp_d8c65f770fb14a90bc707e958a722df9
```

You’ll need to know the scopeid to add Rules to the scope.

Rules

ACS has one real job, which you could sum up with these four words: “Claims in, claims out”. Essentially ACS is just a claims transformation engine, and the transformation is achieved by applying a series of rules.

The rules are associated with a scope, and tell ACS how to transform input claims for the target scope (via applies_to) into signed output claims.

In our simple example, all we really want to do is this: “If you know the key of my issuer, we’ll sign a claim that you are a ‘User’.”

To do that we need this rule:

```
> acm create rule -name:partner-is-user ^
    -scopeid:scp_c028015be790fb5d3ead59307bb3e537d586eac0 ^
    -inclaimissuerid:iss_89f12a7ed023c3b7b0a85f32dff96fed2014ad0a ^
    -inclaimtype:Issuer -inclaimvalue:partner ^
    -outclaimtype:Roles -outclaimvalue:User
```

"Issuer" is a special input claim type (normally an input claim type is just a string that needs to be found in an incoming SWT). It says that anyone who demonstrates direct knowledge of the issuer key will receive a SWT that includes the output claim specified in the rule*.

So this particular rule means that anyone who issues an OAuth WRAP request with the Issuer name as the wrap_name and the Issuer key as the wrap_password will receive a signed SWT that claims their "Roles=User".

*NOTE: there are other ways that this particular rule can match, but they are outside the scope of this blog post; check out this excellent guide by Keith Brown for more.
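To make “claims in, claims out” concrete, here is a toy, in-memory model of how a rule like partner-is-user maps an input claim to an output claim. It is only an illustration under simplifying assumptions: exact string matching and one output claim per rule. The Claim, Rule and ClaimsEngine types are hypothetical. They are not how ACS is implemented internally, and they use C# 9 records:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical types modelling a single claim and a single ACS-style rule.
public record Claim(string Type, string Value);

public record Rule(string Name, string InClaimType, string InClaimValue,
                   string OutClaimType, string OutClaimValue);

public static class ClaimsEngine
{
    // Applies every rule whose input claim matches one of the incoming claims
    // and returns the distinct set of output claims ("claims in, claims out").
    public static IReadOnlyList<Claim> Transform(IEnumerable<Claim> input, IEnumerable<Rule> rules)
    {
        var inputList = input.ToList();
        return rules
            .Where(r => inputList.Any(c => c.Type == r.InClaimType && c.Value == r.InClaimValue))
            .Select(r => new Claim(r.OutClaimType, r.OutClaimValue))
            .Distinct()
            .ToList();
    }
}
```

Feeding in an Issuer=partner claim yields Roles=User, and any other issuer yields nothing. That is the behavior the rule above is meant to produce.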

To test that our rule is working try this:

```csharp
using System;
using System.Collections.Specialized;
using System.Linq;
using System.Net;
using System.Text;

WebClient client = new WebClient();
client.BaseAddress = "https://{your-namespace-goes-here}.accesscontrol.windows.net";

NameValueCollection values = new NameValueCollection();
values.Add("wrap_name", "partner");
values.Add("wrap_password", "9QKoZgtxxU4ABv8uiuvaR+k0cOmUxfEOE0qfPK2lCJY=");
values.Add("wrap_scope", "http://odata.mydomain.com");

// POST the issuer name and key to the ACS WRAP v0.9 endpoint
byte[] responseBytes = client.UploadValues("WRAPv0.9", "POST", values);
string response = Encoding.UTF8.GetString(responseBytes);

// the response is form-encoded; pull out the wrap_access_token value
string token = response.Split('&')
    .Single(value => value.StartsWith("wrap_access_token="))
    .Split('=')[1];

Console.WriteLine(token);
```

When I run that code, I get this:

Roles%3dUser%26Issuer%3dhttps%253a%252f%252ffabrikamjets.accesscontrol.windows.net%252f%26Audience%3dhttp%253a%252f%252fodata.mydomain.com%26ExpiresOn%3d1282071821%26HMACSHA256%3d%252bc2ZiBpm74Etw%252bAkXY1jNwme8acHfIYd9AAtGMckoss%253d

As you can see, Roles%3dUser is simply a URL-encoded version of Roles=User, so assuming this is a correctly signed SWT (more on that in Step 3), our rule appears to be working.
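The token arrives URL-encoded twice: once as a form value in the ACS response, and once more inside the SWT, where each claim value is itself URL-encoded (hence Issuer%3dhttps%253a...). Below is a minimal sketch of unpacking it into claims, assuming that layout. SwtParser is a hypothetical name. It uses Uri.UnescapeDataString rather than HttpUtility.UrlDecode to avoid a System.Web dependency, and it also leaves literal '+' characters alone:

```csharp
using System;
using System.Collections.Generic;

public static class SwtParser
{
    // Turns the raw wrap_access_token value from the ACS response into a
    // dictionary of claim name -> decoded claim value.
    public static Dictionary<string, string> ParseAccessToken(string wrapAccessToken)
    {
        // First decode: undo the form encoding of the whole token.
        string swt = Uri.UnescapeDataString(wrapAccessToken);

        var claims = new Dictionary<string, string>(StringComparer.Ordinal);
        foreach (string pair in swt.Split('&'))
        {
            // Split only on the first '=' so the rest of the value stays intact.
            string[] parts = pair.Split(new[] { '=' }, 2);
            if (parts.Length != 2)
                throw new FormatException("Malformed SWT name/value pair: " + pair);

            // Second decode: each claim value is itself URL-encoded.
            claims[Uri.UnescapeDataString(parts[0])] = Uri.UnescapeDataString(parts[1]);
        }
        return claims;
    }
}
```

Run against the token above, this yields Roles=User, the Issuer and Audience URLs, ExpiresOn and the base64 HMACSHA256 signature as separate entries.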

Step 3 – Creating the OAuth WRAP HttpModule

Now that we have our ACS server correctly configured, the next step is to create an HttpModule to crack open SWTs and map them into principals for use inside Data Services.

Let’s take the code we wrote in parts 4 & 5 and rework it for OAuth WRAP, first by creating an OAuthWrapAuthenticationModule that looks like this:

```csharp
using System;
using System.Web;

public class OAuthWrapAuthenticationModule : IHttpModule
{
    public void Init(HttpApplication context)
    {
        context.AuthenticateRequest +=
            new EventHandler(context_AuthenticateRequest);
    }

    void context_AuthenticateRequest(object sender, EventArgs e)
    {
        HttpApplication application = (HttpApplication)sender;
        if (!OAuthWrapAuthenticationProvider.Authenticate(application.Context))
        {
            Unauthenticated(application);
        }
    }

    void Unauthenticated(HttpApplication application)
    {
        // you could ignore this and rely on authorization logic to
        // intercept requests etc. But in this example we fail early.
        application.Context.Response.Status = "401 Unauthorized";
        application.Context.Response.StatusCode = 401;
        application.Context.Response.AddHeader("WWW-Authenticate", "WRAP");
        application.CompleteRequest();
    }

    public void Dispose() { }
}
```

As you can see, this relies on an OAuthWrapAuthenticationProvider, which looks like this:

```csharp
using System;
using System.Collections.Generic;
using System.Configuration;
using System.Linq;
using System.Security.Principal;
using System.Web;

public class OAuthWrapAuthenticationProvider
{
    static TokenValidator _validator = CreateValidator();

    static TokenValidator CreateValidator()
    {
        string acsHostname =
            ConfigurationManager.AppSettings["acsHostname"];
        string serviceNamespace =
            ConfigurationManager.AppSettings["serviceNamespace"];
        string trustedAudience =
            ConfigurationManager.AppSettings["trustedAudience"];
        string trustedSigningKey =
            ConfigurationManager.AppSettings["trustedSigningKey"];

        return new TokenValidator(
            acsHostname,
            serviceNamespace,
            trustedAudience,
            trustedSigningKey
        );
    }

    public static TokenValidator Validator
    {
        get { return _validator; }
    }

    public static bool Authenticate(HttpContext context)
    {
        if (!context.Request.IsSecureConnection)
            return false;

        if (!context.Request.Headers.AllKeys.Contains("Authorization"))
            return false;

        string authHeader = context.Request.Headers["Authorization"];

        // check that it starts with 'WRAP'
        if (!authHeader.StartsWith("WRAP "))
        {
            return false;
        }

        // the header should be in the form 'WRAP access_token="{token}"'
        // so let's get the {token}
        string[] nameValuePair = authHeader
            .Substring("WRAP ".Length)
            .Split(new char[] { '=' }, 2);

        if (nameValuePair.Length != 2 ||
            nameValuePair[0] != "access_token" ||
            nameValuePair[1].Length < 2 ||
            !nameValuePair[1].StartsWith("\"") ||
            !nameValuePair[1].EndsWith("\""))
        {
            return false;
        }

        // trim off the leading and trailing double-quotes
        string token = nameValuePair[1].Substring(1, nameValuePair[1].Length - 2);

        if (!Validator.Validate(token))
            return false;

        var roles = GetRoles(Validator.GetNameValues(token));

        context.User = new GenericPrincipal(
            new GenericIdentity("partner"),
            roles
        );
        return true;
    }

    static string[] GetRoles(Dictionary<string, string> nameValues)
    {
        if (!nameValues.ContainsKey("Roles"))
            return new string[] { };
        else
            return nameValues["Roles"].Split(',');
    }
}
```

As you can see, the Authenticate method does a number of things:

- Verifies we are using HTTPS because it would be insecure to pass SWT tokens around over straight HTTP.

- Verifies that the authorization header exists and it is a WRAP header.

- Extracts the SWT token from the authorization header.

- Asks a TokenValidator to validate the token. More on this in a second.

- Then extracts the Roles claims from the token (it assumes there is a Roles claim that contains a ',' delimited list of roles).

- Finally if every check passes it constructs a GenericPrincipal, with a hard coded identity set to ‘partner’, and the list of roles found in the SWT and assigns it to HttpContext.Current.User.

In our example the identity itself is hard coded because our ACS rules currently don’t make any claims about the username; they only produce role claims. Clearly, though, if we added more ACS rules, you could include a username claim too.

The TokenValidator used in the code above is lifted from Windows Azure AppFabric v1.0 C# samples, which you can find here. If you download and unzip these samples you’ll find the TokenValidator here:

~\AccessControl\GettingStarted\ASPNETStringReverser\CS35\Service\App_Code\TokenValidator.cs

Our CreateValidator() method creates a shared instance of the TokenValidator, and as you can see, we are pulling these settings from web.config:

```xml
<configuration>
  …
  <appSettings>
    <add key="acsHostname" value="accesscontrol.windows.net"/>
    <add key="serviceNamespace" value="{your namespace goes here}"/>
    <add key="trustedAudience" value="http://odata.mydomain.com"/>
    <add key="trustedSigningKey" value="{your token policy key goes here}"/>
  </appSettings>
  …
</configuration>
```

The most interesting one is the trustedSigningKey.

This is a key shared between ACS and the resource server (in our case, our HttpModule). It is the key from the token policy we created in Step 2.

The ACS server uses the token policy key to create a hash of the claims (an HMACSHA256), which gets appended to the claims to complete the SWT. Then, to verify that the SWT and its claims are valid, the resource server simply recomputes the hash and compares.
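To show what “recompute the hash and compare” involves, here is a sketch that follows the Simple Web Token convention as we understand it: the signature is an HMAC-SHA256 over the ASCII bytes of everything before &HMACSHA256=, keyed with the base64-decoded token policy key. Treat it as an aid to understanding what TokenValidator checks, not a replacement for it. SwtSignature, Sign and IsValid are hypothetical names. CryptographicOperations.FixedTimeEquals assumes .NET Core 2.1 or later rather than the .NET 3.5/4 stack used in this post:

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

// Hypothetical helper illustrating SWT signature checking with a shared key.
public static class SwtSignature
{
    const string SignatureMarker = "&HMACSHA256=";

    // Signs an unsigned SWT body the way the issuer is assumed to:
    // HMAC-SHA256 over the ASCII bytes, then base64, then URL-encoded.
    public static string Sign(string unsignedSwt, string base64Key)
    {
        using var hmac = new HMACSHA256(Convert.FromBase64String(base64Key));
        byte[] mac = hmac.ComputeHash(Encoding.ASCII.GetBytes(unsignedSwt));
        return unsignedSwt + SignatureMarker + Uri.EscapeDataString(Convert.ToBase64String(mac));
    }

    // Recomputes the hash over everything before "&HMACSHA256=" and compares
    // it with the signature the token carries.
    public static bool IsValid(string swt, string base64Key)
    {
        int index = swt.LastIndexOf(SignatureMarker, StringComparison.Ordinal);
        if (index < 0) return false;

        string unsignedPart = swt.Substring(0, index);
        string signature = Uri.UnescapeDataString(swt.Substring(index + SignatureMarker.Length));

        byte[] claimed;
        try { claimed = Convert.FromBase64String(signature); }
        catch (FormatException) { return false; }

        using var hmac = new HMACSHA256(Convert.FromBase64String(base64Key));
        byte[] expected = hmac.ComputeHash(Encoding.ASCII.GetBytes(unsignedPart));

        // Constant-time comparison avoids leaking how many bytes matched.
        return CryptographicOperations.FixedTimeEquals(expected, claimed);
    }
}
```

In this post’s setup, base64Key would be the trustedSigningKey value, and swt would be the token after the HttpUtility.UrlDecode call in the client’s GetToken(), that is, what the client places inside access_token="...".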

Now that we’ve got our module we simply need to register it with IIS via the web.config like this:

```xml
<configuration>
  …
  <system.webServer>
    <modules>
      <add name="OAuthWrapAuthenticationModule"
           type="SimpleService.OAuthWrapAuthenticationModule"/>
    </modules>
  </system.webServer>
  …
</configuration>
```

Step 4 – Creating an OData Service

Next we need to add (if you haven’t already) an OData Service.

There are lots of ways to create an OData Service using WCF Data Services. But by far the easiest way to create a read/write service is using the Entity Framework like this.

Now, because we’ve converted the OAuth WRAP SWT into a GenericPrincipal by the time requests hit our Data Service, all the authorization techniques we already know using QueryInterceptors and ChangeInterceptors are still applicable.

So you could easily write code like this:

```csharp
[QueryInterceptor("Orders")]
public Expression<Func<Order, bool>> OrdersFilter()
{
    if (!HttpContext.Current.Request.IsAuthenticated)
        return (Order o) => false;

    var user = HttpContext.Current.User;
    if (user.IsInRole("User"))
        return (Order o) => true;
    else
        return (Order o) => false;
}
```

And of course you can rework the HttpModule and interceptors as needed if your claims get more involved.

Step 5 – Acquiring and using a SWT Token

The final step is to write a client that will send a valid SWT with each OData request.

In part 3 we explored the available client-side hooks. So we know that we can hook up to the DataServiceContext.SendingRequest like this:

ctx.SendingRequest += new EventHandler<SendingRequestEventArgs>(OnSendingRequest);

And in our event handler we can add headers to the outgoing request. For OAuth WRAP we need to add an authorization header in the form:

Authorization:WRAP access_token="{YOUR SWT GOES HERE}"

NOTE: the double quotes (") are actually part of the format, but the curly braces ({ and }) are not. See the string.Format call below if you have any doubts.
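The client and the HttpModule must agree on this exact format, so it can help to keep the formatting and the parsing side by side. Below is a minimal sketch, assuming the WRAP access_token="..." form described here. WrapHeader is a hypothetical helper. Its TryParse repeats the checks from the module’s Authenticate method without the HttpContext plumbing, and adds a length check so that a lone quote character cannot cause an exception:

```csharp
using System;

public static class WrapHeader
{
    const string Scheme = "WRAP ";

    // Client side: builds the Authorization header value for a given SWT.
    public static string Format(string swt) =>
        string.Format("WRAP access_token=\"{0}\"", swt);

    // Server side: extracts the SWT, or returns false if the header is not
    // in the form WRAP access_token="{token}".
    public static bool TryParse(string header, out string swt)
    {
        swt = null;
        if (header == null || !header.StartsWith(Scheme, StringComparison.Ordinal))
            return false;

        string[] pair = header.Substring(Scheme.Length).Split(new[] { '=' }, 2);
        if (pair.Length != 2 || pair[0] != "access_token")
            return false;

        string quoted = pair[1];
        if (quoted.Length < 2 || quoted[0] != '"' || quoted[quoted.Length - 1] != '"')
            return false;

        // Trim the leading and trailing double quotes.
        swt = quoted.Substring(1, quoted.Length - 2);
        return true;
    }
}
```

Because Split is limited to two parts, the '=' and '&' characters inside the SWT itself survive the round trip.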

So our OnSendingRequest event handler looks like this:

```csharp
static void OnSendingRequest(object sender, SendingRequestEventArgs e)
{
    e.RequestHeaders.Add(
        "Authorization",
        string.Format("WRAP access_token=\"{0}\"", GetToken())
    );
}
```

As you can see, this uses GetToken() to acquire the actual SWT:

```csharp
static string GetToken()
{
    if (_token == null)
    {
        WebClient client = new WebClient();
        client.BaseAddress =
            "https://{your-namespace-goes-here}.accesscontrol.windows.net";

        NameValueCollection values = new NameValueCollection();
        values.Add("wrap_name", "partner");
        values.Add("wrap_password", "{Issuer Key goes here}");
        values.Add("wrap_scope", "http://odata.mydomain.com");

        byte[] responseBytes = client.UploadValues("WRAPv0.9", "POST", values);
        string response = Encoding.UTF8.GetString(responseBytes);

        string token = response.Split('&')
            .Single(value => value.StartsWith("wrap_access_token="))
            .Split('=')[1];

        _token = HttpUtility.UrlDecode(token);
    }
    return _token;
}

static string _token = null;
```

As you can see, we acquire the SWT once (by demonstrating knowledge of the Issuer key) and, assuming that is successful, cache it for later reuse.

Finally, if we issue queries like this:

```csharp
try
{
    foreach (Order order in ctx.Orders)
        Console.WriteLine(order.Number);
}
catch (DataServiceQueryException ex)
{
    //var scheme = ex.Response.Headers["WWW-Authenticate"];
    var code = ex.Response.StatusCode;
    if (code == 401)
        _token = null;
}
```

and our token has expired, as it will after 60 minutes, an exception will occur. We can then just null out the cached SWT, so that any retries force our code to acquire a new SWT.
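The null-out-and-retry idea can be folded into a small wrapper so that each call gets exactly one retry with a fresh token after a 401. Below is a minimal sketch with hypothetical names (TokenCache, WithTokenRetry), and it is not thread-safe. The acquire delegate stands in for the ACS request in GetToken(), and the isUnauthorized predicate is how the caller says which failures mean “token rejected”:

```csharp
using System;

// Hypothetical helper: caches a token and refreshes it at most once per call
// when the service rejects the cached token.
public sealed class TokenCache
{
    readonly Func<string> _acquire;
    string _token;

    public TokenCache(Func<string> acquire) => _acquire = acquire;

    // Returns the cached token, acquiring a new one if none is cached.
    public string Get() => _token ??= _acquire();

    // Forgets the cached token so the next Get() acquires a fresh one.
    public void Invalidate() => _token = null;

    // Runs 'call' with the current token. If it fails with an exception that
    // 'isUnauthorized' recognizes (e.g. a 401), invalidates and retries once.
    public T WithTokenRetry<T>(Func<string, T> call, Func<Exception, bool> isUnauthorized)
    {
        try
        {
            return call(Get());
        }
        catch (Exception ex) when (isUnauthorized(ex))
        {
            Invalidate();
            return call(Get());
        }
    }
}
```

With the WCF Data Services client, isUnauthorized might be something like ex => ex is DataServiceQueryException q && q.Response.StatusCode == 401. The call lambda would need to enumerate ctx.Orders itself (for example with ToList()) so that the query actually executes inside the retry scope.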

Summary

In this post we’ve come a long way. We’ve now got a simple OData and OAuth WRAP authentication scenario working end to end.

It is a good foundation to build upon. But there are a few things we can do to make it better.

We could:

- Configure our ACS to federate identities across domains, and configure our client code to do SWT exchange to go from one domain to another.

- Create an expiring cache of Principals so that we don’t need to re-validate every time a new request is received.

- Upgrade our Principal object so it can handle more general claims rather than just User/Roles.

We’ll address these issues in Part 9.

Vittorio Bertocci (@vibronet) announced “Programming Windows Identity Foundation” has been sent to the printer and “[this may look weird at first, but bear with me]” on 9/18/2010:

The Roman numerals notation emerged with Roman civilization itself, around the 9th century BC, though its roots go all the way back to the Etruscans.

It is not an especially handy system: it’s not well suited for representing large numbers, and arithmetic (especially multiplication and division) gets tricky real fast. Nonetheless, it beats counting on fingers or scratching sticks and stones, and it backed the growth and development of Western civilization for more than 2 millennia. Although scientists and professionals managed to do their thing despite the inherent complexities of the system, the layman was forced to rely on experts for anything beyond trivial accounting.

What I find absolutely amazing is that Europe got exposed to Hindu-Arabic numerals, an obviously superior system, before the year 1000; and our good Fibonacci, who learned about the system in Africa, even wrote a book about it. Despite that, pretty much everybody stubbornly stuck with the old system well into medieval times.

You know what changed everything? Printing. Once printing was invented, information started to circulate fast and the superiority of the new system became evident to a wider and wider audience. Network effect and Darwinian selection did the rest, and today we pretty much all use the new system. Now anybody with basic education can do most of the math he or she needs, and science has advanced to marvels which I doubt would have been invented or discovered if we were stuck in some Roman numerals-fueled steampunk nightmare.

Why did I bore you with that tangent? Because I believe there’s an important lesson to be learned here: no matter how incredibly good an idea is, it’s the availability of the right technology that can make or break its fortunes.

The idea of claims has been around for quite some time now; however, despite the wide consensus it gathered, it didn’t enjoy widespread adoption until recently. In fact, you need only look at our platform to observe a Cambrian explosion of products and services that are taking hard dependencies on claims. What happened? Why now?

I’ll tell you what happened on our platform: Windows Identity Foundation showed up on the scene. Windows Identity Foundation is at the heart of Active Directory Federation Services and SharePoint 2010, and it can easily be at the heart of your applications and services, too. Windows Identity Foundation gave legs to ideas that, while very compelling, often failed to cross the chasm between the whiteboard and a functioning token deserializer or a manageable STS.

Windows Identity Foundation is what makes it possible for you to take advantage of the claims-based identity patterns, without feeling the pain of implementing the entire stack yourself. Since 2007 my job included evangelizing Windows Identity Foundation: a great experience, from which I learned a lot. One of the things which I’ve observed is that oftentimes people have a hard time using WIF in the right way, because they are stuck in mental models tied to the artifacts of the old way of doing things, such as dealing with credentials and protocols directly. This happens to security experts and to generalist developers alike. Invariably, just a bit of help in seeing things from the right angle is enough to push people past the bump and unleash great productivity; like many things on the Internet, once seen claims-based identity cannot be un-seen. The frustrating part of this is, though, that without that little help it’s not always easy to go past the bump. If you follow this blog you know that we go out of our way to provide you with samples, learning materials and occasions to learn through live and online sessions: but I wanted to do more, if possible. I wanted to capture some of the experience I gathered in the last few years and package it in a format that beginners and experts alike could consume.

The result of that effort was sent to the printers yesterday: the book Programming Windows Identity Foundation.

In later posts I may go into further detail about the table of contents, the people who contributed to the book, and even some content excerpts. Right now, though, I just want to breathe and look back at the reasons I took on this commitment, which is what I did while writing this weird post.

Writing this book has been hard work, but I truly, truly hope that it will help you past the bumps you may encounter and fully enjoy the power of claims-based identity.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

The Windows Azure Team posted a Real World Windows Azure: Interview with Sinclair Schuller, Chief Executive Officer at Apprenda case study on 8/19/2010:

As part of the Real World Windows Azure series, we talked to Sinclair Schuller, CEO at Apprenda, about using the Windows Azure platform to deliver the company's middleware solution, which helps Apprenda's customers deliver cloud applications. Here's what he had to say:

MSDN: Tell us about Apprenda and the services you offer.

Schuller: Apprenda serves independent software vendors (ISVs) in the United States and Europe that build business-to-business software applications on the Microsoft .NET Framework. Our product, SaaSGrid, is a next-generation application server built to solve the architecture, business, and operational complexities of delivering software as a service in the cloud.

MSDN: What was the biggest challenge you faced prior to implementing the Windows Azure platform?

Schuller: We wanted to offer customers a way to offload some of their server capacity needs to a cloud solution and integrate their cloud capacity with their on-premises capacity. We looked at other cloud providers, like Google App Engine and Salesforce.com, but those are fundamentally the wrong solutions for our target customers because they do not allow developers enough flexibility in how they build applications for the cloud.

MSDN: Can you describe the solution you built with the Windows Azure platform?

Schuller: SaaSGrid is the unifying middleware for optimizing application delivery for any deployment paradigm. With SaaSGrid and the Windows Azure platform, we can offer our customers more infrastructure options, and they can build very sophisticated applications with the .NET Framework. We also see cloud computing as the biggest shift in how infrastructure is provisioned and consumed, and Windows Azure gives customers the ability to take full advantage of the simplified provisioning and database scaling capabilities of Windows Azure and Microsoft SQL Azure.

MSDN: What makes your solution unique?

Schuller: The software industry is moving to a software-as-a-service model. Embracing this change requires developers to refactor existing applications and build out new infrastructure in order to move from shipping software to delivering software. By coupling the infrastructure capabilities of Windows Azure with SaaSGrid, we can offer our customers an incredibly robust, highly efficient platform at a low cost. Plus, customers can go to market with their cloud offerings in record time.

MSDN: What benefits have you seen since implementing the Windows Azure platform?

Schuller: With SaaSGrid and Windows Azure, ISVs can move their existing .NET-based applications to the cloud up to 60 percent faster and with 80 percent less new code than developing from the ground up. Customers avoid significant capital investment and attain lower application delivery costs while ensuring application responsiveness. At the same time, with Windows Azure, customers can plan an infrastructure around baseline average capacity, rather than building around peak compute-intensive loads, and offset peak loads with Windows Azure. This helps our customers reduce their overall IT infrastructure footprint by as much as 70 percent.

For more information about Apprenda, visit: www.apprenda.com. To read more Windows Azure customer success stories, visit: www.windowsazure.com/evidence

Scott Dunsmore, et al., released patterns & practices - Windows Phone 7 Developer Guide to CodePlex on 8/17/2010:

Welcome to patterns & practices Windows Phone 7 Developer Guide community site

This new guide from patterns & practices will help you design and build applications that target the new Windows Phone 7 platform.

The key themes for these projects are:

As usual, we'll periodically publish early versions of our deliverable on this site. Stay tuned!

- Project Overview

- High level Architecture

- UI Mockups

Project's scope

Scott continues with lists of the project members’ blogs and recent posts.

<Return to section navigation list>

Visual Studio LightSwitch

Mary Jo Foley (@maryjofoley) reported Microsoft starts Beta 1 rollout of new LightSwitch dev tool on 8/19/2010:

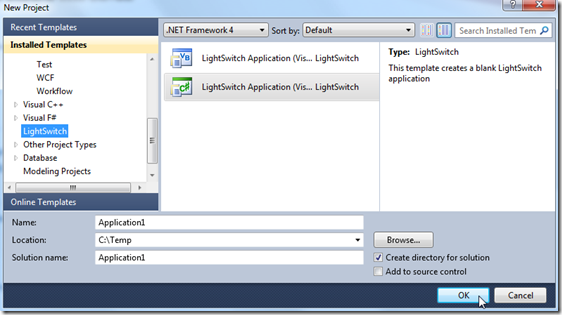

Microsoft is making the first beta of its LightSwitch development tool available to Microsoft Developer Network (MSDN) subscribers today, according to an August 19 Microsoft blog post.

Microsoft plans to make the LightSwitch beta available to the public on Monday, August 23, officials said earlier this month.

Microsoft is positioning LightSwitch, codenamed “KittyHawk,” as a way to build business applications for the desktop, the Web and the cloud. It’s a tool that relies on pre-built templates to make building applications easier for non-professional programmers. Microsoft officials have said LightSwitch is designed to bring the Fox/Access style of programming to .Net.

The LightSwitch Beta 1 documentation is available now on MSDN. The introduction to the documents makes it clear that while LightSwitch is meant to simplify development, it isn’t for non-programmers:

“The process of creating an application by using LightSwitch resembles other development tools. Connect to data, create a form and bind the data to the controls, add some validation based on business logic, and then test and deploy. The difference with LightSwitch is that each one of those steps is simplified.”

Many professional programmers have made their misgivings about LightSwitch public, claiming that non-professionals could end up creating a bunch of half-baked .Net apps using LightSwitch. Microsoft officials have countered those objections by saying LightSwitch applications can be handed off to professional developers to carry forward if/when needed.

LightSwitch allows users to connect their applications to Excel, SharePoint or Azure services. Applications built with LightSwitch can run anywhere Silverlight can — in a variety of browsers (Internet Explorer, Safari, Firefox), on Windows PCs or on Windows Azure. Microsoft is planning to add support for Microsoft Access to LightSwitch possibly by the time Beta 2 rolls around. Support for mobile phones won’t be available in version 1 of the product, Microsoft officials have said.

Microsoft officials have said LightSwitch will be a 2011 product.

(Note: I’m finding a number of the links on the MSDN blogs aren’t working and are redirecting users to log in. The pointer in the MSDN availability blog post to which I pointed at the start isn’t working. I have a question in to Microsoft about TechNet and BizSpark availability of the first LightSwitch beta. I’ll update when I hear more.)

Update: Only MSDN subscribers are getting the Beta 1 LightSwitch bits this week. TechNet, BizSpark and other users all have to wait until August 23, a Microsoft spokesperson confirmed.

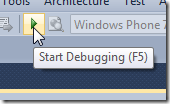

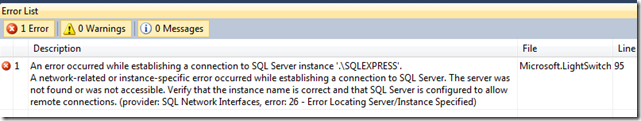

I downloaded and installed LightSwitch Beta 1 on 8/19/2010 without incident. However, make sure you have a running instance of SQL Server 200x Express named SQLEXPRESS with Named Pipes and TCP enabled before compiling a project. (Like Windows Azure, the LightSwitch server uses this instance as a local development database.) If you’re running SQL Server 2008 R2 Express, make sure the version is RTM (10.50.1600.1).
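The RTM check in the preceding paragraph can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not a Microsoft tool. It assumes you have already read the instance's version string yourself, for example by running `SELECT SERVERPROPERTY('ProductVersion')` against the .\SQLEXPRESS instance. The script itself does not connect to SQL Server. The helper names `parse_version` and `is_pre_rtm_2008_r2` are hypothetical.

```python
# Hypothetical helpers: flag a SQL Server 2008 R2 (10.50.x) build that is
# older than the RTM build 10.50.1600.1 noted above.
# Assumption: the version string was obtained separately, e.g. via
#   SELECT SERVERPROPERTY('ProductVersion')
# This sketch does not query SQL Server.

RTM_2008_R2 = (10, 50, 1600, 1)


def parse_version(text: str) -> tuple:
    """Convert a dotted version string such as '10.50.1600.1' to a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def is_pre_rtm_2008_r2(text: str) -> bool:
    """True only for a 10.50 (2008 R2) build that sorts before the RTM build."""
    version = parse_version(text)
    # Tuples compare element by element, so (10, 50, 1352, 12) < (10, 50, 1600, 1).
    return version[:2] == RTM_2008_R2[:2] and version < RTM_2008_R2


if __name__ == "__main__":
    print(is_pre_rtm_2008_r2("10.50.1600.1"))   # False: this is the RTM build
    print(is_pre_rtm_2008_r2("10.50.1352.12"))  # True: an earlier 10.50 build
    print(is_pre_rtm_2008_r2("10.0.1600.22"))   # False: 10.0 is 2008, not 2008 R2
```

Comparing integer tuples rather than raw strings avoids the classic pitfall where "10.50.900" would sort after "10.50.1600" as text.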

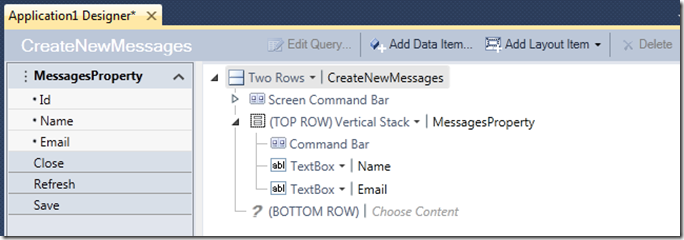

Michael Washington wrote The First Hour With LightSwitch –BETA- on 8/18/2010, the night LightSwitch bits were first available to MSDN subscribers:

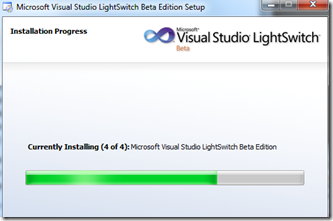

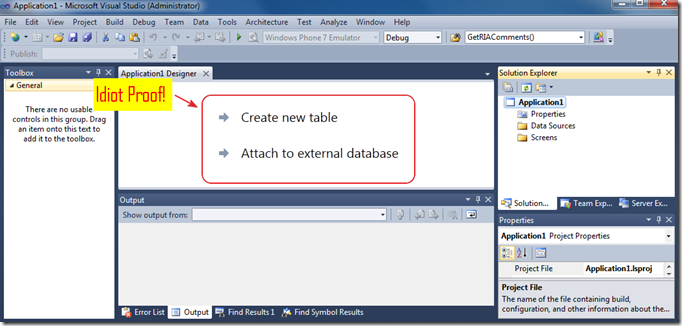

8:40 pm – I downloaded LightSwitch and it’s installing. I downloaded the .iso image and mounted it with PowerISO. This is a Beta and the first one at that. All I hope to see is “idiot proof”. I already know how to program full-scale Silverlight applications.

This is not for me. It’s for “you” and “them”. The people who will hopefully have a tool that allows them to build useful applications… that will need professionals like me when they are ready to take them to the next level.

Ok let’s do this…

Hmm that wasn’t what I was expecting to see next… (I don’t know what I expected actually…)

Ok now I’m wandering aimlessly… I hope this is what I’m supposed to do next…

Whew! Ok I think I’m back on track…

Yes! We have already achieved the start of Idiot Proof! (Must buy more MSFT stock…)

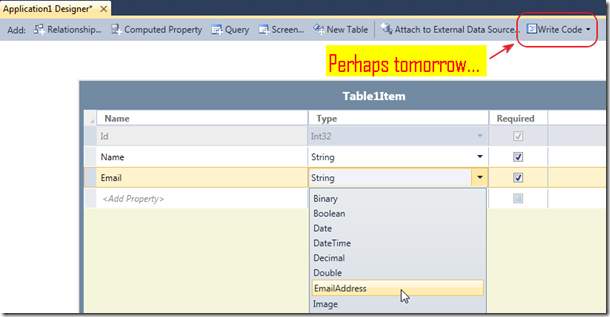

I spend 1 minute making a table that I call Messages.

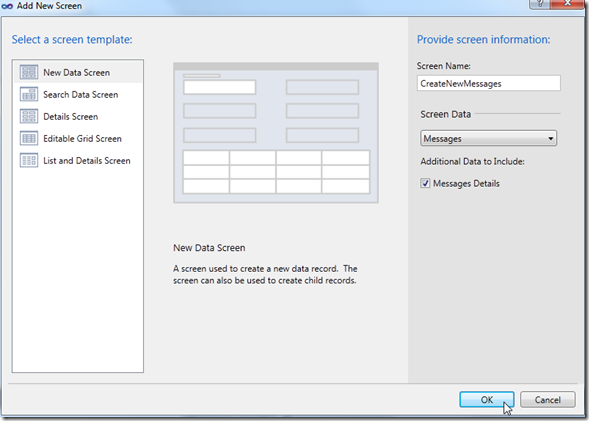

I click on the Screen button.

Ok all this seems obvious. I am doing this as it happens so at this point I do not know if this will actually work…

Hmm… now what am I looking at? Must stare at this screen for a bit…

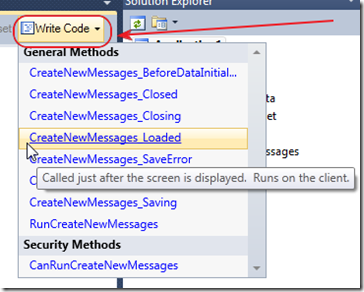

Ok I couldn’t resist, I had to click the Write Code button. Hmm, I like what I see: you are actually supposed to be able to write code if you need to.

But, I want to see what you can do if you don’t know how to write code.

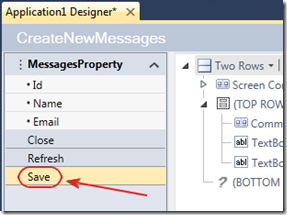

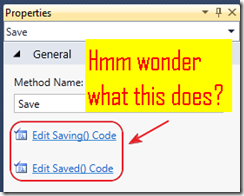

Ok, one small detour. If I click on the “Save bar”…

I see additional places to write code :)

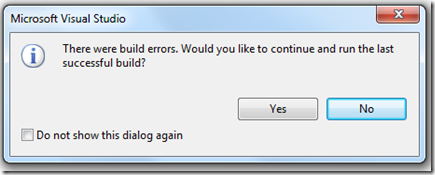

But, I have to admit I am totally lost. When in doubt, hit F5…

Nooooooo

(sigh)

[Exactly what happened to me! My copy of SQL Server 2008 R2 Express had outlived its “evaluation period” and wouldn’t start.]

Ok I cannot resist a small rant. This is not ok; it must work, period. If this were Microsoft Access it would work, period. This kind of thing is also the problem I have with WCF: it is hard to deploy. The fact that it is so great means nothing if it doesn’t work. Things must work, period. Ok, rant over.

In case you didn’t notice, I am no longer wearing my “happy face”. However, this is beta software, so consider the rant above my “early feedback”. At this point a “Popup Wizard” should open up and guide me through solving the connection problem.

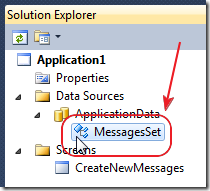

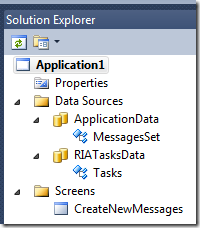

Ok now I have my “professional programmer” hat on and I’m gonna try to figure this one out. I double-click on MessagesSet in the Solution Explorer…

Click on Attach to External Data Source…

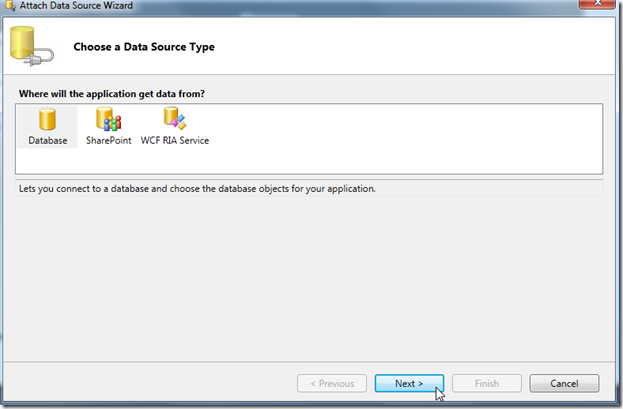

Umm let’s click Database and then Next…

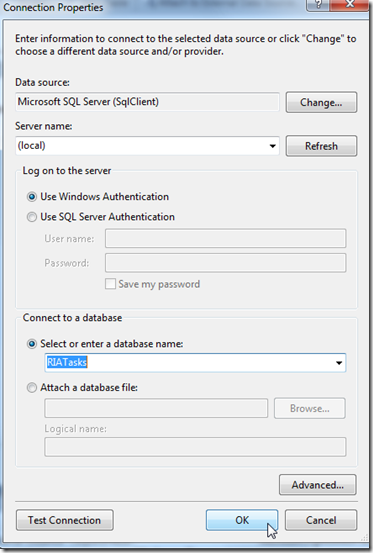

Ok I am connecting to one of my existing databases because I don’t know what else to do…

Ok I am connecting to the database used in this tutorial: RIATasks: A Simple Silverlight CRUD Example

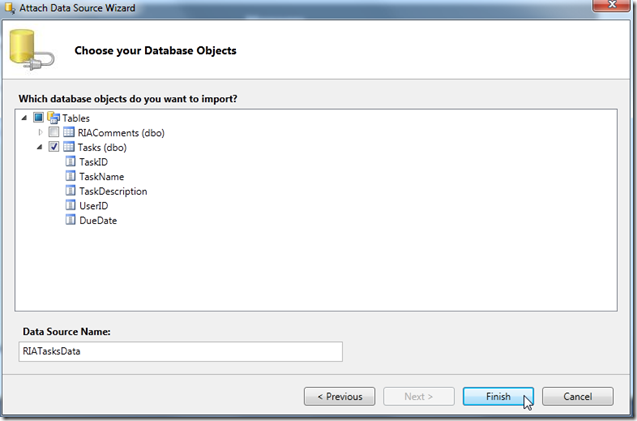

I point that out because while that tutorial is easy, it is still a LOT for a person to go through to create an application. My hope is that LightSwitch will make things like that easy.

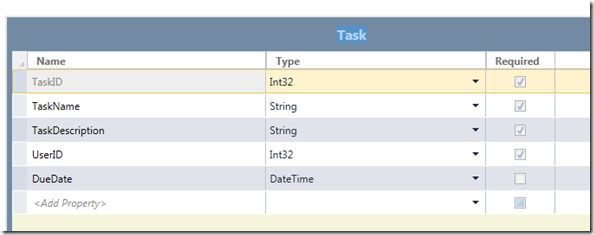

Now that it looks like I won’t have a problem connecting to my existing data source, let’s try to build an application with my existing Tasks table…

Ok fine, the smile is starting to return…

However, the Solution Explorer now looks like the image above. I am going to delete MessagesSet and the CreateNewMessages screen…

Michael continues with a “Second Try: Application 2.” So far, I’m as nonplussed as Michael. LightSwitch doesn’t appear intuitive to me, but I’ve only spent an hour with it.

Beth Massi (@BethMassi) reported LightSwitch Beta 1 Available to MSDN Subscribers Today, General Public on Monday on 8/18/2010 with a few additional links:

We just released Visual Studio LightSwitch Beta 1 to MSDN subscribers. Public availability will be this Monday, August 23rd, but if you are an MSDN subscriber, visit your subscriptions page to get access to the download now. Otherwise, check the LightSwitch Developer Center on Monday for the public download.

Here are some resources to help get you started -- we have a lot more for you on Monday via the Dev Center so stay tuned!

- LightSwitch Beta 1 Documentation on MSDN

- Vision Clinic Application Walkthrough and Sample

- LightSwitch Forum

- LightSwitch on Channel 9

- LightSwitch Team Blog -- yes I am there too ;-)

We're looking forward to your feedback,

Paul Patterson (@PaulPatterson) posted Microsoft LightSwitch – Creating My First Table and the two following LightSwitch articles on 8/18/2010:

Alrighty then. In my last post I documented my experiences in using my Visual Studio environment to create a new LightSwitch project. Now that I have my project created, and a few hours of sleep, I am going to wrangle some data together to create a simple table. What’s a Table? In my previous life as [...]

Microsoft LightSwitch – First Use

Oky doky, here we go… We’ve seen all the launch material, presentations, videos, MSDN stuff, blogs, and even followed the forums. Now it is time to sink some teeth into this LightSwitch. Here’s the deal though. Instead of doing a one-off post on the end-to-end process of creating an application, I am going to take [...]

Microsoft LightSwitch – My First #fail at Beta 1 Installation

Hey, I don’t need a map. I know where I am going… Maybe I should have read the instructions before attempting the beta 1 install of LightSwitch. I fired up the virtual DVD drive and double clicked Setup.exe. The excitement was killing me… Doh! You would think I would have known better when I saw the “…Beta Prerequisite…” [...]

Paul Patterson (@PaulPatterson) announced Microsoft LightSwitch – Beta 1 Available for Download to MSDN Subscribers on 8/18/2010:

If you are an MSDN subscriber then you can now download the Beta 1 of Visual Studio LightSwitch (here).

I was out at an appointment so I missed the announcement this afternoon, otherwise it would have already been downloaded, installed, and a blog post up about the installation experience already.

LightSwitch Beta 1 Documentation on MSDN

Vision Clinic Application Walkthrough and Sample

Here goes…

Paul Patterson (@PaulPatterson) posted Microsoft LightSwitch – A Value Proposition for the Enterprise on 8/17/2010, a day before Microsoft released Beta 1 to MSDN subscribers:

LightSwitch is a tool that will be used to easily create .NET applications for the desktop as well as for the “cloud”. LightSwitch takes software development best practices and encloses them in an easy-to-use tool that developers can use to quickly build data-centric Silverlight 4 applications.

The more I understand LightSwitch, the more I can understand what the value proposition will be for using LightSwitch in a larger enterprise. Although LightSwitch is not targeted specifically at organizations with dedicated IT business units, there is some value in enabling an enterprise with the tool.

My life in IT started out in a role as a Business Analyst. A Business Analyst is, essentially, the liaison between an organization’s business units and its information technology group. From line-of-business application support to defining the requirements of a brand new system, a business analyst wears many hats. Fundamental to the role is making sure that IT-related issues are mapped and measured directly against the overall goals and objectives of the organization. I’ve worked as a Business Analyst for many years with a number of organizations, and have arguably worn every hat possible.

Here are some of the things I have seen and learned over the years as a Business Analyst, as well as in many other roles.

Empowerment

Like it or not, non-IT business units are going to build and use IT solutions.

Every single organization I have worked for has technology-related solutions that are not, or were not originally, under the watchful and controlling eye of that organization’s information technology group. I have seen everything from synchronizing mobile device applications to single-user database applications being created and used by non-IT business units. Often these are applications that were created without the support of the IT group.

From a technology perspective, the larger the organization is, the more disparate business units become. This is likely because the group that is charged with managing an enterprise’s technology must carefully place priority on the biggest issues. A small business unit that needs a technology solution, like a simple database application, is likely not going to be put high on the IT priority list. And even if they get on the list, there are plenty of bigger, enterprise class IT fish that need to be fried.

Knowing Enough to be Dangerous

Everyone in an organization has goals and objectives. Business units have their own goals mapped to the greater good of the enterprise. So if there is a potential to leverage a technology solution to help meet their own objectives, and they are not going to get support from the IT group, then what are they to do?

There were times when I would spend 100% of my time on issues with software applications built by business units that had little or no experience in creating software. These applications were needed sooner rather than later and were created to solve real business problems. The risk of not achieving or solving a business need was such that a solution was required, regardless of its quality and supportability.

Microsoft Access based applications are a prime example of how business units create tools that help solve common business problems. Most of the time these types of applications are created by people who have very little experience with software design. I have seen many Access based applications created with table structures that were simply the duplication of existing Excel spreadsheets. With a little reading, a person can quickly replace a spreadsheet solution with an Access based application with tables, queries, and forms for data visualization.

Usage of business unit created applications grows quickly. Without formal IT support and control, business units can quickly respond to their own requirements. It is not uncommon to see simple applications like these become mission critical for the business unit. Even more growth occurs across business unit domains where other groups see and recognize the value in the solution. Before long, that once simple Access based application has become a division wide line-of-business application.

Where’s the Value?

LightSwitch has been criticized by some as being a Microsoft Access replacement, or as a tool that does the same as Access: a drag-and-drop development tool. In fact, LightSwitch is not a replacement for Access. Instead, LightSwitch offers an application developer a richer set of tools that delivers applications in a quicker and more effective way than Access can.

That said, Access is still a great tool; however, it would take a lot more time and effort to build in Access an application that can be created using LightSwitch. Creating an Access application with a three-tier architecture, model-driven abstraction, and business logic and data tiers distributed via the cloud… You do the math. Without training and experience, how long would it take you to create an Access-based application to do all that?

From an enterprise perspective, there are a couple of value propositions here.

LightSwitch offers a data-centric approach to creating applications, without requiring a developer to know much about the plumbing required to create databases and user interfaces. In Access, a user needs to design tables, queries, and forms, and then wire the forms to the data. This workflow is somewhat similar in LightSwitch; however, LightSwitch makes it much more intuitive.

Using this data-centric approach, LightSwitch creates an application using software design best practices, without requiring a developer to write a single line of code. Creating sources of data is one thing, but wiring up the business logic, communication with the data source, and data presentation is another. LightSwitch takes care of all that for you. From a business perspective, that means the developer saves time by not having to manually create the coding infrastructure needed to do all that.