Windows Azure and Cloud Computing Posts for 8/9/2010+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA)

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Major NoSQL coverage in this issue: See the Azure Blob, Drive, Table and Queue Services section. All use Azure Drive for persistence.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now freely download by FTP and save the following two online-only PDF chapters of Cloud Computing with the Windows Azure Platform, which have been updated for SQL Azure’s January 4, 2010 commercial release:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available for download at no charge from the book's Code Download page.

Tip: If you encounter articles from MSDN or TechNet blogs that are missing screen shots or other images, click the empty frame to generate an HTTP 404 (Not Found) error, and then click the back button to load the image.

Azure Blob, Drive, Table and Queue Services

My (@rogerjenn) Windows Azure-Compatible NoSQL Databases: GraphDB, RavenDB and MongoDB post of 8/9/2010 begins:

I’m guessing that the properties of these three NoSQL databases are what the original SQL Server Data Services (SSDS) and later SQL Data Services (SDS) teams had in mind when they developed their original SQL Server-hosted Entity-Attribute-Value (EAV) database. GraphDB is the newest and one of the most interesting of the databases.

SQL Azure has become very popular among .NET developers working on Windows Azure deployments due to its relatively low cost for 1-GB Web databases (US$9.99 per month). A single small Worker role instance of a NoSQL database costs US$ 0.12 * 24 * 30 = $86.40/month average, not counting storage charges.
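The cost comparison above is simple arithmetic; a minimal sketch follows, assuming the post's figures (US$0.12 per small-instance hour, a 30-day month, US$9.99 for a 1-GB Web database). The function and constant names are illustrative, not part of any Azure API.

```python
# Monthly compute cost for always-on role instances, using the post's figures.
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30  # averaging assumption taken from the post


def monthly_compute_cost(hourly_rate_usd: float, instances: int = 1) -> float:
    """Return the monthly compute charge in US dollars, rounded to cents."""
    total = hourly_rate_usd * HOURS_PER_DAY * DAYS_PER_MONTH * instances
    return round(total, 2)


SQL_AZURE_WEB_1GB_USD = 9.99  # price quoted in the post

if __name__ == "__main__":
    worker = monthly_compute_cost(0.12)
    print(f"One small Worker role: ${worker:.2f}/month")
    print(f"Ratio to a 1-GB Web database: {worker / SQL_AZURE_WEB_1GB_USD:.1f}x")
```

Storage charges are left out here, as they are in the post's own estimate.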

As more details on the scalability and availability of these Azure-compatible databases become available, I’ll update this post.

The article continues with the following topics:

- GraphDB

- RavenDB

- MongoDB

- Windows-Compatible NoSQL Databases

Alex Popescu reported sones GraphDB available on Microsoft Windows Azure to his myNoSQL blog on 8/9/2010:

sones GraphDB available in the Microsoft cloud:

“The sones GraphDB is the first graph database which is available on Microsoft Windows Azure. Since the sones GraphDB is written in C# and based upon Microsoft .NET it can run as an Azure Service in its natural environment. No Wrapping, no glue-code. It’s the performance and scalability a customer can get from an on-premise hosted solution paired with the elasticity of a cloud platform.”

You can read a bit more about it ☞ here.

In case you’ve picked [an]other graph database, you can probably set it up with one of the cloud providing Infrastructure-as-a-Service.

Reading List:

- sones Releases First Open Source Version of GraphDB

- Video: Emil Eifrem about NoSQL and the Benefits of Graph Databases

- NoSQL Graph Database Matrix

- InfiniteGraph Graph Database Reaches 1.0 Release

- Quick Review of Existing Graph Databases

You might like:

Alex’s post has an interesting set of “Most Read Articles” and “Latest Posts”. He describes his site:

myNoSQL features the best daily NoSQL news, articles and links covering all major NoSQL projects and following closely all things related to the NoSQL ecosystem.

myNoSQL is maintained by Alex Popescu, a code hacker, geek co-founder and CTO of InfoQ.com, web and NoSQL aficionado.

<Return to section navigation list>

SQL Azure Database, Codename “Dallas” and OData

Wayne Walter Berry (@WayneBerry) explained How to Tell If You Are Out of Room in an SQL Azure database in this 8/10/2010 post to the SQL Azure blog:

SQL Azure databases are capped in size; one reason for this is that we don’t want to send you a surprise bill if your data grows beyond your expectations. You can always increase or decrease the cap of your database (up to 50 Gigabytes); however this is not done automatically. This article will discuss the various tricks and tips to handling the size of your SQL Azure databases, including: making them bigger, querying your current cap, figuring out the current size, and detecting size errors.

One thing to note before we get started: you are not charged based on the database cap; you are charged for the data that the database is actually holding, based on ranges in each edition of the database. For the web edition those ranges are 0-1 Gigabytes and 1-5 Gigabytes. For business edition those ranges are 0-10 Gigabytes, 10-20 Gigabytes, 20-30 Gigabytes, 30-40 Gigabytes, and 40-50 Gigabytes.
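The range-based billing described above can be sketched as a small lookup. This is only an illustration: the names are hypothetical, gigabytes are assumed to be binary (1,073,741,824 bytes), and a database sitting exactly on a boundary is assumed to stay in the lower range.

```python
from typing import Tuple

GIB = 1024 ** 3  # assumption: binary gigabytes

# Billing ranges per edition, in gigabytes, as listed in the post.
BILLING_RANGES_GIB = {
    "web": [(0, 1), (1, 5)],
    "business": [(0, 10), (10, 20), (20, 30), (30, 40), (40, 50)],
}


def billing_range(edition: str, data_bytes: int) -> Tuple[int, int]:
    """Return the (low, high) range in gigabytes that data_bytes falls into."""
    size_gib = data_bytes / GIB
    for low, high in BILLING_RANGES_GIB[edition.lower()]:
        # Assumption: a size exactly at a boundary bills at the lower range.
        if size_gib <= high:
            return (low, high)
    raise ValueError(f"{size_gib:.2f} GB exceeds the largest {edition} range")


if __name__ == "__main__":
    print(billing_range("web", int(0.8 * GIB)))
    print(billing_range("business", 12 * GIB))
```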

Current Cap

You can query the current cap of your SQL Azure database using this Transact-SQL query:

SELECT DATABASEPROPERTYEX ('AdventureWorksLTAZ2008R2' , 'MaxSizeInBytes' )

Obviously, since the property is named MaxSizeInBytes, the result is in bytes; SQL Azure rightly treats a Gigabyte as 1,073,741,824 bytes, not 1,000,000,000 bytes. One thing to note: the database argument should be the same as the current database; otherwise the result will be NULL.

Current Size

You can query the current size of your database using the query below:

SELECT SUM(reserved_page_count) * 8192 FROM sys.dm_db_partition_stats

The results of this query are also returned in bytes, so you can compare them to the current cap returned by the query above. Pop quiz: When will the current size be bigger than the current limit? Answer: Never. If you try to update or insert data that exceeds the limit you will get a SQL error.
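To show how the two query results relate, here is a minimal sketch that turns a reserved page count into bytes (8,192 bytes per page, as in the query) and compares it with a cap in bytes. The function names and the sample numbers are made up for illustration; no database connection is involved.

```python
PAGE_BYTES = 8192   # page size used in the post's size query
GIB = 1024 ** 3     # 1,073,741,824 bytes, as the post notes


def used_bytes(reserved_page_count: int) -> int:
    """Bytes reserved by the database: SUM(reserved_page_count) * 8192."""
    return reserved_page_count * PAGE_BYTES


def fraction_of_cap(reserved_page_count: int, max_size_in_bytes: int) -> float:
    """Share of the cap (the MaxSizeInBytes value) currently in use."""
    return used_bytes(reserved_page_count) / max_size_in_bytes


if __name__ == "__main__":
    pages = 65536  # hypothetical value returned by the size query
    print(used_bytes(pages), "bytes used")
    print(f"{fraction_of_cap(pages, 1 * GIB):.0%} of a 1-Gigabyte cap")
```

A check like this could run on a schedule and warn well before error 40544 appears; the threshold to warn at is a design choice the post does not prescribe.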

Detecting the Size Error

If you are out of room in your SQL Azure database you will get error 40544. The error message is: “The database has reached its size quota. Partition or delete data, drop indexes, or consult the documentation for possible resolutions.”

In SQL Server Management Studio it looks like this:

Msg 40544, Level 20, State 5, Line 1

The database has reached its size quota. Partition or delete data, drop indexes, or consult the documentation for possible resolutions. Code: 524289

If you wanted to trap this error in your C# code you could do this:

try
{
    // ...
}
catch (SqlException sqlException)
{
    switch (sqlException.Number)
    {
        // The database has reached its size quota. Partition or delete data,
        // drop indexes, or consult the documentation for possible resolutions.
        case 40544:
            break;
    }
}

Automatically Increasing the Database Cap

Wouldn’t it be nice to increase the cap when the database grew beyond the current cap? SQL Azure almost performs that auto growth for you, because we only charge you for the data you are using, not based on the database cap. For example, if you have .8 Gigabytes of data and a cap of 5 Gigabytes you are only charged for the 0-1 Gigabyte range. When the database gets larger than 1 Gigabyte, there are no errors; however, from that day onward you are charged for the 1-5 Gigabyte range. In essence this is auto growth: you tell us the maximum that the database is allowed to auto grow to, and we charge you only for the range that you are using. If you grow beyond your current range, we automatically charge you for the next range, without errors.

The caveat to this auto growth is that SQL Azure currently will not let you “jump” between web edition and business edition. You can have 1, 5, 10, 20, 30, 40 and 50 Gigabyte database caps. The one and five gigabyte databases are part of the WEB edition; in order to get larger database caps you need to change to the BUSINESS edition. This means that as your database grows, you need to keep track of that growth, and execute Transact-SQL (see the code in the next section) to change editions.
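The cap ladder and the edition rule above can be sketched as a helper that picks the next cap and builds the matching statement. The helper names are hypothetical, the statement text follows the ALTER DATABASE form shown later in this post, and the database name is inserted without quoting, so this is only suitable for trusted names.

```python
from typing import Optional, Tuple

# (edition, cap in gigabytes) in ascending order, as listed in the post.
CAP_LADDER = [
    ("WEB", 1), ("WEB", 5),
    ("BUSINESS", 10), ("BUSINESS", 20), ("BUSINESS", 30),
    ("BUSINESS", 40), ("BUSINESS", 50),
]


def next_cap(current_cap_gb: int) -> Optional[Tuple[str, int]]:
    """Return the next (edition, cap) above current_cap_gb, or None at 50 GB."""
    for edition, cap_gb in CAP_LADDER:
        if cap_gb > current_cap_gb:
            return (edition, cap_gb)
    return None


def alter_statement(database: str, edition: str, cap_gb: int) -> str:
    """Build the T-SQL text; it must be sent in a batch by itself."""
    return (f"ALTER DATABASE {database} "
            f"MODIFY (EDITION='{edition}', MAXSIZE={cap_gb}GB)")


if __name__ == "__main__":
    step = next_cap(5)  # a full 5-GB WEB database must move to BUSINESS
    if step is not None:
        print(alter_statement("AdventureWorksLTAZ2008R2", *step))
```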

Coding Edition Changes

I was thinking about writing a little C# code data layer to automatically increase the database cap or the edition whenever an exception was caught with a SQL Server error number of 40544. This would be something to add to your Windows Azure web role, so that your database could continue growing.

However, there is a concurrency problem with this concept. Let’s say that your web site was getting thousands of connections per second, and just when the database was full, three or four threads on the Windows Azure server got error 40544. The threads would all attempt to increase the database size, making the database grow larger than it needs to be. The solution might be to transactionally wrap the concept, so that only one thread at a time could increase the database size, and the subsequent threads would do nothing. However, the ALTER DATABASE statement in SQL Azure (the statement to increase the database size) needs to run in a batch by itself and cannot be transactionally wrapped.
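One way to picture the "only one thread grows the cap" idea is an in-process lock with a re-check, sketched below. This is not the post's solution (the author says he has not found one): it serializes threads inside a single process only, so several role instances would still need some shared lock, and the `grow` callback that would issue ALTER DATABASE is a hypothetical stand-in.

```python
import threading
from typing import Callable


class CapGrower:
    """Lets only the first thread that reports a full database grow the cap."""

    def __init__(self, initial_cap_gb: int, grow: Callable[[int], int]):
        self._lock = threading.Lock()
        self._cap_gb = initial_cap_gb
        self._grow = grow  # hypothetical: runs ALTER DATABASE, returns new cap

    def current_cap(self) -> int:
        with self._lock:
            return self._cap_gb

    def on_quota_error(self, cap_seen_gb: int) -> int:
        """Call after catching error 40544 while the cap was cap_seen_gb."""
        with self._lock:
            # Re-check under the lock: another thread may have grown the cap
            # already, in which case this thread does nothing.
            if self._cap_gb > cap_seen_gb:
                return self._cap_gb
            self._cap_gb = self._grow(self._cap_gb)
            return self._cap_gb


if __name__ == "__main__":
    calls = []

    def fake_grow(cap_gb: int) -> int:
        calls.append(cap_gb)  # stands in for the real ALTER DATABASE call
        return 10

    grower = CapGrower(5, fake_grow)
    threads = [threading.Thread(target=grower.on_quota_error, args=(5,))
               for _ in range(4)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    print("grow calls:", len(calls), "cap:", grower.current_cap())
```

Passing in the cap each thread observed is what makes the late arrivals harmless: they find a larger cap under the lock and return without calling `grow` again.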

I am still contemplating an elegant solution for handling a 40544 error, and automatically increasing the database edition; if I do find one I will blog about it. If you have an idea, post it to the comments below.

Changing the Database Size

While we can’t automatically change the cap, you can with some Transact-SQL. I blogged about changing the database cap in detail here. To change the database edition you can use the ALTER DATABASE Syntax like this:

ALTER DATABASE AdventureWorksLTAZ2008R2 MODIFY (EDITION='BUSINESS', MAXSIZE=10GB)

Capped databases can be sized up and sized down. Interestingly enough, if you try to downsize your database and you have too much data for that option, you will get this error:

Msg 60003, Level 16, State 1, Line 1

Operation failed because the resulting cumulative database size would exceed your database sku limit

Doug Ware (@dougware) asserts SharePoint REST (ODATA) is Insecure in this 8/10/2010 post:

SharePoint 2010 includes a number of new services to allow interoperability with other systems and to make it easy to create rich Internet applications. Among these is SharePoint's ODATA implementation provided by the ListData.svc service. Overall, I think the REST is an awesome lightweight alternative to formal SOAP. Unfortunately, the implementation SharePoint provides opens a large attack surface that makes it very easy to discover the information for every user in a site.

Unlike alternative interoperability services like the traditional SOAP based services and the SharePoint Client Object Model, you must access the ListData.svc with an account that has Browse User Information permission. Consider a scenario where you want to allow a system external to your organization to read and write items in a site. You can create a permission level that allows this interaction by granting:

- Add Items

- Edit Items

- Delete Items

- View Items

- Use Remote Interfaces

- Open

An account with this set of permissions allows the use of the Client Object Model and some of the SOAP services to the external system to read and write list items and documents. However, the account can't browse the site's contents because it hasn't got View Pages permission, nor can the account access information about the other accounts with access to the site.

SharePoint's WCF Services implementation, ListData.svc, will not work with the above set of permissions. To make it work, you must grant Browse User Information permission.

There are probably many scenarios where you can justify this, but I personally think that a data interchange technology that reveals the accounts with access to the data store is best avoided considering that the alternatives don't have this flaw.

I hope this is fixed soon, because I'd love to use REST with SharePoint. At the moment I think it would be irresponsible to do so in most of the scenarios where REST is attractive.

Mike Flasko described an OData Extension for Internet Explorer in this 8/10/2010 post:

Most browsers today will automatically enable their RSS/Atom reader option when you're on a page that has a feed in it. This is because the webpage has one or more <link> elements pointing to RSS/Atom endpoints. This led a few of us on the OData team to ask: Wouldn’t it be great if we could do the same for OData feeds and provide rich or alternate data viewing experiences if the data being displayed by a webpage is also exposed as an OData feed?

The first step towards exploring such an experience for OData is that we need a standard way for pages to advertise that their data is exposed using OData. We recently discussed this in the team and Pablo wrote about it here: “Advertising support for OData in web pages”. I won't rehash his post, but one idea is that you include tags such as those below on the page to advertise the service doc and/or feeds for the OData service:

<link rel="odata.service" title="NerdDinner.com OData Service" href="/Services/OData.svc" />

<link rel="odata.feed" title="NerdDinner.com OData Service - Dinners" href="/Services/OData.svc/Dinners" />

---- Extending the Data Browsing Experience ----

Ok, so now webpages can advertise their OData feeds – it's time to look at one way we could make use of such information. For this I built a quick extension for IE which displays an OData button on the main IE toolbar. When you visit a page you can click the button and if the page advertises OData feeds you are one click away from viewing the feed in PowerPivot, Pivot, the OData Explorer or just in the main browser window. Here is the experience with the IE extension installed:

1) Go to nerddinner.com:

2) Click the new “odata button” on the toolbar:

3) The “Consume OData Feed” dialog opens and allows you to select the Service Document for the page or the “Dinners” feed and the desired viewing experience…

4) Selecting the ‘Dinners’ feed and ‘Pivot’ as the viewer and clicking OK launches Pivot. It then automatically pulls the ‘Dinners’ feed and on-the-fly generates the Pivot collection:

Since this extension is generic to any OData feed it works well with any webpage that advertises their OData feed. For example, here it is with odata.stackexchange.com and launching directly into PowerPivot…

2) Automatically loading Stack Exchange data into Excel:

Finally, just as you can launch into standalone viewers like Pivot and PowerPivot, the plugin allows redirecting to other websites which can take OData URIs as parameters. For example, selecting “OData Explorer” from the OData IE Extension when viewing the nerddinner.com site would cause the browser to open this page:

Ok, so that pretty much sums up the experience we’re thinking about. I’ll try to make the code for this IE plugin and possibly the OData JIT collection server for Pivot available as soon as I can. For convenience the code for this stuff currently has dependencies on stuff that we’ve not released in any form yet, so I need to break those dependencies first :) before I can publish the code.
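The discovery step this post relies on, finding `<link>` elements whose `rel` is `odata.service` or `odata.feed`, can be sketched with Python's standard `html.parser`. This is not the IE extension's code, which has not been published; the class and function names are illustrative, and the sample markup reuses the NerdDinner tags quoted above.

```python
from html.parser import HTMLParser
from typing import List, Tuple

ODATA_RELS = ("odata.service", "odata.feed")


class ODataLinkFinder(HTMLParser):
    """Collects (rel, title, href) for link tags that advertise OData."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[Tuple[str, str, str]] = []

    def handle_starttag(self, tag, attrs):
        # Also reached for self-closing <link ... /> tags via the default
        # handle_startendtag implementation.
        if tag != "link":
            return
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower()
        href = attributes.get("href")
        if rel in ODATA_RELS and href:
            self.links.append((rel, attributes.get("title") or "", href))


def find_odata_links(html: str) -> List[Tuple[str, str, str]]:
    finder = ODataLinkFinder()
    finder.feed(html)
    return finder.links


SAMPLE_PAGE = """
<html><head>
<link rel="odata.service" title="NerdDinner.com OData Service" href="/Services/OData.svc" />
<link rel="odata.feed" title="NerdDinner.com OData Service - Dinners" href="/Services/OData.svc/Dinners" />
<link rel="stylesheet" href="/site.css" />
</head><body></body></html>
"""

if __name__ == "__main__":
    for rel, title, href in find_odata_links(SAMPLE_PAGE):
        print(rel, "|", title, "|", href)
```

A viewer would still need to resolve each relative `href` against the page URL before handing it to Pivot, PowerPivot or another consumer.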

Svetla Stoycheva posted a link to Shawn Wildermuth’s tutorial for Using OData with Windows Phone 7 SDK Beta in this 8/10/2010 post to the Silverlight Show blog:

Shawn Wildermuth posted the steps to using OData on WP7 phone.

While I was giving my OData talk, someone asked about consuming OData on the WP7 phone. I had done this on the CTP earlier, but hadn't tried it during the beta. So I figured I'd look into it today. While this is still pretty easy to do, the tooling still isn't in place. This means that you can't simply do an "Add Service Reference" to a Windows Phone 7 project. Instead you have to follow these steps:

Wayne Walter Berry (@WayneBerry) reminded all about this Article: Tips for Migrating Your Applications to the Cloud on 8/9/2010:

Because of the interest many of our corporate customers expressed in Windows Azure, George Huey and Wade Wegner decided to hold a set of Windows Azure Migration Labs at the Microsoft Technology Centers. The intent was for customers to bring their applications into the lab and actually migrate them to SQL Azure.

Through this process, every single customer was able to successfully migrate its Web applications and SQL databases to the Windows Azure platform; they used SQL Azure Migration Wizard. Now George and Wade are going to tell you how it was done in this article from MSDN Magazine.

Read the article here.

<Return to section navigation list>

AppFabric: Access Control and Service Bus

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

The Windows Azure Team sent on 8/9/2010 the following e-mail message to users (like me) with old-timey Azure projects running in data centers:

ACTION RECOMMENDED: If you are using a CTP version of Windows Azure SDK (released before November 2009)

Hello Windows Azure Customer,

While reviewing system logs, we have discovered that one or more of your active deployments in the cloud may be using a Community Technology Preview (CTP) version of Windows Azure SDK. All Windows Azure SDKs released prior to November 2009 were CTP SDKs.

With the release of November 2009 SDK, CTP SDKs were deprecated and are no longer supported. Applications developed using CTP SDKs will no longer work in Windows Azure as of October 1, 2010. It is recommended that you recompile your application with the latest SDK available today and redeploy it.

The latest version of Windows Azure Tools and SDK is available at: http://go.microsoft.com/fwlink/?LinkId=128752

Please note this is not a monitored alias. For additional questions or support please use the resources available here.

Regards,

The Windows Azure Team.

You can run but you can’t hide; the CTP Police will find you.

Beth Massi (@BethMassi) reported New Videos and More Rolling out on LightSwitch Developer Center on 8/10/2010:

A new video by Orville McDonald, Visual Studio Product Manager, just rolled out on the LightSwitch Developer Center on MSDN, in a section that is all about helping you discover the range of things you can do with the product:

Connect to Multiple Data Sources

More of these types of informational videos will roll out here until Beta 1 is released. But what are the LightSwitch product team members doing? Let me tell you!

We’ve got a lot of great content in store as well, including deeper Channel 9 Videos on how to extend LightSwitch (watch this feed) and “How Do I” step-by-step videos and “Getting Started” tutorials on how to actually use the product (watch the dev center). We’ve also got a great line-up of team bloggers on the LightSwitch Team Blog who have started diving into the architecture of LightSwitch in response to the community asking some great questions on the Internet and in the LightSwitch forums.

The LightSwitch Developer Center will collect content from all these sources plus more. It will be your one-stop-shop for everything you need to get up to speed on LightSwitch including a link to the Beta 1 download on August 23rd.

I’m very excited to be part of this product team and even more excited to be learning LightSwitch and creating great content for you on MSDN.

Stay tuned!

Matt Cooper reports Sitefinity CMS 4.0 Beta running on Azure, now! in this 8/10/2010 post to Telerik’s Sitefinity forum:

Couldn't sleep last night so thought I would see how the current Beta got on with Azure. Turns out it seems to work fine already, have a look! :) http://sitefinity.cloudapp.net [see below].

I just created a couple of pages. /home and /test and wrote a tiny widget to get the instance of the Web Role that handled the request. So you can see which instance generated the page, there are currently 2 Web Roles running.

As you can see the whole interface is there too :) http://sitefinity.cloudapp.net/Sitefinity

I will leave it running for a couple of days while I do some testing and anything else I can think of at this early stage.

It ran fine in the development fabric as well so all looks good. The admin interface doesn't seem to mind being load balanced without any configuration and is clearly not using any session state as you can get subsequent requests handled by different instances, cool!

The DB also migrated to SQL Azure without any errors, well done Telerik.

It went a little slowly to start with but I then realised I had put the DB in W Europe and the App in N Europe!! Once I located them together it goes very quickly I think. I am just running 2 small instances for the Web Role. Admin site runs quickly too.

I haven't found anything that doesn't work yet, would appreciate the guidance of the Sitefinity team on what to try..? I would like to write a logging provider so the SF logs can be transferred to Azure storage along with the other diagnostics. Hopefully we can have an image manager provider to store images/videos/docs on Azure Storage which we can then push out to the CDN to speed things up further.

What’s next? I want to develop this, I think SF on Azure is going to be great.

Here’s a capture of the live proof of concept page:

Click here for more information on Sitefinity, an ASP.NET content management system (CMS), which Telerik describes as follows:

Telerik announces the release of the Sitefinity ASP.NET CMS 4.0 Beta, featuring a comprehensive forms module, advanced content versioning, revolutionary digital asset management platform, Fluent API and more.

Gareth Reynolds of the Microsoft Partner Readiness and Training UK team announced New Windows Azure Practice Developer & Architecture courses now available! on 8/10/2010:

Technical Architectural Guidance for Migrating your application to the Windows Azure Platform – NEW!

- Get architectural guidance for migrating your application

- Learn to use the Application Assessment tool

- Explore architectural design considerations for common application types

Assess and plan an application’s migration to Windows Azure – NEW!

The Windows Azure Migration Assessment Tool will allow you to understand the business and technical considerations before migrating to Windows Azure.

How to develop a practice around Windows Azure – NEW!

- View offers and programs which help you get started today

- Understand the value Windows Azure provides to enterprise Customers

- Learn how to develop a practice around Windows Azure

- Read white papers on how to take advantage of the Windows Azure Platform

Wes Yanaga answered Where are the Windows Azure CDN Nodes Located? on 8/10/2010:

The Windows Azure Content Delivery Network (CDN) enhances the user experience by placing copies of data at various locations on the network. Today, the Windows Azure CDN delivers Microsoft products such as Windows Update, Zune videos and Bing Maps.

This is the list of the 20 physical nodes for the Windows Azure CDN that was posted on the Windows Azure Team Blog. Visit the link to learn more.

United States

- Ashburn, VA

- Bay Area, CA

- Chicago, IL

- San Antonio, TX

- Los Angeles, CA

- Miami, FL

- Newark, NJ

- Seattle, WA

EMEA

- Amsterdam, NL

- Dublin, IE

- London, GB

- Paris, FR

- Stockholm, SE

- Vienna, AT

- Zurich, CH

Asia-Pacific/Rest of World

- Hong Kong, HK

- Sao Paulo, BR

- Singapore, SG

- Sydney, AU

- Tokyo, JP

The Azure Team continues with:

Offering pay-as-you-go, one-click-integration with Windows Azure Storage, the Windows Azure CDN is a system of servers containing copies of data, placed at various points in our global cloud services network to maximize bandwidth for access to data for clients throughout the network. The Windows Azure CDN can only deliver content from public blob containers in Windows Azure Storage - content types can include web objects, downloadable objects (media files, software, documents), applications, real time media streams, and other components of Internet delivery (DNS, routes, and database queries).

For details about pricing for the Windows Azure CDN, read our earlier blog post here.

*A Windows Azure CDN customer's traffic may not be served out of the physically "closest" node; many factors are involved including routing and peering, Internet "weather", and node capacity and availability. We are continually grooming our network to meet our Service Level Agreements and our customers' requirements.

Don MacVittie (@dmacvittie) asked Is there a CDN in your future? on 8/9/2010:

Jessica Scarpati over at TechTarget has an article discussing the state of CDN and application delivery. While I had not put a ton of thought into this particular market – CDNs grew into some pretty special-use, high-end items because of their pricing models over a decade ago – it does bear some obvious similarities to cloud, so this is a pretty well thought-out article.

The question that springs to mind for me is “will CDNs remain CDNs?” In the rush to cloud services, CDN providers are a large portion of the way there already, having to have massive distributed networks to do what they do efficiently. Global CDNs have touch-points in most geographic locations; if they could load balance them and further automate the services, they would move a very long way toward being cloud providers. And if one starts implementing cloud-like (in this case IaaS-like) services without claiming to be a cloud provider, all will have to follow suit to remain competitive.

And that’s when the division goes away. No matter what you call it, a massively distributed network with IaaS is a cloud provider, regardless of the name they choose to attach to their presence. The interesting bit is that CDN providers have a lot more experience keeping things separate, securing your content, and delivering on five nines or better than your average cloud provider. Which would make such a move highly competitive, since availability and security are two of the biggest things holding back public cloud.

Of course the trend to lower CDN pricing based upon competition would have to continue to the point that your average mid-sized enterprise could afford to start tossing a lot of applications out there before this could have meaning for the enterprise. Of course, since Cloud providers are rapidly catching up in the ability to get your content out there and host servers, some have already reached parity, I see this as inevitable if CDN providers wish to survive.

Sometimes, the wheel does get reinvented – well.

So when you’re shopping around for cloud or hosting providers, revisit CDNs – you know, the group of providers that you had previously decided was too expensive – and compare their TCO with the TCO and capabilities of your cloud provider before making the leap. You may find that you can save some of your budget in what most of us have long considered a highly unlikely place, and while you’re getting a bargain, you will likely be getting enhanced services too, just so they can keep ahead of the competition.

James Staten reported AP's API Empowers New Media Through AWS And Azure on 8/9/2010:

It’s no secret traditional news organizations are struggling to stay relevant today in an age where an always-connected generation has little use for newspaper subscriptions and nightly news programs. The Associated Press (AP), the world's oldest and largest news cooperative, is one such organization who has felt the threats which this paradigm shift carries and thus the need to intensify its innovation efforts. However, like many organizations today, its in-house IT Ops and business processes weren’t versatile enough for the kind of innovation needed.

"The business had identified a lot of new opportunities we just weren't able to pursue because our traditional syndication services couldn't support them," said Alan Wintroub, director of development, enterprise application services at the AP, "but the bottom line is that we can't afford not to try this."

To make AP easily accessible for emerging Internet services, social networks, and mobile applications, the nearly 164-year-old news syndicate needed to provide new means of integration that let these customers serve themselves and do more with the content — mash it up with other content, repackage it, reformat it, slice it up, and deliver it in ways AP never could think of — or certainly never originally intended.

In my recent Forrester report I discuss how AP created its API platform, an SOA architecture merging Windows Azure and Amazon Web Services, to achieve the above, and in doing so, bring products to market faster, provide even greater flexibility to its partners, and more rapidly prove out new business models. It’s a classic case of agile development leveraging self-service capabilities and not being constrained by the status quo.

What’s your cloud use story? Share it with us and we’ll help spread the word in next year’s best practices report.

— Christian Kane contributed to this Forrester report and blog.

Chris Czarnecki asked Why Are So Many Microsoft Developers In the Dark about Azure? in this 8/9/2010 post to the Learning Tree blog:

I recently taught the Learning Tree ASP.NET MVC course. This framework is really elegant and was enthusiastically received by a group of 15 .NET developers. When we came to discuss deploying MVC applications I mentioned that Cloud Computing could provide a cost effective environment for testing and deployment of not just MVC applications but all .NET Web applications. As the discussion progressed and I mentioned Microsoft Azure I was really surprised to discover that not one of the 15 attendees had heard of or knew anything about Microsoft Azure. This got me thinking – was it the experience level of attendee that contributed to this lack of awareness (i.e. new to .NET development) or is it that Microsoft are failing to get the message across to their development community about Azure?

On closer analysis only 2 of the 15 attendees were relatively new to .NET development; the rest had at least 2 years of .NET programming experience. So why had they not heard of Azure? Whilst Microsoft have made a strong marketing push on Azure it is clear it is not reaching a large section of their development community. There could be many reasons for this – and I am purely speculating here – such as:

- Is their hardware infrastructure currently large enough to support a large number of deployments ?

- Is their Azure software and toolset currently robust enough to support a large number of deployments ?

- Does the cloud computing revenue model leave Microsoft disadvantaged compared to selling software server licenses ?

Or it may be none of these, and that I have such a small sample size that it has no meaning, although I believe it does have meaning. It may also be that Microsoft is building its Azure user base in a controlled manner whilst it verifies its infrastructure.

From my experience of Azure I can state with certainty that

- It works

- A lot of the features are really neat and incredibly useful e.g. provisioning a SQL server instance in less than 60 seconds

- There is room for improvement – e.g. application deployment time, deploying plain ASP.NET and MVC applications

The recent announcements by Microsoft of the deal to work with eBay and also the private cloud offering show Microsoft have a strong commitment to Azure. It will be interesting to see how their marketing strategy gains momentum over the coming months to reach a wider audience in parallel with the increase in functionality of their cloud computing offering. If you are interested in learning about Azure and how it compares to other cloud computing offerings such as Amazon EC2 it is worth considering the Learning Tree Cloud Computing course. Alternatively, for in-depth knowledge of what Azure has to offer and how to exploit this for your organisation, take a look at the excellent Azure course.

It occurs to me that none of the developers in the class were members of a local .NET Users group or attended Microsoft PDC or TechEd conferences. That is, this was an abnormally insular group.

<Return to section navigation list>

Windows Azure Infrastructure

Fred O’Connor engages in breathless journalism by reporting Cloud Computing Will Surpass the Internet in Importance in this 8/9/2010 post to PCWorld’s Business Center blog:

Cloud computing will top the Internet in importance as development of the Web continues, according to a university professor who spoke Friday at the World Future Society conference in Boston.

While those who developed the Internet had a clear vision and the power to make choices about the road it would take -- factors that helped shape the Web -- Georgetown University professor Mike Nelson wondered during a panel discussion whether the current group of developers possesses the foresight to continue growing the Internet.

"In the mid-90s there was a clear conscience about what the Internet was going to be," he said. "We don't have as good a conscience as we did in the '90s, so we may not get there."

While a vision of the Internet's future may appear murky, Nelson said that cloud computing will be pivotal. "The cloud is even more important than the Web," he said.

Cloud computing will allow developing nations to access software once reserved for affluent countries. Small businesses will save money on capital expenditures by using services such as Amazon's Elastic Compute Cloud to store and compute their data instead of purchasing servers.

Sensors will start to appear in items such as lights, handheld devices and agriculture tools, transmitting data across the Web and into the cloud.

If survey results from the Pew Internet and American Life Project accurately reflect the U.S.' attitude toward the Internet, Nelson's cloud computing prediction could prove true.

In 2000, when the organization conducted its first survey and asked people if they used the cloud for computing, less than 10 percent of respondents replied yes. When asked the same question this May, that figure reached 66 percent, said Lee Rainie, the project's director, who also spoke on the panel.

Further emphasizing the role of cloud computing's future, the survey also revealed an increased use of mobile devices connecting to data stored at offsite servers.

However, cloud computing faces development and regulation challenges, Nelson cautioned.

"There are lots of forces that could push us away from the cloud of clouds," he said.

He advocated that companies develop cloud computing services that allow users to transfer data between systems and do not lock businesses into one provider. The possibility remains that cloud computing providers will use proprietary technology that forces users into their systems or that creates clouds that are only partially open.

"I think there is a chance that if we push hard ... we can get to this universal cloud," he said.

Cloud computing must also contend with other challenges, he said. Other threats include government privacy regulations, entertainment companies that look to clamp down on piracy, and nations that fear domination from U.S. companies in the cloud computing space and develop their own systems.

Beyond the role of cloud computing and the Internet, the Pew Research Center also examined how the Web possibly decreased intelligence, redefined social connections and raised the question if people share too much personal information online, among other issues.

On the question of the Web lowering society's intelligence, the center found that people's inherent traits will determine whether they use the Web as a tool to seek out new information and learn or simply accept the first answer that Google delivers. The technology is not the problem, said Janna Anderson, director of the Imagining the Internet Center at Elon University, who also spoke on the panel.

People responded that the Internet has not negatively impacted their social interactions. Respondents answered that they realize social networking does not necessarily lead to deep friendships. The Internet affords people tools that allow them to continue being introverted or extroverted, Anderson said.

Young adults face criticism for posting too much personal information to sites like Facebook. Research indicates that the extreme sharing of information is unlikely to change. Lee said that young adults have embraced social networking and will continue to do so because online sharing builds relationships. He also said that the survey showed a new view on privacy that advocated disclosing more personal details.

Mike Nelson’s assertion might have risen above foolishness if he made the case that private clouds would dominate the cloud-computing landscape. He didn’t. This article would have been grist for Don MacVittie’s essay [see below.]

Don MacVittie’s (@dmacvittie) The Clouds! They Are In My iPhone! post of 8/6/2010 to F5’s DevCentral blog debunks recent cloud-computing posts by self-appointed experts (a.k.a. thought leaders):

This week has been a banner week in the world of Cloud computing. The experts have been out there saving us from ourselves in droves. Good to know that there is no shortage of people who either don’t get what cloud is and think they do, or don’t get what cloud isn’t and think they do. Meanwhile, they will offer us a bit of entertainment. The second more than the first, admittedly, but both are amusing.

I CAN HA[Z] FUD?

First for your studied consideration is Phil Wainright, writing over on Ziff-Davis’ website. In Calling All Cloud Skeptics, he claims that everyone now acknowledges that cloud is the future of computing. Apparently there was no future before cloud came along? Or is cloud so powerful it has now changed the future? No more laptops for you, for cloud is the future of computing! That’s just the first paragraph. It gets worse. Let’s look, shall we?

In paragraph two, he points out that he is searching for the biggest cloud skeptic (notice the polarizing terminology, much like happens in politics) to sit on a panel hosted by… A Cloud Integrator. There are more transparent set-ups, but I would argue that they are few. That’s okay, this blog goes on to win the “top of the heap” prize. Let’s keep looking.

Oh lookie, I found someone’s #snark tag

Next up, the writer says

But be warned: this is no easy challenge. Cloud skeptics have a habit of setting up a ’straw man’ definition of cloud computing that’s easy to demolish but has no relation to the reality of the cloud.

This is provocative enough, but even better, what Mr. Wainright thinks is the “reality of the cloud” is never defined, thus setting up a supposed superior position based not on facts, but on ad-hominem attacks before he even knows who he’s attacking.

Think I’m wrong? Haven’t read the blog yet? Try this little snippet on for size…

Is this really a dying breed or does cloud skepticism have a champion who can rally the forces of fear, uncertainty and doubt against the onward march of cloud computing?

No, not the slightest bit of grand-standing, name-calling, or “my stance is right, yours is FUD” going on. Not one bit.

The real problem with his entire write-up? The fallacy that this gentleman appears to be hugging tightly – that the world is black and white, you’re either for cloud or against it (whatever unnamed definition he’s thinking of)… And that just isn’t reality. In the real world, IT is cautiously evaluating their options and finding where cloud fits into their overall architecture plans. Like every other “next big thing”, cloud isn’t going to destroy the old, it will complement it in important ways. So it’s not us-versus-them, and as long as people like Mr. Wainright act like it is, they are doing a huge disservice to the market and underestimating the capability of IT to see through the smoke and mirrors and find what is useful and good for their organization. Of course I could be wrong, after all, he says in his bio that he’s been a cloud computing expert since 1998, a clear indication of competence. But I don’t think so, perhaps he can team up with our next expert though…

THE CLOUDS! THEY ARE IN MY iPHONE!

Next up is the new-new-newest definition of cloud, brought to you by Robert Green on Briefing.com. While many of you might have seen this passed around by the Clouderati on Twitter, for those who haven’t, it’s a fun read. Interesting bits I “learned”…

- Cloud computing is only wireless. Yes indeed, it’s a fact. I didn’t know that, and it seems that it includes cell phones but not much else.

- Your connection to the cloud is not dedicated to a single server… Making a many to one relationship of servers/clients. This is intriguing, I didn’t know that my little cell phone got many servers completely dedicated to it, silly me, I thought many clients used each server. Of course I’m obviously behind the times, since I thought that your connection was to a single server at any given time.

- A service or device that requires Internet access should not be considered cloud. Really? I missed that one, I could have sworn that those little cloud graphics grew from the traditional representation of the Internet… But no, I’m wrong because…

- Later he declares that the fact that phones store data on servers is the reason that the term “cloud” came about. Huh. Good to know, my history was obviously off.

- And finally, when looking at all the separate clouds in the sky, you can see them from one place, thus the reason it’s called cloud.

You just can’t make this stuff up. Or at least I can’t. Obviously someone can.

BUT SERIOUSLY FOLKS

I’m not the cloud skeptic. I’m the cloud realist. Like the knowledgeable IT people who commented on Mr. Wainright’s blog, I want to see cloud get past this type of stuff and become real. Grand-standing and writing sans research does no one any good, it leaves the less informed members of the market more confused than yesterday and doesn’t forward the realistic use of cloud.

Want to know about cloud? Follow Lori. On Twitter, in her blog, wherever. There are plenty of other great luminaries out there, follow one of them. But check facts a bit. These two are obviously not luminaries, and they’re just two examples of an Internet full of self-proclaimed experts.

You know I follow Lori. She’s a “legit cloud luminary.”

David Linthicum claimed “Cloud computing has created some great opportunities in IT, but many still consider cloud computing a threat to their livelihood” in a prefix to his Dealing with the cloud's threat to IT jobs post of 8/10/2010 to InfoWorld’s Cloud Computing blog:

It's difficult to track cloud computing without stumbling upon a few stories about cloud computing coming in and pushing IT workers out. There's also the threat that if they don't adopt cloud computing, they'll be labeled as "non-innovative" and shoved out the door just as fast.

I hear about these concerns more in one-on-one conversations than in meetings these days, as it's become very politically incorrect to push back on cloud computing in public statements. My response is a bit different, depending on whom I'm speaking with, but the core notion is the same: We are always evolving IT; thus if you're in IT, your job is going to change much more often than other industries -- so get over it. Cloud computing is not the first disruptive technology to evolve approaches, skills, and career paths, and it won't be the last.

The core concern is that more efficiency leads to fewer people. Indeed, cloud computing should bring better efficiencies to IT, so in some instances businesses won't need as many IT bodies as before. This is logical considering that having fewer servers in the enterprise means needing fewer people to manage the servers. Moreover, the cloud brings better ways to do development and testing, as well as fewer instances of expensive enterprise software installations that have to be maintained internally.

Clearly, we're going to adjust our staffing needs within enterprises as cloud computing becomes more pervasive. But in the past, we've done this around the ERP movement, client/server, and outsourcing, just to name a few. I believe there will be many more cloud computing jobs created in terms of cloud managers, cloud solutions architects, platform-service developers, and so on. I suspect that there will be a huge net gain in IT jobs due to the cloud, and salaries should kick up higher over the next several years as well.

The real concern here is around change, not cloud computing. Change is and should be core to IT. We should always be thinking about better ways to support the business. Cloud computing is just one instance of change and one instance of a type of solution that could make things better. The more effective IT is, the more opportunities we'll have for growth -- and that translates into more, not fewer, jobs. Let's keep that in mind.

R “Ray” Wang continued his Research Report: The Upcoming Battle For The Largest Share Of The Tech Budget (Part 2) – Cloud Computing on 8/10/2010:

Welcome to part 2 of a multi-part series on The Software Insider Tech Ecosystem Model. Part 2 describes how the cloud fits into the model. Subsequent posts will apply the model to these leading vendors:

- Cisco

- Dell

- HP

- IBM

- Microsoft

- Oracle

- Salesforce.com

- SAP

The aggregation of these posts will result in a research report available for reprint rights.

Cloud Computing Represents The “New” Delivery Model For Internet Based IT Services

Technology veterans often observe that new mega trends emerge every decade. The market has evolved from mainframes (1970’s); to mini computers (1980’s); to client server (1990’s); to internet based (2000’s); and now to cloud computing (2010’s). Many of the cloud computing trends do take users back to the mainframe days of time sharing (i.e., multi-tenancy) and service bureaus (i.e., cloud based BPO). What’s changed since 1970? Quite a lot: users gain better usability, connectivity improves with the internet, storage costs continue to plummet, and processing performance increases.

Cloud delivery models share a stack approach similar to traditional delivery. At the core, both deployment options share four types of properties (see Figure 1):

- Consumption – how users consume the apps and business processes

- Creation – what’s required to build apps and business processes

- Orchestration – how parts are integrated or pulled from an app server

- Infrastructure – where the core guts such as servers, storage, and networks reside

As the über category, Cloud Computing manifests in the four distinct layers of:

- Business Services and Software-as-a-Service (SaaS) – The traditional apps layer in the cloud includes software as a service apps, business services, and business processes on the server side.

- Development-as-a-Service (DaaS) – Development tools take shape in the cloud as shared community tools, web based dev tools, and mashup based services.

- Platform-as-a-Service (PaaS) – Middleware manifests in the cloud with app platforms, database, integration, and process orchestration.

- Infrastructure-as-a-Service (IaaS) – The physical world goes virtual with servers, networks, storage, and systems management in the cloud.

Figure 1. Traditional Delivery Compared To Cloud Delivery

The following illustrations are from Ray’s later topics. Read Ray’s entire analysis here.

Figure 2. The Software Insider Tech Ecosystem Model For The Cloud

Figure 3. Sample Solution Providers Across The Four Layers Of Cloud Computing

Audrey Watters’ Recommended Listening: 10 Cloud Computing Podcasts post of 8/10/2010 provides a list of the most significant recurring cloud-related podcasts:

Looking for some cloud-related listening? An aural explanation of the hypervisor? Analysis of cloud news? Interviews with industry experts?

There are actually a number of podcasts devoted to the subject of cloud computing. We've gathered a list for you below, along with a few individual episodes from other technology podcasts that address cloud and virtualization issues.

If you have other suggestions or recommendations, please leave us a comment.

Cloud Computing Podcast Series

- Overcast: Conversations on Cloud Computing: Overcast is hosted by cloud bloggers James Urquhart and Geva Perry.

- Cloud Computing Podcast: Blue Mountain Labs CTO David Linthicum hosts this podcast, focusing on enterprise adoption of cloud computing.

- This Week in Cloud Computing: Hosted by Amanda Coolong, TWiCC offers both an audio and video podcast of its weekly show.

- The Cloud Computing Show: Hosted by Gary Orenstein, The Cloud Computing Show tracks industry news.

- IT Management Podcast: Redmonk industry analyst Michael Coté and Tivoli's John Willis talk infrastructure.

- DABCC Radio: This podcast features virtualization and cloud computing news and interviews.

- Cloud Weekly: While Cloud Weekly is on a self-described "infinite hiatus," you can still find archives of past shows via iTunes.

Cloud-Related Podcast Episodes

- TechStuff: "How Cloud Computing Works," July 2008 and "The Dark Side of Cloud Computing," October 2008

- Briefings Direct: "Governance in Cloud Computing," June 2009

- Mixergy: "The Challenges of Having Investors," with Microsoft Azure's Brandon Watson, June 2009

Lori MacVittie (@lmacvittie) asserts Multi-tenancy encompasses the management of heterogeneous business, technical, delivery, and security models in a preface to her Multi-Tenancy Requires More Than Just Isolating Customers post of 8/9/2010 to F5’s DevCentral blog:

Last week, during what was certainly an invigorating if not agonizingly redundant debate regarding the value of public versus private cloud computing, it was suggested that perhaps if we’d just refer to “private cloud” computing as “single-tenant cloud” all would be well.

I could point out that we’ve been over this before, and that the value proposition of shared infrastructure internal to an “organization” is the sharing of resources across projects, departments, and lines of business all of which are endowed with their very own budgets. There are “customer” level distinctions to be made internal to an organization, particularly a large one, that may perhaps be lost on those who’ve never been (un)fortunate enough to work within the trenches of an actual enterprise IT organization.

The problem is larger than that, however, and goes far beyond the simplistic equating of “line of business” with “company”. Both still assume that tenant is analogous to business (customer in the eyes of a public cloud provider) and that’s simply not always the case.

THE TYPE of CLOUD DETERMINES the NATURE of the TENANT

Certainly in certain types of clouds, specifically a SaaS (Software as a Service) offering, the heterogeneity of the tenancy is at the customer level. But as you dive down the cloud “stack” from SaaS –> PaaS –> IaaS you’ll find that the “tenant” being managed changes. In a SaaS, of course, the analogy holds true – to an extent. It is business unit and financial obligation that defines a “tenant”, but primarily because SaaS focuses on delivering one application and “customer” at that point becomes the only real way to distinguish one from another. An organization that is deploying a similar on-premise SaaS may in fact be multi-tenant simply by virtue of supporting multiple lines of business, all of whom have individual financial responsibility and in many cases may be financially independent from the “mothership.”

Tenancy becomes more granular and, at the very bottom layer, at IaaS, you’ll find that the tenant is actually an application and that each one has its own unique set of operational and infrastructure needs. Two applications, even though deployed by the same organization, may have completely different – and sometimes conflicting – sets of parameters under which they must be deployed, secured, delivered, and managed.

A truly “multi-tenant” cloud (or any other multi-tenant architecture) recognizes this. Any such implementation must be able to differentiate between applications either by applying the appropriate policy or by routing through the appropriate infrastructure such that the appropriate policies are automatically applied by virtue of having traversed the component. The underlying implementation is not what defines an architecture as multi-tenant or not, it’s how it behaves.
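To make the per-application view of tenancy concrete, here is a minimal sketch in Python. It is not taken from Lori's post: the `Policy`, `Application`, and `PolicyRouter` names, the policy fields, and the example organization are all hypothetical, chosen only to illustrate the idea that policy is resolved per application while billing is grouped by whoever pays.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-application delivery and security settings."""
    isolated_network: bool
    encrypt_at_rest: bool
    max_instances: int


@dataclass(frozen=True)
class Application:
    """The application is the tenant; billed_to is only a billing label."""
    name: str
    billed_to: str
    policy: Policy


class PolicyRouter:
    """Looks up policy by application, never by organization."""

    def __init__(self):
        self._apps = {}

    def register(self, app):
        self._apps[app.name] = app

    def policy_for(self, app_name):
        # Infrastructure components would consult this per request.
        return self._apps[app_name].policy

    def apps_by_payer(self):
        # Billing is the only place where the organization matters.
        grouped = {}
        for app in self._apps.values():
            grouped.setdefault(app.billed_to, []).append(app.name)
        return grouped


# Two applications owned by the same (made-up) organization,
# each with its own, conflicting requirements.
router = PolicyRouter()
router.register(Application("payroll", "ExampleCorp",
                            Policy(isolated_network=True,
                                   encrypt_at_rest=True,
                                   max_instances=2)))
router.register(Application("intranet-wiki", "ExampleCorp",
                            Policy(isolated_network=False,
                                   encrypt_at_rest=False,
                                   max_instances=10)))
```

In this sketch the same lookup would work unchanged whether `billed_to` names an external customer of a public cloud or an internal department of an enterprise, which is the point the post makes about who gets billed being the main difference.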

When you consider a high-level architectural view of a public cloud versus an on-premise cloud, it should be fairly clear that the only thing that really changes between the two is who is getting billed. The same requirements regarding isolation, services, and delivery on a per-application basis remain.

THE FUTURE VALUE of CLOUD is in RECOGNIZING APPLICATIONS as INDIVIDUAL ENTITIES

This will become infinitely more important as infrastructure services begin to provide differentiation for cloud providers. As different services are able to be leveraged in a public cloud computing environment, each application will become more and more its own entity with its own infrastructure and thus metering and ultimately billing. This is ultimately the way cloud providers will be able to grow their offerings and differentiate from their competitors – their value-added services in the infrastructure that delivers applications powered by on-demand compute capacity.

The tenants are the applications, not necessarily the organization, because the infrastructure itself must support the ability to isolate each application from every other application. Certainly a centralized management and billing framework may allow customers to manage all their applications from one console, but in execution the infrastructure – from the servers to the network to the application delivery network – must be able to differentiate and treat each individual application as its own, unique “customer”. And there’s no reason an organization with multiple internal “customers” can’t – or won’t – build out an infrastructure that is ultimately a smaller version of a public cloud computing environment that supports such a business model. In fact, they will – and they’ll likely be able to travel the path to maturity faster because they have a smaller set of “customers” for which they are responsible.

And this, ultimately, is why the application of the term “single-tenant” to an enterprise deployed cloud computing environment is simply wrong. It ignores that differentiation in a public IaaS cloud is (or should be) at the same level of the hierarchy as an internal IaaS cloud.

CLOUD COMPUTING is ULTIMATELY a DEPLOYMENT and DELIVERY MODEL

Dismissing on-premise cloud as somehow less sophisticated because its customers (who are billed in most organizations) are more granular is naive or ignorant, perhaps both. It misses the fact that public cloud only bills by customer, its actual delivery model is per-application, just as it would be in the enterprise. And it is certainly insulting to presume that organizations building out their own on-premise cloud don’t face the same challenges and obstacles as cloud providers. In most cases the challenges are the same, simply on a smaller scale. For the largest of enterprises – the Fortune 50, for example – the challenges are actually more demanding because they, unlike public cloud providers, have myriad regulations with which they must comply while simultaneously building out essentially the same architecture.

Anyone who has worked inside a large enterprise IT shop knows that most inter-organizational challenges are also intra-organizational challenges. IT even talks in terms of customers; their customers may be internal to the organization but they are treated much the same as any provider-customer relationship. And when it comes to technology, if you think IT doesn’t have the same supply-chain management issues, the same integration challenges, the same management and reporting issues as a provider then you haven’t been paying attention.

Dividing up a cloud by people makes little sense because the reality is that the architectural model divides resources up by application. Ultimately that’s because cloud computing is used by applications, not people or businesses.

John Dix moderated a Who has the better virtualization platform – VMware or Microsoft? Tech Debate for NetworkWorld on 8/9/2010:

A big head start matters

By Bogomil Balkansky, Vice president of product marketing, Virtualization and Cloud Platform at VMware

IT is being served by a combination of vendors, some of whom are working to provide a clear path forward, and others that are struggling to hold on to the past. VMware vSphere represents the architecture, expertise and ecosystem driving a new approach for IT, enabled by virtualization and broadly recognized as cloud computing.

VSphere capabilities have expanded to optimize not only servers, but storage and network, improving quality of service, increasing IT agility and recasting the economic model of computing.

Since pioneering x86 virtualization more than a decade ago, VMware has established a steady track record of industry firsts, continually advancing the transformative capabilities of virtualization through our flagship platform, VMware vSphere: first live migration, first integrated network distributed switch, first VM fault tolerance capabilities – the list goes on.

The destination has changed

By David Greschler, Director of virtualization strategy, Server and Tools Business at Microsoft

If you think VMware is the winner of this conversation, I'd ask you to think again. IT leaders care about deploying a platform that is reliable, stable, cost-effective and easily adapts to changing business needs. A few years ago virtualization was seen as the final destination. Now it is clear this technology is a stepping stone to the more agile, responsive world of cloud computing.

I’m suggesting when you evaluate a virtualization platform, one of the most important characteristics you need to consider is how easy it will be to integrate the platform with the cloud.

The core benefits of virtualization – the ability to consolidate servers, quickly provision new applications, automatically back up systems – pale in comparison to the speed and cost savings possible with cloud computing.

Matthew Weinberger posted What Google Can Teach You About Freemium Services to the MSPMentor blog on 8/9/2010:

The 2010 Google I/O developer conference may have passed back in May, but Google just posted a recap of the event’s “Making Freemium work – converting free users to paying customers” panel discussion. An impressive lineup of venture capitalists sat down and explained how to make millions by giving services away. Here’s some takeaways for MSPs.

Google’s Don Dodge, who moderated the panel, has a video of the full hour-long I/O session, and it’s well worth a watch if freemium is something you want to know more about. For those not in the know, “freemium” refers to a business model where you give away basic services at no cost, and then offer premium services at, well, a premium.

The panelists included Brad Feld (Foundry Group), Dave McClure (500 Startups), Jeff Clavier (SoftTech VC), Matt Holleran (Emergence Capital) and Joe Kraus (Google Ventures).

Some key points from their discussion for service providers:

- Customers will probably pay more for services than you think they will — and when they do make the plunge into premium, they’ll often opt for more than they need. Think of how you buy a cell phone service plan.

- Freemium works best when you’re set up such that the service is more valuable the more people are using it — a little tricky in the managed services space, but not impossible.

- Be flexible with your business model. Test several freemium scenarios and see what works best.

- Collect usage statistics as you can – they’ll help tell you what

MSPmentor has long been watching the development of freemium as an IT service provider business model, and now we have some fairly authoritative figures in the IT space backing the idea up.

We expect the freemium trend to continue at this week’s CompTIA Breakaway conference. Listen closely for separate news announcements from N-able and Own Web Now, respectively, two vocal freemium proponents.

<Return to section navigation list>

Windows Azure Platform Appliance

No significant articles today.

<Return to section navigation list>

Cloud Security and Governance

The Windows Azure Team reported a New Windows Azure Security Overview White Paper Now Available on 8/10/2010:

Windows Azure must provide confidentiality, integrity, and availability of customer data, while also enabling transparent accountability. To help customers better understand the array of security controls implemented within Windows Azure from both the customer's and Microsoft operations' perspectives, a new white paper, "Windows Azure Security Overview", has just been released that provides a comprehensive look at the security available with Windows Azure.

Written by Charlie Kaufman and Ramanathan Venkatapathy, the paper provides a technical examination of the security functionality available from both the customer's and Microsoft operations' perspectives, the people and processes that help make Windows Azure more secure, as well as a brief discussion about compliance.

<Return to section navigation list>

Cloud Computing Events

Sascha Corti announced on 8/10/2010 Hands-On Labs on developing Windows Phone 7 apps and Windows Azure in September coming to Wallisellen, Basel, Geneva and Berne (Switzerland):

This is your chance to attend one of our hands-on labs even if you don’t like traveling to Zurich – we are taking two of our labs on the road to Basel, Geneva and Berne:

The first lab will get you “Writing a Windows Phone 7 application in Silverlight”, the second one is titled “Discovering Windows Azure”.

Both are self-paced instructor-led labs that require you to bring your own laptop. You will get everything you need to start developing for Windows Phone 7 or against Windows Azure using a detailed hands-on lab manual. Our specialists will be on site to help you should you get in trouble and answer your questions.

Interested? Here is the official invitation including all the required links to sign up.

Have a look at the prerequisites to make sure you will get the most out of the lab should you decide to participate.

I am looking forward to seeing you at one of the labs!

MSDN Hands-on Lab Roadshow

"Writing a Windows Phone 7 Application in Silverlight" and "Discovering Windows Azure"

Dear Sir / Madam,

In order to get up to speed in developing applications for the new Windows Phone 7 and Windows Azure platforms, Microsoft Switzerland is organizing a Hands-on Lab roadshow in September with events in Wallisellen, Bern, Basel and Geneva.

The Windows Phone 7 Hands-on Lab will show you how you can create a consumer application that aggregates news feeds and images and displays them in a panorama-style user interface that can be seen in the new Windows Phone 7 hubs; all this using Microsoft Silverlight.

The Windows Azure Hands-on Lab provides you with an overview of Microsoft Windows Azure, which represents Microsoft's offering for cloud-based computing. In the first part of the lab you will create a simple ASP.NET Web Application; in the second part you will prepare it and extend it for Windows Azure. In the last part you will deploy the whole solution on Windows Azure.

For more information please visit the event pages:

MSDN Hands-on Lab "Writing a Windows Phone 7 Application in Silverlight"

MSDN Hands-on Lab "Discovering Windows Azure"

The Hands-on Labs are free of charge and will be held in English. There is a limit on the number of participants. Registrations will be processed in the order in which they are received.

For additional information, please write to us at chnetcom@microsoft.com.

We look forward to welcoming you soon.

Microsoft Switzerland Ltd.

Developer & Platform Evangelism

Cloud Tweaks reported The HP Software Cloud Computing Tech Day – September 9th and 10th, 2010 in this 8/10/2010 post:

CloudTweaks is pleased to announce that we’ve been invited to attend The “HP Software Cloud Computing Tech Day” on September 9th and 10th, 2010.

This event will take place at the HP campus in Cupertino, California and has been organized by the folks at Ivyworldwide.com.

We are one of a select few cloud related sites offered this opportunity so we are very appreciative of this fact. We plan on keeping our readers updated via the CloudTweaks website as well as through Twitter during these 2 days.

Some of the areas that will be covered will be listed here in the next couple weeks.

To stay updated follow us at: http://www.twitter.com/cloudtweaks

Lydia Leong’s (@cloudpundit) HostingCon keynote slides post of 8/10/2010 reported:

My HostingCon keynote slides are now available. Sorry for the delay in posting these slides — I completely spaced on remembering to do so.

I believe Lydia incorrectly classified Windows Azure as Infrastructure as a Service (IaaS) in this slide. Microsoft and almost everyone else consider the Windows Azure Platform to be a Platform as a Service (PaaS).

Update 8/10/2010 12:45 PM PDT: Apparently placing Windows Azure in the IaaS group was an oversight. See Lydia’s response to my comment.

Wayne Walter Berry (@WayneBerry) reported about TechEd Australia 2010’s cloud sessions in this 8/9/2010 post to the SQL Server Team blog:

David Robinson will be in Australia and New Zealand this month for TechEd Australia 2010 and TechEd New Zealand 2010. If you are interested in SQL Azure, make sure to attend his presentations.

Australia

DAT209 Migrating Applications to Microsoft SQL Azure

Are you looking to migrate your on-premise applications and database from MySQL or other RDBMSs to SQL Azure? Or are you simply focused on the easiest ways to get your SQL Server database up to SQL Azure? Then, this session is for you. Chris Hewitt and David Robinson cover two fundamental areas in this session: application data access tier and the database schema+data. In Part 1, they dive into application data-access tier, covering common migration issues as well as best practices that will help make your data-access tier more resilient in the cloud and on SQL Azure. In Part 2, the focus is on database migration. They go through migrating schema and data, taking a look at tools and techniques for efficient transfer of schema through Management Studio and Data-Tier Application (DAC). Then, they discover efficient ways of moving small and large data into SQL Azure through tools like SSIS and BCP. We close the session with a glimpse into what is in store in the future for easing migration of applications into SQL Azure.

Meeting Room 8 Wednesday, August 25 09:45 - 11:00

COS220 SQL Azure Performance in a Multi-Tenant Environment

Microsoft SQL Azure presents unique opportunities and challenges with regard to database performance. While query performance is the key discussion in traditional, on-premise database deployments, David Robinson shows you that factors such as network latency and multi-tenant effects also need to be considered in the Cloud. He offers some tips to resolve or mitigate related performance problems. He also digs deep into the inner workings of how SQL Azure manages resources and balances workload in a massive Cloud environment. The elastic access to additional physical resources in the Cloud offers new opportunities to achieve better database performance in a cost-effective manner, an emerging pattern not available in the on-premise world. He shows you some numbers that quantify the potential benefits.

Arena 1B Wednesday, August 25 15:30 - 16:45

New Zealand

David’s sessions for TechEd New Zealand are not finalized yet; I will repost when I have them.

Microsoft (@PDC2010) has updated the Professional Developers Conference (PDC) 2010 site in preparation for its 10/28 and 10/29/2010 run at the Microsoft Campus in Redmond, WA:

Mike Swanson's downloader and renamer is a convenient list with live links to all PDC 09 sessions.

Mithun Dhar asked Are you ready for Microsoft PDC 2010? and provided Redmond hotel recommendations on 8/10/2010:

With less than 12 weeks to go, this year’s Microsoft Professional Developers Conference is right around the corner.

First things first: If you haven’t already registered – do it right now! As you already know, this PDC will be a lot smaller and more intimate than the other ones. Microsoft for the first time will be having the Professional Developer Conference in the Seattle area. In fact, it will be on the Microsoft Campus. This will be your opportunity to come be a part of our uber nice campus, enjoy the geekdom and get a chance to network with the people behind the products and technologies that you love so much! Because we’re on campus this year, attendees will enjoy unprecedented access to campus facilities, Microsoft developers and product teams.

Registration: https://www.ustechsregister.com/PDC10/RegistrationSelect.aspx

Please note that there are limited hotels in and around Redmond, WA. Here are my top 3 suggestions for where to stay:

I know the Heathman and the Courtyard both have courtesy shuttles to Campus. As I said earlier, hotels are extremely limited in the area so make sure to book them asap.

Keynote: I have said this before, and I’ll say it again – If you haven’t watched SteveB talk in person – then you really haven’t seen passion! Steve Ballmer is one of the most entertaining and passionate people to watch on stage. This PDC will have him keynote along with Bob Muglia. These people will help set the strategy for the company for the coming year. Rest assured, you will not want to miss this!

The high-level agenda is now live on the website. Basically, we’ll focus on three tracks: client/devices, Cloud Services, and developer tools & technologies.

Watch the Countdown to PDC10 show on Channel 9

Event owner (Jennifer Ritzinger) and keynote owner (Mike Swanson) host a 10-minute, behind-the-scenes preview of content and event activities. Get the inside scoop on why this is going to be an exciting PDC and the type of content that attendees and viewers can expect.

As always, get the latest PDC news on Twitter via @PDCEvent #PDC10 and follow the blog at: PDC10 Blog

And in case you can’t attend in person, fret not! We’ve got you covered: all the sessions and keynotes will be streamed online, and we guarantee this will be the best experience you’ve seen!

If you have any questions about Microsoft PDC 2010 – feel free to tweet me about it! @mithund

Awesomeness comes to those who wait!

Jim O’Neill reported about Firestarter Events Coming Your Way! on the right coast in this 8/8/2010 post:

As the summer begins to wind down, we’re gearing up for several series of events focused on the core pillars of client development, web development and cloud computing. Over the next year, you’ll see Chris and myself, along with our fellow Developer Evangelists from across the East region presenting “Firestarters” on various aspects of those core development pillars. A Firestarter is a day-long event focused on a single technology starting at the basics and diving deeper throughout the day. The intention is to provide you an opportunity for focused immersion to spark your own development efforts with the technology that is covered. …

[Windows Phone 7 events skipped for brevity]

So what about the Web and Cloud events? We're still finalizing the agendas and venues, but if you want to pencil-in dates on your calendars, here's the near-final lineup. Check back on Chris’s and my blogs for more details and registration links when they become available.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

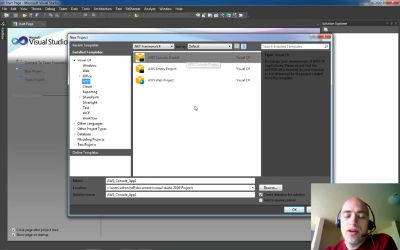

Adron Hall (@adronbh) posted a 00:06:20 Amazon Web Services ~5 Minute .NET Quick Start screencast on 8/10/2010:

This is the beginning of a series of blog posts I’m putting together on building out a website on Amazon’s Web Services. This is a quick start on where to find the .NET SDK, what templates are installed in Visual Studio 2010, and what you get when starting a new Web Application Project with the AWS .NET SDK.

AWS Quick Start


Links mentioned in the video:

<Return to section navigation list>
