Windows Azure and Cloud Computing Posts for 6/27/2011+

A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, Traffic Manager, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

Yves Goeleven (@yvesgoeleven) explained Overcoming message size limits on the Windows Azure Platform with NServiceBus in a 6/26/2011 post to his CloudShaper blog:

When using any of the Windows Azure queuing mechanisms for communication between your web and worker roles, you will quickly run into their size limits for certain common online use cases.

Consider for example a very traditional use case, where you allow your users to upload a picture and you want to resize it into various thumbnail formats for use throughout your website. You do not want the resizing to be done on the web role as this implies that the user will be waiting for the result if you do it synchronously, or that the web role will be using its resources for doing something else than serving users if you do it asynchronously. So you most likely want to offload this workload to a worker role, allowing the web role to happily continue to serve customers.

Sending this image as part of a message through a traditional queuing mechanism to a worker is not easy to do. It is not easily implemented by means of queue storage, as this mechanism is limited to 8K messages, nor by means of AppFabric queues, as they can only handle messages up to 256K, and as you know image sizes far exceed these numbers.

To work your way around these limitations you could perform the following steps:

- Upload the image to blob storage

- Send a message to the workers requesting a resize, passing in the image’s Uri and metadata.

- The workers download the image blob based on the provided Uri and perform the resizing operation

- When all workers are done, cleanup the original blob
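The steps above amount to passing a reference instead of the payload. Here is a minimal Python sketch of that idea, assuming an in-memory stand-in for blob storage and a plain deque as the queue; `BlobStore`, `send_image` and `handle_next` are hypothetical names for illustration, not part of any Azure or NServiceBus API.

```python
import json
import uuid
from collections import deque


class BlobStore:
    """In-memory stand-in for blob storage (hypothetical, for illustration only)."""

    def __init__(self):
        self._blobs = {}

    def upload(self, data: bytes) -> str:
        # Store the payload and hand back a URI-like key that fits in a small message.
        uri = f"blob://images/{uuid.uuid4()}"
        self._blobs[uri] = data
        return uri

    def download(self, uri: str) -> bytes:
        return self._blobs[uri]


def send_image(store, queue, image: bytes, file_name: str, max_message_bytes=8 * 1024):
    """Upload the image, then enqueue only its URI and metadata."""
    uri = store.upload(image)
    message = json.dumps({"uri": uri, "file_name": file_name})
    if len(message.encode("utf-8")) > max_message_bytes:
        raise ValueError("message exceeds the queue's size limit")
    queue.append(message)


def handle_next(store, queue, resize):
    """Worker side: read the small message, fetch the blob, do the work."""
    message = json.loads(queue.popleft())
    image = store.download(message["uri"])
    return resize(image)


store, queue = BlobStore(), deque()
send_image(store, queue, b"x" * 100_000, "cat.png")
thumbnail = handle_next(store, queue, lambda data: data[:10])
```

The sketch deliberately leaves out the cleanup step, since deciding when the original blob can be removed is the hard part.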

This all sounds pretty straightforward until you try to do it; then you run into quite a lot of issues. Among others:

To avoid long latency and timeouts you want to upload and/or download very large images in parallel blocks. But how many blocks should you upload at once and how large should these blocks be? How do you maintain byte order while uploading in parallel? What if uploading one of the blocks fails?

To avoid paying too much for storage you want to remove the large images. But when do you remove the original blob? How do you actually know that all workers have successfully processed the resizing request? Turns out you actually can’t know this, for example due to the built in retry mechanisms in the queue the message may reappear at a later time.

Now the good news is, I’ve gone through the ordeal of solving these questions and implemented this capability for Windows Azure in NServiceBus. It is known as the databus, and on Windows Azure it uses blob storage to store the images or other large properties (FYI: on-premises it is implemented using a file share).

How to use the databus

When using the regular azure host, the databus is not enabled by default. In order to turn it on you need to request custom initialization and call the AzureDataBus method on the configuration instance.

internal class SetupDataBus : IWantCustomInitialization
{
    public void Init()
    {
        Configure.Instance.AzureDataBus();
    }
}

As the databus is implemented using blob storage, you do need to provide a connection string to your storage account in order to make it work properly (it will point to development storage if you do not provide this setting).

<Setting name="AzureDatabusConfig.ConnectionString" value="DefaultEndpointsProtocol=https;AccountName={yourAccountName};AccountKey={yourAccountKey}" />

Alright, now that the databus has been set up, using it is pretty simple. All you need to do is specify which of the message properties are too large to be sent in a regular message. This is done by wrapping the property type in the DataBusProperty<T> type. Every property of this type will be serialized independently and stored as a BlockBlob in blob storage.

Furthermore you need to specify how long the associated blobs are allowed to stay alive in blob storage. As I said before there is no way of knowing when all the workers are done processing the messages, therefore the best approach to not flooding your storage account is providing a background cleanup task that will remove the blobs after a certain time frame. This time frame is specified using the TimeToBeReceived attribute which must be specified on every message that exposes databus properties.
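To illustrate the time-based cleanup idea, here is a small Python sketch that decides which blobs have outlived a TimeToBeReceived value. It assumes the value is given as "hh:mm:ss" and that each blob's creation time is known; the function names are hypothetical and the databus' actual cleanup task may work differently.

```python
from datetime import datetime, timedelta


def parse_time_to_be_received(value: str) -> timedelta:
    """Parse an 'hh:mm:ss' string such as '01:00:00' (assumed format)."""
    hours, minutes, seconds = (int(part) for part in value.split(":"))
    return timedelta(hours=hours, minutes=minutes, seconds=seconds)


def expired_blobs(blobs: dict, time_to_be_received: str, now: datetime) -> list:
    """Return the names of blobs that are older than the allowed time frame."""
    ttl = parse_time_to_be_received(time_to_be_received)
    return [name for name, created in blobs.items() if now - created > ttl]


now = datetime(2011, 6, 26, 12, 0, 0)
blobs = {
    "old-image": now - timedelta(minutes=90),
    "fresh-image": now - timedelta(minutes=10),
}
to_delete = expired_blobs(blobs, "01:00:00", now)
```

A background task could run such a check periodically and delete whatever it returns, which bounds storage use without needing to know whether every worker has finished.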

In my image resizing example, I created an ImageUploaded event that has an Image property of type DataBusProperty<byte[]> which contains the bytes of the uploaded image. Furthermore it contains some metadata like the original filename and content type. The TimeToBeReceived value has been set to an hour, assuming that the message will be processed within an hour.

[Serializable, TimeToBeReceived("01:00:00")]
public class ImageUploaded : IMessage
{
    public Guid Id { get; set; }
    public string FileName { get; set; }
    public string ContentType { get; set; }
    public DataBusProperty<byte[]> Image { get; set; }
}

That’s it. Besides this configuration there is no difference from a regular message handler. It will appear as if the message has been sent to the worker as a whole; all the complexity of sending the image through blob storage is completely hidden from you.

public class CreateSmallThumbnail : IHandleMessages<ImageUploaded>
{
    private readonly IBus bus;

    public CreateSmallThumbnail(IBus bus)
    {
        this.bus = bus;
    }

    public void Handle(ImageUploaded message)
    {
        var thumb = new ThumbNailCreator().CreateThumbnail(message.Image.Value, 50, 50);
        var uri = new ThumbNailStore().Store(thumb, "small-" + message.FileName, message.ContentType);

        var response = bus.CreateInstance<ThumbNailCreated>(x => { x.ThumbNailUrl = uri; x.Size = Size.Small; });
        bus.Reply(response);
    }
}

Controlling the databus

In order to control the behavior of the databus, I’ve provided you with some optional configuration settings.

- AzureDataBusConfig.BlockSize allows you to control the size in bytes of each uploaded block. The default setting is 4MB, which is also the maximum value.

- AzureDataBusConfig.NumberOfIOThreads allows you to set the number of threads that will upload blocks in parallel. The default is 5

- AzureDataBusConfig.MaxRetries allows you to specify how many times the databus will try to upload a block before giving up and failing the send. The default is 5 times.

- AzureDataBusConfig.Container specifies the container in blob storage to use for storing the message parts, by default this container is named ‘databus’, note that it will be created automatically for you.

- AzureDataBusConfig.BasePath allows you to add a base path to each blob in the container, by default there is no basepath and all blobs will be put directly in the container. Note that using paths in blob storage is purely a naming convention that is being adhered to, it has no other effects as blob storage is actually a pretty flat store.
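How block size, the number of IO threads and the retry count interact can be sketched in Python as follows. This is an illustration under stated assumptions, not the databus implementation: `put_block` and `commit` are hypothetical callbacks standing in for the storage calls, and byte order is preserved by committing the block IDs in their original order regardless of which upload finishes first.

```python
from concurrent.futures import ThreadPoolExecutor


def split_blocks(data: bytes, block_size: int) -> list:
    """Cut the payload into consecutive blocks of at most block_size bytes."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def upload_with_retry(put_block, block_id: str, block: bytes, max_retries: int) -> str:
    """Try one block up to max_retries times before failing the whole send."""
    last_error = None
    for _ in range(max_retries):
        try:
            put_block(block_id, block)
            return block_id
        except IOError as error:
            last_error = error
    raise IOError(f"block {block_id} failed after {max_retries} attempts") from last_error


def upload_in_parallel(data, put_block, commit,
                       block_size=4 * 1024 * 1024, io_threads=5, max_retries=5):
    """Upload blocks concurrently, then commit the ordered list of block IDs."""
    blocks = split_blocks(data, block_size)
    block_ids = [f"{index:08d}" for index in range(len(blocks))]
    with ThreadPoolExecutor(max_workers=io_threads) as pool:
        # list() forces evaluation so that any upload failure is raised here.
        list(pool.map(
            lambda pair: upload_with_retry(put_block, pair[0], pair[1], max_retries),
            zip(block_ids, blocks)))
    commit(block_ids)
```

The defaults mirror the values listed above (4MB blocks, 5 threads, 5 tries); smaller blocks mean more requests but cheaper retries.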

Wrapup

With the databus, your messages are no longer limited in size. At least the limit has become so big that you probably don’t care about it anymore: in theory you can use 200GB per property and 100TB per message. The real limit, however, has now become the amount of memory available on the machine generating or receiving the message; you cannot exceed those amounts for the time being. Furthermore, you need to keep latency in mind: uploading a multi-megabyte or gigabyte file takes a while, even from the cloud.

That’s it for today, please give the databus a try and let me know if you encounter any issues. You can find the sample used in this article in the samples repository, it’s named AzureThumbnailCreator and shows how you can create thumbnails of various sizes (small, medium, large) from a single uploaded image using background workers.

Martin Ingvar Kofoed Jensen (@IngvarKofoed) asked Why is MD5 not part of ListBlobs result? in a 6/26/2011 post to his Invar blog:

For some strange reason the ContentMD5 blob property is only populated if you call FetchAttributes on the blob. This means that you cannot obtain the value of the ContentMD5 blob property by calling ListBlobs, with any parameter settings. If you have to call FetchAttributes to obtain the value, it is not feasible to use on a large set of blobs, because making one FetchAttributes request per blob is going to take a long time. This means that it cannot be used in an optimal way when doing something like the fast folder synchronization I wrote about in this blog post.

The ContentMD5 blob property is never set by blob storage itself. And if you try to put any value in it that is not a correct 128-bit MD5 hash of the file, you will get an exception. So it has limited usage.
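For reference, the value stored in ContentMD5 is the Base64 encoding of the 16-byte MD5 digest. The following Python sketch computes it for the test content used in this post and checks that a candidate value has the right shape; `content_md5` and `looks_like_content_md5` are hypothetical helper names, and the shape check is only an assumption about what a client could verify locally, not a description of the service's validation.

```python
import base64
import hashlib


def content_md5(data: bytes) -> str:
    """Base64-encode the 128-bit MD5 digest of the blob content."""
    return base64.b64encode(hashlib.md5(data).digest()).decode("ascii")


def looks_like_content_md5(value: str) -> bool:
    """True if the value is valid Base64 that decodes to exactly 16 bytes."""
    try:
        return len(base64.b64decode(value, validate=True)) == 16
    except ValueError:
        return False


digest = content_md5("This is a test".encode("utf-8"))
print(digest)  # zhFORQHS9OLc6j4XtUbzOQ==
```

The printed value matches the one returned after FetchAttributes in the output below.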

I have written some code to illustrate the missing population of the ContentMD5 blob property when using the ListBlobs method.

Here is the output of running the code:

GetBlobReference without FetchAttributes: Not populated
GetBlobReference with FetchAttributes: zhFORQHS9OLc6j4XtUbzOQ==
ListBlobs with BlobListingDetails.None: Not populated
ListBlobs with BlobListingDetails.Metadata: Not populated
ListBlobs with BlobListingDetails.All: Not populated

And here is the code:

using System;
using System.Linq;
using System.Security.Cryptography;
using System.Text;
using Microsoft.WindowsAzure;
using Microsoft.WindowsAzure.StorageClient;

CloudStorageAccount storageAccount = /* Initialize this */;
CloudBlobClient client = storageAccount.CreateCloudBlobClient();
CloudBlobContainer container = client.GetContainerReference("mytest");
container.CreateIfNotExist();

const string blobContent = "This is a test";

/* Compute 128-bit MD5 hash of content */
MD5 md5 = new MD5CryptoServiceProvider();
byte[] hashBytes = md5.ComputeHash(Encoding.UTF8.GetBytes(blobContent));

/* Get the blob reference and upload it with MD5 hash */
CloudBlob blob = container.GetBlobReference("MyBlob1.txt");
blob.Properties.ContentMD5 = Convert.ToBase64String(hashBytes);
blob.UploadText(blobContent);

CloudBlob blob1 = container.GetBlobReference("MyBlob1.txt");

/* Not populated - this is expected */
Console.WriteLine("GetBlobReference without FetchAttributes: " +
    (blob1.Properties.ContentMD5 ?? "Not populated"));

blob1.FetchAttributes();

/* Populated - this is expected */
Console.WriteLine("GetBlobReference with FetchAttributes: " +
    (blob1.Properties.ContentMD5 ?? "Not populated"));

CloudBlob blob2 = container.ListBlobs().OfType<CloudBlob>().Single();

/* Not populated - this is NOT expected */
Console.WriteLine("ListBlobs with BlobListingDetails.None: " +
    (blob2.Properties.ContentMD5 ?? "Not populated"));

CloudBlob blob3 = container.ListBlobs(new BlobRequestOptions
    { BlobListingDetails = BlobListingDetails.Metadata }).
    OfType<CloudBlob>().Single();

/* Not populated - this is NOT expected */
Console.WriteLine("ListBlobs with BlobListingDetails.Metadata: " +
    (blob3.Properties.ContentMD5 ?? "Not populated"));

CloudBlob blob4 = container.ListBlobs(new BlobRequestOptions
    { UseFlatBlobListing = true, BlobListingDetails = BlobListingDetails.All }).
    OfType<CloudBlob>().Single();

/* Not populated - this is NOT expected */
Console.WriteLine("ListBlobs with BlobListingDetails.All: " +
    (blob4.Properties.ContentMD5 ?? "Not populated"));


SQL Azure Database and Reporting

Sudhir Hasbe described Database Import and Export for SQL Azure in a 6/26/2011 post:

Support for DAC will make import and export very easy for our customers. In every conversation I have with customers, the topic of import/export, backup and disaster recovery comes up. This is a great next step in our feature set in this space.

Database Import and Export for SQL Azure

SQL Azure database users have a simpler way to archive SQL Azure and SQL Server databases, or to migrate on-premises SQL Server databases to SQL Azure. Import and export services through the Data-tier Application (DAC) framework make archival and migration much easier.

The import and export features provide the ability to retrieve and restore an entire database, including schema and data, in a single operation. If you want to archive or move your database between SQL Server versions (including SQL Azure), you can export a target database to a local export file which contains both database schema and data in a single file. Once a database has been exported to an export file, you can import the file with the new import feature. Refer to the FAQ at the end of this article for more information on supported SQL Server versions.

This release of the import and export feature is a Community Technology Preview (CTP) for upcoming, fully supported solutions for archival and migration scenarios. The DAC framework is a collection of database schema and data management services, which are strategic to database management in SQL Server and SQL Azure.

Microsoft SQL Server “Denali” Data-tier Application Framework v2.0 Feature Pack CTP

The DAC Framework simplifies the development, deployment, and management of data-tier applications (databases). The new v2.0 of the DAC framework expands the set of supported objects to full support of SQL Azure schema objects and data types across all DAC services: extract, deploy, and upgrade. In addition to expanding object support, DAC v2.0 adds two new DAC services: import and export. Import and export services let you deploy and extract both schema and data from a single file identified with the “.bacpac” extension.

For an introduction to and more information on the DAC Framework, this whitepaper is available: http://msdn.microsoft.com/en-us/library/ff381683(SQL.100).aspx.



Marketplace DataMarket and OData

Lohith (@kashyapa) described Performing CRUD on OData using cURL in a 6/14/2011 post (missed when posted):

In one of my previous articles I showed how to perform CRUD operations on an OData service using a new JavaScript library/API from Microsoft called dataJS. With that example we saw how we can perform the CRUD operations on an OData service from an HTML page. With just dataJS and jQuery we were able to build a complete application. Here is the article link if you have not read it: Performing CRUD on OData Service using DataJS

In this post, I will explore the feasibility of performing the CRUD operations on an OData service, but from a command line. Yes, from a command line we can perform the CRUD operations on an OData service. For the rest of this article, I will be using the same service that I built in “Performing CRUD on OData Service using DataJS”. You would need to follow Steps 1 to 7 to get an OData service up and running. So let’s start looking into what cURL is and how we perform CRUD operations using cURL.

What is cURL?

Here is the official definition of cURL:

curl is a command line tool for transferring data with URL syntax, supporting DICT, FILE, FTP, FTPS, GOPHER, HTTP, HTTPS, IMAP, IMAPS, LDAP, LDAPS, POP3, POP3S, RTMP, RTSP, SCP, SFTP, SMTP, SMTPS, TELNET and TFTP. curl supports SSL certificates, HTTP POST, HTTP PUT, FTP uploading, HTTP form based upload, proxies, cookies, user+password authentication (Basic, Digest, NTLM, Negotiate, kerberos…), file transfer resume, proxy tunneling.

So cURL is a command line tool for transferring data with URL syntax, and it supports many different protocols.

How will cURL help in CRUD operations on OData?

If you remember the tenets of OData, we communicate with any OData service using HTTP, and we use HTTP verbs like GET, POST, PUT and DELETE to indicate the actions to be performed on the service. Content negotiation also happens through the HTTP headers. cURL gives us the ability to pass the headers and the HTTP verbs right from the command line. We will see the examples one by one.

Where do we start?

Follow Step 1 to Step 7 in my previous blog post “Performing CRUD on OData Service using DataJS”. You can also download the source and set up the OData service from the attached demo code on that blog post.

Also download cURL from the official site: http://curl.haxx.se/download.html. They support a plethora of platforms. Select your platform and download the right version of cURL. You can also go through the cURL download wizard at http://curl.haxx.se/dlwiz/, which will guide you to the right version by asking a few questions.

The download is a single executable named curl.exe.

Pre Step:

Open a command prompt and navigate to the folder where you have downloaded cURL. In my case, here is what it looks like:

Performing READ operation:

Assuming you have done the Pre Step, let’s now jump into some action. First and foremost, let’s see how to do a GET using cURL. The OData service on my system is running at http://localhost:57581/UsersOData.svc/Users, so this is the service I will be using for all the operations.

cURL supports the following syntax for doing a GET:

curl.exe <url>

That’s it. curl.exe takes a URL, performs an HTTP GET and returns the HTTP response right on the console. So in our case the command I issued was as follows:

curl.exe http://localhost:57581/UsersOData.svc/Users

Here is the snapshot of the result:

Performing CREATE operation:

When we want to create a resource, we need to specify the following:

- First and foremost, the data that needs to be transferred, i.e. atom/xml or JSON

- Second, the format of the data we are trying to transfer, i.e. an HTTP header

- Third, and most important, the HTTP verb, which in this case is POST

cURL has certain command line options to achieve all the above:

- -d, --data: option used to set the data we are passing

- -H, --header: option used to set the header for the request

- -X, --request: option used to set the HTTP verb or the request method

So here is the curl command for a create request:

curl.exe -d "{username:'Lohith',email:'lohith@test.com',password:'pass'}" -H content-type:application/json -X POST http://localhost:57581/UsersOData.svc/Users

If all went well you will see the following response on the command line:

That’s it. We created a resource and we got the response from the server. The response is actually an entry item for the newly created resource. Pay attention to the userid column. That’s the identity of the newly created resource.

Performing UPDATE operation:

This is very similar to a CREATE request. When you want to edit a resource, only the URL and the method change; everything else remains the same. The URL needs to point to that specific resource rather than the collection, and the method will be PUT. Here is the command for UPDATE:

curl.exe -d "{username:'Lohith Ed',email:'lohith@test.com',password:'pass123'}" -H content-type:application/json -X PUT http://localhost:57581/UsersOData.svc/Users(15)

Notice the usage of the URL: Users(15). In OData this is how any resource is addressed. It says: find the resource whose id is 15 in the Users collection.

For a PUT request, the server won’t return anything if the request succeeded.

Performing DELETE operation:

The DELETE operation is very similar to the UPDATE operation. The changes are in the request method we pass and the data. For a DELETE operation, the method passed will be “DELETE” and we won’t pass any data. The URL needs to point to the resource we want to delete. Here is the command:

curl.exe -X DELETE http://localhost:57581/UsersOData.svc/Users(15)

That’s it. We have deleted the resource with id 15. If the command was successful, you won’t get any return data from the server, similar to UPDATE.

So this was a walkthrough of how the Open Data Protocol makes it possible for any client capable of communicating via HTTP to interact with an OData service and perform CRUD operations.
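The same four requests can be expressed from any HTTP-capable client. As a hedged illustration, here is a Python sketch that builds (but does not send) them with the standard library; the service URL and field values are the ones used in this walkthrough, `build_request` and `crud_requests` are hypothetical helper names, and the payload is serialized as strict JSON, which is an assumption about what the service accepts.

```python
import json
from urllib.request import Request

SERVICE = "http://localhost:57581/UsersOData.svc/Users"


def build_request(method: str, url: str, payload=None) -> Request:
    """Create an HTTP request; a JSON body and content type are set only when a payload is given."""
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    headers = {"Content-Type": "application/json"} if data is not None else {}
    return Request(url, data=data, headers=headers, method=method)


def crud_requests(user_id: int) -> dict:
    """One request per CRUD operation: the collection URL for read/create, the entity URL otherwise."""
    entity_url = f"{SERVICE}({user_id})"
    return {
        "read": build_request("GET", SERVICE),
        "create": build_request("POST", SERVICE, {
            "username": "Lohith", "email": "lohith@test.com", "password": "pass"}),
        "update": build_request("PUT", entity_url, {
            "username": "Lohith Ed", "email": "lohith@test.com", "password": "pass123"}),
        "delete": build_request("DELETE", entity_url),
    }


for name, request in crud_requests(15).items():
    print(name, request.get_method(), request.full_url)
```

Passing any of these objects to `urllib.request.urlopen` would send it, provided the service is running locally.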


Windows Azure AppFabric: Access Control, WIF and Service Bus

Saravana Kumar announced a Marriage between BizTalk360 and Azure AppFabric Service Bus - Result: Birth of http://demo.biztalk360.com in a 6/27/2011 post to the BizTalkGurus blog:

This is an open invitation for all of you to access/manage one of our test BizTalk environments, running on our premises and protected by NAT/Firewall.

Note: Loading will take some time the first time; once the Silverlight application is downloaded, it will be quick.

Why do we need AppFabric Service Bus integration for BizTalk 360?

Even before shipping V1.0 of BizTalk360, we started on some of the cool features requested by potential customers (coming in V2.0), such as the ability to manage their BizTalk environment remotely, securely, and with less investment in infrastructure changes.

There are various scenarios in which a company may want to do this. One of the main reasons is outsourcing your Microsoft BizTalk Server support/operations to a third-party company. Previously, providing something like this required heavy investment in infrastructure, like setting up VPN connections, Citrix/RDP access to your production environment etc. BizTalk360 by default comes with a lot of features targeted at production support, such as fine-grained authorization, complete governance/auditing and the ability to give read-only access. Now, with the support for AppFabric Service Bus, it's possible to allow third-party companies to securely manage your BizTalk environment. It's important to mention that you'll be able to audit/govern who did what!

Technology behind the scene

The majority of the hard work here is done by the Microsoft Azure AppFabric Service Bus (SB from now on) infrastructure. Basically, AppFabric SB takes care of all the challenges around securely establishing the connection with the on-premise infrastructure across NAT/Firewall. This was also possible because of the BizTalk360 architecture. The front-end Silverlight application merely displays the data provided by the 8+ back-end WCF services. We simply introduced the AppFabric Service Bus layer in between the Silverlight front end and the WCF back end, as shown in the above figure. All of our recent blog posts were related to this. There were a few challenges, but once we understood them and how the whole thing works, everything went very smoothly.

- Azure AppFabric Service bus, Silverlight Integration - End-to-End (walkthrough) - Part 1

- Azure AppFabric Service bus, Silverlight Integration - End-to-End (walkthrough) - Part 2

- AppFabric Service Bus : net:pipe needs to be specified error

- Understand Azure AppFabric Service Bus Pricing

As you can see from the picture above, the original BizTalk Environment is running in one of our internal environments. The front end is hosted on Windows Azure as a web role. The Azure AppFabric service bus puts the plumbing in-between and takes care of all the communication.

There is also a CNAME mapping, which maps http://demo.biztalk360.com to the actual Windows Azure Webrole application http://biztalk360.cloudapp.net

All the communication from the Azure Web role to the on premise BizTalk environment is fully encrypted using "https" traffic.

User[s] simply come and hit the URL http://demo.biztalk360.com without knowing what's going on behind the scene.

Vittorio Bertocci (@vibronet) suggested the Cloud Identity Summit: Come to Our Workshop! in a 6/27/2011 post:

In just about three weeks the who’s who of identity in the cloud is going to converge to Keystone, in the beautiful Rocky Mountains, to talk each other into identity-induced stupor. The Cloud Identity Summit, hosted by our friends at Ping Identity, is an event that I greatly enjoyed last year: and judging from the speakers lineup, this year holds great promises as well!

For the occasion, the egregious[1] Brian Puhl, the formidable Laura Hunter and (the …hairy?) yours truly will fight (for three full hours!) that mysterious force that seems to keep IT administrators and developers apart, and will share a stage to tell you about identity in Windows Azure and Office 365. Here is the abstract:

Tuesday, July 19, 2011 - 9am to 12pm

Identity in the Microsoft Cloud - Windows Azure, Office 365, and More!

In this unique workshop, come and hear about adopting and implementing the Microsoft Cloud from seasoned identity professionals who have been working with these technologies first-hand from Day One. We’ll begin with an overview and description of the technologies that allow an Identity Management professional to interact with both Windows Azure and Office 365. We’ll then walk through a real-life example of integrating Microsoft Cloud technologies from the perspective of both the application developer and the infrastructure architect. Along the way, we’ll share best practices and tales from the trenches from customers, partners, and Microsoft’s own Evangelists and internal Identity Management Architects.

Now, let me tell you for a moment about Brian and Laura’s work on this. Microsoft IT has been using ADFS2.0 not from Day One, but like from Day –360.

Our Corp STS has been a critical service for our worldwide organization for a pretty long time, and is quite a spectacular deployment across geographies (for a snapshot from more than 1 year ago check out this video). Among other things, this was a fantastic enabler for us to take advantage of cloud services in our LoB applications at a spectacular pace: but this also meant really a lot of work for Brian’s team, who had to experiment with different policies/practices and really see what works. Brian and Laura are going to share some gems you won’t hear from anyone else.

About the developer’s portion of the workshop… well, I’ll refrain from chest-beating (at my shape/age, that would not be a good sight anyway). Let’s just say that I’ve been talking & writing about identity for developers for quite some time, and I hope you’ll find at least some insights in my logorrhea.

If the above was not enough to convince you to come, I don’t know what else to do… oh wait, I do!

For the first 15 people that will register using the code MSFT719%15, our workshop is free. Pretty neat trick.

Well, I registered and booked the flights & the hotel, I just have to rent the car at this point. If you want to partake in the intellectual feast of sharing a table with the likes of Gunnar Peterson, John Shewchuk, Bob Blakley, Eric Sachs, Andrew Nash and many others… you know what to do. See you there!

-----------------------

[1] In Italian the term “egregio” means “excellent”. I am told that in English “egregious” can mean that as well, but the default meaning is less flattering. Of course I meant it in its “excellent” meaning.


Windows Azure VM Role, Virtual Network, Connect, Traffic Manager, RDP and CDN

The Windows Azure Team (@WindowsAzure) reported New Windows Azure Traffic Manager Features Ease Visibility Into Hosted Service Health in a 6/27/2011 post:

We announced several updates to Windows Azure at MIX11. One was a Community Technology Preview (CTP) of Windows Azure Traffic Manager, which allows you to load-balance traffic to multiple hosted services. Today we are pleased to announce that an updated version of the Windows Azure Traffic Manager CTP, which offers enhanced visibility of your policy’s monitoring status, is now available.

The new version offers a Poll State column in the Windows Azure Platform Management Portal that displays the most recent monitor status for all defined Traffic Manager policies. You can use this status to understand the health of your domains. When a policy is healthy, DNS queries will be distributed to your hosted services based on the policy’s configuration, e.g., Round Robin, Performance or Failover. Once the Traffic Manager monitoring system detects a change in monitor status, it updates the Poll State entry in the Management Portal.
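As a rough illustration of how a policy type and monitor status could combine, here is a Python sketch of round-robin and failover selection over a set of hosted services. It assumes that services whose monitor reports them as unhealthy are skipped; the names are hypothetical and this is not a description of how Traffic Manager itself is implemented.

```python
def failover(services: list, healthy: set):
    """Failover: always answer with the first healthy service in priority order."""
    for service in services:
        if service in healthy:
            return service
    return None


class RoundRobin:
    """Round robin: rotate through the services, skipping unhealthy ones."""

    def __init__(self, services):
        self._services = list(services)
        self._next = 0

    def pick(self, healthy: set):
        # Look at each service at most once per query, starting where we left off.
        for _ in range(len(self._services)):
            candidate = self._services[self._next]
            self._next = (self._next + 1) % len(self._services)
            if candidate in healthy:
                return candidate
        return None


policy = RoundRobin(["us", "eu", "asia"])
answers = [policy.pick({"us", "asia"}) for _ in range(4)]
print(answers)  # ['us', 'asia', 'us', 'asia']
```

A Performance policy would need latency measurements per client location, which is outside the scope of this sketch.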

This new visibility into the health of your policies helps you understand and manage your end user’s experience. Keep in mind that although there is no charge for Traffic Manager, its monitoring transactions are not free. We recommend using the default monitor value, “/” or as small a monitor probe file as possible.

During the CTP period, Windows Azure Traffic Manager is free of charge, and invitation-only. To request an invitation, please visit the Beta Programs section of the Windows Azure Platform Management Portal. Click here to learn more about Windows Azure Traffic Manager.


Live Windows Azure Apps, APIs, Tools and Test Harnesses

Bruce Kyle reported Private Cloud, Windows Azure MPR Test to Apply to ISV Competency in a 6/27/2011 post to the US ISV Evangelism blog:

A Private Cloud test will apply to the Silver ISV Competency in the coming months, and a Windows Azure MPR test will apply to the Gold ISV Competency in October. The announcements were made on the Microsoft Partner Network site.

Changes to Other Competencies

Also of interest to ISVs, changes to the Mobility Competency will be announced at the Worldwide Partner Conference.

Learn about updated competency requirements and benefits that will apply over the next 12 months. Review the requirements so you can ensure you will be compliant by your next renewal date ─ and discover new benefits to help you run and grow your business. Changes are being made in many of the 29 competencies. See Microsoft Partner Network Competency Roadmap.

About Competencies

A Microsoft gold or silver competency can help set your company apart from the competition by demonstrating a specific, proven skill set to your customers. Did you know that there are over 650,000 partners in Microsoft’s channel alone, but only 30,000 (5%) partners worldwide can distinguish themselves with a Microsoft silver competency as having attained a high degree of proficiency?

Silver competencies offer a clear path to help you showcase your skillset in today’s competitive environment. Gold competencies demonstrate your best-in-class expertise within Microsoft’s marketplace. Earning a gold competency is evidence of the deepest, most consistent commitment to a specific, in-demand, business solution area, along with the distinction of being among only 1 percent of Microsoft partners worldwide that have attained this outstanding degree of proficiency.

Review the requirements to attain the ISV/Software competency.

Review the benefits of earning the ISV/Software Competency.

Free Assistance in Reaching the ISV Competency

Join Microsoft Platform Ready (MPR) for free assistance in making your application compatible.

The Windows Azure Team (@WindowsAzure) posted Hosting Services with WAS and IIS on Windows Azure and downloadable source code on 6/27/2011:

The Adoption Program Insights series describes experiences of Microsoft Services consultants involved in the Windows Azure Technology Adoption Program who assist customers in deploying solutions on the Windows Azure platform. This post is by Tom Hollander.

Many developers choose to use service oriented techniques to break large systems into smaller, loosely coupled services. Frequently, each service will be hosted on a different machine and use WS-* protocols for standards-based communication. However there can be times when different hosting and communication approaches make more sense. For example, if you control a service and all its clients, you may be able to get better performance using binary messages sent over TCP. And in some cases you may choose to host multiple services on the same machine and use an optimised local communication mechanism such as named pipes, while maintaining the logical separation between your services.

Microsoft’s application platform has long made it easy to support all of these scenarios with few or no changes to your application code. Windows Communication Foundation (WCF) provides a unified programming model over multiple messaging protocols, while Windows Process Activation Services (WAS) allows you to host your services in IIS and have them “woken up” by incoming messages sent over non-HTTP transports, including TCP and named pipes.

However if you’ve decided to leverage Windows Azure to deploy your applications, it isn’t obvious how you can take advantage of WAS. But the good news is that with a little help from PowerShell, it’s entirely possible to get this working. The key steps that you’ll need to script are:

- Enable and start the WAS activation service for your chosen protocol

- Add a binding for your chosen protocol to your IIS web site

- Enable the chosen protocol on your web sites or applications.

This blog describes a simple application consisting of a single web role containing a web site (the client) and a virtual application hosting a WCF service. Since both the client and the service are hosted on the same machine, we’ll use WCF’s NetNamedPipeBinding for optimised communication, although the same basic approach also works for the NetTcpBinding. (Note that MSMQ is not supported on Windows Azure, so you cannot use those bindings). I’ve included the code for the most important parts of the solution in the post, or you can download the entire sample by clicking on the WasInAzure.zip file included at the bottom of this post.

WCF Configuration

There’s nothing Azure-specific about the WCF configuration, but I’ll show some of it for completeness. I’ve built a simple service and have configured a single endpoint with the NetNamedPipeBinding and a net.pipe:// URL.

web.config (Service):

    <system.serviceModel>
      <services>
        <service name="WcfService1.Service1">
          <endpoint binding="netNamedPipeBinding"
                    address="net.pipe://localhost/WcfService1/service1.svc"
                    contract="WcfService1.IService1"/>
        </service>
      </services>
    </system.serviceModel>

The client’s WCF configuration looks much the same, using the matching ABCs (Address, Binding and Contract).
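As a rough illustration of what "much the same" could mean, a client-side counterpart might look like the sketch below. The address and binding mirror the service endpoint above; the endpoint name and the contract `ServiceReference1.IService1` are assumptions, since the actual contract name depends on how the client proxy was generated in the sample.

```xml
<!-- web.config (Client): hypothetical sketch, not taken from the sample download -->
<system.serviceModel>
  <client>
    <!-- Address and binding must match the service endpoint exactly -->
    <endpoint name="Service1Pipe"
              address="net.pipe://localhost/WcfService1/service1.svc"
              binding="netNamedPipeBinding"
              contract="ServiceReference1.IService1" />
  </client>
</system.serviceModel>
```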

Windows Azure Startup Task

When you want to perform some scripting at the time your Windows Azure instances start, normally you’ll do all of your work in a script defined in the Service Definition file’s <Startup> element. However tasks defined here execute before the fabric has configured IIS, so it turns out this is not a good place to make most of the required changes for WAS. So all we’ll do in the startup task is configure PowerShell so we can run our script later in the startup sequence:

ServiceDefinition.csdef:

    <ServiceDefinition name="WasInAzure" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
      <WebRole name="WebRole1">
        ...
        <Startup>
          <Task commandLine="setup\startup.cmd" executionContext="elevated" />
        </Startup>
      </WebRole>
    </ServiceDefinition>

Startup.cmd:

    powershell -command "set-executionpolicy Unrestricted" >> out.txt

Windows Azure Role OnStart

The OnStart method of your WebRole class executes after IIS has been set up by the fabric, so at this time we’re free to run scripts that make the required configuration changes. The first thing to note is that normally the OnStart method does not run with administrator privileges—we’ll need to change this for our script to work.

ServiceDefinition.csdef:

    <ServiceDefinition name="WasInAzure" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
      <WebRole name="WebRole1">
        ...
        <Runtime executionContext="elevated" />
      </WebRole>
    </ServiceDefinition>

Now, we can add the code that kicks off our PowerShell script when the role starts:

WebRole.cs:

    using System.Diagnostics;
    using System.IO;
    using Microsoft.WindowsAzure.ServiceRuntime;

    public class WebRole : RoleEntryPoint
    {
        public override bool OnStart()
        {
            var startInfo = new ProcessStartInfo()
            {
                FileName = "powershell.exe",
                Arguments = @".\setup\rolestart.ps1",
                RedirectStandardOutput = true,
                UseShellExecute = false,
            };

            using (var writer = new StreamWriter("out.txt"))
            using (var process = Process.Start(startInfo))
            {
                // Read the redirected output before waiting for exit; waiting
                // first can deadlock if the script fills the output buffer.
                writer.Write(process.StandardOutput.ReadToEnd());
                process.WaitForExit();
            }

            return base.OnStart();
        }
    }

Finally, we come to the PowerShell script that starts the listener service and configures the bindings. There are a few things to note about this script:

- If you’re using TCP instead of Named Pipes, change all references to net.pipe to net.tcp and change the bindings to include your chosen port (e.g. “808:*”). You’ll also need to enable and start both the NetTcpPortSharing and NetTcpActivator services.

- The script makes some assumptions on the name of your role and also updates the protocols on all IIS virtual applications. Depending on how you’ve set up your roles and sites you may need to change this.

- There are some differences in how you access the IIS PowerShell cmdlets between IIS 7.0 and IIS 7.5. My script works for IIS 7.5, which is included in Windows Server 2008 R2 and the “2.x” family of the Windows Azure OS. To get this version, make sure you set osFamily="2" in the root node of your ServiceConfiguration.cscfg file. Alternatively if you want to use IIS 7.0 (Azure OS “1.x”) you can modify your PowerShell script to make this work.

RoleStart.ps1:

    write-host "Begin RoleStart.ps1"
    import-module WebAdministration

    # Starting the net.pipe service
    $listenerService = Get-WmiObject win32_service -filter "name='NetPipeActivator'"
    $listenerService.ChangeStartMode("Manual")
    $listenerService.StartService()

    # Enable net.pipe bindings
    $WebRoleSite = (Get-WebSite "*webrole*").Name
    Get-WebApplication -Site $WebRoleSite | Foreach-Object { $site = "IIS:/Sites/$WebRoleSite" + $_.path; Set-ItemProperty $site -Name EnabledProtocols 'http,net.pipe'}
    New-ItemProperty "IIS:/Sites/$WebRoleSite" -name bindings -value @{protocol="net.pipe";bindingInformation="*"}
    write-host "End RoleStart.ps1"

Summary

With the right elements in your Windows Azure Service Definition and Service Configuration files, most common configuration tasks can be achieved without any coding or scripting. However there are some useful capabilities that you may be used to from on-premises Windows installations that are not currently exposed in this way. Fortunately, if you’re familiar with scripting techniques and the Windows Azure startup sequence you can normally code your way around these problems. Windows Process Activation Services is one example of a useful feature that’s not obviously available in Windows Azure, but can be made to work with a little perseverance.

FireDancer posted her new Layered Architecture Sample for Azure to CodePlex on 6/26/2011:

Layered Architecture Sample for Azure takes the Expense Sample application that was originally developed in Layered Architecture Sample for .NET and ports it into the Windows Azure platform to demonstrate how a carefully designed layered application can be easily deployed to the cloud. It also illustrates how layering concepts can be applied in the cloud environment.

Layered Architecture Sample for .NET was designed to showcase various .NET technologies such as Windows Presentation Foundation (WPF), Windows Communication Foundation (WCF), Windows Workflow Foundation (WF), Windows Forms, ASP.NET and ADO.NET Entity Framework working in conjunction with the Layered Architecture design pattern. Layered Architecture Sample for Azure extends that vision by demonstrating how to apply them in the cloud.

The current version of Layered Architecture Sample for Azure uses Windows Azure and SQL Azure exclusively to show how to deploy all the layers to the cloud, while retaining a small portion of the desktop clients for on-premises communication with the cloud service. Future versions of the sample will demonstrate a hybrid on-premises and cloud scenario by using Windows Azure AppFabric Service Bus to communicate with on-premises services.

You can now quickly create a layered project structure for your Windows Azure cloud applications with Layered Architecture Solution Guidance.

Full cloud scenario with minimal on-premise bits (Non Azure AppFabric scenario)

Adactus Ltd reported Adactus Ltd Launches Cloud Based Claims Management Software for the Insurance Repair and Restoration Market in a 6/26/2011 press release:

Adactus Ltd, providers of claims management software to the general insurance industry, has released a new claims management system called Pulse for use by insurance companies and their providers to support the management of property related claims.

Thame, United Kingdom, June 26, 2011 --(PR.com)-- Adactus Ltd today announced that it has released Pulse, a cloud based claims management software solution to help the insurance industry better manage the wide variety of insurance property claims. Designed using the very latest Microsoft Cloud based technology with ease of use as a primary design goal, Pulse can be used on a software-as-a-service payment model which helps the insurance industry with any variations in their claim volumes and significantly reduces the initial level of investment required.

Today, Adactus provides claims management software to the insurance industry including companies such as Aviva and Rainbow International, part of the ISS Group, one of the world’s largest facility service groups. Pulse offers companies the ability to securely connect directly to insurers, accounting systems and additional back-office systems, speeding up the claims process and reducing the cost of processing each claim. Customer service levels are improved as policyholders’ claims are managed within Pulse against service levels, allowing suppliers to focus on achieving service level agreements throughout the entire claims process. Pulse is capable of reducing timescales and costs by capturing vital survey and inspection details onsite through mobile devices, a key element which differentiates Pulse from its competitors.

Pulse is a Microsoft approved cloud based application operating on the Windows Azure platform and offers unparalleled scalability, flexibility and security in claim management. Pulse is ideally suited to managing high claim volumes during claim surges such as those experienced earlier this year. Commenting on this solution, James Smith, Head of Software Development at Adactus said, “Our clients are demanding flexibility in the pricing and support models for claims technology solutions and Pulse has been built with that as its core objective. Volumes can vary dramatically based on a number of factors including the environmental conditions and the complexity of claim information required.”

Commenting on the new application, Ian Bullen, MD of bSure Ltd, a supplier of services to major insurers and building repair networks in the UK said “Pulse is a functionality rich system with the most up to date technology, well suited to companies in the insurance supply chain.”

Adactus MD, Chris Hall, said “We have designed Pulse based on our customers’ requirements including an easy to use dashboard and intuitive user interface with mobile use improving process efficiency. We will offer our service on a pay on demand basis to help our customers better manage their costs.”

About Adactus

Adactus, http://www.adactus.co.uk, is a technology-based consulting and mobile development firm that specializes in e-Business consulting and development that includes custom eCommerce solutions, insurance claims management software, internet business applications, mobile web and multiple platform based mobile applications. Adactus was founded by the key staff responsible for the launch of Dell Online's presence in Europe. Founded in 2001, the management team has a wealth of experience in the specification and development of web application software solutions. They know how to embrace the Internet to deliver higher revenues, lower operating costs and increased customer satisfaction to customers. Adactus have delivered scalable web applications in many market sectors both in the UK and internationally. For more information please visit http://www.adactus.co.uk

Wely Lau explained Setting Timezone in Windows Azure in a 6/26/2011 post:

Introduction

I strongly believe that most applications we build need to store dates and times. In some applications, date and time play a very important role; in a rental-style application, for instance, an incorrect date or time could have a big impact. In other kinds of application, date and time may be less significant, for example when they are purely informational and record when the data was inserted into the system.

Regardless of the level of importance discussed above, one essential factor we need to consider is the timezone of the system. Whether we set it to the local timezone or another timezone such as UTC / GMT, we need to decide on it, typically on the server where our application is hosted.

Thus, when we type “DateTime.Now” inside our code, we should be able to get the correct result.

Timezone in Windows Azure

No matter which data center we select in Windows Azure (remember, Windows Azure has 6 data centers worldwide: 2 in America, 2 in Europe, and 2 in Asia), Windows Azure VMs are set to the UTC timezone by default.

If you are considering migrating your app to Windows Azure, you should ask yourself now which timezone your current application uses. If it’s UTC, you are safe, with nothing to worry about.

However, if you are running on local time (for example, Singapore is UTC + 8 hours) and you want to ensure the consistency of your current data, then you will need to be cautious. You have at least 2 choices:

- Use UTC in your app, which means you would need to convert your current date/time data to UTC.

- Set your preferred timezone in Windows Azure (it could be your local time).

I bet most of you will decline the first option.

How to set timezone in Windows Azure

Alright, I assume we go with option 2. To set the timezone in Windows Azure, I believe there are actually a few ways, such as via PowerShell or by modifying the registry. Honestly, I’ve not tried these options on Windows Azure, so I am not sure whether they can be applied there. But there’s an option that definitely works well.

Okay, there’s actually a command-line utility called “tzutil” that can be used to change the timezone. Please take note that this command is only available in Windows 7 and Windows Server 2008 R2.

TZUTIL

You may try to run it using your command prompt by typing “tzutil /?” for the information.

To change to your preferred timezone, simply run the following command.

    tzutil /s "Singapore Standard Time"

Run it as Start-up task

In Windows Azure, we need to run this command as a start-up task, to ensure that it is executed first whenever a role instance starts up.

1. To do that, create an empty file (using Notepad), paste the above tzutil command into it, and save the file as settimezone.cmd inside your Windows Azure project.

In your project, ensure that this file is included, if not you’ll need to include it manually.

2. The next step is to set the properties of this file to Copy Always. This is to ensure that the file will be included when the project is packaged before deployment.

3. Subsequently, we need to tell Windows Azure to run the start-up task. This can be achieved by adding a start-up section to your ServiceDefinition.csdef file.
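A start-up section for step 3 could look roughly like the sketch below. The service name `MyAzureProject` and role name `WebRole1` are placeholders, and the `commandLine` path assumes settimezone.cmd is copied to the root of the role's output folder; adjust it if the file lives in a subfolder. The task runs elevated because changing the system timezone requires administrator rights.

```xml
<!-- ServiceDefinition.csdef: hypothetical sketch; names are placeholders -->
<ServiceDefinition name="MyAzureProject" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
  <WebRole name="WebRole1">
    <!-- other role settings omitted -->
    <Startup>
      <!-- "simple" tasks run to completion before the role itself starts -->
      <Task commandLine="settimezone.cmd" executionContext="elevated" taskType="simple" />
    </Startup>
  </WebRole>
</ServiceDefinition>
```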

4. Using Windows Server 2008 R2 VM Images

Earlier, I mentioned that tzutil is only available in Windows 7 and Windows Server 2008 R2. Windows 7 is definitely out of context as there’s no such OS in Windows Azure.

Windows Azure at this moment allows us to choose either Windows Server 2008 or Windows Server 2008 R2. Both of them run on 64-bit architecture.

By default (if you are not modifying anything in your configuration file), Windows Server 2008 will be selected.

In order to use tzutil, we would need to set the VM running as Windows Server 2008 R2. To do that, simply navigate to the ServiceConfiguration.cscfg file. In the ServiceConfiguration section, change the osFamily from 1 to 2.

*1 = Win 2008, while 2 = Win 2008 R2

We also need to set the version of the OS. If you have no preference for a particular OS version, you can simply put * and updates will be applied automatically when a patch or new version of the guest OS is released.
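Put together, the two settings might look like the following sketch of the root element in ServiceConfiguration.cscfg. The service name, role name and instance count are placeholders; only the `osFamily` and `osVersion` attributes matter here.

```xml
<!-- ServiceConfiguration.cscfg: hypothetical sketch; names are placeholders -->
<ServiceConfiguration serviceName="MyAzureProject"
    xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"
    osFamily="2" osVersion="*">
  <!-- osFamily="2" selects Windows Server 2008 R2; osVersion="*" takes the latest guest OS -->
  <Role name="WebRole1">
    <Instances count="1" />
  </Role>
</ServiceConfiguration>
```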

5. Verification

If everything runs well, you should get your preferred timezone as expected.

Here’s how it looked when I connected to my Windows Azure VM via remote desktop.

What’s next?

At the app level, you are safe since you’ve successfully configured the timezone of your VM. But how about the database level? What if a stored procedure or function that your code calls uses the “getdate()” function?

I’ll discuss more on this topic in the next post. Stay tuned…

Jonathan Rozenblit (@jrozenblit) described What’s In the Cloud: Canada Does Windows Azure – Lead in a 6/26/2011 post to his DevPulse blog:

Since it is the week leading up to Canada Day, I thought it would be fitting to celebrate Canada’s birthday by sharing the stories of Canadian developers who have developed applications on the Windows Azure platform. A few weeks ago, I started my search for untold Canadian stories in preparation for my talk, Windows Azure: What’s In the Cloud, at Prairie Dev Con. I was just looking for a few stories, but was actually surprised, impressed, and proud of my fellow Canadians when I was able to connect with several Canadian developers who have either built new applications using Windows Azure services or have migrated existing applications to Windows Azure. What was really amazing to see was the different ways these Canadian developers were using Windows Azure to create unique solutions.

This week, I will share their stories.

Lead is a worldwide leaderboard platform which developers can integrate into their games to engage their users. Lead provides a consistent, reliable service and a growing ecosystem of products for developers regardless of the platform on which they develop. Lead is developed by Fersh, a student digital multimedia start-up based out of Toronto, Ontario. Fersh’s portfolio includes a number of award-winning mobile games, applications and developer resources. Fersh offers a range of products and services including consulting and customized software solutions across several platforms.

I had a chance to sit down with one of Lead’s developers, Kowsheek Mahmood (@aredkid) to find out how he and his team built Lead using Windows Azure.

Jonathan: Kowsheek, when you and the team were designing Lead, what was the rationale behind your decision to develop for the Cloud, and more specifically, to use Windows Azure?

Kowsheek: Being a start-up company with constrained funding, it was not feasible for us to have multiple dedicated servers for Lead. Furthermore, since we wanted Lead to provide a consistent experience across geographical locations, a distributed solution was the right fit.

At Fersh we use Microsoft technologies to develop our applications which range from games to mobile and web applications. We chose Windows Azure because we could use our existing knowledge of the technologies involved and leverage them well. Also, Windows Azure provided for high availability and a flexible utility-style service, that fulfilled our requirements and was affordable.

Jonathan: What Windows Azure services are you using? How are you using them?

Kowsheek: We are using Windows Azure Compute Web Role instances to host the frontend site, as well as our simple but really powerful API, which developers will integrate in their games. SQL Azure hosts our databases in which we store the leaderboard data for each game using the API. We are also using Blob Storage and the Content Delivery Network (CDN) for static content, distributed to different geographic regions.

Jonathan: During development, did you run into anything that was not obvious and required you to do some research? What were your findings? Hopefully, other developers will be able to use your findings to solve similar issues.

Kowsheek: While working with the ASP.NET Membership service, initially it wasn't clear how the database on SQL Azure would have to be built. A quick Bing search showed that there is a tool called "aspnet_regsqlazure", similar to the regular aspnet_regsql tool, that builds the database. You can find out more in this support article. Once we downloaded the proper files and ran them against the database, all was well.

Jonathan: Lastly, what were some of the lessons you and your team learned as part of ramping up to use Windows Azure or actually developing for Windows Azure?

Kowsheek: Initially, dedicated servers seemed feasible but after taking into consideration things like scalability and reliability, the hosting solutions seemed to fall short, so it always serves well to consider all the requirements and available options from the get-go.

That’s it for today’s interview with Kowsheek Mahmood of Fersh about their application Lead. Lead is currently in Alpha release but has already been integrated into several games, such as the popular Windows Phone 7 game Sudoku3D (Facebook, Twitter). If you’re a game developer, Lead is definitely something to check out. Perhaps you could even participate in testing Lead.

Tomorrow – another Windows Azure developer story.

This post also appears on the Canadian Developer Connection.

<Return to section navigation list>

Visual Studio LightSwitch

Sheel Shah of the Visual Studio LightSwitch Team (@VSLightSwitch) announced Excel Importer Updated for Web Applications in a 6/27/2011 post:

The most common feedback that we’ve heard about the Excel Import extension is that it doesn’t work for web applications. Since we can’t access Excel from a web application, we’ve updated the extension to import data from CSV files in this situation. For details on using the extension, please see How to Import Data from Excel Using the Excel Import Extension.

Michael Washington (@ADefWebserver) reported that he’s Betting The House On LightSwitch in a 6/26/2011 post:

You should never “bet the house” (to risk everything) on anything. If you were actually gambling, and risking your proverbial “house”, where would you live if you were wrong and you lost? However, when backed into a corner (Microsoft has indicated they will tell us nothing about the future of Silverlight until the Build conference in September), what have I got to lose?

As of now, one place that I feel confident that Silverlight will be used, for years to come, is in Visual Studio LightSwitch. Here is my reason:

I believe that LightSwitch will release a HTML/HTML5/Jupiter (whatever that is) “enhancement”.

I believe that when these “other outputs” come, Silverlight will remain the “best user experience”, and it will continue to be used. However, the ability to create LightSwitch applications that render output that works on an iPad makes it a technology that is worth my investment.

Then again, what choice do I have?

The Future Of LightSwitch

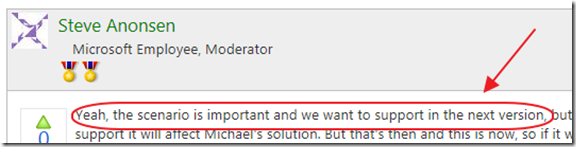

I have already pointed to statements by Steve Anonsen (one of the LightSwitch architects), about supporting the “HTML scenario”, and that the LightSwitch team is “looking for help with V2 and ‘Windows next’”. I just saw a statement by another LightSwitch team member, Steve Hoag:

“The architecture of LightSwitch is such that we can (somewhat) easily switch out the presentation layer. Looking forward, is that HTML5, or WPF, or something else related to an alleged future version of Windows that hasn't yet been announced?”

I guess my point is that I really do not know what Microsoft’s plans are, and most importantly, what will actually happen in the future. My “bet” is that the LightSwitch team seems to be in the best position to deal with future changes.

- LightSwitch is composed of Data (create a table, attach to external table, or attach to a WCF RIA data source), and Screens (create an "object tree" or "View Model") to bind your data to visual controls (built-in or custom). When you click publish it "Renders" the application you built. LightSwitch can therefore "Render" HTML5, Jupiter (Windows 8) etc.

- The LightSwitch team knew about HTML5 and Windows 8 years ago (ok I do not know this, I just believe it)

- The LightSwitch team went with Silverlight because it is, and will probably remain the "best user experience", however, they made sure not to "paint themselves into a corner". This is why I believe the Screen designer is only an object tree not a visual layout.

- LightSwitch is the future of Visual Studio. The creators of Visual Studio know that the majority of developers need something that is easy to use. LightSwitch is a "Home Run success" in achieving that objective.

- LightSwitch is being sold as "for non-professional developers" but that is mostly because that is a bigger market. They know that pro developers will use LightSwitch when they see that they are able to do the advanced things we describe on http://LightSwitchHelpWebsite.com.

Basically, the LightSwitch team has not “bet the house” on anything. What they have done is kept their options open. They use Silverlight (and I believe they will continue to do so), but they have not tied themselves down to Silverlight.

They have not even tied themselves down to any particular data source. By using WCF RIA Services, they allow you to basically connect to practically any data source that you would need.

By “betting on LightSwitch”, I am basically “betting on a team that is keeping all their options open”.

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

Simon Munro (@simonmunro) reported Azure – All PaaSed up and no IaaS to go in a 6/27/2011 post to his CloudComments.net blog:

There were two interesting events in the Azure world last week:

- Firstly Joyent and Microsoft have partnered to port Node.js to Windows (and Azure). This is interesting because it lands slap bang in the middle of frenzied activity around server-side JavaScript, using the JVM (or not), and the appreciation of frameworks for services by those who have been happy just with web frameworks (such as Django or Rails). I don’t see .NET enterprise developers dropping WCF and C# en masse to run stuff on Node.js, but perhaps if you hang out with the trendy kids then some of their lustre will rub off on you – this applies, I suppose, to individual developers and Microsoft themselves.

- In other news 10Gen (I assume) has been building a wrapper for MongoDB that hosts MongoDB in an Azure worker role. I’m not quite sure how this will work (they talk about a hot standby with shared storage) in the MongoDB world where data is (preferably) not committed to disk immediately. Where Azure likes to recycle roles whenever it feels like it, it would seem that you have little choice but to always work in ‘safe mode’.

In both of these cases, I find myself wondering why anyone would bother running MongoDB or Node.js on Windows in general or Azure in particular. It reminds me of the AWS tech summit that I was at a couple of weeks ago where a Microsoft evangelist was telling everybody in the room that you can run Ruby on Windows. “Why bother?”, were the thoughts echoing around the room. The AWS crowd have their choice of OSs and the rubyists are happy without Windows, thank you very much.

There are only two reasons why you would be interested in MongoDB or Node.js on Windows. Firstly, it is mandated from above (as in ‘enterprise standard’ or something) that you run Windows. But then surely those same ‘standards’ would mandate the use of SQL for storing data and I doubt Node.js has made it onto an enterprise architecture PowerPoint slide yet. The second reason is because you have been locked in to the Azure platform and cannot run any other OS because it is simply not possible in the (Azure) datacentre. And this is where the strategy around Azure becomes confusing.

Quite simply,

If Azure intends to be more IaaS it has to run something other than Windows. If it can’t do that then it must become frikkin’ good at PaaS.

Obviously Linux will run on the Azure fabric shortly after hell has frozen over, so that is out of the question. Azure simply needs to get better at PaaS. It needs to add good platform features like scheduling, search capability, map reduce (Dryad) and native, performant NoSQL capabilities (as in more than one), rich monitoring (like New Relic) and recoverability tools. And it needs to add these features fast, with an AWS-like tempo – releasing meaningful features every single week instead of languishing with a feature set that has barely moved since launch.

Azure as PaaS is currently simply not good enough and getting involved with throwing IaaS scraps is confusing their brand, removing engineering focus from what is important and ultimately pushing developers to other platforms. They definitely aren’t getting Ruby/Python/Node.js developers to come rushing. So why bother?

Lori MacVittie (@lmacvittie) claimed “The former is easy. The latter? Not so much” as an introduction to her Intercloud: Are You Moving Applications or Architectures? post of 6/27/2011:

In the many, many – really, many – posts I’ve penned regarding cloud computing , and in particular the notion of Intercloud, I’ve struggled to come up with a way to simply articulate the problem inherent in current migratory and, for that matter, interoperability models. Recently I found the word I had long been groping for: architecture.

Efforts from various working groups, standards bodies and even individual vendors still remain focused on an application; a packaged up application with a sprinkling of meta-data designed to make a migration from data center A to data center B less fraught with potential disaster. But therein lies the continuing problem – it is focused on the application as a discrete entity, with very little consideration for the architecture that enables it, delivers it and supports it.

The underlying difficulty is not just that most providers simply don’t offer the services necessary to replicate the infrastructure necessary, it’s that there’s an inherent reliance on network topology built into those services in the first place. That dependency makes it a non-trivial task to move even the simplest of architectures from one location to another, and introduces even more complexity when factoring in the dynamism inherent in cloud computing environments.

"Virtualization will radically change how you secure and manage your computing environment," Gartner analyst Neil MacDonald said this week at the annual Gartner Security and Risk Management Summit. "Workloads are more mobile, and more difficult to secure. It breaks the security policies tied to physical location. We need security policies independent of network topology."

[emphasis added]

-- Gartner: New security demands arising for virtualization, cloud computing, InfoWorld

We could – and probably should – expand that statement to say, “We need application delivery policies independent of network topology”, where “application delivery policies” include security but also encompass access management, load balancing, acceleration and optimization profiles. We desperately need to decouple architecture and services from network topology if we’re to ever evolve to a truly dynamic and mobile data center – to realize IT as a Service.

JIT DEPLOYMENT

The best solution we have, now, is scripting. Scripting usually involves devops who use agile development technologies to create scripts that essentially reconfigure the entire environment “just in time” for actual deployment. This includes things like reconfiguring IP address dependencies. Once the environment is “up”, scripts can be utilized to insert and update the appropriate policies that ultimately define the architecture’s topology. Scripting performs some other environment-dependent settings, as well, but most important perhaps is getting those IP addresses re-linked such that traffic flows in the expected topology from one end to the other.

But in many ways this can be as frustrating as waiting for a neural network to converge, as dependencies can actually inhibit an image from achieving full operational status. If you’ve ever booted a web server that relies upon an NFS or SMB mount on another machine for its file system, you know that if the server hosting that file system is not yet booted, the web server can suffer excessive delays in boot time and be rendered inoperable – a.k.a. unavailable. That’s a simple problem to fix – unlike some configurations and policies that rely heavily upon having the IP address of other interconnected systems.

These are well-known problems with not-so-best-practices solutions today. This complicates the process of moving an “application” from one location to another because you aren’t moving just an application, you’re moving an architecture.

Relying upon “the cloud” to provide the same infrastructure services is a gambling proposition. Even if the same services are available you still run the (very high) risk of a less-than-stellar migratory experience due to the differences in provisioning methods. The APIs used to provision ELB are not the same as those used to provision a load balancing service in another provider’s environment, and certainly aren’t the same as what you might be using in your own data center. That makes migration a several layer process, with logically moving images just the beginning of a long process that may take weeks or months to straighten out.

SNS (SERVICE NAME SYSTEM)

We really do need to break free from the IP-address chains that bind architectures today. The design of a stateless infrastructure is one way to achieve that, but certainly there are other means by which we can make this process a smoother one. DNS effectively provides that layer of abstraction for the network. One can query a domain name at any time and even if the domain moves from IP to IP, we are still able to find it. In fact we leverage that dynamism every day to provide services like Global Application Delivery in our quest for multi-site resilience. We rely upon that dynamism as the primary means by which disaster recovery processes actually work as expected in the event of a disaster. We need something similar to DNS for services; something that’s universal and ubiquitous and allows configurations to reference services by name and not IP address.

We need a service registry, a service name system if you will, in which we can define for each environment a set of services available and the means by which they can be integrated. Rather than relying upon scripts to reconfigure components and services, a component would need only learn the location of the SNS service and from there could determine – based on service names – the location of dependent services and components without requiring additional reconfiguration or deployment of policies.
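A minimal sketch of the idea, assuming a simple in-memory registry: the `ServiceRegistry` class, the service name `load-balancer`, and the addresses are hypothetical and are not part of any existing product or standard. A component asks for a service by name, and each environment supplies its own answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    host: str
    port: int


class ServiceRegistry:
    """Hypothetical per-environment registry mapping service names to endpoints."""

    def __init__(self):
        self._services = {}

    def register(self, name, host, port):
        # Re-registering a name overwrites the old location, much as a DNS
        # record is updated when a domain moves from IP to IP.
        self._services[name] = Endpoint(host, port)

    def resolve(self, name):
        try:
            return self._services[name]
        except KeyError:
            raise LookupError(f"no service registered under {name!r}") from None


# Each environment publishes the same names with its own (made-up) addresses.
datacenter = ServiceRegistry()
datacenter.register("load-balancer", "10.0.0.5", 443)

cloud = ServiceRegistry()
cloud.register("load-balancer", "172.16.4.20", 443)


def describe_upstream(registry):
    # The component's configuration names the service; only the registry
    # knows the address.
    endpoint = registry.resolve("load-balancer")
    return f"{endpoint.host}:{endpoint.port}"
```

Calling `describe_upstream(datacenter)` and `describe_upstream(cloud)` runs the same component code against two environments without changing its configuration; only the registry contents differ.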

A more dynamic, service-oriented system that decouples IP from services would enable greater mobility not only across environments but within environments, enabling higher levels of resiliency in the event of inevitable failure. Purpose-built cloud services often already take this into consideration, but many of the infrastructure components – regardless of form-factor – do not. This means moving an architecture from the data center to a cloud computing environment is a nearly Herculean task today, requiring sacrifice of operationally critical services in exchange for cheaper compute and faster provisioning times.

If we’re serious about moving toward IT as a Service – whether that leverages public or private cloud computing models – then we need to get serious about how to address the interdependencies inherent in enterprise infrastructure architecture that make such a goal more difficult to reach. Services will not empower migratory cloud computing behavior unless they are unchained from the network topology. That’s true for security, and it’s true for other application delivery concerns as well.

A core principle of a service-oriented anything is decoupling interface from implementation. We need to apply that core principle to infrastructure architecture in order to move forward – and outward.

RJ: Once upon a time we had “a service registry, a service name system.” It was called Universal Description Discovery and Integration (UDDI). Microsoft and others established public UDDI registries in the cloud. According to Wikipedia:

UDDI was written in August 2000, at a time when the authors had a vision of a world in which consumers of Web Services would be linked up with providers through a public or private dynamic brokerage system. In this vision, anyone needing a service such as credit card authentication would go to their service broker and select one supporting the desired SOAP or other service interface and meeting other criteria. In such a world, the publicly operated UDDI node or broker would be critical for everyone. For the consumer, public or open brokers would only return services listed for public discovery by others, while for a service producer, getting a good placement in the brokerage—by relying on metadata of authoritative index categories—would be critical for effective placement.

UDDI was included in the Web Services Interoperability (WS-I) standard as a central pillar of web services infrastructure, and the UDDI specifications supported a publicly accessible Universal Business Registry in which a naming system was built around the UDDI-driven service broker.

UDDI has not been widely adopted in the way its designers had hoped. IBM, Microsoft, and SAP announced they were closing their public UDDI nodes in January 2006. The group defining UDDI, the OASIS Universal Description, Discovery, and Integration (UDDI) Specification Technical Committee, voted to complete its work in late 2007 and has been closed. In September 2010, Microsoft announced they were removing UDDI services from future versions of the Windows Server operating system. Instead, this capability would be moved to BizTalk.

I was offered in 2005 the opportunity to write a book about UDDI. Fortunately, I saw the handwriting on the wall and declined.

CloudTimes (@cloudtimesorg) recommended a TechRepublic and ZDNet Webcast in its The Top 10 Considerations for Cloud Computing post of 6/26/2011:

Business and IT decision makers are increasingly confused by the rising number and type of cloud computing solutions. Everyone it would seem is now promising to save you time and money with this or that kind of web-based service for everything from email and enterprise applications to storage and data management. Regardless of the information overload, however, organizations of all types and sizes must embrace the age of computing without borders to increase competitive advantage and grow their business. Sounds great, but it isn’t easy. This on-demand Webcast, featuring the editors of TechRepublic and ZDNet.com, provides The Top 10 Considerations for Cloud Computing that will not only satisfy your need-to-know but will also help you make a more informed decision about the future of your business.

View the full On-Demand Webcast here

Ben Rockwood (@benr) posted Three Aspects of DevOps: What’s in a word to his Cuddletech blog on 6/24/2011:

Cloud. DevOps. Both are in the fad category, but both are very popular and everyone is grasping at what they really are. There is a subtle difference, however. “Cloud” is ambiguous, which leads to the never-ending line of questions such as “What is Cloud?” and, as the concept evolves, yet more such as “If cloud means in the cloud, then isn’t private cloud an oxymoron?” DevOps, on the other hand, seems deceptively intuitive. This has caused confusion in the ongoing conversation because different people mean different things.

I see it as 3 distinct definitions and I’m going to lay them out to help people start to refine their thinking. To facilitate this I’m going to take dev and ops as keywords and add an operator. The operator determines which methodology is adopted by the other camp.

Now let me go a step further and suggest that these are not simply aspects of devops, they are in fact the 3 phases of what is collectively the “devops transformation”.

Phase I: Dev > Ops

In this phase development methodology and mentality are adopted by operations. My estimate is that this represents about 90% of the devops movement. This is where the DevOps movement started and where most of its focus is today. Several things happen in this phase:

- IT groups and systems/network administrators re-realize themselves as “operations”. Let us not forget that this is a new concept to many people; they don’t think of themselves as “operations”, they think of themselves as IT. If you’re running a website this is a fairly natural fit, but for traditional IT groups this is an area of contention in and of itself. In the enterprise space you’re not “operating” a website, you’re “operating” a business.

- Agile is slowly adopted and adapted into operations. In many cases this means stripping agile down to its first principles and its Lean roots. It’s a matter of taking existing practices such as ITIL and marrying them with agile principles. This is slow and individually tailored to each company, as many folks have found that things like SCRUM don’t work for ops, but visual workflow and control of work in progress (meaning, the inappropriately named “Kanban”) do. Finding balance between Peter Drucker’s “doing things right and doing the right thing” takes time.

- Re-tooling for the virtualized world. I could say “cloud world” but that’s inaccurate, since the problems are the same if you have a large internal VMware deployment or an external AWS deployment. This is where most of the action has been in DevOps so far and what the DevOps Toolchain Project has been about. This is the draw, in particular, to configuration management (in the automation sense, not the ITIL one) and is helped along by the 3 companies really driving the publicity of DevOps, those being Puppet Labs, Opscode and DTO Solutions.

- Monitoring gets kicked up a gear. Just as virtualization causes you to re-evaluate your tools for configuration management and command-and-control (now being called “distributed orchestration”), your monitoring needs to step up to the new challenges as well. This is where you will challenge your existing monitoring system, expand its functionality and re-consider your logging and trending strategies. Maybe everything is up to snuff already, but with all the recent additions to the alerting/logging/trending category you’ll inevitably try some new things and get over your fear of using tools written in Ruby.

- etc.

Phase II: Dev < Ops

In this phase operations methodology and mentality are adopted by developers. My estimate is that this represents less than 10% of the devops movement. This phase generally represents the bonding of the two groups and is easily confused with Phase I. Things that happen in this phase are:

- Metrics everywhere. This is something championed by John Allspaw, the collection of metrics from everywhere. In Phase I you may have started collecting metrics but they were by Ops for Ops, however in this phase Dev is actually interested in the metrics and they are more business focused, so metrics aren’t just coming from the OS but are also coming from application code. This is where dashboards start to be created to facilitate the wide absorption of metrics.

- Continuous Integration is implemented or evaluated. Ops alone can’t implement CI, so inevitably cooperation forms around it.

- Cross-training of tools and practices. This is when developers take a genuine interest in day-to-day operations activities and challenges, and start aligning the toolsets between both groups.

- etc.

Phase III: Dev <> Ops

In this phase developers and operations unify, sharing responsibilities and practices. I think this is an underlying principle of the movement, and frankly is what DevOps really is about. This is the magical destination of your journey, a far country where Adam Jacob rides unicorns and children spend time with their OpsDads and everyone sings drinking songs together at the pub. The DevOps movement is so concentrated on Phases I and II that this is still an uncrystallized space, but it is what you are driving towards. The things that emerge in this phase are: