Windows Azure and Cloud Computing Posts for 3/21/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting Services

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

Steve Marx (@smarx) and Wade Wegner (@WadeWegner) produced a 00:43:28 Cloud Cover Episode 40 - ASP.NET MVC 3 with Table Storage video segment on 3/19/2011:

Join Wade and Steve each week as they cover the Windows Azure Platform. You can follow and interact with the show @CloudCoverShow.

In this episode, Steve and Wade talk through how to use Windows Azure Table Storage from an ASP.NET MVC 3 application. Steve shows us some tricks for using ASP.NET MVC 3 and AJAX, JQuery, Knockout, continuation tokens, and an efficient way to delete entities from tables.

Reviewing the news, Wade and Steve:

- Highlight the updates to the Windows Azure AppFabric production portal

- Discuss using Windows Azure CDN for your web applications

- Point out that LightSwitch Beta 2 can publish to Windows Azure

- Review Vittorio Bertocci's post on the enterprise edition of FabrikamShipping SaaS

- Remind everyone about Composite Applications talked about at PDC10

Note: Wade announced in a pair of 3/20 and 3/19/2011 Tweets:

Thanks you all for the kind words of congratulations! Mommy and baby are great, and we're home from the hospital.

Yippee, I've got a healthy baby boy! - http://twitpic.com/4b1yit.

He looks a lot like Wade, no?

Steve Marx (@smarx) explained how to create a Todo List App Using ASP.NET MVC 3 and Windows Azure Tables on 3/18/2011:

For this week’s episode of Cloud Cover, Wade and I talked about how to use Windows Azure Tables from .NET code, and we focused on using ASP.NET MVC 3 and doing paging over tables.

Rather than rehash the entire episode here, I’d like to point you to the episode itself (should go live Friday morning), the running app (http://smarxtodo.cloudapp.net), and the full source code (http://cdn.blog.smarx.com/files/smarxtodo_source.zip).

The one piece of code I’d like to highlight here is the code that performs paging. This is from ApiController.cs:

    [HttpPost]
    [ValidateInput(false)]
    public ActionResult Get(string token)
    {
        var account = CloudStorageAccount.Parse(
            RoleEnvironment.GetConfigurationSettingValue("DataConnectionString"));
        var ctx = account.CreateCloudTableClient().GetDataServiceContext();
        var query = ctx.CreateQuery<TaskEntity>("Tasks")
            .Where(t => t.PartitionKey == string.Empty).Take(5).AsTableServiceQuery();
        var continuation = token != null
            ? (ResultContinuation)new XmlSerializer(typeof(ResultContinuation))
                .Deserialize(new StringReader(token))
            : null;
        var response = query.EndExecuteSegmented(query.BeginExecuteSegmented(continuation, null, null));
        var sw = new StringWriter();
        new XmlSerializer(typeof(ResultContinuation)).Serialize(sw, response.ContinuationToken);
        return Json(new { tasks = response.Results, nextToken = sw.ToString(), hasMore = response.ContinuationToken != null });
    }

This code is invoked by an AJAX call from the client. The JSON data it returns includes nextToken, which references the continuation token, if any, returned by table storage. The format of the nextToken string is actually an XML-serialized version of the ResultContinuation object. I did that because ResultContinuation is a sealed class with no public constructor or properties. The only way to save it and reinstantiate it is to use XML serialization. The above code uses this serialized representation to pass the token down to the client, and then the client passes it back when it wants to retrieve the next page.

Check out the rest of the code to see how tasks are created, updated, and deleted. You may also be interested in the JavaScript code that manages the client-side view. This is the first time I’ve used Knockout.
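The token round trip described above (the server hands the client an opaque serialized token, and the client echoes it back to get the next page) can be sketched without the storage SDK. The Python sketch below is an illustration only: `FAKE_TABLE`, `get_page`, `fetch_all`, and the base64/JSON token format are assumptions made for this example. They are not part of Steve's app or of the Windows Azure storage client, which serializes `ResultContinuation` as XML.

```python
import base64
import json

# Hypothetical stand-in for the "Tasks" table: 12 rows in a single partition.
FAKE_TABLE = [{"RowKey": f"{i:04d}", "Title": f"task {i}"} for i in range(12)]
PAGE_SIZE = 5  # mirrors the Take(5) in the controller code


def encode_token(next_index):
    """Serialize the continuation state into an opaque string for the client."""
    raw = json.dumps({"next": next_index}).encode("utf-8")
    return base64.urlsafe_b64encode(raw).decode("ascii")


def decode_token(token):
    """Rebuild the continuation state from the string the client sent back."""
    return json.loads(base64.urlsafe_b64decode(token.encode("ascii")))["next"]


def get_page(token=None):
    """Return one page plus the token needed to fetch the following page."""
    start = decode_token(token) if token is not None else 0
    rows = FAKE_TABLE[start:start + PAGE_SIZE]
    end = start + len(rows)
    has_more = end < len(FAKE_TABLE)
    return {
        "tasks": rows,
        "nextToken": encode_token(end) if has_more else None,
        "hasMore": has_more,
    }


def fetch_all():
    """Client-side loop: keep passing the token back until hasMore is false."""
    pages, token = [], None
    while True:
        page = get_page(token)
        pages.append(page["tasks"])
        if not page["hasMore"]:
            return pages
        token = page["nextToken"]
```

The server keeps no per-client paging state in this design; everything needed to resume is carried in the token, which is why the token has to survive serialization.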

<Return to section navigation list>

SQL Azure Database and Reporting Services

Steve Yi asked Visual Studio LightSwitch Beta 2 – Where Have You Been All My Life? in a 3/18/2011 post to the SQL Azure Team blog:

Visual Studio LightSwitch Beta 2 was released earlier this week and is now available for public download. LightSwitch applications utilize Silverlight that can be run in the browser or on the desktop. Beta 2 introduced new capabilities to deploy to Windows Azure and SQL Azure so I decided I really needed to give it a try.

Never having used the tool before, after 2 hours I was really impressed. LightSwitch helped me design a simple database, create the user screens, implement basic field validation, and deploy it all to Windows Azure. Amazing. Next week I'll post some video of the app I created and the LightSwitch development experience.

It is really easy to use for writing and deploying CRUD (Create, Read, Update, Delete) data-driven applications to Windows Azure and SQL Azure and is best suited for departmental and business applications. For business analysts and non-professional developers this is a fantastic tool that requires extremely little knowledge of coding or .NET. In the sample app I created I only wrote one line of code. Used in conjunction with currently CTP SQL Azure services such as SQL Azure Reporting and SQL Azure Data Sync, using Visual Studio LightSwitch is going to make it incredibly easy to quickly write hybrid applications for departmental and small business scenarios.

You can read more details about LightSwitch in blog posts from Jason Zander and Somasegar. Below are more detailed notes of my experience using it, and screenshots of the app I created and of LightSwitch development itself. Enjoy!

So Easy a Caveman or Cavewoman Can Do It

As a quick test, I created an application that records a grocery list that can be accessed by my wife and me.

All within the LightSwitch experience:

- I designed the database visually (ok, only two tables)

- Created three screens for CRUD operations, adding stores, grocery items, and viewing grocery items by store

- I only ended up writing one line of .NET in the entire app to compute a calculated field

- Deployed the database schema to SQL Azure, and the application to Windows Azure in the final Publish step without having to edit any config files.

I did this start to finish in less than 2 hours without ever using the tool before or reading any tutorial documentation.

Screenshot of the LightSwitch application I deployed to Windows Azure and SQL Azure

Setup & Installation - A Lot Easier

Download and installation of LightSwitch Beta 2 was straightforward. No other tools required. No additional SDKs were needed for Azure deployment, nor any additional tools for connectivity to SQL Server or SQL Azure. This is a lot easier than configuring the development environment for a web/worker role deployment and installing SSMS (SQL Server Management Studio) for SQL Azure/Server development.

If you already have Visual Studio 2010 installed, LightSwitch becomes another project type within the VS experience.

Development - Different, But Easy

LightSwitch is all about writing data-driven applications. When authoring a LightSwitch app, you can connect to an existing database, SharePoint sites, or WCF RIA Services; the SQL Azure/Server experience is very clean and easy to use. Creating a greenfield application and designing the database is also incredibly easy and productive. It includes a friendly entity modeler that works against SQL Express, SQL Server, and SQL Azure, utilizing common data types like string, integer, and date, but also data types in 'human' terms, such as "Email" and "PhoneNumber". These are stored as nvarchar in the database, but LightSwitch validates them with regular expressions in the background to make sure those fields are entered correctly. The database schema that's created is completely readable, in 3rd normal form, and can be easily used by other developers writing against the database or writing reports for SQL Azure Reporting or on-premises SQL Server Reporting Services.
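The idea of a 'human' data type (a plain string in storage plus a validation rule applied on entry) can be illustrated with a short Python sketch. The patterns below are deliberately simple and hypothetical; the post does not show the expressions LightSwitch actually uses, and `SEMANTIC_TYPES` and `validate` are names invented for this example.

```python
import re

# Hypothetical, simplified patterns. They are NOT LightSwitch's real rules,
# which this post does not document.
SEMANTIC_TYPES = {
    "Email": re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+"),
    "PhoneNumber": re.compile(r"\+?[0-9][0-9\-\s().]{6,}"),
}


def validate(semantic_type, value):
    """Return True when the plain string matches the semantic type's pattern."""
    pattern = SEMANTIC_TYPES[semantic_type]
    return pattern.fullmatch(value) is not None
```

The value is still stored as ordinary text; only the entry-time check differs from one semantic type to another.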

LightSwitch table designer

The most interesting development paradigm in LightSwitch is that the screens are driven completely by the data. There are no traditional form designers for layout; after you select one of a few wizard types, LightSwitch creates the screens and data bindings automatically. Customization of fonts and layout is intentionally constrained for purposes of productivity and less complexity. I only went to code view once, to calculate subtotals for each grocery item based on quantity.

UX customization can be done at design time or run-time by an administrator via a Silverlight designer.

LightSwitch form designer

Deployment - Did I Say Easy a Lot Already? I'll Say It Again. Easy.

LightSwitch applications are Silverlight based, and can be deployed to the desktop, IIS, or Windows Azure - all configurable via radio buttons in the Publish Wizard. Provided a user already has an Azure account, it's a matter of cutting and pasting in subscription information for the Windows Azure account and connection string information for the SQL Azure database. Of course, you can also choose to deploy to an IIS server and a SQL Azure database.

This is straightforward, but it does require already having an account, having security certificates deployed, and provisioning an empty Windows Azure service and SQL Azure database. LightSwitch made this as easy as possible. After clicking 'Publish', it all deployed to Windows Azure in a matter of minutes and was very straightforward. I didn't have to open or edit one XML configuration file throughout the whole process.

Deploying the database schema to SQL Azure was completely seamless and transparent. It doesn't deploy data - just schema, but "it just works". This was very impressive - no need to create and run scripts to implement the schema.

LightSwitch Publish Application Wizard

Security & Authorization

For on-premises deployments, Windows and Forms authentication are available. For Windows Azure deployment, Forms Authentication is the only option. Forms Authentication utilizes the same authentication mechanism from ASP.NET and created seven "aspnet_x" tables in my SQL Azure database for storing roles and user information. Administration screens are included in the LightSwitch app, accessible to a user identified as the administrator when the app is published within LightSwitch.

User and Role Administration within a LightSwitch application

Summary

I was really amazed how productive I was using LightSwitch to write an app and deploy it. I encourage you to try it out. I'll be writing some follow-up posts showing how quickly you can get started using it with SQL Azure.

<Return to section navigation list>

Marketplace DataMarket and OData

My (@rogerjenn) SharePoint 2010 Lists’ OData Content Created by Access Services is Incompatible with ADO.NET Data Services post of 3/20/2011 describes a problem that conventional Visual Studio and LightSwitch applications have with some SharePoint 2010 data sources:

Introduction

Microsoft SharePoint has gained a substantial share of the market for content management and collaboration tools. Sales of SharePoint 2007 exceeded US$1 billion and Mary Jo Foley reported in her What Is Microsoft's Next Billion-Dollar Business? article of 9/1/2010 for Redmond Magazine that “SharePoint is well on its way to becoming Microsoft's first new $2 billion business.”

SharePoint 2010 is likely to become a data source for a wider range of Microsoft .NET, Windows Phone 7 and other OS client applications because it exposes list collections in OData format. My Access Web Databases on AccessHosting.com: What is OData and Why Should I Care? post of 3/16/2011 explains the significance of OData-formatted information and how to manipulate it with PowerPivot for Excel.

Microsoft Access 2010 has the capability to easily and quickly publish conventional *.accdb databases to Web Databases, which run under SharePoint 2010’s Access Services. Access Services stores relational tables in SharePoint lists, displays forms in SharePoint Web pages, and prints reports with the assistance of SQL Server Reporting Services.

Visual Studio LightSwitch Beta 2 is a rapid application delivery (RAD) framework for Silverlight Windows and Web database clients that targets user and developer populations similar to those of Access. LightSwitch accepts OData, SQL Server, and SQL Azure as data sources. PowerPivot for Excel runs on OData from SharePoint, and OData’s the only data format offered by Microsoft’s Windows Azure Marketplace DataMarket.

Windows Phone 7 has an OData software development kit (SDK), Android phones can process OData with the Java SDK, and there’s an OData SDK for the iPhone’s Objective-C language. (See J. C. Cimetiere’s OData interoperability with .NET, Java, PHP, iPhone and more post of 3/16/2011 to the Interoperability @ Microsoft blog.) OData is likely to become a leading format in the rapidly growing mobile data market.

Problem Discovery

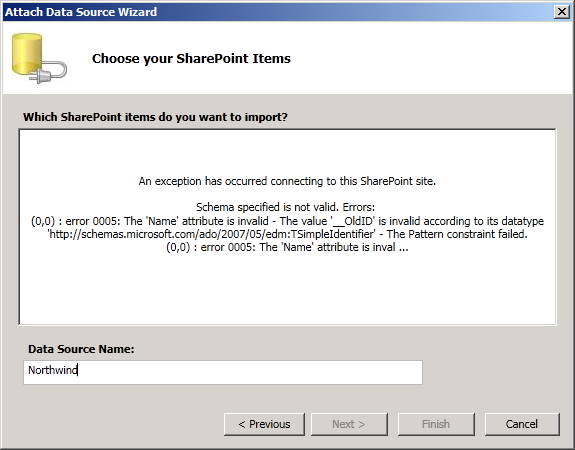

While running tests with SharePoint 2010’s OData producer as a data source for Visual Studio 2010 and LightSwitch Beta 2 client applications, I encountered the following error when attempting to create a Visual Studio LightSwitch Beta 2 Service Reference from an Access application published to SharePoint 2010:

1. I started a LightSwitch project in Visual Studio and selected SharePoint as the Data Source Type:

2. I clicked Next to Enter Connection Information for a SharePoint site created from an Access 2010 project:

The NorthwindTraders site was created by running the Northwind Traders Web Database template from Office Online to create an Access *.accdb database and then publishing it to Access Services in a hosted SharePoint 2010 instance at AccessHosting.com.

3. When I clicked Next, I received the following error message:

The problem relates to the OData client’s rejection of two leading underscores in the Name property of a column in all lists generated from Access tables. However, the Microsoft SQL Server Data Portability specification for Simple Identifier provides the following specification:

2.2.4 SimpleIdentifier

The SimpleIdentifier attribute specifies an identifier that conforms to the rules for the String data type as specified in [XMLSCHEMA2].

The following is the XML schema definition of the SimpleIdentifier attribute.

    <xs:simpleType name="TSimpleIdentifier">
      <xs:restriction base="xs:string"/>
    </xs:simpleType>

The XML Schema Character Range for Char is:

    Char ::= #x9 | #xA | #xD | [#x20-#xD7FF] | [#xE000-#xFFFD] | [#x10000-#x10FFFF]
    /* any Unicode character, excluding the surrogate blocks, FFFE, and FFFF. */

The preceding specifications don’t appear to me to specify a pattern that precludes two leading underscores.
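The claim can be checked mechanically. The following Python sketch tests a name against the Char production quoted above and nothing else; it models only the unrestricted xs:string base shown in the schema excerpt, not whatever additional pattern the OData client applies. The function names are invented for this example.

```python
# Ranges copied from the XML Char production quoted in the text.
XML_CHAR_RANGES = [
    (0x9, 0x9), (0xA, 0xA), (0xD, 0xD),
    (0x20, 0xD7FF), (0xE000, 0xFFFD), (0x10000, 0x10FFFF),
]


def is_xml_char(ch):
    """True when a single character falls inside one of the Char ranges."""
    code = ord(ch)
    return any(low <= code <= high for low, high in XML_CHAR_RANGES)


def is_valid_simple_identifier(name):
    """True when every character of name is allowed by the Char production.

    Only the xs:string base is modeled here; no extra naming pattern is applied.
    """
    return all(is_xml_char(ch) for ch in name)
```

Under this reading, `__OldID` is as acceptable as `OldID`, because the underscore (#x5F) lies inside the [#x20-#xD7FF] range.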

You can’t edit the column name of a list linked to Access in the SharePoint UI or with SharePoint Designer 2010. The field is hidden by default in Access Datasheet view. You can unhide the field, but Datasheet views of tables linked to SharePoint lists don’t have a Design mode:

To change the __OldID column name to OldID, you must disconnect the Access front-end from the Access Services version to enable modifying the list designs in the UI, edit 15 lists for this example, and then reconnect Office Access to Access Services. This is, to be generous, a daunting task.

After you perform the preceding tasks for the NorthwindTraders example, the Choose Your SharePoint Items dialog appears as expected:

Following is the corrected Product entity displayed in VS LightSwitch Beta 2:

So far, I haven’t found any side effects from removing the two underscores, other than the OldID column now appears by default in Access Datasheet view.

Tests with a simple VS 2010 C# Console application exhibit the same problem.

It’s interesting that PowerPivot for Excel doesn’t exhibit a similar problem. See the “Browsing OData-formatted Web Database Content in PowerPivot for Excel” section of my Access Web Databases on AccessHosting.com: What is OData and Why Should I Care? post.

Conclusion

The pattern applied to the TSimpleIdentifier Entity Data Model data type that precludes leading underscores should be removed in a later EDM v4.1 CTP because the rule is arbitrary. Changes to Access Services to eliminate the leading underscore and change the method for making columns with such names hidden by default in Access would need to wait for Office Service Pack 2.

Full Disclosure: I’m a contributing editor for Visual Studio Magazine, a sister publication to Redmond Magazine, both published by 1105 Media.

Bruno Terkaly described Azure DataMarket– Writing Code to Consume DataMarket Data on 3/19/2011:

Introduction to Windows Azure Marketplace DataMarket

This blog is based on a lab exercise in the Windows Azure Platform Training Kit.

The lab is called Introduction to Windows Azure Marketplace DataMarket.

I ran through the lab to see if I could get it to work. And it does work.

The basic first steps in the lab:

- Step 1 - Signing Up: Navigate to https://datamarket.azure.com/ and use your Windows Live ID to sign up.

- Step 2 - Fill in Account Details: Name, email address, etc. Agree to DataMarket privacy policies. Click the Continue button.

- Step 3 - Explore your account keys: The lab instructs you to go to My Account to get account keys. Your account key is used to access the data from client applications.

- Step 4 - Browsing the data sources: You can navigate the DataMarket to find the data that makes sense to you.

If you haven’t done so, download the free kit now: Windows Azure Platform Training Kit Download

Sign up to Gender Info

The lab has you sign up for Gender Information from the United Nations database. Note that not all data sources are free.

Once you sign up, you can begin to explore the data:

Note the query that is set up for you. You can modify the query as needed:

Query for Italy

Note the query now has been modified to include results for just Italy.

https://api.datamarket.azure.com/Data.ashx/UnitedNations/GenderInfo2007/Values?$filter=CountryName%20eq%20%27Italy%27&$top=100

Consuming the Data from Visual Studio

Some basic steps to consume data

At a high level, here are the changes we will need to make:

Here is the class we added:

Service Reference for UN Gender Data

https://api.datamarket.azure.com/Data.ashx/UnitedNations/GenderInfo2007/

Service Reference Name

UNData

Using Statements to add

using System.Net;

using ConsumingDataMarketData;

Intellisense is available – notice that as soon as we hit the period (.) we get information about our data source, making the coding less error-prone.

Conclusion

It is very easy to consume DataMarket data. It is in [AtomPub] format. Also notice that we did not need to worry about writing the OData query syntax by hand. Just execute the LINQ query and the tooling takes care of the rest.
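For readers curious about what the tooling produces, the Italy query shown earlier is an ordinary URL with percent-encoded OData query options. The Python sketch below rebuilds that URL by hand; `build_query_url` is a helper invented for this illustration, and only the `$filter` and `$top` options that appear in the post are handled.

```python
from urllib.parse import quote

# Service root taken from the query URL shown in the post.
SERVICE_ROOT = "https://api.datamarket.azure.com/Data.ashx/UnitedNations/GenderInfo2007"


def build_query_url(entity_set, filter_expr=None, top=None):
    """Assemble an OData query URL with optional $filter and $top options."""
    options = []
    if filter_expr is not None:
        # safe="" percent-encodes spaces (%20) and single quotes (%27).
        options.append("$filter=" + quote(filter_expr, safe=""))
    if top is not None:
        options.append("$top=" + str(int(top)))
    url = f"{SERVICE_ROOT}/{entity_set}"
    return url + ("?" + "&".join(options) if options else "")


italy_url = build_query_url("Values", "CountryName eq 'Italy'", top=100)
```

Actually fetching the feed also requires the account key from Step 3, which this sketch leaves out.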

John Gartner asserted in a 3/18/2011 Reuters news item that Microsoft Wants to Be the Google of Utility Data and reported that Ford Motor Company will use Azure Marketplace DataMarket for managing electric vehicle (EV) recharging:

When it comes to looking for the best prices for electricity to charge your plug-in vehicle, Microsoft wants to be at the top of the search results. The desktop and internet application company wants to be the data source for all things utility by creating a national database of tariff and rate plan information. The Microsoft Utility Rate Service (MURS) will be available via subscriptions to government agencies, power providers, auto makers, and EV charging equipment companies.

Microsoft's Director of Business Development Warren Dent, in a meeting with organizations involved in EV infrastructure in Portland, Oregon, explained that the company intends to have utility rate data available for 17 metropolitan areas, with all but three (Detroit, Denver, and Chicago) located in coastal states. It's not a coincidence that these areas closely align with Ford's electric vehicle launch schedule.

Ford is relying on Microsoft's service for its "value charging" program that will enable EV owners to program their vehicles to charge based on when utility rates are lowest.

When the service is complete, pricing data would be relayed from the utility to Microsoft to Ford's data operations to Ford vehicles. Customers would be able to program vehicle charging via the onboard computer or a mobile application.

This pathway is significant because it puts the controls into the vehicle itself and cuts out EV charging equipment companies, who are looking to develop their own services in partnership with smart grid service providers. Obviously, the vehicle needs to be plugged in to charge, but the vehicle would send the signal to the charging equipment to start or stop. Automakers will take a variety of approaches as to how much intelligence they will put in the vehicles.

Microsoft does not have any interest in interfacing directly with consumers. As with Ford, MURS subscribers will use their own branding to interact with the public, according to Dent.

Microsoft is partnering with one to three utilities in each region to get access to the data, including many of the largest in the country, such as Duke Energy, Xcel Energy, and Portland General Electric.

Microsoft wants to be a leader in providing services to utilities and their customers, and is leveraging the company's Azure Marketplace DataMarket database infrastructure. The managing of EVs is seen as a driving force for establishing a platform for what will be a huge opportunity in smart grid data services. As examples, Pike Research estimates that investment in information technology for managing electric vehicles will reach $371 million annually in 2015, while smart grid data analytics services revenue will top $1.1 billion.

Microsoft is creating its own standards for storing and sharing utility data in an industry where standardization hasn't been necessary since utilities in Texas have not needed to have software or communications equipment that speaks the same language as that in Oregon or Illinois. With this early investment and development work, Microsoft may be temporarily pulling ahead of companies such as IBM, Accenture, SAP, Oracle and yes, Google, which also want a large slice of the energy information services pie. But they are sure to have company before long.

John Gartner is a senior analyst at Pike Research and a co-founder of the Matter Network.

<Return to section navigation list>

Windows Azure AppFabric: Access Control, WIF and Service Bus

The Windows Azure AppFabric Team explained How to use the Windows Azure AppFabric Access Control service to federate identities in a Windows Azure ASP.NET Application in a 3/21/2011 post to the Windows Azure AppFabric Team blog:

Alik Levin, from the Access Control service content and documentation team, has written these great step-by-step instructions on how you can use the service for federated authentication using Windows Live ID and Google accounts in your Windows Azure ASP.NET application. [See post below.]

Read the step-by-step instructions, and find a number of great resources related to the Access Control service in these posts:

- Securing Windows Azure Distributed Application Using AppFabric Access Control Service (ACS) v2 – Scenario and Solution Approach

- Windows Azure Web Role ASP.NET Application Federated Authentication Using AppFabric Access Control Service (ACS) v2 – Part 1

- Windows Azure Web Role ASP.NET Application Federated Authentication Using AppFabric Access Control Service (ACS) v2 – Part 2

- Windows Azure Web Role ASP.NET Application Federated Authentication Using AppFabric Access Control Service (ACS) v2 – Part 3

- Live demo - https://wawithacsv2.cloudapp.net:8085/

The capabilities of the Access Control service mentioned in these instructions will be added to the production service soon. In the meantime, they are available for you to check out in the Community Technology Preview (CTP) service in our LABS/Previews environment.

In order to use the CTP service just sign up here: https://portal.appfabriclabs.com/

To use the production service you can sign up to our free trial offer, just click on the image below and get started!

Alik also includes a link to a live demo of his app at https://wawithacsv2.cloudapp.net:8085/. Disregard the Certificate Error message to open this sign-in page:

Click either the Windows Live ID or Google button and provide your credentials to display the default screen:

Alik Levin continued his series with Windows Azure Web Role ASP.NET Application Federated Authentication Using AppFabric Access Control Service (ACS) v2 – Part 3 on 3/20/2011:

Before proceeding with further reading, review the following previous posts:

- Securing Windows Azure Distributed Application Using AppFabric Access Control Service (ACS) v2 – Scenario and Solution Approach

- Windows Azure Web Role ASP.NET Application Federated Authentication Using AppFabric Access Control Service (ACS) v2 – Part 1

- Windows Azure Web Role ASP.NET Application Federated Authentication Using AppFabric Access Control Service (ACS) v2 – Part 2

In this post I will show how to publish your ASP.NET web application and how to configure it so that federated authentication with ACS v2.0 keeps working when it runs in the Windows Azure production environment.

Step 5 – Deploy your solution to Windows Azure

Content in this step is adapted from Code Quick Launch: Create and deploy an ASP.NET application in Windows Azure.

In this step you will deploy the application to the cloud using the Windows Azure management portal. First you’ll need to create a service package and a service configuration file, and then upload them to the Windows Azure environment using the Windows Azure management portal.

To create the service package and upload it to the Windows Azure environment

- In Visual Studio’s Solution Explorer, right-click the project name (the one that’s above Roles folder) and then click Publish….

- Within the Deploy Windows Azure project dialog, click Create Service Package Only, then click OK.

- Assuming successful compilation, Visual Studio will open a folder that contains two files created as part of the publish process. The files are ServiceConfiguration.cscfg (which we discussed earlier in this topic) and <YourProjectName>.cspkg (such as WindowsAzureProject1.cspkg). The .cspkg extension is for service package files. A package file contains the service definition as well as the binaries and other items for the application being deployed. Make a note of the path to these files, as you’ll be prompted for the path when you deploy the application using the Windows Azure management portal.

- Log in to the Windows Azure management portal at http://windows.azure.com to deploy the application.

- Within the Windows Azure management portal, click Hosted Services, Storage Accounts & CDN.

- Click New Hosted Service.

- Select a subscription that will be used for this application.

- Enter a name for your application. This name is used to distinguish your services within the Windows Azure management portal for the specified subscription.

- Enter the URL for your application. The Windows Azure management portal ensures that the URL is unique within the Windows Azure platform (not in use by anyone else’s applications). Note: this is the URL that must then be entered both in the ACS v2.0 management portal, as the relying party’s realm and return URL, and in the application’s web.config, as the audienceUri and realm.

- Choose a region from the list of regions.

- Choose Deploy to stage environment.

- Ensure that Start after successful deployment is checked.

- Specify a name for the deployment.

- For Package location, click the corresponding Browse Locally… button, navigate to the folder where your <YourProjectName>.cspkg file is, and select the file.

- For Configuration file, click the corresponding Browse Locally… button, navigate to the folder where your ServiceConfiguration.cscfg is, and select the file.

- Click the Add Certificate button to add the certificate to be deployed. First, though, you need to export the certificate to a file by following these steps:

  - Open the MMC console by clicking the Windows button in the taskbar and typing mmc. You should see mmc.exe appear in the search results. Click it.

  - When the MMC console appears, click File and then Add/Remove Snap-in….

  - In the Add or Remove Snap-ins dialog box, choose Certificates from the available snap-ins list and click the Add> button.

  - Choose the Computer Account option and click the Finish button.

  - On the Select Computer wizard page, select Local Computer (the computer this console is running on) and click the Finish button. Then click the OK button.

  - Expand the Console Root folder.

  - Expand the Certificates (Local Computer) folder.

  - Expand the Personal folder.

  - Click the Certificates folder to list the available certificates.

  - Locate your certificate in the list.

  - Right-click the certificate and choose All Tasks and then the Export… option.

  - Click Next on the welcome page of the wizard.

  - On the Export Private Key page, choose the Yes, export the private key option and click the Next button.

  - On the Export File Format page, leave the default option, which is Personal Information Exchange - PKCS #12 (.PFX), and click the Next button.

  - On the Password page of the wizard, specify a password. You will need it when uploading the certificate to the Windows Azure environment via the management portal.

  - On the File to Export page of the wizard, specify the destination file and click the Next button. Make a note of where you are saving the file. Because the exported certificate contains the private key, take extra care not to expose it to the public. It’s best to delete the file altogether after uploading it to the Windows Azure environment.

  - Click Finish to complete the wizard. You should be presented with The export was successful message; click the OK button to dismiss it.

- Switch back to the Windows Azure management portal, where you opened a dialog box to locate your certificate (.PFX) file, and select the certificate file you have just exported.

- Specify the password for your certificate in the Certificate Password field.

- Click OK. You will receive a warning after you click OK because there is only one instance of the web role defined for your application (this setting is contained in the ServiceConfiguration.cscfg file). For purposes of this walk-through, override the warning by clicking Yes, but realize that you likely will want more than one instance of a web role for a robust application.

You can monitor the status of the deployment in the Windows Azure management portal by navigating to the Hosted Services section. Because this was a deployment to a staging environment, the DNS will be of the form http://<guid>.cloudapp.net. You can see the DNS name if you click the deployment name in the Windows Azure management portal (you may need to expand the Hosted Service node to see the deployment name); the DNS name is in the right hand pane of the portal. Once your deployment has a status of Ready (as indicated by the Windows Azure management portal), you can enter the DNS name in your browser (or click it from the Windows Azure management portal) to see that your application is deployed to the cloud.

Although this walk-through was for a deployment to the staging environment, a deployment to production follows the same steps, except you pick the production environment instead of staging. A deployment to production results in a DNS name based on the URL of your choice, instead of a GUID as used for staging.

If this is your first exposure to the Windows Azure management portal, take some time to familiarize yourself with its functionality. For example, similar to the way you deployed your application, the portal provides functionality for stopping, starting, deleting, or upgrading a deployment.

Important

Assuming no issues were encountered, at this point you have deployed your Windows Azure application to the cloud. However, before proceeding, realize that a deployed application, even if it is not running, will continue to accrue billable time for your subscription. Therefore, it is extremely important that you delete unwanted deployments from your Windows Azure subscription. To delete the deployment, use the Windows Azure management portal to first stop your deployment, and then delete your deployment. These steps take place within the Hosted Services section of the Windows Azure management portal: Navigate to your deployment, select it, and then click the Stop icon. After it is stopped, delete it by clicking the Delete icon. If you do not delete the deployment, billable charges will continue to accrue for your deployment, even if it is stopped.

Publish to production by clicking your deployment node so that the Swap VIP ribbon appears.

Click the Swap VIP ribbon and then the OK button. The deployment to production should take a couple of minutes.

In the next procedure you will update the package and the ACS v2.0 configuration to reflect the address change from the staging environment to production.

To update the package and ACS v2.0 configuration to reflect the production environment

- Switch back to your solution in Visual Studio.

- Open web.config and change audienceUri and realm to reflect the change from the staging to the production environment.

- In Solution Explorer, right-click the role (right above the Roles folder) and click the Publish option to republish the package with the changes in web.config. You cannot update just web.config; you need to create a new package.

- Switch to Windows Azure management portal.

- Click the role so that the Upgrade ribbon appears in the toolbar, click the ribbon, and provide the paths to the newly created package and configuration files in the Package Location: and Configuration File: fields respectively.

- Click the OK button. The update should take several minutes.

- Go to the ACS v2.0 portal and update the realm and return URL of the relying party to reflect the change from staging to production. These values should match the audienceUris and realm values in web.config.

The next procedure helps you verify that your application is functional when running in the Windows Azure environment.
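The consistency requirement in the last step (the relying party's realm in ACS must match the audienceUris and realm values in web.config) can be checked mechanically. The following is only a minimal sketch in Python, not part of the original walk-through: the web.config fragment, the host names and the helper name relying_party_mismatches are hypothetical placeholders, and the element layout assumes the usual WIF microsoft.identityModel section.

```python
import xml.etree.ElementTree as ET

# Hypothetical web.config fragment; the URLs are placeholders, not the author's values.
SAMPLE_WEB_CONFIG = """<configuration>
  <microsoft.identityModel>
    <service>
      <audienceUris>
        <add value="https://myapp.cloudapp.net:8085/" />
      </audienceUris>
      <federatedAuthentication>
        <wsFederation passiveRedirectEnabled="true"
                      issuer="https://mynamespace.accesscontrol.windows.net/v2/wsfederation"
                      realm="https://myapp.cloudapp.net:8085/"
                      requireHttps="true" />
      </federatedAuthentication>
    </service>
  </microsoft.identityModel>
</configuration>"""


def relying_party_mismatches(web_config_xml, acs_realm):
    """Return human-readable mismatches between web.config and the realm set in ACS."""
    root = ET.fromstring(web_config_xml)
    problems = []

    # Collect every <add value="..."/> listed under <audienceUris>.
    audience_uris = [
        add.get("value")
        for uris in root.iter("audienceUris")
        for add in uris.findall("add")
    ]
    if acs_realm not in audience_uris:
        problems.append("audienceUris does not contain " + acs_realm)

    # The wsFederation realm attribute must carry the same value.
    for ws_federation in root.iter("wsFederation"):
        if ws_federation.get("realm") != acs_realm:
            problems.append("wsFederation realm is " + str(ws_federation.get("realm")))

    return problems


if __name__ == "__main__":
    print(relying_party_mismatches(SAMPLE_WEB_CONFIG, "https://myapp.cloudapp.net:8085/"))
```

An empty list means the two sides agree; running it with the old staging address would report both the audienceUris entry and the realm attribute as stale.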

To verify the application is functional and running in Windows Azure environment

- Navigate to your production URL of your application. Make sure that you use SSL (HTTPS) and use the right port you configured in the package.

- You should be redirected to the Home Realm Discovery (HRD) page presenting you with two options – Windows Live ID and Google.

- Choose one of them to authenticate yourself.

- Upon successful authentication you will be redirected to your Default.aspx page.

My live demo is here https://wawithacsv2.cloudapp.net:8085/.

Related materials

- Windows Azure AppFabric Access Control Service (ACS) v2 As A Cloud Single Sign On (SSO) Service – Scenarios and Solution Approaches

- Windows Identity Foundation (WIF) and Azure AppFabric Access Control (ACS) Service Survival Guide

- Video: What’s Windows Azure AppFabric Access Control Service (ACS) v2?

- Video: What Windows Azure AppFabric Access Control Service (ACS) v2 Can Do For Me?

- Video: Windows Azure AppFabric Access Control Service (ACS) v2 Key Components and Architecture

- Video: Windows Azure AppFabric Access Control Service (ACS) v2 Prerequisites

- Windows Azure AppFabric Access Control Service 2.0 Documentation

- Windows Identity Foundation (WIF) Fast Track

- Windows Identity Foundation (WIF) Code Samples

- Windows Identity Foundation (WIF) SDK Help Overhaul

- Windows Identity Foundation (WIF) Questions & Answers

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

Jason Sherron posted Best Practices for the Windows Azure Content Delivery Network to the Windows Azure blog on 3/18/2011:

Excerpted from: http://msdn.microsoft.com/en-us/library/gg680299.aspx

How can I use web.config to manage the caching behavior in hosted-object CDN delivery?

The Windows Azure Content Delivery Network (CDN) honors the cache control headers provided by the hosted service. If the content is provided by a web role, this is controlled by IIS. Cache control headers for static content can be customized by changing the <clientCache> element of the <staticContent> element in the web configuration.

For example:

The following configuration sample adds an HTTP "Expires: Tue, 19 Jan 2038 03:14:07 GMT" header to the response, which configures requests to expire years from now.

<configuration>
  <system.webServer>
    <staticContent>
      <clientCache cacheControlMode="UseExpires" httpExpires="Tue, 19 Jan 2038 03:14:07 GMT" />
    </staticContent>
  </system.webServer>
</configuration>

For ASP.NET pages, the default behavior is to set cache control to private. In this case, the Windows Azure CDN will not cache this content. To override this behavior, use the Response object to change the default cache control settings.

For example:

Response.Cache.SetCacheability(HttpCacheability.Public);

Response.Cache.SetExpires(DateTime.Now.AddYears(1));
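To make the effect of those two calls concrete, here is a small language-neutral sketch in Python of the response headers they are meant to produce: a public Cache-Control value and an Expires date one year out. The function name public_cache_headers is hypothetical, one year is approximated as 365 days, and the exact header set emitted by ASP.NET may differ.

```python
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime


def public_cache_headers(now, lifetime=timedelta(days=365)):
    """Build publicly cacheable headers with an Expires date `lifetime` after `now`.

    `now` is expected to be a timezone-aware datetime.
    """
    expires = now.astimezone(timezone.utc) + lifetime
    return {
        "Cache-Control": "public",
        # HTTP dates are always expressed in GMT.
        "Expires": format_datetime(expires, usegmt=True),
    }


if __name__ == "__main__":
    print(public_cache_headers(datetime.now(timezone.utc)))
```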

Settings contained in the root location of your origin will be inherited to all subfolders. Note that you can also place a web.config file in any sub-directory within origin to control individual configuration settings appropriate to the given sub-directory tree.

You should only use a web.config file to add MIME types that are not IIS defaults. If you use a web.config and redefine a standard MIME type, IIS could return a 500 (see: http://blogs.msdn.com/b/chaun/archive/2009/12/04/iis7-error-cannot-add-duplicate-collection-entry-of-type-mimemap-with-unique-key-attribute-fileextension.aspx). As a best practice, you generally only need to set a MIME type if you try to deliver a new file type and are receiving a 404. That is, test any new file types for delivery and, if required, add a MIME type before publishing any links.

Are there any best practices for managing different CDN environments in my Windows Azure project?

For example, if you want to have a separate CDN endpoint configured for a test environment vs. production in the same project, we recommend putting the different domains in a configuration setting (ServiceConfiguration.cscfg), since those values can be changed at runtime. However web.config is also an acceptable repository.

What's the best way to ensure that my content is fresh?

Setting good cache expiration headers is the simplest way to ensure freshness, control costs, and give your clients the best performance.

See http://msdn.microsoft.com/en-us/library/gg680305.aspx

Can I use different folder names in the Windows Azure CDN?

Yes, absolutely. The Windows Azure CDN simply reflects the URL structure to your source content; you can nest it as deeply as you need.

For hosted-service object delivery as of SDK 1.4, you are restricted to publishing under the /cdn folder root on your service.

How are query string parameters used in caching?

For hosted-service object delivery, you can toggle this behavior in the Windows Azure Developer Portal when you enable CDN on a hosted service. Enabled means that the entire query string will be used as part of the object's cache key; that is, objects with the same root URL path but with different query strings will be cached as separate objects. "Disabled" means that different query string values are ignored and only the root URL path is used as cache key so different query strings are treated as the same object and only cached once.

In blob storage origin, query strings are always ignored.
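The difference between the two settings is easiest to see as a cache-key computation. The sketch below is only an illustration of the behavior described above, not the CDN's actual key derivation (which is not documented here); the function name cdn_cache_key and the host name are hypothetical.

```python
from urllib.parse import urlsplit


def cdn_cache_key(url, query_string_enabled):
    """Return an illustrative cache key for `url`.

    With query strings enabled the full query is part of the key; when
    disabled (or for a blob storage origin) only host and path are used.
    """
    parts = urlsplit(url)
    key = parts.netloc.lower() + parts.path
    if query_string_enabled and parts.query:
        key += "?" + parts.query
    return key


if __name__ == "__main__":
    first = "http://cdn.example.com/cdn/logo.png?v=1"
    second = "http://cdn.example.com/cdn/logo.png?v=2"
    # Enabled: two distinct cached objects. Disabled: a single shared object.
    print(cdn_cache_key(first, True), cdn_cache_key(second, True))
    print(cdn_cache_key(first, False), cdn_cache_key(second, False))
```

One consequence: with the setting disabled, appending a version parameter to a URL does not force a fresh copy, because both URLs map to the same key.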

What are examples of how I could use HTTPS delivery?

HTTPS delivery on the Windows Azure CDN is used to deliver publicly available objects that must be contained within a secure session. For example, a shopping cart may use many "chrome" objects including graphics, buttons, static scripts and other reusable elements; in a secured shopping-cart page flow, these objects must be delivered via HTTPS to avoid browser warnings.

How does the Windows Azure CDN do replication?

There is no replication. File load in the Windows Azure CDN is "pull". Files are uploaded to a single location (e.g. the origin) and pulled into cache nodes based upon requests from clients.

What nodes of the Windows Azure CDN is my service deployed to?

When you deploy to the Windows Azure CDN, you are automatically "deployed" to all of our locations, and you are added to each additional location as it comes on line. You do not have to do anything to start utilizing a "new" CDN node. The Windows Azure CDN employs anycast to route the end user to the closest node.

What are best practices for byte range requests in the Windows Azure CDN?

Do not put your signatures/table-of-contents/catalog at the end of a large file and then use a byte-range GET to retrieve it. This will cause sub-optimal performance. A signature file should be placed in the beginning of a file, or ideally in a separate file.

How does the Windows Azure CDN work with compressed content?

The Windows Azure CDN will not modify (or add) compression to your objects. The Windows Azure CDN respects whatever compression is provided by the origin based on the "Accept-Encoding" header. As of 1.4, Azure Storage does not support compression. If you are using hosted-service object delivery, you can configure IIS to return compressed objects.

How can I purge or invalidate content in the Windows Azure CDN?

As of 1.4, no purge function is available. This feature is under development. The best freshness control is to set good cache expiration headers as described in this document and the Windows Azure CDN documentation on MSDN.

Example Problem Report: "The CDN isn't getting fresh objects," or, "The CDN seems to be caching objects for 72 hours"

You're either not emitting a Cache-Control header or emitting a value that is meaningless to our CDN, which therefore ignores it and caches the object for up to 72 hours.

Cache-control headers should be "public" and have a value greater than 300, or the CDN will ignore it. If you're intentionally emitting a Cache-Control value < 300 then that content arguably shouldn't be on the CDN in the first place.

Please adjust your origin expirations to better values, as described here: http://msdn.microsoft.com/en-us/library/ff919705.aspx
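The rule above can be turned into a quick pre-flight check on your origin's headers. This is a rough sketch only: it assumes the "value greater than 300" refers to the max-age directive in seconds, which the text does not state explicitly, and the function name cdn_honors_cache_control is hypothetical.

```python
def cdn_honors_cache_control(header_value, minimum_seconds=300):
    """Return True if the header is public and its max-age exceeds the minimum.

    Assumption: the threshold applies to max-age, expressed in seconds.
    """
    if not header_value:
        return False  # no header at all: the "72 hours" case

    directives = [directive.strip().lower() for directive in header_value.split(",")]
    if "public" not in directives:
        return False

    for directive in directives:
        name, _, value = directive.partition("=")
        if name.strip() == "max-age" and value.strip().isdigit():
            return int(value) > minimum_seconds
    return False


if __name__ == "__main__":
    for candidate in ("public, max-age=3600", "public, max-age=60", "private, max-age=3600", None):
        print(candidate, "->", cdn_honors_cache_control(candidate))
```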

How often does the CDN refresh objects from origin?

It depends on the object's cache-control headers (or lack thereof), object popularity, parent cacheability and edge utilization. It will hit origin at least once per node per freshness window, and follow up with If-Modified-Since requests upon refresh.

If no Cache-Control header is set, please see the "72 hours" question above.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Maarten Balliauw (@maartenballiauw) answered Windows Azure and scaling: how? (.NET) on 3/21/2011:

One of the key ideas behind cloud computing is the concept of scaling. Talking to customers and cloud enthusiasts, I find that many people seem to be unaware of the fact that there is great opportunity in scaling, even for small applications. In this blog post series, I will talk about the following:

- Put your cloud on a diet (or: Windows Azure and scaling: why?)

- Windows Azure and scaling: how? (.NET) – the post you are currently reading

- Windows Azure and scaling: how? (PHP)

Creating and uploading a management certificate

To scale your Windows Azure service programmatically (and thus possibly automatically), there is one prerequisite: a management certificate must be created and uploaded to Windows Azure through the management portal at http://windows.azure.com. Creating a certificate is easy: follow the instructions listed on MSDN. It’s as easy as opening a Visual Studio command prompt and issuing the following command:

makecert -sky exchange -r -n "CN=<CertificateName>" -pe -a sha1 -len 2048 -ss My "<CertificateName>.cer"

Too anxious to try this out? Download my certificate files (management.pfx (4.05 kb) and management.cer (1.18 kb)) and feel free to use it (password: phpazure). Beware that it’s not safe to use in production as I just shared this with the world (and you may be sharing your Windows Azure subscription with the world :-)).

Uploading the certificate through the management portal can be done under Hosted Services > Management Certificates.

Building a small command-line scaling tool

In order to be able to scale automatically, let’s build a small command-line tool. The idea is that you will be able to run the following command on a console to scale to 4 instances:

AutoScale.exe "management.cer" "subscription-id0" "service-name" "role-name" "production" 4

Or down to 2 instances:

AutoScale.exe "management.cer" "subscription-id0" "service-name" "role-name" "production" 2

Now let’s get started. First of all, we’ll be needing the Windows Azure service management client API SDK. Since there is no official SDK, you can download a sample at http://archive.msdn.microsoft.com/azurecmdlets. Open the solution, compile it and head for the /bin folder: we’re interested in Microsoft.Samples.WindowsAzure.ServiceManagement.dll.

Next, create a new Console Application in Visual Studio and add a reference to the above assembly. The code for Program.cs will start with the following:

class Program
{
    private const string ServiceEndpoint = "https://management.core.windows.net";

    private static Binding WebHttpBinding()
    {
        var binding = new WebHttpBinding(WebHttpSecurityMode.Transport);
        binding.Security.Transport.ClientCredentialType = HttpClientCredentialType.Certificate;
        binding.ReaderQuotas.MaxStringContentLength = 67108864;

        return binding;
    }

    static void Main(string[] args)
    {
    }
}

This constant and WebHttpBinding() method will be used by the Service Management client to connect to your Windows Azure subscription’s management API endpoint. The WebHttpBinding() creates a new WCF binding that is configured to use a certificate as the client credential. Just the way Windows Azure likes it.

I’ll skip the command-line parameter parsing. Next interesting thing is the location where a new management client is created:

var managementClient = Microsoft.Samples.WindowsAzure.ServiceManagement.ServiceManagementHelper.CreateServiceManagementChannel(
    WebHttpBinding(), new Uri(ServiceEndpoint), new X509Certificate2(certificateFile));

Afterwards, the deployment details are retrieved. The deployment’s configuration is in there (base64-encoded), so the only thing to do is read that into an XDocument, update the number of instances and store it back:

var deployment = managementClient.GetDeploymentBySlot(subscriptionId, serviceName, slot);
string configurationXml = ServiceManagementHelper.DecodeFromBase64String(deployment.Configuration);

var serviceConfiguration = XDocument.Parse(configurationXml);

serviceConfiguration
    .Descendants()
    .Single(d => d.Name.LocalName == "Role" && d.Attributes().Single(a => a.Name.LocalName == "name").Value == roleName)
    .Elements()
    .Single(e => e.Name.LocalName == "Instances")
    .Attributes()
    .Single(a => a.Name.LocalName == "count").Value = instanceCount;

var changeConfigurationInput = new ChangeConfigurationInput();
changeConfigurationInput.Configuration = ServiceManagementHelper.EncodeToBase64String(serviceConfiguration.ToString(SaveOptions.DisableFormatting));

managementClient.ChangeConfigurationBySlot(subscriptionId, serviceName, slot, changeConfigurationInput);
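For readers who want to see the configuration edit in isolation from the management API calls, the same decode, update and re-encode step can be sketched in a few lines of Python. This is an illustration only, not the author's code: the helper name set_instance_count is hypothetical, and the sketch assumes the standard ServiceConfiguration XML namespace and a UTF-8 payload.

```python
import base64
import xml.etree.ElementTree as ET

# Assumed namespace of ServiceConfiguration.cscfg files.
CONFIG_NS = "http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"


def local_name(tag):
    """Strip the '{namespace}' prefix that ElementTree puts in front of tag names."""
    return tag.rsplit("}", 1)[-1]


def set_instance_count(encoded_config, role_name, instance_count):
    """Decode a base64 service configuration, set one role's instance count, re-encode it."""
    ET.register_namespace("", CONFIG_NS)  # keep the default namespace on output
    root = ET.fromstring(base64.b64decode(encoded_config))

    roles = [
        element for element in root.iter()
        if local_name(element.tag) == "Role" and element.get("name") == role_name
    ]
    if len(roles) != 1:
        raise ValueError("expected exactly one role named " + role_name)

    instances = [child for child in roles[0] if local_name(child.tag) == "Instances"]
    if len(instances) != 1:
        raise ValueError("role has no single Instances element")

    instances[0].set("count", str(instance_count))
    return base64.b64encode(ET.tostring(root, encoding="utf-8")).decode("ascii")
```

As in the C# version, elements are matched on their local names so the code does not depend on a particular namespace prefix, and a missing or duplicated role fails loudly instead of silently changing nothing.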

Here’s the complete Program.cs code:

using System;
using System.Linq;
using System.Security.Cryptography.X509Certificates;
using System.ServiceModel;
using System.ServiceModel.Channels;
using System.Xml.Linq;
using Microsoft.Samples.WindowsAzure.ServiceManagement;

namespace AutoScale
{
    class Program
    {
        private const string ServiceEndpoint = "https://management.core.windows.net";

        private static Binding WebHttpBinding()
        {
            var binding = new WebHttpBinding(WebHttpSecurityMode.Transport);
            binding.Security.Transport.ClientCredentialType = HttpClientCredentialType.Certificate;
            binding.ReaderQuotas.MaxStringContentLength = 67108864;

            return binding;
        }

        static void Main(string[] args)
        {
            // Some commercial info :-)
            Console.WriteLine("AutoScale - (c) 2011 Maarten Balliauw");
            Console.WriteLine("");

            // Quick-and-dirty argument check
            if (args.Length != 6)
            {
                Console.WriteLine("Usage:");
                Console.WriteLine(" AutoScale.exe <certificatefile> <subscriptionid> <servicename> <rolename> <slot> <instancecount>");
                Console.WriteLine("");
                Console.WriteLine("Example:");
                Console.WriteLine(" AutoScale.exe mycert.cer 39f53bb4-752f-4b2c-a873-5ed94df029e2 bing Bing.Web production 20");
                return;
            }

            // Save arguments to variables
            var certificateFile = args[0];
            var subscriptionId = args[1];
            var serviceName = args[2];
            var roleName = args[3];
            var slot = args[4];
            var instanceCount = args[5];

            // Do the magic
            var managementClient = Microsoft.Samples.WindowsAzure.ServiceManagement.ServiceManagementHelper.CreateServiceManagementChannel(
                WebHttpBinding(), new Uri(ServiceEndpoint), new X509Certificate2(certificateFile));

            Console.WriteLine("Retrieving current configuration...");

            var deployment = managementClient.GetDeploymentBySlot(subscriptionId, serviceName, slot);
            string configurationXml = ServiceManagementHelper.DecodeFromBase64String(deployment.Configuration);

            Console.WriteLine("Updating configuration value...");

            var serviceConfiguration = XDocument.Parse(configurationXml);

            serviceConfiguration
                .Descendants()
                .Single(d => d.Name.LocalName == "Role" && d.Attributes().Single(a => a.Name.LocalName == "name").Value == roleName)
                .Elements()
                .Single(e => e.Name.LocalName == "Instances")
                .Attributes()
                .Single(a => a.Name.LocalName == "count").Value = instanceCount;

            var changeConfigurationInput = new ChangeConfigurationInput();
            changeConfigurationInput.Configuration = ServiceManagementHelper.EncodeToBase64String(serviceConfiguration.ToString(SaveOptions.DisableFormatting));

            Console.WriteLine("Uploading new configuration...");

            managementClient.ChangeConfigurationBySlot(subscriptionId, serviceName, slot, changeConfigurationInput);

            Console.WriteLine("Finished.");
        }
    }
}

Now schedule this (when needed) and enjoy the benefits of scaling your Windows Azure service.

So you’re lazy? Here’s my sample project (AutoScale.zip (26.31 kb)) and the certificates used (management.pfx (4.05 kb) and management.cer (1.18 kb)).

De Standaard of Belgium published a story about a problem Windows Azure could solve (translated from Dutch by Google): Belgian ticket sales for Olympics London resume April 26 on 3/21/2011:

I’m sure Maarten Balliauw (@maartenballiauw) at Real Dolmen is following this issue closely.

Here’s the Microsoft Translator version, which isn’t much more colloquial:

Johannes Kebeck described on 3/20/2011 creating Dynamic Tile-Layers with Windows Azure and SQL Azure for determining Japanese road status after the earthquake and tsunami:

Introduction

Recently I had the honour to support our friends and colleagues in Japan in the development of a service that shows the status of roads following the tragic earthquake and the even more disastrous tsunami. Setting up this service in the cloud took only a few hours, and in the following I will walk through the components of this solution and the thoughts that led to this particular implementation path.

You will find the sample [Windows Azure] website here.

I have also created a Bing Map App here.

Components and Reasoning to Choose this Implementation Path

Bing Maps is a cloud-based web mapping service that guarantees high availability and scalability. It is accessible through a consumer site and through a set of APIs which include SOAP and REST web services as well as more interactive AJAX and Silverlight Controls. The AJAX and the Silverlight controls allow you to overlay your own data in vector format or rasterized into tile-layers. Since version 7 the AJAX control is not only supported on the PC and Mac but also on the iPhone, Android and Blackberry browsers. You will find a list of supported browsers here.

In order to remain independent from browser plugins and also to support multiple platforms we settled for the Bing Maps AJAX Control version 7. When you have a large amount of vector data it might be advisable to rasterize these data into tile layers before overlaying them on your web mapping solution. In this case the source data (provided by Honda) was available as a KMZ-file. The uncompressed size of this data is 40 MB and even compressed it is still 8.7 MB. Downloading such an amount of data would take a while. Particularly if you also intend to support mobile devices it might not be the best option. In addition you have to consider the number of objects that you intend to render. In this example the data contained 53,566 polylines and rendering would take a while, deteriorating the user experience even further.

Therefore we decided to rasterize the data and overlay them as a tile layer.

After the decision was made to rasterize the data and visualize them as a tile layer on Bing Maps, the question was whether to create a static tile layer or rasterize the data on the fly. In this example the data covers 75,628 road-kilometres and an area of 156,624 square kilometres. Creating a complete tile-set down to level 19 would result in 61,875,766 tiles. Rendering all these tiles would take many hours and daily updates would not have been practical. On the other hand, it is not likely that all these tiles will be needed. There might be regions that people wouldn’t view at every zoom level. In fact it turned out that only a few thousand different tiles are being retrieved every day.
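The order of magnitude of that tile count can be checked with a back-of-the-envelope calculation. The sketch below is an estimate under stated assumptions, not the author's method: it uses the published Bing Maps ground-resolution formula, treats the covered area as if it lay entirely at 38° N (a latitude chosen here for illustration), and divides the area by the footprint of one 256-pixel tile at each level. It will therefore not reproduce the exact figure quoted above.

```python
import math

EARTH_RADIUS_M = 6378137.0  # radius used by the Bing Maps tile system
TILE_SIZE_PX = 256


def ground_resolution(latitude_deg, level):
    """Metres per pixel at the given latitude and zoom level."""
    circumference = 2 * math.pi * EARTH_RADIUS_M
    return math.cos(math.radians(latitude_deg)) * circumference / (TILE_SIZE_PX * 2 ** level)


def estimated_tiles(area_km2, latitude_deg, max_level):
    """Rough number of tiles needed to cover `area_km2` on levels 1..max_level."""
    total = 0
    for level in range(1, max_level + 1):
        tile_side_m = ground_resolution(latitude_deg, level) * TILE_SIZE_PX
        total += math.ceil(area_km2 * 1e6 / tile_side_m ** 2)
    return total


if __name__ == "__main__":
    # 156,624 km2 is the area quoted in the text; 38 degrees north is an assumption.
    print(estimated_tiles(156624, 38.0, 19))
```

Because each additional zoom level quadruples the number of tiles, the deepest level alone accounts for roughly three quarters of the total, which is why pre-rendering down to level 19 is so expensive.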

Therefore we decided to set up a solution that creates the tiles on demand and implement a tile-cache in order to enhance performance.

When we started the project we had no idea what traffic we had to expect. Therefore we wanted to be able to scale the solution up and down depending on the traffic. We also wanted to make sure that data and services are held close to the end-users in order to keep latency times low.

Therefore we chose to deploy the solution on Windows Azure and enable the Content Delivery Network (CDN) for the tile cache. Vector data will be stored as spatial data types in SQL Azure.

The last ingredient was the Spatial ETL tool that allows us to load the KMZ-file into SQL Azure and we chose Safe FME for this task. Safe supports hundreds of spatial data formats – including KML/KMZ and SQL Server Spatial data formats.

On a high level the components of the proposed solution look like this.

The solution is accessible as a Map App from the Bing Maps consumer website as well as a “regular” website from PC, Mac and mobile devices.

Loading the Data into SQL Azure

As mentioned above we use Safe FME to load the data into SQL Azure. We only need an OGCKML-reader and the MSSQL_SPATIAL writer.

At the reader we expose the kml_style_url Format Attribute because this holds the information that allows us to distinguish between road segments that were open to traffic and those where traffic had not been observed.

On the writer we create a new User Attribute Color and link the kml_style_url from the reader to it.

Also on the writer we define under the tab parameters a SQL script for the Spatial Index Creation.

ALTER TABLE JapanRoads0319 ADD MyID int IDENTITY;

ALTER TABLE JapanRoads0319 ADD CONSTRAINT PK_JapanRoads0319 PRIMARY KEY CLUSTERED (MyID);

CREATE SPATIAL INDEX SI_JapanRoads0319 ON JapanRoads0319(GEOM)
USING GEOMETRY_GRID
WITH (
    BOUNDING_BOX = (xmin = 122.935256958008, ymin = 24.2508316040039, xmax = 153.965789794922, ymax = 45.4863815307617),
    GRIDS = (LEVEL_1 = MEDIUM, LEVEL_2 = MEDIUM, LEVEL_3 = MEDIUM, LEVEL_4 = MEDIUM),
    CELLS_PER_OBJECT = 16);

In the navigator on the left hand side of the FME workbench we also define a SQL statement that runs after we completed the loading process and validates potentially invalid geometries.

update JapanRoads0319 set geom=geom.MakeValid();

Now that we have the data in SQL Azure we can already start to analyse and find out how many road-kilometres are open to traffic and on how many road-kilometres traffic has not been verified, e.g.

SELECT color, sum(geography::STGeomFromWKB(GEOM.STAsBinary(), 4326).STLength())/1000 as [1903] from JapanRoads0319 group by color

If we keep doing this with each of the daily data updates the results allow us to create graphs showing the improvements over time.

Tile Rendering and Caching

The tile rendering and cache handling is implemented in a Generic Web Handler (SqlTileServer.ashx). When you overlay a tile layer on top of Bing Maps the control will send for each tile in the current map view a HTTP-GET-Request to a virtual directory or in our case a web service. The request passes the quadkey of the tile as a parameter. In our service we will need to

- Determine if we have already cached the tile and if so retrieve it from the cache and return it to the map

- If we haven’t cached the tile

  - determine the bounding box of the tile. This is where we use a couple of functions as listed in this article.

  - query the database and find all spatial objects that intersect this bounding box.

  - create a PNG image

  - write this image to cache and also

  - return the image to the map
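The steps above can be sketched compactly. The following Python is an illustration of the flow only, not the handler's actual source (which is on the author's site): the quadkey decoding and the tile bounding box follow the published Bing Maps tile system maths, while get_tile, the dictionary used as a cache and the render callback are hypothetical stand-ins for blob storage and the SQL Azure query plus PNG rendering.

```python
import math


def quadkey_to_tile(quadkey):
    """Convert a Bing Maps quadkey into (tile_x, tile_y, level)."""
    tile_x = tile_y = 0
    level = len(quadkey)
    for index, digit in enumerate(quadkey):
        mask = 1 << (level - index - 1)
        if digit == "1":
            tile_x |= mask
        elif digit == "2":
            tile_y |= mask
        elif digit == "3":
            tile_x |= mask
            tile_y |= mask
        elif digit != "0":
            raise ValueError("invalid quadkey digit: " + digit)
    return tile_x, tile_y, level


def tile_bounds(tile_x, tile_y, level):
    """Return (west, south, east, north) of a tile in degrees."""
    tiles_per_side = 2 ** level

    def longitude(x):
        return x / tiles_per_side * 360.0 - 180.0

    def latitude(y):
        return math.degrees(math.atan(math.sinh(math.pi * (1 - 2 * y / tiles_per_side))))

    return longitude(tile_x), latitude(tile_y + 1), longitude(tile_x + 1), latitude(tile_y)


def get_tile(quadkey, cache, render):
    """Serve a tile from the cache, rendering and storing it on a miss.

    `cache` is any dict-like store; `render` takes a bounding box and
    returns the image bytes (query plus drawing in the real service).
    """
    if quadkey in cache:
        return cache[quadkey]
    bounds = tile_bounds(*quadkey_to_tile(quadkey))
    image = render(bounds)
    cache[quadkey] = image
    return image
```

Keying the cache on the quadkey means a tile is rendered at most once per data load; with daily updates, the cache would need to be cleared or the keys versioned whenever new data arrives.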

In order to keep the communication between SQL Azure and the web service efficient we transport the data as binary spatial data types. This requires then of course that we decode the binary data in the web service. Fortunately the SQL Server Spatial team provides us with the means to do that. As part of the SQL Server 2008 R2 Feature Pack you will find the “Microsoft System CLR Types for SQL Server 2008 R2” which include the spatial data types and spatial functions and can be integrated in .NET applications and services even if you don’t have SQL Server installed.

Note: The package is available in 32bit, 64bit and Itanium versions. Windows Azure is based on Windows Server 2008 R2 64bit. If you want to deploy your solution on Windows Azure make sure you use the 64bit version of the “Microsoft System CLR Types for SQL Server 2008 R2”.

In the database we have a stored procedure that executes our spatial query.

See site for source code.

In order to convert geographic coordinates into pixel coordinates for the Bing Maps Tile System we use a couple of functions as listed in this article.

Since we want to cache the tiles in the Windows Azure Blob Storage we will also need to add references to the assemblies

- Microsoft.WindowsAzure.ServiceRuntime and

- Microsoft.WindowsAzure.StorageClient

Both assemblies are part of the Windows Azure SDK and the Windows Azure Tools for Microsoft Visual Studio, which includes the Windows Azure SDK.

Once we have the stored procedure in SQL Azure, the 3 assemblies (2 for Azure, 1 for SQL Server Spatial) and the helper functions for Bing Maps in place the code for the tile rendering looks like this.

See site for source code.

We also need a helper function to access the Windows Azure Blob Storage.

See site for source code.

Calling the Service from Bing Maps

Adding a tile layer in Bing Maps is very simple. Below you find the source code for a complete web page that calls our service.

See site for source code.

And that’s already it. In a very short time we were able to develop a service and deploy it as a scalable and highly available service in the cloud.

One final tip: If you also plan to use this service from other Silverlight applications in domains outside your Windows Azure environment you will need to publish a ClientAccessPolicy.xml file to the root of your Windows Azure hosted service. In our case that was necessary since we will access the service not only from the website itself but also from a Bing Map App.

The Windows Azure Team reported New Video Case Study: Volvo Selects Windows Azure to Launch Worldwide Game on 3/17/2011 (missed when posted):

Volvo Cars is a premium global car manufacturer recognized for its innovative safety systems and unique designs. Volvo was written into the original books that became the Twilight franchise, so there was a natural marketing partnership for movie releases. As part of the latest movie, Eclipse, Volvo wanted to create a virtual world resembling the Twilight story where users from around the globe could participate in a chance to win a new Volvo XC 60. However, the existing technology infrastructure could not provide the scalability or level of security required.

After consulting with Microsoft Gold Certified Partner LBI and evaluating other cloud services, Volvo selected the Windows Azure platform. Volvo has now successfully delivered an exciting, secure online gaming experience that scaled to meet demand and had a rapid time to market, all at a fraction of the previously expected costs.

Watch the video here: http://www.microsoft.com/casestudies/Windows-Azure/Volvo-Cars/Global-Car-Manufacturer-Selects-Microsoft-Cloud-Services-to-Launch-Worldwide-Game/4000008490

For more information about Windows Azure customer and partner stories, visit: www.windowsazure.com/evidence

<Return to section navigation list>

Visual Studio LightSwitch

Edu Lorenzo steps back with an overview in his Visual Studio LightSwitch Roundup post of 3/21/2011:

Okay, so I have blogged a few entries on how to do this and that in Visual Studio LightSwitch. And I have gotten a lot of questions offline from folks who want to try it out. I have remembered several of the questions, and here are the things that I had to say, as answers.

But first, I would like to put everything in its proper context.

First, LightSwitch was made to be a tool for those with a beginner’s level (if not level 0) in programming. Although it makes use of pretty advanced technology/ies, it was not made primarily to develop enterprise level applications. It is more fit (IMHO) for smaller, probably single office/department applications that would require connectivity within the group. So for those who are into developing Enterprise level apps, LS is not for you. But if you are just pretending to be a developer of Enterprise Level apps.. well.. try notepad.

Second, I look at lightswitch as what I call a “New generation RAD tool”, nothing more. It is not the answer to all your problems.

And lastly, although I was able to illustrate that VS-LS uses industry-accepted patterns and tools like MVVM, Entity Framework and Silverlight 4, this does not equate to one being an expert in any of these technologies even if one has already built and deployed an application using VS-LS.

At first glance, VS LS looks like a fun tool, and nothing more than that. But I did try to dig deeper and saw that there are two angles one might try to look at.

- How lightswitch was made and

- What lightswitch can make.

On how lightswitch was made, or deeper on what technologies it brings to the user, I am quite happy. Visual Studio LightSwitch was made to create small applications from scratch, with the least amount of coding possible. Just imagine a tool that allows you to keep track of your cellphone contacts with the ability to search, filter, validate and deploy on either the desktop or the web, with zero lines of code. You will get menus, buttons, error messages, tabs, validation messages, dropdown data entry for phone numbers without needing to learn VB.Net or C#. This might get you to think that even a non-IT or a non-Developer person can make such, and I won’t blame you for that, because I also believe that is true.

There is one thing I can say about VS-LS, though, that might surprise some. I am basing this on the fact that VS-LS is available for free. Yes, all the things I wrote about, for free? Well it is a free world, and I am free to speculate as much as I want.. and blog about it. Hahaha!

For all those who are not in the know, many technologies we use started out as military products: remote-controlled planes and cars, GPS, night-vision goggles and a lot more. I see the same thing happening with technology in the software development arena. I see LS as a tool whose parts are remnants of technologies used for developing MS-SharePoint. If one looks deeper into a deployed SharePoint site, how it is maintained, the automation behind it and the patterns used for deployment, one might see some familiar stuff that it has in common with VS-LS. So in short, I see LS as more of a by-product than a whole product in itself. I know what I just wrote looks demeaning to the builders of VS-LS, but I see it as a very smart way of putting together available technology to build a totally separate application that can hold its own as a separate product. There is not enough of that around nowadays.

On what LightSwitch can make, I have already expressed the essence of my thoughts. It is safer to look at VS-LS as a tool to create small apps, and that’s it. If one looks at it more closely, one will see that the possibilities are huge. I know VS-LS can build larger apps. Its ability to hook up with RIA data sources and existing data sources gives it the ability to consume data from an enterprise-level database. But access to a big DB does not make a small app an enterprise app. And if one does decide to use VS-LS to create such a large-scale app, then one should prepare for a lot of effort and tweaking. With that, I am not saying that it is impossible to make such a large-scale app using LS, just prepare some headache tablets.

The one danger I see in VS-LS, though, comes from one of its good features. The fact that it uses current technologies to create an application is somewhat of a worry for me. I have often seen applicants trying to be accepted as developers. And when I look at their CVs, sometimes I get impressed. I see numerous technologies written down for each project they have either completed or been a part of: jQuery, MVC, T-SQL, ASP.NET etc. I use these to guide the interview. Then I find out that the applicant who claims on paper that he/she has been administering a DB for the past year doesn’t even know the difference between a toupee and a tuple. Get my drift?

That interview would go..

Me: “Ah.. I see here you are familiar with EF, MVVM and Silverlight OOB. Why did you choose MVVM over MVC on such a recent project?”

Applicant: “Because MVVM has more letters than MVC?”

Me: “ah.. you used LightSwitch for this?”

Applicant: “yes sir.”

I know it is unfair to blame this on VS-LS, so I am not going to do that. This is a people problem, and a separate topic for a separate blog.

My (@rogerjenn) SharePoint 2010 Lists’ OData Content Created by Access Services is Incompatible with ADO.NET Data Services post of 3/20/2010 describes a problem that conventional Visual Studio and LightSwitch applications have with some SharePoint 2010 data sources. See the item in the Marketplace DataMarket and OData section above.

Steve Yi asked Visual Studio LightSwitch Beta 2 – Where Have You Been All My Life? in a 3/18/2011 post to the SQL Azure Team blog. See the item in the SQL Azure Database and Reporting Services section above.

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

Patrick Butler Monterde described Windows Azure Guest OS Releases and SDK Compatibility Matrix on 3/17/2011 (missed when posted):

Link: http://msdn.microsoft.com/en-us/library/ee924680.aspx

The following guest operating systems have been released on Windows Azure:

- Windows Guest OS Family 2, which is substantially compatible with Windows Server 2008 R2

- Windows Guest OS Family 1, which is substantially compatible with Windows Server 2008 SP2

The included link shows which guest operating system releases are compatible with which versions of the Windows Azure SDK.

Note: To ensure that your service works as expected, you must deploy it to a release of the Windows Azure guest operating system that is compatible with the version of the Windows Azure SDK with which you developed it.
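One way to act on the note above is to check which guest OS a service configuration requests before comparing it with the compatibility matrix. The following is a minimal Python sketch, not part of the original post. It assumes the `osFamily` and `osVersion` attributes on the root `ServiceConfiguration` element of a .cscfg file; the service name, role name and sample values are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical service configuration used only for illustration.
# Assumption: osFamily and osVersion are attributes of the root element.
SAMPLE_CSCFG = """<ServiceConfiguration serviceName="HypotheticalService"
    xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"
    osFamily="2" osVersion="*">
  <Role name="WebRole1">
    <Instances count="1" />
  </Role>
</ServiceConfiguration>
"""


def read_guest_os_settings(cscfg_text):
    """Return (osFamily, osVersion) as strings, or None for a missing attribute."""
    root = ET.fromstring(cscfg_text)
    # The attributes carry no namespace prefix, so plain names are enough.
    return root.get("osFamily"), root.get("osVersion")


if __name__ == "__main__":
    family, version = read_guest_os_settings(SAMPLE_CSCFG)
    print("Guest OS family:", family)
    print("Guest OS version:", version)
```

The values returned here still have to be compared by hand against the matrix at the link above; the sketch deliberately does not embed any compatibility data.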

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

No significant articles today.

<Return to section navigation list>

Cloud Security and Governance

No significant articles today.

<Return to section navigation list>

Cloud Computing Events

The Windows Azure and SQL Azure teams announced a one-hour Leverage ParcelAtlas data on DataMarket to create Innovative Solutions (APP26CAL) Webinar on 3/31/2011 at 8:00 AM PDT:

Description:

Cloud technologies give businesses that run geospatial software and data a combination of lower implementation risk and higher margins. ParcelAtlas is patented technology that has changed the very notion of location in the world from a point on a map to a piece of the map itself, the area within its parcel boundary. The implications for transaction accuracy and throughput automation are touching nearly every business sector. Rather than adding an NPL to your clients' operations by jumping on an ISP server, jump instead on Azure, the Microsoft Cloud. BSI's patented technology has contributed to BSI being a sustained leader in both the technology and the data sharing policy development needed to complete SEAMLESS USA, a 3,141 County Open Records NPL.

During this webinar you will learn:

- Business benefits associated with Cloud based GeoSpatial Information Services.

- How the DataMarket datasets helped establish ParcelAtlas

- Relationship between Cloud and other GeoSpatial Information Services.

- ParcelAtlas Database Scope and vast GeoSpatial functionality supported by API.

- How ParcelAtlas is like subscribing to SEAMLESS USA - Business opportunities in expediting the NPL.

- How an open records 3,140 County National Parcel Layer will be a wellspring for 100,000 business ops.

Presenters

Dennis H. Klein

GISP, past PE, past AICP Boundary Solutions

Dennis started his GIS career in 1971 as a practicing planner who was Esri's first private sector customer, the Bretton Woods, Mt. Washington Development Program. After fifteen years in the planning trenches, Dennis went full-time GIS, opening Facility Mapping Systems in 1986 to offer AutoCAD-based GIS software for municipalities and utilities. The company shipped 3,000 systems, including hundreds to counties that started full-function digital parcel maps early on. After nine years, he sold it to EaglePoint and started his next project. Building on his experience of providing software to counties, he is now assembling, normalizing and deploying a national parcel layer online as ParcelAtlas, now available in MarketPlace.

Sudhir Hasbe

Senior Product Manager; SQL Azure and Middleware, Microsoft

Sudhir Hasbe is a Sr. Product Manager at Microsoft focusing on SQL Azure and Middleware. Mr. Hasbe is responsible for DataMarket, Microsoft's information marketplace, and for building the ISV partner ecosystem around the Windows Azure Platform. Mr. Hasbe has a passion for incubation businesses and worked at a few startup businesses prior to Microsoft. He has a rich background in the ERP, EAI and supply chain management space. Prior to Microsoft, Mr. Hasbe worked with more than 55 companies in 10 countries over 10 years, implementing and integrating ERP systems with various supply chain and data collection solutions.

The Windows Azure Bootcamp announced Windows Azure Office Hours on 3/21, 3/23, 3/29, 3/31, 4/4, 4/6, 4/8, 4/12 and 4/18/2011 for one hour starting at 7:00 AM PST:

Got questions? Come on in!

There are a lot of people who are interested in the cloud, but they don't know how to get started. Maybe they just have a lot of questions before they decide to get going.

This is what we are here for. During office hours we will have the door wide open. Come on in, toss out your questions, and we'll help you any way we can. The office will be staffed with cloud computing experts from Microsoft and some of our leading partners. No agenda, just show up and pitch us your questions.

No Registration. No emails. No commitments. Just show up.

To Join

Join online meeting

Each meeting is one hour long.

Schedule

- March 21, 2011 – 10:00 am EST

- March 23, 2011 – 1:00 pm EST

- March 29, 2011 – 3:00 pm EST

- March 31, 2011 – 10:00 am EST

- April 4, 2011 – 1:00 pm EST

- April 6, 2011 – 3:00 pm EST

- April 8, 2011 – 11:00 am EST

- April 12, 2011 – 1:30 pm EST

- April 14, 2011 – 10:00 am EST

<Return to section navigation list>

Other Cloud Computing Platforms and Services

<Return to section navigation list>
