Windows Azure and Cloud Computing Posts for 3/15/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

• Updated 3/15/2011, 4:00 PM PDT with new articles by the Windows Azure Team, Beth Massi, Mary Jo Foley and Jeffrey Schwartz marked •.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

No significant articles today.

<Return to section navigation list>

SQL Azure Database and Reporting

Ike Ellis and Scott Read compared Microsoft SQL Azure vs. Amazon RDS in a 3/2011 article for DevelopMentor with my comments added in square brackets:

Microsoft SQL Azure and Amazon RDS are marketed remarkably similarly. Both companies claim that their cloud database product makes it easy to migrate from on-premise servers to their database-as-a-service cloud offerings; simply migrate your schema and data to the cloud, then change a connection string in your application, and it works. They also emphasize that the same management tools used with an on-premise database can be used in the cloud. The emphasis on these similarities seems to imply that the main competition facing the two companies is on-premise offerings, rather than each other. An examination of both products shows that it is very difficult to directly compare them because the two vendors have taken entirely different approaches to architecture. The key difference arises from the fact that Amazon dedicates hardware resources to the user, while Microsoft shares resources among users. Perhaps the most significant result of this difference is the consequent disparity in pricing. However, there are also other differences that may influence the decision of a consumer, and these are examined below.

PRICING

WINNER: SQL Azure

Due to architectural differences, the two companies have set up their own ways of charging for their offerings. SQL Azure charges only by the amount of data stored (database size). There is no cost for dedicated CPU compute time or for memory used. In contrast, the Amazon offering charges for CPU time, regardless of the size of the database. SQL Azure can be priced between $10 - $500/month, while RDS can cost between $84 - $2100/month. As the chart below shows, SQL Azure can cost significantly less than RDS, but it depends on the particular situation. By zooming in on the graph, you can see that RDS will actually cost less when dealing with a small CPU and memory allocation and having more than 10GB of data. This pricing difference is significant enough that many organizations will make the choice solely on price.

[RJ comment: The graph is missing.]
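With the graph unavailable, the crossover implied by the quoted figures can be approximated with a little arithmetic. The sketch below assumes, purely for illustration, that SQL Azure's price scales linearly at about $10 per GB per month (derived from the quoted $10 - $500 range and the 50GB maximum) and that the smallest RDS configuration costs a flat $84 per month regardless of database size. Neither vendor's actual rate card is reproduced here, and `PricingSketch` and its members are hypothetical names.

```csharp
using System;

public static class PricingSketch
{
    // Assumption: linear $10 per GB per month, inferred from the quoted $10-$500 range over 1-50 GB.
    public const decimal AssumedAzureDollarsPerGb = 10m;

    // Assumption: flat monthly cost of the smallest RDS configuration, taken from the quoted $84 floor.
    public const decimal AssumedRdsSmallestMonthly = 84m;

    // Estimated SQL Azure monthly cost for a database of the given size.
    public static decimal AzureMonthly(decimal sizeGb)
    {
        return sizeGb * AssumedAzureDollarsPerGb;
    }

    // Names the cheaper option for the given size under the assumptions above.
    public static string Cheaper(decimal sizeGb)
    {
        return AzureMonthly(sizeGb) < AssumedRdsSmallestMonthly ? "SQL Azure" : "RDS";
    }

    public static void Main()
    {
        foreach (decimal size in new[] { 1m, 5m, 10m, 50m })
        {
            Console.WriteLine("{0} GB: SQL Azure ${1}, smallest RDS ${2}, cheaper: {3}",
                size, AzureMonthly(size), AssumedRdsSmallestMonthly, Cheaper(size));
        }
    }
}
```

Under these assumptions the crossover falls a little below 10GB, which is roughly in line with the article's observation that a small RDS allocation wins once the database grows past that size.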

BACKUP/RESTORE

WINNER: RDS

Have you ever gotten this phone call? “Uh, I accidentally deleted all the customers in the customer table… in production.” That’s when point-in-time restore will save your life. Well, maybe not your life, but it will definitely save your job. This category was an easy one to call because RDS offers backup/restore, while SQL Azure does not. RDS’s backup strategy allows for eight days of backups. It will also back up the logs and restore those logs to a point in time. A SQL Azure backup solution must be configured manually using existing on-premise tools, which incurs additional bandwidth charges. This is a significant drawback to SQL Azure which may prevent companies from migrating to this product.

[RJ comment: SQL Azure maintains an original and two replicas of the data in the same data center and automatically restores the data in the event of a failure.]

SCALING

WINNER: RDS

When scaling a database you have two choices, “scaling up” or “scaling out.” “Scaling up” is adding more CPUs or memory to one box, allowing it to process requests faster. “Scaling out” is partitioning the database into smaller chunks to put it on more servers which also increases the I/O throughput. Because RDS runs on dedicated hardware, it allows you to scale-up by choosing how much processing power and memory your instance uses. Although that is expensive, it is available. SQL Azure doesn’t offer the option of scaling up. Neither product offers an in-the-box scale out solution, but third-party software is available for both.

[RJ comment: SQL Azure scales up with added resources as you increase database size. SQL Azure will enable scaling out to multiple databases when SQL Azure Federation arrives later in 2011. See my Build Big-Data Apps in SQL Azure with Federation article in the March 2011 issue of Visual Studio Magazine.]
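Scaling out, as described above, comes down to routing each request to one of several smaller databases based on a partition key. The following is a minimal sketch of modulo-based routing. It does not represent how SQL Azure Federation or any third-party product works, and the `ShardRouter` class and the shard names are hypothetical.

```csharp
using System;

public sealed class ShardRouter
{
    private readonly string[] _shards;

    public ShardRouter(params string[] shardNames)
    {
        if (shardNames == null || shardNames.Length == 0)
            throw new ArgumentException("At least one shard is required.");
        _shards = shardNames;
    }

    // Maps a non-negative partition key (for example a customer ID) to a shard by modulo.
    public string ShardFor(int partitionKey)
    {
        if (partitionKey < 0)
            throw new ArgumentOutOfRangeException("partitionKey");
        return _shards[partitionKey % _shards.Length];
    }
}

public static class Program
{
    public static void Main()
    {
        // Hypothetical shard names; each would correspond to a separate database.
        var router = new ShardRouter("CustomersDb0", "CustomersDb1", "CustomersDb2");
        Console.WriteLine(router.ShardFor(7)); // 7 % 3 = 1, so CustomersDb1
        Console.WriteLine(router.ShardFor(9)); // 9 % 3 = 0, so CustomersDb0
    }
}
```

Modulo routing is easy to reason about but moves most keys when a shard is added; range-based partitioning trades that for the need to track range boundaries.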

DATABASE SIZE

WINNER: RDS

RDS allows you to have up to a 1TB database size, while SQL Azure has a maximum capacity of 50GB. This will prohibit some companies from moving to SQL Azure.

[RJ comment: SQL Azure Federation will enable scaling out by automatic sharding later in 2011.]

PERFORMANCE

WINNER: RDS

We ran our own rudimentary performance tests from San Diego. We ran INSERTs and SELECTs against both products. RDS was significantly faster on INSERT performance, while SQL Azure was slightly faster on SELECT performance. Since Amazon’s datacenter is in Northern California, while Microsoft’s datacenter is in San Antonio, our location may account for some of the performance difference. It might also be because RDS runs on dedicated hardware, while Microsoft is a shared service. We were running RDS on the smallest allotment of CPU and memory, so had we been willing to pay more, RDS could be even faster. Again, that option isn’t available on SQL Azure.

[RJ comment: Given the choice between SELECT and INSERT performance, I’ll almost always choose SELECT because data returning queries usually are much more prevalent than INSERT operations.]

FEATURE SET

WINNER: RDS

RDS runs as an instance of a full-blown MySQL installation. As a result, RDS has every MySQL feature there is. RDS users can also choose a specific MySQL version to deploy. SQL Azure has a subset of the features that ship with SQL Server 2008. While SQL Server 2008 is arguably more full-featured than MySQL, and feature for feature SQL Azure might come out ahead, users who are used to particular features from SQL Server 2008 may find that they are not available in SQL Azure. For example, SQL Azure does not support XML indexes, CLR objects, or SQL Server Profiler.

[RJ comment: SQL Azure still beats MySQL in features, disregarding the lack of XML indexes, CLR objects or SQL Server Profiler; MySQL doesn’t offer these features.]

TOOLING

WINNER: SQL Azure

As previously stated, both vendors enable the user to use tools that they are already comfortable with. SQL Azure can be managed using SQL Server Management Studio 2008 R2. We were also able to connect using SQLCMD, Visual Studio 2010, and BCP. We used MySQL Workbench 5.2 to manage RDS, but all of the existing MySQL tools are available. All the tools for both products worked seamlessly and without incident. Because the cloud allows users to experiment with different technologies and platforms without the burden of management, installation, and licensing, we felt that a cloud database should provide some management software as a service (SaaS). This would allow novices to get comfortable with a new environment. Microsoft addresses this with a Silverlight database tool called Database Manager (formerly Project Houston). Using this simple and small-featured tool, anyone can get started creating tables, adding data, and creating stored procedures without installing local software. We ran it using Internet Explorer on Windows 7 and using Chrome on Mac OS X. While the fact that it even exists puts it ahead of RDS, Microsoft’s tooling could be improved. For example, SQL Server Management Studio doesn’t have any designers when creating objects while connected to SQL Azure, whereas Workbench does when connected to RDS.

DISASTER RECOVERY

WINNER: SQL Azure

RDS allows you to create a standby replica of your database, but this is not done automatically. SQL Azure automatically creates standby servers when you create a new database. RDS’s standby replicas can be in a different data center in the same geographic region (called a multiple availability zone deployment), unlike SQL Azure. In SQL Azure, there is no additional cost for standby replicas, while RDS replicas can double or even triple the cost.

FUTURE ROADMAP

WINNER: SQL Azure

The future of SQL Azure assures an even stronger cloud database offering. Well-published roadmaps promise new features including SQL Azure Reporting Services, SQL Azure Data Sync Services, and SQL Azure OData support. With a little digging we found that Microsoft is addressing their woeful backup shortcomings and their limited maximum database size. In videos from PDC and TechEd Berlin, we found references to SQL Server Analysis Services in the cloud, SQL Server Integration Services in the cloud, and dedicated compute and memory SLAs. Meanwhile, Amazon has said that in Q2-2011, they will offer RDS using Oracle 11g as well as MySQL. While that is compelling, we expect this to raise their prices even higher due to the licensing costs. We weren’t able to find any other details on their future plans.

[RJ comment: “SQL Azure Reporting Services, SQL Azure Data Sync Services, and SQL Azure OData support” were available as Community Technology Previews (CTPs) in March 2011 when this comparison was published.]

CONCLUSION

There are two major differences between the Microsoft SQL Azure and Amazon RDS platforms: pricing and capabilities. If price is no object and the user wants full features and high performance, then RDS is the obvious choice. If the user is more cost-conscious, SQL Azure has enough features and is good enough for many use-cases. The two exceptions are if a user has a database larger than 50GB, or needs a mature backup system. These are not possible with SQL Azure. That said, in most instances, Microsoft developers will favor SQL Azure because of their comfort level with the T-SQL syntax and the Microsoft tooling. Ruby and Java developers who have written MySQL applications will be inclined to choose RDS. With that in mind, perhaps these products aren’t competing with one another after all.

[RJ comment: I have never found that “price is no object” in a commercial programming environment.]

Shaun Xu announced SQL Azure Reporting Limited CTP Arrived in a 2/16/2011 post (missed when published):

It has been about three months since I registered for the SQL Azure Reporting CTP on Microsoft Connect after TechEd 2010 China. Today when I checked my mailbox I found that the SQL Azure team had just accepted my request and sent me the activation code. So let’s have a look at the new SQL Azure Reporting.

Concept

SQL Azure Reporting provides cloud-based reporting as a service, built on SQL Server Reporting Services and SQL Azure technologies. Cloud-based reporting solutions such as SQL Azure Reporting provide many benefits, including rapid provisioning, cost-effective scalability, high availability, and reduced management overhead for report servers, as well as secure access, viewing, and management of reports. By using the SQL Azure Reporting service, we can:

- Embed the Visual Studio Report Viewer ASP.NET Ajax control or Windows Forms control to view the reports deployed on the SQL Azure Reporting Service in our web or desktop application.

- Leverage the SQL Azure Reporting SOAP API to manage and retrieve report content from any kind of application.

- Use the SQL Azure Reporting Service Portal to navigate and view the reports deployed on the cloud.

Since SQL Azure Reporting is built on SQL Server 2008 R2 Reporting Services, we can use the tools we are already familiar with, such as SQL Server Business Intelligence Development Studio and the Visual Studio Report Viewer. The SQL Azure Reporting Service runs like a remote SQL Server Reporting Services instance, just in the cloud rather than on a server beside us.

Establish a New SQL Azure Reporting

Let’s move to the Windows Azure Developer Portal and click the Reporting item in the left-side navigation bar. If you don’t have an activation code, you can click the Sign Up button to send a request to Microsoft Connect. Since I had already received the activation code mail, I clicked the Provision button.

Then, after agreeing to the terms of service, I select the subscription in which my SQL Azure Reporting CTP should be provisioned. In this case I selected my free Windows Azure Pass subscription.

Then the final step, paste the activation code and enter the password of our SQL Azure Reporting Service. The user name of the SQL Azure Reporting will be generated by SQL Azure automatically.

After a while the new SQL Azure Reporting Server will be shown on our developer portal. The Reporting Service URL and the user name will be shown as well. We can reset the password from the toolbar button.

Deploy Report to SQL Azure Reporting

If you are familiar with SQL Server Reporting Services you will find this part very similar to what you already know and have done before. First we open SQL Server Business Intelligence Development Studio and create a new Report Server Project.

Then we create a shared data source from which the report data will be retrieved. This data source can be SQL Azure, but we can also use a local SQL Server or another database if its port is open. In this case we use a SQL Azure database located in the same data center as our reporting service. In the Credentials tab page we enter the user name and password for this SQL Azure database.

The SQL Azure Reporting CTP is currently available only at the North US Data Center, so it is better to place the related SQL Azure server and hosted service in the same data center to avoid external data transfer fees.

Then we create a very simple report that just retrieves all records from a table named Members and lists them in a table in the report. In the data source selection step we choose the shared data source we created before, then enter the T-SQL to select all records from the Members table, then put all fields into the table columns. The report will look like the following.

In order to deploy the report to the SQL Azure Reporting Service we need to update the project properties. Right-click the project node in Solution Explorer and select the Properties item. In the Target Server URL item we specify the report server URL of our SQL Azure Reporting. We can go back to the developer portal, select the Reporting node on the left side, then copy the Web Service URL and paste it here. Note that we need to append “/reportserver” after pasting.

Then just click the Deploy menu item in the context menu of the project; Visual Studio will compile the report and upload it to the reporting service. In this step we will be prompted for the user name and password of our SQL Azure Reporting Service. We can get the user name from the developer portal, just next to the Web Service URL on the SQL Azure Reporting page. The password is the one we specified when we created the reporting service. After about one minute the report will be deployed successfully.

View the Report in Browser

SQL Azure Reporting allows us to view the reports deployed on the cloud from a standard browser. We copy the Web Service URL from the reporting service main page, append “/reportserver”, and open it over HTTPS; we then get the SQL Azure Reporting Service login page.

After entering the user name and password of the SQL Azure Reporting Service we can see the directories and reports listed. Clicking a report launches the Report Viewer to render it.

View Report in a Web Role with the Report Viewer

The ASP.NET and Windows Forms Report Viewer controls work well with the SQL Azure Reporting Service too. We can create an ASP.NET Web Role and add the Report Viewer control to the default page. The changes we need to make to the report viewer are:

- Change the Processing Mode to Remote.

- Set the Report Server URL under the Server Remote category to the SQL Azure Reporting Web Service URL with “/reportserver” appended.

- Set the Report Path to the report we want to display. The report name should NOT include the file extension. For example, my report was in the SqlAzureReportingTest project and named MemberList.rdl, so the report path should be /SqlAzureReportingTest/MemberList.
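The two string values in the list above are easy to get wrong by hand, so here is a small sketch that derives them. `ReportSettings` and its methods are hypothetical helpers, and the sample service URL is a placeholder. In a real page the results would presumably be assigned to the Report Viewer's `ServerReport.ReportServerUrl` and `ServerReport.ReportPath` properties after setting `ProcessingMode` to `ProcessingMode.Remote`.

```csharp
using System;
using System.IO;

public static class ReportSettings
{
    // Appends "/reportserver" to the Web Service URL copied from the portal.
    public static Uri BuildReportServerUrl(string webServiceUrl)
    {
        return new Uri(webServiceUrl.TrimEnd('/') + "/reportserver");
    }

    // Builds "/<project>/<report>" and drops the .rdl extension from the file name.
    public static string BuildReportPath(string projectName, string reportFileName)
    {
        return "/" + projectName + "/" + Path.GetFileNameWithoutExtension(reportFileName);
    }

    public static void Main()
    {
        // Placeholder URL; substitute the Web Service URL shown in the developer portal.
        Console.WriteLine(BuildReportServerUrl("https://example.invalid/"));
        Console.WriteLine(BuildReportPath("SqlAzureReportingTest", "MemberList.rdl"));
    }
}
```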

The next step is to specify the SQL Azure Reporting credentials. We can use the following class to wrap the report server credentials.

```csharp
// Nested inside the page class. Requires the System.Net, System.Security.Principal
// and Microsoft.Reporting.WebForms namespaces.
private class ReportServerCredentials : IReportServerCredentials
{
    private string _userName;
    private string _password;
    private string _domain;

    public ReportServerCredentials(string userName, string password, string domain)
    {
        _userName = userName;
        _password = password;
        _domain = domain;
    }

    // No Windows impersonation is used.
    public WindowsIdentity ImpersonationUser
    {
        get { return null; }
    }

    // No network credentials; forms credentials are supplied below instead.
    public ICredentials NetworkCredentials
    {
        get { return null; }
    }

    // Hands the stored user name, password and authority to the report viewer.
    public bool GetFormsCredentials(out Cookie authCookie, out string user, out string password, out string authority)
    {
        authCookie = null;
        user = _userName;
        password = _password;
        authority = _domain;
        return true;
    }
}
```

And then in the Page_Load method, pass it to the report viewer.

```csharp
protected void Page_Load(object sender, EventArgs e)
{
    // Replace the placeholders with the values shown in the developer portal.
    ReportViewer1.ServerReport.ReportServerCredentials = new ReportServerCredentials(
        "<user name>",
        "<password>",
        "<sql azure reporting web service url>");
}
```

Finally deploy it to Windows Azure and enjoy the report.

Summary

In this post I introduced the SQL Azure Reporting CTP, which has just become available. Like other features in Windows Azure, SQL Azure Reporting is very similar to SQL Server Reporting Services. As you can see in this post, we can use existing, familiar tools to build and deploy reports and display them on a website. But SQL Azure Reporting is still in the CTP stage, which means:

- It is free.

- There’s no support for it.

- Only available at the North US Data Center [I believe it’s only available at the South Central US data center].

You can get more information about the SQL Azure Reporting CTP from the links following:

You can download the solutions and the projects used in this post here.

I’m still waiting for Shaun’s promised descriptive material about his Partition-Oriented Data Access project, which he described in his Happy Chinese New Year! post of 1/31/2011:

If you have heard about the new SQL Azure feature named SQL Azure Federation, you might know that it’s a cool feature and solution for database sharding. But for now there seems to be no similar solution for normal SQL Server and local databases. I have created a library named PODA, which stands for Partition Oriented Data Access, that partially implements the features of SQL Azure Federation. I’m going to explain more about this project after the Chinese New Year, but you can download the source code here.

<Return to section navigation list>

Marketplace DataMarket and OData

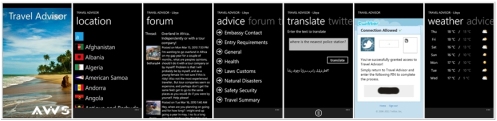

Richard Prodger described Travel Advisor – A Windows Azure DataMarket and Windows Phone 7 integration exercise on 3/10/2011 (missed when posted):

Over the last couple of months, I’ve been leading a small project looking at extending the reach of the cloud to handheld devices. To test this out, we’ve built a Windows Phone 7 application that consumes data feeds from Windows Azure DataMarket, Bing, Twitter and others. The app, known as “Travel Advisor”, went live on the Windows Phone 7 MarketPlace today.

What is it?

The application is ideal for people travelling abroad and perfect for the adventurous or regular traveller. The app provides up-to-the-minute travel advice and warnings on every country listed by the UK Foreign and Commonwealth Office. Travel Advisor provides embassy contact information, entry requirements, local customs information, local health advice, vaccination recommendations and much more. In addition, the app provides language translation services, currency conversion and local weather forecasts. Live integration with the forums of GapYear.com provides access to a community of extreme travellers, and the location-aware Twitter integration allows you to keep your social network up to date with your adventures.

How does it work?

The UK Foreign and Commonwealth Office publishes travel advisory data as RSS feeds from their web site. We’ve created a Windows Azure worker role hosted application that monitors the RSS feeds and updates a SQL Azure database with the latest advice. The Windows Azure DataMarket then provides a discoverable interface for consuming this data in OData format. Our Windows Phone 7 application connects to the DataMarket and retrieves the data on demand. To augment the travel advice, the phone app also consumes weather data hosted on the DataMarket by Weather Central. In addition to the DataMarket data, the phone app makes use of Bing services for language translation and currency conversion. With Twitter integration, you can use the app to tweet a location message to your feed. The app makes use of the built-in GPS to automatically select the appropriate country when you are outside the UK.
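The feed-monitoring step described above starts with reading items out of an RSS document. The sketch below shows one way that parsing could look using LINQ to XML. It is not the Travel Advisor source: the `Advisory` and `AdvisoryFeed` types and the sample feed are hypothetical, and the polling loop and the SQL Azure update are left out.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

public sealed class Advisory
{
    public string Title { get; set; }
    public string Link { get; set; }
}

public static class AdvisoryFeed
{
    // Extracts the title and link from each <item> element of an RSS 2.0 document.
    public static List<Advisory> Parse(string rssXml)
    {
        return XDocument.Parse(rssXml)
            .Descendants("item")
            .Select(item => new Advisory
            {
                Title = (string)item.Element("title"),
                Link = (string)item.Element("link")
            })
            .ToList();
    }

    public static void Main()
    {
        // Made-up sample feed; a worker role would download the real feed on a schedule.
        const string sample =
            "<rss version=\"2.0\"><channel>" +
            "<item><title>Country A</title><link>http://example.invalid/a</link></item>" +
            "<item><title>Country B</title><link>http://example.invalid/b</link></item>" +
            "</channel></rss>";

        foreach (var advisory in Parse(sample))
            Console.WriteLine(advisory.Title + " -> " + advisory.Link);
    }
}
```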

Watch a video demo here.

If you have a Windows Phone 7, please download the app from the MarketPlace and let us know what you think. It’s free!

Jon Galloway and Jesse Liberty produced a 00:39:39 Full-Stack Video Webcast: Windows Phone Development with TDD and MVVM segment:

In this episode of The Full Stack, Jesse and Jon reboot their Windows Phone client project using Test-Driven Development (TDD) and the Model-View-ViewModel (MVVM) pattern.

Previous episodes focused on getting different technologies such as Windows Phone, WCF, and OData, connected. With a better understanding of the technology and some working code, we decided it's time to restructure the project using some sustainable practices and patterns.

Watch the video segment here.

<Return to section navigation list>

Windows Azure AppFabric: Access Control, WIF and Service Bus

Vittorio Bertocci (@vibronet) continued his Fabrikam series with Fun with FabrikamShipping SaaS II: Creating an Enterprise Edition Instance on 3/14/2011:

I finally found (read: made) the time to get back to the “Fun with FabrikamShipping SaaS” series. As in the first installment (where I provided a “script” for going through the creation and use of a small business edition instance) here I will walk you through the onboarding and use of a new Enterprise instance of FabrikamShipping SaaS without going into the implementation details. Later posts (I hate myself when I commit like this) will build on those walkthroughs for explaining how we implemented certain features. I would suggest you skim through the Small Business walkthrough before jumping to this one, as there are a few things that I covered at length there and I won’t repeat here.

Demoing the Enterprise edition is a bit more demanding than the Small Business edition, mostly because in the enterprise case we require the customer to have a business identity provider available. Also, every new subscription requires us to spin a new dedicated hosted service, hence we approve very few new ones in the live instance of the demo. The good news is that we provide you with helpers which allow you to experience the demo end to end without paying the complexity costs that the aforementioned two points entail: the enterprise companion and a pre-provisioned enterprise subscription. In this walkthrough I will not make use of any special administrative powers, but I will instead use those two assets exactly in the way in which you would. And without further ado…

Subscribing to an Enterprise Edition instance of FabrikamShipping

Today we’ll walk a mile in the shoes of Joe. Joe handles logistics for AdventureWorks, a mid-size enterprise which crafts customized items on-demand. AdventureWorks invested in Active Directory and keeps its users neatly organized in organizational and functional groups.

AdventureWorks needs to streamline its shipment practices, but does not want to develop an in-house solution; Joe is shopping for a SaaS application which can integrate with AdventureWorks infrastructure and processes, and lands on FabrikamShipping SaaS. The main things about FabrikamShipping which capture Joe’s interest are:

- The promise of easy Single Sign On for all AdventureWorks’ employees to the new instance

- The exclusive use of resources: enterprise instances of FabrikamShipping are guaranteed to run on dedicated compute and DB resources that are not shared with any other enterprise customer, with all the advantages which come with it (complete isolation, chance to fine-tune the amount of instances on which the service runs according to demand, heavy customizations are possible, and so on)

- The possibility to reflect in the application’s access rights the existing hierarchy and attributes defined in AdventureWorks’ AD

- The existence of REST-based programmatic endpoints which would allow the integration of shipment and management capabilities in existing tools and processes

And all this in full SaaS tradition: a new customized instance of FabrikamShipping can be provisioned simply by walking through an online wizard, and after that all that is required for accessing the application is a browser.

The feature set of the Enterprise edition fits the bill nicely, hence Joe goes ahead and subscribes at https://fabrikamshipping.cloudapp.net/. The first few steps are the same ones we saw for the small biz edition.

(here I am using Live ID again, but of course you can use google or facebook just as well. Remember the note about subscriptions being unique per admin subscriber)

Once signed in, you land on the first page of the subscription wizard.

Compared to the corresponding screen in the subscription wizard for the Small Biz edition, you’ll notice two main differences:

- The “3. Users” tab is not present. In its place there are two tabs, “3. Single Sign On” and “4. Access Policies”. We’ll see the content of both in detail; here I’d just like to point out that our general purpose visualization engine is the same and here it is simply reflecting the different kind of info we need to gather for creating an enterprise-type instance.

- There’s a lot more text: that’s for explaining some behind-the-scenes of the demo and setting expectations. I’ll pick up some of those points in this walkthrough

Who reads all that text anyway? Let’s just hit Next.

The Company Data step is precisely the same as the one in the Small Biz edition, all the same considerations apply; to get to something interesting we have to hit Next one more time.

Now things start getting interesting. Rather than paraphrasing, let me paste here what I wrote in the UI:

One of the best advantages of the Enterprise Edition of FabrikamShipping is that it allows your employees to access the application with their corporate credentials: depending on your local setups, they may even be able to gain access to FabrikamShipping without even getting prompted, just like they would access an intranet portal.

The subscription wizard can automatically configure FabrikamShipping to recognize users from your organization, but for doing so it requires a file which describes your network setup. If your company uses Active Directory Federation Services 2.0 (ADFS 2.0) that file is named FederationMetadata.xml: please ask your network administrator to provide it to you and upload it here using the controls below.

If you are not sure about the authentication software used in your organization, please contact your network administrator before going further in the subscription wizard and ask if your infrastructure supports federation or if a different subscription level may better suit your needs.

Modern federation tools such as ADFS2.0 can generate formal descriptions of themselves: information such as which addresses should be used, which certificate should be used to verify signatures and which claims you are willing to disclose about your users can be nicely packaged in a well-known format, in this case WS-Federation metadata. Such descriptions can be automatically consumed by tools and APIs, so that trust relationships can be established automatically without exposing administrators to any of the underlying complexity. As a result, you can set up single sign on between your soon-to-be new instance of FabrikamShipping and your home directory just by uploading a file.

What happens here is that the subscription wizard does some basic validation on the uploaded metadata - for example it verifies that you are not uploading metadata which are already used for another subscriber - then it saves it along with all the other subscription info. At provisioning time, at the end of the wizard, the provisioning engine will use the federation metadata to call some ACS APIs for setting up AdventureWorks as an Identity Provider.
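To make the duplicate check concrete, here is a rough sketch of how an uploaded metadata document could be compared against existing subscribers. This is not FabrikamShipping's actual code: using the root element's `entityID` attribute as the uniqueness key is an assumption, and `MetadataValidator`, its methods and the sample entity ID are hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Xml.Linq;

public static class MetadataValidator
{
    // Namespace of the SAML 2.0 metadata schema that federation metadata documents build on.
    private static readonly XNamespace Md = "urn:oasis:names:tc:SAML:2.0:metadata";

    // Reads the entityID attribute of the root EntityDescriptor element.
    public static string ReadEntityId(string metadataXml)
    {
        XElement root = XDocument.Parse(metadataXml).Root;
        if (root == null || root.Name != Md + "EntityDescriptor")
            throw new FormatException("Not a federation metadata document.");

        var entityId = (string)root.Attribute("entityID");
        if (string.IsNullOrEmpty(entityId))
            throw new FormatException("entityID is missing.");
        return entityId;
    }

    // Returns false when another subscriber has already registered this identity provider.
    public static bool TryRegister(string metadataXml, ISet<string> knownEntityIds)
    {
        return knownEntityIds.Add(ReadEntityId(metadataXml));
    }

    public static void Main()
    {
        // Made-up, heavily trimmed metadata; a real file carries certificates, endpoints and claims.
        const string sample =
            "<EntityDescriptor xmlns=\"urn:oasis:names:tc:SAML:2.0:metadata\" " +
            "entityID=\"http://adventureworks.example/adfs/services/trust\" />";

        var known = new HashSet<string>();
        Console.WriteLine(TryRegister(sample, known)); // True: first upload
        Console.WriteLine(TryRegister(sample, known)); // False: already used
    }
}
```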

That’s a very crisp demonstration of the PaaS nature of the Windows Azure platform: I don’t have to manage a machine with a federation product on top of it in order to configure SSO, I can just call an API to configure the trust settings and the platform will take care of everything else. That’s pure trust on tap, and who cares about the pipes.

Note 1: You don’t have an ADFS2.0 instance handy? We’ve got you covered! Jump to the last section of the post to learn how to use SelfSTS as a surrogate for this demo.

Note 2: If you use ADFS2.0 here, you’ll need to configure the new instance as a valid relying party. Once your instance is ready, you’ll get all the necessary information via email.

Also: the claims that FabrikamShipping needs are email, given name, surname, other phone and group. You can use any claim type as long as they contain the same information; however, if you use the schemas.xmlsoap.org URIs things will be much easier (FabrikamShipping will automatically create mappings: see the next screen).

Let’s say that Joe successfully uploaded the metadata file and can now hit Next.

The Access Policies screen is, in my opinion, the most interesting of the entire wizard. Here you decide who in your org can do what in your new instance.

FabrikamShipping instances recognize three application roles:

- Shipping Creators, who can create (but not modify) shipments

- Shipping Managers, who can create AND modify shipments

- Administrators, who can do all that the Shipping Managers can do and in addition (in a future version of the demo) will have access to some management UI

Those roles are very application-specific, and asking the AdventureWorks administrators to add them in their AD would be needlessly burdening them (note that they can still do it if they choose to). Luckily, they don’t have to: In this screen Joe can determine which individuals in the existing organization will be awarded which application role.

FabrikamShipping is, of course, using claims-based identity under the hood, but the word “claim” never occurs on the page. Note how the UI is presenting to the user very easy and intuitive choices: for every role there’s the option to assign it to all users or none, there’s no mention of claims or attributes. If Joe so chooses, he can pick the Advanced option and have access to a further level of sophistication: in that case, the UI offers the possibility of defining more fine-grained rules which assign the application roles only when certain claims with certain values are present. Note that the claim types list has been obtained directly from AdventureWorks’ metadata.

Once again, you can see PaaS in action. All the settings Joe is entering here will end up being codified as ACS rules at provisioning time, via management API.
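
To make the two levels of the Access Policies screen concrete, here is a minimal sketch in Python (used purely for illustration). The rule shape, the group values and the claim type URI are assumptions of mine; the post does not show the actual ACS rules that the provisioning step generates.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed claim type URI; the post only says the schemas.xmlsoap.org URIs are preferred.
GROUP = "http://schemas.xmlsoap.org/claims/Group"

@dataclass(frozen=True)
class RoleRule:
    """Hypothetical rule: grant `role` to everyone, or only when a claim is present."""
    role: str
    claim_type: Optional[str] = None    # None models the simple "all users" choice
    claim_value: Optional[str] = None

def roles_for(claims, rules):
    """Return the application roles granted to a user holding `claims`,
    a set of (claim_type, claim_value) pairs."""
    granted = []
    for rule in rules:
        matches = rule.claim_type is None or (rule.claim_type, rule.claim_value) in claims
        if matches and rule.role not in granted:
            granted.append(rule.role)
    return granted

rules = [
    RoleRule("Shipping Creators"),                      # simple option: all users
    RoleRule("Shipping Managers", GROUP, "Managers"),   # Advanced option: claim-based
    RoleRule("Administrators", GROUP, "IT"),
]

print(roles_for({(GROUP, "Managers")}, rules))
```

A user carrying the assumed "Managers" group claim ends up with both the role granted to everybody and the claim-based one; a user with no matching claims only gets the former.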

The lower area on the screen asks Joe how to map the claims coming from AdventureWorks to the user attributes that FabrikamShipping needs to know in order to perform its function (for example, for filling the sender info for shipments). One of the advantages of claims-based identity is that often there is no need to pre-provision users, as the necessary user info can be obtained just in time, directly at runtime, together with the authentication token. In this case AdventureWorks claims are a perfect match for FabrikamShipping, but it may not always be the case (for example, instead of having Surname your ADFS2 may offer Last Name, which contains the same info but is codified as a different claim type).
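
The mapping idea can be sketched in a few lines of Python. Everything here is hypothetical (the attribute names, the incoming claim names and the sample values); it only illustrates how a differently named claim such as Last Name can feed the attribute the application calls surname.

```python
def map_claims(incoming, mapping):
    """Translate incoming {claim_type: value} pairs into application attributes.

    `mapping` is {app_attribute: incoming_claim_type}. Returns the attributes
    that could be filled in and the sorted list of attributes still missing.
    """
    attributes = {attr: incoming[src] for attr, src in mapping.items() if src in incoming}
    missing = sorted(set(mapping) - set(attributes))
    return attributes, missing

# Hypothetical identity provider that issues "Last Name" instead of "Surname".
mapping = {"surname": "Last Name", "email": "Email", "phone": "Other Phone"}
incoming = {"Last Name": "Doe", "Email": "joe@adventureworks.example"}

attributes, missing = map_claims(incoming, mapping)
print(attributes, missing)
```

Reporting the missing attributes separately is a design choice of the sketch: it lets the caller decide whether an absent phone number should block the sign-in or simply leave a field empty.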

Once the settings are all in, hitting Next will bring you to the last screen of the wizard.

This is again analogous to the last screen in the subscription wizard for Small Biz, and also in this case I am going to defer the explanation of the Windows Azure-PayPal integration to a future post.

Let’s click on the link for monitoring the provisioning status.

Now that’s a surprise! Whereas the workflow for the Small Biz provisioning was just 3 steps long, here the steps are exactly twice that number. But more importantly, some of the steps here are significantly more onerous. You can refer to the session I did at TechEd Europe for details on the provisioning, or to a future post (I feel for my future self, I am really stacking up A LOT of those), but let me just mention here that one Enterprise Edition instance requires the manual creation of a new hosted service, the dynamic creation of a new Windows Azure package and deployment, the startup of a new web role and so on. That’s all stuff which requires resources and some time, which is why we accept very few new Enterprise subscriptions. However! Walking through the provisioning wizard is useful per se, for getting a feeling of what onboarding for an enterprise-type SaaS application may look like and for better understanding the source code.

If instead you are interested in playing with the end result, we got you covered as well! You can access a pre-provisioned Enterprise instance of FabrikamShipping, named (surprise surprise) AdventureWorks. All the nécessaire for accessing that instance is provided in the FabrikamShipping SaaS companion, which I’ll cover in the next section.

For the purpose of this walkthrough, however, let’s assume that the subscription above gets accepted and processed. After some time, Joe will receive an email containing the text below:

Your instance of FabrikamShipping Enterprise Edition is ready!

The application is available at the address https://fs-adventureworks1.cloudapp.net/. Below you can find some instructions that will help you to get started with your instance.

You can manage your subscription by visiting the FabrikamShipping management console <https://fabrikamshipping.cloudapp.net/SubscriptionConsole/Management/Index>. Please make sure to use the same account you used when you created the subscription.

Single Sign On

If you signed up using SelfSTS, make sure that the SelfSTS endpoint is active when you access the application. SelfSTS should use the same signing certificate that was active at subscription time. If you configured your tenant using the companion package <http://code.msdn.microsoft.com/fshipsaascompanion>, the SelfSTS should already be configured for you.

If you indicated your ADFS2.0 instance (or equivalent product) at sign-up time, you need to establish Relying Party Trust with your new instance before using it. You can find the federation metadata of the application at the address https://fabrikamshipping.accesscontrol.appfabriclabs.com/FederationMetadata/2007-06/FederationMetadata.xml

Expiration

Your instance will be de-provisioned within 2 days. Once the application is removed we will notify you.

_____

If you want a demonstration of how to use FabrikamShipping, please refer to the documentation at www.fabrikamshipping.com <http://www.fabrikamshipping.com/>. If you want to take a peek at what happens behind the scenes, you can download the FabrikamShipping Source Package <http://code.msdn.microsoft.com/fshipsaassource> which features the source code of the entire demo.

For any question or feedback, please feel free to contact us at fshipsupport@microsoft.com.

Thank you for your interest in the Windows Azure platform, and have fun with FabrikamShipping!

Sincerely,

the Windows Azure Platform Evangelism Team

Microsoft © 2010

The first part of the mail communicates the address at which the new instance is now active. Note the difference between the naming schema we used for Small Business, https://fabrikanshipping-smallbiz.cloudapp.net/<subscribercompany>, and the one we use here, https://fs-<subscribercompany>.cloudapp.net. The first schema betrays its (probably) multitenant architecture, whereas here it’s pretty clear that every enterprise has its own exclusive service. Among the many differences between the two approaches, there is the fact that in the small biz case you use a single SSL certificate for all tenants, whereas here you need a different one for every tenant (no wildcards). As you can’t normally obtain a <something>.cloudapp.net certificate, we decided to just use self-signed certs and have the red bar show so that you are aware of what’s going on in there. In a real life app FabrikamShipping would likely offer a “vanity URL” service instead of the naming schema used here, which would eliminate the certificate problem. Anyway, long story short: later you’ll see a red bar in the browser, and that’s by design.

The Single Sign On section gives indications on how to set up the Relying Party Trust toward FabrikamShipping on your ADFS; of course what you are really doing is pointing to the ACS namespace that FabrikamShipping is leveraging. Once you’ve done that, Joe just needs to follow the link at the beginning of the mail to witness the miracle of federation & SSO unfold in front of his very eyes.

Accessing the AdventureWorks Instance with the Enterprise Companion

When we deployed the live instance of FabrikamShipping, we really thought hard about how to make it easy for you guys to play with the demo. For the Small Biz it was easy, all you need is a browser, but the Enterprise posed challenges. Just how many developers have access to an ADFS2 instance to play with? And if they do, just how long will they have to wait between their subscription wizard run and when we have time to provision their subscription?

In order to ease things for you, we attacked the problem on two fronts:

- We created a self-contained WS-Federation STS surrogate, which does not require installation, supports federation metadata generation, uses certificates from the file system and has some limited claim editing capabilities. That’s the (now) well known SelfSTS.

- We used one pre-defined SelfSTS instance to provision one Enterprise edition instance, which we named (surprise!) AdventureWorks, then we made that pre-configured SelfSTS available for download so that everybody can run it on their local machine, pretend that it is the AdventureWorks’s ADFS2 and use it to access the aforementioned pre-provisioned FabrikamShipping SaaS instance.

All that stuff (and more) ended up in the package we call the Enterprise Companion, which (just like the source code package) can be downloaded from code gallery.

Once you download and install the companion, check out the StartHere.htm page: it contains the basic instructions for playing with the AdventureWorks instance (and more, which I will cover in the next “Fun with..” installment).

In fact, all you need to do is to launch the right SelfSTS and navigate to the instance’s address. Let’s do that!

Assuming that you unpacked the companion in the default location, you’ll find the SelfSTS in C:\FabrikamShippingSaaS_Companion\assets\OAuthSample\AdventureWorks.SelfSTS\SelfSTS.exe. The name already gives away the topic of the next “Fun with” post. If you launch it and you click on Edit Claim Types and Values you’ll see which claims are being sent. You can change the values, but remember that the AdventureWorks instance has been set with this set of claim types: if you change the types, you won’t be able to access the instance.

Close the Edit Claims window, and hit the green button Start; the button will turn red, and SelfSTS will begin listening for requests. At this point you just need to navigate to https://fs-adventureworks1.cloudapp.net/ and that’s it! If you want to verify that the federation exchange is actually taking place, you can use your standard inspection tools (Fiddler, HttpWatch, IE9 dev tools) to double check; below I am using HttpWatch. Remember what I said above about the browser’s red bar being by design here.

…and that’s pretty much it! Taken out of context this is just a web site federating with a WS-Federation IP thru ACS, but if you consider that this instance has been entirely generated from scratch starting from the company info provided during the subscription wizard, just leveraging the management APIs of Windows Azure, SQL Azure and ACS, and that the same machinery can be used again and again and again for producing any custom instance you want, that’s pretty damn impressive. Ah, and of course the only things that are running on premise here are the STS and the user’s browser; everything else is in the cloud.

You don’t have ADFS2.0 but you want to sign-up for a new Enterprise Instance?

In the part of the walkthrough covering the subscription wizard I assumed that you have an ADFS2 instance handy from which you could get the required FederationMetadata.xml document, but as I mentioned in the last section we know that this is not always the case for developers. In order to help you sign up for a new instance even if you don’t have ADFS2 (remember the warnings about us accepting very few new instances), in addition to the AdventureWorks SelfSTS we packed in the Enterprise companion a SECOND SelfSTS instance, which you can modify to your heart’s content and use as a base for creating a new subscription. The reason we have two SelfSTSes is that no two subscriptions can refer to the same IP metadata (for security reasons), hence if you want to create a new subscription you cannot reuse the metadata that come with the SelfSTS described in the last section, as those are already tied to the preprovisioned AdventureWorks instance. At the same time, we don’t want to force you to modify that instance of SelfSTS, as it would make it impossible for you to get to AdventureWorks again (unless you re-download the companion).

The second SelfSTS, which you can find in C:\FabrikamShippingSaaS_Companion\assets\SelfSTS\SelfSTS\bin\Release, out of the box is a copy of the AdventureWorks one. There was no point making it different, because you have to modify it anyway (remember, you are sharing the sample with everybody else who downloaded the companion hence you all need to have different metadata if you want to create new subscriptions). Creating different metadata is pretty simple, in fact all you need to do is to generate a new certificate (the introductory post about SelfSTS explains how) and you’re done.

The last thing you need to do before being ready to use that copy of SelfSTS in the new subscription wizard is to generate the metadata file itself. I often get feature requests about generating the metadata document file from the SelfSTS, but in fact it is very simple to get one already with today’s UI:

- Click Start on the SelfSTS UI

- Hit the “C” button on the right of the Metadata field; that will copy to the clipboard the address from which SelfSTS serves the metadata document

- Open notepad, File->Open, paste the metadata address: you’ll get the metadata bits

- Save in a file with .XML extension
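
If you prefer a script to the Notepad trick, the same steps can be sketched in Python as follows. This is only an illustration: the function name is mine, and the address you pass in is whatever the “C” button copied from your running SelfSTS (I am not assuming any particular address here).

```python
import urllib.request
import xml.etree.ElementTree as ET

def save_metadata(metadata_url, out_path):
    """Download the metadata document served at `metadata_url` and save it to `out_path`.

    The bytes are parsed first, so a response that is not well-formed XML
    raises xml.etree.ElementTree.ParseError instead of producing a bad file.
    Returns the tag of the document's root element as a quick sanity check.
    """
    with urllib.request.urlopen(metadata_url) as response:
        data = response.read()
    root = ET.fromstring(data)
    with open(out_path, "wb") as out_file:
        out_file.write(data)
    return root.tag

# Usage (SelfSTS must be started first; paste the address copied from its UI):
# save_metadata("<address copied from SelfSTS>", "FederationMetadata.xml")
```

The call is left commented out because it only works while SelfSTS is listening.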

Et voila’! Metadata document file a’ la carte, ready to be consumed by the subscription wizard. By the way, did I mention that we provision really few enterprise instances and if you want to experience the demo there’s a preprovisioned instance you can go thru? Ah right, that was 1/2 the post so I did mention that. I’m sorry, it must be the daylight savings.

Neext?

Phew, long post is long. This was the second path you can take through FabrikamShipping SaaS to experience how the demo approached the tradeoffs entailed in putting together a SaaS solution. There is a third one, and it’s in fact a sub-path of the Enterprise edition: it shows how an Enterprise instance of FabrikamShipping SaaS offers not only web pages, but also web services which can be used to automate some aspects of the shipping processes. The web services aren’t too interesting per se, what is interesting is how they are secured: using OAuth2 and the autonomous profile for bridging between a business IP and REST services, all going through ACS, of course still being part of the dynamic provisioning that characterized the generation of the instance as a whole. That scenario is going to be the topic of the third and last installment of the “Fun with FabrikamShipping SaaS” series: after those, I’ll start slicing the sample to reach the code and uncover the choices we made, the solutions we found and the code you can reuse for handling the same issues in your own solutions.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

• The Windows Azure Team suggested on 3/15 that you Register Now for Webinar This Thursday, March 17, "Windows Azure CDN - New Features":

If you want to learn more about the new and upcoming features of the Windows Azure Content Delivery Network (CDN), don't miss the free Academy Live Webinar this Thursday, March 17 at 8:00 AM PDT, "Windows Azure CDN - New Features." Hosted by Windows Azure CDN program management lead Jason Sherron, this session will include a quick review of the Windows Azure CDN, as well as an overview of new and soon-to-be released features. There will be time for Q&A during and following the presentation.

Click here to learn more about this session and to register.

Shaun Xu described CDN on Hosted Service in Windows Azure in a 3/10/2011 post (missed when posted):

Yesterday I told Wang Tao, an annoying colleague sitting beside me, how to enable the CDN for the static content of his website, which had just been published on Windows Azure. The approach would be

- Move the static content, the images, CSS files, etc. into the blob storage.

- Enable the CDN on his storage account.

- Change the URL of those static files to the CDN URL.

I think these are the very common steps when using the CDN. But this morning I found that the new Windows Azure SDK 1.4 and the new Windows Azure Developer Portal had just been published, as announced on the Windows Azure Blog. One of the new features in this release concerns the CDN: we can now enable the CDN not only for a storage account, but for a hosted service as well. With this new feature the steps I mentioned above become much simpler.

Enable CDN for Hosted Service

To enable the CDN for a hosted service we just need to log on to the Windows Azure Developer Portal. Under the “Hosted Services, Storage Accounts & CDN” item we will find a new menu on the left hand side labeled “CDN”, where we can manage the CDN for storage accounts and hosted services. As we can see, the hosted services and storage accounts are all listed under my subscriptions.

Enabling the CDN for a hosted service is very simple: just select a hosted service and click the New Endpoint button on top.

In this dialog we can select the subscription and the storage account or hosted service for which we want to enable the CDN. If we select a hosted service, as I did in the image above, the “Source URL for the CDN endpoint” is shown automatically. This means the Windows Azure platform will make all content under the “/cdn” folder CDN-enabled. We cannot change this value at the moment.

The following 3 checkboxes next to the URL are:

- Enable CDN: Enable or disable the CDN.

- HTTPS: If we need to use HTTPS connections check it.

- Query String: If we are caching content from a hosted service and we are using query strings to specify the content to be retrieved, check it.

Just click the “Create” button to let Windows Azure create the CDN endpoint for our hosted service. According to Microsoft, the CDN will be available within 60 minutes. In my experience the CDN could be used after about 15 minutes, and we can find the CDN URL in the portal as well.

Put the Content in CDN in Hosted Service

Let’s create a simple Windows Azure project in Visual Studio with an MVC 2 Web Role. When we created the CDN endpoint mentioned above, its source URL pointed to the “/cdn” folder. So in Visual Studio we create a folder named “cdn” under the website and put some static files there. All these files will then be cached by the CDN if we request them through the CDN endpoint.

The CDN of the hosted service can also cache some kinds of “dynamic” results when the Query String feature is enabled. We create a controller named CdnController with a GetNumber action in it. The routed URL of this controller is /Cdn/GetNumber, which can be CDN-ed as well since the URL is under the “/cdn” folder. In the GetNumber action we just pass the number value specified by the parameter to the view as its model, so the URL looks like /Cdn/GetNumber?number=2.

    using System;
    using System.Collections.Generic;
    using System.Linq;
    using System.Web;
    using System.Web.Mvc;

    namespace MvcWebRole1.Controllers
    {
        public class CdnController : Controller
        {
            //
            // GET: /Cdn/

            public ActionResult GetNumber(int number)
            {
                return View(number);
            }

        }
    }

And we add a view to display the number which is super simple.

    <%@ Page Title="" Language="C#" MasterPageFile="~/Views/Shared/Site.Master" Inherits="System.Web.Mvc.ViewPage<int>" %>

    <asp:Content ID="Content1" ContentPlaceHolderID="TitleContent" runat="server">
        GetNumber
    </asp:Content>

    <asp:Content ID="Content2" ContentPlaceHolderID="MainContent" runat="server">

        <h2>The number is: <%: Model.ToString() %></h2>

    </asp:Content>

Since this action is under the CdnController, its URL falls under the “/cdn” folder, which means it can be CDN-ed. And since we checked “Query String”, the content of this dynamic page will be cached by its query string. So if I use the CDN URL, http://az25311.vo.msecnd.net/GetNumber?number=2, the CDN will first check whether there’s any content cached with the key “GetNumber?number=2”. If yes, the CDN will return the content directly; otherwise it will connect to the hosted service, http://aurora-sys.cloudapp.net/Cdn/GetNumber?number=2, send the result back to the browser and cache it in the CDN.
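
The lookup just described can be modeled with a toy cache. This Python sketch is only an illustration of the behavior as I understand it, not how the Windows Azure CDN is implemented: the class and its members are made up, expiry and cache headers are ignored, and the origin is replaced by a stand-in function.

```python
from urllib.parse import urlsplit

# Source URL of the CDN endpoint: the "/cdn" folder of the hosted service.
ORIGIN_BASE = "http://aurora-sys.cloudapp.net/Cdn"

class EdgeCache:
    """Toy model of a CDN edge: look up by key, fall back to the origin on a miss."""

    def __init__(self, fetch_origin):
        self._fetch_origin = fetch_origin   # callable(url) -> content
        self._store = {}
        self.origin_requests = []           # origin URLs actually requested

    def get(self, cdn_url):
        parts = urlsplit(cdn_url)
        key = parts.path.lstrip("/")
        if parts.query:
            key += "?" + parts.query        # Query String option: raw query is part of the key
        if key not in self._store:
            origin_url = ORIGIN_BASE + "/" + key
            self.origin_requests.append(origin_url)
            self._store[key] = self._fetch_origin(origin_url)
        return self._store[key]

# Stand-in for the hosted service; the real origin would render the MVC view.
cache = EdgeCache(lambda url: "rendered " + url)
first = cache.get("http://az25311.vo.msecnd.net/GetNumber?number=2")
second = cache.get("http://az25311.vo.msecnd.net/GetNumber?number=2")
print(cache.origin_requests)
```

The second request is served from the store, so the origin is contacted only once.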

But note that the query string is treated as a plain string when it is used as the key of a CDN element. This means the URLs below would be cached as 2 separate elements in the CDN:

- http://az25311.vo.msecnd.net/GetNumber?number=2&page=1

- http://az25311.vo.msecnd.net/GetNumber?page=1&number=2
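
One way to avoid caching the same page twice is to always emit the query parameters in a fixed order when building links. A minimal Python sketch of that idea follows; the helper name is mine, and sorting by parameter name is just one possible convention.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def canonical_url(url):
    """Return `url` with its query parameters sorted, so that equivalent
    URLs become the same string (and therefore the same cache key)."""
    parts = urlsplit(url)
    pairs = sorted(parse_qsl(parts.query, keep_blank_values=True))
    return urlunsplit((parts.scheme, parts.netloc, parts.path,
                       urlencode(pairs), parts.fragment))

a = canonical_url("http://az25311.vo.msecnd.net/GetNumber?number=2&page=1")
b = canonical_url("http://az25311.vo.msecnd.net/GetNumber?page=1&number=2")
print(a == b)
```

The normalization has to happen where the links are generated, since the comparison on the CDN side remains a plain string match.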

The final step is to upload the project onto azure.

Test the Hosted Service CDN

After publishing the project on Azure, we can use the CDN in the website. The CDN endpoint we created is az25311.vo.msecnd.net, so all files under the “/cdn” folder can be requested through it. Let’s try the sample.htm and c_great_wall.jpg static files.

Also we can request the dynamic page GetNumber with the query string with the CDN endpoint.

And if we refresh this page it will be shown very quickly, since the content comes from the CDN without any MVC server-side processing.

The style of this page was missing. This is because the CSS file was not included in the “/cdn” folder, so the page cannot retrieve the CSS file from the CDN URL.

Summary

In this post I introduced the new feature in the Windows Azure CDN that came with the release of Windows Azure SDK 1.4 and the new Developer Portal. With the CDN for a hosted service we can just put the static resources under a “/cdn” folder so that the CDN can cache them automatically, with no need to put them into blob storage. It also supports caching dynamic content with the Query String feature, so we can cache some parts of a web page by using the UserController and the CDN. For example, we can cache the log on user control in the master page so that the log on part will be loaded super-fast.

There are some other new features within this release you can find here. And for more detailed information about the Windows Azure CDN please have a look here as well.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Yasser Abdel Kader announced the March Update for Visual Studio 2010 and .NET Framework 4 Training Course Just Released with Windows Azure features on 3/15/2011:

Microsoft just released the March update for the VS 2010 and .Net 4.0 Training Kit. It includes Videos, Hands-on-Labs for: C# 4.0, Visual Basic 10, F#, ASP.NET 4, parallel computing, WCF, Windows Workflow, WPF, Silverlight and Windows Azure. The kit now contains 50 labs, 22 demos, 16 presentations and 12 videos.

For Windows Azure, there are two new labs (Introduction and Debugging Application), two new demo scripts for Hello Windows Azure Application and Deploying Windows Azure Services, one new presentation for Platform Overview and a new video for What is Windows Azure.

For Silverlight, there are new hands-on labs for Migrating Windows Forms / ASP.Net Web Forms Applications to Silverlight, Working with Panels, XAML and Controls, Silverlight Data Binding, Migrating Existing Applications to Out-of-Browser, Great UX with Blend, Web Services and Silverlight, Using WCF RIA Services, Deep Dive into Out of Browser and Using the MVVM Pattern in Silverlight Applications.

Steve Plank (@plankytronixx) posted Windows Azure and Open Source Applications on 3/14/2011:

Whenever most of us think of OSS, we think in terms of a stack with Linux at the bottom, providing the OS platform and say PHP at the top with say a CMS app atop that. It’s often forgotten of course, that there’s a healthy and thriving community of developers who write OSS code to run on Windows (and therefore Windows Azure).

I guess the web apps that come to mind for me are those like Umbraco, Wordpress, Drupal, Joomla and so on. WebMatrix has done a good job of catering for the needs of one community of developers by making the creation and deployment of such sites as simple as possible.

For Windows Azure developers, there is a tool called the Windows Azure Companion. It’s now in its March 2011 CTP release and can be downloaded from here. I’m mentioning all this because it appears to be a fantastically well-guarded secret among the cloud community. It’s pretty well known among OSS and interop folks, but it’s a really cool piece of cloud technology that I think deserves a much wider audience. I wonder how many people know there is an installer that can install most of your favourite OSS web applications, plus platform-level components such as, say, MySQL, on to your Windows Azure subscription for you…

One important reason to load the March CTP, if you happen to already have the old version of the companion, is because it’s built using the recent refresh of the SDK (v 1.4).

It works like this:

- Download the .cspkg file from the Windows Azure Companion site. This file contains all the application code needed to run the installer.

- Download the .cscfg file from the Windows Azure Companion site. This contains not only the configuration for the installer but also points to the list of apps you want to install. You need to edit the file to point at the app feed file.

- Edit the .cscfg file to point to the app feed file

- The app feed file points to the applications you want to deploy, for example, this entry points to Drupal

- …and this entry points to MySQL

- Once you have deployed the .cspkg and .cscfg to Windows Azure, an installer will fire up on port 8080 at your specified URL, say http://myDrupal.cloudapp.net:8080. It has to run on port 8080 because the actual application (say Drupal) you want to install, will run on port 80, later. All you have deployed at this stage is the installer itself.

- The installer gets some configuration information from the .cscfg that you edited in step 3. It consults the app feed and displays a list of applications and platform level packages you might want to install (as defined in the app feed).

- The installer installs the application(s), in this case Drupal.

- The application is installed – you can go to the application’s URL, say, http://myDrupal.cloudapp.net on port 80 and there you have it, a fully deployed OSS application on Windows Azure.
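
The “edit the .cscfg” step above can also be scripted. The Python sketch below rewrites one setting in a service configuration file; the XML namespace is the standard ServiceConfiguration one, but the setting name AppFeedUrl, the role name and the feed address are placeholders of mine. Use the setting name that actually appears in the .cscfg you downloaded.

```python
import xml.etree.ElementTree as ET

NS = "http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"

def set_setting(cscfg_text, name, value):
    """Return the .cscfg XML with every <Setting name=...> called `name` set to `value`."""
    ET.register_namespace("", NS)           # keep the default namespace on output
    root = ET.fromstring(cscfg_text)
    matches = [s for s in root.iter("{%s}Setting" % NS) if s.get("name") == name]
    if not matches:
        raise KeyError("no setting named %r in the configuration" % name)
    for setting in matches:
        setting.set("value", value)
    return ET.tostring(root, encoding="unicode")

# Placeholder configuration; "AppFeedUrl" and "InstallerRole" are hypothetical names.
SAMPLE = """<ServiceConfiguration serviceName="Companion" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration">
  <Role name="InstallerRole">
    <Instances count="1" />
    <ConfigurationSettings>
      <Setting name="AppFeedUrl" value="" />
    </ConfigurationSettings>
  </Role>
</ServiceConfiguration>"""

updated = set_setting(SAMPLE, "AppFeedUrl", "http://example.com/appfeed.xml")
print(updated)
```

Raising an error when the setting is missing is deliberate: deploying a package whose configuration silently lacks the feed address would only fail later, inside the installer.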

Above is a video, put together by the “Interop Technologies” Microsoft Evangelist Craig Kitterman which shows how simple these steps are. In fact, one piece of the hard work has already been done in the video – the creation of the app feed file. The “Cloud/Web Famous” Maarten Balliauw has already broken that ground for us! Thanks Maarten.

To learn more, download the code, engage in forums etc, go to the Windows Azure Companion site.

David Chou described Cloud-optimized architecture and Advanced Telemetry in a 3/14/2011 post:

One of the projects I had the privilege of working with this past year is the Windows Azure platform implementation at Advanced Telemetry. Advanced Telemetry offers an extensible, remote, energy-monitoring-and-control software framework suitable for a number of use case scenarios.

One of their current product offerings is EcoView™, a smart energy and resource management system for both residential and small commercial applications. Cloud-based and entirely Web accessible, EcoView enables customers to view, manage, and reduce their resource consumption (and thus utility bills and carbon footprint), all in real-time via the intelligent on-site control panel and remotely via the Internet.

Much more than Internet-enabled thermostats and device end-points, “a tremendous amount of work has gone into the core platform, internally known as the TAF (Telemetry Application Framework) over the past 7 years” (as Tom Naylor, CEO/CTO of Advanced Telemetry wrote on his blog), which makes up the server-side middleware system implementation, and provides the intelligence to the network of control panels (with EcoView being one of the applications), and an interesting potential third-party application model.

The focus of the Windows Azure platform implementation was moving the previously hosted server-based architecture into the cloud. Advanced Telemetry completed the migration in 2010, and the Telemetry Application Framework is now running on the Windows Azure platform. Tom shared some insight from the experience in his blog post “Launching Into the Cloud”. And of course, this effort was also highlighted as a Microsoft case study on multiple occasions:

- Energy Monitoring Firm Saves Money, Scales Business with Hosted Computing Platform

- Startup Uses Cloud Computing to Change Business Model, Becomes Instantly Profitable

- SQL Azure team blog’s Interview with Tom Naylor, CTO of Advanced Telemetry

- Windows Azure AppFabric team blog’s How Advanced Telemetry became instantly profitable after migrating to the Windows Azure Platform

- Channel 9 Video - Building on Azure: Advanced Telemetry

The Move to the Cloud

As pointed out by the first case study, the initial motivation to adopt cloud computing was driven by the need to reduce operational costs of maintaining an IT infrastructure, while being able to scale the business forward.

“We see the Windows Azure platform as an alternative to both managing and supporting collocated servers and having support personnel on our side dedicated to making sure the system is always up and the application is always running,” says Tom Naylor. “Windows Azure solves all those things for us effectively with the redundancy and fault tolerance we need. Because cost is based on usage, we’ll also be able to much more accurately assess our service fees. For the first time, we’ll be able to tell exactly how much it costs to service a particular site.”

For instance, in the Channel 9 video, Tom mentioned that replicating the co-located architecture from Rackspace to the Windows Azure platform resulted in approximately 75% cost reduction on a monthly basis in addition to other benefits. One of the major ‘other’ benefits is agility, which arguably is much more valuable than the cost reduction normally associated with cloud computing benefits. In fact, as the second case study pointed out, in addition to breaking ties to an IT infrastructure, the Windows Azure platform became a change enabler that supported the shift to a completely different business model for Advanced Telemetry (from a direct market approach to that of an original equipment manufacturer (OEM) model). The move to the Windows Azure platform provided the much needed scalability (of the technical infrastructure), flexibility (to adapt to additional vertical market scenarios), and manageability (maintaining the level of administrative efforts while growing the business operations). The general benefits cited in the case study were:

- Opens New Markets with OEM Business Model

- Reduces Operational Costs

- Gains New Revenue Stream

- Improves Customer Service

Cloud-Optimized Architecture

However, this is not just another simple story of migrating software from one data center to another data center. Tom Naylor understood well the principles of cloud computing, and saw the value in optimizing the implementation for the cloud platform instead of just using it as a hosting environment for the same thing from somewhere else. I discussed this in more detail in a previous post Designing for Cloud-Optimized Architecture. Basically, it is about leveraging cloud computing as a way of computing and as a new development paradigm. Sure, conventional hosting scenarios do work in cloud computing, but there is more value and benefits to gain if an application is designed and optimized specifically to operate in the cloud, and built using unique features from the underlying cloud platform.

In addition to the design principles around the fundamental “small pieces, loosely coupled” concept I discussed previously, another aspect of the cloud-optimized approach is to think about storage first, as opposed to thinking about compute. This is because, in cloud platforms like the Windows Azure platform, we can build applications using cloud-based storage services such as Windows Azure Blob Storage and Windows Azure Table Storage, which are horizontally scalable distributed storage systems that can store petabytes and petabytes of data and content without requiring us to implement and manage the infrastructure. This is, in fact, one of the significant differences between cloud platforms and traditional outsourced hosting providers.

In the Channel 9 video interview, Tom Naylor said “what really drove us to it, honestly, was storage”. He mentioned that the Telemetry Application Platform currently handles about 200,000 messages per hour, each containing up to 10 individual point updates (which roughly equates to 500 updates per second). While this level of traffic volume isn’t comparable to the top websites in the world, it still poses significant issues for a startup company to store and access the data effectively. In fact, the data volume required the Advanced Telemetry team to cull the data periodically in order to keep the operational data at a relatively workable size.
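
As a quick check of the throughput figure quoted above, here is the arithmetic in Python. It uses the upper bound of 10 updates per message, so the result is a ceiling rather than a measured rate.

```python
messages_per_hour = 200_000
max_updates_per_message = 10       # "up to 10", so this gives an upper bound
seconds_per_hour = 3600

updates_per_second = messages_per_hour * max_updates_per_message / seconds_per_hour
print(round(updates_per_second))   # 556
```

With fewer than 10 updates in a typical message, the real rate lands somewhat below that ceiling, which is consistent with the “roughly 500” estimate.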

“We simply broke down the functional components, interfaces and services and began replicating them while taking full advantage of the new technologies available in Azure such as table storage, BLOB storage, queues, service bus and worker roles. This turned out to be a very liberating experience and although we had already identified the basic design and architecture as part of the previous migration plan, we ended up making some key changes once unencumbered from the constraints inherent in the transitional strategy. The net result is that in approximately 6 weeks, with only 2 team members dedicated to it (yours truly included), we ended up fully replicating our existing system as a 100% Azure application. We were still able to reuse a large percentage of our existing code base and ended up keeping many of the database-driven functions encapsulated in stored procedures and triggers by leveraging SQL Azure.” Tom Naylor described the approach on his blog.

The application architecture employed many cloud-optimized designs, such as:

- Hybrid relational and NoSQL data storage – SQL Azure for data that is inherently relational, and Windows Azure Table Storage for historical data and events, etc.

- Event-driven design – Web roles receiving messages act as the event capture layer and asynchronously off-load processing to Worker roles
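The event-driven split between Web and Worker roles can be sketched in a few lines. This is a minimal in-process illustration, not Advanced Telemetry's code: Python's standard `queue.Queue` stands in for a cloud queue, a thread stands in for a Worker role, and the function names and message shape are assumptions.

```python
import queue
import threading

work_queue = queue.Queue()  # stand-in for a cloud queue between the roles
processed = []              # stand-in for durable storage


def web_role_receive(message):
    """Capture the event and return at once; no processing happens here."""
    work_queue.put(message)
    return "accepted"


def worker_role_loop():
    """Drain the queue and do the slow work off the request path."""
    while True:
        message = work_queue.get()
        if message is None:  # sentinel: shut the worker down
            work_queue.task_done()
            break
        processed.append(
            {"device": message["device"], "points": len(message["updates"])}
        )
        work_queue.task_done()


worker = threading.Thread(target=worker_role_loop)
worker.start()
web_role_receive({"device": "meter-1", "updates": [1.0, 2.5, 3.1]})
web_role_receive({"device": "meter-2", "updates": [7.2]})
work_queue.put(None)
worker.join()
print(processed)
```

Because the capture layer only enqueues, it stays fast under load, and the number of workers can be changed without touching the code that receives messages.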

Lessons Learned

In the real world, things rarely go completely as anticipated or planned, and that was the case for this real-world implementation as well. :) Tom Naylor was very candid about some of the challenges he encountered:

- Early adopter challenges and learning new technologies – Windows Azure Table and Blob Storage, and Windows Azure AppFabric Service Bus are new technologies and have very different constructs and interaction methods

- “The way you insert and access the data is fairly unique compared to traditional relational data access”, said Tom, such as the use of “row keys, combined row keys in table storage and using those in queries”

- Transactions – the initial design was very asynchronous (store in Windows Azure Blob storage and put in Windows Azure Queue), but that resulted in a lot of transactions and significant costs under the per-transaction charge model for Windows Azure Queue. The team had to leverage Windows Azure AppFabric Service Bus to reduce that impact
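Tom's remark about combined row keys can be made concrete with a small sketch. Table Storage identifies each entity by a partition key and a row key, both strings, and returns entities in key order, so a row key built from several fields lets a time-range query become a simple key-range scan. The key layout, names and sample data below are hypothetical, and a sorted in-memory list stands in for the table; this is not the Telemetry Application Platform's actual schema.

```python
from bisect import bisect_left, bisect_right


def make_row_key(point_id, timestamp):
    """Combine point id and zero-padded epoch seconds so keys sort by point, then time."""
    return f"{point_id}_{timestamp:010d}"


# Rows kept sorted by (partition key, row key), mimicking key-ordered storage.
rows = sorted(
    ("building-7", make_row_key(point, ts), value)
    for point, ts, value in [
        ("temp", 1300000000, 21.5),
        ("temp", 1300000600, 21.9),
        ("temp", 1300001200, 22.4),
        ("humidity", 1300000000, 40.0),
    ]
)


def query_range(partition_key, point_id, start_ts, end_ts):
    """Return values for one point within [start_ts, end_ts] via a key-range scan."""
    keys = [(pk, rk) for pk, rk, _ in rows]
    low = (partition_key, make_row_key(point_id, start_ts))
    high = (partition_key, make_row_key(point_id, end_ts))
    return [v for _, _, v in rows[bisect_left(keys, low):bisect_right(keys, high)]]


print(query_range("building-7", "temp", 1300000000, 1300000600))  # prints [21.5, 21.9]
```

Zero-padding the timestamp matters here: string keys compare lexicographically, so unpadded numbers of different lengths would sort out of time order.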

The end result is an application that is horizontally scalable, allowing Advanced Telemetry to elastically scale the deployments of individual layers up or down according to capacity needs, as the different application layers are nicely decoupled from each other and the application is decoupled from horizontally scalable storage. Moreover, the cloud-optimized architecture supports both multi-tenant and single-tenant deployment models, enabling Advanced Telemetry to support customers who have higher data isolation requirements.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework v4

• Beth Massi (@bethmassi) posted Visual Studio LightSwitch Beta 2 Released! on 3/15/2011 at 12:58 PM PDT:

Wow, what a busy morning! I’m super excited that we announced the release of Visual Studio LightSwitch Beta 2 today! Check out the team blog post for details:

Visual Studio LightSwitch Beta 2 Released with Go Live License!

We've also got a lot of new content and a fresh look for the LightSwitch Developer Center on MSDN so check it out! Whether you are just beginning or have been using LightSwitch for a while, we've got something for you.

We've also done a major overhaul of the LightSwitch Developer Learning Center to better organize learning topics, blog articles, tips & tricks, and documentation, which allows us to easily roll out more training content each week. You can access training, samples, How Do I Videos, articles and more here.

We'll also be updating the Training Kit (that you can also access from the Learning Center) on Thursday for the public release. Also check out our team blogs and bloggers for more information on LightSwitch. We're in the process of updating all our Beta 1 blog posts to Beta 2 so keep checking back.

- LightSwitch Team Blog

- Beth Massi's Blog

- Robert Green's Blog

- Matt Thalman's Blog

- See the Dev Center Community page for more from the community

Also make sure to ask questions in the LightSwitch Forums; we have the majority of the team hanging out there, ready to help with any questions or issues you are having.

Michael Desmond asserted “The second pre-release version of Microsoft's wizard-based, rapid business application development tool adds cloud and extensibility features” in a deck for his Microsoft Releases LightSwitch Beta 2 report of 3/15/2011:

Microsoft today announced at its Developer Tools Partner Summit in Redmond that Beta 2 of the Visual Studio LightSwitch rapid business application development tool is available for immediate download [see article below]. LightSwitch Beta 1 was announced at the Visual Studio Live! event in August 2010. Dave Mendlen, senior director of Developer Marketing at Microsoft, said the final shipping version of LightSwitch will be released "later this year."

The new pre-release version of LightSwitch offers two significant new capabilities, Mendlen said.

"With Beta 2 we've introduced some new functionality. The first is we added Windows Azure publishing, which is now fully integrated. The second is extensibility. Anyone with a copy of Visual Studio Pro can, starting with LightSwitch Beta 2, build extensions for LightSwitch," Mendlen said.

LightSwitch Beta 2 also addresses an incompatibility between the earlier pre-release version of LightSwitch (Beta 1) and the recently released Visual Studio 2010 Service Pack 1 (SP1). Visual Studio developers who have upgraded to VS2010 SP1 must upgrade to LightSwitch Beta 2 to work with LightSwitch. Also, LightSwitch Beta 2 will not work with the RTM version of VS2010.

Extensions and Cloud

The new capabilities in LightSwitch Beta 2 draw the tool into line with Microsoft's broad cloud computing strategy. Windows Azure publishing will enable LightSwitch developers to easily deploy their applications to either the desktop or the cloud. Mendlen described the tool as offering "simple and fast" LOB application creation "for desktop and cloud."

LightSwitch Beta 2 support for extensions will certainly appeal to attendees at the Developer Tools Partner Summit, an invitation-only event for Microsoft ecosystem partners that build and market tools for the Microsoft development stack. Mendlen said LightSwitch extensions can include screens, business templates, data sources, business types and controls. He singled out a pair of working LightSwitch extensions as examples: an Infragistics custom shell extension that enables a Windows Phone 7 Metro-like, touch-enabled UI, and a ComponentOne pivot table control that offers Excel-like data manipulation.

Mendlen said the focus was to build a robust ecosystem of third-party providers around LightSwitch. "Don't go crazy trying to make a pivot table. Just go buy one and you'll have that functionality for you," he said, adding, "We have more extensibility points and more places to monetize than just the traditional control vendor model."

Visual Studio LightSwitch is aimed at business analysts and power users who today often create ad-hoc business logic in applications like FileMaker Pro or Microsoft Excel and Access. Based on Visual Studio, LightSwitch offers a visual, wizard-driven UI that allows business users to craft true, .NET-based applications with rich data bindings. Unlike ad-hoc development, the .NET code produced by LightSwitch can be seamlessly imported into Visual Studio for professional developers to inspect, edit and extend.

Also announced at the Developer Tools Partner Summit was a program that gives Visual Studio Ultimate Edition license holders free access to unlimited virtual users with Microsoft's load testing tool and agent. Ultimate Edition users will get a license key to generate unlimited users with the Visual Studio 2010 Load Test Feature Pack, without having to buy the Visual Studio Load Test Virtual User Pack 2010. The Load Test Virtual User Pack normally costs $4499 per pack supporting 1000 virtual users.

"I saw an estimate from one customer that this could be a million dollars in cost savings for them. It's massive," said Mendlen. "It's free and we're making it available to Ultimate customers forever. If you have Ultimate you get this value."

The Visual Studio LightSwitch Team announced Visual Studio LightSwitch Beta 2 Released with Go Live License! on 3/15/2011 at 10:02 AM:

We are extremely happy to announce the release of Microsoft® Visual Studio® LightSwitch™ Beta 2! MSDN subscribers can access LightSwitch Beta 2 today and public availability will be Thursday, March 17th. Please see Jason Zander’s post that walks through some of the new features.

- MSDN Subscribers download LightSwitch Beta 2 now!

- The general public can download Beta 2 on Thursday at 10 AM PDT here

Read What’s New in Beta 2 for information on new capabilities in this release. We’d also like to announce that Beta 2 comes with a “Go Live” license which means you can now start using Visual Studio LightSwitch for production projects!

We’ve also done some major updates to the LightSwitch Developer Center with How Do I videos based on Beta 2 as well as a new and improved Learning Center and Beta 2 samples to get you up to speed fast, whether you’re a beginner or advanced developer. We’ll be rolling out more in-depth content and training in the coming weeks so keep checking back.

INSTALLATION NOTES: Visual Studio 2010 Express, Professional, Premium, Test Professional or Ultimate users must install Visual Studio 2010 SP1 before installing Visual Studio LightSwitch Beta 2. Visual Studio LightSwitch Beta 1 users should uninstall Beta 1 before installing Beta 2. Also see the Beta 2 readme for late breaking issues. These are known incompatible releases with Visual Studio LightSwitch Beta 2 (see links for details on compatibility and workarounds):

- Visual Studio Async CTP - known compatibility details (see *Async CTP Issues with VS2010 SP1* announcement)

- Windows SDK 7.1 - known compatibility details

- Help Viewer Power Tool – known compatibility details

PROJECT UPGRADE NOTES: Due to the many improvements in Beta 2, projects created in Beta 1 cannot be opened or upgraded. You will need to recreate your projects and copy over any user code that you have added. You can get to the user code files by switching to File View in the Solution Explorer. Please note that some APIs have changed so you may need to update some of your code appropriately. At this point we do not plan to introduce breaking changes post-Beta 2 that will cause you to need to recreate your Beta 2 projects.

We want to hear from you! Please visit the LightSwitch Forums to ask questions and interact with the team and community. Have you found a bug? Please report it on Microsoft Connect.

Have fun building business applications with Visual Studio LightSwitch!

Jason Zander posted Announcing Microsoft® Visual Studio® LightSwitch™ Beta 2 on 3/15/2011 at 10:00 AM:

I’m happy to announce that as of 10:00 AM PDT today Microsoft® Visual Studio® LightSwitch™ Beta 2 is available for download! MSDN subscribers using Visual Studio 2010 can download the beta immediately with general availability on Thursday, March 17.

- Download LightSwitch Beta 2 (MSDN Subscribers with Visual Studio 2010)

- Thursday's 10:00 AM PDT general availability download will be here.

Please see “What’s New in Beta 2” for information on new capabilities, installation options, and compatibility notes for this release. Unfortunately, due to the many improvements in Beta 2, projects created in Beta 1 cannot be opened or upgraded. You can find instructions for moving your existing projects forward on the LightSwitch team blog.

We are happy to announce that Beta 2 comes with a “Go Live” license which means you can now start using Visual Studio LightSwitch for production projects!

Since the launch of Beta 1, the team has been heads down in working through your feedback and has made some improvements that I think you’ll agree are pretty cool.

- Publish to Azure: the Publish Wizard now provides the ability to publish a LightSwitch desktop or browser application to Windows Azure, including the application’s database to SQL Azure. The team is planning a detailed tutorial of this experience that will get posted on the team blog later this week.

- Improved runtime and design-time performance: Build times are 50% faster in Beta 2 and we have made the build management smarter to improve iterative F5 scenarios by up to 70%. LightSwitch Beta 2 applications will start up as much as 30% faster than Beta 1 applications. New features like static spans will include related data in a single query execution and improve the time to load data on a screen by reducing the total number of server round-trips. The middle tier data load/save pipeline has been optimized to improve throughput by up to 60%.

- Runtime UI improvements: Auto-complete box, better keyboard navigation, and improved end-user experience for long-running operations.

- Allow any authenticated Windows user: When Windows authentication was selected in a LightSwitch app, you previously needed to add the Windows users who are allowed to use the application in the User Administration screen of the running application. This was cumbersome in installations with a large number of Windows users, or when you just wanted to open the app up to all Windows users. The project properties UI now allows you to authenticate any Windows user in a LightSwitch application while still using the LightSwitch authorization subsystem to determine permissions for specific users.

LightSwitch Architectural Overview