Windows Azure and Cloud Computing Posts for 10/26/2011+

A compendium of Windows Azure, SQL Azure Database, AppFabric, Windows Azure Platform Appliance and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table, Queue and Hadoop Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Apps, Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Services

No significant articles today.

<Return to section navigation list>

SQL Azure Database and Reporting

No significant articles today.

<Return to section navigation list>

MarketPlace DataMarket and OData

No significant articles today.

<Return to section navigation list>

Windows Azure AppFabric: Apps, Access Control, WIF and Service Bus

Rick Garibay (rickggaribay) reported his New Article in CODE Magazine on Azure Service Bus Queues & Topics on 10/26/2011:

I am pleased to share that my new article on Azure Service Bus Queues and Topics has just been published by CODE Magazine.

CODE Magazine is a leading Microsoft technical journal with a worldwide in-print circulation in excess of 20,000 along with on-line, Xiine and Amazon Kindle media distribution. CODE is distributed to a combination of paid subscriptions, qualified requests, and newsstand sales. In addition, CODE Magazine has bonus distribution at targeted Microsoft-oriented conferences and targeted industry events throughout the year such as Microsoft Professional Developer Conference (PDC), Tech Ed, DevTeach, MVP Global Summit, DevConnections, Devscovery, QCon, Code Camps, and more!

Here is the link to the article: http://www.code-magazine.com/article.aspx?quickid=1112041

The article covers critical capabilities provided by Azure Service Bus for composing distributed messaging solutions for the hybrid enterprise and how the latest release delivers on the Software + Services vision that was first laid out over five years ago.

The new release adds Queues and Topics, which build on an already robust set of capabilities and introduce new levels of reliability for building loosely coupled distributed solutions across a variety of clients and services, be they on-premise, in the cloud, or a combination of the two.

There are many exciting changes happening within Microsoft around integration and middleware, and the release of Service Bus Brokered Messaging/Queues and Topics is a strong reflection of the commitment to the platform that I believe is going to make this new wave of innovation more exciting than ever before.

It has been a tremendous privilege to have the opportunity to work with the Azure Service Bus team and experiment with the early bits ahead of release. I’d like to thank Todd Holmquist-Sutherland, Clemens Vasters, Abhishek Lal and David Ingham for the unprecedented access to their team, resources and information as well as kindly and patiently answering my many questions over the last several weeks.

Long live messaging!

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Mary Jo Foley (@maryjofoley) reported Nokia's first Windows Phones: What's there, what's not in a 10/26/2011 post to her All About Microsoft blog for ZD Net:

Nokia took the wraps off its first two Windows Phones at the opening day of its Nokia World conference.

There are two of them: the Nokia Lumia 800 (the phone known as “SeaRay”) and the Lumia 710 (the phone codenamed “Sabre”). Both phones are built on the same 1.4 GHz Snapdragon processor and the “Mango” operating system release. The Lumia 800 features a 3.7-inch display and an 8 MP camera; the 710 has a 3.7-inch display and a 5 MP camera. The estimated retail price of the Lumia 800 is 420 euros (about $585 U.S.) and that of the 710 is 270 euros (about $376 U.S.).

The rumored third Windows Phone device — a more business-focused phone codenamed “Ace” was a no-show today (if it exists at all, which I believe it does).

Many on Twitter noted the first Nokia Windows Phones were solid, but lacking some of the features one might expect — like front-facing cameras, built-in NFC support and on-device storage of more than 16 GB.

There’s no official word as to when — and actually if — the Lumia 800 and 710 models will come to the U.S. Nokia officials promised a “portfolio” of Windows Phones will begin rolling out in the U.S. starting early next year, but didn’t actually say whether those two devices will be part of that portfolio.

We do know that Nokia is going to support CDMA and LTE in certain markets where it makes sense, but, again, no specific official promises. (ThisIsMyNext reporters noted that Verizon Wireless reps were at the show, which is a somewhat encouraging sign for us Windows Phone users on Verizon who still only have one model from which to choose, a year after Windows Phone debuted.)

What wasn’t lacking were Nokia’s thoughts and plans for marketing the new phones with the slogan “Amazing Every day.” During today’s kick-off keynote, company officials played up the way Mango features that are common to all Windows Phones will resonate with consumers and help differentiate Microsoft’s offerings from the Android and iPhone competition. …

Read more.

Steve Plank (@plankytronixx) described Androids, iPhone and Windows Phone–what could they all have in common in a 10/26/2011 post:

Yes – I know, touchscreens, apps, keyboards.

If you develop apps for any of these platforms, that’s great. And if, say, your app needs to do something a bit more meaty than the phone’s onboard processor can manage, you’ll almost certainly use processing power on the other end of an Internet connection.

You could build a meaty server and run the code in your own office. You could, if you are a bit bigger than that, use an Internet facing server in your own data-centre. You could use a VM at a hoster. You could provision that server portion of your app on to a public-cloud Infrastructure-as-a-Service (IaaS) provider or you could write your app and send it to a Platform-as-a-Service (PaaS) provider.

Were it me – there’d be almost no decision to make. The first option, buying a server, installing an OS then writing my app on top of that and getting it connected to the Internet from home – hmmm. For a start that sounds pretty expensive. I’d have to buy the hardware and possibly the Operating System (if I don’t choose an open-source option), write my app, deploy it, harden the platform itself and then get it securely connected to the Internet.

The next option, running this in my own data-centre, means I’d have to find the IT group that is going to maintain the service level this server will give me. There will be a fair cost associated with procuring and running the hardware and software (outside of my app I mean) and getting it to provide those services in a realistic and secure way.

I could go to a hoster and take out a contract for say a 12 month period and send them my OS image with my app installed. Again, the work of securing the OS and, importantly, in a hosting situation, keeping it up-to-date and secure is a fair old commitment. But at least I’d be using their infrastructure and rather than being hit with a big capital outlay at the start, I’d just get a monthly bill. It has its attractions – oh of course their big fat pipe to the Internet would especially help.

I could go to an IaaS provider. Aside from the commercial details around the contract and so on, I’d have very similar technical challenges. I wouldn’t be able to just concentrate on my application. I’d have to look after the OS, its configuration, security, patching and so on. But I could buy compute resources by-the-hour. Also, if I wrote my app to scale out by adding more instances of the app alongside the existing one, on new VMs, with the super-fast provisioning IaaS providers offer, I could respond to peaks and troughs in demand very quickly, within minutes. In an “elastic” fashion, as Amazon, the biggest IaaS cloud provider, would say. I’d have to write my app to work that way (stateless, running across multiple VMs), but there’d be a really big advantage to using an IaaS cloud for this.

But not being all that keen on configuring and securing server operating systems, or on the commitment their ongoing maintenance would put on me, I’d go to a PaaS provider. All I have to do is send them my application code and data and they’ll take care of the actual environment for me. Like hosters and IaaS providers, they have high-bandwidth Internet connections, and they tend to offer good availability SLAs, such as 99.95%, though I know that in reality availability tends to be considerably higher than that anyway.
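To put a figure like 99.95% in perspective, the downtime it permits is simple arithmetic: (1 - SLA) × length of the period. The Python sketch below assumes a 30-day month and a 365-day year; how a real SLA is measured, and what it excludes, is defined by the provider's SLA terms rather than by this calculation.

```python
def allowed_downtime_minutes(sla: float, period_minutes: float) -> float:
    """Return the downtime (in minutes) an availability SLA permits over a period.

    `sla` is a fraction, e.g. 0.9995 for 99.95%.
    """
    if not 0.0 <= sla <= 1.0:
        raise ValueError("sla must be between 0 and 1")
    return (1.0 - sla) * period_minutes


# Period lengths are simplifying assumptions (30-day month, 365-day year).
MONTH_MINUTES = 30 * 24 * 60   # 43,200 minutes
YEAR_MINUTES = 365 * 24 * 60   # 525,600 minutes

if __name__ == "__main__":
    print(f"per month: {allowed_downtime_minutes(0.9995, MONTH_MINUTES):.1f} min")
    print(f"per year:  {allowed_downtime_minutes(0.9995, YEAR_MINUTES):.1f} min")
```

At 99.95% that works out to about 21.6 minutes in a 30-day month, or roughly 4.4 hours (262.8 minutes) over a year.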

I work for Microsoft, so my preferred choice of PaaS provider would be Windows Azure. But I guess if I were a phone developer there’d be another reason too: the availability of toolkits that allow me to write apps for Android, iPhone and Windows Phone that use a Windows Azure backend. [Emphasis added.]

There are specific services such as Storage in blobs, queues or tables I might want to take advantage of because the phone itself is light on storage – especially if it’s already full of music and videos. Or maybe I’d like a really simple way of using Facebook usernames and passwords, or Live-ID, or Google or Yahoo or… I could take advantage of Push Notification services. The thing is, the phone toolkits plus Windows Azure mean it’s easy for me to concentrate on the only thing that creates value in an application – and that’s the application itself, rather than the infrastructure it runs on.

I’d only have to write phone and Azure code, and I could rest assured that when an important security patch is released it will simply be applied to my service in a co-ordinated way by the back-end service; I not only don’t have to be bothered with it, I don’t even have to be aware of it. It will happen automatically. Managing infrastructure doesn’t really bring any true value in and of itself. It’s necessary, but if I can get all that from a provider who gives me an SLA, then I’m all ears.

And then if I wrote my app the same way I might for an IaaS provider (stateless, multi-instance, scale-out etc.), I could still take advantage of the fast provisioning and de-provisioning of servers, because provisioning of servers is similarly fast in Windows Azure. So I can scale out and back in, in an elastic fashion. All I need to do is set the instance count on a service, apply it and bingo! A few minutes later my service has been scaled out. And if it was right in the middle of having a mission-critical security patch applied at the time, I don’t worry about the infrastructure: the Windows Azure fabric will sequence everything correctly and take care of that for me.

Or alternatively, I could use the service management API inside my server-app. It could monitor its own performance and call the methods to scale the service out to say 4 instances (entire VMs with the app and all configuration automatically applied) at busy times and then when the app quietens down, to scale it back down to say 2.
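The self-scaling idea in the previous paragraph comes down to two pieces: a policy that picks an instance count, and an edit to the role's `<Instances count="N" />` element in ServiceConfiguration.cscfg, which is where a role's instance count is set. The Python sketch below covers both under stated assumptions: the CPU thresholds are hypothetical, the 2 and 4 instance bounds are simply the example numbers above, and submitting the edited configuration through the Service Management API is deliberately left out.

```python
import xml.etree.ElementTree as ET

# XML namespace of Windows Azure service configuration (.cscfg) files.
CSCFG_NS = "http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"
ET.register_namespace("", CSCFG_NS)  # serialize without an "ns0:" prefix


def choose_instance_count(cpu_percent: float, current: int,
                          low: int = 2, high: int = 4,
                          scale_out_at: float = 75.0,
                          scale_in_at: float = 25.0) -> int:
    """Hypothetical policy: go to `high` when busy, back to `low` when quiet.

    Between the two thresholds the current count is kept, so the service
    does not flap between sizes on small load changes.
    """
    if cpu_percent >= scale_out_at:
        return high
    if cpu_percent <= scale_in_at:
        return low
    return current


def set_instance_count(cscfg_xml: str, role_name: str, count: int) -> str:
    """Return the configuration XML with the named role's instance count replaced."""
    root = ET.fromstring(cscfg_xml)
    for role in root.findall(f"{{{CSCFG_NS}}}Role"):
        if role.get("name") == role_name:
            instances = role.find(f"{{{CSCFG_NS}}}Instances")
            if instances is None:
                raise ValueError(f"role {role_name!r} has no <Instances> element")
            instances.set("count", str(count))
            return ET.tostring(root, encoding="unicode")
    raise KeyError(f"role {role_name!r} not found")
```

For example, with CPU at 90% and two running instances, `choose_instance_count(90, 2)` returns 4, and `set_instance_count(cfg, "WebRole1", 4)` produces the configuration that would then be uploaded.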

This isn’t an example of a phone-specific app, but take a listen to what Mark Bower at Cube Social says about scaling their social CRM app in this 3 minute video.

Cube Social’s Experience with Windows Azure

- Get the Android Toolkit for Windows Azure here

- Get the iOS Toolkit for Windows Azure here

- Get the Windows Phone Toolkit for Windows Azure here

In my opinion, if you are at your core a developer, not an infrastructure guy, and you want the easiest way to be productive and get not only your code but also the service you are creating out of the door on time, especially for mobile apps, there’s no better combination than Windows Azure plus one of these toolkits.

If you are a UK phone developer and you want to understand more about Windows Azure, come on one of our UK bootcamps. The very next day, you can follow it up with a Windows Phone Bootcamp. They’re free. Next dates are:

Windows Azure Bootcamps:

Windows Phone Bootcamps:

BusinessWire reported Red Gate Acquires Cerebrata: Two companies with singular vision unite in Windows Azure market in a 10/26/2011 press release:

CAMBRIDGE, U.K., Oct 26, 2011 (BUSINESS WIRE) -- Red Gate announced today that it has acquired Cerebrata, the maker of user-acclaimed tools for developers building on the Microsoft Windows Azure platform. The agreement brings together two companies that share a user-first philosophy and a passion for tools that transform the way developers work.

"We used Red Gate's .NET tools internally to build Cerebrata products and I've seen the resources they dedicate to creating the ultimate user experience," says Gaurav Mantri, Cerebrata's founder and head of technology. "Working with a similar user base, shared values, and complementary technical expertise, we can expand and enrich the Windows Azure market."

Same team, new resources

Cerebrata will continue to operate its own web site and the entire development team will be kept in place for the foreseeable future.

"Cerebrata will continue to be run as Gaurav's citadel," says Luke Jefferson, Red Gate's Windows Azure product manager. "We want to tap into his incredible technical knowledge and help his team by automating processes and providing our user-experience expertise."

New products on the way

Cerebrata is rewriting its three core products, with new versions of Azure Diagnostic Manager and Cloud Storage Studio slated for release in January 2012. Shortly after, Cerebrata will release an all-in-one tool called Azure Management Studio -- a single product that will handle storage, applications, diagnostics and other critical tasks for Azure developers.

Excitement among users

Users familiar with the two companies are excited about what's ahead for this collaboration.

"As a user of both companies' tools, I'm excited about this combination of Cerebrata's leading-edge products with Red Gate's proven track record for delivering high-quality, easy-to-use tools," says James Smith, head of software development at Adactus, a provider of customized software development services for eBusiness and eCommerce. "I believe that using Cerebrata/Red Gate tools will continue to yield major benefits for our business."

Please visit the Cerebrata and Red Gate websites for more information on products and services.

SOURCE: Red Gate

Copied from Windows Azure and Cloud Computing Posts for 10/20/2011+ Continued: Part 2 of 10/25/2011.

Avkash Chauhan (@avkashchauhan) explained Reading configuration entries using System.Configuration.ConfigurationManager class in a Windows Azure Application in a 10/25/2011 post:

With a Windows Azure application you can add any name-value pair to ServiceConfiguration.cscfg and read those settings directly in your role code. However, if you want to read configuration entries using the System.Configuration.ConfigurationManager class in an Azure application, you need to use a slightly different method. The reasons are as follows:

With Web Role:

- In a web role, the OnStart() function runs within WaIISHost.exe, which has no association with web.config; the web.config you have belongs to the w3wp.exe process, which runs separately.

With Worker Role:

- In a worker role, the OnStart() function runs within WaWorkerHost.exe, and I believe a worker role would not have a web.config associated with it.

So whenever you need to use configuration entries in your OnStart() function via the System.Configuration.ConfigurationManager class, you need to create an app.config file in your role project and include all of your configuration-related data in it.

Step 1: Adding app.config to the role project in your Windows Azure application

Step 2: Adding configuration items to app.config:

```xml
<?xml version="1.0"?>
<configuration>
  <startup>
    <supportedRuntime version="v2.0.50727"/>
  </startup>
  <appSettings>
    <add key="ConfigurationItem" value="ThisIsConfigurationItem" />
  </appSettings>
</configuration>
```

Step 3: Reading the configuration data in your web role’s OnStart() function:

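```csharp
using Microsoft.WindowsAzure.ServiceRuntime; // RoleEntryPoint
// ConfigurationManager lives in System.Configuration.dll, which the role project must reference.

public class WebRole : RoleEntryPoint
{
    public override bool OnStart()
    {
        // For information on handling configuration changes
        // see the MSDN topic at http://go.microsoft.com/fwlink/?LinkId=166357.

        // OnStart() runs in WaIISHost.exe, so this reads the settings added to app.config
        // in the steps above, not web.config. Get() returns null if the key is not defined.
        string myConfiguration = System.Configuration.ConfigurationManager.AppSettings.Get("ConfigurationItem");

        return base.OnStart();
    }
}
```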
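What `ConfigurationManager.AppSettings.Get` does here is look up an `<add>` entry under `<appSettings>` in the configuration file available to the hosting process (WaIISHost.exe or WaWorkerHost.exe, per the explanation above), returning null when the key is absent. The Python sketch below models only that lookup so the behavior is easy to see; it is not the .NET implementation, and it ignores details such as `<clear>`/`<remove>` elements and .NET's key-comparison rules.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def read_app_setting(config_xml: Optional[str], key: str) -> Optional[str]:
    """Model of an appSettings lookup: the value for `key`, or None.

    `config_xml` is the text of the config file, or None when the process
    has no config file at all (the "no app.config" case).
    """
    if config_xml is None:
        return None
    root = ET.fromstring(config_xml)
    for entry in root.findall("./appSettings/add"):
        if entry.get("key") == key:
            return entry.get("value")
    return None


APP_CONFIG = """<?xml version="1.0"?>
<configuration>
  <appSettings>
    <add key="ConfigurationItem" value="ThisIsConfigurationItem" />
  </appSettings>
</configuration>"""
```

The `None` results correspond to the null the C# call returns when the key, or the app.config file itself, is missing.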

Testing the code:

While running the code in the compute emulator, you can verify that the value is read correctly.

If you don’t have an app.config and read configuration data using the ConfigurationManager class, the lookup returns null instead of the configured value.

Steve Marx (@smarx) described Packaging an Executable as a Windows Azure Application From the Command Line in a 10/25/2011 post with downloadable source code:

I just published https://github.com/smarx/packanddeploy, a simple scaffold for deploying an executable as a Windows Azure application. That code runs, as an example, mongoose (a web server). It uses the new ProgramEntryPoint in a worker role to launch a batch file, which then launches the executable. I did it this way because I wanted to pass the address and port as command line parameters (so environment variable expansion via a batch file was a good option).
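The batch-file step exists so that values the role only knows at run time, such as the address and port, can be expanded into the executable's command line. A minimal Python model of that `%NAME%` expansion follows. The variable names `ADDRESS` and `PORT` and the `server.exe --listen` command line are hypothetical placeholders, not what the packanddeploy repository or mongoose actually uses, and unknown names are simply left in place (a simplification, not a full model of cmd.exe).

```python
import re
from typing import Mapping

# Matches %NAME% references of the kind used in Windows batch files.
_VAR = re.compile(r"%([A-Za-z_][A-Za-z0-9_]*)%")


def expand_batch_vars(command: str, env: Mapping[str, str]) -> str:
    """Replace each %NAME% with env[NAME]; leave unknown names untouched."""
    return _VAR.sub(lambda m: env.get(m.group(1), m.group(0)), command)


if __name__ == "__main__":
    # Hypothetical values a role might expose as environment variables.
    env = {"ADDRESS": "10.0.0.4", "PORT": "8080"}
    print(expand_batch_vars("server.exe --listen %ADDRESS%:%PORT%", env))
    # prints: server.exe --listen 10.0.0.4:8080
```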

The project does not use Visual Studio. It simply uses the cspack and csrun commands from the Windows Azure SDK to package the app for local or cloud deployment and to run it locally. This should be a good starting point for people who just have an executable they want to get running in the cloud. Enjoy!

[UPDATE 10:19pm PDT] Added that the code uses mongoose as an example.

Wade Wegner (@WadeWegner) reported an updated Windows Azure Toolkit for Windows Phone v1.3.1 on 10/25/2011:

Yesterday I released an update to the Windows Azure Toolkit for Windows Phone. There are a few important updates in this release, so I encourage you to download the new tools. The significant updates are:

- Upgraded Windows Azure projects to Windows Azure Tools for Microsoft Visual Studio 2010 1.5 – September 2011

- Upgraded the tools to support the Windows Phone Developer Tools RTW

- Updated SQL Azure-only scenarios to use ASP.NET Universal Providers (through the System.Web.Providers v1.0.1 NuGet package)

- Changed Shared Access Signature service interface to support more operations

- Refactored Blobs API to have a similar interface and usage to that provided by the Windows Azure SDK StorageClient library

- Added two new samples: Tweet Your Blobs and CRUD SQL Azure

In addition to the core updates listed above, we’ve also enhanced the setup and dependency checking experience. The experience is more tightly integrated with the Web Platform Installer, making it easier for you to use the toolkit.

Of all the updates, I’m most excited about the work we did to bring our blobs API to parity with the primary Windows Azure StorageClient library. Now, regardless of whether you’re using a web application or a phone application, you’ll have a consistent API with which to use Windows Azure storage.

Wade Wegner is a Technical Evangelist at Microsoft, responsible for influencing and driving Microsoft’s technical strategy for the Windows Azure Platform.

Rob Tiffany (@RobTiffany) continued his Phone <-> Windows Azure series with a Consumerization of IT Collides with MEAP: Android > On-Premises post of 10/24/2011:

In my last ‘Consumerization of IT Collides with MEAP’ article, I described how to connect iPhones and iPads to Microsoft’s Cloud servers in Azure. In this week’s scenario, I’ll use the picture below to illustrate how Android devices can utilize many of Gartner’s Critical Capabilities to connect to Microsoft’s On-Premise infrastructure:

As you can see from the picture above:

- For the Management Tools Critical Capability, Android uses Microsoft Exchange for On-Premise policy enforcement via Exchange ActiveSync (EAS) but has no private software distribution equivalent to System Center Configuration Manager 2007 from Microsoft today. Instead, in-house apps are hosted and APKs distributed via a web server over wireless by having a user click on a URL or through a variety of app stores. In the future, System Center Configuration Manager 2012 will be able to better manage Android devices.

- For both the Client and Server Integrated Development Environment (IDE) and Multichannel Tool Critical Capability, Android uses Visual Studio. While the Server/EAI development functionality is the same as every other platform, endpoint development will consist of HTML5, ECMAScript 5, and CSS3 delivered by ASP.NET. WCF REST + JSON Web services can also be created and consumed via Ajax calls from the browser.

- For the cross-platform Application Client Runtime Critical Capability, we will rely on Android’s WebKit browser to provide HTML5 + CSS3 + ECMAScript5 capabilities. Offline storage is important to keep potentially disconnected Android devices working, and this is facilitated by Web Storage, which is accessible via JavaScript.

- For the Security Critical Capability, Android 3.0 and higher provides hardware encryption based on the user’s device passcode for data-at-rest. Data-in-transit is secured via SSL and VPN. LDAP API support allows it to access corporate directory services.

- For the Enterprise Application Integration Tools Critical Capability, Android can reach out to servers directly via Web Services or indirectly via SQL Server (JDBC) or BizTalk using SSIS/Adapters to connect to other enterprise packages.

- The Multichannel Server Critical Capability to support any open protocol directly, via Reverse Proxy, or VPN is facilitated by ISA/TMG/UAG/IIS. Cross-Platform wire protocols riding on top of HTTP are exposed by Windows Communication Foundation (WCF) and include SOAP, REST and Atompub. Cross-Platform data serialization is also provided by WCF including XML, JSON, and OData. These Multichannel capabilities support thick clients making web service calls as well as thin web clients making Ajax calls. Distributed caching to dramatically boost the performance of any client is provided by Windows Server AppFabric Caching.

- While the Hosting Critical Capability may not be as relevant in an on-premises scenario, Windows Azure Connect provides an IPSec-protected connection to the Cloud and SQL Azure Data Sync can be used to move data between SQL Server and SQL Azure.

- For the Packaged Mobile Apps or Components Critical Capability, Android runs cross-platform mobile apps including Skype, Bing, MSN, Tag, Hotmail, and of course the critical ActiveSync component that makes push emails, contacts, calendars, and device management policies possible.

Newer versions of Android (3.x/4.0) are beginning to meet more of Gartner’s Critical Capabilities. It’s really improved in the last year in areas of encryption, but device fragmentation makes this improvement uneven. The app story is still the ‘Wild West’ since the Android Market is an un-vetted free-for-all. This big ‘red flag’ has given rise to curated app stores like the one from Amazon. As you can see from the picture, the big gap is with the client application runtime critical capability. Native development via Java/Eclipse is where Google wants to steer you and Microsoft doesn’t make native tools, runtimes or languages for this platform. You can definitely perform your own due diligence on Mono for Android from our friend Miguel de Icaza and his colleagues in order to reuse your existing .NET and C# skills. From a Microsoft perspective though, you’re definitely looking at HTML5 delivered via ASP.NET.

Next week, I’ll cover how Android connects to the Cloud.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

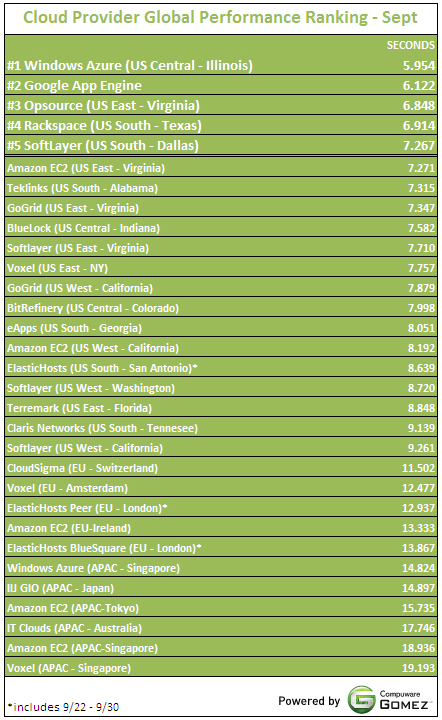

Fig1: Cloud Provider Response times (seconds) from backbone locations around the world

Looking for previous results?

Per my usual disclaimer, please check out the Global Provider View Methodology for the background on this data and use the app itself for a more accurate representation of your specific geography.

As I’ve mentioned in previous posts, I’ve asked Ryan to add Microsoft’s South Central US (San Antonio) data center to the list. All Amazon Web Services’ data centers are listed, so it would be appropriate for the CloudSleuth team to include all nine Microsoft data centers. (Note that the Windows Azure APAC-Singapore data center is included near the end of the list and is the leader of the Asian group.)

Lori MacVittie (@lmacvittie) asserted Infrastructure architecture is often the answer to many of IT’s most challenging issues in an introduction to her Infrastructure Architecture: Removing Blinders from Security Infrastructure post of 10/26/2011 to F5’s DevCentral blog:

It is a fact of IT that different businesses have different technical requirements in terms of security, processing, performance, and even storage. In many organizations, particularly those that transport sensitive personal or financial information, end-to-end encryption is a must. At first glance this seems to be a fairly simple thing – enable a secure transport from client to server and vice-versa and voila! But further exploration reveals that this isn’t the case, primarily because it’s never a straight shot between the client and the server – there are a variety of critical functions and processes that must be applied to exchanges in flight, many of which are impeded by the presence of encrypted traffic.

INFRASTRUCTURE ARCHITECTURE

One solution, of course, is to get rid of the encryption requirement and ensure that all functions and processes in the data path can perform their functions uninhibited.

That’s rarely an option, however, so we need to use an architectural approach to provide those components with unencrypted traffic upon which they can perform their tasks in a way that preserves the security of the data, which is certainly the intent behind encryption in the first place.

A side-arm (or one-arm) architectural configuration provides the answer to this problem, in conjunction with a terminating-capable application delivery controller. This, in itself, is somewhat ironic as load balancers (from which application delivery controllers evolved) were often deployed in one-armed configurations in the early days of web-enabling network architecture. Thus it is amusing that it is now the application delivery controller that serves as the “body” for a one-armed configuration for other infrastructure components.

Or maybe I just have a really odd sense of humor. That, too, is likely.

In any case, this popular infrastructure architecture solution is fairly straightforward, comprising an application delivery controller (which provides load balancing services, multi-layer threat mitigation for the applications and, of course, SSL termination and re-establishment) and the IDS/IPS infrastructure. The application delivery controller, acting as the topological endpoint for the client, terminates the SSL session and decrypts the data before sending it to the IDS/IPS for evaluation. When the data is returned, it is re-encrypted and traverses its normal path to the web/application servers for processing.

The details are as follows (some assumptions are made to keep this simple):

1. The client makes a request to the application (e.g., https://www.customer.com). This TCP/IP request is terminated by the application delivery controller as follows:

a. TCP/IP Optimizations are applied for client-side traffic.

b. SSL decryption occurs using CA-signed cert/key pairs as appropriate.

c. A load-balancing decision is made for the web server per the configured algorithm.

d. Persistence is applied as configured (typically encrypted HTTP cookie injection) to maintain “stickiness” of client to the selected webserver.

e. HTTP is processed and optimized as appropriate (header injection/scrubbing, caching, compression, etc…)

f. Application firewalling policy (via web application firewall services) is applied as desired

2. The application delivery controller initiates a separate TCP/IP connection to the chosen server. This connection (optimized for TCP/IP multiplexing and LAN characteristics) remains decrypted and exits towards the IDS/IPS appliance for inspection.

3. Return traffic from the IDS/IPS re-enters the application delivery controller after it has been inspected.

4. The application delivery controller re-encrypts the traffic and routes it to the appropriate webserver.
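Steps 1c and 1d (pick a server, then keep the client on it) can be sketched in a few lines. This Python sketch assumes round-robin as the "configured algorithm" and, to stay within the standard library, signs the persistence cookie with HMAC instead of encrypting it, so the cookie here is tamper-evident but not confidential. The class name and the `server|signature` cookie format are hypothetical, not how any particular application delivery controller implements persistence.

```python
import hashlib
import hmac
import itertools
from typing import Optional, Sequence, Tuple


class StickyRoundRobin:
    """Round-robin server selection with cookie-based persistence."""

    def __init__(self, servers: Sequence[str], secret: bytes) -> None:
        self._servers = list(servers)
        self._cycle = itertools.cycle(self._servers)
        self._secret = secret

    def _sign(self, server: str) -> str:
        return hmac.new(self._secret, server.encode(), hashlib.sha256).hexdigest()

    def route(self, cookie: Optional[str]) -> Tuple[str, str]:
        """Return (server, cookie) for a request carrying an optional cookie."""
        if cookie:
            server, _, sig = cookie.partition("|")
            # Honor the cookie only if it is authentic and the server is still in the pool.
            if server in self._servers and hmac.compare_digest(
                    sig.encode(), self._sign(server).encode()):
                return server, cookie
        # No usable cookie: make a fresh load-balancing decision and pin the client to it.
        server = next(self._cycle)
        return server, f"{server}|{self._sign(server)}"
```

A returning client that presents its cookie lands on the same server; a missing or forged cookie simply triggers a new round-robin decision.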

Return traffic flows through the same path in reverse. This architecture is fully redundant and would survive a failure of any given device in the path.
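The point of the side-arm layout is that cleartext only ever exists between the application delivery controller and the IDS/IPS. A small Python model of the request path in steps 1 to 4, plus the reverse path for responses, makes that property checkable. The hop names are illustrative, and the model says nothing about how SSL is actually terminated or re-established.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Hop:
    src: str
    dst: str
    encrypted: bool


# Request path from steps 1-4: SSL to the ADC, cleartext on the side arm, SSL again to the server.
REQUEST_PATH: List[Hop] = [
    Hop("client", "adc", True),        # 1: client SSL session terminated at the ADC
    Hop("adc", "ids_ips", False),      # 2: decrypted traffic sent out for inspection
    Hop("ids_ips", "adc", False),      # 3: inspected traffic returns to the ADC
    Hop("adc", "web_server", True),    # 4: re-encrypted and forwarded to the chosen server
]


def reverse_path(path: List[Hop]) -> List[Hop]:
    """Responses traverse the same hops in the opposite direction."""
    return [Hop(h.dst, h.src, h.encrypted) for h in reversed(path)]


def cleartext_only_on_side_arm(
        path: List[Hop],
        side_arm: FrozenSet[str] = frozenset({"adc", "ids_ips"})) -> bool:
    """True if every unencrypted hop stays between the ADC and the IDS/IPS."""
    return all(h.encrypted or {h.src, h.dst} <= side_arm for h in path)
```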

SERVICE-ORIENTED INFRASTRUCTURE

You’ll note that the data path flow from the application delivery controller to the IDS/IPS is similar to what we’d call in the application development world a “lookup” or a “callout”.

From the admittedly high-level perspective of an architectural flow it’s a service call, the integration of an externally provided function / operation into an operational process. It’s service-oriented in theory and practice, if not actual implementation. It’s also near-stateless, with the routed flow of traffic implying the policy application rather than an explicitly stated instruction.

This is a simple architectural solution to a common problem, one that’s plagued IT since the introduction of encrypted communication as a standard practice. More often than not, the solution to many of IT’s problems can be found in a collaborative architectural approach. If cloud computing and virtualization are doing anything, it’s bringing this reality to the fore by forcing the conversation up the stack.

Geva Perry (@gevaperry) posted 10 Predictions About Cloud Computing on 10/25/2011:

Next week in Tel Aviv I'm going to participate in a panel about "the future of clouds", moderated by the legendary Yossi Vardi. In preparation, I wrote down a few of the concepts I've been thinking about for the past several years and I thought I would share them with my readers to get some feedback. Keep in mind these are long-term predictions and trends (in no particular order).

- PaaS Rules: IaaS becomes niche. In the long-run, IaaS doesn't make sense, except for a limited set of scenarios. All IaaS providers want to be PaaS when they grow up.

- Public Rules: Internal clouds will be niche. In the long-run, Internal Clouds (clouds operated in a company's own data centers, aka "private clouds") don't make sense. The economies of scale, specialization (an aspect of economies of scale, really) and outsourcing benefits of public clouds are so overwhelming that it will not make sense for any one company to operate its own data centers. Sure, there need to be in place many security and isolation measures, and feel free to call them "private clouds" -- but they will be owned and operated by a few major public providers.

- Specialized Clouds: There are many dimensions to an application: the pattern of its workload; the government regulations it must adhere to; the geographic access to it; the programming language and framework it supports; the levels of security, performance and reliability it requires; and other more specialized requirements. It's not a one-size-fits-all world. At least, not always. There will be big generic clouds, and then, many specialized clouds. I've written about this in the past.

- Government Regulation: The largest cloud providers will become nationally strategic infrastructure (like utilities, financials, telcos, airlines and shipping companies in the past). Given my "public rules" prediction above, cloud providers will become crucial infrastructure to the economy and the interests of their respective nations. They will become "too big to fail". Any change in their pricing will have a profound effect on the economy. And they will also hold the risk of a "cloud run" (similar to a "bank run"): a sudden surge of demand they haven't anticipated. Not to mention the fact that they will maintain the sensitive data of consumers, corporations and government agencies. Any way you slice it, it spells regulation. But if history teaches us anything, this regulation will only come after "The Great Cloud Catastrophe" (use your imagination to figure out what that will look like).

- The Control vs. Freedom Debate: This sums up the story of cloud computing so far. Freedom is the catch-all phrase for drivers of cloud adoption (no upfront costs, on-demand, self-service, empowerment of the rank & file - e.g., developers), but control (or lack thereof) is the catch-all phrase of barriers to adoption by large enterprises. Every democratic country experiences this: there is sometimes a contradiction between the so-called sacred principles of rule-of-law and personal freedom. It's a matter of drawing the line -- and we're just in the beginning stages of understanding this issue when it comes to cloud computing. This debate will be with us for years to come and will shape the variety of enterprise cloud computing offerings.

- Cloud Federations - While AWS has enjoyed tremendous international success, in any business that relies heavily on trust, such as IT, nothing beats a local brand. So people will flock to the cloud of their trusted national telco or big IT provider. But on the flip side, they will need to reach a global audience and will want servers around the world. As a result, we will see the formation of cloud federations, similar to what we see in airline alliances, such as Star, SkyTeam and Oneworld.

- Financial Efficiency and Sophistication: Computing is a commodity, and every commodity ends up being traded, future-traded, brokered, arbitraged, speculated and manipulated with derivative instruments. The good: the market becomes very efficient. The bad: the market becomes complex and opaque. We are already seeing spot markets.

- Cloud Standards: About two years ago there was a strong wave of interest and discussion about the need for cloud standards. I wrote then, and still believe, it is too soon. But it is also inevitable. We will, however, see multiple competing standards: at least one formal standard specification from a standards body and several de facto standards from large commercial players such as Amazon and VMware.

- The Ecosystem Wars: I've recently written about the importance of ecosystems in cloud computing. Success in building an ecosystem will be a determining factor in who wins and loses in the cloud. It is not just about the size and breadth of the ecosystem, but how well it all works together. In many ways, Amazon has done a poor job of this so far, but it has the one big compelling factor for an ecosystem: a very large install base.

- Horizontal and Vertical Consolidation: As with any industry, as cloud computing matures, it will consolidate. This will happen both horizontally, for example large IaaS players will roll up regional and smaller IaaS and hosting providers, as well as vertically, for example IaaS providers will acquire cloud management system providers such as RightScale and enStratus.

I'd love to hear some feedback on these trends. Do you agree? Disagree? Have I left something out? Please let me know in the comments.

Simon Munro (@simonmunro) asked Can Cloud Computing Reduce IT Cost Complexities? in a 10/24/2011 post:

Those of us who have been in IT for a while have anecdotes of seemingly ridiculous billing in enterprise IT. One of my personal favourites was a web app that I built in the late ’90s where we had to deploy two web servers: one inside the firewall and one outside. The reason was that the application was what would be classed as ‘extranet’, and internal users had to pay a monthly fee for the privilege of Internet access, so most couldn’t access the server if it was outside the firewall. The ‘simplest’ solution was to put a second server inside the firewall as well, with both servers accessing the same database.

So it was interesting to read ‘It’s a tough world out there’ by JP Rangaswami of Salesforce, lamenting his extensive experiences in trying to track and control internal IT costs. The resulting case for cloud computing is a compelling one: the problem is not so much the cost of IT as how complexly those costs are applied and calculated within the business. It would seem, then, that cloud computing (and SaaS in particular) provides a simpler cost and billing mechanism, where costs are attributed directly to the consumer (business unit) of the resource.

I generally agree with this principle, but only as it applies to the provisioning of infrastructure for servers and their related server-side applications. Billing of end user hardware, configuration and support still exists, even if all applications run in the cloud – although this is on the decline due to bring-your-own policies and web only applications.

In addition to end-user costs, there remains the issue of providing support for applications; such support is still required whether or not applications run in the cloud. Until the patterns of supporting cloud-based applications are resolved (see First Line Support in the Public Cloud), the need for centralised IT, and the complexity of billing for it, will still exist.

However, a product like Office 365, with a simple per-user pricing model, may go some way to simplifying the allocation of IT costs within an organization. But, in my experience, the allocation of costs is less sensible and is driven by little empires, egos, pointy-haired managers and a whole host of problems that cannot be resolved by technology alone. Perhaps a side mission for technologists implementing cloud solutions is to address complex cost and billing arrangements in their engagements; otherwise the benefits may be anemic.
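The appeal of a per-user model is that the chargeback arithmetic itself becomes trivial. A minimal sketch, assuming a hypothetical flat monthly price per user (not an actual Office 365 rate) and made-up business-unit head counts:

```python
# Hypothetical flat monthly price per user; not a quoted Office 365 rate.
PRICE_PER_USER = 10.00


def allocate(users_by_unit: dict, price: float = PRICE_PER_USER) -> dict:
    """Charge each business unit in direct proportion to its number of users."""
    return {unit: round(count * price, 2) for unit, count in users_by_unit.items()}


if __name__ == "__main__":
    # Made-up head counts for illustration only.
    bill = allocate({"Sales": 120, "Finance": 35, "Engineering": 80})
    print(bill)                # {'Sales': 1200.0, 'Finance': 350.0, 'Engineering': 800.0}
    print(sum(bill.values()))  # 2350.0
```

The code only covers the easy part; deciding who counts as a user and which unit owns them is exactly the organizational problem Simon says technology alone cannot resolve.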

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

No significant articles today.

<Return to section navigation list>

Cloud Security and Governance

<Return to section navigation list>

Cloud Computing Events

David Chou reported TechNet Events Presents: The Pathway to the Private Cloud for the Western US on 10/26/2011:

Get Ready for Tomorrow, Today - Hyper-V Virtualization for the Cloud

Virtualization is one of the critical elements of network operations of all kinds, and it is key to cloud operations. Join us as we discuss the key components of virtualization that provide the operational foundation for both public and private clouds.

Private Cloud 201: Microsoft Private Cloud Tools and Technologies

So you have heard the private cloud story at the 101 level and you want to know more. Join us as we discuss the private cloud in greater detail, with a focus on the tools and technologies that make the private cloud such an appetizing IT and business opportunity for businesses both large and small.

Ask More Questions! Attend an Afternoon IT Camp!

These are informal events where the true agenda is up to you. That’s right: we will have a list of topics (such as ways to get on Windows Server 2008 R2) plus your write-in topic(s), and we will put them to a vote. The topics that get the most votes will be the ones that get discussed. This will be very interactive and works best with participation from everyone. We look forward to having you join us for this new informal event.

For more information or to register, visit www.technetevents.com or call 1-877-MSEVENT.

Locations: Bellevue, Los Angeles, Denver, Tempe, Mountain View, Portland and Lehi

- TechNet Events Presents: The Pathway to the Private Cloud: 8:30 AM to 12:30 PM

- IT Camp: 1:00 PM to 5:00 PM

Seating is limited, so register today.

Patriek van Dorp (@pvandorp) posted on 10/26/2011 Announcing 5th Windows Azure User Group NL Meeting to be held 11/17/2011 at the Novotel Rotterdam Brainpark:

On Thursday November 17th the Windows Azure User Group NL will organize another meeting revolving around the topic of Windows Azure. This will be the 5th such event, and this time the location and catering will be sponsored by Betabit. The theme will be ‘Interoperability with the Windows Azure Platform’.

As some of you may already know, the Windows Azure User Group NL organizes meetings to share knowledge of and experience with the Windows Azure Platform amongst Architects, Engineers and Consultants. The Windows Azure User Group meetings have proved to be a nice way to interact with people that have the same interest: Windows Azure.

Agenda

- 17:30u Reception

- 17:45-18:00u Word of Welcome by Betabit & WAZUG NL

- 18:00-18:45u Patriek van Dorp (Sogeti) – Java and Azure make a good combination!

When considering moving your Java EE applications to the Cloud, stop and have a look at the Windows Azure Platform. This session explains briefly the advantages of moving your applications to the Cloud. Step-by-step we will see how various aspects of the Windows Azure Platform can be used in Java development to end with our entire Java EE application running on Windows Azure.

- 18:45u-19:30u Dinner

- 19:30u-20:15u Wouter Dingemanse (Betabit) and Marino van der Heijden (Betabit) – Integrating Mobile apps with LOB applications with Azure

This session will explain how to integrate mobile apps with enterprise systems using Windows Azure. Subjects: Mobile Development (iPhone, Android, Phonegap), Windows Azure, Architecture and ‘lessons learned’ in the real world.

- 20:15u-21:00u Maarten Balliauw (RealDolmen) – Dive into PHP on Azure

Curious about other languages on Microsoft’s cloud platform? Curious about how you can develop and deploy applications to Microsoft’s cloud from outside Visual Studio? Let’s have a look at why one would run PHP on Windows Azure and how you can benefit from the rich set of components Windows Azure has to offer to your PHP code.

- 21:00u Socialize

Attendance to this event is free so register now: www.wazug.nl

Location (Bing Maps):

- Novotel Rotterdam Brainpark

- K.P. van der Mandelelaan 150

- 3062 MB Rotterdam

Cloudcor, Inc. will present the UP 2011 Cloud Computing Conference from 12/5 through 12/9, starting at the Computer History Museum in Mountain View, CA:

UP 2011 is developed to promote collaborative analysis of the latest trends and challenges in cloud computing and ICT, looking at core process and strategies in the rapidly shifting world. We create thought provoking conference panels, workshops, and tutorials which are selected to cover a range of the hottest topics in ICT.

Event tracks cover the following areas:

1. Cloud for Business.

2. Technology & Innovation.

3. Cloud for Public Sector.

4. Industry Implementation Insights.

5. Governance & Enforcement.

6. Research & Findings.

From the Venues page:

The UP 2011 conference is holding its first two days (December 5 and 6, 2011) at the Computer History Museum, Mountain View, California.

The Computer History Museum is the world’s leading institution exploring the history of computing and its ongoing impact on society. It is home to the largest international collection of computing artifacts in the world, including computer hardware, software, documentation, ephemera, photographs, and moving images. The Museum brings computer history to life through an acclaimed speaker series, dynamic website, on-site tours, and physical exhibitions.

Floor Plan

The event has two main parts, both on the second floor: general sessions in Hahn Hall and Expo space in Grand Hall.

Location

Computer History Museum, 1401 N. Shoreline Blvd Mountain View, CA 94043, T: 650.810.1010 F: 650.810.1055

Airports

Fly into either San Jose International Airport (SJC), 12.2 miles from CHM, or San Francisco International Airport (SFO), 23.9 miles from CHM. Both are off Highway 101, and CHM is right off the N. Shoreline Blvd exit from Highway 101.

Hotels near venue

Mountain View

Hilton Garden Inn, 840 East El Camino Real Mountain View, CA 94040 Tel: 650-964-1700 | Fax: 650-964-7900 (4.6 miles from CHM) www.hiltongardeninn.com

Hotel Avante, (This is a small boutique hotel - No restaurant) 860 E. El Camino Mountain View, CA 94040 Tel: 650-940-1000 | Fax: 650-968-7870 | Toll free: 800-538-1600 (4.6 miles from CHM) http://www.jdvhospitality.com/hotels/hotel/17

Palo Alto

Crowne Plaza Cabaña Hotel, 4290 El Camino Real Palo Alto, CA 94306 Tel: 650-857-0787 | Fax: 650-496-1939 | Toll Free: 888-422-2264 (4.0 miles from CHM) www.cppaloalto.crowneplaza.com/

Four Seasons Hotel Silicon Valley at East Palo Alto, 2050 University Avenue, University Circle E. Palo Alto, CA 94303 Tel: 650-566-1200 | Fax: 650-566-1221 (6.0 miles from CHM) http://www.fourseasons.com/siliconvalley/

Garden Court Hotel, (Italian Restaurant off lobby – walk into downtown) 520 Cowper Street, Palo Alto, CA 94301 Tel: 650-322-9000 | Toll free: 800-824-9028 (7.4 miles from CHM) hotel@gardencourt.com

San Jose

Fairmont Hotel San Jose, (Italian Restaurant off lobby – walk into downtown) 170 South Market Street San Jose, CA 95113 Tel: 408-998-1900 (13.9 miles from CHM) www.fairmont.com

Santa Clara

The Westin Santa Clara, 5101 Great America Parkway Santa Clara, CA 95054 Tel: 408-986-0700 | Fax: 408-980-3990 | Toll free: 800-228-3000 (8.1 miles from CHM) http://www.thewestinsantaclara.com/welcome.htm

Hilton Santa Clara, 4949 Great America Parkway, Santa Clara, CA 95054 Tel: 408-330-0001 | Fax: 408-562-6736 (8.0 miles from CHM)

Attire and Dress Code

The conference attire for all sessions and social events is business casual. A sweater or jacket is suggested for outdoor activities.

Register today!

Thank you for your interest in the second annual UP 2011 conference. To register for full access to the conference, please register at http://up11.eventbrite.com. If you experience any difficulties, please contact us at info@up-con.com.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Pete Johnson (@nerdguru) offered a Peek Inside [the HP Cloud Services] Private Beta with the Getting Started Video in a 10/25/2011 post:

It’s kept you up at night, hasn’t it? You heard about our private beta program in September, filled out a sign up form and then you waited. And waited some more. You may be wondering if what we have is real or if it’s just another slideware cloud strategy with nothing behind it, right?

You want proof that there’s light at the end of the tunnel. You want pictures. You want video. You want a tour of HP Cloud Services narrated by a tall bald man with glasses and a melodious voice - yours truly.

Well, today is your lucky day. Your wait is about to come to an end! As I write this we are expanding our private beta to quickly on-board those of you awaiting an invite code. You should be hearing from us within the next few weeks, but in the meantime you can take a look at what you’ll get to play with by looking at our Getting Started Video.

A whole lot of work by a whole lot of people went into making our private beta a reality. All I had to do was show it in action with a video that greets new customers and gives them the lay of the land. At first, we put password protection on it so only those in private beta could see it, but with the overwhelming response we had with sign ups we decided to let everyone peek inside by viewing our Getting Started video.

It’s not going to win any Oscars but it will show you how to launch Compute instances or add files to Object Storage through the web experience, interact with the system using our Command Line Interface, as well as show you early versions of our technical documentation and our forums so you can figure out how to build things yourself or get help from others if you get stuck. Bonus points if you can find the hidden movie references that Emil doesn’t realize I put in for fun.


In all seriousness, we’re having a great time working with everybody who has been using HP Cloud Services during the private beta. If you haven’t received an invite code yet, please be patient as we ramp up. We’ve had a ton of great feedback so far and are iterating as quickly as we can to make HP Cloud Services the best it can be. If you have questions about your on-boarding status, let us know at cloudsales@hp.com.

Pete is HP’s Community Manager.

I’m still waiting to be on-boarded.

Alex Handy reported Oracle says ‘yes’ to NoSQL in a 10/26/2011 post to the SD Times on the Web blog:

October has been a busy month for the emerging NoSQL market. Oracle kicked it off with the announcement at its annual conference of its own NoSQL database, a scalable cluster-based version of the Berkeley DB key/value store. Then, the Apache Foundation and DataStax announced the release of Cassandra 1.0, which adds multi-threaded compaction and performance improvements to the popular NoSQL database.

Despite the heady pace of innovation and the release of new open-source projects in the NoSQL space, there are still new projects emerging almost every day. Even as Oracle is entering a market that until now it has maintained was filled with snake oil, smaller startups and groups of developers are still putting together new and novel solutions for quickly managing and storing large amounts of data.

And that's probably because some NoSQL companies are already seeing big gains inside enterprises and government organizations. MarkLogic, the company behind the unstructured data store of the same name, has been growing quickly with the rise of the NoSQL movement, said David Gorbet, vice president of product strategy at MarkLogic.

Gorbet said MarkLogic was founded in 2003, and that its database product increasingly fills a hole left by traditional relational databases. “The principle of the company is that there is some data that's difficult to fit into a relational data store that needs a different paradigm for managing it, but it still needs the benefits of a database behind that," he said.

"Our first customers were in intelligence and publishing, and more recently we've been making inroads to financial services and healthcare firms that have big data problems. We're a private company with over 250 employees. We've had great growth. We grew by 45% in 2010, and we're on track to grow faster than that in 2011."

That growth is fueled by the increasing need within enterprises for solutions to their big data problems. While solutions like Hadoop are handling the aftereffects of the big-data explosion and allowing enterprises to get their arms around the data slowly, NoSQL solutions deal with the opposite end of the problem: storing and making that unstructured data available for immediate use in scalable Web and mobile applications. …


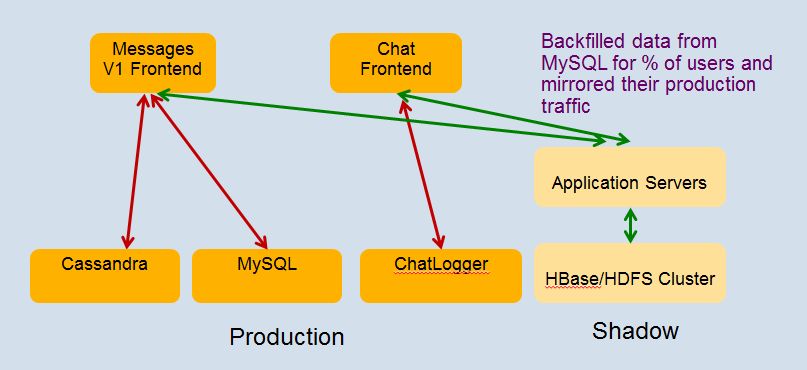

James Hamilton summarized the Storage Infrastructure Behind Facebook Messages in a 10/25/2011 post:

One of the talks that I particularly enjoyed yesterday at HPTS 2011 was Storage Infrastructure Behind Facebook Messages by Kannan Muthukkaruppan. In it, Kannan described the Facebook store for chats, email, SMS, & messages.

This high-scale storage system is based on HBase and Haystack. HBase is a non-relational, distributed database very similar to Google’s Bigtable. Haystack is a simple file system designed by Facebook for efficient photo storage and delivery. More on Haystack at: Facebook Needle in a Haystack.

In this Facebook Message store, Haystack is used to store attachments and large messages. HBase is used for message metadata, search indexes, and small messages (avoiding the second I/O to Haystack for small messages like most SMS).
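The split between the two stores can be pictured as a size-based routing rule. This is a toy model, assuming a hypothetical 1 KB cutoff (the talk summary gives no threshold) and plain dictionaries standing in for HBase and Haystack; it is not Facebook's code:

```python
from dataclasses import dataclass, field

# Hypothetical cutoff; the talk summary does not give the actual threshold.
INLINE_LIMIT_BYTES = 1024


@dataclass
class MessageStore:
    hbase: dict = field(default_factory=dict)     # stand-in for HBase: metadata + small bodies
    haystack: dict = field(default_factory=dict)  # stand-in for Haystack: large bodies/attachments

    def put(self, msg_id: str, body: bytes) -> None:
        if len(body) <= INLINE_LIMIT_BYTES:
            # Small message (e.g. most SMS): body lives inline with its metadata.
            self.hbase[msg_id] = {"size": len(body), "body": body}
        else:
            # Large message: body goes to the blob store; metadata keeps a pointer.
            blob_key = f"blob:{msg_id}"
            self.haystack[blob_key] = body
            self.hbase[msg_id] = {"size": len(body), "blob": blob_key}

    def get(self, msg_id: str) -> bytes:
        meta = self.hbase[msg_id]
        if "body" in meta:
            return meta["body"]             # one lookup: metadata row only
        return self.haystack[meta["blob"]]  # second lookup into the blob store
```

Keeping small bodies inline is what avoids the second I/O the summary mentions; only messages above the cutoff pay for the extra lookup.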

Facebook Messages takes 6B+ messages a day. Summarizing HBase traffic:

- 75B+ R+W ops/day with 1.5M ops/sec at peak

- The average write operation inserts 16 records across multiple column families

- 2PB+ of cooked online data in HBase. Over 6PB including replication but not backups

- All data is LZO compressed

- Growing at 250TB/month

- The Facebook Messages project timeline:

  - 2009/12: Project started

  - 2010/11: Initial rollout began

  - 2011/07: Rollout completed with 1B+ accounts migrated to new store

- Production changes:

  - 2 schema changes

  - Upgraded to HFile 2.0
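A quick back-of-the-envelope check on these figures, using only the numbers quoted above (the "+" and "over" qualifiers mean every result is approximate):

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

ops_per_day = 75e9        # "75B+ R+W ops/day"
peak_ops_per_sec = 1.5e6  # "1.5M ops/sec at peak"
avg_ops_per_sec = ops_per_day / SECONDS_PER_DAY
peak_to_avg = peak_ops_per_sec / avg_ops_per_sec

cooked_pb = 2.0           # "2PB+ of cooked online data"
replicated_pb = 6.0       # "Over 6PB including replication"
implied_copies = replicated_pb / cooked_pb

growth_tb_per_month = 250.0
growth_pb_per_year = growth_tb_per_month * 12 / 1000  # decimal units

print(f"average load   ~ {avg_ops_per_sec:,.0f} ops/sec")  # ~ 868,056 ops/sec
print(f"peak / average ~ {peak_to_avg:.2f}")                # ~ 1.73
print(f"implied copies ~ {implied_copies:.0f}")             # ~ 3
print(f"yearly growth  ~ {growth_pb_per_year:.1f} PB")      # ~ 3.0 PB
```

A roughly 3x ratio is consistent with HDFS's default replication factor of three, though the talk summary does not state the factor Facebook actually uses.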

They implemented a very nice approach to testing where, prior to release, they shadowed the production workload to the test servers.

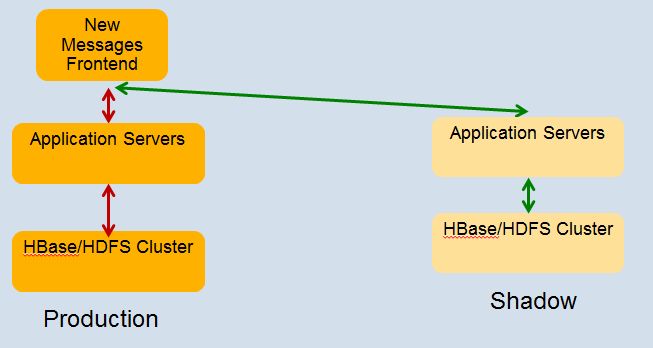

After going into production, they continued the practice of shadowing the real production workload into the test cluster to test changes before deploying them to production.
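A minimal sketch of the shadowing idea, assuming the production and shadow clusters can each be modeled as a callable; the class and method names are hypothetical and not Facebook's tooling:

```python
import logging
import threading
from concurrent.futures import ThreadPoolExecutor

log = logging.getLogger("shadow")


class ShadowingRouter:
    """Serve each request from production and mirror a copy to a shadow cluster.

    The shadow's response is discarded and its failures never reach the caller,
    but they are counted so they can be alerted on.
    """

    def __init__(self, production, shadow, max_workers: int = 4):
        self.production = production  # callable: request -> response
        self.shadow = shadow          # callable: request -> response (result ignored)
        self._pool = ThreadPoolExecutor(max_workers=max_workers)
        self._lock = threading.Lock()
        self.shadow_errors = 0

    def handle(self, request):
        # Mirror asynchronously so shadow latency cannot slow down production.
        self._pool.submit(self._mirror, request)
        return self.production(request)

    def _mirror(self, request):
        try:
            self.shadow(request)
        except Exception as exc:
            with self._lock:
                self.shadow_errors += 1
            log.warning("shadow cluster failed: %s", exc)

    def close(self):
        # Wait for in-flight mirrored requests to finish.
        self._pool.shutdown(wait=True)
```

Counting shadow failures rather than swallowing them silently mirrors Kannan's advice, in the list that follows, to treat alerts on the shadow cluster as high priority.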

The list of scares and scars from Kannan:

- Not without our share of scares and incidents:

  - s/w bugs (e.g., deadlocks, incompatible LZO used for bulk imported data, etc.)

    - found an edge-case bug in log recovery as recently as last week!

  - performance spikes every 6 hours (even off-peak)!

    - cleanup of HDFS’s Recycle bin was sub-optimal! Needed code and config fix.

  - transient rack switch failures

  - ZooKeeper leader election took more than 10 minutes when one member of the quorum died. Fixed in more recent version of ZK.

  - HDFS Namenode: SPOF

  - flapping servers (repeated failures)

- Sometimes, tried things which hadn’t been tested in dark launch!

  - Added a rack of servers to help with performance issue

    - Pegged top of the rack network bandwidth!

    - Had to add the servers at much slower pace. Very manual.

    - Intelligent load balancing needed to make this more automated.

- A high % of issues caught in shadow/stress testing

- Lots of alerting mechanisms in place to detect failure cases

  - Automate recovery for lots of common ones

  - Treat alerts on shadow cluster as hi-pri too!

- Sharding service across multiple HBase cells also paid off
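Several items above (flapping servers, automated recovery for common failures) come down to recognizing patterns in failure events. One common way to detect flapping is a sliding-window failure count. This is a sketch under assumed thresholds (3 failures within 10 minutes); the talk does not say how Facebook detects flapping:

```python
from collections import defaultdict, deque


class FlapDetector:
    """Flag a server as flapping when it fails too often within a sliding time window."""

    def __init__(self, max_failures: int = 3, window_secs: float = 600.0):
        self.max_failures = max_failures  # assumed threshold
        self.window_secs = window_secs    # assumed window length
        self._failures = defaultdict(deque)  # server -> timestamps of recent failures

    def record_failure(self, server: str, now: float) -> bool:
        """Record a failure at time `now`; return True if the server is now flapping."""
        times = self._failures[server]
        times.append(now)
        # Drop failures that have aged out of the window.
        while times and now - times[0] > self.window_secs:
            times.popleft()
        return len(times) >= self.max_failures
```

A detector like this would typically feed the alerting layer, which could then take the server out of rotation automatically instead of letting it keep rejoining and failing.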

Kannan’s slides are posted at: http://mvdirona.com/jrh/TalksAndPapers/KannanMuthukkaruppan_StorageInfraBehindMessages.pdf

There’s no question that Facebook Messages are BigData.

Scott M. Fulton, III (@SMFulton3) reported Oracle Formally Embraces NoSQL, Implies It Invented NoSQL in a 10/24/2011 post to the ReadWriteHack blog:

Whether the acronym "NoSQL" stands for "not only SQL," as some database architects contend, or literally "no SQL," up until this month it has been taken to imply "no Oracle." One of the many hallmarks of Oracle's SQL RDBMS technology, historically, has been consistency -- the notion that every client perceives the same view of the data at any one time. Maintaining consistency, among other factors, incurs latency issues as database sizes scale with social media into the stratosphere.

NoSQL databases scale up*, but typically at the expense of consistency, which is something you wouldn't think Oracle would want to give up.

This morning, Oracle lifted the veil on its first branded NoSQL software product, whose existence was inferred (albeit with blaring klaxons) three weeks ago at the company's OpenWorld conference. As the company's vice president for database development, Marie-Anne Neimat, told RWW this morning, one of Oracle NoSQL's key differentiators will concern how it mitigates the consistency problem: by enabling users to scale the tradeoff.

"It's a distributed key/value store with built-in high availability, and it also offers users options as to the level of consistency that they want," Neimat tells us. "Consistency is made somewhat easier because the user can choose the level of consistency he or she wants, and can therefore trade rigid consistency for latency. One can be totally consistent at the potential cost of high latency, or one can say, 'I'm willing to compromise on consistency, but I'll have very fast response times.'"

A consistent message on consistency

Queries will be able to request the master copy of data when necessary, says Neimat, or alternately the most readily available, or "closest," copy. When a database administrator requests rigid, or "absolute consistency," the system will update all copies of the data prior to returning any data to the user at all. In-between, the user can request what Neimat calls "majority consistency," or quicker still, "single copy consistency," where there's no guarantee the returned image will match any copy on any other partition. In such cases, other copies of the data will be updated asynchronously.
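The three read levels Neimat describes can be modeled with a single partition that has one master and several replicas. This is a toy, in-memory sketch; the names are hypothetical and it does not reproduce Oracle NoSQL's Java API:

```python
import random
from enum import Enum


class ReadConsistency(Enum):
    ABSOLUTE = "absolute"        # read the master copy
    MAJORITY = "majority"        # read a majority of copies, keep the newest version
    SINGLE_COPY = "single_copy"  # read any one copy (e.g. the closest); may be stale


class Partition:
    """One shard: copies[0] is the master, the rest are replicas.

    Each copy maps key -> (version, value).
    """

    MISSING = (0, None)

    def __init__(self, n_copies: int = 3):
        self.copies = [dict() for _ in range(n_copies)]

    def write_master(self, key, value) -> int:
        version = self.copies[0].get(key, self.MISSING)[0] + 1
        self.copies[0][key] = (version, value)
        return version

    def propagate(self, key, replica: int) -> None:
        # Asynchronous replication, modeled here as an explicit step.
        self.copies[replica][key] = self.copies[0][key]

    def read(self, key, level: ReadConsistency, nearest=None):
        if level is ReadConsistency.ABSOLUTE:
            return self.copies[0].get(key, self.MISSING)[1]
        if level is ReadConsistency.MAJORITY:
            quorum = len(self.copies) // 2 + 1
            chosen = random.sample(range(len(self.copies)), quorum)
            newest = max((self.copies[i].get(key, self.MISSING) for i in chosen),
                         key=lambda entry: entry[0])
            return newest[1]
        # SINGLE_COPY: whichever copy is nearest (random if unspecified).
        idx = nearest if nearest is not None else random.randrange(len(self.copies))
        return self.copies[idx].get(key, self.MISSING)[1]
```

In this model a majority read is only guaranteed to see the newest value once the write has reached a majority of copies; before that, like a single-copy read, it can return stale data.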

As a white paper released this morning concedes (PDF available here), an application can be programmed to return immediately after a write process has concluded, even though there's no evidence at that point that the written data was made persistent (backed up throughout the system). "Such a policy provides the best write performance, but provides no durability guarantees," the white paper says. "By specifying when the database writes records to disk and what fraction of the copies of the record must be persistent (none, all, or a simple majority), applications can enforce a wide range of durability policies."
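The "fraction of the copies" part of that durability choice reduces to a simple acknowledgment rule. A minimal sketch with hypothetical names, covering only the three fractions the white paper lists (none, all, simple majority); the "when records are written to disk" dimension is not modeled:

```python
from enum import Enum


class AckPolicy(Enum):
    NONE = "none"                        # return immediately; no durability guarantee
    SIMPLE_MAJORITY = "simple_majority"  # a majority of copies must be persistent
    ALL = "all"                          # every copy must be persistent


def copies_required(policy: AckPolicy, n_copies: int) -> int:
    """How many copies must confirm persistence before the write is acknowledged."""
    if policy is AckPolicy.NONE:
        return 0
    if policy is AckPolicy.SIMPLE_MAJORITY:
        return n_copies // 2 + 1
    return n_copies


def can_acknowledge(persisted: int, n_copies: int, policy: AckPolicy) -> bool:
    return persisted >= copies_required(policy, n_copies)


if __name__ == "__main__":
    # Three copies, two of which have confirmed the write is persistent.
    for policy in AckPolicy:
        print(policy.name, can_acknowledge(2, 3, policy))
    # NONE True / SIMPLE_MAJORITY True / ALL False
```

Moving from NONE toward ALL trades write latency for durability, the same dial Neimat describes for reads.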

Last January, Google published its own approach to the consistency/latency tradeoff, entitled Megastore (PDF available here). It's characterized by an algorithmic method, called Paxos, for distributing vast amounts of data among multiple nodes while maintaining a relatively acceptable degree of consistency, and by giving developers visibility into potential performance costs in advance of such activities as inner joins and schema changes.

Today, Oracle's white paper takes a quintessentially Oracle tack, saying not only did it embrace NoSQL, but it effectively invented NoSQL by having acquired Sleepycat Software in February 2006. Sleepycat was the company behind the Berkeley DB key/value store currently used in Oracle's embedded databases; and this morning, Oracle admitted that Berkeley DB lies at the heart of its new NoSQL.

"Although some of the early NoSQL solutions built their systems atop existing relational database engines, they quickly realized that such systems were designed for SQL-based access patterns and latency demands that are quite different from those of NoSQL systems, so these same organizations began to develop brand new storage layers," reads this morning's white paper. "In contrast, Oracle's Berkeley DB product line was the original key/value store; Oracle Berkeley DB Java Edition has been in commercial use for over eight years. By using Oracle Berkeley DB Java Edition as the underlying storage engine beneath a NoSQL system, Oracle brings enterprise robustness and stability to the NoSQL landscape."

Propagation and migration

As Marie-Anne Neimat made clear to us this morning, the "No" in "Oracle NoSQL" truly does mean no. "The data is sharded around many nodes. For each partition, there is a master and several copies. The updates always go to the master copy, and are then propagated to the other copies, either synchronously or asynchronously," she explains. "But it's not an Oracle-style database; it's a key/value store."
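Neimat's description of sharding, with a master per partition receiving all updates, implies that clients must know which node holds the master for a given key. A minimal client-side router, assuming a hash-based key-to-partition mapping (the approach the article attributes to Oracle's client library). The hash function (SHA-256), partition count and partition-to-node map are illustrative assumptions; the article does not say which hash Oracle NoSQL uses:

```python
import hashlib


class ClientRouter:
    """Client-side placement: key -> partition (by hash) -> node holding that partition's master."""

    def __init__(self, n_partitions: int, master_of: dict):
        self.n_partitions = n_partitions
        self.master_of = master_of  # partition id -> node name (hypothetical)

    def partition_for(self, key: str) -> int:
        # Use a stable digest rather than Python's built-in hash(), which is
        # randomized per process for strings; every client must agree on placement.
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % self.n_partitions

    def node_for(self, key: str) -> str:
        # Updates always go to the master; the master then propagates to the copies.
        return self.master_of[self.partition_for(key)]


if __name__ == "__main__":
    router = ClientRouter(8, {p: f"node{p % 3}" for p in range(8)})
    print(router.partition_for("Scott Fulton"), router.node_for("Scott Fulton"))
```

Because placement is computed on the client, no central lookup service sits on the request path; the cost is that every client must share the same partition map.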

In Oracle's scheme, as with other NoSQL databases, the data is distributed over multiple storage nodes in the network by way of hashing algorithms. For an application to get or put data, it uses a client library, which in this case is a Java API. The library is aware of the hashing algorithm, which lets it determine the identity of the node where data resides.

Now that Oracle is in the business of producing both principal types of database managers, which applications are best suited for SQL and which for NoSQL? "Our view at the moment is that NoSQL's main advantage is the flexibility of the schema," responds Neimat. "With a relational database and SQL, you pretty much have to decide the tables and the attributes you will have. And yes, you may evolve them over time, but it's still a big deal to evolve a relational schema. But with a NoSQL database, you have the advantage that the schema is in the eyes of the developer, and can evolve over time. It does put some of the burden of the knowledge of the schema on the application writers, so it's a little harder to write applications. On the other hand, you have the benefit of flexibility.

"As things settle down," she continues, "and one knows exactly what the schema should be, one can envision migrating to a SQL database... What Oracle wants to do is provide our customers with all means of managing data, and all means of migrating data from one model to the other to make life easier, and to provide them with the best enterprise-level support."

Many of the leading open source NoSQL models available today, including Neo4j and Infinite Graph, describe themselves as graph databases. They use key/value pairs as well, but data nodes are related to other data nodes by way of properties or attributes, the way a predicate modifies a subject by way of an adverb. At least for now, Neimat tells us, Oracle NoSQL should not be considered a graph database. Its storage model should instead be based around columns, the records within which may be related by way of subkeys. Here, records that are closely associated with one another are stored and indexed in the immediate vicinity of one another to ensure fast lookup times. But records that are more incidentally associated with one another are joined by minor keys, defined by the program but not necessarily indexed together.

Here is where the SQL concept of the record (a row in a table) is broken down, and you have to be careful to distinguish associations (as I did above) from relationships. The principal association between Oracle NoSQL records is established by what's called a major key path (for example, "Brian Fuller," "Scott Fulton," "Henry Fulton") and the subordinate associations by minor key paths (Brian's address, Scott's address, Henry's address). What SQL would call a "second-order relation" might be associated using NoSQL by a minor key path.

Down the road, an admin may have the bright idea of associating the name column with the filenames of each person's online avatar (Brian's mug shot, Scott's mug shot, Henry's mug shot). This can be done without reinventing the schema, Neimat explains. "The subkey model is a very convenient way to model an arbitrary number of columns, and different records for different columns, of adding attributes in an ad hoc manner and not having to plan the schema ahead of time."
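The major/minor key path model can be illustrated with a toy in-memory store. The class, method names and placeholder values are hypothetical; Oracle NoSQL exposes this model through its Java client library, which this sketch does not reproduce:

```python
from collections import defaultdict


class KeyPathStore:
    """Toy store keyed by (major key path, minor key path).

    Records sharing a major path are grouped together, so fetching
    everything for one major path is a single lookup.
    """

    def __init__(self):
        self._data = defaultdict(dict)  # major path -> {minor path -> value}

    def put(self, major: tuple, minor: tuple, value) -> None:
        self._data[major][minor] = value

    def get(self, major: tuple, minor: tuple):
        return self._data.get(major, {}).get(minor)

    def all_for(self, major: tuple) -> dict:
        return dict(self._data.get(major, {}))


if __name__ == "__main__":
    store = KeyPathStore()
    store.put(("Scott Fulton",), ("address",), "<address>")
    # Later, a new attribute is added ad hoc, with no schema change.
    store.put(("Scott Fulton",), ("avatar",), "scott.png")
    print(store.all_for(("Scott Fulton",)))
    # {('address',): '<address>', ('avatar',): 'scott.png'}
```

Adding the avatar attribute is just another minor path under the same major path, which is the "no schema planning" property Neimat describes.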

Can Oracle still claim "atomicity?"

So it might amaze some to discover that Oracle is promising atomic transactions, or "ACID capability," for all write and update functions despite the fact that consistency is now a variable. Atomic transactions will apply to records that share the same main key, says Neimat, but not necessarily the same subkeys.
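Restricting atomicity to records that share a major key is plausibly what makes the promise affordable: if those records are placed together, one node can apply a batch without a distributed commit. A toy sketch of that rule with hypothetical names; the whole-dict copy is for clarity, not efficiency, and this is not Oracle's implementation:

```python
class AtomicBatchStore:
    """Toy store that applies a batch of writes all-or-nothing,
    but only if every write in the batch shares the same major key path."""

    def __init__(self):
        self._data = {}  # (major path, minor path) -> value

    def execute(self, ops) -> None:
        """ops: list of (major, minor, value); a value of None means delete."""
        majors = {major for major, _minor, _value in ops}
        if len(majors) != 1:
            raise ValueError("a batch must contain operations on exactly one major key path")
        staged = dict(self._data)  # work on a copy so a rejected batch leaves the store untouched
        for major, minor, value in ops:
            if value is None:
                staged.pop((major, minor), None)
            else:
                staged[(major, minor)] = value
        self._data = staged  # publish all changes at once

    def get(self, major, minor):
        return self._data.get((major, minor))
```

A batch that spans two major keys is rejected before anything is written, so callers never observe a half-applied update.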

For the moment, she adds, Oracle NoSQL will not have direct support [for] the Oracle Public Cloud, meaning it won't be aware of the exclusive characteristics of the storage nodes where its databases are hosted. A future release may add data center-specific features, such as the ability for the admin to specify a recovery data center for explicit backups and recoveries, and also to outline some of the geography of a local data center versus a remote or cloud-based center. That geography may be helpful in establishing a proper order for updates to be propagated asynchronously among nodes -- for example, local nodes first.

We can now state with absolute certainty that NoSQL is no longer the alternative to major-brand database management systems. The question now is whether customers will begin perceiving Oracle as the stronger alternative in the NoSQL realm.


* I believe Scott meant to say “scale out.”

I certainly wouldn’t perceive “Oracle as the stronger alternative in the NoSQL realm.” If Larry Ellison should suddenly decide his BerkeleyDB-based contender is biting the golden apple of Oracle RDBMS profits, it would be gone in an instant.

<Return to section navigation list>
