Windows Azure and Cloud Computing Posts for 9/21/2011+

A compendium of Windows Azure, SQL Azure Database, AppFabric, Windows Azure Platform Appliance and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Apps, Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table and Queue Services

No significant articles today.


SQL Azure Database and Reporting

Samidip Basu (@samidip) described Azure Services connecting Windows Phone to Data in a 9/21/2011 post to the Silverlight Show blog:

This article is a natural continuation of my previous post on “Connecting Azure and Windows Phone through OData” and shows a different way of leveraging Azure cloud services for your Mobile applications.


Also, Peter Kuhn (aka MisterGoodcat) did a brilliant article series on “Getting Ready for the Windows Phone 7 Exam 70-599” and I wanted to expand on the Data Access Strategy section by talking more about connectivity to Windows Azure.

Prelude

In the last article (here), we talked about how OData can be very handy when providing cloud data backend support for a variety of mobile & web platforms. We simply had a SQL Azure database & used the SQL Azure services to expose the data through an OData feed straight out of Azure, with full support for CRUD operations given the right roles. However, this approach might seem brute-force for some project needs. For one, we did not have any granularity over how much of a Table’s data we wanted to expose; it was all or nothing. Also, what if you did not want to expose all of the data, but just a few operations on it? And what if you really wanted to keep your database on-premise, yet use the ubiquitous Azure services to empower Mobile applications to perform operations on the data? That’s what we talk about in this installment :)

The Database

Now, let’s say we are trying to write a Windows Phone application that interacts with data housed in a remote Database. This is obviously quite a common scenario, because it allows for the data to be utilized/consumed on other platforms. Now, for this demo, I chose to use a simple Database hosted in SQL Azure, since I find it very easy to do so. It is important to note that this Database can be just about anything, on-premise or in the cloud; as long as .NET can connect to it, we should be fine. That said, here’s my Azure Portal set-up:

You’ll notice that I have used one of my Azure subscriptions to create a “PatientData” Database in my SQL Azure server .. this will house our super-secret patient information :) Now, before moving on, you may want to copy the appropriate Connection String to this Database so that we may connect to it remotely. Now, let’s get inside our “PatientData” Database and create a simple table, also called “PatientData”, & fill in some dummy data like here:

The data schema is over-simplified: the ID field is an auto-incrementing integer & we just keep track of Patient “Name” & “Condition” – told you we were dealing with sensitive data :)

Again, this Database could be anywhere. What we want to do, however, is to have a Service that is globally accessible from mobile applications and that interacts with the backend Database, supporting only agreed-upon operations.

The Service

We want a simple service that interacts with our backend Database & can be consumed from a Windows Phone application. Again, Windows Azure seems like one of the easiest places to host such a service and make it globally accessible. So, here’s our goal:

- Create a Windows Azure cloud project

- Use ADO.NET Entity Data Model as an ORM Mapper to our backend Database

- Create a simple WCF Service inside an Azure WebRole

- Utilize the ORM context to expose specific operations through the WCF service

- When all is done & working, host the WCF service in Azure

This is how we start:

Next step, we need a way to map the relational Database table into .NET world objects .. this is where an Object Relational Mapper [ORM] steps in. For our purpose, let’s add the ADO.NET Entity Data Model to our project, like below:

As a part of adding the ADO.NET Entity Data Model, you step through a wizard that is essentially going to point to a Database table to reflect upon & provides column-mapping as Properties to a .NET object. This is where we get to utilize the “Connection String” that we had copied from the Azure Portal, so that we can point ADO.NET Entity Data Model to our SQL Azure table, as shown in the steps below:

The last image shows the generated “.edmx” file that the ADO.NET Entity Data Model created when pointed to our Database Table. That’s it! Now we have “PatientData” as an entity in our C# code. The final project structure should look like this:

Now, let’s define the “PatientService” Interface with exactly the operations we want to support & implement them. For this demo, I chose to do two – one that gets us a list of “Patients” & one that allows for adding “Patients”. Here’s the simple Interface:

using System.Collections.Generic;
using System.ServiceModel;

namespace PatientService
{
    [ServiceContract]
    public interface IPatientServiceInterface
    {
        [OperationContract]
        List<PatientData> GetPatientList();

        [OperationContract]
        string AddPatient(PatientData newPatient);
    }
}

And the implementation:

using System;
using System.Collections.Generic;
using System.Linq;

namespace PatientService
{
    public class PatientDataService : IPatientServiceInterface
    {
        #region "Interface Implementation"

        public List<PatientData> GetPatientList()
        {
            // Dispose the Entity Framework context once the query has been materialized.
            using (var context = new PatientDataEntities())
            {
                return context.PatientData.ToList();
            }
        }

        public string AddPatient(PatientData newPatient)
        {
            try
            {
                using (var context = new PatientDataEntities())
                {
                    // "PatientData" is the entity set name generated by the Entity Data Model.
                    context.AddObject("PatientData", newPatient);
                    context.SaveChanges();
                }
                return "Success!";
            }
            catch (Exception e)
            {
                return e.Message;
            }
        }

        #endregion
    }
}

Notice how we are easily able to utilize the “PatientDataEntities” mapping provided by the ADO.NET Entity Data Model as a “Context” within our service implementation. Now, with our service looking like it has all the needed pieces, we do some testing locally to make sure that the methods invoked actually reach out to our SQL Azure Database & do what they are supposed to do. If we are satisfied, the next step is hosting our service in Azure for ubiquitous availability. This is where the “Windows Azure Project” template helps, as it has already provided an Azure wrapper for our WCF Service. Here are the steps to host our simple service in Azure:

- Right Click on the “AzurePatientService” cloud project & select “Publish”

- Then, “Create Service Packages only”

- This should build our Project & produce two deliverables

  - One is the Cloud Service Configuration File (.cscfg)

  - The other is the actual Project package (.cspkg)

The steps are demonstrated below:

Once the deployment packages are created locally, you may want to copy the directory file path, as we shall need it in the next step. Now, on to actually hosting the Service in Azure: create a new “Hosted Service” in the Azure Portal, with appropriate settings. Notice how we use our local directory file path to tell Azure where to pick up the Service Configuration & Project Package from. Also, for demo purposes, we are directly making our Service live in “Production”; actual Services may benefit from being tested in “Staging” before going to “Production”.

Now, after a little wait while Azure spins up the resources needed to host our Service, voila, we are live in Azure! Let’s hit the WCF endpoint to make sure our Service is properly hosted & its metadata is accessible. If you’re with me so far, you now know how to deploy & host simple WCF Services in Azure! The rest will be easy :)

The Windows Phone Client

Now that we have our WCF Service hosted & accessible in Azure, consuming it from our Windows Phone Mango application will be standard procedure. Let us aim to build a simple Windows Phone client that leverages the two operations supported by our Service – show list of “Patients” & ability to add new “Patients”. My demo UI looks like the following:

As we start building our Windows Phone client, one of our first steps would be to “Add Service Reference” to our Service, so that our project knows the metadata & exposes methods from the Service. This is as simple as copying the URL to our Azure-hosted Service & adding it as a Reference as below:

That’s it! .NET builds the proxies & we are ready to consume our Service methods. Here’s how we request the “List of Patients” from our Service:

PatientServiceInterfaceClient PatientServiceClient = new PatientServiceInterfaceClient();
PatientServiceClient.GetPatientListCompleted += new EventHandler<GetPatientListCompletedEventArgs>(PatientServiceClient_GetPatientListCompleted);
PatientServiceClient.GetPatientListAsync();

void PatientServiceClient_GetPatientListCompleted(object sender, GetPatientListCompletedEventArgs e)
{
    // Reading e.Result throws if the call failed, so check e.Error first.
    if (e.Error != null)
    {
        MessageBox.Show(e.Error.Message, "Load Error!", MessageBoxButton.OK);
        return;
    }
    ObservableCollection<PatientData> patientList = e.Result;
    this.lstPatients.ItemsSource = patientList;
}

And the little XAML code to bind the results to UI:

<ListBox Name="lstPatients" Margin="20,0,0,60">
    <ListBox.ItemTemplate>
        <DataTemplate>
            <StackPanel Orientation="Vertical">
                <StackPanel Orientation="Horizontal">
                    <Image Source="/Images/Medicine.png" Height="50" Width="50"/>
                    <TextBlock Text="{Binding Name}" FontSize="40" Margin="10,0,0,0" Style="{StaticResource PhoneTextAccentStyle}"/>
                </StackPanel>
                <TextBlock Text="{Binding Condition}" FontSize="32" Margin="60,0,0,20"/>
            </StackPanel>
        </DataTemplate>
    </ListBox.ItemTemplate>
</ListBox>

Here’s how we use the Service to “Add Patients”:

// Instantiate the client.
PatientServiceInterfaceClient PatientServiceClient = new PatientServiceInterfaceClient();

PatientData newPatient = new PatientData();
newPatient.Name = this.txtName.Text.Trim();
newPatient.Condition = this.txtCondition.Text.Trim();

// Invoke method asynchronously.
PatientServiceClient.AddPatientCompleted += new EventHandler<AddPatientCompletedEventArgs>(PatientServiceClient_AddPatientCompleted);
PatientServiceClient.AddPatientAsync(newPatient);

void PatientServiceClient_AddPatientCompleted(object sender, AddPatientCompletedEventArgs e)
{
    // e.Result throws if the call itself failed, so fall back to the error message.
    string result = (e.Error != null) ? e.Error.Message : e.Result;
    if (result == "Success!")
    {
        MessageBox.Show("Voila, Saved to the Cloud :)", "All Done!", MessageBoxButton.OK);
    }
    else
    {
        MessageBox.Show(result, "Save Error!", MessageBoxButton.OK);
    }
    // Both outcomes return to the patient list.
    this.NavigationService.Navigate(new Uri("/PatientList.xaml", UriKind.Relative));
}

Conclusion

So, what we talked about so far were ways in which Azure can be of much benefit in hosting WCF Services, which in turn communicate with remote Databases & can easily provide CRUD operation functionality against your backend server. You could take this concept & make your services do just about anything you want .. Azure just makes hosting a snap, with high availability & failover. And each of these Services can be easily consumed from Mobile applications, like the Windows Phone one in our case. Hope this article demonstrated some techniques & got you thinking about how to Service-enable your Mobile applications. Thanks a lot for reading & cheers to SilverlightShow.

Samidip is a technologist and gadget-lover working as a Manager and Solutions Lead for Sogeti out of the Columbus OH Unit.


MarketPlace DataMarket and OData

No significant articles today.


Windows Azure AppFabric: Apps, Access Control, WIF and Service Bus

Neil MacKenzie (@mknz) explained Windows Azure AppFabric Service Bus Brokered Messaging in a 9/21/2011 post:

At Build 2011, Microsoft released the Windows Azure AppFabric Service Bus Brokered Messaging feature which has been previewed in the AppFabric Labs environment over the last few months. Service Bus Brokered Messaging provides a sophisticated publish/subscribe mechanism supporting disconnected communication between many producers and many consumers. This capability can be used to support load leveling among one or more producers and load balancing among one or more consumers, thereby supporting increased scalability of a service.

Clemens Vasters (@clemensv) announced the release on the Windows Azure Team blog. The MSDN documentation for Windows Azure AppFabric v1.5 release, including Brokered Messaging, is here. Valery Mizonov (@TheCATerminator), of the Windows Azure Customer Advisory Team, has a post on Best Practices for Leveraging Windows Azure Service Bus Brokered Messaging API. Rick Garibay (@rickggaribay) has a post describing the differences between the preview and release versions that includes links to additional resources. Alan Smith (@alansmith) has a post providing an AppFabric Walkthrough: Simple Brokered Messaging (1st in a series). There is also a post comparing Windows Azure Storage Queues with AppFabric Service Bus Brokered Messaging.

This post does not cover the WCF bindings and the REST API for Brokered Messaging that are also contained in the release. The post is a replacement for an earlier post describing the preview release of Service Bus Brokered Messaging.

Intro to Brokered Messaging

The name brokered messaging is used to distinguish the functionality from direct messaging in which a producer communicates directly with a consumer, and relayed messaging in which a producer communicates with a consumer through a relay. Both direct messaging and relayed messaging are subject to backpressure from the consumer being limited in how fast it can consume messages, and this causes the producer to throttle message production thereby limiting service scalability. By contrast, brokered messaging uses a high-capacity intermediate store to consume and durably store messages which can then be pulled by the consumer. The benefit of this is that the producer and consumer can scale independently of each other since the intermediate message broker buffers any difference in the real-time ability of the producer to send messages and the consumer to receive them.
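The load-leveling argument can be sketched with a small simulation. This is not Service Bus code: the broker is reduced to a single backlog counter, and the per-tick arrivals and consumer capacity are made-up inputs. It only shows producers sending a burst without ever being throttled while the consumer drains the backlog at its own pace.

```csharp
using System;
using System.Collections.Generic;

// A hypothetical, tick-based simulation of load leveling through a broker.
// It is not Service Bus code: the broker is reduced to a backlog counter.
public static class LoadLevelingSimulation
{
    // arrivals[t] = messages the producers send during tick t.
    // capacity    = messages the consumer can process per tick.
    // drainTicks  = extra ticks with no new arrivals, to watch the backlog empty.
    // Returns the backlog held by the broker at the end of each tick.
    public static int[] BacklogPerTick(int[] arrivals, int capacity, int drainTicks)
    {
        var backlog = new List<int>();
        int depth = 0;
        for (int t = 0; t < arrivals.Length + drainTicks; t++)
        {
            // Producers are never throttled: everything they send is accepted.
            depth += t < arrivals.Length ? arrivals[t] : 0;
            // The consumer works at its own pace and takes at most `capacity` messages.
            depth -= Math.Min(depth, capacity);
            backlog.Add(depth);
        }
        return backlog.ToArray();
    }
}
```

For example, BacklogPerTick(new[] { 5, 0, 0 }, 2, 0) yields backlogs of 3, 1 and 0: the burst of five is accepted immediately and the consumer catches up two ticks later.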

The Windows Azure AppFabric Service Bus supports two distinct forms of brokered messaging:

- Queues

- Topics & Subscriptions

Queues represent a persistent sequenced buffer into which one or more producers send messages to the tail and one or more consumers compete to receive messages from the head. A queue has a single cursor, pointing to the current message, that is shared among all consumers. This cursor is moved each time a consumer receives a message. Service Bus Queues provide various methods to modify that default handling – including the timed visibility of messages on the queue, the deferral of message processing, and the ability of messages to reappear on the queue.
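As a rough mental model of the default queue behavior, the following in-memory sketch keeps one buffer and one cursor shared by every consumer, so competing consumers never see the same message. The InMemoryQueue type is hypothetical and deliberately omits the locking, deferral and redelivery features that the Service Bus adds on top.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory model of a queue (not the Service Bus API):
// one buffer, one cursor shared by all consumers.
public sealed class InMemoryQueue<T>
{
    private readonly List<T> _buffer = new List<T>();
    private int _cursor; // the single cursor shared by every consumer

    // Producers append to the tail.
    public void Send(T message) => _buffer.Add(message);

    // Whichever consumer calls next gets the message at the head and advances
    // the shared cursor, so no two consumers receive the same message.
    public bool TryReceive(out T message)
    {
        if (_cursor < _buffer.Count)
        {
            message = _buffer[_cursor++];
            return true;
        }
        message = default(T);
        return false;
    }
}
```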

A topic supports a persistent sequenced buffer into which one or more producers send messages at the tail. The buffer has multiple heads, referred to as subscriptions, each of which receives distinct copies of the messages. One or more consumers compete to receive messages from the head of a subscription. Each subscription has its own cursor that is moved each time a message is retrieved. A subscription can be filtered so that only a subset of messages is available through it. This supports scenarios in which geographically focused subscriptions contain messages only for particular regions while an auditing subscription contains all the messages.
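Along the same lines, a topic can be modeled as one send path feeding several subscriptions, each with its own buffer (and therefore its own cursor) and a filter. InMemoryTopic and its predicate-based filters are hypothetical stand-ins for the real filter and rule types; the test mirrors the regional-plus-audit example from the text.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical in-memory model of a topic with filtered subscriptions
// (not the Service Bus API; filters are plain predicates here).
public sealed class InMemoryTopic<T>
{
    private readonly Dictionary<string, Func<T, bool>> _filters = new Dictionary<string, Func<T, bool>>();
    private readonly Dictionary<string, Queue<T>> _subscriptions = new Dictionary<string, Queue<T>>();

    // Each subscription gets its own buffer, i.e. its own independent cursor.
    public void AddSubscription(string name, Func<T, bool> filter)
    {
        _filters[name] = filter;
        _subscriptions[name] = new Queue<T>();
    }

    // A sent message is added to every subscription whose filter accepts it;
    // subscriptions whose filter rejects it never see it.
    public void Send(T message)
    {
        foreach (var pair in _filters)
        {
            if (pair.Value(message))
                _subscriptions[pair.Key].Enqueue(message);
        }
    }

    // Consumers of one subscription compete for that subscription's head.
    public bool TryReceive(string subscription, out T message)
    {
        Queue<T> buffer = _subscriptions[subscription];
        if (buffer.Count > 0)
        {
            message = buffer.Dequeue();
            return true;
        }
        message = default(T);
        return false;
    }

    public int Count(string subscription) => _subscriptions[subscription].Count;
}
```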

TokenProvider Classes

The AppFabric ServiceBus v1.5 release introduced the following classes, derived from TokenProvider, to support authentication with Service Bus Brokered Messaging.

The TokenProvider class exposes factory methods to create instances of the various token providers. The factory methods for the SharedSecretTokenProvider support the creation of a token provider from the issuer and shared secret for the service bus namespace.

These classes are all in the Microsoft.ServiceBus namespace in the Microsoft.ServiceBus.dll assembly.

NamespaceManager Class

The NamespaceManager and NamespaceManagerSettings classes are used to manage the Windows Azure AppFabric Service Bus namespace and, more specifically, the rendezvous endpoints used in Brokered Messaging. It exposes methods supporting the creation and deletion of queues, topics and subscriptions. The NamespaceManagerSettings class exposes the OperationTimeout and the TokenProvider used with operations performed by an associated NamespaceManager.

The NamespaceManager class is declared (in truncated form):

public sealed class NamespaceManager {
    public NamespaceManager(String address, NamespaceManagerSettings settings);
    public NamespaceManager(Uri address, NamespaceManagerSettings settings);
    public NamespaceManager(String address, TokenProvider tokenProvider);
    public NamespaceManager(Uri address, TokenProvider tokenProvider);

    public Uri Address { get; }
    public NamespaceManagerSettings Settings { get; }

    public QueueDescription CreateQueue(String path);
    public QueueDescription CreateQueue(QueueDescription description);
    public SubscriptionDescription CreateSubscription(String topicPath, String name, Filter filter);
    public SubscriptionDescription CreateSubscription(String topicPath, String name,
        RuleDescription ruleDescription);
    public SubscriptionDescription CreateSubscription(SubscriptionDescription description,
        RuleDescription ruleDescription);
    public SubscriptionDescription CreateSubscription(String topicPath, String name);
    public SubscriptionDescription CreateSubscription(SubscriptionDescription description,
        Filter filter);
    public SubscriptionDescription CreateSubscription(SubscriptionDescription description);
    public TopicDescription CreateTopic(String path);
    public TopicDescription CreateTopic(TopicDescription description);
    public void DeleteQueue(String path);
    public void DeleteSubscription(String topicPath, String name);
    public void DeleteTopic(String path);
    public QueueDescription GetQueue(String path);
    public IEnumerable<QueueDescription> GetQueues();
    public IEnumerable<RuleDescription> GetRules(String topicPath, String subscriptionName);
    public SubscriptionDescription GetSubscription(String topicPath, String name);
    public IEnumerable<SubscriptionDescription> GetSubscriptions(String topicPath);
    public TopicDescription GetTopic(String path);
    public IEnumerable<TopicDescription> GetTopics();
    public Boolean QueueExists(String path);
    public Boolean SubscriptionExists(String topicPath, String name);
    public Boolean TopicExists(String path);
}

The constructors provide various ways of creating a NamespaceManager from a full namespace URI and a token provider, either directly or through a NamespaceManagerSettings instance. There are also asynchronous versions of all the methods.

The ServiceBusEnvironment class contains some helper methods to create the appropriate namespace URI. For example, the following code creates a shared secret token provider and a service URI – and uses them to create a NamespaceManager instance:

String serviceNamespace = "mynamespace";
String issuer = "owner";
String issuerKey = "base64-encoded key";

TokenProvider tokenProvider = TokenProvider.CreateSharedSecretTokenProvider(
    issuer, issuerKey);
Uri serviceUri = ServiceBusEnvironment.CreateServiceUri("sb", serviceNamespace,
    String.Empty);
NamespaceManager namespaceManager = new NamespaceManager(serviceUri, tokenProvider);

The NamespaceManager class provides an extensive set of methods supporting the management of queues, topics and subscriptions – including the creation, deletion and existence checking of them. It also provides methods allowing for the retrieval of the properties of either a specific queue, topic and subscription, or all of each type. Each of these methods is available in both synchronous and asynchronous versions.

The following example uses a NamespaceManager instance to create a queue asynchronously, and then synchronously a topic and an associated subscription.

IAsyncResult iAsyncResult = namespaceManager.BeginCreateQueue(
    "myqueue",
    (result) =>
    {
        namespaceManager.EndCreateQueue(result);
    },
    null);

TopicDescription topicDescription = namespaceManager.CreateTopic("mytopic");
SubscriptionDescription subscriptionDescription =
    namespaceManager.CreateSubscription(topicDescription.Path, "mysubscription");

The delete and existence-checking methods are invoked similarly to the create methods.

Note that service scalability can be significantly improved by using asynchronous calls rather than blocking synchronous calls. This is particularly true when receiving messages from queues and subscriptions. For clarity, this post primarily uses synchronous calls because it is easier to understand what is going on. However, the Valery Mizonov post on Best Practices for Leveraging Windows Azure Service Bus Brokered Messaging API has an extensive discussion on the robust use of asynchronous calls.

Note that, other than to avoid namespace collisions, no knowledge of how the namespace is allocated to queues, topics and subscriptions is needed when using the Brokered Messaging API. However, for completeness, with a service named myservice, a queue named myqueue, a topic named mytopic and a subscription named mysubscription, the following are examples of the full address for a queue, a topic and a subscription respectively:

- https://myservice.windows.net/myqueue

- https://myservice.windows.net/mytopic

- https://myservice.windows.net/mytopic/subscriptions/mysubscription

QueueDescription, TopicDescription and SubscriptionDescription

The QueueDescription, TopicDescription and SubscriptionDescription classes are used with the NamespaceManager factory methods to parameterize the creation of queues, topics and subscriptions. It is interesting to look at the properties of these classes since they provide a direct way of understanding the functionality exposed through queues, topics and subscriptions. In particular, when displayed in tabular form it becomes immediately apparent which properties affect message sending and which affect message retrieval.

- DefaultMessageTimeToLive specifies the default time to live for a message.

- DuplicateDetectionHistoryTimeWindow specifies the default time window for duplicate message detection.

- EnableBatchedOperations specifies whether server-side batched operations are enabled.

- EnableDeadLetteringOnFilterEvaluationExceptions specifies whether messages encountering filter-evaluation exceptions on a subscription are sent to the dead letter subqueue.

- EnableDeadLetteringOnMessageExpiration specifies whether messages which have reached their time-to-live are sent to the dead letter subqueue.

- LockDuration specifies the default value for the lock duration when a message is retrieved using the PeekLock receive mode.

- MaxDeliveryCount specifies the maximum number of delivery attempts before a message is sent to the dead letter subqueue.

- MaxSizeInMegabytes specifies the maximum size in megabytes of the queue. Note that the current quotas allow for queues up to 5 GB.

- MessageCount returns the number of messages in the queue or subscription.

- Name specifies the name of a subscription.

- Path specifies the name of a queue or topic.

- RequiresDuplicateDetection specifies whether the queue or topic implements duplicate detection.

- RequiresSession specifies whether a MessageSession receiver must be used to retrieve messages from the queue or subscription.

- SizeInBytes returns the size of the queue or topic.

- TopicPath specifies the topic path for a subscription.

BrokeredMessage

The BrokeredMessage class represents a brokered message to be sent to a queue or topic or retrieved from a queue or subscription. It is declared:

public sealed class BrokeredMessage : IXmlSerializable, IDisposable {
    public BrokeredMessage(Object serializableObject, XmlObjectSerializer serializer);
    public BrokeredMessage(Stream messageBodyStream, Boolean ownsStream);
    public BrokeredMessage();
    public BrokeredMessage(Object serializableObject);

    public String ContentType { get; set; }
    public String CorrelationId { get; set; }
    public Int32 DeliveryCount { get; }
    public DateTime EnqueuedTimeUtc { get; }
    public DateTime ExpiresAtUtc { get; }
    public String Label { get; set; }
    public DateTime LockedUntilUtc { get; }
    public Guid LockToken { get; }
    public String MessageId { get; set; }
    public IDictionary<String,Object> Properties { get; }
    public String ReplyTo { get; set; }
    public String ReplyToSessionId { get; set; }
    public DateTime ScheduledEnqueueTimeUtc { get; set; }
    public Int64 SequenceNumber { get; }
    public String SessionId { get; set; }
    public Int64 Size { get; }
    public TimeSpan TimeToLive { get; set; }
    public String To { get; set; }

    public void Abandon();
    public IAsyncResult BeginAbandon(AsyncCallback callback, Object state);
    public IAsyncResult BeginComplete(AsyncCallback callback, Object state);
    public IAsyncResult BeginDeadLetter(AsyncCallback callback, Object state);
    public IAsyncResult BeginDeadLetter(String deadLetterReason,
        String deadLetterErrorDescription, AsyncCallback callback, Object state);
    public IAsyncResult BeginDefer(AsyncCallback callback, Object state);
    public void Complete();
    public void DeadLetter();
    public void DeadLetter(String deadLetterReason, String deadLetterErrorDescription);
    public void Defer();
    public void EndAbandon(IAsyncResult result);
    public void EndComplete(IAsyncResult result);
    public void EndDeadLetter(IAsyncResult result);
    public void EndDefer(IAsyncResult result);
    public T GetBody<T>();
    public T GetBody<T>(XmlObjectSerializer serializer);

    public void Dispose();
    public override String ToString();
    XmlSchema IXmlSerializable.GetSchema();
    void IXmlSerializable.ReadXml(XmlReader reader);
    void IXmlSerializable.WriteXml(XmlWriter writer);
}

The BrokeredMessage class exposes several constructors as well as various methods to manage messages received using the PeekLock receive mode:

- Abandon() to have the queue or subscription unlock the message and make it visible to consumers.

- Complete() to have the queue or subscription delete the message.

- DeadLetter() to have the queue or subscription transfer the message to the dead letter subqueue.

- Defer() to have the queue or subscription defer the message for subsequent processing.

The dead letter subqueue is a store where messages can be kept for out-of-band processing. This is typically used when there is a problem with the message which needs to be investigated outside normal processing. Note that a deferred message can only be received by the explicit provision of the sequence number for the message. Consequently, it is essential to save the sequence number for later use before invoking Defer().
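The four PeekLock settlement calls can be illustrated with a small in-memory model. PeekLockQueue and Settlement are hypothetical names, not part of Microsoft.ServiceBus; the model only captures where each message ends up: Abandon() makes it visible again, Complete() deletes it, DeadLetter() moves it to a separate store, and Defer() hides it so that it can only be fetched again by sequence number. Lock timeouts, delivery counts and MaxDeliveryCount are left out.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical settlement options for the model below (not the Service Bus API).
public enum Settlement { Abandon, Complete, DeadLetter, Defer }

// Hypothetical in-memory model of PeekLock settlement.
public sealed class PeekLockQueue
{
    private readonly SortedDictionary<long, string> _visible = new SortedDictionary<long, string>();
    private readonly Dictionary<long, string> _locked = new Dictionary<long, string>();
    private readonly Dictionary<long, string> _deferred = new Dictionary<long, string>();
    private long _nextSequenceNumber = 1;

    public List<string> DeadLetters { get; } = new List<string>();

    // Every message gets a unique, increasing sequence number.
    public long Send(string body)
    {
        long sequenceNumber = _nextSequenceNumber++;
        _visible[sequenceNumber] = body;
        return sequenceNumber;
    }

    // Locks the oldest visible message: it is hidden from other consumers until settled.
    public bool TryReceive(out long sequenceNumber, out string body)
    {
        if (_visible.Count == 0) { sequenceNumber = 0; body = null; return false; }
        var head = _visible.First();
        _visible.Remove(head.Key);
        _locked[head.Key] = head.Value;
        sequenceNumber = head.Key;
        body = head.Value;
        return true;
    }

    // Only a currently locked message can be settled.
    public void Settle(long sequenceNumber, Settlement settlement)
    {
        string body = _locked[sequenceNumber];
        _locked.Remove(sequenceNumber);
        switch (settlement)
        {
            case Settlement.Abandon: _visible[sequenceNumber] = body; break;  // visible again
            case Settlement.Complete: break;                                 // deleted
            case Settlement.DeadLetter: DeadLetters.Add(body); break;       // out-of-band store
            case Settlement.Defer: _deferred[sequenceNumber] = body; break;  // by number only
        }
    }

    // TryReceive never returns a deferred message; the caller must supply its number.
    public bool TryReceiveDeferred(long sequenceNumber, out string body)
    {
        if (!_deferred.TryGetValue(sequenceNumber, out body)) return false;
        _deferred.Remove(sequenceNumber);
        return true;
    }
}
```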

These methods exist in both synchronous and asynchronous form. The BrokeredMessage class also exposes various accessor methods to the message body. The BrokeredMessage class has several properties which help describe some of the features of brokered messaging.

ContentType is an application-specific value that can be used to specify the type of data in the message. The CorrelationId is used with the CorrelationFilter to correlate two-way messaging between the producer and consumer. DeliveryCount specifies the number of times the message has been delivered to a consumer. EnqueuedTimeUtc specifies when the message was sent to the queue or topic. ExpiresAtUtc specifies when the message expires on the queue or subscription. Label is an application-specific label for the message. LockedUntilUtc specifies the unlock time for a message received using the PeekLock receive mode. LockToken specifies the lock token of the message received using the PeekLock receive mode. The MessageId is an application-specific identifier for a message.

The Properties collection contains a set of application-specific properties which can be used, instead of the message body, to pass message content. The Properties collection is also accessible during the rule processing for message retrieval from a subscription. ReplyTo contains the name of a queue to which any reply should be sent. The ReplyToSessionId specifies a session the message should be replied to. ScheduledEnqueueTimeUtc specifies a future time when a new message will become visible on the queue or topic. By default, a message becomes visible immediately. The SequenceNumber is a Service Bus provided unique identifier for the message.

The SessionId identifies a session, if any, that the message belongs to.

Size specifies the size of the message in bytes. TimeToLive specifies the time-to-live of the message after which it is either deleted or moved to the dead letter subqueue. With regard to To, your guess is as good as mine.

ReceiveMode

A consumer can receive a message in one of two receive modes: PeekLock (the default) and ReceiveAndDelete. In PeekLock receive mode, the consumer receives the message, which stays on the queue but is invisible to other consumers. If the consumer abandons the message, either explicitly or by letting the lock time out, the message becomes visible again; if the consumer completes processing successfully, it deletes the message. This mode supports at-least-once processing, since each message is consumed one or more times. In ReceiveAndDelete mode, the message is deleted from the queue as soon as the consumer receives it. This mode provides at-most-once processing, since each message is consumed at most once. A message can be lost in ReceiveAndDelete mode if the consumer fails while processing it.
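The practical difference between the two modes shows up when a consumer crashes mid-message. The simulation below is a hypothetical sketch (SimulatedReceiveMode is not the real ReceiveMode enum): a first consumer takes the head message and fails, then a healthy consumer drains what is left. It models the lock timeout simply as the message reappearing at the head, and it does not model the duplicate processing that at-least-once delivery can also cause when a consumer fails after processing a message but before calling Complete().

```csharp
using System;
using System.Collections.Generic;

// Hypothetical enum, deliberately not named ReceiveMode to avoid confusion with the real type.
public enum SimulatedReceiveMode { PeekLock, ReceiveAndDelete }

public static class ReceiveModeSimulation
{
    // A first consumer receives the head message and crashes before finishing it.
    // A second, healthy consumer then drains the queue. Returns what it processes.
    public static List<string> ProcessedAfterCrash(SimulatedReceiveMode mode, IEnumerable<string> messages)
    {
        var pending = new List<string>(messages);
        if (pending.Count == 0) return pending;

        // The first consumer takes the head message.
        string inFlight = pending[0];
        pending.RemoveAt(0);

        if (mode == SimulatedReceiveMode.PeekLock)
        {
            // Never completed: once the lock times out the message is visible again.
            pending.Insert(0, inFlight);
        }
        // ReceiveAndDelete: the message was deleted on receipt, so it is simply lost.

        // The healthy consumer processes everything still on the queue.
        return pending;
    }
}
```

With PeekLock both m1 and m2 are eventually processed; with ReceiveAndDelete, m1 is lost.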

MessagingFactory and MessagingClientEntity

Messages are sent and received using instances of classes in the MessagingClientEntity hierarchy:

MessagingClientEntity
    MessageReceiver
        MessageSession
    MessageSender
    MessagingFactory
    QueueClient
    SubscriptionClient
    TopicClient

The MessagingFactory class contains factory methods that can be used to create a MessagingFactory instance, which can then be used to create instances of the MessageReceiver, MessageSender, QueueClient, SubscriptionClient and TopicClient classes. The QueueClient and SubscriptionClient classes expose methods to create MessageSession instances.

The QueueClient class is used to send messages to and receive messages from a queue. The TopicClient class is used to send messages to a topic while the SubscriptionClient class is used to receive messages from a subscription to a topic. The MessageSender and MessageReceiver classes abstract the sending and receiving functionality so that they can be used against either queues or topics and subscriptions. The MessageSession class exposes functionality allowing queue and subscription receivers to receive related messages as a “session.” After a receiver receives a message in a session, all messages in that session are reserved for that receiver.
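Session affinity can be pictured as an ownership table: the first receiver to accept a session owns it, and other receivers cannot take messages from it. SessionBroker below is a hypothetical model of that rule only, not the MessageSession API; it ignores session lock timeouts and session state.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical model of session affinity (not the MessageSession API).
public sealed class SessionBroker
{
    private readonly List<string> _sessionOrder = new List<string>();
    private readonly Dictionary<string, Queue<string>> _messages = new Dictionary<string, Queue<string>>();
    private readonly Dictionary<string, string> _owners = new Dictionary<string, string>();

    public void Send(string sessionId, string body)
    {
        if (!_messages.ContainsKey(sessionId))
        {
            _messages[sessionId] = new Queue<string>();
            _sessionOrder.Add(sessionId); // remember arrival order of sessions
        }
        _messages[sessionId].Enqueue(body);
    }

    // Hands the receiver the first unowned session that still has messages, or null.
    public string AcceptSession(string receiverId)
    {
        foreach (string id in _sessionOrder)
        {
            if (!_owners.ContainsKey(id) && _messages[id].Count > 0)
            {
                _owners[id] = receiverId; // from now on only this receiver sees the session
                return id;
            }
        }
        return null;
    }

    // Only the owning receiver can take messages from a session, in order.
    public bool TryReceive(string receiverId, string sessionId, out string body)
    {
        body = null;
        if (!_owners.TryGetValue(sessionId, out string owner) || owner != receiverId) return false;
        Queue<string> queue = _messages[sessionId];
        if (queue.Count == 0) return false;
        body = queue.Dequeue();
        return true;
    }
}
```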

QueueClient

The QueueClient class is to some extent a superset of the TopicClient and SubscriptionClient classes. This is because it has to handle both the sending and receiving of messages. It lacks the functionality related to rules, filters and actions that the SubscriptionClient exposes but sending to a topic is pretty much the same as sending to a queue.

The following is a severely truncated class declaration for the QueueClient class:

public abstract class QueueClient : MessageClientEntity {

public MessagingFactory MessagingFactory { get; }

public String Path { get; }

public Int32 PrefetchCount { get; set; }

public static String FormatDeadLetterPath(String queuePath);

public MessageSession AcceptMessageSession();

public void Abandon(Guid lockToken);

public void Complete(Guid lockToken);

public void Defer(Guid lockToken);

public void DeadLetter(Guid lockToken);

public BrokeredMessage Receive();

public void Send(BrokeredMessage message);

public ReceiveMode Mode { get; }

}

The PrefetchCount property is a performance enhancement that specifies how many messages the client should retrieve in advance, before they are requested by calls to Receive(). Note that, in ReceiveAndDelete mode, this can lead to message loss if the consumer fails before it has completely processed the prefetched messages.

Each instance method of the class has several overloads, each with an associated asynchronous version. AcceptMessageSession() is used to request the next session (with an overload allowing a specific session to be requested). The returned MessageSession instance can then be used to retrieve the messages in that session sequentially.

The Abandon(), Complete(), DeadLetter() and Defer() methods have the same meaning as the equivalent methods in the BrokeredMessage class. The difference is that here the lock token contained in the message must be provided as a parameter.

The FormatDeadLetterPath() method is invoked to get the path for the dead letter subqueue. This path can then be used to create a QueueClient to retrieve messages from the dead letter subqueue.

A producer invokes Send() to send a message to the queue, while a consumer invokes Receive() to receive a message from the queue. Note that a message received using the ReceiveAndDelete receive mode is deleted from the queue immediately. None of the Abandon(), Complete(), Defer() and DeadLetter() functionality just described applies to such a message.

The following code fragment shows messages being sent to two separate queues:

MessagingFactory messagingFactory = MessagingFactory.Create(serviceUri, tokenProvider);

QueueClient bodySender = messagingFactory.CreateQueueClient("BodyQueue");

BrokeredMessage brokeredMessage1 = new BrokeredMessage("serializable message body");

bodySender.Send(brokeredMessage1);

QueueClient propertySender = messagingFactory.CreateQueueClient("PropertyQueue");

BrokeredMessage brokeredMessage2 = new BrokeredMessage()

{

Properties = {

{"Month", "July"},

{"NumberOfDays", 31}

}

};

propertySender.Send(brokeredMessage2);

messagingFactory.Close();

messagingFactory = null;

These show two ways of inserting information in a message: either as serializable content in the message body or as message properties. In the first case, a message containing body text is sent to a queue. In the second case, a message containing a pair of properties is sent to a different queue. Besides being convenient when a message contains only a few properties, the latter becomes essential when using subscriptions, since the Service Bus messaging broker can modify message handling based on the values of the message properties. Indeed, it can even modify the values of the properties. This functionality is not available when the actionable content is stored in the message body.

The fragment also shows the closing of the MessagingFactory instance. Each MessageClientEntity (QueueClient, TopicClient, etc.) gets its own connection, and the resources consumed by the connection should be released when no longer needed. This can be done either by invoking Close() on the individual MessageClientEntity or by invoking Close() on the MessagingFactory instance, which releases all resources created through the factory instance, including any MessageClientEntity instances created by it.

The following code fragment shows messages being retrieved from two separate queues:

QueueClient bodyReceiver = messagingFactory.CreateQueueClient("BodyQueue",

ReceiveMode.ReceiveAndDelete);

BrokeredMessage brokeredMessage3 = bodyReceiver.Receive();

String messageBody = brokeredMessage3.GetBody<String>();

bodyReceiver.Close();

QueueClient propertyReceiver = messagingFactory.CreateQueueClient("PropertyQueue");

propertyReceiver.BeginReceive(ReceiveDone, propertyReceiver);

// Callback invoked when the asynchronous receive completes.

public static void ReceiveDone(IAsyncResult result)

{

QueueClient queueClient = result.AsyncState as QueueClient;

BrokeredMessage brokeredMessage = queueClient.EndReceive(result);

// No message arrived before the receive timed out.

if (brokeredMessage == null)

{

queueClient.Close();

return;

}

// Retain the SequenceNumber: a deferred message can be retrieved only by it.

Int64 sequenceNumber = brokeredMessage.SequenceNumber;

String month = brokeredMessage.Properties["Month"] as String;

Int32 numberOfDays = (Int32)brokeredMessage.Properties["NumberOfDays"];

switch (numberOfDays)

{

case 28:

brokeredMessage.Defer();

break;

case 30:

brokeredMessage.Complete();

break;

case 31:

brokeredMessage.Abandon();

break;

}

queueClient.Close();

}

This sample shows both the synchronous and the asynchronous retrieval of messages. In the synchronous case, the actionable content is retrieved from the message body. In the asynchronous case, it is retrieved from message properties, whose values are then used to select the disposition of the message as deferred, completed or abandoned. Note that this depends on the message receive mode being the default PeekLock. The SequenceNumber needs to be retained for a deferred message, since such a message can subsequently be retrieved only by its sequence number, using the Receive() overload that takes an Int64 sequence number.
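Deferral is worth a closer look, because a deferred message drops out of ordinary receives. In the Service Bus API it is fetched again by its broker-assigned sequence number (compare the BeginReceive(Int64 sequenceNumber, ...) overload in the SubscriptionClient declaration below), so the receiver has to keep that number. The sketch below models only that bookkeeping; DeferringQueue and DeferralDemo are hypothetical in-memory types, not Service Bus classes.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical model of deferral; NOT the Service Bus API. Every message gets a
// sequence number, and a deferred message is withheld from ordinary receives
// until it is requested by that number.
public class DeferringQueue
{
    private long nextSequenceNumber = 1;
    private readonly Queue<(long SequenceNumber, string Body)> visible =
        new Queue<(long SequenceNumber, string Body)>();
    private readonly Dictionary<long, string> locked = new Dictionary<long, string>();
    private readonly Dictionary<long, string> deferred = new Dictionary<long, string>();

    public void Send(string body) => visible.Enqueue((nextSequenceNumber++, body));

    // PeekLock-style receive; deferred messages are never returned here.
    public (long SequenceNumber, string Body)? Receive()
    {
        if (visible.Count == 0) return null;
        var message = visible.Dequeue();
        locked[message.SequenceNumber] = message.Body;
        return message;
    }

    // Moves a locked message aside; only its sequence number can retrieve it.
    public void Defer(long sequenceNumber)
    {
        if (locked.TryGetValue(sequenceNumber, out string body))
        {
            locked.Remove(sequenceNumber);
            deferred[sequenceNumber] = body;
        }
    }

    // Returns null if no deferred message has this sequence number.
    public string ReceiveDeferred(long sequenceNumber)
    {
        if (!deferred.TryGetValue(sequenceNumber, out string body)) return null;
        deferred.Remove(sequenceNumber);
        return body;
    }
}

public static class DeferralDemo
{
    public static (string NextReceived, string DeferredReceived) Run()
    {
        var queue = new DeferringQueue();
        queue.Send("February");
        queue.Send("March");

        var first = queue.Receive().Value;        // "February"
        long retained = first.SequenceNumber;     // must be kept to get it back
        queue.Defer(retained);

        var second = queue.Receive().Value;       // "March"; deferred message skipped
        return (second.Body, queue.ReceiveDeferred(retained));
    }
}
```

DeferralDemo.Run() returns ("March", "February"): the second ordinary receive skips the deferred February message, which comes back only through ReceiveDeferred(retained).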

TopicClient

A TopicClient is the MessageClientEntity used to send messages to a topic. It exposes synchronous and asynchronous versions of the Send() method.

SubscriptionClient

The SubscriptionClient class is used to handle messages received from a subscription. SubscriptionClient is declared (in truncated form):

public abstract class SubscriptionClient : MessageClientEntity {

public MessagingFactory MessagingFactory { get; }

public String Name { get; }

public Int32 PrefetchCount { get; set; }

public String TopicPath { get; }

public IAsyncResult BeginAddRule(String ruleName, Filter filter, AsyncCallback callback,

Object state);

public IAsyncResult BeginAddRule(RuleDescription description, AsyncCallback callback,

Object state);

public IAsyncResult BeginRemoveRule(String ruleName, AsyncCallback callback,

Object state);

public void EndAddRule(IAsyncResult result);

public void EndRemoveRule(IAsyncResult result);

public static String FormatDeadLetterPath(String topicPath, String subscriptionName);

public static String FormatSubscriptionPath(String topicPath, String subscriptionName);

public void RemoveRule(String ruleName);

public IAsyncResult BeginAcceptMessageSession(AsyncCallback callback,

Object state);

public IAsyncResult BeginAcceptMessageSession(String sessionId, AsyncCallback callback,

Object state);

public IAsyncResult BeginAcceptMessageSession(TimeSpan serverWaitTime,

AsyncCallback callback, Object state);

public IAsyncResult BeginAcceptMessageSession(String sessionId, TimeSpan serverWaitTime,

AsyncCallback callback, Object state);

public MessageSession EndAcceptMessageSession(IAsyncResult result);

public IAsyncResult BeginAbandon(Guid lockToken, AsyncCallback callback, Object state);

public void EndAbandon(IAsyncResult result);

public IAsyncResult BeginComplete(Guid lockToken, AsyncCallback callback, Object state);

public void EndComplete(IAsyncResult result);

public IAsyncResult BeginDefer(Guid lockToken, AsyncCallback callback, Object state);

public void EndDefer(IAsyncResult result);

public IAsyncResult BeginDeadLetter(Guid lockToken, AsyncCallback callback, Object state);

public IAsyncResult BeginDeadLetter(Guid lockToken, String deadLetterReason,

String deadLetterErrorDescription, AsyncCallback callback, Object state);

public void EndDeadLetter(IAsyncResult result);

public IAsyncResult BeginReceive(AsyncCallback callback, Object state);

public IAsyncResult BeginReceive(TimeSpan serverWaitTime, AsyncCallback callback,

Object state);

public IAsyncResult BeginReceive(Int64 sequenceNumber, AsyncCallback callback,

Object state);

public BrokeredMessage EndReceive(IAsyncResult result);

public ReceiveMode Mode { get; }

}

Each Begin/End asynchronous pair of methods has an equivalent synchronous method. The Windows Azure AppFabric Service Bus team is serious about wanting us to do everything asynchronously. Much of the exposed functionality is familiar from the receive capability of a QueueClient: AcceptMessageSession(), Abandon(), Complete(), DeadLetter(), Defer() and Receive().

The novel functionality exposed by a SubscriptionClient relates to the rules used to modify the behavior of a subscription. Note that this affects the subscription, NOT the subscription receiver. Any modification to the rules made using these methods affects only those messages sent to the associated topic after the change. I’m not convinced that this functionality should be in SubscriptionClient as well as in NamespaceManager, since the methods affect the subscription, not the SubscriptionClient. As it is, care must be taken when a subscription has more than one rule, since a message can be received more than once if it satisfies more than one rule on the subscription.

Rules, Filters and Actions

The RuleDescription class associates a rule name with a Filter and an optional RuleAction. The default Filter for a RuleDescription is the TrueFilter, which always evaluates to true. A Filter uses the values of the message properties to select which of the messages sent to a topic a subscription handles; a message passes the filter when the filter evaluates to true. A RuleAction is used to modify the properties of a message when it is received through the subscription. Rules make a subscription significantly more powerful than a simple queue, since different filters and rule actions can be used for different subscriptions against the same topic. This allows different consumers to have very different views of the messages sent to the topic.

There are several Filter classes in the following hierarchy:

Filter

- CorrelationFilter

- FalseFilter

- SqlFilter

- TrueFilter

The CorrelationFilter filters on the value of the CorrelationId of a message. It is intended for correlating a reply message from a consumer with the original message from a producer: the reply is sent with the associated CorrelationId, and the original producer can use a CorrelationFilter to receive the response to a message it sent. The FalseFilter always fails, so no message is received through a subscription with a FalseFilter. A TrueFilter always succeeds, so all messages are received through a subscription with a TrueFilter. A SqlFilter supports a simple SQL-92-style syntax that can be used to filter messages depending on their property values.
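To make the filter semantics, and the earlier warning about multiple rules, concrete, here is a hypothetical in-memory model. The Sim* classes are stand-ins, not the Microsoft.ServiceBus.Messaging types, and SimPredicateFilter uses a C# lambda where the real SqlFilter would parse a SQL-92-style expression. The sketch assumes one delivered copy per matching rule.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical stand-ins for the Filter hierarchy; NOT the Service Bus classes.
public abstract class SimFilter
{
    public abstract bool Match(IDictionary<string, object> properties, string correlationId);
}

public sealed class SimTrueFilter : SimFilter
{
    public override bool Match(IDictionary<string, object> properties, string correlationId) => true;
}

public sealed class SimFalseFilter : SimFilter
{
    public override bool Match(IDictionary<string, object> properties, string correlationId) => false;
}

public sealed class SimCorrelationFilter : SimFilter
{
    private readonly string expected;
    public SimCorrelationFilter(string correlationId) { expected = correlationId; }
    public override bool Match(IDictionary<string, object> properties, string correlationId) =>
        correlationId == expected;
}

// Replaces SqlFilter's SQL-92-style text with an ordinary predicate.
public sealed class SimPredicateFilter : SimFilter
{
    private readonly Func<IDictionary<string, object>, bool> predicate;
    public SimPredicateFilter(Func<IDictionary<string, object>, bool> predicate) { this.predicate = predicate; }
    public override bool Match(IDictionary<string, object> properties, string correlationId) =>
        predicate(properties);
}

public static class SubscriptionSim
{
    // Returns the names of the rules a message satisfies. Each matching rule
    // yields one delivered copy, so overlapping rules produce duplicates.
    public static List<string> MatchingRules(IDictionary<string, SimFilter> rules,
        IDictionary<string, object> properties, string correlationId = null)
    {
        return rules.Where(rule => rule.Value.Match(properties, correlationId))
                    .Select(rule => rule.Key)
                    .ToList();
    }
}
```

For example, a July message with NumberOfDays = 31 matches both a summer-month rule and a 31-day rule, so a subscription carrying both rules would deliver it twice.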

The RuleAction classes have the following hierarchy:

RuleAction

- SqlRuleAction

SqlRuleAction supports a simple SQL-92-style syntax allowing message properties to be modified as they are received through the subscription.

The following code fragment shows the creation of a topic and two subscriptions and the sending of some messages to the topic. Note that a subscription handles only those messages submitted to the topic after the subscription is created. Similarly, a rule only affects messages submitted after the rule is added.

TokenProvider tokenProvider = TokenProvider.CreateSharedSecretTokenProvider(

issuer, issuerKey);

Uri serviceUri = ServiceBusEnvironment.CreateServiceUri("sb", serviceNamespace,

String.Empty);

NamespaceManager namespaceManager = new NamespaceManager(serviceUri, tokenProvider);

RuleDescription summerRule = new RuleDescription()

{

Action = new SqlRuleAction("SET HavingFun = 'Yes'"),

Filter = new SqlFilter("Month = 'June' OR Month = 'July' OR [Month] = 'August'"),

Name = "SummerRule"

};

TopicDescription topicDescription = namespaceManager.CreateTopic("WeatherTopic");

SubscriptionDescription summerSubscriptionDescription =

namespaceManager.CreateSubscription("WeatherTopic", "WeatherSubscription", summerRule);

SubscriptionDescription allSubscriptionDescription =

namespaceManager.CreateSubscription("WeatherTopic", "AllSubscription");

IEnumerable<RuleDescription> rules = namespaceManager.GetRules(

"WeatherTopic", "WeatherSubscription");

List<RuleDescription> listRules = rules.ToList();

MessagingFactory messagingFactory = MessagingFactory.Create(serviceUri, tokenProvider);

TopicClient weatherSender = messagingFactory.CreateTopicClient("WeatherTopic");

BrokeredMessage augustMessage = new BrokeredMessage()

{

Properties = {

{"HavingFun", "No"},

{"Month", "August"},

{"NumberOfDays", 31}

}

};

weatherSender.Send(augustMessage);

BrokeredMessage aprilMessage = new BrokeredMessage()

{

Properties = {

{"HavingFun", "No"},

{"Month", "April"},

{"NumberOfDays", 30}

}

};

weatherSender.Send(aprilMessage);

BrokeredMessage septemberMessage = new BrokeredMessage()

{

Properties = {

{"HavingFun", "No"},

{"Month", "September"},

{"NumberOfDays", 30}

}

};

weatherSender.Send(septemberMessage);

weatherSender.Close();

weatherSender = null;

This fragment creates a RuleDescription whose filter passes only messages with a Month property of June, July or August, and whose action sets the HavingFun property of those messages to Yes. A topic and two associated subscriptions are created: one is assigned this rule, while the other has no explicit rule and so uses the default TrueFilter, passing all messages. A MessagingFactory is then created which, in turn, creates a TopicClient used to send three messages with different property values to the topic.
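The expected outcome of this fragment can be worked out by hand, and the following in-memory simulation (hypothetical types, no Service Bus calls) does exactly that. It assumes, as described above, that the rule action modifies only the copy of the message delivered through WeatherSubscription, and that a subscription without an explicit rule passes everything.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical subscription model; NOT the Service Bus API.
public sealed class SimSubscription
{
    private readonly Func<Dictionary<string, object>, bool> filter;
    private readonly Action<Dictionary<string, object>> action;

    public SimSubscription(string name,
        Func<Dictionary<string, object>, bool> filter = null,
        Action<Dictionary<string, object>> action = null)
    {
        Name = name;
        this.filter = filter ?? (_ => true);   // no rule: behaves like TrueFilter
        this.action = action;
    }

    public string Name { get; }
    public List<Dictionary<string, object>> Received { get; } = new List<Dictionary<string, object>>();

    // Called by the "topic" for every message sent to it.
    public void Offer(Dictionary<string, object> properties)
    {
        if (!filter(properties)) return;
        var copy = new Dictionary<string, object>(properties);   // per-subscription copy
        action?.Invoke(copy);
        Received.Add(copy);
    }
}

public static class WeatherDemo
{
    public static (SimSubscription Summer, SimSubscription All) Run()
    {
        var summerMonths = new HashSet<string> { "June", "July", "August" };
        // Stand-ins for the SqlFilter and SqlRuleAction in SummerRule.
        var summer = new SimSubscription("WeatherSubscription",
            p => summerMonths.Contains((string)p["Month"]),
            p => p["HavingFun"] = "Yes");
        var all = new SimSubscription("AllSubscription");

        var sent = new[]
        {
            new Dictionary<string, object> { ["HavingFun"] = "No", ["Month"] = "August", ["NumberOfDays"] = 31 },
            new Dictionary<string, object> { ["HavingFun"] = "No", ["Month"] = "April", ["NumberOfDays"] = 30 },
            new Dictionary<string, object> { ["HavingFun"] = "No", ["Month"] = "September", ["NumberOfDays"] = 30 },
        };

        // A topic offers every message to every subscription.
        foreach (var message in sent)
        {
            summer.Offer(message);
            all.Offer(message);
        }
        return (summer, all);
    }
}
```

Only the August message passes SummerRule, and it arrives with HavingFun set to Yes; AllSubscription receives all three messages with HavingFun still No. That is the result the receiving fragment that follows should observe.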

The following code fragment shows the retrieval of messages from two different subscriptions to the same topic. The first subscription has a rule that filters the messages and transforms the message properties, while the second has a rule that passes all messages through without transformation.

SubscriptionClient summerReceiver = messagingFactory.CreateSubscriptionClient("WeatherTopic", "WeatherSubscription", ReceiveMode.ReceiveAndDelete);

while (true)

{

BrokeredMessage brokeredMessage = summerReceiver.Receive(TimeSpan.FromSeconds(1));

if (brokeredMessage != null)

{

String havingFun = brokeredMessage.Properties["HavingFun"] as String;

String month = brokeredMessage.Properties["Month"] as String;

brokeredMessage.Dispose();

}

else

{

break;

}

}

SubscriptionClient allReceiver = messagingFactory.CreateSubscriptionClient("WeatherTopic", "AllSubscription", ReceiveMode.ReceiveAndDelete);

while (true)

{

BrokeredMessage brokeredMessage = allReceiver.Receive(TimeSpan.FromSeconds(1));

if (brokeredMessage != null)

{

String havingFun = brokeredMessage.Properties["HavingFun"] as String;

String month = brokeredMessage.Properties["Month"] as String;

brokeredMessage.Dispose();

}

else

{

break;

}

}

messagingFactory.Close();

messagingFactory = null;

MessageSender and MessageReceiver

The MessageSender and MessageReceiver classes abstract out the sending and receiving functionality of queues, topics and subscriptions, and can be used instead of the QueueClient, TopicClient and SubscriptionClient classes. The MessageSender class exposes synchronous and asynchronous Send() methods, while the MessageReceiver class exposes the usual synchronous and asynchronous Receive(), Abandon(), Complete(), DeadLetter() and Defer() methods.

The earlier example using a SubscriptionClient to receive messages can be converted to use a MessageReceiver by replacing the following line:

SubscriptionClient summerReceiver = messagingFactory.CreateSubscriptionClient(

"WeatherTopic", "WeatherSubscription", ReceiveMode.ReceiveAndDelete);

with

MessageReceiver summerReceiver = messagingFactory.CreateMessageReceiver(

"WeatherTopic/subscriptions/WeatherSubscription", ReceiveMode.ReceiveAndDelete);

where WeatherTopic/subscriptions/WeatherSubscription is the full entity path to the WeatherSubscription on WeatherTopic.

MessageSession

Service Bus Brokered Messaging supports sessions and session state. A queue or subscription must be set up to support sessions through the use of the RequiresSession property in the QueueDescription or SubscriptionDescription. Subsequently, each message may have a SessionId associated with it. The intent is that related messages share a SessionId and comprise a session. A MessageSession receiver can then be created on the queue or subscription to receive messages with the same SessionId. A persistent session state can be associated with the session. This can be used along with the PeekLock receive mode to implement exactly once processing of messages since the session state can be used to store the current state of processing regardless of any failure in the session consumer.
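Why session state enables exactly-once processing becomes clearer with a small model. In this hypothetical sketch (not the MessageSession API), the state written by SetState() records both the processing result and the id of the last message applied, in a single write. If Complete() is lost and the message is redelivered in PeekLock mode, the consumer sees that the state already reflects it and simply completes it again. The technique relies on the processing result living inside the session state; side effects elsewhere, such as a database write, would need their own idempotency check.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for the stream passed to SetState()/returned by GetState().
public sealed class SimSessionState
{
    public int LastAppliedId { get; set; }
    public int RunningTotal { get; set; }
}

public static class SessionStateDemo
{
    // Processes (Id, Amount) messages in PeekLock fashion. The Complete() for the
    // message with id lostCompleteId is "lost" once, so that message is redelivered.
    public static int Process(IList<(int Id, int Amount)> messages, int lostCompleteId)
    {
        var state = new SimSessionState();
        var pending = new Queue<(int Id, int Amount)>(messages);
        bool completeLost = false;

        while (pending.Count > 0)
        {
            var message = pending.Peek();            // locked, still on the queue
            if (message.Id > state.LastAppliedId)
            {
                // Result and progress are written together, like one SetState() call.
                state = new SimSessionState
                {
                    LastAppliedId = message.Id,
                    RunningTotal = state.RunningTotal + message.Amount
                };
            }
            // Otherwise this is a redelivery already reflected in the state.

            if (!completeLost && message.Id == lostCompleteId)
            {
                completeLost = true;                  // crash before Complete(): lock expires
                continue;                             // the same message is delivered again
            }
            pending.Dequeue();                        // Complete()
        }
        return state.RunningTotal;
    }
}
```

With messages (1, 10), (2, 20) and (3, 30) and a lost Complete() for message 2, Process returns 60; without the LastAppliedId check, the redelivery would push the total to 80.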

The MessageSession class is derived from the MessageReceiver class and adds synchronous and asynchronous versions of the following methods to maintain session state:

public Stream GetState();

public void SetState(Stream stream);

The following code fragment shows the creation of a session-aware queue and the sending of some messages to it:

TokenProvider tokenProvider = TokenProvider.CreateSharedSecretTokenProvider(

issuer, issuerKey);

Uri serviceUri = ServiceBusEnvironment.CreateServiceUri(

"sb", serviceNamespace, String.Empty);

NamespaceManager namespaceManager = new NamespaceManager(serviceUri, tokenProvider);

QueueDescription queueDescription = new QueueDescription("SessionQueue")

{

RequiresSession = true

};

namespaceManager.CreateQueue(queueDescription);

MessagingFactory messagingFactory = MessagingFactory.Create(serviceUri, tokenProvider);

QueueClient sender = messagingFactory.CreateQueueClient("SessionQueue");

for (Int32 i = 1; i < 10; i++)

{

BrokeredMessage brokeredMessage = new BrokeredMessage()

{

MessageId = i.ToString(),

SessionId = (i % 3).ToString(),

};

sender.Send(brokeredMessage);

}

sender.Close();

The novelty in this fragment lies in the RequiresSession property of the QueueDescription and the setting of the SessionId on each message.

The following code fragment shows the use of a MessageSession to receive messages in a single session:

QueueClient receiver = messagingFactory.CreateQueueClient(

"SessionQueue", ReceiveMode.ReceiveAndDelete);

MessageSession sessionReceiver = receiver.AcceptMessageSession();

while ( true )

{

BrokeredMessage message = sessionReceiver.Receive(TimeSpan.FromSeconds(1));

if (message == null)

{

break;

}

String messageId = message.MessageId;

String sessionId = message.SessionId;

}

messagingFactory.Close();

The MessageSession receiver is created by invoking AcceptMessageSession() on the QueueClient receiver. AcceptMessageSession() reserves the next available session for this receiver, and the session receiver then receives only the messages with that session’s SessionId.
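The sender loop assigns SessionId = i % 3 to messages 1 through 9, so the queue holds three sessions of three messages each. The plain C# sketch below (no Service Bus calls; SessionGroupingSketch is a hypothetical name) computes that grouping. Which session AcceptMessageSession() hands out is up to the broker, so the receiving loop drains one of these groups, for example MessageIds 1, 4 and 7 for session "1".

```csharp
using System;
using System.Collections.Generic;

public static class SessionGroupingSketch
{
    // Mirrors the sender loop: MessageId = i and SessionId = i % 3, for i = 1..9.
    public static Dictionary<string, List<string>> GroupBySession()
    {
        var sessions = new Dictionary<string, List<string>>();
        for (int i = 1; i < 10; i++)
        {
            string sessionId = (i % 3).ToString();
            if (!sessions.TryGetValue(sessionId, out List<string> messageIds))
            {
                messageIds = new List<string>();
                sessions[sessionId] = messageIds;
            }
            messageIds.Add(i.ToString());
        }
        return sessions;
    }
}
```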

Yves Goeleven (@YvesGoeleven) described AppFabric queue support for NServiceBus in a 9/21/2011 post to his Cloud Shaper blog:

Last week at the //BUILD conference, Microsoft announced the public availability of AppFabric Queues, Topics and Subscriptions. It’s a release I have been looking forward to for quite some time, as the queues in particular are a very valuable addition to the NServiceBus toolset.

These new queues have several advantages over Windows Azure storage queues:

- Maximum message size is 256 KB, compared with 8 KB

- Throughput is a lot higher as these queues are not throttled on number of messages/second

- Supports TCP for lower latency

- You can enable exactly once delivery semantics

- For the time being they are free!

For a complete comparison between AppFabric queues and Windows Azure storage queues, this blog post appears to be a comprehensive overview: http://preps2.wordpress.com/2011/09/17/comparison-of-windows-azure-storage-queues-and-service-bus-queues/

How to configure NServiceBus to use AppFabric queues

As always, we try to make it as simple as possible. If you want to initialize the bus manually using the Configure class, just call the following extension method:

.AppFabricMessageQueue()

If you use the generic role entry point, you can enable AppFabric queue support by specifying the following profile in the service configuration file:

NServiceBus.WithAppFabricQueues

Besides one of these enablers, you also need to specify configuration settings for both your service namespace and your issuer key. These can be specified in the AppFabricQueueConfig section and are required, because NServiceBus cannot default them: there is no local development equivalent to default to.

<Setting name="AppFabricQueueConfig.IssuerKey" value="yourkey" />

<Setting name="AppFabricQueueConfig.ServiceNamespace" value="yournamespace" />

That’s it, you’re good to go. This is all you need to do to make it work.

Additional settings

If you want to further control the behavior of the queue there are a couple more settings:

- AppFabricQueueConfig.IssuerName – specifies the name of the issuer, defaults to owner

- AppFabricQueueConfig.LockDuration – specifies the duration of the message lock in milliseconds, defaults to 30 seconds

- AppFabricQueueConfig.MaxSizeInMegabytes – specifies the maximum queue size in megabytes, defaults to 1024 (1 GB); allowed values correspond to 1, 2, 3, 4 or 5 GB

- AppFabricQueueConfig.RequiresDuplicateDetection – specifies whether exactly once delivery is enabled, defaults to false

- AppFabricQueueConfig.RequiresSession – specifies whether sessions are required, defaults to false (not sure if sessions make sense in any NServiceBus use case either)

- AppFabricQueueConfig.DefaultMessageTimeToLive – specifies how long a message can stay on the queue, defaults to Int64.MaxValue, which is effectively no expiry (TimeSpan.MaxValue is roughly 10.7 million days)

- AppFabricQueueConfig.EnableDeadLetteringOnMessageExpiration – specifies whether messages should be moved to a dead letter queue upon expiration

- AppFabricQueueConfig.DuplicateDetectionHistoryTimeWindow – specifies the amount of time in milliseconds that the queue should perform duplicate detection, defaults to 1 minute

- AppFabricQueueConfig.MaxDeliveryCount – specifies the number of times a message can be delivered before being put on the dead letter queue, defaults to 5

- AppFabricQueueConfig.EnableBatchedOperations – specifies whether batching is enabled, defaults to false (this may change)

- AppFabricQueueConfig.QueuePerInstance – specifies whether NServiceBus should create a queue per instance instead of a queue per role, defaults to false
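The MaxDeliveryCount setting can be illustrated with a short model of the retry loop. This is a hypothetical sketch, not NServiceBus or Service Bus code: each failed PeekLock delivery puts the message back, and once the delivery count reaches the limit the message is moved to the dead letter queue.

```csharp
using System;

// Hypothetical sketch of MaxDeliveryCount semantics; not NServiceBus or Service Bus code.
public static class DeliveryCountSketch
{
    // handlerSucceeds receives the current delivery count (1-based).
    public static (int Deliveries, bool DeadLettered) Run(int maxDeliveryCount, Func<int, bool> handlerSucceeds)
    {
        int deliveryCount = 0;
        while (true)
        {
            deliveryCount++;                          // incremented on each delivery
            if (handlerSucceeds(deliveryCount))
                return (deliveryCount, false);        // Complete()
            if (deliveryCount >= maxDeliveryCount)
                return (deliveryCount, true);         // moved to the dead letter queue
            // Otherwise: Abandon() or lock timeout, and the message is redelivered.
        }
    }
}
```

With the default limit of 5, a handler that always fails sees five deliveries before the message is dead-lettered, while a handler that succeeds on its third attempt completes the message after three.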

Now it’s up to you

If you want to give NServiceBus on AppFabric queues a try, you can start by running either the fullduplex or pubsub sample and experiment from there.

Any feedback is welcome of course!

Clemens Vasters (@clemensv) posted Service Bus Relay Load Balancing–The Missing Feature (But Not For Much Longer!) on 9/20/2011:

Load Balancing on the Service Bus Relay is by far our #1 most requested feature now that we’ve finally got Queues and Topics in production. It’s a reasonable expectation for us to deliver that capability in one of the next production updates, and the good news is that we will. I’m not going to promise any concrete ship dates here, but it’d be sorely disappointing if that didn’t happen while the calendar still says 2011.

I just completed writing the functional spec for the feature and it’s worth communicating how the feature will show up, since there is a tiny chance that the behavioral change may affect implementations that rely on a particular exception to drive the strategy of how to perform failover.

The gist of the Load Balancing spec is that the required changes in your code and config to get load balancing are zero. With either the NetTcpRelayBinding or any of the HTTP bindings (WebHttpRelayBinding, etc.) as well as the underlying transport binding elements, you’ll just open up a second (and third and fourth … up to 25) listener on the same name, and instead of getting an AddressAlreadyInUseException as you do today, you’ll just get automatic load balancing. When a request for your endpoints shows up at Service Bus, the system will roll the dice on which of the connected listeners to route the request or connection/session to and perform the necessary handshake to make that happen.

The bottom line is that we’re effectively making the AddressAlreadyInUseException go away for the most part. It’ll still be thrown when the listeners’ policy settings don’t match up, i.e. when one listener wants to have Access Control enabled and the other one doesn’t, but otherwise you just won’t see it anymore.
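The announced behavior can be modelled in a few lines. This is a hypothetical sketch of the semantics as described, not the Service Bus API: SimRelayAddress is an invented type, InvalidOperationException stands in for AddressAlreadyInUseException on a policy mismatch, uniform random choice stands in for "rolling the dice", and rejecting a 26th listener is an assumption, since the post only says "up to 25".

```csharp
using System;
using System.Collections.Generic;

// Hypothetical model of relay load balancing; NOT the Service Bus API.
public sealed class SimRelayAddress
{
    public const int MaxListeners = 25;               // "up to 25" per the announcement
    private readonly List<string> listeners = new List<string>();
    private readonly Random dice;
    private bool? requiresAccessControl;              // policy fixed by the first listener

    public SimRelayAddress(int seed) { dice = new Random(seed); }

    public void OpenListener(string listenerId, bool accessControlEnabled)
    {
        // Stand-in for AddressAlreadyInUseException when policies differ.
        if (requiresAccessControl.HasValue && requiresAccessControl.Value != accessControlEnabled)
            throw new InvalidOperationException("Listener policy does not match.");
        // Assumed behavior beyond the documented limit.
        if (listeners.Count >= MaxListeners)
            throw new InvalidOperationException("Too many listeners on this name.");
        requiresAccessControl = accessControlEnabled;
        listeners.Add(listenerId);
    }

    // "Roll the dice": each incoming request goes to a randomly chosen listener.
    public string Route()
    {
        if (listeners.Count == 0) throw new InvalidOperationException("No listener is connected.");
        return listeners[dice.Next(listeners.Count)];
    }
}
```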

The only way this approach of just lighting up the feature may get anyone in trouble is if your application relies on that exception to create a hot/warm cluster failover scheme: an active listener on Service Bus on one node and a ‘standby’ listener on another node that keeps trying to open a listener on the same address, where the two nodes would trip over each other if they received traffic concurrently. That doesn’t seem too likely. If you have questions about this, drop me a line here in the comments, by email to clemensv at microsoft.com or on Twitter @clemensv.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

My Problems Installing Tools for Windows Azure to Visual Studio 11 Developer Preview on Windows 7 post of 9/21/2011 describes a cycle that prevents creating Windows Azure projects on Windows 7:

The Windows Azure Team posted an updated Windows Azure Toolkit for Windows 8 v1.0.1 to CodePlex on 9/21/2011:

Recommended Download

WATWindows.exe: source code, 10645K

Release Notes for v1.0.1:

Update dependency checker for install on Windows 7. Note: if you wish, you can develop your Windows Azure cloud project on Windows 7 and the client on Windows 8.

Initial release:

- Dependency Checker - Provides a streamlined means to get all the pre-requisite tools configured to start developing with Windows Azure on Windows 8

- The Windows Push Notification Service Recipe - This will simplify your development effort significantly. This recipe provides a simple managed API for authenticating against WNS, constructing payloads and posting the notification to WNS. In three lines of code you can harness the power of Toast, Tile and Badge notifications on Windows 8.

- Dev 11 Windows Metro style app - a sample application that demonstrates how to request a notification channel from WNS and register this channel with your Windows Azure service.

- VS 2010 Windows Azure Cloud Project Template

- A sample WCF REST service for your client apps to register their channel with.

- An ASP .NET MVC 3 portal to build and send Toast, Tile and Badge notifications to clients using the Windows Push Notification Service Recipe.

- An example of how to utilize Blob Storage for Tile and Toast notification images.

- Sample applications are included in the toolkit to demonstrate application scenarios using the Access Control Service, Worker Roles, Queues and the Windows Azure Marketplace Data Market.

Running the dependency checker displays the following:

Note: The instruction for correcting the missing dependency isn’t a paragon of clarity. Does anyone at Microsoft read these messages before releasing a product? It appears that all files in the updated version are dated 9/14/2011, the date of the original release.

Clicking the Download link leads to the default Visual Studio Landing page. Open the Products menu, choose Visual Studio Roadmap to open the eponymous page, click the Learn more about Visual Studio 11 Developer Preview › link to open the Visual Studio 11 Developer Preview page, and click the Download Visual Studio 11 Developer Preview › link to start the Web Installer. The following is from the Web Installer page:

Overview

Visual Studio 11 Developer Preview is an integrated development environment that seamlessly spans the entire life cycle of software creation, including architecture, user interface design, code creation, code insight and analysis, code deployment, testing, and validation. This release adds support for the most advanced Microsoft platforms, including the next version of Windows (code-named "Windows 8") and Windows Azure, and enables you to target platforms across devices, services, and the cloud. Integration with Team Foundation Server allows the entire team, from the customer to the developer, to build scalable and high-quality applications to exacting standards and requirements.

Visual Studio 11 Developer Preview is prerelease software and should not be used in production scenarios.

This preview enables you to test updates and improvements made since Visual Studio 2010, including the following:

- Support for the most advanced platforms from Microsoft, including Windows 8 and Windows Azure, as well as a host of language enhancements.

- New features such as code clone detection, code review workflow, enhanced unit testing, lightweight requirements, production IntelliTrace™, exploratory testing, and fast context switching.

This preview can be installed to run side by side with an existing Visual Studio 2010 installation. The preview provides an opportunity for developers to use the software and provide feedback before the final release. To provide feedback, please visit the Microsoft Connect website.

The .NET Framework 4.5 Developer Preview is also installed as part of Visual Studio 11 Developer Preview.

Note: This prerelease software will expire on June 30, 2012. To continue using Visual Studio 11 after that date, you will have to install a later version of the software.

In order to develop Metro style applications, the Visual Studio 11 Developer Preview must be installed on the Windows Developer Preview with developer tools English, 64-bit. Developing Metro style applications on other Preview versions of Windows 8 is not supported.

System requirements

Supported Operating Systems: Windows 7, Windows Server 2008 R2

- Windows 7 (x86 and x64)

- Windows 8 (x86 and x64)

- Windows Server 2008 R2 (x64)

- Windows Server Developer Preview (x64)

Supported Architectures:

- 32-bit (x86)

- 64-bit (x64)

Hardware Requirements:

- 1.6 GHz or faster processor

- 1 GB of RAM (1.5 GB if running on a virtual machine)

- 5.5 GB of available hard disk space

- 5400 RPM hard drive

- DirectX 9-capable video card running at 1024 x 768 or higher display resolution

Installation adds the following Start menu items:

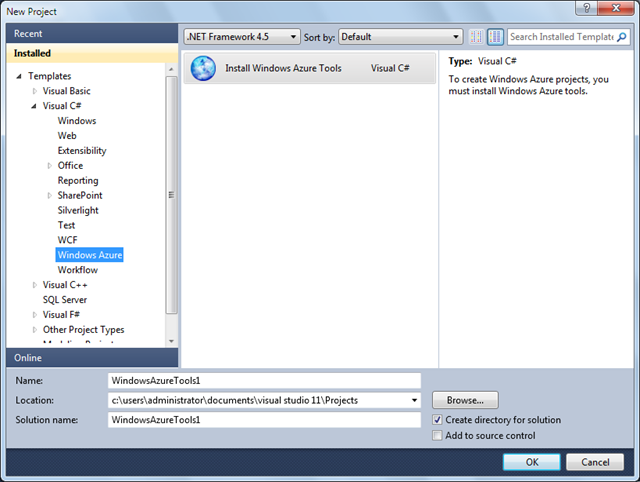

Start a new C# (or VB) Windows Azure project, which requires installing the Windows Azure Tools:

Clicking OK opens the following page:

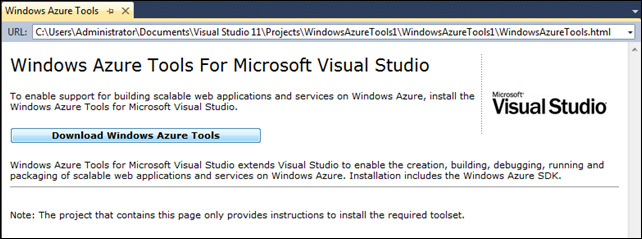

Clicking Download Windows Azure Tools opens the following Warning page:

The above appeared to me to be a cycle, so I performed a repair on the Web install by rerunning it to start Maintenance Mode:

Repair reinstalls all downloaded components:

The following error occurred shortly after starting the repair:

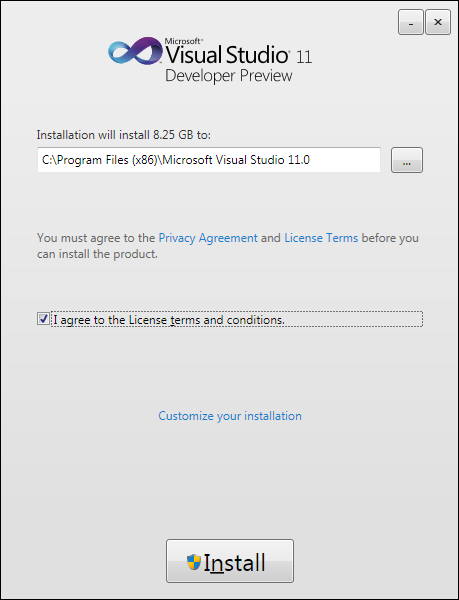

So, I removed Microsoft Visual Studio Developer Preview v11.0.40825.0, which also removed its Language Pack and Visual Studio 11 Tools for SQL Server Compact 4.0 v4.0.8821.1, and reinstalled it from the VS11_DP_CTP_ULT_ENU.iso version (1.95 GB) from MSDN Subscriber Downloads’ Visual Studio 11 Developer Preview page, which expands to 8.25 GB:

The following error appeared when installation completed, which probably is due to the existence of a SQL Server 2008 R2 .\SQLEXPRESS instance:

When launched, the Install Windows Azure Tools template appeared as above.

It will be interesting to find out what caused this problem.

Michael Collier (@MichaelCollier) posted First Look: Scaling with the Windows Azure Autoscaling Block on 9/21/2011:

A little more than a week ago, Microsoft’s Patterns & Practices team released the first preview of the new Windows Azure Autoscaling Block (WASABi). I have been exploring some of the features recently, and I must say I’m quite impressed. The block contains some very welcome functionality. It’ll be nice to see how the team continues to flesh out the block’s features. Developing an intelligent scaling solution is not as easy as it sounds. There are a lot of aspects to consider, and so far it looks like WASABi is striving to address them.

As a little disclaimer, I’m not a big user of the Enterprise Library blocks that are currently available. To be perfectly honest, I probably haven’t used them since their v1 or v2 days. So when I started with WASABi, I needed some ramp-up time just to get up to speed on how to configure the block and on the patterns for doing so. After spending some time with the Enterprise Library to learn about the new Autoscaling block, it’s obvious that I need to take another deep look at these blocks. It sure looks like there are some really nice, helpful utilities there.

To get started, let’s set up WASABi to have a very simple rule – increase the number of instances on a web role during peak business hours. In this scenario, the web role should have at least two instances at all times, and increase to 8 instances from 7am – 7pm ET. Naturally, after peak hours the count should decrease to the minimum again – 2 instances.
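To make the target behavior concrete, here is a minimal plain-C# sketch of the schedule the rules need to express. It is not WASABi code: the `ScalingPolicy` class and `TargetInstanceCount` method are hypothetical names, and the sketch assumes Eastern Daylight Time (UTC-04:00) and weekday-only peak hours, matching the rule file shown later. WASABi itself expresses this declaratively in XML.

```csharp
using System;

// Hypothetical illustration of the desired policy; not part of WASABi,
// which expresses the same thing declaratively in rules.xml.
public static class ScalingPolicy
{
    public const int MinInstances = 2;   // floor at all times
    public const int PeakInstances = 8;  // weekday business hours

    // Assumption: Eastern Daylight Time (UTC-04:00); EST would be UTC-05:00.
    static readonly TimeSpan EasternOffset = TimeSpan.FromHours(-4);
    static readonly TimeSpan PeakStart = new TimeSpan(7, 0, 0);   // 7am
    static readonly TimeSpan PeakEnd = new TimeSpan(19, 0, 0);    // 7pm

    public static int TargetInstanceCount(DateTimeOffset now)
    {
        // Express the given instant in Eastern local time.
        DateTimeOffset eastern = now.ToOffset(EasternOffset);

        bool weekday = eastern.DayOfWeek != DayOfWeek.Saturday
                    && eastern.DayOfWeek != DayOfWeek.Sunday;
        TimeSpan time = eastern.TimeOfDay;

        // Peak window is [07:00, 19:00) local time on weekdays.
        bool peak = weekday && time >= PeakStart && time < PeakEnd;
        return peak ? PeakInstances : MinInstances;
    }
}
```

Because the method accepts any `DateTimeOffset`, the same instant gives the same answer whether it is supplied in UTC (as Windows Azure reports time) or in local time.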

WASABi needs a host to run. In this walkthrough, a Windows Azure worker role will serve as the host for WASABi, the “scaler”. It will be the responsibility of this scaler role to monitor the web role and adjust its instance count according to the configured rules.

If you don’t already have WASABi, now would be a good time to get it from NuGet. I would also encourage you to watch the Channel 9 video walkthrough, which does a pretty good job of getting you going. The initial preview release does not include much in the way of developer documentation. I know the team is working on this, and I’d imagine the final bits will ship with nice, detailed documentation. In the sections that follow, I hope to clear up some areas that I found a little confusing when getting started.

Creating a Worker Role

The worker role logic itself is actually pretty simple. The real magic (a.k.a. “work”) happens in configuration. We just need to instantiate a new Autoscaler instance and then start it. The worker role code can be as simple as:

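```csharp
using System;
using System.Diagnostics;
using System.Threading;
using Microsoft.Practices.EnterpriseLibrary.Common.Configuration;
using Microsoft.Practices.EnterpriseLibrary.WindowsAzure.Autoscaling;
using Microsoft.WindowsAzure.ServiceRuntime;

public class WorkerRole : RoleEntryPoint
{
    private Autoscaler autoscaler;

    public override void Run()
    {
        try
        {
            // Resolve the Autoscaler from the Enterprise Library container, which is
            // configured from the role's app.config, then start it.
            this.autoscaler = EnterpriseLibraryContainer.Current.GetInstance<Autoscaler>();
            this.autoscaler.Start();

            // Keep Run() from returning (which would recycle the role).
            while (true)
            {
                Thread.Sleep(10000);
                Trace.WriteLine("Working", "Information");
            }
        }
        catch (Exception ex)
        {
            Trace.Write(ex);
        }
    }
}
```

With the basic code in place, we now need to configure the Autoscaler. The configuration sets up where the Autoscaler will look for rule and service information, and it lives in the worker role’s app.config file. The easiest way to get started with the configuration is the Enterprise Library Configuration extension in Visual Studio. The video walkthrough does a pretty good job of showing this, so I’ll let you watch that. Go ahead . . . I’ll wait. In my opinion, the tool is not the easiest thing to use, but it does get you going, and I would assume it will improve before the final bits ship. Like I said, it’s a starting point. A few things to note about the configuration: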

- The default logger is the LoggingBlockLogger. For some reason, I couldn’t get it to log all the messages I expected about when rules were evaluated and when the scaler triggered a scaling action, so I switched to the SourceLogger. I also copied the logging configuration (listeners, category sources, and special sources) from the app.config of the ConsoleAutoscaler sample application included in the NuGet feed for the WASABi source. That said, I’m not sure whether the logging problems I experienced were related to the logger itself or to a misconfiguration on my part (probably me).

- I created two XML configuration files for rules and services, rules.xml and services.xml (more on those below). You can easily set those as the blob names when configuring the rule and service information store elements in the app.config.

```xml
<rulesStores>
  <add name="Blob Rules Store"
       type="Microsoft.Practices.EnterpriseLibrary.WindowsAzure.Autoscaling.Rules.Configuration.BlobXmlFileRulesStore, Microsoft.Practices.EnterpriseLibrary.WindowsAzure.Autoscaling"
       blobContainerName="autoscaling-container"
       blobName="rules.xml"
       storageAccount="RulesStoreStorageAccount"
       monitorPeriod="30" />
</rulesStores>
<serviceInformationStores>
  <add name="Blob Service Information Store"
       type="Microsoft.Practices.EnterpriseLibrary.WindowsAzure.Autoscaling.ServiceModel.Configuration.BlobXmlFileServiceInformationStore, Microsoft.Practices.EnterpriseLibrary.WindowsAzure.Autoscaling"
       blobContainerName="autoscaling-container"
       blobName="services.xml"
       storageAccount="RulesStoreStorageAccount"
       monitorPeriod="30" />
</serviceInformationStores>
```

Creating the Rules

The rule configuration file contains the, well, rules that determine when the scaler should scale a hosted service. There are two types of rules available: constraint rules and reactive rules (see the announcement for more details on the rules). Let’s take a look at a simple rule configuration.

```xml
<?xml version="1.0" encoding="utf-8" ?>
<rules xmlns="http://schemas.microsoft.com/practices/2011/entlib/autoscaling/rules">
  <constraintRules>
    <rule name="default" description="test test" enabled="true" rank="1">
      <actions>
        <!--'MvcWebRole1' is the name of the web role. -->
        <range target="MvcWebRole1" min="2" max="15"/>
      </actions>
    </rule>
    <rule name="business hours" description="scale out during business hours" rank="100" enabled="true">
      <timetable startTime="07:00:00" duration="12:00:00" startDate="2011-09-15" utcOffset="-04:00">
        <weekly days="Monday Tuesday Wednesday Thursday Friday"/>
      </timetable>
      <actions>
        <range target="MvcWebRole1" min="8" max="8"/>
      </actions>
    </rule>
  </constraintRules>
</rules>
```

Note that ‘startTime’ is your local time (e.g. EDT). Also, make sure you have the correct UTC offset (e.g. EDT vs. EST). If not, the scaler may not scale when you think it should (yes, it happened to me). The scaler uses the ‘utcOffset’ value to convert the configured time to UTC, which is the time Windows Azure uses. The video walkthrough mentions this as well.
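A small worked example shows why the offset matters. The helper below is hypothetical and not part of WASABi; it only applies the standard conversion (UTC = local time minus offset) to the ‘business hours’ timetable above. With ‘utcOffset’ set to -04:00, the 07:00 start and 12-hour duration give a window of 11:00 to 23:00 UTC; entering EST’s -05:00 by mistake shifts the window one hour later, to 12:00 through 00:00 UTC.

```csharp
using System;

// Hypothetical helper mirroring the timetable arithmetic; not the WASABi API.
public static class TimetableMath
{
    static readonly long TicksPerDay = TimeSpan.FromDays(1).Ticks;

    // UTC time of day = local time of day - UTC offset, wrapped into [00:00, 24:00).
    public static TimeSpan ToUtcTimeOfDay(TimeSpan localTime, TimeSpan utcOffset)
    {
        long ticks = (localTime - utcOffset).Ticks % TicksPerDay;
        if (ticks < 0) ticks += TicksPerDay;
        return new TimeSpan(ticks);
    }

    // UTC start and end of a window defined by a local start time and a duration.
    public static (TimeSpan Start, TimeSpan End) UtcWindow(
        TimeSpan localStart, TimeSpan duration, TimeSpan utcOffset)
    {
        TimeSpan start = ToUtcTimeOfDay(localStart, utcOffset);
        TimeSpan end = ToUtcTimeOfDay(localStart + duration, utcOffset);
        return (start, end);
    }
}
```

For example, `TimetableMath.UtcWindow(new TimeSpan(7, 0, 0), TimeSpan.FromHours(12), TimeSpan.FromHours(-4))` yields a start of 11:00 and an end of 23:00.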

Creating the Service Information

The service configuration “tells” the scaler which Windows Azure subscriptions and hosted service(s) to monitor. Let’s take a look at a sample service configuration file.

```xml
<?xml version="1.0" encoding="utf-8" ?>
<serviceModel xmlns="http://schemas.microsoft.com/practices/2011/entlib/autoscaling/serviceModel">
  <subscriptions>
    <subscription name="something"
                  subscriptionId="b5fb8dfb-3e0b-xxxx-xxxx-xxxxxxxxxxxx"
                  certificateStoreLocation="LocalMachine"
                  certificateStoreName="My"
                  certificateThumbprint="FE5EB4279BC6354xxxxxxxxxxxxxxxxxxxxxxxxx">
      <services>
        <service name="myHostedServiceName" slot="Staging">
          <roles>
            <role name="MvcWebRole1" roleName="MvcWebRole1" wadStorageAccountName="wadStorageAccount"/>
          </roles>
        </service>
      </services>
      <storageAccounts>
        <storageAccount name="wadStorageAccount"
                        connectionString="DefaultEndpointsProtocol=https;AccountName=myaccount;AccountKey=123xxxxxxxxx">
        </storageAccount>
      </storageAccounts>
    </subscription>
  </subscriptions>
</serviceModel>
```

A few things to note about the service configuration:

- The subscription name can be pretty much whatever you want.

- The service name should be the name of your hosted service. This is the DNS prefix for the production slot.

- You will need to make sure to configure the worker role (the “scaler”) to contain the certificate thumbprint. This is done in the service definition file.

- One of the things that needs to be set up in order for WASABi to scale a Windows Azure application is both a management and service certificate. These certificates are necessary in order for WASABi to query characteristics of a service and to create, or shut down, instances of a service. For more information on Windows Azure management and service certificates, please go here.
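Because rules and service information live in separate files, a mistyped role name is easy to make and hard to spot. The following sketch is a hypothetical pre-upload check (not part of WASABi) that uses LINQ to XML to report any `target` attribute in rules.xml that has no matching `role` `name` in services.xml. It assumes, as the samples above suggest, that a rule’s `target` refers to the role’s `name` attribute, and it uses the service model namespace from those samples.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

// Hypothetical consistency check; not part of the Autoscaling Block.
public static class AutoscalingConfigCheck
{
    static readonly XNamespace ServiceNs =
        "http://schemas.microsoft.com/practices/2011/entlib/autoscaling/serviceModel";

    // Returns rule targets that have no matching <role name="..."> in services.xml.
    public static IList<string> UnknownTargets(string rulesXml, string servicesXml)
    {
        var roleNames = new HashSet<string>(
            XDocument.Parse(servicesXml)
                .Descendants(ServiceNs + "role")
                .Select(r => (string)r.Attribute("name"))
                .Where(n => n != null));

        // Any element in the rules file carrying a target attribute is checked.
        return XDocument.Parse(rulesXml)
            .Descendants()
            .Select(e => (string)e.Attribute("target"))
            .Where(t => t != null && !roleNames.Contains(t))
            .Distinct()
            .ToList();
    }
}
```

Running this before uploading the two files to the blob container gives an early warning instead of a scaler that silently never acts on a rule.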

I hope you found this brief walkthrough helpful. As I continue to explore WASABi, I’ll be sure to post more here about my experiences. In the meantime, be sure to check out the following list of resources:

Michael Washam (@MWashamMS) recommended that you Automate It! Deploying a Windows Azure Service Package from PowerShell in a 9/21/2011 post:

With the new version of Windows Azure PowerShell Cmdlets 2.0 we have made it possible to script the deployment of an entire hosted service directly from PowerShell!

As a proof of concept, we have put together a sample script that reads settings from a configuration file and deploys a service package to your Windows Azure account. What is new is that if the hosted service or storage account does not exist yet, the script creates it using the settings you specify!

To get started ensure you have downloaded and installed the Windows Azure PowerShell Cmdlets 2.0.

Next download the sample script from here: https://mlwpublic.blob.core.windows.net/downloads/e2e-ps-deployment.zip

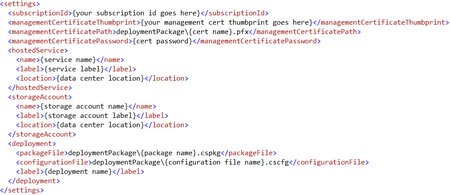

Once the file is unzipped you will need to modify the configuration.xml file with your own settings:

Once it's configured, copy your .cspkg and .cscfg files to the \deploymentPackage folder beneath the script.

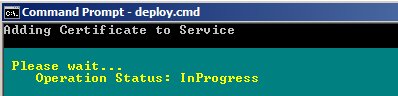

Finally, run the deploy.cmd file which will launch your PowerShell script and deploy the service!

Operations demonstrated in this sample include:

- New-HostedService / Get-HostedService

- New-StorageAccount / Get-StorageAccount

- New-Deployment / Get-Deployment

- Add-Certificate

- Move-Deployment (VIP Swap)

The Windows Azure Team posted Real World Windows Azure: Interview with Dan Scarfe, Chief Executive Officer at Dot Net Solutions on 9/21/2011:

As part of the Real World Windows Azure series, we talked to Dan Scarfe, Chief Executive Officer at Dot Net Solutions, about how the company is using Windows Azure to expand business opportunities, explore new revenue models, and help customers reduce costs. Here’s what he had to say:

MSDN: What does Dot Net Solutions do?

Scarfe: We’re a systems integrator and a software development house. We build tailored solutions for organizations where there are no off-the-shelf solutions available. We help organizations unlock the competitive advantage of their information systems.

MSDN: When did you start using Windows Azure?

Scarfe: We started developing for the cloud in September 2008, even before Microsoft released Windows Azure. We built one of the platform’s first applications, the Wikipedia Explorer, a tool for visualizing relationships between documents in Wikipedia. This process requires immense quantities of compute power for short periods of time—a classic cloud scenario. The application is still showcased as a demonstration of the power of Windows Azure.

MSDN: What opportunities do you see with cloud technologies?

Scarfe: By developing for Windows Azure, we can expand our offerings to deliver complete lifecycle management services. We can deliver hosting and automated management, where before we would have had to work with a separate hosting partner. As a business, we can provide a much more holistic service.

MSDN: What are your customers looking for in terms of Windows Azure solutions?

Scarfe: By using Windows Azure, our customers can launch new products or services with less risk. Some customers are looking to explore new business models quickly without the need for large, up-front investments. Other customers need a reliable platform that is highly scalable so that they can innovate. We also have customers who want to migrate to the cloud a stand-alone solution that has historically been delivered using an on-premises deployment model.

MSDN: Talk about how your business model has changed since you began developing solutions for Windows Azure?

Scarfe: We’re moving toward a business model in which we bundle all the stages of an IT project—including design, build, support, and hosting—and charge a monthly fee over the contract period. This is attractive to our customers and makes it much easier for us to tap into a predictable revenue stream. We’re beginning to see opportunities in vertical markets including games, retail, and media—areas where organizations can address their business problems by tapping into the Windows Azure global infrastructure. Our entire growth strategy over the next three to five years is pinned to Windows Azure.

MSDN: Describe some of the benefits of Windows Azure for Dot Net Solutions and its customers.