Windows Azure and Cloud Computing Posts for 9/9/2011+

| A compendium of Windows Azure, SQL Azure Database, AppFabric, Windows Azure Platform Appliance and other cloud-computing articles. |

• Update 9/9/2011 3:45 PM PDT with articles marked • by Claudio Caldato, Alex Handy, Avkash Chauhan and the SQL Azure Team.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Apps, Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table and Queue Services

Bruce Kyle reminds developers to Add Metrics, Logging, Leasing to Your Windows Azure Storage in a 9/9/2011 post to the US ISV Evangelism blog:

Steve Marx, Microsoft Technical Strategist for Windows Azure, has put together a set of source code and example that shows how you can add support the new storage analytics API.

It uses smarx.WazStorageExtensions, a collection of useful extension methods for Windows Azure storage operations that aren't covered by the .NET client library.

The examples in his post show how with a few lines of code you can:

- enable metrics with a 7-day retention policy

- try to lease a blob and write "Hello, World!" to it

- check the existence of a blob

See Analytics, Leasing, and More: Extensions to the .NET Windows Azure Storage Library.

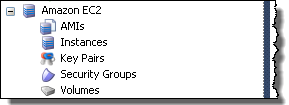

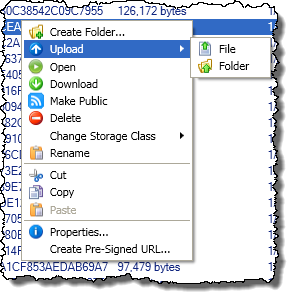

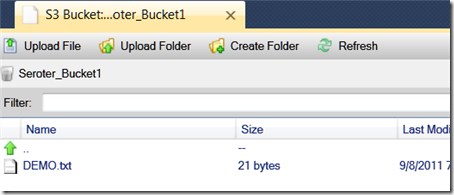

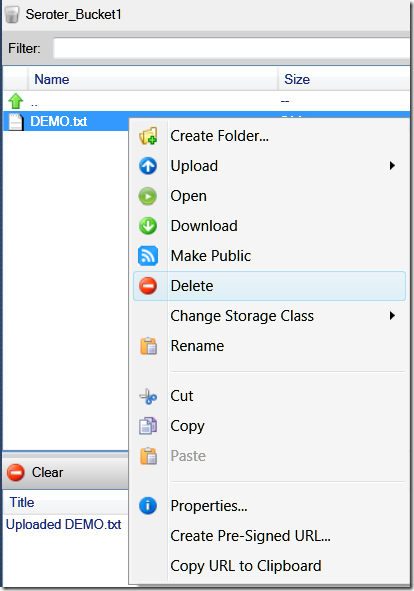

Alexandra Brown reported CloudBerry Explorer: Windows Azure Storage Analytics support in a 9/8/2011 post to the CloudBerry Lab blog:

It has been awhile since we introduced CloudBerry Explorer client for Windows Azure Blob storage service. We designed it as an Azure storage browser that helps managing your data and now we are proud to announce a release which introduces a new Azure Storage Analytics tool.

Windows Azure Storage Analytics offers you the ability to track, analyze, and debug your usage of storage. You can use this data to analyze storage usage to improve the design of your applications and their access patterns to Windows Azure Storage. Analytics data consists of:

- Logs - Provide trace of executed requests

- Metrics - Provide summary of key capacity and request statistics

How to enabled Logs

Click Storage Settings link next to the account list.

On the following dialog you can enable the logging and decided what kind of requests you would like to track. The available options are Delete requests, Read requests, Write requests.

You can also set up a retention period for the logs.

Logs will become available in a special container called $logs.

How to enable Metrics

You can enable Request information and Capacity information collection.

- Request information: Provides hourly aggregates of number of requests, etc

- Capacity information: Provides daily statistics for the space consumed by the service, number of containers and number of objects that are stored in the service.

You can also set up the retention period for the metrics

Where to see the logs?

The log files will show up in a special container called logs$. You will have to manually enter it to the navigation bar and to navigate down to actual files. The log file format is outside of the scope of this article and you can learn about it in MS documentation.

What's next?

We are looking forward to your feedback and we would like to see how popular this feature will become. Based on its popularity we will make a full blown analytic reports similar to the one available for Amazon S3 version of CloudBerry Explorer.

Download the new version of Cloudberry Explorer and share you thoughts with us.

Note: this post applies to CloudBerry Explorer for Azure 1.4 and later.

<Return to section navigation list>

SQL Azure Database and Reporting

• The SQL Azure Team reported that TechNet Wiki Articles Offer Best Practices and Guidance on SQL Azure Connectivity in a 9/9/2011 post:

The Microsoft TechNet Wiki is always chock-full of the latest and greatest user-generated content about Microsoft technologies. If you’re looking for guidance and best practices on SQL Azure connectivity, these community-sourced articles are worth checking out:

- SQL Azure: Connection Management in SQL Azure

- Retry Logic for Transient Failures in SQL Azure

- SQL Azure Connectivity Troubleshooting Guide

The TechNet Wiki is a great place to interact and share your expertise with technology professionals worldwide. Click here to learn how to join the wiki community.

The [SQL Azure Database] [North Central US] and [South Central US] databases reported [Red] SQL Azure Portal [are] unavailable in a 9/8/2011 Atom feed:

Sep 9 2011 2:47AM We are currently experiencing an outage preventing access to SQL Azure Portal. Functionality of SQL Azure Databases are not impacted.

Sep 9 2011 3:30AM The root cause of this incident has been identified. A network device involved in the traffic routing of the Windows Azure Platform Management Portal is experiencing functionality issues. The repair steps are underway. Further updates will be published to keep you apprised of the situation. We apologize for any inconvenience this causes our customers.Sep 9 2011 4:30AM The repair steps are still underway. The issue has been mitigated in most regions, however the Windows Azure Platform Management Portal remains inaccessible in some regions.Further updates will be published to keep you apprised of the situation. We apologize for any inconvenience this causes our customers.

Sep 9 2011 5:30AM The repair steps have been implemented and we are validating that they take effect across all regions worldwide. The issue has been largely mitigated at this time, and the Windows Azure Platform Management Portal should be accessible in most regions. It is important to note that throughout this incident which impacted the Windows Azure Platform Management Portal, the Windows Azure Service Management API remained fully functional. Further updates will be published to keep you apprised of the situation. We apologize for any inconvenience this causes our customers.

Sep 9 2011 5:43AM The repair steps have been successfully validated in all regions worldwide. The Windows Azure Platform Management Portal functionality has been restored. We apologize for any inconvenience this caused our customers.

From the Service Dashboard’s Status History:

Woody Leonhard asserted “Microsoft continues to struggle, with Hotmail, SkyDrive, MSN, Azure, and Office 365 going down for a couple of hours Thursday night. At least users were in the loop this time” in a deck for his Blow-by-blow: Another Microsoft cloud outage post to InforWorld’s Tech Watch blog.

However, my OakLeaf Systems Azure Table Services Sample Project demo running in Microsoft’s South Central US (San Antonio) stayed up continuously on Thursday and Friday (so far) as reported by System Center Operations Manager (SCOM. See my Installing the Systems Center Monitoring Pack for Windows Azure Applications on SCOM 2012 Beta and Configuring the Systems Center Monitoring Pack for Windows Azure Applications on SCOM 2012 Beta posts for more detail.) Neither Pingdom nor mon.itor.us reported downtime (See my Uptime Report for my Live OakLeaf Systems Azure Table Services Sample Project: August 2011 for more detail.)

James Podgorski described SQL Azure Federations with Entity Framework Code-First in a 9/6/2011 post to the Windows Azure Customer Advisory Team (CAT) blog (missed when posted):

A number of our SQL Azure Federation product evaluation customers have been inquiring about the typical how-to’s for using Entity Framework with this new SQL Azure capability. After providing guidance to a number of these customers it was apparent that I could deliver a jumpstart of sorts for those common scenarios and questions so as to broaden the community reach and awareness. My intent is to release a series of postings with examples and various layers of depth as I tick off some of the common points customers are facing when using Entity Framework with SQL Azure Federations.

In this blog I will provide a slight boost for those wanting to use the Code First feature of Entity Framework (EF) with SQL Azure Federations. The sample I demonstrate in this posting is a basic quintessential ‘hello world’ example for Code First, while basic it does overcome the first potential blocker, that is connecting to federation members by executing the ‘USE FEDERATION’ statement before executing LINQ query against the backend SQL Azure federated database. It also provides a primer for those interested in SQL Azure Federations.

Background Information

Below is a quick summary of the USE FEDERATION statement, for those familiar with the concepts simply jump below to the sample code.

Connecting to Federation Members

SQL Azure Federations has the concept of a Federation Member, which is a system managed database that contains a subset of the data in the scaled-out federation cluster. The data within a federation member is defined by a distribution key and partitioning scheme. The federation distribution key can be of data type int, bigint, uniqueidentifier or varbinary(n). Currently only range partitioning schemes are permitted, but in the future it is anticipated that hash and other schemes will be made available. Connecting to individual federation members is established by the ‘USE FEDERATION’ command as submitted to the SQL Azure Gateway Services layer as denoted by the DDL snippet below. You can find more information about the SQL Azure Architecture here.

USE FEDERATION ROOT WITH RESET USE FEDERATION federation_name (distribution_name = value) WITH FILTERING={ON|OFF}, RESETThe ROOT keyword redirects the connection to the federation root database if run within a federation member. The WITH FILTERING=ON|OFF is devised to set the connection scope, either to the full range of a federation member or to a specific distribution key value. The WITH RESET keyword is mandatory for version 1 of SQL Azure Federations and provides an explicit reset of the connection.

Interesting considerations and FAQs about the USE FEDERATION statement are:

1. The USE FEDERATION statement must be the only statement in a batch but can be executed at any time on an active connection.

2. The USE FEDERATION statement enables the client to switch between federation members and thus promotes efficient connection pooling with all client libraries.

3. Execution of the USE FEDERATION statement is analogous to running sp_reset_connection on a connection as they are retrieved from the connection pool, all connection settings are reset to default.

Sample – Code First Against a Federated Member

The sample is composed of two sections. Firstly the creation of the database objects including the federated database, federated tables and federated members. Data will be inserted for demonstration purposes. Secondly using the code first feature of Entity Framework, create a code first model and associated queries against the database.

Step1 – Create Database Objects

First create a federated database using the ‘CREATE FEDERATION’ DDL statement. In this sample below the federation ‘FED_1’ was created with a distribution key named ‘range_id’ of type BIGINT with a range type distribution scheme.

-- Create the federation named FED_1. -- Federate on a bigint with a distribution key named range_id CREATE FEDERATION FED_1 (range_id BIGINT RANGE) GOConnect to the first and only federated member available at this time and create a federated table called Orders with a distribution key named customer_id. Note that the primary key is made up of the order_id and customer_id, thus conforming to the stipulation that the distribution key must be included in any unique indexes such as a primary key definition. The order of the distribution key is not relevant.

-- Connect to the first and only federated member USE FEDERATION FED_1 (range_id = 0) WITH FILTERING = OFF, RESET GO -- Create the table in the first federated member, this will be a federated table. -- The federated column in this case is col1. CREATE TABLE Orders ( order_id bigint not null, customer_id bigint, total_cost money not null, order_date datetime not null, primary key (order_id, customer_id) ) FEDERATED ON (range_id = customer_id) GO -- Insert 160 values into the federated table DECLARE @i int SET @i = 0 WHILE @i < 80 BEGIN INSERT INTO Orders VALUES (@i, @i, 10, getdate()) INSERT INTO Orders VALUES (@i+1, @i, 20, getdate()) SET @i = @i + 1 END GOSplit the federated database into eight federated members (eight federated members and 1 root fit well on the diagram below) with high range boundaries set every ten customer_ids. Given the data entered into the Orders federated table we will have twenty rows per federated member with each federated member containing the orders for ten customers or two rows per customer_id. In this case we are taking advantage of the multi-tenancy properties of federated tables by housing ten tenants per member database. The premise is that if any of the tenants becomes hot or the data usage high, one can simply split that individual federated member to provide more resources to that tenant when needed.

Note that during the SPLIT operation all non-federated tables (reference tables) are duplicated to the new federation members. The data for federated tables are copied to the federation member as defined by the boundary value within the SPLIT statement and the given distribution key on the table. Since the SPLIT operation is an asynchronous operation while the filtered data is copied from the original federation to the new destination federation member, we must wait for the SPLIT statement to complete before we execute the next SPLIT operation. Failure to wait will simply result in an error as shown below.

Msg 45007, Level 16, State 1, Line 1 ALTER FEDERATION SPLIT cannot be run while another federation operation is in progress on federation FED_1 and member with id 65728.The WaitForFederationOperation stored procedure used in this script was written to wait till the SPLIT operation completed before execution of the subsequent SPLIT operation. The text to the stored procedure is included below the ALTER FEDERATION statements.

-- Create 8 federated members using the SPLIT command -- Split must be run at the root database USE FEDERATION ROOT WITH RESET GO -- range_low = -9223372036854775808, range_high = 10 ALTER FEDERATION FED_1 SPLIT AT (range_id=10) GO EXEC WaitForFederationOperations 'FED_1' GO -- range_low = 10, range_high = 20 ALTER FEDERATION FED_1 SPLIT AT (range_id=20) GO EXEC WaitForFederationOperations 'FED_1' GO -- range_low = 20, range_high = 30 ALTER FEDERATION FED_1 SPLIT AT (range_id=30) GO EXEC WaitForFederationOperations 'FED_1' GO -- range_low = 30, range_high = 40 ALTER FEDERATION FED_1 SPLIT AT (range_id=40) GO EXEC WaitForFederationOperations 'FED_1' GO -- range_low = 40, range_high = 50 ALTER FEDERATION FED_1 SPLIT AT (range_id=50) GO EXEC WaitForFederationOperations 'FED_1' GO -- range_low = 50, range_high = 60 ALTER FEDERATION FED_1 SPLIT AT (range_id=60) GO EXEC WaitForFederationOperations 'FED_1' GO -- range_low = 60, range_high = 70 && range_low = 80, range_high = null ALTER FEDERATION FED_1 SPLIT AT (range_id=70) GO EXEC WaitForFederationOperations 'FED_1' GOTip:Use the sys.dm_federation_operations dynamic management view to observe the current status for all federation operations such as SPLIT and DROP.

CREATE PROCEDURE WaitForFederationOperations ( @federation_name varchar(200) ) AS DECLARE @i INT SET @i = 1 WHILE @i > 0 BEGIN SELECT @i = COUNT(*) FROM SYS.dm_federation_operations WHERE federation_name = @federation_name WAITFOR DELAY '00:00:01' ENDCreate an Orders table at the root, this table will not be federated and is used for demonstration purposes only. Typically one would not include a regular table at the root with the same name as a federated table.

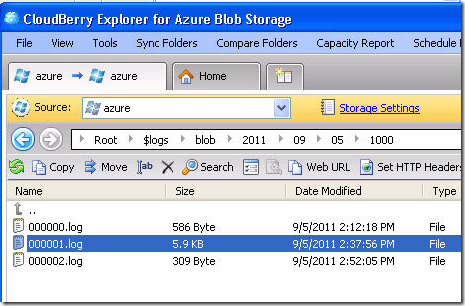

USE FEDERATION ROOT WITH RESET GO -- Create the table at the root, this table will not be federated. -- The table T1 was created at the root for demonstration purposes, typically one -- would not include a regular table at the root with the same name as a federated table. CREATE TABLE Orders ( order_id bigint not null, customer_id bigint, total_cost money not null, order_date datetime not null, primary key (order_id, customer_id) ) GO -- Insert negative values to clarify the demonstration INSERT INTO Orders VALUES (-1, -1, -10, getdate()) INSERT INTO Orders VALUES (-2, -2, -10, getdate()) INSERT INTO Orders VALUES (-3, -3, -10, getdate()) GOAt this point we have eight federation members and a root database that make up the FED_1 federated database. We can view the federation members by the querying sys.federation_member_distributions when executed against the root of the federation.

SELECT * FROM sys.federation_member_distributions GO

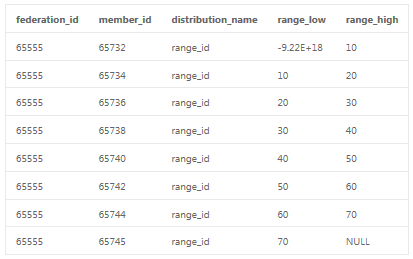

Step2 – Create Code First Objects

Entity Framework 4 allows us to develop with a code-first mentality, not only modeling objects as plain old classes but also forgoing the Visual Studio designer and associated XML mapping files. This code-first support is part of a separate download called ADO.NET Entity Framework 4.1 as found here. Included with EF4.1 is the simplified abstraction of the ObjectContext as surfaced by the DbContext API.

Earlier we created an Orders table as federated on the customer_id and included this column in the primary key. Thus we can model this table with the following code-first snippet. Note that the order for the primary key is specified using data annotations and the Key and Column attributes to define the composite key.

public class Order { [Key, Column(Order = 1)] public long order_id { get; set; } [Key, Column(Order = 2)] public long customer_id { get; set; } public decimal total_cost { get; set; } public DateTime order_date { get; set; } }The database context is created for retrieval of our orders from the federated members and is defined below as SalesEntities.

public class SalesEntities: DbContext { public DbSet<Order> Orders { get; set; } public SalesEntities (string connStr) : base(connStr) { } }Step – 3 T-SQL Query

The diagram below positions the intention of our sample, we wish to retrieve all orders (in this case two per customer) for the customer with a customer_id = 40. To do so we must first execute the USE FEDERATION statement on a given connection and follow that up with our LINQ query. In summary we want to execute the following against SQL Azure using code-first.

USE FEDERATION FED_1 (range_id=40) WITH FILTERING=ON, RESET GO SELECT * FROM Orders GO

The following diagram details to execution sequence. We have a federated database with eight federated members and one root database. The members are distributed amongst multiple servers. In this sample, matters were simplified by placing all federation members on servers named x, y and z. In reality these members would be spread out across more servers in the Azure data center. The first statement (USE FEDERATION) establishes a connection to federated member so that the queries are scoped to the tenant with customer_id = 40. The WITH FILTERING=ON clause scopes the connection to the federation key instance of 40 in the federation member with a range_low =40 and high_range=50 and it also specifies that queries are filtered with the given federated value of 40.

Step 4 – EF Code-First Query

We will first show two common code-first approaches that fail and the reason to why these queries fail, followed by the corrected code-first sample.

First Code-First Blunder

This query doesn’t work as expected and returns an incorrect result set because it plays into common misunderstanding. That is, for each execution EF checks the connection state and if connection is already open – it just uses it, if the connection is closed – it opens the connection then executes then brings the connection to the initial state before the execution (i.e. closes it).

So in this example we execute the appropriate USE FEDERATION statement by calling ExecuteSqlCommand, but neglect the fact that the connection is closed when the command completes and that this connection is thus sent back to the connection pool. When EF executes the LINQ statement, it pulls a new connection from the pool (it is reset) and submits the select statement to SQL Azure. In this case the select is made against the root database not the particular federated unit with customer_id = 40. We can see that the query was executed against the root database by observing the negative values for order_id and customer_id.

using (SalesEntities dc = new SalesEntities(ConnStr)) { string federationCmdText = @"USE FEDERATION FED_1 (range_id=40) WITH FILTERING=ON, RESET"; dc.Database.ExecuteSqlCommand(federationCmdText); IList<Order> orders = (from x in dc.Orders select x).ToList<Order>(); foreach (Order o in orders) Console.WriteLine("order_id: {0}, customer_id: {1}", o.order_id, o.customer_id); }Results:

order_id: -3, customer_id: -3

order_id: -2, customer_id: -2

order_id: -1, customer_id: -1

Second Code-First Blunder

Let’s change the snippet of code above to open the database connection (dc.DataBase.Connection.Open) prior to submitting the USE FEDERATION statement; in this way we would expect for EF to reuse the same connection for all statements and thereby overcome the prior misunderstanding. But, this query doesn’t work as expected. It plays into a nuance with the code-first implementation of DbContext and how it differs from that of ObjectContext. The Connection property of DbContext is of type SqlConnection while the Connection property of an ObjectContext is of type EntityConnection. The current code-first implementation does not check the underlying SqlConnection before attempting to open the EntityConnection, thus receive an exception because instances of EntityConnection cannot be created with an open DbConnection.

Corrected Code-First Query

In this corrected version we cast the DbContext to an IObjectContextAdapter so as to expose the ObjectContext property. From there we can simply access the EntityConnection on the context and open it prior to executing the USE FEDERATION command. When EF attempts to execute the LINQ query to retrieve the orders, it examines the EntityConnection and finds that it is already open, thus using this connection for the scope of the DbContext.

We can see from the results that we have successfully filtered on customer_id = 40 and retrieved the correct orders from the database.

using (SalesEntities dc = new SalesEntities(ConnStr)) { string federationCmdText = @"USE FEDERATION FED_1 (range_id=40) WITH FILTERING=ON, RESET"; ((IObjectContextAdapter)dc).ObjectContext.Connection.Open(); dc.Database.ExecuteSqlCommand(federationCmdText); IList<Order> orders = (from x in dc.Orders select x).ToList<Order>(); foreach (Order o in orders) Console.WriteLine("order_id: {0}, customer_id: {1}", o.order_id, o.customer_id); }Results:

order_id: 40, customer_id: 40

order_id: 41, customer_id: 40

Conclusion

In this blog we provided an overview of SQL Azure Federations and three snippets of EF code for the most basic query; one snippet returning incorrect results, another throwing an unexpected exception and a third corrected version. In the next blog posting I will demonstrate another common gotcha with SQL Azure Federations and Entity Framework.

<Return to section navigation list>

MarketPlace DataMarket and OData

• Claudio Caldato reported The OData Producer Library for PHP is here in a 9/9/2011 post:

I’m pleased to announce that today we released the OData Producer Library for PHP. In case you missed it, we released last year a client library that allows PHP applications to consume an OData feed, and with this new library it now easy for PHP Applications to generate OData Feeds. PHP developers can now add OData support to their applications so it can be consumed by all clients and libraries that support OData.

The library is designed to be used with a wide range of data sources (from databases such as SQL Server and MySQL to data structures that are at the application level for applications such as CMS systems). The library is available for download under the open source BSD license: http://odataphpproducer.codeplex.com/.

In order to make the library generic so it can be used on a wide range of scenarios we didn’t take any dependency to specific data structures or data sources. Instead the library is based on 3 main interfaces that, when implemented by the developers for the specific data source, allow the library to retrieve the appropriate data and serialize it for the client. The library takes care of handling metadata, query processing and serialization/deserialization of the data streams.

Two examples are included that show how a full OData service can be built using the library: the Northwind DB example uses an SQL Express DB as data source and the WordPress example that uses the WordPress’s MySQL DB Schema to expose a feed for Posts, Comments and Users.

Quick Introduction to OData

Open Data Protocol is an open protocol for sharing data. It is built upon AtomPub (RFC 5023) and JSON. OData is a REST (Representational State Transfer) protocol, therefore a simple web browser can view the data exposed through an OData service.

The basic idea behind OData is to use a well-known data format (Atom feed or JSON) to expose a list of entities.

The OData technology has two main parts:

- The OData data model, which provides a generic way to organize and describe data. OData uses the Entity Data Model (EDM).The EDM models data as entities and associations among those entities. Thus OData work with pretty much any kind of data.

- The OData protocol, which lets a client make requests to and get responses from an OData service. Data sent by an OData service can be represented on the wire today either in the XML-based format defined by Atom/AtomPub or in JavaScript Object Notation (JSON).

An OData client accesses data provided by an OData service using standard HTTP. The OData protocol largely follows the conventions defined by REST, which define how HTTP verbs are used. The most important of these verbs are:

- GET : Reads data from one or more entities.

- PUT : Updates an existing entity, replacing all of its properties.

- MERGE : Updates an existing entity, but replaces only specified properties.

- POST : Creates a new entity.

- DELETE : Removes an entity.

Each HTTP request is sent to a specific URI, identifying some resource in the target OData service's data model.

The OData Producer Library for PHP

The OData Producer Library for PHP is a server library that allows to exposes data sources by using the OData Protocol.

The OData Producer supports all Read-Only operations specified in the Protocol version 2.0:

- It provides two formats for representing resources, the XML-based Atom format and the JSON format.

- Servers expose a metadata document that describes the structure of the service and its resources.

- Clients can retrieve a feed, Entry or service document by issuing an HTTP GET request against its URI.

- Servers support retrieval of individual properties within Entries.

- It supports pagination, query validation and system query options like $format, $top, $linecount, $filter, $select, $expand, $orderby, $skip .

- User can access the binary stream data (i.e. allows an OData server to give access to media content such as photos or documents in addition to all the structured data)

How to use the OData Producer Library for PHP

Data is mapped to the OData Producer through three interfaces into an application. From there the data is converted to the OData structure and sent to the client.

The 3 interfaces required are:

- IDataServiceMetadataProvider: this is the interface used to map the data source structure to the Metadata format that is defined in the OData Protocol. Usually an OData service exposes a $metadata endpoint that can be used by the clients to figure out how the service exposes the data and what structures and data types they should expect.

- IDataServiceQueryProvider: this is the interface used to map a client query to the data source. The library has the code to parse the incoming queries but in order to query the correct data from the data source the developer has to specify how the incoming OData queries are mapped to specific data in the data source.

- IServiceProvider: this is the interface that deals with the service endpoint and allows defining features such as Page size for the OData Server paging feature, access rules to the service, OData protocol version(s) accepted and so on.

- IDataServiceStreamProvider: This is an optional interface that can be used to enable streaming of content such as Images or other binary formats. The interface is called by the OData Service if the DataType defined in the metadata is EDM.Binary.

If you want to learn more about the PHP Producer Library for PHP, the User Guide included with the code provides detailed information on how to install and configure the library, it also show how to implement the interfaces in order to build a fully functional OData service.

The library is built using only PHP and it runs on both Windows and Linux.

This is the first release of a Producer library, future versions may add Write support to be used for scenarios where the OData Service needs to provide the ability to update data. We will also keep it up to date with future versions of the OData Protocol.

Claudio is Principal Program Manager for Microsoft’s Interoperability Strategy Team.

Lohith explained Client side paging of Server Paged OData Entity Sets using dataJS in a 9/9/2011 post:

I am back with one more article today. Had a little halt to my writings as I moved to my new house and was busy setting up things including my internet connection and all. Today I will be talking about an interesting concept in OData world. So sit back, read and enjoy.

Objective/Goal:

Odata, if you are not familiar is an open data exchange protocol from Microsoft released under Open Specification Promise. Uses the HTTP as backbone with all its VERBS for handling CRUD functionality, adheres to ATOM/ATOMPUB formats for read and write and adds Queryability feature to the consumers. More on OData here.

Since OData provide the complete control over the data to the consumers, it may often happen that the client may not restrict the data read in terms of number of records to fetch. For e.g. if you have a products catalog with lets say 10K products and you have this set exposed as a OData feed, if a client access this set without any record limit, the service is going to return all 10K product rows. As you can see there two things we need to tackle here:

- How do we avoid the situation of a client requesting without a page size – as a Producer.

- How do we know what is the next page for the entity set, if it has a page size set at server – as a Consumer.

Lets try to answer the above questions one by one

Background:For more information on the concept of Page Size limit, do have a read of the following blog post from WCF Data Services Team:

http://blogs.msdn.com/b/astoriateam/archive/2010/02/02/server-paging-in-data-services.aspx

The article talks about .NET clients but the concept of the server side entity set page size is the highlight there. Rest of the article is based on those understandings.

Server Side Set Entity Page Size:

The producers of an Odata service can put a page size limit on the server side itself. Following is the code which sets the page size limit on a data set

1: config.SetEntitySetPageSize("Products", 20);The DataServiceConfiguration object provides SetEntitySetPageSize() method which takes the Entity Set name and the page size. In the above code Products Entity Set is set to a page size of 20. This means anytime a request for Products is made the server will only send 20 records at a time. That’s good we are not sending too many payloads now and there is a check in place. But what really happens to the entity set feed when we put a page size limit on the server. For this example I will be using the following OData service which is available for anybody to consume.

http://services.odata.org/Northwind/Northwind.svc/

This OData service is of the classic Northwind Database we all are familiar with. The service exposes Products Entity Set and it has a page size limit set to 20. Here is the URI for the Products Feed:

http://services.odata.org/Northwind/Northwind.svc/Products

When we request for the Products entity set we will get the feed for the Products Entity Set. Now if we observer closely the feed, we will see the following information at the end of the feed:

1: <link rel="next" href="http://services.odata.org/Northwind/Northwind.svc/Products?$skiptoken=20" />This is a familiar <link> tag and pay attention to the “rel” attribute. It has a value of “next”. And notice the “href” attribute. It has a url pointing to the Products entity set but with an additional odata construct called “$skiptoken”. This is how Producers let the Consumer know that here is the URL you need to navigate to get the next page. All it means to the server is skip the 20 records and then return the rest. If I navigate to the above URL and we still have more records, I would get back a <link> tag as follows:

1: <link rel="next" href="http://services.odata.org/Northwind/Northwind.svc/Products?$skiptoken=40" />Notice the skip token has a value of 40. That means 3rd page will be served by skipping 40 records and returning the rest from there.

So this is all fine from human eye perspective. But how to achieve these from lets say a client side application. Lets see that in the next section.

Looping through pages from Client Side:

In previous section we understood how the server page size limit is represented in the odata feeds. Now lets see how a client side app can make use of the same. For this example we will be doing the following:

- Access Products Entity Set from http://services.odata.org/Northwind/Northwind.svc/

- Create a HTML page and create a Tab per Page of data feed read

- List out products in each tab

Here is the screen shot of the finished solution:

As usual I will be using the dataJS library for reading the OData. This example we will focus on dataJS. I will follow up with other examples such as – using JQuery to page, within .NET client apps etc.

So lets walkthrough the example:

HTML Markup:

1: <div>2: <button id="btnGetData">3: List Products</button>4: <span id="loading">Fetching data...</span>5: </div>6: <div id="tabs">7: <ul>8: </ul>9: </div>Nothing fancy, I have a button to list the products. Then I have a div to create the tabs. I will be using the JQuery tabs widget to achieve this.

Product Listing Template:

1: <script type="x-jquery-tmpl" id="resultsTemplate">2: <ul>3: {{each results}}4: <li style="margin:.5em">5: {{if $value.Discontinued }}6: <span class='discontinued'><b>${$value.ProductName}<i> ($${$value.UnitPrice})</i></b></span>7: {{else}}8: <span class='productName'><b>${$value.ProductName}<i> ($${$value.UnitPrice})</i></b></span>9: {{/if}}10: </li>11: {{/each}}12: </ul>13: </script>Again nothing fancy, I have a unordered list <ul> and output each product as a <li>. If the product is discontinued I use a style to show it in red.

Scripts:

On DOM ready, I wire up the button click to a handler:

1: $(getDataButton).button().click(OnGetDataClick);Now on click of the button “List Products”, use dataJS to make the service call to Products entity set as follows:

1: function MakeRequest(url)2: {3: OData.read(url, successHandler, errorHandler);4: };We make use of dataJS read API to read the data. successHandler is the callback for success and errorHandler is the callback if in case of any errors.

Now all the magic happens in the successHandler. Here is the code for the successHandler:

1: function successHandler(data)2: {3: var tab_title = "Catalog Page " + tab_counter;4: $(tabsDiv).tabs("add", "#tabs-" + tab_counter, tab_title);5: $(resultsTemplate).tmpl(data).appendTo("#tabs-" + tab_counter);6: $(tabsDiv).show();7: if (data.__next) {8: tab_counter++;9: MakeRequest(data.__next);10: }11: else {12: $(getDataButton).button("enable");13: $(loadingDiv).hide();14: }15: }Line 4 creates a new tab. The meat of the work is on line 7. we are checking if there is a “next” link available for the feed. If yes we know that we have one more page of data to read. Remember from previous section that “next” is actually a URI to the next page. So we now make use of that URI and we give a request again. We increase the tab counter and a new tab is created on the success. This continues till all the pages are read. And we have a nice looking app which loops through all the pages and creates a nice lookin[g] tabs for each page.

With OData + dataJS + JQuery its super simple to create a data centric applications without much effort.

Conclusion:

- If you are an OData producer don’t forget to set Entity Set Page Size limit

- If you are a Consumer, do look out for “next” link in the feed. Make use of this and loop accordingly through all the pages

- Recommend using dataJS for all OData service related reads and writes.

A live version of this example can be found at the following URL:

http://odataplayground.99on.com/clientsidepaging/index.html

Just view the source and you will get the source code

See Mary Jo Foley (@maryjofoley) described potential cloud-related sessions in her Microsoft Build: Developer topics to watch post of 9/9/2011 to her All About Microsoft blog for ZDNet in the Cloud Computing Events section below.

<Return to section navigation list>

Windows Azure AppFabric: Apps, Access Control, WIF and Service Bus

See Mary Jo Foley (@maryjofoley) described potential cloud-related sessions in her Microsoft Build: Developer topics to watch post of 9/9/2011 to her All About Microsoft blog for ZDNet in the Cloud Computing Events section below.

Riccardo Becker (@riccardobecker) began a new series with AppFabric Service Bus Topics part I on 9/8/2011:

Available in the May CTP but release[d] today on the 9th of August*, an update of the AppFabric Service Bus containing the wonderful Queues and Topics.

How to implement the great pub/sub mechanism by using Topics. Topics enable us to implement a 1-to-many messaging solution where the rules of filtering are NOT in your application (or database or whatsoever) but just like ACS, configuration on the Service Bus. It enables you to abstract messaging logic from your app to the bus instead of implementing complex rules in your application logic.

Imagine your application running on Azure (a worker role) that is being diagnosed by using Performance Counters. These are collected locally and flushed to storage every configurable period. Now imagine your system administrators, responsible for monitoring your cloud assets, being at home but still need to be notified on events. Imagine 2 system administrators both having WP7 being the subscribers to your messaging solution. The first admin is responsible for scaling up and down your Windows Azure App (a worker role doing lots of calculations) while the second system admin (who is the manager) is only to be notified on the actual scaling up and scaling down events. The first system admin will receive messages showing him averages of CPU utilization and free memory per hour. Based on this, he can decide to scale up or down. The example in the next blog post below* show how to setup the Service Bus and how to create this pub/sub mechanism.

I'll finish the next one in a few days.

* There appears to be a one-month discrepancy in Riccardo’s release date and I didn’t see a “blog post below.”

Brent Stineman (@BrentCodeMonkey) continued his series with The Virtual DMZ (Year of Azure Week 10) on 9/8/2011:

Hey folks, super short post this week. I’m busy beyond belief and don’t have time for much. So what I want to call out is something that’s not really new, but something that I believe hasn’t been mentioned enough. Securing services hosted in Windows Azure so that only the parties I want to have connect to can.

In a traditional infrastructure, we’d use Mutual Authentication and certificates. Both communicating parties would have to have a certificate installed, and exchange/validate that when establishing a connection. If you only share/exchange certificates with people you trust, this makes for a fairly easy way to secure a service. The challenge however, is that if you have 10,000 customers you connect with in this manner, you now have to coordinate a change in the certificates with all 10,000.

Well, if we add in the Azure AppFabric’s Access Control Service, we can help mitigate some of those challenges. Set up a rule that will take multiple certificates and issue single standardized token. I’d heard of this approach awhile back but never had time to explore it or create a working demo of it. Well I needed one recently so I sent out some network calls to get a demo recently and fortunately had a colleague down in Texas found something ON MSDN that I’d never run across, How To: Authenticate with a Client Certificate to a WCF Service Protected by ACS.

I’ve taken lately to referring to this approach as the creation of a “virtual DMZ”. You have on or more publically accessible services running in Windows Azure with input endpoints. You then, secured by certificates an the ACS, have another set of “private” services, also with input endpoints.

A powerful option, and one that by using the ACS isn’t overly complex to setup or manage. Yes, there’s an initial hit with calls to the secured services because they first need to get a token from the ACS before calling the service, but they can then cache that token until it expires to make sure subsequent calls are not impacted as badly.

So there we have it. Short and sweet this week, and sadly sans any code (again). So until next time… send me any suggestions for code samples.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

• Avkash Chauhan explained Why Ping between Windows Azure roles does not work? in a 9/9/2011 post:

A question always comes if it possible to ping between roles in Windows Azure deployment?

This is because, when you have multiple roles and instances in your deployment, it is comm

eontto check the health between all the roles and instances by RDP to one instance and then ping to other (assume you have collected IP address to other roles and instances) instances or roles.

But if you are one of those who tried PING between instances or roles, you know that PING does not work..

As of now, ICMP is not supported with Windows Azure Internal Endpoints so you will not be able to ping between roles or instances. If you really want to test PING between roles, you would have to do this over TCP using a tool like TCPING. If you try using TCPING, please be sure that you have opened up TCP internal endpoint on all of your roles.

• Alex Handy asserted PHP becomes a hot PaaS property in a 9/9/2011 post to the SD Times on the Web blog:

In August, the previously Ruby-only PaaS firm, Engine Yard, acquired PHP PaaS company Orchestra, while Zend Technologies released version 5.5 of its Zend Server. But the repercussions of consolidation and innovation in the PHP market will still be with us into next year.

Andi Gutmans, CEO of Zend, said that the real focus for Zend Server 5.5 is deployment. He said that while enterprises are no longer struggling to use agile and PHP, they are now encountering problems keeping up with faster release cycles.

“What you're seeing in the DevOps movement, you're seeing the development side has really adopted agile development. You see development teams putting out new functionality faster. You typically want to be on a bi-weekly release cycle, but the deployment is not automated enough to foster that," said Gutmans.

"Even though on the development side, some of the issues have been resolved, on the deployment side they haven't."To that end, Zend Server 5.5 includes deployment capabilities that mimic those in Java. “It's similar to Java, where you have a WAR file," said Gutmans. "You can package up whole applications with configuration, and hand that consistent package off to production and ensure all the code is ending up on the production side. You can also enforce requirements on the configuration of the servers and the provisioning of the servers."

Zend focused on a deploy-anywhere approach to cloud, with Zend Server offering scaling and provisioning of new servers. Elsewhere, however, PHP PaaS means public cloud.

Engine Yard, for example, has long hosted its own Ruby-on-Rails PaaS in Amazon's Elastic Compute Cloud. So it makes sense that Orchestra (the PHP PaaS company Engine Yard acquired in August) is also based on Amazon Web Services.

PHP's obstacles

Michael Piech, vice president of product management and marketing at Engine Yard, said that PHP remains an appealing language for enterprises, even though it's not as “cool” as Ruby. He said that while Ruby growth is faster than PHP, he added that PHP has already won much success around the world, and thus has less room to grow. …

Rob Blackwell (@RobBlackwell) posted Java on the Windows Azure Cloud: Pros and Cons to The Register’s Cloud Developer Workshop on 9/9/2011:

Many people are surprised to hear that running Java on Windows Azure is even possible, but there are already large Java-based financial services and scheduling applications running in production.

Microsoft has committed to making Java a “first class citizen on Windows Azure”. In this article I explore the motivations behind Java on Windows Azure and weighs up the pros and cons.

Why choose Windows Azure?

Java has been a popular platform for Enterprise development for over ten years. Many organisations have large investments in Java code with applications representing hundreds of man years of development.

Java applications can be difficult and expensive to host, needing dedicated servers and data centre resources. Cloud computing can help to simplify and consolidate IT infrastructure and there are large potential cost savings in moving to the Cloud.

With the demise of Sun Microsystems, Windows Server and SQL Server have become a common choice of hosting platform.* Windows Azure and SQL Azure can be a natural next step.

Java web applications

Typically Java web applications are implemented using servlets and/or Java Server Pages running in a servlet container such as Tomcat, JBOSS or Jetty.

Microsoft provides a number of solution accelerators to help install these web stacks, including the Windows Azure Tomcat Solution Accelerator and the Windows Azure Jetty Solution Accelerator.

[AzureRunMe] is also a popular choice because it provide a more general purpose, customisable and extensible framework. It works with a variety of technologies and application frameworks.

Cloud based web applications should ideally be stateless, but Java applications often use shared session state. One approach is to use the Atomus TomcatAzureSessionManager which persists session information to Windows Azure table storage.

Sticky sessions are not supported on Windows Azure (they inhibit horizontal scale out) but there are some workarounds, including Ryan Dunn’s [Windows Azure Sticky HTTP Session Router]. Optionally, IIS Application Request Routing can be used as a reverse proxy in front of your web application to provide additional security.

Relational databases

Java applications are encouraged to be database agnostic, so moving to Microsoft’s SQL Azure database-as-a-service offering can be as straightforward as changing a JDBC driver. The Microsoft JDBC Driver now officially supports SQL Azure. Most SQL Server databases can be moved to SQL Azure with tools such as the SQL Azure Migration Wizard. I’ve also seen successful conversions from MySQL.

Storage

Windows Azure Storage includes blobs, tables and queues. All are available programmatically using the Windows Azure SDK for Java.

Blobs are often a cheap and scalable alternative to file storage for documents, and can easily be incorporated into the Windows Azure CDN.

Tables can be a cheaper and more scalable alternative to relational databases for tabular data.

Queues are a useful way to distribute work to worker roles.

Tooling

It is technically possible to develop Azure applications on Mac OS X or Linux, but it’s still easier to use a Windows machine for build and packaging. The Windows Azure management portal requires Silverlight and seems to work best in Internet Explorer.

Microsoft and Soyatec have provided the Windows Azure Tools for Eclipse.

If you are familiar with PowerShell, then the Windows Azure Platform PowerShell Cmdlets are useful management and administration tools.

If you prefer a GUI, then I recommend the Cerebrata tools.

What are the cons?

At the time of writing, the maximum size of a SQL Azure database is 50 GB – Not a huge amount for an enterprise application.

You're only allowed five external TCP ports per VM in your service definition. Many Java stacks use ports liberally for everything from JMX, JCA, clustering etc.

You can’t use the Windows Azure AppFabric Cache service yet – there is no suitable API for Java.

You need to be careful about IP address configuration in your application stack and understand the way that the Windows firewall works.

It’s still hard to get support from Java vendors who question whether Windows Azure is the same as Windows Server.

Embracing the cloud

Moving your Java application to the cloud is just the first step. To really benefit from cloud economics you need to make use of cloud features and cloud architecture. A small amount of rework in your application can often allow better scale out and better use of smaller, cheaper Virtual Machines.

The Windows Azure APIs and libraries allow you to access cheaper and more scalable storage (blobs, tables and queues) as well as features such as the Windows Azure Content Distribution Network (CDN). The Windows Azure AbbFabric provides cloud based middleware services that make it easier to build hybrid cloud / on-premises solutions.

Summary

Microsoft is clearly committed to interoperability on the Windows Azure Platform, and support for Java in particular. Java on Windows Azure not only works, but can form part of a cost effective strategy for Java applications.

* I’m not sure what the “demise of Sun Microsystems” had to do with Windows Azure and SQL Azure becoming “a common choice of hosting platform.” A UK phenomenon?

Rob is R&D Director at Two10degrees, a UK based Microsoft Partner specialising in Windows Azure architecture and software development.

Michael Collier and Dave Nielsen discuss how to leverage Windows Azure using social media applications in this 00:03:13 Bytes by MSDN video segment:

Join Dave Nielsen, co-founder of CloudCamp & Principal at Platform D, and Michael Collier, Software Architect at Neudesic as they discuss how to leverage Windows Azure using social media applications. They walk through an example of Outback Steakhouse and how they utilized Azure to launch a Facebook Fan & Twitter page. They were able to scale up instances and compute power as new visitors came to their site and still meet existing customer needs with ease.

Video Downloads

WMV (Zip) | WMV | iPod | MP4 | 3GP | Zune | PSPAudio Downloads

AAC | WMA | MP3 | MP4

About Michael: Michael is an Architect with Neudesic. He has nearly 10 years of experience building Microsoft based applications for a wide range of clients. Michael spends his days serving as a developer or architect – helping clients succeed with the Microsoft development platform. He gets very “geeked up” about any new technology, tool, or technique that makes his development life easier. Michael spends most of his spare time reading technology blogs and exploring new development technologies. He is also an avid golfer and attempts to be good at shooters on the Xbox 360. Follow Michael on Twitter under the handle @MichaelCollier and also on his blog at www.MichaelSCollier.com.

About Dave: Dave Nielsen, Co-founder of CloudCamp & Principal at Platform D, is a world-renowned Cloud Computing strategy consultant and speaker. He is also the founder of CloudCamp, a series of more than 200 unconferences, all over the world, where he enjoys engaging in discussions with developers & executives about the benefits, challenges & opportunities of Cloud Computing. Prior to CloudCamp, Dave worked at PayPal where he ran the PayPal Developer Network & Strikeiron where he managed Partner Programs.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

Paul Patterson posted Microsoft LightSwitch – Binding to the Telerik RadScheduler (Draft) on 9/9/2011:

Awesome timing! Today I was working through some thoughts on how to integrate the Telerik RadScheduler control into a LightSwitch application. I had even sent off a message to a fella at Telerik asking about this RadScheduler. Within minutes I had a response from Evan Hutnik, Developer Evangelist, at Telerik who put me on to a hands on lab that they had just published up to their site (right here) . The lab was exactly what I was looking for – thanks Evan!

Using the lab as a reference, here is how I used the RadScheduler control with binding to my LightSwitch application…

Using a component like the RadSchedule control is something that I have been wanting to do for some time. I already knew how to add the control to the application, however it was the binding part where I got stuck. Thanks to some timely information from Telerik, I can now overcome that challenge.

First thing I did was create a new LightSwitch application. In my case, I selected to create a new Visual Basic LightSwitch application named LSRadScheduler…

Before I go any further, there is something I should say…. Excuse me! (I just gulped a large volume of a carbonated beverage).

I also wanted to mention something else….Using the RadScheduler control is a bit tricky, especially when it comes to data binding in LightSwitch. Using the RadScheduler is not as simple as adding some binding attribute to the control XAML. The RadScheduler itself uses a built-in Telerik object type of Appointment to bind to. Actually, it is a collection of Appointments that the RadScheduler needs. This is important to understand because it is the Appointment that I needed to actually bind to and use when using the RadScheduler control. This will all be explained as I move through the steps to get this working in my application.

Moving on… the first thing I did was create a table that is going to hold the information about the appointments I am going to keep track of. Here is table I created…

…and that is all there really is to that!

For giggles, I created another table called Employee. Here is what it looks like…

Back in the ScheduledAppointment table, I select to add a Relationship…

… and then defined a relationship between the Employee and ScheduledAppointment tables…

With that, I wanted to set up a simple screen that will show me the scheduled appointments for different employees. So, from the Solution Explorer, I right-click the Screens node and select to add a new screen…

The new screen I added is a List and Details Screen, and in the Add New Screen dialog I selected the Employees table as the screen data, as well as included the Employee ScheduledAppointments data…

I am not too concerned right now about the screen, so I saved and closed the screen designer at this point. What I needed to do is do some creative dancing to soft show my way around the creation of a custom control for the purpose of showing and binding the RadScheduler control in my screen.

So, back in the Solution Explorer I select to view the solution in File View…

…also, I clicked the Show All Files button so that the Client Generated project shows…

I expanded the Client Generated project and opened the My Project item…

In the ClientGenerated properties window, I selected the References tab and then proceeded to add the following references to the project….

- Telerik.Windows.Controls

- Telerik.Windows.Controls.Input

- Telerik.Windows.Controls.Navigation

- Telerik.Windows.Controls.ScheduleView

Next I right-click the Client project and select to add a new Item to the project. The item I select to add is a new Silverlight User control that I name MyScheduleView…

When I click the Add button in the Add New Item dialog, Visual Studio created the .xaml file, however the designer choked on something. I clicked the Reload the designer link and that seemed to fix the issue (for now).

If you happened to be doing the Visual Basic version of this post, and have followed along in some earlier posts, you’ll notice that the Visual Studio templates missed out on a couple of things (C# versions work fine). It has to do with the namespacing required for the control. In the resulting XAML for the MySCheduleView control, I had to update both the XAML and the code behind file to include the namespacing…

…and update the namespace in the code behind (and include an Imports declaration for the System.Windows.Controls namespace too)…

…more content to come…

See Mary Jo Foley (@maryjofoley) described potential cloud-related sessions in her Microsoft Build: Developer topics to watch post of 9/9/2011 to her All About Microsoft blog for ZDNet in the Cloud Computing Events section below.

Paul Patterson recommended that you Singleton your Entity Framework Model in a 9/8/2011 post:

Looking for a great way to lighten the workload of creating and using instances of your entity framework generated models? Here is how I implemented a simple singleton pattern to make sure I only use what I need, when I need it…

I typically abstract my code into loosely coupled assemblies, mostly for maintainability and reusability reasons. (Is “reusausebililitiitlly a real word?) I also do a lot of work with the ADO.Net Entity Framework and with all that separation of concerns going on, I tend to end up with a more than my fare share of assemblies.

Early on in my entity framework journey I had found that I was constantly having to instantiate a new instance of my entities model for almost every call to the model. Being the lazy, uh, I mean, creative and OO conscious fella that I am, I found a much more “friendly” way to use my entities model.

My original problem was that sometimes I would open multiple instances of the entity model to do some data work. Doing the work this way sometimes resulted in some data concurrency issues. So, instead of ensuring that I had completely finished and closed out the model, I decided to make sure that I was always using only one instance of the model.

The solution came in the implementation of a simpleton pattern when creating the instance of the model. For example, here is a class named DataContext. This class contains a shared property that would return a new instance of the model, but only of the model did not already exist.

Public Class DataContext Private Shared _DBEntities As MyModelEntities Public Shared Property DBEntities() As MyModelEntities Get If _DBEntities Is Nothing Then _DBEntities = New MyModelEntities() End If Return _DBEntities End Get Set(ByVal value As MyModelEntities) _DBEntities = value End Set End Property End ClassNow from a business logic layer (middle tier) I would do some work that would require some data, but use the singleton pattern to make sure that I was using only one instance of the model. For example…

Public Function GetCustomers() As List(Of Customer) Dim listOfCustomers = (From c In DataContext.DBEntities.Customers Select c).ToList() Return listOfCustomers End FunctionPretty nifty eh?

Let me know if it helps you in your ADO.Net Entity Framework efforts.

Return to section navigation list>

Windows Azure Infrastructure and DevOps

David Linthicum (@DavidLinthicum) asserted “The concept of cloud broker is taking shape, but its value is not clear, especially for the long term” in a deck for his How 'cloud brokers' help you navigate cloud services post of 9/9/2011 to InfoWorld’s Cloud Computing blog:

What's a cloud broker? Gartner defines it as "a type of cloud service provider that plays an intermediary role in cloud computing." Perhaps better put, they help you locate the best and most cost-effective cloud provider for your needs.

The idea is compelling. You're looking for a storage-as-a-service provider, and instead of going to them directly, you use a broker to gather your requirements and find the best fit -- in some cases, several good fits that you can use based on the best cost, availability, and performance.

There are two types of cloud brokers emerging: passive and active:

- Passive cloud brokers provide information and assist you in finding the right cloud-based solution. They may gather your requirements, understand budgets, then pick the best cloud providers for your needs and wallet. They even assist you in signing up, perhaps wetting their beaks in the process.

- Active cloud brokers may provide dynamic access to different cloud providers based on cost and performance data, and they could use different cloud providers at different times, based on what best serves their clients. They may even multiplex cloud providers so that clients use a single interface serviced by many providers. This is analogous to least-cost routing of phone calls back in the day, taking a request for service -- say, storage -- and seeking the best cloud provider for the job at that time, perhaps using performance, availability, and cost data. Then the agent would broker the relationship between the client and the cloud provider.

The advantages of cloud brokers are cost savings and information. Indeed, as the number of cloud providers continues to grow, a single interface for information, combined with service, could be compelling to companies that prefer to spend more time with their clouds than doing the research.

At the same time, the value of a cloud broker is undetermined, considering that cloud computing is still emerging and the provider choices could become more obvious. In such a case, brokers won't have as much value.

David Pallman announced a Windows Azure Design Pattern Icons Update - Now Resizable on 9/9/2011:

Not too long ago I released a set of Windows Azure Design Pattern Icons, which are related to my Windows Azure Design Patterns web site and upcoming Windows Azure architecture book (Volume 2 of The Windows Azure Handbook series).

Since making these icons available for download, I've been gratified to see many people using them. I've also had some requests for something that is resizable. I've gone ahead and updated the icon download zip file to also include Enhanced Windows Metafile (.emf) files that you can resize nicely in tools like PowerPoint and Visio. As you can see from the example below, this vector format allows you to resize these icons without pixellation.

Enjoy!

Patrick Dubois announced a Special [DevOps White Paper] Offer from Cutter Consortium on 9/9/2011:

Download your complimentary copy of the complete Cutter IT Journal issue -- Devops: A Software Revolution in the Making? -- below!

Opening Statement by Guest Editor Patrick Debois

Some people get stuck on the word 'devops', thinking that it is just about development and operations working together. Systems thinking advises us to optimize the whole; therefore devops must apply to the whole organization, not only the part between development and operations. We need to break through blockers in our thought process, and devops invites us to challenge traditional organizational barriers. The days of top-down control are over -- devops is a grass-roots movement similar to other horizontal revolutions, such as Facebook. The role of management is changing: no longer just directive, it is taking a more supportive role, unleashing the power of the people on the floor to achieve awesome results. And that is the focus of this issue of Cutter IT Journal, the first installment of a two-part series.

This issue includes these articles:

Opening Statement by Patrick Debois

Why Enterprises Must Adopt Devops to Enable Continuous Delivery by Jez Humble and Joanne Molesky

Devops at Advance Internet: How We Got in the Door by Eric Shamow

The Business Case for Devops: A Five-Year Retrospective by Lawrence Fitzpatrick and Michael Dillon

Next-Generation Process Integration: CMMI and ITIL Do Devops by Bill Phifer

Devops: So You Say You Want a Revolution? by Dominica DeGrandis

Download your copy of this Cutter IT Journal issue now!

To receive your complimentary copy of this issue, simply enter the promotion code DEVOPSREVOLUTION when you click here and fill out our special offer form.

Cutter members can access the issue here.

Scott M. Fulton, III (@SMFulton3) reported Intune Makes Windows Software Maintenance Into an Azure Service in a 9/8/2011 post to the ReadWriteCloud:

The first tool Microsoft produced for remotely deploying Windows on client computers throughout a network was called - in classic Microsoft-ese - the Automated Installation Kit (AIK). What made it relatively versatile was a feature introduced with the Vista kernel called ImageX, that enabled a complete, working image of the operating system to be "painted" onto any hard drive, locally or remotely. This was so much better having to generate each new component through the classic Setup process we all know and hate; and later, the Microsoft Deployment Toolkit took over this task.

The next generation of Microsoft's remote installer, called Windows Intune, has been in beta since last March; and as we learned yesterday, will move to general availability (GA) on October 17.

Besides having a much nicer name, Microsoft's next remote installer console goes into far greater depth than its sorry-acronym predecessors. Unlike AIK or MDT, Intune is browser-based, and can manage deployments on clients remotely through the Internet as well as the local network. It offers deployment support for third-party software. And the deployment agent - the thing that actually installs the software - is moved off of the company network and onto the Windows Azure platform. [Emphasis added.]

The console is Silverlight-based, so it should not require Internet Explorer. The Managed Software workspace, shown here, can deploy supported software packages to any of the managed computers that are signed up with Intune. Keep in mind, this is all about your Intune circle, not just your corporate network. Each piece of managed software represents an installation image that you create yourself. Adobe Reader X shown above may be a bad example since there typically isn't much there for you to configure. However, software that supports Microsoft's ImageX deployment mechanism will let you install it on a prototype computer, configure it as you will and load it with options (for instance, company templates for Office), then capture an image of that software and deploy it multiple places.

That may seem wrong and even illegal, but it all works out in the end. Once this remote system deploys the image, the enrollment process takes place for each user separately. So licensing matters are all straightened out on startup.

What does not work anymore - at least not for Intune - is the notion of creating multiple images of the operating system plus applications, each one configured differently, storing those images in a colossal repository, and remembering which one is the right one for a particular class of client. Microsoft called that process thick imaging, and with Intune, it's now a no-no.

In its place comes thin imaging, which mandates that there be one master image of the operating system, and separate images of applications which can be updated as necessary.

As online documentation currently reads, "In this process, you install the default Windows image, and then automatically install required applications immediately afterward. Both MDT 2010 and System Center Configuration Manager make this process easy. The benefit is that thin imaging reduces image maintenance and image count considerably. Updating an application no longer requires capturing a new image. You simply update the application in the deployment share."

Intune monitoring clients must still be installed on remote systems, and this process still requires MDT or SCCM.

In what could be a glimpse of the future for Microsoft management tools, Intune adds a feature that lets managers run a few security scans on remote client systems - in this example, running a malware scan. If admins can do something like this remotely, there's a number of other System Center-centered tasks that admins might like to see moved to the Azure platform as well.

After all, as Intune program manager Alex Heaton pointed out on his company's own blog earlier this week, "Businesses need to ensure that PCs are highly secure and well-managed to help protect corporate data and assets, reduce support calls and streamline the cost of delivering routine management and security."

See Also

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

See Mary Jo Foley (@maryjofoley) described potential cloud-related sessions in her Microsoft Build: Developer topics to watch post of 9/9/2011 to her All About Microsoft blog for ZDNet in the Cloud Computing Events section below.

Neil McEvoy posted Private PaaS – The Next Era of Enterprise IT to the Cloud Computing Best Practices blog on 9/7/2011:

Central to the Government Community Cloud model is the development of a ‘Private PaaS’ (Platform as a Service).

Public Cloud providers like Azure offer a combination of hosting and built-in software components that make life easier and quicker for software developers, to write, maintain and publish new software to production environments. [Emphasis added.]

Deploying this same combination internally is therefore a Private PaaS, and in my view this will be the real ‘killer app’ of the Cloud trend. This will be where the bulk of enterprise spending in the Cloud space will be concentrated, as large organizations like banks and governments leverage the power of the technology via the mode they feel most comfortable with.

The concept and the benefits of it are very nicely explained in this white paper by Apprenda, a vendor specializing in this model implemented on Microsoft .net. One Java equivalent is CloudBees.

In a nutshell where IaaS is about how virtualization enables a single hardware platform to run multiple OS instances, PaaS repeats the same effect for running multiple software applications on a single OS instance. Where virtualization amplifies the economic value of a hardware platform through running multiple OS, PaaS then expands that another dimension by enabling each OS to run multiple applications too.

DevOps – Maximizing the business benefits of Cloud Computing

This emphasizes how the value of PaaS is more about a new software layer and associated productivity benefits for developers, less so than it is about outsourcing to Cloud providers for elastic supply of IaaS, or similarly the deployment of internal IaaS.

The reality for most large enterprises is that the cost of hardware is the least expensive part of their challenge. They can and do throw lots of servers at projects because in relative terms it’s not that difficult or costly to do so.

In contrast the complexities of software development and deploying large enterprise applications is a hugely expensive activity, both in terms of labor, agility and change management, as well as financial cost. For example how rapidly they can implement new systems determines how quickly they can launch new products, which can directly impact market share and subsequently revenues and profits.

The Apprenda white paper highlights that PaaS can impact on this level of business ROI:

- Standardize application development and drive highly efficient software architecture, establishing conformity across all guest applications

- Increasing agility through automating critical DevOps workflows, reducing complexity and delegating control through self-service

- Dramatically boost developer productivity by reducing time-to-deploy new applications (eg from 30 days to 5 mins) through the PaaS handling common components like authentication, session management, caching etc.

This appears to have been Microsoft’s marketing theory for WAPA that resulted in acquiring a single customer (Fujitsu.)

<Return to section navigation list>

Cloud Security and Governance

James Downey (@james_downey) listed Five Bad Reasons Not to Adopt Agile in a 9/8/2011 post:

While the advantages of agile appear obvious, I observe that many IT departments stick stubbornly to waterfall. Despite failure after failure, IT managers blame the people—staff members, vendors, users—rather than the process.

Waterfall organizes software projects into distinct sequential stages: requirements, design, coding, integration, testing. Despite its common sense appeal, waterfall projects almost always lead to unproductive conflicts. Most users cannot visualize a system specified in a requirements document. Rather, users discover the true requirements only late in the project, usually during user acceptance testing, at which point accommodating the request means revising the design, reworking the implementation, and re-testing.

In fact, users should change their minds. We all do as we learn. As users begin using a system, they begin to see more clearly how it could improve their jobs. We should welcome this learning. But under the waterfall approach, such discovery gets labeled as scope creep, it undermines the process, causes cost overruns, and leads to a frenzy of finger pointing.

Agile methods throw out the assumption that a complex system can be understood and fully designed and planned for up front. While there are a great diversity of agile methods, they almost all tackle complexity by breaking projects into small—one week to a month—iterations, each one of which delivers working software of value to a customer. Users get to work with and provide feedback on the system sooner rather than later.

So why do so many IT organizations insist on crashing over waterfalls again and again? Here are the most frequent refrains I hear from waterfall proponents:

“We need a fixed-fee contract to avoid risk.”

Fixed-fee contracts force projects into a waterfall approach that makes change cost prohibitive. While fixed-fee contracts in theory transfer risk from client to vendor, these contracts in reality increase the risk of a final product that meets specification but fails to achieve the business objectives. A far simpler alternative would be a time and material contract that requires renewal at the start of each iteration. The customer reduces risk by agreeing to less cost at each signing and by receiving working software sooner rather than later, which both achieves a quicker return on investment and assures the project remains in line with expectations.

“What’s this going to cost?”

To make the business case for a project, it is essential to nail down costs, which the waterfall method promises for an entire project as early as the requirements stage. And while I’m all for business cases, making decisions based on bogus data makes little sense. What we need is an agile business case, one that covers a single iteration and rests on meaningful cost and revenue data.

“I’m a PM and I need a schedule to manage to.”

Wedded to the illusion of predictability, many PMs identify their discipline with large, complex schedules, full of interdependent sequences of tasks stretching out over months, schedules so easy to create in MS Project but that so rarely work out in reality. These schedules, together with requirement documents the size of a New York City phone book, end up as just more road kill of the waterfall process.

“Should we buy or build? We need a decision.”

Agile eschews big designs up front in favor of designs that evolve organically over time. But IT departments must often make certain big design decisions up front, such as whether to buy or build a system or component.

And, unfortunately, these sorts of decisions fall outside of the well-known agile frameworks such as Scrum or XP, which focus exclusively on software development. So it might be assumed that once a big up front decision is required, that agile no longer applies. Indeed, it does make sense that more requirements and more design is needed up front to avoid committing dollars to the wrong vendor.

Regardless, I’d argue that there are ways to keep a project agile even if certain decisions must be made up front.

1) Keep in mind that there is no need to collect every requirement, just those needed for the decision.

2) Take advantage of demo versions and commit a few early iterations to build out rapid prototypes on different products.

3) Consider open source. Low cost means low commitment.