Windows Azure and Cloud Computing Posts for 12/21/2010+

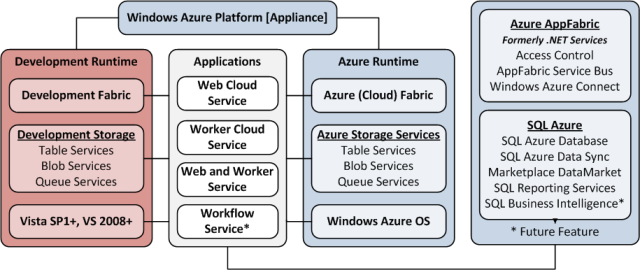

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control and Service Bus

- Windows Azure Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now freely download by FTP and save the following two online-only PDF chapters of Cloud Computing with the Windows Azure Platform, which have been updated for SQL Azure’s January 4, 2010 commercial release:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available for download at no charge from the book's Code Download page.

Tip: If you encounter articles from MSDN or TechNet blogs that are missing screen shots or other images, click the empty frame to generate an HTTP 404 (Not Found) error, and then click the back button to load the image.

Azure Blob, Drive, Table and Queue Services

See Erik Oppedijk analyzed the cost of five scenarios for Using Azure CDN to extend Bing Maps in a 12/21/2010 post to the InfoSupport Blog Community in the Windows Azure Virtual Network, Connect, RDP and CDN section below. (Stores maps in blobs).

See Rob Gillen reported Planet Technologies Launches GovCloud on 12/21/2010 in the Other Cloud Computing Platforms and Services section below. (Links to Rob Gillen’s Windows Azure blob performance analysis.)

SQL Azure Database and Reporting

Kristofer Anderson (@KristoferA) described Inferring Foreign Key Constraints in Entity Framework Models in a 12/21/2010 post:

Foreign key constraints are one of the most important components of relational database models. At the database level they are key to maintaining data integrity and prevent applications and users from writing invalid data or from orphaning data by inadvertently deleting data referenced from other tables.

When reverse-engineering entity relationships in modelling tools and OR mappers, foreign key constraints serve yet another important role; they also describe the relationship between tables/entities. Without foreign key constraints, OR mappers can’t generate navigation properties between entities, and will not know in what order* they should process inserts, updates, and deletes for various tables.

* = e.g. generating order detail records before the main order record has been created.

Databases with no FK constraints

Unfortunately, there are lots of real-world databases that, although they are created and deployed in RDBMSes, lack foreign key constraints. The reasons for omitting FK constraints vary, but it is not uncommon that the reasons stated are based on misconceptions, misunderstandings, or lack of information. Sometimes they are omitted by programmers who can’t get their heads around the proper order of inserts/deletes, sometimes they are omitted “for performance reasons”, and sometimes no one can remember anymore why they were omitted. Whatever the reason, relational databases with some or all FK constraints missing are out there and they’re fairly common.

Adding FK constraints to legacy databases

One way to tackle FK-less databases is simply to add the missing FK constraints. A simple solution to data integrity issues that also makes OR mappers happy, right? Unfortunately it is not that simple. The real problem with FK-less databases is the applications behind them. If there are tens of thousands, hundreds of thousands, or millions of lines of code that interact with those databases, you can be certain that some of it will break if you just add all the missing FK constraints. There’s almost certainly code that does inserts/deletes in the wrong order, and there’s a fairly good chance that there is junk data or orphaned data that will violate the FK constraint as soon as you try to create it, and so on.
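The orphaned-data risk can be checked before a constraint is created. The following is a minimal sketch, not part of the original post: it uses Python's built-in `sqlite3` module with hypothetical `orders` and `order_details` tables, and the helper name `find_orphans` is an assumption made for illustration.

```python
import sqlite3

def find_orphans(conn, child, fk_col, parent, pk_col):
    """Return child rows whose fk_col value has no matching parent row."""
    # Identifiers are interpolated directly, so only pass trusted names.
    sql = (
        f"SELECT c.* FROM {child} AS c "
        f"LEFT JOIN {parent} AS p ON c.{fk_col} = p.{pk_col} "
        f"WHERE c.{fk_col} IS NOT NULL AND p.{pk_col} IS NULL"
    )
    return conn.execute(sql).fetchall()

# Hypothetical FK-less schema: one detail row points at a missing order.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY);
    CREATE TABLE order_details (detail_id INTEGER PRIMARY KEY, order_id INTEGER);
    INSERT INTO orders VALUES (1), (2);
    INSERT INTO order_details VALUES (10, 1), (11, 2), (12, 99);
""")

orphans = find_orphans(conn, "order_details", "order_id", "orders", "order_id")
print(orphans)  # rows that would block creation of the FK constraint
```

Any row returned here would have to be repaired or removed before the corresponding FK constraint could be added to the database.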

Inferring FK constraints

Since FK constraints are key to generating the associations in OR mappers such as Entity Framework, I decided to add a feature to infer FKs. Instead of creating them in the database, I use table and column names and types to deduce what might be a FK candidate in the database. After that, the inferred FKs are displayed in the Model Comparer’s list of missing FK constraints.

The user can choose and pick both real and inferred foreign key constraints and add them to the model selectively, and generate model-level associations and navigation properties without actually creating the FKs in the database.

The tool also allows inferred FK constraints to be materialized into SQL-DDL scripts, but that part is entirely optional.
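To make the idea of name- and type-based inference concrete, here is a rough sketch. It is not the Model Comparer's actual algorithm, which the post does not describe in detail. The function `infer_fks`, its two flags (loosely modelled on the two checkboxes described in the demonstration below), and the sample schema are all assumptions made for illustration.

```python
from typing import NamedTuple

class Column(NamedTuple):
    name: str
    type: str

def infer_fks(tables, pk_has_entity_name=False, underscore=False):
    """Guess FK candidates from column names and types.

    tables maps table name -> list of Column; the first column is treated
    as the primary key (an assumption of this sketch).
    Returns (child_table, child_column, parent_table, parent_pk) tuples.
    """
    sep = "_" if underscore else ""
    candidates = []
    for parent, parent_cols in tables.items():
        pk = parent_cols[0]
        expected_pk = f"{parent}{sep}Id" if pk_has_entity_name else "Id"
        if pk.name.lower() != expected_pk.lower():
            continue  # parent table does not follow the chosen convention
        fk_name = f"{parent}{sep}Id".lower()
        for child, child_cols in tables.items():
            if child == parent:
                continue  # self-references are ignored in this sketch
            for col in child_cols:
                # A candidate must match on both name and data type.
                if col.name.lower() == fk_name and col.type == pk.type:
                    candidates.append((child, col.name, parent, pk.name))
    return candidates

schema = {
    "Customer": [Column("Id", "int"), Column("Name", "nvarchar")],
    "Order": [Column("Id", "int"), Column("CustomerId", "int")],
    "OrderLine": [
        Column("Id", "int"),
        Column("OrderId", "int"),
        Column("CustomerId", "uniqueidentifier"),  # type mismatch: skipped
    ],
}

inferred = infer_fks(schema)
for child, col, parent, pk in inferred:
    print(f"{child}.{col} -> {parent}.{pk}")
```

The type check is what keeps `OrderLine.CustomerId` out of the result: the name matches, but a `uniqueidentifier` column cannot reference an `int` key.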

Demonstration

The following set of screenshots demonstrates how the FK inference feature in the Model Comparer for EFv4 can be used to infer FKs in a database and to add selected keys to the EF4 model.

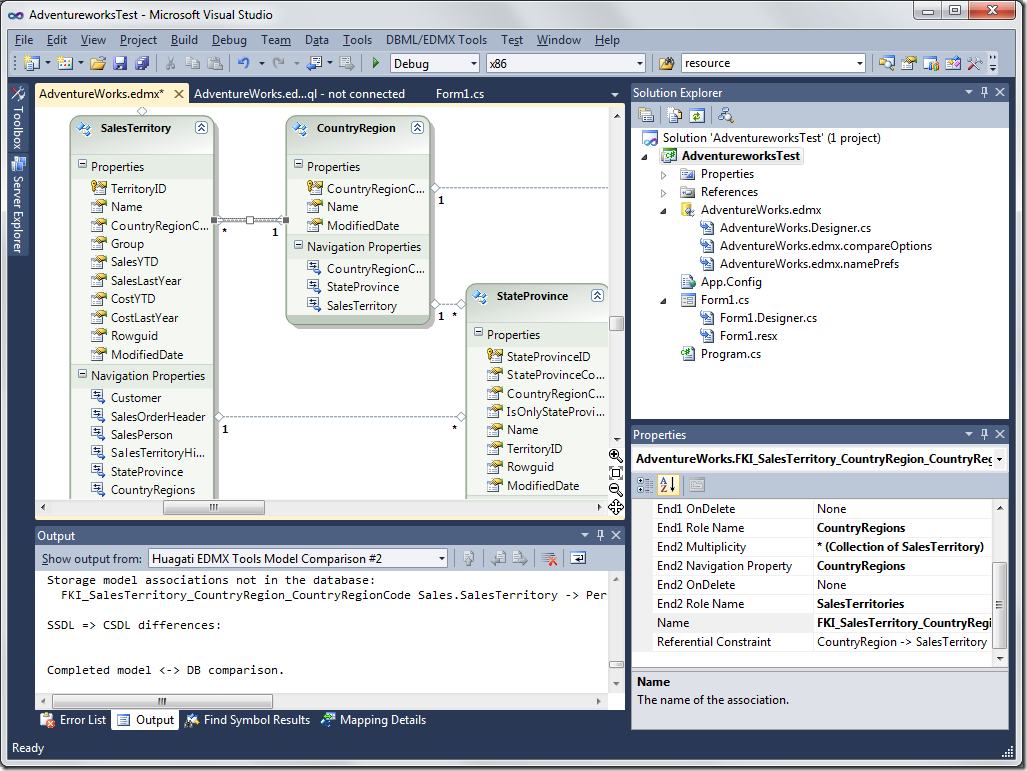

Step 1 – Open an EFv4 model in Visual Studio 2010, and bring up the Model Comparer:

Step 2 – Click on Settings to open the settings dialog. Go to the Infer Foreign Keys tab.

Select the type of objects you want to infer FKs for: tables and/or views. Although views cannot have FK constraints in the database, it is perfectly fine to infer FKs and use them to add associations to views. This makes it possible to have navigation properties to/from views.

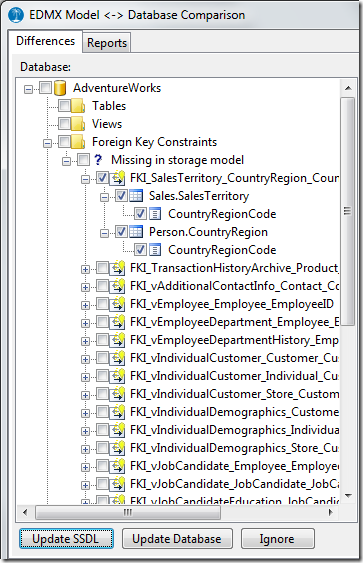

There are four different name matching options controlled by two checkboxes: whether PK members contain the name of the entity or not, and whether entity names and member names are separated with underscores or not. Changing the checkboxes updates the table example in the middle of the dialog between the four different naming conventions, to make it easy to verify that the settings match the desired naming conventions.

Step 3 – With the Infer Foreign Key Constraints for Tables setting and/or the Infer Foreign Key Constraints for Views setting enabled, the model comparer will infer FK constraints where they don’t exist in the database. Inferred FK constraints start with the FKI prefix and have a different icon than existing FK constraints to make it easy to differentiate between inferred and real FK constraints.

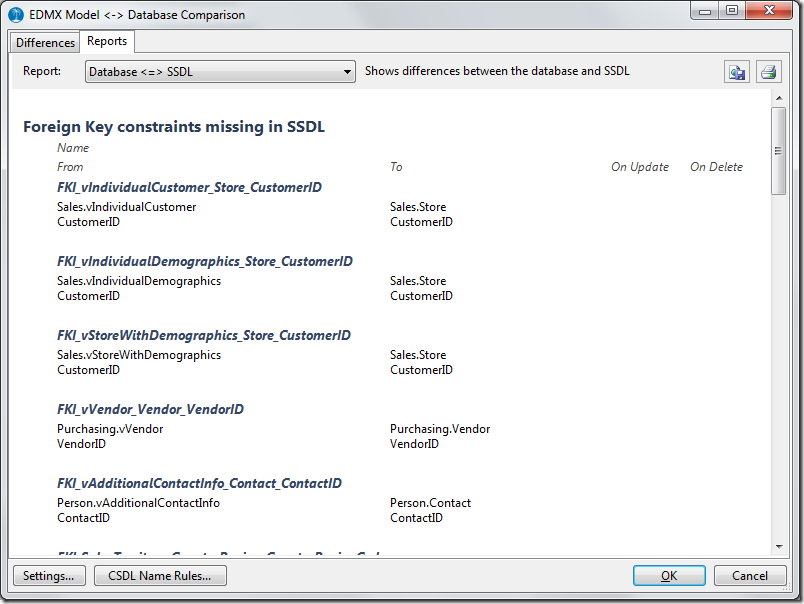

Step 4 (optional) – The Report view also shows all inferred keys. If you are generating a lot of keys, the report view is a more eye-friendly way to go through them in order to determine which ones are valid/desired in the model.

Step 5 – Select the FK constraints that you want to use for generating associations in the model. Click Update SSDL to add them to the storage model (SSDL) in the Entity Framework 4 model.

Step 6 (optional) – The SSDL-to-DB tree will now show the newly added association. This allows inferred FKs to be materialized as SQL-DDL-scripts if desired. If not, select them and click Ignore. If you want to generate a SQL-DDL script, allowing the inferred FKs to be added to the database, select the FKs and click on Update Database.

Step 7 (optional) – If you generated SQL-DDL in Step 6, the SQL-DDL script will appear in a separate SQL editor in the Visual Studio instance that the Model Comparer belongs to. This allows you to review, edit, and save the script before applying to the database.

Step 8 – Select the new association(s) in the SSDL-to-CSDL tree and click Update CSDL to add them to the conceptual layer of the model. This will result in navigation properties added between the involved entities.

Step 9 – Close the model comparer and return to Visual Studio. Continue coding. Enjoy.

The Huagati Model Comparer

The Model Comparer is a feature in the Huagati DBML/EDMX Tools add-in for Visual Studio 2010. The add-in can be downloaded from http://huagati.com/dbmltools/, and time-limited free trial licenses are available from the same site.

Screencasts showcasing some of the basic functionality in the Model Comparer for EFv4 are available at http://huagati.blogspot.com/2010/07/introducing-model-comparer-for-entity.html and http://huagati.blogspot.com/2010/08/using-model-comparer-to-generate.html

Cory Fowler (@SyntaxC4) delivered Post #AzureFest Follow-up Videos: Part 2 on 12/21/2010:

In our last set of Videos [Post #AzureFest Follow-up Videos] Barry and I talked about how to Register for a Windows Azure Account, Setting up a Hosted Service, Deploying your first Azure Application, and tearing down the Application.

In this set of videos Barry and I walk through setting up a SQL Azure Database using the Windows Azure Platform Portal, as well as generating script files for the existing NerdDinner Application for deployment into the cloud.

Setting Up a SQL in the Cloud using SQL Azure

As explained at AzureFest, there are very few steps required to set up a Database on SQL Azure. The main thing to watch for in this video is the Firewall settings, which are important for keeping your data secure in the cloud. If you set up Firewall rules for cafés or restaurants, be sure to remove the IP Address Range from the Firewall before you exit the establishment.

Generating Scripts against On-Premise Database to Deploy to SQL Azure

In this Video, Barry and I explain how to script the existing on premise databases from the NerdDinner Application and run those scripts against our newly created SQL Azure Database. We also venture into the new SQL Azure Database Manager [Built on Windows Azure with Silverlight].

Next Steps…

Look for some additional content coming in the New Year! Barry and I will be covering Setting up your environment, and deploying the full NerdDinner Application to the Cloud!

Frédéric Faure (@federicfaure) wrote SQL + NoSQL = Yes ! on 12/21/2010 as a guest post for the High Scalability blog:

Data storage has always been one of the most difficult problems to address, especially as the quantity of stored data is constantly increasing. This is not simply due to the growing numbers of people regularly using the Internet, particularly with all the social networks, games and gizmos now available. Companies are also amassing more and more meticulous information relevant to their business, in order to optimize productivity and ROI (Return On Investment). I find the positioning of SQL and NoSQL (Not Only SQL) as opposites rather a shame: it’s true that the marketing wave of NoSQL has enabled the renewed promotion of a system that’s been around for quite a while, but which was only rarely considered in most cases, as after all, everything could be fitted into the « good old SQL model ». The reverse trend of wanting to make everything fit the NoSQL model is not very profitable either.

So, what’s new … and what isn’t?

A so-called SQL database is a structured relational storage system:

- “structured” means that an ensemble of attributes (columns) which will contain data (values) will correspond to a single or composite key.

- “relational” means that the key of a table can itself be the value of one of the attributes. Attributes in another table correspond to this key. A relationship is therefore established between these two tables and repetition of the same information in all the lines of the table is avoided. Functional features are structured and modeled in the database…

NoSQL is a structured database which enables access to stored data via a simple key. It’s somewhat like an extreme version of a denormalized model (a technique also used in SQL, where information is purposely repeated in a table in order to limit the number of relationships to be taken into account in a request and therefore optimize the response time, as long as repeating the information doesn’t overly increase the size of a line and therefore of the table).

Remember too that in NoSQL bases, the notion of indexing is not taken into account (hashtable’s mechanism) and that it is not possible to make conditional requests (with WHERE clauses): the values are recovered by the key and that’s it! There are some exceptions, such as Tokyo Cabinet’s API « Table », MongoDB, or even AWS’ SimpleDB,… which do enable such requests and deal with indexing… But pay attention to performance in those cases.
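The difference between access by key and a conditional request can be sketched with a plain Python dictionary standing in for a key-value store. The keys, records, and helper names (`get`, `where`) below are hypothetical and do not correspond to the API of any particular product.

```python
# A dict stands in for a hash-table-based key-value store.
store = {
    "user:1": {"name": "alice", "top_score": 120},
    "user:2": {"name": "bob", "top_score": 300},
    "user:3": {"name": "carol", "top_score": 250},
}

def get(key):
    """Key access: one hash lookup, independent of the store's size."""
    return store.get(key)

def where(predicate):
    """Emulated WHERE clause: with no index, every value must be scanned."""
    return [key for key, value in store.items() if predicate(value)]

print(get("user:2"))
high = where(lambda v: v["top_score"] > 200)
print(high)
```

The `where` helper works, but it touches every record, which is the performance caveat the paragraph above warns about for stores that offer conditional requests.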

NoSQL OR/AND SQL?

As I said in the introduction, the Not Only SQL or quite simply key-value(s) bases are not a new idea. All that was needed to re-launch the recipe was to find a great marketing name. It’s a little like the POJOs (Plain Old Java Objects). This acronym neatly refers to the ease of use of a Java Object, which does not implement a framework’s specific interface. Since this term was launched, there’s been a return to taking pleasure in the simple things in life (well, perhaps not all the time ;ob), a bit like it is with REST… It just needed good marketing.

Beware of the exaggerations of NoSQL salesmen who are riding the wave!

Each data-storage family has its use. I will return to the example I gave in a previous article concerning an architecture used in the casual gaming industry, and which uses both systems:

- Storage of what could be called the meta-model, which is not intended for data-sharding (yes, the relational model makes it difficult to shard data on several servers), based on a structured, indexed relational base, containing general information about each user (with the following information in the case of a social application: name, top score, previous score, etc.). It is therefore possible to execute SQL-type conditional requests and thus recover information or compile statistics by using WHERE clauses.

- Storage of more volatile data (such as gaming data, which implies a strong write:read ratio) and for which you must choose a real, structured, non-relational storage solution of the key-value(s) type, thus enabling easy data-sharding on X servers. Be careful in this case, as it is the ease of data-sharding in particular which encourages you to use this model, rather than the high write:read ratio. The write performances will be unitarily better with a NoSQL tool (as opposed to a SQL tool with an equivalent data set on a key-value(s) model), but it doesn’t do everything, as we will see later.
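Why the key-value(s) model shards so easily can be shown in a few lines: because each value is reached through its key alone, the key can also decide which server holds it. This is a minimal sketch under stated assumptions, not the architecture from the article. The names `shard_for` and `ShardedStore` are hypothetical, and in-memory dicts stand in for servers.

```python
import hashlib

def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard index using a hash that is stable across runs."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards

class ShardedStore:
    """Key-value store spread over several dicts standing in for servers."""

    def __init__(self, num_shards: int):
        self.shards = [{} for _ in range(num_shards)]

    def put(self, key: str, value) -> None:
        self.shards[shard_for(key, len(self.shards))][key] = value

    def get(self, key: str):
        return self.shards[shard_for(key, len(self.shards))].get(key)

store = ShardedStore(num_shards=4)
for player_id in range(1000):
    store.put(f"game:{player_id}", {"score": player_id})

print([len(s) for s in store.shards])  # keys per shard
print(store.get("game:42"))
```

Simple modulo placement like this remaps most keys when the number of shards changes; consistent hashing is the usual way to limit that, and it is left out here for brevity.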

I’m deliberately not talking about cache systems at this point, even if the cache would be genuinely useful in this case for the elements which tend to be accessed in read, therefore for the SQL part. I will come back to the notion of cache at the end of the article.

It is clear that not all cases are suitable candidates for using key-value(s) storage systems, such as a company’s IT department which uses databases integrated into business workflows with a great many EAI and ETL processes. If it doesn’t have relational databases with integrated business / functional notions (object modeling), I wouldn’t even attempt to touch it with a barge pole.

On the other hand, it is possible to operate a « key-value(s) » usage from relational databases (think SQL). However, can’t we opt for a NoSQL base which will enable us to tackle a simple problem (one key – x values) without getting into such considerations as tablespaces, indexing and other parameters, sometimes rather complex?

It’s precisely the need for functional features (you know… functional specifications’ documents, which are drawn up in response to a study of the clients’ requirements, and which tend to disappear in the wake of agile methodologies simplified in the extreme) which leads us to choose one or the other data-storage family.

And what about performance?

NoSQL? NoRead (Not Only Read)!

Most of the cases presented which emphasise « out of the box » NoSQL tools are based on cases with a low write:read ratio. That is, applications where high volumes of sharded data on multiple servers are made available to users in read, with few updates.

I recently read a very interesting blog post: Using MySQL as a NoSQL – A story for exceeding 750,000 qps on a commodity server. The author, working for a casual gaming company, DeNA, explains how they worked on MySQL by perfecting a very handy plugin called HandlerSocket:

HandlerSocket is a MySQL daemon plugin so that applications can use MySQL like NoSQL

Thus, the InnoDB engine can be requested in two different ways: by using the standard MySQL layer and thus carrying out complex requests, or else by bypassing the layer in order to execute recurring requests on indexed columns, doing away with SQL Parsing, Open Table, Query Plan and Close Table, not to mention security:

Like other NoSQL databases, HandlerSocket does not provide any security feature. HandlerSocket’s worker threads run with system user privileges, so applications can access to all tables through HandlerSocket protocols. Of course you can use firewalls to filter packets, like other NoSQL products.

In the end, you obtain a MySQL, or rather an InnoDB engine, which can respond just as well in standard (SQL) as a true NoSQL database. The performances presented here are very interesting (even a bonus, compared to a Memcached). This enables Memcached to be removed, so you no longer have to manage the problem of data inconsistency between Memcached and the MySQL database, and you can make the best use of the RAM by only using the InnoDB buffer pool. Moreover, you can also make the most of the quality of the InnoDB engine, and in the event of a crash, you don’t have to worry about volatile data. Even by playing around with the innodb_flush_log_at_trx_commit parameter to improve the write performances, a failure can still be acceptable.

But it is not a miracle solution! The limitation which caught my eye is the following:

No benefit for HDD bound workloads

For HDD i/o bound workloads, a database instance can not execute thousands of queries per second, which normally results in only 1-10% CPU usage. In such cases, SQL execution layer does not become bottleneck, so there is no benefit to use HandlerSocket. We use HandlerSocket on servers that almost all data fit in memory.

This means, therefore, that if the data don’t all (or nearly all) fit into memory and you must therefore perform a fair number of disk accesses (read, or, even worse… write!) … It just doesn’t work, as for any kind of key-value(s) system, NoSQL or Not Only SQL. Take a look at a Redis which puts everything into memory and regularly dumps onto the disk, or even other key-value(s) tools such as Tokyo Tyrant / Tokyo Cabinet, which operate very much in memory.

In any case it no longer works « out of the box » and as for « pure player » (no, I’m not making fun of the marketing, I’m just teasing), well get ready to stay up all night! :o)

Moreover, in these cases, given that data remains relational, even if potentially accessible in NoSQL mode, it cannot be easily sharded among several servers.

There’s no real secret to it: as long as there are plenty of disk IOs (because you are not reading from the RAM or, in particular, because you are writing, given the necessity of synchronizing the writes via mutexes or semaphores), you will have to fine-tune the following at all levels:

- at the network level (this point is not directly linked to disk IOs, but it is a potential bottleneck in general, including with the use of data in RAM): recycling of connections, buffer management etc., in the /etc/sysctl.conf. You know the things in net.ipv4.tcp_fin_timeout, net.ipv4.tcp_tw_recycle, etc.

- at the filesystem level (select a type: xfs, ext4, etc.) and mount options (noatime, nodiratime, etc.),

- at the scheduler level: noop, cfq, etc.

- remember to increase file descriptors (cf. ulimit),

- etc.

And of course, don’t forget to properly configure the NoSQL database itself. It is usually delivered with a user guide (more or less effective, depending on the maturity of the tool) which explains the tuning, which is not as stripped down as you might think. So configure the tool itself by following the supplied recommendations and by adding the fruits of your own experience: number of threads in the connection pool, master/slave management, etc.

Don’t forget your bandwidth either. Well … you will have to tinker with the engine. And once you have optimized all the levels, you’ll have to think of sharding again.

Don’t underestimate the importance of the functional analysis of the data that you will put in the NoSQL database either. Well-structured and well-researched data will economize your resources (especially the network resources). Functional specifications are often a major focus of optimization.

I already encountered these problems when using Tokyo Tyrant / Tokyo Cabinet with a high write:read ratio. Ultimately, the tool is very efficient on reads and manages a lot of things in memory, even in the case where you choose disk storage (you can choose an on-memory storage, like a Memcached). However, when it comes to concurrent and intensive writes, there are limits (acknowledged by the creator of the tool himself, who is concurrently producing a version optimized for this: Kyoto Cabinet – storage API – and Kyoto Tycoon – network interface). I haven’t looked at how the Kyoto Team works, but the Tokyo One writes with only a single thread at a time, for synchronization reasons. The previously mentioned aspects should therefore be optimized to obtain the best results.

For further information on Tokyo Tyrant / Tokyo Cabinet, you can refer to the following article: Tokyo Tyrant / Tokyo Cabinet, un key-value store à la Japonaise (in French). Think lightweight, think Lua! :o)

And … what about performance?

To summarize the previous point, it is simple to observe that a key-value(s)-type database will provide superior performances because it has fewer functionalities and therefore fewer stages to satisfy: authentication for example, which is not managed in key-value(s) databases, or even SQL Parsing, Open Table, Query Plan and Close Table management, which are eliminated. No need for managing those hard-to-reconstruct indexes either. In general, a key-value(s) database is based on a HashTable-type storage mechanism, whose complexity function is O(1), a function representing the complexity of access to the data as a function of the number of occurrences N stored in the system: for example, for a B+tree the complexity function is O(log N). Therefore O(1) represents a constant access time, no matter what the number of occurrences N is. It is obvious that, based on this principle, the NoSQL base will perform better because it will restrict itself to the specific task attributed to it: take key, send back value(s).

However, it must be remembered that, whatever the database family used, you won’t go any faster than the system! There is no miracle solution! The RAM is faster than the disk and you won’t write faster than the filesystem… what a surprise! Hey! Maybe, you could try to directly access the raw device, or even better: have a filesystem based on a key-value(s) system… What? The filesystem idea has already been taken? Well ok… Take a look at this article: Pomegranate – Storing Billions And Billions Of Tiny Little Files.
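The O(1) versus O(log N) comparison above can be illustrated with a small sketch. A Python dict plays the role of the hash table (constant time on average), and a binary search over a sorted list stands in for the ordered lookup of a B+tree. This is a simplification: a real B+tree has a much larger branching factor, but its lookup cost still grows logarithmically with N. The function name `binary_search_steps` is an assumption made for illustration.

```python
import math

def binary_search_steps(sorted_keys, target):
    """Return (position, number of probes) for target in sorted_keys."""
    lo, hi, steps = 0, len(sorted_keys) - 1, 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_keys[mid] == target:
            return mid, steps
        if sorted_keys[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1, steps

for n in (1_000, 1_000_000):
    keys = list(range(n))
    index = {k: i for i, k in enumerate(keys)}  # hash table: key -> position
    pos, steps = binary_search_steps(keys, n - 1)
    # The dict answers with a single hash lookup whatever n is; the ordered
    # search needs a number of probes that grows with log2(n).
    assert index[n - 1] == pos
    print(f"N={n}: ordered search took {steps} probes "
          f"(upper bound {math.floor(math.log2(n)) + 1})")
```

Multiplying N by a thousand adds only about ten probes to the ordered search, which is why the gap between the two structures matters less than the RAM-versus-disk gap described above.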

And what about Memcached?!

A cache will always be useful, no matter what type of database you’re using (SQL or NoSQL) and will always be used in the case of data accessed essentially in read.

NoSQL databases often work in memory and even offer full memory modes (like a cache but without TTL and with the possibility of replication on a slave which writes to disk). The SQL databases also manage their request caches in RAM, like InnoDB Buffer Pool of the InnoDB MySQL engine. However, even with a cache that is sufficiently dimensioned, the requests still cost them processing time (CPU), bandwidth, etc.

The purpose of a pure cache (with TTL hit management) in front will be to relieve the database, no matter which kind, of the burden of any read possible, in order to let it concentrate on the write requests or sending back the more dynamic/volatile data in read.

So, a cache can be placed equally in front of an SQL or a NoSQL base in order to economize resources. They are however more often found in front of SQL-type databases, because, in this case, as well as saving resources, the cache based on the model of a NoSQL database enables improved performance (even if the whole dataset fits into the SQL server’s RAM) because you get rid of authentication, parsing of the SQL request, etc.
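A pure cache with TTL placed in front of a database can be sketched as a read-through wrapper. This is an illustrative toy rather than Memcached itself: the class `TTLCache`, the `load_from_db` function, and the injectable clock are all assumptions of the sketch.

```python
import time

class TTLCache:
    """Read-through cache: serve fresh entries, reload expired or missing ones."""

    def __init__(self, loader, ttl_seconds, clock=time.monotonic):
        self._loader = loader      # called on a miss, e.g. a database read
        self._ttl = ttl_seconds
        self._clock = clock        # injectable so expiry can be demonstrated
        self._entries = {}         # key -> (expires_at, value)

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]        # hit: the database is not touched
        value = self._loader(key)  # miss or expired: go to the database
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key):
        """Drop an entry, for example after the underlying row is written."""
        self._entries.pop(key, None)

calls = []

def load_from_db(key):
    """Stand-in for a SQL or NoSQL read; records each call it receives."""
    calls.append(key)
    return f"row-for-{key}"

fake_now = [0.0]
cache = TTLCache(load_from_db, ttl_seconds=30, clock=lambda: fake_now[0])

cache.get("user:1")   # miss: loads from the database
cache.get("user:1")   # hit: served from the cache
fake_now[0] = 31.0    # advance the fake clock past the TTL
cache.get("user:1")   # expired: loads from the database again
print(len(calls))     # number of reads that reached the database
```

Only the first and third reads reach the backing store, which is the relief the cache is meant to provide whether that store is an SQL or a NoSQL base.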

Conclusion

There are several elements to bear in mind from this comparison of the two models:

- NoSQL databases are in general faster than SQL, as they implement only the mechanisms that they need and leave out all that’s necessary for an SQL model to function (we must thereby accept a certain number of functional limits, particularly with regard to conditional requests with nice WHERE clauses).

- Special mention, while we’re on the subject, goes to the security of NoSQL databases where, in most cases, it is assured by network filtering and there is no authentication management.

- As the key-value(s) model is not relational, it enables easy data-sharding among several servers. It is possible to use an SQL-type database on a « key-value(s) » model.

- Whether SQL or NoSQL is the selected tool, if the dataset fits in memory, performance will be much enhanced.

- As soon as disk accesses become necessary, whether because the dataset in read does not fit in memory or because there is a high number of writes, performance will be poorer, and a bottleneck will occur at the OS/hardware level via the disk IOs.

- Configuration of the OS is indispensable for optimizing the disk IOs and the network IOs aspects.

- Don’t underestimate the importance of the functional specifications of the data that you will load into the NoSQL database, either. Well-structured and well-researched data will economize your resources (particularly network resources). These specifications are often a major focus of optimization.

- Data integrity: consider the reliability of the SQL database engines which have been tested for quite some time now. There is not yet much feedback available regarding the reliability of the NoSQL engines (which does not mean that it is not satisfactory, but experience counts in this area).

- In the HandlerSocket example, the point is above all to unify the data cache in a single location (the InnoDB buffer pool) in order to ensure the consistency of the data without necessarily compromising on the performance provided by a Memcached.

- There is an interesting variety of NoSQL databases with specific functionalities, but be careful: having extra functionalities means less efficient response times than with the standard key-value(s). Take for example the API « Table » of Tokyo Cabinet, which enables management of conditional requests, or even the geospatial functionalities of MongoDB.

- An amusing equation: NoSQL database = Memcached – TTL + synchronization of writes on disk.

Frédéric is an architect at Ysance. You can follow him on Twitter.

Marketplace DataMarket and OData

Mehul Harry posted Expose OData Feed Through XPO Toolkit (includes samples) to the DevExpress Community blog on 12/20/2010:

Check out this OData and XPO (our ORM) CodePlex project:

What is OData?

First, a short explanation of the Open Data Protocol as described by the odata.org website:

The Open Data Protocol (OData) is a Web protocol for querying and updating data that provides a way to unlock your data and free it from silos that exist in applications today. Learn more here: odata.org
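Querying an OData feed comes down to adding system query options such as `$filter`, `$orderby` and `$top` to the URL of an entity set. The sketch below only builds such a URL with Python's standard library. The service root `https://example.com/odata`, the `Products` entity set, and its `Price` and `Name` properties are hypothetical and unrelated to the XPO toolkit.

```python
from urllib.parse import quote, urlencode

def odata_query_url(service_root, entity_set, **options):
    """Build an OData query URL; option names are given without the '$'."""
    params = {f"${name}": value for name, value in options.items()}
    # quote() encodes spaces as %20; '$' is kept literal in option names.
    query = urlencode(params, quote_via=quote, safe="$")
    return f"{service_root}/{entity_set}?{query}"

url = odata_query_url(
    "https://example.com/odata",   # hypothetical service root
    "Products",                    # hypothetical entity set
    filter="Price gt 10",
    orderby="Name",
    top=5,
)
print(url)
```

An HTTP GET on a URL of this shape would ask the service for the five cheapest-named matches, with the filtering and ordering done on the server rather than in the client.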

The XPO Toolkit

If you use eXpress Persistent Objects (XPO) then here’s where it gets fun. There is a free toolkit that allows you to expose your data as an OData feed with just a few lines of code.

Check out the related blog articles on it so far:

- OData Provider for XPO – Introduction

- OData Provider for XPO - IDataServiceMetadataProvider & IDataServiceQueryProvider

- OData Provider for XPO - Client Side

- OData Provider for XPO - Complex Types

- OData Provider for XPO - !summary

- OData Provider for XPO - GroupBy(), Count(), Max() and More...

- OData Provider for XPO - Using Server Mode to handle Huge Datasets

- End User Report Designer – Publishing Reports (Part 1)

- End User Report Designer – Viewing Reports (Part 2)

Download From CodePlex

Download the project source code and samples here: eXpress Persistent Objects (XPO) Toolkit

Samples:

- Calendar - Example shows how to create an OData feed and consume it using a Scheduler Control

- Channel - Example shows how to create a Video feed application in Silverlight

Watch Video Webinar

Watch the video of the Introduction to OData webinar to learn about OData and WinForms Scheduler data binding:

Azret Botash is our resident OData guru and he'd love to hear from you about anything and everything related to OData.

Try the OData XPO Toolkit and then drop me a line below with your thoughts. Thanks.

I'm on twitter

DXperience? What's That?

DXperience is the .NET developer's secret weapon. Get full access to a complete suite of professional components that let you instantly drop in new features, designer styles and fast performance for your applications. Try a fully-functional version of DXperience for free now: http://www.devexpress.com/Downloads/NET/

Windows Azure AppFabric: Access Control and Service Bus

Alik Levin (@alikl) reported Just Published: Single Sign-On from Active Directory to a Windows Azure Application Whitepaper on 12/21/2010:

Just released: Single Sign-On from Active Directory to a Windows Azure Application Whitepaper.

Abstract:

This paper contains step-by-step instructions for using Windows® Identity Foundation, Windows Azure, and Active Directory Federation Services (AD FS) 2.0 for achieving SSO across web applications that are deployed both on premises and in the cloud. Previous knowledge of these products is not required for completing the proof of concept (POC) configuration. This document is meant to be an introductory document, and it ties together examples from each component into a single, end-to-end example.

Related Books

- Programming Windows Identity Foundation (Dev - Pro)

- A Guide to Claims-Based Identity and Access Control (Patterns & Practices) – free online version

- Developing More-Secure Microsoft ASP.NET 2.0 Applications (Pro Developer)

- Ultra-Fast ASP.NET: Build Ultra-Fast and Ultra-Scalable web sites using ASP.NET and SQL Server

- Advanced .NET Debugging

- Debugging Microsoft .NET 2.0 Applications

Related Info

- SSO, Identity Flow, Authorization In Cloud Applications and Services – Challenges and Solution Approaches

- Windows Identity Foundation (WIF) Fast Track

- Windows Identity Foundation (WIF) Code Samples

- Windows Identity Foundation (WIF) SDK Help Overhaul

- Windows Identity Foundation (WIF) and Azure AppFabric Access Control (ACS) Service Survival Guide

- Video: What’s Windows Azure AppFabric Access Control Service (ACS) v2?

- Video: What Windows Azure AppFabric Access Control Service (ACS) v2 Can Do For Me?

- Video: Windows Azure AppFabric Access Control Service (ACS) v2 Key Components and Architecture

<Return to section navigation list>

Windows Azure Virtual Network, Connect, RDP and CDN

Erik Oppedijk analyzed the cost of five scenarios for Using Azure CDN to extend Bing Maps in a 12/21/2010 post to the InfoSupport Blog Community:

The Content Delivery Network (CDN) is a nice Windows Azure feature which will help us deliver content across the globe. This is ideal for mostly static content, which needs the fastest possible access/download. The CDN network currently consists of 24 Edge servers, delivering the content to your end users.

But what are the extra costs of using a CDN, compared to normal blob storage? First let's have a look at the scenario. I've created an additional layer for Bing Maps, showing some extra charts with nautical navigation information. For every zoom level in Bing Maps I need images, but the detailed zoom levels will take several GB of data (or even TB for all the charts of the world). Here is a screenshot with the navigation charts overlaid on the Bing roads on the left and some Bing Satellite on the right:

With MapCruncher I made several layers (limited to zoom level 10 in Bing Maps) and uploaded them to the cloud to my blob storage container.

If most of your users are from the same region, you can store your content in Azure blob storage in the datacenter you choose when you create your storage account, so it will be in Amsterdam if you choose Region West Europe. For more performance, we need more places to store our content.

So let's do some calculations on the cost of this solution (use the Azure ROI calculator for some calculations), and start with some assumptions:

- The map consists of 100,000 files, each file is around 60KB, so total storage is 6GB

- 200 visitors a day, each querying 1000 files * 30 days is 6 Million transactions/downloads per month

- 6 Million downloads * 60KB = 360 GB of traffic each month

- No updates to the data are made

- Just to calculate for the future, I use 26 CDN locations (only 24 available at this moment)

Scenario 1

Normal Windows Azure blob storage in Europe West, users from all over the world connect to this location. All content is stored in a single blob. Users around the world will have some delays as the content is served.

Total cost:

6GB of blob storage used in a month

6GB * $0.15 = $0.90

6 million downloads a month

6M / 10K * $0.01 = $6

360 GB of bandwidth every month

360GB * $0.15 = $54

===

$60.90 per month

Scenario 2

Store a copy in each of the 6 Windows Azure locations (2x Asia, 2x Europe and 2x US). This gives a speed increase for users near one of the locations; however, Australia and other countries still might suffer. This solution needs 6 blob storage accounts, so we have to keep all 6 in sync manually.

Of the 360 GB of bandwidth, assume 1/3 is in Asia, at a higher cost per GB downloaded.

Total cost:

6 datacenters each with 6 GB of storage

6 * 6GB * $0.15 = $5.40

6 million downloads a month

6M / 10K * $0.01 = $6

240 GB of bandwidth in Europe/US

240GB * $0.15 = $36

120 GB of bandwidth in Asia

120GB * $0.20 = $24

===

$71.40 per month

Scenario 3A

Again store everything in 1 location, and enable the CDN. Now we have 26 CDN nodes delivering the content to us. Remember that all nodes need to download the data once before they can cache it. Also, by default the CDN checks every 2 days whether the cached files have changed, so a connection is made. For this scenario we'll do some worst-case thinking: all users access all 26 CDN centers AND the CDNs download ALL content.

Assume all data is cached on each CDN server (assume 26 CDN datacenters, 6GB each)

Assume each CDN is filled during the first month (this is worst case, normally over time each CDN will fill up)

CDN checks every 2 days if the data has changed (so 100,000 files are checked 30/2 = 15 times each month = 1.5 million transactions)

Total cost (worst case scenario):

6GB of blob storage used in a month

6GB * $0.15 = $0.90 for storage

6 million downloads a month

6M / 10K * $0.01 = $6

240 GB of bandwidth in Europe/US

240GB * $0.15 = $36

120 GB of bandwidth in Asia

120GB * $0.20 = $24

26 CDN locations check every 2 days for changes for 100,000 files

26 * 30/2 * 100,000 / 10K * $0.01 = $39

26 CDN locations need to download 6GB of data

26 * 6GB * $0.15 = $23.40 (first month only)

26 CDN locations each with 6GB of storage

26 * 6GB * $0.15 = $23.40

===

$152.70 per month (first month, after CDN is filled this will drop to $129.30)

Scenario 3B

Same as scenario 3A, except that the CDN only checks once a month for new content. This only works with relatively static content; otherwise, move new data to a new blob container or a new filename and start caching again (at extra download cost).

Total cost:

6GB of blob storage used in a month

6GB * $0.15 = $0.90 for storage

6 million downloads a month

6M / 10K * $0.01 = $6

240 GB of bandwidth in Europe/US

240GB * $0.15 = $36

120 GB of bandwidth in Asia

120GB * $0.20 = $24

26 CDN locations check once a month for changes for 100,000 files

26 * 1 * 100,000 / 10K * $0.01 = $2.60

26 CDN locations need to download 6GB of data

26 * 6GB * $0.15 = $23.40 (first month only)

26 CDN locations each with 6GB of storage

26 * 6GB * $0.15 = $23.40

===

$116.30 per month (first month, after CDN is filled this will drop to $92.90)

Scenario 4

Same as scenario 3B, but let's assume the users of each CDN node don't access all the content, so each CDN node holds an average of 2GB of storage (33,333 files). Now the individual CDN servers don't need as many transactions to check whether the content was modified. Over time the CDN would fill up with more data and the cost could increase.

Total cost (normal scenario):

6GB of blob storage used in a month

6GB * $0.15 = $0.90 for storage

6 million downloads a month

6M / 10K * $0.01 = $6

240 GB of bandwidth in Europe/US

240GB * $0.15 = $36

120 GB of bandwidth in Asia

120GB * $0.20 = $24

26 CDN locations check once a month for changes for 33,333 files

26 * 1 * 33,333 / 10K * $0.01 = $0.86

26 CDN locations need to download 2GB of data

26 * 2GB * $0.15 = $7.80 (first month only)

26 CDN locations each with 2GB of storage

26 * 2GB * $0.15 = $7.80

===

$83.36 per month (first month, after CDN is filled this will drop to $75.56)

Conclusion

With the CDN we can quickly deliver content to our customers, but there is still some guesswork to be made. The worst case scenario (3A) is about 2.5 times the price of the simple blob storage (scenario 1), but we get a lot of edge servers delivering our content very fast. The slower growth example (4) is around 25% more expensive and looks more interesting and the price is about the same as scenario 2. But keep in mind that if your service gets popular all around the world, the cost will rise to scenario 3B!

By caching items longer (scenario 3A versus 3B) we're saving $36.40 a month in transaction costs! This really pays off. Only think about an update scenario when your content changes a lot: upload everything in new files, or start a new public blob container as a new cache.
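The scenario arithmetic above can be reproduced with a short script. This is only a sketch in Python (not part of the original post), using the prices and assumptions quoted in the scenarios; the function and parameter names are made up for illustration.

```python
# Prices as quoted in the scenarios above (USD).
STORAGE_PER_GB = 0.15          # blob or CDN storage, per GB per month
PER_10K_TRANSACTIONS = 0.01    # per 10,000 storage transactions
BANDWIDTH_EU_US_PER_GB = 0.15
BANDWIDTH_ASIA_PER_GB = 0.20


def base_cost(storage_gb=6, downloads=6_000_000, eu_us_gb=240, asia_gb=120):
    """Origin storage + download transactions + outbound bandwidth."""
    return (storage_gb * STORAGE_PER_GB
            + downloads / 10_000 * PER_10K_TRANSACTIONS
            + eu_us_gb * BANDWIDTH_EU_US_PER_GB
            + asia_gb * BANDWIDTH_ASIA_PER_GB)


def cdn_cost(nodes, cached_gb, cached_files, checks_per_month, first_month):
    """Monthly cost with the CDN enabled (hypothetical helper)."""
    # Each node re-validates every cached file `checks_per_month` times.
    checks = nodes * checks_per_month * cached_files / 10_000 * PER_10K_TRANSACTIONS
    # Each node downloads its cached data once, in the first month only.
    fill = nodes * cached_gb * BANDWIDTH_EU_US_PER_GB if first_month else 0.0
    # Each node stores its cached data.
    storage = nodes * cached_gb * STORAGE_PER_GB
    return base_cost() + checks + fill + storage


scenario_1 = base_cost(eu_us_gb=360, asia_gb=0)
scenario_3a = cdn_cost(26, 6, 100_000, 15, first_month=True)
scenario_3b = cdn_cost(26, 6, 100_000, 1, first_month=True)
scenario_4 = cdn_cost(26, 2, 33_333, 1, first_month=True)

for name, cost in [("1", scenario_1), ("3A", scenario_3a),
                   ("3B", scenario_3b), ("4", scenario_4)]:
    print(f"Scenario {name}: ${cost:.2f}")
```

Scenario 4 comes out one cent higher here than in the post, because the post truncates $0.8667 to $0.86 where the script rounds.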

You can test the overlays, so let's hope the demo Azure account I got from MS keeps working a few more weeks!

Other considerations

If the content would change more often, the CDN would clear old items and save some storage costs.

Most of the costs are into bandwidth, so make sure your content is cached correctly. Set the CacheControl of all the items in your blob container to "public, max-age=7200" or longer to get the files cached at the client and downstream proxy servers. With returning customers this will save a lot in bandwidth.

More information

About the Windows Azure CDN

Azure ROI Calculator

NOAA Charts of the US

MapCruncher

Bing Maps Quad Key coordinate system

Cory Fowler (@SyntaxC4) described Setting up RDP to a Windows Azure Instance: Part 2 for IT Pros in a 12/20/2010 post:

In my last post, Setting up RDP to a Windows Azure Instance: Part 1, I explained how to setup Remote Desktop into the Cloud using Visual Studio 2010.

However, the cloud isn’t for Developers alone, we have to think of our IT Pro counterparts. This post will explain how to setup and configure RDP access to the Cloud without using Developer Tools.

Creating a Self-Signed Certificate with IIS7(.5)

1. Open IIS, Double-Click on Server Certificates.

2. In the Actions menu on the right, Select “Create Self-Signed Certificate…”.

3. Specify a Friendly Name for the Certificate.

4. Ensure the new Certificate has been created.

Using the Windows Azure Service Management API

So I said I was going to use the Service Management API and I am, however I am going to cheat a little bit by using the Windows Azure Service Management CmdLets [which is a convenient PowerShell Snap-in created by Ryan Dunn (@dunnry)].

Before we can interact with the Service Management API we must upload a Management Certificate [which is similar to the process outlined in my previous post on Exporting and Uploading a Certificate to Windows Azure]. The Management Certificates are uploaded from within the Windows Azure Platform Portal as seen in this picture to the right.

Management Certificates are used by Visual Studio to interact with the Windows Azure Platform. The Management Certificate paired with the Subscription ID are used to Authenticate Access to the Windows Azure APIs.

Now that we’ve covered the Management Certificates, let’s fire up the Windows PowerShell ISE.

Using the Windows Azure Service Management CmdLets

If you haven’t already done so download the Windows Azure Service Management CmdLets.

First you will have to tell PowerShell you would like to use the snap-in. Use the following snippet of code to add the Azure Management Tools Snap-in:

Add-PSSnapin AzureManagementToolsSnapIn

If you’d like to list all the Commands that are included in the Windows Azure Management Snap-in simply execute this line of code:

Get-Command -PSSnapIn AzureManagementToolsSnapIn

We’re going to be using the Add-Certificate command to add a Certificate to our Hosted Service. First let’s take a look at some of the examples of how to use this command by executing:

Get-Help Add-Certificate

As you can see there are a few options for running the Add-Certificate Command. I’ve chosen this format:

Add-Certificate -ServiceName RDP2Azure -CertificateToDeploy (gi <path-to-cert>\azurefest-rpd.cer) -SubscriptionId ********-****-****-****-************ -Certificate (gi cert:\CurrentUser\My\<thumbprint>)

There are two “Certificate” Arguments, which can be confusing, especially since they accept different values. CertificateToDeploy is the newly created Certificate which will be used to encrypt the password for our RDP Connection; this argument accepts a file as a parameter. The Certificate argument is the Management Certificate that is being leveraged to Authenticate the transaction. When you run the Script the result should look like this:

You’ll also notice that the Certificate has been uploaded to the Windows Azure Platform Portal.

Configuring RDP in the Windows Azure Platform Portal

Now that we’ve created the Certificate needed to encrypt the RDP password, and used the Azure Service Management API to upload the Certificate to our hosted Service, it’s time to configure our RDP Connection in the Windows Azure Platform Portal.

To Configure our RDP Access, Select the Role you wish to configure the RDP access for. Then in the Ribbon check off the Enable checkbox, then click on the Configure Button in the Remote Access Group.

Set your username and password for the RDP Connection. Select the Certificate you wish to use to encrypt the password, then select an expiration date for the connection.

Once you’ve finished these steps you will be able to select an instance and Connect to the Cloud.

**Note: I’ll be creating one last entry to review the process of opening up the RDP File to gain access to an Instance running on Windows Azure.

Conclusion

This post was the IT Pro explanation of how to grant access to RDP in the Cloud. These skills are transferable to Development as well if you don’t have Visual Studio. I will create one final post which explains how to manually create the XML nodes that Visual Studio creates in the Cloud Service Configuration file auto-magically using its UI. This manual creation is intended for Open Source Developers or Developers that like to understand how the underlying pieces of the Visual Studio Tools operate.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Wade Wegner (@wadewegner) explained Web Deploy with Windows Azure on Restrictive Networks on 12/21/2010:

Some networks are more restrictive than others, and (much to my continual chagrin) this is especially true here at Microsoft. As I wrote and tested the post Using Web Deploy with Windows Azure for Rapid Development, I found that I was not able to use Web Deploy on port 8172; turns out that we prevent SSL traffic on any port but 443. Fortunately, it’s pretty easy to resolve this restriction by modifying the Input Endpoint values in the Service Definition.

Essentially, we need to map an external port (i.e. the port to which you will publish) to a local port on the machine (i.e. the port on which Web Deploy listens). On the Microsoft network, this means that I’ll publish to port 443 but need it to resolve to a local port of 8172.

Before starting, review my post on using Web Deploy with Windows Azure. There are only two differences, as noted below.

First, update the InputEndpoint values in your Service Definition file (ServiceDefinition.csdef) to map the external port to a local port.

Code: InputEndpoint

<InputEndpoint name="mgmtsvc" protocol="tcp" port="443" localPort="8172" />

Second, after you’ve deployed to Windows Azure and your instance is up and running, you’ll need to update your publish settings for Web Deploy – specifically, the Service URL. Here are the values that I’ve gotten to work:

The screen shot cuts off the service URL. The full URL is:

https://mywebdeploy.cloudapp.net:443/msdeploy.axd

Of course, you’ll need to update these values to your DNS name and the port to which you’ll deploy.
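To make the relationship between the endpoint definition and the Service URL concrete, here is a rough Python sketch (not from the original post). It reads the external port from an InputEndpoint element and builds the matching URL. The helper name `service_url` is hypothetical; the DNS name and the `/msdeploy.axd` path are taken from the example above.

```python
import xml.etree.ElementTree as ET

# The endpoint element shown above, as it would appear in ServiceDefinition.csdef.
ENDPOINT_XML = ('<InputEndpoint name="mgmtsvc" protocol="tcp" '
                'port="443" localPort="8172" />')


def service_url(dns_name, endpoint_xml):
    """Build the Web Deploy Service URL from the endpoint's external port."""
    endpoint = ET.fromstring(endpoint_xml)
    external_port = int(endpoint.get("port"))     # port you publish to
    local_port = int(endpoint.get("localPort"))   # port Web Deploy listens on
    print(f"external {external_port} -> local {local_port}")
    return f"https://{dns_name}:{external_port}/msdeploy.axd"


url = service_url("mywebdeploy.cloudapp.net", ENDPOINT_XML)
print(url)
```

Only the external port appears in the URL; the local port stays an internal detail of the role instance.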

Felipe de Sá described Getting started with Windows Azure in this 12/21/2010 post:

For the last couple of weeks, we've been studying converting our solution to a Windows Azure Application. So this post [will] explain the basics of Windows Azure. Note that I did not put any payment information here. I don't even have the list of prices and I don't know what is charged and what isn't for sure.

Let's understand it.

How it works

We basically have two types of projects within a Windows Azure Application Solution: Web Roles, which can be Web Applications, and Worker Roles, which can be class libraries or console applications. Note that a Web Role can only be a Web Application, not a Web Site. See the "Converting a Web Site to a Web Application" post for more details.

The Web Role will call the Worker Roles to do some heavy work, like background working. Something asynchronous that doesn't necessarily send a response to the Web Role, although you could have this situation. How will it call them? We have a communication pipe, a Queue. As surprising as it may be, it works exactly as a Queue object: first in, first out. The Web Role goes and puts a message in the Queue. Within a time span, the Worker Roles check the Queue for any new message, and verify whether it's assigned to them or not. If it is, they do their work, and delete the message. Simple.
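The flow described above can be illustrated without any Azure dependency. The following Python sketch (not the post's code) uses an in-memory deque as a stand-in for the Queue. The `target|payload` message format and the function names are assumptions made up for this example; a real Azure queue also hides a message while it is being processed, which is not modeled here.

```python
from collections import deque


def enqueue(q, target, payload):
    """Web Role side: put a plain-string message at the back of the queue."""
    q.append(f"{target}|{payload}")


def poll_once(q, worker_name):
    """Worker Role side: look at the oldest message and handle it if it is ours."""
    if not q:
        return None                      # nothing to do on this poll
    target, _, payload = q[0].partition("|")
    if target != worker_name:
        return None                      # not assigned to this worker; leave it
    result = payload.upper()             # stand-in for the real background work
    q.popleft()                          # "delete" the message once handled
    return result


work_queue = deque()
enqueue(work_queue, "blobwriter", "hello")
enqueue(work_queue, "blobwriter", "second")

print(poll_once(work_queue, "mailer"))      # None: message is for another worker
print(poll_once(work_queue, "blobwriter"))  # HELLO (first in, first out)
print(poll_once(work_queue, "blobwriter"))  # SECOND
```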

To add Roles, just right click the folder Roles and select the desired option. You can also make an existing project (if compatible) a Role.

When you create a Role, you'll get a file named WebRole.cs or WorkerRole.cs, depending on which type of role you've created. These inherit from class RoleEntryPoint. These files contain methods such as OnStart(), OnStop() and Run(). You'll understand it later.

This is an image of what the Solution Explorer looks like with a Web Role and a Worker Role:

Notice that we have two configuration files: ServiceConfiguration.cscfg and ServiceDefinition.csdef. Those basically contain information about the roles and their settings.

Storage?

We have SQL Azure, Tables, and Blobs.

SQL Azure seems to go smoothly with basic instructions. It only requires that each table has a Clustered Index. Also, you can put your already existing database there, scripting it. I haven't tested it yet, but it looks like SQL Server 2008 R2 supports connecting to SQL Azure as if it was an ordinary SQL Server instance, meaning that it shows the server on Object Explorer, and lets you visually navigate through its objects. You can then script your existing database and run the scripts through Management Studio. For existing applications, connecting to it should be as simple as changing the Connection String.

Blobs. "Blobs are chunks of data". Well, they are written as byte arrays, so you could use it to store .txt, .xml files, anything. You can create a container, which would be a folder, and put blobs inside of it. For example, each customer would have their own container, and inside, their Blobs.

Tables work like an Excel Sheet, or an Access Database. They are used to store data that is not relational. An example would be storing each customer's code and connection string.

Clouding it

First, let's configure our Roles to use development storage. I've done this with Windows Azure SDK 1.3, so I'm assuming you have that installed.

Open the settings page of a role: right-click it, click Properties, then go to the Settings page. You'll see the following:

Add another setting and mark the setting's type as Connection String. Then, click on the [...] button, and check Use the Windows Azure storage emulator. You can name it whatever you want to, just keep that name in mind. Let's use something generic, like "DataConnectionString". Do that for each of your roles.

After you've done that, your project knows where its storage is, so the next step is to write to the Queue. [We'll] write a string message that tells our Worker Role to create a Blob .txt file, and write something to it. Firstly, there's a piece of code that we need to execute before we can connect to our development storage account:

See original post for unformatted source code.

I couldn't find an actual answer to "Why do I need to run this?", so if you do have an answer, leave a comment with it, and I'll add it here. I'll even leave the code's original comments. If you try to get the storage account without running the code above, you'll get an exception saying that you must call the method SetConfigurationSettingPublisher before accessing it. Also, I tried putting this on the OnStart() method on WebRole.cs, and it did not work. So just put it on Application_Start() on Global.asax. As for WorkerRoles, you should put it on the OnStart() method on WorkerRole.cs.

To add our Queue Message, I've put this on a button click event:

See original post for unformatted source code.

Notice that we call the CreateIfNotExist() method. This way we can always be sure we won't get a NullReferenceException. At least not when dealing with this Queue. And the Queue name MUST be entirely lowercase! You'll get a StorageClientException with message "One of the request inputs is out of range." if you have any uppercase character in its name.

Also notice that the message is pure string, so [we'll need] to handle message parts our own way.

So when the code above executes we have our first message on the Queue. Simple, right?

Now we'll get that message on our WorkerRole.

On the WorkerRole.cs file I've mentioned before, check the code that was generated inside the Run() method:

See original post for unformatted source code.

Yes, it's literally that: a while(true) loop. We trap the execution there, and then we can do whatever we want, including getting messages from the Queue. We could implement some sort of "timer against counter" logic to relieve the processor a bit: each time it checks for a message and finds none, it sets the sleep time a little bit higher. Of course we'd have to cap that sleep at a maximum so it doesn't grow into a huge amount of time.

Now let's handle getting messages from the Queue:

See original post for unformatted source code.

Like I said, we could implement a better solution for that Sleep() over there, but, in some cases, it is fully functional just as it is: checking the queue within a certain time span.
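One way to sketch the "sleep a little longer each time the queue is empty" idea mentioned earlier is shown below, in Python for illustration only. The base delay, growth factor and maximum are arbitrary assumptions, and the function names are hypothetical.

```python
def next_sleep_seconds(current, got_message, base=1.0, factor=2.0, maximum=60.0):
    """Return the delay to use before the next poll of the queue."""
    if got_message:
        return base                        # work arrived: poll eagerly again
    return min(current * factor, maximum)  # empty poll: back off, but cap it


def simulate(poll_results, base=1.0, factor=2.0, maximum=60.0):
    """Replay a series of poll outcomes and collect the resulting delays."""
    delay = base
    delays = []
    for got_message in poll_results:
        delay = next_sleep_seconds(delay, got_message, base, factor, maximum)
        delays.append(delay)
    return delays


# Three empty polls, then a message, then another empty poll.
print(simulate([False, False, False, True, False]))  # [2.0, 4.0, 8.0, 1.0, 2.0]
```

In a worker loop, the returned value would be passed to the sleep call at the end of each iteration.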

Now we'll get that message, and write its content to a Blob:

See original post for unformatted source code.

That should create a new Blob container (a folder) and inside it, a .txt file (Blob).

The same goes for the container name: it must not have any uppercase characters, or you will get a StorageClientException, with message "One of the request inputs is out of range.".
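Since an invalid name only surfaces as a fairly cryptic exception, it can help to check names before sending them. The Python sketch below encodes the queue and container naming rules as I understand them from the storage documentation: 3 to 63 characters, lowercase letters, digits and single hyphens, starting and ending with a letter or digit. Treat the regular expression as an assumption to verify, not as the service's own validation.

```python
import re

# First character: letter or digit. Then 2 to 62 more characters, each either
# a letter/digit or a hyphen that is immediately followed by a letter/digit.
_NAME_PATTERN = re.compile(r"^[a-z0-9](?:[a-z0-9]|-(?=[a-z0-9])){2,62}$")


def is_valid_storage_name(name):
    """Check a queue or container name against the assumed naming rules."""
    return _NAME_PATTERN.match(name) is not None


for candidate in ["myqueue", "MyQueue", "my-queue", "my--queue", "queue-", "ab"]:
    print(candidate, is_valid_storage_name(candidate))
```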

Simple again, huh.

Let's write something to the Table. In this example, we'll write customer records to the table: Customer ID, Customer's Name, and Customer's Connection String, assuming that each customer will have its own Database.

In a brief explanation of tables, the three important columns are: PartitionKey, RowKey, and Timestamp.

PartitionKey is a string, so we could use it to filter our records. For example, if we have two sources of customers, and we want to know where they came from, we could have two different values for PartitionKey: "CustSource1" and "CustSource2". So when selecting those customers, we could filter them by PartitionKey.

RowKey, together with PartitionKey, forms the primary key: a RowKey cannot be repeated within a partition. There is no auto-increment mechanism like an identity field, so RowKeys usually get GUIDs.

Timestamp is just a last-modified time stamp.

To interact with Tables, we have to define a Model and a Context.

Our model will describe the record, and which columns it'll have. The context will interact directly with the table, inserting, deleting and selecting records.

It's important NOT to link these tables with SQL Server tables. They don't maintain a pattern. You can insert a record with 3 columns in a certain table, and then a record with completely different 6 columns in the same table, and it will take it normally.
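A tiny in-memory model can make the schema-less behaviour easier to picture. The Python sketch below is not the Azure client API; the class and method names are invented for illustration. Entities are keyed by the (PartitionKey, RowKey) pair, which is how the table service identifies an entity, and each entity carries whatever properties it was given.

```python
import uuid


class InMemoryTable:
    """Toy stand-in for a storage table: no fixed schema, composite key."""

    def __init__(self):
        self._entities = {}

    def insert(self, partition_key, row_key, **properties):
        key = (partition_key, row_key)
        if key in self._entities:
            raise KeyError(f"entity {key} already exists")
        self._entities[key] = dict(properties)

    def query_partition(self, partition_key):
        """Return the properties of every entity in one partition."""
        return [props for (pk, _), props in self._entities.items()
                if pk == partition_key]


customers = InMemoryTable()
# Two entities in the same table with completely different "columns".
customers.insert("CustSource1", str(uuid.uuid4()),
                 Name="Contoso", ConnectionString="Server=...;")
customers.insert("CustSource2", str(uuid.uuid4()),
                 Name="Fabrikam", Country="BR", Seats=6)

print(customers.query_partition("CustSource1"))
```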

To define our Model, we have to inherit from TableServiceEntity. We can then define our own columns and constructors and whatever we want to.

See original post for unformatted source code.

Once we have defined our Model, we'll work on our Context. The Context inherits from TableServiceContext, which provides some important key methods to work with. Our own interaction methods should also be defined here, like the code below:

See original post for unformatted source code.

Notice the IQueryable property that returns us the table corresponding to the table name specified as a parameter. Whenever you want to access the table, that should be the property. You can also define more than one property, but always a table per property. The only other key method is the one called inside the constructor.

Oh and you have to add a reference to System.Data.Services.Client or you won't be able to call method CreateQuery().

Now how do we work with this? With the code below!

See original post for unformatted source code.

It should create a table with the name defined in the constant CustModel.TABLENAME, respecting the columns defined in the Model. You do not have to create the columns manually, it'll do so automatically.

So this post explained the basics of Windows Azure, and how simple it is to work with it. If you are going to do so, I suggest you download some applications from codeplex, such as:

- SQL Azure Migration Wizard helps you migrate your already existing database to SQL Azure.

- Azure Storage Explorer lets you visually navigate through your storage objects, such as Queue Messages, Blobs, Containers, Tables, etc.

Felipe is a Brazilian software developer.

Eric Nelson (@ericnel) announced a New Windows Phone 7 Developer Guidance released for building line of business applications in a 12/21/2010 post:

Several partners have been asking about guidance on combining Windows Phone 7 applications with Windows Azure. The patterns and practices team recently released new guidance on Windows Phone 7. This is a continuation of the Windows Azure Guidance. It takes the survey application and makes a version for Windows Phone 7. The guide includes the following topics:

- Prism for Windows Phone 7

- Reactive Extensions

- WCF Services on top of Windows Azure [Emphasis added]

- Push Notifications

- Camera & Voice

- Panorama

- Much more...

Well worth a read if you are an ISV looking at taking Line of Business applications to Windows Phone 7.

Related Links:

We have created Microsoft Platform Ready to help software houses develop applications for Windows Azure and On-Premise. Check it out and the goodies it can deliver for little effort.

Bruce Kyle reported a 00:06:47 ISV Video: Credit Card Processing for Windows Phone 7 on Windows Azure in a 12/20/2010 post to the US ISV Evangelism blog:

Want to process credit cards on your Windows Azure site or from Windows Phone 7 application? Microsoft partner Accumulus provides a solution for both. CEO Gregory Kim and company President Christian Dreke describe their solution and provide a demo of how you might accept credit cards on Windows Phone 7 application. The record keeping for credit card processing is provided by Accumulus over Windows Azure.

See Credit Card Processing for Windows Phone 7 on Windows Azure on Channel 9.

From Channel9’s description:

Credit Card Sales System

The Credit Card Sales System by Accumulus brings fast, simple and secure credit card capabilities to Windows Phone 7 devices. The Credit Card Sales System lets users accept credit card payments with their Windows Phone 7 devices anywhere that they have an Internet connection.

Accumulus developed the Credit Card Sales System as their first foray into Windows Phone 7 application development. The Credit Card Sales System leverages credit card processing logic from Accumulus’ own Windows Azure-based recurring revenue system.

About Accumulus

Accumulus has developed a suite of integrated technologies that make recurring revenue models easier to implement, faster to scale, and more profitable to manage. The Accumulus dashboard makes it easier for businesses to acquire, manage and grow a customer-base, while automating their fees, credit card processing, invoice creation, customer contact and renewals.

The Accumulus team has fifteen years of experience in the subscription billing industry, having designed systems that have supported more than 400 companies in over thirty countries, and processed more than a billion dollars of credit card transactions.

For Assistance in Building Your Windows Phone and Windows Azure Applications:

Join Microsoft Platform Ready.

<Return to section navigation list>

Visual Studio LightSwitch

Andy Kung explained How to Programmatically Control LightSwitch UI in a 12/21/2010 post to the Visual Studio LightSwitch Team blog:

LightSwitch, by default, intelligently generates UI based on the shape of an entity. For example, adding an Employee entity to a screen might generate a TextBox for Employee.Name, a DatePicker for Employee.Birthdate, a ComboBox for Employee.Gender, etc.

Some screens, however, require UI that simply guides the user to complete certain tasks. This UI may not directly represent a stored value in the database. For example, you may have a CheckBox that controls the visibility of a section of the screen. The CheckBox itself does not directly reflect a stored value in the database. It is a local screen property.

In this post, we will create a simple flight search screen. Similar to any travel website you may have used in the past, it contains a couple of dropdown lists, date pickers for the user to input search criteria. It will show and hide a piece of the UI based on the value of another. We will achieve this by creating several local screen properties.

Here is the sketch of the UI we want to build. Let’s start!

Start with data

We will start by adding an Airport table via the Entity Designer:

Airport

- Name (String, required)

- City (String, required)

- State (String, required)

- Code (String, required)

We can also add a summary field to the Airport table so it has a meaningful string representation by default. For more details of how to customize an entity’s summary field, please see Getting the Most out of LightSwitch Summary Properties by Beth Massi.

In this example, we will use:

Private Sub Summary_Compute(ByRef result As String)

result = City + ", " + State + " (" + Code + ") - " + Name

End Sub

Assuming we already have some Airport data in the database, you will see the airports show up in this format by default:

Create a screen

Let’s create a screen called SearchFlights via the “Add New Screen” dialog. We will use “New Data Screen” template with no screen data included.

This will essentially give us a blank screen to start with. Change the screen root node from “Two Rows” to “Vertical Stack.”

Based on our sketch, we need the following UI elements:

- A ComboBox to specify the origin

- A ComboBox to specify the destination

- A DatePicker to specify the departure date

- A DatePicker to specify the return date

- A CheckBox to indicate whether to include return trip in the search result

Each UI element represents a piece of screen data. Therefore, we need to add some screen properties to the screen first.

Using the “Add Screen Item” dialog, add a local property of type Airport called FromAirport. In the property sheet, check “Is Required” property. Similarly, add another property called ToAirport.

Add a local property of type Date called LeaveDate. Similarly, add another property called ReturnDate.

Finally, add a local property of type Boolean called RoundTrip. This property indicates whether we should include the return trip in the search results.

We have now added 5 local properties: FromAirport, ToAirport, LeaveDate, ReturnDate, and RoundTrip. You should have these screen properties in the screen designer.

We can now create some screen UI for these screen properties. Based on our sketch, the layout requires 2 groups. One group uses a “Vertical Stack” containing the airport dropdowns. The other uses a “Horizontal Stack” containing the date pickers and checkbox.

Therefore, we will add two groups to the screen content tree, one using “Vertical Stack,” the other using “Horizontal Stack.”

Using the “+ Add” button, add FromAirport to the first group and change the control to ComboBox.

Similarly, add ToAirport to the group.

Next, add LeaveDate, ReturnDate, and RoundTrip to the 2nd group.

Select the screen root node. Set the “Label Position” property to “Top” via the property sheet. This will position the display names on top of the controls.

Let’s run the application (F5) and see what we’ve got.

Write some screen code

We’re pretty close to what we want! However, there are a couple of things we can improve. First, the LeaveDate and ReturnDate are not being initialized to a reasonable value. Second, when RoundTrip is unchecked, we want to hide the ReturnDate UI.

We can achieve these by writing some code for the SearchFlights screen. Let’s go back to the screen designer. Right click on SearchFlights in the Solution Explorer and choose “View Screen Code.”

First, we want LeaveDate to be today’s date and RoundTrip to be true by default. We can do this in the <Screen>_BeforeDataInitialize event.

```vb
Private Sub SearchFlights_BeforeDataInitialize()
    ' Default the search to a round trip leaving today.
    LeaveDate = Date.Today
    RoundTrip = True
End Sub
```

Next, we want ReturnDate to be 7 days after LeaveDate whenever LeaveDate changes. We can do this in the <Property>_Changed event.

```vb
Private Sub LeaveDate_Changed()
    ' Keep the return date one week after the departure date.
    ReturnDate = LeaveDate.Date.AddDays(7)
End Sub
```

Finally, we want to show and hide the ReturnDate’s DatePicker based on the RoundTrip property.

```vb
Private Sub RoundTrip_Changed()
    ' Show the ReturnDate picker only for round trips.
    FindControl("ReturnDate").IsVisible = RoundTrip
End Sub
```

FindControl allows you to reference a control by its programmatic name. In this case the programmatic name of the ReturnDate DatePicker is “ReturnDate.” You can find the programmatic name from the “Name” property in the property sheet.

Let’s also clean up the display name of the controls via the property sheet while we’re at it.

That’s it! Now run the application. The dates are initialized properly, and the CheckBox now controls the visibility of the Return Date.

What’s next? Now that we have the search criteria on the screen, we can simply bind these properties to a parameterized query that returns a list of qualifying flights. For more information on parameterized queries, please see How to use lookup tables with parameterized queries by Karol Zadora-Przylecki.

Beth Massi (@bethmassi) updated her LightSwitch Community Links and Resources post on 12/21/2010:

I’ve been trying to stay on top of the forming LightSwitch community and have been collecting a list of bloggers and community resources that talk about LightSwitch. It’s exciting to see people so enthusiastic about the product. I’m sure I haven’t spotted them all but here are some notable bloggers & emerging communities about LightSwitch. If you know of more links please post a comment to the end of this post! As the ecosystem builds up, I’ll create a “Community” page for the LightSwitch Developer Center.

Microsoft Blogs & Bloggers:

- LightSwitch Team Blog

- Beth Massi (shameless plug <g>)

- Matt Thalman (developer on the LightSwitch team)

- Jason Zander (my boss’s boss’s boss <g>)

Microsoft Sites and Forums:

- LightSwitch Developer Center

- LightSwitch Forums

- LightSwitch Bugs & Feedback submission

- LightSwitch on Channel 9

Community Blogs & Bloggers:

- Roger Jennings’ OakLeaf Blog

- Alessandro Del Sole (VB MVP)

- Kunal Chowdhury (Silverlight MVP)

- Michael Washington (Silverlight MVP)

- LightSwitch Infragistics blogs

- Daizen Ikehara (Japanese)

Community Sites and Forums:

- LightSwitch on Facebook

- LightSwitch Team on Twitter

- LightSwitch on StackOverflow

- Visual Studio LightSwitch Help Website

- LightSwitch Tips & Tricks (Italian)

Enjoy! (and Happy Holidays!!!)


Windows Azure Infrastructure

Buck Woody published Windows Azure Learning Plan – Architecture on 12/21/2010:

This is one in a series of posts on a Windows Azure Learning Plan. You can find the main post here. This one deals with what an Architect needs to know about Windows Azure.

General Architectural Guidance

Overview and general information about Azure - what it is, how it works, and where you can learn more.

Cloud Computing, A Crash Course for Architects (Video)

Patterns and Practices for Cloud Development

Design Patterns, Anti-Patterns and Windows Azure

Application Patterns for the Cloud

http://blogs.msdn.com/b/kashif/archive/2010/08/07/application-patterns-for-the-cloud.aspx

Architecting Applications for High Scalability (Video)

David Aiken on Azure Architecture Patterns (Video)

Cloud Application Architecture Patterns (Video)

10 Things Every Architect Needs to Know about Windows Azure

Key Differences Between Public and Private Clouds

Microsoft Application Platform at a Glance

http://blogs.msdn.com/b/jmeier/archive/2010/10/30/microsoft-application-platform-at-a-glance.aspx

Windows Azure is not just about Roles

http://vikassahni.wordpress.com/2010/11/17/windows-azure-is-not-just-about-roles/

Example Application for Windows Azure

Implementation Guidance

Practical applications for the architect to consider

5 Enterprise steps for adopting a Platform as a Service

Performance-Based Scaling in Windows Azure

Windows Azure Guidance for the Development Process

http://blogs.msdn.com/b/eugeniop/archive/2010/04/01/windows-azure-guidance-development-process.aspx

Microsoft Developer Guidance Maps

http://blogs.msdn.com/b/jmeier/archive/2010/10/04/developer-guidance-ia-at-a-glance.aspx

How to Build a Hybrid On-Premise/In Cloud Application

A Common Scenario of Multi-instances in Windows Azure

Slides and Links for Windows Azure Platform Best Practices

AppFabric Architecture and Deployment Topologies guide

Windows Azure Platform Appliance

Integrating Cloud Technologies into Your Organization

Interoperability with Open Source and other applications; business and cost decisions

Interoperability Labs at Microsoft

Windows Azure Service Level Agreements

Bruno Terkaly described Two unmistakable and inseparable trends – Mobile & Cloud in this 12/21/2010 post:

The rapid rise and use of mobile devices and cloud computing is unmistakable. It makes sense that these two technologies are interrelated. Nearly all, if not all, cellular devices leverage compute and storage from somewhere else. The cloud can offer a lot - massive amounts of CPU, robust storage models, and comparatively huge bandwidth.

With that said, I agree that there is still a need for hardware at businesses. However, Gartner makes a huge prediction that by 2012, 20 percent of businesses will own no IT assets. Budgets will be targeted at more strategic, core-competency business goals. IT staff will be reskilled or reduced. Gartner further predicts that by 2014, there will be a 90% mobile penetration rate and 6.5 billion mobile connections. Penetration will not be uniform across continents, with lower levels in Africa than in Asia. Electronic transactions will be the rule, not the exception.

At my next talk, I’ve planned a 45-minute walkthrough where I explain how Microsoft's Mobile/Cloud offering provides all the core building blocks needed by companies and individuals to harness cloud power on a mobile device. Indeed, creating mobile applications that leverage cloud power will be a critical piece of the future of software.

The bottom line is this: companies that write these mobile/cloud applications will need a very comprehensive set of tools and services for everything from building the mobile client to connecting to and securely using cloud services.

Mobile applications have redefined the timelines for delivering applications. Businesses must build applications in weeks, not months. The tooling and technologies must support a unified approach to building mobile/cloud applications, spanning the client all the way to the server and including various models for storage and compute. Developers need one robust development environment (Visual Studio), with related SDKs, frameworks, and languages, regardless of whether they are writing cloud-based server code or mobile client code. They need to interoperate between cloud-based and on-premise client applications, not just one or the other.

When you speak of open standards, there are many things to speak of here. Are we talking about compliance? HIPAA? Sarbanes-Oxley? Is it security you are worried about – OAuth, SWT, AD? Is it web standards – HTTP/HTTPS, ATOM, JSON, XML?

Microsoft offers a full suite of products to help you with both on-premise and cloud-based software. Microsoft also offers a variety of languages and products which allow you to build the whole application, from the cloud based server down to the client, all the way to on-premise.

Here is a sample of the technologies that I frequently demo:

Bill Zack glorified your Journey to the Cloud with Microsoft technologies on 12/21/2010:

As you can see from the diagram below, no company other than Microsoft has so many cloud solutions that you can apply to solving your business needs.

What this means for Independent Software Vendors and Partners is that we have truly entered a new generation of Information Technology. No product or service can be successful without taking the cloud into consideration.

You can leverage the cloud in three key ways. You can move things to the cloud like outsourcing email with Office 365. You can utilize the cloud by running applications in the Windows Azure Platform. And/or you can build your own private cloud on-premise and deliver IT as a service. You can also build Hybrid Clouds that combine the best of both on-premise and public clouds.

Outsource to the cloud – Using Microsoft’s Software as a Service (SaaS) public cloud offering Office 365 (Office, Exchange Online, SharePoint Online, and Lync Online) you can purchase a business process such as email which is delivered at a business relevant SLA.

In addition to the components of Office 365 Microsoft also offers Customer Relationship Management (CRM) in the cloud with CRM Online and all the consumer focused Live Services such as Bing, Hotmail, Messenger and more.

You can also outsource processing to one of the many 3rd party hosting companies that base their offerings on the Microsoft product stack.

Leverage the cloud – Using Microsoft’s Platform as a Service (PaaS) public cloud offering, the Windows Azure Platform, you can build and run applications on a Microsoft-provided, service-oriented global data center infrastructure.

Be the cloud – You can build your own Infrastructure as a Service (IaaS) private cloud in your own data center utilizing Hyper-V and the Hyper-V Cloud tooling, guidance and partnerships.

For more on your cloud options see the Microsoft Cloud Power web site.

To the Cloud.

The Windows Azure Team published a Billing Clarification for Windows Azure Extra Small Instance Hours on 12/21/2010:

If you plan to enroll in the Windows Azure Extra Small Instance beta program, please note that the Extra Small compute instance will be billed separately from other compute instance sizes. Compute hours included in the Introductory Special, MSDN Premium or Development Accelerator offers don't apply to Extra Small Instance hours. The Extra Small Windows Azure Instance is priced at $0.05 per compute hour and provides developers with a cost-effective training and development environment. Compute hours are calculated based on the number of hours that your application is deployed. Sign up for the beta on the Windows Azure Management Portal. Requests will be approved on a first-come, first-served basis.
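As a rough illustration of the arithmetic, here is a minimal Python sketch that estimates an Extra Small charge from deployed hours. Only the $0.05 rate and the "hours deployed" basis come from the announcement above; the function name, the per-instance multiplication, and the rounding of partial hours up to whole hours are assumptions made for the example, so check your actual bill for the real rules.

```python
import math
from decimal import Decimal

# USD per compute hour for the Extra Small instance, as stated in the post.
EXTRA_SMALL_RATE = Decimal("0.05")


def extra_small_charge(deployed_hours, instances=1, rate=EXTRA_SMALL_RATE):
    """Estimate the charge for Extra Small compute hours.

    Assumptions (not from the announcement): each instance is counted
    separately, and a partial hour is rounded up to a full hour.
    """
    if deployed_hours < 0 or instances < 0:
        raise ValueError("deployed_hours and instances must be non-negative")
    # Hours accrue while the application is deployed, per the post.
    billable_hours = math.ceil(deployed_hours) * instances
    return billable_hours * rate


if __name__ == "__main__":
    # One instance left deployed for a 30-day month (720 hours).
    print(extra_small_charge(720))  # 36.00
```

Under these assumptions, a single instance left deployed for a full 30-day month comes to about $36, which is worth keeping in mind because the hours in the Introductory Special, MSDN Premium and Development Accelerator offers do not offset it.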

Mark Minasi published Ringing Up The Costs of the Cloud to the Windows IT Pro blog on 12/21/2010:

To read the first part of this series, check out "Avoid Being Plowed by the Cloud."

Unless you’ve been living in a cave—or at least a cave without broadband—for the past two years, you know that vendors of so-called “cloud services” have been hawking their wares non-stop, claiming that their product (the latest form of “outsourcing,” basically) can save you money, and that only a fool wouldn’t opt for the cloud. So if the cloud decision is all about money (and it is, actually), let’s think about cloud costs a bit.

You’ll Need More Internet Bandwidth

First, there’s the point that I made last month: when you send your servers out to the cloud, the people don’t go with them. If your people are still in your building but the servers are out on the Internet, you’re going to have to invest in (and maintain) a lot of new Internet bandwidth and its attendant switches, routers, etc., and that ain’t gonna be cheap.

Entry and Exit Costs

Next, ask the question, “How much would it cost to get into the cloud, and then to get out of the cloud?” If you’re opting for an Infrastructure as a Service approach, such as Amazon’s EC2 or Microsoft’s Hyper-V Cloud Service Provider Program (now, there’s a catchy name, eh?), then transfer costs aren’t too bad. In the worst case, you have to rebuild an existing physical server on a virtual machine running on the cloud vendor’s network, which is annoying and time-consuming but not impossible. In many cases, you’ll be able to use some sort of physical-to-virtual (P2V) tool like VMware Converter or Sysinternals’ Disk2vhd to quickly convert a physical system to a ready-for-virtualization one, and if you’re truly lucky then your server is already implemented on some popular virtual format, like a VHD or VMDK, and you may be able to just upload its file to the cloud vendor.

If, on the other hand, you intend to exploit a Software as a Service (SaaS) or Platform as a Service (PaaS) sort of cloud, then you will almost certainly need to rewrite your applications or adopt your new cloud vendor’s applications. For example, you can’t take just any Windows server-based application and drop it on an Azure cloud, as Azure is basically a very large web hosting service for ASP.NET applications. Azure is, however, only basically an ASP.NET platform, as the rules for writing ASP.NET apps on Azure are just different enough from the rules for running them atop a home-built IIS 7.5 system that rewrites will be in order. Understand also that I’m using Azure as one example—you’ll need rewrites for just about any SaaS or PaaS-type cloud.