Using Excel 2010 and the Hive ODBC Driver to Visualize Hive Data Sources in Apache Hadoop on Windows Azure

•• Updated 8/26/2012 with changes in Apache Hadoop for Windows Azure (AH4WA) Service Updates 2 (SU2, June 2012) and 3 (SU3, August 2012) and the availability of OakLeaf Systems’ US Air Carrier Flight Delays dataset from the Windows Azure Marketplace DataMarket. SU3 didn’t fix the below problem.

• Updated 4/14/2012 with the reason I didn’t use the Codename “Data Numerics” Team’s public 32-month subset of the U.S. Federal Aviation Administration’s (FAA) On_Time Performance *.csv files as a note in the Sample Hive Tables for Testing the Hive ODBC Driver section.

Contents

- Introduction

- Sample Hive Tables for Testing the Hive ODBC Driver

- Downloading and Installing the Hive ODBC Driver and and Excel Add-In

- Setting Up the ODBC Data Source in Excel

- Executing HiveQL Queries from Excel

- Executing an Aggregate Query and Visualizing the Result

Introduction

•• Service Update 2 (SU2) provided only 2 nodes and 1 TB of disk space to permit onboarding more prospective users. Service Update 3 (SU3) increased the allotment to 3 nodes and 1.5 TB disk space.

The Apache Software Foundation’s Hive™ is a related data warehousing and ad hoc querying component of Apache Hadoop v1.0. According to the Hive Wiki:

Hive is a data warehouse system for Hadoop that facilitates easy data summarization, ad-hoc queries, and the analysis of large datasets stored in Hadoop compatible file systems. Hive provides a mechanism to project structure onto this data and query the data using a SQL-like language called HiveQL. At the same time this language also allows traditional map/reduce programmers to plug in their custom mappers and reducers when it is inconvenient or inefficient to express this logic in HiveQL.

The following two earlier OakLeaf blog posts provide an introduction to Hadoop on Azure in general and its Interactive Hive Console in particular:

- Introducing Apache Hadoop Services for Windows Azure (4/2/2012)

- Using Data from Windows Azure Blobs with Apache Hadoop on Windows Azure CTP (4/9/2012)

This demonstration requires creating a flightdata_asv Hive data source table from downloadable tab-delimited text files as described in the second article and the following section. You design and execute an aggregate query against the Hive table and then visualize the query result set with an Excel bar chart.

Sample Hive Tables for Testing the Hive ODBC Driver

The following two sections describe the default (built-in) hivesampletable and a substantially larger custom flightdata_asv table.

Hadoop on Azure’s hivesampletable Data Set

The Hadoop-based Services on Windows Azure How To Guide TechNet wiki provides basic instructions for using Hadoop on Azure clusters. Microsoft tutorials for the Hive ODBC driver and Excel 2012 use the built-in hivesampletable, which contains 59,793 rows of smartphone device market share data defined by the following schema:

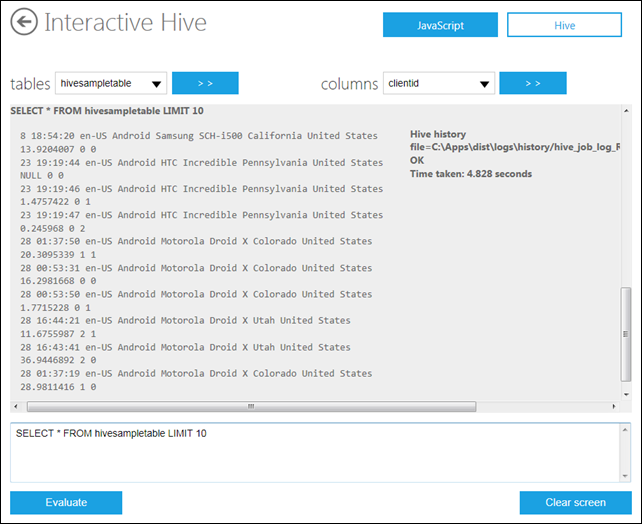

Figure 1. The schema for the hivesampletable data set displayed by the

Interactive Hive Console

Following are 10 random rows from the data set:

Figure 2. 10 random rows from the hivesampletable data set.

60,000 rows of simple structured data doesn’t come close to qualifying as BigData.

OakLeaf Systems’ flightdata_asv Data Source

My Using Data from Windows Azure Blobs with Apache Hadoop on Windows Azure CTP post of 4/9/2012 describes how to set up tab-delimited files stored in a Windows Azure blob container’s flightdata folder as the data source for an external hive table:

Figure 3. The HiveQL DDL statement to create a Hive table from other than a HDFS data source, Windows Azure blobs for this example.

This data source consists of 2,991,369 rows of simplified tab delimited *.txt data derived from the Federal Aviation Administration’s On_Time_Performance.csv files for US-certificated air carriers (airlines) for January 2012 and August through December 2011. The blobs, which average about 12.5 MB in size, are stored in Microsoft’s North Central US (Chicago) data center where the Hadoop on Azure EMR service is hosted. Storing the blobs in the same data center as the EMR service improves performance by eliminating transport over the Internet. You can download this initial set of six files from my SkyDrive account here.

• Note: A larger set of 32 sample files will be available shortly from the same OakLeaf Systems storage account in the North Central US data center. The Microsoft Codename “Data Numerics” team has made available a 16-month subset, which includes all columns, of this data for use with a sample application described by Roope Astala in a “Cloud Numerics” Example: Analyzing Air Traffic “On-Time” Data post of 3/8/2012. My Analyzing Air Carrier Arrival Delays with Microsoft Codename “Cloud Numerics” post of 3/26/2012 is a detailed tutorial for using this data with Apache Hadoop on Windows Azure. However, the Manage Cluster’s Configure Azure Blob Storage page doesn’t allow connecting to public blobs because it requires a password to save settings. I’ve requested a fix from the Hadoop on Azure team in a thread in the Apache Hadoop on Azure CTP Yahoo! Group.

Following is a 10 random-row sample of the data:

Figure 4. Ten random rows from the flightdata_asv table.

Three million rows comes closer to the BigData threshhold and is sufficient to gauge the performance of Excel with data retrieved over moderate speed Internet connections. Of course, supporting three million row tables is no challenge for relational databases.

Downloading and Installing the Hive ODBC Driver and Excel Add-In

32- and 64-bit versions of the Hive ODBC driver are available for download from the EMR Console’s Downloads page. (Earlier CTP versions offered only the 64-bit driver.) To download and install the driver, do this:

1. Click the Downloads tile on the EMR Console’s home page to open the Downloads page:

Figure 5. Start the Hive ODBC Driver installation from the Downloads page.

Note: Clicking the Word or PDF link opens an Apache Hadoop‐based Services for Windows Azure - How‐To and FAQ Guide document with a brief section on this topic.

2. Click the installer link for the bitness of your Excel 2010 edition (32-bit for this example), click the Run button, which opens a warning dialog. Click the More Options button to expose the Run Anyway choice:

Figure 6. Run the Hive ODBC installer despite the warning dialogs displayed.

3. Click Run Anyway to start the installation and open the ODBC Driver for Hive Setup dialog and mark the I Accept … check box:

Figure 7. Accept the Hive ODBC Driver EULA.

4. Click Install to install the driver, click Finish to exit the installer, open Excel, click the Data tab to verify presence of the Hive Pane icon and click it to display the Hive Query panel at the right of the worksheet:

Figure 8. Verify installation of the Hive ODBC driver in Excel 2010.

5. Return to the EMR Console’s home page and click the Open Ports tile to open the Configure Ports page and drag the ODBC Server slider to the right to open TCP port 10000:

Figure 9. Open TCP port 10000 to permit communication with your local computer.

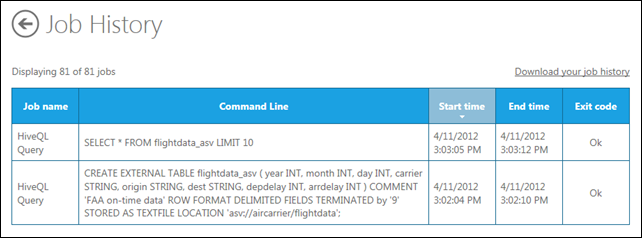

6. Return to the EMR Console’s home page and click the Job History tile to display entries for the preceding HiveQL operations:

Figure 10. The two HiveQL queries you executed to create and test the flightdata_asv table.

Setting Up the ODBC Data Source in Excel

To set up the Hive ODBC data source in Excel:

1. Open the Hive Query task pane if necessary, click the Enter Cluster Details button to open the ODBC Hive Setup dialog, type a description and the DNS host name, accept the TCP port, select Username/Password authentication, and type your username and password for the EMR Portal instance:

Figure 10. Configuring the Hive ODBC Driver with the Hive table name, cluster DNS name,

and user credentials.

2. Click OK to accept the configuration data and add FlightData_ASV Hive Table to the Hive Connection list, open the Select the Hive Object to Query list and select flightdata_asv [Table] to populate the Columns list:

Figure 11. Completing the initial Hive ODBC Driver configuration process.

You’re now ready to execute HiveQL queries against the active Hive tables.

Executing HiveQL Queries from Excel

The add-in’s Hive Query task pane lets you design HiveQL queries by defining:

- The projection (column list)

- WHERE clause columns, operators and values

- GROUP BY columns with aggregated values

- ORDER BY clauses for sorting

- LIMIT n values to return TOP(n) rows

The following sections describe how to execute a simple SELECT query and a more complex aggregate query that’s suitable for populating an Excel bar chart.

Verifying the Data Connection

1. Mark the eight column checkboxes, open the Limit Rows/Sample Data text box, and accept the 200-row default:

Figure 12. Designing a simple query in the Hive Query task pane

2. Click Execute Query. After a minute or so, the first 200 rows from the first *.txt file in name order appear in the worksheet:

Figure 13. A simple SELECT query executed on the Hive flightdata_asv table.

Executing an Aggregate Query and Visualizing the Result

Comparing the average on-time performance of air carriers requires for a particular period requires defining an aggregate query. For a six-month example, there’s no need to restrict the date range of flights included. To create a bar chart that displays average arrival delay minutes for the ? air carriers included in the FAA data, do the following:

1. Clear the year, month, day, origin, dest, and depdelay check boxes leaving only carrier and arrdelay columns included in the projection.

2. Click the arrdelay column’s Function cell to open the function list and select avg:

Figure 14. Selecting the avg function to average arrival delays.

Note: HiveQL has many more functions than most SQL implementations, including T-SQL.

2. Double-click avg to add it to the arrdelay column’s Function cell.

3. Clear the Limit Results check box.

4. Open the Aggregate Grouping list and select the carrier column:

Figure 15. Selecting the carrier column for grouping.

5. Type arrdelay between the avg() function’s parenthesis to correct a bug in the query design process and click Execute Query to return the result set, which appears in a minute or so:

Figure 16. Adding a missing function argument (bug) and executing the query.

Note: B6 and F9 are FAA unique two-character carrier codes for Jet Blue and Frontier Airlines. You can open the FAA’s lookup list of unique carrier codes here.

6. Remove the spurious Carrier item, probably added from a column header mistakenly left in a text file, reduce the length of the decimal fraction to 1, close the task pane, and add a bar chart to the worksheet.

7. Add a chart title, x-axis title and data labels:

Figure 17. Visualizing average arrival delays with a formatted bar (column) chart.

Note: This tutorial will be updated when the full set of 32 tab- or comma-delimited flightdata files are available for download.

0 comments:

Post a Comment