Windows Azure and Cloud Computing Posts for 8/13/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

•• Updated 8/17/2012 with new articles marked ••.

• Updated 8/16/2012 with new articles marked •.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue and Hadoop Services

- SQL Azure Database, Federations and Reporting

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Access Control, Caching, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure, Media Services and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Services

•• The Datanami Staff (@datanami) posted Marching Hadoop to Windows on 8/17/2012:

Bringing Hadoop to Windows and the two-year development of Hadoop 2.0 are two of the more exciting developments discussed by Hortonworks co-founder and CTO Eric Baldeschwieler during a panel at the Cloud 2012 Conference in Honolulu.

The panel, which was also attended by Baldeschwieler’s Cloudera counterpart Amr Awadallah, focused on insights into the big data world, a subject Baldeschwieler tackled almost entirely with Hadoop. The eighteen-minute discussion also featured a brief history of Hadoop’s rise to prominence, improvements to be made to Hadoop, and a few tips to enterprising researchers wishing to contribute to Hadoop.

“Bringing Hadoop to Windows,” says Baldeschwieler, “turns out to be a very exciting initiative because there are a huge number of users in Windows operating system.” In particular, the Excel spreadsheet program is popular with business analysts, who would like to see it integrated with data stored in Hadoop. As Baldeschwieler notes, that will not be possible until Hadoop is brought to Windows later this year, a move that will also considerably expand Hadoop’s reach.

However, that announcement pales in comparison to the possibilities provided by the impending Hadoop 2.0. “Hadoop 2.0 is a pretty major re-write of Hadoop that’s been in the works for two years. It’s now in usable alpha form…The real focus in Hadoop 2.0 is scale and opening it up for more innovation.” Baldeschwieler notes that Hadoop’s rise has been the result of what he calls “a happy accident,” in which it was developed by his Yahoo team for a specific use case: classifying, sorting, and indexing each of the URLs that were under Yahoo’s scope.

What ended up happening was that other Yahoo teams requested use of the Hadoop nodes and found success with it, leading to a much more significant investment from Yahoo. “Yahoo took this (Hadoop) prototype and then built an internal service that now runs on 42,000 computers with roughly 200 petabytes of raw storage involved and it took about 300 person-years of investment and open source software to make this thing work.” From there, people such as Baldeschwieler and Awadallah went on to co-found companies like Hortonworks and Cloudera to further develop Hadoop.

While Hadoop’s rise makes for a fun success story, its status as somewhat of a happy accident has led to some inefficiencies and limitations, such that an entirely new version was necessary to continue its growth. “The existing Hadoop 1.0 base runs on about 4,000 computers whereas the target design is about 10,000 and that takes Moore’s law forward a few years. Our current target computer has about 12 TB of disk, the new one would have 36.”

Hadoop 2.0 is about more than improving scale, however. Baldeschwieler would like to see programmers and data scientists able to work with more than MapReduce, in essence making Hadoop more ‘pluggable.’ He would also like to see new varieties of files introduced to Hadoop through version 2.0.

Making 2.0 more pluggable may also solve another Hadoop problem businesses are having. Baldeschwieler mentioned that every Fortune 500 company has Hadoop running in some form but many businesses are slow to make full use of it. Making Hadoop more pluggable will not help the businesses that hear of Hadoop, want to get into big data, and end up buying several nodes to accomplish that end without much thought.

It will, however, assist those with competent technology departments that have analytics tools but are unable to integrate them with Hadoop for whatever reason. “We need to make sure that there’s the right APIs for everyone who’s building data products to plug into Hadoop in various ways.”

Finally, someone has to be doing all this research into the advancement of Hadoop into its second version. Baldeschwieler notes that while the Hadoop community welcomes good ideas and contributions, one should build a reputation in the community by doing interesting research with Hadoop before trying to add to it.

Microsoft’s Apache Hadoop on Windows Azure service integrates Excel PowerPivot with a Hive ODBC provider to deliver effective “BI for the masses.”

Matt Asay (@mjasay) asserted “In a world of tissue when you're Kleenex, you've won” in a deck for his Becoming Red Hat: Cloudera and Hortonworks' Big-Data death match article of 8/17/2012 for The Register:

Open ... and Shut

In the Big Data market, Hadoop is clearly the team to beat. What is less clear is which of the Hadoop vendors will claim the spoils of that victory.

Because open source tends to be winner-take-all, we are almost certainly going to see a "Red Hat" of Hadoop, with the second-place vendor left to clean up the crumbs.

As ever with open source, this means the Hadoop market ultimately comes down to a race for community support because, as RedMonk analyst Stephen O'Grady argues, the biggest community wins.

In community and other areas, Linux is a great analogue for Hadoop. I've suggested recently that Hadoop market observers could learn a lot from the indomitable rise of Linux, including from how it overcame technical shortcomings over time through communal development. But perhaps a more fundamental observation is that, as with Linux, there's no room for two major Hadoop vendors.

Yes, there will be truckloads of cash earned by EMC, IBM and others who use Hadoop as a complement to drive the sale of proprietary hardware and software, just as we have seen in the Linux market with IBM, Oracle, Hewlett-Packard and others.

But for those companies aspiring to be the Red Hat of Hadoop - that primary committer of code and provider of associated support services - there's only room for one such company, and it's Cloudera or Hortonworks. I don't feel MapR has the ability to move Hadoop development, given that it doesn't employ key Hadoop developers as Cloudera and Hortonworks do, so it has no chance of being a dominant Hadoop vendor.

Cash kings

Cloudera and Hortonworks recognise this, which is why both have raised mountains of cash. The size of the Big Data pie is huge, but it's not going to be split evenly. Only one company gets to be the center of the Hadoop ecosystem. Not two.

In enterprise Linux, that "one company" is Red Hat. SUSE (then Novell then just SUSE again) initially took Red Hat on and had a real chance to be the leader, but Red Hat persevered and became the billion-dollar open-source company while SUSE-Novell-SUSE did not.

Why did Red Hat win? Community.

No, not the kind of community we sometimes associate with open source, i.e., individual hackers staying up late for the love of coding, though that demographic matters. Red Hat contributes more to the Linux kernel than any single individual or company.

This, in turn, led Red Hat to attract the second type of community: the "professional developer," or third-party application developer. Red Hat managed to amass an unassailable third-party application ecosystem lead. Ultimately, in the Hadoop battle the community to be won is this community of developers building around the Hadoop ecosystem, because it's this ecosystem that leads to customer adoption, which fuels revenue, which in turn funds the hiring of more code committers.

Call it the virtuous cycle of commercial open-source community development.

From 2002 until 2005, I worked at Novell and after the SUSE acquisition saw first-hand how Red Hat used its third-party application ecosystem to crush SUSE. SUSE was always second choice with customers because the applications they wanted ran on Red Hat first, which in turn made SUSE second-best with partners, too. By the time Novell/SUSE finally caught up in terms of sheer number of applications (and now exceeds Red Hat), Red Hat had already cemented its brand and Novell's Linux business languished.

As Linux Foundation executive director Jim Zemlin is fond of saying: "In a world of tissue when you're Kleenex, you've won." When Red Hat became "Kleenex," the game was over.

In the Hadoop world, the race to be "Kleenex" is on, and it involves attracting the biggest ISV community. Between the two dominant Hadoop distributions, it's still a somewhat even race, even if Cloudera took the early lead with customer traction. Hortonworks has been playing up its open source purity, arguing that it's "true" open source while Cloudera offers a freemium/open core model. It's very similar to the argument that Red Hat used to use against Novell/SUSE.

But in this case, I don't think it applies.

Both Cloudera and Hortonworks contribute to and distribute 100 per cent open-source Hadoop platforms. The difference comes from the management and other tools each offers alongside Hadoop. Hortonworks believes even this area should be open source, which is why its rival to Cloudera Manager is open-source Ambari. …

Read more.

•• Brad Sarsfield (@bradoop) reported availability of a new Hadoop on Azure REST API preview in a 6/13/2012 thread in the Hadoop on Azure Yahoo! Group, which Ganeshan Iyer updated on 8/13/2012 (missed when originally posted):

One of the top pieces of feedback that we’ve heard from those using the Hadoop on Azure preview to build proofs of concept and prototypes is the need to submit a MapReduce or Hive job to the cluster programmatically, rather than only through RDP to the head node or the www.hadooponazure.com web page.

To facilitate this we’d like to share a prototype .NET library that uses a REST API to submit jobs against the Hadoop on Azure free preview. This provides the ability to submit JAR and Hive jobs to the cluster and monitor the status of those jobs.

While it's highly likely the REST API will change and evolve over time, we would like to share this now as a proof of concept and get your feedback as we continue to iterate.

Download:

- Zip: http://bit.ly/hoaRESTzip

- GitHub: http://bit.ly/hoaRESTgit

Example:

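```csharp
// jobClient is the prototype library's job-submission client;
// its construction is not shown in the original example.

// Start a MapReduce job: the "pi" example from the Hadoop examples JAR
// (10 map tasks, 100 samples per map)
var package = new JobPackageBuilder()
    .WithJar("hadoop-examples-0.20.203.1-SNAPSHOT.jar")
    .AsHadoopJar("pi", "10 100");
var jarJob = jobClient.StartJob(package.ToStream());

// Issue a Hive query (jarPath is not defined in the original example)
var hivePackage = new JobPackageBuilder()
    .WithJar(jarPath)
    .AsHiveQuery("show tables;");
var hiveJob = jobClient.StartJob(hivePackage.ToStream());
```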

The source code, which includes a .NET test project and is released under an Apache v2.0 license, is dated 7/16/2012.
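The C# example starts jobs but does not show how a client waits for them to finish. Below is a minimal Python sketch of the kind of polling loop a client might run to monitor a submitted job. It assumes a hypothetical `get_status(job_id)` callable supplied by whatever client you use; the status strings "Succeeded", "Failed" and "Killed" are also assumptions, not values documented for this prototype library.

```python
import time
from typing import Callable, Iterable


def wait_for_job(
    get_status: Callable[[str], str],
    job_id: str,
    poll_seconds: float = 5.0,
    timeout_seconds: float = 600.0,
    terminal: Iterable[str] = ("Succeeded", "Failed", "Killed"),
) -> str:
    """Poll get_status(job_id) until it reports a terminal state or time runs out.

    get_status and the terminal status names are hypothetical placeholders.
    """
    terminal = set(terminal)
    deadline = time.monotonic() + timeout_seconds
    while True:
        status = get_status(job_id)
        if status in terminal:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"job {job_id} still {status!r} after {timeout_seconds}s")
        time.sleep(poll_seconds)
```

Injecting the status function keeps the loop independent of any particular client library; a fixed poll interval is the simplest choice, and a real client might prefer exponential backoff.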

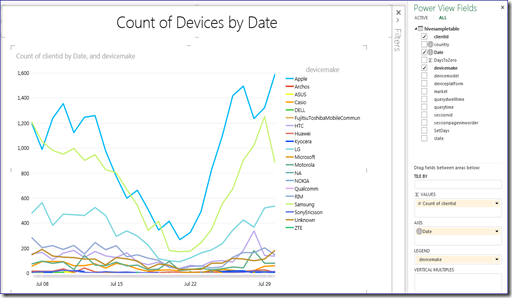

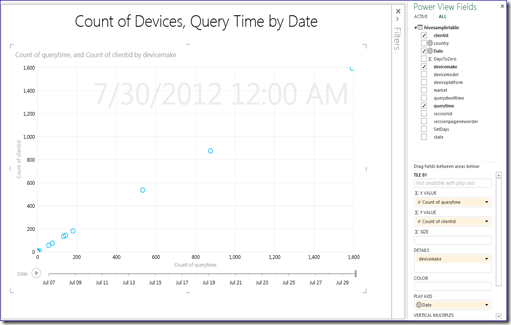

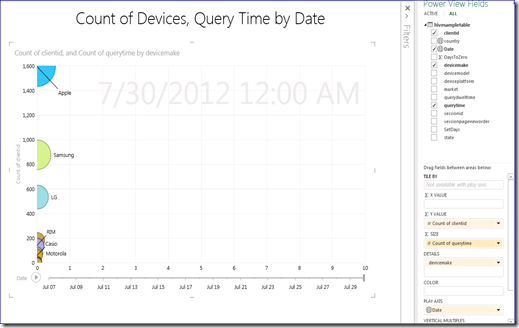

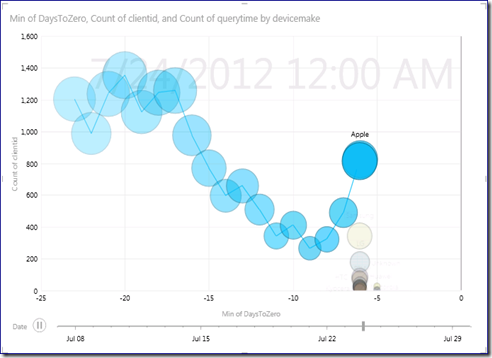

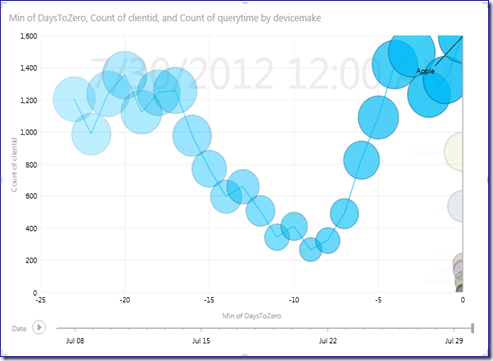

• Denny Lee (@dennylee) posted a Power View Tip: Scatter Chart over Time on the X-Axis and Play Axis on 7/24/2012 (missed when posted):

As you have seen in many Power View demos, you can run the Scatter Chart over time by placing date/time onto the Play Axis. This is pretty cool and it allows you to see trends over time on multiple dimensions. But how about if you want to see time also on the x-axis?

For example, let’s take the Hive Mobile Sample data as noted in my post: Connecting Power View to Hadoop on Azure. As noted in Office 2013 Power View, Bing Maps, Hive, and Hadoop on Azure … oh my!, you can quickly create Power View reports right out of Office 2013.

Scenario

In this scenario, I’d like to see the number of devices on the y-axis, date on the x-axis, broken out by device make. This can be easily achieved using a column bar chart.

Yet, if I wanted to add another dimension to this, such as the number of calls (QueryTime), the only way to do this without tiling is to use the Scatter Chart. However, this will not yield the results you may want either.

It does have a Play Axis of Date, but while the y-axis has count of devices (count of ClientID), the x-axis is the count of QueryTime – it’s a pretty lackluster chart. Moving Count of QueryTime to the Bubble Size makes it more colorful but now all the data is stuck near the y-axis. When you click on the play-axis, the bubbles only move up and down the y-axis.

Date on X-Axis and Play Axis

To solve the problem, put the date on both the x-axis and the play axis. However, the x-axis only allows numeric values, i.e. you cannot put a date on it. So how do you get around this limitation?

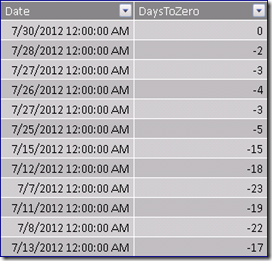

What you can do is create a new calculated column:

DaysToZero = -1*(max([date]) - [date])

For each row, this calculates the number of days between that row's [date] and the latest date in the [date] column, max([date]), negated so that the latest date becomes 0 and earlier dates become negative numbers.

As you can see, the max([date]) is 7/30/2012, and the [DaysToZero] column holds the negative of datediff(dd, [Date], max([Date])).

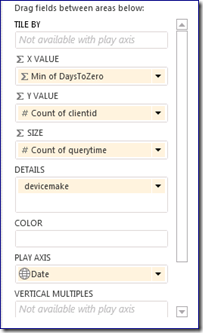
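The DaysToZero calculated column can be mirrored in Python to check the arithmetic: each date becomes a non-positive day offset from the latest date in the column. This is an illustration, not DAX; the sample dates are hypothetical apart from 7/30/2012, the maximum date mentioned above.

```python
from datetime import date
from typing import List


def days_to_zero(dates: List[date]) -> List[int]:
    """Python analogue of DaysToZero = -1*(max([date]) - [date])."""
    latest = max(dates)  # like max([date]) evaluated over the whole column
    # (latest - d) is a timedelta; negate its day count so the latest date maps to 0
    return [-(latest - d).days for d in dates]


sample = [date(2012, 7, 28), date(2012, 7, 29), date(2012, 7, 30)]
print(days_to_zero(sample))  # [-2, -1, 0]
```

Because the values are plain numbers, they satisfy the x-axis's numeric-only restriction while still increasing with time.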

Once you have created the [DaysToZero] column, you can then place this column onto the x-axis of your Scatter Chart. Below is the scatter chart configuration.

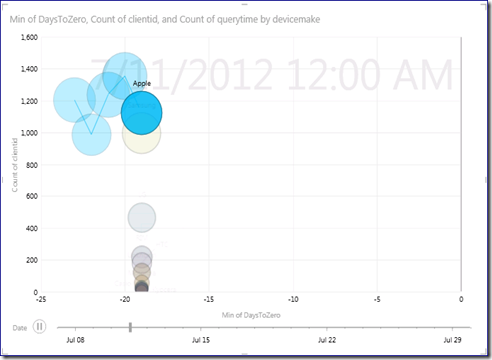

With this configuration, you can see events occur over time when running the play axis as noted in the screenshots below.

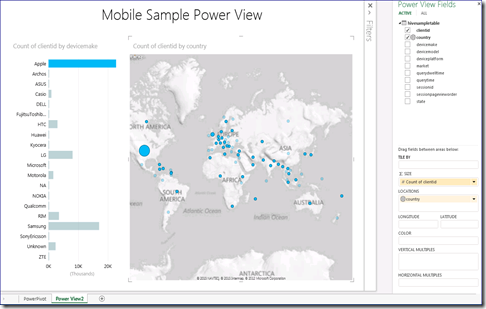

• Denny Lee (@dennylee) posted Office 2013 Power View, Bing Maps, Hive, and Hadoop on Azure … oh my! on 7/23/2012 (missed when posted):

With all the excitement surrounding Office 2013 (here’s a nice Engadget Review) and energized by Andrew Brust’s tweets (@andrewbrust) and post Office 2013 brings BI, Big Data to Windows 8 tablets, I thought I would expand on my posts:

- Connecting Power View to Hadoop on Azure

- Connecting PowerPivot to Hadoop on Azure – Self Service BI to Big Data in the Cloud

For those of us involved in BI, the excitement surrounding Office 2013 is because Power View is now embedded directly in Excel. In addition, I can now include maps! Yay!

So to make my Power View to Hive / Hadoop on Azure even more compelling, I downloaded Office 2013, installed the Hive ODBC driver, connected to Hadoop on Azure (instructions on how to do this are in the above bulleted blog posts) and charged ahead with Power View within Excel 2013.

Seriously cool, now I can click on the bars within my Power View report and it changes the bubbles in the maps depicting (in this case) the number of Apple devices (blue bar on the left chart) throughout the world (light blue bubbles indicate no devices in that part). The source of this data is from my Hadoop on Azure cluster, eh?!

Have fun and start downloading, eh?!

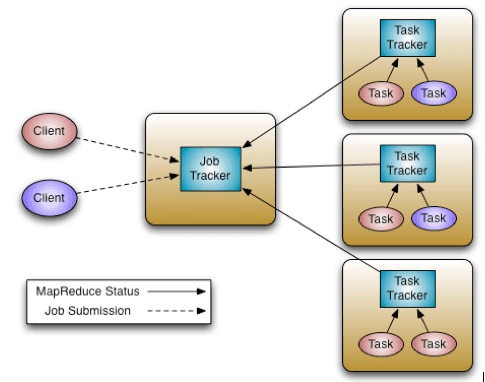

Arun Murthy (@acmurthy) posted Apache Hadoop YARN – Background and an Overview to the Hortonworks Blog on 8/7/2012:

Celebrating the significant milestone of Apache Hadoop YARN being promoted to a full-fledged sub-project of Apache Hadoop in the ASF, we present the first blog in a multi-part series on Apache Hadoop YARN – a general-purpose, distributed, application management framework that supersedes the classic Apache Hadoop MapReduce framework for processing data in Hadoop clusters.

MapReduce – The Paradigm

Essentially, the MapReduce model consists of a first, embarrassingly parallel, map phase where input data is split into discrete chunks to be processed. It is followed by the second and final reduce phase where the output of the map phase is aggregated to produce the desired result. The simple, and fairly restricted, nature of the programming model lends itself to very efficient and extremely large-scale implementations across thousands of cheap, commodity nodes.
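A minimal, single-process Python sketch of the paradigm, using word count as an assumed example: the map phase turns each input chunk into (key, value) pairs independently, a shuffle groups values by key, and the reduce phase aggregates each group. The function names are illustrative, not Hadoop's Java API, and the chunks are processed sequentially here where Hadoop would run map tasks in parallel across nodes.

```python
from collections import defaultdict
from itertools import chain
from typing import Dict, Iterable, List, Tuple


def map_phase(chunk: str) -> List[Tuple[str, int]]:
    # Each chunk is processed independently: emit (word, 1) pairs.
    return [(word, 1) for word in chunk.split()]


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    # Group intermediate values by key (the sort/shuffle step).
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups: Dict[str, List[int]]) -> Dict[str, int]:
    # Aggregate each key's values into the final result.
    return {key: sum(values) for key, values in groups.items()}


def word_count(chunks: List[str]) -> Dict[str, int]:
    mapped = chain.from_iterable(map_phase(c) for c in chunks)
    return reduce_phase(shuffle(mapped))


print(word_count(["a b a", "b c"]))  # {'a': 2, 'b': 2, 'c': 1}
```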

Apache Hadoop MapReduce is the most popular open-source implementation of the MapReduce model.

In particular, when MapReduce is paired with a distributed file-system such as Apache Hadoop HDFS, which can provide very high aggregate I/O bandwidth across a large cluster, the economics of the system are extremely compelling – a key factor in the popularity of Hadoop.

One of the keys to this is the lack of data motion, i.e., moving compute to the data rather than moving data to the compute node over the network. Specifically, MapReduce tasks can be scheduled on the same physical nodes on which the data resides in HDFS, because HDFS exposes the underlying storage layout across the cluster. This significantly reduces network I/O and keeps most of the I/O on the local disk or within the same rack – a core advantage.
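A simplified Python sketch of the locality preference just described: given the nodes that hold replicas of a block, pick a free node that stores the block, otherwise a free node in the same rack as a replica, otherwise any free node. The node and rack names are hypothetical, and Hadoop's actual schedulers weigh more factors than this three-tier order.

```python
from typing import Dict, List, Optional, Set, Tuple


def pick_node(
    block_replicas: Set[str], free_nodes: List[str], rack_of: Dict[str, str]
) -> Tuple[Optional[str], str]:
    """Prefer node-local, then rack-local, then off-rack placement for a task."""
    # 1. Node-local: a free node already stores a replica of the block.
    for node in free_nodes:
        if node in block_replicas:
            return node, "node-local"
    # 2. Rack-local: a free node shares a rack with some replica.
    replica_racks = {rack_of[n] for n in block_replicas}
    for node in free_nodes:
        if rack_of[node] in replica_racks:
            return node, "rack-local"
    # 3. Off-rack: the block must cross the core network.
    if free_nodes:
        return free_nodes[0], "off-rack"
    return None, "no free node"
```

Only the last tier moves the block across racks, which is why locality-aware scheduling keeps most I/O on local disks or within a rack.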

Apache Hadoop MapReduce, circa 2011 – A Recap

Apache Hadoop MapReduce is an open-source, Apache Software Foundation project, which is an implementation of the MapReduce programming paradigm described above. Now, as someone who has spent over six years working full-time on Apache Hadoop, I normally like to point out that the Apache Hadoop MapReduce project itself can be broken down into the following major facets:

- The end-user MapReduce API for programming the desired MapReduce application.

- The MapReduce framework, which is the runtime implementation of various phases such as the map phase, the sort/shuffle/merge aggregation and the reduce phase.

- The MapReduce system, which is the backend infrastructure required to run the user’s MapReduce application, manage cluster resources, schedule thousands of concurrent jobs etc.

This separation of concerns has significant benefits, particularly for the end-users – they can completely focus on the application via the API and allow the combination of the MapReduce Framework and the MapReduce System to deal with the ugly details such as resource management, fault-tolerance, scheduling etc.

The current Apache Hadoop MapReduce System is composed of the JobTracker, which is the master, and the per-node slaves called TaskTrackers.

The JobTracker is responsible for resource management (managing the worker nodes i.e. TaskTrackers), tracking resource consumption/availability and also job life-cycle management (scheduling individual tasks of the job, tracking progress, providing fault-tolerance for tasks etc).

The TaskTracker has simple responsibilities – launch/teardown tasks on orders from the JobTracker and provide task-status information to the JobTracker periodically.

For a while, we have understood that the Apache Hadoop MapReduce framework needed an overhaul. In particular, with regard to the JobTracker, we needed to address several aspects: scalability, cluster utilization, the ability for customers to control upgrades to the stack (i.e., customer agility) and, equally importantly, supporting workloads other than MapReduce itself.

We’ve made running repairs over time, including recent support for JobTracker availability and resiliency to HDFS issues (both of which are available in Hortonworks Data Platform v1, i.e., HDP1), but lately they’ve come at an ever-increasing maintenance cost and yet did not address core issues such as support for non-MapReduce workloads and customer agility.

Why support non-MapReduce workloads?

MapReduce is great for many applications, but not everything; other programming models better serve requirements such as graph processing (Google Pregel / Apache Giraph) and iterative modeling (MPI). When all the data in the enterprise is already available in Hadoop HDFS, having multiple paths for processing is critical.

Furthermore, since MapReduce is essentially batch-oriented, support for real-time and near real-time processing, such as stream processing and CEP (complex event processing), is an emerging requirement from our customer base.

Providing these within Hadoop enables organizations to see an increased return on the Hadoop investments by lowering operational costs for administrators, reducing the need to move data between Hadoop HDFS and other storage systems etc.

Why improve scalability?

Moore’s Law… Essentially, at the same price-point, the processing power available in data-centers continues to increase rapidly. As an example, consider the following definitions of commodity servers:

- 2009 – 8 cores, 16GB of RAM, 4x1TB disk

- 2012 – 16+ cores, 48-96GB of RAM, 12x2TB or 12x3TB of disk.

Generally, at the same price-point, servers are twice as capable today as they were 2-3 years ago – on every single dimension. Apache Hadoop MapReduce is known to scale to production deployments of ~5000 nodes of hardware of 2009 vintage. Thus, ongoing scalability needs are ever present given the above hardware trends.
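The "twice as capable on every dimension" claim can be checked against the two server definitions listed above. This Python sketch treats "16+ cores" as 16 and computes the low and high ends of the 2012 ranges, so the ratios are lower bounds for cores.

```python
from typing import Dict

# Commodity server definitions from the list above ("16+ cores" taken as 16).
SERVERS: Dict[str, Dict[str, int]] = {
    "2009": {"cores": 8, "ram_gb": 16, "disk_tb": 4 * 1},
    "2012 (low end)": {"cores": 16, "ram_gb": 48, "disk_tb": 12 * 2},
    "2012 (high end)": {"cores": 16, "ram_gb": 96, "disk_tb": 12 * 3},
}


def growth(old: Dict[str, int], new: Dict[str, int]) -> Dict[str, float]:
    """Ratio of new to old capacity on each dimension."""
    return {dim: new[dim] / old[dim] for dim in old}


for label in ("2012 (low end)", "2012 (high end)"):
    print(label, growth(SERVERS["2009"], SERVERS[label]))
# 2012 (low end) {'cores': 2.0, 'ram_gb': 3.0, 'disk_tb': 6.0}
# 2012 (high end) {'cores': 2.0, 'ram_gb': 6.0, 'disk_tb': 9.0}
```

Every ratio is at least 2, and memory and disk grow considerably faster than core count.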

What are the common scenarios for low cluster utilization?

In the current system, JobTracker views the cluster as composed of nodes (managed by individual TaskTrackers) with distinct map slots and reduce slots, which are not fungible. Utilization issues occur because map slots might be ‘full’ while reduce slots are empty (and vice versa). Fixing this was necessary to ensure the entire system could be used to its maximum capacity for high utilization.
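A small worked example in Python, with hypothetical slot counts, shows why non-fungible slots waste capacity: with 4 map slots, 2 reduce slots, 6 pending map tasks and no pending reduce tasks, only 4 of the 6 slots can be busy, whereas if any slot could run any task all 6 would be. Treating YARN's containers as interchangeable slots is a simplification; YARN actually allocates by resource amounts.

```python
def fixed_slot_utilization(map_slots, reduce_slots, pending_maps, pending_reduces):
    """Busy fraction when map slots can only run maps and reduce slots only reduces."""
    busy = min(map_slots, pending_maps) + min(reduce_slots, pending_reduces)
    return busy / (map_slots + reduce_slots)


def fungible_utilization(total_slots, pending_maps, pending_reduces):
    """Busy fraction when any slot (container) can run either kind of task."""
    busy = min(total_slots, pending_maps + pending_reduces)
    return busy / total_slots


print(fixed_slot_utilization(4, 2, pending_maps=6, pending_reduces=0))  # 4 of 6 slots busy
print(fungible_utilization(6, pending_maps=6, pending_reduces=0))       # 1.0
```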

What is the notion of customer agility?

In real-world deployments, Hadoop is very commonly deployed as a shared, multi-tenant system. As a result, changes to the Hadoop software stack affect a large cross-section if not the entire enterprise. Against that backdrop, customers are very keen on controlling upgrades to the software stack as it has a direct impact on their applications. Thus, allowing multiple, if limited, versions of the MapReduce framework is critical for Hadoop.

Enter Apache Hadoop YARN

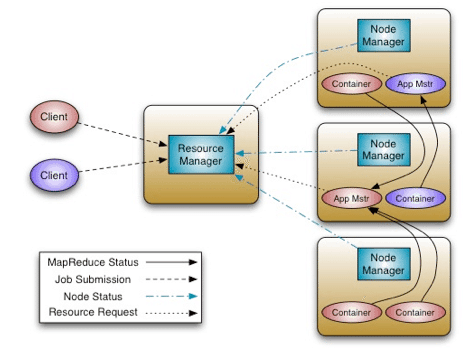

The fundamental idea of YARN is to split up the two major responsibilities of the JobTracker i.e. resource management and job scheduling/monitoring, into separate daemons: a global ResourceManager and per-application ApplicationMaster (AM).

The ResourceManager and per-node slave, the NodeManager (NM), form the new, and generic, system for managing applications in a distributed manner.

The ResourceManager is the ultimate authority that arbitrates resources among all the applications in the system. The per-application ApplicationMaster is, in effect, a framework-specific entity and is tasked with negotiating resources from the ResourceManager and working with the NodeManager(s) to execute and monitor the component tasks.

The ResourceManager has a pluggable Scheduler, which is responsible for allocating resources to the various running applications subject to familiar constraints of capacities, queues etc. The Scheduler is a pure scheduler in the sense that it performs no monitoring or tracking of status for the application, offering no guarantees on restarting failed tasks either due to application failure or hardware failures. The Scheduler performs its scheduling function based on the resource requirements of the applications; it does so based on the abstract notion of a Resource Container which incorporates resource elements such as memory, cpu, disk, network etc.

The NodeManager is the per-machine slave, which is responsible for launching the applications’ containers, monitoring their resource usage (cpu, memory, disk, network) and reporting the same to the ResourceManager.

The per-application ApplicationMaster has the responsibility of negotiating appropriate resource containers from the Scheduler, tracking their status and monitoring for progress. From the system perspective, the ApplicationMaster itself runs as a normal container.
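To make the division of labor concrete, here is a deliberately tiny Python model of the flow just described: a ResourceManager-like object hands out memory-only containers first-fit across nodes; the ApplicationMaster first receives its own container, then asks for task containers. Every class and method name here (`ToyResourceManager`, `allocate`, and so on) is hypothetical. This is not the YARN API, and YARN's real schedulers (such as the Capacity Scheduler) account for queues, capacities and more resource types.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """A NodeManager-like host; only memory is modeled here."""
    name: str
    free_mb: int


@dataclass
class Container:
    app_id: str
    node: str
    memory_mb: int


@dataclass
class ToyResourceManager:
    """Hypothetical stand-in for the ResourceManager's scheduler (not YARN's API)."""
    nodes: List[Node]
    allocated: List[Container] = field(default_factory=list)

    def _first_fit(self, memory_mb: int) -> Optional[Node]:
        return next((n for n in self.nodes if n.free_mb >= memory_mb), None)

    def allocate(self, app_id: str, memory_mb: int, count: int) -> List[Container]:
        """Grant up to `count` containers of `memory_mb` each, first-fit across nodes."""
        granted = []
        for _ in range(count):
            node = self._first_fit(memory_mb)
            if node is None:
                break  # no capacity left; an ApplicationMaster would ask again later
            node.free_mb -= memory_mb
            container = Container(app_id, node.name, memory_mb)
            self.allocated.append(container)
            granted.append(container)
        return granted


rm = ToyResourceManager([Node("nm1", 4096), Node("nm2", 2048)])
am_container = rm.allocate("app-1", 1024, 1)     # the ApplicationMaster runs in a container too
task_containers = rm.allocate("app-1", 2048, 3)  # the AM then negotiates task containers
print([c.node for c in task_containers])  # ['nm1', 'nm2']: the third request does not fit
```

Note that the scheduler only grants resources; tracking task progress and retrying failures is left to the ApplicationMaster, matching the "pure scheduler" description above.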

The figure at the start of this section shows an architectural view of YARN.

One of the crucial implementation details for MapReduce within the new YARN system that I’d like to point out is that we have reused the existing MapReduce framework without any major surgery. This was very important to ensure compatibility for existing MapReduce applications and users. More on this later.

The next post will dive further into the intricacies of the architecture and its benefits such as significantly better scaling, support for multiple data processing frameworks (MapReduce, MPI etc.) and cluster utilization.

Matt Winkler wrote the following on 5/29/2012 in a [HadoopOnAzureCTP] Re: Daytona support Yahoo! group thread:

In the hadoop 2.0 timeframe, there are interesting capabilities that YARN brings to the table. We are evaluating those now but don't have any concrete plans. As YARN evolves we will see other parts of the stack (like pig and hive) evolve to take advantage of the new model.

We are working closely to understand how this evolve[s] and what opportunities exist.

It’s a good bet that YARN will be part of the Apache Hadoop on Windows Azure picture in the future because Hortonworks is a Microsoft partner, but not the near future.


SQL Azure Database, Federations and Reporting

•• Cihan Biyikoglu (@cihangirb, pictured below) reported on 8/15/2012 Intergen’s Chris Auld talking about Federations & Fan-out Queries at TechEd 2012 New Zealand in early September:

If you are in Auckland between Sept 4 and 7, here is a fantastic talk to attend!

http://newzealand.msteched.com/topic/details/AZR304

Windows Azure SQL Database Deep Dive

Track: Windows Azure Level: 300 By: Chris Auld – CTO Intergen

Most developers are familiar with the concept of scaling out their application tier; with SQL Azure Federations it is now possible to scale out the data tier as well. In this session we will deep dive on building large-scale solutions on SQL Azure, covering patterns and techniques for building scalability into your relational databases. SQL Azure Federations allow databases to be spread over hundreds of nodes in the Azure data centre, with databases paid for by the day. This presents a unique avenue for dealing with particularly massive volumes of data, of user load, or both. This session will discuss how to design a schema for federation scale-out while still maintaining the value afforded by a true relational (SQL) database. We’ll look at approaches for minimizing cross-federation queries as well as approaches to fan-out queries when necessary. We will examine approaches for dealing with elastically scaling applications and other high-load scenarios.

Don’t miss it!

Repeated in the Cloud Computing Events section below.

Himanshu Singh (@himanshuks) posted Data Series: Control Database Access Using Windows Azure SQL Database Firewall Rules to the Windows Azure blog on 8/14/2012:

Editor's Note: Today's post comes from Kumar Vivek [pictured at right], Technical Writer in our Customer Experience team. This post provides an overview of the newly-introduced database-level firewall rules in Windows Azure SQL Database.

The Windows Azure SQL Database firewall helps protect your data by preventing access to your SQL Database server. Previously, you could control access by specifying firewall rules with ranges of acceptable IP addresses, but these rules were defined only at the server level and gave clients access to your entire SQL Database server, that is, all the databases within the same logical server. There was no way to restrict access to particular databases (for example, those containing sensitive information) within your SQL Database server.

Well, now you can! Introducing database-level firewall rules in Windows Azure SQL Database! In addition to the server-level firewall rules, you can now define firewall rules for each database in your SQL Database server to restrict access to selected clients. To do so, create a database-level firewall rule for the required database with an IP address range that lies outside the IP address ranges specified in the server-level firewall rules, and ensure that the IP address of the client falls within the range specified in the database-level firewall rule.

This is how the connection attempt from a client passes through the firewall rules in Windows Azure SQL Database:

- If the IP address of the request is within one of the ranges specified in the server-level firewall rules, the connection is granted to your SQL Database server.

- If the IP address of the request is not within one of the ranges specified in the server-level firewall rule, the database-level firewall rules are checked. If the IP address of the request is within one of the ranges specified in the database-level firewall rules, the connection is granted only to the database that has a matching database-level rule.

- If the IP address of the request is not within the ranges specified in any of the server-level or database-level firewall rules, the connection request fails.
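The three-step evaluation order above can be sketched in Python with the standard `ipaddress` module. This illustrates the documented order only, not the service's implementation; the rule ranges, the database names and the idea of passing the target database as a parameter are assumptions, and all addresses are assumed to be IPv4.

```python
import ipaddress
from typing import Dict, List, Optional, Tuple

IpRange = Tuple[str, str]  # (start_ip, end_ip), inclusive


def _in_any(ip: str, ranges: List[IpRange]) -> bool:
    addr = ipaddress.ip_address(ip)
    return any(
        ipaddress.ip_address(start) <= addr <= ipaddress.ip_address(end)
        for start, end in ranges
    )


def check_access(
    client_ip: str,
    server_rules: List[IpRange],
    db_rules: Dict[str, List[IpRange]],
    database: str,
) -> Optional[str]:
    # 1. Server-level match: access to the whole logical server.
    if _in_any(client_ip, server_rules):
        return "server"
    # 2. Otherwise, consult the requested database's own rules.
    if _in_any(client_ip, db_rules.get(database, [])):
        return database
    # 3. No match anywhere: the connection request fails.
    return None
```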

For detailed information, see the full article Windows Azure SQL Database Firewall.

Managing Database-Level Firewall Rules

Unlike server-level firewall rules, the database-level firewall rules are created per database and are stored in the individual databases (including master). The sys.database_firewall_rules view in each database displays the current database-level firewall rules. Further, you can use the sp_set_database_firewall_rule and sp_delete_database_firewall_rule stored procedures in each database to create and delete the database-level firewall rules for the database.
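As a sketch of how these stored procedures might be called from client code, the Python below only builds parameterized statements (with `?` placeholders, as used by ODBC drivers such as pyodbc) and checks that the IP range is ordered; it does not connect to anything. The parameter names `@name`, `@start_ip_address` and `@end_ip_address` follow the documented signature of `sp_set_database_firewall_rule`; the helper function names and the rule name in the usage note are hypothetical.

```python
import ipaddress
from typing import Tuple

# Lists the current database-level rules in the connected database.
LIST_RULES = "SELECT name, start_ip_address, end_ip_address FROM sys.database_firewall_rules"


def set_rule_statement(name: str, start_ip: str, end_ip: str) -> Tuple[str, Tuple[str, str, str]]:
    """Build a parameterized call to sp_set_database_firewall_rule."""
    start, end = ipaddress.IPv4Address(start_ip), ipaddress.IPv4Address(end_ip)
    if start > end:
        raise ValueError("start_ip must not be greater than end_ip")
    sql = ("EXEC sp_set_database_firewall_rule "
           "@name = ?, @start_ip_address = ?, @end_ip_address = ?")
    return sql, (name, str(start), str(end))


def delete_rule_statement(name: str) -> Tuple[str, Tuple[str]]:
    """Build a parameterized call to sp_delete_database_firewall_rule."""
    return "EXEC sp_delete_database_firewall_rule @name = ?", (name,)
```

With a DB-API driver you would run these on a connection to the target database (not master, unless the rule is for master), for example `cursor.execute(*set_rule_statement("AppRule", "192.168.1.10", "192.168.1.20"))` followed by a commit; this usage is an assumption rather than something taken from the article.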

For detailed information about managing database-level firewall rules, see the complete article How to: Configure the Database-Level Firewall Settings.

Cyrielle Simeone (@cyriellesimeone, pictured below) posted Thomas Mechelke’s Using a Windows Azure SQL Database with Autohosted apps for SharePoint on 8/13/2012:

This article is brought to you by Thomas Mechelke, Program Manager for SharePoint Developer Experience team. Thomas has been monitoring our new apps for Office and SharePoint forums and providing help on various topics. In today's post, Thomas will walk you through how to use a Windows Azure SQL Database with autohosted apps for SharePoint, as it is one of the most active threads on the forum. Thanks for reading!

Hi! My name is Thomas Mechelke. I'm a Program Manager on the SharePoint Developer Experience team. I've been focused on making sure that apps for SharePoint can be installed, uninstalled, and updated safely across SharePoint, Windows Azure, and Windows Azure SQL Database. I have also been working closely with the Visual Studio team to make the tools for building apps for SharePoint great. In this blog post I'll walk you through the process for adding a very simple Windows Azure SQL Database and accessing it from an autohosted app for SharePoint. My goal is to help you through the required configuration steps quickly, so you can get to the fun part of building your app.

Getting started

In a previous post, Jay described the experience of creating a new autohosted app for SharePoint. That will be our starting point.

If you haven't already, create a new app for SharePoint 2013 project and accept all the defaults. Change the app name if you like. I called mine "Autohosted App with DB". Accepting the defaults creates a solution with two projects: the SharePoint project with a default icon and app manifest, and a web project with some basic boilerplate code.

Configuring the SQL Server project

Autohosted apps for SharePoint support the design and deployment of a data tier application (DACPAC for short) to Windows Azure SQL Database. There are several ways to create a DACPAC file. The premier tools for creating a DACPAC are the SQL Server Data Tools, which are part of Visual Studio 2012.

Let's add a SQL Server Database Project to our autohosted app:

- Right-click the solution node in Solution Explorer, and then choose Add, New Project.

- Under the SQL Server node, find the SQL Server Database Project.

- Name the project (I called it AutohostedAppDB), and then choose OK.

A few steps are necessary to set up the relationship between the SQL Server project and the app for SharePoint, and to make sure the database we design will run both on the local machine for debugging and in Windows Azure SQL Database.

First, we need to set the target platform for the SQL Server Database project. To do that, right-click the database project node, choose Properties, and then select SQL Azure as the target platform on the Project Settings tab.

Next, we need to ensure that the database project will update the local instance of the database every time we debug our app. To do that, right-click the solution, and then choose Set Startup Projects. Then, choose Start as the action for your database project.

Now, build the app (right-click the solution node, and then choose Build). This generates a DACPAC file in the database output folder. In my case, the file is at /bin/Debug/projectname.dacpac.

Now we can link the DACPAC file with the app for SharePoint project by setting the SQL Package property.

Setting the SQL Package property ensures that whenever the SharePoint app is packaged for deployment to a SharePoint site, the DACPAC file is included and deployed to Windows Azure SQL Database, which is connected to the SharePoint farm.

This was the hard part. Now we can move into building the actual database and data access code.

Building the database

SQL Server Data Tools adds a new view to Visual Studio called SQL Server Object Explorer. If this view doesn't show up in your Visual Studio layout (usually as a tab next to Solution Explorer), you can activate it from the View menu. The view shows the local database generated from your SQL Server project under the node for (localdb)\YourProjectName.

This view is very helpful during debugging because it provides a simple way to get at the properties of various database objects and provides access to the data in tables.

Adding a table

For the purposes of this walkthrough, we'll keep it simple and just add one table:

- Right-click the database project, and then add a table named Messages.

- Add a column named Message of type nvarchar(50) to hold messages.

- Select the Id column, and then change the Is Identity property to be true.

After this is done, the table should look like this:

Great. Now we have a database and a table. Let's add some data.

To do that, we'll use a feature of data-tier applications called Post Deployment Scripts. These scripts are executed after the schema of the data-tier application has been deployed. They can be used to populate lookup tables and sample data. So that's what we'll do.

Add a script to the database project. That brings up a dialog box with several script options. Select Post Deployment Script, and then choose Add.

Use the script editor to add the following two lines:

delete from Messages

insert into Messages values ('Hello World!')

The delete ensures the table is empty whenever the script is run. For a production app, you'll want to be careful not to wipe out data that may have been entered by the end user.

Then we add the "Hello World!" message. That's it.

Configuring the web app for data access

After all this work, when we run the app we still see the same behavior as when we first created the project. Let's change that. The app for SharePoint knows about the database and will deploy it when required. The web app, however, does not yet know the database exists.

To change that, we need to add a line to the web.config file to hold the connection string. For that, we use a key named SqlAzureConnectionString in the <appSettings> section.

To add the property, create a key value pair in the <appSettings> section of the web.config file in your web app:

<add key="SqlAzureConnectionString" value="Data Source=(localdb)\YourDBProjectName;Initial Catalog=AutohostedAppDB;Integrated Security=True;Connect Timeout=30;Encrypt=False;TrustServerCertificate=False" />

The SqlAzureConnectionString property is special in that its value is set by SharePoint during app installation. So, as long as your web app always gets its connection string from this property, it will work whether it's installed on a local machine or in Office 365.

You may wonder why the connection string for the app is not stored in the <connectionStrings> section. We implemented it that way in the preview because we already know the implementation will change for the final release, to support geo-distributed disaster recovery (GeoDR) for app databases. In GeoDR, there will always be two synchronized copies of the database in different geographies. This requires the management of two connection strings, one for the active database and one for the backup. Managing those two strings is non-trivial and we don't want to require every app to implement the correct logic to deal with failovers. So, in the final design, SharePoint will provide an API to retrieve the current connection string and hide most of the complexity of GeoDR from the app.

I'll structure the sample code for the web app in such a way that it should be very easy to switch to the new approach when the GeoDR API is ready.
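As a minimal sketch of that structure, assuming two hypothetical connection strings for an active and a backup copy, the code below routes every request for a connection string through one function. The names primary, secondary, isPrimaryHealthy, and GetConnectionString are placeholders for illustration; they are not the GeoDR API, which had not been published when this post was written.

```csharp
using System;

// Hypothetical stand-ins for the two synchronized copies (not a real SharePoint API).
string primary = "Data Source=primary.example;Initial Catalog=AutohostedAppDB";
string secondary = "Data Source=secondary.example;Initial Catalog=AutohostedAppDB";

// Hypothetical health flag; a real implementation would get this from the platform.
bool isPrimaryHealthy = true;

// The single place the app asks for a connection string. Callers never choose a copy themselves.
string GetConnectionString() => isPrimaryHealthy ? primary : secondary;

Console.WriteLine(GetConnectionString());
isPrimaryHealthy = false;   // simulate a failover
Console.WriteLine(GetConnectionString());
```

Because callers only ever ask GetConnectionString, replacing its body with a platform call later leaves the data access code untouched.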

Writing the data access code

At last, the app is ready to work with the database. Let's write some data access code.

First let's write a few helper functions that set up the pattern to prepare for GeoDR in the future.

GetActiveSqlConnection()

GetActiveSqlConnection is the method to use anywhere in the app where you need a SqlConnection to the app database. When the GeoDR API becomes available, it will wrap it. For now, it will just get the current connection string from web.config and create a SqlConnection object:

// Create SqlConnection.

protected SqlConnection GetActiveSqlConnection()

{

return new SqlConnection(GetCurrentConnectionString());

}

GetCurrentConnectionString()

GetCurrentConnectionString retrieves the connection string from web.config and returns it as a string.

// Retrieve authoritative connection string.

protected string GetCurrentConnectionString()

{

return WebConfigurationManager.AppSettings["SqlAzureConnectionString"];

}

As with all statements about the future, things are subject to change—but this approach can help to protect you from making false assumptions about the reliability of the connection string in web.config.

With that, we are squarely in the realm of standard ADO.NET data access programming.

Add this code to the Page_Load event handler to retrieve and display data from the app database:

// Display the database server from the current connection string (don't do this in production).

Response.Write("<h2>Database Server</h2>");

Response.Write("<p>" + new SqlConnectionStringBuilder(GetCurrentConnectionString()).DataSource + "</p>");

// Display the query results.

Response.Write("<h2>SQL Data</h2>");

using (SqlConnection conn = GetActiveSqlConnection())

{

using (SqlCommand cmd = conn.CreateCommand())

{

conn.Open();

cmd.CommandText = "select * from Messages";

using (SqlDataReader reader = cmd.ExecuteReader())

{

while (reader.Read())

{

// HTML-encode data read from the database before writing it into the page.

Response.Write("<p>" + Server.HtmlEncode(reader["Message"].ToString()) + "</p>");

}

}

}

}

We are done. This should run. Let's hit F5 to see what happens.

It should look something like this. Note that the Database Server name should match your connection string in web.config.
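The Database Server line on the page comes straight from the Data Source value of the connection string. As a sketch, the provider-agnostic DbConnectionStringBuilder class in System.Data.Common can read that value; the connection string below is the local one from web.config, and the helper name Get is only for illustration.

```csharp
using System;
using System.Data.Common;

// Parse the local connection string from web.config with the generic builder.
var builder = new DbConnectionStringBuilder
{
    ConnectionString = @"Data Source=(localdb)\YourDBProjectName;Initial Catalog=AutohostedAppDB;" +
                       "Integrated Security=True;Connect Timeout=30;Encrypt=False;TrustServerCertificate=False"
};

// Keys are matched case-insensitively; TryGetValue avoids an exception when a key is absent.
string Get(string key) => builder.TryGetValue(key, out var value) && value is string s ? s : "(not set)";

string server = Get("Data Source");
string catalog = Get("Initial Catalog");
Console.WriteLine($"Server: {server}, Database: {catalog}");
```

Once the app is deployed, the same code reports whichever server SharePoint wrote into SqlAzureConnectionString.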

Now for the real test. Right-click the SharePoint project and choose Deploy. Your results should be similar to the following image.

The Database Server name will vary, but the output from the app should not.

Using Entity Framework

If you prefer working with the Entity Framework, you can generate an entity model from the database and easily create an Entity Framework connection string from the one provided by GetCurrentConnectionString(). Use code like this:

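// Get Entity Framework connection string.

protected string GetEntityFrameworkConnectionString()

{

// Wrap the SQL connection string; "res://*/" assumes the model metadata is embedded in the app's assemblies.

EntityConnectionStringBuilder efBuilder = new EntityConnectionStringBuilder();

efBuilder.Provider = "System.Data.SqlClient";

efBuilder.ProviderConnectionString = GetCurrentConnectionString();

efBuilder.Metadata = "res://*/";

return efBuilder.ConnectionString;

}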
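An Entity Framework connection string wraps the ordinary SQL connection string: it names the model metadata and the ADO.NET provider, and carries the SQL string as a quoted "provider connection string" value. The sketch below uses the generic DbConnectionStringBuilder only to show that shape and to check that the embedded string survives a round trip; the res://*/ metadata value is an assumption that fits a model whose metadata is embedded in the app's assemblies.

```csharp
using System;
using System.Data.Common;

string sqlConnectionString =
    @"Data Source=(localdb)\YourDBProjectName;Initial Catalog=AutohostedAppDB;Integrated Security=True";

// Compose the three parts of an EF connection string. The builder quotes the
// provider connection string because it contains semicolons and equals signs.
var ef = new DbConnectionStringBuilder();
ef["metadata"] = "res://*/";                  // assumption: metadata embedded as resources
ef["provider"] = "System.Data.SqlClient";
ef["provider connection string"] = sqlConnectionString;

Console.WriteLine(ef.ConnectionString);

// Parsing the result gives back the original SQL connection string unchanged.
var parsed = new DbConnectionStringBuilder { ConnectionString = ef.ConnectionString };
string roundTripped = (string)parsed["provider connection string"];
```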

We need your feedback

I hope this post helps you get working on the next cool app with SharePoint, ASP.NET, and SQL. We'd love to hear your feedback about where you want us to take the platform and the tools to enable you to build great apps for SharePoint and Office.

Han reported SQL Data Sync Preview 6 is now live! in an 8/13/2012 post to the Sync Team blog:

SQL Data Sync Preview 6 has been successfully released to production. In this release, there are 2 major improvements:

- Enhanced overall performance on initial provisioning and sync tasks

- Enhanced sync performance between on-premises databases and Windows Azure SQL databases

Please download the new Agent from http://www.microsoft.com/en-us/download/details.aspx?id=27693

Also, from now on, we will call all subsequent preview releases Preview instead of the usual Service Update.

In another episode of Microsoft’s recent branding frenzy, SQL Azure Data Sync has become simply SQL Data Sync, similar to SQL Azure -> Windows Azure SQL Database, but (strangely) missing the Windows Azure prefix.

Cihan Biyikoglu (@cihangirb) described Setting up Azure Data Sync Service with Federations in Windows Azure SQL Database For Reference Data Replication in an 8/12/2012 post:

In a previous post, I talked about the ability to use Data Sync Service with Federation Members. In this post, I'd like to walk you through the details.

The scenario here is to sync a reference table called language_codes_tbl across federation members. The table represents language codes for the blogs_federation in my BlogsRUs_DB.

In my case, the topology I created has the root database as a hub and all members defined as regular edge databases. Here is what you need to do to get the same setup:

1. Create a “sync server” and a “sync group” called sync_codes

2. Add the root database, blogsrus_db, as the hub database, with conflict resolution set to “Hub Wins” and the schedule set to every 5 minutes.

3. Define the Sync dataset as the dbo.language_code_tbl

4. Add federation member databases into the sync_codes sync group and “deploy” the changes.

With this setup, replication happens bi-directionally. This means, I can update any one of the federation member dbs and the changes will first get replicated to the root db copy of my reference table and then will be replicated to all other federation member dbs automatically by SQL Data Sync. SQL Data Sync provides powerful control over the direction of data flow and conflict resolution to create the desired topology for syncing reference data in federation members.
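The data flow described above can be illustrated with a small in-memory simulation. This is a hypothetical model for intuition only, not how SQL Data Sync is implemented: each database keeps its rows plus the keys changed since the last sync, and a sync round first uploads member changes to the hub (skipping rows the hub also changed, which is what “Hub Wins” means here) and then copies the hub's table down to every member.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical model: each database has a copy of the reference table and the keys changed locally since the last sync.
(Dictionary<string, string> Rows, HashSet<string> Changed) NewDb() =>
    (new Dictionary<string, string>(), new HashSet<string>());

var hub = NewDb();                                  // the root database
var members = new[] { NewDb(), NewDb(), NewDb() };  // federation members (edge databases)

void Update((Dictionary<string, string> Rows, HashSet<string> Changed) db, string key, string value)
{
    db.Rows[key] = value;
    db.Changed.Add(key);
}

void SyncRound()
{
    // Upload: member changes flow to the hub, except rows the hub itself changed ("Hub Wins").
    foreach (var m in members)
    {
        foreach (string key in m.Changed)
            if (!hub.Changed.Contains(key))
                hub.Rows[key] = m.Rows[key];
        m.Changed.Clear();
    }

    // Download: every member receives the hub's copy of the table.
    foreach (var m in members)
    {
        m.Rows.Clear();
        foreach (var row in hub.Rows)
            m.Rows[row.Key] = row.Value;
    }
    hub.Changed.Clear();
}

Update(hub, "en", "English");
SyncRound();                                   // all members now have "en"

Update(members[1], "fr", "French");            // edit made directly in one member
SyncRound();                                   // reaches the hub, then every other member

Update(hub, "de", "German (hub)");             // conflicting edits to the same row
Update(members[2], "de", "German (member)");
SyncRound();                                   // Hub Wins: everyone keeps the hub's value

foreach (var m in members)
    Console.WriteLine(string.Join(", ", m.Rows));
```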

Handling Federation Repartitioning Operations

This will work as long as you don’t reconfigure these members with an operation like ALTER FEDERATION … SPLIT. With SPLIT, we drop the existing member database and create 2 new member databases that contain the redistributed data based on the new split point of the federation. Let's assume we issue the following statement to split the existing range 350000-400000.

alter federation blogs_federation split at (id=355000)

With that, you will notice that the sync group will start reporting an error on the member that is impacted by the split operation – see the red error indicator marked below the database icon.

1. Given this database no longer exists, you need to deprovision the database from the sync group. To do this, first remove the database with the “remove database” button above the topology area. You finalize the operation by deploying the change using the “deploy” button above. Since the database is dropped, you will need to do a forced removal after the deploy.

2. Next, you need to add in the 2 new member names that are created by the SPLIT operation. We do this by running the following script. Once you have the new database names for the members covering the new ranges 350000-355000 and 355000-400000, you can follow step #4 above to add the names to the sync group.
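The two new ranges follow directly from the split point. A minimal sketch, assuming the usual half-open convention for federation member ranges (low value inclusive, high value exclusive); Split is a hypothetical helper, not part of any SQL Database API.

```csharp
using System;

// Hypothetical helper mirroring what SPLIT AT does to one member's [Low, High) range.
((long Low, long High) Left, (long Low, long High) Right) Split((long Low, long High) range, long splitPoint)
{
    if (splitPoint <= range.Low || splitPoint >= range.High)
        throw new ArgumentOutOfRangeException(nameof(splitPoint), "The split point must fall strictly inside the range.");
    return ((range.Low, splitPoint), (splitPoint, range.High));
}

// alter federation blogs_federation split at (id=355000) applied to the member covering [350000, 400000)
var (left, right) = Split((350000, 400000), 355000);
Console.WriteLine($"[{left.Low}, {left.High}) and [{right.Low}, {right.High})");
```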

Limitations with Azure Data Sync Service:

There are a few limitations of SQL Data Sync to be aware of, however. First, the service's lowest replication latency is 5 minutes. There is no scripting support for setting up the data sync relationships, which means you will need to enter all the database names through the UI by hand. SQL Data Sync also does not currently allow synchronization between more than 30 databases in the sync groups of a single sync server, and you can create only a single sync server with DSS today. SQL Data Sync is currently in preview and is continuously collecting feedback. Vote for your favorite request or add a new one at the SQL Data Sync Feature Voting website!


Marketplace DataMarket, Cloud Numerics, Big Data and OData

•• Doug Mahugh (@dmahugh) described Using the Cloudant Data Layer for Windows Azure in an 8/16/2012 post to the Interoperability @ Microsoft blog:

If you need a highly scalable data layer for your cloud service or application running on Windows Azure, the Cloudant Data Layer for Windows Azure may be a great fit. This service, which was announced in preview mode in June and is now in beta, delivers Cloudant’s “database as a service” offering on Windows Azure.

From Cloudant’s data layer you’ll get rich support for data replication and synchronization scenarios such as online/offline data access for mobile device support, a RESTful Apache CouchDB-compatible API, and powerful features including full-text search, geo-location, federated analytics, schema-less document collections, and many others. And perhaps the greatest benefit of all is what you don’t get with Cloudant’s approach: you’ll have no responsibility for provisioning, deploying, or managing your data layer. The experts at Cloudant take care of those details, while you stay focused on building applications and cloud services that use the data layer.

You can do your development in any of the many languages supported on Windows Azure, such as .NET, Node.JS, Java, PHP, or Python. In addition, you’ll get the benefits of Windows Azure’s CDN (Content Delivery Network) for low-latency data access in diverse locations. Cloudant pushes your data to data centers all around the globe, keeping it close to the people and services who need to consume it.

For a free trial of the Cloudant Data Layer for Windows Azure, create a new account on the signup page and select “Lagoon” as your data center location.

For an example of how to use the Cloudant Data Layer, see the tutorial “Using the Cloudant Data Layer for Windows Azure,” which takes you through the steps needed to set up an account, create a database, configure access permissions, and develop a simple PHP-based photo album application that uses the database to store text and images:

The sample app uses the SAG for CouchDB library for simple data access. SAG works against any Apache CouchDB database, as well as Cloudant’s CouchDB-compatible API for the data layer.

My colleague Olivier Bloch has provided another great example of using existing CouchDB libraries to simplify development when using the Cloudant Data Layer. In this video, he demonstrates how to put a nice Windows 8 design front end on top of the photo album demo app:

This example takes advantage of the couch.js library available from the Apache CouchDB project, as well as the GridApp template that comes with Visual Studio 2012. Olivier shows how to quickly create the app running against a local CouchDB installation and then, by simply changing the connection string, run it live against the Cloudant data layer on Windows Azure.
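Because Cloudant exposes a CouchDB-compatible REST API, the only thing that has to change between a local CouchDB and the hosted data layer is the base address. The sketch below builds, without sending, the CouchDB request that stores a JSON document (PUT /{database}/{docid}); localhost:5984 is CouchDB's default local address, while youraccount.cloudant.com, the photos database, and the album1 document ID are placeholders.

```csharp
using System;
using System.Net.Http;
using System.Text;

// Only the base address differs; "youraccount" is a placeholder account name.
var local = new Uri("http://localhost:5984/");
var hosted = new Uri("https://youraccount.cloudant.com/");

// Build (but do not send) a CouchDB document write: PUT /{database}/{docid} with a JSON body.
HttpRequestMessage PutDocument(Uri baseUri, string database, string docId, string json) =>
    new HttpRequestMessage(HttpMethod.Put,
        new Uri(baseUri, $"{Uri.EscapeDataString(database)}/{Uri.EscapeDataString(docId)}"))
    {
        Content = new StringContent(json, Encoding.UTF8, "application/json")
    };

var localRequest = PutDocument(local, "photos", "album1", "{\"title\":\"Vacation\"}");
var hostedRequest = PutDocument(hosted, "photos", "album1", "{\"title\":\"Vacation\"}");

Console.WriteLine($"{localRequest.Method} {localRequest.RequestUri}");
Console.WriteLine($"{hostedRequest.Method} {hostedRequest.RequestUri}");
```

Sending either request with HttpClient.SendAsync would work the same way; only the base URI, plus whatever credentials the hosted service requires, differs.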

The Cloudant data layer is a great example of the new types of capabilities – and developer opportunities – that have been created by Windows Azure’s support for Linux virtual machines. As Sam Bisbee noted in Cloudant’s announcement of the service, “The addition of Linux-based virtual machines made it possible for us to offer the Cloudant Data Layer service on Azure.”

If you’re looking for a way to quickly build apps and services on top of a scalable high-performance data layer, check out what the Cloudant Data Layer for Windows Azure has to offer!

•• Alex James (@adjames) described OData support in ASP.NET Web API in an 8/15/2012 post updated 8/16/2012:

UPDATE 2 @1:21 pm on 16th August (PST):

There is an updated version of the nuget package that resolves the previous dependency issues. Oh, and my comments are now working again.

UPDATE 1 @10:00 am on 16th August (PST):

If you’ve tried using the preview nuget package and had problems, rest assured we are working on the issue (which is a dependency version issue). Essentially the preview and one of its dependencies have conflicting dependencies on specific versions of packages. The ETA for a fix that resolves this nuget issue is later today.

Also if you’ve made a comment and it isn’t showing up, don’t worry it will. I’m currently having technical problems approving comments.

Earlier versions of ASP.NET Web API included basic support for OData, allowing you to use the OData query options $filter, $orderby, $top and $skip to shape the results of controller actions annotated with the [Queryable] attribute. This was very useful and worked across formats. That said, true support for the OData format was rather limited. In fact, we barely scratched the surface of OData; for example, there was no support for creates, updates, deletes, $metadata, code generation, etc.

To address this, we’ve created a preview of a new nuget package (and codeplex project) for building OData services that:

- Continues to support the [Queryable] attribute, but also allows you to drop down to an Abstract Syntax Tree (or AST) representing $filter & $orderby.

- Adds ways to infer a model by convention or explicitly customize a model that will be familiar to anyone who’s used Entity Framework Code First.

- Adds support for service documents and $metadata so you can generate clients (in .NET, Windows Phone, Metro etc) for your Web API.

- Adds support for creating, updating, partially updating and deleting entities.

- Adds support for querying and manipulating relationships between entities.

- Adds the ability to create relationship links that wire up to your routes.

- Adds support for complex types.

- Adds support for Any/All in $filter.

- Adds the ability to control null propagation if needed (for example, to avoid null reference exceptions when working with LINQ to Objects).

- Refactors everything to build upon the same foundation as WCF Data Services, namely ODataLib.

In fact, this is an early preview of a new OData server stack, built to take advantage of Web API's inherent flexibility and power, which complements WCF Data Services.

This preview ships with a companion OData sample service built using Web API. The sample includes three controllers that each expose an OData EntitySet with varying capabilities. One is rudimentary, supporting just query and create; the other two are more complete, supporting Query, Create, Update, Patch, Delete and Relationships. The first complete example does everything by hand; the second derives from a sample base controller called EntitySetController that takes care of a lot of the plumbing for you and allows you to focus on the business logic.

The rest of this blog post will introduce you to the components that make up this preview and how to stitch them together to create an OData service, using the code from this sample from the ASP.NET Web Stack Sample repository if you want to follow along.

[Queryable] aka supporting OData Query

If you want to support OData query options without necessarily supporting the OData formats, all you need to do is put the [Queryable] attribute on an action that returns either IQueryable<> or IEnumerable<>, like this:

[Queryable]

public IQueryable<Supplier> GetSuppliers()

{

return _db.Suppliers;

}

Here _db is an Entity Framework DbContext. If this action is routed to, say, ~/Suppliers, then any OData query options applied to that URI will be applied by an action filter before the result is sent to the client.

For example, this: ~/Suppliers?$filter=Name eq 'Microsoft'

will pass the result of GetSuppliers().Where(s => s.Name == "Microsoft") to the formatter.
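To make the mapping concrete, here is a sketch in plain LINQ to Objects of what a query combining several options amounts to. The supplier data is made up, and the sketch assumes the OData rule that the options are evaluated as $filter, then $orderby, then $skip, then $top, whatever their order in the URI.

```csharp
using System;
using System.Linq;

// Made-up in-memory data standing in for _db.Suppliers.
var suppliers = new[]
{
    (ID: 1, Name: "Microsoft"),
    (ID: 2, Name: "Contoso"),
    (ID: 3, Name: "Fabrikam"),
    (ID: 4, Name: "Microsoft"),
};

// ~/Suppliers?$filter=Name eq 'Microsoft'&$orderby=ID desc&$skip=1&$top=1
var page = suppliers
    .Where(s => s.Name == "Microsoft")   // $filter=Name eq 'Microsoft'
    .OrderByDescending(s => s.ID)        // $orderby=ID desc
    .Skip(1)                             // $skip=1
    .Take(1)                             // $top=1
    .ToList();

foreach (var s in page)
    Console.WriteLine($"{s.ID}: {s.Name}");
```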

This all works as it did in earlier previews, although there are a couple of, hopefully temporary, caveats:

- The element type can’t be primitive (for example IQueryable<string>).

- Somehow the [Queryable] attribute must find a key property. This happens automatically if your element type has an ID property; if not, you might need to manually configure the model (see Setting up your model).
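A rough idea of what finding a key by convention involves: the hypothetical FindKey helper below looks for a public property named ID, ignoring case. The real convention model builder may apply additional rules, so treat this only as an illustration of why a type without such a property needs explicit configuration.

```csharp
using System;
using System.Linq;
using System.Reflection;

// Hypothetical, simplified key discovery: a public instance property named "ID", ignoring case.
PropertyInfo? FindKey(Type type) =>
    type.GetProperties(BindingFlags.Public | BindingFlags.Instance)
        .FirstOrDefault(p => string.Equals(p.Name, "ID", StringComparison.OrdinalIgnoreCase));

var withKey = new { ID = 7, Name = "Contoso" };
var withoutKey = new { Code = "CTS", Name = "Contoso" };

// Prints the key property name, or a hint when no key can be inferred.
Console.WriteLine(FindKey(withKey.GetType())?.Name ?? "no key found: configure the model explicitly");
Console.WriteLine(FindKey(withoutKey.GetType())?.Name ?? "no key found: configure the model explicitly");
```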

Doing more OData

If you want to support more of OData, for example, the official OData formats or allow for more than just reads, you need to configure a few things.

The ODataService.Sample shows all of this working end to end, and the remainder of this post will talk you through what that code is doing.

Setting up your model

The first thing you need is a model. The way you do this is very similar to the Entity Framework Code First approach, but with a few OData specific tweaks:

- The ability to configure how EditLinks, SelfLinks and Ids are generated.

- The ability to configure how links to related entities are generated.

- Support for multiple entity sets with the same type.

If you use the ODataConventionModelBuilder most of this is done automatically for you, all you need to do is tell the builder what sets you want, for example this code:

ODataModelBuilder modelBuilder = new ODataConventionModelBuilder();

modelBuilder.EntitySet<Product>("Products");

modelBuilder.EntitySet<ProductFamily>("ProductFamilies");

modelBuilder.EntitySet<Supplier>("Suppliers");

IEdmModel model = modelBuilder.GetEdmModel();

This code builds a model with 3 EntitySets (Products, ProductFamilies and Suppliers) where the EntityTypes of those sets are inferred from the CLR types (Product, ProductFamily and Supplier) automatically, and where EditLinks, SelfLinks and IdLinks are all configured to use the default OData routes.

You can also take full control of the model by using the ODataModelBuilder class, here you explicitly add EntityTypes, Properties, Keys, NavigationProperties and how to route Links by hand. For example this code:

var products = modelBuilder.EntitySet<Product>("Products");

products.HasEditLink(entityContext => entityContext.UrlHelper.Link(

ODataRouteNames.GetById,

new { controller = "Products", id = entityContext.EntityInstance.ID }

));

var product = products.EntityType;

product.HasKey(p => p.ID);

product.Property(p => p.Name);

product.Property(p => p.ReleaseDate);

product.Property(p => p.SupportedUntil);

This code explicitly adds an EntitySet called Products to the model and configures the EditLink (and, unless overridden, the SelfLink and IdLink) generation so that it uses the ODataRouteNames.GetById route. As you can see, the code needed to configure the Key and Properties is very similar to Code First.

For a more complete example, take a look at the GetExplicitEdmModel() method in the sample; it builds, by hand, exactly the same model as the ODataConventionModelBuilder.

Setting up the formatters, routes and built-in controllers

To use the OData formats you first need to register an ODataMediaTypeFormatter, which will need the model you built previously:

// Create the OData formatter and give it the model

ODataMediaTypeFormatter odataFormatter = new ODataMediaTypeFormatter(model);

// Register the OData formatter

configuration.Formatters.Insert(0, odataFormatter);

Next, you need to set up some routes to handle common OData requests. Below are the routes required for a read/write OData model built using the OData routing conventions that also supports client-side code generation (vital if you want a WCF DS client application to talk to your service).

// Metadata routes to support $metadata and code generation in the WCF Data Service client.

configuration.Routes.MapHttpRoute(

ODataRouteNames.Metadata,

"$metadata",

new { Controller = "ODataMetadata", Action = "GetMetadata" }

);

configuration.Routes.MapHttpRoute(

ODataRouteNames.ServiceDocument,

"",

new { Controller = "ODataMetadata", Action = "GetServiceDocument" }

);

// Relationship routes (notice the parameter is {parentId}, not {id}; this avoids colliding with GetById(id)).

// This code handles requests like ~/ProductFamilies(1)/Products

configuration.Routes.MapHttpRoute(ODataRouteNames.PropertyNavigation, "{controller}({parentId})/{navigationProperty}");

// Route for manipulating links; this allows people to create and delete relationships between entities

configuration.Routes.MapHttpRoute(ODataRouteNames.Link, "{controller}({id})/$links/{navigationProperty}");

// Routes for both producing and handling URLs like ~/Products(1), ~/Products() and ~/Products

configuration.Routes.MapHttpRoute(ODataRouteNames.GetById, "{controller}({id})");

configuration.Routes.MapHttpRoute(ODataRouteNames.DefaultWithParentheses, "{controller}()");

configuration.Routes.MapHttpRoute(ODataRouteNames.Default, "{controller}");

One thing to note is the way that the ODataRouteNames.PropertyNavigation route attempts to handle requests to URLs like ~/ProductFamilies(1)/Products and ~/Products(1)/Family: essentially, a single route for all navigations. For this to work without requiring a single action for all navigation properties, we register a custom action selector that builds the action name using the {navigationProperty} parameter of the PropertyNavigation route:

// Register an Action selector that can include template parameters in the name

configuration.Services.Replace(typeof(IHttpActionSelector), new ODataActionSelector());

This custom action selector will dispatch a request to GET ~/ProductFamilies(1)/Products to an action called GetProducts(int parentId) on the ProductFamilies controller.
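The action-name construction described above can be sketched with a regular expression for the {controller}({parentId})/{navigationProperty} template. ResolveNavigation is a hypothetical helper, not the ODataActionSelector implementation; it also assumes the action name is the HTTP method in Pascal case followed by the navigation property name.

```csharp
using System;
using System.Text.RegularExpressions;

// Hypothetical sketch: match "{controller}({parentId})/{navigationProperty}" and build the action name.
(string Controller, string Action, int ParentId)? ResolveNavigation(string httpMethod, string path)
{
    Match m = Regex.Match(path, @"^(?<controller>\w+)\((?<parentId>\d+)\)/(?<nav>\w+)$");
    if (!m.Success) return null;

    // "GET" + "Products" -> "GetProducts": the method name is normalized to Pascal case.
    string verb = char.ToUpperInvariant(httpMethod[0]) + httpMethod.Substring(1).ToLowerInvariant();
    return (m.Groups["controller"].Value, verb + m.Groups["nav"].Value, int.Parse(m.Groups["parentId"].Value));
}

Console.WriteLine(ResolveNavigation("GET", "ProductFamilies(1)/Products"));
Console.WriteLine(ResolveNavigation("GET", "Products(1)/Family"));
```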

At this point, our previous GetSuppliers action can return OData formats. However, it will still produce links that won’t work when dereferenced. To fix this, we need to start creating our controllers.

Adding Support for OData requests

In our model we added 3 entity sets: Products, ProductFamilies and Suppliers. So first we create 3 controllers, called ProductsController, ProductFamiliesController and SuppliersController, respectively.

Queries

By convention, your controllers should have a method called Getxxx() that returns IQueryable<T>. Here T is the CLR type backing your EntitySet, so, for example, on the ProductsController, which is for the Products entity set, T should be Product, and the action should look something like this:

[Queryable]

public IQueryable<Product> GetProducts()

{

return _db.Products;

}

As you can see, this is the same as before, but now it is participating in a ‘compliant’ OData service. Inside this method you can do all sorts of things: you can add additional filters based on the identity of the caller, you can do auditing, you can do logging, and you can aggregate data from multiple sources.

There is even a way to drop down a layer so you get to see the OData query that has been received. Doing this allows you to process the query yourself in whatever way is appropriate for your data sources; for example, you might not have an IQueryable you can use. See ODataQueryOptions for more on this.

At this point, because you’ve registered a model and you’ve set up routes for $metadata and the OData Service Document, you should be able to use Visual Studio’s Add Service Reference to generate a set of client proxy classes that you could use like this:

foreach (var product in ctx.Products.Where(p => p.Name.StartsWith("MS")))

{

Console.WriteLine(product.Name);

}

The WCF DS client will then translate this into a GET request like this:

~/Products?$filter=startswith(Name,'MS')

And everything should just work.

Get by Key

To retrieve an individual item using its key in OData, you send a GET request to a URL like this: ~/Products(1). The ODataRouteNames.GetById route handles requests like this:

public HttpResponseMessage GetById(int id)

{

Supplier supplier = _db.Suppliers.SingleOrDefault(s => s.ID == id);

if (supplier == null)

{

return Request.CreateResponse(HttpStatusCode.NotFound);

}

else

{

return Request.CreateResponse(HttpStatusCode.OK, supplier);

}

}

As you can see, the code simply attempts to retrieve an item by ID, and then either returns it with a 200 OK response or returns 404 Not Found.

If you have WCF DS proxy classes on the client, a request like this:

ctx.Products.Where(p => p.ID == 5).FirstOrDefault();

Should now hit this action.

Inserts (POST requests)

To create a new entity in OData, you POST a new entity directly to the URL that represents the EntitySet. This means that insert requests, just like queries, end up matching the ODataRouteNames.Default route; however, Web API’s action selector picks actions prefixed with Post for POST requests, to distinguish them from GET requests for queries:

public HttpResponseMessage PostProduct(Product product)

{

product.Family = null;

Product addedProduct = _db.Products.Add(product);

_db.SaveChanges();

var response = Request.CreateResponse(HttpStatusCode.Created, addedProduct);

response.Headers.Location = new Uri(

Url.Link(ODataRouteNames.GetById, new { Controller = "Products", Id = addedProduct.ID })

);

return response;

}

Here we simply add the product to our Entity Framework context and then call SaveChanges(). The only interesting bit is the way we set the Location header using the ODataRouteNames.GetById route (i.e., the default OData route for Self/Edit links).

Updates (PUT requests)

To replace an Entity in OData you PUT the updated version of the entity to the uri specified in the EditLink of the entity. This will match the GetById route, but because it is a PUT request it will look for an Action name prefixed with Put.

This example shows how to do this:

public HttpResponseMessage Put(int id, Product update)

{

if (!_db.Products.Any(p => p.ID == id))

{

throw ODataErrors.EntityNotFound(Request);

}

update.ID = id; // Ignore the ID in the entity; use the ID in the URL.

_db.Products.Attach(update);

_db.Entry(update).State = System.Data.EntityState.Modified;

_db.SaveChanges();

return Request.CreateResponse(HttpStatusCode.NoContent);

}

The code is pretty simple. First we verify that the entity the client is trying to update actually exists; then we ignore the ID in the entity and instead use the ID extracted from the URI of the request. This stops clients from updating one entity using the EditLink of another entity.

Once all that validation is out of the way, we attach the updated entity and tell EF it has been modified before calling SaveChanges() and finally returning a No Content response.

As you can see, this leverages a helper class called ODataErrors, whose job is simply to raise OData-compliant errors. The sample uses the EntityNotFound method quite frequently, and it follows the general pattern for failing in an OData-compliant way, so let's take a peek at its implementation:

public static HttpResponseException EntityNotFound(this HttpRequestMessage request)

{

return new HttpResponseException(

request.CreateResponse(

HttpStatusCode.NotFound,

new ODataError

{

Message = "The entity was not found.",

MessageLanguage = "en-US",

ErrorCode = "Entity Not Found."

}

)

);

}

We create an HttpResponseException with a nested Not Found response, the body of which contains an ODataError. By putting an ODataError in the body, we get the ODataMediaTypeFormatter to format the body as a valid OData error message, according to the content type requested by the client.

NOTE: Over time we will try to teach the ODataMediaTypeFormatter to handle HttpError as well by using a default translation from HttpError to ODataError.
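For orientation, OData v2/v3 JSON error responses nest the code and a language-tagged message under a top-level error object. The sketch below only builds that shape with System.Text.Json, using the values from EntityNotFound, to show how ErrorCode, Message, and MessageLanguage line up; the actual serialization is done by the ODataMediaTypeFormatter and may include additional members.

```csharp
using System;
using System.Text.Json;

// Values taken from the EntityNotFound helper.
string errorCode = "Entity Not Found.";
string message = "The entity was not found.";
string language = "en-US";

// Nesting used by OData v2/v3 JSON error bodies: error -> code, message -> lang, value.
var body = new
{
    error = new
    {
        code = errorCode,
        message = new { lang = language, value = message }
    }
};

string json = JsonSerializer.Serialize(body);
Console.WriteLine(json);
```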

Partial Updates (PATCH requests)

PUT requests have replace semantics, which makes updates all or nothing: you have to send all the properties even if only a subset have changed. This is where PATCH comes in. PATCH allows clients to send just the modified properties on the wire, essentially allowing for partial updates.

This example shows an implementation of Patch for Products:

public HttpResponseMessage PatchProduct(int id, Delta<Product> product)
{
    Product dbProduct = _db.Products.SingleOrDefault(p => p.ID == id);
    if (dbProduct == null)
    {
        throw new HttpResponseException(HttpStatusCode.NotFound);
    }
    product.Patch(dbProduct);
    _db.SaveChanges();
    return Request.CreateResponse(HttpStatusCode.NoContent);
}

Notice that the method receives a Delta<Product> rather than a Product.

We do this because, if we use a Product directly and set the properties that came on the wire as part of the patch request, we would not be able to tell why a property has a default value. It could be because the request set the property to its default value or it could be because the property hasn’t been set yet. This could lead to mistakenly resetting properties the client doesn’t want reset. Can anyone say ‘data corruption’?

The Delta<Product> class is a dynamic class that acts as a lightweight proxy for a Product. It allows you to set any Product property, but it also remembers which properties you have set. It uses this knowledge when you call .Patch(..) to copy across only properties that have been set.

Given this, we simply retrieve the product from the database and call Patch to apply the requested changes to that entity. Once that is done, we call SaveChanges to push the changes to the database.
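To make the "remembers which properties you have set" idea concrete, here is a minimal sketch of a change-tracking proxy. ChangeSet<T>, its members, and the Product properties Name and Price are hypothetical; this is not the Web API Delta<T> implementation (which is a dynamic object). It records each set property in a dictionary keyed by name and uses reflection in Patch to copy only those properties onto the target.

```csharp
#nullable enable
using System;
using System.Collections.Generic;
using System.Reflection;

// Hypothetical, simplified stand-in for Delta<T>: it records which properties
// the client actually sent, so a default value (0, null, false) that was sent
// can be told apart from a property that was never sent.
public sealed class ChangeSet<T> where T : class
{
    private readonly Dictionary<string, object?> _changed = new();

    // Records a new value for a public, writable property of T.
    // Returns false if the property does not exist or the value has the wrong type.
    public bool TrySetPropertyValue(string name, object? value)
    {
        PropertyInfo? prop = typeof(T).GetProperty(name, BindingFlags.Public | BindingFlags.Instance);
        if (prop == null || !prop.CanWrite)
        {
            return false;
        }
        bool acceptsNull = !prop.PropertyType.IsValueType || Nullable.GetUnderlyingType(prop.PropertyType) != null;
        if (value == null ? !acceptsNull : !prop.PropertyType.IsInstanceOfType(value))
        {
            return false;
        }
        _changed[name] = value;
        return true;
    }

    public IReadOnlyCollection<string> ChangedPropertyNames => _changed.Keys;

    // Copies only the recorded properties onto the target entity.
    public void Patch(T target)
    {
        foreach (KeyValuePair<string, object?> change in _changed)
        {
            typeof(T).GetProperty(change.Key)!.SetValue(target, change.Value);
        }
    }
}

// Hypothetical entity used only for this sketch.
public class Product
{
    public int ID { get; set; }
    public string Name { get; set; } = "";
    public decimal Price { get; set; }
}
```

Because the dictionary records that Price was sent, patching with a Price of 0 overwrites the stored price, while a property that was never set (such as Name) is left untouched.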

Deletes (DELETE requests)

To delete an entity, you send a DELETE request to its editlink. This is similar to GetById, Put and Patch, except that this time Web API looks for an action prefixed with Delete, something like this:

public HttpResponseMessage DeleteProduct(int id)
{
    Product toDelete = _db.Products.FirstOrDefault(p => p.ID == id);
    if (toDelete == null)
    {
        throw ODataErrors.EntityNotFound(Request);
    }
    _db.Products.Remove(toDelete);
    _db.SaveChanges();
    return Request.CreateResponse(HttpStatusCode.Accepted);
}

As you can see, if the entity is found, it is simply deleted and we return an Accepted response.

Following Navigations

To address related entities in OData, you typically start with the URI of a particular entity and then append the name of the navigation property. For example, to retrieve the Family for Product 15, you send a GET request to ~/Products(15)/Family.
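The addressing convention is simple string composition. The sketch below builds the relative paths used in this post (entity, navigation, and $links). ODataPaths is a hypothetical helper, and it assumes single-part integer keys; string or composite keys need different key formatting.

```csharp
using System.Globalization;

// Hypothetical helpers that build the relative OData addresses used in this post.
// Assumes single-part integer keys.
public static class ODataPaths
{
    // ~/Products(15)
    public static string Entity(string entitySet, int key) =>
        entitySet + "(" + key.ToString(CultureInfo.InvariantCulture) + ")";

    // ~/Products(15)/Family
    public static string Navigation(string entitySet, int key, string navigationProperty) =>
        Entity(entitySet, key) + "/" + navigationProperty;

    // ~/Products(1)/$links/Family
    public static string Link(string entitySet, int key, string navigationProperty) =>
        Entity(entitySet, key) + "/$links/" + navigationProperty;
}
```

For example, ODataPaths.Navigation("Products", 15, "Family") yields "Products(15)/Family", which a client would resolve against the service root.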

Implementing this is pretty simple:

public ProductFamily GetFamily(int parentId)
{
    return _db.Products.Where(p => p.ID == parentId).Select(p => p.Family).SingleOrDefault();
}

This matches the ODataRouteNames.PropertyNavigation route, which, you'll notice, uses {parentId} rather than {id}. If it used {id}, this method would also match the ODataRouteNames.GetById route, which would cause problems in the action selector infrastructure. By using parentId we sidestep this issue.
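Why the parameter name matters can be shown with a toy model of action matching. The sketch below is a deliberate simplification, not Web API's real action selector: it treats an action as a candidate when its name starts with the HTTP method and every parameter name appears among the route values. ActionInfo and ToyActionSelector are hypothetical names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// A toy model of verb-prefix plus parameter-name matching. It is a deliberate
// simplification for illustration, not Web API's real action selector.
public sealed record ActionInfo(string Name, string[] ParameterNames);

public static class ToyActionSelector
{
    // An action is a candidate when its name starts with the HTTP method and
    // every one of its parameters can be bound from a route value with the same name.
    public static List<string> Candidates(
        string httpMethod,
        IEnumerable<ActionInfo> actions,
        IReadOnlyDictionary<string, string> routeValues) =>
        actions
            .Where(a => a.Name.StartsWith(httpMethod, StringComparison.OrdinalIgnoreCase)
                        && a.ParameterNames.All(routeValues.ContainsKey))
            .Select(a => a.Name)
            .ToList();
}
```

With route values { controller = Products, id = 15 } from a GetById-style request, a GetFamily(int id) action would be a second candidate alongside GetById, while GetFamily(int parentId) drops out because no parentId value is present.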

Creating and Deleting links

The OData protocol allows you to get and modify links between entities as first-class resources. In the preview, however, we only support modifications.

To create a link, you POST or PUT a URI (specified in the request body) to a URL like this: ~/Products(1)/$links/Family.

The uri in the body points to the resource you wish to assign to the relationship. So in this case it would be the uri of a ProductFamily you wish to assign to Product.Family (i.e. setting Product 1’s Family).
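From the client side, a link request can be sketched as follows. Per the conventions in this post, a collection-valued relationship takes a POST and a single-valued one (like Family) takes a PUT. LinkRequests is a hypothetical helper, and the body shape {"uri": "..."} is an assumption based on the OData v3 JSON verbose link format; the preview may also expect a specific content type or the XML form.

```csharp
#nullable enable
using System;
using System.Net.Http;
using System.Text;
using System.Text.Json;

// Hypothetical client-side helper for the $links requests described in this post.
public static class LinkRequests
{
    // Collection-valued relationship: POST adds a link.
    // Single-valued relationship (like Family): PUT replaces it.
    public static HttpMethod VerbFor(bool isCollection) =>
        isCollection ? HttpMethod.Post : HttpMethod.Put;

    // Builds ~/{entitySet}({key})/$links/{navigationProperty} with the target URI in the body.
    // serviceRoot must end with '/' so the relative path is appended rather than replacing the last segment.
    public static HttpRequestMessage Build(Uri serviceRoot, string entitySet, int key,
        string navigationProperty, bool isCollection, Uri target)
    {
        var address = new Uri(serviceRoot, $"{entitySet}({key})/$links/{navigationProperty}");
        // Assumed body shape: OData v3 JSON verbose {"uri": "..."}.
        string body = JsonSerializer.Serialize(new { uri = target.AbsoluteUri });
        return new HttpRequestMessage(VerbFor(isCollection), address)
        {
            Content = new StringContent(body, Encoding.UTF8, "application/json")
        };
    }
}
```

The request is only built, not sent, so it can be inspected or handed to an HttpClient.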

Whether you POST or PUT depends on the cardinality of the relationship: if it is a collection, you POST; if it is a single item (as Family is), you PUT. Both are mapped through the ODataRouteNames.Link route, which is set up to handle creating links for any relationship. Unlike following navigations, it feels okay to use a single method here because the return type is always the same, i.e., a No Content response:

public HttpResponseMessage PutLink(int id, string navigationProperty, [FromBody] Uri link)
{
    Product product = _db.Products.SingleOrDefault(p => p.ID == id);
    if (product == null)
    {
        throw ODataErrors.EntityNotFound(Request);
    }
    switch (navigationProperty)
    {
        case "Family":
            // The utility method uses routing (ODataRouteNames.GetById should match) to get the value
            // of the {id} parameter, which is the id of the ProductFamily.
            int relatedId = Configuration.GetKeyValue<int>(link);
            ProductFamily family = _db.ProductFamilies.SingleOrDefault(f => f.ID == relatedId);
            product.Family = family;
            break;
        default:
            throw ODataErrors.CreatingLinkNotSupported(Request, navigationProperty);
    }
    _db.SaveChanges();
    return Request.CreateResponse(HttpStatusCode.NoContent);
}

The {navigationProperty} parameter from the route is used to decide which relationship to create. Also notice the [FromBody] attribute on the Uri link parameter: it is vital, because otherwise the link will always be null.
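Configuration.GetKeyValue<int> relies on routing to pull the key out of the link URI. As a point of comparison, here is a hypothetical regex-based alternative (LinkKeyParser is not part of the preview) that reads an integer key from a trailing "(...)" segment. The design trade-off is that, unlike the routing approach, it does not check that the URI actually addresses the ProductFamilies entity set or even the same service.

```csharp
#nullable enable
using System;
using System.Globalization;
using System.Text.RegularExpressions;

// Hypothetical alternative to Configuration.GetKeyValue<int>: it parses the key
// out of the last path segment with a regular expression instead of routing.
public static class LinkKeyParser
{
    // Matches a trailing "(...)" such as the "(2)" in ".../ProductFamilies(2)".
    private static readonly Regex TrailingKey = new Regex(@"\(([^()]*)\)$");

    public static bool TryGetIntKey(Uri link, out int key)
    {
        key = 0;
        if (!link.IsAbsoluteUri)
        {
            return false;
        }
        Match match = TrailingKey.Match(link.AbsolutePath.TrimEnd('/'));
        return match.Success
            && int.TryParse(match.Groups[1].Value, NumberStyles.Integer, CultureInfo.InvariantCulture, out key);
    }
}
```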

To remove a relationship between entities, you send a DELETE request to the same URL. The code for that looks like this:

public HttpResponseMessage DeleteLink(int id, string navigationProperty, [FromBody] Uri link)
{
    Product product = _db.Products.SingleOrDefault(p => p.ID == id);
    if (product == null)
    {
        throw ODataErrors.EntityNotFound(Request);
    }
    switch (navigationProperty)
    {
        case "Family":
            product.Family = null;
            break;
        default:
            throw ODataErrors.DeletingLinkNotSupported(Request, navigationProperty);
    }
    _db.SaveChanges();
    return Request.CreateResponse(HttpStatusCode.NoContent);
}

Conclusion

If you implement all these methods for each of your OData EntitySets, you should have a compliant OData service. Of course, this code is only preview quality, so it is likely to have bugs; that said, I hope you'll agree this provides a good foundation for creating OData services.

We are currently thinking about adding support for inheritance, OData actions and functions, ETags, JSON Light, and more. And of course we want to hear your thoughts so we can incorporate your feedback!

Next up

This blog post doesn't cover everything you can do with the Preview. You can also use:

- ODataQueryOptions rather than [Queryable] to take full control of handling the query.

- ODataResult<> to implement OData features like Server Driven Paging and $inlinecount.

- EntitySetController<,> to simplify creating fully compliant OData entitysets.

I’ll be blogging more about these soon.

•• The WCF Data Services Team reported the availability of a WCF Data Services 5.0.2-rc Prerelease on 8/15/2012:

We’re happy to announce that we’re ready for another public RC that includes a whole bunch of bug fixes.

What is in the prerelease

This prerelease contains a number of bug fixes:

- Fixes NuGet packages to have explicit version dependencies

- Fixes a bug where WCF Data Services client did not send the correct DataServiceVersion header when following a nextlink

- Fixes a bug where projections involving more than eight columns would fail if the EF Oracle provider was being used

- Fixes a bug where a DateTimeOffset could not be materialized from a v2 JSON Verbose value

- Fixes a bug where the message quotas set in the client and server were not being propagated to ODataLib

- Fixes a bug where WCF Data Services client binaries did not work correctly on Silverlight hosted in Chrome

- Allows "True" and "False" to be recognized as Boolean values in ATOM (note that this is more relaxed than the OData spec, but there were known cases where servers were serializing "True" and "False")

- Fixes a bug where nullable action parameters were still required to be in the payload

- Fixes a bug where EdmLib would fail validation for attribute names that are not SimpleIdentifiers

- Fixes a bug where the FeedAtomMetadata annotation wasn't being attached to the feed even when EnableAtomMetadataReading was set to true

- Fixes a race condition in the WCF Data Services server bits when using reflection to get property values

- Fixes an error message that wasn't getting localized correctly

Getting the prerelease

The prerelease is only available on NuGet. To install this prerelease NuGet package, you will need to use one of the following commands from the Package Manager Console:

- Install-Package <PackageId> -Pre -Version 5.0.2-r

- Update-Package <PackageId> -Pre -Version 5.0.2-r

Our NuGet package ids are:

- Microsoft.Data.Services.Client (WCF Data Services Client)

- Microsoft.Data.Services (WCF Data Services Server)

- Microsoft.Data.OData (ODataLib)

- Microsoft.Data.Edm (EdmLib)

- System.Spatial (System.Spatial)

Call to action

If you have experienced one of the bugs mentioned above, we encourage you to try out the prerelease bits in a preproduction environment. As always, we’d love to hear any feedback you have!


Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

• Dan Plastina posted AD RMS under the hood: Client bootstrapping (Part 2 of 2) on 8/10/2012 (missed when published):

Although this blog normally features content updates and product announcements from the members who work with me within the Information Protection team here at Microsoft, we do occasionally have the opportunity to feature guest bloggers from the AD RMS [Active Directory Rights Management Services] community whose expertise we think you will really enjoy and benefit from hearing.

In this case, I'm pleased to offer you Part 2 of the story on AD RMS bootstrapping that Alexey Goldbergs of Crypto-Pro first presented on our team blog a little while ago. It gives a lot of great insight into what is happening at a deeper level in the AD RMS client as users access rights-protected content.

Though we pride ourselves on making AD RMS something you can use well without knowing all of its "under the hood" workings, for those who enjoy knowing more of that sort of thing, Alexey can and will provide all the intimate technical details.

Enjoy!

- Dan

Hey, what's up? After a very looooong delay it's Alexey Goldbergs of Crypto-Pro here with you once again to give you the rest of a deeper look into the AD RMS client bootstrapping process.

As I mentioned in my previous post, AD RMS under the hood: Server bootstrapping (Part 1 of 2), this is the second part of my discussion of the bootstrapping process, this time from the client perspective, which consists of acquiring user certificates, including the Rights Account Certificate (RAC). In some materials you might also see the term Group Identity Certificate (GIC); if you do, keep in mind that it refers to the same thing. (You can check out this post by Enrique Saggese to learn more about what the RAC is and how it is related to other AD RMS entities.)

RAC acquisition starts right after the SPC is created. This is the first time the AD RMS client communicates with the AD RMS Server, and the RAC is the first certificate that isn't self-signed; instead, it is signed by the AD RMS Server certificate (SLC), which was created during the AD RMS Server bootstrapping process described in Part 1.

But before the client can receive the RAC, it must find the "right" AD RMS Certification Server. This is what the service discovery process looks like in a typical scenario (note that this is the sequence for a client trying to protect content for the first time; the process is slightly different for a client that's consuming content before it has been activated for the first time):

- The client checks whether it has been manually configured with registry settings for activation.

○ For x86 clients: HKEY_LOCAL_MACHINE\Software\Microsoft\MSDRM\ServiceLocation.

○ For 64-bit clients running 32-bit applications: HKEY_LOCAL_MACHINE\Software\Wow6432Node\Microsoft\MSDRM\ServiceLocation.

You can find more details about key values for registry overrides in this related TechNet article, AD RMS Client Requirements.

If these registry keys are empty, the client queries Active Directory for the RMS Service Connection Point (SCP), which returns the Intranet Certification URL configured by AD RMS.

After the client has used this URL to acquire a RAC, it asks the AD RMS Certification "ServiceLocator" service for the AD RMS Licensing Service URL. That service will be used to get the next certificate, the Client Licensor Certificate (CLC). This URL could also be configured manually on the client with registry keys instead of using automatic discovery.

Going back to RAC acquisition, as soon as the client finds the certification server, it goes through the following sequence:
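The discovery order above (manual registry override first, automatic discovery only when the override is empty) can be modeled in a few lines. This is a hypothetical sketch: RmsServiceDiscovery is not an AD RMS API, and the delegates merely stand in for reading the ServiceLocation registry key, querying Active Directory for the SCP, or asking the ServiceLocator service. The non-Wow6432Node path for 64-bit native applications is an assumption based on standard registry redirection.

```csharp
#nullable enable
using System;

// Hypothetical model of the discovery order described above; the delegates stand in
// for reading the registry, querying AD for the SCP, or asking the ServiceLocator
// service, and are not real AD RMS client APIs.
public static class RmsServiceDiscovery
{
    // Key named in the text. A 32-bit application on 64-bit Windows reads the
    // Wow6432Node copy; otherwise (assumed for 64-bit native apps too) the plain path.
    public static string ServiceLocationKey(bool is32BitAppOn64BitWindows) =>
        is32BitAppOn64BitWindows
            ? @"HKEY_LOCAL_MACHINE\Software\Wow6432Node\Microsoft\MSDRM\ServiceLocation"
            : @"HKEY_LOCAL_MACHINE\Software\Microsoft\MSDRM\ServiceLocation";

    // A manual registry override wins; automatic discovery runs only when it is empty.
    public static Uri? Resolve(Func<Uri?> manualOverride, Func<Uri?> automaticDiscovery) =>
        manualOverride() ?? automaticDiscovery();
}
```

The same Resolve call covers both lookups in the text: the certification URL (override, else SCP) and, after the RAC is acquired, the licensing URL (override, else ServiceLocator).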

- The client sends a request for the RAC, including its Secure Process Certificate (SPC), to the AD RMS Certification Server (<http/https>://<AD_RMS_cluster_name>/_wmcs/certification/certification.asmx).

- The server extracts the machine public key from the SPC sent by the client.

- The user has already authenticated against the web service, so the server uses the user information to query the user's email address from Active Directory.

- The server checks whether the user already has a RAC in the AD RMS database (as you will see in the next steps, the RAC is stored there as an encrypted blob) and, if so, retrieves it from the database.

- If the user doesn't have a RAC in the AD RMS database, the server generates a key pair: RSA 2048-bit if the cluster is configured to use CryptographicMode 2, instead of the default RSA 1024-bit.