Generating Big Data for Use with SQL Azure Federations and Apache Hadoop on Windows Azure Clusters

Updated 1/8/2011 3:30 PM PST: Major additions and edits. See “Update History” section at the end of this post.

Background

Most tutorials for and demos of SQL Azure Federations use tables having only a few rows. Similarly, sample data for Apache Hadoop on Windows Azure also doesn’t qualify for “Big Data” status.

I wanted to use larger tables with a variety of SQL Server data types for testing these new SQL Azure and Windows Azure technologies. I also wanted to determine best practices for creating large data sets, as well as discover and deal with problems creating them. For SQL Azure Federations, I wanted to demonstrate issues with federating SQL Azure tables that don’t have a column innately suited to act as a federation key.

Fortunately, I had enabled collecting Windows Azure Diagnostics for my OakLeaf Systems Azure Table Services Sample Project in November 2011. Following is the source code and configuration data shown in the “Setting up Windows Azure Diagnostics” section of my Adding Trace, Event, Counter and Error Logging to the OakLeaf Systems Azure Table Services Sample Project post of 12/5/2010, updated 11/20/2011:

As you can see from the preceding code’s Windows Performance Counters section, data is available for the following six counters (shown in the sequence of their appearance in the data):

- \Network Interface(Microsoft Virtual Machine Bus Network Adapter _2)\Bytes Sent/sec

- \Network Interface(Microsoft Virtual Machine Bus Network Adapter _2)\Bytes Received/sec

- \ASP.NET Applications(__Total__)\Requests/Sec

- \TCPv4\Connections Established

- \Memory\Available Mbytes

- \Processor(_Total)\% Processor Time

I specified a one-minute interval for transferring performance counter data (sampled in five-second increments) to Windows Azure table storage. This provided me with more than 1 million rows in the more than a year that has elapsed since I started collecting the data.

Over the New Year holiday, I began downloading the WADPerformanceCountersTable from my storage account in Microsoft’s South Central US Data Center to my local development machine. I encountered a problem when using the Cerebrata’s newly updated Windows Azure Diagnostics manager with very large files, so I changed to the company’s Cloud Storage Studio.

I specified in the Download Table Data dialog a new text file every 100 MB to create files that I could view with Notepad or (much more quickly) Textpad, and analyze with Excel:

Here’s first screen of data from the first rollover file:

Following is a detail view of the record highlighted above:

One 1,000-row page (258 kB of data) downloads about every five seconds over my AT&T commercial DSL line (3.5 Mbps down). According to Fiddler2, the size of the OData document returned for a page is 1,544,268 bytes.

Following are the HTTP request headers:

GET https://oakleaf.table.core.windows.net/WADPerformanceCountersTable?timeout=30&$top=1000&NextPartitionKey=1!28!MDYzNDQ4OTI4NTgwMDAwMDAwMA--&NextRowKey=1!108!MzNkNjEyZTFhYmE2NDNiNzg1NDI0MTVlMTViNjQ2YTFfX19XZWJSb2xlMV9fX1dlYlJvbGUxX0lOXzFfX18wMDAwMDAwMDAxMDM4MDkwMjg1 HTTP/1.1

Content-Type: application/atom+xml

x-ms-date: Thu, 05 Jan 2012 01:15:46 GMT

authorization: SharedKey oakleaf:LaDfsreMvTkisEgJxG9UiiVmqTHZI3vSVibSJSvbAeA=

x-ms-version: 2009-09-19

DataServiceVersion: 1.0;NetFx

MaxDataServiceVersion: 1.0;NetFx

Host: oakleaf.table.core.windows.net

Connection: Keep-Alive

and HTTP response headers:

HTTP/1.1 200 OK

Cache-Control: no-cache

Content-Type: application/atom+xml;charset=utf-8

Server: Windows-Azure-Table/1.0 Microsoft-HTTPAPI/2.0

x-ms-request-id: bc619aaa-82cd-469f-9155-c2a3d8ca32e5

x-ms-version: 2009-09-19

x-ms-continuation-NextPartitionKey: 1!28!MDYzNDQ4OTI5MDAwMDAwMDAwMA--

x-ms-continuation-NextRowKey: 1!108!MzNkNjEyZTFhYmE2NDNiNzg1NDI0MTVlMTViNjQ2YTFfX19XZWJSb2xlMV9fX1dlYlJvbGUxX0lOXzFfX18wMDAwMDAwMDAxMDM4MDkwMjc3

Date: Thu, 05 Jan 2012 01:15:46 GMT

Content-Length: 1544268

with the NextPartitionKey and NextRowKey continuation values for the paging operation.

Creating the WADPerfCounters Table and Indexes

Following is the T-SQL script to create the table with a composite, clustered primary key index on the PartitionKey and RowKey columns, which comprise the unique identifier for all WAD tables in the AzureDiagnostics database, and non-clustered indexes on the Timestamp and CounterValue columns:

USE [AzureDiagnostics]

GO

/****** Object: Table [dbo].[WADPerfCounters] Script Date: 01/04/2012 11:38:05 ******/

IF EXISTS (SELECT * FROM sys.objects WHERE object_id = OBJECT_ID(N'[dbo].[WADPerfCounters]') AND type in (N'U'))

DROP TABLE [dbo].[WADPerfCounters]

GO

USE [AzureDiagnostics]

GO

/****** Object: Table [dbo].[WADPerfCounters] Script Date: 01/04/2012 11:38:05 ******/

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

SET ANSI_PADDING ON

GO

CREATE TABLE [dbo].[WADPerfCounters](

[PartitionKey] [bigint] NOT NULL,

[RowKey] [varchar](100) NOT NULL,

[Timestamp] [datetime2](7) NOT NULL,

[EventTickCount] [bigint] NOT NULL,

[DeploymentId] [varchar](50) NOT NULL,

[Role] [varchar](20) NOT NULL,

[RoleInstance] [varchar](20) NOT NULL,

[CounterName] [varchar](100) NOT NULL,

[CounterValue] [decimal](12,6) NOT NULL,

CONSTRAINT [PK_WADPerfCounters] PRIMARY KEY CLUSTERED

(

[PartitionKey] ASC,

[RowKey] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

GO

/****** Object: Index [IX_Timestamp] Script Date: 01/05/2012 09:27:03 ******/

CREATE NONCLUSTERED INDEX [IX_Timestamp] ON [dbo].[WADPerfCounters]

(

[Timestamp] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

GO

CREATE NONCLUSTERED INDEX [IX_CounterValue] ON [dbo].[WADPerfCounters]

(

[CounterValue] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

GO

SET ANSI_PADDING OFF

GO

Testing the T-SQL BULK INSERT Command with a 1,000-row Sample File

The T-SQL BULK INSERT statement converts a file data directly into an OLE DB rowset. The OLE DB rowsets are inserted into the target table by the query processor, which plans and optimizes each operation. Following is the statement to process 1000 rows of data (the first row contains column header data):

SET DATEFORMAT ymd

BULK INSERT dbo.WADPerfCounters

FROM 'C:\Users\Administrator\My Documents\Cerebrata\WADPerformanceCountersTable-Page-1.txt'

WITH

(

FIRSTROW = 2,

LASTROW = 1001,

BATCHSIZE = 1000,

FIELDTERMINATOR = '\t'

)

The FIELDTERMINATOR argument isn’t required because the default value is \t (tab). The BATCHSIZE argument isn’t germane for a small number of rows. According to Books Online’s “Managing Batches for Bulk Import” topic:

… By default, all the rows in a data file are imported as a single batch of unknown size in a single transaction. In this case, if the import operation fails before completion, the entire transaction is rolled back, and no data is added to the destination table. The failed operation must then be restarted at the beginning of the data file.

Importing a large data file as a single batch can be problematic, so bcp and BULK INSERT let you import data in a series of batches, each of which is smaller than the data file. Each batch is imported and logged in a separate transaction, and after a given transaction is committed, the rows imported by that transaction are committed. If the operation fails, only rows imported from the current batch are rolled back, and you can resume importing data starting at the beginning of the failed batch rather than at the beginning of the data file.

Here’s the T-SQL SELECT statement for the WADPerfCounters table’s Select Top 1000 Rows context menu choice:

/****** Script for SelectTopNRows command from SSMS ******/

SELECT TOP 1000 [PartitionKey]

,[RowKey]

,[Timestamp]

,[EventTickCount]

,[DeploymentId]

,[Role]

,[RoleInstance]

,[CounterName]

,[CounterValue]

FROM [AzureDiagnostics].[dbo].[WADPerfCounters]

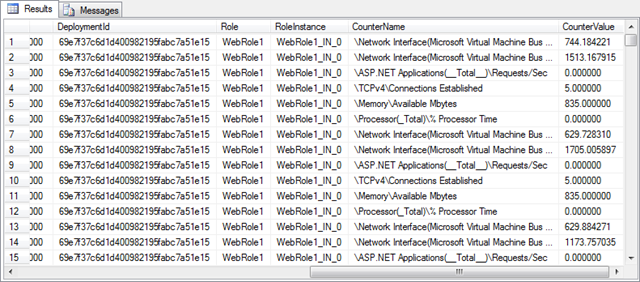

The following screen capture shows the first four columns of the first 15 rows of the test table’s data:

and the remaining five columns:

Problems with Header Rows added for Every Page of 1,000 Rows

Limiting the resultset to 1,001 rows in the preceding tests to return a header row and 1,000 data rows disguises a problem that evidences itself when you remove the LASTROW = 1001 argument to process an entire 100+ MB rollover text file. :

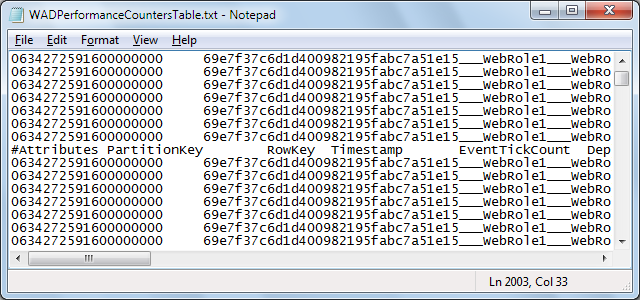

As you can see from the preceding messages, errors occur every 1001 rows. Examining the text file in Notepad displays a additional #Attributes (column headings) list at row 1002:

row 2003 and so on:

If you use Notepad’s Replace feature, copy the line to the Replace What box and leave the Replace With box empty, the result is the same number of empty lines, which don’t throw errors:

Note: Books Online’s “Managing Batches for Bulk Import” topic doesn’t mention that empty rows of text files are disregarded by the operation.

However, you will see occasional non-fatal truncation errors for the Counter Value column:

Changing the precision and scale arguments from 12,6 to 16,8 solved the initial truncation problem and doesn’t increase number of bytes of storage. However, inspection of the data in Excel doesn’t indicate that a problem should have occurred with the 12,6 precision and scale.

Note: See the Attempting to Deal with CounterValue Truncation Problems section below.

The last message displays the number of rows, which represents an integer number of pages (398 for this example):

Interestingly, errors resulting from the presence of rows with column header values don’t cause batches to roll back, which conflicts with commit and rollback details in Books Online’s “Managing Batches for Bulk Import” topic quoted above. The same number of pages/rows return:

A local copy of 64-bit SQL Server 2008 R2 Enterprise Edition executes the BULK INSERT query in about 75 seconds with the two non-clustered indexes, giving a 5,307 rows/second insert rate.

Dealing with Non-Numeric Columns as Federation Keys

The present version of SQL Azure Federations limits the data type of the federation key to int, bigint, uniqueidentifier or varbinary(n), according to MSDN’s CREATE FEDERATION (SQL Azure Database) topic:

The type name for the federation key type. The type must be of type INT, BIGINT, UNIQUEIDENTIFIER or VARBINARY(n), where n can be a maximum of 900.

Using the six values of the CounterName column as the federation key requires adding a surrogate CounterId column of datatype int (nulls allowed) with values of 1 to 6 to the existing clustered primary key specification of the federated databases by altering the CONSTRAINT arguments as emphasized below:

CONSTRAINT [PK_WADPerfCounters] PRIMARY KEY CLUSTERED(

[PartitionKey] ASC,

[RowKey] ASC,

[CounterId] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]

See MSDN’s CREATE TABLE (SQL Azure Database) topic:

All unique and clustered indexes on the federated table must contain the federation column. The order in which the federation column appears in the index can be different from the key ordinal in the federation.

The upshot of adding the surrogate CounterId column is that it must be populated by a CASE clause in an UPDATE statement:

UPDATE dbo.WADPerfCounters SET CounterId =

(CASE

WHEN CounterName = '\Network Interface(Microsoft Virtual Machine Bus Network Adapter _2)\Bytes Sent/sec' THEN 1

WHEN CounterName = '\Network Interface(Microsoft Virtual Machine Bus Network Adapter _2)\Bytes Received/sec' THEN 2

WHEN CounterName = '\ASP.NET Applications(__Total__)\Requests/Sec' THEN 3

WHEN CounterName = '\TCPv4\Connections Established' THEN 4

WHEN CounterName = '\Memory\Available Mbytes' THEN 5

WHEN CounterName = '\Processor(_Total)\% Processor Time' THEN 6

ELSE 0

END)

FROM dbo.WADPerfCounters

The ELSE 0 condition lets you delete erroneous rows which would cause errors when processed for addition to federation members.

The preceding statement executed on 3,880,000 columns in about 45 seconds. Here’s the first few rows of the result:

Adding the CounterId column before executing BULKINSERT statements requires specifying a format file in either XML or non-XML format because the table’s columns complement doesn’t match that of the text files. To create a basic, non-XML file named WADPerfCounters-f-c.fmt, execute the following statement in the Command Window:

bcp AzureDiagnostics..WADPerfCounters format nul -c -f C:\WADPerfCounters-f-c.fmt -r\n -T

Here’s the content of the non-XML version, with column spacing edited to fit:

10.010

1 SQLCHAR 0 21 "\t" 1 PartitionKey ""

2 SQLCHAR 0 100 "\t" 2 RowKey SQL_Latin1_General_CP1_CI_AS

3 SQLCHAR 0 30 "\t" 3 Timestamp ""

4 SQLCHAR 0 21 "\t" 4 EventTickCount ""

5 SQLCHAR 0 50 "\t" 5 DeploymentId SQL_Latin1_General_CP1_CI_AS

6 SQLCHAR 0 20 "\t" 6 Role SQL_Latin1_General_CP1_CI_AS

7 SQLCHAR 0 20 "\t" 7 RoleInstance SQL_Latin1_General_CP1_CI_AS

8 SQLCHAR 0 100 "\t" 8 CounterName SQL_Latin1_General_CP1_CI_AS

9 SQLCHAR 0 41 "\t" 9 CounterValue ""

10 SQLCHAR 0 12 "\r\n" 10 CounterId ""

If you’ve installed a beta or RC version of SQL Server 2012 (“Denali”), this file requires editing to change the bcp version number from 11.0 to that of SQL Server 2008 = 10.0.

To create the XML version of the Format file, named WADPerfCounters-f-c.xml, execute the following statement in the Command Window:

bcp AzureDiagnostics..WADPerfCounters format nul -c -f C:\WADPerfCounters-f-c.xml -x -r\n -T

Here’s the content:

<?xml version="1.0"?>

<BCPFORMAT xmlns="http://schemas.microsoft.com/sqlserver/2004/bulkload/format" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance">

<RECORD>

<FIELD ID="1" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="21"/>

<FIELD ID="2" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="100" COLLATION="SQL_Latin1_General_CP1_CI_AS"/>

<FIELD ID="3" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="30"/>

<FIELD ID="4" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="21"/>

<FIELD ID="5" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="50" COLLATION="SQL_Latin1_General_CP1_CI_AS"/>

<FIELD ID="6" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="20" COLLATION="SQL_Latin1_General_CP1_CI_AS"/>

<FIELD ID="7" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="20" COLLATION="SQL_Latin1_General_CP1_CI_AS"/>

<FIELD ID="8" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="100" COLLATION="SQL_Latin1_General_CP1_CI_AS"/>

<FIELD ID="9" xsi:type="CharTerm" TERMINATOR="\t" MAX_LENGTH="41"/>

<FIELD ID="10" xsi:type="CharTerm" TERMINATOR="\r\n" MAX_LENGTH="12"/>

</RECORD>

<ROW>

<COLUMN SOURCE="1" NAME="PartitionKey" xsi:type="SQLBIGINT"/>

<COLUMN SOURCE="2" NAME="RowKey" xsi:type="SQLVARYCHAR"/>

<COLUMN SOURCE="3" NAME="Timestamp" xsi:type="SQLDATETIME2" SCALE="7"/>

<COLUMN SOURCE="4" NAME="EventTickCount" xsi:type="SQLBIGINT"/>

<COLUMN SOURCE="5" NAME="DeploymentId" xsi:type="SQLVARYCHAR"/>

<COLUMN SOURCE="6" NAME="Role" xsi:type="SQLVARYCHAR"/>

<COLUMN SOURCE="7" NAME="RoleInstance" xsi:type="SQLVARYCHAR"/>

<COLUMN SOURCE="8" NAME="CounterName" xsi:type="SQLVARYCHAR"/>

<COLUMN SOURCE="9" NAME="CounterValue" xsi:type="SQLDECIMAL" PRECISION="16" SCALE="8"/>

<COLUMN SOURCE="10" NAME="CounterId" xsi:type="SQLINT"/>

</ROW>

</BCPFORMAT>

Notice the the XML version is version-independent.

The alternative to specifying a Format file is to import all data required for all federation members, alter the table’s schema by adding the CounterId column and then running the UPDATE statement to populate the column values. This appears to be the most efficient choice because it eliminates the Format file steps. It’s not necessary for the source table to include CounterId column in its primary key because it doesn’t contribute uniqueness.

Dealing with SQL Azure Database Size Issues

My MSDN Premium subscription benefit for Windows Azure resources includes five free 1-GB SQL Azure Web databases. The plan was to federate the database by CounterName value into six 1-GB databases, one of which I would pay for. This plan made it necessary for me to add a bit less than 6 GB of data and index to the source WADPerfCounters table, which will serve as the source table when uploading to SQL Azure federation members.

The following table lists Row Count, Data Size and Index Size in MB from the WADPerfCounters table’s properties page, as well as MDF, Log, and Total sizes of the storage files in MB from the AzureDiagnostics database properties page:

| Files | Row Count | Data Size | Index Size | MDF Size | Log Size | Total Size |

| 0 | 0 | 0.000 | 0.000 | 2 | 1 | 3 |

| 1 | 398,000 | 94.227 | 104.391 | 201 | 4 | 205 |

| 2 | 796,000 | 188.453 | 208.734 | 399 | 5 | 403 |

| 3 | 1,194,000 | 282.672 | 313.078 | 486 | 1 | 487 |

| 4* | 1,592,000 | 376.898 | 10.188 | 486 | 2 | 488 |

| 5 | 1,990,000 | 471.117 | 12.727 | N/A | N/A | N/A |

| 6 | 2,388,000 | 565.344 | 15.273 | N/A | N/A | N/A |

| 7 | 2,786,000 | 659.570 | 17.820 | 777 | 2 | 778 |

| 8 | 3,184,000 | 753.793 | 20.367 | 873 | 2 | 875 |

| 9 | 3,582,000 | 848.016 | 22.914 | 970 | 3 | 971 |

| 10 | 3,980,000 | 942.252 | 25.469 | 1,068 | 3 | 1071 |

| 20** | 7,959,000 | 2,025.578 | 101.844 | 2176 | 3399 | 5574 |

* Deleted non-clustered indexes on Timestamp and CounterName tables to reduce database size and increase BULK INSERT performance. Dropping the non-clustered indexes reduced time to insert the 398,000 rows from ~75 to ~10 seconds = ~40,000 rows/second.

Cells marked N/A were not reported because database storage requirements were not refreshed to display correct values (inadvertently).

** Although the CreateWADPerfCountersWithDrop.script includes a

ALTER DATABASE [AzureDiagnostics]

SET RECOVERY SIMPLE

GO

statement, adding and populating the CounterId column generates a large log file. Database sizes with more than 20 files include CounterId values.

Note: The size of the 3,980,000-row database’s MDF file with the CounterId column populated is 1,111 MB and the Log file is 1 MB for a total of 1,112 MB, an increase of 43 MB.

Validating Measured Database Size by Calculation

Ryan Dunn described Calculating the Size of Your SQL Azure Database in a 3/4/2010 blog post using the following Dynamic Management View expression to return size in MB:

SELECT SUM(reserved_page_count) * 8.0 / 1024

FROM sys.dm_db_partition_stats

which returns 1066.117187 for 3,980,000 rows. That value is very close to the 1,068 MB reported by the database Files properties page’s Initial Size property value shown above.

Note: With the CounterId column populated, the size is 1110.5625 MB.

See the MSDN Library’s Monitoring SQL Azure Using Dynamic Management Views for more information.

The following table provides the length of the WADPerfCounters table’s nine columns, based on data in Books Online for fixed-length data types and the DATALENGTH(ColumnName) function for varchar data:

| Column Name | Data Type | Fixed Bytes | Variable Bytes | |

| Partition Key | bigint | 8 | ||

| Row Key | varchar(100) | 81 | ||

| Timestamp | datetime2(7) | 8 | ||

| EventTickCount | bigint | 8 | ||

| DeploymentId | varchar(50) | 32 | ||

| Role | varchar(20) | 8 | ||

| RoleInstance | varchar(20) | 13 | ||

| CounterName | varchar(100) | 51 (50.67 avg.) | ||

| CounterValue | decimal(16,8) | 9 | ||

| [CounterId] | int | 4 |

Assuming that SQL Azure Federations don’t add significant overhead to the tables, it should be safe to insert rows from the equivalent of nine WADPerfCountersTable*.txt files to the federation member tables. The six federation members (based on CounterName as the federation key) requires adding 6 * 9 = 54 WADPerfCountersTable*.txt files to the WADPerfCounters table.

Attempting to Deal with CounterValue Truncation Problems

Processing the WADPerformanceCountersTable-Page-3.txt file resulted in a report of 10 truncation errors in sequential rows 178746 through 178755. More than 10 truncation errors appears to abort the remaining BULK INSERT batches, not just the batch in which the errors occur. Following are the last messages from a WADTestTable script I wrote to test a single file:

In an attempt to determine the source of the errors, I opened WADPerformanceCountersTable-Page-3.txt in Excel 2010 so I could view the offending rows.

Excel row counts will be inflated by skipped row headers (178 for this example). To remove all 398 row headers, I sorted the file in descending order of CounterValue and deleted all rows with header names at the top of the worksheet:

Clearing the sort didn’t work, so I resorted by Partition Key and Row Key and scrolled to row 178746 through 178755, which didn’t show any evidence of values that would be truncated by 16,8 precision and scale values:

Excel doesn’t support changing floating point representations of bigint property values nor decimal seconds for datetime values, so it’s not practical to save the file and retest it by insertion.

Subsequent tests of files WADPerformanceCountersTable-Page-4.txt through WADPerformanceCountersTable-Page-11.txt, showed no further data truncation problems. Thus addition of rows from WADPerformanceCountersTable-Page-3.txt were skipped.

Creating and Assessing the Schema of an AzureDiagnostics.bacpac File for SQL Azure Compatibility

I followed the instructions in Ke Yang’s Microsoft Codename "SQL Azure Compatibility Assessment" Tutorial post updated 1/6/2012, installed the SQL Server Data Tools (SSDT) CTP4, started a SQL Server project in Visual Studio 2010, imported the AzureDiagnostics database, and chose Build | Build AzureDiagnostics.

I then logged into the service portal at https://assess.sql.azure.com/ with my Windows Live ID, clicked Brows, navigated to and opened the *.bacpac file:

I clicked Upload to start the assessment and waited about 15 minutes for the assessment to complete:

Clicking view produced the following message:

I added a copy of the AzureDiagnostics.dacpac file to my Windows SkyDrive account here

Update History

Updated 1/8/2011 3:30 PM PST: Corrected 1,067 MB log file size for 3,980,000 rows to 3 MB and total size to 1,071 MB, edited the last paragraph of the “Dealing with SQL Azure Database Size Issues” section and added “Validating Measured Database Size by Calculation” section. Added a detailed “Dealing with Non-Numeric Columns as Federation Keys” section.

Updated 1/7/2012 4:00 PM PST: Created an an AzureDiagnostics.dacpac file and assessed compatibility of the AzureDiagnostics’ dbo schema with SQL Azure using the new SQL Azure Compatibility Assessment tool (see the “Creating and Assessing the Schema of an AzureDiagnostics.bacpac File for SQL Azure Compatibility” at the end of this post.) Uploaded AzureDiagnostics.dacpac to my Windows SkyDrive account here.

Updated 1/7/2012 1:00 PM PST: You can download zipped versions of the 10 files used to create the initial source database for this post from my Windows SkyDrive account here. Average size of the *.zip files is about 4.7 MB each. The downloadable files include the *.sql scripts for creating and populating the WADPerformanceCounters table in a database named AzureDiagnostics.

Warning: Don’t use WADPerformanceCountersTable-Page-3.txt from WADPerformanceCountersTable-Page-3.zip; it throws data truncation errors, as described in the “Attempting to Deal with CounterValue Truncation Problems” section near the end of the post.

0 comments:

Post a Comment