Windows Azure and Cloud Computing Posts for 1/2/2012+

A compendium of Windows Azure, Service Bus, EAI & EDI Access Control, Connect, SQL Azure Database, and other cloud-computing articles.

• Updated 1/3/2012 3:00 PM PST with articles marked • by George Huey, Cihan Biyikoglu, Avkash Chauhan, Joseph Fultz, Jeff Barr and Himanshu Singh.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue and Hadoop Services

- SQL Azure Database and Reporting

- Marketplace DataMarket, Social Analytics and OData

- Windows Azure Access Control, Service Bus, Caching and Workflow

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Services

• Avkash Chauhan (@avkashchauhan) offered yet another member of his Apache Hadoop series: Apache Hadoop on Windows Azure Part 10 - Running a JavaScript Map/Reduce Job from Interactive JavaScript Console of 1/3/2012:

Microsoft’s distribution of Apache Hadoop on Windows Azure lets you run JavaScript Map/Reduce jobs directly from the web-based Interactive JavaScript Console. To start, let’s write the JavaScript code for a Map/Reduce word-count job:

File name: wordcount.js

// map: split each input line into words and emit (word, 1) for every non-empty word
var map = function (key, value, context) {
    var words = value.split(/[^a-zA-Z]/);
    for (var i = 0; i < words.length; i++) {
        if (words[i] !== "") {
            context.write(words[i].toLowerCase(), 1);
        }
    }
};

// reduce: add up the counts emitted for each word
var reduce = function (key, values, context) {
    var sum = 0;
    while (values.hasNext()) {
        sum += parseInt(values.next(), 10);
    }
    context.write(key, sum);
};
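To see what the two functions do before submitting them to the cluster, here is a minimal local sketch in plain JavaScript. The `runLocally` harness, its in-memory `context` objects and the array-backed iterator are assumptions made for illustration; they only imitate the `context.write`, `hasNext` and `next` calls used above and are not part of the Hadoop console.

```javascript
// Same map and reduce logic as wordcount.js.
var map = function (key, value, context) {
    var words = value.split(/[^a-zA-Z]/);
    for (var i = 0; i < words.length; i++) {
        if (words[i] !== "") {
            context.write(words[i].toLowerCase(), 1);
        }
    }
};

var reduce = function (key, values, context) {
    var sum = 0;
    while (values.hasNext()) {
        sum += parseInt(values.next(), 10);
    }
    context.write(key, sum);
};

// Hypothetical local harness (not a Hadoop API): groups the map output by
// key, then feeds each group to reduce through a hasNext/next iterator.
function runLocally(lines) {
    var groups = Object.create(null);
    lines.forEach(function (line, offset) {
        map(offset, line, {
            write: function (k, v) {
                (groups[k] = groups[k] || []).push(v);
            }
        });
    });

    var result = Object.create(null);
    Object.keys(groups).forEach(function (k) {
        var pos = 0;
        var iterator = {
            hasNext: function () { return pos < groups[k].length; },
            next: function () { return groups[k][pos++]; }
        };
        reduce(k, iterator, {
            write: function (key, total) { result[key] = total; }
        });
    });
    return result;
}

var counts = runLocally(["The cat and the hat", "the end"]);
console.log(counts);
```

On the cluster, the grouping step in the middle is done by Hadoop's shuffle phase; the harness only stands in for it on a couple of short strings.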

After that you can upload this wordcount.js file to HDFS and verify it as below:

js> fs.put()
js> #ls
Found 2 items
drwxr-xr-x - avkash supergroup 0 2012-01-02 20:25 /user/avkash/.oink
-rw-r--r-- 3 avkash supergroup 418 2012-01-02 20:17 /user/avkash/wordcount.js

Now you can create a folder named “wordsfolder” and upload a few txt files. We will use this folder as the input folder for the word count map/reduce job.

js> #ls
Found 3 items
drwxr-xr-x - avkash supergroup 0 2012-01-02 20:25 /user/avkash/.oink
-rw-r--r-- 3 avkash supergroup 418 2012-01-02 20:17 /user/avkash/wordcount.js
drwxr-xr-x - avkash supergroup 0 2012-01-02 20:22 /user/avkash/wordsfolder
js> #ls wordsfolder

Found 3 items

-rw-r--r-- 3 avkash supergroup 1395667 2012-01-02 20:22 /user/avkash/wordsfolder/davinci.txt

-rw-r--r-- 3 avkash supergroup 674762 2012-01-02 20:22 /user/avkash/wordsfolder/outlineofscience.txt

-rw-r--r-- 3 avkash supergroup 1573044 2012-01-02 20:22 /user/avkash/wordsfolder/ulysses.txt

Now we can run the JavaScript Map/Reduce job to find the 15 most frequent words, in descending order of count, and write them to a folder named “top15words”:

js> from("wordsfolder").mapReduce("wordcount.js", "word, count:long").orderBy("count DESC").take(15).to("top15words")
View Log

If you open the “View Log” link above in a new tab, you can see the activity of the Map/Reduce job, which I have added at the end of this blog:

When the job completes, the “top15words” folder is created, as shown below:

js> #ls
Found 4 items
drwxr-xr-x - avkash supergroup 0 2012-01-02 20:26 /user/avkash/.oink
drwxr-xr-x - avkash supergroup 0 2012-01-02 20:31 /user/avkash/top15words
-rw-r--r-- 3 avkash supergroup 418 2012-01-02 20:17 /user/avkash/wordcount.js
drwxr-xr-x - avkash supergroup 0 2012-01-02 20:22 /user/avkash/wordsfolder

Now we can read the data from the “top15words” folder:

js> file = fs.read("top15words")
the 47430
of 25263
and 18664
a 14213
in 13125
to 12634
is 7876
that 7057
it 7005
on 5081
he 5037
with 4931
his 4314
as 4289
by 4119

Let’s parse the data also:

js> data = parse(file.data,"word, count:long")
[
0: {word: "the" count: 47430}
1: {word: "of" count: 25263}
2: {word: "and" count: 18664}
3: {word: "a" count: 14213}
4: {word: "in" count: 13125}
5: {word: "to" count: 12634}
6: {word: "is" count: 7876}
7: {word: "that" count: 7057}
8: {word: "it" count: 7005}
9: {word: "on" count: 5081}
10: {word: "he" count: 5037}
11: {word: "with" count: 4931}
12: {word: "his" count: 4314}
13: {word: "as" count: 4289}
14: {word: "by" count: 4119}
]
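The console's `parse` helper and the `orderBy(...).take(...)` steps are shown here only through their output. As a rough mental model, the sketch below does the equivalent work in plain JavaScript. `parseRows` and `topN` are hypothetical names, and both the whitespace-delimited input and the integer conversion for `long` columns are assumptions rather than documented behavior of the console.

```javascript
// Hypothetical stand-in for the console's parse(): turns delimited text
// into an array of objects according to a "name, name:type" schema string.
function parseRows(text, schema) {
    var columns = schema.split(",").map(function (part) {
        var pieces = part.trim().split(":");
        return { name: pieces[0], type: pieces[1] || "string" };
    });
    return text.split("\n").filter(function (line) {
        return line.trim() !== "";
    }).map(function (line) {
        var fields = line.trim().split(/\s+/);  // assumption: whitespace-delimited
        var row = {};
        columns.forEach(function (col, i) {
            // assumption: "long" columns are converted to integers
            row[col.name] = col.type === "long" ? parseInt(fields[i], 10) : fields[i];
        });
        return row;
    });
}

// Plain-JavaScript equivalent of orderBy("count DESC").take(n).
function topN(rows, n) {
    return rows.slice().sort(function (a, b) {
        return b.count - a.count;
    }).slice(0, n);
}

var sample = "of\t25263\nthe\t47430\nand\t18664\n";
var rows = parseRows(sample, "word, count:long");
var top2 = topN(rows, 2);
console.log(top2);
```

The sort runs on a copy (`slice()`), so the parsed rows keep their original order for any further processing.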

Finally, let’s create a line graph from the results:

See the original post for the Map/Reduce Job results.

Avkash Chauhan (@avkashchauhan) continued his Apache Hadoop series with Apache Hadoop on Windows Azure Part 9 – Using Interactive JavaScript for Data Visualization on 1/2/2012:

Apache Hadoop on Windows Azure is integrated with a web-based interactive JavaScript console, which allows you to:

- Perform HDFS operations, including uploading/reading files to/from the HDFS

- Run MapReduce programs from .js scripts or JAR files, and monitor their progress

- Run a Pig job specified using a fluent query syntax in JavaScript, and monitor its progress

- Visualize data with graphs built using HTML5

Once you have built your Hadoop cluster on Windows Azure, you can launch the Interactive JavaScript web console directly, as shown below:

After the Interactive JavaScript console is launched, you can enter a set of commands or your own JavaScript directly from the browser:

For our example we will do the following:

- Upload a data file to HDFS

- Run JavaScript to parse the data

- Run Graph Visualizer with parsed data

Let’s collect some real time data about mobile market share for 2011:

http://www.netmarketshare.com/operating-system-market-share.aspx?qprid=9&qpcustomb=1

Create a text file locally named mobilesharedata.txt:

iOS 52.10

JavaME 21.27

Android 16.29

Symbian 5.76

BlackBerry 3.51

Other 1.07

Upload this file to HDFS from the Interactive JavaScript console:

js> fs.put()

File uploaded.

Verify the file is uploaded:

js> #ls

Found 2 items

drwxr-xr-x - avkash supergroup 0 2012-01-02 20:37 /user/avkash/.oink

-rw-r--r-- 3 avkash supergroup 81 2012-01-02 20:52 /user/avkash/mobilesharedata.txt

Let’s read the data:

js> file = fs.read("/user/avkash/mobilesharedata.txt")
iOS 52.10
JavaME 21.27
Android 16.29
Symbian 5.76
BlackBerry 3.51
Other 1.07

Now parse the data as below (note that the long type drops the fractional part of each share):

js> data = parse(file.data, "OS, MarketShare:long")
[
0: { OS: "iOS" MarketShare: 52 }
1: { OS: "JavaME" MarketShare: 21 }
2: { OS: "Android" MarketShare: 16 }
3: { OS: "Symbian" MarketShare: 5 }
4: { OS: "BlackBerry" MarketShare: 3 }
5: { OS: "Other" MarketShare: 1 }
]

Set the graph visualization settings as below:

js> options = { title: "Mobile OS Market Share in 2011", orientation: 20, x: "OS", y: "MarketShare" }
{ title: "Mobile OS Market Share in 2011" orientation: 20 x: "OS" y: "MarketShare" }

Now let’s visualize the data in a bar graph:

js> graph.bar(data, options)

Now let’s visualize the data in a pie graph:

js> graph.pie(data, options)

Avkash Chauhan (@avkashchauhan) continued his Apache Hadoop series with Apache Hadoop on Windows Azure Part 8 – Hadoop Map/Reduce Administration from command line in Cluster on 1/1/2012:

After you create your Hadoop cluster in Windows Azure, you can remote into it to start Map/Reduce administration. Most of the processing logs and HDFS data are already available over ports 50030 and 50070; however, you can also run a number of standard Hadoop commands directly from the command line.

After you log in to your main node, you will see that a Hadoop Command Shell shortcut is already there, which launches the following command:

D:\Windows\System32\cmd.exe /k c:\apps\dist\bin\hadoop.cmd

Once you start the Hadoop Shell shortcut you will see the list of commands you can use as below:

For example, you can check the name node details by using the “hadoop namenode” command:

If you want to start a datanode, just run the “hadoop datanode” command:

Now let’s check whether any jobs are running, using the “hadoop job -list” command:

c:\apps\dist>hadoop job -list
0 jobs currently running
JobId State StartTime UserName Priority SchedulingInfo

Now let me start a Hadoop job and then we will check the job list again:

c:\apps\dist>hadoop job -list
1 jobs currently running
JobId State StartTime UserName Priority SchedulingInfo
job_201112310614_0004 4 1325469341874 avkash NORMAL NA

c:\apps\dist>hadoop job -status job_201112310614_0004
Job: job_201112310614_0004
file: hdfs://10.186.22.25:9000/hdfs/tmp/mapred/staging/avkash/.staging/job_201112310614_0004/job.xml
tracking URL: http://10.186.22.25:50030/jobdetails.jsp?jobid=job_201112310614_0004
map() completion: 1.0
reduce() completion: 1.0
Counters: 23
  Job Counters
    Launched reduce tasks=1
    SLOTS_MILLIS_MAPS=19420
    Launched map tasks=1
    Data-local map tasks=1
    SLOTS_MILLIS_REDUCES=15591
  File Output Format Counters
    Bytes Written=123
  FileSystemCounters
    FILE_BYTES_READ=579
    HDFS_BYTES_READ=234
    FILE_BYTES_WRITTEN=43645
    HDFS_BYTES_WRITTEN=123
  File Input Format Counters
    Bytes Read=108
  Map-Reduce Framework
    Reduce input groups=7
    Map output materialized bytes=189
    Combine output records=15
    Map input records=15
    Reduce shuffle bytes=0
    Reduce output records=15
    Spilled Records=30
    Map output bytes=153
    Combine input records=15
    Map output records=15
    SPLIT_RAW_BYTES=126
    Reduce input records=15

As a new job has been started, you will also see output appearing in the datanode window:


SQL Azure Database and Reporting

• George Huey published a new SQL Azure Federation Data Migration Wizard to CodePlex on 12/12/2011 (missed when published.) From the documentation:

…

SQLAzureFedMW will shard your data and migrate it to the correct SQL Azure Federation Members. SQLAzureFedMW will prompt you for the source server (can be SQL Server or SQL Azure). SQLAzureFedMW will display the tables of your source database letting you select which tables you want to migrate to SQL Azure Federation. When you have selected the tables you want to migrate, select the “SQL Azure Federation Target” tab. Connect to your Federation Server and SQLAzureFedMW will show you a list of Federations. Select the Federation you want to work with and then see a list of Federation Members displayed in the right hand list view. You can select as many federation members as you wish. When you have the federation members selected, hit Next and SQLAzureFedMW will use BCP to shard your data out to local data files. Note that SQLAzureFedMW is smart enough to know federated tables and lookup tables. Lookup tables will be exported once while the federated tables will be sharded. SQLAzureFedMW will then display three tabs:

- BCP results tab (here is where you check to make sure BCP commands executed well).

- BCP out commands (This is just an FYI so you can see how the tables were split)

- BCP upload command (This is just as an FYI so you can see where your data is going to go)

Note that the BCP out and BCP upload tabs (the second and third above) contain complete BCP commands, which you can copy and paste into a command window and run. Once you are ready, hit Next and SQLAzureFedMW will run those commands against your federation members.

One thing to note at this time is that SQLAzureFedMW expects the table names to match (between source and target) and the column types to be the same. I have noticed that certain data types cause BCP problems (it looks like a bug), so if you run into a BCP error, edit the SQLAzureFedMW.exe.config file, change the “-n” switch on the BCP commands to “-c” and try again.

You can get SQLAzureMW here: http://sqlazuremw.codeplex.com (select Downloads).

• Cihan Biyikoglu (@cihanbiyikoglu) published Introduction to Fan-out Queries: Querying Multiple Federation Members with Federations in SQL Azure on 12/29/2011:

Happy 2012 to all of you! 2011 has been a great year. We now have federations live in production with SQL Azure. So let’s chat about fan-out querying.

Many applications will need to query all data across federation members. With version 1 of federations, SQL Azure does not yet provide much help on the server side for this scenario. What I’d like to do in this post is show you how you can implement this and what tricks you may need when post-processing the results from your fan-out queries. Let’s drill in.

Fanning-out Queries

Fan-out simply means taking the query to execute and executing that on all members. Fan-out queries can handle cases where you’d like to union the results from members or when you want to run additive aggregations across your members such as MAX or COUNT.

The mechanics of executing these queries over multiple members are fairly simple. The Federations-Utility sample application demonstrates how a simple fan-out can be done on its Fan-out Query Utility page. The application code is available for viewing here. The app fans out a given query to all members of a federation. Here is the deployed version:

http://federationsutility-scus.cloudapp.net/FanoutQueryUtility.aspx

Help on how to use it is available on the page. The action starts with the click event of the “Submit Fanout Query” button. Let’s quickly walk through the code first:

- First the connection is opened and three federation properties are initialized for constructing the USE FEDERATION statement: federation name, federation key name and the minimum value to take us to the first member.

// open connection
cn_sqlazure.Open();

//get federation properties
str_federation_key_name = (string)cm_federation_key_name.ExecuteScalar();
str_federation_name = txt_federation_name.Text.ToString();

//start from the first member with the minimum value of the range
str_next_federation_member_key_value = (string) cm_first_federation_member_key_value.ExecuteScalar();

- In the loop, the app constructs and executes the USE FEDERATION routing statement using the above three properties, and each iteration connects to the next member in line.

//construct command to route to next member
cm_routing.CommandText = string.Format("USE FEDERATION {0}({1}={2}) WITH RESET, FILTERING=OFF", str_federation_name, str_federation_key_name, str_next_federation_member_key_value);

//route to the next member
cm_routing.ExecuteNonQuery();

- Once the connection to the member is established, the query is executed through the DataAdapter.Fill method. The great thing about DataAdapter.Fill is that it automatically appends or merges the rows into the DataSet’s DataTables, so as we iterate over the members there is no additional work to do in the DataSet.

//get results into dataset
da_adhocsql.Fill(ds_adhocsql);

- Once the query has finished executing in the current member, the app grabs the range_high of the current federation member. Using this value, the app navigates to the next federation member in the next iteration. The value is discovered through “select cast(range_high as nvarchar) from sys.federation_member_distributions”.

str_next_federation_member_key_value = cm_next_federation_member_key_value.ExecuteScalar().ToString();

- The loop condition simply expresses looping until the range_high value comes back NULL; calling ToString() on the DBNull that ExecuteScalar returns yields an empty string, which is what the comparison checks.

while (str_next_federation_member_key_value != String.Empty);
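The loop described in these steps can be summarized in a small, self-contained sketch. It is written in JavaScript rather than C# and replaces the database with an in-memory array, so `members`, `routeTo` and `fanOut` are hypothetical names, and the low-inclusive, high-exclusive range check is an assumption about how a key maps to a member. It is meant only to show the control flow: route to a member, run the query, append the rows, then use that member's range_high as the next routing key until it is null.

```javascript
// Mock federation members, ordered by range. A null rangeHigh marks the
// last member, mirroring the NULL range_high described above.
var members = [
    // Number.MIN_SAFE_INTEGER is a stand-in for the minimum key value.
    { rangeLow: Number.MIN_SAFE_INTEGER, rangeHigh: 100, rows: [{ blog_id: 5 }, { blog_id: 42 }] },
    { rangeLow: 100, rangeHigh: 200, rows: [{ blog_id: 150 }] },
    { rangeLow: 200, rangeHigh: null, rows: [{ blog_id: 999 }] }
];

// Hypothetical stand-in for the USE FEDERATION routing statement: returns
// the member whose range contains the key (assumed low inclusive, high exclusive).
function routeTo(key) {
    return members.filter(function (m) {
        return key >= m.rangeLow && (m.rangeHigh === null || key < m.rangeHigh);
    })[0];
}

// Runs the query on every member and appends the rows, in the way
// DataAdapter.Fill accumulates rows in the DataSet in the original code.
function fanOut(firstKey, query) {
    var results = [];
    var nextKey = firstKey;
    do {
        var member = routeTo(nextKey);
        results = results.concat(query(member.rows));
        nextKey = member.rangeHigh;   // the next member starts at this value
    } while (nextKey !== null);
    return results;
}

var all = fanOut(members[0].rangeLow, function (rows) {
    return rows.filter(function (r) { return r.blog_id > 10; });
});
console.log(all);
```

Like the original, this visits members one after another. Because each member's query is independent, the same calls could be issued in parallel once the list of range boundaries is known.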

Fairly simple!

What can you do with this tool? You can use it to deploy schema to federations. To deploy schema to all my federation members, I simply put a DDL statement in the query window, and it runs in all federation members:

DROP TABLE language_code_tbl;
CREATE TABLE language_code_tbl(
    id bigint primary key,
    name nchar(256) not null,
    code nchar(256) not null);

I can also maintain reference data in federation members with this tool. Simply do the necessary CRUD to get the data into a new shape, or simply delete and reinsert language_codes:

TRUNCATE TABLE language_code_tbl
INSERT INTO language_code_tbl VALUES(1,'US English','EN-US')
INSERT INTO language_code_tbl VALUES(2,'UK English','EN-UK')
INSERT INTO language_code_tbl VALUES(3,'CA English','EN-CA')
INSERT INTO language_code_tbl VALUES(4,'SA English','EN-SA')

Here is how to get the database names and database ids for all my federation members:

SELECT db_name(), db_id()

Here is what the output looks like from the tool;

Here are more ways to gather information from all federation members: This will capture information on connections to all my federation members;

SELECT b.member_id, a.*
FROM sys.dm_exec_sessions a CROSS APPLY sys.federation_member_distributions b

…Or I can do maintenance with stored procedures kicked off in all federation members. For example, here is how to update statistics in all my federation members:

EXEC sp_updatestats

For querying user data: well, I can do queries that ‘Union All’ the results for me, such as the following query, which gets blog_ids and blog_titles for everyone who blogged about ‘Azure’ from my blogs_federation. By the way, you can find the full schema at the bottom of the post under the title ‘Sample Schema’.

SELECT b.blog_id, b.blog_title
FROM blogs_tbl b JOIN blog_entries_tbl be
ON b.blog_id=be.blog_id
WHERE blog_entry_title LIKE '%Azure%'

I can also do aggregations as long as the grouping involves the federation key. That way I know all data that belongs to each group is in only one member. For the following query, my federation key is blog_id and I am looking for the count of blog entries about ‘Azure’ per blog.

SELECT b.blog_id, COUNT(be.blog_entry_title), MAX(be.created_date)
FROM blogs_tbl b JOIN blog_entries_tbl be
ON b.blog_id=be.blog_id
WHERE be.blog_entry_title LIKE '%Azure%'
GROUP BY b.blog_id

However, if I would like a grouping that does not align with the federation key, there is work to do. Here is an example: I’d like to get the count of blog entries about ‘Azure’ created between August (8th month) and December (12th month) of any year.

SELECT DATEPART(mm, be.created_date), COUNT(be.blog_entry_title)
FROM blogs_tbl b JOIN blog_entries_tbl be
ON b.blog_id=be.blog_id
WHERE DATEPART(mm, be.created_date) between 8 and 12
AND be.blog_entry_title LIKE '%Azure%'
GROUP BY DATEPART(mm, be.created_date)

The output contains a whole bunch of counts for Aug to Oct (8 to 10) from different members. Here is what it looks like:

How about something like the DISTINCT count of languages used for blog comments across our entire dataset? Here is the query;

SELECT DISTINCT bec.language_id
FROM blog_entry_comments_tbl bec JOIN language_code_tbl lc ON bec.language_id=lc.language_id

‘Order By’ and ‘Top’ clauses have similar issues. Here are a few examples. With ‘Order By’ I don’t get a fully sorted output. Ordering issues are very common, given that most apps run fan-out queries in parallel across all members. Here is a TOP 5 query returning many more than 5 rows:

SELECT TOP 5 COUNT(*)
FROM blog_entry_comments_tbl
GROUP BY blog_id
ORDER BY 1

I added the CROSS APPLY to sys.federation_member_distributions to show you the results are coming from various members. So the second column in the output below is the RANGE_LOW of the member the result is coming from.

The bad news is that there is post-processing to do on this result before I can use it. The good news is that there are many options that ease this type of processing. I’ll list a few and explore them in more detail in the future:

- LINQ to DataSet offers a great option for querying datasets. Some examples here.

- ADO.NET DataSets and DataTables offer a number of options for processing group-bys and aggregates. For example, DataColumn.Expression allows you to add aggregate expressions to your DataSet for this type of processing. Or you can use DataTable.Compute to calculate a rollup value.

There are others: some have gone as far as sending the resulting DataSet back to SQL Azure for post-processing with a second T-SQL statement. That is certainly costly, but they argue T-SQL is more powerful.
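To make the post-processing step concrete, here is a minimal sketch in plain JavaScript rather than LINQ or ADO.NET. The sample rows and the `mergeCounts` and `topByCount` helpers are made up for illustration. It shows the two fixes discussed above: partial counts for the same group are summed (COUNT is additive), and TOP with ORDER BY is re-applied once over the combined rows (descending in this sketch).

```javascript
// Rows as they might arrive from two members for the month/count query:
// the same month can appear once per member.
var fanOutRows = [
    { month: 8, count: 3 },   // from member 1
    { month: 9, count: 1 },   // from member 1
    { month: 8, count: 2 },   // from member 2
    { month: 10, count: 4 }   // from member 2
];

// COUNT is additive, so partial counts for the same group are summed.
function mergeCounts(rows) {
    var totals = {};
    rows.forEach(function (r) {
        totals[r.month] = (totals[r.month] || 0) + r.count;
    });
    return Object.keys(totals).map(function (m) {
        return { month: Number(m), count: totals[m] };
    });
}

// TOP n with ORDER BY has to be re-applied to the combined rows.
function topByCount(rows, n) {
    return rows.slice().sort(function (a, b) {
        return b.count - a.count;
    }).slice(0, n);
}

var merged = mergeCounts(fanOutRows);
var top2Months = topByCount(merged, 2);
console.log(merged);
console.log(top2Months);
```

The same merge works for SUM, and for MIN or MAX if the addition is swapped for the matching comparison. It does not work for distinct counts, which the post turns to next.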

So far we saw that it is fairly easy to iterate through the members and repeat the same query: this is fan-out querying. We saw a few sample queries whose results need no additional processing in ADO.NET. Once grouping and aggregates are involved, there is some processing to do.

The post won’t be complete without mentioning a class of aggregations that is harder to calculate: the non-additive aggregates. Here is an example: this query calculates a distinct count of languages in blog entry comments per month.

SELECT DATEPART(mm,created_date), COUNT(DISTINCT bec.language_id)
FROM blog_entry_comments_tbl bec JOIN language_code_tbl lc
ON bec.language_id=lc.language_id
GROUP BY DATEPART(mm,created_date)

The processing of DISTINCT is the issue here. Given this query, I cannot do any post-processing to calculate the correct distinct count across all members, because the same language may be counted in more than one member. Queries of this style lend themselves to centralized processing: the language ids of all comments first need to be gathered per month across all members, and then the distinct count can be calculated on that result set.

You would rewrite the TSQL portion of the query like this;

SELECT DISTINCT DATEPART(mm,created_date), bec.language_id
FROM blog_entry_comments_tbl bec JOIN language_code_tbl lc
ON bec.language_id=lc.language_id

The output looks like this;

With the results of this query, I can do a DISTINCT COUNT on the grouping of months. If we take the DataTable from the above query, in TSQL terms the post processing query would be like;

SELECT month, COUNT(DISTINCT language_id)
FROM DataTable
GROUP BY month

So to wrap up, fan-out queries are the way to execute queries across your federation members. With fan-out queries, there are 2 parts to consider: the part you execute in each federation member, and the final phase where you collapse the results from all members into a single result set. Does this sound familiar? If you were thinking map/reduce, you got it right! The great thing about fan-out querying is that it can be done completely in parallel: all the member queries are executed by separate databases in SQL Azure. The downside is that in certain cases you now need to consider writing 2 queries instead of 1, so there is some added complexity for some queries. In the future I hope we can reduce that complexity. Please let me know if you have feedback about that or anything else in this post; just comment on this blog post or contact me through the link that says ‘contact the author’.
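As an illustration of the two-phase DISTINCT processing described above, the following sketch does the final collapse step in plain JavaScript. The `memberResults` data and the `distinctCountByMonth` helper are hypothetical; each inner array stands for the DISTINCT (month, language_id) pairs one member might return.

```javascript
// Phase 1 output: DISTINCT (month, language_id) pairs from each member.
// The same pair can be returned by more than one member.
var memberResults = [
    [{ month: 8, language_id: 1 }, { month: 8, language_id: 2 }],
    [{ month: 8, language_id: 2 }, { month: 9, language_id: 1 }]
];

// Phase 2: COUNT(DISTINCT language_id) GROUP BY month over the union of
// all member results.
function distinctCountByMonth(results) {
    var seen = {};   // month -> set of language ids (object used as a set)
    results.forEach(function (rows) {
        rows.forEach(function (r) {
            seen[r.month] = seen[r.month] || {};
            seen[r.month][r.language_id] = true;
        });
    });
    var counts = {};
    Object.keys(seen).forEach(function (month) {
        counts[month] = Object.keys(seen[month]).length;
    });
    return counts;
}

var distinctCounts = distinctCountByMonth(memberResults);
console.log(distinctCounts);
```

In this sample, adding up per-member distinct counts for month 8 would give 2 + 1 = 3, while the correct answer over the union is 2, which is why the member query returns the pairs rather than the counts.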

…

*Sample Schema

Here is the schema I used for the sample queries above.

-- Connect to BlogsRUs_DB
CREATE FEDERATION Blogs_Federation(id bigint RANGE)
GO
USE FEDERATION blogs_federation (id=-1) WITH RESET, FILTERING=OFF
GO
CREATE TABLE blogs_tbl(
    blog_id bigint not null,
    user_id bigint not null,
    blog_title nchar(256) not null,
    created_date datetimeoffset not null DEFAULT getdate(),
    updated_date datetimeoffset not null DEFAULT getdate(),
    language_id bigint not null default 1,
    primary key (blog_id)
)
FEDERATED ON (id=blog_id)
GO
CREATE TABLE blog_entries_tbl(
    blog_id bigint not null,
    blog_entry_id bigint not null,
    blog_entry_title nchar(256) not null,
    blog_entry_text nchar(2000) not null,
    created_date datetimeoffset not null DEFAULT getdate(),
    updated_date datetimeoffset not null DEFAULT getdate(),
    language_id bigint not null default 1,
    blog_style bigint null,
    primary key (blog_entry_id,blog_id)
)
FEDERATED ON (id=blog_id)
GO
CREATE TABLE blog_entry_comments_tbl(
    blog_id bigint not null,
    blog_entry_id bigint not null,
    blog_comment_id bigint not null,
    blog_comment_title nchar(256) not null,
    blog_comment_text nchar(2000) not null,
    user_id bigint not null,
    created_date datetimeoffset not null DEFAULT getdate(),
    updated_date datetimeoffset not null DEFAULT getdate(),
    language_id bigint not null default 1,
    primary key (blog_comment_id,blog_entry_id,blog_id)
)
FEDERATED ON (id=blog_id)
GO
CREATE TABLE language_code_tbl(
    language_id bigint primary key,
    name nchar(256) not null,
    code nchar(256) not null
)
GO

Cihan Biyikoglu (@cihanbiyikoglu) updated his Federations: Building Scalable, Elastic, and Multi-tenant Database Solutions with SQL Azure TechNet wiki article on 12/30/2011 (missed when published):

Introduction: What are Federations?

Federations bring the sharding pattern into SQL Azure as a first-class citizen. The sharding pattern is used to build many of your favorite sites on the web, such as social networking sites, auction sites and scalable email applications like Facebook, eBay and Hotmail. By bringing the sharding pattern into SQL Azure, federations enable building scalable and elastic database tiers and greatly simplify developing and managing modern multi-tenant cloud applications.

The federations scalability model is something you are already familiar with. Imagine a canonical multi-tier application: these applications scale out their front and middle tiers for scalability. As the demand on the application varies, administrators add and remove instances of the front-end and middle-tier nodes to handle the variance in the workload. With the Windows Azure Platform this is easily achieved through easy provisioning of new capacity and the pay-as-you-go model of the cloud. Database tiers, however, have typically not supported the same elastic scale-out model. With federations, SQL Azure enables database tiers to scale out in a similar way: they can be elastically scaled out, much like the middle and front tiers of the application, based on application workload. Using federations, applications can expand and contract the number of nodes that service the database workload without requiring any downtime!

Figure 1: SQL Azure Federations can scale the database tier much like the front and middle tiers of your application.

Federations bring a great set of benefits to applications.

- Unlimited Scalability:

Federations offer scale beyond the capacity limits of a single SQL Azure database. Using federations, applications can scale from 10s to 100s of SQL Azure databases and exploit the full power of the SQL Azure cluster.

- Elasticity for the Best Economics:

Federations provide easy repartitioning of data without downtime to exploit elasticity for the best price-performance. Applications built with federations can adjust to variances in their workloads by repartitioning data. With online repartitioning combined with the pay-as-you-go model in SQL Azure, administrators of the application can easily optimize cost and performance by changing the number of databases/nodes they engage for their database workload at any given time. And no downtime is required for this change.

- Simplified Development and Administration of Scale-out Database Systems

Developing large-scale applications is greatly simplified with federations. Federations come with a robust programming and connectivity model for creating dynamic applications. With native tooling support for managing federations and with online repartitioning operations for orchestrating federations at runtime, federations greatly ease the management of databases at scale!

- Simplified Multi-Tenancy:

Multi-tenancy provides great efficiencies by increasing density and improving cost-per-tenant characteristics. However, a static decision on the placement of tenants rarely works for the long tail of tenants or for large customers that may hit the scale limitations of a single database. With federations, applications don’t have to make a static decision about tenant placement. Federations provide repartitioning operations for efficient management of tenant placement and re-placement, and can deliver this without any application downtime!

Figure 2: Federations provide a flexible tenancy model.

Who are Federations for?

Federations can help many types of database applications to scale. Here are a few examples:

- Software Vendors Implementing Multi-tenant SaaS Solutions:

Many on-premises applications are developed with a single-tenant-per-database model. For modern cloud applications, however, a flexible tenancy model is needed. A static layout of tenants requires overprovisioning, and with cloud infrastructures, single-node solutions may limit scaling across the spectrum of your tenants, from the long tail (very small customers) to the large head (very large customers). With federations, ISVs are not stuck with a static tenant layout. Federated database tiers can easily adapt to varying tenant types and workload characteristics and continue to grow as new tenants are acquired into the system.

Let’s look at an ISV providing a customer relationship management site. Some tenants start life small with a small customer portfolio, and other tenants may have a very large portfolio of customers. Some of these tenants may purchase the silver package, and others upgrade to the gold or deluxe editions as their sales activity grows. With federations, silver package tenants start life in a small, multi-tenant federation member, but can graduate to a dedicated federation member (a.k.a. the single-tenant-per-database model) when they upgrade to gold. With the deluxe package, tenants scale out over multiple federation members. Best of all, with federations, upgrades from silver to gold to deluxe require no downtime or application code changes!

- Web Scale Database Solutions

Applications on the web face capacity planning challenges every day to handle varying and growing traffic. Peaks, bursts, spikes or new flood of users... With federations, applications on the web can handle these variances in their workloads by expanding and shrinking the capacity they engage in SQL Azure.

For example, imagine a web site that hosts blogs; let’s call the site Blogs’R’Us. On any given day, users create many new blog entries, and others visit and comment on these blogs. Every day some of these blogs go viral; however, it is hard to predict which blogs will go viral on any given day. Placing these blogs on a static distribution layout across servers means that some servers will be saturated while other servers sit idle. With federations, Blogs’R’Us does not have to be stuck with a static partition layout. They can handle shifts in traffic with federation repartitioning operations, and they don’t need to take downtime to redistribute the data. As the workload grows, applications can continue to engage more nodes to provide unlimited scalability to the application, drawing on the 100s of nodes that are available worldwide through SQL Azure. With the pay-as-you-go model of SQL Azure combined with federations, applications also do not need to compromise on economics with a big upfront investment in computational resources; they can grow over time, just in time, with online repartitioning operations.

- NoSQL Applications

Applications built with NoSQL technologies can also benefit greatly from federations in SQL Azure. Federations bring a great set of NoSQL properties to the SQL Azure platform, such as the sharding pattern. With federations, SQL Azure adopts an eventual consistency model while preserving local consistency with full ACID transactions in federation members.

Another great benefit of federations is scaling out tempdb, the lightweight storage option that comes with every reliable SQL Azure database. Each SQL Azure database is a reliable, replicated, highly available database. Federation members are simply system-managed SQL Azure databases. With each federation member, applications also get a portion of the node’s TempDB. TempDB provides lightweight, high-performance local storage.

Federations provide all the power of the SQL Azure database for storing unstructured or semi-structured data through data types such as XML or binary types.

These are just some examples of the NoSQL gene embedded in SQL Azure through federations. You can find a detailed discussion of this topic here.

Federation Architecture

The federation implementation is extremely easy to work with. Here are a few concepts that will be helpful in understanding federations:

Federations: Federations are objects within a user database, just like other objects such as views, stored procedures or triggers. However, a federation object is special in one way: it allows parts of your schema and data to be scaled out of the database to other system-managed databases called federation members. The federation object represents the federation scheme, which contains the federation distribution key, data type and distribution style. SalesDB in figure 2 below represents a user database with federations. There can be many federations to represent the varying scale-out needs of subsets of data; for example, you can scale out orders in one federation and products and all their related objects in another federation under SalesDB.

Federation Members: Federations use system-managed SQL Azure databases, named federation members, to achieve scale-out. Federation members provide the computational and storage capacity for parts of the federation’s workload and data. The collection of all federation members in a federation represents the collection of all data in the federation. Federation members are managed dynamically as data is repartitioned. Administrators decide how many federation members are used at any point in time using federation repartitioning operations.

Figure 3: SalesDB is the root database with many federations.

Federation Root: Refers to the database that houses the federation object. SalesDB is the root database in figure 3 above. The root database is the central repository for information about the distribution of scaled-out data.

Federation Distribution Key: This is the key used for data distribution in the federation. In the federation definition, the distribution key is represented by 3 properties:

- A distribution key label that is used for referring to the key,

- A data type to specify the valid data domain for the distribution such as uniqueidentifier or bigint, and

- A distribution type to specify the method for distributing the data such as ‘range’.

Federation Atomic Unit: Represents all data that belongs to a single instance of a federation key. An AU (federation atomic unit) contains all rows in all federated tables with the same federation key value. AUs are guaranteed to stay together in a single federation member and are never SPLIT further into multiple members. Federation members can contain many atomic units. For example, with a federation distribution key such as tenant_id, an atomic unit refers to all rows across all federated tables for tenant_id=55.
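As a rough illustration of why an atomic unit stays in one member under range distribution, the sketch below routes rows to members by their federation key value. It is a minimal Python model, not SQL Azure's implementation: the split points, the `member_for` helper, and the sample rows are all hypothetical, and it assumes each member covers a low-inclusive, high-exclusive key range.

```python
from bisect import bisect_right

# Hypothetical split points for a bigint key; they define three members:
# member 0 holds keys below 100, member 1 holds [100, 200), member 2 holds 200 and up.
SPLIT_POINTS = [100, 200]


def member_for(key: int) -> int:
    """Return the index of the member whose range contains the key."""
    return bisect_right(SPLIT_POINTS, key)


# Rows from two federated tables; (table name, tenant_id) pairs are made up.
rows = [("orders", 55), ("customers", 55), ("orders", 150), ("orders", 200)]

# Group rows by the member they route to.
placement = {}
for table, tenant_id in rows:
    placement.setdefault(member_for(tenant_id), []).append((table, tenant_id))
```

Because routing depends only on the key value, every row with tenant_id=55 lands in the same member regardless of which federated table it belongs to.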

Figure 4: Federation Atomic Units

Federated Tables: Refer to tables that contain data that is distributed by the federation. Federated tables are created in federation members and contain a federation distribution key annotated with the FEDERATED ON(federation_distribution_key = column_name) clause when creating a table. You can find more details in the online documentation for the CREATE TABLE statement in SQL Azure. In figure 5 below, federated tables are marked light blue.

Reference Tables: Refer to tables that contain reference information to optimize lookup queries in federations. Reference tables are created in federation members and do not contain any FEDERATED ON annotation. Reference tables typically contain small lookup information useful for query processing such as zipcodes that is cloned to each federation member. In the figure below, reference tables are marked green.

Central Tables: Refer to tables that are created in the federation root for typically low traffic or heavily cached data that does not need to be scaled-out. Good examples are metadata or configuration tables that are accessed rarely by the application. In the figure below, central tables are marked orange.

Figure 5: Types of Tables in Federations. Federated tables are represented in blue. Reference tables are represented in green and central tables are represented in orange.

Online Repartitioning: One of the fundamental advantages of federations is the online repartitioning operations that can be performed on federation objects. Federations allow online repartitioning of data through ALTER FEDERATION T-SQL commands. By repartitioning Orders_Fed with a SPLIT operation, for example, administrators can move data to new federation members without downtime and expand computational capacity from 1 to 2 federation members. Federation members are placed in separate nodes and provide greater computational capacity to the application.

Figure 6: The SPLIT operation creates 2 new federation members in the background and replicates all the data in the original source member to the new destination members.

How to Create a Federation?

For the walkthrough here, we'll be using the SQL Azure Management Portal. To go to the management portal, you can click the "Manage" button under SQL Azure in the Windows Azure Management Portal OR simply visit your fully qualified SQL Azure server name in the browser with an HTTPS:// protocol header, like https://<sql_azure_server_name>.database.windows.net/.

Creating Federations: To create a federation in the SQL Azure Management Portal, you can click the New Federation icon on the database page.

Figure 7: Creating a federation.

You can use the following T-SQL to create a federation as well:

CREATE FEDERATION blogs_federation(id BIGINT RANGE)

You can find details of the CREATE FEDERATION statement in the SQL Azure documentation. Basically, CREATE FEDERATION creates the federation and its first federation member.

You can view the details of the layout of your federation in the federation’s details page or using the following T-SQL:

SELECT * FROM sys.federations fed JOIN sys.federation_member_distributions fedmd ON fed.federation_id=fedmd.federation_id order by range_low

Figure 8: Federation’s detail page.

Deploying Schema to Federations: Federation objects allow you to scale out parts of your schema to federation members. Federation members are regular SQL Azure databases and have their own private schemas. To deploy schema to federation members instead of the root database, you first need to connect to the federation member that you want to target. The USE FEDERATION statement allows you to do just that. You can find details on the USE FEDERATION statement in the SQL Azure documentation. Once you are connected to the federation member, you can deploy your schema to it using the same create-object statements you would use to create objects like tables, stored procedures, triggers and views. To connect and deploy your schema, you can click the Query > New Query action on the federation member.

Figure 9: Actions on federation members in the federation’s detail page.

With federations, you provide additional annotations to signify federated tables and the federation key. The FEDERATED ON clause is included in CREATE TABLE statements for this purpose. Tables are the only objects that require special annotation; all other objects can be deployed using the regular SQL Azure T-SQL syntax. Here is a sample T-SQL CREATE TABLE statement that creates a federated table:

USE FEDERATION blogs_federation(id=-1) WITH RESET, FILTERING=OFF

GO

CREATE TABLE blogs_tbl(

blog_id bigint primary key,

blog_title nvarchar(1024) not null,

...

) FEDERATED ON (id=blog_id)

Figure 10: Deploying schema using the online T-SQL editor

Scaling-out with Federations: Now that you have deployed your schema, you can scale out your federation to more members to handle more traffic. You can do that in the federation’s detail page using the SPLIT action in the management portal or using the following T-SQL statement:

ALTER FEDERATION blogs_federation SPLIT AT(id=100)

Repartitioning operations like SPLIT are performed online in SQL Azure, so even if the operation takes a while, no application downtime is required while it runs. You can find a detailed discussion of the online SPLIT operation here.
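To make the effect of a SPLIT on the federation layout concrete, here is a small Python model of range members before and after a split point is added. It only tracks range boundaries; it does not model the online data movement. The `member_ranges` and `split_at` helpers are hypothetical names, and infinite bounds stand in for the real limits of the key's data type.

```python
def member_ranges(split_points):
    """Return (low, high) pairs, one per member, for the given split points."""
    edges = [float("-inf"), *sorted(split_points), float("inf")]
    return list(zip(edges, edges[1:]))


def split_at(split_points, point):
    """Return a new list of split points with one more boundary added."""
    if point in split_points:
        raise ValueError("a member boundary already exists at this value")
    return sorted([*split_points, point])


layout = []                      # a new federation starts with a single member
before = member_ranges(layout)   # one member covering the whole key domain
layout = split_at(layout, 100)   # models ALTER FEDERATION ... SPLIT AT(id=100)
after = member_ranges(layout)    # two members that meet at 100
```

Each additional split point adds exactly one member, which matches the way the member count grows as more SPLIT operations are issued.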

Figure 11: SPLIT action on a federation

The federation page also provides detailed information on the progress of the SPLIT operation. If you refresh after submitting the SPLIT operation, you can monitor the federation operation through the federation page or using the following T-SQL:

SELECT * FROM sys.dm_federation_operations

Figure 12: Monitoring SPLIT action in the federation’s detail page.

As the application scales, more SPLIT points are introduced. Put another way, as the application workload grows, administrators SPLIT the federation into more federation members. Federations can easily power such large-scale applications and provide great tooling to help administrators orchestrate at scale.

Figure 13: Federation’s detail page

All of the above operations can be performed through T-SQL as well. For a full reference of T-SQL statements for federations, you can refer to the SQL Azure online documentation. You can also visit my blog for a detailed discussion of federation topics.

Further Information on Federations

You can find the official product documentation on federations here. You can also get updates on federations through the #sqlfederations tag or follow @cihangirb for announcements on federations.

The Windows Azure Training Kit contains a sample SQL Azure database with federations. The December update is available here. There is a detailed video training on federation concepts here: Large Scale Database Solutions on SQL Azure with Federations

Also find detailed deep dives on my blog: http://blogs.msdn.com/b/cbiyikoglu/. Here are a few blog posts that detail federations:

<Return to section navigation list>

MarketPlace DataMarket, Social Analytics and OData

No significant articles today.

<Return to section navigation list>

Windows Azure Access Control, Service Bus, Caching and Workflow

• Joseph Fultz wrote Windows Azure Caching Strategies for his Forecast Cloudy column and MSDN Magazine published it in the January 2012 issue:

My two-step with caching started back during the dot-com boom. Sure, I had to cache bits of data at the client or in memory here and there to make things easier or faster for applications I had built up until that point. But it wasn’t until the Internet—and in particular, Internet commerce—exploded that my thinking really evolved when it came to the caching strategies I was employing in my applications, both Web and desktop alike.

In this column, I’ll map various Windows Azure caching capabilities to caching strategies for output, in-memory data and file resources, and I’ll attempt to balance the desire for fresh data versus the desire for the best performance. Finally, I’ll cover a little bit of indirection as a means to intelligent caching.

Resource Caching

When referring to resource caching, I’m referring to anything serialized into a file format that’s consumed at the endpoint. This includes everything from serialized objects (for example, XML and JSON) to images and videos. You can try using headers and meta tags to influence the cache behavior of the browser, but too often the suggestions won’t be properly honored, and it’s almost a foregone conclusion that service interfaces will ignore the headers. So, giving up the hope that we can successfully cache slowly changing resource content at the Web client—at least as a guarantee of performance and behavior under load—we have to move back a step. However, instead of moving it back to the Web server, for most resources we can use a content delivery network.

Thinking about the path back from the client, there’s an opportunity between the front-end Web servers and the client where a waypoint of sorts can be leveraged, especially across broad geographies, to put the content closer to the consumers. The content is not only cached at those points, but more important, it’s closer to the final consumers. The servers used for distribution are known collectively as a content delivery/distribution network. In the early days of the Internet explosion, the idea and implementations of distributed resource caching for the Web were fairly new, and companies such as Akamai Technologies found a great opportunity in selling services to help Web sites scale. Fast-forward a decade and the strategy is more important than ever in a world where the Web brings us together while we remain physically apart. For Windows Azure, Microsoft provides the Windows Azure Content Delivery Network (CDN). Although a valid strategy for caching content and moving it closer to the consumer, the reality is that CDN is more typically used by Web sites that have one or both conditions of high scale and large quantities, or sizes, of resources to serve. A good post about using the Windows Azure CDN can be found on Steve Marx’s blog (bit.ly/fvapd7); he works on the Windows Azure team.

In most cases when deploying a Web site, it seems fairly obvious that the files need to be placed on the servers for the site. In a Windows Azure Web Role, the site contents get deployed in the package—so, check, I’m done. Wait, the latest images from marketing didn’t get pushed with the package; time to redeploy. Updating that content currently—realistically—means redeploying the package. Sure, it can be deployed to stage and switched, but that won’t be without delay or a possible hiccup for the user.

A straightforward way to provide an updatable front-end Web cache of content is to store most of the content in Windows Azure Storage and point all of the URIs to the Windows Azure Storage containers. However, for various reasons, it might be preferable to keep the content with the Web Roles. One way to ensure that the Web Role content can be refreshed or that new content can be added is to keep files in Windows Azure Storage and move them to a Local Resource storage container on the Web Roles as needed. There are a couple of variations on that theme available, and I discussed one in a blog post from March 2010 (bit.ly/u08DkV). …
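The "keep files in storage and move them to local storage as needed" idea can be sketched roughly as follows. This is a minimal Python illustration under loud assumptions: two ordinary directories stand in for the Windows Azure Storage container and the Local Resource directory, the `refresh_local_copy` name is hypothetical, and file modification times are used as the freshness check. It is not the approach from the column's linked blog post.

```python
import shutil
from pathlib import Path


def refresh_local_copy(source_dir: Path, local_dir: Path) -> list:
    """Copy files that are missing locally or older than the source copy.

    Returns the names of the files that were refreshed.
    """
    local_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    for src in sorted(source_dir.iterdir()):
        if not src.is_file():
            continue  # this sketch only handles a flat directory of files
        dst = local_dir / src.name
        if not dst.exists() or dst.stat().st_mtime < src.stat().st_mtime:
            shutil.copy2(src, dst)  # copy2 also carries over the modification time
            copied.append(src.name)
    return copied
```

Calling the function on a schedule, or when a request misses locally, would refresh only the changed files, so new marketing images could appear without redeploying the package.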

Read the entire story here.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

• Himanshu Singh reported Japan’s Top Social Networking Site Taps Windows Azure To Run Its Annual Online Christmas Event in a 1/3/2012 post to the Windows Azure blog:

Mobile usage in Japan is among the highest in the world, with over 90% of the population using mobile devices. More than 90% of these users have mobile data plans, which are commonly 3G-enabled. And while 75% of these mobile internet users are tapping into “social networking” sites from their phones, the top app being used isn’t Facebook, Twitter or even MySpace. It’s a Japan-based social networking site called mixi.

Founded in 2004, mixi has more than 21.6 million users, which accounts for roughly 80% of the social networking market in Japan. For the past three years, mixi has been working to create the largest online Christmas event. This year’s event, called mixi Xmas 2011, enables mixi users to sign up to share holiday messages and send virtual gifts or online coupons to one another.

This year’s event started in November and by the second day of the campaign, active users exceeded one million, with more than 2.5 million active users regularly participating.

Organizers credit a large part of their success to the use of Windows Azure and the Content Delivery Network (CDN), which has given them the ability to scale resources dynamically in response to spikes in usage and traffic.

Read more about this story in the press release (translated from Japanese), or read the original Japanese version here.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Brian Swan (@brian_swan) described Automating PHPUnit Tests in Windows Azure in a 1/3/2012 post to the [Windows Azure’s] Silver Lining blog:

To start the new year off, I’d like to follow up on a couple of posts I wrote last month: Thoughts on Testing OSS Applications in Windows Azure and Running PHPUnit in Windows Azure. In this post, I’ll show you how to deploy your PHPUnit tests with your application, have the tests run as a startup task, and have the results written to your storage account for analysis. Attached to this post is a .zip file that contains a skeleton project that you can use to automatically run PHPUnit tests when you deploy a PHP application to Azure. I’ll walk you through how to use the skeleton project, then provide a bit more detail as to how it all works (so you can make modifications where necessary).

However, first, a couple of high-level “lessons learned” as I worked through this:

- It’s all about relative directories. When you deploy an application to Windows Azure, there are no guarantees as to the location of your root directory. You know that it will be called approot, but you don’t know if that will be on the C:\, D:\, E:\, etc. drive (though, in my experience, it has always been E:\approot). In order to make sure your application and startup scripts will run correctly, you need to avoid using absolute paths (which seem to be common in the php.ini file).

- Know how to script, both batch scripts and Powershell scripts. I’m still relatively new to writing scripts, but they are essential to creating startup tasks in Azure. Powershell especially makes lots of handy Azure-specific information available through various cmdlets.
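The first lesson, building paths from the script's own location rather than from a fixed drive, can be illustrated with a short Python sketch. It mirrors what %~dp0 does in a batch file; the `beside_script` helper and the sample paths are hypothetical, and PureWindowsPath is used so the example behaves the same on any operating system.

```python
from pathlib import PureWindowsPath


def beside_script(script_path: str, name: str) -> str:
    """Build the path to `name` in the same directory as the given script."""
    return str(PureWindowsPath(script_path).parent / name)


# The same code finds php.exe whichever drive approot happens to land on.
for drive in ("C:", "D:", "E:"):
    script = drive + r"\approot\bin\phpunit.bat"
    print(beside_script(script, "php.exe"))
```

Nothing in the helper names a drive letter, which is the property the startup scripts need.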

Anyway, to use the attached skeleton project, here’s what you need to do:

1. Download and unzip the attached AzurePHPWebRole.zip file. You will find the following directory structure:

-AzurePHPWebRole
  -AzurePHPWebRole
    -bin
      -configurephp.ps1
      -install-phpmanager.cmd
      -runtests.ps1
      -setup.cmd
      -PHP
    -resources
      -WebPICmdLine
        -(Web PI .dll files)
    -tests
    -(application files)
    -(any external libraries)
    -diagnostics.wadcfg
    -web.config
  -ServiceDefinition.csdef

2. Copy your application files to the AzurePHPWebRole\AzurePHPWebRole directory. This is the application’s root directory. Your unit tests should be put in the tests directory.

3. Copy your local PHP installation to the AzurePHPWebRole\AzurePHPWebRole\bin\PHP directory. I’m assuming that you have PHPUnit installed as a PEAR package. If you followed the default installation of PEAR and PHPUnit, they will be included as part of your custom PHP installation. After copying your PHP installation, you’ll need to make two minor modifications:

a. Make sure that any paths in your php.ini file are relative (e.g. extension_dir=".\ext").

b. Edit your phpunit.bat file to eliminate the use of absolute paths. To do this, I’ve modified lines 1 and 7 to use %~dp0, which expands to the directory that contains the batch file, in the code below:

1: if "%PHPBIN%" == "" set PHPBIN=%~dp0php.exe
2: if not exist "%PHPBIN%" if "%PHP_PEAR_PHP_BIN%" neq "" goto USE_PEAR_PATH
3: GOTO RUN
4: :USE_PEAR_PATH
5: set PHPBIN=%PHP_PEAR_PHP_BIN%
6: :RUN
7: "%PHPBIN%" "%~dp0phpunit" %*

4. Generate a skeleton ServiceConfiguration.cscfg file with the following command (run from the AzurePHPWebRole directory):

cspack ServiceDefinition.csdef /generateConfigurationFile:ServiceConfiguration.cscfg /copyOnly

5. Edit the generated ServiceConfiguration.cscfg file. You will need to specify osFamily="2" and osVersion="*", as well as supply your storage account connection information:

<?xml version="1.0"?>
<ServiceConfiguration xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns:xsd="http://www.w3.org/2001/XMLSchema" serviceName="AzurePHPWebRole" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration" osFamily="2" osVersion="*">
  <Role name="AzurePHPWebRole">
    <ConfigurationSettings>
      <Setting name="Microsoft.WindowsAzure.Plugins.Diagnostics.ConnectionString" value="YourStorageAccountConnectionStringHere" />
    </ConfigurationSettings>
    <Instances count="1" />
  </Role>
</ServiceConfiguration>

6. Package your application for deployment. To create the .cspkg file that you need for deploying your application, run the following command from the AzurePHPWebRole directory:

cspack ServiceDefinition.csdef

7. Deploy your application. Finally, you can deploy your application. This tutorial will walk you through the steps: http://azurephp.interoperabilitybridges.com/articles/deploying-your-first-php-application-to-windows-azure#new_deploy.

After successful deployment of your application (and I’m assuming you are deploying to the staging environment), results of your tests will be written to your storage account in a blob container called wad-custom. Assuming that your tests pass, you are then ready to promote your deployment to the production environment.

How does it all work?

Here’s how it works: Windows Azure diagnostics can pick up any file you want it to and send it to your storage account. If you look at the ServiceDefinition.csdef file in the attached skeleton project, you’ll see this:

<LocalResources>
  <LocalStorage name="MyCustomLogs" sizeInMB="10" cleanOnRoleRecycle="false" />
</LocalResources>

That entry creates a local directory called MyCustomLogs when you deploy your project. If you also look at the diagnostics.wadcfg file in the skeleton project, you’ll see this:

<DataSources>
  <DirectoryConfiguration container="wad-custom" directoryQuotaInMB="128">
    <LocalResource name="MyCustomLogs" relativePath="customlogs" />
  </DirectoryConfiguration>
</DataSources>

That tells the Diagnostics module to look in the customlogs directory of the MyCustomLogs local resource and send the results to your storage account in a blob container called wad-custom. The startup tasks will write PHPUnit test results to the customlogs directory, which the Diagnostics module then transfers to your storage account. (For more information about Windows Azure diagnostics, see How to Get Diagnostics Info for Azure/PHP Applications Part 1 and Part 2.)

Of course, the tricky part is writing the scripts to run PHPUnit and write the results to customlogs. Going back to the ServiceDefinition.csdef file, you see it contains these lines:

<Startup>
  <Task commandLine="install-phpmanager.cmd" executionContext="elevated" taskType="simple" />
  <Task commandLine="setup.cmd" executionContext="elevated" taskType="simple" />
</Startup>

Those lines tell Windows Azure to run the install-phpmanager.cmd and setup.cmd scripts in order (taskType="simple" makes sure they run serially). Let’s look at these scripts.

The install-phpmanager.cmd script installs the PHP Manager for IIS:

@echo off
cd "%~dp0"
ECHO Starting PHP Manager installation... >> ..\startup-tasks-log.txt
md "%~dp0appdata"
cd "%~dp0appdata"
cd "%~dp0"
reg add "hku\.default\software\microsoft\windows\currentversion\explorer\user shell folders" /v "Local AppData" /t REG_EXPAND_SZ /d "%~dp0appdata" /f
"..\resources\WebPICmdLine\webpicmdline" /Products:PHPManager /AcceptEula >> ..\startup-tasks-log.txt 2>>..\startup-tasks-error-log.txt
reg add "hku\.default\software\microsoft\windows\currentversion\explorer\user shell folders" /v "Local AppData" /t REG_EXPAND_SZ /d %%USERPROFILE%%\AppData\Local /f
ECHO Completed PHP Manager installation. >> ..\startup-tasks-log.txt

The PowerShell cmdlets for PHP Manager are used to modify your PHP installation, specifically the include_path setting. (Remember, we don’t have guarantees about where our application root will be.) You can see how the cmdlets are used in the configurephp.ps1 script (which will get called from the setup.cmd script):

Add-PsSnapin PHPManagerSnapin
new-phpversion -scriptprocessor .\php
$include_path_setting = get-phpsetting -Name include_path
$include_path = $include_path_setting.Value
$current_dir = get-location
$new_path = '"' + $include_path.Trim('"') + ";" + $current_dir.Path + "\php\PEAR" + '"'
set-phpsetting -Name include_path -Value $new_path
$extension_dir = '"' + $current_dir.Path + "\php\ext" + '"'
set-phpsetting -Name extension_dir -Value $extension_dir

The setup.cmd script simply calls the configurephp.ps1 and runtests.ps1 scripts with an “Unrestricted” execution policy:

powershell -command "Set-ExecutionPolicy Unrestricted"
powershell .\configurephp.ps1
powershell .\runtests.ps1

The runtests.ps1 script puts PHP in the Path environment variable, gets the location of our MyCustomLogs resource, and runs PHPUnit (with the output written to the customlogs directory):

Add-PSSnapIn Microsoft.WindowsAzure.ServiceRuntime
[System.Environment]::SetEnvironmentVariable("PATH", $Env:Path + ";" + $pwd + "\PHP;")
cd ..
$rootpathelement = get-localresource MyCustomLogs
$customlog = join-path $rootpathelement.RootPath "customlogs\test.log"
phpunit tests >$customlog

That’s basically it. If you give this a try, please let me know how it goes. I’m willing to bet there are areas in which this whole process can be improved.
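For readers less familiar with PowerShell, the run-and-redirect step that runtests.ps1 performs can be approximated in Python as below. This is only a sketch: `run_and_log` is a hypothetical helper, the resource root is passed in as a plain directory instead of being looked up through the Azure service runtime, and any command can stand in for phpunit.

```python
import subprocess
import sys
from pathlib import Path


def run_and_log(command, resource_root, relative_path="customlogs/test.log"):
    """Run a command and write its output to a log file under resource_root.

    Returns the log file path and the command's exit code.
    """
    log_path = Path(resource_root) / relative_path
    log_path.parent.mkdir(parents=True, exist_ok=True)
    with log_path.open("w") as log:
        # Send both stdout and stderr to the log so failures are captured too.
        result = subprocess.run(command, stdout=log, stderr=subprocess.STDOUT)
    return log_path, result.returncode
```

A call such as `run_and_log([sys.executable, "-c", "print('ok')"], "some/local/dir")` writes the output to some/local/dir/customlogs/test.log; returning the exit code would also let a caller decide whether the staging deployment is fit to promote.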

Bruce Kyle reminded prospective Azure users that Spending Limits Highlight New Risk-Free Windows Azure Sign-Up in a 1/3/2012 post:

The December release of Windows Azure has unveiled a new Windows Azure site and sign-up experience. In the past, one of the biggest concerns and roadblocks was that you could overspend on your free trial.

The new Windows Azure Trial accounts and MSDN Accounts are now completely risk-free given the institution of “spending limits”. By default all new trial accounts and newly provisioned MSDN benefits are created with a spending limit of $0. That means that if you exceed the monthly allotments of gratis services, your services will automatically be shut down and your storage placed in read-only mode until the next billing cycle. Then you can redeploy your services and take advantage of that month’s allotment (if any remains).

We do require you to enter a credit card (which helps verify your identity as a legitimate Azure user), but it will not be charged under the new default spending limit.

To see the steps, see Jim O’Neill’s post The New, Improved No-Risk Windows Azure Trial. He shows how you can sign up in just a few steps.

Josh Holmes described Activating BizSpark Azure Accounts in a 1/2/2012 post:

A question that I’ve been asked a number of times recently is how to activate an MSDN Azure account and more specifically, how to do it with a BizSpark account. To make it easy, I thought I’d blog that here.

For an up to date list of benefits you should visit http://www.microsoft.com/windowsazure/msdn-benefits/ but currently it’s as follows:

Signing up for BizSpark

Starting up, if you are a start-up (defined as less than 3 years old, less than $1 million in revenue, privately held and producing technology as your primary monetization) you should be on BizSpark. BizSpark gives you access to all of the Microsoft technologies that you’d need to develop your applications such as Windows, Azure, Visual Studio, Office (in case you need to integrate with it) and more. Just go to http://www.bizspark.com and click Apply Now.

If you have an existing LiveID, you can use that but the reality is that I recommend that you create a specific LiveID for the start-up because what happens if the person who originally signed up leaves the company? Or is out on a day when something needs to be done on the account? For that reason, I recommend creating a specific account for your BizSpark management.

I recommend, partly because of the number of lawyers in my family, that you read the terms and conditions but at the end, if you agree, there are two individual agreements that you need to agree to before you click next.

One of those is the BizSpark Startup Agreement and the other is the EULA.

Once you fill out the rest of the wizard, it goes into a process on our end. If you are in Ireland, that registration goes through a two phase approval process. The first phase is with a Network Partner and the second phase is currently me.

Once you are signed up, you can log into My BizSpark and click on the Get your Free Software link which will show you a link to MSDN. Reality is that it’s just pointing to MSDN and you can go there directly if you like.

You’ll just need to sign in there with a LiveID that’s associated with a BizSpark member.

Adding new developers to a BizSpark Account

Quick side note is that I’ve also been asked a number of times how to add additional developers to a BizSpark startup. Find the Manage section of the left hand navigation and find the Members link underneath that. Then you put in the new developer’s name and email address. That does not have to be their LiveID – it can be any email address. There will be an acceptance link in the email that will require the person to sign in with their own LiveID to access the bits and all.

Activating Your Azure Benefits from MSDN

Once you sign into MSDN and go to the “My Account” section, you should see the “Windows Azure Platform” link. This link will take you to the Windows Azure signup process and walk you through a longish wizard. At the end of that, you will be able to leverage all of the benefits of MSDN on Azure.

One thing to warn you about at this point is that the Windows Azure signup does require a credit card to cover any overages. I recommend that you closely monitor your usage to make sure that you don’t go over.

Now, once you’re signed up for the Azure benefits, you can simply go to http://windows.azure.com to manage your account and deploy applications. The one portal serves as your management portal for your services, data, SQL Azure and any other services you’re signed up for such as Connect.

Have fun playing with Azure!

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

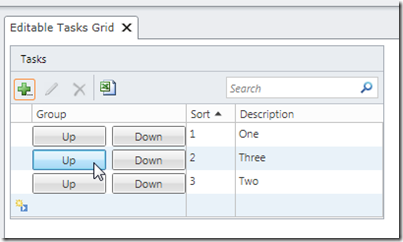

Michael Washington (@ADefWebserver) described a Visual Studio LightSwitch List Box That Can Be Ordered By The End-User

When you have a list of items that have a particular sort order, you may have the need to allow the end-user the ability to change the sort order. This article shows a solution.

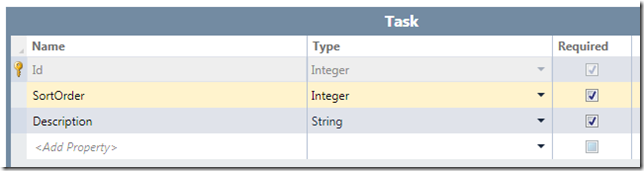

Tasks Table

We start off with a Task table.

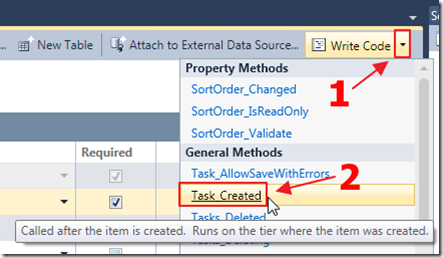

We override the Task_Created event to set a default value for SortOrder since it is a required field:

partial void Task_Created()
{
    // set the sort order
    this.SortOrder = 0;
}

We also override the Tasks_Inserted method to set the sort order after the record is inserted:

partial void Tasks_Inserted(Task entity)
{
    // Get all saved Tasks
    var colTasks = this.DataWorkspace.ApplicationData.Tasks.GetQuery().Execute();
    if (colTasks.Count() > 0)
    {
        // Set sort order to the highest found plus one
        entity.SortOrder =
            colTasks.OrderByDescending(x => x.SortOrder).FirstOrDefault().SortOrder + 1;
    }
    else
    {
        // set the sort order to number one
        entity.SortOrder = 1;
    }
}

Create The Screen

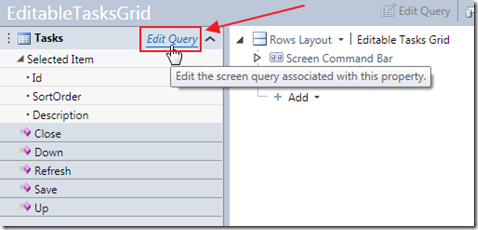

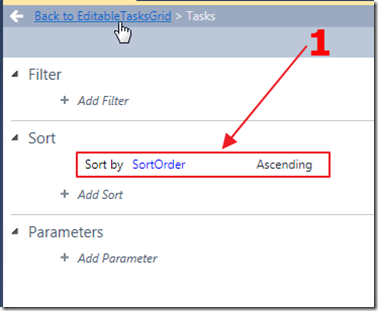

We create a Screen with the Tasks collection and select Edit Query.

We set the SortOrder for the collection.

Running The Application

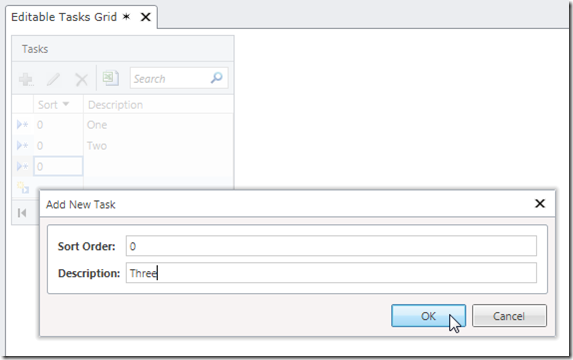

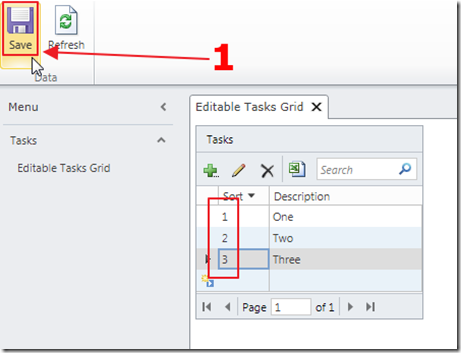

When we run the application, we see that the default value is set for each record…

…but when we click Save, the records will now have an incremental sort value.

Up And Down Buttons

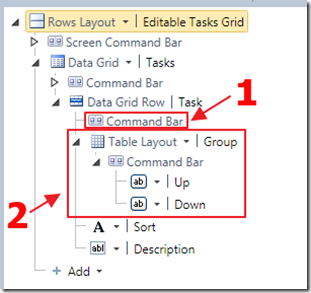

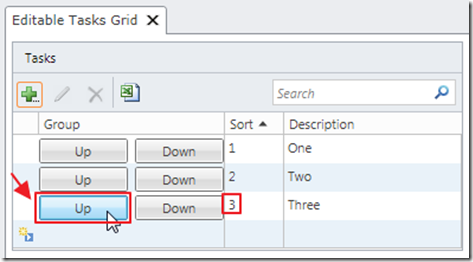

Next, we click on the Command Bar of the Data Grid Row, and add a Table Layout Group, and insert Up and Down buttons.

The following is the code used:

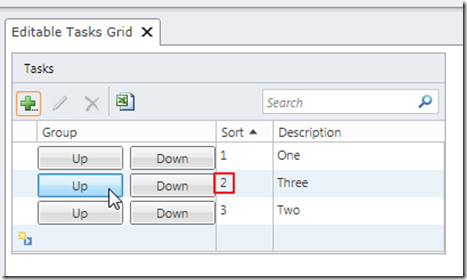

partial void Up_Execute()
{
    var SelectedTask = Tasks.SelectedItem;
    int intSelectedTaskSortOrder = SelectedTask.SortOrder;
    // Determine if there is a Task with a SortOrder
    // that is one less than the current one
    var TaskWithLowerSortOrder = (from Task in Tasks
                                  where Task.SortOrder < intSelectedTaskSortOrder
                                  orderby Task.SortOrder descending
                                  select Task).FirstOrDefault();
    if (TaskWithLowerSortOrder != null)
    {
        int intTaskWithLowerSortOrder = TaskWithLowerSortOrder.SortOrder;
        // Switch the SortIds
        TaskWithLowerSortOrder.SortOrder = intSelectedTaskSortOrder;
        SelectedTask.SortOrder = intTaskWithLowerSortOrder;
        Save();
        Tasks.Refresh();
        Tasks.SelectedItem = null;
    }
}

partial void Down_Execute()
{
    var SelectedTask = Tasks.SelectedItem;
    int intSelectedTaskSortOrder = SelectedTask.SortOrder;
    // Determine if there is a Task with a SortOrder
    // that is one higher than the current one
    var TaskWithHigherSortOrder = (from Task in Tasks
                                   where Task.SortOrder > intSelectedTaskSortOrder
                                   orderby Task.SortOrder
                                   select Task).FirstOrDefault();
    if (TaskWithHigherSortOrder != null)
    {
        int intTaskWithHigherSortOrder = TaskWithHigherSortOrder.SortOrder;
        // Switch the SortIds
        TaskWithHigherSortOrder.SortOrder = intSelectedTaskSortOrder;
        SelectedTask.SortOrder = intTaskWithHigherSortOrder;
        Save();
        Tasks.Refresh();
        Tasks.SelectedItem = null;
    }
}

When we run the application, we can change the sort order of the items using the buttons.
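Stripped of the LightSwitch plumbing, the ordering logic amounts to "assign the highest sort order plus one on insert, then swap sort orders with the nearest neighbor on Up or Down". The following Python sketch restates that idea outside LightSwitch; the `Task` dataclass and the `add_task` and `move` helpers are hypothetical stand-ins, not part of the article's project.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    sort_order: int = 0


def add_task(tasks, name):
    """Append a task whose sort order is the highest existing value plus one."""
    next_order = max((t.sort_order for t in tasks), default=0) + 1
    task = Task(name, next_order)
    tasks.append(task)
    return task


def move(tasks, selected, up):
    """Swap sort orders with the nearest task above (up=True) or below.

    Returns False when the selected task is already at that end of the list.
    """
    if up:
        lower = [t for t in tasks if t.sort_order < selected.sort_order]
        neighbor = max(lower, key=lambda t: t.sort_order, default=None)
    else:
        higher = [t for t in tasks if t.sort_order > selected.sort_order]
        neighbor = min(higher, key=lambda t: t.sort_order, default=None)
    if neighbor is None:
        return False
    selected.sort_order, neighbor.sort_order = neighbor.sort_order, selected.sort_order
    return True


tasks = []
first, second, third = (add_task(tasks, n) for n in ("write", "review", "ship"))
move(tasks, third, up=True)  # "ship" trades places with "review"
ordered = [t.name for t in sorted(tasks, key=lambda t: t.sort_order)]
```

Swapping values rather than renumbering the whole list means each move touches only two records, which is why the original code can save right after the swap.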

Download Code

The LightSwitch project is available at http://lightswitchhelpwebsite.com/Downloads.aspx


Windows Azure Infrastructure and DevOps

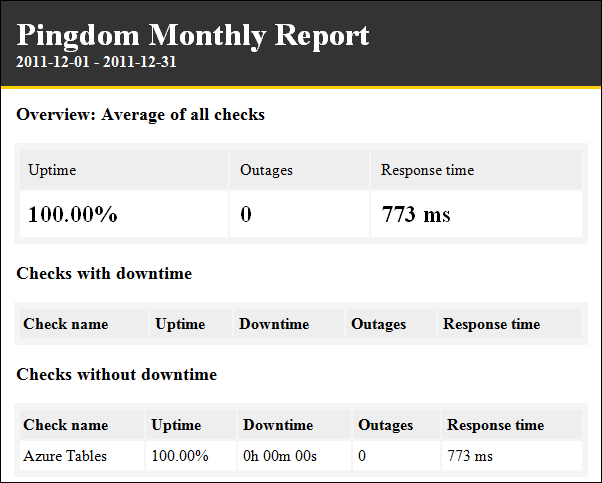

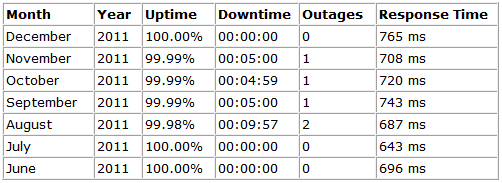

My (@rogerjenn) Uptime Report for my Live OakLeaf Systems Azure Table Services Sample Project: December 2011 post of 1/3/2012 starts with:

My live OakLeaf Systems Azure Table Services Sample Project demo runs two small Windows Azure Web role instances from Microsoft’s South Central US (San Antonio, TX) data center. Here’s its uptime report from Pingdom.com for December 2011:

…

This is the seventh uptime report for the two-Web role version of the sample project. Reports will continue on a monthly basis.

Mary Jo Foley (@maryjofoley) claimed “Microsoft has a number of new features on its Azure cloud roadmap that are slated to be out by spring 2012” in an introduction to her Microsoft's cloud roadmap for 2012: What's on tap article of 1/3/2012 for ZDNet’s All About Microsoft blog:

…

Here are a few of the deliverables on the roadmap that will help enable these scenarios:

- Consistent REST APIs for Windows Azure features and services, callable from any programming language (full SDK in Spring)

- SQL Azure Reporting Services (now a Q1 2012, rather than an end-of 2011 deliverable, I am hearing from one of my contacts)

- Ability to create a virtual private network (VPN) between on-premise servers and Windows Azure. (Note: I believe this is the Azure Connect offering, originally codenamed Project Sydney, which was supposed to be out before the end of 2011 in final form.)

- Ability to import and export large amounts of data by shipping files on a drive into Windows Azure blob storage

- Same availability promise (99.9%) for single and multiple instances

- Ability to mount/unmount more easily drives on running instances

- Support for more easily developing Azure apps not just on Windows, but also on Macs and Linux systems

- Support for the User Datagram Protocol (UDP) for streaming workloads and other application areas

- The ability to easily set up Wordpress and Drupal on Azure “without writing code”

- A preview of functionality allowing the creation, customization and management of private marketplaces (Note: I believe this is the codename “Roswell” technology about which I blogged late last year)

- Better Active Directory integration for migration: Both the ability to sign on using an on-premise Active Directory in Office 365, as well as technology that will help users migrate their line-of-business apps that are dependent on Active Directory to the cloud (supposedly “without making any changes”)

- Support for more easily uploading and sharing videos in a central depository across an enterprise, as well as the ability to easily stream to any device (including those running not just Silverlight, but also Flash, HTML5, iOS and Android)

…

I see the hand of Azure Application Corporate Vice President Scott Guthrie in some of these coming features, as well as on some of the clean-up work around tooling that’s happened lately around Windows Azure.

Read the full post here.

Mary Jo Foley (@maryjofoley) asserted “Microsoft is preparing to launch a new persistent virtual machine feature on its Azure cloud platform, enabling customers to host Linux, SharePoint and SQL Server there” in a deck for her Microsoft to enable Linux on its Windows Azure cloud in 2012 article of 1/2/2012 for ZDNet’s All About Microsoft blog:

This headline is not an error. I didn’t have one too many craft brews over the New Year’s weekend.

Microsoft is poised to enable customers to make virtual machines (VMs) persistent on Windows Azure, I’ve heard from a handful of my contacts who’ve asked not to be identified.

What does this mean? Customers who want to run Windows or Linux “durably” (i.e., without losing state) in VMs on Microsoft’s Azure platform-as-a-service platform will be able to do so. Microsoft is planning to launch a Community Technology Preview (CTP) test-build of the persistent VM capability in the spring of 2012, according to partners briefed by the company.

The new persistent VM support also will allow customers to run SQL Server or SharePoint Server in VMs, as well. And it will enable customers to more easily move existing apps to the Azure platform.

Windows Azure already has support for a VM role, but it’s not very useful at the moment. (I guess that explains Microsoft officials’ reticence to comment on any of my questions about Azure’s VM role support, in spite of my repeated requests.) …

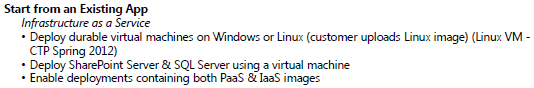

If you are still a doubter that this is coming, here’s a snippet from an Azure roadmap that one of my contacts shared with me which mentions the capability:

…

Read the entire article here.

Dhananjay Kumar (@debug_mode) described how to Create [a] Hosted Service using [the] Windows Azure Management API on 1/2/2012:

In this post I will discuss how to create a Hosted Service in Windows Azure using the Windows Azure Management API.

To create a Hosted Service, perform a POST operation on the URI below, replacing <subscription-id> with your own subscription ID.

https://management.core.windows.net/<subscription-id>/services/hostedservices

The request must carry the headers the Management API requires, including the x-ms-version header and a Content-Type of application/xml.

The request body must contain XML, with the elements in the same order as shown below.

    <?xml version="1.0" encoding="utf-8"?>
    <CreateHostedService xmlns="http://schemas.microsoft.com/windowsazure">
      <ServiceName>service-name</ServiceName>
      <Label>base64-encoded-service-label</Label>
      <Description>description</Description>
      <Location>location</Location>
      <AffinityGroup>affinity-group</AffinityGroup>
    </CreateHostedService>

On successful creation of the hosted service, status code 201 is returned. The response contains no body.

For more details, read the MSDN reference here.

To create a hosted service I have written the function below. It takes the following parameters:

- Service Name: the name of the service you want to create

- Label: the service label

- Description: the service description

- Location: the data center in which the service will be deployed

    public String CreateHostedService(String subscriptionId, String serviceName,
                                      String label, String description, String location)
    {
        XDocument payload = CreatePayloadToCreateService(serviceName, label, description, location);
        string operationUri = "https://management.core.windows.net/" + subscriptionId + "/services/hostedservices";
        String requestId = Invoke(payload, operationUri);
        return requestId;
    }

The function above calls two other functions:

- CreatePayloadToCreateService builds the payload, which is passed as XML in the POST operation.

- Invoke performs the actual HTTP request against the Windows Azure Management API.

The function below creates the payload and returns it as XML, using LINQ to XML to build it.

    private XDocument CreatePayloadToCreateService(String serviceName, String label,
                                                   String description, String location)
    {
        String base64LabelName = ConvertToBase64String(label);

        XNamespace wa = "http://schemas.microsoft.com/windowsazure";
        XElement xServiceName = new XElement(wa + "ServiceName", serviceName);
        XElement xLabel = new XElement(wa + "Label", base64LabelName);
        XElement xDescription = new XElement(wa + "Description", description);
        XElement xLocation = new XElement(wa + "Location", location);
        //XElement xAffinityGroup = new XElement(wa + "AffinityGroup", affinityGroup);

        XElement createHostedService = new XElement(wa + "CreateHostedService");
        createHostedService.Add(xServiceName);
        createHostedService.Add(xLabel);
        createHostedService.Add(xDescription);
        createHostedService.Add(xLocation);
        //createHostedService.Add(xAffinityGroup);

        XDocument payload = new XDocument();
        payload.Add(createHostedService);
        payload.Declaration = new XDeclaration("1.0", "UTF-8", "no");
        return payload;
    }

The other method used in the CreateHostedService function is Invoke. This function makes the HTTP request to the Windows Azure Management API.

    public String Invoke(XDocument payload, string operationUri)
    {
        HttpWebRequest httpWebRequest = Helper.CreateHttpWebRequest(new Uri(operationUri), "POST");

        using (Stream requestStream = httpWebRequest.GetRequestStream())
        {
            using (StreamWriter streamWriter = new StreamWriter(requestStream, System.Text.UTF8Encoding.UTF8))
            {
                payload.Save(streamWriter, SaveOptions.DisableFormatting);
            }
        }

        String requestId;
        using (HttpWebResponse response = (HttpWebResponse)httpWebRequest.GetResponse())
        {
            requestId = response.Headers["x-ms-request-id"];
        }
        return requestId;
    }

In this way you can create a hosted service. I hope this post is useful. Thanks for reading.
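The code above calls two helpers that the post does not show: ConvertToBase64String and Helper.CreateHttpWebRequest. The following is a minimal sketch of what they might look like, not the author's implementation. It rests on several assumptions: the label is encoded as UTF-8, the x-ms-version value is "2010-10-28", both helpers live in one hypothetical `Helper` class, and an optional management certificate parameter is added for authentication (the Management API authenticates requests with a client certificate, but how the author loads one is not shown).

```csharp
using System;
using System.Net;
using System.Security.Cryptography.X509Certificates;
using System.Text;

// Hypothetical helper class; only the method names come from the post.
public static class Helper
{
    // Assumed API version sent in the x-ms-version header.
    public const string ApiVersion = "2010-10-28";

    // Encodes the service label as Base64 (UTF-8 bytes assumed).
    public static string ConvertToBase64String(string value)
    {
        return Convert.ToBase64String(Encoding.UTF8.GetBytes(value));
    }

    // Builds the request with the method, content type and version header set.
    // Nothing is sent until the caller writes the body or reads the response.
    public static HttpWebRequest CreateHttpWebRequest(
        Uri uri, string method, X509Certificate2 certificate = null)
    {
        HttpWebRequest request = (HttpWebRequest)WebRequest.Create(uri);
        request.Method = method;
        request.ContentType = "application/xml";
        request.Headers.Add("x-ms-version", ApiVersion);

        // Attach the management certificate when one is supplied.
        if (certificate != null)
        {
            request.ClientCertificates.Add(certificate);
        }
        return request;
    }
}
```

With the certificate parameter left optional, the two-argument call in Invoke compiles unchanged. A real call to the Management API would still need a certificate that has been uploaded to the subscription.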

Chris Hoff (@Beaker) posted When A FAIL Is A WIN – How NIST Got Dissed As The Point Is Missed on 1/1/2012:

Randy Bias (@randybias) wrote a very interesting blog titled Cloud Computing Came To a Head In 2011, sharing his year-end perspective on the emergence of Cloud Computing and most interestingly the “…gap between ‘enterprise clouds’ and ‘web-scale clouds’”

Given that I very much agree with the dichotomy between “web-scale” and “enterprise” clouds and the very different sets of respective requirements and motivations, Randy’s post left me confused when he basically skewered the early works of NIST and their Definition of Cloud Computing:

This is why I think the NIST definition of cloud computing is such a huge FAIL. It’s focus is on the superficial aspects of ‘clouds’ without looking at the true underlying patterns of how large Internet businesses had to rethink the IT stack. They essentially fall into the error of staying at my ‘Phase 2: VMs and VDCs’ (above). No mention of CAP theorem, understanding the fallacies of distributed computing that lead to successful scale out architectures and strategies, the core socio-economics that are crucial to meeting certain capital and operational cost points, or really any acknowledgement of this very clear divide between clouds built using existing ‘enterprise computing’ techniques and those using emergent ‘cloud computing’ technologies and thinking.

Nope. No mention of CAP theorem or socio-economic drivers, yet strangely the context of what the document was designed to convey renders this rant moot.

Frankly, NIST’s early work did more to further the discussion of *WHAT* Cloud Computing meant than almost any person or group evangelizing Cloud Computing…especially to a world of users whose most difficult challenge is trying to understand the differences between traditional enterprise IT and Cloud Computing.

As I reacted to this point on Twitter, Simon Wardley (@swardley) commented in agreement with Randy’s assertions, but what I again found odd was the misplaced angst in the criterion of “success” vs. “FAIL” against which both Simon and Randy were measuring the NIST document:

Both Randy and Simon seem to be judging NIST’s efforts by their failure to extol the virtues, or the “WHY” versus the “WHAT,” of Cloud, as if NIST were doing a disservice by perpetuating aged concepts rooted in archaic enterprise practices rather than stretching boundaries, blazing trails and promoting the enlightened stance of “web-scale” cloud.

Well…

The thing is, as NIST stated in both the purpose and audience sections of their document, the “WHY” of Cloud was not the main intent (and frankly best left to those who make a living talking about it…)

From the NIST document preface:

1.2 Purpose and Scope

Cloud computing is an evolving paradigm. The NIST definition characterizes important aspects of cloud computing and is intended to serve as a means for broad comparisons of cloud services and deployment strategies, and to provide a baseline for discussion from what is cloud computing to how to best use cloud computing. The service and deployment models defined form a simple taxonomy that is not intended to prescribe or constrain any particular method of deployment, service delivery, or business operation.

1.3 Audience

The intended audience of this document is system planners, program managers, technologists, and others adopting cloud computing as consumers or providers of cloud services.

This was an early work (the initial draft was released in 2009, the final in late 2011), and when it was written, many people (Randy, Simon and myself included) were still finding the best route, words and methodology to verbalize the “Why.” And now it’s skewered as “mechanistic drivel.”*

At the time NIST was developing their document, I was working in parallel writing the “Architecture” chapter of the first edition of the Cloud Security Alliance’s Guidance for Cloud Computing. I started out including my own definitional work in the document but later aligned to the NIST definitions because they were generally in line with mine and were well on the way to engendering a good deal of conversation around standard vocabulary.

This blog post summarized the work at the time (prior to the NIST inclusion). I think you might find the author of the second comment on that post rather interesting, especially given how much of a FAIL this was all supposed to be…

It’s only now with the clarity of hindsight that it’s easier to take the “WHY,” and utilize the “WHAT” (from NIST and others, especially practitioners like Randy) in a manner that is complementary so we can talk less about “what and why” and rather “HOW.”