Adding Missing Rows to a SQL Azure Federation with the SQL Azure Federation Data Migration Wizard v1

My Loading Big Data into Federated SQL Azure Tables with the SQL Azure Federation Data Migration Wizard v1.0 post of 1/12/2012 described a problem uploading part of the data for a federation member database in its “Auto-sharding Larger Data Batches” section near the end. This post describes the process I used to determine which of the 398,000 source rows were missing so I could restart the upload process with data for the correct row. The correct data is that which doesn’t cause a primary key constraint conflict and doesn’t result in any missing rows in the resultset.

Update 1/18/2011 9:30 AM PST: Added another 7,958,995 rows to the WADFederation with SQLAzureFedMW v1.2 to reach about 80% full on members 1 and 2. See end of post.

Update 1/16/2012 9:15 AM PST: Completed an 8-million row upload in 10 hours without errors. “See the Investigating Strange Storage Values Reported by Federation Member Pop Ups” section at the end of the post.

Update 1/14/2012 8:45 AM PST: My initial approach wasn’t successful, but executing a MERGE operation succeeded in replacing the missing rows. See the “Executing a MERGE Command to Add Missing Rows” sections near the end of this post.

Background

The Loading Big Data into Federated SQL Azure Tables with the SQL Azure Federation Data Migration Wizard v1.0 post described initially loading 1,000 rows to the federation root database (AzureDiagnostics1), splitting that database into five additional federation members based on CounterId values of 1 through 6. This was limited to 1,000 rows so as to minimize the time required for partitioning but still deliver a reasonable number of rows (166 or 167) to each partition member. The initial Timestamp value of that rowset, created from a WADPerformanceCountersTable-1000rows.txt tab-delimited text file, was 2011-07-25 10:33:21.9432881.

After creating the six-member federation, I uploaded a second rowset created from a WADPerformanceCountersTable-Page-79.txt file with 398,000 rows of data for the time period that immediately preceded the 1,000 row upload. It’s last timestamp value was 2011-07-25 10:33:21.9432881, the same as that for the 1,000-row rowset. (There are several successive rows with identical Timestamp values.) This addition failed for federation member 4 after adding 50,000 rows.

The reason for adding batches of data in reverse chronological order is that I had previously downloaded approximately 8 GB of Windows Azure diagnostic data in 1-GB increments for bulk loading into an SQL Azure 2008 R2 SP1 database in ascending date order. This database was intended for testing uploads on scale similar to that which might be common for large enterprises. Adding later values assured that primary key constraint conflicts wouldn’t occur.

Determining the Federation Member with Missing Rows

SQLAzureFedMW reported Communication link failure errors for only one of the six federation members but didn’t identify the CounterId for offending member. Therefore, I executed the following query in the SQL Azure Management Portal’s query editor (opened by clicking the New Query button in the page header) to count the number of rows in each member:

USE FEDERATION [WADFederation] ([Id] = n) WITH FILTERING = OFF, RESET

GO

SELECT COUNT(*) FROM WADPerfCounters

GO

where n equaled 1, 2, 3, 4, 5 and 6. This resulted in the following row counts:

| CounterId | Row Count |

| 1 | 66,501 |

| 2 | 66,500 |

| 3 | 66,500 |

| 4 | 50,167 |

| 5 | 66,500 |

| 6 | 66,499 |

The member with CounterId = 4 was the obvious culprit. SQLAzureFedMW adds rows in batches of 10,000, as specified by the -b 10000 parameter of:

The first addition contributed 167 rows.

Determining the Starting PartitionKey and RowKey Values

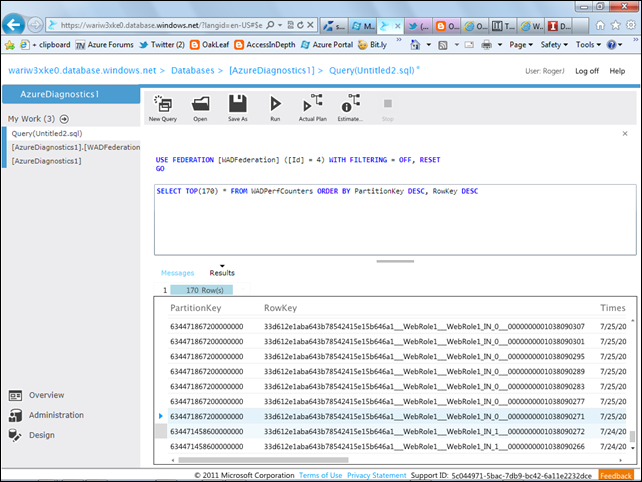

I believed that there would be a significant difference in Timestamp values in the rows that represented the junction of the two data sets, so I issued the following query in the query editing window for the member with Id = 4:

SELECT TOP(170) * FROM WADPerfCounters ORDER BY PartitionKey DESC, RowKey DESC

so as to include several rows of both resultsets in the grid, which appeared as follows:

The two selected rows had significant differences in PartitionKey and Timestamp values:

This led me to the conclusion that I could use the Timestamp value to insert rows into a new SQL Server table and then delete the first few rows whose PartitionKey and RowKey values overlapped existing values in the federation member.

The query grid truncates decimal fractions of Datetime2, 7 values, which it should not, so I executed the same query in SQL Server Management Studio (SSMS) 2008 R2 SP1:

The initial constraint is Timestamp >= 2011-07-24 23:12:33.9906722.

I then executed the following SELECT … INTO query in SSMS to create the source table for SQLAzureFedMW:

SELECT *

INTO WADPerfCounters4

FROM WADPerfCounters

WHERE CounterId = 4

which inserted 16,336 rows into the new table. 50,167 + 16,336 = 66,503, which is close to the 66,499 to 66,501 rows of the other five members.

Next, I executed

DELETE TOP(16323) FROM WADPerfCounters4

to remove all but a few rows uploaded to the federation member prior to the error.

I right-clicked the WADPerfCounters4 table icon and selected Edit First 200 Rows, which displayed the following:

The 20th row (highlighted above) corresponds to the second selected row in the preceding capture. I confirmed that the resultset was correctly ordered by PartitionKey and RowKey values by observing the values uniformly increased by 6. Thus, deleting the first 20 rows and repeating the upload operation should solve the problem.

I selected and deleted the first 20 rows for a row count of 33,667 and repeated the upload process with the 4th federation member selected in the SQL Azure Federation Target page:

I was surprised to encounter a primary key constraint conflict after a few seconds:

The problem might be due to this limitation noted in SQL Server 2008 R2 Books Online’s DELETE (Transact-SQL) topic:

The rows referenced in the TOP expression used with INSERT, UPDATE, or DELETE are not arranged in any order.

Interim Conclusion

It would probably be easier and safer to simply delete the rows added by the process that failed and then attempt addition of the entire rowset for the individual member.

To do so for this case, I wanted to delete the last 50,000 rows, which should leave the 167 rows added by the first 1,000-row upload to the initial member with the following statement:

USE FEDERATION [WADFederation] (Id = 4) WITH FILTERING = OFF, RESET

GO

DELETE TOP(50000) FROM WADPerfCounters ORDER BY PartitionKey DESC, RowKey DESC

GO

However, DELETE … FROM doesn’t support an ORDER BY clause. Even if it did, the rows deleted might not be those expected because they “are not arranged in any order.”

I then asked the SQL Azure Federations Team for their recommendations for solving this problem.

Executing a MERGE Command to Add Missing Rows

MSDN’s Inserting, Updating, and Deleting Data by Using MERGE topic explains SQL Server 2008 R2 new command as follows:

In SQL Server 2008, you can perform insert, update, or delete operations in a single statement using the MERGE statement. The MERGE statement allows you to join a data source with a target table or view, and then perform multiple actions against the target based on the results of that join. For example, you can use the MERGE statement to perform the following operations:

Conditionally insert or update rows in a target table. If the row exists in the target table, update one or more columns; otherwise, insert the data into a new row.

Synchronize two tables. Insert, update, or delete rows in a target table based on differences with the source data.

The MERGE syntax consists of five primary clauses:

The MERGE clause specifies the table or view that is the target of the insert, update, or delete operations.

The USING clause specifies the data source being joined with the target.

The ON clause specifies the join conditions that determine where the target and source match.

The WHEN clauses (WHEN MATCHED, WHEN NOT MATCHED BY TARGET, and WHEN NOT MATCHED BY SOURCE) specify the actions to take based on the results of the ON clause and any additional search criteria specified in the WHEN clauses.

The OUTPUT clause returns a row for each row in the target that is inserted, updated, or deleted.

For complete details on the syntax and rules, see MERGE (Transact-SQL).

This MERGE option requires:

- Adding a reference (source) table with the same structure as the federation member (target)

- Using SQLAzureFedMW to upload the rows from the original upload’s source table that have matching CounterId values (4)

- Executing the appropriate MERGE statement

Creating an Empty WADPerfCounters4 Reference Data Table in WADFederation Member 4

I navigated to federation member 4, opened a new query and then added a WADPerfCounters4 reference table to the member with the following query:

CREATE TABLE [WADPerfCounters4](

[PartitionKey] [bigint] NOT NULL,

[RowKey] [varchar](100) NOT NULL,

[Timestamp] [datetime2](7) NOT NULL,

[EventTickCount] [bigint] NOT NULL,

[DeploymentId] [varchar](50) NOT NULL,

[Role] [varchar](20) NOT NULL,

[RoleInstance] [varchar](20) NOT NULL,

[CounterName] [varchar](100) NOT NULL,

[CounterValue] [decimal](16,8) NOT NULL,

[CounterId] [int] NOT NULL,

CONSTRAINT [PK_WADPerfCounters] PRIMARY KEY CLUSTERED

(

[PartitionKey] ASC,

[RowKey] ASC,

[CounterId] ASC

)

)

The above is the same query as that of Creating a SQL Azure Federation in the Windows Azure Platform Portal’s step 15 without the FEDERATED ON (Id = CounterID) instruction:

Uploading Data to a Reference Table with SQLAzureFedMW v1

I launched SQLAzureFedMigWiz and specified the local AzureDiagnostics database’s WADPerfCounters4 as the Data Source table, which has 66,333 rows:

I selected the Id (4 to 5) member as the SQL Azure Federation Target table:

Note: The destination table is specified by the name of the source table in all cases.

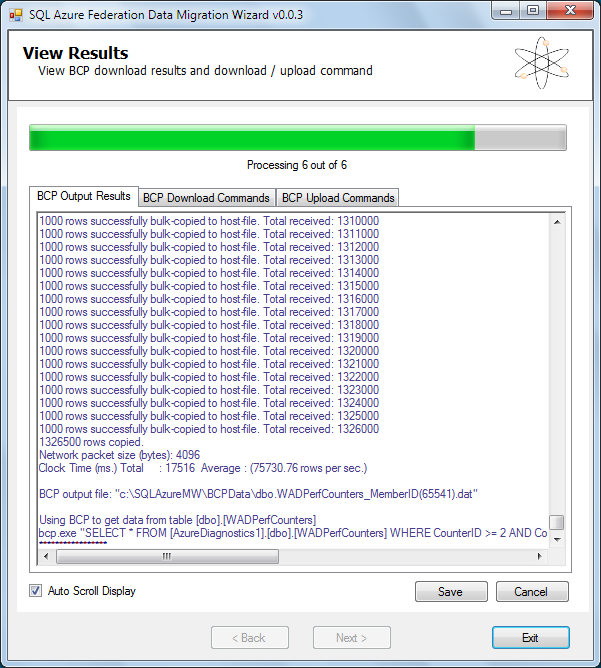

Here’s the data for the download from the local database:

and for the upload:

Here are the first few rows and columns of the table in the Portal’s UI:

Executing the MERGE Statement

Cihan Biyikoglu provided me with the the following MERGE statement:

MERGE INTO [WADPerfCounters] as target

USING WADPerfCounters4 as source

ON (target.[PartitionKey]=source.[PartitionKey]

AND target.[RowKey]=source.[RowKey]

AND target.[CounterId]=source.[CounterId])

WHEN NOT MATCHED BY TARGET THEN

INSERT ([PartitionKey], [RowKey], [Timestamp], [EventTickCount],

[DeploymentId], [Role], [RoleInstance], [CounterName], [CounterValue],

[CounterId])

VALUES(source.[PartitionKey], source.[RowKey], source.[Timestamp],

source.[EventTickCount], source.[DeploymentId], source.[Role],

source.[RoleInstance], source.[CounterName], source.[CounterValue],

source.[CounterId]);

Executing the statement produced in a few seconds the following message indicating a successful result:

I then verified that the total row count included the 167 rows added by the first operation with 1,000 total rows:

All other federation members have 66,500 +/- 1 rows, so correct recovery is confirmed.

Investigating Strange Storage Values Reported by Federation Member Pop Ups

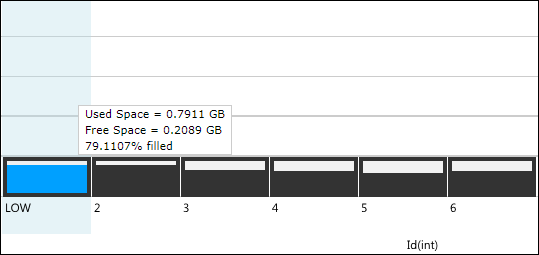

I noticed a strange variation in the space data reported by the pop ups for the boxes representing federation members:

The following table reports the row count, used space, free space and % filled values for each of the six presumably identically sized members:

| CounterId | Row Count | Used Space, GB | Free Space, GB | % Filled |

| 1 | 66,501 | 0.0204 | 0.9796 | 2.0386 |

| 2 | 66,500 | 0.0203 | 0.9797 | 2.0302 |

| 3 | 66,500 | 0.0174 | 0.9826 | 1.7395 |

| 4 | 66,500 | 0.0319 | 0.9681 | 3.1860 |

| 5 | 66,500 | 0.0159 | 0.9841 | 1.5892 |

| 6 | 66,499 | 0.0171 | 0.9829 | 1.7075 |

Part of the variation might be due to the relatively small size of the uploaded data, so I’m starting a 2 GB WADPerfCounters upload of 7,959,000 rows on 1/15/2012 at 8:30 AM PST in a single 10-hour batch. I’ve reported the results below.

SQLAzureFedMW created six BCP text files, each containing 1,326,500 rows with the same federation ID, in 00:01:24 and started uploading in parallel at 8:37 AM:

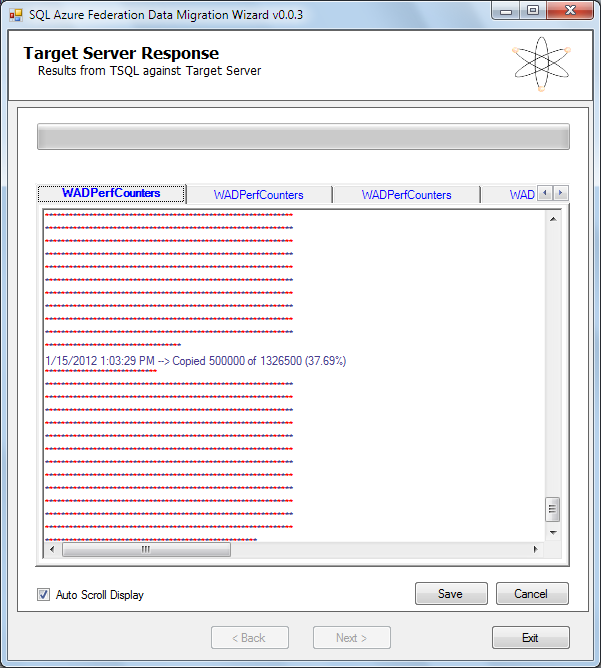

Here’s a capture of the federation member 1’s completion of the first batch of 500,000 rows on 1/15/2012 at 1:03 PM PST:

and the upload’s completion at 7:05 PM PST at a rate of 65.57 rows/sec:

The federation detail window’s member buttons show about 40% filled

The following table shows a similar pattern of differences in space statistics:

| CounterId | Row Count | Used Space, GB | Free Space, GB | % Filled |

| 1 | 1,393,001 | 0.4057 | 0.5943 | 40.5724 |

| 2 | 1,393,000 | 0.4057 | 0.5943 | 40.5716 |

| 3 | 1,393,000 | 0.3427 | 0.6573 | 34.2659 |

| 4 | 1,393,000 | 0.3378 | 0.6672 | 33.7830 |

| 5 | 1,393,000 | 0.3131 | 0.6869 | 31.3148 |

| 6 | 1,392,999 | 0.3321 | 0.6679 | 33.2146 |

The only varchar(n) field in the table that varies significantly in length is CounterName:

| CounterID | CounterName | Length |

| 1 | \Network Interface(Microsoft Virtual Machine Bus Network Adapter _2)\Bytes Sent/sec | 84 |

| 2 | \Network Interface(Microsoft Virtual Machine Bus Network Adapter _2)\Bytes Received/sec | 88 |

| 3 | \ASP.NET Applications(__Total__)\Requests/Sec | 46 |

| 4 | \TCPv4\Connections Established | 31 |

| 5 | \Memory\Available Mbytes | 25 |

| 6 | \Processor(_Total)\% Processor Time | 36 |

The pattern of Used Space and % Filled data follows the relative length of the CounterName, which might explain the differences. It’s clear that differences in average lengths of rows could play an important part in designing a sharding strategy that minimizes monthly cost.

Update 1/18/2011: Added another 7,958,995 rows to the WADFederation with SQLAzureFedMW v1.2 to reach about 80% full on members 1 and 2:

Note: The second group of 20 tab-separated values files used for this upload didn’t have the uniform 398,000 rows per page of the first group, as described in the Generating Big Data for Use with SQL Azure Federations and Apache Hadoop on Windows Azure Clusters post of 1/8/2012. The reason for this difference is clear at present.

The federation is now ready for performance testing of fan-out queries with the online Fan-Out Query Utility as described in Cihan Biyikoglu’s Introduction to Fan-out Queries (PART 1): Querying Multiple Federation Members with Federations in SQL Azure post of 12/29/2011. Stay tuned for a new post on this topic.

0 comments:

Post a Comment