Windows Azure and Cloud Computing Posts for 5/16/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Dedicated to TechEd North America 2011 Day 1

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

No significant articles today.

<Return to section navigation list>

SQL Azure Database and Reporting

Zane Adam announced “Project Austin” for SQL Azure in a 5/15/2011 post:

We are continuing our innovation in the Windows Azure Platform and releasing high-value new features and enhancements to our public cloud services at a frequent cadence. Today at TechEd I’m excited to make a few new announcements:

For AppFabric, this past month we have just released the Windows Azure AppFabric Caching service, and major enhancements to the Access Control service. At TechEd today we announced two new capabilities for both developers and IT Pros:

- We released today new features and enhancements as part of the Windows Azure AppFabric May CTP which adds to the Service Bus more comprehensive pub/sub messaging and enables new scenarios on Windows Azure platform. These scenarios include:

- Async Cloud Eventing – Distribute event notifications to occasionally connected clients (e.g. phones, remote workers, kiosks, etc);

- Event-driven SOA – Loose coupling to enable easy evolution of messaging topology over time; and

- Intra-App Messaging – Load leveling and load balancing for building highly scalable, resilient applications.

- We also announced capabilities coming in the Windows Azure AppFabric June CTP, which will provide the AppFabric Application Manager and Developer Tools that make it easy for developers to build, deploy, manage and monitor multi-tier (web, business logic, database) applications as a single logical entity. This CTP will also enable getting these benefits when running Windows Communication Foundation (WCF) and Windows Workflow Foundation (WF) services on AppFabric.

For SQL Azure we also recently released a new Import/Export feature as a CTP. At TechEd today we announced a set of new developer and IT Pro enhancements:

- A new capability (Codename “Austin”) which will make Microsoft StreamInsight’s complex event processing capabilities (available in SQL Server today) available as a service on the Windows Azure platform. This allows customers and partners to build event-driven applications where the analysis of the events is performed in the cloud. This is in private CTP for now, but will be opened up for public CTP later in H2 on the SQL Azure Labs site.

- We are integrating the import/export features into the management portal. These features help simplify archiving SQL Azure databases and provide migration between SQL Server on-premises and SQL Azure. This makes it significantly easier to move databases back and forth between on-premises and cloud. Additionally, this enables new hybrid scenarios to extend on-premises data beyond the firewall to the cloud and reach both web and mobile users. By exporting an on-premises database to the cloud and utilizing SQL Azure Data Sync, data will be truly available and kept up to date anywhere – in any of Microsoft’s global datacenters, customer datacenters, and in branch offices for scenarios such as retail point of sale systems.

- We will also provide an enhanced management experience through the portal, including enhancements to the web based database manager (formerly called “Project Houston”) for additional schema management, and a new service for managing SQL Azure databases via web services with an OData endpoint.

I’d also like to briefly talk a little more about Codename “Austin”, which adds another dimension to our growing portfolio of cloud data services. To learn more about StreamInsight, you can start here. Codename “Austin” now offers the capability for creating event-driven systems in the cloud that have high data rates, continuous queries, and low-latency requirements. Industries such as manufacturing, utilities, and web analytics use StreamInsight to identify meaningful trends and patterns as they happen to trigger immediate response actions. Some example scenarios include:

- Collecting data from manufacturing applications (e.g. real-time events from plant-floor devices and sensors);

- Financial trading applications (e.g. monitoring and capitalizing on current market conditions with very short windows of opportunity);

- Web analytics (e.g. immediate click-stream pattern detection and response with targeted advertising); and

- “Smart grid” management (e.g. infrastructure for managing electric grids and other utilities, such as immediate response to variations in energy to minimize or avoid outages or other disruptions of service).

Complementary to BI, complex event processing (CEP) enables real time insight into vast volumes of streaming data; while BI enables analytics and insight into a set of existing data to inform future decision making. Taking StreamInsight into the cloud with Codename “Austin” provides the opportunity to use this as a service instead of implementing this yourself, but more importantly, be able to collect and process events from anywhere on the planet and derive trends from a vastly increased series of events since that data is sent to the cloud.
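To make the idea of a continuous query over streaming events more concrete, here is a minimal Python sketch of a tumbling-window average, the kind of aggregation a CEP engine evaluates over a stream. It is not StreamInsight's API: the event shape, the function names, and the threshold check are assumptions made for illustration, and a real engine would evaluate the query incrementally as events arrive rather than over a finished list.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical event shape: (timestamp in seconds, sensor id, reading).
Event = Tuple[float, str, float]
WindowKey = Tuple[int, str]


def tumbling_window_average(
    events: Iterable[Event], window_seconds: float
) -> Dict[WindowKey, float]:
    """Average the readings per sensor in fixed, non-overlapping time windows."""
    sums: Dict[WindowKey, float] = defaultdict(float)
    counts: Dict[WindowKey, int] = defaultdict(int)
    for timestamp, sensor_id, reading in events:
        # Every event belongs to exactly one window, identified by its index.
        key = (int(timestamp // window_seconds), sensor_id)
        sums[key] += reading
        counts[key] += 1
    return {key: sums[key] / counts[key] for key in sums}


def windows_over_threshold(
    averages: Dict[WindowKey, float], threshold: float
) -> List[WindowKey]:
    """Return the (window index, sensor id) pairs whose average exceeds the threshold."""
    return sorted(key for key, average in averages.items() if average > threshold)
```

A plant-floor scenario like the one listed above could feed sensor readings into `tumbling_window_average` and raise an alert for each key returned by `windows_over_threshold`.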

To read more on the AppFabric announcements you can read the post on the AppFabric Team Blog and visit www.appfabric.net.

To read more on the latest about the SQL Azure announcements at TechEd, check out the SQL Azure team blog.

We continue to listen to your feedback and come out with new and exciting enhancements to our cloud services. So check out the new capabilities and let us know what you think!

Steve Yi described the SQL Azure May 2011 Update in a 5/16/2011 post to the SQL Azure team blog:

Announced earlier today at TechEd, there are several key updates to the SQL Azure service that I wanted to share with you. Zane Adam covered these at a high level on his blog this morning as well as talking about improvements in AppFabric. The theme of this service release was to continue improving on making SQL Azure databases easier to manage, and the service enhancements go a long way towards making automation and keeping track of geo-distributed deployments more convenient.

For the May 2011 service update, there are four key improvements the engineering teams have been hard at work on:

- SQL Azure Management REST API – a web API for managing SQL Azure servers.

- Multiple servers per subscription – create multiple SQL Azure servers per subscription.

- JDBC Driver – updated database driver for Java applications to access SQL Server and SQL Azure.

- DAC Framework 1.1 – making it easier to deploy databases and in-place upgrades on SQL Azure.

Let’s go through these one by one in a little more detail. For deeper technical details you can read more in the MSDN documentation here.

SQL Azure Management REST API: With the latest release, you can use a web API to programmatically manage SQL Azure servers and configure their firewall rules. While managing the servers via the Windows Azure developer portal is straightforward enough, doing this via an API provides the ability to automate these tasks. Many SQL Azure solutions created by our partner ISVs create new databases or add a firewall rule when onboarding new customers. This API makes scenarios such as this much more convenient and efficient. The REST API we’ve implemented utilizes standard and open web protocols to make it easy to use from a variety of programming platforms.
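As a rough illustration of the onboarding scenario, the Python sketch below builds (but does not send) an HTTP request that would add a firewall rule. The post does not give the endpoint details, so the host, port, path layout, `x-ms-version` header value, and XML element names are all assumptions to be checked against the MSDN documentation; authentication is omitted entirely.

```python
import urllib.request
from xml.sax.saxutils import escape

# Assumption: base address of the management endpoint (not stated in the post).
MANAGEMENT_BASE = "https://management.database.windows.net:8443"


def build_firewall_rule_request(
    subscription_id: str,
    server_name: str,
    rule_name: str,
    start_ip: str,
    end_ip: str,
) -> urllib.request.Request:
    """Build a PUT request describing a firewall rule; the caller decides when to send it."""
    # Assumption: the rule is addressed as a sub-resource of the server.
    url = (
        f"{MANAGEMENT_BASE}/{subscription_id}/servers/"
        f"{server_name}/firewallrules/{rule_name}"
    )
    # Assumption: element names are illustrative, not the documented schema.
    body = (
        "<FirewallRule>"
        f"<StartIpAddress>{escape(start_ip)}</StartIpAddress>"
        f"<EndIpAddress>{escape(end_ip)}</EndIpAddress>"
        "</FirewallRule>"
    ).encode("utf-8")
    request = urllib.request.Request(url, data=body, method="PUT")
    request.add_header("Content-Type", "application/xml")
    request.add_header("x-ms-version", "1.0")  # assumed API version header
    return request
```

Keeping request construction separate from sending makes the automation easy to test and lets an onboarding script log exactly what it is about to change.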

Multiple servers per subscription: You can now create multiple SQL Azure servers per subscription, making it easier to manage multiple database deployments across different servers – whether they’re in the same datacenter, or a geo-distributed deployment across worldwide Windows Azure platform datacenters.

JDBC Driver: Java developers can download the updated driver here. The SQL Server JDBC Driver 3.0, a Type 4 JDBC driver, is now available. This version fully supports both SQL Server and SQL Azure and is free of charge. It enables on-premises Java applications to communicate with SQL Azure to make data available in the cloud, and it lets you deploy a Java app to Windows Azure and utilize SQL Azure as the underlying data store. More information on Windows Azure cross-platform capabilities is here.

DAC Framework 1.1: At the end of March I put up a post about the new import/export feature for SQL Azure that makes migrating databases between on-premises SQL Server and SQL Azure pretty simple, and will be tightly integrated into the database tools shipping with the next version of SQL Server (“Denali”). Both schema and data are packaged together into a .bacpac file format (and no, I wasn’t involved in the naming of that file extension :) .

The improvements to the DAC Framework in 1.1 take this a step further by enabling in-place upgrades of SQL Azure databases, changing the database schema as necessary while still preserving the data. Very cool. Used in conjunction with SQL Azure Data Sync, synchronizing data across on-premises and cloud creates very compelling opportunities to extend data from on-premises to reach users on the web, phone, tablets, and in next generation web apps via AJAX and jQuery.

Over the next few weeks I’ll post more updates and examples of the new service enhancements. Leave a comment and let me know which of these features you’re most interested in learning more about.

The SQL Server Team posted Microsoft Introduces the Cloud-Ready Information Platform on 5/16/2011:

TechEd North America is upon us today and we couldn’t be more thrilled. Microsoft continues to evolve and invest in the Information Platform and today during the TechEd Foundational Session, Microsoft SQL Server: The Data and BI Platform for Today and Tomorrow, Quentin Clark, Corporate Vice President, unveiled the Microsoft Cloud-Ready Information Platform.

The data explosion is happening at every level across every imaginable device, application and individual. Meanwhile IT needs to balance the proliferation of applications, globalization, increasingly powerful commodity hardware, demand for accessible insights, and new form factors such as the cloud, appliances and mobile devices. And they have to do this with an uptime and level of compliance that is simply expected.

The Cloud-Ready Information Platform brings customers a versatile database and business intelligence platform that will help them tackle the data explosion and evolve into the future through integrated private and public cloud offerings, optimized appliances, complete and scalable data warehouse offerings, scalable end-to-end business intelligence, and of course continued investments in the SQL Server database software.

So what does this mean? Well, it means you can start to break free from tradition and move the business forward with mission critical confidence, breakthrough insight and cloud on your terms.

- Mission-Critical Confidence

The cloud-ready information platform will protect an organization’s infrastructure – getting you the nines and performance you need at the right price, especially for mission critical workloads, with the new availability solution, SQL Server AlwaysOn and blazing-fast performance with Project “Apollo.” With Microsoft, customers reduce the need to trade off uptime for security patching – SQL Server continues to lead major database vendors with the fewest number of vulnerabilities [nist.org] and in turn this reduces the need to sacrifice uptime for patching. Customers who bet on Microsoft get more than a trusted platform; they get a trusted business partner and a huge ecosystem of experienced vendors.

- Breakthrough Insight

Customers will quickly unlock breakthrough insights across thousands of users through highly interactive web-based data visualizations with Project “Crescent” and managed self-service analytics with PowerPivot all unified with the new BI semantic model. Insights are backed by credible consistent data made possible by new Data Quality Services and complete data warehousing solutions including Parallel Data Warehouse and Fast Track.

- Cloud On Your Terms

Additionally, SQL Server will offer organizations the agility to quickly create and scale solutions that solve challenges and fuel new business opportunity from server to private or public cloud linked together by common tools—build once, deploy and manage wherever with SQL Server Developer Tools code name “Juneau.”

What does cloud-ready mean for you?

SQL Server Code Name “Denali” will help customers bridge applications and workloads from traditional servers to private cloud to public cloud. Customers can take advantage of private and public clouds by scaling on demand with flexible deployment options, a common set of developer tools, and solutions to extend the reach of data across private and public cloud environments. “Denali” will deliver key features that help customers move to the cloud when they are ready and without the need to rewrite or retool investments.

- Scale on demand with flexible deployment options: A common architecture which spans traditional, private cloud and public cloud environments giving customers the ability to scale beyond the constraints of any one environment for maximum flexibility in deployments

- Fast time to market: A range of options for rapidly provisioning resources and reducing IT burden including Fast Track reference architectures to build private cloud solutions, pre-configured and optimized SQL Server appliances for ready-made solutions, and public cloud data services with SQL Azure

- Common set of tools across on-premises and cloud: Integrated set of developer tools and management tools for developing and administering applications across private cloud and public cloud giving developers and IT professionals maximum productivity, faster time to solution and lower on-ramp to building cloud solutions. Also, enhancements to the Data-tier Application Component (DAC) introduced in SQL Server 2008 R2 further simplify the management and movement of databases from on premises to cloud.

- Solutions to extend the reach of data: Support for technologies such as SQL Azure Data Sync to synchronize data across private and public cloud environments, OData to expose data through open feeds to power multiple user experiences and Windows Azure Marketplace DataMarket to monetize data or consume from multiple data providers.

Get ready for the evolution

The next SQL Server Code Name “Denali” CTP is coming this summer! Sign up today for the notification and be among the first to test the exciting new features and functionality.

Steve Yi listed SQL Azure And Data Services at TechEd 2011: What’s Coming This Week in a 5/16/2011 post to the SQL Azure Team Blog:

TechEd is this week! May 16-19 to be exact, in Atlanta. Unfortunately I won’t be there. I was at InterOp earlier this week in Vegas (I’ll send a recap of that in the next few days), so I’ll be sitting this one out, catching up on some work, and starting work on an idea for a cloud application that I’ve been thinking about for a while.

My colleague, Tharun Tharian, will be there so I encourage you to say hi if you see him. Of course he’ll be joined by the SQL Azure, DataMarket, and OData engineering teams delivering the latest on the respective services and technologies, and also manning the booth to answer your questions. You’ll find them hanging out at the Cloud Data Services booth if you want to chat with them during the conference.

For those of you going, I encourage you to check out the SQL Azure and OData sessions listed below, either live or over the web. I also encourage you to attend either Quentin Clark’s or Norm Judah’s foundational session right after the keynote to learn more about how to bridge on-premises investments with the Windows Azure platform, and how SQL Server “Denali” and SQL Azure will work together in both the services offered and updates in the tooling and development experience. You may even hear some new announcements about SQL Azure and other investments in cloud data services.

Enjoy the conference!

Foundational Sessions:

FDN04 | Microsoft SQL Server: The Data and BI Platform for Today and Tomorrow

- Speaker(s): Quentin Clark

- Monday, May 16 | 11:00 AM - 12:00 PM | Room: Georgia Ballroom 3

FDN05 | From Servers to Services: On-Premise and in the Cloud

- Speaker(s): Norm Judah

- Monday, May 16 | 11:00 AM - 12:00 PM | Room: Sidney Marcus Auditorium

SQL Azure Sessions:

DBI403 | Building Scalable Database Solutions Using Microsoft SQL Azure Database Federations

- Speaker(s): Cihan Biyikoglu

- Monday, May 16 | 3:00 PM - 4:15 PM | Room: C201

DBI210 | Getting Started with Cloud Business Intelligence

- Speaker(s): Pej Javaheri, Tharun Tharian

- Monday, May 16 | 4:45 PM - 6:00 PM | Room: B213

COS310 | Microsoft SQL Azure Overview: Tools, Demos and Walkthroughs of Key Features

- Speaker(s): David Robinson

- Tuesday, May 17 | 10:15 AM - 11:30 AM | Room: B313

DBI323 | Using Cloud (Microsoft SQL Azure) and PowerPivot to Deliver Data and Self-Service BI at Microsoft

- Speaker(s): Diana Putnam, Harinarayan Paramasivan, Sanjay Soni

- Tuesday, May 17 | 1:30 PM - 2:45 PM | Room: C208

DBI314 | Microsoft SQL Azure Performance Considerations and Troubleshooting

- Wednesday, May 18 | 1:30 PM - 2:45 PM | Room: B312

DBI375-INT | Microsoft SQL Azure: Performance and Connectivity Tuning and Troubleshooting

- Speaker(s): Peter Gvozdjak, Sean Kelley

- Wednesday, May 18 | 3:15 PM - 4:30 PM | Room: B302

COS308 | Using Microsoft SQL Azure with On-Premises Data: Migration and Synchronization Strategies and Practices

- Speaker(s): Mark Scurrell

- Thursday, May 19 | 8:30 AM - 9:45 AM | Room: B213

DBI306 | Using Contained Databases and DACs to Build Applications in Microsoft SQL Server Code-Named "Denali" and SQL Azure

- Speaker(s): Adrian Bethune, Rick Negri

- Thursday, May 19 | 8:30 AM - 9:45 AM | Room: B312

DataMarket & OData Sessions:

DEV308 | Creating and Consuming OData Services

- Tuesday, May 17 | 3:15 PM - 4:30 PM | Room: B402

COS307 | Building Applications with the Windows Azure DataMarket

- Speaker(s): Christian Liensberger, Roger Mall

- Wednesday, May 18 | 3:15 PM - 4:30 PM | Room: B312

DEV325 | Best Practices for Building Custom Open Data Protocol (OData) Services with Windows Azure

- Speaker(s): Alex James

- Thursday, May 19 | 1:00 PM - 2:15 PM | Room: C211

Development Sessions:

DEV312 | Code First Development in EF 4.1

- Tuesday, May 17 | 10:15 AM - 11:30 AM | Room: C307

DEV313 | Entity Framework 4 & Beyond: Building Real-World Apps

- Wednesday, May 18 | 10:15 AM – 11:30 AM | Georgia Ballroom 1

DEV207 | Introducing SQL Server Developer Tools, Codename “Juneau”

- Wednesday, May 18 | 3:00 PM - 4:15 PM | Room: B406

DEV314 | SQL Server Developer Tools, Codename “Juneau” & EF: Best Friends Forever

- Wednesday, May 18 | 5:00 PM - 6:15 PM | Georgia Ballroom 3

Interactive Sessions

DEV374-INT | OData Unplugged

- Wednesday, May 18 | 1:30 PM - 2:45 PM | B303

Product Demo Stations (all week during Expo hours)

- Entity Framework & SQL Server Developer Tools, Codename “Juneau”

- Data in the Cloud (OData, SQL Azure, Data Market)

<Return to section navigation list>

Marketplace DataMarket and OData

See Steve Yi listed SQL Azure And Data Services at TechEd 2011: What’s Coming This Week in a 5/16/2011 post to the SQL Azure Team Blog in the SQL Azure Database and Reporting section above.

<Return to section navigation list>

Windows Azure AppFabric: Access Control, WIF and Service Bus

The AppFabric Team sported a new blog URL when Announcing the Windows Azure AppFabric CTP May and June Releases on 5/16/2011:

Today at the TechEd conference we announced that we have released enhancements to Service Bus as part of the Windows Azure AppFabric CTP May release, and the upcoming release of the AppFabric Developer Tools and Application Manager as part of the Windows Azure AppFabric CTP June release.

Service Bus is already a production service supported by a full SLA. We also have a CTP version of the service showcasing future enhancements in our LABS previews environment. Today we have released the Windows Azure AppFabric CTP May release that adds more comprehensive pub/sub capabilities to the Windows Azure platform which enhance and enable new scenarios.

The Service Bus enhancements include capabilities that enable more advanced pub/sub messaging through Queues with a durable store and Topics that enable subscriptions. You can learn more on these capabilities in this blog post: Introducing the Windows Azure AppFabric Service Bus May 2011 CTP. [See below.]

In addition, these enhancements enable connectivity to Service Bus from any platform or operating system through the REST/HTTP API. For example, it is possible for Java and PHP applications to connect to the Service Bus using the REST/HTTP API.

We are also adding more videos and code samples to the Windows Azure AppFabric Learning Series available on CodePlex regarding these capabilities. Here is the list of videos on these capabilities that have already been released, or are coming soon:

- An Introduction to the Windows Azure AppFabric Service Bus

- An Introduction to Windows Azure AppFabric

- Coming Soon: An Introduction to Service Bus Queues

- Coming Soon: An Introduction to Service Bus Topics

- Coming Soon: An Introduction to Service Bus Relay

- Coming Soon: How to use Service Bus Topics + Code Sample

- Coming Soon: How to use Service Bus Queues + Code Sample

- Coming Soon: How to use Service Bus Relay + Code Sample

- Coming Soon: How to connect to Service Bus from Java and PHP applications + Code Samples

In addition, we announced at TechEd that the upcoming Windows Azure AppFabric CTP June release will introduce new capabilities that make it easy for developers to build, deploy, manage and monitor multi-tier applications (across web, business logic and database tiers) as a single logical entity.

These new capabilities consist of:

- AppFabric Developer Tools, which are enhancements to Visual Studio that enable you to visually design and build end-to-end applications on the Windows Azure platform.

- AppFabric Application Manager, which provides runtime capabilities that enable automatic deployment, management and monitoring of the end-to-end application, and is supported by visual monitoring and analytics from within the cloud management portal.

- Composition Model, which is a set of .NET Framework extensions for composing applications on the Windows Azure platform. This builds on the familiar Azure Service Model concepts and adds new capabilities for describing and integrating the components of an application. The Composition Model is created by the AppFabric Developer Tools and used by the AppFabric Application Manager.

This CTP will also enable and make it easy to build, deploy, manage and monitor Windows Communication Foundation (WCF) and Windows Workflow Foundation (WF) services on AppFabric.

You can learn more by watching the Composing and Managing Applications with Windows Azure AppFabric video which we added to the Windows Azure AppFabric Learning Series available on CodePlex.

We will share more details on these capabilities with the release of the Windows Azure AppFabric June CTP.

The enhancements to Service Bus are available on our LABS previews environment at: http://portal.appfabriclabs.com/. So be sure to login and start checking out these new capabilities.

You should also download the new Windows Azure AppFabric SDK V2.0 CTP – May Update in order to be able to use these capabilities. Please remember that there are no costs associated with using the CTPs, but they are not backed by any SLA. We would really like to get your feedback on these new capabilities showcased in the CTP, so make sure to ask questions and provide feedback on the Windows Azure AppFabric CTP Forum.

For questions and feedback on the production Service Bus please use the Connectivity for the Windows Azure Platform Forum.

To learn more about Windows Azure AppFabric:

- Visit the Windows Azure AppFabric website

- Visit the Windows Azure AppFabric Developer page

- Read the Windows Azure AppFabric Overview Whitepaper

- Watch the videos and code samples on the Windows Azure AppFabric Learning Series available on CodePlex

If you have not signed up for Windows Azure AppFabric yet, be sure to take advantage of our free trial offer and get started today!

Read the MSDN Library’s Windows Azure AppFabric CTP May Release topic.

Clemens Vasters (@clemensv) of the AppFabric Team posted Introducing the Windows Azure AppFabric Service Bus May 2011 CTP on 5/16/2011:

A lot of partners and customers we talk to are telling us that they think of Service Bus as one of the key differentiators of the Windows Azure platform because it enables customers to build and interconnect applications that reflect the reality of where things stand with regard to moving workloads to cloud infrastructures: Today and for years to come, applications and solutions will straddle desktop and devices, customer-owned and operated servers and datacenters, and private and public cloud assets.

After a decade and more of application integration and process streamlining, no line-of-business application is and should ever again be an island.

If applications move to the cloud or if cloud-based SaaS solutions are to be integrated into enterprise solutions for individual customers, integration invariably requires capabilities like seamless access to services and secure, reliable message flow across network and trust boundaries. Also, as more and more applications are federated across trust boundaries and are built to work for multiple tenants, classic network federation technologies such as VPNs are often no longer adequate since they require a significant degree of mutual trust between parties as they permit arbitrary network traffic flow that needs to be managed.

We just released a new Community Technology Preview that shows that we’re hard at work and committed to expanding Service Bus into a universal connectivity, messaging, and integration fabric for cloud-hosted and cloud-connected applications – and we invite you to take a look at our Windows Azure AppFabric SDK V2.0 CTP – May Update and accompanying samples.

Service Bus is already unique amongst platform-as-a-service offerings in providing a rich services relay capability that allows for global endpoint federation across network and trust boundaries.

As of today, we’re adding a brand-new set of cloud-based, message-oriented-middleware technologies to Service Bus that provide reliable message queuing and durable publish/subscribe messaging both over a simple and broadly interoperable REST-style HTTPS protocol with long-polling support and a throughput-optimized, connection-oriented, duplex TCP protocol.

The new messaging features, built by the same team that owns the MSMQ technology, but on top of a completely new technology foundation, are (of course) integrated with Service Bus’s naming and discovery capabilities and the familiar management protocol surface and allow federated access control via the latest release of the Windows Azure AppFabric Access Control service.

Queues

Service Bus Queues are based on a new messaging infrastructure backed by a replicated, durable store.

Each queue can hold up to 100MB of message content in this CTP, which is a quota we expect to expand by at least an order of magnitude as the service goes into production. Messages can have user-defined time-to-live periods with no enforced maximum lifetime.

The size of any individual message is limited to 256KB, but the session feature allows creating unlimited-size sequences of related messages. Sessions are pinned to particular consumers, which enables chunking of payloads of arbitrary sizes. The session state facility furthermore allows transactional recording of the progress a process makes as it consumes messages from a session, and we also support session-based correlation, meaning that you can build multiplexed request/reply paths in a straightforward fashion.
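The chunking pattern described here can be sketched in a few lines of Python. This is an in-memory illustration only, not the Service Bus SDK: the dictionary fields (`session_id`, `sequence`, `total`, `body`) are hypothetical names, and the sketch assumes the 256KB limit applies to the body alone, ignoring any header overhead.

```python
import uuid
from typing import Dict, List

MAX_MESSAGE_BYTES = 256 * 1024  # per-message limit quoted in the post


def chunk_payload(payload: bytes, chunk_size: int = MAX_MESSAGE_BYTES) -> List[Dict]:
    """Split a payload into messages that all carry the same session id."""
    session_id = str(uuid.uuid4())
    # An empty payload still yields one (empty) message so the session exists.
    bodies = [payload[i:i + chunk_size] for i in range(0, len(payload), chunk_size)] or [b""]
    return [
        {"session_id": session_id, "sequence": index, "total": len(bodies), "body": body}
        for index, body in enumerate(bodies)
    ]


def reassemble(messages: List[Dict]) -> bytes:
    """Rebuild the payload on the consumer that owns the session."""
    if not messages:
        raise ValueError("no messages to reassemble")
    if len({message["session_id"] for message in messages}) != 1:
        raise ValueError("messages belong to different sessions")
    ordered = sorted(messages, key=lambda message: message["sequence"])
    expected = list(range(ordered[0]["total"]))
    if [message["sequence"] for message in ordered] != expected:
        raise ValueError("session is missing chunks")
    return b"".join(message["body"] for message in ordered)
```

Because a session is pinned to one consumer, that consumer sees every chunk and can call something like `reassemble` once the last one has arrived.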

Queues support reliable delivery patterns such as Peek/Lock both on the HTTP API and the .NET API that help ensure processing integrity across trust boundaries where common mechanisms like distributed 2-phase transactions are challenging. Along with that, we have built-in detection of inbound message duplicates, allowing clients to re-send messages without adverse consequences if they’re ever in doubt whether a message has been logged in the queue due to intermittent network issues or an application crash.
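The following Python sketch models the Peek/Lock and duplicate-detection behavior in memory, to show why the two together make re-sending and re-processing safe. The class and method names are hypothetical and do not mirror the HTTP or .NET API; a real service would also expire locks after a timeout and remember message ids only for a bounded window, both of which are omitted here.

```python
import itertools
from collections import deque
from typing import Deque, Dict, Optional, Set, Tuple


class PeekLockQueue:
    """In-memory model of Peek/Lock receive with inbound duplicate detection."""

    def __init__(self) -> None:
        self._ready: Deque[Tuple[str, str]] = deque()
        self._locked: Dict[int, Tuple[str, str]] = {}
        self._seen_ids: Set[str] = set()
        self._tokens = itertools.count(1)

    def send(self, message_id: str, body: str) -> bool:
        """Enqueue a message; a repeated message id is silently dropped."""
        if message_id in self._seen_ids:
            return False
        self._seen_ids.add(message_id)
        self._ready.append((message_id, body))
        return True

    def peek_lock(self) -> Optional[Tuple[int, str]]:
        """Hide the next message from other consumers and return (lock token, body)."""
        if not self._ready:
            return None
        message = self._ready.popleft()
        token = next(self._tokens)
        self._locked[token] = message
        return token, message[1]

    def complete(self, token: int) -> None:
        """Processing succeeded: remove the locked message for good."""
        del self._locked[token]

    def abandon(self, token: int) -> None:
        """Processing failed: put the message back at the head of the queue."""
        self._ready.appendleft(self._locked.pop(token))
```

A consumer calls `peek_lock`, does its work, and then calls `complete`; if it crashes or fails first, the message is not lost because it was never removed, only locked.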

In addition to a dead-letter facility for messages that cannot be processed or expire, Queues also allow deferring messages for later processing, for instance when messages are received out of the scheduled processing order and need to be safely put on the side while the process waits for a particular message to permit further progress.

Queues also support scheduled delivery – which means that you can hand a message to the queue infrastructure, but the message will only become available at a predetermined point in time, which is a very elegant way to build simple timers.
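Scheduled delivery can be pictured as a queue ordered by availability time. The Python sketch below is an in-memory model under that assumption; `ScheduledQueue`, `send`, and `receive` are hypothetical names, and the caller passes the current time explicitly so the behavior is easy to follow and test.

```python
import heapq
import itertools
from typing import List, Optional, Tuple


class ScheduledQueue:
    """In-memory model of a queue whose messages become visible at a set time."""

    def __init__(self) -> None:
        self._heap: List[Tuple[float, int, str]] = []
        # Tie-breaker so messages with the same time keep their send order.
        self._order = itertools.count()

    def send(self, body: str, available_at: float) -> None:
        """Hand a message to the queue; it stays hidden until available_at."""
        heapq.heappush(self._heap, (available_at, next(self._order), body))

    def receive(self, now: float) -> Optional[str]:
        """Return the earliest message that is already available, if any."""
        if self._heap and self._heap[0][0] <= now:
            return heapq.heappop(self._heap)[2]
        return None
```

Used as a timer, a process sends itself a message scheduled for the moment it wants to wake up and then simply waits on `receive`.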

Topics

Service Bus Topics provide a set of new publish-and-subscribe capabilities and are based on the same backend infrastructure as Service Bus Queues – and have all the features I just outlined for Queues.

A Topic consists of a sequential message store just like a Queue, but allows for many (up to 2000 for the CTP) concurrent and durable Subscriptions that can independently yield copies of the published messages to consumers.

Each Subscription can define a set of rules with simple expressions that specify which messages from the published sequence are selected into the Subscription; a Subscription can select all messages or only messages whose user- or system-defined properties have certain values or lie within certain value ranges. Rules can also include Actions, which allow modifying message properties as messages get selected; this allows, for instance, selecting messages by certain criteria and affinitizing those messages with sessions or stamping messages with partitioning keys, amongst many other possible patterns.

The filtered message sequence represented by each Subscription functions like a virtual Queue, with all the features of Queues mentioned earlier. Thus, a Subscription may have a single consumer that gets all messages or a set of competing consumers that fetch messages on a first-come-first-served basis.

To name just a few examples, Topics are ideal for decoupled message fan-out to many consumers requiring the same information, can help distribute work across partitioned pools of workers, and are a great foundation for event-driven architecture implementations.

Topics can always be used just like Queues by setting up a single, unfiltered subscription and having multiple competing consumers pull messages from the subscription. The great advantage of Topics over Queues is that additional subscriptions can be added at any time to allow for additional taps on the message sequence for any purpose; audit taps that log pre-processing input messages into archives are a great example here.
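The relationship between Topics, Subscriptions, rules, and Actions can be modeled in memory as follows. This Python sketch is not the Service Bus API: rules are plain Python predicates rather than the service's rule expressions, and the `Topic` and `Subscription` classes and their methods are hypothetical names chosen for illustration.

```python
from collections import deque
from typing import Callable, Deque, Dict, Optional

Message = Dict[str, object]


class Subscription:
    """A virtual queue holding copies of the published messages that match its rule."""

    def __init__(
        self,
        rule: Optional[Callable[[Message], bool]] = None,
        action: Optional[Callable[[Message], None]] = None,
    ) -> None:
        self._rule = rule or (lambda message: True)  # no rule: select everything
        self._action = action
        self._messages: Deque[Message] = deque()

    def offer(self, message: Message) -> None:
        if self._rule(message):
            copy = dict(message)  # each subscription gets its own copy
            if self._action is not None:
                self._action(copy)  # e.g. stamp a partition key or priority
            self._messages.append(copy)

    def receive(self) -> Optional[Message]:
        """Competing consumers call this; each message goes to one of them."""
        return self._messages.popleft() if self._messages else None


class Topic:
    """Fans every published message out to all subscriptions."""

    def __init__(self) -> None:
        self._subscriptions: Dict[str, Subscription] = {}

    def subscribe(self, name: str, rule=None, action=None) -> Subscription:
        subscription = Subscription(rule, action)
        self._subscriptions[name] = subscription
        return subscription

    def publish(self, message: Message) -> None:
        for subscription in self._subscriptions.values():
            subscription.offer(message)
```

An audit tap is then just `topic.subscribe("audit")` with no rule, added next to the filtered subscriptions without touching the publisher.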

Access Control Integration

This new CTP federates with the appfabriclabs.com version of the Access Control service, which is compatible with the Access Control “V2” service that has been commercially available since April. The current commercially available version of Service Bus federates with Access Control “V1”.

The Service Bus API to interact with Access Control for acquiring access tokens has not changed, but we are considering changes to better leverage the new federation capabilities of Access Control “V2”.

Customers who are setting up access control rules for Service Bus programmatically will find that there are significant differences between the management APIs of these two versions of the Access Control service. The current plan is to provide a staged migration for customers with custom access control rules on their Service Bus namespaces; migration will be an option for some period of time when we will operate the V1 and V2 versions of the Access Control Service side-by-side. We will publish concrete guidance for this migration over the next several months with initial details coming this week here on this blog.

What Changed and What’s Coming?

We believe that providing these capabilities in the cloud – paired with the features we already have available in Service Bus – will open up a whole new range of possibilities for cloud-hosted and cloud-enhanced applications. We have seen amazing business solutions built on Service Bus and based on customer feedback we’re convinced that the addition of a fully featured set of message-oriented middleware capabilities will enable even more powerful solutions to be built. Our intention is to make all capabilities contained in this preview commercially available in the second half of 2011.

The load balancing and traffic optimization features for the Relay capability of Service Bus that were added in the PDC’10 CTP of Service Bus have been postponed and are no longer available in this CTP. However, “postponed” does not mean “removed” and we are planning on getting these features back into a CTP release soon. We’ve traded these features for a capability that we expect will be even more important for many customers: full backwards compatibility between the current production release of Service Bus and the new version we’re presenting in this CTP, even though we have changed a very significant portion of the Service Bus backend. We are committed to providing full backwards compatibility for Service Bus when the capabilities of this CTP go into production, including backwards compatibility with the Microsoft.ServiceBus.dll that you already have deployed.

To help customers writing apps on platforms other than .NET we will also release Java and PHP samples for the new messaging capabilities in the next few weeks. These samples will be versions of the chat client implemented in the Silverlight and Windows Phone chat samples included in the SDK for this CTP release.

Lastly, and most importantly, the purpose of a Community Technology Preview is to collect feedback from the community. If you have suggestions, critique, praise, or questions, please let us know at http://social.msdn.microsoft.com/Forums/en-US/appfabricctp/

You can also Twitter me personally at @clemensv and I’ll let the team know what you have to say.

Ron Jacobs posted a Goodbye .NET Endpoint, Hello AppFabric Team Blog swansong to the .NET Endpoint blog on 5/16/2011:

Over the last year you’ve heard us talking about both Windows Azure AppFabric and Windows Server AppFabric. Today we are adding our final post to the .NET endpoint team blog and announcing the new AppFabric Team blog. This blog will be the home of content about all things AppFabric from Service Bus to Caching to WCF and WF.

If you’ve been following us here on the .NET Endpoint please be sure to follow the AppFabric Team Blog.

endpoint.tv becomes AppFabric.tv

As part of this change, endpoint.tv will now become AppFabric.tv. We will continue to bring you the best content about WF and WCF as well as the rest of the AppFabric world. Check it out at http://appfabric.tv

The Windows Identity and Access team posted Announcing the WIF Extension for SAML 2.0 Protocol Community Technology Preview! on 5/16/2011:

It is our pleasure to announce the availability of the first CTP release of the WIF (Windows Identity Foundation) Extension for the SAML 2.0 Protocol ! We heard your feedback about the necessity to have support for the SAML 2.0 protocol in WIF. Today, we announce an extension to WIF that delivers on that feedback.

This WIF extension allows .NET developers to easily create claims-based SP-Lite compliant Service Provider applications that use SAML 2.0 conformant identity providers such as AD FS 2.0.

This CTP release includes a set of samples that illustrate how to use the extension. You can download the package that includes the WIF Extension for SAML 2.0 Protocol and samples from here.

Key features of this extension include:

- Service Provider initiated and Identity Provider initiated Web Single Sign-on (SSO) and Single Logout (SLO)

- Support for the Redirect, POST, and Artifact bindings

- All of the necessary components to create a SP-lite compliant service provider application

We’ll be looking for your questions, comments, and other feedback on the claims based identity forum here. Watch this blog for future posts about the roadmap of this WIF extension.

WIF has a new Microsoft.com home page here.

Vittorio Bertocci (@vibronet) chimes in with Attention ASP.NET Developers: SAML-P Comes to WIF on 5/16/2011:

If I had a dollar for every time a customer or partner asked me if they could use WIF for consuming the SAML2.0 protocol… ok, I would not exactly buy a villa in Portofino, but let’s just say that this is one of the most requested features since WIF came out.

Well, dear .NET developers, rejoice: you no longer need to envy your friend the ADFS2 administrator. From now on you are gifted the ability to use ASP.NET for writing SAML-P SP-Lite compliant relying parties, which in fact I should probably call service providers just to add some local color.

The WIF team just released, and here I quote verbatim, the CTP of the WIF Extensions for SAML 2.0 Protocol.

At its core, what makes those extensions tick is the Saml2AuthenticationModule, which looks very similar (i.e. raises ~the same events, etc.) to the WSFederationAuthenticationModule and is in fact inserted in the pipeline more or less in the same way. The module lives in the assembly Microsoft.IdentityModel.Protocols.dll, together with the (lots of) classes it needs to implement the details of the SAML protocol.

The programming model may be similar, as one would expect, but of course the extensions implement features that are paradigmatically SAMLP. Examples? POST, Redirect and Artifact bindings; SP initiated and (can you believe that?) IP initiated SSO and SLO (single log out).
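For readers unfamiliar with the bindings mentioned above, the following Python sketch shows the wire encoding that the SAML 2.0 HTTP Redirect binding prescribes for a request: raw DEFLATE, then Base64, then URL encoding into a `SAMLRequest` query parameter. It does not use the WIF extension or the Saml2AuthenticationModule; the function names, the identity provider URL and the placeholder XML are assumptions made for illustration, and request signing is left out.

```python
import base64
import zlib
from urllib.parse import parse_qs, urlencode, urlparse


def encode_redirect_request(sso_url: str, request_xml: str,
                            relay_state: str = "") -> str:
    """Build a Redirect-binding URL carrying the given request XML."""
    # Negative wbits selects raw DEFLATE (no zlib header or checksum).
    compressor = zlib.compressobj(wbits=-15)
    deflated = compressor.compress(request_xml.encode("utf-8"))
    deflated += compressor.flush()
    params = {"SAMLRequest": base64.b64encode(deflated).decode("ascii")}
    if relay_state:
        params["RelayState"] = relay_state
    # Assumes sso_url has no query string of its own.
    return f"{sso_url}?{urlencode(params)}"


def decode_redirect_request(url: str) -> str:
    """Reverse the encoding, as a receiving party would."""
    query = parse_qs(urlparse(url).query)
    deflated = base64.b64decode(query["SAMLRequest"][0])
    return zlib.decompress(deflated, -15).decode("utf-8")


# Placeholder payload; a real AuthnRequest carries namespaces, IDs, issuer, etc.
placeholder_xml = "<AuthnRequest ID='_example'/>"
url = encode_redirect_request("https://idp.example.com/sso", placeholder_xml,
                              relay_state="/orders")
```

The POST binding carries the same XML Base64-encoded in a form field without the DEFLATE step, and the Artifact binding passes only a short reference that is resolved over a back channel.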

The package contains various other goodies: a good set of cassini-based samples, documentation that will get you started and that will help you to use ADFS2 as IP instead of the sample IP provided in the package. But my favourite is definitely the SamlConfigTool: it is a slightly more raw counterpart of fedutil/add STS reference, which can consume metadata from one IP and generate the corresponding SP config settings. And just like fedutil, it can generate the SP metadata so that the IP can easily consume it for automating the SP provisioning as well.

The WIF Extensions for SAML 2.0 Protocol unlock some new, interesting scenarios; and of course, this being a CTP, the WIF team really wants your feedback. If you play with the extensions, please take a moment to chime in and let us know what you think!

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Michael S. Collier (@michaelcollier) provided details of the Update to Windows Azure Toolkit for Windows Phone 7 in a 5/16/2011 post:

Today Microsoft is releasing the third update to the Windows Azure Toolkit for Windows Phone 7. This is the third update in less than 2 months! It is great to see the teams moving so quickly to get new updates out. The second update (v1.1) included a little bit of new functionality, namely support for the Microsoft Push Notification Service, bug fixes, and some usability enhancements. This third update (v1.2) is a much more substantial update to the toolkit. Wade Wegner recently teased a few of these updates. Let’s take a quick look at some of them.

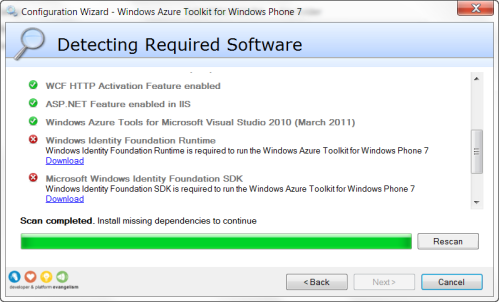

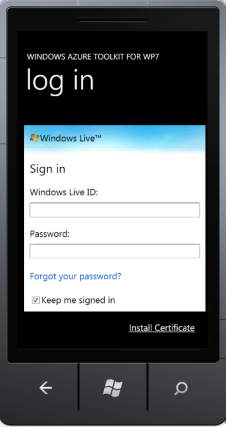

Support for Access Control Services

From the start you’ll notice at least two new software requirements: Windows Identity Foundation Runtime and Microsoft Windows Identity Foundation SDK. If you don’t have the WIF Runtime or SDK, the dependency checker will detect the missing software and provide you direct links to pages where you can download the appropriate version for your environment.

The Windows Identity Foundation (WIF) is used in support of what just may be the most exciting new feature of the toolkit – built-in support for Windows Azure Access Control Services. To get started, one of the first things you’ll want to do is create an ACS (v2) namespace. You can do that by opening the developer portal at http://windows.azure.com and going to the “Service Bus, Access Control & Caching” section.

You’ll need to grab the ACS namespace and management service key. To get that, you’ll want to click the desired namespace in the main window, and then the green “Access Control Service” button in the top ribbon. Additional details on this process can be found in the toolkit’s documentation. Enter the namespace and key into the wizard when prompted. The toolkit will handle the rest for you. Pretty nice, huh?

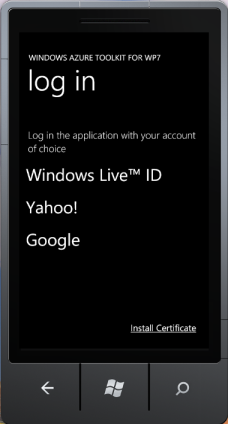

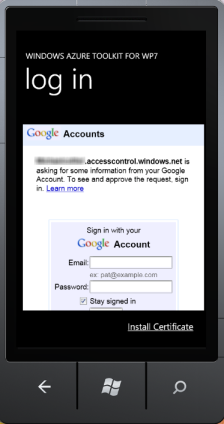

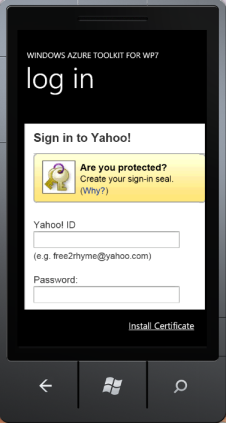

Now you can have your Windows Phone 7 application integrate with Windows Live, Yahoo!, and Google. For mobile users that are likely to have social identities to begin with, this gives them an easy on-ramp to your application! They can easily select the identity provider of choice.

Yahoo!

Windows Live ID

Once you authenticate, you’ll be prompted to complete the process by storing some information from the identity provider in a new Users table created on your behalf by the toolkit wizard.

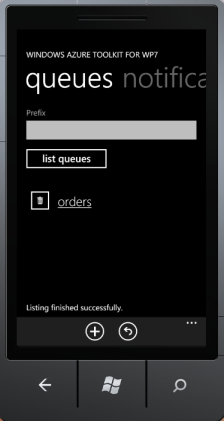

Support for Windows Azure Queues

Another great feature of the latest revision is support for Windows Azure Queues. This essentially completes the trifecta – support for Windows Azure tables, blobs, and now queues. Just like you can with tables and blobs, the toolkit now allows you to list existing queues in your storage account.

You can easily list queues in your account.

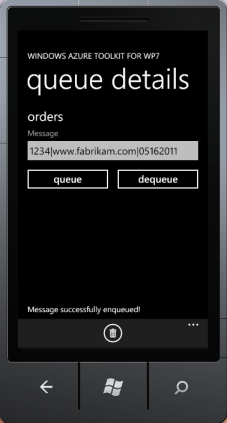

The toolkit also contains an easy sample to show how to add a new message to the queue.

If you add, then you should be able to delete too. By selecting the “dequeue” button, that’s exactly what will happen. The topmost message in the queue is removed from the queue.
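As background for the enqueue/dequeue sample, the sketch below models in plain Python how Windows Azure storage queues split "dequeue" into two steps: getting a message hides it for a visibility timeout (30 seconds by default) and returns a pop receipt, and a separate delete call with that receipt actually removes it. The class and method names are hypothetical and this is not the toolkit's or the storage client's API; time is passed in explicitly so the behavior is easy to follow.

```python
import itertools
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class QueuedMessage:
    body: str
    visible_at: float = 0.0          # time from which the message can be read
    pop_receipt: Optional[str] = None


class SketchQueue:
    """In-memory stand-in for a storage queue (illustrative only)."""

    def __init__(self) -> None:
        self._messages: List[QueuedMessage] = []
        self._receipts = itertools.count(1)

    def enqueue(self, body: str) -> None:
        self._messages.append(QueuedMessage(body))

    def get(self, now: float,
            visibility_timeout: float = 30.0) -> Optional[QueuedMessage]:
        """Return the first visible message and hide it for the timeout."""
        for message in self._messages:
            if message.visible_at <= now:
                message.visible_at = now + visibility_timeout
                message.pop_receipt = f"receipt-{next(self._receipts)}"
                return message
        return None

    def delete(self, pop_receipt: str) -> bool:
        """Remove the message only if the receipt is still the current one."""
        for index, message in enumerate(self._messages):
            if message.pop_receipt == pop_receipt:
                del self._messages[index]
                return True
        return False


queue = SketchQueue()
queue.enqueue("hello from the phone")
message = queue.get(now=0.0)
if message is not None:
    queue.delete(message.pop_receipt)  # completes the "dequeue"
```

If a consumer gets a message but never deletes it, the message becomes visible again once the timeout passes, which is why a "dequeue" button typically performs both steps.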

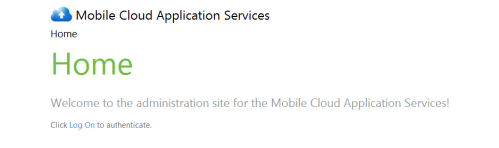

Improved User Interface

Finally, a really nice update to the toolkit is a much more stylish user interface. Previously the toolkit’s web admin interface was pretty, well, generic – straight out of the default ASP.NET MVC project styling. This latest update has a much improved UI that makes using the toolkit a lot more enjoyable.

Troubleshooting

This version of the Windows Azure Toolkit for Windows Phone 7 comes with much more help relating to some problems you might encounter. One that I’m very happy to see mentioned is help for those with Resharper installed. If you have Resharper installed and try to use the Windows Azure Toolkit for Windows Phone 7, you’ll likely run into a less than optimal experience – either errors when creating a new project and/or problems simply starting a new project. While the problem still exists, the 1.2 version of the troubleshooting documentation of the toolkit points out the problem and provides instructions on how to disable Resharper while you use the toolkit. I’m sure the team is hard at work on a permanent solution.

The v1.2 update looks to be a pretty impressive update to the Windows Azure Toolkit for Windows Phone 7. Be sure to check it out – I think you’ll enjoy it too!

Wade Wegner (@wadewegner) reported NOW AVAILABLE: Windows Azure Toolkit for Windows Phone 7 v1.2 on 5/16/2011:

Here it is – the Windows Azure Toolkit for Windows Phone 7 v1.2!

As I mentioned last week when I spoke about Updates Coming Soon to the Windows Azure Toolkit for Windows Phone 7, we have some really important and valuable additions to the toolkit.

- Support and tooling for the Access Control Service 2.0

- Support for Windows Azure Storage Queues

- Updated UI/UX for the management web application

These are significant updates – particularly the support for ACS. Given the number of updates since version 1.0 – don’t forget that we added Microsoft Push Notification support, and more, in version 1.1 – I decided to redo the Getting Started with the Windows Azure Toolkit for Windows Phone 7 video.

I highly recommend you take a look at the following resources to learn more:

Getting Started with the Windows Azure Toolkit for Windows Phone 7

by Wade Wegner

Getting Started with ACS and the Windows Azure Toolkit for Windows Phone 7

- Vittorio Bertocci: Bring Your Active Directory in Your Pockets with ACS, OAuth 2.0 and the New Windows Azure Toolkit for Windows Phone 7

- Vittorio Bertocci: Windows Azure Toolkit for Windows Phone 7 1.2 will Integrate with ACS

- Wade Wegner: Using Windows Azure for Windows Phone 7 Push Notification Support

We also have a fantastic set of articles on CodePlex that you should take a look at:

- Setup and Configuration

- Toolkit Content

- Getting Started

- Creating a New Windows Phone 7 Cloud Application

- Running and Going Through the Windows Phone 7 Cloud Application

- Starting the Application

- Authenticating the User (ASP.NET Membership Authentication)

- Authenticating the User (ACS Authentication)

- Sending Microsoft Push Notifications

- Sending Apple Push Notifications

- Working with Tables, Blobs, and Queues

- User Authentication

- Troubleshooting

- Change Log

Version 1.2 Updates

In version 1.1 we introduced support for Microsoft Push Notification Services. In version 1.2 we default to adding this service, but we give you the option of excluding it if it’s not required. Additionally, we now let you choose whether you want to support Apple Push Notification Services:

Then, you can easily use the Windows Azure Toolkit for iOS to work with this service running in Windows Azure.

As mentioned extensively by Vittorio, you can also choose to use ACS instead of the simple ASP.NET membership service.

Take a look at this article if you’re trying to determine which type of user authentication you should use. If you go with ACS, this produces a very nice login experience where you can choose one of your existing identity providers.

As with Blobs and Tables, we now provide full support for Windows Azure Queues. This allows you to enqueue and dequeue messages from your application.

Finally, we were not particularly pleased with the out-of-the-box ASP.NET theme, so we updated it. Inspired by the Metro Design guidelines for Windows Phone 7, we came up with something nice and fresh.

Breaking Changes

We’ve come far along enough now that it’s more important for us to track changes, in particular when they are breaking changes. If you used version 1.0 or 1.1 of this toolkit, I highly recommend you take a look at the Change Log. If you’ve started to use the toolkit for building applications, there are potentially a couple changes you should review. The two I’ll call out here are:

- In the AuthenticationService we changed the LoginModel class to Login. This means that you may have to update how you authenticate to the membership service.

- We changed the CreateUserModel to RegistrationUser, and changed the name of its UserName property to Name. This class is used by the AuthenticationService to register new users.

An effect of these changes could be an error when interacting with user data stored in table storage. For local development, the best thing to do would be to reset local storage so that you don’t have the old schema stored in a table.
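Resetting local storage is the approach recommended above. Where existing rows need to be kept, the rename could in principle be applied to the stored data instead. The Python sketch below shows the idea on plain dictionaries; it assumes, purely for illustration, that a stored row carries a `UserName` property that must become `Name`. The real table layout is not described in the post, so treat the property names and the function as hypothetical.

```python
from typing import Dict


def migrate_registration_row(row: Dict[str, object]) -> Dict[str, object]:
    """Return a copy of the row with the old property renamed."""
    migrated = dict(row)  # leave the caller's row untouched
    if "UserName" in migrated and "Name" not in migrated:
        migrated["Name"] = migrated.pop("UserName")
    return migrated


old_row = {"PartitionKey": "users", "RowKey": "42", "UserName": "wade"}
new_row = migrate_registration_row(old_row)
```

The function is idempotent: running it on a row that already uses `Name` returns the row unchanged.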

Next Steps

We’ll have at least a couple more releases of this toolkit over the next month or two, and I’ll share the details with you as soon as they are locked. For now, please be sure to let us know if you think we should target other scenarios. Submit your feedback on CodePlex and join the discussion!

I hope this helps!

Vittorio Bertocci (@vibronet) adds his two cents with Bring Your Active Directory in Your Pockets with ACS, OAuth 2.0 and the New Windows Azure Toolkit for Windows Phone 7 quickstart of 5/16/2011:

As promised last week, today Wade released the 1.2 version of the Windows Azure Toolkit for Windows Phone 7. And again as promised, this version has full support for ACS!

Using the new feature is super straightforward: you can see that by yourself in the quickstart video in which I walk through the simplest ACS configuration.

As mentioned in the teaser post last week, we purposefully kept the VS template very simple. As a result, the initial setup ends up with one application which supports Windows Live ID, Yahoo! and Google, the identity providers which are pre-configured within ACS.

However, once the project has been created nothing prevents you from working directly on your ACS namespace for adding new identity providers, such as Facebook or even your own Active Directory; those identity providers will automagically show up in the list of IPs in the login screen without the need to change a single line of code. Want proof? Read on!

Adding a New WS-Federation Provider (like ADFS2)

Here I’ll assume that you went through the steps I show in the quickstart video and you ended up with the basic ACS application as created by the toolkit.

If you want to enable your users to sign in to the application using their Active Directory accounts, you can go directly to the ACS namespace you are using and add your AD (assuming you have ADFS2 deployed) as an identity provider.

Note: Right now the toolkit bits are still handling access at the account level, which means that your users will need to go through the same sign-up step you have for users coming from social providers; however this does not take anything away from the joy of being able to reuse your domain credentials on a device, instead of having to memorize yet another password. In the future the integration will be even more seamless: think claim mapping rules, along the lines of what we’ve done for integrating ADFS2 with Umbraco.

Well, let’s do it then: it will only take a minute!

As usual I don’t have an ADFS2 instance on my laptop, hence I’ll simulate it using SelfSTS. This time I picked the SelfSTS1 folder from the assets of the ACS and Federation lab, copied it under c:\temp and modified it a bit to emit a different set of claims:

I also changed its port from the config, generated a new certificate, hit Start and refreshed the federationmetadata.xml file (hint: use the URL from the metadata field to open the file in Notepad, then save it over the old metadata file). Those steps may not be strictly necessary, but I always do that to avoid collisions.

Now that you have your ADFS2 simulation up & running, go to your namespace in the ACS portal at https://YOURNAMESPACE.accesscontrol.windows.net/v2/mgmt/web. From there go to Identity providers, hit Add, keep the default ws-federation and hit Next.

From here you can add your SelfSTS instance. Remember, it simulates your AD! If you have an ADFS2 instance, use that instead. Enter whatever name you want in the display name and login text link fields, upload the metadata file from your SelfSTS, scroll to the bottom of the page and hit Save.

Now click Rule groups from the left-hand menu, click on Default Rule Group for WazMobileToolkit, hit the Generate link, accept the defaults and click the Generate button. You can also hit Save for good measure, if you are superstitious.

And you’re done! Go back to Visual Studio and start the portal/service and the phone client as shown in the quickstart. Here’s what you’ll see on the phone app:

That’s right! Just like that, now your AD appears as one of the options. Neat. If you pick that option, ACS will contact SelfSTS for authenticating you. If you were using a real ADFS2 instance, at this point you would be prompted for your credentials; but SelfSTS is a test utility which automatically authenticates you, hence you’ll go straight to the next screen:

…and from now on everything is exactly as for the social providers: the user gets an entry in the system, which will be used for handling authorization. You can see it in the Users table in the management portal.

Let me reiterate: in addition to being able to use credentials from Windows Live ID, Yahoo and Google, the user can now reuse his domain credentials to sign in from one Windows Phone client to one application whose backend is in the cloud (Windows Azure). And enabling all that took just a few clicks on the ACS management portal, no code changes required.

Now, do you want to hear a funny story? We did not plan for this. I am not kidding. I am not saying that we are surprised, I totally expected this, what I mean is that this scenario didn’t take any specific effort to implement, it came out “for free” while implementing support for ACS and social providers.

When you admit users from social providers in your application, you don’t receive very detailed (or verifiable, excluding email) information about the users; hence the usual practice is to create an account for the user and mainly outsource credential management to the external identity providers.

In the walkthrough above I treated my simulated ADFS2 exactly in the same way, and everything worked thanks to the fact that we are relying on standards and ACS isolates the phone application and the backend from the differences between identity providers. It’s the usual federation provider pattern, with the twist demonstrated in the ACS + WP7 lab; in a future blog post I’ll go a bit deeper in the architecture of this specific solution.

What could we accomplish if we’d explicitly plan for an identity provider like ADFS2? Well, for one: provision-less access, one of the holy grails of identity and access control. ADFS2 sources data from AD, hence can provide valuable information about its users (roles, job functions, spending limits, etc) which carries the reputation of the business running that AD instance. This means that we could greatly simplify the authorization flow, skipping the user registration step and authorizing directly according to the attributes in input. As mentioned above, we already have a good example of that in the ACS Extensions for Umbraco; that’s a feature that is very likely to make its way into the toolkit, too.
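The difference between the current account-based flow and the provision-less flow described above can be sketched in a few lines of Python. This is not toolkit code: the function, the in-memory `users_table` and the example identifiers are hypothetical, and the only real-world value is the role claim type URI used by WIF.

```python
from typing import Dict, List, Optional

# Claim type WIF uses for roles (ClaimTypes.Role).
ROLE_CLAIM = "http://schemas.microsoft.com/ws/2008/06/identity/claims/role"


def resolve_roles(claims: Dict[str, List[str]],
                  name_identifier: str,
                  users_table: Dict[str, List[str]]) -> Optional[List[str]]:
    """Decide which roles apply to an incoming, already validated token.

    Returns None when the user is unknown and must register first.
    """
    roles_from_token = claims.get(ROLE_CLAIM)
    if roles_from_token:
        # Provision-less path: trust the attributes the business IP asserts.
        return roles_from_token
    # Account-based path: social providers assert little, so look the user up.
    return users_table.get(name_identifier)


users_table = {"google:12345": ["Customer"]}

# A token from an ADFS2-like provider carrying role claims: no registration.
adfs_roles = resolve_roles({ROLE_CLAIM: ["Manager"]}, "adfs:jdoe", users_table)

# A token from a social provider: falls back to the registered account.
social_roles = resolve_roles({}, "google:12345", users_table)
```

The design point is that the decision depends only on the claims in the token, so adding a richer identity provider changes what arrives in `claims`, not the application code.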

There you have it. The bits are in your hands now, and we can’t wait to find out what you’ll accomplish with them! If you have feedback, please do not hesitate to visit the discussion section in http://watoolkitwp7.codeplex.com/. Happy coding!

The Windows Azure Team put its spin on JUST ANNOUNCED: Windows Azure Toolkit for Windows Phone 7 v1.2 in a 5/16/2011 post:

During today’s keynote at TechEd North America, Microsoft’s Drew Robbins demonstrated how to build an application using the new Windows Azure Toolkit for Windows Phone 7 v.1.2. Now available for download here, this version includes some important new features, including:

- Support and tooling for the Access Control Service 2.0 (i.e. use identity federation like Live ID, Facebook, Google, Yahoo! and ADFS)

- Support for Apple Push Notification Services (with the Windows Azure Toolkit for iOS)

- Support for Windows Azure storage queues (simple enqueue and dequeue operations)

- Updated UI/UX for the management web application

- Code refactoring, simplification, and bug fixes

Check out these new videos on Channel 9 to help you get started with the toolkit:

Getting Started with the Windows Azure Toolkit for Windows Phone 7

Getting Started with ACS and the Windows Azure Toolkit for Windows Phone 7

You can also find additional information and guidance in the following related blog posts:

- NOW AVAILABLE: Windows Azure Toolkit for Windows Phone 7 v1.2

- Bring Your Active Directory in Your Pockets with ACS, OAuth 2.0 and the New Windows Azure Toolkit for Windows Phone 7

- Windows Azure Toolkit for Windows Phone 7 1.2 will Integrate with ACS

- Using Windows Azure for Windows Phone 7 Push Notification Support

Click here to read more about today’s announcements from TechEd North America. Click here to learn more about Windows Azure Toolkits for Devices.

The Windows Azure Team posted a brief Just Announced at Tech-Ed: Travelocity Launches Analytics System on Windows Azure case study on 5/16/2011:

Microsoft Corporate Vice President Robert Wahbe delivered the opening keynote this morning at Microsoft Tech-Ed North America 2011 in Atlanta, Georgia. During his talk, Wahbe outlined how the cloud is changing IT and demonstrated how Microsoft and Windows Azure are helping customers move their businesses to the cloud. One of the examples he used was Travelocity; their story is worth delving into because it illustrates the benefits a move to the cloud can create for an organization.

Founded in 1996, Travelocity is an online travel agency that connects millions of travelers with airlines, hotels, car-rental companies, and other services. In March 2010, business partners asked Travelocity to collect website metrics on customer shopping patterns. Travelocity decided to deploy the application in the cloud to avoid burdening its own data center.

Travelocity uses Windows Azure to provide compute power and storage for its business intelligence and analysis system. In doing so, it avoids burdening the capacity of its on-premises infrastructure. Thanks to cloud computing, Travelocity has fulfilled its partners’ requests for a system that collects metrics on customer interactions.

The company is also experiencing a shift in how it manages its development efforts. Because Microsoft manages the servers, configuration, and maintenance, Travelocity is able to build and deploy applications on a per-month subscription basis. It reduces costs while reaching its large customer base.

The company also benefits from the enormous scalability offered by Windows Azure, which ensures that customers from around the world can access Travelocity’s services reliably. Plus, because of a faster time-to-market and a flexible development environment, the company can experiment with new offerings and enhance the customer experience.

Click here to watch Wahbe’s opening keynote. Click here to read more about today’s announcements. You can read the Travelocity case study here.

<Return to section navigation list>

Visual Studio LightSwitch

Andy Kung continued his series with Course Manager Sample Part 5 – Detail Screens (Andy Kung) on 5/16/2011:

I’ve been writing a series of articles on the Course Manager Sample, if you missed them:

- Part 1: Introduction

- Part 2: Setting up data

- Part 3: User permissions & admin screens

- Part 4: Implementing the workflow

In Part 4, we identified the main workflow we want to implement. We created screens to add a student, search for a student, and register a course. In this post, we will continue and finish the rest of the workflow. Specifically, we will create detail screens for student and section records and a course catalog screen that allows the user to filter sections by category.

Screens

Student Detail

Remember the Search Students screen we built in Part 4? If you click on a student link in the grid, it will take you to a student detail screen.

This is pretty cool. But wait… we didn’t really build this screen! In reality, LightSwitch recognizes that this is a common UI pattern, and therefore it generates a default detail screen for you on the fly. Of course, we can always choose to build and customize a detail screen, as we’re about to do for Student and Section.

Adding a detail screen

Create a screen using “Details Screen” template on Student table. In this screen, we want to also include student’s enrollment data, so let’s check the “Student Enrollments” box.

Make sure “Use as Default Details Screen” is checked. It means that this detail screen will be used as the detail screen for all student records by default. In other words, if you click on a student link, it will take you to this detail screen instead of the auto-generated one. As a side note, if you forget to set it as the default details screen here, you can also set the property of the Student table (in table designer).

By default, the details screen template lays out the student info on top and the related enrollment data on the bottom. We can make similar layout tweaks to the student portion as we did for “Create New Student” screen in Part 4 (such as moving the student picture to its own column, etc).

Including data from related tables

I’d like to draw your attention to the Enrollments portion of the screen. Since Enrollment is a mapping table between Student and Section, the grid shows you a student (shown as a summary link) and a section (shown as a picker). Neither of the fields is very useful in this context. What we really want is to show more information about each section (such as title, meeting time, instructor, etc.) in the grid. Let’s delete both Enrollment and Section under Data Grid Row.

Use the “+ Add” button and select “Other Screen Data.”

It will open the “Add Screen Data” dialog. Type “Section.Title”. You can use Intellisense to navigate through the properties in this dialog. Click OK.

The Title field of the Section will now appear in the grid. Follow similar steps to add some other section fields. The “Add Screen Data” dialog is a good way to follow the table relationship and include data that is many levels deep.

Making a read-only grid

Now we have an editable grid showing the sections this student is enrolled in. However, we don’t expect users to directly edit the enrollments data in this screen. Let’s make sure we don’t use editable controls (i.e. TextBox) in grid columns. A quick way to do this is to select the “Data Grid Row” node and check “Use Read-only Controls” in Properties. It will automatically select read-only controls for the grid columns (i.e. TextBox to Label).

We also don’t expect users to add and delete the enrollments data directly in the data grid. Let’s delete the commands under data grid’s “Command Bar” node. In addition, data grid also shows you an “add-new” row for inline add.

We can turn it off by selecting the “Data Grid” node and uncheck “Show Add-new Row” in Properties.

Launching another screen via code

In Part 4, we’ve enabled the Register Course screen to take a student ID as an optional screen parameter. The Student picker will be automatically set when we open the Register Course screen from a Student detail screen. Therefore, we need a launch point in the student detail screen. Let’s add a button on the enrollment grid.

Right click on the Command Bar node, select Add Button.

Name the method RegisterCourse. This is the method called when the button is clicked.

Double click on the added button to navigate to the screen code editor.

Write code to launch the Register Course screen, which takes a student ID and a section ID as optional parameters.

    Private Sub RegisterCourse_Execute()
        ' Write your code here.
        Application.ShowRegisterCourse(Student.Id, Nothing)
    End Sub

That’s it for the Student Detail screen. Press F5 and go to a student record to verify the behavior.

Section Detail

Now that we’ve gone through customizing the student detail screen, let’s follow the same steps for Section. Please refer to the sample project for more details.

- Create a screen using “Details Screen” template on Section table. Include section enrollments data

- Tweak the UI and make the enrollment grid read-only

- Add a button to the enrollment grid to launch Register Course screen

Course Catalog

In the Course Catalog screen, we’d like to display a list of course sections. We’d also like to filter the list by the course category. In Part 2, we created a custom query for exactly this purpose called SectionsByCategory. It takes a category ID as a parameter and returns a list of sections associated with the category. Let’s use it here!

Create a screen using “Search Data Screen” template. Choose SectionsByCategory as screen data.

In the screen designer, you will see that SectionsByCategory has a query parameter called CategoryId. It is also currently shown as a TextBox on the screen. The user can enter a category ID via a text box to filter the list. This is not the most intuitive UI. We’d like to show a category dropdown menu on the screen instead.

Select SectionCategoryId (you can see it is currently bound to the query parameter) and hit DELETE to remove this data item. After it is removed, the text box will also be removed from the visual tree.

Click “Add Data Item” button in the command bar. Use the “Add Data Item” dialog to add a local property of Category type on the screen.

Select CategoryId query parameter, set the binding via property.

Drag and drop the Category property to the screen content tree (above the Data Grid). Set the “Label Position” of Category to “Top” in Properties.

Follow the Course Manager sample for some layout tweaks and show some columns as links (as we did in Search Students). Now, if you click on a section link, it will open the Section Detail screen we customized!

Conclusion

In this post, we’ve completed the main workflow in Course Manager. We are almost done! All we need is a Home screen that provides some entry points to start the workflow.

Setting a screen as the home (or startup) screen is easy. Creating one that displays some static text and pictures requires some extra steps. In the next post, we will conclude the Course Manager series by finishing our app with a beautiful Home screen!

Robert Green posted My TrainingCourses LightSwitch Demo with source code on 5/16/2011:

In the past month I have given the LightSwitch Beyond the Basics talk at both VS Connections in Orlando and VSLive in Las Vegas. Today I am giving the Building Business Applications with LightSwitch talk at TechEd. All 3 of these use the same basic application, although different variants. I promised I would make it available, and here is the demo script.

The application starts with existing SQL Server data, so the first step is to create a TrainingCourses database in SQL Server or SQL Express. To populate the database run the TrainingCourses.sql file. That will create the tables and relationships and also add sample data to the Customers, Courses and Orders tables. Next, create a new VB or C# LightSwitch project named TrainingCourses.

For the SharePoint part of the demo, create a Training Courses team site. Create a Course Notes list with Title, Course Number and Notes, all strings. Then import the items in the CourseNotesList workbook.

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

Yung Chou explained Windows Azure Fault Domain and Update Domain Explained for IT Pros in a 5/16/2011 post:

Notable advantages of running applications in Windows Azure are the high availability and fault tolerance achieved through the so-called fault domain and update domain. These two terms represent important strategies adopted by Windows Azure for deploying and updating applications. In this post and in all my articles, when discussing Windows Azure applications, Windows Azure and Fabric Controller (FC) are used interchangeably to represent the cloud OS in Windows Azure Platform, unless otherwise stated. And in the context of cloud computing, an application and a service are considered the same since all user applications are generally delivered as services.

Fault Domain

The scope of a physical unit failure is a fault domain, which is in essence a single point of failure. The purpose of identifying and organizing fault domains is to prevent a single point of failure from taking down a service. In its simplest form, a computer by itself connected to a power outlet is a fault domain. Clearly, if the connection between a computer and its power outlet is cut, the computer is down, hence a single point of failure. Likewise, a rack of computers in a datacenter can be a fault domain, since a power outage of a rack will take out the collection of hardware in the rack, similar to what is shown in the picture here. Notice that how a fault domain is formed has much to do with how hardware is arranged, and a single computer or a rack of computers is not necessarily a fault domain automatically. Nonetheless, in Windows Azure a rack of computers is indeed identified as a fault domain, and the allocation of a fault domain is determined by Windows Azure at deployment time. A service owner cannot control the allocation of a fault domain but can programmatically find out which fault domain a service is running within.

Specifically, the Windows Azure Compute service SLA guarantees the level of connectivity uptime for a deployed service only if two or more instances of each role of the service are specified by the service owner in the service configuration, i.e. the cscfg file. Under this assumption, Windows Azure by default deploys the role instances of an application into "at least" 2 fault domains, which ensures fault tolerance and allows an application to remain available even if a server hosting one role instance of the application fails.
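The effect of spreading role instances over at least two fault domains can be illustrated with a small simulation. This is only a sketch under stated assumptions: the round-robin placement and the names `assign_fault_domains` and `survivors` are hypothetical and are not the Fabric Controller's actual allocation logic.

```python
from collections import defaultdict


def assign_fault_domains(instance_count, fault_domain_count=2):
    """Place role instances into fault domains round-robin (illustrative only)."""
    if instance_count < 1 or fault_domain_count < 1:
        raise ValueError("counts must be positive")
    placement = defaultdict(list)
    for i in range(instance_count):
        # Hypothetical instance names; the real platform assigns its own IDs.
        placement[i % fault_domain_count].append(f"instance_{i}")
    return dict(placement)


def survivors(placement, failed_domain):
    """Return the instances still running after one fault domain fails."""
    return [
        instance
        for domain, instances in placement.items()
        if domain != failed_domain
        for instance in instances
    ]


if __name__ == "__main__":
    two = assign_fault_domains(2)
    print(two, "->", survivors(two, failed_domain=0))
    one = assign_fault_domains(1)
    print(one, "->", survivors(one, failed_domain=0))
```

With two instances, one instance survives the loss of either fault domain. With a single instance, the loss of its fault domain leaves nothing running, which is why the SLA condition requires more than one instance per role.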

Upgrade Domain

On the other hand, an upgrade domain is a strategy to ensure an application stays up and running, i.e. highly available, while the application is being updated. Windows Azure distributes the role instances of an application evenly, when possible, into multiple upgrade domains, with each upgrade domain acting as a logical unit of the application’s deployment. An upgrade is then carried out one upgrade domain at a time. The steps are: stop the instances of the intended role running in the first upgrade domain, upgrade the application, bring the role instances back online, and then repeat these steps in the next upgrade domain. An application upgrade is complete when all upgrade domains have been processed. By stopping only the instances running within one upgrade domain, Windows Azure ensures that an upgrade takes place with the least possible impact on the running service. A service owner can optionally control how many upgrade domains are used with an attribute, upgradeDomainCount, in the service definition, i.e. the csdef file of an application. The attribute is documented in MSDN. It is not possible, however, to specify which role is allocated to which domain.
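The upgrade-domain walk described above can be sketched as a simulation. This is a minimal illustration, not the platform's implementation: the round-robin distribution and the names `distribute` and `rolling_upgrade` are assumptions made for the example.

```python
def distribute(instances, upgrade_domain_count):
    """Spread instance names evenly over upgrade domains (round-robin)."""
    domains = [[] for _ in range(upgrade_domain_count)]
    for index, name in enumerate(instances):
        domains[index % upgrade_domain_count].append(name)
    return domains


def rolling_upgrade(versions, upgrade_domain_count, new_version):
    """Upgrade one domain at a time; return the fewest instances ever online.

    `versions` maps instance name -> version string and is updated in place.
    """
    names = sorted(versions)
    lowest_online = len(names)
    for domain in distribute(names, upgrade_domain_count):
        # Only the instances in the current upgrade domain are stopped.
        online = len(names) - len(domain)
        lowest_online = min(lowest_online, online)
        for name in domain:
            # Upgrade the stopped instance, then bring it back online.
            versions[name] = new_version
    return lowest_online


if __name__ == "__main__":
    versions = {f"web_{i}": "v1" for i in range(4)}
    print(rolling_upgrade(versions, 2, "v2"), versions)
```

In this sketch, four instances in two upgrade domains never drop below two instances online, and raising the domain count to four keeps three online at every step, at the cost of more upgrade rounds.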

Observations

Within a fault domain, there is no concept of fault tolerance. Fault tolerance applies only when more than one fault domain is managed as a whole. In addition to fault domain and update domain, to ensure fault tolerance and high availability Windows Azure also has network redundancy built into routers, switches, and load-balancers. FC also sets check-points and stores the state data across fault domains to ensure reliability and recoverability.

Doug Rehnstrom described Windows Azure Interoperability in a 5/16/2011 post to the Learning Tree blog:

I was talking to a colleague last week and he asked, “If you deploy an application to Azure, can you move it to a different cloud provider later on?” To answer this question, let’s look at three things. First, what is Azure really? Second, does writing an Azure application lock you into Azure? And third, is there a need for standards in cloud-computing?

Azure is Just Windows Server in a Virtual Machine

Let’s say you write a Web application using ASP.NET. That application will run on any Windows server. Azure is really just a virtual machine running an instance of Windows Server. So, moving an application from an Azure virtual machine to an Amazon EC2 virtual machine is no different than moving an application from a Dell server to an HP server. In fact, it would be quicker, because you wouldn’t have to buy the hardware or install the software. That is not to say there are no issues, but it’s really not that big a deal.

You might want to check out this article for a Look Inside a Windows Azure Instance.

Does using Azure Lock you into Microsoft as your Cloud Vendor?

There are changes you’d likely make in your Web application to take optimal advantage of Azure’s architecture. For example, you would likely use Azure Storage for sessions, membership and online data. Check out the following article to find out why, Windows Azure Training Series – Understanding Azure Storage.

Surely, that would lock you into Azure. Well, not really. Azure Storage can be accessed from anywhere via http. So, while you might be using Azure Storage from an Azure application, you could just as easily use it from an application running on EC2, Google App Engine or from your local area network. So, if you had an application using Azure-specific features and wanted to move it, it would not mean a rewrite.

Is there a need for Standards in Cloud Computing?

Whenever people start talking about a need for standards I get worried. Standards mean committees and meetings and great long documents. I would argue we already have the standards we need. All of Windows Azure is made available using http and a REST-based API. That means any platform that can make an http request can use Windows Azure. The same can be said of Amazon Web Services and Google App Engine.
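To make the "any platform that can make an http request" point concrete, here is a minimal sketch that builds (but does not send) a plain HTTP GET for a blob in Azure Storage using only the Python standard library. The account, container and blob names are hypothetical, and the sketch assumes a publicly readable container; private containers need a signed authorization header that is not shown here.

```python
from urllib.parse import quote
from urllib.request import Request


def blob_url(account, container, blob_name):
    """Build the REST address of a blob: account host, container, blob path."""
    return f"https://{account}.blob.core.windows.net/{container}/{quote(blob_name)}"


def build_get_request(account, container, blob_name):
    """Create an ordinary HTTP GET request object; nothing is sent here."""
    return Request(blob_url(account, container, blob_name), method="GET")


if __name__ == "__main__":
    # Hypothetical names, for illustration only.
    request = build_get_request("exampleaccount", "photos", "cat.jpg")
    print(request.get_method(), request.full_url)
    # urllib.request.urlopen(request) would fetch the blob if it existed
    # and the container allowed anonymous reads.
```

Nothing in the request is specific to the platform the client runs on, which is the substance of the interoperability argument above.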

Microsoft provides Windows Azure for compute services, SQL Azure for relational database and Azure Storage for data services. Amazon has EC2, Elastic Beanstalk, S3 and RDS which collectively provide the same services. Google offers App Engine and Big Table. You can mix and match the services from these providers any way you think is best, and move between them over time.

Summary

So, yes, you can deploy an Azure application today and move it elsewhere later. To learn more about Windows Azure, check out Learning Tree course 2602, Windows Azure Platform Introduction: Programming Cloud-Based Applications. Or, to learn more about cloud-computing in general, come to Learning Tree’s Cloud Computing course.

Jason Zander (@jlzander) described new DevOps features while Announcing ALM Roadmap in Visual Studio vNext at TechEd in this 5/16/2011 post:

I get a lot of questions about the future of Visual Studio. While I can't talk about everything we're doing, I am excited because today at TechEd North America I announced our vision for Application Lifecycle Management (ALM) in the next version of Visual Studio. Our vision for ALM can be broken down into three main themes:

- Building on the momentum of Visual Studio 2010 – if you haven’t tried out Visual Studio 2010, you can take it out for a test drive.

- Accelerating agile adoption – you can find great existing support today with more to come.

- Linking development and operations – ensuring a tight interaction between dev and ops.

When we asked people about the biggest problem they faced in successfully delivering software, they identified the need for better collaboration. We know that building software takes a team of people including developers, testers, architects, project planners, and more. Out of this observation, we created the strategy for our ALM offering, which focuses on helping people collaborate in very tightly integrated ways:

- Collaboration – focus on the flow of value between team members no matter what role.

- Actionable Feedback – when feedback is required between team members, it should be in a form which is directly applicable to solving the problem at hand. For example when a tester communicates a defect to development it should include videos, screen shots, configuration information, and even an IntelliTrace log making it easier to find and fix the root problem.

- Diverse Work Styles – provide the best possible tool for each team member whether that is the Visual Studio IDE, the web browser, SharePoint, Office, or dedicated tooling.

- Transparent Agile Processes – Enable all of the above to work on a “single source of truth” from engineering tasks through project status. TFS provides this core that brings together all team members and their tools.

VS2005, VS2008, and VS2010 have all delivered new value following this path. For example VS2010 added deep Architect <-> Developer and Test <-> Developer interaction through solutions like architectural discovery, layering enforcement, automated testing, and IntelliTrace.

In the keynote today, I talked about how we have continued on this path by incorporating two additional important roles: stakeholders and operations. Even though this diagram greatly simplifies the flows throughout the application lifecycle, it captures the essence of planning, building, and managing software:

There are a number of scenarios that span the next version of Visual Studio for ALM. These scenarios improve the creation, maintenance and support of software solutions by focusing on improving the workflow across the entire team as well as across the entire lifecycle.