Windows Azure and Cloud Computing Posts for 5/12/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

No significant articles today.

<Return to section navigation list>

SQL Azure Database and Reporting

The AppFabric CAT Team described Reliable Retry Aware BCP for SQL Azure on 5/12/2011:

Introduction – Motivation

Microsoft has a number of tools to import data into SQL Azure, but as of now none of them is retry-aware. In other words, if a transient network fault occurs during the data import process, an error is raised and the executable is terminated. Cleanup of the partially completed import and re-execution of the process are left to the user, and this cleanup is often met with a level of frustration. I was thus motivated to create a BCP-type program (I call it bcpWithRetry) to import data into SQL Azure in a reliable manner by responding to those transient fault conditions and resubmitting any unsuccessful batches.

The second motivation for writing such an application was to support the forthcoming release of the SQL Azure Federations feature. SQL Azure Federations offer a number of connectivity enhancements that provide better connection pool management and cache coherency. The cost is that a ‘USE FEDERATION’ statement must be executed to route queries to the appropriate Federated Member, which contains the desired shard of data. This T-SQL statement must be executed before any queries are run against the backend SQL Azure database. Currently, none of our products supports SQL Azure Federations or offers options or extension points for importing data into a Federated Member. In the future I will provide a follow-up to this blog post with an update to the bcpWithRetry client in which I include support for SQL Azure Federations. You can learn more about SQL Azure Federations by reading Cihan Biyikoglu’s SQL Azure posts.

In Closing

I will finish off the blog with a section describing the code and walk-through of some of the application arguments. I have tested the executable with a number of the input dimensions and was able to achieve 200k rows/sec importing a simple comma separated file with 10 million lines into my local SQL Server database. The throughput is 14k/sec when importing the same file into a SQL Azure database. You can find the code here.

Code Walk-Through

The bcpWithRetry program will import data directly into SQL Azure in a reliable manner. The foundation for this program is the SqlBulkCopy class and a custom implementation of the IDataReader interface, which provides the ability to read directly from a flat text file. The scope is simple: the program reads data from a flat file line by line (the DataReader) and bulk imports this data into SQL Azure (SqlBulkCopy) using fixed-size, transactionally scoped batches. If a transient fault is detected, retry logic is initiated and the failed batch is resubmitted.

The TransientFaultHandling Framework from the AppFabricCat Team was used to implement the retry logic. It provides a highly extensible retry policy framework with custom retry policies and fault handling paradigms for the Microsoft cloud offerings like Windows Azure AppFabric Service Bus and SQL Azure. It catches ‘9’ different SQL Azure exceptions with a default retry policy out-of-the-box. There was no reason for me to either implement my own or shop anywhere else.
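As a rough illustration of the batch-and-retry idea described above, the following Python sketch groups rows into fixed-size batches and resubmits a batch when a transient fault is raised. It is not the bcpWithRetry code, which is C# and built on SqlBulkCopy and the TransientFaultHandling Framework. The names TransientError, batches, import_with_retry, submit_batch and on_retry are hypothetical, and the sketch assumes that each batch submission is all-or-nothing, as it would be with a transactionally scoped batch.

```python
import time
from typing import Callable, Iterable, Iterator, List, Sequence

Row = Sequence[str]


class TransientError(Exception):
    """Hypothetical stand-in for a transient fault such as a dropped connection."""


def batches(rows: Iterable[Row], size: int) -> Iterator[List[Row]]:
    """Group rows into fixed-size batches; the last batch may be shorter."""
    batch: List[Row] = []
    for row in rows:
        batch.append(row)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def import_with_retry(
    rows: Iterable[Row],
    submit_batch: Callable[[List[Row]], None],
    batch_size: int = 1000,
    max_retries: int = 3,
    delay_seconds: float = 0.0,
    on_retry: Callable[[int, Exception], None] = lambda attempt, error: None,
) -> int:
    """Submit every batch, resubmitting the same batch after a transient fault.

    Returns the number of rows imported. Non-transient errors propagate, and a
    batch that keeps failing re-raises once max_retries is exhausted.
    """
    imported = 0
    for batch in batches(rows, batch_size):
        attempt = 0
        while True:
            try:
                submit_batch(batch)  # assumed to commit or roll back as a unit
                break
            except TransientError as error:
                attempt += 1
                if attempt > max_retries:
                    raise
                on_retry(attempt, error)  # similar in spirit to a RetryOccurred hook
                time.sleep(delay_seconds)
        imported += len(batch)
    return imported
```

Because the failed batch is still held in memory when the fault is caught, nothing has to be reread from the source file before the resubmission; a fixed delay is used here only for brevity, and a real retry policy would more likely back off between attempts.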

The code snippet below shows the technique used to import data into SQL Azure. The CSVReader implements the IDataReader interface, while the SqlAzureRetryPolicy class offers the necessary retry policy to handle transient faults between the client and SQL Azure. The ExecuteAction method of the SqlAzureRetryPolicy class wraps a delegate which implements the bulk copy import of data into SQL Azure. The SqlRowsCopied event handler outputs progress information to the user. The RetryOccurred event provides notification of a transient fault condition and provides the hook with which to tell the CSVReader to reread rows from an internal ring buffer.

    using (CSVReader sr = new CSVReader(CSVFileName, columns, batchSize, Separator))
    {
        _csvReader = sr;
        // Be notified of transient faults so the reader can replay buffered rows.
        SqlAzureRetryPolicy.RetryOccurred += RetryOccurred;
        SqlAzureRetryPolicy.ExecuteAction(() =>
        {
            using (SqlConnection conn = new SqlConnection(ConnStr))
            {
                conn.Open();
                using (SqlBulkCopy bulkCopy = new SqlBulkCopy(conn, SqlBulkCopyOptions.UseInternalTransaction | Options, null))
                {
                    bulkCopy.DestinationTableName = TableName;
                    bulkCopy.BatchSize = batchSize;
                    bulkCopy.NotifyAfter = batchSize;
                    bulkCopy.SqlRowsCopied += new SqlRowsCopiedEventHandler(OnSqlRowsCopied);
                    foreach (var x in columns)
                        bulkCopy.ColumnMappings.Add(x, x);
                    sw.Start();
                    Console.WriteLine("Starting copy...");
                    bulkCopy.WriteToServer(sr);
                }
            }
        });
    }

The important facets of the CSVReader implementation are shown below. SqlBulkCopy will make a call to the IDataReader.Read() method to read a row of data and continue to do so until it fills its internal data buffer. The SqlBulkCopy API will then submit a batch to SQL Azure with the contents of the buffer. If a transient fault occurs during this process, the entirety of the batch will be rolled back. It is at this point that the transient fault handling framework will notify the bcpWithRetry client through its enlistment in the RetryOccurred event. The handler for this event merely toggles the CSVReader.BufferRead property to true. The CSVReader then reads rows from its internal buffer, from which the batch can be resubmitted to SQL Azure.

    // Replays a row from the in-memory buffer after a retry.
    private bool ReadFromBuffer()
    {
        _row = _buffer[_bufferReadIndex];
        _bufferReadIndex++;
        // Switch back to reading from the file once the buffered rows are exhausted.
        if (_bufferReadIndex == _currentBufferIndex)
            BufferRead = false;
        return true;
    }

    // Reads the next row from the file and records it in the buffer.
    private bool ReadFromFile()
    {
        if (this._str == null)
            return false;
        if (this._str.EndOfStream)
            return false;
        // Successful batch sent to SQL if we have already filled the buffer to capacity
        if (_currentBufferIndex == BufferSize)
            _currentBufferIndex = 0;
        this._row = this._str.ReadLine().Split(Separator);
        _numRowsRead++;
        this._buffer[_currentBufferIndex] = this._row;
        _currentBufferIndex++;
        return true;
    }

Application Usage

The bcpWithRetry application provides fundamental yet sufficient options to bulk import data into SQL Azure using retry-aware logic. The parameters mirror those of the bcp.exe tool which ships with SQL Server. Trusted connections are enabled so that the tool can be tested against an on-premises SQL Server database. The usage is shown below.

    C:\> bcpWithRetry.exe
    usage: bcpWithRetry {dbtable} { in } datafile
      [-b batchsize] [-t field terminator]
      [-S server name] [-T trusted connection]
      [-U username] [-P password]
      [-k keep null values] [-E keep identity values]
      [-d database name] [-a packetsize]
    ...
    c:\>bcpWithRetry.exe t1 in t1.csv -S qa58em2ten.database.windows.net -U mylogin@qa58em2ten -P Sql@zure -t , -d Blog -a 8192
    10059999 rows copied.
    Network packet size (bytes): 8192
    Clock Time (ms.) Total : 719854 Average : (13975 rows per sec.)
    Press any key to continue . . .

Reviewers: Curt Peterson, Jaime Alva Bravo, Christian Martinez, Shaun Tinline-Jones

More detailed propaganda for SQL Azure Federations, without even an estimated date for its first CTP!

<Return to section navigation list>

Marketplace DataMarket and OData

Alex James (@adjames) will conduct a DEV374-INT OData Unplugged Interactive Discussion session at TechEd North America 2011:

Wednesday, May 18 | 1:30 PM - 2:45 PM | Room: B303

- Session Type: Interactive Discussion

- Level: 300 - Advanced

- Track: Developer Tools, Languages & Frameworks

- Evaluate: for a chance to win an Xbox 360

- Speaker(s): Alex James

Bring your toughest OData or Windows Communication Foundation (WCF) Data Services questions to this chalk talk and have them answered by the OData team! All questions are fair game. We look forward to your thoughts and comments.

- Product/Technology: Open Data Protocol (OData)

- Audience: Developer, Web Developer/Designer

- Key Learning: Whatever they want to learn about OData

<Return to section navigation list>

Windows Azure AppFabric: Access Control, WIF and Service Bus

Clemens Vasters (@clemensv) offered A Bit Of Service Bus NetTcpRelayBinding Latency Math in a 5/11/2011 post:

From: John Doe

Sent: Thursday, May 12, 2011 3:10 AM

To: Clemens Vasters

Subject: What is the average network latency for the AppFabric Service Bus scenario?

Importance: High

Hi Clemens,

A rough ballpark range in milliseconds per call will do. This is a very important metric for us to understand performance overhead.

Thanks,

John

From: Clemens Vasters

Sent: Thursday, May 12, 2011 7:47 AM

To: John Doe

Subject: RE: What is the average network latency for the AppFabric Service Bus scenario?

Hi John,

Service Bus latency depends mostly on network latency. The better you handle your connections, the lower the latency will be.

Let’s assume you have a client and a server, both on-premise somewhere. The server is 100ms avg roundtrip packet latency from the chosen Azure datacenter and the client is 70ms avg roundtrip packet latency from the chosen datacenter. Packet loss also matters because it gates your throughput, which further impacts payload latency. Since we’re sitting on a ton of dependencies, it’s also worth noting that a ‘cold start’ with JIT impact is different from a ‘warm start’.

With that, I’ll discuss NetTcpRelayBinding:

1. There’s an existing listener on the service. The service has a persistent connection (control channel) into the relay that’s being kept alive under the covers.

2. The client connects to the relay to create a connection. The initial connection handshake (2 roundtrips) and TLS handshake (3 roundtrips) take about 5 roundtrips, or 5*70ms = 350ms. With that you have a client socket.

3. Service Bus then relays the client’s desire to connect to the service down the control channel. That’s one roundtrip, or 100ms in our example; add 50ms for our internal lookups and routing.

4. The service then sets up a rendezvous socket with Service Bus at the machine where the client socket awaits connection. That’s just like step 2 and thus 5*100ms = 500ms in our case. Now you have an end-to-end socket.

5. Having done that, we’re starting to pump the .NET Framing protocol between the two sites. The client is thus theoretically going to get its first answer after a further 135ms.

So the handshake takes a total of 1135ms in the example above. That’s excluding all client and service side processing and is obviously a theoretical number based on the latencies I picked here. Your mileage can and will vary and the numbers I have here are the floor rather than the ceiling of relay handshake latency.

Important: Once you have a connection set up and are holding on to a channel all subsequent messages are impacted almost exclusively by the composite roundtrip network latency of 170ms with very minimal latency added by our pumps. So you want to make a channel and keep that alive as long as you can.
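The arithmetic in Clemens' example can be reproduced with a few lines of Python. This is only a sketch of the back-of-the-envelope model from the email. The function and parameter names are made up for illustration, and the 50ms relay overhead and 135ms first-answer figures are taken from the text as given rather than derived.

```python
def relay_handshake_ms(client_rtt_ms, service_rtt_ms,
                       relay_overhead_ms=50, first_answer_ms=135):
    """Return the per-step and total NetTcpRelayBinding handshake estimate."""
    steps = {
        # Connection handshake (2 roundtrips) plus TLS handshake (3 roundtrips).
        "client_connect": 5 * client_rtt_ms,
        # One roundtrip down the control channel plus relay lookups and routing.
        "notify_service": service_rtt_ms + relay_overhead_ms,
        # The service repeats the 5-roundtrip handshake for the rendezvous socket.
        "rendezvous": 5 * service_rtt_ms,
        # First answer over the framing protocol, as quoted in the email.
        "first_answer": first_answer_ms,
    }
    steps["total"] = sum(steps.values())
    return steps


def steady_state_ms(client_rtt_ms, service_rtt_ms):
    """Composite roundtrip once the channel is established and kept alive."""
    return client_rtt_ms + service_rtt_ms


if __name__ == "__main__":
    estimate = relay_handshake_ms(client_rtt_ms=70, service_rtt_ms=100)
    print(estimate["total"])         # 1135
    print(steady_state_ms(70, 100))  # 170
```

Plugging in other roundtrip figures shows why keeping a channel alive matters: the handshake costs roughly ten roundtrips, while each later message costs about one composite roundtrip.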

If you use the Hybrid mode for NetTcpRelayBinding and the algorithm succeeds establishing the direct socket, further traffic roundtrip time can be reduced to the common roundtrip latency between the two sites as the relay gets out of the way completely. However, the session setup time will always be there and the Hybrid handshake (which follows establishing a session and happens in parallel) may very well take up to 10 seconds until the direct socket is available.

For HTTP the story is similar, with the client side socket (not the request; we’re routing the keepalive socket) with overlaid SSL/TLS triggering the rendezvous handshake.

I hope that helps,

Clemens also provided a brief Talking about Service Bus latency on my way to work podcast about this subject.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Emil Velinov posted Windows Azure-Hosted Services: What to expect of latency and response times from services deployed to the Windows Azure platform to the AppFabric CAT blog on 5/12/2011:

I’ve stayed quiet in the blogosphere for a couple of months (well, maybe a little longer)…for a good reason – since my move from Redmond to New Zealand as a regional AppFabric CAT resource for APAC, I’ve been aggressively engaging in Azure-focused opportunities and projects, from conceptual discussions with customers to actual hands-on development and deployment.

By all standards, New Zealand is one of the best tourist destinations in the world; however, the country doesn’t make it to the top of the list when it comes to Internet connectivity and broadband speeds. I won’t go into details and analysis of why that is, but one aspect of it is the geographical location – distance does matter in that equation too. Where am I going with this, you might be wondering? Well, while talking to customers about cloud in general and their Windows Azure platform adoption plans, one of the common concerns I’ve consistently heard from people is the impact that connectivity will have on their application, should they make the jump and move to a cloud deployment. This is understandable, but I always questioned how much of this was justified and backed up by factual data and evidence matched against real solution requirements. The general perception seems to be that if it is anything outside of the country and remote, it will be slow…and yes – New Zealand is a bit of an extreme case in that regard due to the geographical location. Recently I came across an opportunity to validate some of the gossip.

This post presents a real-life solution and test data to give a hands-on perspective on the latency and response times that you may expect from a cloud-based application deployed to the Windows Azure platform. It also offers some recommendations for improving latency in Windows Azure applications.

I want to re-emphasize that the intent here is to provide an indication of what to expect from Azure-hosted services and solutions performance rather than provide hard baseline numbers! Depending on exactly how you are connected to the Internet (think a dial-up modem, for example, and yes – it is only an exaggeration to make the point!), what other Internet traffic is going in and out of your server or network, and a bunch of other variables, you may observe vastly different connectivity and performance characteristics. Best advice – test before you make ANY conclusions – either positive or not so positive :-)

The Scenario

Firstly, in order to preserve the identity of the customer and their solution, I will generalize some of the details. With that in mind, the goal of the solution was to move an on-premises implementation of an interactive “lookup service” to the Windows Azure platform. When I say interactive, it means performing lookups against the entries in a data repository in order to provide suggestions (with complete details for each suggested item), as the user types into a lookup box of a client UI. The scenario is very similar to the Bing and Google search text boxes – you start typing a word, and immediately the search engine provides a list of suggested multi-word search phrases based on the characters already typed into the search box, almost at every keystroke.

The high level architecture of the lookup service hosted on the Windows Azure platform is depicted in the following diagram:

Key components and highlights of the implementation:

- A SQL Azure DB hosts the data for the items and provides a user defined function (UDF) that returns items based on some partial input (a prefix)

- A Windows Azure worker role, with one or more instances hosting a REST WCF service that ultimately calls the “search” UDF from the database to perform the search

- An Azure Access Control Service (ACS) configuration used to 1) serve as an identity provider (BTW, it was a quick way to get the testing under way; it should be noted that ACS is not designed as a fully-fledged identity provider and for a final production deployment we have ADFS, or the likes of Windows Live and Facebook to play that role!), and 2) issue and validate OAuth SWT-based tokens for authorizing requests to the WCF service

- The use of the Azure Cache Service was also in the pipeline (greyed out in the diagram above) but at the time of writing this article this extension has not been implemented yet.

To come back to the key question we had to answer – could an Azure-hosted version of the implementation provide response times that support lookups as the user starts typing in a client app using the service, or was the usability going to be impacted to an unacceptable level?

Target Response Times

Based on research the customer had done and from the experience they had with the on-premises deployment of the service, the customer identified the following ranges for the request-response round-trip, measured at the client:

- Ideal – < 250ms

- May impact usability – 250-500ms

- Likely to impact usability – 500ms – 1 sec

- “No-go” – > 1 sec
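The ranges above translate directly into a small classification helper. The Python sketch below is only an illustration. The function name is hypothetical, and the handling of the exact boundary values (250ms, 500ms and 1 sec) is an assumption, since the list does not say which band a boundary belongs to.

```python
def classify_response_time(ms: float) -> str:
    """Map a client-measured round-trip time in milliseconds to a usability band.

    Boundary handling is an assumption: exactly 250 ms and 500 ms fall into
    "May impact usability", and exactly 1000 ms into "Likely to impact usability".
    """
    if ms < 250:
        return "Ideal"
    if ms <= 500:
        return "May impact usability"
    if ms <= 1000:
        return "Likely to impact usability"
    return "No-go"


if __name__ == "__main__":
    # Averages quoted later in the post; 675 is the midpoint of the 660-690 ms range.
    for sample_ms in (65, 184, 225, 675):
        print(sample_ms, classify_response_time(sample_ms))
```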

Also, at the customer’s request, we had to indicate the “Feel” factor: putting the response time numbers aside, does the lookup happen fast enough for a smooth user experience?

With the above in mind, off to do a short performance lab on Azure!

The Deployment Topology

After a couple of days working our way through the migration of the existing code base to SQL Azure, Worker Role hosting, and ACS security, we had everything ready to run in the cloud. I say “we” because I was lucky to have Thiago Almeida, an MVP from Datacom (the partner for the delivery of this project), working alongside me.

In order to find the optimal Azure hosting configuration in regards to performance, we decided to test a few different deployment topologies as follows:

- Baseline: No “Internet latency” – the client as well as all components of the service run in the same Azure data center (DC) – either South Central US or South East Asia. In fact, for test purposes, we loaded the test client into the same role instance VM that ran the WCF service itself:

- Deployment 1: All solution components hosted in the South Central US data center

- Deployment 2: All solution components hosted in the South East Asia data center (closest to New Zealand)

- Deployment 3: Worker role hosting the WCF service in South East Asia, with the SQL Azure DB hosted in South Central US

- Deployment 4: Same as Deployment 3 with reversed roles between two DCs

Notes:

1) In the above topologies except the Baseline, the client, a Win32 app that we created for flexible load testing from any computer, was located in New Zealand; tests were performed from a few different ISPs and networks, including the Microsoft New Zealand office in Auckland and my home (equipped with a “blazing fast” ADSL2+ connection – for the Kiwi readers, I’m sure you will appreciate the healthy dose of sarcasm here :-))

2) Naturally, Deployment 1 and 2 represent the intended production topology, while all other variations were tested to evaluate the network impact between a client and the Azure DCs, as well as between the DCs themselves.

- Deployment 5: For this scenario, we moved the client app to an Azure VM in South East Asia, while all of the service components (SQL Azure DB, worker role, ACS configuration) were deployed to South Central US.

The Results

Here we go – this is the most interesting part. Let’s start with the raw numbers, which I want to highlight are averages of tests performed from a few different ISPs and corporate networks, and at different times over a period of about 20 days:

I can’t resist quoting the customer when we presented these numbers to them: “These [the response times] are all over the place!” Indeed, they are, but let’s see why that is, and what it all really means:

- In the Baseline test, with the client (load test app) running within the same Azure DC as all service components, the response times do not incur any latency from the Internet – all traffic is between servers within the same Azure DC/network, hence the ~65ms average time. This is mostly the time it takes for the WCF service to do its job under the best possible network conditions.

- Response times for Deployment 1 & 2 vary from 170ms up to about 250-260ms with averages for South Central US and South East Asia of 225ms and 184ms respectively. As mentioned earlier, these two deployments are the intended production service topology, with all service components as close to each other as possible, within the same Azure DC. The results also show that from New Zealand, the South East Asia DC will probably be the preferred choice for hosting; however, the difference between South East Asia and South Central is not really significant – this will also be country and location specific. What is more important though is that the response times from both data centers are well within the Ideal range for the interactive lookup scenario. Needless to say – the customer was very pleased with this finding! And so were Thiago and I! :-)

- Next we have Deployment 3 & 4 (mirrors of each other) where we separate the WCF service implementation from the database onto different Azure DCs. Wow – suddenly the latency goes up to 660-690ms! Initially when I looked at this, and without knowing much about the service implementation (remember we migrated an existing code base from the on-premises version to Azure), I thought it was either a very slow network between the two Azure DCs or something in the ADO.NET/ODBC connection/protocol that was slowing down the queries to the SQL Azure DB hosted in the other DC. I was wrong with both assumptions – it turned out that the lookup service code wasn’t querying the DB just once, meaning that this scenario was incurring cross-DC Internet latency multiple times for a single request to the WCF service – comparing apples to oranges here.

Lesson learned: The combination of implementation logic and the deployment topology of your solution components may have a dramatic impact. Naturally – try to keep everything in your solution on the same DC. There are of course valid scenarios where you may need to run some components in different data centers (I know of at least one such scenario from a global ISV from the Philippines) – if this is your case too, be extremely careful to design for the minimum number of cross-DC communications possible. A great candidate for such scenarios is the Azure Cache Service, which can cache information that has already been transferred from one DC to the other as part of a previous request.

- Deployment 5 as a scenario was included in the test matrix mostly based on the interest sparked by the significant response times observed while testing Deployment 3 and 4. The idea was to see how fast the network between two of the Azure DCs is. The result – well, at the end of the day it is just Internet traffic; and as such it is subject to exactly the same rules as the traffic from my home to either of the DCs, for example. In fact, the tests show that Internet traffic from New Zealand (ok, from my home connection) to either of the DCs is marginally faster than the traffic between the South East Asia and South Central US DCs. This surprised me a little initially but when you think about it – again, it’s just Internet traffic over long distances.
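The lesson from Deployments 3 and 4 can be made concrete with a toy model: every database call that crosses data centers adds one more long roundtrip to a single service request, and a cache removes the crossing for data that has already been fetched. The Python sketch below is an illustration only. The function and class names are hypothetical, and the input numbers in the usage section are invented to show the shape of the effect, not measurements from the tests.

```python
from typing import Callable, Dict, List


def request_latency_ms(client_rtt_ms: float, service_ms: float,
                       cross_dc_rtt_ms: float, db_calls: int) -> float:
    """Rough model: one client roundtrip, local service work, and one
    cross-DC roundtrip for every database call the service makes."""
    return client_rtt_ms + service_ms + db_calls * cross_dc_rtt_ms


class CachedLookup:
    """Cache-aside wrapper so repeated prefixes skip the cross-DC query."""

    def __init__(self, remote_lookup: Callable[[str], List[str]]) -> None:
        self._remote_lookup = remote_lookup
        self._cache: Dict[str, List[str]] = {}
        self.remote_calls = 0

    def get(self, prefix: str) -> List[str]:
        if prefix not in self._cache:
            self.remote_calls += 1  # only cache misses cross data centers
            self._cache[prefix] = self._remote_lookup(prefix)
        return self._cache[prefix]


if __name__ == "__main__":
    # Hypothetical inputs, not measurements from the post.
    for calls in (1, 3):
        print(calls, request_latency_ms(120, 65, 150, calls))

    lookup = CachedLookup(lambda prefix: [prefix + "-item"])
    lookup.get("auck")
    lookup.get("auck")
    print(lookup.remote_calls)  # 1
```

The in-process dictionary stands in for a distributed cache; it never expires entries, which a real deployment would need to handle.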

Traffic Manager

One other topology that came late into the picture but is well worth a paragraph on its own, is a “geo-distributed” deployment to two DCs, fronted by the new Azure Traffic Manager service recently released as a Community Technology Preview (CTP). In this scenario, data is synchronized between the DCs using the Azure Data Sync service (again in CTP at the time of writing). The deployment is depicted in the diagram below:

Requests from the client are routed to one of the DCs based on the most appropriate policy as follows:

(If you’ve read some of my previous blog articles, you’d already know that I take pride in my artistic skill. The fine, subtle touches in the screenshot above are a clear, indisputable confirmation of that fact!)

In our case we chose to route requests based on best performance, which is automatically monitored by the Azure Traffic Manager service. BTW, if you need a quick introduction to Traffic Manager, this is an excellent document that covers most of the points you’ll need to know. The important piece of information here is that for Deployment 1 and 2, combined with Traffic Manager sitting in front, the impact on the response time was only about 10-15ms. Even with this additional latency our response times were still within the Ideal range (<250ms) for the lookup service, but now enhanced with the “geo-distribution and failover” capability! Great news and a lot of excitement around the board room table during the closing presentation with our customer!

Important Considerations and Conclusions

Well, since this post is getting a little long I’ll keep this short and sweet:

- If possible, keep all solution components within the same Azure Data center

- In a case where you absolutely need to have a cross-DC solution – 1) minimize the number of calls between data centers through smart design (don’t get caught!), and 2) cache data locally using the Azure Cache service

- Azure Traffic Manager is a fantastic new feature that makes cross-DC “load balancing” and “failover” a breeze, at minimal latency cost. As a pleasantly surprising bonus, it’s really simple – so, use it!!!

- Internet traffic performance will always have variations to some extent. All our tests have shown that with the right deployment topology, an Azure-hosted service will deliver the performance, response times, and usability for as demanding scenarios as interactive “on-the-fly” data lookups…even from an ADSL2+ line down in New Zealand! And to come back to the customer “Feel” requirement – it scored 5 stars. :-)

Thanks for reading!

Author: Emil Velinov

Contributors and reviewers: Thiago Almeida (Datacom), Christian Martinez, Jaime Alva Bravo, Valery Mizonov, James Podgorski, Curt Peterson

Simon Guest posted Microsoft releases Windows Azure Toolkit for iOS to InfoQ on 5/11/2011:

Following on from the recent release of the Windows Azure Toolkit for Windows Phone 7, Microsoft announced on May 9, 2011 that they were making available a version for Apple’s iOS, and are planning to release an Android version within the next month.

Jamin Spitzer, Senior Director of Platform Strategy at Microsoft, emphasized that the primary aim of the toolkits is to increase developer productivity when creating mobile applications that interact with the cloud.

Using the toolkits, developers can use the cloud to accelerate the creation of applications on the major mobile platforms. Companies, including Groupon, are taking advantage to create a unified approach to cloud-to-mobile user experience.

Microsoft is making the library, sample code, and documentation for the iOS version of the toolkit available on GitHub under the Apache License. With Xcode’s native support for GitHub repositories, this means that developers can more easily access the toolkit in their native environment.

What can developers expect from the v1.0 release of the iOS toolkit?

This first release of the toolkit focuses on providing developers easy access to Windows Azure storage from native mobile applications. Windows Azure has three different storage mechanisms:

- Blob storage - used for storing binary objects, such as pictures taken on the phone.

- Table storage – used for storing structured data in a scalable way, such as user profiles or multiple high score tables for a game.

- Queues – a durable first-in, first-out queuing system for messages. For example, this could be used to pass messages between devices.

All of the above services are exposed via a REST API; however, accessing these natively from the phone can be challenging, especially for developers who are new to iPhone development. The toolkit wraps the necessary REST calls into a native library that not only abstracts the underlying networking elements, but also reduces many operations (such as uploading a photo to Azure blob storage) to just a few lines of code.

Wade Wegner, Windows Azure Technical Evangelist, has put together a walkthrough for the toolkit, showing how the Windows Azure storage services can be accessed in two ways:

- Directly from the client, using an account name and access key obtained from the Windows Azure portal.

- Via a proxy service, for those not wanting to store their account name and access key on the device. The proxy service works by using an ASP.NET authentication provider to validate a set of credentials, and then creating a shared key that can be used to access the storage for the duration of the session.

In his tutorial, Wegner shows how to create an Xcode 4 project from scratch, import the library, and create code samples to index blob and table storage.

Future additions to the toolkit

As well as an Android version of the toolkit slated for June, Wegner also expands on additional features in other versions of the device toolkits, including:

- Support for Windows Azure ACS (Access Control Service) - providing an identity mechanism for developers looking to add authentication to their mobile apps, including federation with Facebook connect and other providers.

- Push notifications – the ability to construct and send Push notifications from an Azure role to a registered device.

Although the device toolkits are in their early stages, developers creating mobile applications with a need to interact with Windows Azure storage and other services will likely find the toolkits a useful addition.

<Return to section navigation list>

Visual Studio LightSwitch

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

David Linthicum asserted “The focus should be on which cloud formats can best serve businesses, not on whether private clouds count as 'real' clouds” as a deck for his The 'is the private cloud a false cloud?' debate is false post of 5/12/2011 to InfoWorld’s Cloud Computing blog:

I was at Interop in Las Vegas this week moderating a panel on the "false cloud debate." In short, the debate asks if private clouds are really clouds, or if "private cloud" is a marketing label for data centers and confusing the value of cloud computing. The panel consisted of James Waters from EMC VMware, James Urquhart from Cisco Systems, Peter Coffee from Salesforce.com, and John Keagy from GoGrid.

What struck me most about the "debate" was that it was not much of one at all. Although the panel started off bickering around the use, or overuse, of private clouds, the panelists quickly agreed that the private clouds have a place in the enterprise (to very different degrees), and that the end game is mixing and matching private and public cloud resources to meet the requirements of the business.

Public cloud advocates have said for years that the core value of public clouds is the ability to scale and provision on demand and on the cheap -- they're right. However, many fail to accept there may be times when the architectural patterns of public clouds best serve the requirements of the business when implemented locally -- in a private cloud.

If you accept that the value of cloud computing is in some circumstances best expressed in a private cloud, it should become apparent that the movement to the cloud should be prefaced by good architecture, requirements gathering, and planning. Those who view the adoption of cloud computing as simply a matter of private versus public are destined to not understand the core business issues, and they risk making costly mistakes.

Architecture has to lead the day, and sane minds need to focus on the ability of clouds to serve the business: private, public, hybrid, or none. There is no debate about that.

Ed Scannell asserted IT pros seek details on Microsoft cloud computing strategy in a 5/11/2011 post to SearchWindowsServer.com:

In recent years, Microsoft has dribbled bits and pieces of its cloud computing strategy on the Windows manager faithful. Now they think it’s time for the company to articulate a cohesive story.

Microsoft has a golden opportunity to do so at TechEd North America in Atlanta next week. The company has certainly produced a steady stream of news about Windows Azure, Office 365, Intune and a handful of other online-based applications and tools over the past couple of years. But IT pros still seek clarity on Microsoft’s cloud vision as it cobbles together these piece parts, much in the way IBM did in its cloud announcement last month.

"I think Microsoft is at a point in its cloud evolution where it needs a bigger picture presented,” said Dana Gardner, president and principal analyst at Interarbor Solutions, Inc. in Gilford, N.H. “It does have some building blocks but IT shops wonder how they (IT shops) can bring this together with existing infrastructures in a harmonious way so they aren't throwing out the baby with the bath water,"

With fast moving cloud competitors, such as Google and Amazon, which lack the on-premises infrastructure baggage owned by Microsoft, the software company might be wise to state its intentions to enterprises sooner rather than later.

"Microsoft has had trouble before communicating comprehensive strategies, as was the case with its on-premises software,” Gardner added. “It can't afford to make that mistake again with a lot of other players now pointing to the Microsoft hairball and offering cleaner, pure-play cloud offerings."

The adoption of cloud strategies can bring with it added expense and technological uncertainty. For this reason, IT professionals would find real world success stories involving a collection of Microsoft products somewhat reassuring.

"I know exactly what my IT costs are right now for things like licenses, people and upgrades,” said Eugene Lee, a systems administrator with a large bank. “But with the cloud those fixed costs can become unpredictable, so I need to know more about how all this would work. I would like to see some success stories from some (Windows-based) cloud shops.”

How much of the big picture Microsoft reveals publicly about its cloud strategy at this year's conference remains unclear. Corporate executives are expected to disclose more details about the company’s cloud strategy with regard to the Windows Azure Platform, SQL Azure and the Windows Azure AppFabric, though the company declined to comment on details.

"Even a little more visibility on how seamlessly, or not, applications written for the Azure (platform) will work with Office 365 could be useful for planning purposes," Lee said.

IT pros can also expect to hear more about the next generation of its management suite, System Center 2012. The latest version of the suite adds Concero, a tool that lets administrators manage virtual machines running on Microsoft Hyper-V through System Center Virtual Machine Manager. The software also lets administrators manage a variety of services that run on Windows Azure and Azure-based appliances.

Full Disclosure: I’m a paid contributor to TechTarget’s SearchCloudComputing.com blog.

Hanu Kommalapati asserted “Cloud computing isn’t just about outsourcing IT resources. We explore ten benefits IT architects and developers will realize by moving to the cloud” as an introduction to his 10 Reasons Why Architects and Developers Should Care about Cloud Computing article of 5/10/2011 for Enterprise Systems Journal:

Cloud computing has come a long way during the past few years; enterprises no longer are curious onlookers but are active participants of this IT inflexion. Application developers and architects have much to gain from this new trend in computing. Cloud enables architects and developers with a self-service model for provisioning compute, storage, and networking resources at the speed the application needs.

The traditional on-premise computing model constrains IT agility due to the less-than-optimal private cloud implementations, heterogeneous infrastructures and procurement latencies, and suboptimal resource pools. Because cloud platforms operate highly standardized infrastructures with unified compute, storage, and networking resources, they solve the IT agility issues pervasive in the traditional IT model.

The following are among the benefits IT architects and developers will realize by moving to the cloud:

Benefit #1: Collection of Managed Services

Managed services are those to which a developer can subscribe without worrying about how those services were implemented or are operated. In addition to highly automated and self-service enabled compute services, most cloud platforms tend to offer storage, queuing, cache, database (relational and non-relational), security, and integration services. In the absence of managed services, some applications (such as hybrid-cloud applications) are impossible to write or will take a long time for developers to create the necessary plumbing code by hand.

Using cache service as an example, a developer can build a highly scalable application by merely declaring the amount of cache (e.g., 4GB) and the cache service will automatically provision the necessary resources for the cache farm. Developers need not worry about the composition and size of cache nodes, installation of the software bits on the nodes, or cache replication, and they don’t have to manage the uptime of the nodes. Similar software appliance characteristics can be attributed to the other managed services such as storage and database.

Benefit #2: Accelerated Innovation through Self-service

Developers can implement their ideas without worrying about the infrastructure or the capacity of the surrounding services. In a typical on-premise setting, developers need to acquire the VM capacity for computing tasks and database capacity for persistence, and they must build a target infrastructure environment even before deploying a simple application such as “Hello, world!”

Cloud allows developers to acquire compute, storage, network infrastructure, and managed services with a push of a button. As a result, developers will be encouraged to try new ideas that may propel companies into new markets. As history proves, often great technology success stories come from grass-root developer and architect innovations. …

Hanu is a Sr. Technical Director for Microsoft.

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

Mary Jo Foley (@maryjofoley) asserted Windows Azure [Platform] Appliances are still in Microsoft's plans in a 5/12/2011 post to her All About Microsoft blog for ZDNet:

Microsoft and its server partners have been noticeably mum about Microsoft’s planned Windows Azure Appliances, leading to questions as to whether Microsoft had changed its mind about the wisdom of providing customers with a private cloud in a box.

But based on comments made by a Microsoft Server and Tools division exec during his May 10 Jefferies Global Technology, Internet, Media & Telecom Conference presentation, it seems the Azure Appliances are still on the Microsoft roadmap.

In July 2010, Microsoft took the wraps off its plans for the Windows Azure Appliance, a kind of “private-cloud-in-a-box” available from select Microsoft partners. At that time, company officials said that OEMs including HP, Dell and Fujitsu would have Windows Azure Appliances in production and available to customers by the end of 2010. In January 2011, I checked in with Microsoft’s announced Windows Azure Appliance partners, some of whom hinted there had been delays. In March, HP began giving off mixed signals as to whether it would offer an Azure-based appliance after all.

Cut to May 10. Charles Di Bona, a General Manager with Server and Tools, was asked by a Jefferies conference attendee about his perception of integrated appliances, such as the ones Oracle is pushing. Here’s his response, from a transcript:

“I would hesitate to sort of comment on their (Oracle’s) strategy. But, look, those appliances are very interesting offerings. In some ways it’s sort of presenting a large enterprise, obviously, where the footprint is big a large enterprise with a sort of cohesive package where they don’t have to muck around with the inner workings, which in many ways is what the cloud does for a much broader audience.

“So, it’s an interesting way of sort of appealing to that sort of very high end constituency, which is sort of the sweet spot of what Oracle has done the past, with a sort of integrated offering that replicates in many ways the future of the cloud. Now, we still believe that the public cloud offers certain capabilities, and certain efficiencies that an appliance, a scaled-up appliance like Exadata is not going to offer them, and we think that that’s the long-term future of where things are going. But it’s an interesting way of appealing to that very high-end user now before they might feel comfortable going to the cloud.”

At this point, I was thinking: Wow! Microsoft has decided to go all in with the public cloud and deemphasize the private cloud, even at the risk of hurting its Windows Server sales. I guess the moderator thought the same, as he subsequently asked, “Microsoft’s approach is going to be more lean on that public cloud delivery model?”

Di Bona responded that the Windows Azure Appliances already are rolling out. (Are they? So far, I haven’t heard any customers mentioned beyond EBay — Microsoft’s showcase Appliance customer from last summer.) Di Bona’s exact words, from the transcript:

“Well, no, we’ve already announced about a year ago at this point that we’ve started to rollout some Windows Azure Appliances, Azure Appliances. They are early stage at this point. But we think of the private cloud based on what we’re doing in the public cloud, and the learnings we’re getting from the public cloud, and sort of feeding that back into that appliance, which would be more of a private cloud. It has some of the same characteristics of what Oracle is doing with Exadata. So, we don’t think of it as mutually exclusive, but we think that the learnings we’re getting from the public cloud are different and unique in a way that they’re not bringing into Exadata.”

My take: There has been a shift inside Server and Tools, in terms of pushing more aggressively the ROI/savings/appeal of the public cloud than just a year ago. The Server and Tools folks know customers aren’t going to move to the public cloud overnight, however, as Di Bona made clear in his remarks.

“(W)e within Server and Tools, know that Azure is our future in the long run. It’s not the near-term future, the near-term future is still servers, right, that’s where we monetize,” he said. “But, in the very, very long run, Azure is the next instantiation of Server and Tools.”

Microsoft execs told me a couple of months ago that the company would provide an update on its Windows Azure Appliance plans at TechEd ‘11, which kicks off on May 16 in Atlanta. Next week should be interesting….

The most important reason I see for fulfilling Microsoft’s WAPA promise is to provide enterprise users with a compatible platform to which they can move their applications and data if Windows Azure and SQL Azure don’t pan out.

I added the following comment to Mary Jo’s post:

Microsoft has been advertising more job openings for the Windows Azure Platform Appliance (WAPA) recently. Job openings for the new Venice (directory and identity) Team specifically reference WAPA as a target. It's good to see signs of life for the WAPA project, but MSFT risks a stillborn SKU if they continue to obfuscate whatever problems they're having in productizing WAPA with marketese.

<Return to section navigation list>

Cloud Security and Governance

No significant articles today.

<Return to section navigation list>

Cloud Computing Events

Nancy Medica (@nancymedica) announced on 5/12/2011 a one-hour How Microsoft is changing the game webinar scheduled for 5/18/2011 at 9:00 to 10:00 AM PDT:

- The difference between Azure (PaaS) and Infrastructure as a Service and standing up virtual machines.

- How the datacenter evolution is driving down the cost of enterprise computing.

- Modular datacenter and containers.

Intended for: CIOs, CTOs, IT Managers, IT Developers, Lead Developers

Presented by Nate Shea-han, Solution Specialist with Microsoft:

His focus area is cloud offerings centered on the Azure platform. He has a strong systems management and security background and has applied knowledge in this space to how companies can successfully and securely leverage the cloud as organizations look to migrate workloads and applications to the cloud. Nate currently resides in Houston, TX and works with customers in Texas, Oklahoma, Arkansas and Louisiana.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Jim Webber described Square Pegs and Round Holes in the NOSQL World in a 5/11/2011 post to the World Wide Webber blog:

The graph database space is a peculiar corner of the NOSQL universe. In general, the NOSQL movement has pushed towards simpler data models with more sophisticated computation infrastructure compared to traditional RDBMS. In contrast graph databases like Neo4j actually provide a far richer data model than a traditional RDBMS and a search-centric rather than compute-intensive method for data processing.

Strangely the expressive data model supported by graphs can be difficult to understand amid the general clamour of the simpler-is-better NOSQL movement. But what makes this doubly strange is that other NOSQL database types can support limited graph processing too.

This strange duality where non-graph stores can be used for limited graph applications was the subject of a thread on the Neo4j mailing list, which was the inspiration for this post. In that thread, community members discussed the value of using non-graph stores for graph data particularly since prominent Web services are known to use this approach (like Twitter's FlockDB). But as it happens the use-case for those graphs tends to be relatively shallow - "friend" and "follow" relationships and suchlike. In those situations, it can be a reasonable solution to have information in your values (or document properties, columns, or even rows in a relational database) to indicate a shallow relation as we can see in this diagram:

At runtime, the application using the datastore (remember: that’s code you typically have to write) follows the logical links between stored documents and creates a logical graph representation. This means the application code needs to understand how to create a graph representation from those loosely linked documents.

If the graphs are shallow, this approach can work reasonably well. Twitter's FlockDB is an existential proof of that. But as relationships between data become more interesting and deeper, this is an approach that rapidly runs out of steam. This approach requires graphs to be structured early on in the system lifecycle (design time), meaning a specific topology is baked into the datastore and into the application layer. This implies tight coupling between the code that reifies the graphs and the mechanism through which they're flattened in the datastore. Any structural changes to the graph now require changes to the stored data and the logic that reifies the data.
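To make that coupling concrete, here is a minimal Ruby sketch of the application-side reification described above. The document layout (a `friend_ids` array inside each customer document) and the helper name `reify_graph` are assumptions made for illustration; they are not taken from FlockDB or any particular document store's API.

```ruby
# Hypothetical customer documents as they might sit in a KV or document store.
# The "friend_ids" field is an assumed convention for flattening a shallow relation.
documents = {
  "alice" => { "name" => "Alice", "friend_ids" => ["bob", "carol"] },
  "bob"   => { "name" => "Bob",   "friend_ids" => ["alice"] },
  "carol" => { "name" => "Carol", "friend_ids" => [] }
}

# The application has to know that "friend_ids" encodes edges and must
# rebuild the graph (here a plain adjacency list) every time it reads the data.
def reify_graph(documents)
  documents.each_with_object({}) do |(id, doc), graph|
    # Drop dangling ids that point at documents we do not have.
    graph[id] = doc.fetch("friend_ids", []).select { |fid| documents.key?(fid) }
  end
end

graph = reify_graph(documents)
puts graph.inspect
```

Note that renaming `friend_ids`, or adding a second kind of relation, would mean changing both the stored documents and `reify_graph`, which is the tight coupling the paragraph above warns about.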

Neo4j takes a different approach: it stores graphs natively and so separates application and storage concerns. That is, where your documents have relationships between them, that's the way they're stored, searched, and processed in Neo4j even if those relationships are very deep. In this case, the logical graph that we reified from the document store can be natively (and efficiently) persisted in Neo4j.

What's often deceptive is that in some use-cases, projecting a graph from a document or KV store and using Neo4j might begin with seemingly similar levels of complexity. For example, we might create an e-commerce application with customers and items they have bought. In a KV or document case we might store the identifiers of products our customers had bought inside the customer entity. In Neo4j, we'd simply add relationships like PURCHASED between customer nodes and the product nodes they'd bought. Since Neo4j is schema-less, adding these relationships doesn’t require migrations, nor should it affect any existing code using the data. The next diagram shows this contrast: the graph structure is explicit in the graph database, but implicit in a document store.

Even at this stage, the graph shows its flexibility. Imagine that a number of customers bought a product that had to be recalled. In the document case we'd run a query (typically using a map/reduce framework) that grabs the document for each customer and checks whether a customer has the identifier for the defective product in their purchase history. This is a big undertaking if each customer has to be checked, though thankfully because it's an embarrassingly parallel operation we can throw hardware at the problem. We could also design a clever indexing scheme, provided we can tolerate the write latency and space costs that indexing implies.

With Neo4j, all we need to do is locate the product (by graph traversal or index lookup) and look for incoming PURCHASED relations to determine immediately which customers need to be informed about the product recall. Easy peasy!
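The contrast between the two recall queries can be sketched in plain Ruby. This is only an in-memory illustration under assumed data shapes (`purchased_ids` embedded in each customer, and a hypothetical `incoming` index standing in for the PURCHASED relationships reachable from a product node); it is not Neo4j's API.

```ruby
# Document-store style: purchases are product ids embedded in each customer.
customers = {
  "alice" => { "purchased_ids" => ["headphones", "kettle"] },
  "bob"   => { "purchased_ids" => ["kettle"] },
  "carol" => { "purchased_ids" => ["decks"] }
}

# Every customer document must be inspected to answer the recall question.
def affected_by_scan(customers, product_id)
  customers.select { |_id, doc| doc["purchased_ids"].include?(product_id) }.keys
end

# Graph style: each PURCHASED relationship is stored once and can be followed
# from the product end, so the lookup starts at the product node.
def build_incoming_purchases(customers)
  incoming = Hash.new { |hash, key| hash[key] = [] }
  customers.each do |customer_id, doc|
    doc["purchased_ids"].each { |product_id| incoming[product_id] << customer_id }
  end
  incoming
end

def affected_by_traversal(incoming, product_id)
  # fetch avoids creating an empty entry for products nobody bought.
  incoming.fetch(product_id, [])
end

incoming = build_incoming_purchases(customers)
puts affected_by_scan(customers, "kettle").inspect
puts affected_by_traversal(incoming, "kettle").inspect
```

Both calls return the same customers; the difference is that the scan touches every customer, while the traversal touches only the relationships attached to the recalled product.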

As the e-commerce solution grows, we want to evolve a social aspect to shopping so that customers can receive buying recommendations based on what their social group has purchased. In the non-native graph store, we now have to encode the notion of friends and even friends of friends into the store and into the logic that reifies the graph. This is where things start to get tricky since now we have a deeper traversal from a customer to customers (friends) to customers (friends of friends) and then into purchases. What initially seemed simple, is now starting to look dauntingly like a fully fledged graph store, albeit one we have to build.

Conversely in the Neo4j case, we simply use the FRIEND relationships between customers, and for recommendations we simply traverse the graph across all outgoing FRIEND relationships (limited to depth 1 for immediate friends, or depth 2 for friends-of-friends), and for outgoing PURCHASED relationships to see what they've bought. What's important here is that it's Neo4j that handles the hard work of traversing the graph, not the application code as we can see in the diagram above.
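As a rough illustration of the depth-limited traversal just described, the following Ruby sketch walks FRIEND edges breadth-first and then collects PURCHASED edges. The sample data and the helper names `friends_within` and `recommendations` are hypothetical; in Neo4j the database performs this traversal, so this is the work you would otherwise write yourself on top of a non-graph store.

```ruby
require 'set'

# Hypothetical FRIEND and PURCHASED relationships as adjacency lists.
friends = {
  "alice" => ["bob"],
  "bob"   => ["alice", "carol"],
  "carol" => ["bob", "dave"],
  "dave"  => ["carol"]
}
purchased = {
  "alice" => ["kettle"],
  "bob"   => ["headphones"],
  "carol" => ["decks", "kettle"],
  "dave"  => ["amplifier"]
}

# Breadth-first walk over outgoing FRIEND edges, stopping at max_depth
# (1 = immediate friends, 2 = friends of friends).
def friends_within(friends, start, max_depth)
  seen = Set.new([start])
  frontier = [start]
  max_depth.times do
    frontier = frontier.flat_map { |id| friends.fetch(id, []) }
                       .reject { |id| seen.include?(id) }
                       .uniq
    seen.merge(frontier)
  end
  seen.delete(start).to_a
end

# Follow PURCHASED edges from each reachable friend and drop anything
# the customer already owns.
def recommendations(friends, purchased, customer, max_depth)
  already_owned = purchased.fetch(customer, [])
  friends_within(friends, customer, max_depth)
    .flat_map { |id| purchased.fetch(id, []) }
    .uniq - already_owned
end

puts recommendations(friends, purchased, "alice", 1).inspect
puts recommendations(friends, purchased, "alice", 2).inspect
```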

But there's much more value the e-commerce site can drive from this data. Not only can social recommendations be implemented by close friends, but the e-commerce site can also start to look for trends and base recommendations on them. This is precisely the kind of thing that supermarket loyalty schemes do with big iron and long-running SQL queries - but we can do it on commodity hardware very rapidly using Neo4j.

For example, one set of customers that we might want to incentivise are those people who we think are young performers. These are customers that perhaps have told us something about their age, and we've noticed a particular buying pattern surrounding them - they buy DJ-quality headphones. Often those same customers buy DJ-quality decks too, but there's a potentially profitable set of those customers that - shockingly - don't yet own decks (much to the gratitude of their flatmates and neighbours I suspect).

With a document or KV store, looking for this pattern by trawling through all the customer documents and projecting a graph is laborious. But matching these patterns in a graph is quite straightforward and efficient – simply by specifying a prototype to match against and then by efficiently traversing the graph structure looking for matches.
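The "prototype" idea can be sketched as a pair of lists: relationships that must be present and relationships that must be absent. The Ruby below is an assumption-laden illustration (the product names and the `match_pattern` helper are invented for this example), and it scans an in-memory hash purely to show what the pattern expresses; a graph database would start from the headphones node and traverse instead of visiting every customer.

```ruby
# Hypothetical PURCHASED relationships, keyed by customer.
purchased = {
  "alice" => ["dj_headphones", "dj_decks"],
  "bob"   => ["dj_headphones"],
  "carol" => ["kettle"],
  "dave"  => ["dj_headphones", "kettle"]
}

# Returns the customers whose purchases include everything in must_have
# and nothing in must_lack.
def match_pattern(purchased, must_have:, must_lack:)
  purchased.select do |_customer, products|
    must_have.all? { |item| products.include?(item) } &&
      must_lack.none? { |item| products.include?(item) }
  end.keys
end

# Customers who own DJ-quality headphones but do not yet own decks.
prospects = match_pattern(purchased,
                          must_have: ["dj_headphones"],
                          must_lack: ["dj_decks"])
puts prospects.inspect
```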

This shows a wonderful emergent property of graphs - simply store all the data you like as nodes and relationships in Neo4j and later you'll be able to extract useful business information that perhaps you can't imagine today, without the performance penalties associated with joins on large datasets.

In these kinds of situations, choosing a non-graph store for storing graphs is a gamble. You may find that you've designed your graph topology far too early in the system lifecycle and lose the ability to evolve the structure and perform business intelligence on your data. That's why Neo4j is cool - it keeps graph and application concerns separate, and allows you to defer data modelling decisions to more responsible points throughout the lifetime of your application.

So if you're fighting with graph data imprisoned in Key-Value, Document or relational datastores, then try Neo4j.

Be sure to read the comments. Thanks to Alex Popescu for the heads up for this tutorial.

Jim Webber of Neo Technology is a leader of the Neo4j team.

Microsoft Research’s Dryad is a graph database whose Academic Release is available for public download.

Richard Seroter (@rseroter) described 6 Quick Steps for Windows/.NET Folks to Try Out Cloud Foundry in a 5/11/2011 post:

I’m on the Cloud Foundry bandwagon a bit and thought that I’d demonstrate the very easy steps for you all to try out this new platform-as-a-service (PaaS) from VMware that targets multiple programming languages and can (eventually) be used both on-premise and in the cloud.

To be sure, I’m not “off” Windows Azure, but the message of Cloud Foundry really resonates with me. I recently interviewed their CTO for my latest column on InfoQ.com and I’ve had a chance lately to pick the brains of some of their smartest people. So, I figured it was worth taking their technology for a whirl. You can too by following these straightforward steps. I’ve thrown in 5 bonus steps because I’m generous like that.

- Get a Cloud Foundry account. Visit their website, click the giant “free sign up” button and click refresh on your inbox for a few hours or days.

- Get the Ruby language environment installed. Cloud Foundry currently supports a good set of initial languages including Java, Node.js and Ruby. As for data services, you can currently use MySQL, Redis and MongoDB. To install Ruby, simply go to http://rubyinstaller.org/ and use their single installer for the Windows environment. One thing that this package installs is a Command Prompt with all the environmental variables loaded (assuming you selected to add environmental variables to the PATH during installation).

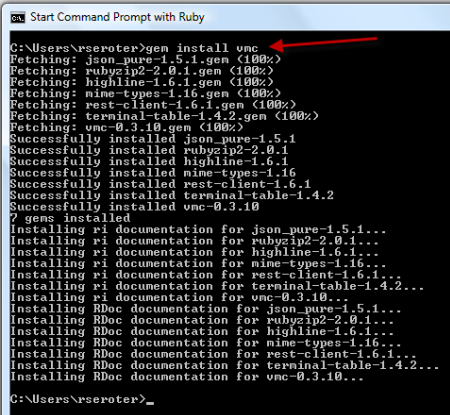

- Install vmc. You can use the vmc tool to manage your Cloud Foundry app, and it’s easy to install it from within the Ruby Command Prompt. Simply type:

    gem install vmc

You’ll see that all the necessary libraries are auto-magically fetched and installed.

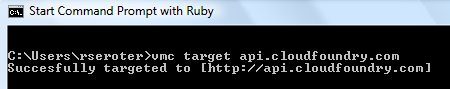

- Point to Cloud Foundry and log in. Stay in the Ruby Command Prompt and target the public Cloud Foundry cloud. You could also use this to point at other installations, but for now, let’s keep it easy.

Next, log in to your Cloud Foundry account by typing “vmc login” into the Ruby Command Prompt. When asked, type in the email address that you used to register with, and the password assigned to you.

- Create a simple Ruby application. Almost there. Create a directory on your machine to hold your Ruby application files. I put mine at C:\Ruby192\Richard\Howdy. Next we create a *.rb file that will print out a simple greeting. It brings in the Sinatra library, defines a “get” operation on the root, and has a block that prints out a single statement.

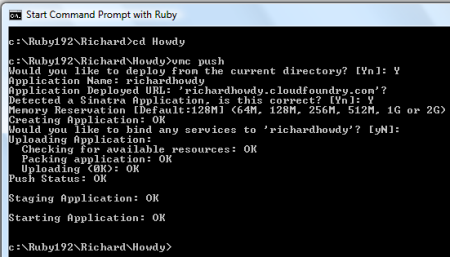

    require 'sinatra'   # includes the library

    get '/' do          # method call, on get of the root, do the following
      "Howdy, Richard. You are now in Cloud Foundry! "
    end

- Push the application to Cloud Foundry. We’re ready to publish. Make sure that your Ruby Command Prompt is sitting at the directory holding your application file. Type in “vmc push” and you’ll get prompted with a series of questions. Deploy from current directory? Yes. Name? I gave my application the unique name “RichardHowdy”. Proposed URL ok? Sure. Is this a Sinatra app? Why yes, you smart bugger. What memory reservation needed? 128MB is fine, thank you. Any extra services (databases)? Nope. With that, and about 8 seconds of elapsed time, you are pushed, provisioned and started. Amazingly fast. Haven’t seen anything like it. My console execution looks like this:

And my application can now be viewed in the browser at http://richardhowdy.cloudfoundry.com.

Now for some bonus steps …

- Update the application. How easy is it to publish a change? Damn easy. I went to my “howdy.rb” file and added a bit more text saying that the application has been updated. Go back to the Ruby Command Prompt and type in “vmc update richardhowdy” and 5 seconds later, I can view my changes in the browser. Awesome.

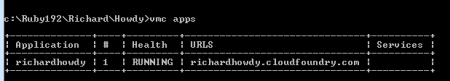

- Run diagnostics on the application. So what’s going on up in Cloud Foundry? There are a number of vmc commands we can use to interrogate our application. For one, I could do “vmc apps” and see all of my running applications.

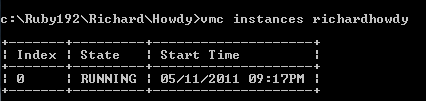

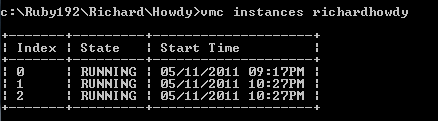

For another, I can see how many instances of my application are running by typing in “vmc instances richardhowdy”.

- Add more instances to the application. One is a lonely number. What if we want our application to run on three instances within the Cloud Foundry environment? Piece of cake. Type in “vmc instances richardhowdy 3” where 3 is the number of instances to add (or remove if you had 10 running). That operation takes 4 seconds, and if we again execute the “vmc instances richardhowdy” we see 3 instances running.

- Print environmental variable showing instance that is serving the request. To prove that we have three instances running, we can use Cloud Foundry environmental variables to display the instance of the droplet running on the node in the grid. My richardhowdy.rb file was changed to include a reference to the environmental variable named VMC_APP_ID.

    require 'sinatra'   # includes the library

    get '/' do          # method call, on get of the root, do the following
      "Howdy, Richard. You are now in Cloud Foundry! You have also been updated. App ID is #{ENV['VMC_APP_ID']}"
    end

If you visit my application at http://richardhowdy.cloudfoundry.com, you can keep refreshing and see 1 of 3 possible application IDs get returned based on which node is servicing your request.

- Add a custom environmental variable and display it. What if you want to add some static values of your own? I entered “vmc env-add richardhowdy myversion=1” to define a variable called myversion and set it equal to 1. My richardhowdy.rb file was updated by adding the statement “and seroter version is #{ENV['myversion']}” to the end of the existing statement. A simple “vmc update richardhowdy” pushed the changes across and updated my instances.
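For reference, here is a sketch of how the greeting might look once both environment variables are in play. `VMC_APP_ID` and `myversion` are the names used in the steps above; the `greeting` helper and its "unknown" fallback are assumptions added so the logic can be run locally without the Sinatra gem or a Cloud Foundry account.

```ruby
# Builds the response body from environment variables.
# The helper and the "unknown" fallback are hypothetical; only the
# variable names VMC_APP_ID and myversion come from the steps above.
def greeting(env = ENV)
  app_id  = env.fetch('VMC_APP_ID', 'unknown')
  version = env.fetch('myversion', 'unknown')
  "Howdy, Richard. You are now in Cloud Foundry! You have also been updated. " \
    "App ID is #{app_id} and seroter version is #{version}"
end

# In the Sinatra application the route would simply return the helper's value:
#
#   require 'sinatra'
#   get '/' do
#     greeting
#   end

# Local check with a plain hash standing in for the deployed environment.
puts greeting({ 'VMC_APP_ID' => 'abc123', 'myversion' => '1' })
```

Passing the environment in as an argument keeps the string-building logic testable on a developer machine, where neither variable is set.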

Very simple, clean stuff and since it’s open source, you can actually look at the code and fork it if you want. I’ve got a todo list of integrating this with other Microsoft services since I’m thinking that the future of enterprise IT will be a mashup of on-premise services and (mix of) public cloud services. The more examples we can produce of linking public/private clouds together, the better!

Charles Babcock asserted “Company executives tout the application platform provider's ability to quickly transfer customers out of the crashing Amazon cloud in April” as a deck for his Engine Yard Sees Ruby As Cloud Springboard report of 5/11/2011 from Interop Las Vegas 2011 for InformationWeek:

Providing programming platforms in the cloud, which seemed a little simple-minded when they first appeared--after all, programmers were already skilled at using the cloud, so how was the platform going to hold onto them--may not be so silly after all.

For one thing, everybody is starting to think it's a good idea. VMware is doing it with the Spring Framework and CloudFoundry.org. Red Hat is doing it with Ruby, Python, and PHP in OpenShift. Microsoft did it with Windows Azure and its .Net languages. And Heroku did it for Ruby programmers.

Another Ruby adherent in the cloud is Engine Yard, perhaps less well known than its larger San Francisco cousin, Heroku. Engine Yard is a pure platform play. It provides application building services on its site and, when an application is ready for deployment, it handles the preparation and delivery, either to Amazon Web Service's EC2 or Terremark, now part of Verizon Business.

Engine Yard hosts nothing itself. It depends on the public cloud infrastructure behind it. Nevertheless, Ruby programmers don't have to do anything to get their applications up and running in the cloud. Engine Yard handles all the details, and monitors their continued operation.

So what did the management of Engine Yard, a San Francisco-based cloud service for Ruby programmers, think last December of the acquisition of Heroku by Salesforce.com for $212 million? "We couldn't be happier," said Tom Mornini, co-founder and CTO as he sat down for an interview at Interop 2011 in Las Vegas, a UBM TechWeb event.

"Five years ago, I said, 'Ruby is it,'" he recalled. He respects Python and knows programmers at Google like that open source scripting language. But he thinks he made the right call in betting on Ruby. "Python is going nowhere. You're not seeing the big moves behind Python that you do with Ruby. You're not seeing major new things in PHP. For the kids coming out of college, Ruby is hot," he said.

Mike Piech, VP of product management, gave a supporting assessment. Salesforce's purchase was "one of several moves validating this space." Piech is four months out of Oracle, where he once headed the Java, Java middleware, and WebLogic product lines. "I love being at Engine Yard," he said.

When asked what's different about Engine Yard, he succinctly answered, "Everything." Mainly, he's enjoying the shift from big company to small, with more say over his area of responsibility.

Read more: Page 2: An Anomaly In The Programming World

<Return to section navigation list>
