Windows Azure and Cloud Computing Posts for 5/10/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Reposted 5/13/2011 11:00 AM PDT with 5/11/2011 updates after Blogger outage recovery. Read Ed Bott’s Google's Blogger outage makes the case against a cloud-only strategy post of 5/13/2011:

The same week that Google made its strongest pitch ever for putting your entire business online, one of its flagship services has failed spectacularly. …

• Updated 5/11/2011 with new articles marked • from Paul McDougall, Giga Om, Shamelle, Yves Goeleven, Richard L. Santaleza, Lori MacVittie, Steve Yi, Windows Azure Team, Michael Coté, Randy Bias and the Windows Azure AppFabric LABS Team.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control, WIF and Service Bus

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

Avkash Chauhan explained How to deploy ClickOnce Application using Windows Azure Storage in very simple steps in a 5/10/2011 post:

In this blog I will show you step by step how you can deploy a ClickOnce application designed using Microsoft Visual Studio 2010 using Windows Azure Storage.

In this case, I have a Windows Forms application named VHDMountAzureVM (http://mountvhdazurevm.codeplex.com/) which I will deploy to my Windows Azure Storage account named HappyBuddha (http://happybuddha.blob.core.windows.net/).

Step 1: Creating a public container in the Windows Azure Storage:

I have created a public container named "clickonceinstall" in the root location of my Windows Azure Storage account named "happybuddha" as below:

Based on above, my Clickonce install location will be described as below:

Step 2: Publishing the Windows Forms Application:

In the VS2010 solution, launch the "Publish" option as below:

In the Publish URL enter the Windows Azure Storage container URL (http://happybuddha.blob.core.windows.net/clickonceinstall) as below:

After you start the "Publish" option, the following files will be created as ClickOnce install package files:

Step 3: Copy all ClickOnce files to Windows Azure Storage Container:

In this step, copy the three main files (setup.exe, publish.htm and <Application_Name>.application) along with the Application Files folder directly to the Windows Azure Storage container as below:

(If you are using CloudBerry Azure Storage Explorer, you can just drag and drop all the files at once from Windows Explorer to Azure Storage)
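As a rough illustration of Step 3, the sketch below lists the blob URLs that a local publish folder would map to once copied into the container. It does not upload anything, and the function name `clickonce_blob_urls` is hypothetical. It relies only on the fact that blob names may contain "/" to mimic folders, so the Application Files folder keeps its relative path in each blob name. Whatever tool you use for the copy should produce names like these.

```python
import os
from urllib.parse import quote


def clickonce_blob_urls(publish_dir, container_url):
    """Return the blob URL each file under publish_dir would have in the container.

    Hypothetical helper for illustration only: it computes names, it does not upload.
    """
    urls = []
    for root, _dirs, files in os.walk(publish_dir):
        for filename in files:
            local_path = os.path.join(root, filename)
            # Path relative to the publish folder, e.g. "Application Files\App\App.exe.deploy"
            relative = os.path.relpath(local_path, publish_dir)
            # Blob names use "/" as the virtual folder separator on every platform
            blob_name = relative.replace(os.sep, "/")
            # Percent-encode spaces and other unsafe characters, keeping the slashes
            urls.append(container_url.rstrip("/") + "/" + quote(blob_name))
    return sorted(urls)
```

Calling it with the publish folder and http://happybuddha.blob.core.windows.net/clickonceinstall gives the list of URLs to check after the copy, including the publish.htm installer page used in Step 4.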

Step 4: Launching the installer URL:

Now we can launch the application installer URL as below:

When you click the "Install" button above, Setup.exe is downloaded to your local machine. Launch it to start the installer, as shown in the following screenshots:

After the application installation is completed the application will be launched as below:

Step 5: Launching the application at any time on the machine where it is installed:

Once the application is installed on the machine, it will be available in the Start menu as below:

You can just launch the application by clicking the application name.

Step 6: Removing/Uninstalling the application

A ClickOnce application leaves an installation entry in the Control Panel > Programs and Features list, as below:

You can uninstall the application just by double-clicking its entry above.

<Return to section navigation list>

SQL Azure Database and Reporting

• Steve Yi (pictured below) reported a new wiki article about SQL Azure Connection Security by Selcin Turkarslan on 5/11/2011:

Selcin Turkarslan has written an in-depth TechNet wiki article about connection security considerations when using SQL Azure. The article is primarily concerned with writing secure connection strings for SQL Azure Database. I’d highly recommend reading it, as it details good procedures and best practices when connecting with SQL Azure.

Click here to read "SQL Azure Connection Security"

If you have any questions about connection security within SQL Azure, please leave a comment. We’d like to be able to help you out if you have any questions.

• Steve Yi described a new 00:12:34 Video How To: Business Intelligence with Cloud Data on 5/11/2011:

This walkthrough discusses how businesses can take business intelligence to the cloud with SQL Azure. The video covers the benefits of SQL Azure—including the ability to establish business intelligence without adding hardware costs or IT overhead—and it also introduces SQL Azure Reporting.

The ability to embed SQL Azure reports into on-premises Apps and Windows Azure Web Apps—and the ability to create and export SQL Azure reports using available report authoring tools is also covered. The demonstration portion of the video shows users how to author a report with Business Intelligence Development Studio (BIDS), display reports, embed a report into a web app, and manage and deliver reports.

If you would like to view the code that was mentioned in this video, it is available on Codeplex.

David Pallmann announced on 5/10/2011 a Webcast: Windows Azure Relational Data Architecture scheduled for 5/11/2011 at 10:00 to 11:00 AM PDT:

Tomorrow (Wed 5/11/11) I'll be giving the third in Neudesic's series of public webcasts on Windows Azure architecture. This month's webcast is on Windows Azure Relational Data.

In this webcast Windows Azure MVP and author David Pallmann will discuss the architecture of SQL Azure, the area of the Windows Azure platform responsible for providing relational database capabilities. You’ll learn how SQL Azure is similar to SQL Server in some respects and different in others, and what that means for developers and DBAs. In addition to SQL Azure Database we'll also discuss the upcoming SQL Azure services currently in preview--SQL Azure Reporting, SQL Azure Data Sync, and SQL Azure OData--as well as the Windows Azure DataMarket service. The session will include a survey of relational data design patterns.

REGISTER

Windows Azure Relational Data Architecture Webcast

Wed., May 11, 2011, 10:00 AM - 11:00 AM Pacific Time

Klint Finley posted From Big Data to NoSQL: The ReadWriteWeb Guide to Data Terminology (Part 1) in a 5/10/2011 post to the ReadWriteEnterprise blog:

It's hard to keep track of all the database related terms you hear these days. What constitutes "big data"? What is NoSQL, and why are your developers so interested in it? And now "NewSQL"? Where do in-memory databases fit into all of this? In this series, we'll untangle the mess of terms and tell you what you need to know.

The first part covers data, big data, databases, relational databases and other foundational issues. In part two we'll talk about data warehouses, ACID compliance and more. In part three, we'll cover non-relational databases, NoSQL and related concepts.

Data

The best definition of data I've been able to find so far is from Diffen:

"Data are plain facts. When data are processed, organized, structured or presented in a given context so as to make them useful, they are called Information."

On the subject of whether data is singular or plural:

"It should be noted that data is plural (for datum), so the correct grammatical usage is 'Data are misleading.' However, in practice people tend to use data as a singular form, e.g. 'This data is misleading.'"

Big Data

In short, big data simply means data sets that are large enough to be difficult to work with. Exactly how big is big is a matter of debate. Data sets that are multiple petabytes in size are generally considered big data (a petabyte is 1,024 terabytes). But the debate over the term doesn't stop there.

There are other factors that can make data difficult to work with, such as the speed at which data is updated or the data's lack of structure. Clive Longbottom of Quocirca suggests the term "unbounded data" for data that is fast or unstructured:

"Indeed, in some cases, this is far more of a "little data" issue than a "big data" one. For example, some information may be so esoteric that there are only a hundred or so references that can be trawled. Once these instances have been found, analysing them and reporting on them does not require much in the way of computer power; creating the right terms of reference to find them may well be the biggest issue."

Where might you run into big data or unbounded data? Social networks, where users are adding status updates and comments at high speed. Or sensor networks, where data about the surrounding environment is being stored at a fast pace. Or genomics, where huge amounts of genetic data are being processed.

Database

A database is simply a way of storing and organizing data. According to Wikipedia Simple English: "The data can be stored in many ways. Before computers, card files, printed books and other methods were used. Now most data is kept on computer files." A non-electronic database could be a card catalog or a filing cabinet.

When the term "database" is used, it's usually to refer to a database management system (DBMS), which is a piece of software designed to create and manage electronic databases. A simple example might be an electronic address book.

Data Store

Data store is an even more general term than database. It's a place where any type of data is kept. Databases are data stores, but a text file full of data could also be a data store. A text file with a list of names and addresses is a data store, but an address book application on your computer is a DBMS.

Schema

According to Wikipedia, a database system's schema is "its structure described in a formal language supported by the database management system (DBMS) and refers to the organization of data to create a blueprint of how a database will be constructed (divided into database tables)."

Relational Database or RDBMS

Here's where things get interesting. A relational database is a specific type of database in which data is stored in "relations." Relations are usually tables, with rows representing different "things" and columns representing different attributes of those things.

For example, let's look at a hypothetical database for an oversimplified blogging system. Each post has a set of attributes, such as title, author, category and the post content itself. Every post has these attributes, even if some are left blank. Here's an example:

The blogging system database might also have a table called "categories" that looks something like this:

The database's schema includes the facts that post content is stored in the posts table, that posts use the Category-Id attribute for categorization, that the names of categories are stored in the categories table, etc.

When we want to view - or "query" - a post, the software fetches each attribute from each column for the row of the post you want to look at and assembles it into a post.

If you want to query a list of categories that have been created but not used in posts, the software would cross-reference the categories table with the posts table, combine them into a new table and return a list of categories without posts. This cross-referencing and combining process is called "joining."

Here's a more traditional example:

Imagine this system extended out over a number of years. You could use queries to determine which of your customers hadn't placed an order in the past year and either call them or close their account.
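The customer and order tables of this example are not reproduced here, so the following is only a sketch under assumed names: the tables `customers` and `orders`, their columns, and the sample rows are all hypothetical. It uses Python's built-in sqlite3 module to show how a query can find customers with no order on or after a cutoff date.

```python
import sqlite3


def build_sample_db():
    """Create an in-memory database with hypothetical customers and orders."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE orders (
            order_id INTEGER PRIMARY KEY,
            customer_id INTEGER REFERENCES customers(customer_id),
            order_date TEXT  -- ISO 8601 dates sort correctly as plain text
        );
        INSERT INTO customers VALUES (1, 'Alice'), (2, 'Bob'), (3, 'Carol');
        INSERT INTO orders VALUES (100, 1, '2011-03-01'), (101, 2, '2009-07-15');
        """
    )
    return conn


def inactive_customers(conn, cutoff_date):
    """Return names of customers with no order on or after cutoff_date."""
    rows = conn.execute(
        """
        SELECT c.name
        FROM customers AS c
        LEFT JOIN orders AS o
               ON o.customer_id = c.customer_id
              AND o.order_date >= ?
        WHERE o.order_id IS NULL   -- no recent order matched this customer
        ORDER BY c.name
        """,
        (cutoff_date,),
    ).fetchall()
    return [name for (name,) in rows]
```

With the sample rows above, `inactive_customers(build_sample_db(), "2010-05-10")` returns `['Bob', 'Carol']`: Bob's only order is older than the cutoff and Carol has never ordered.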

This may seem straightforward, but the underlying mathematics is complex. It's based on the relational model, which was created by E.F. Codd in 1969.

RDBMS stands for relational database management system, which is the type of software used to create and manage relational databases. Examples include: Oracle RDBMS, IBM DB2, Microsoft SQL Server, Microsoft Access, MySQL, PostgreSQL and FileMaker.

SQL

SQL stands for Structured Query Language. It specifies the commands the blog software must give the database server in order to display a particular blog post or list of tags. It makes it easy for someone with experience in one RDBMS to use another RDBMS with minimal re-training.

Here's the query, written in SQL, that would join the categories table with the posts table and check for unused categories in the blog database example from above:

SELECT categories."Category-name", posts."Post-Id" FROM categories LEFT JOIN posts ON categories."Category-Id" = posts."Category-Id" WHERE posts."Post-Id" IS NULL

Special thanks to Tyler Gillies for his help with this series.
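To see the unused-categories join run end to end, here is a minimal sketch using Python's built-in sqlite3 module. The table and column names follow the blog example, but the sample rows and the helper names are made up for illustration. The hyphenated column names are double-quoted because an unquoted hyphen would be parsed as subtraction.

```python
import sqlite3


def build_blog_db():
    """Create an in-memory blog database with made-up sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE categories ("Category-Id" INTEGER PRIMARY KEY, "Category-name" TEXT);
        CREATE TABLE posts ("Post-Id" INTEGER PRIMARY KEY, "Title" TEXT, "Category-Id" INTEGER);
        INSERT INTO categories VALUES (1, 'Cloud'), (2, 'Databases'), (3, 'Security');
        INSERT INTO posts VALUES (10, 'Hello Azure', 1), (11, 'What is NoSQL?', 2);
        """
    )
    return conn


def unused_categories(conn):
    """Return names of categories that no post refers to."""
    rows = conn.execute(
        """
        SELECT categories."Category-name"
        FROM categories
        LEFT JOIN posts ON categories."Category-Id" = posts."Category-Id"
        WHERE posts."Post-Id" IS NULL   -- the LEFT JOIN found no matching post
        ORDER BY categories."Category-name"
        """
    ).fetchall()
    return [name for (name,) in rows]
```

With the sample rows, `unused_categories(build_blog_db())` returns `['Security']`, the one category that has no post.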

Herman Mehling asserted “Research firm The 451 Group coins "NewSQL" to categorize a new breed of database designed for distributed environments -- like the cloud” as a deck for his NewSQL: The Relational Model Meets Distributed Architectures article of 5/10/2011 for DevX:

First came SQL, then NoSQL, and now there's another addition to the SQL nomenclature: NewSQL.

The latest addition comes from the research firm The 451 Group, which published a report last month entitled How will the database incumbents respond to NoSQL and NewSQL?

"NewSQL is our shorthand for the various new scalable/high performance SQL database vendors," explained 451 Analyst Matthew Aslett. "We previously referred to these products as 'ScalableSQL' to differentiate them from the incumbent relational database products. Since this implies horizontal scalability, which is not necessarily a feature of all the products, we adopted the term 'NewSQL' in the new report."

Aslett identified three major product areas in the space:

- NoSQL databases -- designed to meet the scalability requirements of distributed architectures, and/or schema-less data management requirements

- NewSQL databases -- designed to meet the requirements of distributed architectures or to improve performance such that horizontal scalability is no longer needed

- Data grid/cache products -- designed to store data in memory to increase application and database performance

Aslett emphasized that the term NewSQL, like NoSQL, should not be taken too literally.

"The new thing about NewSQL is the vendor, not the SQL," he said. "Like NoSQL, NewSQL is used to describe a loosely-affiliated group of companies."

What the NewSQL companies have in common is their development of new relational database products and services designed to bring the benefits of the relational model to distributed architectures, he said.

So which companies does The 451 Group consider to be NewSQL vendors?

In the first group the firm includes: Akiban, Clustrix, CodeFutures, Drizzle, GeniDB, MySQL Cluster with NDB, MySQL with HandlerSocket, NimbusDB, RethinkDB, ScalArc, Schooner, Translattice and VoltDB.

The second group, which 451 classifies as NewSQL-as-a-service, includes Amazon Relational Database Service, Database.com, FathomDB, Microsoft SQL Azure, and Xeround.

Clearly there is the potential for NewSQL to overlap with NoSQL. For example, said Aslett, it remains to be seen whether RethinkDB will be delivered as a NoSQL key value store for memcached or a NewSQL storage engine for MySQL.

"NewSQL is not about attempting to re-define the database market using our own term, but it is useful to broadly categorize the various emerging database products at this particular point in time," said Aslett.

NewSQL as Cloud Database

In addition to the differentiators Aslett outlines between the various NewSQL solutions, Xeround's CEO Razi Sharir adds some more.

"Aslett did not use the term 'cloud database,'" said Sharir. "I would maintain that a cloud-database is one that's built from the ground-up optimally for the cloud environment, providing 'natural' and unlimited elasticity by using only cloud resources."

Tools, applications and solutions used in a traditional on-premise and/or hosted environment simply don't cut it anymore on the uniquely distributed environment of the cloud, said Sharir.

"To take advantage of the benefits of the cloud, a cloud-enabled solution needs to be designed, built and deployed in a cloud fashion, so that the core technology relies on virtualized resources -- with the cloud as an abstract management layer on top," he said.

Simply running a traditional hosted database in a VM is not sufficient for providing a database service that's optimal for the cloud, he added.

Sharir claimed Xeround is the only NewSQL solution to offer a native cloud Database-as-a-Service, and that the solution combines the best of both worlds: NoSQL and SQL.

"Underneath the hood, we're a fully virtualized cloud NoSQL database (DHT + distributed b-tree indexes and object store), and on the forefront, we have a customized parser that enables us to offer various database flavors, which currently expose MySQL via the storage engine API," he said.

What lies ahead for SQL, NoSQL and NewSQL?

"The lines are blurring to the point where we expect the terms NoSQL and NewSQL will become irrelevant as the focus turns to specific use cases," said Aslett.

<Return to section navigation list>

MarketPlace DataMarket and OData

The Windows Azure Team announced MSDN Webcast May 24, 2011: Leveraging DataMarket to Create Cloud-Powered Digital Dashboards in a 5/10/2011 post:

If you want to learn more about the DataMarket section of the Windows Azure Marketplace, don’t miss the upcoming free MSDN webcast, “Leveraging DataMarket to create Cloud-Powered Digital Dashboards”, on Tuesday, May 24, 2011 at 8:00 am PST. During this one-hour session, ComponentArt Director of Development Milos Glisic, and Microsoft Program Manager Christian Liensberger will demonstrate how to create interactive, web-based digital dashboards in Microsoft Silverlight 4 and mobile dashboards on Windows Phone 7 using ComponentArt’s Data Visualization technology and Windows Azure Marketplace DataMarket.

Windows Azure Marketplace DataMarket is an information marketplace where users can easily discover, explore, subscribe, and consume trusted premium data and public domain data. Content partners who collect data can publish it on DataMarket to increase its discoverability and achieve global reach with high availability. Data from databases, image files, reports and real-time feeds is provided in a consistent manner through internet standards. And users can easily discover, explore, subscribe and consume data from both trusted public domains and from premium commercial providers.

Click here to learn more about this session and to register.

<Return to section navigation list>

Windows Azure AppFabric: Access Control, WIF and Service Bus

• The Windows Azure AppFabric LABS Team warned on 5/11/2011 about an Update to Windows Azure AppFabric LABS Scheduled Maintenance and Breaking Changes Notification (5/12/2011):

[Update]: In the planned release scheduled for 5/12/2011, we are making sure that customers will have parity between the Access Control and Caching services (currently in production) and the LABS preview environments. As a result there will be some impacts as described below in the updated sections.

Due to upgrades and enhancements we are making to Windows Azure AppFabric LABS environment, scheduled for 5/12/2011, users will have NO access to the AppFabric LABS portal and services during the scheduled maintenance downtime.

When:

- START: May 12, 2011, 10am PST

- [Update] END: May 12, 2011, 10pm PST

- Service Bus will be impacted until May 16, 2011, 10am PST

Impact Alert:

- AppFabric LABS Service Bus, Access Control and Caching services, and the portal, will be unavailable during this period.

- Additional impacts are described below.

Action Required:

Access to portal, Access Control and Caching will be available after the maintenance.

- Existing Access Control namespaces will be preserved.

- [Update] Existing Access Control configuration under the namespaces will not be available following the maintenance, to upgrade to parity to the production environment. Users should back up Access Control configuration in order to be able to restore following the maintenance, or transition the application over to the production environment (Access Control is not being charged for billing periods before January 1, 2012).

- Existing Caching namespaces and data will not be available following the maintenance, to upgrade to parity to the production environment. Users should back up Caching configuration in order to be able to restore following the maintenance, or transition the application over to the production environment (Caching is not being charged for billing periods before August 1, 2011).

- Service Bus will not be available until May 16, 2011, 10am PST.

- Furthermore, Service Bus namespaces will be preserved following the maintenance, BUT existing connection points and durable queues will not be preserved.

Users should back up Service Bus configuration in order to be able to restore following the maintenance.

Thanks for working in LABS and providing us valuable feedback.

For any questions or to provide feedback please use our Windows Azure AppFabric CTP Forum.

Once the maintenance is over we will post more details on the blog.

Maarten Balliauw posted A Glimpse at Windows Identity Foundation claims on 5/10/2011:

For a current project, I’m using Glimpse to inspect what’s going on behind the ASP.NET covers. I really hope that you have heard about the greatest ASP.NET module of 2011: Glimpse. If not, shame on you! Install-Package Glimpse immediately! And if you don’t know what I mean by that, NuGet it now! (the greatest .NET addition since sliced bread).

This project is also using Windows Identity Foundation. It’s really a PITA to get a look at the claims being passed around. Usually, I do this by putting a breakpoint somewhere and inspecting the current IPrincipal’s internals. But with Glimpse, using a small plugin to just show me the claims and their values is a no-brainer. Check the bottom right of this (partial) screenshot:

Want to have this too? Simply copy the following class in your project and you’re done:

    [GlimpsePlugin()]
    public class GlimpseClaimsInspectorPlugin : IGlimpsePlugin
    {
        public object GetData(HttpApplication application)
        {
            // Return the data you want to display on your tab
            var data = new List<object[]> { new[] { "Identity", "Claim", "Value", "OriginalIssuer", "Issuer" } };

            // Add all claims found
            var claimsPrincipal = application.User as ClaimsPrincipal;
            if (claimsPrincipal != null)
            {
                foreach (var identity in claimsPrincipal.Identities)
                {
                    foreach (var claim in identity.Claims)
                    {
                        data.Add(new object[] { identity.Name, claim.ClaimType, claim.Value, claim.OriginalIssuer, claim.Issuer });
                    }
                }
            }

            return data;
        }

        public void SetupInit(HttpApplication application)
        {
        }

        public string Name
        {
            get { return "WIF Claims"; }
        }
    }

Enjoy! And if you feel like NuGet-packaging this (or including it with Glimpse), feel free.

Vittorio Bertocci (@vibronet) described Fun in Netherlands (Presenting @ DevDays, Before You Get Any Ideas) in a 5/10/2011 post:

As anticipated, a couple of weeks ago I came back to EU to present at the Belgian TechDays and at the Netherlands’ DevDays.

Because of schedule constraints, I ended up being able to travel from Antwerp to the Hague only Thursday night (a nice train ride with Isabel, during which I once again confirmed that Spaniards and Italians are not that different after all). As a result, all my 3 sessions in Netherlands had to take place on a single tour de force on Friday. Kudos to some of the guys there, who came to hear my blabbering at 9:15, came back for more at 13:15, and even braved my session in the last slot of the conference at 4:15.

Fun fact: when I arrived to check in the speaker room on Friday morning, with my breakfast uniform (frys, jeans and long sleeved t-shirt got during one speaking engagement at Disneyworld), the lady at the counter told me that they had only medium speaker polos left. Let’s just say that the Mickey tee was much preferable to that...

The DevDays guys already uploaded the videos to Channel9, hence you can see for yourself:

Important: MSDN Blogs will clip the embedded player; double-click on it while playing to set it at full screen.

Here that was early morning and I was still reasonably energetic. The deep dive session was a bit harder:

Here’s the thing. A lot of our story for identity and access control in the cloud is ACS, hence the overlap between my usual intro to identity and the cloud and the first few slides of any ACS session is pretty significant (with the sole exception of a room where the audience all played with ACS before, in which case you can skip). Here more than half of the room didn’t know anything about ACS; OTOH the other almost-half was at my earlier session. I knew I had to repeat the main intro & ACS demo for the benefit of the former, but the presence of the latter made me feel guilty that I was repeating stuff they heard just a few hours earlier; hence I really glided through that part, in hindsight perhaps a bit too fast. However I got really good questions and really good laughs, hence I’ll go ahead and assume it was not a complete disaster after all.

The last session of the day I was, quite frankly, exhausted; and so was my audience, although the smaller room made it harder to hide and drift. However a Red Bull really helped here: I never ever drink it unless I have to drive after a long flight or, you know, I have to talk about SaaS to an audience that endured 2 super-packed conference days and is braving the last slot on a less than trivial topic. I didn’t re-watch myself, but I was told that things were pretty lively nonetheless.

The DevDays experience was great. Kudos to Arie Leeuwesteijn , Matthijs Hoekstra and the rest of the crew for a job well done. I had a lot of fun, and acquired a few tens of new twitter followers (at @vibronet) in the process. In fact, just for setting expectations I am usually not a prolific twitterer but all those people may just shame me into tweeting more!

I certainly hope I’ll have the chance to come back next year. Until then, don’t be shy: send me your questions. One of your colleagues can confirm that I may not get back to you right away, but when I do you’ve got a lot of stuff to read.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

• Yves Goeleven (@yvesgoeleven) posted Building Global Web Applications With the Windows Azure Platform – Offloading static content to blob storage or the CDN on 5/10/2011:

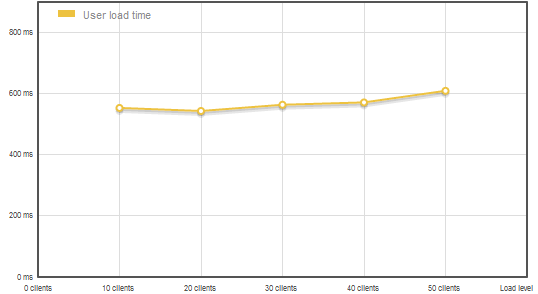

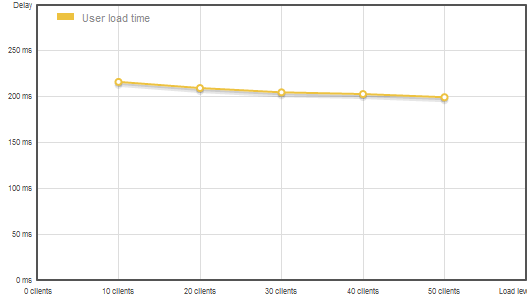

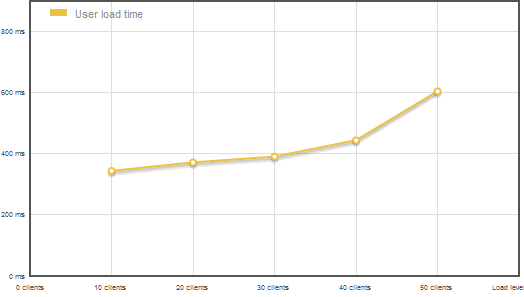

In this third post on building global web applications, I will show you what the impact of offloading images to blob storage or the CDN is in contrast to scaling out to an additional instance. Remember from the first post in this series that I had an extra small instance that started to show signs of fatigue as soon as more than 30 people came over to visit at once. Let’s see how this will improve by simply moving the static content.

In a first stage I’ve moved all images over to blob storage and ran the original test again, resulting in a nice scale up in terms of number of users the single instance can handle. Notice that the increase in users has nearly no impact on our role. I lost about 50ms in minimum response time though, in comparison to the initial test, but I would be happy to pay that price in order to handle more users. If you need faster response times than the ones delivered by blob storage, you really should consider enabling the CDN.

And I’ll prove it with this second test: I enabled the CDN for my storage account. A CDN (or Content Delivery Network) brings files to a datacenter closer to the surfer, resulting in a much better overall experience when visiting your site. As you can see in the following test result, the page response times decrease dramatically, down to 30 percent:

But I can hear you think: what if I had scaled out instead? If you compare the above results to the test results of simply scaling out to 2 extra small instances, you can see that 2 instances only moved the tipping point from 30 users to 50 users, less than doubling the number of users we can handle, while offloading the images gives us a far more serious increase for a much lower cost ($0.01 per 10,000 requests).

Note that the most probable next bottleneck will become memory, as most of the 768 MB is being used by the operating system already. To be honest I do not consider extra small instances good candidates for deploying web roles on, as they are pretty limited in 2 important aspects for serving content: bandwidth and memory. I do consider them ideal for hosting worker roles though, as they have quite a lot of CPU relative to the other resources and their price.

For web roles, intended to serve rather static content, I default to small instances as they have about 1GB of usable memory and 20 times the bandwidth of an extra small role for only little more than twice the price. Still the bandwidth is not excessive, so you still want to offload your images to blob storage and the CDN.

Please remember, managing the capacity of your roles is the secret to benefitting from the cloud. Ideally you manage to use each resource for 80% without ever hitting the limit… Another smart thing to do, is to host background work loads on the same machine as the web role to use the cpu cycles that are often not required when serving relatively static content.

Next time, we’ll have a look at how to intelligently monitor your instances which is a prerequisite to being able to manage the capacity of your roles…

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

• The Windows Azure Team recommended that you Don’t Miss Real-Time Global Fan Chat Next Friday, May 20, 2011 with KISS Rock Legends Gene Simmons and Paul Stanley - Hosted by Ortsbo and Powered by Windows Azure on 5/11/2011:

Ortsbo.com, the real-time social media language translation platform, just announced that it will host “Ortsbo.com Presents: KISS Live & Global”, next Friday, May 20, 2011 at 7:30 am PDT with international rock legends and KISS co-founders Gene Simmons and Paul Stanley. Billed as the world’s first real-time, interactive global fan chat in 53 languages, the event will give fans the opportunity to submit questions and interact with Simmons and Stanley in their native language. The conversation will be instantly translated by the Ortsbo service. This event will serve as a prototype for future global commercial events with Live Nation, their artists and Ortsbo.com.

Built on the Windows Azure platform, Ortsbo.com eliminates the need to cut/copy and paste text into a translator by automatically translating typed text into the specified language instantly. Users simply indicate the desired language for their intended recipient and Ortsbo does the rest. Ortsbo even lets users conduct multiple chat sessions simultaneously across multiple social networks in multiple languages, all in real time.

Ortsbo.com enables real-time conversational translation in over 50 languages to well over 1 billion active chat accounts in more than 170 countries and/or territories and seamlessly integrates with most popular social media platforms.

Click here to pre-register for the KISS Live & Global event and to learn more about Ortsbo.

Kevin Casey asserted “Targeting SMBs and branch offices, CA will launch a version of its ARCserve platform on the Windows Azure cloud later this year in partnership with Microsoft” in a 5/10/2011 InformationWeek article:

CA Technologies announced on Tuesday that it will launch an online version of its ARCserve backup and recovery platform later this year, to be delivered in partnership with Microsoft via the Windows Azure cloud. The subscription-based software is intended to attract smaller business and branch office customers.

"This is very much targeted at the SMB market," Brian Wistisen, director of product management at CA, said in an interview. "It really allows a much more attractive model for them versus some of the more conventional kinds of backup solutions that they might be presented with."

Pricing has not been set, nor has a launch date. CA simply said the cloud subscription service will be available at some point in the second half of this year. CA isn't putting its definition of small business in permanent ink. Wistisen said that CA typically considers somewhere between 20 and 200 employees as the range, but notes that the software is built to scale into the midmarket. In terms of data volume, the cloud version of ARCserve could suit firms with just a few gigabytes up to several terabytes, according to Wistisen.

A better benchmark than the number of employees might be the size of the IT department. "It's meant for organizations that really don't have a lot of the resources or infrastructure in place to be able to manage and service more of a traditional backup solution," Wistisen said.

Given growing virtualization adoption among SMBs, there's one noteworthy catch: Because it will be delivered through Windows Azure, the cloud version of ARCserve will only support Microsoft's Hyper-V for backup of virtualized environments. That could change in the future, but at launch Citrix and VMware users will have to look elsewhere. "We're specifically focusing on the Windows Azure relationship right now, although there's a lot of potential we have and that we're looking at with regards to other platforms and other kinds of potential offerings," Wistisen said.

Wistisen describes the Azure-based version of ARCserve as a hybrid backup and recovery tool, noting that it will support on-site storage as well. That's important both for data redundancy and for minimizing latency in recovery situations. "It really allows the best of both worlds from the standpoint of cloud-based data protection, but we're going to be allowing these customers to store that data locally as well for disaster recovery purposes," Wistisen said.

The hybrid model for backup and recovery appears to be gaining steam, at least among vendors catering to small and midsize firms. Symantec recently announced it will add both cloud and appliance versions of its client-side Backup Exec software. Earlier in the year, Cisco entered a partnership with Mozy to offer optional online backup on its network storage devices for SMBs.

"Customers are realizing it's very, very critical not to place all of your eggs in one basket in terms of storing your data," Wistisen said. "We're seeing a lot of demand for hybrid as a go-forward model, especially in SMB arenas where they may not have the resources to develop their own private cloud or an additional off-site repository."

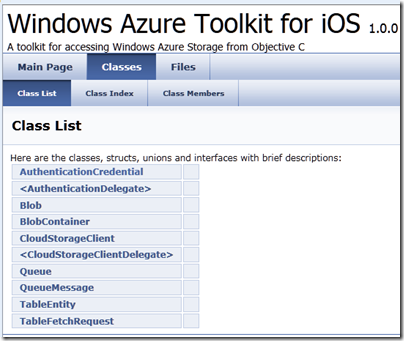

Bruce Kyle recommended that you Connect iPhone Apps to Windows Azure with New Toolkit in a 5/10/2011 post to the US ISV Evangelism blog:

A new toolkit helps you connect your iPhone apps to the cloud. Check out Windows Azure Toolkit for iOS. This toolkit contains resources and services designed to make it easier for iOS developers to use Windows Azure.

This iOS toolkit includes the following pieces:

- A compiled Objective-C library for working with services running in Windows Azure (e.g. push notification, authN/authZ, and storage)

- Full source code for the Objective-C library (along with Xcode project file)

- Sample iOS application that demonstrates how to use Windows Azure Storage with full source code

- Documentation

Take a look at Windows Azure Technical Evangelist Wade Wegner’s post for a detailed technical review of the iOS toolkit.

For more information and how to get the toolkit, see NOW AVAILABLE: Windows Azure Toolkit for iOS. Toolkit includes source code.

Also, the Windows Azure Toolkit for Android should be ready in June.

Tim Anderson (@timanderson, pictured below) asserted Microsoft’s Azure toolkit for Apple iOS and Android is a start, but nothing like enough in a 5/10/2011 post:

Microsoft’s Jamin Spitzer has announced toolkits for Apple iOS, Google Android and Windows Phone, to support its Azure cloud computing platform.

I downloaded the toolkit for iOS and took a look. It is a start, but it is really only a toolkit for Azure storage, excluding SQL Azure.

What would I hope for from an iOS toolkit for Azure? Access to SQL Server in Azure would be useful, as would a client for WCF (Windows Communication Foundation). In fact, I would suggest that the WCF RIA Services which Microsoft has built for Silverlight and other .NET clients has a more useful scope than the Azure toolkit; I realise it is not exactly comparing like with like, but most applications built on Azure will be .NET applications and iOS lacks the handy .NET libraries.

A few other observations. The rich documentation for WCF RIA Services is quite a contrast to the Doxyfile docs for the iOS toolkit and its few samples, though Wade Wegner has a walkthrough. One comment asks reasonably enough why the toolkit does not use a two or three letter prefix for its classes, as Apple recommends for third-party developers, in order to avoid naming conflicts caused by Objective-C’s lack of namespace support.

The development tool for Azure is Visual Studio, which does not run on a Mac. Microsoft offers a workaround: a Cloud Ready Package which is a pre-baked Azure application; you just have to amend the configuration in a text editor to point to your own storage account, so developers without Visual Studio can get started. That is all very well; but I cannot imagine that many developers will deploy Azure services on this basis.

I never know quite what to make of these little open source projects that Microsoft comes up with from time to time. It looks like a great start, but what is its long-term future? Will it be frozen if its advocate within Microsoft happens to move on?

In other words, this looks like a project, not a strategy.

The Windows Azure Tools for Eclipse, developed by Soyatec and funded by Microsoft, is another example. I love the FAQ:

This sort of presentation says to developers: Microsoft is not serious about this, avoid.

That is a shame, because a strategy for making Azure useful across a broad range of Windows and non-Windows clients and devices is exactly what Microsoft should be working on, in order to compete effectively with other cloud platforms out there. A strategy means proper resources, a roadmap, and integration into the official Microsoft site rather than quasi-independent sites strewn over the web.


The Windows Azure Team posted Real World Windows Azure: Interview with Itzik Spitzen, VP of Research & Development at Gizmox on 5/10/2011:

MSDN: Can you tell us about Gizmox and the Instant CloudMove solution?

Spitzen: Gizmox was founded in 2007 with a vision to bridge the gap between the security, performance and ease of development traditionally provided by client/server desktop applications and the scale, economics and stability provided by the Cloud.

Based on our award-winning Visual WebGui technology we created migration solutions from client/server desktop to Web and the cloud. The Instant CloudMove solution enables the auto-transposition of application code that runs locally as a client/server application into an application that runs natively on Windows Azure as a rich Web application.

MSDN: What is unique about Instant CloudMove?

Spitzen: Visual WebGui CloudMove provides one of the shortest paths for moving client/server applications to Windows Azure. Our tools allow 85-95% of the migration process to be done automatically, which saves organizations from throwing away years of investments in code when looking to move to the cloud.

Once the code is transformed into Visual WebGui, applications can be accessed from mobile devices, tablets and PCs from the same application code, without the need for specific client installations.

MSDN: What are the key benefits of the solution for Windows Azure?

Spitzen: Visual WebGui's unique architecture and protocols are optimized for Windows Azure; our tests indicate savings of about 90% in bandwidth consumption and 50% on Windows Azure compute instances for many scenarios. We provide tools to assess the expected resource consumption of an existing application once it is migrated to Windows Azure to help predict the costs of running in the cloud before making the move.

MSDN: Can all client/server applications be transformed to Windows Azure using this solution?

Spitzen: Basically yes. We have tools for migrating from .NET and VB6 and we are working on automated solutions for migrating from technologies such as Oracle Forms and COBOL. Our solution also includes a free assessment tool, which provides a quick cloud-readiness test. The tool generates a detailed report that indicates the level of complexity and, as a result, the effort it would take to migrate a certain client/server application to the Cloud.

We are currently giving a 25% discount on transposition licensing and services to Windows Azure customers. Learn more about Windows Azure offers here. Learn more about the discounted services we are offering here.

VIDEO: CloudMove Transposition Process in Action

Click here to learn more about Gizmox. Click here to learn more about partner opportunities with the Windows Azure platform.

Click here to read how other companies are using the Windows Azure platform.

<Return to section navigation list>

Visual Studio LightSwitch

Beth Massi (@bethmassi) posted a Trip Report: DevDays/TechDays 2011, Netherlands on 5/10/2011:

Recently I spoke at DevDays 2011 in The Hague, Netherlands, and what a show! It’s my second time speaking here at the World Forum – a great venue that holds a few thousand people. This is a professional developer and IT Pro conference that they put on every spring. I did 3 sessions and 1 “geek night” talk all on Visual Studio LightSwitch so I came up with some new content and demos that I think people enjoyed.

Introducing Visual Studio LightSwitch

This session was in a huge theater that had maybe 400-500 people. It was awesome to see such an interest in this new member of the Visual Studio family. In this session we build my version of the Vision Clinic application from scratch, end-to-end, including security and deployment. We do write some code but only some simple business rules and calculated fields, and in the end we have a full-blown business application. The goal is to show what LightSwitch can do for you out of the box without having to know any details of the underlying .NET technologies upon which it is built. I did the entire demo with just Visual Studio LightSwitch installed (not VS Pro or Ultimate) so that I could show how simplified the menus, toolbars, and tool windows are inside the development environment. The recording is available on Channel 9. I suggest downloading the High Quality WMV:

Video Presentation: Introduction to Visual Studio LightSwitch

When I asked who had downloaded the Beta already, only about 10% raised their hand so I made it my job to convince everyone to go download it afterwards ;-). I also asked how many people were not professional developers (didn’t get paid to write code) and a few people raised their hand (I expected only a few since this was a pro developer conference). Those few were IT pros and business people that came to listen in and one business person followed me to my next talks because of how excited he was about the prospect of using LightSwitch for his cloud consulting business.

What I showed in the session is pretty much exactly what I included in the LightSwitch Training Kit. If you look under the “LightSwitch Overview” on the right-hand sidebar on the opening page of the kit you will see the complete demo code and demo script that you can use for training folks at your local user groups. :-) Here are some more resources to check out that will help introduce you to Visual Studio LightSwitch:

- LightSwitch Developer Center & Learning Center

- LightSwitch Training Kit

- LightSwitch How Do I Videos

- LightSwitch Samples

- LightSwitch Team Blog

- LightSwitch Forums

Visual Studio LightSwitch – Beyond the Basics

This session drew about 100 or so attendees, and everyone but a couple people had either been to the intro session or already knew what LightSwitch was. In this talk I show what you can do with LightSwitch beyond just the screen templates and entity designer.

Video Presentation: Visual Studio LightSwitch – Beyond the Basics

I started off by quickly walking through the Course Manager sample which shows off features of Beta 2. This application does not have any custom controls or extensions and really shows what kind of powerful applications you can build right out of the box with just Visual Studio LightSwitch installed. Check out the series Andy has started on how he built the Course Manager.

We then dove into the LightSwitch API and I explained the save pipeline and the DataWorkSpace as well as talked a little bit about the underlying n-tier architecture upon which LightSwitch applications are built. I also showed how to build custom controls and data sources as well as use extensions. In this session I had LightSwitch installed into Visual Studio Professional so that I could show building custom controls. You create custom controls like you would any other Silverlight control in a Silverlight class library which can be referenced and used on screens. If you want to go a step further you can create a LightSwitch extension which (depending on the type of extension) integrates into the LightSwitch development environment and shows up like other built-in items.

To demonstrate custom controls, I built a simple Silverlight class library with a custom list box of my own and then showed how you can set up the data binding to the view model and reference the control on your LightSwitch screen. I also built a custom RIA service and showed how LightSwitch screens interacted with custom data sources. When I got to extensions, I used the Bing map control extension (which is included in the Training Kit) and loaded it into LightSwitch. Just like any other Visual Studio extension, LightSwitch extensions are also VSIX packages you just click on to install. I then added the map to a Patient details screen to display the address of the patient.

LightSwitch Advanced Development and Customization Techniques

In this session I showed some more advanced development and different levels of customization that you can do to your LightSwitch applications. There were about 50 people in the session and I recognized some of them as MVPs and other speakers. This isn’t surprising since the product is still in Beta, and I was happy to see 50 people there ready to dive deeper. :-)

Video Presentation: LightSwitch Advanced Development and Customization Techniques

I started off by showing an application called “Contoso Construction” that I built using Visual Studio LightSwitch edition (no VS Pro installed) that has some pretty advanced features like:

- “Home screen” with static images and text similar to the Course Manager sample

- Personalization with My Appointments displayed on log in

- “Show Map..” links under the addresses in data grids

- Picture editors

- Reporting via COM interop to Word

- Import data from Excel using the Excel Importer Extension

- Composite LINQ queries to retrieve/aggregate data

- Custom report filter using the Advanced Filter Control

- Emailing appointments via SMTP using iCal format in response to events on the save pipeline

(I PROMISE I will get this sample uploaded but we want to use it at TechEd next week so I was asked to wait a week.)

During the session we built some of the parts of the application that any LightSwitch developer has access to. You don’t necessarily need VS Pro to write advanced LightSwitch code, you just need it to build extensions (which we did in the end). I showed how to access the code behind queries so you can write more advanced LINQ statements (and I messed up one of them too – my only demo hiccup of the conference so not so bad!) I showed some advanced layout techniques for screens and how to place static images and text on screens. I also showed how to flip to File View and access client and server projects in order to add your own classes. We injected some business rules into the save pipeline in order to email new, updated and canceled appointments and I walked through how to use content controls in Word to create report templates that display one-to-many sets of data.

I then went through the 6 LightSwitch extensibility points: shells, themes, screen templates, business types, custom controls and custom data sources. I showed how to install and enable them and then we built a theme. I showed off the LightSwitch Extension Development Kit which is currently in development here. This will help LightSwitch extension developers build extensions quickly and easily and I used it to build a theme for the Contoso Construction application. Just like I mentioned before, LightSwitch extensions are similar to other Visual Studio extensions; they are also VSIX packages you just click on to install and manage via the Extension Manager in Visual Studio (also included in LightSwitch). For a couple of good examples of extensions that include all source code, see:

You can get a good understanding of more advanced LightSwitch features by working through the LightSwitch Training Kit. If you look under the “LightSwitch Advanced features” section on the right-hand sidebar on the opening page of the kit you will see the demos and labs.

Here are some more advanced resources of Visual Studio LightSwitch to explore:

- LightSwitch Beta 2 Extensibility “Cookbook”

- The Anatomy of a LightSwitch Application

- Walkthrough of a Real-World LightSwitch Application used at Microsoft

- Channel 9 Interview: Inside LightSwitch

Fun Stuff

One of our ASP.NET MVPs and LightSwitch community champions, Stefan Kamphuis held down the fort at the Visual Studio LightSwitch booth. He said it was packed the entire conference. I stopped by a couple hours and there were a lot of great discussions around how LightSwitch works, how does it scale, and deployment options. I also found it cool that everyone that came by on my watch had backgrounds in VB6, Access, Cobol and/or FoxPro and struggled with the move to .NET in the beginning for these types of business applications (myself included).

My geek night session was titled “Build a Business Application in 15 minutes” but the session was 45 minutes long and no one brought beer. So I started asking people what kind of bells and whistles we should add to the app. I screen scraped the session and speakers lists from the DevDays website and then used the Excel Import extension to upload the data into the system. The cool thing about this control is that it will also relate data entities together for you, so I had a list of speakers and their sessions. I then added a rating control from the Silverlight Toolkit and adapted it to use it in LightSwitch to give speaker ratings. I think people enjoyed it even though I didn’t have beer. ;-)

I stayed thru Saturday for Queensday and OMG what a party. I’ve never seen so many people dancing on the streets nor that much trash in my life. What an unforgettable experience. I had an absolute blast!

Beth gets a full-size mug shot to make her Oakland A’s badge readable.

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

• Shamelle posted Windows Azure Multi-tenancy Cloud Overview on 5/6/2011 to the Azure Cloud Pro blog (missed when posted):

I heard the word multi-tenancy about a year ago when it was brought up in a requirements meeting I was part of. While I can’t disclose the entire conversation, let’s just say one of the main requirements was, “This application should support multi-tenancy!”.

Since then my knowledge on multi-tenancy has grown (or so I’d like to think!) . As part of today’s blog post, I thought of writing an overview on Multi-tenancy to help anyone else, who’s hearing the word multi-tenancy for the first time!

What is Multi-tenancy?

Multi-tenancy refers to a principle in software architecture where a single instance of the software runs on a server, serving multiple client organizations (tenants)

(reference: Wikipedia)

For example (a simplified one!), let’s say there are 5 customers that need to use the same application. So instead of offering 5 copies of the OS, 5 copies of the database and 5 copies of the application, there could be 1 OS, 1 database and 1 application on the server (note: hardware resource capabilities may differ). Thus, the 5 customers would coexist within the application and work as though they had the entire application just to themselves.

Single-Tenant vs Multi-Tenant

Single-tenant has a separate, logical instance of the application for each customer.

Multi-tenant has a single logical instance of the application which is shared by many customers. (reference: Developing Applications for the Cloud on the Microsoft® Windows Azure(TM) Platform (Patterns & Practices))
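The difference can be illustrated with a small sketch of one logical application instance serving several tenants while keeping their data apart. The class, method and tenant names are hypothetical and chosen only for illustration; a real Windows Azure application would back this with storage or a database rather than an in-memory dictionary.

```python
from collections import defaultdict


class MultiTenantApp:
    """One logical application instance shared by many tenants (illustrative only)."""

    def __init__(self) -> None:
        # tenant_id -> that tenant's orders; tenants never see each other's lists.
        self._orders = defaultdict(list)

    def add_order(self, tenant_id: str, order: str) -> None:
        self._orders[tenant_id].append(order)

    def list_orders(self, tenant_id: str) -> list:
        # Return a copy so callers cannot mutate the shared state directly.
        return list(self._orders.get(tenant_id, []))


# A single instance serves every customer.
app = MultiTenantApp()
app.add_order("contoso", "order-1")
app.add_order("fabrikam", "order-2")
```

A single-tenant design would instead create one `MultiTenantApp`-like instance per customer, which is simpler to isolate but multiplies the resources to run and maintain.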

Multi-Tenancy Architecture Options in Windows Azure

Multi-Tenancy in Windows Azure is not as clear cut as it is depicted in the above figure. It can get a lot more complicated! Meaning, components in Windows Azure can be designed to be single tenant or multi-tenant.

You think that’s hard enough, think again. Even for databases there can be many options. Do you want to share the entire database and schema with all the customers, or perhaps have one database and then separate schemas? There is a good article on MSDN, titled Multi-tenant data architectures, if you would like more information.
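As a rough sketch of the first option (one shared database and schema for all customers), every row can carry a tenant identifier and every query can filter on it. The table and column names below are assumptions for illustration, and SQLite stands in for SQL Azure only so that the example is self-contained.

```python
import sqlite3

# In-memory database standing in for a shared multi-tenant database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE invoices ("
    " tenant_id TEXT NOT NULL,"   # which customer owns the row
    " number    TEXT NOT NULL,"
    " amount    REAL NOT NULL)"
)
conn.executemany(
    "INSERT INTO invoices VALUES (?, ?, ?)",
    [
        ("tenant-a", "A-001", 100.0),
        ("tenant-a", "A-002", 50.0),
        ("tenant-b", "B-001", 75.0),
    ],
)


def invoices_for(connection: sqlite3.Connection, tenant_id: str) -> list:
    """Return only the rows that belong to the given tenant."""
    cursor = connection.execute(
        "SELECT number, amount FROM invoices WHERE tenant_id = ? ORDER BY number",
        (tenant_id,),
    )
    return cursor.fetchall()
```

The separate-schema and separate-database options trade this per-query filtering for stronger isolation, at the cost of more objects to create and maintain per customer.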

All in all, the underlying infrastructure is shared, allowing massive economy of scale with optimal repartition of load.

Additionally, multi-tenancy allows for easier application maintenance since all application code is in a single place. It is much easier (and cheaper!) to maintain, update and back up the application and its data.

I will be writing more and maybe even try out some examples with Windows Azure code for multi-tenancy. Stay tuned..

Christian Weyer explained Erm, whats happening? Updating Windows Azure role configuration in a 5/20/2011 post:

Sometimes the question shows up why the change of configuration of a Windows Azure deployment does not take effect immediately (whatever that means).

If you change the configuration (via the portal or via the management API) of your Windows Azure deployment then the change will happen almost immediately.

Well, not exactly. The change will be rolled out to the instances using upgrade domains.

Source: Ryan Dunn’s TechEd 2010 presentation “Deploying, Troubleshooting, Managing and Monitoring Applications on Windows Azure”

For each upgrade domain a status change event will be raised and the instance can decide whether it will handle the change or whether it needs to be restarted.

Once the instances in that upgrade domain have reported that they are ready (either by handling the change, or by coming back online after restarting) then the fabric controller will move to the next upgrade domain.

Hope this helps.
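The walk through the upgrade domains can be modeled with a small simulation. This is only a sketch of the sequencing described above, not the fabric controller's actual implementation or any Windows Azure API: the `Instance` class, its fields and the `roll_out` function are all hypothetical.

```python
from collections import defaultdict


class Instance:
    """A role instance that either handles a config change live or restarts."""

    def __init__(self, name: str, upgrade_domain: int, can_handle_live: bool) -> None:
        self.name = name
        self.upgrade_domain = upgrade_domain
        self.can_handle_live = can_handle_live
        self.config = {}
        self.restarts = 0

    def apply(self, new_config: dict) -> bool:
        """Take the new configuration and report readiness."""
        if not self.can_handle_live:
            self.restarts += 1  # simulate going offline and coming back
        self.config = dict(new_config)
        return True  # the instance reports that it is ready


def roll_out(instances: list, new_config: dict) -> list:
    """Apply new_config one upgrade domain at a time; return the domain order."""
    domains = defaultdict(list)
    for instance in instances:
        domains[instance.upgrade_domain].append(instance)

    visited = []
    for domain in sorted(domains):
        ready = [instance.apply(new_config) for instance in domains[domain]]
        # Only move on once every instance in this domain is ready.
        if not all(ready):
            raise RuntimeError(f"upgrade domain {domain} did not become ready")
        visited.append(domain)
    return visited
```

Because only one domain is touched at a time, instances in the other domains keep serving with the old configuration until their turn comes, which is why the change is not visible everywhere at once.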

JP Morgenthal (@jpmorgenthal) claimed Dependency Creep Can Impact Your Cloud Migration Strategy in a 5/10/2011 post:

With Cloud Computing emerging on the scene as a solution to a number of computing use cases, it will drive modernization of your existing systems. Perhaps it's just a new interface for driving mobile access to corporate data or consolidating standalone servers into a Cloud for achieving greater utilization from fewer resources. In either case, the number of dependencies between system tiers is increasing, shrinking the distance between them.

While we have been trained to believe silo systems are inefficient, they are much easier to develop business continuity plans around. In recent discussions with a Director of IT from an insurance company, it was relayed to me that the processes for managing his mainframe in the face of a disaster had been well tested every year for the past ten years. While his process still takes between four and five days to complete, restoring the mainframe systems is straightforward: turn on disaster recovery site hardware, load applications, load last known data, run missed jobs, etc.

The problem for this individual is that users have progressively stopped using these applications directly in the past five years. Instead, these users are now accessing the mainframe data through a hierarchy of applications that have been built over the years. Moreover, these applications have not been developed as part of a cohesive strategy that incorporates them into the business continuity planning in face of a disaster. So, now, there's a host of applications that are all feeding each other and there's no roadmap detailing the connectivity and flows between these applications nor a plan for the order in which they need to be restored in the event of a disaster. We call this dependency creep.

As difficult as dependency creep is as described above, if each of these applications is deployed on silo hardware in a single data center, there's an opportunity to catalog these applications after the fact. When these applications move into the Cloud and may be distributed across public and private nodes and integrated with other public services, the dependency creep becomes unwieldy and unmanageable. Moreover, without appropriate levels of communications between engineering and operations, these dependencies can become recursive. These recursive dependencies work fine when all services are up and available, but can be extremely problematic to restore if a very specific ordering is not followed.
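Once the dependencies are written down, deriving a restore order and spotting recursive dependencies is mechanical. The sketch below uses a topological sort from Python's standard `graphlib` module (Python 3.9 or later); the function name and the application names in the sample catalog are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter


def restore_order(depends_on: dict) -> list:
    """Return an order in which applications can be restored.

    depends_on maps each application to the set of applications that must
    be running before it can start.
    """
    try:
        # static_order() yields dependencies before the things that need them.
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as err:
        # err.args[1] is the list of nodes forming the detected cycle.
        cycle = " -> ".join(err.args[1])
        raise ValueError(f"recursive dependency: {cycle}") from err


# Hypothetical catalog: a portal reads from a claims service, which reads
# from the mainframe.
catalog = {
    "portal": {"claims"},
    "claims": {"mainframe"},
    "mainframe": set(),
}
```

For this sample catalog the mainframe comes first and the portal last; a catalog in which two applications depend on each other raises an error instead of silently producing an order that cannot work.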

So, what can you do today to avoid these issues later? First, initiate the development of a DevOps initiative within your enterprise. DevOps fosters communications between engineering and operations so that applications are developed with an understanding of how they will be deployed and operated. When operations and engineering operate in isolation, applications work fine in a pristine test environment, but tend to fall over when deployed in production. Engineers must understand the production environment that their application will be running in and operations must understand how the application works and how it is designed. Building a DevOps group may require individuals to learn new skills to support this effort.

Secondly, develop your IT services catalog. Your service catalog will provide you with the means of identifying dependencies. Unfortunately, the organic nature of IT means that we have built systems with the spaghetti-like interconnections that we typically associate with bad software development. Untangling those dependencies is going to take a concerted effort, but is critical to not only ensuring that you can survive a disaster but that you can respond to less critical outages as they occur without the “fire-drills” that typify many operations environments.

Migrating to the Cloud offers multiple benefits and offers opportunity to solve problems that were previously cost prohibitive. However, any time you open the doors to the data, it seems that the line rapidly forms to consume that data; or, as it is commonly known, “build it and they will come!” Furthermore, once the business taps that source, ecosystems will build around it that include business processes and applications. These dependencies must be cataloged, managed and incorporated into your business continuity planning or it's very likely your business will be significantly impacted by service outages.


<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

Carl Brooks (@eekygeeky) reported Private cloud vendors send mixed message from Interop 2011 on 5/10/2011:

IT shops are on the hunt for practical uses for cloud computing, both private and public, while vendors are stuck on rehashing old debates about the meaning of and the business case for cloud computing.

A panel on private cloud at Interop this week saw representatives from Novell, IBM, NEC and Intel deliver a confusing mixed message. John Stetic, VP of product management at Novell, said private cloud was essentially a second step after virtualization and, at its core, mostly about automation and ease of provisioning for users. Rich Lechner, VP of cloud marketing for IBM, said it was great for delivering desktop information services.

"We have 100,000 in sales and marketing using business analytics in our private cloud," Lechner said. He added that IBM also had 15,000 to 20,000 developers in India and China who were using what he called a "more traditional" cloud model for virtual machines and test and development.

Cloud computing has been broadly defined by NIST since 2009 as online, self-service, pay-as-you-go access to computing power and IT services. The panel agreed that moving to private cloud meant installing tools and platforms in the data center to gain the kind of flexibility and capabilities demonstrated by public cloud providers, like Amazon, and that installing appropriate mechanisms for chargeback and accounting was vital. Some users took umbrage at this idea.

IT pros offer their two cents on cloud

"If anyone in the room is considering a cloud project and starting with charging mechanisms, don't do it," said Christian Reilly, speaking from the audience to the panel. "It'll fail and you'll go down all the [wrong] rabbit holes, so that's a piece of free advice."

Reilly is an IT architect with a large multinational and considers his firm to have built a private cloud. He said the focus on accounting and infrastructure was a snooze, and what ended up being really important was delivering entire application stacks and services instead of focusing on infrastructure automation.

Also speaking from the floor, Randy Bias, CEO of consulting firm Cloudscaling, complained that it simply wasn't realistic to expect enterprises to try and ape Google or Amazon, since the enterprise data center has a completely different set of purposes and needs than that of a technology provider. He said it wasn't enough to want technology, there had to be specific, actionable uses for cloud computing techniques.

"Why are they going to adopt cloud?" he asked.

Novell's Stetic responded that it was necessary to take a long view, adding that cloud, like virtualization, would be adopted incrementally as users discovered it could actually be operationally more efficient. He said companies like Google and Facebook provided a "leading edge."

Despite the ambush from the audience, no one disagreed that cloud is here to stay and that enterprises are turning to it in various forms. It was also clear that enterprises are most interested in a hybrid, pastiche approach to cloud computing, picking and choosing from public, hosted cloud services where appropriate and revamping for private cloud-style operations over time.

"The reality is we're going to be hybrid cloud," said Dot Davis, who runs technical support operations for a large scientific equipment maker. She said her firm's IT was already a complicated web of services, applications, operations and products, and cloud computing wasn't likely to change her life.

Davis said despite the never-ending marketing noise around cloud, it was really just about finding and implementing the appropriate solution for a problem.

"I guess we'll all find some kind of Kool-Aid to drink," she said.

Full disclosure: I’m a paid contributor to SearchCloudComputing.com.

<Return to section navigation list>

Cloud Security and Governance

• Lori MacVittie (@lmacvittie) warned A recent power outage in the middle of the night reveals automation without context can be expensive for aquariums – and data centers as an introduction to her Don’t Let Automation Water Down Your Data Center post of 5/11/2011 to F5’s Dev Central blog:

You may recall from several posts (Cloud Chemistry 101, The Zero-Product Property of IT and The Number of the Counting Shall be Three (Rules of Thumb for Application Availability)) that one of my hobbies is “reefing.”

No, it’s not that kind of reefer madness, it’s the other kind – the kind associated with aquariums and corals and all manner of strange looking ocean-living fish. I only recently re-engaged after years of avoiding the hassle (and enjoyment) and have been learning a lot, especially in terms of what’s been learned by others during my off-years.

When I set up my most recent aquarium adventure – a 150 gallon reef – I decided to use an external sump. Think of it like an external reservoir in which all sorts of interesting filtering and water quality activities can be handled without all the tubes and equipment that might otherwise clutter up the main display tank. It’s also handy for setting up things like automated top-off systems, which automatically add fresh water to the system to compensate for evaporation. Such systems can, it turns out, be problematic if they aren’t enabled with the proper context in which to automatically kick in. It’s loose coupling of a system, much in the same way application delivery can abstract policy enforcement and infrastructure services from the applications it delivers, making the system more agile and able to be adapted to problems without disrupting the main tank, er, application.

MAINTAINING EQUILIBRIUM

I talked about achieving dynamic equilibrium in a previous post so suffice to say that as water evaporates from an aquarium only water is lost. This is not so critical a point in a freshwater system, but in a salt-water system it is absolutely important to understand.

The more “salt per gallon” in a salt-water aquarium, the higher the salinity. Obviously if salt is not evaporating but water is, then salinity increases. Conversely, if too much fresh water (i.e. 0 salinity) is added, salinity decreases. You might guess that a rather narrow range of salinity is required to support a reef. Too low or too high, and things start suffering rather quickly. The balance needs to be maintained in order to maintain a healthy ecosystem.

Now, the relationships between all the moving parts in my reef setup are very much like the complex relationships between components and resources in a data center. Water (requests and responses) flows out of the display tank and into the sump (application delivery controller), is filtered by a protein skimmer (web application firewall), and is then returned via a return line to the main tank. As water evaporates it reaches a minimum level on an automated sensor (application health monitors) that triggers a response that forces additional fresh water (compute resources) into the sump, which re-establishes equilibrium and maintains salinity by sustaining a specific water-salt ratio in the ecosystem. Flow rates are equalized between input and output, and when the power is on everything runs smooth as pie.

But when power went out not once, but three times last week, the automation that saves me so much time under normal operations bit me in the proverbial derriere.

AN EXCESS of RESOURCES

So what happens when the power goes out? Well, if not for the protein skimmer (C in the lovely diagram to the left), nothing. The pumps stop pumping and water in the display tank (A) continues to drain from the overflow until it hits the siphon break and then stops.

That’s about 13 gallons of water. When added to the sump’s level of 10 gallons, that’s about 23 gallons of a 25 gallon capacity container. But add in the approximately 2 gallons from the protein skimmer combined with natural water displacement from the equipment and … wet floor. Water overflow. Once might not be too bad, but twice? Three times in one night?
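The arithmetic above can be checked with a short sketch. The volumes and the 25-gallon capacity are the figures quoted in the post; the post does not say how much capacity the equipment displaces, so the 1-gallon value below is purely an assumption for illustration, as is the function name.

```python
def sump_overflow(capacity, volumes, displaced=0.0):
    """Return the gallons that spill when `volumes` collect in the sump.

    `displaced` is the capacity lost to equipment sitting in the sump.
    """
    usable = capacity - displaced
    return max(0.0, sum(volumes) - usable)


# Figures quoted in the post (gallons).
drain_from_display = 13
normal_sump_level = 10
skimmer_backflow = 2
sump_capacity = 25

# Without the skimmer the sump copes: 23 of 25 gallons.
no_skimmer = sump_overflow(sump_capacity, [drain_from_display, normal_sump_level])

# With the skimmer the sump is exactly full, so any displacement spills.
# ASSUMPTION: 1 gallon of displacement, a made-up figure for illustration.
with_skimmer = sump_overflow(
    sump_capacity,
    [drain_from_display, normal_sump_level, skimmer_backflow],
    displaced=1.0,
)
```

The point of the sketch is only that the margin is zero once the skimmer drains back, so even a small amount of displacement ends up on the floor.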

But it wasn’t just the wet floor that was the problem. See, once the power returned the automated top-off system, recognizing the water level was down, did what it does best: pumped fresh, desalinated water into the sump. It did it so well, in fact, that the salinity levels in the entire system dropped from a comfortable 1.025 to a rock-bottom minimum of 1.023. Luckily that’s not “rock-bottom” in terms of survival, and everything that was alive is still doing well and in fact flourishing, but it pointed out a flaw with the automation I’d put into place – it’s not contextually aware. It’s not intelligent. It just … is.

A more experienced reefer (with these kinds of complex systems) would point out that salinity monitoring is essential and that a secondary system designed to ensure the maintenance of a particular specific gravity (another way to say salinity) is vital to maintaining the proper water chemistry. I would be inclined to agree after recent events, and find that this is a fine example of potentially similar problems with data center automation.
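A minimal sketch of the kind of secondary check described above, assuming a hypothetical salinity probe alongside the level sensor. The function name, the action labels and the decision rule are all illustrative assumptions; only the 1.025 target comes from the post.

```python
def top_off_action(level_low, specific_gravity, target=1.025):
    """Decide what an automated top-off should do.

    level_low: True when the level sensor reports a low sump.
    specific_gravity: reading from a (hypothetical) salinity probe.
    target: the "comfortable" specific gravity quoted in the post.
    """
    if not level_low:
        return "idle"
    if specific_gravity > target:
        # Evaporation removes water but not salt, so salinity rises:
        # fresh water is the right correction.
        return "add_fresh_water"
    # The level is low but salinity has not risen, so salt water left the
    # system some other way (an overflow, say). Fresh water would dilute it.
    return "hold_and_alert"
```

With this rule, the post-outage situation (low level, salinity at or below 1.025) would raise an alert instead of pumping in fresh water.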

CONTEXT is CRITICAL

Let’s assume a data center that uses monitoring of application performance and has in place an auto-scaling or server flexing system that, when triggered, automatically adds new resources to a given application to improve performance, assuming resource consumption (high volume of users) is the core factor in performance.

That all sounds great in theory, like my reef setup, but in practice it can go horribly wrong. For example, if the reason resource availability is decreasing is due to a concerted DoS attack across multiple layers of the stack, adding more resources is unlikely to restore equilibrium. You can add compute resources all day but it won’t address the consumption of bandwidth or infrastructure resources caused by the attack. Without context, the automated system simply does without thinking what it’s been told to do. And if those resources are in a cloud-based environment for which you are charged by the instance hour, you may increase costs dramatically without seeing any return on that investment. Cloud-bursting can be a valuable tactical response to balancing the need for more capacity with costs, but if those resources are added without context then, like adding fresh-water to compensate for non-evaporative water loss to a salt-water system, you may be diluting the efficiency of the entire application delivery chain.

But if the automated system had visibility and context-awareness, if it was intelligent and could factor in all the variables – network and compute – it could react accordingly and perhaps take some other action that would address the real problem, like activating security-minded policies that throttle bandwidth based on usage patterns, or start blocking offending user sessions. The what is less important than how, for our purposes, because it’s really about having the context in the first place to enable the application of organizational-specific policies supporting operational goals. Without context, without collaboration, automation is likely to result in blind decisions – made without understanding the root cause and potentially causing more damage than good.
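The difference between blind and context-aware automation can be sketched in a few lines. This is not any vendor's API: the `Context` fields, the thresholds and the action names are hypothetical, chosen only to mirror the signals and responses mentioned above (compute load, bandwidth consumption, offending sessions).

```python
from dataclasses import dataclass


@dataclass
class Context:
    response_time_ms: float       # application performance monitor
    cpu_utilization: float        # 0.0-1.0, compute pressure
    bandwidth_utilization: float  # 0.0-1.0, network pressure
    suspicious_sessions: int      # sessions flagged by a security policy


def choose_action(ctx, slow_ms=500.0, busy=0.8, attack_sessions=100):
    """Pick a response to slow performance using more than one signal.

    All thresholds are illustrative assumptions, not recommendations.
    """
    if ctx.response_time_ms < slow_ms:
        return "none"
    if ctx.suspicious_sessions >= attack_sessions:
        # Looks like an attack: more instances would only add cost.
        return "block_offending_sessions"
    if ctx.bandwidth_utilization >= busy and ctx.cpu_utilization < busy:
        # The network is the bottleneck, not compute.
        return "throttle_bandwidth"
    if ctx.cpu_utilization >= busy:
        # Genuine compute pressure: scaling out is appropriate.
        return "add_instance"
    return "investigate"
```

A blind auto-scaler corresponds to returning `"add_instance"` whenever response time is slow; the extra branches are where context changes the decision.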

Context is critical to ensure that automation is supportive of – not detrimental to – operational efficiency and goals.

• Richard L. Santalesa announced the Third in our Cloud Computing Webinar Series in a 5/11/2011 post to the Information Law Group blog:

Legal Issues of Security and Privacy in Cloud Computing

Wednesday, May 24, 2011 – 12:30 pm ET

In this free upcoming webinar on cloud computing, Information Law Group attorney Richard Santalesa will examine the Legal Issues of Security and Privacy in Cloud Computing. To register, click here.

The government is getting involved in cloud computing in a major way – both as a growing consumer of cloud services and as a standard setter in developing technical best practices and recommendations for achieving and maintaining security and privacy when using cloud services. Recently the National Institute of Standards and Technology (NIST) released a draft for public comment of “Guidelines on Security and Privacy in Public Cloud Computing,” here, as part of ongoing developmental work by NIST’s various active cloud working groups.

In this webinar and the accompanying white paper we examine the technical and legal implications for various security and privacy issues arising from the NIST’s guidelines and other related NIST materials, with a focus on the legal considerations any team tasked with implementation of security best practices will need to grapple with.

David Linthicum (@DavidLinthicum) asserted “The hacks and Sony's incompetence involve bad security practice, not a flaw in cloud services per se” as a deck for his Why Sony's PSN problem won't take down cloud computing article of 5/10/2011 for InfoWorld’s Cloud Computing blog:

It's interesting to see the number of people calling for the downfall of cloud computing after the recent Amazon Web Services outage and, of course, Sony's huge PlayStation Network security blunder that potentially let loose 100 million credit card numbers. Clearly, the security was less than stellar and Sony is now scrambling to correct the issues after the fact.

Indeed, this Reuters article makes a case for Sony's ability to bring down the cloud. "Cloud computing companies have done a good job convincing customers that their data is safe, even though that may not be the case," said Gartner cloud security analyst Jay Heiser. The article goes on to cite a ton of cloud computing naysayers who point to the Sony incident as a reason not to go to cloud computing.

The logic around this sentiment is inconsistent. Those who are on the fence about public cloud computing take these incidents to a conclusion that defies logic. Just because Sony did not take the steps necessary to secure their data, how does that reflect on other public cloud computing providers?

Sony is not even a cloud computing provider. It does not sell PaaS, IaaS, or SaaS. However, it is another example of a large company that failed at the basic tenets of computing security. It happens all the time.

If you shun cloud computing because Sony compromised credit card numbers, do you also avoid laptops because of incidents over the years where they were lost or stolen, and the data on them (including credit card data) was compromised? Don't even get me started on thumb drives.

These concerns won't go away, whether or not you're using the cloud. Data will be compromised if those who own the data don't take steps to protect it -- regardless of where it's stored.

Jay Heiser asked How long does it take to reboot a cloud? in a 5/10/2011 post to his Gartner blog:

Commercial cloud computing raises two significant disaster recovery issues:

- What is the cloud provider’s ability to recover their own services?

- What is the enterprise’s ability to obtain an alternative to a vendor that can’t recover themselves?

To the extent that cloud computing actually exists, and actually is a new model, we have to consider that traditional forms of BCP/DR, and the validation of the existence and efficacy of such, may either need to be reinterpreted, or new practices and even vocabularies may be necessary. As a parallel, I think it's fair to say that traditional IT security concepts and the related business requirements are still perfectly valid, but the relative degree to which traditional forms of risk assessment and testing can be applied has been reduced by this new model. The security domain doesn’t need to reinvent the wheel to respond to cloud computing, but there is every reason to think that current wheel designs (and tires, for that matter) are less than optimal for this new task. It is almost certainly the case that other IT risk domains also need to consciously consider how to apply their old concepts to this new computing style.

The practical implication of cloud ambiguity is a wilful lack of attention to architectural and build issues, with relatively greater levels of attention to operational processes as a sub-conscious form of compensation. The light shines strongly on operations, so that’s where everyone looks. The problem is that our understanding of what constitutes an appropriate set of processes is based upon the requirements of a single host using a familiar operating environment. I can make a Unix or Windows box as secure as you want, and I can back it up out the whing whang and have a high degree of confidence that come what may, I can restore service within an expected time frame.

In contrast, I have no basis for determining the propensity to fail, in either a confidentiality or data availability sense, of a proprietary environment based on hundreds of thousands of servers in 3 dozen data centers, tenanted by millions of users of hundreds of applications and services.

There are clear fault-tolerance advantages to most commercial cloud services. A small to medium business, or a small business unit in any size business, can easily obtain a highly reliable level of service at a relatively low cost, and it can be done quickly and conveniently.

What is not the least bit clear is the relative ability of any Cloud Service Provider to restore your data into their services in cases in which their high availability, fault tolerance mechanisms do not protect your data. Indeed, in certain instances, the fault tolerant mechanisms can cause an auto-immune failure that virtually ensures that every live copy of your data will be impacted.