Windows Azure and Cloud Computing Posts for 1/19/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control and Service Bus

- Windows Azure Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

No significant articles today.

<Return to section navigation list>

SQL Azure Database and Reporting

My (@rogerjenn) updated Resource Links for SQL Azure Federations and Sharding Topics post of 1/19/2011 has taken over from an earlier article:

This post originated in the “SQL Azure Database and Reporting” section of my Windows Azure and Cloud Computing Posts for 1/15/2011+ post. I’ve been adding to the links on an almost daily basis, so I decided to create a free-standing article that I’ll update independently.

The earlier article no longer will be updated.

The Programming4Us blog presented OData with SQL Azure - OData Overview on 1/19/2011:

OData stands for Open Data protocol. It's a REST-based web protocol for querying and updating data completely independent of the platform or source. OData accomplishes this by utilizing and enhancing existing web technologies such as HTTP, JavaScript Object Notation (JSON), and the Atom Publishing Protocol (AtomPub). Through OData, you can gain access to a multitude of different applications and services from a variety of sources including relational databases, file systems, and even content-management systems.

The OData protocol came about from experiences implementing AtomPub clients and servers in an assortment of products over the past few years. OData relies on URIs for resource identification, which provides consistent interoperation with the Web, committing to an HTTP-based and uniform interface for interacting with the different sources. OData is committed to the fundamental web principles; this gives OData its great ability to integrate and interoperate with a plethora of services, clients, tools, and servers.

It doesn't matter if you have a basic set of reference data or are architecting an enterprise-size web application: OData facilitates the exposure of your data and associated logic as OData feeds, thus making the data available to be consumed by any OData-aware consumers such as business intelligence tools and products as well as developer tools and libraries.

1. OData Producers

An OData producer is a service or application that exposes its data using the OData protocol. Because this article pertains to SQL Azure and OData, the relevant point is that SQL Azure can expose data as OData. But so can SQL Server Reporting Services and SharePoint 2010, among other applications.

Many public (or live) OData services have been made available, which anyone can consume in an application. For example, Stack Overflow, NerdDinner, and even Netflix have partnered with Microsoft to create an OData API. You can view a complete list of such OData producers, or services, at www.odata.org/producers.

In the browser, you see a list of the categories by which you can browse or search for a movie offered by Netflix, as shown in Figure 1. You probably look at Figure 1 and think, "This looks a lot like WCF Data Services." That is correct, because, as stated earlier, OData facilitates the exposure of your data and associated logic as OData feeds, making it much easier via a standardized method to consume data regardless of the source or consuming application.

Thus, in Figure 1 you can see the categories via which you can search the Netflix movie catalog. For example, you can see the different endpoints of the API through which to find a movie, such as Titles, People, Languages, and Genres.

You can begin navigating through the vast Netflix catalog by entering your query as a URI. For example, let's look at all the different genres offered by Netflix. The URI is http://OData.netflix.com/Catalog/Genres

You're given a list of genres, each of which is in an <entry> element with the name of the genre in the <Name> element in the feed, shown in Figure 2.

Figure 1. Netflix catalog

Figure 2. Netflix genres

Figure 2 shows the Comedy genre. Additional information lets you know what you need to add to the URI to drill down into more detail. For example, look at the <id> element. If you copy the value of that element into your browser, you see the detailed information for that genre.

Continuing the Comedy example, let's return all the titles in the Comedy genre. To do that, you need to append the /Titles filter to the end of the URI:

http://OData.netflix.com/Catalog/Genres('Comedy')/Titles

The Netflix OData service returns all the information for the movies in the Comedy genre. Figure 3 shows one of the movies returned from the service, displayed in the browser.

Figure 3. Viewing Netflix titles

At this point you're just scratching the surface—you can go much further. Although this article isn't intended to be a complete OData tutorial, here are some basic examples of queries you can execute:

To count how many movies Netflix has in its Comedy genre, the URI is http://netflix.cloudapp.net/Catalog/Genres('Comedy')/Titles/$count?$filter=Type%20eq%20'Movie'.

Your browser displays a number, and as of this writing, it's 4642.

To list all the comedies made in the 1980s, the URI is http://OData.netflix.com/Catalog/Genres('Comedy')/Titles?$filter=ReleaseYear%20le%201989%20and%20ReleaseYear%20ge%201980.

To see all the movies Brad Pitt has acted in, the URI is http://OData.netflix.com/Catalog/People?$filter=Name%20eq%20'Brad%20Pitt'&$expand=TitlesActedIn.
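The query URIs above all follow the same pattern: a service root, a resource path, and system query options such as $filter and $expand whose values are percent-encoded. As a minimal sketch of that pattern (the helper name build_odata_url is an illustrative assumption, not part of any OData library), the URIs can be assembled in Python like this:

```python
from urllib.parse import quote


def build_odata_url(service_root, resource_path, options=None):
    """Join a service root, a resource path, and OData system query options."""
    url = service_root.rstrip("/") + "/" + resource_path.lstrip("/")
    if options:
        # Percent-encode each option value (spaces become %20) but keep
        # single quotes readable, as the hand-written URIs in the text do.
        pairs = ["$" + name + "=" + quote(str(value), safe="'")
                 for name, value in options.items()]
        url += "?" + "&".join(pairs)
    return url


ROOT = "http://OData.netflix.com/Catalog"

# Comedies released in the 1980s.
eighties_comedies = build_odata_url(
    ROOT, "Genres('Comedy')/Titles",
    {"filter": "ReleaseYear le 1989 and ReleaseYear ge 1980"})

# One person, with the related titles expanded inline.
brad_pitt = build_odata_url(
    ROOT, "People",
    {"filter": "Name eq 'Brad Pitt'", "expand": "TitlesActedIn"})

print(eighties_comedies)
print(brad_pitt)
```

The sketch only builds strings; it does not call the service, so it makes no assumption about whether the Netflix endpoint is reachable.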

The key to knowing what to add to the URL to apply additional filters is in the information returned by the service. For example, let's modify the previous example as follows:

http://OData.netflix.com/Catalog/People?$filter=Name%20eq%20'Brad%20Pitt'

On the resulting page, several <link> elements tell you what additional filters you can apply to your URI:

<link rel="http://schemas.microsoft.com/ado/2007/08/dataservices/related/Awards"...

<link rel="http://schemas.microsoft.com/ado/2007/08/dataservices/related/TitlesActedIn"...

<link rel="http://schemas.microsoft.com/ado/2007/08/dataservices/related/TitlesDirected"...

These links let you know the information by which you can filter the data.
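To make the discovery step concrete, here is a small Python sketch that reads the <link> elements of an Atom entry and lists the related collections they advertise. The rel values are the ones quoted above; the sample entry itself, including its href and title values, is a made-up stand-in for what the service returns.

```python
import xml.etree.ElementTree as ET

ATOM_NS = "{http://www.w3.org/2005/Atom}"
RELATED_PREFIX = "http://schemas.microsoft.com/ado/2007/08/dataservices/related/"

# Hypothetical, trimmed entry: only the rel values come from the text;
# the href and title attributes are invented for illustration.
SAMPLE_ENTRY = """
<entry xmlns="http://www.w3.org/2005/Atom">
  <link rel="edit" title="Person" href="People(1)" />
  <link rel="http://schemas.microsoft.com/ado/2007/08/dataservices/related/Awards"
        title="Awards" href="People(1)/Awards" />
  <link rel="http://schemas.microsoft.com/ado/2007/08/dataservices/related/TitlesActedIn"
        title="TitlesActedIn" href="People(1)/TitlesActedIn" />
  <link rel="http://schemas.microsoft.com/ado/2007/08/dataservices/related/TitlesDirected"
        title="TitlesDirected" href="People(1)/TitlesDirected" />
</entry>
"""


def related_links(entry_xml):
    """Return {related collection name: href} for one Atom entry."""
    entry = ET.fromstring(entry_xml.strip())
    links = {}
    for link in entry.iter(ATOM_NS + "link"):
        rel = link.get("rel", "")
        # Only rels under the "related" namespace name a navigable collection.
        if rel.startswith(RELATED_PREFIX):
            links[rel[len(RELATED_PREFIX):]] = link.get("href")
    return links


for name, href in related_links(SAMPLE_ENTRY).items():
    print(name, "->", href)
```

Each name found this way is a segment you can append to the entry's URI or pass to $expand.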

2. OData Consumers

An OData consumer is an application that consumes data exposed via the OData protocol. An application that consumes OData can range from a simple web browser (as you've just seen) to an enterprise custom application. The following is a list of the consumers that support the OData protocol:

Browsers. Most modern browsers allow you to browse OData services and Atom-based feeds.

OData Explorer. A Silverlight application that allows you to browse OData services.

Excel 2010. Via PowerPivot for Excel 2010. This is a plug-in to Excel that has OData support.

Client libraries. Programming libraries such as .NET, PHP, Java, and Windows Phone 7 that make it easy to consume OData services.

Sesame (an OData browser). A browser built by Fabrice Marguerie specifically for browsing OData.

OData Helper for WebMatrix. Along with ASP.NET, lets you retrieve and update data from any service that exposes its data via the OData protocol.

There are several more supported client libraries. You can find a complete list of consumers at www.odata.org/consumers.

OK, enough about OData. If you want to learn more, the OData home page is www.OData.org/home.

You should spend some time reading up on OData and start playing with some of the services provided by the listed producers. When people began getting into web services and WCF services, there was an obvious learning curve involved in understanding and implementing these technologies. Not so much with OData—it has the great benefit of using existing technologies to build on, so understanding and implementing OData is much faster and simpler.

Cihan Biyikoglu explained SQL Azure Federations: Robust Connectivity Model for Federated Data in a 1/18/2011 post:

In this post I wanted to focus on the connectivity enhancements that come with SQL Azure Federations. SQL Azure Federations comes with a special FILTERING connection option that makes it safe to work with federated data and is critical for the fully-available-repartitioning component of federations. We’ll take an in-depth look at this and build toward a great side effect of FILTERING connections: a safe model for programming federated data and a great migration utility for multi-tenant applications. Let’s rewind back to the top: first, what sharded applications typically have to do for connectivity today; then, how federations improve that…

Connecting to Sharded Databases [Today]

Today, when developing sharded applications, the data access layer will typically cache the shard directory (the distribution of the data to the databases) at the application tier and construct a connection string based on the shard key instance. Once the connection is established, the application is responsible for writing queries that target the specific shard key instance. Here is a quick ADO.NET sample. Let’s assume again a tenant-based system with a customers table and an application targeting the customer with id=55. dbname_postfix below refers to the actual database name you need to connect to, the one that contains customer 55. With all your queries, the app also needs to remember to include the customer_id=55 predicate in the WHERE clause to filter to the correct data subset.

    // dbname_postfix comes from the cached shard directory and selects the database that holds customer 55.
    SqlConnection cn = new SqlConnection("Server=tcp:servername.db.windows.net;" +
        "Database=salesdb_" + dbname_postfix + ";User ID=username;Password=password;" +
        "Trusted_Connection=False;Encrypt=True");
    cn.Open();
    …
    // Every query has to carry the shard-key predicate explicitly.
    SqlCommand cm = new SqlCommand("SELECT … FROM dbo.customers " +
        "WHERE customer_id=55 and …", cn);
    cm.ExecuteReader();
    …

Connection Pool Management: One issue that is obvious here is that once you reach a large number of databases, your connection pool will start fragmenting. Connection pooling today creates a connection pool per hash of a connection string. Imagine 200 databases in your sharded app: you will end up with 200 connection pools per application instance, with most pools containing only a few connections to the database. This setup typically means that you don’t get much connection reuse. Idle connections will get disconnected after a while, and you will have to reestablish connections from scratch.

Cache Coherency: Caching the shard map also comes with another problem: cache coherency. As you repartition data, you need to build logic to invalidate the cached shard directory and ensure that, during a repartitioning operation, you are either offline or have code to handle race conditions between invalidation of the cache and the moment of physical data movement, so that connections always get routed to the correct database. Imagine a case where you are moving customer 55 to a new database. During the data movement you need to be able to capture all changes to customer 55, and at the moment of the switchover you need to make sure connections routed to the old database are rerouted to the new database; otherwise, queries such as the one above could return invalid results.
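To illustrate the pool fragmentation described above, here is a rough Python sketch of an application-side shard directory. Everything in it is a stand-in: the ShardDirectory class, the 200 evenly sized ranges, and the three-digit postfixes are assumptions made for the example, and the connection string is a placeholder modeled on the sample.

```python
import bisect


class ShardDirectory:
    """Maps a shard key to a database-name postfix using sorted range boundaries."""

    def __init__(self, lower_bounds, postfixes):
        # lower_bounds[i] is the smallest key stored in database postfixes[i].
        self.lower_bounds = list(lower_bounds)
        self.postfixes = list(postfixes)

    def postfix_for(self, key):
        index = bisect.bisect_right(self.lower_bounds, key) - 1
        if index < 0:
            raise KeyError(key)
        return self.postfixes[index]


def connection_string(postfix):
    # Placeholder values; only the database name varies per shard.
    return ("Server=tcp:servername.db.windows.net;"
            "Database=salesdb_" + postfix + ";User ID=username;Password=password;"
            "Trusted_Connection=False;Encrypt=True")


# 200 hypothetical shards, each covering 100 customer ids.
directory = ShardDirectory(
    lower_bounds=[i * 100 for i in range(200)],
    postfixes=["%03d" % i for i in range(200)])

# Customer 55 falls in the first range.
print(directory.postfix_for(55))

# Touching one customer per shard produces one distinct connection string per
# shard, and a pool keyed by connection string therefore fragments the same way.
pools = {connection_string(directory.postfix_for(cid))
         for cid in range(0, 20000, 100)}
print(len(pools))
```

The directory held in memory here is exactly the cached state that has to be invalidated when data is repartitioned, which is where the coherency problem comes from.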

Connecting to Federations

Connection String: With SQL Azure Federations, the connection string for the application always points to the root database name. Here is what the code for the example above would look like. Imagine in this case that you have a root database called salesdb. Notice that there is no dbname_postfix anymore. Instead, the USE FEDERATION statement does the routing right after the connection is opened.

    SqlConnection cn = new SqlConnection("Server=tcp:servername.db.windows.net;" +
        "Database=salesdb;User ID=username;Password=password;" +
        "Trusted_Connection=False;Encrypt=True");
    cn.Open();
    …

Safety in Atomic Units: Atomic units refer to instances of the federation key, such as all rows in all federated tables of a federation that contain customer_id=55. In federations, atomic units provide the one important guarantee about physical placement of partitioned data: all federation operations guarantee that rows that belong to the same atomic unit are always physically in the same federation member. That is, we never split an atomic unit across multiple federation members. (You can find a good review of federation concepts such as atomic units and federated tables here.)
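The atomic-unit guarantee can be pictured with a toy model in Python. This is only a sketch of the idea, assuming range-partitioned members and a split that happens at a key boundary; the Federation class and its methods are invented for illustration and say nothing about how SQL Azure implements repartitioning.

```python
class Federation:
    """Toy range-partitioned federation: each member owns keys in [low, high)."""

    def __init__(self, low, high):
        self.members = [{"low": low, "high": high, "rows": []}]

    def member_for(self, key):
        for member in self.members:
            if member["low"] <= key < member["high"]:
                return member
        raise KeyError(key)

    def insert(self, key, payload):
        self.member_for(key)["rows"].append((key, payload))

    def split(self, boundary):
        """Split one member into [low, boundary) and [boundary, high)."""
        old = self.member_for(boundary)
        if boundary == old["low"]:
            raise ValueError("boundary must fall inside a member's range")
        # Rows move by key value, so every row of one key lands on one side.
        left = {"low": old["low"], "high": boundary,
                "rows": [r for r in old["rows"] if r[0] < boundary]}
        right = {"low": boundary, "high": old["high"],
                 "rows": [r for r in old["rows"] if r[0] >= boundary]}
        position = self.members.index(old)
        self.members[position:position + 1] = [left, right]


fed = Federation(0, 1000)
for key in (55, 55, 55, 60, 700):
    fed.insert(key, "order")

fed.split(56)

# All rows for customer 55 still live in exactly one member after the split.
members_with_55 = [m for m in fed.members
                   if any(key == 55 for key, _ in m["rows"])]
print(len(fed.members), len(members_with_55))
```

Because the split point is a key value rather than a row position, no choice of boundary can leave the rows for customer 55 in two members.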

A large part of federated application workloads targets a single atomic unit at a time and depends on this guarantee. In fact, the default connection type to federation members scopes connections to atomic units through the USE FEDERATION statement, such as the one below.

    USE FEDERATION orders_federation(customer_id=55) WITH RESET, FILTERING=ON

What does USE FEDERATION give you? #1: you are guaranteed to land in the correct federation member even if data is being repartitioned. #2: with the FILTERING option set, your connection is scoped to data that is part of customer_id=55, and you no longer have to remember to include the filter in your WHERE clause. Here is the refactored version of the above sample with USE FEDERATION. Notice that, with the FILTERING connection option set below, the query no longer needs to include the customer_id=55 predicate in the WHERE clause. However, if the application did include customer_id=55 in the WHERE clause, things would still work as expected.

    // Route and scope the open connection, then query without the customer_id predicate.
    SqlCommand cm = new SqlCommand("USE FEDERATION orders_federation(customer_id=55) " +
        "WITH RESET, FILTERING=ON", cn);
    cm.ExecuteNonQuery();
    cm = new SqlCommand("SELECT … FROM dbo.customers WHERE …", cn);
    cm.ExecuteReader();
    …

With the FILTERING connection option set, the query on the customers table, “SELECT … FROM dbo.customers”, will return only rows that have customer_id=55. That is, the query execution engine will insert the predicate customer_id=55 automagically for you when the target of your query is a federated table. This is true for all types of statements, including INSERT, DELETE and UPDATE. The connection works just like a constraint: if you try to INSERT or UPDATE a row with a different customer_id value, you get an error. However, DELETE and SELECT statements simply affect no rows if you try to reach outside the scope of customer_id=55.
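The behavior of a FILTERING connection can be mimicked with a small in-memory model. The Python sketch below is purely illustrative: FilteredConnection and FilterViolation are made-up names, and the model only imitates the semantics described above (implicit predicate on reads and deletes, an error on out-of-scope writes), not the actual engine.

```python
class FilterViolation(Exception):
    """Raised when a write targets a key outside the connection's scope."""


class FilteredConnection:
    """Toy model of a connection scoped to one federation key value."""

    def __init__(self, table, key_column, key_value):
        self.table = table            # list of dict rows shared by all connections
        self.key_column = key_column
        self.key_value = key_value

    def _in_scope(self, row):
        return row[self.key_column] == self.key_value

    def select(self, predicate=lambda row: True):
        # The scope predicate is added implicitly to every read.
        return [row for row in self.table
                if self._in_scope(row) and predicate(row)]

    def insert(self, row):
        # Writes behave like a constraint: out-of-scope rows are rejected.
        if not self._in_scope(row):
            raise FilterViolation("row is outside the scope of this connection")
        self.table.append(row)

    def delete(self, predicate=lambda row: True):
        # Out-of-scope rows are never matched, so they are simply not affected.
        doomed = self.select(predicate)
        self.table[:] = [row for row in self.table
                         if not (self._in_scope(row) and predicate(row))]
        return len(doomed)


customers = [{"customer_id": 55, "name": "Contoso"},
             {"customer_id": 56, "name": "Fabrikam"}]
cn = FilteredConnection(customers, "customer_id", 55)

print(cn.select())                                     # only customer 55's row
print(cn.delete(lambda row: row["customer_id"] == 56))  # no rows affected

try:
    cn.insert({"customer_id": 56, "name": "Northwind"})
except FilterViolation as error:
    print("rejected:", error)
```

Existing queries that never mention customer_id keep working against such a connection, which is the property the migration discussion later in the post relies on.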

Federations also support turning FILTERING off. This is useful when making global changes to the federation member, such as schema changes or modifications to reference tables (for example, updating data in your zipcode lookup table in a federation member), and in cases where you want to do bulk operations or query over many atomic units at the same time for efficiency.

Migrating to Multi-Tenant Model with Federations: Multi-tenancy is a great way to improve the economics of your app by improving the density of your tenants. In most classic apps, each tenant typically gets a first-class database. However, with a large number of tenants, it is easy to see that database management overhead gets out of control fast. If you can pack more tenants into each database by allowing multiple tenants in a single database, you can reduce the number of databases you need to manage.

Not so obvious at first, but a great side effect of FILTERING connections is that they make migration of business logic to a multi-tenant model much easier… Most classic apps today are built with a database-per-tenant model. With the single-database-per-tenant approach, your business logic and queries won’t have tenant filtering in place. That is, you won’t have the customer_id filtering in your database traffic. If you have a large number of queries and stored procedures, it could be a pain to port to a multi-tenant solution and validate your solution. With the FILTERING connection option set, it could be much easier to migrate over to a multi-tenant model with SQL Azure Federations. Your application would still have to migrate to use SQL Azure Federations; however, cost savings would be greatly amplified for some apps.

<Return to section navigation list>

Marketplace DataMarket and OData

Anton Paramosh described Dynamics CRM 2011 support for Silverlight Web Resources in an 11/19/2010 post:

Dynamics CRM 2011 supports Silverlight applications as Web Resources. For testing purposes, I created my first Silverlight Web Resource.

You can find out how to do that in the file crmsdk2011.chm in the SDK folder. I can't give you a direct web link for now because Microsoft has not yet published this on MSDN. But you can download the SDK here.

I used Visual Studio 2010, but you also need to install Microsoft Silverlight 4 Tools for Visual Studio 2010. To be able to work with all OData service capabilities, the application must be developed for Silverlight version 4.

When you are developing a Silverlight Web Resource, you need to know whether your Web Resource needs contextual information. If not, you can place your Web Resource either on an entity edit form or in another HTML Web Resource. If the Silverlight Web Resource needs context information but is not placed on an entity form, you have to add a reference to ClientGlobalContext.js.aspx.

The SDK includes a sample Silverlight Web Resource in sdk\samplecode\cs\generalprogramming\dataservices\crmodatasilverlight. Look at the crmodatasilverlight\utilities\serverutility.cs file. It's designed to retrieve the server URL from the context, but other properties are available too. I've extended this class so that you can retrieve them all.

public static class ServerUtility

{

private static ScriptObject PageContext

{

get

{

var xrm = (ScriptObject)HtmlPage.Window.GetProperty("Xrm");

var page = (ScriptObject)xrm.GetProperty("Page");

return (ScriptObject)page.GetProperty("context");

}

}

public static string GetAuthenticationHeader()

{

return (string)PageContext.Invoke("getAuthenticationHeader");

}

public static double GetOrgLcid()

{

return (double)PageContext.Invoke("getOrgLcid");

}

public static string GetOrgUniqueName()

{

return (string)PageContext.Invoke("getOrgUniqueName");

}

public static JsonValue GetQueryStringParameters()

{

ScriptObject a = (ScriptObject)PageContext.Invoke("getQueryStringParameters");

DataContractJsonSerializer sr = new DataContractJsonSerializer(a.GetType());

MemoryStream ms = new MemoryStream();

sr.WriteObject(ms, a);

ms.Position = 0; // rewind the stream so Load reads from the start

JsonValue o = JsonObject.Load(ms);

ms.Close();

return o;

}

public static string GetServerUrl()

{

return (string)PageContext.Invoke("getServerUrl");

}

public static Guid GetUserId()

{

return new Guid ((string)PageContext.Invoke("getUserId"));

}

public static double GetUserLcid()

{

return (double)PageContext.Invoke("getUserLcid");

}

public static Guid[] GetUserRoles()

{

IList<Object> nonCastedRoles = ((ScriptObject)PageContext.Invoke("getUserRoles")).ConvertTo<List<Object>>();

List<Guid> castedRoles = new List<Guid>(nonCastedRoles.Count);

foreach (var currentNonCastedRole in nonCastedRoles)

{

castedRoles.Add(new Guid((string)currentNonCastedRole));

}

return castedRoles.ToArray();

}

}

Context is a ScriptObject, and you can access its properties using the Invoke method, as in PageContext.Invoke("getAuthenticationHeader"). The GetUserRoles and GetQueryStringParameters methods are more complicated than the others: they return not a single value but an array of Guid and a JsonValue, respectively. In the case of GetUserRoles, we used ConvertTo to convert the value to List<Object>:

IList<Object> nonCastedRoles = ((ScriptObject)PageContext.Invoke("getUserRoles")).ConvertTo<List<Object>>();

But in the case of GetQueryStringParameters, we have a JSON object as a ScriptObject. We serialize the ScriptObject and then deserialize it into a JsonValue:

ScriptObject a = (ScriptObject)PageContext.Invoke("getQueryStringParameters");

DataContractJsonSerializer sr = new DataContractJsonSerializer(a.GetType());

MemoryStream ms = new MemoryStream();

sr.WriteObject(ms, a);

ms.Position = 0; // rewind the stream so Load reads from the start

JsonValue o = JsonObject.Load(ms);

You can also use Json.NET to convert the JSON object directly into Dictionary<string, string>:

public static IDictionary<String, String> GetQueryStringParameters2()

{

ScriptObject json = (ScriptObject)PageContext.Invoke("getQueryStringParameters");

DataContractJsonSerializer sr = new DataContractJsonSerializer(json.GetType());

MemoryStream ms = new MemoryStream();

sr.WriteObject(ms, json);

ms.Position = 0;

var srd = new StreamReader(ms);

var jsonstring = srd.ReadToEnd();

IDictionary<String, String> dic = JsonConvert.DeserializeObject<Dictionary<string, string>>(jsonstring);

ms.Close();

return dic;

}

Anton is a Dynamics CRM and .NET Developer in Lviv, Ukraine.

The Microsoft Public Sector Developer Evangelism team at http://dev.govdata.eu recently published several sample data sets from the Netherlands as an element of the Open Government Data Initiative:

Welcome to Open Government Data Initiative (OGDI)

The Open Government Data Initiative (OGDI) is an initiative led by Microsoft Public Sector Developer Evangelism team. OGDI uses the Windows Azure Platform to make it easier to publish and use a wide variety of public data from government agencies. OGDI is also a free, open source 'starter kit' with code that can be used to publish data on the Internet in a Web-friendly format with easy-to-use, open API's. OGDI-based web API's can be accessed from a variety of client technologies such as Silverlight, Flash, JavaScript, PHP, Python, Ruby, mapping web sites, etc.

Whether you are a business wishing to use government data, a government developer, or a 'citizen developer', these open API's will enable you to build innovative applications, visualizations and mash-ups that empower people through access to government information. This site is built using the OGDI starter kit software assets and provides interactive access to some publicly-available data sets along with sample code and resources for writing applications using the OGDI API's.

Email us at askogdi@microsoft.com if you have government data sets that you would like us to publish or if you have other questions.

Here’s part of the first page of a geocoded list of 1,000 Dutch primary schools:

And the map view of part of the Netherlands with a maximum of 50 placemarks:

Here’s the entry for openbare basisschool vinckhuysen (Public School Vinckhuysen) selected in the preceding capture with PartitionKey and RowKey values for its Windows Azure Table entity:

<Return to section navigation list>

Windows Azure AppFabric: Access Control and Service Bus

The Windows Azure AppFabric CTP Team started a new Windows Azure AppFabric CTP MSDN forum on 1/19/2011.

This Windows Azure AppFabric CTP forum includes discussions on ideas, questions or defects from the Windows Azure AppFabric CTP versions available in the AppFabric Labs environment (Service Bus CTP & Caching CTP).

You’ll see six initial posts, ranging in date from 11/1/2010 to 1/10/2011. These have been moved from other fora by Microsoft’s Brian Aurich.

<Return to section navigation list>

Windows Azure Virtual Network, Connect, RDP and CDN

Alan Naim asked and answered Windows Azure Connect - What is it? in a 1/13/2011 post (missed when published):

Windows Azure Connect provides a simple and easy mechanism to set up IP-based network connectivity between on-premises and Windows Azure resources. This capability makes it easier for an organization to migrate their existing applications to the cloud by enabling direct IP-based network connectivity with their existing on-premises infrastructure. For example, a company can deploy a Windows Azure application that connects to an on-premises SQL Server database, or domain-join Windows Azure services to their Active Directory deployment. In addition, Windows Azure Connect makes it simple for developers to set up direct connectivity to their cloud-hosted virtual machines, enabling remote administration and troubleshooting using the same tools that they use for on-premises applications.

Some application scenarios for Windows Azure Connect include:

- Enable enterprise apps, which have migrated to Windows Azure, to connect to on-premises servers (e.g. SQL Server).

- Help applications running on Windows Azure to domain join on-premises Active Directory. Control access to Windows Azure roles based on existing AD accounts and groups.

- Remote administration and trouble-shooting of Windows Azure roles. E.g. Remote PowerShell to access info from Windows Azure instances.

You might also be asking – “How is Windows Azure Connect different from the Windows Azure AppFabric Service Bus?”

Windows Azure Connect and Windows Azure AppFabric Service Bus are complementary technologies. Windows Azure Connect provides IP-based network connectivity between on-premises and Windows Azure resources. Windows Azure Connect enables cloud-hosted virtual machines and on-premises resources to communicate as if they were on the same network. The Windows Azure AppFabric Service Bus provides application-level federation and connectivity for HTTP-based services using claims-based access control. Based upon their requirements, customers can choose the technology that is most appropriate for their needs. Service Bus offers “cloud presence” for enterprise services, both for internal use as well as partner use outside of the corporate network. It does this via a relay infrastructure that does not require opening up inbound ports allowing for secure and seamless NAT and firewall traversal. Service Bus is an application level connectivity service, and is particularly well-suited for situations where some of the end points being connected are not under the control of a single enterprise, or where application-level access control is desired. In combination with Access Control Service (ACS), Service Bus also simplifies federated security scenarios.

There are a couple of PDC talks, on Azure Connect (http://bit.ly/cSXOaC) and “Connecting Cloud & On-Premise Apps” (http://bit.ly/bhoUkt), which go through some of the considerations and trade-offs in moving to a hybrid distributed architecture, as well as various technologies that can be used for this.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

David Hardin explained how to use Azure IntelliTrace in a 1/19/2011 post:

Here is some great information on using IntelliTrace with Azure:

- IntelliTrace is specifically licensed in Visual Studio 2010 Ultimate (VS) as a Dev and Test tool for non-production environments.

- When IntelliTrace is enabled, the Azure role instances do not automatically restart after a failure. This allows Windows Azure to persist the IntelliTrace data after the failure; Azure waits for a developer to download the data and manually restart the role instance.

- IntelliTrace is implemented via IL rewriting, similar to a profiler.

- Specific events are configured in the CollectionPlan.xml file located in C:\Program Files (x86)\Microsoft Visual Studio 10.0\Team Tools\TraceDebugger Tools\en. The file’s content controls which methods are rewritten along with how trace data is later displayed in the VS debugger. There isn't a supported way to add custom events.

- Including the "call information" option basically rewrites all methods; there is a one-size-fits-all way to display the methods not present in the CollectionPlan.xml file.

- Visual Studio rewrites the IL before deployment.

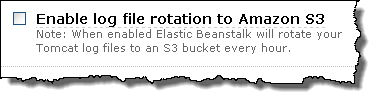

- The "Enable IntelliTrace" check box is only enabled when deploying from VS. IntelliTrace is not supported when only creating a service package which Op's will later upload to Azure, notice check box is disabled:

- IL rewriting adds calls to an API which logs the data through named pipes. Another process receives the data from the named pipe and writes it to local disk.

- When a developer right clicks an instance in VS Server Explorer and selects “View IntelliTrace logs”, VS copies the trace data from the instance's local disk to an Azure Storage blob named “intelitrace” and then to the developer’s local disk. After the logs are on the developer's disk the copy in Azure Storage is automatically deleted.

- In addition to viewing the data in VS there is an API for reading the data for custom tooling.

Adron Hall (@adronbh) announced his desire for better support for Test-Driven Design (TDD) in his Windows Azure SDK Unit Testing Dilemma — F5DD Plz K Thx Bye post of 1/19/2011:

I’m a huge advocate for high quality code. I will admit I don’t always get to write, or am always able to write high quality code. But day in and out I make my best effort at figuring out the best way to write solid, high quality, easy to maintain, easy to read code.

Over the last year or so I’ve been working with Windows Azure (Amazon Web Services and other Cloud/Utility Platforms & Infrastructure also). One of the largest gaps that I’ve experienced when working with Windows Azure is the gross disregard for unit testing and especially unit testing in a Test Driven Development style way. The design of the SDK doesn’t make unit testing a high priority, and instead focuses mostly on what one might call F5 & Run Development.

I’ll be the first to stand up and point out why F5 Driven Development (for more on this, check out Jeff Schumacher’s Blog Entry) is one of the slowest and most distracting ways to build high quality code. I’d also be one to admit that F5 Development encourages poor design and development. A developer has to juggle far too many things to waste time hitting F5 every few seconds to assure that the build is running and code changes, additions, or deletions have been made correctly. If a developer disregards running the application when forced to do F5 Development, the tendency is to produce a lot of code, most likely not refactored or tested, during each run of the application. The list of reasons to not develop this way can get long pretty quick. A developer needs to be able to write a test, implement the code, and run the test without a framework launching the development fabric, or worse, being forced to not write a test and to run code that launches a whole development fabric framework.

Now don’t get me wrong, the development fabric is freaking AWESOME!! It is one of the things that really sets Windows Azure apart from other platforms and infrastructure models that one can develop to. But the level of work and effort makes effectively, cleanly, and intelligently unit testing code against Windows Azure with the development fabric almost impossible.

But with that context, I’m on a search to find some effective ways, with the current SDK limitations and frustrations, to write unit tests and encourage test driven design (TDD) or behaviour driven design (BDD) against Windows Azure, preferably using the SDK.

So far I’ve found the following methods of doing TDD against Windows Azure.

- Don’t use the SDK. The easiest way to go TDD or BDD against Windows Azure and not being tightly bound to the SDK & Development Fabric is to ignore the SDK altogether and use regular service calls against the Windows Azure service end points. The problem with this however, is that it basically requires one rewrite all the things that the SDK wraps (albeit with better design principles). This is very time consuming but truly gives one absolute control over what they’re writing and also releases one from the issues/nuances that the Windows Azure SDK (1.3 comes to mind) has had.

- Abstract, abstract, and abstract with a lot of stubbing, mocking, more stubbing, and some more abstractions underneath all of that to make sure the development fabric doesn’t kick off every time the tests are run. I don’t want to abstract something just to fake, stub, or mock it. The level of indirection needed gets a bit absurd because of the design issues with the SDK. The big problem with this design process to move forward with TDD and BDD is that it requires the SDK to basically be rewritten as a whole virtual stubbed, faked, and mocked layer. Reminds me of many of the reasons the Entity Framework is so difficult to work with for testing (has the EF been cleaned up, opened up, and those nasty sealed classes removed yet??)
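The second approach can be pictured with a minimal sketch. It is written in Python purely for brevity, and every name in it (MessageQueue, InMemoryQueue, OrderProcessor) is hypothetical and not part of the Windows Azure SDK. The idea is that the code under test depends only on a small interface, so a unit test can hand it an in-memory fake and never start the development fabric.

```python
from collections import deque
from typing import Deque, Optional, Protocol


class MessageQueue(Protocol):
    """Hypothetical seam: the only queue operations the application needs."""

    def put(self, body: str) -> None: ...

    def get(self) -> Optional[str]: ...


class InMemoryQueue:
    """Test double that never touches the SDK or the development fabric."""

    def __init__(self) -> None:
        self._items: Deque[str] = deque()

    def put(self, body: str) -> None:
        self._items.append(body)

    def get(self) -> Optional[str]:
        # Return None when the queue is empty instead of blocking.
        return self._items.popleft() if self._items else None


class OrderProcessor:
    """Code under test; it depends on the seam, not on a concrete SDK class."""

    def __init__(self, queue: MessageQueue) -> None:
        self._queue = queue

    def process_next(self) -> Optional[str]:
        body = self._queue.get()
        return None if body is None else body.upper()


def test_process_next() -> None:
    queue = InMemoryQueue()
    queue.put("order-42")
    processor = OrderProcessor(queue)
    assert processor.process_next() == "ORDER-42"
    assert processor.process_next() is None


test_process_next()
```

A production implementation of the same interface would wrap the real storage client; that wrapper is the part that still needs integration testing against the fabric or the live service.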

Now I’ll admit, sometimes I miss the obvious things and maybe there is a magic “build tests real easy right here” button for Windows Azure, but I haven’t found it. I’d love to hear what else people are doing to enable good design principles around Windows Azure’s SDK. Any thoughts, ideas, or things I ought to try would be absolutely great – I’d love to read them. Please do comment!

Benko offered a Benko-Quick-Tip: How to setup Windows Azure for Web Publish on 1/19/2011:

What’s the deal with a 10 minute wait time to deploy my Windows Azure project to the cloud? I understand that when I deploy, the Windows Azure Fabric is actually allocating instances and starting machines for me, but sometimes, especially in development, those 10 minutes can seem slow. Well, with the release of Windows Azure 1.3 and the addition of admin mode and full IIS, we can work around that nuisance and set up our instance to install web deploy for us so we can use a Web Publish to the instance’s IIS. Wade Wegner and Ryan Dunn have both published blog posts that detail how this is done, and I recommend reading thru them to get the details.

Benko includes an embedded video segment here.

The basic process that happens is that thru the magic of Startup tasks you can run the Web Platform Installer to do the work of adding the WebDAV publishing for you. Ryan bundled up the loose files into a plug-in zip file that you can add to your SDK’s plug-in folder, to be able to complete the task quickly and easily. You can download the plug-in from his site: simply download the file from the link, extract the contents to your "%programfiles%\Windows Azure SDK\v1.3\bin\plugins\WebDeploy" folder, and then add the imports code to your Service Definition file:

Caution: This workaround is meant only for development purposes in which you have a single instance you’re deploying to. Because we make changes to the instance after deploy, if you re-publish the package whatever changes you’ve made and pushed to Windows Azure thru this method will be overwritten with whatever the last uploaded package contained. For that reason when you’re done working thru your changes you should go thru a re-deploy of your cloud package. I’ve created a new “Benko-Quick-Tip” video that shows how to do this at http://bit.ly/bqtAzWebDeploy.

By the way – if you’ve got an MSDN Subscription and want to see how to activate your benefits I’ve created a quick-tip video for that too – http://bit.ly/bqtAzAct1.

iq | cloud consulting reported on 1/19/2010 that it’s Ready to flaunt in record time with Windows Azure Solution:

flaunt-it.biz is a leading Social Commerce service. flaunt-it work with leading luxury and premium brands to help them engage with customers through Social Media to enhance brand awareness and drive sales. flaunt-it help their customers achieve competitive advantage through superior customer interaction and a flexible, insightful service.

As a start-up with big ambitions and a limited budget, flaunt-it wanted the maximum bang for their buck, but without compromising on their ability to scale quickly as their business takes off.

flaunt-it asked IQ to help them quickly and cost-effectively design, pilot and develop a facebook-integrated application that can easily scale up without investing £££'s in hardware and long-term hosting contracts. We designed a pilot for flaunt-it's launch customer and using our Agile Software Development methodology had the service ready for launch in only six elapsed weeks!

flaunt-it makes use of the latest Microsoft Cloud Technology including:

- Windows Azure Cloud Application Hosting with Web Roles and Worker Roles

- SQL Azure secure cloud database

- Windows Azure Storage Queues to enable scalable, manageable growth without affecting end-user experience or application performance

- Microsoft Tag extending Social Media Integration into the 'real world'

Srinivasan Sundara Rajan prefaced his Cloud Computing: Dynamic Scaling in Windows Azure Revisited post of 1/19/2011 with “Third-party tool support for dynamic scaling in Windows Azure”:

My last article on comparing Dynamic Scaling Features between Windows Azure vs Amazon EC2 mentioned that both EC2 and Azure provide an auto scaling feature. While EC2 provides a backbone and framework for auto scaling, Azure provides an API that can be extended. We are already seeing several third-party providers delivering tools for Azure auto scaling.

One such third-party auto scaling product company, Paraleap Technologies, has recently released a product called AzureWatch that provides a SaaS-based approach to scale the Windows Azure compute roles. Some of the observations about the product are mentioned below. The company also provides a free 14-day trial of the product whereby some of the facts can be ascertained in a live situation.

The following aspects of the product were observed, based on the technical documentation available with the product vendor.

SaaS-Based Solution

The core of the AzureWatch data collection, aggregation and decision-making process is available as a SaaS-based solution in the form of the AzureWatch Service. The AzureWatch Service aggregates and analyzes performance metrics and matches these metrics against user-defined rules on a regular and configurable basis. When a rule produces a "hit," a scaling action occurs.

However, there are some glue or controlling components installed on the ‘On Premise' systems in the form of the AzureWatch Monitor and Control Panel. The Monitor is responsible for sending raw metrics to SaaS-based systems and executing scaling actions. The Control Panel is a simple but powerful configuration and monitoring utility that allows you to configure custom rules and to monitor your instances.

This approach is useful, because much of the overhead of the Data Storage and maintenance of the metrics data is kept away from the enterprises and only a lightweight component in the form of the AzureWatch Monitor and Control Panel needs to be installed ‘On Premise.'

The following diagram, courtesy of vendor, explains the solution.

Rules Engine-Based Interface

As we have seen, Auto Scaling is typically handled by the Pro Active Monitoring, which is done by the AzureWatch Monitor coupled with the analysis and the metrics gathered by the AzureWatch Service. Finally the scaling action is taken based on the rules configured using an easy-to-use GUI tool.

For each of the roles that are part of your Azure subscription, AzureWatch provides simple predefined rules that can be tailored further. The two sample rules offered are simple rules that rely upon calculating a 60-minute average CPU usage across all instances within a Role. The Rule Edit screen is simple yet powerful. You can specify what formula needs to be evaluated, what happens when the evaluation returns TRUE, and what time of day evaluation should be restricted to.
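To make the idea of an average-CPU rule concrete, here is a minimal Python sketch. It is not AzureWatch's rule syntax or implementation; the function name, the thresholds (80% and 20%) and the instance limits are all assumptions chosen for illustration. It takes the CPU samples collected over the evaluation window for a role and returns the instance count the rule would ask for.

```python
from statistics import mean
from typing import Sequence


def evaluate_cpu_rule(
    cpu_samples: Sequence[float],
    instance_count: int,
    scale_up_above: float = 80.0,    # assumed threshold, percent CPU
    scale_down_below: float = 20.0,  # assumed threshold, percent CPU
    min_instances: int = 1,
    max_instances: int = 10,
) -> int:
    """Return the desired instance count for one role.

    cpu_samples holds CPU readings (percent) gathered across all
    instances of the role during the evaluation window.
    """
    if not cpu_samples:
        # No data (for example, stale metrics): take no scaling action.
        return instance_count
    average = mean(cpu_samples)
    if average > scale_up_above:
        return min(instance_count + 1, max_instances)
    if average < scale_down_below:
        return max(instance_count - 1, min_instances)
    return instance_count
```

Clamping to a minimum and maximum keeps a misbehaving metric from scaling a role to zero or to an unbounded number of instances.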

Dashboards & Reports

The success of monitoring tools is measured by the nature of their dashboards and reports, as metrics data in raw form is very difficult to understand. Dashboards in AzureWatch provide the following information.

- Instance Count

- Instance History

- Metrics Display based on Windows Counters

Proactive Monitoring

Like traditional data center-based monitoring tools, AzureWatch has built in notification capabilities so that emails are sent based on scaling conditions that happen. AzureWatch can track active/unresponsive/other instance counts for you. You can create rules that either trigger scaling actions or notification emails based upon conditions that rely on instance counts.

Nice to Have

Currently the metrics watch service needs to be carefully watched, and metrics can get stale if the service stops for some reason. It would be nice to have more ways to avoid the metrics getting stale.

If new packages are installed, they may get missed from monitoring if the instructions are not followed.

Options to set the metrics manually for special cases might provide more control, similar to the way in which Oracle and other databases handle stale statistics.

Summary

Overall, AzureWatch and similar third-party tools will make Cloud Deployments really fruitful because they're meant to improve the core tenet of Cloud-based deployment, namely elasticity and dynamic scaling.

Andy Cross (@andybareweb) described Implementing Windows Azure Custom Domain Names in a 1/18/2011 post:

Windows Azure provides a number of ways to customise the endpoints of your applications by using Custom Domain names.

- Custom Blob Domain Names

- Custom CDN Domain Names

- Custom Compute Domain Names

- Composite Custom Domain Names

This post will take you through the steps needed to configure all the above custom domain names in Windows Azure. In the below examples I will be using a storage account called “AzureFiddlerStore”, as it was the one created for my previous blog post on Running Fiddler in Windows Azure with AzureFiddlerCore. In this account I have a public container (called public) and a single file Azure.png.

Storage Custom Domains

Blob Storage is a great way to store files in the Cloud without having to worry about the load it may put on your servers. Blob Storage is hosted centrally by Microsoft, and so they will worry about load on the service for you. The downside to this is that you have to address the files in this system centrally too. To access my public file Azure.png, you construct a url with your account name, the service identifier (of blob), the windows.net domain name, the container and then the filename:

http://azurefiddlerstore.blob.core.windows.net/public/Azure.png

This will initialize a download of the blob, since it is inside a public container. However, you may be unhappy with the domain name of “windows.net”. It is possible to apply a custom domain name over this, to prettify these urls. The next steps show how to make the url:

http://azurefiddlerstore.bareweb.eu/public/Azure.png

Note that this is an arbitrary choice of subdomain; it could just as easily be the reverse:

http://erotsrelddiferuza.bareweb.eu/public/Azure.png

Or it could be any other arbitrary string:

http://afs.bareweb.eu/public/Azure.png

Configuring Storage Custom Domain Names

For configuring custom domain names, we do much of our work in two different control panels. Firstly the Windows Azure Management Portal (http://windows.azure.com) and secondly whatever DNS Management Portal your provider supplies. I work with GoDaddy, and so that’s the portal I will be using. I will try to make the DNS part as generic as possible, since yours may well differ.

To start with, log into the Windows Azure Management Portal, navigate to your Storage Accounts, click on the particular storage account you wish to add a domain for, and click the Add Domain button.

Click the Add Domain button in Management Portal

Once you have clicked this button, you are given a screen telling you what steps to take with your DNS provider.

Custom Domain Verification Details

Now it’s time to swap over to your DNS provider portal, and enter these details. You can’t copy and paste the above GUID based CNAME details, so you have to type it in manually. CAREFULLY!

Entering the CNAME

83d05958-fedc-437f-8db4-9dbc2fec7ae6.azurefiddlerstore 3600 IN CNAME verify.azure.com

Once you have done this, you can go back to the Windows Azure Management Portal and close the popup window that sits there. Doing this updates the screen and gives you a new row in the view with the type “Storage Custom Domain” and the status of “Pending”. Click the Validate Domain button once you are happy with everything on the DNS side. It may be worth checking that the DNS entry resolves by using nslookup, to make sure your DNS provider has made the change.

Pending Validation

When you click on the Validate Domain button you are given a brief popup progress bar saying “Verifying”, and any errors are shown there. It could be that you need to wait to ensure your DNS settings are correctly propagated by your provider, or you may need to recheck your settings. Once this is successful, your screen will look like the below:

Successfully added domain

On the right side of the screen, you are given some further information about the status of your domain:

Domain Status

There is one final step you need to take. If you try to access your asset by its new prettified name, you will get a dns error.

http://azurefiddlerstore.bareweb.eu/public/Azure.png

This is because the dns is not ready yet!

If we take a step back, it’s clear to see why this is. We have created a CNAME on our DNS record for verification: 83d05958-fedc-437f-8db4-9dbc2fec7ae6.azurefiddlerstore, but we haven’t actually created the real CNAME yet! So go back to your DNS Provider and enter the real CNAME, with it pointing to the central domain name:

Entering the cname

azurefiddlerstore 3600 IN CNAME azurefiddlerstore.blob.core.windows.net

Once you have done this, you can check that the DNS change has propagated by firing up a command window and checking that the name resolves correctly to a Microsoft domain:

NSLOOKUP
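The two CNAME entries used in this walkthrough (the verification record and the real record) follow a simple pattern, which the following Python sketch spells out. The helper names are hypothetical, the 3600-second TTL is simply the value shown in the records above, and the output is plain zone-file style text rather than a call to any DNS provider's API.

```python
from typing import List


def cname_record(name: str, target: str, ttl: int = 3600) -> str:
    """Format one CNAME entry in zone-file style."""
    return f"{name} {ttl} IN CNAME {target}"


def storage_domain_records(
    subdomain: str, account: str, verification_guid: str
) -> List[str]:
    """Return the verification record, then the real record.

    subdomain is the label under your own domain, account is the storage
    account name, and verification_guid is the GUID shown by the portal.
    """
    verify = cname_record(f"{verification_guid}.{subdomain}", "verify.azure.com")
    real = cname_record(subdomain, f"{account.lower()}.blob.core.windows.net")
    return [verify, real]


for line in storage_domain_records(
    "azurefiddlerstore",
    "AzureFiddlerStore",
    "83d05958-fedc-437f-8db4-9dbc2fec7ae6",
):
    print(line)
```

Keeping the subdomain and the account name as separate parameters reflects the point made earlier that the subdomain is an arbitrary choice.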

Once you are happy that this is working, you can access the new CNAME in a web browser to see that it connects to Azure:

Message from Azure when no blob uri is specified

Accessing the file now over the full url (note that it is case-sensitive) of

http://azurefiddlerstore.bareweb.eu/public/Azure.png

gives a correct download:

Successful download
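As a recap of the URL shapes used in this section, here is a small Python sketch. The function names are hypothetical; the only facts taken from the walkthrough are the default endpoint pattern (account name, the blob service identifier, the windows.net domain, the container and the filename) and the observation that a custom domain simply replaces the host part.

```python
def blob_url(account: str, container: str, blob: str) -> str:
    """Default public endpoint for a blob in a public container."""
    return f"http://{account.lower()}.blob.core.windows.net/{container}/{blob}"


def custom_domain_blob_url(host: str, container: str, blob: str) -> str:
    """Same blob addressed through a custom domain CNAME.

    Only the host changes; the container and blob path stay the same
    (and the path remains case-sensitive, as noted above).
    """
    return f"http://{host}/{container}/{blob}"


print(blob_url("AzureFiddlerStore", "public", "Azure.png"))
print(custom_domain_blob_url("azurefiddlerstore.bareweb.eu", "public", "Azure.png"))
```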

Enabling CDN with a Custom Domain Name

Enabling a Custom Domain Name for the CDN is very similar to the above process. There is an additional 60 minute wait in this process while the CDN propagates the files in your blob storage.

Start off by navigating back to the Windows Azure Management Portal and the Storage Accounts page in particular. Click the account name you wish to enable the CDN for, and the “Enable CDN” button will become enabled. Click this to continue:

Click Enable CDN

This will give you a splash screen with the warning I mentioned earlier, that there is a 60 minute background task that will happen before your CDN will be usable. It is worth checking out the link provided in the popup for pricing.

60 Minute warning

Once you click “Enable”, this propagation will begin. There will be a brief pause first, with the status of the CDN being “Creating” and then “CDN Enabled”:

CDN Enabling

CDN Enabled

Now what we have is a CDN account, but it has the rather ugly domain name:

az20608.vo.msecnd.net

As soon as the CDN has propagated you will be able to access the url:

http://az20608.vo.msecnd.net/public/Azure.png

Click on the CDN entry, and at the top of the screen the “Add Domain” button will become visible again. This process is very similar to the earlier process of adding a domain to a Blob Storage account. You need to get your DNS portal open again!

Add a domain to a CDN

This will ask you for a custom domain for your CDN. Enter the url you want. I went for afscdn.bareweb.eu, but just to prove that it’s not a pattern you have to follow, I misspelt it and it all worked.

Enter CDN custom domain name

Once you have entered this, you get a prompt similar to the earlier, asking to enter the verification domain CNAME records:

CDN Domain verification prompt

Skipping ahead as this is the same process as earlier:

Enter the verification CDN CNAME

After adding the CNAME, click Validate

Successful CDN CNAME verification

Remember to add the real CNAME as well!

Add the CNAME for the CDN

Now you have successfully set up the CDN with a custom domain name. Depending on how quickly you were able to follow the steps, the 60 minutes may well not be anywhere near up yet. Indeed, the 60 minutes is a guideline, and in my experience it may be more than 60 minutes, up to 180 minutes. If you get any DNS failures, it’s worth waiting another 60 minutes before calling on MS Support.
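If you would rather script the waiting than keep refreshing a browser, a generic polling loop is enough. The Python sketch below is an assumption-laden illustration, not part of any Azure tooling: wait_until and its parameters are hypothetical names, and the probe is whatever check you supply (for example, a function that tries to fetch your CDN url and returns True on success). The sleep and clock functions are injectable so the loop can be tested without actually waiting.

```python
import time
from typing import Callable


def wait_until(
    probe: Callable[[], bool],
    timeout_s: float,
    interval_s: float,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Call probe() every interval_s seconds until it returns True.

    Returns True as soon as the probe succeeds, or False once timeout_s
    seconds have elapsed without success.
    """
    deadline = clock() + timeout_s
    while True:
        if probe():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

With the figures mentioned above, a call might use timeout_s=180 * 60 and interval_s=5 * 60, giving up after three hours and checking every five minutes.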

Eventually you will be able to resolve:

http://afscnd.bareweb.eu/public/Azure.png

Custom Compute Domain Names

Running an application in Windows Azure will give you a DNS name such as [mycloudaccount].cloudapp.net. This obviously isn’t ideal for production, where a domain name goes a long way to differentiating a brand and, as any SEO expert will say, will affect your rankings in major search engines. I will give two examples of how to achieve this, from a simple WebRole to the complex one defined in my blog post Azure: Running Multiple Web Sites in a Single WebRole.

Adding a Custom Compute domain name is actually much simpler than the above examples. This is because when you are configuring Blob Storage or CDNs, the remote services have to be configured to accept the incoming url and differentiate your request from others coming in with a custom URL, mapping back to your Blob Storage account. Since your application doesn’t have that overhead, you can achieve it in far fewer steps. I am using Staging environments in this example, but the process is, if anything, easier with production accounts.

I want to make sure that my application is available at:

http://azure.bareweb.eu

Simple Web Role

Whilst I call this a simple web role, it does include multiple Sites inside the single Role. The reason I refer to it as simple is that it runs its Sites on different Ports within that Role, so one domain name will apply across both entries. This is the ServiceDefinition.csdef:

<?xml version="1.0" encoding="utf-8"?>
<ServiceDefinition name="MultipleWebSites" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
  <WebRole name="MasterWebRole">
    <Sites>
      <Site name="Web" physicalDirectory="../MasterWebRole">
        <VirtualApplication name="child" physicalDirectory="../ChildVirtualApplication" />
        <VirtualDirectory name="assets" physicalDirectory="../AssetsVirtualDirectory" />
        <Bindings>
          <Binding name="Endpoint1" endpointName="Endpoint1" />
        </Bindings>
      </Site>
      <Site name="Second" physicalDirectory="../SecondMasterSite">
        <VirtualApplication name="child" physicalDirectory="../ChildVirtualApplication" />
        <VirtualDirectory name="assets" physicalDirectory="../AssetsVirtualDirectory" />
        <Bindings>
          <Binding name="Endpoint2" endpointName="Endpoint2" />
        </Bindings>
      </Site>
    </Sites>
    <Endpoints>
      <InputEndpoint name="Endpoint1" protocol="http" port="80" />
      <InputEndpoint name="Endpoint2" protocol="http" port="81" />
    </Endpoints>
    <Imports>
      <Import moduleName="Diagnostics" />
    </Imports>
  </WebRole>
</ServiceDefinition>

Firstly, deploy your service to Windows Azure as per the normal process. This will give you a DNS endpoint.

A started WebRole with DNS Name

In my case this was:

http://259c6a7dae974ba5bf80acd0e9aa81a1.cloudapp.net

Going to this in a browser with the original configuration (of two web sites in one web role on port :80 and :81) gave the following:

Built in DNS name on port 80

Built in DNS name on port 81

Now to make this work with our domain (azure.bareweb.eu), we simply go to our DNS portal and add a CNAME of azure.bareweb.eu pointing to

259c6a7dae974ba5bf80acd0e9aa81a1.cloudapp.net

Define CNAME for Azure Compute

Now, once we have saved this and our DNS provider has propagated the change, we can go to

http://azure.bareweb.eu/

and

http://azure.bareweb.eu:81/

Custom Domain Name azure.bareweb.eu port 80

Custom Domain Name azure.bareweb.eu port 81

Composite WebRole

The composite WebRole takes the above a step further, and uses the Host Header method of differentiating the Websites within a single Web Role. This means that each Site will run on a separate domain name.

Deploy the site as you have done before, but with the ServiceDefinition.csdef as below:

<?xml version="1.0" encoding="utf-8"?>
<ServiceDefinition name="MultipleWebSites" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
  <WebRole name="MasterWebRole">
    <Sites>
      <Site name="Web" physicalDirectory="../MasterWebRole">
        <VirtualApplication name="child" physicalDirectory="../ChildVirtualApplication" />
        <VirtualDirectory name="assets" physicalDirectory="../AssetsVirtualDirectory" />
        <Bindings>
          <Binding name="Endpoint1" endpointName="Endpoint1" hostHeader="azure.bareweb.eu" />
        </Bindings>
      </Site>
      <Site name="Second" physicalDirectory="../SecondMasterSite">
        <VirtualApplication name="child" physicalDirectory="../ChildVirtualApplication" />
        <VirtualDirectory name="assets" physicalDirectory="../AssetsVirtualDirectory" />
        <Bindings>
          <Binding name="Endpoint2" endpointName="Endpoint1" hostHeader="azuresecond.bareweb.eu" />
        </Bindings>
      </Site>
    </Sites>
    <Endpoints>
      <InputEndpoint name="Endpoint1" protocol="http" port="80" />
    </Endpoints>
    <Imports>
    </Imports>
  </WebRole>
</ServiceDefinition>

You will note that there is only a single InputEndpoint, and that where it is referenced, it uses the hostHeader attributes of

azure.bareweb.eu

and

azuresecond.bareweb.eu

Once we have deployed this to Windows Azure, it will give us a DNS name. In my case, this was:

a8b07a597f614059a227d946e25bcae4.cloudapp.net

Note that we can’t access this URL directly anymore. Doing so will cause an error “Service Unavailable” since there is nothing set to run without a specific host header.

We can use this as the “points to” value of two new CNAME records:

Setting up CNAMES for composite web role

Now we can navigate to our web sites with these DNS CNAMES:

Accessing Azure.BareWeb.eu

Accessing azuresecond.bareweb.eu
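The host header behaviour described above can be modelled in a few lines of Python. This is a simplified illustration, not how IIS or Windows Azure implements bindings; the BINDINGS table and function names are hypothetical, with the host names and site names taken from the ServiceDefinition.csdef above. A request whose Host header matches a binding is served by that site, and anything else (such as the raw cloudapp.net name) gets “Service Unavailable”.

```python
from typing import Dict, Optional, Tuple

# Host header -> Site name, mirroring the two bindings in the csdef above.
BINDINGS: Dict[str, str] = {
    "azure.bareweb.eu": "Web",
    "azuresecond.bareweb.eu": "Second",
}


def select_site(host_header: str, bindings: Dict[str, str] = BINDINGS) -> Optional[str]:
    """Pick the site for a request, ignoring any port and letter case."""
    host = host_header.split(":")[0].strip().lower()
    return bindings.get(host)


def respond(host_header: str) -> Tuple[int, str]:
    """Return an (HTTP status, body) pair for the given Host header."""
    site = select_site(host_header)
    if site is None:
        return 503, "Service Unavailable"
    return 200, site


print(respond("azure.bareweb.eu"))
print(respond("Azure.BareWeb.eu"))
print(respond("a8b07a597f614059a227d946e25bcae4.cloudapp.net"))
```

Because both sites share the single port 80 endpoint, the Host header is the only thing that tells them apart, which is why each site needs its own CNAME.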

Conclusion

Windows Azure has a number of ways of modifying the domain name endpoints of your application. With little effort you can completely brand the application to your own business name, removing any mention of core.windows.net or cloudapp.net from your end user experience.

I hope you have found this useful,

Wes Yanaga announced New Windows Azure and PHP Tutorials in a 1/18/2011 post to the US ISV Evangelism blog:

If you’re a PHP developer and new to Windows Azure, here are some updated tutorials and links to several tools.

- New Tutorials posted on http://azurephp.interoperabilitybridges.com/,

- Windows Azure tools for PHP updated to align with Windows Azure SDK1.3

- Windows Azure tools for PHP get an update and refreshed content by jccim

- The 3 following tools have been updated

- These updates are Windows Azure 1.3 SDK compatibility updates.

Important to know: Only the Windows Azure Companion leverages the “Full IIS” mode for now. The Cmd Line and Eclipse plug-in still deploy using the Hosted Web Core. It will come with next update – date still TBD.

- If you haven’t had a chance to try the Windows Azure platform – click this link for a 30 day free trial and Use Promo Code: DPWE01

- Sign up for Microsoft Platform Ready to get access to technical, application certification testing and marketing support.

Samara Lynn reported Microsoft Dynamics CRM Poised for Cloud Battle in a 1/18/2010 article for PC Magazine:

On Monday, Microsoft chief executive Steve Ballmer and Kirill Tatarinov, corporate vice president of Microsoft Business Solutions, officially unveiled the new Microsoft Dynamics CRM online service at a launch event in Redmond. Microsoft Dynamics CRM Online is now available to businesses worldwide at a promotional price of $34 per user, per month. The online CRM offering is based on Microsoft's on-premise CRM solution, Microsoft Dynamics CRM 2011.

The release of Dynamics CRM online is yet another aggressive foray the Redmond software giant is making into the cloud space. Microsoft is repeatedly hitting home three key factors of its new CRM online service, undoubtedly to distinguish it from competing CRM offerings from Salesforce.com and Oracle. These factors are:

- The delivery of a familiar experience to sales, service, and marketing users via a next-generation native Microsoft Outlook client, browser, and mobile devices.

- An intelligent experience through real-time dashboards, inline business intelligence, and guided process dialogs.

- A connected experience through Windows Azure integration, cloud development, and Microsoft SharePoint capabilities through the new Microsoft Dynamics Marketplace; all as ways for customers and partners to configure and tailor Microsoft Dynamics CRM to meet specific business needs. [Emphasis added.]

Microsoft is not being subtle in its attempts to snatch market share away from Salesforce.com and Oracle. Customers of either of those services that switch to Microsoft Dynamics CRM between now and June 30, 2011 qualify for the Cloud CRM for Less offer. Customers will receive up to $200 per user, applicable to services such as migrating data or customizing the solution.

<Return to section navigation list>

Visual Studio LightSwitch

<Return to section navigation list>

Windows Azure Infrastructure

Bill Zack reported Microsoft Survey on Cloud Computing Released in a 1/19/2011 post to his Ignition Showcase blog:

Microsoft has just released a study of how 2,000 IT Decision makers are adopting and using Cloud Computing. If you are a software company that is interested in the market for your products and services and the impact of the cloud you need to read this study.

The study identifies the top cities across 10 markets including: New York, Philadelphia, Boston, Atlanta, Washington DC, Los Angeles, Chicago, Dallas, San Francisco, and Detroit. It ranks them in terms of cloud-friendliness of enterprises and small businesses in those markets.

Some highlights from the study:

- Businesses of any size are highly reliant on partners to bring cloud into their organizations.

- Among enterprise-sized companies Boston ranks as the top cloud-friendly city while Washington DC ranks first among small-to-midsize companies.

- 54% of the national respondents say that they are hiring as a result of cloud services.

- Small-to-medium businesses have not fully embraced the cloud – yet.

See here for more details and how to get the study.

Derrick Harris reported Big Data, ARM and Legal Concerns on the Rise in Q4 in a 1/19/2011 post to GigaOm’s Structure blog:

Some might call the fourth quarter in the infrastructure space transformative. The rise of ARM-based processing suggests the days of x86 dominance might be coming to an end, while the Amazon Web Services-WikiLeaks controversy cast new light on the legal aspects of cloud computing.

Big data got bigger, meanwhile, as the Hadoop ecosystem expanded, and amid all these cutting-edge technologies, two archaic topics — Novell and Java — proved they aren’t going anywhere soon. From giants like VMware and IBM to smaller startups like Nimbula and Abiquo, news came from all corners of the Infrastructure market during the fourth quarter. Let’s take a look at some of the most noteworthy trends:

- The Amazon-WikiLeaks controversy shed light on the fact that many pundits appear oblivious to the legal aspects of cloud computing, which leads to overreaction when it appears that cloud providers are acting questionably. The reality is that providers are almost certainly acting within the rights granted to them by their terms of services, as well as by the Constitution. If it comes down to a decision between potentially facing legal action or removing questionably legal (or moral) content, it’s kind of crazy to expect cloud providers — especially publicly traded ones such as Amazon — to do anything other than what’s prudent.

- The x86 processor architecture appears down, but certainly not out. Alternative CPU architectures (particularly ARM) and GPUs are squeezing x86 from all directions, and are beginning to steal workloads in traditionally x86-dominant fields, such as HPC. However, it will take years before ARM processors or GPUs can ever really make a dent in x86 market share (or, in some cases, will even be on the market). So chipmakers such as Intel and AMD have some time to get creative if they’re determined to hitch their wagons to x86 for the foreseeable future.

- Platform as a service (PaaS) is no longer just infrastructure as a service’s (IaaS) younger, cooler brother. The acquisitions of Makara and Heroku by Red Hat and Salesforce.com, respectively, as well as VMware’s hosted PaaS project, illustrate that PaaS is a legitimate IT delivery model, even if mainstream adoption is still a few years away. Of course, anyone following the evolution of VMware’s vFabric or Microsoft Windows Azure might have realized this a while ago.

- Analysts and investors don’t really understand cloud computing, web infrastructure or the related data-center operation market. From complaints about web companies such as Google spending hundreds of millions on CAPEX to investors bailing because Equinix had a lackluster quarter, the fourth quarter illustrated reactionary thinking that’s contrary to the facts. Internet traffic and demand for data center space both keep growing, and will continue to do so as cloud computing actually starts catching on among mainstream businesses. All that space will make money at some point.

- Oracle really doesn’t care about open source. Its actions within the various Java governance bodies have done nothing but produce hurt feelings and flat-out animosity, which are only compounded by its ongoing lawsuit against Google. Whether Oracle is technically correct in either matter isn’t really the issue as far as open source advocates are concerned; they’re more concerned with things like openness and cooperation. Oracle, on the other hand, cares about making money. The question is whether it can do so without broad Java community support.

- The Hadoop ecosystem shows no signs of slowing its growth, but it’s unclear what will come of the web of partnerships and integrations. There are a few competing approaches to selling Hadoop shaping up, and there’s no guarantee that Cloudera’s partner-centric strategy, for example, will prevail against IBM’s Hadoop-as-application strategy. What’s certain, however, is that even companies nowhere near the cutting edge will utilize Hadoop at some point, because it’s becoming too ubiquitous to avoid forever.

- We’re a long way off from where we need to be on Green IT. Many energy-efficiency players still focus on saving money rather than actually reducing energy use, and even generally accepted notions (i.e., cloud computing is a net positive in terms of energy use) are coming under increased scrutiny. Startups selling software for monitoring energy use are raising money but are not necessarily attracting customers. Might it take a string of brownouts or governmental action to spur action toward truly green IT?

For more analysis of these events and a look forward into the next 18 to 24 months, read my latest report at GigaOM Pro.


Tim Huckaby (left) interviewed Wally McClure (right) on 1/11/2011 in a 00:04:21 Wally McClure and Tim Huckaby Bytes by MSDN video segment:

Do you believe in the Cloud? Wallace McClure, Founder and Architect of Scalable Development, Inc., does. His customers are extremely interested in the value and economies of scale that Cloud Computing, and more specifically, Windows Azure can bring. Building out an infrastructure that supports your web service or application can be expensive, complicated and time consuming. Or you could look to the Microsoft cloud. The Windows Azure platform is a flexible cloud–computing platform that lets you focus on solving business problems and addressing customer needs. Wally talks about all this, and more, in this interview with Tim Huckaby, and in his Windows Azure podcasts.

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

Jon Brodkin (@jbrodkin) reported Microsoft, HP selling $2M data warehouse appliance on 1/19/2011 in a NetworkWorld article via ComputerWorld:

Microsoft and Hewlett-Packard are teaming up to deliver a $2 million data warehouse appliance and four other hardware/software products in a bid to outshine recent moves by Oracle and IBM.

The HP Enterprise Data Warehouse Appliance is available this week starting at nearly $2 million, which does not include the price of Microsoft software, HP and Microsoft said. The big appliance, advertised as 200 times faster and 10 times more scalable than traditional SQL Server deployments, will include at least two racks of servers and storage, built around the HP ProLiant DL980 systems. Microsoft's SQL Server 2008 R2 Parallel Data Warehouse will be licensed separately.

A joint announcement by HP and Microsoft on Wednesday continues a $250 million partnership unveiled a year ago, and potentially gives the vendors a bigger stake in the market for integrated hardware-and-software appliances designed to run business applications. The moves could be seen as countering Oracle's Exadata database machine, a result of the Sun acquisition; and IBM's acquisition of Netezza, a maker of data warehouse appliances.

But Microsoft SQL Server general manager Doug Leland says the Microsoft/HP partnership is unique because it combines "the best software company on the planet with the best hardware company." Microsoft does not have any other partnerships "on a similar scale, in this particular arena," he says.

The appliances delivered by HP and Microsoft target a wide range of "application services such as business intelligence, data warehousing, online transaction processing and messaging," the vendors say in a press release. "The jointly engineered appliances, and related consulting and support services, enable IT to deliver critical business applications in as little as one hour, compared with potentially months needed for traditional systems."

The other systems won't be nearly as pricey as the HP Enterprise Data Warehouse Appliance.

The HP Business Decision Appliance, a business intelligence system built on top of an HP ProLiant DL380 server with eight cores, will start at nearly $28,000, not including the cost of SQL Server 2008 R2 and SharePoint 2010. The appliance, available today from HP and so-called HP/Microsoft Frontline channel partners, comes with three years of hardware and software support services. The three years of services will also be applied to the Enterprise Data Warehouse Appliance and a third product called the HP E5000 Messaging System.

The messaging system will be available in March and start at $36,000, not including the price of Microsoft Exchange Server 2010.

The new systems are rounded out with the HP Business Data Warehouse Appliance, designed for small and midsized businesses; and the HP Database Consolidation Appliance, which uses Hyper-V and SQL Server 2008 R2 to consolidate hundreds of databases into a smaller virtual environment. The Business Data Warehouse Appliance will be available in June and the Database Consolidation Appliance will be available in the second half of 2011.

HP and Microsoft said they are aiming to make the appliances modular enough to be adapted to private cloud networks and public cloud services such as Windows Azure. [Emphasis added.]

Dana Kaufman of the SQL Server Team posted HP Business Decision Appliance – A Closer Look at Backup and Availability Features on 1/19/2011:

Today we announced the availability of the HP Business Decision Appliance. This is the culmination of many months of engineering work between HP and Microsoft to develop a software/hardware solution to enable easy access to self-service business intelligence technology. The solution is designed for medium size businesses and departmental enterprise deployments.

The appliance is optimized for Windows Server 2008 R2, SQL Server 2008 R2, SharePoint 2010 and PowerPivot for SharePoint. One of our goals was for a simplified installation and configuration. Talking with customers, we found that for some businesses, deploying the above software stack took many months and required outside experts. We created a single installation program that prompts the users for a small set of questions and then installs and configures SharePoint, SQL Server and PowerPivot. From power up to a running SharePoint server takes about an hour in most cases.

I wanted to share with you a few items you might not have picked up from the announcements. The appliance installs SharePoint 2010 and PowerPivot for SharePoint as a Single Server Farm Installation. That means that the appliance is completely self-contained. SQL Server and all the SharePoint services are installed on this single appliance. The only external software requirement is that the customer has an Active Directory domain available. The appliance is joined to the domain as part of the appliance installation.

Because the solution is self-contained, we designed a good deal of redundancy into the appliance. The hardware has dual-power supplies, dual fans and multiple gigabit network cards. There are 8 SAS 300GB hard disks. Two of them are used for a mirrored system disk that contains the operating system and the recovery partition. The other 6 hard disks are configured in a RAID 5 array where the data and backup partitions are located. This disk configuration allows the appliance to survive a physical disk failure.
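The disk layout described above implies some simple capacity arithmetic. The following is a minimal sketch, not anything taken from HP or Microsoft documentation: the function names are hypothetical, and the figures are nominal (raw) capacities that ignore formatting overhead and the unstated split between the data and backup partitions.

```python
# Nominal usable capacity for the disk layout described in the post:
# 2 x 300GB mirrored (RAID 1) system disks and 6 x 300GB in RAID 5.
# Function names are illustrative assumptions, not a vendor API.

def raid1_usable_gb(disk_gb: int, disks: int = 2) -> int:
    """A mirror stores the same data on every disk, so usable space is one disk."""
    if disks < 2:
        raise ValueError("RAID 1 needs at least two disks")
    return disk_gb


def raid5_usable_gb(disk_gb: int, disks: int) -> int:
    """RAID 5 spends the equivalent of one disk on parity."""
    if disks < 3:
        raise ValueError("RAID 5 needs at least three disks")
    return disk_gb * (disks - 1)


DISK_GB = 300
system_gb = raid1_usable_gb(DISK_GB, disks=2)  # mirrored system disk
data_gb = raid5_usable_gb(DISK_GB, disks=6)    # data + backup partitions

print(f"System volume: {system_gb} GB nominal")
print(f"Data/backup array: {data_gb} GB nominal")
```

Under these assumptions, the mirrored pair yields 300GB and the six-disk RAID 5 array yields 1,500GB before formatting. Either array tolerates the loss of a single disk, which matches the post's claim that the appliance can survive a physical disk failure.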

The appliance also has built-in backup and recovery capabilities. An Appliance Management Console is added to SharePoint Central Administration during installation. In the Appliance Management Console, you will find options to back up the appliance and perform a factory reset. The appliance backup uses Windows Server Backup to capture a complete image of the running server. Backups are stored in the Backup partition. Windows Backup can store multiple backups on the partition and will overwrite the older backup images when space is needed for the current backup. The Appliance Management Console also has a screen that allows you to view the appliance backup history.
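The "overwrite the older backup images when space is needed" behavior can be pictured as oldest-first eviction. The toy model below is only an illustration of that idea: it is not how Windows Server Backup is implemented, and the class name, labels and sizes are all hypothetical.

```python
from collections import deque


class BackupPartition:
    """Toy model of a fixed-size partition that evicts the oldest images first."""

    def __init__(self, capacity_gb: int) -> None:
        self.capacity_gb = capacity_gb
        self.images: deque[tuple[str, int]] = deque()  # (label, size_gb), oldest first

    def used_gb(self) -> int:
        return sum(size for _, size in self.images)

    def store(self, label: str, size_gb: int) -> list[str]:
        """Store a new image and return the labels of any images overwritten."""
        if size_gb > self.capacity_gb:
            raise ValueError("image is larger than the partition")
        evicted = []
        # Drop the oldest images until the new one fits.
        while self.used_gb() + size_gb > self.capacity_gb:
            old_label, _ = self.images.popleft()
            evicted.append(old_label)
        self.images.append((label, size_gb))
        return evicted


partition = BackupPartition(capacity_gb=100)  # hypothetical partition size
partition.store("monday", 40)
partition.store("tuesday", 40)
overwritten = partition.store("wednesday", 40)  # forces out the oldest image
print("Overwritten:", overwritten)
print("Retained:", [label for label, _ in partition.images])
```

The point of the sketch is that the number of retained backups depends on image size relative to partition size, so the backup history shown in the console will shrink as the appliance's data grows.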

NOTE: The onboard backup is meant as an interim backup solution. As your needs grow, you should consider moving to network backup storage or using a product like System Center Data Protection Manager, which has features to back up Windows Server 2008, SharePoint 2010, and SQL Server 2008 R2.

Factory reset lets you re-image the appliance back to the factory state. The appliance is returned to the state it was in when leaving the manufacturer. This re-initializes all of the hard drives and extracts and configures the boot image onto the disks. This allows you to re-image the box if you make a mistake or want to restart the configuration from the original state.

NOTE: Yes, it’s cool, but be careful with Factory Reset if you have used the onboard backup capabilities! Factory Reset re-initializes all drives, which means it will erase any backups that you have done previously to the backup partition.

[Screenshot: Appliance Management Console]

As you can see, the appliance has a good deal of availability features right out of the box. In future posts I will cover additional features we added to the appliance and provide insight into some of the configuration changes we make to optimize the software running on the appliance.

Learn more about the HP Business Decision Appliance here and buy the appliance from HP here.

Dana Kaufman, Principal Program Manager, SQL Server Appliance Engineering Team

Twitter: www.twitter.com/dskaufman1

Britt Johnston of the SQL Server Appliance Team posted SQL Server Appliances – A Workload-based Appliance Design Philosophy on 1/18/2011:

I run the Appliance Engineering team for SQL Server. One of the questions I get asked most often about building appliances is how we go about designing a new appliance. We don’t start with a cool piece of hardware and figure out what we might be able to build out of it; rather, we start by understanding what an appliance needs to do and work our way to choosing the right hardware.

Our general approach is what I like to call “workload-based appliance design” and I thought I would share some of the thinking we have developed as part of engineering some of the new SQL Server appliances you may have heard about already, such as the HP Enterprise Data Warehouse (HPEDW) and the HP Business Decision Appliance (HPBDA), and others you may not know about that we are just starting to talk about. This is not rocket science, but it is good engineering and allows us to work with our key hardware partners to build general purpose appliances at a lower total cost than you might expect given their capabilities.

W is for Workload: Let’s assume that we know we want to build an appliance for a specific workload and have identified that workload. For this discussion let’s choose the “Self-service BI” or SSBI workload targeting small to medium businesses or enterprise departments that want to use PowerPivot. From this starting point our engineering effort kicks off by gaining a deep understanding of the workload specifics. We run the workload as we understand it on real hardware, which we call a design proxy, varying many parameters to understand workload variability. We talk with customers, consultants, MVPs, our own SQL CAT experts and the developers of the products we are thinking about using. From that collected expert knowledge we build a specific model for the workload, which in the case of SSBI evolved into an automated workload we could run and measure. It can be tough to agree on general workload characteristics, but it is critical to gain that level of understanding so specific tools can be built for the performance and testing work needed later.

A is for Architecture: After a workload is understood, we survey which approaches or system architectures are appropriate for the target workload. You can imagine for SSBI we looked at best practices related to PowerPivot, SharePoint, and SQL Server. We explored running the workload on the metal in different mixes of physical servers and in VMs, splitting it up into multiple virtual servers. We also talked with customers about what capabilities they expected to find in a complete solution and that led us to take an “ecosystem” approach, bringing all the components together into a single server. To narrow our approach, we ran an extensive battery of tests to see if we could really get the architecture to work well.

S is for Software: Once we have an approach we believe is sound, we start looking at how to build the solution and at the required software components. There are many software components required for an SSBI workload as we have defined it beyond the basic products, and determining exactly how to combine them takes some effort. Deciding what to enable by default and how to configure all the components so they work well together takes a great deal of iteration. I like to think of this process as learning how to set the 10,000 knobs that exist in the software, or at least establishing an initial setting. Reviewing those decisions with workload experts is a key activity at this stage of the process and often we find that the “best solution” is not necessarily consistent with common “best practice”. At this point we are starting to add considerable value to the solution, value that is difficult for any single IT organization to create since we are working directly with the world’s leading experts on all the components being utilized.

H is for Hardware: The final step, selecting specific hardware, is an iterative process. For example, the SSBI workload is especially memory intensive, so selecting the proper amount of RAM for the system was an important decision. We bought our engineering prototype hardware with the maximum amount of available RAM, but through performance tuning and optimization we were able to reduce the total memory to 96GB without impacting overall performance of our workload. Again the resulting appliance hardware contains the knowledge of many experts: for example, we reviewed the configuration of our DIMMs with the engineers who designed the mainboard we are using, and we reviewed the RAID configuration with the team that built the RAID controller. This final stage is marked by rapid iteration of both hardware and software configuration and by extensive performance and reliability testing to reach a final configuration, which we capture and deliver with our hardware partners as an appliance.

When you think about the SQL Server appliance products, hopefully this will provide some context for how we create those products – our workload-centric engineering approach is important in making sure we can deliver a compelling product at a low total cost. And when you need to explain why you think a specific SQL Server appliance might be a good solution for your organization’s workload needs, remember: SQL Server Appliances have nothing to do with laundry, but we do use Workload, Architecture, Software, Hardware (WASH) as the basis for our engineering design process.

Britt Johnston, Principal Group Manager, SQL Server Appliance Engineering Team

Twitter: www.twitter.com/brittjohnston