Windows Azure and Cloud Computing Posts for 1/1/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

• Updated 1/2/2011 with articles marked •

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control and Service Bus

- Windows Azure Virtual Network, Server App-V, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services


Azure Blob, Drive, Table and Queue Services

• Morebits continued a Windows Azure series with a Building Windows Azure Service Part 5: Worker Role Background Tasks Handler post to MSDN’s Technical Notes blog on 1/1/2011:

In this post you will create a worker role to read work items posted to a queue by the web role front-end. The worker role performs these tasks:

- Extract the information about the guest book entry from the message queued by the web role in the Queue Storage.

- Retrieve the user entry from the Table Storage.

- Fetch the associated image from the Blob Storage, create a thumbnail and store it as a blob. [Emphasis added.]

- Finally, update the entry to include the URL of the generated thumbnail.

To create the worker role

- In Solution Explorer, right-click the Roles node in the GuestBook project.

- Select Add and click New Worker Role Project.

- In the Add New Role Project dialog window, select the Worker Role category and choose the Worker Role template for the language of your choice.

- In the name box, enter GuestBook_WorkerRole and click Add.

Figure 7 Creating Worker Role Project

- In Solution Explorer, right-click the GuestBook_WorkerRole project and select Add Reference.

- Switch to the Projects tab, select the GuestBookData project and click OK.

- In Solution Explorer, right-click the GuestBook_WorkerRole project and select Add Reference.

- Switch to the .NET tab, select the System.Drawing component and click OK.

- Repeat the procedure in the previous steps to add a reference to the storage client API assembly, this time choosing the Microsoft.WindowsAzure.StorageClient component instead.

- In the GuestBook_WorkerRole project, open the WorkerRole.cs file. Replace its content with the following code.

    using System;
    using System.Collections.Generic;
    using System.Diagnostics;
    using System.Linq;
    using System.Text;
    using System.Threading;
    using Microsoft.WindowsAzure.Diagnostics;
    using Microsoft.WindowsAzure.ServiceRuntime;
    using System.Drawing;
    using System.IO;
    using GuestBook_Data;
    using Microsoft.WindowsAzure;
    using Microsoft.WindowsAzure.StorageClient;

    namespace GuestBook_WorkerRole
    {
        public class WorkerRole : RoleEntryPoint
        {
            private CloudQueue queue;
            private CloudBlobContainer container;

            public override void Run()
            {
                Trace.TraceInformation("Listening for queue messages...");

                while (true)
                {
                    try
                    {
                        // retrieve a new message from the queue
                        CloudQueueMessage msg = queue.GetMessage();
                        if (msg != null)
                        {
                            // parse message retrieved from queue
                            var messageParts = msg.AsString.Split(new char[] { ',' });
                            var uri = messageParts[0];
                            var partitionKey = messageParts[1];
                            var rowkey = messageParts[2];
                            Trace.TraceInformation("Processing image in blob '{0}'.", uri);

                            // download original image from blob storage
                            CloudBlockBlob imageBlob = container.GetBlockBlobReference(uri);
                            MemoryStream image = new MemoryStream();
                            imageBlob.DownloadToStream(image);
                            image.Seek(0, SeekOrigin.Begin);

                            // create a thumbnail image and upload into a blob
                            string thumbnailUri = String.Concat(Path.GetFileNameWithoutExtension(uri), "_thumb.jpg");
                            CloudBlockBlob thumbnailBlob = container.GetBlockBlobReference(thumbnailUri);
                            thumbnailBlob.UploadFromStream(CreateThumbnail(image));

                            // update the entry in table storage to point to the thumbnail
                            var ds = new GuestBookEntryDataSource();
                            ds.UpdateImageThumbnail(partitionKey, rowkey, thumbnailBlob.Uri.AbsoluteUri);

                            // remove message from queue
                            queue.DeleteMessage(msg);
                            Trace.TraceInformation("Generated thumbnail in blob '{0}'.", thumbnailBlob.Uri);
                        }
                        else
                        {
                            System.Threading.Thread.Sleep(1000);
                        }
                    }
                    catch (StorageClientException e)
                    {
                        Trace.TraceError("Exception when processing queue item. Message: '{0}'", e.Message);
                        System.Threading.Thread.Sleep(5000);
                    }
                }
            }

            public override bool OnStart()
            {
                DiagnosticMonitor.Start("DiagnosticsConnectionString");

                // Restart the role upon all configuration changes
                RoleEnvironment.Changing += RoleEnvironmentChanging;

                // Set the global configuration setting publisher for the storage account, which
                // will be called when the account access keys are updated in the service configuration file.
                // Calling SetConfigurationSettingPublisher in OnStart method is important otherwise the
                // system raises an exception when FromConfigurationSetting is called.
                CloudStorageAccount.SetConfigurationSettingPublisher((configName, configSetter) =>
                {
                    try
                    {
                        configSetter(RoleEnvironment.GetConfigurationSettingValue(configName));
                    }
                    catch (RoleEnvironmentException e)
                    {
                        Trace.TraceError(e.Message);
                        System.Threading.Thread.Sleep(5000);
                    }
                });

                // Create a new instance of a CloudStorageAccount object from a specified configuration setting.
                // This method may be called only after the SetConfigurationSettingPublisher
                // method has been called to configure the global configuration setting publisher.
                // You can call the SetConfigurationSettingPublisher method in the OnStart method
                // of the worker role before calling FromConfigurationSetting.
                // If you do not do this, the system raises an exception.
                var storageAccount = CloudStorageAccount.FromConfigurationSetting("DataConnectionString");

                // initialize blob storage
                CloudBlobClient blobStorage = storageAccount.CreateCloudBlobClient();
                container = blobStorage.GetContainerReference("guestbookpics");

                // initialize queue storage
                CloudQueueClient queueStorage = storageAccount.CreateCloudQueueClient();
                queue = queueStorage.GetQueueReference("guestthumbs");

                Trace.TraceInformation("Creating container and queue...");

                bool storageInitialized = false;
                while (!storageInitialized)
                {
                    try
                    {
                        // create the blob container and allow public access
                        container.CreateIfNotExist();
                        var permissions = container.GetPermissions();
                        permissions.PublicAccess = BlobContainerPublicAccessType.Container;
                        container.SetPermissions(permissions);

                        // create the message queue
                        queue.CreateIfNotExist();
                        storageInitialized = true;
                    }
                    catch (StorageClientException e)
                    {
                        if (e.ErrorCode == StorageErrorCode.TransportError)
                        {
                            Trace.TraceError("Storage services initialization failure. "
                                + "Check your storage account configuration settings. If running locally, "
                                + "ensure that the Development Storage service is running. Message: '{0}'", e.Message);
                            System.Threading.Thread.Sleep(5000);
                        }
                        else
                        {
                            throw;
                        }
                    }
                }

                return base.OnStart();
            }

            private void RoleEnvironmentChanging(object sender, RoleEnvironmentChangingEventArgs e)
            {
                if (e.Changes.Any(change => change is RoleEnvironmentConfigurationSettingChange))
                    e.Cancel = true;
            }

            private Stream CreateThumbnail(Stream input)
            {
                var orig = new Bitmap(input);
                int width;
                int height;

                if (orig.Width > orig.Height)
                {
                    width = 128;
                    height = 128 * orig.Height / orig.Width;
                }
                else
                {
                    height = 128;
                    width = 128 * orig.Width / orig.Height;
                }

                var thumb = new Bitmap(width, height);
                using (Graphics graphic = Graphics.FromImage(thumb))
                {
                    graphic.InterpolationMode = System.Drawing.Drawing2D.InterpolationMode.HighQualityBicubic;
                    graphic.SmoothingMode = System.Drawing.Drawing2D.SmoothingMode.AntiAlias;
                    graphic.PixelOffsetMode = System.Drawing.Drawing2D.PixelOffsetMode.HighQuality;
                    graphic.DrawImage(orig, 0, 0, width, height);

                    var ms = new MemoryStream();
                    thumb.Save(ms, System.Drawing.Imaging.ImageFormat.Jpeg);
                    ms.Seek(0, SeekOrigin.Begin);
                    return ms;
                }
            }
        }
    }

- Save and close the WorkerRole.cs file.
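The two pieces of pure logic in the worker role, splitting the queue message and sizing the thumbnail, can be tried out without any storage account. The following is a minimal sketch, not part of the original post: the `WorkItemLogic` class and its method names are hypothetical, and the clamp to a minimum of one pixel is an added assumption that the original `CreateThumbnail` method does not make.

```csharp
using System;

// Hypothetical helper, not part of the original post: it isolates the two
// pure pieces of logic in the worker role so they can be tested offline.
public static class WorkItemLogic
{
    // Splits a queue message laid out as "blobName,partitionKey,rowKey",
    // the layout the Run method expects from the web role.
    public static (string BlobName, string PartitionKey, string RowKey) ParseMessage(string message)
    {
        if (message == null) throw new ArgumentNullException(nameof(message));

        string[] parts = message.Split(',');
        if (parts.Length < 3)
            throw new FormatException("Expected 'blobName,partitionKey,rowKey'.");

        return (parts[0], parts[1], parts[2]);
    }

    // Same sizing rule as CreateThumbnail: the longer side becomes maxSide
    // pixels and the other side is scaled with integer division.
    // Assumption: the short side is clamped to at least 1 pixel, which the
    // original code does not do.
    public static (int Width, int Height) FitThumbnail(int origWidth, int origHeight, int maxSide = 128)
    {
        if (origWidth <= 0 || origHeight <= 0)
            throw new ArgumentException("Image dimensions must be positive.");

        if (origWidth > origHeight)
            return (maxSide, Math.Max(1, maxSide * origHeight / origWidth));

        return (Math.Max(1, maxSide * origWidth / origHeight), maxSide);
    }
}
```

With these helpers, a 640 x 480 image maps to a 128 x 96 thumbnail, and a very wide image such as 2000 x 1 no longer produces a zero-height bitmap.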

To configure the storage account used by the worker role

In order for the worker role to use Windows Azure storage services, you must provide account settings as described next.

- In Solution Explorer, expand the Roles node in the GuestBook project.

- Double-click GuestBook_WorkerRole to open the properties for this role and select the Settings tab.

- Click Add Setting.

- In the Name column, enter DataConnectionString.

- In the Type column, from the drop-down list, select ConnectionString.

- In the Value column, from the drop-down list, select Use development storage.

Figure 8 Configuring Storage Account For Worker Role

- Click OK.

- Press Ctrl+S to save your changes.

For related topics, see the following posts.

- Building Windows Azure Service Part1: Introduction

- Building Windows Azure Service Part2: Service Project

- Building Windows Azure Service Part3: Table Storage

- Building Windows Azure Service Part4: Web Role UI Handler

- Building Windows Azure Service Part6: Service Configuration

- Building Windows Azure Service Part7: Service Testing

This application is similar to the original Windows Azure Queue and Blob Storage application described in my Cloud Computing with the Windows Azure Platform book.

• Ghaus Iftikhar Nakodari described on 1/1/2011 how to Backup To Azure Blob Storage via Windows Explorer Virtual Network Drive with Gladinet’s Cloud Desktop – Starter Edition:

We covered a way to map Windows Live SkyDrive as Windows Explorer Virtual Network Drive some months back. The app we used was Gladinet and this week they have updated it to support Windows Azure Blob Storage as well, making it easier to access the Windows Azure storage from within Windows Explorer.

After Gladinet is installed, you will be shown a new dialog window to set up the initial settings. Here, select ‘I just want to use the free starter edition’ and hit Next.

The Starter edition allows you to transfer a maximum of 1000 files in one go, i.e., per task. This does not mean that you cannot transfer more than 1000 files; if you have more than 1000 files, you will have to break them into multiple tasks.

In the next step you are asked to enter your email address, but it is optional. Now it will ask you to select the storage that you want to mount as a virtual drive; select Azure Blob Storage and hit Next.

In the final step of the initial setup, it will ask you to verify the settings and allow you to change any of them, such as the virtual drive letter and name, the cache location, or whether to enable a proxy.

Once you are done with the initial setup, open the Azure Blob Storage folder inside the Gladinet virtual drive. Doing this will open a new dialog window asking for the Azure Blob Storage Account Name (EndPoint) and the Primary Access Key.

After you have entered the credentials, hit Next and it will download a plugin and automatically set it up. Once the process is complete you will be shown a backup dialog window asking you to back up folders, pictures, music, videos, and documents. If you don’t want to back up anything at the moment, you can cancel it and later create a new task from the system tray menu.

For demonstration, we have selected the Backup My Music option. In the next step you will be asked which file types you want to back up. Since Gladinet is a flexible app, it allows you to add any new file type if it is not already included in the list.

When done, hit Next and you will be shown the preview of all files that will be backed up. You can choose to exclude some folders and their subfolders.

In the next step you will be asked to choose the online storage where you want the files to be backed up. Since we have added only Azure Blob Storage at the time, there is only one option listed below. Check it and hit Next.

Now in the final step you will be shown the overview of the backup. After confirmation hit Finish and Gladinet will start backing up the files and folders in the background. Gladinet sits in the system tray and allows greater control over the operation.

You can always back up your files quickly by selecting the Backup My Files Online option from the Gladinet system tray context menu.

The most important options you will find here are the Task Manager and the option to pause all tasks. Clicking the former will open a task manager showing the activity in progress while the latter allows you to simply pause/resume the tasks.

It works on Windows XP, Windows Vista, Windows Server 2003/2008, and Windows 7. Both 32-bit and 64-bit versions are available; make sure you have installed the correct version for seamless and optimal performance.

<Return to section navigation list>

SQL Azure Database and Reporting

• Simon Sabin observed that Webscale is all about sharding and it’s coming to SQL Azure on 1/1/2011:

There are many that joke about developers always talking about webscale and needing to shard to be able to scale. In reality many systems, if not most, don’t need to scale to numerous nodes because today’s processing is so powerful. However, in the cloud world, where you don’t have one big box but many little ones (instances), you need some way of sharding/federating/distributing data and load.

I’ve mentioned before a PDC presentation on what’s coming in SQL Azure. Well, they’ve put some more content out there including details on how to shard with the current Azure infrastructure:

http://blogs.msdn.com/b/sqlazure/archive/2010/12/23/10108670.aspx

The new stuff looks real neat and easy to use, but just because it’s not easy at the moment doesn’t mean it’s not possible; you just have to work at it.
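To make "you just have to work at it" concrete, here is a minimal sketch of application-level sharding: the application hashes a shard key and uses the result to pick one of several databases. This is an illustration only, not the approach described in the linked SQL Azure post and not the upcoming SQL Azure feature. The `ShardRouter` class, its method names and the idea of passing shard names as strings are all assumptions made for the example.

```csharp
using System;
using System.Collections.Generic;
using System.Text;

// Hypothetical router, not taken from the linked post: maps a shard key
// (for example a customer ID) to one of N databases.
public static class ShardRouter
{
    // 32-bit FNV-1a over the UTF-8 bytes of the key. A hand-rolled hash is
    // used because string.GetHashCode is not guaranteed to be stable
    // across processes or runtime versions.
    public static uint Fnv1a(string key)
    {
        if (key == null) throw new ArgumentNullException(nameof(key));

        uint hash = 2166136261u;              // FNV offset basis
        foreach (byte b in Encoding.UTF8.GetBytes(key))
        {
            hash ^= b;
            hash = unchecked(hash * 16777619u); // FNV prime, wraps on overflow
        }
        return hash;
    }

    // Returns the shard (here just a name or connection string) that owns
    // the given key. The same key always maps to the same shard as long as
    // the list of shards does not change.
    public static string PickShard(string shardKey, IReadOnlyList<string> shards)
    {
        if (shards == null || shards.Count == 0)
            throw new ArgumentException("At least one shard is required.", nameof(shards));

        int index = (int)(Fnv1a(shardKey) % (uint)shards.Count);
        return shards[index];
    }
}
```

The catch with simple modulo routing is that adding or removing a shard remaps most keys, so existing rows have to be moved. That redistribution work is the part that is not easy today.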

Simon is a part of SQLKnowHow, a leading SQL Server training and consultancy company, and is also a Developer Skills Partner with SQLSkills. He runs the UK SQL user group in London and in 2007 founded SQL Bits, the largest SQL Server conference in Europe.

• The SQLAzureTutorial.com team posted a 00:09:22 How to signup for Microsoft SQL Azure - Cloud based database video segment on 11/24/2010 (missed when posted):

In this tutorial video on SQL Azure, we cover the Microsoft SQL Azure signup process. SQL Azure is a cloud-based relational database built on SQL Server technologies. It is a scalable, self-managing and highly available database service hosted by Microsoft in the cloud. You can sign up either on the Windows Azure Platform website or on the Microsoft Online Services customer portal. For our demo on SQL Azure, we sign in to our Live account and select Windows Azure Platform Introductory Special.

In this training demo on SQL Azure we also cover some of the background information regarding pricing and features. We create a SQL Azure server, set up the administrator username and password, and then walk you through the rest of the setup process.

Peter Thorsteinson (a.k.a. PETERTEACH) published another 00:08:01 Windows Azure Platform Training Kit - Preparing your SQL Azure Account YouTube video on 12/31/2010:

This is the second of two episodes in his SQL Azure training videos.

Peter is a trainer with SQLSoft+ in Bellevue, WA, who focuses on Microsoft software development technologies. He blogs at http://www.stepuptransform.com.

Peter Thorsteinson (a.k.a. PETERTEACH) published a 00:10:00 Windows Azure Platform Training Kit - Preparing your SQL Azure Account YouTube video on 12/31/2010:

This is the first of two episodes in his SQL Azure training videos.

Peter is a trainer with SQLSoft+ in Bellevue, WA, who focuses on Microsoft software development technologies. He blogs at http://www.stepuptransform.com.


Marketplace DataMarket and OData

Microsoft added Windows Azure MarketPlace and WCF Data Services Developer Documentation to the Marketplace DataMarket on 12/28/2010:

The database can be queried for documentation specific to usage of Windows Azure MarketPlace.


Windows Azure AppFabric: Access Control and Service Bus

The Windows Azure AppFabric Team reminded Azure developers about the MSDN Webcast: Windows Azure Boot Camp: Connecting with AppFabric (Level 200) to be held 2/7/2011 at 11:00 AM PST:

Register today for new Windows Azure AppFabric training! Join this MSDN webcast training on February 7, 2011 at 11:00 AM PST.

It's more than just calling a REST service that makes an application work; it's identity management, security, and reliability that make the cake. In this webcast, we look at how to secure a REST Service, what you can do to connect services together, and how to defeat evil firewalls and nasty network address translations (NATs).

Presenters: Mike Benkovich, Senior Developer Evangelist, Microsoft Corporation and Brian Prince, Senior Architect Evangelist, Microsoft Corporation

In addition, don’t miss these other Windows Azure and SQL Azure webcasts:

- 01/03: MSDN Webcast: Azure Boot Camp: Working with Messaging and Queues

- 01/10: MSDN Webcast: Azure Boot Camp: Using Windows Azure Table

- 01/17: MSDN Webcast: Azure Boot Camp: Diving into BLOB Storage

- 01/24: MSDN Webcast: Azure Boot Camp: Diagnostics and Service Management

- 01/31: MSDN Webcast: Azure Boot Camp: SQL Azure

- 02/14: MSDN Webcast: Azure Boot Camp: Cloud Computing Scenarios


Windows Azure Virtual Network, Connect, Server App-V, RDP and CDN

Mary Jo Foley (@maryjofoley) reported Microsoft delivers test build of server app-virtualization technology on 12/31/2010:

Microsoft quietly delivered a promised Community Technology Preview (CTP) test build of its server application virtualization technology just before Christmas.

Company officials said at the Professional Developers Conference (PDC) in October 2010 to expect CTP 1 of server app-virtualization before the end of 2010. The final version of the technology is still on track for delivery in the second half of 2011, execs said on December 22.

At the PDC, Microsoft published a lengthy laundry list of new cloud technologies the company was planning to roll out in test and final form in the coming year-plus. I believe the server app-virtualization component was the last of the expected deliverables to make it out before the year-end deadline.

In June 2010, Microsoft execs said to expect Server application virtualization to be delivered via System Center Virtual Machine Manager v.Next, due in the second half of 2011. (That product is expected to debut as SCVMM 2012 when it ships.)

Server application virtualization (Server App-V), as explained in a December 22 TechNet blog post, builds on the client App-V technology that Microsoft currently offers as part of its Microsoft Desktop Optimization Pack (MDOP). It will allow users to separate application configuration and state from the underlying operating system. As the post noted:

“This separation and packaging enables existing Windows applications, not specifically designed for Windows Azure, to be deployed on a Windows Azure worker role. We can do this in a way where the application state is maintained across reboots or movement of the worker role.”

Server App-V converts traditional Windows Server 2008 apps into “state separated ‘XCopyable’ images without requiring code changes to the applications themselves,” the Softies explained.

Server App-V is meant to be a complementary technology to the Windows Azure VM Role technology, a test version of which Microsoft delivered in November. The two technologies are aimed at allowing customers to host more of their legacy applications in the cloud.



Live Windows Azure Apps, APIs, Tools and Test Harnesses

Sanjay Jain (@SanjayJain369) posted links to seven Dynamics CRM 2011: xRM Cloud Acceleration Lab Videos to the Ignition Showcase blog on 1/2/2011:

While hosting the xRM Cloud Acceleration Lab (week of 6th Dec’10; Redmond, WA), I got an opportunity to chat with several ISVs building their cloud offerings using Dynamics CRM 2011 Online and Windows Azure. Enjoy the short videos to learn more about various cloud solutions.

During the lab, we also got an opportunity to share the Top 10 Features of CRM 2011 in 10 minutes: CRM 2011 Top 10 in 10

Sanjay Jain, ISV Architect Evangelist, Microsoft Corporation

- Blog: http://Blogs.msdn.com/SanjayJain

- Twitter: http://twitter.com/SanjayJain369

• Bart Wullems asked The Windows Azure Platform Training kit: Where are we? in a reminder to Windows Azure developers of 1/2/2011:

First and foremost, welcome to 2011!

The Windows Azure Platform Training Kit includes a comprehensive set of technical content including hands-on labs, presentations, and demos that are designed to help you learn how to use the Windows Azure platform including: Windows Azure, SQL Azure and the Windows Azure AppFabric. With the speed that Microsoft is releasing new versions of the training kit, it’s easy to miss a new update or release.

So, to get you up to date: a new update was released last December. The December update provides new and updated hands-on labs, demo scripts, and presentations for the Windows Azure November 2010 enhancements and the Windows Azure Tools for Microsoft Visual Studio 1.3. These new hands-on labs demonstrate how to use new Windows Azure features such as Virtual Machine Role, Elevated Privileges, Full IIS, and more.

Some of the specific changes with the December update of the training kit include:

- [Updated] All demos were updated to the Azure SDK 1.3

- [New demo script] Deploying and Managing SQL Azure Databases with Visual Studio 2010 Data-tier Applications

- [New presentation] Identity and Access Control in the Cloud

- [New presentation] Introduction to SQL Azure Reporting

- [New presentation] Advanced SQL Azure

- [New presentation] Windows Azure Marketplace DataMarket

- [New presentation] Managing, Debugging, and Monitoring Windows Azure

- [New presentation] Building Low Latency Web Applications

- [New presentation] Windows Azure AppFabric Service Bus

- [New presentation] Windows Azure Connect

- [New presentation] Moving Applications to the Cloud with VM Role

Oh wait, and of course a link to download it.

And a remark: Note that this release only supports Visual Studio 2010. So if you didn’t upgrade yet, now is a good time…

Bart is a Microsoft Certified Trainer and Professional, as well as a certified ScrumMaster located in Belgium.

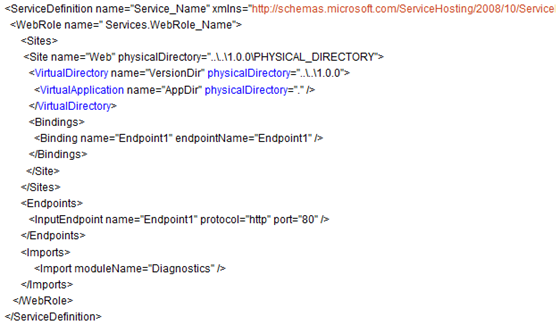

• Avkash Chauhan explained Windows Azure : How to define Virtual Directories in Service Definition (CSDEF) for your site? on 1/1/2011:

In Windows Azure SDK 1.3 you have the ability to set up multiple sites, as well as virtual directories, within your full IIS role.

If you need to set up virtual directories while developing your application, here is how you can configure a VirtualDirectory for a specified Site in the ServiceDefinition.csdef file.

So if your VS2010 solution is located at:

C:\App\Enterprise\Release\Service_Name\1.0.0

And your Cloud Project is:

C:\App\Enterprise\Release\Service_Name\1.0.0\PHYSICAL_DIRECTORY

In that case the Service Definition (CSDEF) should be constructed for Virtual Directories as below:

You need to control TWO important things:

1. Keep the total path length under 260 characters; otherwise you will get an error.

2. Keep the directory name under 248 characters; exceeding that limit also causes an error.
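The two limits above can be checked before packaging. The following is a minimal sketch that applies them to a full file path; the `PathLimits` class is hypothetical, and treating "directory name" as everything before the last path separator is an assumption about what the post means.

```csharp
using System;

// Hypothetical pre-packaging check, not part of the original post.
public static class PathLimits
{
    public const int MaxFullPathLength = 260;   // total path must stay under this
    public const int MaxDirectoryLength = 248;  // directory part must stay under this

    // Returns true when both the whole path and its directory part are
    // within the limits quoted in the post.
    public static bool FitsLimits(string fullPath)
    {
        if (fullPath == null) throw new ArgumentNullException(nameof(fullPath));

        // Split on either separator by hand so the result does not depend
        // on the operating system the check runs on.
        int lastSeparator = fullPath.LastIndexOfAny(new[] { '\\', '/' });
        int directoryLength = lastSeparator < 0 ? 0 : lastSeparator;

        return fullPath.Length < MaxFullPathLength
            && directoryLength < MaxDirectoryLength;
    }
}
```

Running a check like this over every file under the physical directory of each virtual directory is one way to spot offending paths early, since deeply nested release folders such as the one shown above use up a good part of the budget.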

• Simon Sabin provided a workaround for a Newbie error with Windows Azure–403 not authorised on 12/18/2010 (missed when posted):

Azure uses certificates to manage secure access to the management side of things. I’ve started looking into Azure for some SQLBits. So in doing so I needed to configure certificates to connect. VS will nicely create a cert for you and copy a path for you to use to upload to Azure. I went to the Azure portal (https://windows.azure.com/Default.aspx), found the certificate bit and added the certificate.

However after doing this I couldn’t connect.

After trying numerous fruitless things, including looking round the portal for other places to put certs, I realised I must be missing something.

So went back to the portal and had another look, and what did I find but a “Management Certificates” option.

I added the certificate generated by Visual Studio (connection wizard pops up when you try and deploy stuff which allows you to generate a cert) and now I can connect.

So there is a page on the Home of the portal that takes you to http://msdn.microsoft.com/en-us/library/gg441575.aspx. What’s odd is that you would expect the Management Certs to be the first option because that is what is required to manage the certificates.

I hope the Windows Azure Platform Portal team reads Simon’s last paragraph.

Peter Thorsteinson (a.k.a. PETERTEACH) published another 00:10:00 Getting started with the Windows Azure Platform Training Kit YouTube video on 1/1/2011:

This is the second of two episodes in his Windows Azure training videos.

Peter is a trainer with SQLSoft+ in Bellevue, WA, who focuses on Microsoft software development technologies. He blogs at http://www.stepuptransform.com.

Peter Thorsteinson (a.k.a. PETERTEACH) published a 00:10:00 Intro to Windows Azure VS2010 YouTube video on 1/1/2011:

Peter is a trainer with SQLSoft+ in Bellevue, WA, who focuses on Microsoft software development technologies. He blogs at http://www.stepuptransform.com.

Roberto Bonini reported on 12/31/2010 porting the server code of his Client Server Chat with WCF app to an Azure Web Role:

Almost a year after I wrote this post promising to keep WCF Chat updated, I’m living up to that promise and updating my WCF Chat application over on Codeplex. The original release on Codeplex is actually just a zip file with the project in it. All things considered it was a knee-jerk posting in the midst of the openFF effort to clone Friendfeed. Of course, the original, actual reason why I posted it is lost to history. And in the middle of all that hoohah I never wrote an introduction to and an explanation of the codebase.

An Introduction

The WCF Chat application was actually a class assignment for a class that included, among other things, WCF, REST and TCP. It’s actually interesting to see how that class has changed since I took it three years ago. This year, for example, it’s including MVC, but I digress. The fact is that my submission did go above and beyond the requirements. And the reason for that is that once I wrote the basic logic, the more complicated stuff was easy. In other words: given enough layers of abstractions, anything is easy.

Having dusted off and worked with the code for a few hours, it’s rather amazing how easy a chat application is. Now, that statement should be taken in the context of the fact that WCF is doing most of the heavy lifting. So getting the client to ask for data is a relatively trivial task. The tricky bit is the need for a callback.

In this case, I use callbacks for Direct Messages and File transfers. Now, you are probably wondering why I went through the trouble given that the sensible option is simply to poll a server. And it is a sensible option. Tweetdeck, Seesmic and other Twitter clients all use polling. Basically, it was in the requirements that there should be two-way communication. There are a number of ways to implement this. One could, for example, have a WCF service on the client that the server can call back to. This did occur to me, but it’s a complex and heavy-handed approach, not to mention a resource-intensive one. WCF always gives me headaches and so I was only going to do one service. So I wrote a TCP listener that received pings from the server on a particular port.

That’s one peculiarity about this app. The other is the way the server is actually written. We have the WCF service implementation and we have a second server class that the WCF service calls. There is a division of responsibility between the two. The service is always responsible for getting the data ready for transmission and the server does the actual application logic.

The client is fairly straightforward. It uses the Krypton library from Component Factory. It’s a great UI library that I use anytime I have to write a Windows Forms UI. The actual UI code is rather like the Leaning Tower of Pisa – it’s damn fragile. Basically because it relies on the component designer logic for the way controls are layered. So I haven’t touched it at all. In fact, I haven’t needed to. More on this later.

When you are looking at the client code, you’ll realise that for each type of message control, there is a message type base control. The reason for this is that I foolishly (and successfully) tried to use generic controls. In the current implementation, there is actually precious little code in the MessageBase control. The reason for this is mainly historical. There was originally a lot of code in there, mainly event code. In testing, I discovered that those events weren’t actually firing for reasons beyond understanding. So they were moved from the inherited class to the inheriting class. This is the generic control.

There are message type base controls that inherit from the MessageBase control, and pass in the type (Post, DM, File). This control is in turn inherited by the actual Message, DM or File control. The reason for this long inheritance tree is that the designer will not display generic controls. Rather, that was the case. I’ve yet to actually try it with Visual Studio 2010 Designer. As I said, I haven’t changed the client UI code and architecture at all.
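The inheritance chain described above can be sketched in a few lines. This is an illustration under stated assumptions, not the project's actual code: the class names other than `MessageBase` are made up, and the classes are plain C# types here, whereas in the real client they would derive from a Windows Forms control.

```csharp
using System;

// Hypothetical payload types standing in for the app's Post and DM models.
public class Post { public string Text { get; set; } }
public class DirectMessage { public string Text { get; set; } public string Recipient { get; set; } }

// Generic base: shared behaviour written once for every message type.
public abstract class MessageBase<T> where T : class
{
    public T Item { get; private set; }

    public void Bind(T item)
    {
        Item = item ?? throw new ArgumentNullException(nameof(item));
    }

    // Name of the payload type, e.g. "Post" or "DirectMessage".
    public string MessageKind => typeof(T).Name;
}

// Middle layer: closes the generic parameter, so everything that derives
// from it is an ordinary non-generic class that a designer can load.
public class PostMessageBase : MessageBase<Post> { }
public class DirectMessageBase : MessageBase<DirectMessage> { }

// Leaf classes: the ones that would actually be placed on a form.
public class PostControl : PostMessageBase { }
public class DirectMessageControl : DirectMessageBase { }
```

The middle layer adds no behaviour of its own; it exists only so that the class at the bottom of the chain has a non-generic base type.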

The client has a background thread that runs a TCP Listener to listen for callbacks from the server. It’s on a background thread so that it does not block the UI thread. I used a background worker for this rather than the newer Task functionality built into .NET 4 that we have today.
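A listener of this kind can be sketched as follows. This is a minimal illustration, not the project's code: it uses a plain background `Thread` instead of the `BackgroundWorker` the author mentions, it binds to the loopback address only, and the `PingListener` class and its `PingReceived` event are hypothetical names. The event is raised on the listener thread, so a real UI would still have to marshal back to the UI thread before touching controls.

```csharp
using System;
using System.Net;
using System.Net.Sockets;
using System.Threading;

// Hypothetical client-side listener: every incoming TCP connection is
// treated as a "ping" from the server telling the client to fetch data.
public sealed class PingListener : IDisposable
{
    private readonly TcpListener listener;
    private readonly Thread thread;
    private volatile bool running;

    public event Action PingReceived;

    // Actual port in use; pass 0 to the constructor to let the OS pick one.
    public int Port { get; }

    public PingListener(int port)
    {
        listener = new TcpListener(IPAddress.Loopback, port);
        listener.Start();
        Port = ((IPEndPoint)listener.LocalEndpoint).Port;

        running = true;
        // Background thread: it never blocks the UI thread and does not
        // keep the process alive on exit.
        thread = new Thread(AcceptLoop) { IsBackground = true };
        thread.Start();
    }

    private void AcceptLoop()
    {
        while (running)
        {
            try
            {
                // Blocks until the server connects; the connection itself
                // is the signal, so it is closed straight away.
                using (TcpClient client = listener.AcceptTcpClient())
                {
                    PingReceived?.Invoke();
                }
            }
            catch (Exception) when (!running)
            {
                // Stop() interrupts the blocking accept during shutdown.
                break;
            }
        }
    }

    public void Dispose()
    {
        running = false;
        listener.Stop();
        thread.Join(1000);
    }
}
```

Compared with hosting a second WCF service on the client, this keeps the callback channel to a single socket, at the cost of the client having to be reachable on that port.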

Functionality

Basically, everyone sees all the public messages on a given server. There is no mechanism to follow specific people or even to filter the stream by those usernames. Archaic, I know. But I’m writing my chat application, not Twitter.

There are Direct Messages that can be directed to a specific user. Because the server issues callbacks for DMs, they appear almost instantly in the user’s stream.

You can send files to specific users as well. These files are stored on the server when you send them. The server will issue a callback to the user and the file will be sent to them when the client responds to that callback. You can also forward a file you have received to another user. Files are private only by implication. They can be accessed by whoever is informed of the file’s existence.

All of the above messages are persisted on the server. However, forwarding messages is not persisted in any shape or form.

Also, you can set status. This status is visible only when you are logged in. In fact, your username is only visible to others when you are logged in.

It should be noted that you have to use the Server Options panel to add and remove users.

Today’s Release

Today’s changes basically upgrade everything to .NET 4 and make sure it’s compatible. Today’s release does not take advantage of anything new other than some additional LINQ and extension methods. Taking advantage of the new stuff will require a careful think about how and where to use it. I’m not quite willing to sacrifice a working build for new code patterns that do the exact same thing.

The original server was actually just a console application. I took that original code and ported it to a Windows Service. There were trivial logic changes made at most. The UI ( i.e the Options form) that was part of that console application has been moved into its own project.

I also ported the server code to a Windows Azure web role. And let me tell you something- it was even easier that I had anticipated. The XML file and the collections I stored the streams in are replaced with Windows Azure Tables for Users, Streams, DMS and Files. The files themselves are written to Windows Azure Blobs rather than being written out to disk. [Emphasis added.]

The web role as written is actually single instance. The reason is that the collection that stores the active users (i.e. what users are active right now) is still an in-memory collection. I haven’t moved it over to [W]indows [A]zure [T]ables yet. You could fire up more than one instance of this role, but all of them would have a different list of active users. And because Windows Azure helpfully provides you with a load balancer, there’s no guaranteeing which instance is going to respond to the client. There is a reason why [I] haven’t moved that collection over to Windows Azure Tables. Basically, I’m not happy with it. If only Azure had some sort of caching tier, using Velocity or something, [I] could put a collection of objects in the cache and have all instances share that collection. The Windows Azure table would be changing from minute to minute with additions, edits and deletions, and I don’t think Windows Azure Tables would keep up. I’m interested to know what you think of this.
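The multi-instance problem described above can be shown with a small simulation. This is a sketch under stated assumptions, not the chat server's code: the `Instance` class, the round-robin balancer, and the shared `set` standing in for a common store (a table or cache) are all hypothetical.

```python
import itertools


class Instance:
    """One web role instance; keeps active users in memory unless a
    shared store is passed in (hypothetical stand-in for a table/cache)."""

    def __init__(self, name, store=None):
        self.name = name
        self.active_users = store if store is not None else set()

    def log_in(self, user):
        self.active_users.add(user)


def simulate(instances, users):
    """Send each login to the next instance, round-robin, and return
    the active-user list each instance ends up with."""
    balancer = itertools.cycle(instances)
    for user in users:
        next(balancer).log_in(user)
    return [sorted(instance.active_users) for instance in instances]


users = ["alice", "bob", "carol"]

# Each instance has its own collection: the lists diverge.
isolated = simulate([Instance("A"), Instance("B")], users)

# Both instances write to one shared collection: the lists agree.
shared_store = set()
shared = simulate([Instance("A", shared_store), Instance("B", shared_store)], users)
```

With isolated collections, instance A only knows about the users whose logins it happened to receive, which is exactly why the role has to stay single-instance until the active-user list lives somewhere all instances can reach.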

I also added an Options application to talk to the Windows Azure web role, and I wrote a WCF web service in the web role to support this application.

The client is essentially the same as it has always been. There is the addition of a domain when you are logging in – this could be for either cloud or service based server implementations. Since there is no default domain, the client will ask for one when you log in. [O]nce you have provided one, you’ll have to restart the application.

There are installers for all the applications except for the Cloud project. The service installer will install both the service and the Options application.

Bear in mind that for the Options Applications, there is no authentication and authorisation. If you run the app on a server with the ChatServer installed, or you point the CloudOptions app at the appropriate server, you are in control. This is a concern to me and will be fixed in a future release.

Future changes

I was tempted to write an HTTP POST server for all this. MVC makes it so easy to implement. There would be plenty of XML flying back and forth. Some of the WCF operations would require some high-wire gymnastics to implement as HTTP POST, but it’s possible. I might do this.

The one thing that I didn’t update was the version of the Krypton UI library I use. I’d very much like to use the latest version to write a new UI from scratch. Again, it’s a possibility.

The fact is that once you start thinking of implementing following à la Twitter, your database schema suddenly looks much more complicated. And since I’m not writing Twitter, I’ll pass.

If you have any suggestions on future changes, holler.

Final Words

Bear in mind that for the Options Applications, there is no authentication and authorisation. If you run the app on a server with the ChatServer installed, or you point the CloudOptions app at the appropriate server, you are in control. This is a concern to me and will be fixed in a future release.

Also, bear in mind that this is marked Alpha for a reason. If it eats your homework and scares your dog, it’s not my fault – I’m just some guy that writes code.

Finally, this code all works IN THEORY. I’ll be testing all these pieces thoroughly in the coming weeks.

Where you can get it

You can get it off Codeplex. The source code for all the above mentioned components is checked into SVN source control.

For this 1.1 Alpha release, you’ll find the setup files for each component in a separate downloadable zip file. The CloudServer code is included as is, since no setup files are possible for Cloud projects.

Roberto just graduated with Honours in Computer Science from the University of the West of Scotland.

Avkash Chauhan posted Windows Azure: Handling exception - No valid combination of account information found on 12/31/2010:

When you deploy your Windows Azure SDK 1.2 or 1.3 based application, you might find that the application's status stays stuck in "Starting" or keeps cycling between "Busy" and "Starting".

If you have decided to use Windows Azure SDK 1.3 then you can configure and use [an] RDP Connection to access your Windows Azure VM through "Publish" dialog box on VS2010. Once you are into your Windows Azure VM, you can investigate the root cause of your problem. If you look at application section of event log, it is possible that you may see the following exception:

(Stack trace omitted for brevity.)

It is possible that you are getting this error because DevelopmentStorage=true is set in your service configuration file and some code in your application is trying to access Windows Azure Storage.

When you run your application on Windows Azure, you must not use DevelopmentStorage=true. The setting causes a problem only if your code actually accesses Windows Azure Storage: if it does, and the connection string is set to "DevelopmentStorage=true", your application will fail. That's why you see a warning when uploading the application package.

To solve such [a] problem you [must] do two things:

1. Set DevelopmentStorage=false to be safe if your code is not accessing Windows Azure Storage.

2. Replace "DevelopmentStorage=true" with "Connection_String_which_includes_your_storage_credentials_etc" if you are actively using Windows Azure Storage; otherwise it will cause an error. If you study the call stack above, you will see that the code is actually trying to access the cloud storage account, so you need to pass the correct connection string to use Windows Azure Storage. After replacing "DevelopmentStorage=true" with the correct connection string, this particular problem should be resolved.
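A pre-deployment check for this mistake could look roughly like the Python sketch below. It is only an illustration: the helper names are made up, and the account name and key are placeholders. The article writes the setting as DevelopmentStorage=true, while the storage connection-string form is UseDevelopmentStorage=true, so the sketch looks for both spellings.

```python
def parse_connection_string(value):
    """Split 'Key=Value;Key=Value' into a dict with lower-cased keys.

    Only the first '=' in each part separates key from value, because
    Base64 account keys usually end in '=' padding.
    """
    settings = {}
    for part in value.split(";"):
        part = part.strip()
        if not part:
            continue
        key, _, val = part.partition("=")
        settings[key.strip().lower()] = val.strip()
    return settings


def uses_development_storage(value):
    """True if the string points at the local storage emulator."""
    settings = parse_connection_string(value)
    # Accept the article's spelling as well as the real setting name.
    for key in ("usedevelopmentstorage", "developmentstorage"):
        if settings.get(key, "").lower() == "true":
            return True
    return False


# Placeholder credentials, for illustration only.
cloud = "DefaultEndpointsProtocol=https;AccountName=myaccount;AccountKey=abc123=="
```

Running `uses_development_storage` over every connection string in the configuration before publishing would flag the setting that the upload warning is complaining about.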

Kevin Kell posted Cloud Computing, Learning Tree, Open Source and Moodle to the Learning Tree blog on 12/31/2010:

Happy New Year!

One benefit of cloud computing is that it facilitates examination (or re-examination!) of technologies that may previously have presented an administrative, financial or technical barrier. For example, there are Amazon Machine Images that come pre-installed with open-source solutions, delivered as a “virtual appliance”.

Similarly the Azure Companion makes it very easy to get popular open-source applications running on Azure. [Emphasis added.]

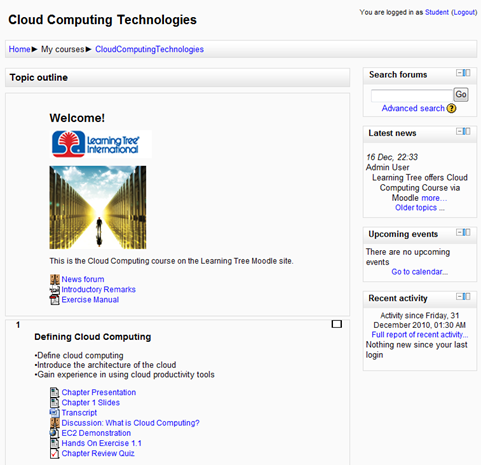

Recently I have been looking at one such application, Moodle, for potential use within Learning Tree. Moodle is an open-source virtual learning environment (VLE). It has been around for about 10 years and has a large installed base and user community. Moodle is popular in schools, universities and businesses. It is not new, but it is constantly evolving.

What is (relatively) new, however, is the degree to which cloud computing makes Moodle accessible. Whereas previously I might have had to acquire a server, set it up, download software and futz around for a few days to get everything to work, I can now just use a community AMI provided by BitNami (there are others too) that comes with Moodle already configured. Literally within a matter of minutes my machine is up and running with a Moodle administrator account ready for me to start adding users and courses.

Since I am a newbie to Moodle there was a bit of a learning curve. The online educational resources at moodle.org and from within the community, though, are excellent. So, after some moodling about (yes, that is an actual verb!) I was able to construct a course with content that closely resembles our classroom course 1200 – Cloud Computing Technologies: A Comprehensive Hands-On Introduction.

Figure 1 Cloud Computing course on Learning Tree Moodle site

In the course I have made use of some other cloud technologies as well: Google Docs and Microsoft Office Live to share the course slides and written transcript; YouTube for short demonstrations and Amazon CloudFront for delivery of longer streaming media such as the chapter presentation; EC2 for hands-on exercises. The course also utilizes Moodle’s discussion forums, blogs and quiz engine. Probably there are other resources and activities I could add to the course as it evolves.

So, how might we be able to use Moodle at Learning Tree? While it is well suited to “traditional” education based on semester long courses, is this asynchronous model compatible with Learning Tree’s format of short, intensive hands-on immersion? Is there any value at all in using a virtual learning environment in conjunction with our instructor led training?

I believe the answer may be yes. For example, it is conceivable that a resource such as this could be used both as a pre-course preparation tool and as a post-course reference. Students who choose to use the site for preparation would come to the classroom having already absorbed some of the material. Classroom time could be more productively spent asking questions about material they did not understand and using the instructor to really get into more depth on things. After the class is over, students could use the site as a reference and could continue to participate in discussions and blogs. They may even have access to the exact machine configurations they used in class to perform the hands-on exercises.

While these are issues that we will no doubt discuss internally I am curious to know what you think. If you are a past, present or potential future attendee at a Learning Tree course is this something that would be of interest to you? Do you have any previous educational experience using Moodle or other VLE? Would you use this as a resource either for preparation or review?

I am sure there are a variety of opinions out there …

<Return to section navigation list>

Visual Studio LightSwitch

<Return to section navigation list>

Windows Azure Infrastructure

• Simon Ellis analyzed demand for cloud development experience for employment in The 2010 cloud computing winner post of 1/2/2011 to the Cloud Tweaks blog:

To identify a winning technology it’s usually best to go straight to the market and see what people are actually using. Winning technologies get adopted, new jobs get created and specialized skills get requested.

Below is the job trend for the top 3 cloud vendors – Amazon, Microsoft and Google. While being non-scientific, this approach does raise some interesting points. Demand for Amazon cloud services is booming, both for compute and storage capabilities. There are almost triple the jobs requesting Amazon EC2 skills to those asking for Microsoft Azure capabilities. And Google AppEngine is surprisingly in little demand. So, is it fair to declare Amazon the 2010 cloud computing winner?

Adding Amazon EC2 and S3 counts almost doubles the difference in popularity of Amazon Web Services and Windows Azure or Google App Engine development experience.

Simon Ellis is the owner of labslice.com. LabSlice is a Virtual Lab Management solution powered by Amazon EC2.

• Ben Kepes (@benkepes) wrote a Moving your Infrastructure to the Cloud: How to Maximize Benefits and Avoid Pitfalls whitepaper for Rackspace Hosting, who published it on 12/20/2010 (missed when published). From the Executive Summary and Introduction:

Executive Summary

With Cloud Computing becoming more widely utilized, it is important for organizations to understand ways to maximize benefits and minimize risks of a move to the cloud. This paper details the significant benefits that Cloud Computing brings and provides guidance to IT decision makers to help their decision making process. This is especially important given the plethora of vendors in the marketplace today. Buyers need to appreciate that assessing individual providers is critical to the success of Cloud Computing programs.

Introduction

Cloud Computing is a hot topic these days, with interest shown across all levels of the organization – from the C-suite, to Corporate IT through to end users. These various groups are all interested to see the potential benefits and possible pitfalls from Cloud Computing. Analyst firms have been quick to document both the current acceleration in Cloud Computing adoption and the potential market size in the years ahead. Daryl Plummer, analyst at Gartner, says(1) that “Adoption of the cloud is rising rapidly – there’s no sign that it’s going back. We have to think about how [we will] deal with this, more than likely, you will be dealing with hundreds of cloud services [by 2015]”. Other analysts concur; a recent IDC survey, for example, found that “savvy CIOs now see the cloud as being an extension of their sourcing strategies.”(2)

This interest has tended to create two distinct camps however. On the one hand cloud evangelists who seek to push cloud adoption immediately despite potential risks. On the other hand the conservative traditional technologists who seek to dismiss Cloud Computing as either the same as what has gone before, grossly over-hyped or even potentially dangerous to the organization itself.

We believe that Cloud Computing brings unprecedented benefits to organizations and will be the dominant way of acquiring technology in the future. In keeping with the highly important place Cloud Computing will have for organizations, we further believe that it is vital that leaders within business who are tasked with making technology acquisition decisions should be well apprised of both the benefits and the risks of Cloud Computing and should therefore be well placed to make good buying decisions.

This paper seeks to give decision makers some guidance so that they are well informed to ensure maximum cost savings, highest performance levels and strictest security from the infrastructure they ultimately invest in. This is important because, as we will show, the barriers to entry for a Cloud Computing vendor are relatively low. While this may mean there is more choice in the marketplace, it also introduces the potential for underfunded and low quality providers to enter the market and therefore organizations looking at moving to the cloud need to be especially cognisant of the various issues outlined in the following pages. …

Ben is the founder and managing director of Diversity Limited, a consultancy specializing in Cloud Computing/SaaS, Collaboration, Business strategy and user-centric design. More information on Ben and Diversity Limited can be found at http://diversity.net.nz

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

No significant articles today.

<Return to section navigation list>

Cloud Security and Governance

Richard D. Ackerman commented about The Clouds are Forming: The Legal Cloud Computing Association Announces its Formation and Web Presence to the EsquireTech blog on 12/31/2010:

Recognized leaders in legal cloud computing announced today the formation of the Legal Cloud Computing Association (LCCA), an organization whose purpose is to facilitate the rapid adoption of cloud computing technology within the legal profession, consistent with the highest standards of professionalism and ethical compliance.

The organization’s goal is to promote standards for cloud computing that are responsive to the needs of the legal profession and to enable lawyers to become aware of the benefits of computing technology through the development and distribution of education and informational resources.

The LCCA also announced the publication of its response to the ABA Commission on Ethics 20/20 Working Group with respect to the Commission’s September 10, 2010 call for comments on Client Confidentiality and the Use of Technology.

The group, consisting of Clio (Themis Solutions Inc.), DirectLaw, Inc., Rocket Matter, LLC and Total Attorneys, LLC, will cooperate with Bar Associations and other policy-forming bodies to release guidelines, standards, “best practices”, and educational resources relating to the use of cloud computing in the legal profession.

An informational website for the group: http://www.legalcloudcomputingassociation.org

You can see the rest of their press release at: http://www.legalcloudcomputingassociation.org/Home/industry-leaders-join-to-form-legal-cloud-computing-association

Of additional note is their response to the call for comments on client confidentiality and cloud computing in the legal profession: See, www.legalcloudcomputingassociation.org/Home/aba-ethics-20-20-response

My comments:

I think that the formation of a legal cloud computing association is not only timely, but incredibly necessary. All too often, the everyday practitioner ends up behind the ethics of a given technology and today’s way of practicing law requires vigilance in keeping up to date on the various developments in tech.

While it is often easy to employ a new technology, it does not mean that any given state bar association will understand it or make room for use of the new tech. This unavoidable gap in communications is readily evident in recent legal treatises on the issues. It simply may be that tech is moving so fast that there is no practical way for state bar associations to keep up with the developments. If this is the case, then any problems arising are something that can only be prevented by realtime communication between the tech-movers and the various bar associations. It is critically important that “cloud lawyers” have a voice in the state bar associations as well as within the tech community.

Having a voice in the tech community means that we will have ever-improving tools for our profession, movement toward an environmentally friendly practice, and better ways of enjoying solo practice. It also probably goes without saying that we also need to maintain our competitive edge on each other and for the benefit of the clients we advocate for.

Much thanks to the LCCA for starting this up and I wish them the absolute best coming into 2011 and beyond.

Related Articles

- ‘Cloud Computing’ v ‘On-Premise Solutions’ [Norman Feiner] (ecademy.com)

- Navigating the Cloudy Waters of Cloud Standards (itexpertvoice.com)

- Happy New Year from Cloud Expo 2011 New York! (java.sys-con.com)

- Cloud Computing: A Beautiful Gift of 21st Century (globalthoughtz.com)

- 9 Companies That Drove Cloud Computing in ’10: Cloud “ (gigaom.com)

- Cloud Computing in Libraries (therunninglibrarian.co.uk)

<Return to section navigation list>

Cloud Computing Events

• Ayende Rahien (@ayende) has scheduled a 90-minute Live Meeting presentation on RavenDB for the European VAN on 11 January 2011:

I’ll be speaking about RavenDB in the EVAN [European VirtualAltNet(work)] on the 11th of January. Here are the details:

- Start Time: Tuesday, January 11, 2011 08:00 PM GMT*

- End Time: Tuesday, January 11, 2011 09:30 PM GMT

- Attendee URL: http://snipr.com/virtualaltnet (Live Meeting)

- VAN Calendar: http://www.virtualaltnet.com/Home/Calendar

(*) 8:00 PM UK, 9:00 PM Brussels, 3:00 PM EST, 1:00 PM MST and 12:00 Noon PST. For time zone arithmetic, you can consult the world clock site, use this time zone converter or every time zone.
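The time zone arithmetic in the footnote can be checked with a few lines of Python. This is a small sketch, assuming fixed standard-time offsets from UTC (valid in January, when none of these zones observes daylight saving time); the zone labels and the `local_times` helper are illustrative names.

```python
from datetime import datetime, timedelta, timezone

# Standard-time offsets from UTC in hours (assumption: January, no DST).
ZONES = {
    "UK (GMT)": 0,
    "Brussels (CET)": 1,
    "EST": -5,
    "MST": -7,
    "PST": -8,
}


def local_times(start_utc):
    """Return the wall-clock time (HH:MM) of start_utc in each zone."""
    return {
        name: start_utc.astimezone(timezone(timedelta(hours=offset))).strftime("%H:%M")
        for name, offset in ZONES.items()
    }


# Session start: Tuesday, January 11, 2011, 08:00 PM GMT.
start = datetime(2011, 1, 11, 20, 0, tzinfo=timezone.utc)
times = local_times(start)
```

The resulting values (20:00, 21:00, 15:00, 13:00 and 12:00) agree with the times listed in the footnote.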

Make sure that you don’t miss this excellent learning opportunity and we hope to see you there.

See http://ravendb.net/ for more details about Raven DB, which Ayende claims runs under Windows Azure. Click here for links to all his 2010 posts on Raven DB. I’ll post an update when I locate a tutorial for getting Raven DB running on the Windows Azure Production Fabric.

Vadim Kreynin posted Setting up RavenDB as an IIS application by pictures on 12/13/2010.

See The Windows Azure AppFabric Team reminded Azure developers about the MSDN Webcast: Windows Azure Boot Camp: Connecting with AppFabric (Level 200) to be held 2/7/2011 at 11:00 AM PST in the Windows Azure AppFabric: Access Control and Service Bus section above.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

• Mario Meir-Huber asked (and answered) “Why is NoSQL more responsive than SQL-based systems?” in an introduction to his NoSQL – The Trend for Databases in the Cloud? post of 1/2/2011:

SQL seems to be somewhat old fashioned when it comes to scalable databases in the cloud. Non-relational databases (also called NoSQL) seem to take over in most data storage fields. But why do those databases seem to be more popular than the "classic" relational databases? Is it due to the fact that professors at universities "tortured" us with relational databases and therefore reduced our interest - the interest of the "new" generation for relational databases? Or are there some hard facts that tell us why relational databases are somewhat out of date?

I was at a user group meeting in Austria, Vienna, one month ago where I talked about NoSQL databases. The topic seemed to be of interest to a lot of people. However, we sat together for about four hours (my talk was planned for one hour only) discussing NoSQL versus SQL. I decided to summarize some of the ideas in a short article as this is useful for cloud computing.

If we look at what NoSQL offers, we'll find numerous NoSQL databases on offer. Some of the most popular ones are MongoDB, Amazon Dynamo (Amazon SimpleDB), CouchDB, and Cassandra. Some people might think that non-relational databases are for those people who are too "lazy" to do their complex business logic in the database. In fact, this logic reduces the performance of a system. If there is a need for a highly responsive and available system, SQL databases might not be your best choice. But why is NoSQL more responsive than SQL-based systems? And why is there this saying that NoSQL allows better scalability than SQL-based systems? To understand this topic, we need to go back 10 years.

Dr. Eric A. Brewer, in his keynote "Towards Robust Distributed Systems" at the Symposium on Principles of Distributed Computing 2000, addressed a problem that arises when we need high availability and scalability. This was the birth of the so-called "CAP Theorem." The CAP Theorem says that a distributed system can only achieve two out of the three properties: "Consistency, Availability and Partition tolerance." This means:

- That every node in a distributed system should see the same data as all other nodes at the same time (consistency)

- That the failure of a node must not affect the availability of the system (availability)

- That the system stays tolerant to the loss of some messages (partition tolerance)

Nowadays when talking about databases we often use the term "ACID," but NoSQL is related to another term: BASE. BASE stands for "Basically Available, Soft state, Eventually consistent." If you want to go deeper into eventual consistency, read the post by Werner Vogels - Eventually Consistent revisited. BASE states that all updates that occur to a distributed system will be eventually consistent after a period of no updates. For distributed systems such as cloud-based systems, it is simply not possible to keep a system consistent at all times without sacrificing availability.
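As a toy illustration of "eventually consistent after a period of no updates", consider two replicas that accept writes independently and later exchange their state. The sketch below is written in Python and assumes last-write-wins by timestamp as the conflict rule; that rule, and the `Replica` and `sync` names, are choices made for this example rather than a description of any particular database.

```python
class Replica:
    """A replica that stores key -> (timestamp, value)."""

    def __init__(self):
        self.data = {}

    def write(self, key, value, timestamp):
        # Last-write-wins: keep the entry with the newest timestamp.
        current = self.data.get(key)
        if current is None or timestamp > current[0]:
            self.data[key] = (timestamp, value)

    def read(self, key):
        entry = self.data.get(key)
        return entry[1] if entry else None


def sync(a, b):
    """Exchange state in both directions so the replicas converge."""
    for key, (timestamp, value) in list(a.data.items()):
        b.write(key, value, timestamp)
    for key, (timestamp, value) in list(b.data.items()):
        a.write(key, value, timestamp)


r1, r2 = Replica(), Replica()
r1.write("status", "online", timestamp=1)   # update lands on replica 1
r2.write("status", "away", timestamp=2)     # later update lands on replica 2
before = (r1.read("status"), r2.read("status"))
sync(r1, r2)                                # quiet period: replicas exchange state
after = (r1.read("status"), r2.read("status"))
```

Before the exchange, a reader may see either value depending on which replica answers; after it, both replicas return the same value. Both replicas stay available for reads and writes throughout, which is the trade-off BASE accepts.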

To understand eventually consistent, it might be helpful to look at how Facebook is handling their data. Facebook uses MySQL, which is a relational (SQL) database. However, they simply don't use such features as joins that MySQL offers them; Facebook joins data on the web server. You might think "What, are they crazy?" However, the problem is that the joins Facebook needs will sooner or later result in a very slow system. David Recordon, Manager at Facebook, stated that joins are better performing on the web server [1]. Facebook must know what is good performance or not as they will store some 50 petabytes of data by the end of 2010. Twitter, another social platform that needs to scale their platform, should also think about switching to NoSQL platforms. This will hopefully reduce the "fail whale" to a minimum [2].
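Joining "on the web server" means fetching rows with separate single-table queries and combining them in application code. A minimal Python sketch follows; the `users` and `posts` rows and their field names are hypothetical, and this is not Facebook's implementation.

```python
from collections import defaultdict


def join_users_with_posts(users, posts):
    """Application-side equivalent of joining users to posts on user id."""
    posts_by_user = defaultdict(list)
    for post in posts:                      # one pass to index the posts
        posts_by_user[post["user_id"]].append(post["text"])
    return [
        {"name": user["name"], "posts": posts_by_user.get(user["id"], [])}
        for user in users                   # one pass to attach them
    ]


# Rows as they might come back from two independent queries.
users = [{"id": 1, "name": "alice"}, {"id": 2, "name": "bob"}]
posts = [
    {"user_id": 1, "text": "hello"},
    {"user_id": 1, "text": "world"},
]
joined = join_users_with_posts(users, posts)
```

Because each query touches a single table, the data can be spread across many database servers, and the join work scales with the number of web servers instead of loading the database.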

Summing it up, NoSQL is relevant for large-scale, global Internet applications. But are there any other benefits for NoSQL databases? Another benefit is that there are often no schemas associated with a table. This allows the database to adapt to new business requirements. I've seen a lot of projects where the requirements changed over the years. As this is rather hard to handle with traditional databases, NoSQL allows easy adaptation to such requirements. A good example of this is Amazon. Amazon stores a lot of data on their products. As they offer products of different types - such as personal computers, smartphones, music, home entertainment systems and books - they need a flexible database. This is a challenge for traditional databases. With NoSQL databases it's easy to implement some kind of inheritance hierarchy - just by calling the table "product" and letting every product have its own fields. Databases such as Amazon Dynamo handle this with key/value storage. If you want to dig deeper into Amazon Dynamo, read Eventually Consistent [3] by Werner Vogels.

Will there be some sort of "war" between NoSQL and SQL supporters like the one of REST versus SOAP? The answer is maybe. Who will win this case? As with SOAP versus REST, there won't be a winner or a loser. We will have more opportunities to choose our database systems in the future. For data warehousing and systems that require business intelligence to be in the database, SQL databases might be your choice. If you need highly responsive, scalable and flexible databases, NoSQL might be better for you.
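The schema-less "product" table can be pictured as a key/value store in which every value carries its own set of fields. The Python sketch below uses a plain dictionary as a stand-in for such a store; the product ids and field names are invented for illustration.

```python
products = {}  # stand-in for a key/value "product" table


def put_product(product_id, **fields):
    """Store a product under its key with whatever fields it has."""
    products[product_id] = dict(fields)


def products_with(field):
    """Return the ids of all products that define the given field."""
    return sorted(pid for pid, item in products.items() if field in item)


# Different product types share one table but not one schema.
put_product("pc-1", type="personal computer", ram_gb=4, cpu_cores=2)
put_product("book-1", type="book", author="Jane Doe", pages=320)
put_product("album-1", type="music", artist="Some Band", tracks=12)

# A new requirement (say, an e-book format) needs no schema change.
put_product("book-2", type="book", author="John Roe", pages=150, format="ebook")
```

No table alteration is needed when a new field appears; the cost is that the application, not the database, has to cope with items that lack a field it expects.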

Resources

Kristóf Kovács posted a Cassandra vs MongoDB vs CouchDB vs Redis vs Riak vs HBase comparison on 12/30/2010:

While SQL databases are insanely useful tools, their tyranny of ~15 years is coming to an end. And it was just time: I can't even count the things that were forced into relational databases, but never really fitted them.

But the differences between "NoSQL" databases are much bigger than they ever were between one SQL database and another. This means that it is a bigger responsibility on software architects to choose the appropriate one for a project right at the beginning.

In this light, here is a comparison of Cassandra, MongoDB, CouchDB, Redis, Riak and HBase:

CouchDB

- Written in: Erlang

- Main point: DB consistency, ease of use

- License: Apache

- Protocol: HTTP/REST

- Bi-directional (!) replication,

- continuous or ad-hoc,

- with conflict detection,

- thus, master-master replication. (!)

- MVCC - write operations do not block reads

- Previous versions of documents are available

- Crash-only (reliable) design

- Needs compacting from time to time

- Views: embedded map/reduce

- Formatting views: lists & shows

- Server-side document validation possible

- Authentication possible

- Real-time updates via _changes (!)

- Attachment handling

- thus, CouchApps (standalone js apps)

- jQuery library included

Best used: For accumulating, occasionally changing data, on which pre-defined queries are to be run. Places where versioning is important.

For example: CRM, CMS systems. Master-master replication is an especially interesting feature, allowing easy multi-site deployments.

Redis

- Written in: C/C++

- Main point: Blazing fast

- License: BSD

- Protocol: Telnet-like

- Disk-backed in-memory database,

- but since 2.0, it can swap to disk.

- Master-slave replication

- Simple keys and values,

- but complex operations like ZREVRANGEBYSCORE

- INCR & co (good for rate limiting or statistics)

- Has sets (also union/diff/inter)

- Has lists (also a queue; blocking pop)

- Has hashes (objects of multiple fields)

- Of all these databases, only Redis does transactions (!)

- Values can be set to expire (as in a cache)

- Sorted sets (high score table, good for range queries)

- Pub/Sub and WATCH on data changes (!)

Best used: For rapidly changing data with a foreseeable database size (should fit mostly in memory).

For example: Stock prices. Analytics. Real-time data collection. Real-time communication.
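The "INCR & co (good for rate limiting)" and "values can be set to expire" points above combine into a common pattern: count requests under a per-client key and let the counter expire when the window ends. The Python sketch below imitates that pattern with an in-memory class so it runs without a Redis server; `TinyCounterStore` and `allow_request` are hypothetical names, and the class is not a Redis client.

```python
class TinyCounterStore:
    """In-memory stand-in for the INCR-plus-expiry pattern."""

    def __init__(self):
        self._values = {}  # key -> (count, expires_at)

    def incr(self, key, ttl, now):
        """Increment key; the first hit in a window sets its expiry."""
        count, expires_at = self._values.get(key, (0, None))
        if expires_at is not None and now >= expires_at:
            count, expires_at = 0, None     # previous window has expired
        if expires_at is None:
            expires_at = now + ttl          # start a new window
        count += 1
        self._values[key] = (count, expires_at)
        return count


def allow_request(store, client, now, limit=3, window=60):
    """Allow at most `limit` requests per client per `window` seconds."""
    return store.incr("rate:" + client, window, now) <= limit


store = TinyCounterStore()
decisions = [allow_request(store, "alice", now=t) for t in (0, 1, 2, 3)]
after_window = allow_request(store, "alice", now=61)
```

The first three requests in the window are allowed, the fourth is rejected, and a request after the window has expired is allowed again. With a real Redis server the counter and its expiry would live on the server, so every application instance would see the same count.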

MongoDB

- Written in: C++

- Main point: Retains some friendly properties of SQL. (Query, index)

- License: AGPL (Drivers: Apache)

- Protocol: Custom, binary (BSON)

- Master/slave replication

- Queries are javascript expressions

- Run arbitrary javascript functions server-side

- Better update-in-place than CouchDB

- Sharding built-in

- Uses memory mapped files for data storage

- Performance over features

- After crash, it needs to repair tables

Best used: If you need dynamic queries. If you prefer to define indexes, not map/reduce functions. If you need good performance on a big DB. If you wanted CouchDB, but your data changes too much, filling up disks.

For example: For all things that you would do with MySQL or PostgreSQL, but having predefined columns really holds you back.

Cassandra

- Written in: Java

- Main point: Best of BigTable and Dynamo

- License: Apache

- Protocol: Custom, binary (Thrift)

- Tunable trade-offs for distribution and replication (N, R, W)

- Querying by column, range of keys

- BigTable-like features: columns, column families

- Writes are much faster than reads (!)

- Map/reduce possible with Apache Hadoop

- I admit being a bit biased against it, because of the bloat and complexity it has partly because of Java (configuration, seeing exceptions, etc)

Best used: If you're in love with BigTable. :) When you write more than you read (logging). If every component of the system must be in Java. ("No one gets fired for choosing Apache's stuff.")

For example: Banking, financial industry
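The "(N, R, W)" trade-off in the list above refers to Dynamo-style quorums: N is the number of replicas holding a value, W the number that must acknowledge a write, and R the number that must answer a read. A read is guaranteed to touch at least one replica holding the latest write when R + W > N. The Python sketch below checks that rule by brute force; the function names are made up for illustration.

```python
from itertools import combinations


def quorum_overlaps(n, r, w):
    """Rule of thumb: read and write sets must intersect if R + W > N."""
    if not (1 <= r <= n and 1 <= w <= n):
        raise ValueError("r and w must be between 1 and n")
    return r + w > n


def every_read_sees_latest_write(n, r, w):
    """Brute force: does every possible set of R replicas share at least
    one replica with every possible set of W replicas?"""
    replicas = range(n)
    return all(
        set(write_set) & set(read_set)
        for write_set in combinations(replicas, w)
        for read_set in combinations(replicas, r)
    )


strong = quorum_overlaps(3, 2, 2)   # N=3, R=2, W=2: overlapping quorums
fast = quorum_overlaps(3, 1, 1)     # N=3, R=1, W=1: faster, may read stale data
```

Lower R and W values give faster, more available operations at the price of possibly stale reads, while higher values buy consistency at the cost of latency. That is what "tunable" means here.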

Riak

- Written in: Erlang & C, some Javascript

- Main point: Fault tolerance

- License: Apache

- Protocol: HTTP/REST

- Tunable trade-offs for distribution and replication (N, R, W)

- Pre- and post-commit hooks,

- for validation and security.

- Built-in full-text search

- Map/reduce in javascript or Erlang

- Comes in "open source" and "enterprise" editions

Best used: If you want something Cassandra-like (Dynamo-like), but no way you're gonna deal with the bloat and complexity. If you need very good single-site scalability, availability and fault-tolerance, but you're ready to pay for multi-site replication.

For example: Point-of-sales data collection. Factory control systems. Places where even seconds of downtime hurt.

HBase

(With the help of ghshephard)

- Written in: Java

- Main point: Billions of rows X millions of columns

- License: Apache

- Protocol: HTTP/REST (also Thrift)

- Modeled after BigTable

- Map/reduce with Hadoop

- Query predicate push down via server side scan and get filters

- Optimizations for real time queries

- A high performance Thrift gateway

- HTTP supports XML, Protobuf, and binary

- Cascading, hive, and pig source and sink modules

- Jruby-based (JIRB) shell

- No single point of failure

- Rolling restart for configuration changes and minor upgrades

- Random access performance is like MySQL

Best used: Use it when you need random, realtime read/write access to your Big Data.

For example: Facebook Messaging Database (more general example coming soon)

Of course, all systems have many more features than what's listed here. I only wanted to list the key points that I base my decisions on. Also, development of all of them is very fast, so things are bound to change. I'll do my best to keep this list updated.

There’s a lengthy discussion of Kristóf’s post on YCombinator’s Hacker News.

<Return to section navigation list>
