Windows Azure and Cloud Computing Posts for 1/13/2011+

A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control and Service Bus

- Windows Azure Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

Panagiotis Kefalidis described Patterns: Windows Azure – Upgrading your table storage schema without disrupting your service in an 8/19/2010 post (missed when published):

In general, there are two kinds of updates you’ll mainly perform on Windows Azure. One is changing your application’s logic (the so-called business logic), e.g. the way you handle or read queues, how you process data, or even protocol updates; the other is schema updates. I’m not referring to SQL Azure schema changes, which are a different scenario and approach, but to Table storage schema changes, and to be more precise only to specific entity types because, as you already know, Table storage is schema-less. The same logic as in in-place upgrades applies here too: introduce a hybrid version, which handles both the new and the old version of your entity (newly introduced properties), and then proceed to your “final” version, which handles only the new version of your entities (and properties). It’s a very easy technique, and I’m explaining how to add new properties and, of course, how to remove them, although that’s a less likely scenario.

During my presentation at Microsoft DevDays “Make Web not War”, I’ve created an example using a Weather service and an entity called WeatherEntry, so let’s use it. My class looks like this:

    [DataServiceKey("PartitionKey", "RowKey")]
    public class WeatherEntry : TableServiceEntity
    {
        public WeatherEntry()
        {
            PartitionKey = "athgr";
            RowKey = string.Format("{0:10}_{1}", DateTime.MaxValue.Ticks - DateTime.Now.Ticks, Guid.NewGuid());
        }

        public DateTime TimeOfCapture { get; set; }
        public string Temperature { get; set; }
    }

There is nothing special about this class. I use two custom properties, TimeOfCapture and Temperature, and I’m going to make a small change and add “SchemaVersion”, which is needed to achieve the functionality I want. When I want to create a new entry, all I do now is instantiate a WeatherEntry, set the values and use a helper method called AddEntry to persist my changes.

    public void AddEntry(string temperature, DateTime timeofc)
    {
        this.AddObject("WeatherData", new WeatherEntry
        {
            TimeOfCapture = timeofc,
            Temperature = temperature,
            SchemaVersion = "1.0"
        });
        this.SaveChanges();
    }

I’m using TableServiceContext from the newly released StorageClient, and methods like UpdateObject, DeleteObject and AddObject exist in my data service context, where the AddEntry helper method resides. At the moment my Table schema looks like this:

It’s pretty obvious there is no special handling when my entities are saved, but this is about to change in my hybrid version.

The hybrid

I made some changes to my base class and added a new property. It holds the temperature sample area, in my case Spata, where Athens International Airport is.

My class looks like this now:

    [DataServiceKey("PartitionKey", "RowKey")]
    public class WeatherEntry : TableServiceEntity
    {
        public WeatherEntry()
        {
            PartitionKey = "athgr";
            RowKey = string.Format("{0:10}_{1}", DateTime.MaxValue.Ticks - DateTime.Now.Ticks, Guid.NewGuid());
        }

        public DateTime TimeOfCapture { get; set; }
        public string Temperature { get; set; }
        public string SampleArea { get; set; }
        public string SchemaVersion { get; set; }
    }

So, this hybrid client somehow has to handle entities from version 1 and entities from version 2, because my schema is already on version 2. How do you do that? The main idea is that you retrieve an entity from table storage and check whether SampleArea and SchemaVersion have a value. If they don’t, set a default value and save them. In my case the schema version number has to be 1.5, as this is the default schema number for this hybrid solution. One key point in this procedure: before you upgrade your client to this hybrid, you roll out an update enabling the “IgnoreMissingProperties” flag on your TableServiceContext. If IgnoreMissingProperties is true, when a version 1 client tries to access entities that are on version 2 and have those new properties, it WON’T raise an exception and will just ignore them.

    var account = CloudStorageAccount.FromConfigurationSetting("DataConnectionString");
    var context = new WeatherServiceContext(account.TableEndpoint.ToString(), account.Credentials);

    /* Ignore missing properties on my entities */
    context.IgnoreMissingProperties = true;

Remember, you have to roll out this update BEFORE you upgrade to the hybrid.

Whenever I update an entity in Table Storage, I check its schema version, and if it’s not “1.5” I update it and set a default value for SampleArea:

    public void UpdateEntry(WeatherEntry wEntry)
    {
        if (wEntry.SchemaVersion.Equals("1.0"))
        {
            /* If schema version is 1.0, update it to 1.5
             * and set a default value on SampleArea */
            wEntry.SchemaVersion = "1.5";
            wEntry.SampleArea = "Spata";
        }

        /* Put some try catch here to
         * catch concurrency exceptions */
        this.UpdateObject(wEntry);
        this.SaveChanges();
    }

My schema now looks like this. Notice that both versions of my entities co-exist and are handled just fine by my application.

Upgrading to version 2.0

Upgrading to version 2.0 is now easy. All you have to do is change the default schema number when you create a new entity to version 2.0 and of course update your “UpdateEntry” helper method to check if version is 1.5 and update the value to 2.0.

    this.AddObject("WeatherData", new WeatherEntry
    {
        TimeOfCapture = timeofc,
        Temperature = temperature,
        SchemaVersion = "2.0"
    });

and

    public void UpdateEntry(WeatherEntry wEntry)
    {
        if (wEntry.SchemaVersion.Equals("1.5"))
        {
            /* If schema is version 1.5 it already has a default value;
             * all we have to do is update the schema version so our
             * system won't ignore the default value */
            wEntry.SchemaVersion = "2.0";
        }

        /* Put some try catch here to
         * catch concurrency exceptions */
        this.UpdateObject(wEntry);
        this.SaveChanges();
    }

Whenever you retrieve an entity from Table Storage, you have to check whether it’s on version 2.0. If it is, you can safely use its SampleArea value, which is no longer the default. That’s because the schema version changes only when you actually call “UpdateEntry”, which means you had the chance to change SampleArea to a non-default value. But if it’s on version 1.5, you have to ignore the value or update it to a new, correct one.

If you do want to use the default value anyway, you can create a temporary worker role which will scan the whole table and update all of your schema version numbers to 2.0.
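The version checks described above can be sketched in a few lines. The following is a minimal Python illustration, not the author's C# code: entities are plain dictionaries, the function names `upgrade_on_update` and `trusted_sample_area` are hypothetical, and both upgrade steps (1.0 to 1.5 in the hybrid, 1.5 to 2.0 in the final client) are folded into one helper for brevity.

```python
DEFAULT_SAMPLE_AREA = "Spata"  # the default the hybrid client writes in the post


def upgrade_on_update(entity: dict) -> dict:
    """Return a copy of the entity moved one step along 1.0 -> 1.5 -> 2.0.

    Assumption: an entity without a SchemaVersion is treated as version 1.0.
    """
    upgraded = dict(entity)
    version = upgraded.get("SchemaVersion", "1.0")
    if version == "1.0":
        # Hybrid step: stamp the interim version and fill in the default.
        upgraded["SchemaVersion"] = "1.5"
        if not upgraded.get("SampleArea"):
            upgraded["SampleArea"] = DEFAULT_SAMPLE_AREA
    elif version == "1.5":
        # Final step: the entity has been through an update, so promote it.
        upgraded["SchemaVersion"] = "2.0"
    return upgraded


def trusted_sample_area(entity: dict):
    """Return SampleArea only for 2.0 entities; None means 'ignore the value'."""
    if entity.get("SchemaVersion") == "2.0":
        return entity.get("SampleArea")
    return None
```

A reader on the 2.0 client would call `trusted_sample_area` after every fetch and fall back to its own handling when it returns `None`.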

How about when you remove properties?

That’s a really easy modification. If you remove a property, you can pass a SaveChangesOptions value called ReplaceOnUpdate to SaveChanges(), which will overwrite your entity with the new schema. Don’t forget to update your schema version number to something unique and to put some checks into your application to avoid failures when it tries to read properties that no longer exist in the newer schema version.

this.SaveChanges(SaveChangesOptions.ReplaceOnUpdate);

The Windows Management and Scripting Blog published Introduction to Table Storage in Windows and SQL Azure in January 2011:

In addition to SQL Azure, Windows Azure features four forms of persistent storage: Tables, Queues, Blobs and Drives. In this article we will focus on Tables.

Table Storage

Tables are a fascinating new storage method offered in Windows Azure and are Microsoft’s answer to Amazon SimpleDB. The SQL Azure database offers the wealth of features a modern database might be expected to provide, but for many purposes it is overkill. In the past nearly any structured data had to go into the database and incur the performance penalty that entailed. With Azure Tables, data that has a relatively simple structure no longer has to (below we use the example of Movies data, a listing of movies with attributes such as title, category and date, which is not a very complex dataset).

Tables offer structured data storage for data that has relatively simple relationships. Data is stored in rows, and because tables are less structured and don’t have the overhead of a full database, the service is massively scalable and offers very high performance. The interface to Azure Tables is the familiar .NET suite of classes, LINQ, and REST.

To create an Azure Table, first create a storage service in the Windows Azure Developer Portal, then create a storage account, and from there create tables. Each table is scoped to its storage account, so tables with the same name can exist in different storage accounts.

Table Data Model

Tables are composed of rows and columns. For the purposes of the Azure Table Data Model, rows are entities and columns are properties. For an entity a set of properties can be defined, but three properties are mandatory: PartitionKey, RowKey and Timestamp. PartitionKey and RowKey can be thought of as a clustered index that uniquely identifies an entity and defines the sort order. Timestamp is a read-only property.

Partitions

Table partitions can be thought of as units of scale within Windows Azure that are used for load balancing. Tables are partitioned based on the PartitionKey; all entities with the same PartitionKey will be served by a single server. Therefore selection of an appropriate PartitionKey is central to achieving scalability and higher throughput on Windows Azure. It is vital to note that Azure throttles an account when resource utilization is very high; appropriate partitioning greatly reduces the potential for this by allowing the load to be distributed over different servers. The RowKey provides uniqueness within a single partition.

Partitions can be thought of as higher-level categories for the data, with RowKeys as the lower-level details. For example, for a ‘Movies’ table the PartitionKey could be the category of the movie, such as comedy or sci-fi, and the RowKey could be the movie title (hopefully the combination of category and title would ensure uniqueness). Under load the table could be split onto different servers based on the category.

For a write-intensive scenario such as logging, the PartitionKey would normally be a timestamp. In this instance partitioning is a problem, as writes always append to the bottom of the table, and partitioning based on a range will not be efficient because the final partition will always be the only active one. The recommended solution is to add a prefix to the timestamp to ensure that the latest write operations are sent to different partitions.

In database design, tables should be split by data type. For example, in a retailer’s database, data of the type ‘customer’, with fields such as ‘name’ and ‘address’, should be in a separate table from ‘orders’, which contains only order data such as ‘product’ and ‘order_date’. But in Azure Tables both could be stored efficiently in the same table, as no space would be taken up by the empty fields (such as ‘order_date’ for a ‘customer’). To differentiate between the two types of data, a ‘Kind’ property (column) can be added to each entity (row); it is in effect the table name the entity would have if the data were separated into two tables.
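One way to picture the prefix idea is the short Python sketch below. The article does not say how the prefix should be chosen; hashing the log source into a fixed number of buckets is an assumption made here for illustration, as are the names `log_partition_key` and `BUCKETS` and the hourly timestamp format.

```python
import zlib
from datetime import datetime, timezone

BUCKETS = 8  # hypothetical number of prefixes to spread writes over


def log_partition_key(source: str, when: datetime, buckets: int = BUCKETS) -> str:
    """Build a PartitionKey of the form '<bucket>_<yyyymmddHH>'.

    crc32 is used because it is stable across runs, so the same source
    always maps to the same bucket.
    """
    bucket = zlib.crc32(source.encode("utf-8")) % buckets
    return f"{bucket:02d}_{when:%Y%m%d%H}"


now = datetime(2011, 1, 13, 9, tzinfo=timezone.utc)
keys = {log_partition_key(f"web-{i}", now) for i in range(32)}
```

With this layout the newest entries land in up to `BUCKETS` partitions instead of one, at the cost of having to query every prefix to read a complete time range.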

Table Operations

The operations are relatively similar to those of a conventional database: tables (which are analogous to the database) can be created, queried and deleted. Entities (rows) can be inserted, deleted, queried and updated. There are two methods of update: Merge and Replace. Merge allows a partial update of the entity; properties that are not supplied with the update are left unchanged (only the properties provided in the update are updated). Replace updates all the properties of an entity; if a property is not provided in the update, it is removed from the entity. A newly introduced feature is the Entity Group Transaction, which is a transaction over a single partition.
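The difference between the two update methods can be shown with plain Python dictionaries standing in for entities. This is only an illustrative sketch: `merge_update` and `replace_update` are hypothetical names, not part of any Azure library, and the key properties are assumed to survive a Replace because they identify the entity.

```python
KEY_PROPERTIES = ("PartitionKey", "RowKey")


def merge_update(stored: dict, update: dict) -> dict:
    """Merge: properties missing from the update keep their stored values."""
    return {**stored, **update}


def replace_update(stored: dict, update: dict) -> dict:
    """Replace: properties missing from the update are dropped from the entity."""
    keys = {name: stored[name] for name in KEY_PROPERTIES}
    return {**keys, **update}


movie = {"PartitionKey": "Comedy", "RowKey": "Airplane", "Rating": 4, "Year": 1980}
merged = merge_update(movie, {"Rating": 5})      # Year is still present
replaced = replace_update(movie, {"Rating": 5})  # Year is gone
```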

Continuation Tokens

When a single entity (row) is queried, the result is returned as with a database query. But when a range is requested, Azure Tables can return only 1,000 rows in a result set. If the result set has 1,000 rows or fewer, it is returned whole; if it is larger than 1,000 rows, the first 1,000 rows are returned together with a continuation token. The table is then re-queried with the continuation token passed back until the query completes.

Continuation tokens are returned for all queries whose result set is larger than 1,000 rows. They are also returned if a query takes longer than 5 seconds (the maximum allowed by Azure, after which the results gathered so far are returned with a continuation token and the query must be rerun). Furthermore, continuation tokens are returned when a partition range boundary is hit.

Optimizing Queries

Querying a table with a range is a very serial process, with result sets being sent to the client and continuation tokens being sent back for processing until the query completes. This structure does not allow for any parallel processing. To take advantage of parallel processing, the query should be split into ranges based on the PartitionKey, for example instead of
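The re-query loop can be sketched as follows. This Python example simulates the service with an in-memory list; `query_page` is a hypothetical stand-in for a real table query, and the token is simply the offset of the next row, which is an assumption (real continuation tokens are opaque values).

```python
PAGE_LIMIT = 1000  # maximum rows per result set, as described above


def query_page(rows, token=None, limit=PAGE_LIMIT):
    """Return one page of rows plus a continuation token (None when done)."""
    start = token or 0
    page = rows[start:start + limit]
    next_start = start + limit
    next_token = next_start if next_start < len(rows) else None
    return page, next_token


def query_all(rows):
    """Keep re-querying with the returned token until none comes back."""
    results, token = [], None
    while True:
        page, token = query_page(rows, token)
        results.extend(page)
        if token is None:
            return results
```

A caller that only needs the first page can stop after one call to `query_page` and keep the token to resume later.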

[cc lang='sql' ]Select * from Movies where Rating > 4[/cc]

Use

[cc lang='sql' ]

Select * from Movies where PartitionKey >= 'A' and PartitionKey < 'D' and Rating > 4

Select * from Movies where PartitionKey >= 'D' and PartitionKey < 'G' and Rating > 4[/cc]

This enables the query to run in a parallel manner.
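As a rough illustration of issuing the range queries side by side, the Python sketch below runs one filter per PartitionKey range on a thread pool. The in-memory `MOVIES` list and the helper names are hypothetical; a real client would send each range as its own table query.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical sample data standing in for the Movies table.
MOVIES = [
    {"PartitionKey": "Action", "RowKey": "Heat", "Rating": 5},
    {"PartitionKey": "Comedy", "RowKey": "Clue", "Rating": 3},
    {"PartitionKey": "Comedy", "RowKey": "Airplane", "Rating": 5},
    {"PartitionKey": "Drama", "RowKey": "Casablanca", "Rating": 5},
]


def query_range(rows, low, high, min_rating):
    """One range query: low <= PartitionKey < high and Rating > min_rating."""
    return [r for r in rows
            if low <= r["PartitionKey"] < high and r["Rating"] > min_rating]


def parallel_query(rows, ranges, min_rating):
    """Run every range query concurrently and concatenate results in range order."""
    with ThreadPoolExecutor(max_workers=len(ranges)) as pool:
        parts = pool.map(
            lambda rng: query_range(rows, rng[0], rng[1], min_rating), ranges)
        return [row for part in parts for row in part]


top_rated = parallel_query(MOVIES, [("A", "D"), ("D", "G")], 4)
```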

Similar to SQL Server, views can also be created to handle popular queries.

Be careful using ‘OR’ in queries. Azure Tables do not do any optimization on these queries. It is optimal to split the query into several separate queries.

Entity Group Transactions

EGTs offer transaction-like operations on an Azure table. Up to 100 insert/update/delete commands can be performed in a single transaction, provided the payload is under 4MB.
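A client that wants to use EGTs has to group its pending operations accordingly. The Python sketch below plans batches that stay within a single partition and the 100-operation limit; `plan_batches` is a hypothetical helper, and the 4MB payload limit is deliberately not checked here.

```python
from collections import defaultdict

MAX_BATCH_OPS = 100  # operation limit per transaction, as stated above


def plan_batches(entities, max_ops=MAX_BATCH_OPS):
    """Group entities by PartitionKey, then cut each group into chunks of max_ops."""
    by_partition = defaultdict(list)
    for entity in entities:
        by_partition[entity["PartitionKey"]].append(entity)

    batches = []
    for rows in by_partition.values():
        for start in range(0, len(rows), max_ops):
            batches.append(rows[start:start + max_ops])
    return batches


pending = ([{"PartitionKey": "a", "RowKey": str(i)} for i in range(250)]
           + [{"PartitionKey": "b", "RowKey": str(i)} for i in range(30)])
batch_sizes = [len(batch) for batch in plan_batches(pending)]
```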


<Return to section navigation list>

SQL Azure Database and Reporting

Intertech reported on 1/13/2011 the availability of an Intro to Azure Data Synch Recording video segment:

A big thanks to Liam Cavanagh from the SQL Azure team for presenting to our user group yesterday. To view the recording click here. To find out more about the Windows Azure User Group, click here. We encourage you to register for the group (free) and you will be notified of upcoming events. We also raffle off $1,000's of products from our sponsors. Hope to see you at an upcoming meeting.

Steve Yi posted Real-World SQL Azure: Interview with James Chen, Chief Technology Officer at LinkShare Labs on 1/12/2011:

As part of the Real-World SQL Azure series, we talked to James Chen, the Chief Technology Officer at LinkShare Labs, a division of Rakuten, about how his company is taking advantage of the Windows Azure platform, and particularly Microsoft SQL Azure, to power its new LinkShare Lightning application.

MSDN: Tell us about LinkShare. What services do you offer and what is your corporate vision?

Chen: LinkShare offers online marketing services, such as search engine marketing, lead generation, and affiliate marketing to connect advertisers with publishers, to help them both profitably grow their revenue. Going forward, our vision is to provide a single, flexible performance-marketing platform for the world. It will be the bridge from any publisher to any advertiser in any country. That means, for example, that a publisher in the U.S. could get compensated for lead generation in Japan.

MSDN: What differentiates LinkShare in the online advertising marketplace?

Chen: Unlike a generic ad network, LinkShare gets paid based on conversions—actual completed sales—not just the number of ad impressions or users’ clicks. But more than that, what differentiates us from competitors is that we focus on big, name-brand advertisers, and we offer expert consultative services along with our advanced patented technologies.

MSDN: What prompted LinkShare to start looking at cloud-based solutions?

Chen: It comes back to our vision. We wanted to provide a truly global system so that we could develop advertising applications that can be used anywhere. Behind this goal were two drivers: performance and cost. We needed a technology platform to build and run our applications on that could scale cost-effectively and that would require minimal development effort and support global deployment. Only a cloud platform—and cloud-based databases in particular—could meet those criteria. As a first step, we wanted to build and deploy our LinkShare Lightning cost-per-action marketing solution as a cloud-based application.

MSDN: Did you consider any cloud platforms besides the Windows Azure platform?

Chen: We looked at the other two leading providers. The first one would have required too much investment to make it productive for our developers. What made Windows Azure platform the clear winner over the second one is that Microsoft is a world-class provider of ‘platform as a service.’ Additionally, the commitment of Microsoft to cloud innovation and feature development is very important to us. Every quarter, new Windows Azure platform tools come out to support easy development, whereas with some competitors’ platforms, you have to do a lot of the work yourself by piecing together open source solutions to complete your development stack.

MSDN: LinkShare Lightning is highly data intensive. How does SQL Azure meet your database needs?

Chen: SQL Azure offers cost-effective, on-demand scalability. We have peak demand during the holiday shopping season that’s 10 times higher than the rest of the year. We don’t want to add hardware for extra seasonal capacity, or change our software to handle the load for a short period of time; and with SQL Azure, we don’t have to. The best part of using SQL Azure is that we know our application is going to work no matter how big we scale it out.

MSDN: What are your plans for the platform going forward, and what benefits do you expect?

Chen: In order for us to scale our business globally and also profitably, we need a solution like the Windows Azure platform. Over time, this will save tens of millions of dollars a year and enable us to expand rapidly.

The main benefit of the platform is that we really don’t have to manage Windows Azure or SQL Azure in the traditional way that on-premises software and data centers require. I think almost all of the software development shops in the world will move in the direction we’re going: we'll handle development full time, and everything else will be taken care of for us in the cloud. When it comes to providing the best ‘platform as a service’ to developers, I think Microsoft is the visionary leader by far.

Read the full story at: http://www.microsoft.com/casestudies/casestudy.aspx?casestudyid=4000008989

To learn more, visit: www.sqlazure.com

The Windows Management and Scripting blog published SQL Azure migration to the cloud and back in January 2011:

As SQL Azure becomes more popular and widely used, users will have to develop reliable processes for migrating data to the cloud or bringing it back, either to local servers or to an on-premises data warehouse. In more complex scenarios, some companies need to synchronize the data between local and remote Azure databases.

In this article, the first in a two-part series on SQL Azure migration and synchronization, I will examine several options for moving data. The second will focus on more complex scenarios in which ongoing data synchronization is needed.

For one-directional data movement, use one of the following technologies: the SQL Server Import and Export Wizard, the bcp utility, SQL Server Integration Services (SSIS), or a community tool called SQL Azure Migration Wizard. Let’s discuss these in detail.

SQL Server Import and Export Wizard.

This utility generally works well for a one-time data migration, or for an occasional data refresh if you don’t mind doing it manually. The interface is simple: you run the wizard, select the tables you want to migrate, determine their destinations and perhaps tweak the column mapping. You can run it from SQL Server Management Studio and connect to SQL Azure as long as you are using the SQL Server 2008 R2 client tools. Running it is a little tricky because you will not see SQL Azure as an option for data source or destination. Instead, select the “.NET Framework Data Provider for SQL Server” option and then configure the properties dialog by supplying the SQL Azure server, username and password, as shown in the dialog box in Figure 1.

Figure 1

If your data is sensitive, make sure to set the Encrypt option to “True” so that your data travels over the Internet encrypted. The wizard might fail because it scripts tables without indexes by default, and SQL Azure requires every table to have a clustered index. If you are creating a new table, the wizard doesn’t create any indexes, and data inserts fail. Therefore, you have to either create tables with a clustered index on the destination first, or in the wizard click “Edit Mappings” for each table and manually modify the “CREATE TABLE” script to create a primary key as well.

Aside from this, my experiences with the wizard and SQL Azure haven’t been very good. Small tables migrated OK, but I was getting timeouts on larger tables. You get very little control over the handling of failures, and you cannot set the batch size. For that reason, I recommend using SSIS over the wizard, especially if you have large tables and need more control over the migration process.

SQL Server Integration Services.

Using SSIS with Azure is pretty straightforward, as long as you configure your connection in the way I described above. Also, you need to have the R2 version of SSIS to connect to SQL Azure. There are several differences from working with the SQL Server back end. Data transfers are much slower because you are sending data over the Internet, and also because the disk I/O in Azure in many cases doesn’t measure up to high-end database servers. You should encrypt the data, but that slows down data transfers as well.

Like with the wizard, I experienced frequent timeouts with data uploads. Keep in mind that your package might fail if there is a connectivity blip. Therefore, it might make sense to design the packages in a way so that when you restart them, they resume the work at the point of failure, as opposed to restarting all table migrations.

One way of doing that is to implement a logging table that keeps track of what tables have been uploaded. SSIS is the best tool for the job if you need to implement workflow logic, use transformations or send over data from flat files. If you use SSIS, make sure that in the Data Flow task you configure the ADO.NET destination to use the “Use Bulk Insert when possible” option. That allows you to use bulk load capabilities, and in my experience, using that option made data transfers run about four times quicker. Also, you may consider changing the default Batch Size to 1,000 or so.

If you lose the connection during data upload, you will not have to start over as you would with a batch size of 0. The data would be committed to the server in batches of 1,000, and you might be able to resume transfer without starting over, as long as you can start sending data from the point where the package failed.
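The effect of committing in batches can be demonstrated outside SSIS. The Python sketch below uses the standard `sqlite3` module as a stand-in destination; the `dest` table, the `fail_after` switch that simulates a dropped connection, and the use of the committed row count as the resume point are all assumptions made for illustration (it also assumes the source rows arrive in a stable order).

```python
import sqlite3


def upload_in_batches(conn, rows, batch_size=1000, fail_after=None):
    """Insert rows in committed batches, resuming after what is already stored."""
    conn.execute("CREATE TABLE IF NOT EXISTS dest (id INTEGER PRIMARY KEY, val TEXT)")
    conn.commit()
    # Resume point: rows committed by a previous (possibly failed) run.
    done = conn.execute("SELECT COUNT(*) FROM dest").fetchone()[0]
    for start in range(done, len(rows), batch_size):
        if fail_after is not None and start >= fail_after:
            raise ConnectionError("simulated connectivity blip")
        conn.executemany("INSERT INTO dest (id, val) VALUES (?, ?)",
                         rows[start:start + batch_size])
        conn.commit()  # each batch is durable before the next one starts
    return conn.execute("SELECT COUNT(*) FROM dest").fetchone()[0]
```

If the first run dies after two batches, a second call with the same rows skips the 2,000 committed rows and sends only the remainder.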

The bcp utility.

Another option for uploading or downloading data is using the bcp utility. There is a learning curve associated with using this command-line utility. But if you are comfortable with it, there is a compelling reason for using it: in general, bcp is the fastest way to load data. In most cases, it outperforms Data Transformation Services or SSIS. Other than that, using bcp with Azure works the same as it does when used against local servers.

SQL Azure Migration Wizard.

This tool (SQLAzureMW for short) is an open source utility that can help with your SQL Azure migration. It works really well, and I found it to be much more reliable and flexible than the wizard built into SQL Server Management Studio. You can get it from the CodePlex website, including the source code. The wizard supports the migration of many types of database objects, as you can see in Figure 2.

Figure 2

Once you select the objects you want to migrate, SQLAzureMW scripts out the objects and modifies them behind the scenes to make the syntax compatible with SQL Azure syntax. Then it uses the bcp utility and generates a DAT file for each table, and that contains the data in binary format, as in Figure 3.

Figure 3

Once SQLAzureMW connects to the SQL Azure server, it recreates the objects from generated scripts. Finally, it runs the bcp utility to upload data to the cloud, as seen in Figure 4.

Figure 4

SQLAzureMW provides a user-friendly interface and a lot of options for migrating your data and other objects. Keep in mind though that since it generates a data file for each table, you need to make sure you have sufficient space on the disk. You might still be better off using SSIS for very large tables or for using its workflow capabilities.

Here is the related SQL Azure data synchronization post:

In my previous article, “SQL Azure migration: To the cloud and back again,” I discussed the options for moving data between local SQL Server instances and SQL Azure. In this article, we will look at more complex data exchange scenarios, including data synchronization and refreshing data in SQL Azure while maintaining availability.

Implementing data synchronization typically requires some up-front analysis to determine the best process and most suitable tools and technologies for the job. Among other things, you need to consider the number of tables to synchronize, required refresh frequency (this could differ greatly among tables in the same database), application uptime requirements and size of the tables. In general, the larger the tables are and the higher the required uptime, the more work is required on your part to implement data synchronization so that it doesn’t interfere with the applications using the database.

One of the simplest approaches to data synchronization is to create staging tables in the destination database and load them with data from the source database. In SQL Azure, do this using SQL Server Integration Services or the bcp utility, as discussed in the previous article. Once the data is in staging tables, run a series of T-SQL statements to compare the data between staging and “master” tables and get them in sync. Here is a simple sequence I’ve been using successfully on many projects:

- Use DELETE FROM command to join the staging and the master table and delete all rows from the master table that have no match in the staging table.

- Use UPDATE FROM command to join the staging table and the master table and update the records in the master table.

- Use INSERT command and insert into the master tables the rows that exist only in the staging table.
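The three steps above can be tried out with Python's built-in `sqlite3` module. This is a sketch in SQLite syntax rather than the T-SQL the article has in mind (SQLite has no joined DELETE FROM or UPDATE FROM of the same form, so subqueries are used), and the `master` and `staging` tables with `id` and `val` columns are hypothetical.

```python
import sqlite3


def sync_master_from_staging(conn):
    """Bring master in line with staging: delete, then update, then insert."""
    with conn:  # one transaction; rolled back automatically on error
        # 1. Remove master rows that have no match in staging.
        conn.execute("DELETE FROM master WHERE id NOT IN (SELECT id FROM staging)")
        # 2. Refresh master rows that exist in both tables.
        conn.execute(
            "UPDATE master SET val = "
            "(SELECT s.val FROM staging AS s WHERE s.id = master.id) "
            "WHERE id IN (SELECT id FROM staging)")
        # 3. Add rows that exist only in staging.
        conn.execute(
            "INSERT INTO master (id, val) "
            "SELECT id, val FROM staging WHERE id NOT IN (SELECT id FROM master)")
```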

If you are using SQL Server 2008 or newer, utilize the MERGE statement to combine the second and third steps into a single command. The MERGE statement is nicknamed UPSERT because it combines the ability to insert new rows and update existing rows in a single statement. So, it lends itself nicely to data synchronization.

Using the technique I described works well mainly for small to medium-sized tables because the table will be temporarily locked and therefore unavailable while these updates are taking place. But in my experience, it is a minor disruption that can be mitigated by synchronizing during low database usage times or by breaking up the updates into batches. The disadvantage here is that you have to implement custom code for each table.

I’ve also had success with a slightly modified approach, which takes away the need to implement a set of scripts for each table. After you load the staging tables, run the sp_rename stored procedure to swap the names of the master and the staging table. This can be done very quickly, even on many tables. Run each table swap inside a TRY/CATCH block and roll back the transaction if the swap does not succeed.
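The swap-and-roll-back pattern looks roughly like this when tried against SQLite from Python. It is an analogue only: `ALTER TABLE ... RENAME TO` stands in for sp_rename, Python's try/except stands in for TRY/CATCH, the `swap_tables` name and the `_old` suffix are hypothetical, and the connection is assumed to be opened with `isolation_level=None` so that the explicit BEGIN/COMMIT statements control the transaction.

```python
import sqlite3


def swap_tables(conn, master, staging):
    """Swap two table names atomically; return False and undo on any failure."""
    tmp = f"{master}_old"
    try:
        conn.execute("BEGIN")
        conn.execute(f'ALTER TABLE "{master}" RENAME TO "{tmp}"')
        conn.execute(f'ALTER TABLE "{staging}" RENAME TO "{master}"')
        conn.execute(f'ALTER TABLE "{tmp}" RENAME TO "{staging}"')
        conn.execute("COMMIT")
        return True
    except sqlite3.Error:
        # Undo any rename that already happened inside this transaction.
        if conn.in_transaction:
            conn.execute("ROLLBACK")
        return False
```

After a successful swap the old master data remains available under the staging name, ready to be truncated and reloaded on the next refresh.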

Another technique many companies use to refresh data and at the same time keep it available is to simply maintain two copies of the database. One database is used by applications and the other one is used by the load process to truncate and reload tables. Once the load is done, you can rename both databases and swap them so that the one with the fresh data becomes the current database. SQL Azure initially didn’t support renaming of databases, but that feature works now. As an alternative to renaming, store a connection string pointing to a SQL Azure database in a local database and have your data load process modify the connection string and point to the refreshed database, that is, if the data load completed successfully.

Another option is to use the Microsoft Sync Framework, a platform for synchronizing databases, files, folders and other items. It allows you to programmatically synchronize databases via ADO.NET providers, and as of version 2.1 you can use the framework to synchronize between SQL Server and SQL Azure. Describing all the features and capabilities of the Sync Framework is beyond the scope of this article. For more information visit the Microsoft Sync Framework Developer Center. One of the advantages of the framework is that once you get up to speed with the basics, you can write applications that give you full control over SQL Azure data synchronization. Among other things, you will be able to utilize its features, such as custom conflict detection and resolution, and change tracking. Both of those features come in handy if you need to implement bidirectional data synchronization.

Microsoft developers used the Sync Framework to develop and release an application called Sync Framework Power Pack for SQL Azure. You can download and install this application, but first install the Microsoft Sync Framework SDK. The application runs as a wizard. After you specify the local and the SQL Azure database, select the tables you want to synchronize. You can also specify how you want to handle the situation in which the same row is updated in both databases. Figure 1 illustrates how you can choose whether the local database or SQL Azure database wins the resolution.

Figure 1

In the last step of the wizard, specify whether it should create a 1 GB or a 10 GB database in SQL Azure. The tool creates the specified database in SQL Azure and sets up both databases with the objects needed for synchronization. It will create INSERT/UPDATE/DELETE triggers on each synchronized table. Also, for each table it will create another table with the “_tracking” suffix. It also creates a couple of database configuration tables called scope_config and scope_info. As data gets modified in either database, the triggers update the tracking tables with the details that will be used by the Sync Framework when it’s time to synchronize.

The wizard also creates a SQL Agent job that kicks off the sync executable, passing the appropriate parameters. All you need to do is schedule the job to synchronize as often as needed. The tool is not very fast, but it works fairly well and, in many cases, it can handle your synchronization requirements. The largest drawback is that when you run the wizard, it insists on creating a new SQL Azure database, and it fails if you specify an existing database. So, if you ever want to modify which tables should be synchronized, drop the Azure database and start over.
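To get a feel for trigger-based change tracking, here is a small Python sketch using `sqlite3`. It is a loose analogue and not the schema the Power Pack actually generates: the two-column tracking table, the trigger names and the `enable_tracking` helper are hypothetical, and the tracked table is assumed to have an `id` column.

```python
import sqlite3


def enable_tracking(conn, table):
    """Create <table>_tracking plus INSERT/UPDATE/DELETE triggers that log changes."""
    conn.execute(f'CREATE TABLE "{table}_tracking" (id INTEGER, op TEXT)')
    # DELETE triggers can only see the OLD row; the others log the NEW row.
    for op, ref in (("INSERT", "NEW"), ("UPDATE", "NEW"), ("DELETE", "OLD")):
        conn.execute(
            f'CREATE TRIGGER "{table}_{op.lower()}_trk" AFTER {op} ON "{table}" '
            f'BEGIN INSERT INTO "{table}_tracking" (id, op) '
            f"VALUES ({ref}.id, '{op}'); END")


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE items (id INTEGER PRIMARY KEY, val TEXT)")
enable_tracking(conn, "items")
conn.execute("INSERT INTO items VALUES (1, 'a')")
conn.execute("UPDATE items SET val = 'b' WHERE id = 1")
conn.execute("DELETE FROM items WHERE id = 1")
changes = conn.execute("SELECT id, op FROM items_tracking ORDER BY rowid").fetchall()
```

A sync process would read the tracking table to decide which rows to send to the other side and then clear or mark the entries it has handled.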

Related posts

<Return to section navigation list>

MarketPlace DataMarket and OData

Health 2.0 LLC offered a $5,000 prize, a ticket to the Health 2.0 San Diego conference, and a live on-stage demo in an Analyze This post of January 2011:

Analyze the free medical record dataset from Microsoft Azure DataMarket and Practice Fusion to answer the biggest healthcare questions in the US today. Use the de-identified data however you’d like: visualize healthcare trends, find adverse drug reactions, chart chronic disease, mashup the results with other Azure data or build an app. Basically, pick a pressing healthcare question and answer it using the tools provided by Microsoft and Practice Fusion.

We’re looking for the wow factor. Focus on data visualization to create healthcare studies and powerful apps built around the free dataset.

Bonus points for:

- Solutions that can be used to deliver better care, improve public health

- Ideas that can be directly applied by patients or doctors

- Entries related to chronic disease

- Applications that are accessible from anywhere by anyone (i.e. non-platform-specific applications that would work on any mobile device, generic HTML, etc.)

- Utilizing bleeding/cutting-edge technology (i.e. HTML5, GPS-based/location-aware tagging, social media, etc.)

- Plain English – translation of complex medical findings or technology into something easily understood

Terms and Conditions

Please submit your final challenge entry with three elements:

- One page write-up about the health question you asked and the solution you created

- Instructions for how we can demo the app, visualization or other tool you created

- A short DIY video showcasing your entry

Deadline for Submissions: February 28, 2011

Prize

$5,000 + ticket to Health 2.0 San Diego + live demo on stage

Challenger

Microsoft Azure DataMarket & Practice Fusion

Additional Resources

Visit the Microsoft Azure DataMarket to download the free Practice Fusion medical research dataset online. This HIPAA-compliant, clinical data contains 5,000 records including patient vitals, diagnoses, medications, prescriptions, immunizations and allergies.

While in the DataMarket, you can browse over 70 other trusted commercial and premium public domain datasets to potentially include in your entry. You can also visit www.practicefusion.com/research to learn more about the power of clinical data.

Judges

- Sudhir Hasbe, Sr. Product Manager, Microsoft

- Ryan Howard, CEO, Practice Fusion

- Matthew Douglass, VP of Product Development, Practice Fusion

Contact Information

healthchallenge@practicefusion.com

You can preview a simple analytic demo at Practice Fusion’s Prescription Index: Top 20 Prescriptions by Specialty page.

<Return to section navigation list>

Windows Azure AppFabric: Access Control and Service Bus

Vittorio Bertocci (@vibronet) reported New WIF Runtime EULA Allows for Redistribution! And Since I’m at it, Let’s Talk Windows Azure Startup Tasks in a 1/13/2011 post (images are missing due to MSDN blogs being down for maintenance):

Back in December one pretty important piece of news didn’t get as much air time as it would have deserved: we recently changed the WIF SDK EULA so that you can now freely (and happily) include & redistribute the WIF runtime (& samples) with your applications. Isn’t that awesome?

This came back to mind as I was presenting FabrikamShipping SaaS to some internal folks yesterday. As you recall, Monday we released the Windows Azure SDK 1.3-compliant version of the source code, where we take advantage of some of the cool new features of the platform.

One such new feature is the chance of telling Windows Azure to run some startup tasks as it brings instances to life, typically for preparing the execution environment, installing software your application needs, and so on. Can you already see where I am going with this?

From the very first version of the WIF on Windows Azure guide, one of the first problems we had to solve was to make the WIF runtime available to applications running in a Windows Azure role. The solution so far has been to set the copy local property of the WIF assembly reference to true, so that it would end up in the application package: but now that you have Startup Tasks in Windows Azure, you also have the option of installing the WIF runtime! That’s pretty neat, uh?

<Return to section navigation list>

Windows Azure Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Scott Densmore announced Windows Azure & Windows Phone 7 Hands On Labs Available on 1/13/2011:

After all the work we have done to ship the last 3 guides, we decided to go the extra mile and create some Hands On Labs (HOL) for each of the guides. These HOLs will guide you through development of the key features of the apps distributed with the books. Go get them today and learn even more about building Windows Azure and Windows Phone 7 applications.

- Moving Applications to the Cloud

- Developing Applications for the Cloud

- Windows Phone 7 Developer Guide

Feedback always welcome.

Note:

These HOLs are using the Windows Azure 1.2 SDK. We are working on updating the content and HOLs to the Windows Azure 1.3 SDK. These will be available on CodePlex as soon as we get them done. [Emphasis added.]

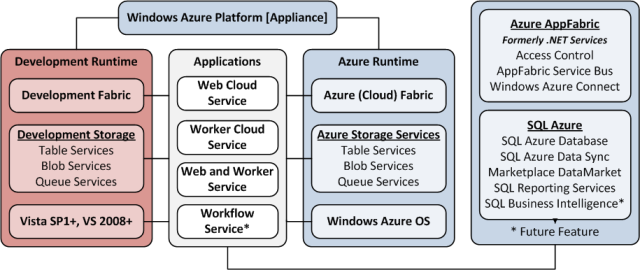

Manu Cohen-Yashur and David S. Platt started a Channel9 Windows Azure Jump Start (#) series with Windows Azure Jump Start (01): Windows Azure Overview on 1/12/2011:

Building Cloud Applications with the Windows Azure Platform

This session provides an engaging overview of why the Cloud is such a popular choice for applications, and how the Windows Azure Platform is the best alternative for you and your team.

The Windows Azure Jump Start video series is for all architects and developers interested in designing, developing and delivering cloud-based applications leveraging the Windows Azure Platform. The overall target of this course is to help development teams make the right decisions with regard to cloud technology, the Azure environment and application lifecycle, Storage options, Diagnostics, Security and Scalability. The course is based on the Windows Azure Platform Training Course and taught by Microsoft Press authors, Manu Cohen-Yashur and David S. Platt.

As you’re enjoying the videos, you can access the content for this class, student files and links to demo code at the Windows Azure Born To Learn Forum.

Access to Windows Azure for the labs:

● Free Windows Azure Platform 30-day pass for US-based students (use promo code: MSL001)

● MSDN Subscribers: http://msdn.microsoft.com/subscriptions/ee461076.aspx

● Free access for CPLS, Partners: https://partner.microsoft.com/40118760

● Options for all others: http://www.microsoft.com/windowsazure/offers

Learn more about the Windows Azure Platform through training and certification options from Microsoft Learning.

Following are links to all 12 current members of the series:

Session 01: Windows Azure Overview

Session 02: Introduction to Compute

Session 03: Windows Azure Lifecycle, Part 1

Session 04: Windows Azure Lifecycle, Part 2

Session 05: Windows Azure Storage, Part 1

Session 06: Windows Azure Storage, Part 2

Session 07: Introduction to SQL Azure

Session 08: Windows Azure Diagnostics

Session 09: Windows Azure Security, Part 1

Session 10: Windows Azure Security, Part 2

Session 11: Scalability, Caching & Elasticity, Part 1

Session 12: Scalability, Caching & Elasticity, Part 2, and Q&A

David Aiken (@TheDavidAiken) described how to Enable PowerShell Remoting on Windows Azure in a 1/12/2011 post:

If it takes you more than 1 line of code, you aren’t doing it right!

I started writing this post a week ago in response to a customer request, so excited I was I even tweeted about writing a post on PowerShell. My Bad – because right after I tweeted I hit a snag – which is worthy of a whole post on its own. Ignoring the snag right now – let me tell you how to do the above.

First – a BIG shout out to the PowerShell team at Microsoft who answered my endless questions. Also a big shout out to Lee Holmes – who once again saved my bacon.

Anyway…

At long last I’ve had a chance to “play” around with the new Windows Azure features we announced at PDC 2010. I thought it would be fun to enable PowerShell Remoting in Windows Azure Roles. (Note I’m talking about Web and Worker roles here – not VM Role).

With new features such as remote desktop, startup tasks and Azure Connect – setting up PowerShell should be easy.

First, I’m going to assume you have worked through the notes/tutorials/stuff to enable Azure Connect & Remote Desktop – that way this post stays within the realms of being relatively small.

Here is our checklist:

- Make sure the OS Family in the ServiceConfiguration.cscfg is set to “2” to enable R2.

- Create a user account so you can connect to the server.

- Add a startup task to open the firewall port.

- Add the Role to Azure Connect.

- Execute Lee’s magic script to enable PowerShell Remoting

PowerShell v2 is the version you need to do remoting. Server 2008 R2 contains PowerShell v2 in the box. We can tell Windows Azure to use an R2 server by changing the osFamily in the ServiceConfiguration.cscfg to 2 as shown below:

<ServiceConfiguration serviceName="AzureMemcachedTest" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration" osFamily="2" osVersion="*">

The next step is to create a user account so that you can actually connect to the server. The easiest way to do this is to enable remote desktop, which creates a user on the host.

Now, to make sure the firewall port is open, we will need a startup task. I created a .cmd file containing the following two commands. The first opens the firewall for WinRM, the second for ping. I added this file to the root folder of my role project.

netsh advfirewall firewall add rule name="Windows Remote Management (HTTP-In)" dir=in action=allow service=any enable=yes profile=any localport=5985 protocol=tcp

netsh advfirewall firewall add rule name="ICMPv6 echo" dir=in action=allow enable=yes protocol=icmpv6:128,any

Then I added a startup task to ServiceDefinition.csdef:

<Startup>

<Task commandLine="EnablePowershellRemoting.cmd" executionContext="elevated" taskType="foreground"/>

</Startup>

Next add the Role to Azure Connect. I’ll assume you know how to do this.
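Before deploying, it can help to sanity-check the two configuration changes from the checklist. The following Python sketch is only an illustration: it parses sample XML with the standard library and checks for osFamily="2" and an elevated startup task. The function names are hypothetical, and the closing tag, the ServiceDefinition wrapper and the role name "WorkerRole1" are assumptions added so that the samples are well-formed.

```python
import xml.etree.ElementTree as ET

# The ServiceConfiguration attributes and the Task element come from the
# post; everything else in these samples is an assumption for illustration.
CSCFG = """<ServiceConfiguration serviceName="AzureMemcachedTest"
    xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceConfiguration"
    osFamily="2" osVersion="*">
</ServiceConfiguration>"""

CSDEF = """<ServiceDefinition name="AzureMemcachedTest">
  <WorkerRole name="WorkerRole1">
    <Startup>
      <Task commandLine="EnablePowershellRemoting.cmd"
            executionContext="elevated" taskType="foreground"/>
    </Startup>
  </WorkerRole>
</ServiceDefinition>"""


def local_name(tag):
    """Strip an optional '{namespace}' prefix from an element tag."""
    return tag.rsplit("}", 1)[-1]


def uses_os_family_2(cscfg_xml):
    """True if the root ServiceConfiguration element has osFamily="2"."""
    root = ET.fromstring(cscfg_xml)
    return (local_name(root.tag) == "ServiceConfiguration"
            and root.get("osFamily") == "2")


def elevated_startup_tasks(csdef_xml):
    """Return the command lines of all startup tasks that run elevated."""
    root = ET.fromstring(csdef_xml)
    return [task.get("commandLine")
            for task in root.iter()
            if local_name(task.tag) == "Task"
            and task.get("executionContext") == "elevated"]


if __name__ == "__main__":
    print("osFamily is 2:", uses_os_family_2(CSCFG))
    print("elevated tasks:", elevated_startup_tasks(CSDEF))
```

The check ignores XML namespaces on purpose, so it works whether or not the files declare them.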

Now deploy your service. Once deployed, do the final step to connect the Azure connect network, and make sure the agent is installed on your computer.

The final step is to “turn on” PowerShell Remoting. For numerous reasons, you cannot just run a startup task with “Enable-PSRemoting” as the command. The biggest reason is that startup tasks run as local system and thus cannot actually complete the Enable-PSRemoting command.

This is where I got stuck for 3 days until Lee shared his script. The script is below and is fairly easy to follow. Basically it will connect to the VM and create a scheduled task to enable-psremoting.

##############################################################################

## Enable-RemotePsRemoting

##

## From Windows PowerShell Cookbook (O'Reilly)

## by Lee Holmes (http://www.leeholmes.com/guide)

##############################################################################

<#

.SYNOPSIS

Enables PowerShell Remoting on a remote computer. Requires that the machine

responds to WMI requests, and that its operating system is Windows Vista or

later.

.EXAMPLE

Enable-RemotePsRemoting <Computer>

#>

param(

## The computer on which to enable remoting

$Computername,

## The credential to use when connecting

$Credential = (Get-Credential)

)

Set-StrictMode -Version Latest

$VerbosePreference = "Continue"

$credential = Get-Credential $credential

$username = $credential.Username

$password = $credential.GetNetworkCredential().Password

$script = @"

`$log = Join-Path `$env:TEMP Enable-RemotePsRemoting.output.txt

Remove-Item -Force `$log -ErrorAction SilentlyContinue

Start-Transcript -Path `$log

## Create a task that will run with full network privileges.

## In this task, we call Enable-PsRemoting

schtasks /CREATE /TN 'Enable Remoting' /SC WEEKLY /RL HIGHEST ``

/RU $username /RP $password ``

/TR "powershell -noprofile -command Enable-PsRemoting -Force" /F |

Out-String

schtasks /RUN /TN 'Enable Remoting' | Out-String

`$securePass = ConvertTo-SecureString $password -AsPlainText -Force

`$credential =

New-Object Management.Automation.PsCredential $username,`$securepass

## Wait for the remoting changes to come into effect

for(`$count = 1; `$count -le 10; `$count++)

{

`$output = Invoke-Command localhost { 1 } -Cred `$credential ``

-ErrorAction SilentlyContinue

if(`$output -eq 1) { break; }

"Attempt `$count : Not ready yet."

Sleep 5

}

## Delete the temporary task

schtasks /DELETE /TN 'Enable Remoting' /F | Out-String

Stop-Transcript

"@

$commandBytes = [System.Text.Encoding]::Unicode.GetBytes($script)

$encoded = [Convert]::ToBase64String($commandBytes)

Write-Verbose "Configuring $computername"

$command = "powershell -NoProfile -EncodedCommand $encoded"

$null = Invoke-WmiMethod -Computer $computername -Credential $credential `

Win32_Process Create -Args $command

Write-Verbose "Testing connection"

Invoke-Command $computername {

Get-WmiObject Win32_ComputerSystem } -Credential $credential

Once it finishes executing, you should be able to connect using:

PS> Enter-PSSession -ComputerName $computername -Credential RemoteDesktopUsername

Then you can work interactively – try get-process as an example.

Pretty neat, and great for debugging!
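One detail of Lee's script that is easy to miss is how the remote command is packaged: the script text is converted to bytes with `[System.Text.Encoding]::Unicode` (UTF-16 little-endian), Base64-encoded, and handed to `powershell -EncodedCommand`. The Python sketch below reproduces just that encoding step so you can inspect or build such a payload from another machine. The function names are hypothetical.

```python
import base64


def encode_powershell_command(script):
    """Base64-encode script text as UTF-16LE, as -EncodedCommand expects."""
    return base64.b64encode(script.encode("utf-16-le")).decode("ascii")


def decode_powershell_command(encoded):
    """Reverse of encode_powershell_command, handy for inspecting payloads."""
    return base64.b64decode(encoded).decode("utf-16-le")


def build_command_line(script):
    """Build the same command-line shape the script passes to Win32_Process."""
    return ("powershell -NoProfile -EncodedCommand "
            + encode_powershell_command(script))


if __name__ == "__main__":
    line = build_command_line("Enable-PsRemoting -Force")
    print(line)
    print(decode_powershell_command(line.split()[-1]))
```

Encoding the script this way avoids quoting problems when a multi-line script has to travel through a single command-line argument.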

THIS POSTING IS PROVIDED “AS IS” WITH NO WARRANTIES, AND CONFERS NO RIGHTS

The MSDN Library updated its Managing Management Certificates for the Windows Azure Platform topic on 1/10/2011:

Management certificates permit client access to resources in your Windows Azure subscription. Management certificates are X.509 certificates that are saved as a .cer file and uploaded to Windows Azure. They are stored at the subscription level, as opposed to service certificates, which are stored with a specific hosted service.

Common uses of management certificates

- The CSUpload Command-Line Tool uses management certificates for authentication when deploying VM role images. For more information on using CSUpload to deploy VM role images, see How to Deploy an Image to Windows Azure.

- Requests made using the Windows Azure Service Management REST API require authentication against a certificate that you provide to Windows Azure; see Authenticating Service Management Requests for details. Using the Windows Azure Tools for Microsoft Visual Studio to deploy a service or view storage account data similarly requires authentication against a management certificate. You must upload a management certificate to Windows Azure using the Windows Azure Platform Management Portal.

- Windows Azure Tools for Microsoft Visual Studio use management certificates to authenticate a user to create and manage your deployments. For more information on using the Visual Studio tools to deploy applications, see Deploying the Windows Azure Application from Visual Studio.

See Also: Concepts

Managing Service Certificates

<Return to section navigation list>

Visual Studio LightSwitch

Karol Zadora-Przylecki posted Using Custom Controls to Enhance Your LightSwitch Application UI - Part 1 on 1/13/2011 (screen shots are missing because the MSDN blogs were down for maintenance when this item was being written):

Visual Studio LightSwitch provides a set of standard UI controls for displaying application data. These controls include common Windows UI elements like text boxes, as well as controls tailored for data entry and editing tasks (like address viewer or data grid). Many applications can be built with only standard LightSwitch controls, but there are applications that require more advanced visualizations (e.g. charts or maps), or just have specific requirements that are not covered by the standard control set. One possibility to address these requirements is to use a non-standard LightSwitch control. LightSwitch provides extensibility points for 3rd party control vendors to extend the set of controls available inside the IDE. From the application development perspective they behave just like built-in controls: they show up in the same places in the IDE and their general working is no different from standard controls, so using them is very easy. The downside is that if a 3rd party control that satisfies the given scenario is not available, implementing one might be too difficult or labor-intensive a task for a typical LightSwitch user. Fortunately there is an easier way: LightSwitch applications can use Silverlight “custom” controls directly, and re-using or authoring custom controls is much easier.

Custom controls defined

LightSwitch client application uses Silverlight framework as the foundation to build upon. LightSwitch controls are at the core just Silverlight controls, but they are enhanced with information and functionality that makes it possible for LightSwitch runtime to relieve the developer from many routine tasks associated with UI data binding, UI layout and command enablement. A custom control is a regular Silverlight control that is part of LightSwitch application UI (a screen). The main difference between LightSwitch controls and custom controls is that a custom control does not have LightSwitch-specific information associated with it. Therefore LightSwitch treats it as a “black box” and it is up to the developer to specify what data the control should display (data-bind the control to the screen) and to handle any events the control might raise. There are two possibilities here:

- The control might be built for a particular screen or entity (with intimate knowledge of the members of some screen or entity). For example, if we are building a custom control to display Customer data in a visually-rich way, we might explicitly bind parts of the control to Customer properties such as Name and Address. The advantage of this approach is that using this control will require little or no code, but obviously it cannot be used to display any other piece of data other than a Customer, so we lose some flexibility and reuse opportunities.

- The control might have no knowledge of the data it will display or the screen it will use—all data binding and interaction with the control can be specified in screen code. With this approach the control can be reused across different screens and applications. The drawbacks include the fact that there is more code to write and furthermore, the screen code targets a specific control which makes the screen harder to modify down the road. This goes against the notion of screen code being pure business logic.

In practice both of these two approaches can be used, even for a single control, and it is up to the developer to decide which one is more advantageous, given unique application requirements. An example will make things clearer, but before we jump into it, we need to learn how exactly custom controls show up on a screen.

Screen content tree and (custom) controls

A screen in a LightSwitch application is built of three elements:

- Screen members are what you see on the left in the screen designer inside LightSwitch IDE. They are the data the screen is operating on. Screen members can include collections of entities, single entities and scalar values. They can also include commands (both built-in and user-defined)

- Screen content tree defines the visual layout of the screen. It determines what is shown on the screen and how the information is visually arranged. Content tree consists of content items and it is shown on the right side of the screen designer. Some content items are used just for layout, but most are there to show a specific piece of screen data. In other words they are bound to a piece of data, or have a data binding. Content items can also have an associated control (visual) that will be used to visualize the item when the application is running.

- Screens can also have user code, which can be used to customize screen behavior programmatically and implement business logic. Screen code can be shown by clicking “Write Code” button in screen designer toolbar.

The screenshot below shows design view of a screen called ShipperListDetail.

[Missing]

Note how screen designer shows the associated control and the data binding for content items that have them. If you want to know more how content tree, controls and screen members work together please see The Anatomy of a LightSwitch Application Series Part 2 – The Presentation Tier.

So what does all this have to do with custom controls? Well, the way you add a custom control to a screen is by replacing the standard (default) control that LightSwitch assigns to a content item with a custom control. In the example above we have done it for the last control in the content tree and we will now show you how.

Example: using Rating control for showing shipper rating

Let’s say we have a database of shippers that are available to ship goods from our manufacturing facility to various parts of the country. For now we will focus only on three pieces of information: shipper’s name, phone number and rating. Open Visual Studio, create a new LightSwitch project (you can call it “CustomControls”) and add a Shippers entity:

[Missing]

Also, our business rules state that shipper rating, if known, must be a number between 1 and 5, so click the Rating column in the table designer, go to the Properties window, find the “Custom Validation” link at the bottom of the property sheet for the Rating column and add the following code (only the body of the Rating_Validate method needs to be modified):

C#

public partial class Shipper

{

partial void Rating_Validate(EntityValidationResultsBuilder results)

{

if (this.Rating.HasValue)

{

if (this.Rating < 1 || this.Rating > 5)

{

results.AddPropertyError("Shipper rating (if known) must be between 1 and 5");

}

}

}

}

VB

Public Class Shipper

Private Sub Rating_Validate(ByVal results As EntityValidationResultsBuilder)

If Me.Rating.HasValue Then

If Me.Rating < 1 Or Me.Rating > 5 Then

results.AddPropertyError("Shipper rating (if known) must be between 1 and 5")

End If

End If

End Sub

End Class

Next create a List and Details screen for the Shippers entity, including details for the entity on the screen. Your screen should look like this in the designer:

[Missing]

Now we are ready to create the custom control to display the rating. We will use the Rating control from Silverlight toolkit, so if you do not have the toolkit installed yet, you can get it from http://silverlight.codeplex.com/. After you have the toolkit installed, right-click the solution node in Solution Explorer and choose Add | New Project. Choose Silverlight Class Library project and name it RatingControlWrapper. Choose Silverlight 4 as the target Silverlight version and delete the Class1 class automatically created as part of the project.

Note: you won’t be able to complete this portion of the example (creating the custom control wrapper) if you have only Visual Studio LightSwitch Beta 1 installed on your machine. You need both Visual Studio Professional (or higher SKU) and Visual Studio LightSwitch. This is because Silverlight class library projects are not supported by Visual Studio LightSwitch alone. Later on I will show you how to use the Rating control directly and set up control binding from LightSwitch code; that does not require anything other than Visual Studio LightSwitch. Also, this portion of the example assumes familiarity with Silverlight user controls and XAML; for more information about these topics see Getting Started with Controls in the Silverlight documentation.

After the project is created, right-click the project node and choose Add | New Item. Choose a Silverlight User Control item type and name the new control RatingControlWrapper. After the control is created, add a reference to the System.Windows.Controls.Input.Toolkit assembly. You will find it under the directory where the Silverlight toolkit is installed; on my machine it was “C:\Program Files (x86)\Microsoft SDKs\Silverlight\v4.0\Toolkit\Apr10\Bin”. Next open the RatingControlWrapper.xaml file in XAML view, add namespace declarations for the local and toolkit namespaces and finally add the Rating control itself to the content of our wrapper control. You should end up with a XAML file that has the content shown below; we have highlighted the portions of the control XAML that we have changed. Note that we have replaced the default Grid layout for the content with a simpler StackPanel; this will come in handy later.

RatingControlWrapper.xaml

<UserControl x:Class="RatingControlWrapper.RatingControlWrapper"

xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"

xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"

xmlns:d="http://schemas.microsoft.com/expression/blend/2008"

xmlns:mc="http://schemas.openxmlformats.org/markup-compatibility/2006"

xmlns:inputToolkit="clr-namespace:System.Windows.Controls;assembly=System.Windows.Controls.Input.Toolkit"

xmlns:local="clr-namespace:RatingControlWrapper"

mc:Ignorable="d"

d:DesignHeight="25" d:DesignWidth="300">

<StackPanel Orientation="Horizontal">

<inputToolkit:Rating ItemCount="5" HorizontalAlignment="Left" SelectionMode="Continuous" x:Name="RatingControl"

Value="{Binding Path=Screen.ShipperCollection.SelectedItem.Rating, Mode=TwoWay}">

</inputToolkit:Rating>

</StackPanel>

</UserControl>

The most interesting portion of this code is the data binding specification: it binds the Rating control’s Value property (which controls how many rating “stars” the user sees, i.e. depicts the rating) to the Screen.ShipperCollection.SelectedItem.Rating property. The TwoWay mode means that whenever one side of the binding changes, the other side will be updated. The default binding mode in Silverlight is OneWay, which means that the UI reflects (screen) data, but changes in the UI do not affect the underlying data. We want the user to be able not only to see the rating, but also to change the rating by clicking the control, giving a shipper the desired number of “stars”, so we use TwoWay.
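If the difference between the two binding modes is unfamiliar, the following Python sketch models it with a tiny observer pattern. It is not Silverlight code: the `Observable` class and the `bind` function are hypothetical names, and real bindings also deal with converters, validation and change notification interfaces that are left out here.

```python
class Observable:
    """A value holder that notifies listeners when the value changes."""

    def __init__(self, value=None):
        self._value = value
        self._listeners = []

    @property
    def value(self):
        return self._value

    @value.setter
    def value(self, new_value):
        if new_value == self._value:
            return  # nothing changed; also stops two-way updates from looping
        self._value = new_value
        for listener in list(self._listeners):
            listener(new_value)

    def subscribe(self, listener):
        self._listeners.append(listener)


def bind(source, target, two_way=False):
    """Push source changes to target; with two_way, push target changes back."""
    target.value = source.value
    source.subscribe(lambda v: setattr(target, "value", v))
    if two_way:
        target.subscribe(lambda v: setattr(source, "value", v))


if __name__ == "__main__":
    rating_data = Observable(3)   # stands in for the Shipper rating
    rating_ui = Observable()      # stands in for the control's Value
    bind(rating_data, rating_ui, two_way=True)
    rating_ui.value = 5           # the user clicks the fifth star
    print(rating_data.value)
```

With `two_way=False`, the same click would change only the control, and the underlying data would keep its old value.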

Now we can switch to our main project and replace the textbox that is used for the Shipper.Rating property with our custom control. Right-click the RatingControlWrapper project in the Solution Explorer and choose “Build”—it should build without errors. Open ShipperListDetail screen from our main application in the designer, select the Rating content item and open the control selection dropdown:

[Missing]

Choose “Custom Control” here. Switch to the Properties window, scroll to the Custom Control property and click the Change link; the Add Custom Control window appears.

[Missing]

Click “Add Reference” button, switch to Project tab and select RatingControlWrapper project. Hit OK to add project reference—you should now see the RatingControlWrapper assembly in the Add Custom Control dialog (see screenshot above). Expand the RatingControlWrapper namespace, select the RatingControlWrapper control and hit OK.

Handling data conversions and null values

At this point you could try to run the application and start adding some shippers. Our rating UI shows up, but does not quite work as expected—it seems like the only rating that sticks is five stars. Also there is no obvious way to clear the rating either. How can we fix this?

The first problem stems from the fact that the Value property of the Rating control is a floating-point number and the Shippers.Rating column is an integer. We could store floating-point numbers instead of integers for our rating; otherwise we need to convert the Shipper’s rating of 1, 2, 3, 4 or 5 into the 0.0 to 1.0 range that the Rating control can work with. Fortunately Silverlight has the concept of a value converter that is designed for just that. So let’s create a value converter for our control wrapper. Add a new class to the RatingControlWrapper project and name it Int2DoubleConverter. Then change the class code to this:

C#

using System;

using System.Diagnostics;

using System.Windows.Data;

namespace RatingControlWrapper

{

public class Int2DoubleConverter: IValueConverter

{

public object Convert(object value, Type targetType, object parameter, System.Globalization.CultureInfo culture)

{

if (value == null) return null;

double retval = System.Convert.ToDouble(value);

double scalingFactor;

if (double.TryParse(parameter as string, out scalingFactor))

retval /= scalingFactor;

return retval;

}

public object ConvertBack(object value, Type targetType, object parameter, System.Globalization.CultureInfo culture)

{

if (value == null) return null;

double retval = (double) value;

double scalingFactor;

if (double.TryParse(parameter as string, out scalingFactor))

retval *= scalingFactor;

return System.Convert.ToInt32(retval);

}

}

}

VB

Imports System.Diagnostics

Imports System.Windows.Data

Public Class Int2DoubleConverter

Implements IValueConverter

Public Function Convert(ByVal value As Object, ByVal targetType As Type, ByVal parameter As Object, ByVal culture As System.Globalization.CultureInfo) As Object _

Implements IValueConverter.Convert

If value Is Nothing Then

Return Nothing

End If

Dim retval As Double = System.Convert.ToDouble(value)

Dim scalingFactor As Double

If Double.TryParse(CStr(parameter), scalingFactor) Then

retval /= scalingFactor

End If

Return retval

End Function

Public Function ConvertBack(ByVal value As Object, ByVal targetType As Type, ByVal parameter As Object, ByVal culture As System.Globalization.CultureInfo) As Object _

Implements IValueConverter.ConvertBack

If value Is Nothing Then

Return Nothing

End If

Dim retval As Double = CDbl(value)

Dim scalingFactor As Double

If Double.TryParse(CStr(parameter), scalingFactor) Then

retval *= scalingFactor

End If

Return System.Convert.ToInt32(retval)

End Function

End Class

To make the converter work for various number ranges we are going to use a parameter (scaling factor). In our case the maximum rating is 5 and we will use this value as the scaling factor. Now open the RatingControlWrapper control in the designer and add the converter information to the binding:

RatingControlWrapper.xaml

<UserControl x:Class="RatingControlWrapper.RatingControlWrapper"

xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"

xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"

xmlns:d="http://schemas.microsoft.com/expression/blend/2008"

xmlns:mc="http://schemas.openxmlformats.org/markup-compatibility/2006"

xmlns:inputToolkit="clr-namespace:System.Windows.Controls;assembly=System.Windows.Controls.Input.Toolkit"

xmlns:local="clr-namespace:RatingControlWrapper"

mc:Ignorable="d"

d:DesignHeight="25" d:DesignWidth="300">

<UserControl.Resources>

<local:Int2DoubleConverter x:Key="I2DConverter" />

</UserControl.Resources>

<StackPanel Orientation="Horizontal">

<inputToolkit:Rating x:Name="RatingControl" ItemCount="5" HorizontalAlignment="Left" SelectionMode="Continuous"

Value="{Binding Path=Screen.ShipperCollection.SelectedItem.Rating, Mode=TwoWay,

Converter={StaticResource I2DConverter}, ConverterParameter=5 }">

</inputToolkit:Rating>

</StackPanel>

</UserControl>

Changing a shipper’s rating to unknown requires setting the underlying property to null, but the Rating control does not have this capability built-in. We can add it by using a link label and a bit of code. Add the HyperlinkButton element to RatingControlWrapper.xaml:

RatingControlWrapper.xaml

<UserControl x:Class="RatingControlWrapper.RatingControlWrapper"
    xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
    xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
    xmlns:d="http://schemas.microsoft.com/expression/blend/2008"
    xmlns:mc="http://schemas.openxmlformats.org/markup-compatibility/2006"
    xmlns:inputToolkit="clr-namespace:System.Windows.Controls;assembly=System.Windows.Controls.Input.Toolkit"
    xmlns:local="clr-namespace:RatingControlWrapper"
    mc:Ignorable="d"
    d:DesignHeight="25" d:DesignWidth="300">
    <UserControl.Resources>
        <local:Int2DoubleConverter x:Key="I2DConverter" />
    </UserControl.Resources>
    <StackPanel Orientation="Horizontal">
        <inputToolkit:Rating x:Name="RatingControl" ItemCount="5" HorizontalAlignment="Left" SelectionMode="Continuous"
            Value="{Binding Path=Screen.ShipperCollection.SelectedItem.Rating, Mode=TwoWay,
                Converter={StaticResource I2DConverter}, ConverterParameter=5 }">
        </inputToolkit:Rating>
        <HyperlinkButton Content="Clear" x:Name="hyperlinkButton1" Padding="4,0,0,0"
            VerticalContentAlignment="Center" Width="50" Click="OnClearAction" />
    </StackPanel>
</UserControl>

Double-click OnClearAction in the XAML editor; this should open the code-behind file for the control (RatingControlWrapper.xaml.cs or RatingControlWrapper.xaml.vb, depending on the language you use). Change the OnClearAction method body to:

C#

private void OnClearAction(object sender, RoutedEventArgs e)
{
    // Clear the control's value so the rating reads as unknown.
    this.RatingControl.Value = null;
}

VB

Private Sub OnClearAction(ByVal sender As System.Object, ByVal e As System.Windows.RoutedEventArgs)
    ' Clear the control's value so the rating reads as unknown.
    Me.RatingControl.Value = Nothing
End Sub

That is it. Now you can launch the application, and it works as expected.

[Missing]

Setting up data binding via code

Our application works, but the data binding for our control is hard-coded into the control definition. If the screen members change and the data binding path becomes invalid, the application will no longer work. I will now show you how to specify data binding for custom controls in screen code. In this way each screen can data-bind to the control in its own way, so you can reuse the control with multiple screens.

First, open the control definition again and remove the whole Value property binding. You can also delete the Int2DoubleConverter declaration from the control resources. On the other hand, we want to expose the inner Rating control from our wrapper so that we can set a binding on it, so we’ll add the x:FieldModifier attribute to the control declaration. The XAML should now look like this:

RatingControlWrapper.xaml

<UserControl x:Class="RatingControlWrapper.RatingControlWrapper"
    xmlns="http://schemas.microsoft.com/winfx/2006/xaml/presentation"
    xmlns:x="http://schemas.microsoft.com/winfx/2006/xaml"
    xmlns:d="http://schemas.microsoft.com/expression/blend/2008"
    xmlns:mc="http://schemas.openxmlformats.org/markup-compatibility/2006"
    xmlns:inputToolkit="clr-namespace:System.Windows.Controls;assembly=System.Windows.Controls.Input.Toolkit"
    xmlns:local="clr-namespace:RatingControlWrapper"
    mc:Ignorable="d"
    d:DesignHeight="25" d:DesignWidth="300">
    <StackPanel Orientation="Horizontal">
        <inputToolkit:Rating x:Name="RatingControl" x:FieldModifier="public" ItemCount="5" HorizontalAlignment="Left" SelectionMode="Continuous" />
        <HyperlinkButton Content="Clear" x:Name="hyperlinkButton1" Padding="4,0,0,0" VerticalContentAlignment="Center" Width="50" Click="OnClearAction" />
    </StackPanel>
</UserControl>

Next, open the ShipperListDetails screen and override the (screen)_Loaded method (in VB you will also have to add a reference to the System.Windows.Controls.Input.Toolkit assembly to the client project). The Loaded method should look like this (including the necessary namespace imports, which you should put at the top of the file):

C#

using Microsoft.LightSwitch;
using System.Windows.Controls;
using System.Windows.Data;
using System.Diagnostics;
using RatingControlWrapper;

partial void ShipperListDetail_Loaded()
{
    // Look up the content item proxy by the name set in the screen designer.
    IContentItemProxy proxy = this.FindControl("RatingControl");
    Debug.Assert(proxy != null);
    if (proxy == null) return;

    // The delegate passed to Invoke works with the actual Silverlight control.
    proxy.Invoke(() =>
    {
        var rw = proxy.Control as RatingControlWrapper.RatingControlWrapper;
        Debug.Assert(rw != null);
        if (rw == null) return;

        // RatingControl is reachable here because of x:FieldModifier="public".
        var rc = rw.RatingControl;

        // Bind Rating.Value to the Value property of the content item.
        var b = new Binding("Value");
        b.Mode = BindingMode.TwoWay;
        b.Converter = new Int2DoubleConverter();
        b.ConverterParameter = "5";
        rc.SetBinding(Rating.ValueProperty, b);
    });
}

VB

Imports System.Diagnostics
Imports System.Windows.Data
Imports System.Windows.Controls
Imports Microsoft.LightSwitch
Imports RatingControlWrapper

Private Sub ShipperListDetail_Loaded()
    ' Look up the content item proxy by the name set in the screen designer.
    Dim proxy As IContentItemProxy = Me.FindControl("RatingControl")
    Debug.Assert(proxy IsNot Nothing)
    If proxy Is Nothing Then
        Return
    End If

    ' The delegate passed to Invoke works with the actual Silverlight control.
    proxy.Invoke(Sub()
                     Dim rw = TryCast(proxy.Control, RatingControlWrapper.RatingControlWrapper)
                     Debug.Assert(rw IsNot Nothing)
                     If rw Is Nothing Then
                         Return
                     End If

                     ' RatingControl is reachable here because of x:FieldModifier="public".
                     Dim rc = rw.RatingControl

                     ' Bind Rating.Value to the Value property of the content item.
                     Dim b = New Binding("Value")
                     b.Mode = BindingMode.TwoWay
                     b.Converter = New Int2DoubleConverter()
                     b.ConverterParameter = "5"
                     rc.SetBinding(Rating.ValueProperty, b)
                 End Sub)
End Sub

For now let’s ignore the portion of the code that deals with finding controls and control proxies and focus on the last five statements, where the data binding is set up. The binding mode and value converter settings look very similar to the previous example, but where is the binding path?

Well, this is a different way to accomplish the same goal. Remember that the data context for a control is its content item. The binding specification here binds the Rating control’s Value property to the content item’s Value property. The content item does not just expose screen data; it has several properties that aid the UI layer (controls) in providing the best possible data editing experience. For example

- DisplayName property is used for showing a caption

- Description property can be used for a helpful tooltip

- IsProcessing property indicates whether underlying data is available or still being loaded from the database

- DataError property contains error information if the data load fails

- (there is more)

The full list of properties exposed by content items is beyond the scope of this post, but for our purposes the Value property is the most important one and is all we need: it returns the underlying piece of screen data that the content item represents. In our case the content item represents the Shipper.Rating property, so Rating.Value will be bound to Shipper.Rating, which is exactly what we want. The only thing remaining is to make sure that the control has its Name property set to “RatingControl” in the LightSwitch screen designer; otherwise our code won’t work.

[Missing]

Run the application and verify that it still behaves properly.
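As a quick sanity check of the scaling used in this walkthrough, here is a minimal C# sketch of the arithmetic, with no Silverlight types involved. RatingScaling and its two methods are hypothetical names that do not appear in the original sample. The sketch assumes that the Rating control's Value runs from 0 to 1, that the converter divides by the scaling factor on the way to the control, and that null passes through unchanged. Only the multiply-then-Convert.ToInt32 step is taken from the converter code shown earlier.

```csharp
using System;

// Hypothetical helper, not part of the original sample: it mirrors the
// scaling that Int2DoubleConverter is described as performing.
public static class RatingScaling
{
    // Entity value (for example 0..5) -> control value (assumed 0.0..1.0).
    // Assumption: a null rating maps to a null control value.
    public static double? ToControlValue(int? rating, double scalingFactor)
    {
        if (rating == null) return null;
        return rating.Value / scalingFactor;
    }

    // Control value -> entity value: multiply by the scaling factor and
    // convert with Convert.ToInt32, as the converter tail shown earlier does.
    public static int? ToEntityValue(double? controlValue, double scalingFactor)
    {
        if (controlValue == null) return null;
        // Convert.ToInt32 rounds to the nearest integer; exact midpoints go to the even one.
        return Convert.ToInt32(controlValue.Value * scalingFactor);
    }

    public static void Main()
    {
        Console.WriteLine(ToEntityValue(0.6, 5));           // 3
        Console.WriteLine(ToEntityValue(0.5, 5));           // 2 (2.5 rounds to even)
        Console.WriteLine(ToEntityValue(null, 5) == null);  // True
    }
}
```

With a scaling factor of 5, a stored rating of 3 corresponds to a control value of 3/5, and a control value of exactly 0.5 comes back as 2 rather than 3 because of the midpoint rounding.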

Summary

In this post we have seen how you can use custom Silverlight controls to enhance the UI of LightSwitch applications. We have learned how custom controls plug into the screen content tree and how to bind them to screen data, both from XAML and from code. We also used a value converter to overcome the type mismatch between control property types and entity member types, and added a control gesture to set the underlying data to null. In the second part of this post we will talk about how the LightSwitch runtime uses threads and what implications this has for controls and screen code. We will also cover some ways of making the screen and the control work together (interact), and we will show you how to make custom controls work with hierarchical data.

The SQL Server Team reported Microsoft SQL Server Compact 4.0 is available for download and use with WebMatrix on 1/13/2011. It sounds to me like a good candidate for a LightSwitch back end option in Beta 2:

The SQL Server Compact team is happy to announce that Microsoft SQL Server Compact 4.0, the next version of SQL Server Compact, has been released and is available for download and for use in production systems at the location below:

http://www.microsoft.com/sql/compact

SQL Server Compact 4.0 has been designed, developed, tested and tuned over the course of last year and the release has been also vigorously verified by the vibrant MVP and developer community. The feedback from the developer community has helped to improve the quality of the SQL Server Compact 4.0 release and the Compact team would like to thank all the members of the community who participated in the release.

SQL Server Compact 4.0 and WebMatrix

- Default database for Microsoft WebMatrix: Compact 4.0 is the default database for Microsoft WebMatrix, which is the web stack that encapsulates all the technologies like ASP.NET, IIS Express, Editor and SQL Server Compact that are needed to develop, test and deploy ASP.NET websites to third party website hosting providers.

- Rapid website development with free, open source web applications: Popular open source web applications like mojoPortal, Orchard, Umbraco etc. support Compact 4.0 and can be used to rapidly develop, test and deploy websites.

- One click migration to SQL Server: As the requirements grow to the level of enterprise databases, the schema and data can be migrated from Compact to SQL Server using the migrate option in the WebMatrix IDE. This also adds a web.config xml file to the project that contains the connection string for the SQL Server. Once the migration completes, the website project seamlessly switches from using Compact to SQL Server.

- ADO.NET Entity Framework 4 (.NET FX 4) code-first and server generated keys: Compact 4.0 works with the code-first programming model of the ADO.NET Entity Framework. The columns that have server generated keys, like identity and rowguid for example, are also supported in Compact 4.0 when used with ADO.NET Entity Framework 4.0. Support for the code-first and for the server-generated keys rounds out the Compact support for ADO.NET Entity Framework.

- Improvements for setup, deployment, reliability, and encryption algorithms: The bases of Compact 4.0 have been strengthened to ensure that it can be installed without any problems, and can be deployed easily, and works reliably while providing the highest level of security for data.

WebMatrix Launch

WebMatrix v1 is ready to go, and starting Thursday, January 13, you'll be able to download it from http://www.microsoft.com/webmatrix

WebMatrix makes it easy for anyone to create a new web site using a template or an existing free open source application, customize it, and then publish it on the internet via a wide choice of hosting service providers. And yes, it's free.

WebMatrix lets you create web sites the way that you want to. We've spoken with countless web developers, and have learned what they want to create the next generation of web sites.

The main launch event for WebMatrix is CodeMash on January 13th. Microsoft will simultaneously hold a live streaming event called Enter the WebMatrix, from http://microsoft.com/web/enter. This live event will be streamed as both an HD Smooth Stream and WMV for non-Silverlight users. The event starts with a one-hour keynote where WebMatrix is introduced and demo’ed. Features will be highlighted, as will partners that have built special Helpers or Web Applications available within WebMatrix.


Windows Azure Infrastructure

TechNet’s Cloud Scenario Hub published Getting Business Done with the Cloud as its Home page in January 2011:

Cloud computing is delivering new capabilities to the IT Industry. Elastic computing that expands and contracts as you require enables organizations to deploy new innovative business solutions, often at a lower cost than traditional on-premise hardware solutions.

Microsoft’s cloud offerings build on familiar, proven Windows technology and can be deployed either on-premise (“private cloud”) or as hosted services (“public cloud”). Public cloud solutions allow organizations to reduce capital costs and free up IT staff to concentrate on delivering greater business value. Private cloud solutions enable organizations to drive more efficiency and flexibility out of their existing IT investment. With the cloud, IT becomes the enabler of new business solutions and not the barrier.

Deciding if you can take advantage of cloud solutions requires answers to some basic questions. Finding those answers is not often straightforward, not to mention that no two business situations are exactly the same. The best way to make an informed decision is to compare common cloud implementation scenarios with your requirements.

This center provides resources and details on these common scenarios.

- Implementing Windows-based Applications for Burst Demand with Windows Azure

How to Manage Server Costs with Windows Azure