Windows Azure and Cloud Computing Posts for 10/8/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI,Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

•• Updated 10/11/2012 8:30 AM PDT with new articles marked ••.

• Updated 10/10/2012 10:45 AM PDT with new articles marked •.

Tip: Copy bullet(s), press Ctrl+f, paste it/them to the Find textbox and click Next to locate updated articles:

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, Hadoop and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Access Control, Caching, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hosting, Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue, Hadoop and Media Services

•• Guarav Mantri (@gmantri) continued his series with Windows Azure Media Services-Part IV (Managing Access Policies via REST API) on 10/11/2012:

In the last post about Windows Azure Media Service (Media Service), I talked about how you can manage assets using REST API. You can read that post here: http://gauravmantri.com/2012/10/10/windows-azure-media-service-part-iii-managing-assets-via-rest-api/.

In this post, I’m going to take this further and talk about how to manage access policies using REST API. This post makes extensive use of concepts covered in earlier posts so I would recommend you go through them first.

Let’s get cracking!!!

Definition

Simply put, an access policy defines the permissions and duration of access to an asset. To explain, you could create an access policy which would grant say read permission on an asset for a duration of 60 minutes.

From the definition, it may seem like you create an access policy for an asset i.e. one-to-one kind of relationship, however that’s not the case. Access policies and assets share a many-to-many kind of relationship. What that means is that you can define an access policy and apply that access policy to one or more assets. Similarly an asset can have one or more access policies. The association between them is facilitated through what is called as “Locators”. We’ll cover locators in one of the next posts but for now let’s just define it: A locator is a URI which provides time-based access to a specific asset. …

Guarav continues with tables and source code for Access Policy Entity, Operations, and the complete source code for his project so far.

…

Summary

That’s it for this post. In the next post, we will deal with more REST API functionality and expand this library. I hope you have found this information useful. As always, if you find some issues with this blog post please let me know immediately and I will fix them ASAP.

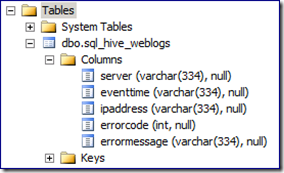

•• Denny Lee (@dennylee) described HiveODBC error message “..expected data length is 334…” in a 10/11/2012 post:

One of the odd HiveODBC error messages that I recently encountered on a project is that when I am extracting data from my Hive/Hadoop cluster using the HiveODBC driver, I end up getting an error message similar to:

OLE DB provider ‘MSDASQL’ for linked server ‘[MyHadoopCluster]‘ returned data that does not match expected data length for column ‘[MSDASQL].error_message’. The (maximum) expected data length is 334, while the returned data length is 387.

In this case, the error message was generated from connecting my SQL Server to a Mac OSX Hadoop 1.0.1 cluster running Hive 0.8.1 using the HiveODBC driver (why I insist on experimenting on my Macbook Air is a blog post for another time!). This is similar to the design as called by the SQL Server Analysis Services to Hive- A Klout Case Study. The first I thought that was curious was that if I had created a HiveODBC linked server connection from my sql server, and then ran a select * into statement,

select * from openquery(‘MyHadoopCluster’,

select * from hive_weblogs

)

then all the string columns were automatically set to varchar(334).

Digging into the data, I had in fact

hada string column where a bunch of rows had a length much greater than 334 characters. In my case, I had error messages that were potentially thousands of characters long. As reminded to me by Dave Mariani (@dmariani) and some colleagues over at Platon (thanks Stig Torngaard Hammeken and Morten Post), this in fact is a bug noted in jira Hive-3382: Strings truncated to length 334 when hive data is accessed through hive ODBC driver.So until this is fixed, what can you do about it?

- You could always using the substring function to shorten it such as substring(errormessage, 1, 334)

- When exporting data out of HiveODBC, instead of exporting out all of the columns, just export out the columns you need. Using the above sql_hive_weblogs example, I only needed the errormessage column when I needed to dig into the data, so what I did was export out the server, eventtime, ipaddress, and errorcode columns into my PowerPivot workbook/ SSAS cube / SQL database via the HiveODBC driver. When I needed the actual error message, I had the errorcode handy and then I ran a Hive query to get the actual error message.

- Related to the above approach, use SQOOP or export the table (or just the errorcode and errormessage columns) out as CSV to ultimately load that data into your final destination.

- If you do not have an errorcode handy, perhaps build a hash of the errormessage field so you can do the above two tricks. Examples of hashes in Hive include (but are not limited to) Hive MD5 UDF, Hive-1262 patch to add security/checksum UDFs, etc.

A tad frustrating at times, but in many BI-related cases, the full string isn’t required so the workarounds suggested above work fine.

Hopefully this can help you too!

• Carl Nolan (@carl_nolan) reported availability of the Framework for .Net Hadoop MapReduce Job Submission V1.0 Release in a 10/10/2012 post:

It has been a few months since I have made a change to the “Generics based Framework for .Net Hadoop MapReduce Job Submission” code. However I was going to put together a sample for a Reduce side join and came across a issue around the usage of partitioners. As such I decided to add support for custom partitioners and comparators, before stamping the release as a version 1.0.

The submission options to be added will be -partitionerOption (for partitioning the data) and -comparatorOption (for sorting). Before talking about these options lets cover a little of the Hadoop Streaming Documentation.

A Useful Partitioner Class

Hadoop has a library class, KeyFieldBasedPartitioner, that allows the MapReduce framework to partition the map outputs on key segments rather than the whole key. Consider the following job submission options:

-partitioner org.apache.hadoop.mapred.lib.KeyFieldBasedPartitioner -Dstream.num.map.output.key.fields=4 -Dmapred.text.key.partitioner.options=-k1,2The -Dstream.num.map.output.key.fields=4 option specifies that the map output keys will have 4 fields. In this case the the -Dmapred.text.key.partitioner.options=-k1,2 option tells the MapReduce framework to partition the map outputs by the first two fields of the key; rather than the full set of four keys.This guarantees that all the key/value pairs with the same first two fields in the keys will be partitioned to the same reducer.

To simplify this specification the submission framework supports the following command line options:

-numberKeys 4 -partitionerOption ”-k1,2”When writing Streaming applications one normally has to do the partition processing in the Reducer. However with these options the framework will correctly send the appropriate data to the Reducer. This was the biggest change that needed to be made to the Framework.

This partition processing is important for handling Reduce side joins; the topic of my next blog post.

A Useful Comparator Class

Hadoop also has a library class, KeyFieldBasedComparator, that provides sorting the key data. Consider the following job submission options:

-D mapred.output.key.comparator.class=org.apache.hadoop.mapred.lib.KeyFieldBasedComparator -D mapred.text.key.comparator.options=-k2,2nrHere the MapReduce framework will sort the outputs on the second key field; the -Dmapred.text.key.comparator.options=-k2,2nr option. The -n specifies that the sorting is numerical and the -r specifies that the result should be reversed. The sort options are similar to a Unix sort, but here are some simple examples:

Reverse ordering:

-Dmapred.text.key.comparator.options=-rNumeric ordering:

-Dmapred.text.key.comparator.options=-nSpecific sort specification:

-Dmapred.text.key.comparator.options=-kx,yFor the general sort specification the -k flag is used, which allows you to specify the sorting key using the the x, y values; as in the sample above.

To simplify this specification the submission framework supports the following command line option:

-comparatorOption ”-k2,2nr”The framework then takes care of setting the appropriate job configuration values.

Summary

Although one could define the Partitioner and Comparator options using the job configuration parameters hopefully these new options make the process a lot simpler. In the case of the Partitioner options it also allows the framework to easily identity the difference in the number of sorting and partitioner keys. This allows the correct data to be sent to each Reducer.

As mentioned, using these options, in my next post I will cover how to use the Framework for doing a Reduce side join.

Denny Lee (@dennylee) recommended Padding zero-length string data with HiveODBC to avoid an “esoteric error” in a 10/9/2012 post:

One of the more esoteric error messages that you may receive from the HiveODBC driver connection is:

SQL_ERROR Failed to get data for column zu

When connecting to Hive using the HiveODBC driver using a linked server connection, the full error message looks something like:

OLE DB provider “MSDASQL” for linked server “MySQLHive” returned message “SQL_ERROR Failed to get data for column zu”.

OLE DB provider “MSDASQL” for linked server “MySQLHive” returned message “SQL_ERROR get signed long int data failed for column 9. Column index out of bounds.”.

OLE DB provider “MSDASQL” for linked server “MySQLHive” returned message “Option value changed”.

Msg 7330, Level 16, State 2, Line 1

Cannot fetch a row from OLE DB provider “MSDASQL” for linked server “MySQLHive”.

Thanks to some digging by James Baker and Dave Mariani (@dmariani, VP Engineering at Klout), we realized that the ODBC Provider for Hive might not correctly handle zero-length string data returned from Hive.

As noted in the SQL Server Analysis Services to Hive case study, to avoid these issues, avoid returning empty strings from Hive. For more information, please reference page 12 of the case study.

Maarten Balliauw (@maartenballiauw) explained What PartitionKey and RowKey are for in Windows Azure Table Storage in a 10/8/2012 post:

For the past few months, I’ve been coaching a “Microsoft Student Partner” (who has a great blog on Kinect for Windows by the way!) on Windows Azure. One of the questions he recently had was around PartitionKey and RowKey in Windows Azure Table Storage. What are these for? Do I have to specify them manually? Let’s explain…

Windows Azure storage partitions

All Windows Azure storage abstractions (Blob, Table, Queue) are built upon the same stack (whitepaper here). While there’s much more to tell about it, the reason why it scales is because of its partitioning logic. Whenever you store something on Windows Azure storage, it is located on some partition in the system. Partitions are used for scale out in the system. Imagine that there’s only 3 physical machines that are used for storing data in Windows Azure storage:

Based on the size and load of a partition, partitions are fanned out across these machines. Whenever a partition gets a high load or grows in size, the Windows Azure storage management can kick in and move a partition to another machine:

By doing this, Windows Azure can ensure a high throughput as well as its storage guarantees. If a partition gets busy, it’s moved to a server which can support the higher load. If it gets large, it’s moved to a location where there’s enough disk space available.

Partitions are different for every storage mechanism:

- In blob storage, each blob is in a separate partition. This means that every blob can get the maximal throughput guaranteed by the system.

- In queues, every queue is a separate partition.

- In tables, it’s different: you decide how data is co-located in the system.

PartitionKey in Table Storage

In Table Storage, you have to decide on the PartitionKey yourself. In essence, you are responsible for the throughput you’ll get on your system. If you put every entity in the same partition (by using the same partition key), you’ll be limited to the size of the storage machines for the amount of storage you can use. Plus, you’ll be constraining the maximal throughput as there’s lots of entities in the same partition.

Should you set the PartitionKey to the same value for every entity stored? No. You’ll end up with scaling issues at some point.

Should you set the PartitionKey to a unique value for every entity stored? No. You can do this and every entity stored will end up in its own partition, but you’ll find that querying your data becomes more difficult. And that’s where our next concept kicks in…RowKey in Table Storage

A RowKey in Table Storage is a very simple thing: it’s your “primary key” within a partition. PartitionKey + RowKey form the composite unique identifier for an entity. Within one PartitionKey, you can only have unique RowKeys. If you use multiple partitions, the same RowKey can be reused in every partition.

So in essence, a RowKey is just the identifier of an entity within a partition.

PartitionKey and RowKey and performance

Before building your code, it’s a good idea to think about both properties. Don’t just assign them a guid or a random string as it does matter for performance.

The fastest way of querying? Specifying both PartitionKey and RowKey. By doing this, table storage will immediately know which partition to query and can simply do an ID lookup on RowKey within that partition.

Less fast but still fast enough will be querying by specifying PartitionKey: table storage will know which partition to query.

Less fast: querying on only RowKey. Doing this will give table storage no pointer on which partition to search in, resulting in a query that possibly spans multiple partitions, possibly multiple storage nodes as well. Wihtin a partition, searching on RowKey is still pretty fast as it’s a unique index.

Slow: searching on other properties (again, spans multiple partitions and properties).

Note that Windows Azure storage may decide to group partitions in so-called "Range partitions" - see http://msdn.microsoft.com/en-us/library/windowsazure/hh508997.aspx.

In order to improve query performance, think about your PartitionKey and RowKey upfront, as they are the fast way into your datasets.

Deciding on PartitionKey and RowKey

Here’s an exercise: say you want to store customers, orders and orderlines. What will you choose as the PartitionKey (PK) / RowKey (RK)?

Let’s use three tables: Customer, Order and Orderline.

An ideal setup may be this one, depending on how you want to query everything:

Customer (PK: sales region, RK: customer id) – it enables fast searches on region and on customer id

Order (PK: customer id, RK; order id) – it allows me to quickly fetch all orders for a specific customer (as they are colocated in one partition), it still allows fast querying on a specific order id as well)

Orderline (PK: order id, RK: order line id) – allows fast querying on both order id as well as order line id.Of course, depending on the system you are building, the following may be a better setup:

Customer (PK: customer id, RK: display name) – it enables fast searches on customer id and display name

Order (PK: customer id, RK; order id) – it allows me to quickly fetch all orders for a specific customer (as they are colocated in one partition), it still allows fast querying on a specific order id as well)

Orderline (PK: order id, RK: item id) – allows fast querying on both order id as well as the item bought, of course given that one order can only contain one order line for a specific item (PK + RK should be unique)You see? Choose them wisely, depending on your queries. And maybe an important sidenote: don’t be afraid of denormalizing your data and storing data twice in a different format, supporting more query variations.

There’s one additional “index”

That’s right! People have been asking Microsoft for a secondary index. And it’s already there… The table name itself! Take our customer – order – orderline sample again…

Having a Customer table containing all customers may be interesting to search within that data. But having an Orders table containing every order for every customer may not be the ideal solution. Maybe you want to create an order table per customer? Doing that, you can easily query the order id (it’s the table name) and within the order table, you can have more detail in PK and RK.

And there's one more: your account name. Split data over multiple storage accounts and you have yet another "partition".

Conclusion

In conclusion? Choose PartitionKey and RowKey wisely. The more meaningful to your application or business domain, the faster querying will be and the more efficient table storage will work in the long run

Gaurav Mantri (@gmantri) continued his series with Windows Azure Media Service-Part II (Setup, API and Access Token) on 10/8/2012:

In the previous post about Windows Azure Media Service (WAMS), I provided an introduction to the service as to how I understood it. I also explained some key terms associated with WAMS. You can read this post here: http://gauravmantri.com/2012/10/05/windows-azure-media-service-part-i-introduction/

In this post, we’ll talk about creating a media service through Windows Azure Portal, explore a bit of API & SDK and what options are available to you when it comes to develop and manage media applications using WAMS. We’ll also talk briefly about authentication/authorization and finally we’ll wrap this post with some code for writing our own library for managing WAMS.

Setup

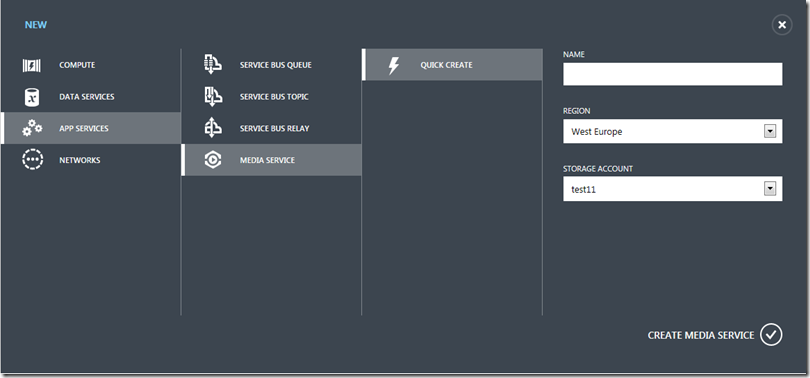

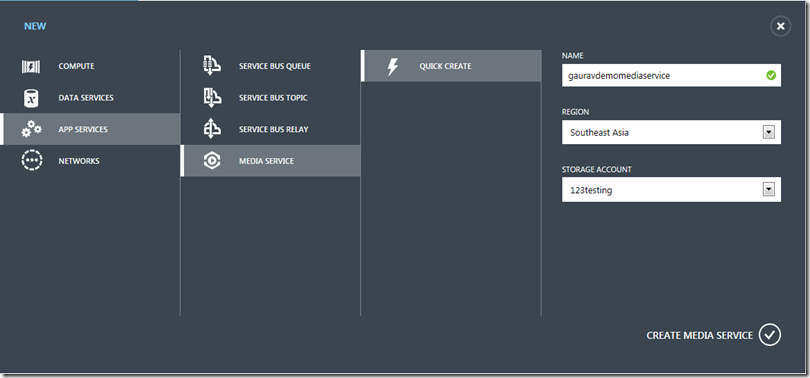

Before you start building media applications using WAMS, first thing you would need to do is create a media service. You can create a new media service through Windows Azure Portal. Following screenshots demonstrate the steps for the same.

1. Provide media service name & choose a storage account

First thing you would need to do is provide a media service name and choose a storage account where files related to various assets will be stored.

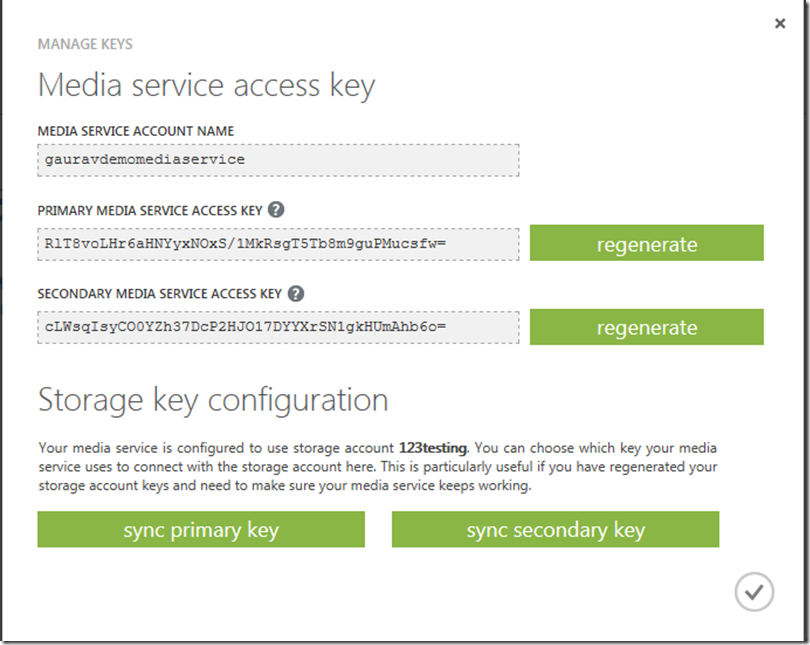

2. Manage access keys

Once media service has been created successfully, next thing you would want is to get hold of the access keys. If you have used Windows Azure Storage Service, you would be familiar with the concept of access keys. Essentially these are the keys required for securely communicating with WAMS. Anybody with access to these keys (and your service name) has complete access to your media service thus extreme care must be taken to protect these keys.

Another thing to notice here is the “Storage key configuration” section. While creating a media service, you specified which storage account you wish to use to store files. By choosing between “sync primary key” and “sync secondary key”, you are telling WAMS to use that particular key when communicating with the storage account. Also if you end up regenerating your storage account key (for whatever reason), by doing this you’re ensuring that your media service keeps working. I believe what it does is that it constantly polls storage service and fetches the storage account key you chose to keep in sync (total guess!!!)

Based on my testing if I don’t click on any of these “sync primary key” and “sync secondary key” buttons by default WAMS make use of storage account primary key. If I change my primary key, any operation I perform which involves WAMS to interact with this storage account will fail.

API & SDK

At the core of it, WAMS exposes management functionality through a REST API. It also provides a .Net SDK which essentially is a wrapper around REST API.

One may ask the question as to which route to go: REST API or .Net SDK? In my opinion, the answer depends on a number of factors, such as:

- Flexibility: Usually in my experience, consuming REST API gives you more flexibility as you are not bound by only the functionality exposed through SDK.

- Convenience: Obviously SDK provides you more convenience as most of the work as far as implementing REST API has already been done for you. Plus it includes some helper functionality (like moving files into blob storage) which is not there in REST API for WAMS.

- Platform Feature Compatibility: In my experience, SDK comes a bit after (sometimes long after) REST API. If we take Windows Azure Storage for example, there are still some things you could not do with SDK (version 1.7 at the time of writing) while they are available in the system and are exposed via REST API. If we look at WAMS in particular, the ability to create Job Templates and Task Templates are still not available through SDK at the time of writing this blog. Thus if you need these functionality, you would have to go REST API route.

- SDK Unavailability: Sometimes you don’t have a choice. For example, if you’re building functionality in say PHP and we know that currently there’s no SDK available for PHP. In that case you would need to use REST API. Having said this thing and given the way things are going in other parts of Windows Azure, I would not be surprised if the SDK for many common platforms (PHP, Java, node.js) arrive pretty soon.

REST API

A few things about REST API:

- Get an access token first: Before using REST API, you would need to get an access token first from Windows Azure Access Control Service (ACS). This is described in detail later in this blog post.

- Know about various request/response headers: Again when using REST API, understand about various headers you would need to pass in your request and the headers returned in response. You can find more information about this here: http://msdn.microsoft.com/en-us/library/windowsazure/hh973616.aspx.

.Net SDK

A thing or two I noticed about .Net SDK:

- Windows Azure Storage Client Library Version: Please note that at the time of writing this blog, the SDK has a dependency on Windows Azure Storage Client Library for SDK version 1.6 (library version 1.1) while the most latest SDK version is 1.7. Please make a note of that.

Authenticating (or is it Authorizing) REST API Requests

As mentioned above, before you could invoke WAMS REST API functionality you would need to get an access token from Windows Azure Access Control Service (ACS). In order to do so, a few things to keep in mind:

Endpoint:

Endpoint for getting an access token is: https://wamsprodglobal001acs.accesscontrol.windows.net/v2/OAuth2-13

HTTP Method:

HTTP Method for this request is POST.

Request Body:

Request body should be in this format:

grant_type=client_credentials&client_id=[client id value]&client_secret=[URL-encoded client secret value]&scope=urn%3aWindowsAzureMediaServicesFor example, if we take the values from above, the request body will be:

grant_type=client_credentials&client_id=gauravdemomediaservice&client_secret=RlT8voLHr6aHNYyxNOxS%2f1MkRsgT5Tb8m9guPMucsfw%3d&scope=urn%3aWindowsAzureMediaServicesResponse Body:

Response is returned in JSON format. Here’s how typical response looks like:

{ "token_type":"http://schemas.xmlsoap.org/ws/2009/11/swt-token-profile-1.0", "access_token":"http%3a%2f%2fschemas.xmlsoap.org%2fws%2f2005%2f05%2fidentity%2fclaims%2fnameidentifier=gauravdemomediaservice&urn%3aSubscriptionId=35d2de3b-a2cd-4160-aee5-f55d799962c4&http%3a%2f%2fschemas.microsoft.com%2faccesscontrolservice%2f2010%2f07%2fclaims%2fidentityprovider=https%3a%2f%2fwamsprodglobal001acs.accesscontrol.windows.net%2f&Audience=urn%3aWindowsAzureMediaServices&ExpiresOn=1349691469&Issuer=https%3a%2f%2fwamsprodglobal001acs.accesscontrol.windows.net%2f&HMACSHA256=LrliUJEEfUlBPTJJVlp35%1fS2XEX7xwWUQRN%2fb1NkJkM%3d", "expires_in":"5999", "scope":"urn:WindowsAzureMediaServices" }A few things to keep in mind here:

- access_token: This is the access token you would need when working with WAMS REST API. It must be included in every request.

- expires_in: This indicates the number of seconds for which the access token is valid. One must keep an eye on this value and ensure that the access token has not expired. One needs to get the access token again if the access token has expired.

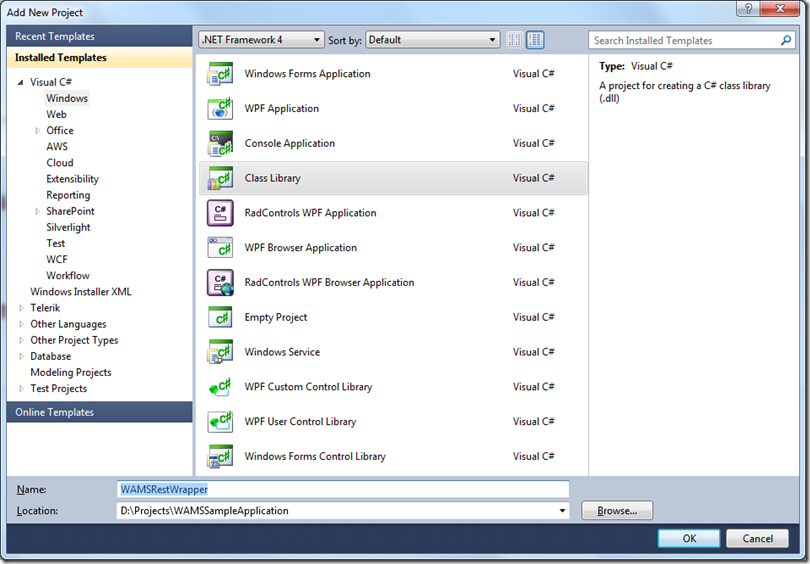

Sample Project

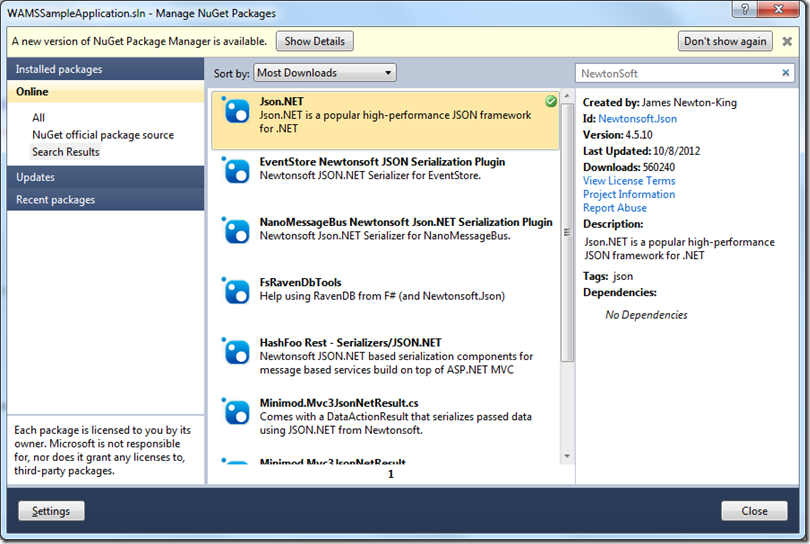

Now what we will do is create a simple class library project which would be the wrapper around REST API much like .Net SDK. Then we’ll start consuming this library in another project. We’ll use VS 2010 to create this project and make use of .Net framework version 4.0. For the sake of simplicity, we’ll call this project as “WAMSRestWrapper”. Also because this REST API sends and receives the data in JSON format, we’ll make use of Json.Net library.

Since I’ll also be learning the REST API, the code would not be the best quality code and would desire a lot of improvements. Please feel free to make improvements as you see fit.

In this blog post, only thing we will do is get the access token and store it with our application.

To do so, let’s first create a class called AcsToken. Members of this class will map to the JSON data returned by ACS.

using System; using System.Collections.Generic; using System.Linq; using System.Text; namespace WAMSRestWrapper { public class AcsToken { public string token_type { get; set; } public string access_token { get; set; } public int expires_in { get; set; } public string scope { get; set; } } }Next let’s create a class for fetching this ACS token. Since this class will be doing a lot of stuff in the days to come, let’s call it “MediaServiceContext” (inspired by MediaContextBase in .Net SDK).

using System; using System.Collections.Generic; using System.Linq; using System.Text; using System.Net; using System.Web; using Newtonsoft.Json; using System.Globalization; using System.IO; namespace WAMSRestWrapper { public class MediaServiceContext { private const string acsEndpoint = "https://wamsprodglobal001acs.accesscontrol.windows.net/v2/OAuth2-13"; private const string acsRequestBodyFormat = "grant_type=client_credentials&client_id={0}&client_secret={1}&scope=urn%3aWindowsAzureMediaServices"; private string _accountName; private string _accountKey; private string _accessToken; private DateTime _accessTokenExpiry; /// <summary> /// Creates a new instance of <see cref="MediaServiceContext"/> /// </summary> /// <param name="accountName"> /// Media service account name. /// </param> /// <param name="accountKey"> /// Media service account key. /// </param> public MediaServiceContext(string accountName, string accountKey) { this._accountName = accountName; this._accountKey = accountKey; } /// <summary> /// Gets the access token. If access token is not yet fetched or the access token has expired, /// it gets a new access token. /// </summary> public string AccessToken { get { if (string.IsNullOrWhiteSpace(_accessToken) || _accessTokenExpiry < DateTime.UtcNow) { var tuple = FetchAccessToken(); _accessToken = tuple.Item1; _accessTokenExpiry = tuple.Item2; } return _accessToken; } } /// <summary> /// This function makes the web request and gets the access token. /// </summary> /// <returns> /// <see cref="System.Tuple"/> containing 2 items - /// 1. The access token. /// 2. Token expiry date/time. /// </returns> private Tuple<string, DateTime> FetchAccessToken() { HttpWebRequest request = (HttpWebRequest)WebRequest.Create(acsEndpoint); request.Method = "POST"; string requestBody = string.Format(CultureInfo.InvariantCulture, acsRequestBodyFormat, _accountName, HttpUtility.UrlEncode(_accountKey)); request.ContentLength = Encoding.UTF8.GetByteCount(requestBody); request.ContentType = "application/x-www-form-urlencoded"; using (StreamWriter streamWriter = new StreamWriter(request.GetRequestStream())) { streamWriter.Write(requestBody); } using (var response = (HttpWebResponse)request.GetResponse()) { using (StreamReader streamReader = new StreamReader(response.GetResponseStream(), true)) { var returnBody = streamReader.ReadToEnd(); var acsToken = JsonConvert.DeserializeObject<AcsToken>(returnBody); return new Tuple<string, DateTime>(acsToken.access_token, DateTime.UtcNow.AddSeconds(acsToken.expires_in)); } } } } }The code is pretty straight forward. The constructor takes 2 parameters – account name and key and it exposes a public property called AccessToken. If an access token has never been fetched in this object’s lifecycle or has expired, a web request is made to ACS and token is fetched.

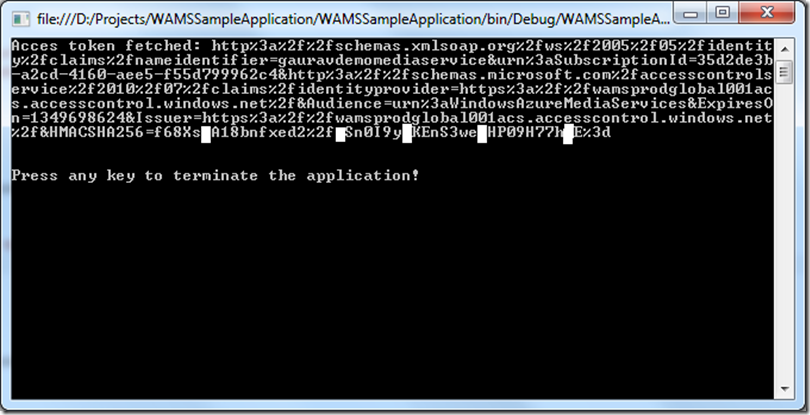

Now let’s write a simple console application which makes use of this library and all it does is prints out the access token on the console.

using System; using System.Collections.Generic; using System.Linq; using System.Text; using WAMSRestWrapper; using Microsoft.WindowsAzure.MediaServices.Client; namespace WAMSSampleApplication { class Program { static string accountName = "<your media service account name>"; static string accountKey = "<your media service account key>"; static MediaServiceContext context; static void Main(string[] args) { context = GetContext(); var accessToken = context.AccessToken; Console.WriteLine("Acces token fetched: " + accessToken); Console.WriteLine(); Console.WriteLine(); Console.WriteLine("Press any key to terminate the application!"); Console.ReadLine(); } static MediaServiceContext GetContext() { return new MediaServiceContext(accountName, accountKey); } } }Once we run this program, this is what we see on the console.

Now when invoking the WAMS REST API, we will make use of this access token.

Summary

That’s it for this post. In the next post, we will deal with more REST API functionality and expand this library. I hope you have found this information useful. As always, if you find some issues with this blog post please let me know immediately and I will fix them ASAP.

<Return to section navigation list>

Windows Azure SQL Database, Federations and Reporting, Mobile Services

Matteo Pagani (@qmatteoq) posted Having fun with Azure Mobile Services – The Windows Phone application on 10/9/2012:

Did you have fun using your Azure Mobile Service with your Windows 8 app? Good, because now the fun will continue! We will do the same operations using a Windows Phone application. As I already anticipated in the previous posts, actually there isn’t a Windows Phone SDK and the reason is very simple: Microsoft is probably waiting for the Windows Phone 8 SDK to be released, in order to proper support both its old and new mobile platform.

But we don’t have to worry: our service is a REST service that supports the OData protocol, so we can interact with it starting from now by using simple HTTP requests and by parsing the HTTP response.

To help us in our work we’ll use two popular libraries:

- RestSharp, that is a wrapper around the HttpWebRequest class that simplifies the code needed to communicate with a REST service. Sometimes RestSharp tries to be “too smart” for my taste, as we’ll see later, but it’s still a perfect candidate for our scenario.

- JSON.NET, that is a library that provides many useful features when you work with JSON data, like serialization, deserialization, plus a manipulation language based on LINQ called LINQ to JSON.

Let’s start! First you have to open Visual Studio 2010 (the 2012 release isn’t supported yet by the current Windows Phone SDK) and create a new Windows Phone project. Then, using NuGet, we’re going to install the two library: right click on the project, choose Manage NuGet Packages and look for the two needed libraries using the keywords RestSharp and JSON.NET.

The UI of the application will be very similar to the one we’ve used for the Windows 8 app, I’ve just replaced the ListView with a ListBox.

<StackPanel>

<ButtonContent="Insert data"Click="OnAddNewComicButtonClicked"/>

<ButtonContent="Show data"Click="OnGetItemButtonClicked"/>

<ListBoxx:Name="ComicsList">

<ListBox.ItemTemplate>

<DataTemplate>

<StackPanelMargin="0, 20, 0, 0">

<TextBlockText="{Binding Title}"/>

<TextBlockText="{Binding Author}"/>

</StackPanel>

</DataTemplate>

</ListBox.ItemTemplate>

</ListBox>

</StackPanel>Insert some data

To insert the data we’ll have to send a HTTP request to the service, using the POST method; the body of the request will be the JSON that represent our Comic object. Let’s see the code first:

privatevoidOnAddNewComicButtonClicked(objectsender, RoutedEventArgs e)

{

RestRequest request =newRestRequest("https://myService.azure-mobile.net/tables/Comics");

request.Method = Method.POST;

request.AddHeader("X-ZUMO-APPLICATION","your-application-key");

Comic comic =newComic

{

Title ="300",

Author ="Frank Miller"

};

stringjsonComic = JsonConvert.SerializeObject(comic,newJsonSerializerSettings

{

NullValueHandling = NullValueHandling.Ignore

});

request.AddParameter("application/json", jsonComic, ParameterType.RequestBody);

RestClient client =newRestClient();

client.ExecuteAsync(request, response =>

{

MessageBox.Show(response.StatusCode.ToString());

});

}The first thing we do is to create a RestRequest, which is the RestSharp class that represents a web request: the URL of the request (which is passed as parameter of the constructor) is the URL of our service.

Then we set the HTTP method we’re going to use (POST, in this case, since we’re going to add some data do the table) and we set the application’s secret key: thanks to Fiddler, by intercepting the traffic of our previous Windows 8 application, I’ve found that the key that in our Windows Store app was passed as a parameter of the constructor of the MobileService class is added as a request’s header, which name is X-ZUMO-APPLICATION. We do the same by using the AddHeader method provided by RestSharp.

The next step is to create the Comic object we’re going to insert in the table: for this task please welcome Json.net, that we’re going to use to serialize our Comic object, that means converting it into a plain JSON string. What does it mean? That if you put a breakpoint in this method and you take a look at the content of the jsonComic variable, you’ll find a plain text representation of our complex object, like the following one:

{

"Author" : "Frank Miller",

"Title" : "300"

}We perform this task using the SerializeObject method of the JsonConvert class: other than passing the Comic object to the method, we also pass a JsonSerializerSettings object, that we can use to customize the serialization process. In this case, we’re telling to the the serializer not to include object’s properties that have a null value. This step is very important: do you remember that the Comic object has an Id property that acts as primary key and that is automatically generated every time we insert a new item in the table? Without this setting, the serializer would add the Id property to the Json with the value “0”. In this case, the request to the service would fail, since it isn’t a valid value: the Id property shouldn’t be specified since it’s the database that takes care of generating it.

Once we have the json we can add it to the body of our request: here comes a little trick, to avoid that RestSharp tries to be too smart for us. In fact, if you use the AddBody method of the RestRequest object you don’t have a way to specify which is the content type of the request. RestSharp will try to guess it and will apply it: the problem is that, sometimes, RestSharp fails to correctly recognize the content type and this is one of these cases. In my tests, every time I’ve tried to add a json in the request’s body, RestSharp set the content type to text/xml, that it’s not only wrong, but actively refused by the Azure mobile service, since the only accepted content type is application/json.

By using the AddParameter method and by manually specifying the content type, the body’s content and the parameter’s type (ParameterType.RequestBody) we are able to apply a workaround to this behavior. In the end we can execute the request by creating a new instance of the RestClient class and by calling the ExecuteAsync method, that accepts as parameters the request and the callback that is executed when we receive the response from our service. Just to trace what’s going on, in the callback we simply display using a MessageBox the status code: if everything we did is correct, the status code we receive should be Created.

To test it, simply go to the Azure management portal, access to your service’s dashboard and go into the data tab: you should see the new item in the table.

Play with the data

Getting back the data for display purposes is a little bit simpler: it’s just a GET request, with the same features of the POST request. The difference is that, this time, we’re going to use deserialization, which is the process to convert the JSON we receive from the service into C# objects.

privatevoidOnGetItemButtonClicked(objectsender, RoutedEventArgs e)

{

RestRequest request =newRestRequest("https://myService.azure-mobile.net/tables/Comics");

request.AddHeader("X-ZUMO-APPLICATION","my-application-key");

request.Method = Method.GET;

RestClient client =newRestClient();

client.ExecuteAsync(request, result =>

{

stringjson = result.Content;

IEnumerable<Comic> comics = JsonConvert.DeserializeObject<IEnumerable<Comic>>(json);

ComicList.ItemsSource = comics;

});

}The first part of the code should be easy to understand, since it’s the same we wrote to insert the data: we create a RestRequest object, we add the header with the application key and we execute asynchronously the request using the RestClient object.

This time in the response and, to be more precisely, in the Content property we get the JSON with the list of all the comics that are stored in our table. It’s time to use Json.Net again: this time we’re going to use the DeserializeObject<T> method of the JsonConvert class, where T is the type of the object we expect to have back after the deserialization process. In this case, the return type is IEnumerable<Comic>, since our request returns a collection of all the comics stored in the table.

Some advanced scenarios for playing with the data

In the next posts we’ll see some more operations we can do with the data: the mobile service we’ve created with Azure supports the OData protocol; this means that we can perform additional operations (like filtering the results) directly with the HTTP request, without having to get the whole content of the table first. We’ll see how to do it, in the meantime… happy coding!

<Return to section navigation list>

Marketplace DataMarket, Cloud Numerics, Big Data and OData

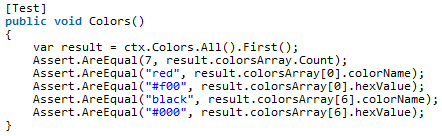

•• Vagif Abilov (@ooobject) announced MongoDB OData provider now supports arrays and nested collections on 10/11/2012:

It’s been a while since I blogged about MongOData – a MongoDB OData provider that I wrote to cross MongoDB and OData protocol. Even though the provider has not reached it’s “Version 1.0” state, the response was quite encouraging: I received suggestions and bug reports, and this was indeed a good motivation factor. One of requests was to add support for collections. Property collections haven’t been supported by OData until the most recent protocol version (version 3), and such late attention to this topic is partly explained by the fact that property collections are not currently supported by Entity Framework which is often used to create OData services using WCF Data Services classes. But this should not be a limiting factor for document databases where arrays are used instead of relations in traditional SQL databases. To fill the gap I have upgraded OData protocol used by MongOData to version 3 and added creation of metadata for BsonArray properties.

Let me show how it works using a simple example. Imagine we have a JSON document with a root element colorsArray that consists of a collection of pairs (colorName,hexValue). Here’s the sample document:

How will MongOData expose it if we create a MongoDB collection “Colors” and import there the document above? Let’s have a look at generated metadata for entity and complex type:

Note the type name assigned to a property colorsArray. It’s a collection of items of a type Mongo.Colors__colorsArray – MongOData generates it’s type definition when it reads the first element. It is assumed that all array elements have the same structure – a reasonable assumption if we expect to generate the collection’s metadata.

Now let’s see how data exposed by this OData service can be consumed from the client. On a client side I am using Simple.Data OData adapter – a library that I also happened to maintain.

MongOData can be downloaded as an MSI installer or as a NuGet package.

The WCF Data Services Team posted Important: Security Advisory 2749655 affects WCF DS on 10/9/2012:

What is the advisory?

Microsoft just released Security Advisory 2749655, which addresses “an issue involving specific digital certificates that were generated by Microsoft without the proper timestamp attributes.” If you are using WCF Data Services 5.0.1 or have previously installed the WCF Data Services MSI from the download center, you may run into this issue.

Does this issue create a security vulnerability?

The advisory notes that “this is not a security issue”, meaning that this issue is not creating a vulnerability. However, there could be a combination of factors which might cause a WCF Data Service to stop working, or cause our installer to fail to install. Microsoft recommends that you “apply the KB 2749655 update and any rereleased updates addressing this issue immediately”.

I installed WCF Data Services 5.0 or 5.0.1. What do I need to do?

There are up to three actions WCF Data Services customers should take:

- We recommend that you install the KB referenced above

- If you installed the WCF Data Services 5.0 MSI before Sept 26, 2012, you should download and install the replacement version of this MSI

- If you have a dependency on the WCF Data Services NuGet package, we recommend that you upgrade to 5.0.2; this should not make any functional difference since we only expect people will run into problems on install/uninstall, however updating these DLLs would ensure that you have validly signed DLLs

Our NuGet packages are:

- Microsoft.Data.Services.Client (WCF Data Services Client)

- Microsoft.Data.Services (WCF Data Services Server)

- Microsoft.Data.OData (ODataLib)

- Microsoft.Data.Edm (EdmLib)

- System.Spatial (System.Spatial)

<Return to section navigation list>

Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

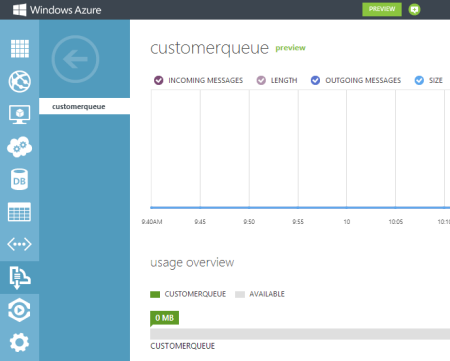

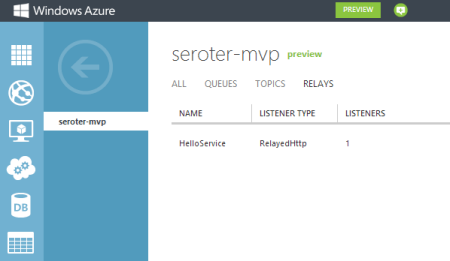

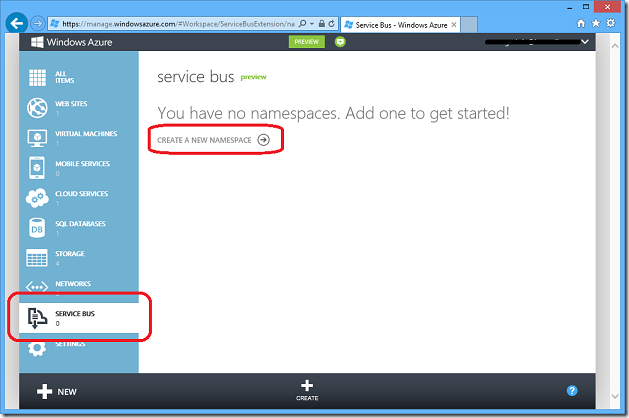

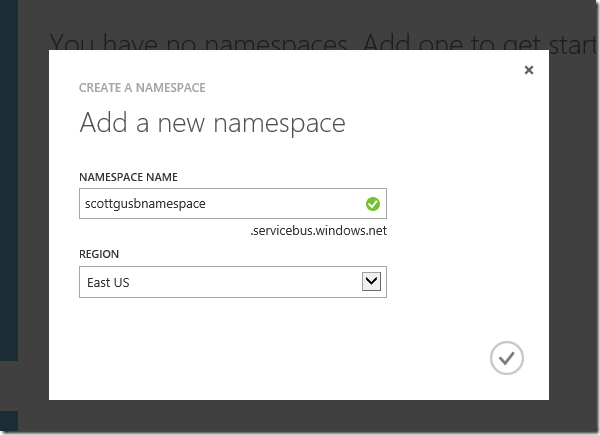

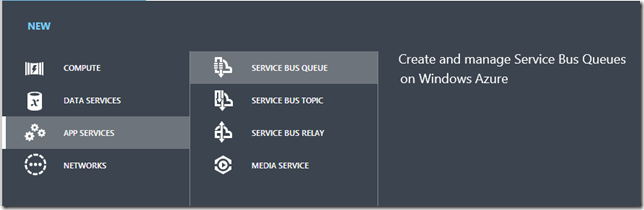

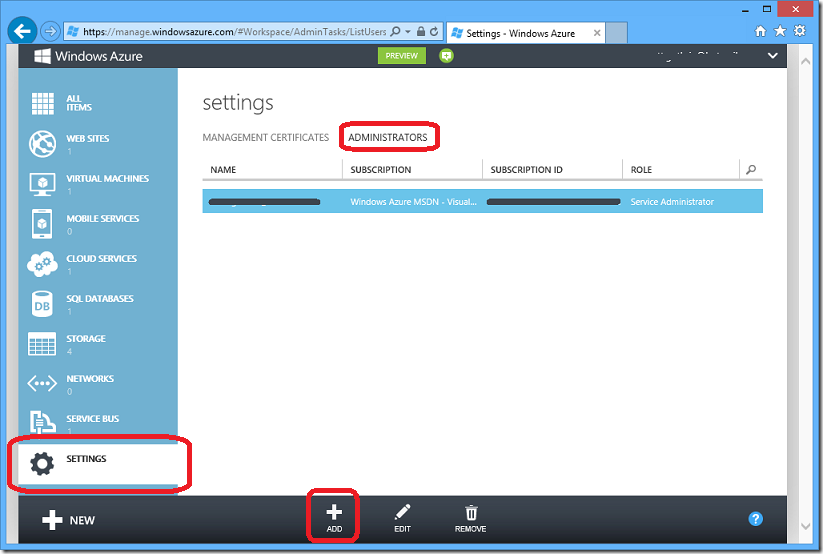

Richard Seroter (@rseroter, pictured below) recounted Trying Out the New Windows Azure Portal Support for Relay Services in a 10/8/2012 post:

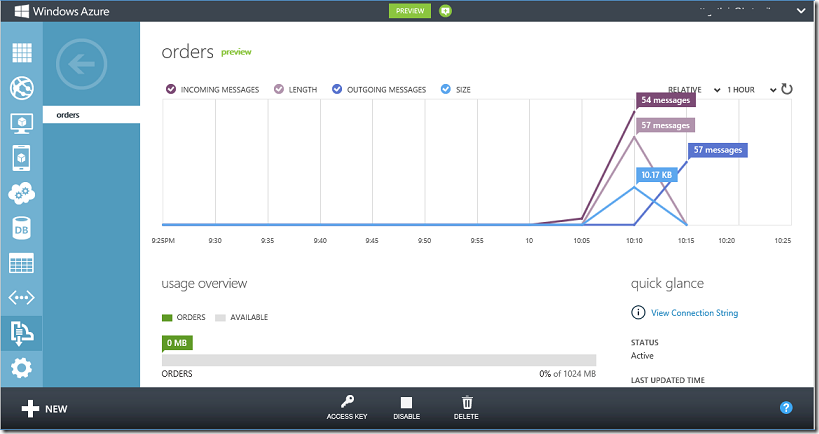

Scott Guthrie announced a handful of changes to the Windows Azure Portal, and among them, was the long-awaited migration of Service Bus resources from the old-and-busted Silverlight Portal to the new HTML hotness portal. You’ll find some really nice additions to the Service Bus Queues and Topics. In addition to creating new queues/topics, you can also monitor them pretty well. You still can’t submit test messages (ala Amazon Web Services and their Management Portal), but it’s going in the right direction.

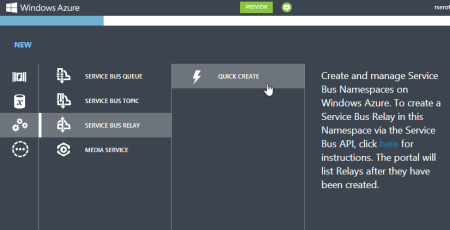

One thing that caught my eye was the “Relays” portion of this. In the “add” wizard, you see that you can “quick create” a Service Bus relay.

However, all this does is create the namespace, not a relay service itself, as can be confirmed by viewing the message on the Relays portion of the Portal.

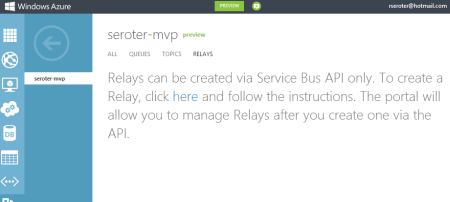

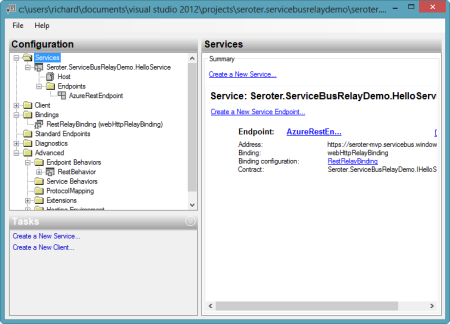

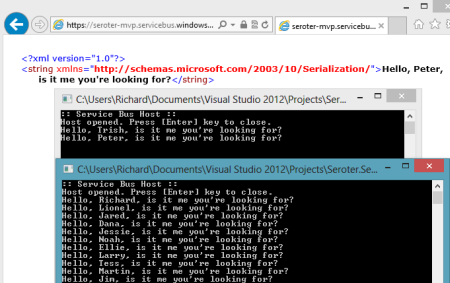

So, this portal is just for the *management* of relays. Fair enough. Let’s see what sort of management I get! I created a very simple REST service that listens to the Windows Azure Service Bus. I pulled in the proper NuGet package so that I had all the Service Bus configuration values and assembly references. Then, I proceeded to configure this service using the webHttpRelayBinding.

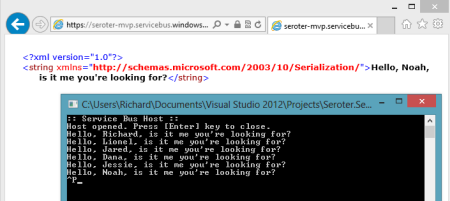

I started up the service and invoked it a few times. I was hoping that I’d see performance metrics like those found with Service Bus Queues/Topics.

However, when I returned to the Windows Azure Portal, all I saw was the name of my Relay service and confirmation of a single listener. This is still an improvement from the old portal where you really couldn’t see what you had deployed. So, it’s progress!

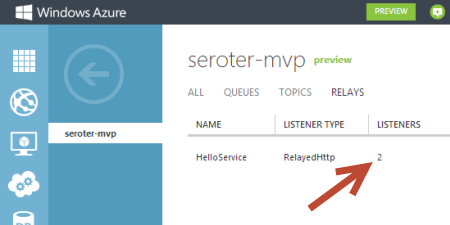

You can see the Service Bus load balancing feature represented here. I started up a second instance of my “hello service” listener and pumped through a few more messages. I could see that messages were being sent to either of my two listeners.

Back in the Windows Azure Portal, I immediately saw that I now had two listeners.

Good stuff. I’d still like to see monitoring/throughput information added here for the Relay services. But, this is still more useful than the last version of the Portal. And for those looking to use Topics/Queues, this is a significant upgrade in overall user experience.

Brian Swan (@brian_swan) described Using Memcache to access a Windows Azure Dedicated Cache in a 10/9/2012 post to the [Windows Azure’s] Silver Lining blog:

[A] few weeks ago, Larry and I wrote a couple of post about how to set up co-located caching using Windows Azure Caching (Preview) and access it from Ruby and PHP: Windows Azure Caching (Preview) and Ruby Cloud Services and PHP, Memcache, and Windows Azure Caching. What we didn’t cover in those posts was how to set up a role as a dedicated cache and then access it using the Memcache protocol. (In the co-located scenario, you dedicated a portion of a role’s memory to caching and access from the same role.) So, in this post I’ll cover how to set up a Node.js worker role that serves as a dedicated cache and a PHP web role that accesses the cache using the php_memcache extension. To follow the steps below, you’ll need a Windows Azure account (you can sign up for the free trial) and a Windows Azure Storage account.

Before I get into the details, there are a couple of things to note here:

- The steps in this tutorial assume that you are working on a Windows machine. The Windows Azure team is working on expanding Cloud Services development to other platforms.

- Windows Azure Caching (Preview) is not currently available in Windows Azure Web Sites.

- As you will see in the details below, all of the steps to enable this scenario are configuration steps. You can expect tooling support in the future that makes this work much easier.

- I have chosen to use a Node.js worker role as the dedicated cache simply because I wanted to. You could choose to use a worker role with any runtime of your choice. The same is true for the web role.

With those things in mind, here’s how to set things up:

1. Make sure you have the Windows Azure SDK 1.7 installed: download.

2. Install the Windows Azure PowerShell cmdlets: install. (For information about using the cmdlets, see How to use Windows Azure PowerShell.)

3. Create a new Windows Azure project with this command: New-AzureServiceProject <project name>. This will create a new directory (with the same name as <project name>). From within that directory, add a PHP web role and a Node.js worker role with the following commands: Add-AzurePHPWebRole and Add-AzureNodeWorkerRole. By default, the role names will be WebRole1 and WorkerRole1. Optionally, you can enable remote desktop access to these roles by running this command: Enable-AzureServiceProjectRemoteDesktop.

4. Locate the CachingPreview folder in the .NET SDK. (This is typically found here: C:\Program Files\Microsoft SDKs\Windows Azure\.NET SDK\2012-06\ref. ) Copy that folder and paste it in the bin folder of your web role (<project name>/WebRole1/bin). Finally, rename the pasted folder WindowsAzure.Caching.MemcacheShim. (The shim will be installed in a start up task that is defined in the next step.)

5. Open the project’s ServiceDefinition.csdef file (in the <project name> folder) and locate the <WebRole> element. Add the highlight element (below) as a child to the <Startup> element. This defines the task that will install the Memcache shim upon role start up.

<Startup>

<Task commandLine="setup_web.cmd > log.txt" executionContext="elevated">

<Environment>

<Variable name="EMULATED">

< RoleInstanceValue xpath="/RoleEnvironment/Deployment/@emulated" />

</Variable>

<Variable name="VERSION" value="5.3.13" />

<Variable name="DATACENTER" value="" />

<Variable name="RUNTIMEURL" value="" />

<Variable name="MANIFESTURL" value="http://azurertscu.blob.core.windows.net/php/runtimemanifest.xml" />

</Environment>

</Task>

<Task commandLine="WindowsAzure.Caching.MemcacheShim\MemcacheShimInstaller.exe" executionContext="elevated" />

< /Startup>6. Still in the <WebRole> element of the .csdef file, add the highlighted element (below) to the <Endpoints> element. This sets up an internal port for Memcache protocol communication. We’ll configure it to communicate with the dedicated cache later.

<Endpoints>

< InputEndpoint name="Endpoint1" protocol="http" port="80" />

<InternalEndpoint name="memcache_default" protocol="tcp">

< FixedPort port="11211" />

</InternalEndpoint>

< /Endpoints>7. Moving now to the <WorkerRole> element of the .csdef file, add the following element (below) as a child of the <WorkerRole> element. This will import the module that allows the worker role to serve as the dedicated cache.

<Imports>

<Import moduleName="Caching" />

< /Imports>8. Also as a child of the <WorkerRole> element, add the element below. This sets up a resource for collecting logs and dumps.

<LocalResources>

< LocalStorage name="Microsoft.WindowsAzure.Plugins.Caching.FileStore" sizeInMB="1000" cleanOnRoleRecycle="false" />

< /LocalResources>9. Now open the ServiceConfiguration.cscfg file (in the <project name> folder). Add the highlighted elements (below) to the <ServiceConfiguration> element for WorkerRole1. Note that you will also need to fill in your storage account name and key.

<Role name="WorkerRole1">

< ConfigurationSettings>

<Setting name="Microsoft.WindowsAzure.Plugins.Caching.NamedCaches" value="" />

<Setting name="Microsoft.WindowsAzure.Plugins.Caching.Loglevel" value="" />

<Setting name="Microsoft.WindowsAzure.Plugins.Caching.CacheSizePercentage" value="" />

<Setting name="Microsoft.WindowsAzure.Plugins.Caching.ConfigStoreConnectionString" value="DefaultEndpointsProtocol=https;AccountName=Your storage account name;AccountKey=Your storage account key" />

</ConfigurationSettings>

<Instances count="1" />

< /Role>In the next few steps, we’ll enable the memcache shim (on the PHP web role) to communicate with the dedicated cache on the worker role).

10. From the WebRole1 folder, delete the Web.cloud.config file. The shim will look for the Web.config file.

11. Open the Web.config file and delete the following element. If you plan to run your project in the Windows Azure Emulators, leave this element for now, but delete it before you publish your project to Windows Azure.

<appSettings>

<add key="EMULATED" value="true" />

< /appSettings>12. Still in the Web.config file, add the following element as the first child of the <configuration> element:

<configSections>

<section name="dataCacheClients"

type="Microsoft.ApplicationServer.Caching.DataCacheClientsSection, Microsoft.ApplicationServer.Caching.Core"

allowLocation="true"

allowDefinition="Everywhere" />

< /configSections>13. Again in the Web.config file, add the following element as a child of the <configuration> element (just not the first child). Make sure that the value of the identifier element is the name of the worker role (WorkerRole1 is the default name).

<dataCacheClients>

<tracing sinkType="DiagnosticSink" traceLevel="Error" />

< dataCacheClient name="DefaultShimConfig" useLegacyProtocol="false">

< autoDiscover isEnabled="true" identifier="WorkerRole1" />

</dataCacheClient>

< /dataCacheClients>14. Finally, you need to add the PHP memcache extension to the web role (it isn’t included by default). Do this by adding a php folder to the bin directory of your web role. In the php folder add a php.ini file with one line (this will be added to the role’s PHP configuration: extension=php_memcache.dll. Also, add an ext directory to the php folder and put the php_memcache.dll there (make sure it is the php 5.3, nts, VC9 version of the dll, which you can find here).

That’s all there is to the configuration.

Like I mentioned earlier, look for tooling soon that will make this work easier.

At this point, your application is ready for deployment, but we should add some PHP code that tests that the cache is working. To do this, I suggest adding a PHP file (cachetest.php) to the root of your web role with the following code:

<?php$memcache = new Memcache;$memcache->connect('localhost_WebRole1', 11211) or die ("Could not connect");$version = $memcache->getVersion();echo "Server's version: ".$version."<br/>\n";$tmp_object = new stdClass;$tmp_object->str_attr = 'test';$tmp_object->int_attr = 123;$memcache->set('key', $tmp_object, false, 10) or die ("Failed to save data at the server");echo "Store data in the cache (data will expire in 10 seconds)<br/>\n";$get_result = $memcache->get('key');echo "Data from the cache:<br/>\n";var_dump($get_result);?>Note the connection code (the server name is ‘localhost_<name of web role>’):

$memcache->connect('localhost_WebRole1', 11211) or die ("Could not connect");

After you deploy your project (Publish-AzureServiceProject), you should be able to browse to cachetest.php and see the output of the code above.

As always, we’d love feedback on this if you try it out.

<Return to section navigation list>

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

•• Tyler Doerksen (@tyler_gd) described Website Pricing, Shared or Reserved in a 10/11/2012 post:

Less than a month ago (Sept 17), there was an update to Windows Azure Websites. This update added a third option for hosting websites, the Free option.

Previously all Shared hosted sites were free but now you can choose between Free, Shared, or Reserved. Here is a brief summary of the functionality of each.

Free: You know what they say “Free is the new Shared” well in this case that’s right. Free is like shared in that your site could be hosted on the same VM as other sites but you cannot assign a different domain name to the site. With Free the site will always be <something>.azurewebsites.net

Shared: The same hosting as free in that the site is not on a dedicated VM, however with this option you pay for the ability to assign your own domain to the site, even without a subdomain or what is called a “naked domain”.

Reserved: With this option you can assign the domain like shared but all of your sites in that datacenter are hosted on a reserved instance. The key point here is that all your websites are hosted by the same reserved instance. So if you have a high traffic site that requires 2 VMs and another small site, both will gain the 2nd VM if they are in the same datacenter.

Pricing

Also before this change the only option that would show up on your bill was the reserved instance but now that there is a cost for the Shared instance, you may need to make some decisions on how you are hosting your sites.

Since all of your Reserved instance sites share one cost for the machine, you may want to go to reserved if you have a number of small traffic sites to host. However, at what point would you switch from Shared to Reserved? Take a look at these screenshots from the Azure pricing calculator.

•• Yochay Kiriaty (@yochayk) started a WAWS Preview to support .NET Framework 4.5 thread in the Windows Azure Web Sites Preview forum on 10/9/2012:

The Windows Azure Web Sites (WAWS) team is committed to listening to customer feedback, introducing new functionality and improving performance and user experience. In few days, we will update WAWS Preview to support.NET Framework 4.5, which has been one of the top asks from our customers.

With this update, all of WAWS web servers will run .NET Framework 4.5. This update is affecting all our web servers since our environment is fully managed. Also, .NET Framework 4.5 is an in place upgrade, running .NET Framework 4.5 and .NET Framework 4.0 side by side is not supported. Note that Web applications built with .NET Framework 4.0 will still work without any changes.

In preparation for this change, we have answered few questions that we think you might have.

Q: Why was my site upgraded to .NET Framework 4.5?

Windows Azure Web Sites has been upgraded to .NET Framework 4.5 due to popular demand in a strategic move to enable access to latest technology for our customers. As WAWS is a managed hosted environment all WAWS web servers are now running .NET 4.5

New features available in .NET Framework 4.5 are described at http://msdn.microsoft.com/en-us/library/vstudio/ms171868.aspx. New features available for ASP in .NET Framework 4.5 are described at http://msdn.microsoft.com/en-us/library/vstudio/hh420390.aspx and in more detail at http://www.asp.net/vnext/overview/whitepapers/whats-new

Q: How do I migrate my ASP.NET application to enable features in ASP.NET 4.5?

In order to provide the best backwards compatibility ASP.NET requires developers to target the .NET Framework 4.5. The Migration Guide to the .NET Framework 4.5 is a great place to start.

Q: What should I do if my app stops working?

We have worked very hard to make sure .NET Framework 4.0 applications work seamlessly with .NET 4.5. However, some changes in the .NET Framework may require changes to your web application code. Upgrading from earlier versions of .NET framework to 4.5 is easily achieved once you open your project in VS 2012. For more information about upgrading a project, see How to: Troubleshoot Unsuccessful Visual Studio Project Upgrades and follow the notes in the The Migration Guide to the .NET Framework 4.5.

Q: Can I configure my website to continue using .NET Framework 4.0 instead of upgrading to .NET Framework 4.5?

No .NET Framework 4.5 is an in-place upgrade that replaces .NET Framework 4, rather than a side-by-side installation.

Q: Why is .NET 4.5 not offered side-by-side with 4.0?

The .NET Framework 4.5 was designed as if it were a service pack to .NET 4.0 that also adds additional features to .NET 4.0. The .NET Framework 4.5 simply replaces previous assemblies during installation.

Q: Are HTML 5 Web Sockets available in Windows Azure Web Sites?

HTML 5 Web Socket support requires both .NET Framework 4.5 and Windows Server 2012. Today Windows Azure Web Sites is running on Windows Server 2008 R2, therefore HTML 5 Web Sockets are not supported on WAWS. Support for Windows Server 2012 is coming soon. [Emphasis added.]

Q: Why is the Configure tab in the Azure Portal showing .NET 4.0 as an option?

This feature is being deployed in multiple stages and the .NET framework options in the Configure tab are still pending an update to align with the naming convention for .NET 4.5.

If you discover any compatibility issues with your ASP.NET application running on Windows Azure Web Sites, we want to hear about them via Forums Feedback or Windows Azure Support.

• Manu Yashar-Cohen (@ManuKahn) commented about Connecting Cloud Services to Azure Virtual Network in a 10/10/2012 post:

A customer asked me if it is possible to connect cloud services to azure virtual network.

When creating a new virtual machine we specify the network to be used but when creating a new cloud service the portal does not provide a method to connect the new cloud service to an existing virtual network.

Well It is possible !!!

Michael Washam wrote a nice blog about it. [See post below.]The Idea is to put NetWorkConfiguration in the config file (.cscfg) of your deployment.

• Michael Washam (@MWashamMS) posted Connecting Web or Worker Roles to a Simple Virtual Network in Windows Azure on 8/6/2012 (missed when published):

In this post I’m going to show you how simple it is to connect cloud services to a virtual network.

Before I do that let me explain a couple of reason of why you would want to do this.

There are a few use cases for this.

Web or Worker Roles that need to:

- Communicate with a Virtual Machine(s)

- Communicate with other web or worker roles in a separate cloud service (same subscription and region though)

- Communicate to an on-premises network over a site to site VPN tunnel (not covered in this post)

In at least the first two cases you could accomplish the connectivity by opening up public endpoints on the cloud services and connecting using the public IP address. However, this method introduces latency because you are making multiple hops over each cloud service load balancer. Not to mention that there is a good chance that the endpoint you are trying to connect to would be more secure if it wasn’t exposed as a public endpoint. Connecting through a VNET is much faster and much more secure.

For this example I’m going to walk through a simple VNET that will accomplish the goal of connecting cloud services.

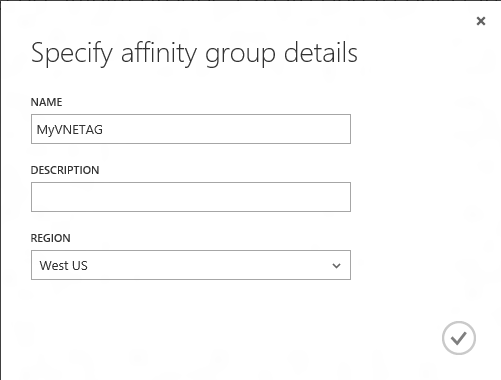

Step 1: Create an affinity group for your virtual network in the region where your cloud services will be hosted.

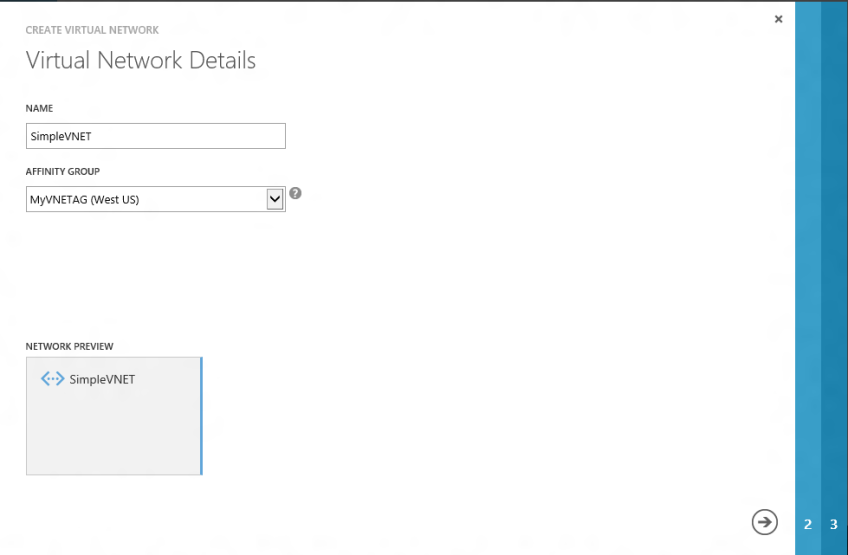

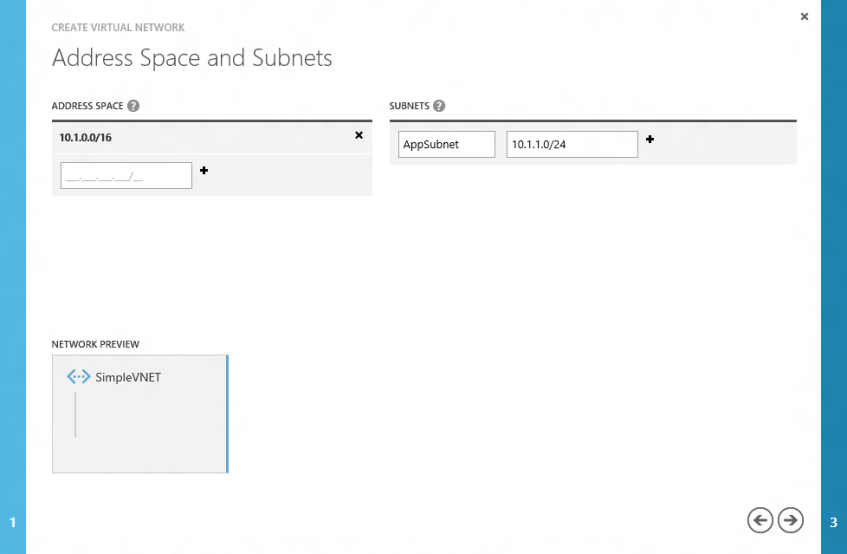

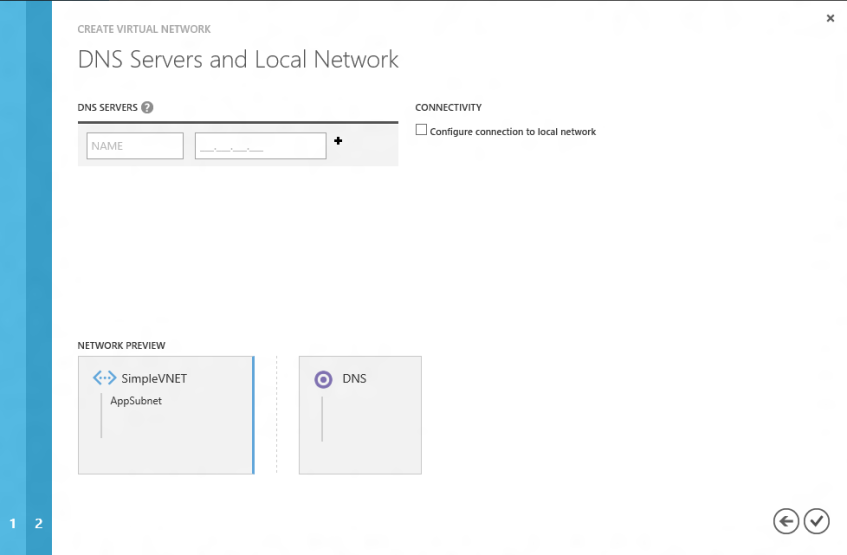

Step 2: Create a simple virtual network and specify the previously created affinity group along with a single subnet network configuration.

For the subnet details I am specifying 10.1.0.0/16 which is a class B address space. I’m only carving one subnet (10.1.1.0/24) out of the address space to keep things simple. Unless you need connectivity back to on-premises or are planning to lock down traffic between subnets when Windows Azure supports ACLs this will likely be a sufficient solution for simple connectivity.

Step 3: Deploy a Cloud Service to the VNET.

Unlike deploying a Virtual Machine you cannot specify virtual network settings on provisioning through the portal. The networking configuration goes inside of the .cscfg file of your Windows Azure deployment package.

To connect to this virtual network all you would need to do is paste the following below the last Role tag in your service configuration file (.cscfg) and deploy.

<NetworkConfiguration> <VirtualNetworkSite name="SimpleVNET" /> <AddressAssignments> <InstanceAddress roleName="MyWebRole"> <Subnets> <Subnet name="AppSubnet" /> </Subnets> </InstanceAddress> </AddressAssignments> </NetworkConfiguration>A few things to note about this configuration. If you have multiple roles in your deployment package you will need to add additional InstanceAddress elements to compensate. Another thing to point out is the purpose behind multiple subnets for each role. The idea is if you have an elastic service that has instances added/removed you could conceivably run out of addresses in the subnet you specify. If you specify multiple subnets to the role Windows Azure will automatically pull an address out of the next available subnet when the instance is provisioned if you run out of addresses from the first subnet.

Name resolution is another area to address. When deployed inside of a virtual network there is no default name resolution between instances. So if you are deploying a web role that will connect to a SQL Server running on a virtual machine you will need to specify the IP address of the SQL server in your web.config. For that specific scenario it would make sense to deploy the SQL VM first so you can actually get the internal IP address for your web app. It is of course possible to deploy your own DNS server in this environment. If you do you can specify the DNS server for your web/worker role instances with an additional XML configuration.

This would go right below the NetworkConfiguration element start tag:

<Dns> <DnsServers> <DnsServer name="mydns" IPAddress="10.1.1.4"/> </DnsServers> </Dns>Finally, to make a truly connected solution you would want to deploy additional services such as VM or other cloud service/web worker roles to the VNET. The beauty of this configuration is every application deployed into this VNET will be on the same virtual network and have direct low latency and secure connectivity.

P.S. Virtual Network and Subnet Names are case sensitive in the configuration file.

Alan Le Marquand reported Overview of Windows Azure Virtual Machines (IaaS) MVA Course now released on 10/8/2012:

The MVA team is pleased to announce the release of the new Overview of Windows Azure Virtual Machines (IaaS) course

About the Course

This course offers an overview of the features that make up the new Windows Azure Virtual Machines and Virtual Networks offerings. This course explains the Virtual Machine storage architecture and demonstrates how to provision and customize virtual machines, configure network connectivity between virtual machines, and configure site-to-site networks that enable true applications that span from on-premises to Windows Azure. This course also demonstrates features that enable you to create highly available Virtual Machine-based services.

Kristian Nese (@KristianNese) posted System Center 2012 SP1 - Virtual Machine Manager - The Review on 10/8/2012:

When I was so honored to receive the MVP award in 2010, it was in the Virtual Machine Manager expertise. This component lays close to my passion for virtualization and cloud computing in general, and it’s a core component in Microsoft's cloud solutions.

I have been using Virtual Machine Manager since the 2008 version and watched the development with big enthusiasm. The launch of System Center 2012 was beyond impressive, and Service Pack 1 – that will support Windows Server 2012 (Hyper-V) will be even more stunning.

Virtual Machine Manager 2012 SP1 – what value does it bring to your business?

System Center 2012 SP1 – Virtual Machine Manager is the management layer for your infrastructure like virtualization hosts, storage, networking (pooled resources) so you can deliver cloud services to your business and customers. I believe that there’s no need to dive into all the features in Hyper-V in Windows Server 2012, because you have most likely heard a lot of them by now. The bottom line is that many organizations, independent of the size of their businesses, are looking towards Microsoft’s premium hypervisor in these days. All the known challenges and limitations from earlier versions are now addressed in this release. Multi-tenancy, VM mobility, optimization in the entire stack, and simplified management, licensing and disaster recovery to mention a few, will automatically give your ROI a solid burst.

Virtual Machine Manager is an abstraction layer above your infrastructure and you can manage those components completely from a single pane of glass.

Investments made in storage will let customers benefit from JBOD and commodity hardware in their environment by using file storage(SMB 3.0) as an alternative to block storage (iSCSI, FC) which is often associated with expensive SAN’s, switches and cables.

Virtual Machine Manager will leverage SMB and file shares (also scale-out file servers) and take care of the required configuration (no need to map permissions on individually shares and folders).

Of course, if you have invested in a SAN solution, you can leverage this from VMM as well with the support for SMI-S protocol.To summarize the value of VMM for your fabric, VMM will support the lifecycle of your resources. All the way from bare-metal deployment of virtualization hosts by using PXE, creation of clusters, servicing and maintenance through the integration with WSUS. Needless to say, the bigger environment you got, it’s more likely that VMM will be a good friend of you.

Complexity and simplification

Network virtualization is a key feature in Hyper-V to support multi-tenancy. It’s a very powerful technique to scale your network as well, by using IP encapsulation – which is default in VMM (requires only one PA from the physical network fabric, instead of one PA for each CA if you are using IP rewrite). To configure network virtualization in Hyper-V without VMM, you must polish your kung-fu skills in Powershell. With all the respect to powershell, it’s great to configure and automate every single process in your system, but with network virtualization, it’s hard to manage a dynamic environment. And especially large environments with multiple hosts and clusters. This is where VMM comes to the playground and takes care of every bit, acting like a policy server controlling IP pools, VM networks and also routing within your environment, and also outside your network.

Beyond virtualization – and beyond private cloud

For those of you who have already played with the Beta, VMM introduces tenants in this build.

A tenant administrator can create and manage self-service users and VM networks. They can create and deploy their own VMs and services using the VMM console and a web portal.

To see the big reasons for this, we must first see the big Picture.

System Center 2012 SP1 – Orchestrator will include SPF – which is Service Provider Foundation.

This will let customers use VMM, OpsMgr and Orchestrator together in a multi-tenancy environment.

To explain this as simple as possible, you can use the SPF-activities in Orchestrator to create runbooks that will communicate with the VMM web service through OData, and use REST.

You can connect to SPF by using your own existing portal, Windows Azure Services for Windows Server and also System Center App Controller.

An interesting scenario here is when you have reached your capacity in your own private cloud, you can connect to a SPF-cloud (which could be a partner, or another cloud vendor) to increase capacity and scale to meet your needs. There might be reasons why you can’t use, or won’t use IaaS in Windows Azure for this, and that’s when this is really handy. Needless to say, App Controller will of course manage IaaS in Azure so that you can deploy virtual machines both on-premise and to the big blue cloud.So you are interested in the best management tool for your cloud infrastructure?

- Guess what!

System Center 2012 SP1 – Virtual Machine Manager will be the ultimate solution for you. Not only embracing the components in your own datacenter, and integrates with the other components of System Center, but it is also a framework to deliver automated and effective cloud solutions to your customers.

Liam Cavanagh (@liamca) described How to Host a Website on Azure and Link it to a Domain like .IO in a 10/6/2012 post:

.COM addresses are becoming harder and hard to come by. It seems each time you look, it is harder to get the name you want. Many startups have become creative in the way they name their site, for example if you wanted to create a site called score.com, you might try calling it scorable.com or scorability.com. Another option that seems to be getting more and more popular is to use non .COM domains such as instant.ly or ordr.in or ginger.io. Many of these domains are easier to get, but the downside for many is that they are typically more expensive and the process of configuring them is often harder. I want to talk about how I managed to create URL using the io domain which used a site hosted on Windows Azure (where all the html pages are hosted). I am going to assume you already know how to create a web site on Windows Azure.

Getting the Domain Name

In the past, I have used GoDaddy as the place that I set up most of my domains. For .COM’s this is incredibly cheap (most of the time you can find a promo code for just about anything) and I have found them to be extremely supportive and easy to work with. However, in this case I wanted to get a .IO domain which is actually a Indian Ocean domain because the .COM was owned by someone else trying to sell it. To do this, I used http://nic.io where it cost ~$93 for the year (as opposed to ~$8 for a .COM domain).

DNS Server Configuration

After I purchased the .io domain from nic.io, I learned that they needed to be provided with DNS settings requiring a Primary and Secondary DNS Server. This was kind of frustrating since GoDaddy provided me with this whenever I created a .COM address. So after looking a little more I learned that I could just use GoDaddy for this. In my case, I could just log into my existing GoDaddy account and configure this for no extra charge. For you, you could either look at another DNS Server provider or create an account with GoDaddy to do this.

GoDaddy DNS Configuration

Within my GoDaddy account, I launched the DNS Manager and chose to create a new Offsite Domain. This was pretty hidden which is why I am adding a screen shot of where I went.

In the Domain Name text box I entered my .IO domain name, clicked Next and copied the 2 DNS servers that were provided. Copy these as you will need them in a minute.

Create A Record

The next step is to configure the GoDaddy DNS servers so that when a request for my domain .io is requested, it know where to point the user to. In my case it is a Windows Azure website with a .cloudapp.net name. To do this config, in the GoDaddy DNS Dashboard you click on “Edit Zone” under the domain you just added. In the A Host section, choose “Add Record” and for “Host” use @, and for “Points To” add the IP address to your Azure hosted site.

Choose Save

Add DNS Servers to NIC.io.

The final step is to configure NIC.io so that it knows to use the GoDaddy domain servers you just configured. Log in to the admin panel for NIC.io and choose to Manage the DNS Settings. In the “DNS Servers or Mail & Web Forwarding Details”, for the primary server enter the first DNS server you copied from the previous steps and in the Secondary Server enter the second. If you only have one DNS server, I think that is probably ok. At the bottom choose “Modify Domain”.

Give it an hour or two

It usually takes an hour or two for these configurations to fully get updated. However, after that you should be all set to go.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

• Himanshu Singh (@himanshuks) reported Riak is now available on Windows Azure on 10/9/2012:

As announced on the Basho blog today, Riak is now available on Windows Azure as a fully supported and tested NoSQL database option. Riak brings a master-less, distributed database option to Windows Azure, allowing unlimited scale, built-in replication and fast querying options. Please visit these links for more information, extensive documentation and packaging tools.

Over the coming weeks Basho will work with the Microsoft team to add tools that make creation and benchmarking of large clusters simple—Basho is currently in development to automate the creation of Riak clusters for benchmarks.

We are excited to have this new offering on Windows Azure, so stay tuned for updates.

Gregory Leake posted Announcing StockTrader 6 Reference Application: Cross-Device Mobile Applications with Windows Azure Cloud Services on 10/9/2012:

Today I am happy to announce the availability of StockTrader 6, a new end-to-end reference application for Windows Azure and Windows Azure SQL Database. The sample application with full source code and Visual Studio solutions can be downloaded and installed from MSDN here.

If you want to check the application out before downloading it yourself, you can:

a) Browse a live deployment of the sample application on Windows Azure via HTML5/MVC here.

b) Install and run the working mobile applications:

- Install the iOS application from the Apple App Store.

- Install the Android application from the Google Play Store.

- The Windows 8 and Windows Phone clients are included in the download.

Sharing Client Code across Device Types

One of the cool things about the sample is that each mobile client has a native user-interface for each device type, but via Xamarin mono roughly 60% of the client code (including REST service calls, data communication, security, etc.) is shared across all device types and written entirely in C#.

Sharing a Single Azure Cloud Service Backend across Device Types

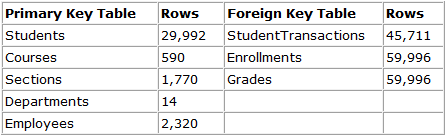

Also, all clients across all devices work against the single, common Windows Azure Cloud Service backend. The backend is a Windows Azure RESTful service that scales out across as many middle-tier compute instances as you want. The data tier has also been specifically designed to optionally take advantage of Windows Azure SQL Database Federation for data tier scale out.

More Details

The focus of the sample is thus two-fold: