Windows Azure and Cloud Computing Posts for 9/4/2012+

A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles.

- Windows Azure Blob, Drive, Table, Queue, Hadoop and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Access Control, Caching, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue, Hadoop and Media Services

My (@rogerjenn) Analyze Big Data with Apache Hadoop on Windows Azure Preview Service Update 3 article of 9/5/2012 is available from the ACloudyPlace blog. It begins:

Big data is receiving more than its share of coverage by industry pundits these days, while startups offering custom Hadoop and related open-source distributions are garnering hundreds of millions of venture capital dollars. The term Hadoop usually refers to the combination of the open-source, Java-based Hadoop Common project and its related MapReduce and Hadoop Distributed File System (HDFS) subprojects managed by the Apache Software Foundation (ASF). Amazon Web Services announced the availability of an Elastic MapReduce beta version for EC2 and S3 on April 2, 2009. Google obtained US Patent 7,650,331 entitled “System and method for efficient large-scale data processing,” which covers MapReduce, on January 19, 2010 and subsequently licensed the patent to ASF.

Microsoft put its oar in the big-data waters when Ted Kummert, Corporate VP of Microsoft’s Business Platform Division, announced a partnership with Hortonworks to deliver Apache Hadoop distributions for Windows Azure and Windows Server at the Professional Association for SQL Server (PASS) Summit 2011 on October 12, 2011. (Hortonworks is a Yahoo! spinoff and one of the “big two” in Hadoop distribution, tools and training; Cloudera is today’s undisputed leader in that market segment.) Despite an estimated 58% compounded annual growth rate (CAGR) for commercial Hadoop services, reaching US$2.2 billion in 2018, Gartner placed MapReduce well into their “Trough of Disillusionment” period in a July 2012 Hype Cycle for Big Data chart (see Figure 1).

Figure 1. Gartner’s Hype Cycle for Big Data chart

The Hype Cycle for Big Data chart places MapReduce and Alternatives in the Trough of Disillusionment with two to five years to reach the Plateau of Productivity despite rosy estimates for a 58% CAGR and US$2.2 billion of Hadoop service income by 2018. GigaOm’s Sector RoadMap: Hadoop platforms 2012 research report describes a lively Hadoop platform market. I believe Gartner is overly pessimistic about Hadoop’s current and short-term future prospects.

The SQL Server Team released an Apache Hadoop for Windows Azure (AH4WA) CTP to a limited number of prospective customers on December 14, 2011. I signed up shortly after the Web release but wasn’t admitted to the CTP until April 1, 2012; my first blog post about the CTP was Introducing Apache Hadoop Services for Windows Azure of April 2. AH4WA Service Update 2 of June 29, 2012 added new features, updated versions (see Table 1) and increased cluster capacity by 2.5 times, which broke the logjam of users waiting to be onboarded to the CTP. Service Update 3 of August 21, 2012 added REST APIs for Hadoop job submission, progress inquiry and killing jobs, as well as a C# SDK v1.0, PowerShell cmdlets, and direct browser access to a cluster.

Table 1. The components and versions of the SQL Server team’s Apache Hadoop on Windows Azure CTP service updates of June 29, 2012 (SU2) and August 21, 2012 (SU3). Component descriptions are from the Apache Software Foundation, except CMU Pegasus.

| Component | Description | SU2 Version | SU3 Version |
|---|---|---|---|
| Hadoop Common | The common utilities that support other Hadoop subprojects | 0.20.203.1 | 1.0.1 |
| Hadoop MapReduce | A software framework for distributed processing of large data sets on compute clusters | 0.20.203.1 | 1.0.1 |
| HDFS | Hadoop Distributed File System, the primary storage system used by Hadoop applications | 0.20.203.1 | 1.0.1 |
| Hive | A data warehouse infrastructure that provides data summarization and ad hoc querying | 0.7.1 | 0.8.1 |
| Pig | A high-level data-flow language and execution framework for parallel computation | 0.8.1 | 0.9.3 |
| Mahout | A scalable machine learning and data mining library | 0.5 | 0.5 |
| Sqoop | A tool designed for efficiently transferring bulk data between Apache Hadoop and structured datastores, such as relational databases | 1.3.1 | 1.4.2 |
| CMU Pegasus | A graph mining package to compute degree distribution of a sample graph* | 2 | 2 |

*Pegasus is an open-source graph mining package developed by people from the School of Computer Science (SCS), Carnegie Mellon University (CMU).

Note: The Yahoo! Apache Hadoop on Azure CTP group provides technical support for AH4WA, not the usual social.msdn.microsoft.com or social.technet.microsoft.com forums for Windows Azure. …

Read more. See Links to My Cloud Computing Articles at Red Gate Software’s ACloudyPlace Blog for a complete list of my articles for ACloudyPlace.

Cory Fowler (@SyntaxC4) and Brady Gaster (@BradyGaster) produced a 00:27:52 David Pallmann Demonstrates azureQuery video for Channel9 on 9/4/2012:

Join your guides Brady Gaster and Cory Fowler as they talk to the product teams in Redmond as well as the web community.

In this episode, Windows Azure MVP David Pallmann introduces his latest creation, azureQuery. Inspired by the fluent interface jQuery provides web developers who use JavaScript to enhance their web applications, azureQuery offers a fluent interface to JavaScript developers who want to communicate with Windows Azure. The script library adds support for Windows Azure Blob Storage access via JavaScript, so web developers, and Windows 8 developers, can access their Windows Azure blob data directly from JavaScript in an HTML application. If you're a client-side developer and you want to add Windows Azure support directly into your applications, you've got to learn about azureQuery and how it can augment your development experience.

Aspera, Inc. reported Aspera On Demand for Windows Azure Available as a Public Beta in a 9/4/2012 press release from Business Wire:

Aspera, Inc., creators of next-generation software technologies that move the world’s data at maximum speed, today announced the opening of the Aspera On Demand Platform as a Service (PaaS) for Microsoft Corp.’s Windows Azure, in beta. The interoperability of Aspera’s fasp transport software with Windows Azure further unlocks the vast capabilities of the Microsoft public cloud, helping users experience the benefits of seamless, high-speed and secure large data ingest and access for Windows Azure local or object storage.

Windows Azure’s ready-to-use Media Services allows customers to easily create complex media workflows built on Windows Azure Media Services and third-party technologies, and run them in the cloud for maximum flexibility, scalability and reliability. By integrating high-speed data transfer capabilities based on patented fasp™ technology, Aspera On Demand for Windows Azure provides content providers and media partners with nearly unlimited scale-out transfer and storage capacity, allowing them to cost-effectively process and store huge volumes of digital media and make it available in the format that customers want, when they want it.

“Windows Azure Media Services gives customers ready-to-use services in the cloud for their digital content processing and distribution,” said Sudheer Sirivara, director, Windows Azure Media Services, Microsoft. “With Aspera’s on-demand transfer software and Windows Azure, customers can implement secure global workflows at scale, moving the largest files of any type at fast speeds, with confidence.”

Existing applications that already take advantage of Windows Azure can use Aspera and Windows Azure as a high-speed transport and storage platform. In addition, the latest Aspera Web applications--such as Aspera Shares, an easy-to-use web application for content ingest and sharing, and Aspera faspex™, the flagship global person-to-person file exchange and collaboration software--offer full browsing and transfer capabilities with Windows Azure local and BLOB storage even when running outside of Windows Azure.

“The Aspera On Demand platform is at the core of the next wave of cloud enablement for data-intensive workflows. By allowing big data to be accessed and moved fast and securely to, from, and across remote infrastructures, media and entertainment companies can now actually leverage the cloud for their file-based workflows,” said Michelle Munson, Aspera president and co-founder. “Aspera and Windows Azure deliver on the cloud’s promise for media companies, allowing them to reap the benefits in terms of instant scale-up, flexibility and low cost, while also focusing on their core business instead of building IT infrastructure.”

For more information on how Aspera delivers on the promise of the cloud, or to qualify for the Aspera On Demand Azure Beta, contact Aspera at ondemand@asperasoft.com or visit http://cloud.asperasoft.com

About Aspera

Aspera is the creator of next-generation transport technologies that move the world’s data at maximum speed regardless of file size, transfer distance and network conditions. Based on its patented fasp™ protocol, Aspera software fully utilizes existing infrastructures to deliver the fastest, most predictable file-transfer experience. Aspera’s core technology delivers unprecedented control over bandwidth, complete security and uncompromising reliability. More than 1,700 organizations across a variety of industries on six continents rely on Aspera software for the business-critical transport of their digital assets.

Please visit www.asperasoft.com and follow us on Twitter @asperasoft for more information.

Tim Anglade (@timanglade) interviewed Mike Miller, Cloudant’s Chief Scientist, on 9/26/2010 and posted the 00:50:40 Understanding MapReduce video to his NoSQLTapes.com blog on 9/4/2012:

Volume 8: Understanding MapReduce — with Mike Miller

Recorded on September 26, 2010 in Seattle, WA.

The Chief Scientist at Cloudant explains what MapReduce is, how you can leverage it and more. With a slight emphasis on CouchDB and BigCouch use-cases.

The MapReduce paper is available at Google Labs. Mike was also kind enough to write a companion piece with more details & examples.

This video is available for download on vimeo (h.264, 720p, iPad & iPhone compatible)

<Return to section navigation list>

Windows Azure SQL Database, Federations and Reporting, Mobile Services

Sandrino DiMattia explained how to Backup and restore your SQL Azure database using PowerShell in a 9/5/2012 post:

Today there are a few good options to back up from SQL Azure, restore to SQL Azure or even migrate to SQL Azure:

- Announcing SQL Azure Import/Export Service Now in Production (with client)

- Enzo Backup for SQL Azure

- Red-Gate SQL Azure Backup

Now when I want to try something very quickly or with small databases I often use SQL Management Studio 2012 to import and export databases. Although this might not work with those huge 100 GB databases, it’s still a nice solution. If you right-click a database you can choose to export it as a Data-tier application (which creates a bacpac file, often referred to as an ‘MSI’ containing the logical contents of your database).

The same goes if you right click your server, you can choose to import a Data-tier application which will create a new database on your server.

Backup and restore with PowerShell

The wizards in SQL Management Studio 2012 are very useful, but there will be times when you’ll need to do this on a regular basis. Since the export and import wizards of SQL Management Studio are referencing .NET assemblies, we can re-use these assemblies in a PowerShell script to automate the export and import. Here are the scripts:

Backup-SQLAzureDB.ps1

This is a very simple script allowing you to specify the connection string of your SQL Azure server, the database you wish to export and the output filename for the bacpac file. Note that this assumes that you have installed SQL Server Management Studio 2012 under: C:\Program Files (x86)\Microsoft SQL Server (if this isn’t the case you can simply specify the optional -SqlInstallationFolder parameter with the correct path).

Syntax:

.\Backup-SQLAzureDB.ps1 -ConnectionString "Server=tcp:….database.windows.net,1433;Database=MyDb;User ID=something@server;Password=pwd;Trusted_Connection=False;Encrypt=True;Connection Timeout=30;" -DatabaseName "MyDB" -OutputFile "C:\backup.bacpac"

At the end of the backup the script will show how long it took.

Restore-SQLAzureDB.ps1

This script is very similar to the previous script. But instead of calling the BackupBakpac method this script calls the ImportBakpac method allowing you to use a local *.bacpac file to create a new database.

The syntax is almost the same:

.\Restore-SQLAzureDB.ps1 -ConnectionString "Server=tcp:someServer.database.windows.net,1433;User ID=someUser@someServer;Password=MyPwd;Trusted_Connection=False;Encrypt=True;Connection Timeout=30;" -DatabaseName "SomeRestoredDb" -InputFile "C:\BackupFile.bacpac"

Both scripts are available on GitHub.

Todd Brix (@toddbrix) reported Windows Phone 8 SDK Preview opens for applications Sept. 12 in a 9/5/2012 post to the Windows Phone Developer blogs:

In June we provided an early look at some developer features of Windows Phone 8, promising to share the Software Development Kit (SDK) in late summer. Select partners and developers have already seen early builds of the new SDK, helping to test the new toolset and get started on Windows Phone 8 apps.

Today I’m happy to announce that the time has come to make the near-final kit available to more developers through the Windows Phone 8 SDK Preview Program. Next Wednesday I’ll share detailed instructions on how current Windows Phone developers with published apps can apply. But I do want to set your expectations that program access will be limited.

The full Windows Phone 8 SDK will be made publicly available later this year when we unveil Windows Phone 8. Until then, we believe this program offers more published developers a way to explore the SDK and get started on the next wave of amazing Windows Phone apps.

Look for more details Wednesday, September 12.

Finally, if you watched Nokia unveil its new Windows Phone 8 handsets in New York City today—the Lumia 820 and 920—you probably saw the on-stage demo of some new Windows Phone 8 camera APIs and a feature we call Lenses. Watch for additional info about how you can create your own Lenses in the coming weeks.

Hopefully, Windows Azure Mobile Services will add Windows Phone 8 to its list of supported platforms when the Windows Phone team releases the SDK preview.

According to Mary Jo Foley (@maryjofoley) in her Windows Phone 8 software development kit now due 'later this year' post of 9/5/2012:

Microsoft's promised delivery of its Windows Phone 8 software development kit (SDK) some time "this summer" has now shifted to "later this year."

In a September 5 post on the "Windows Phone Developer" blog, Microsoft officials acknowledged the new, later timing. The new estimated delivery time for the full SDK is at the same time as when Microsoft launches Windows Phone 8. The Windows Phone 8 launch date is October 29, according to my sources. (Microsoft still hasn't officially confirmed the October 29 date, but I'm feeling pretty darn confident of it, for what it's worth.)

Next week, on September 12, Microsoft will "share detailed instructions on how current Windows Phone developers with published apps can apply" to obtain the near-final Windows Phone 8 SDK, seemingly in the next couple of weeks. However, the number of developers who will get the SDK will be "limited," officials warned. The full publicly-released Windows Phone 8 SDK isn't due until the Windows Phone 8 launch. …

Read more.

PRNewswire reported GreenSQL First to Provide Full Database Security on the Cloud for Microsoft® SQL Azure™ in a 9/5/2012 press release:

GreenSQL, http://www.greensql.com, the database security company, now offers complete database security for SQL Azure, satisfying both security and performance requirements of cloud-based databases.

"Security is the biggest concern of SMBs and enterprises when they contemplate the move to the cloud," says Amir Sadeh, CEO, GreenSQL. "With GreenSQL, companies can safely move to the Azure cloud with iron-clad assurances that data will be just as safe as it is on-site."

GreenSQL's software-based solution is installed as a front-end to SQL Azure; it fully camouflages and secures the Azure database, dynamically masks sensitive and confidential data in real-time, and provides monitoring and auditing of data access and administrative activities. Its caching dramatically increases database performance, reducing latency in cloud environments. By using GreenSQL, companies comply with regulations such as HIPAA, PCI, SOX, and Basel II.

"According to generally accepted industry statistics, SQL injection attempts occur more than 70 times per hour," continued Sadeh. "Our new technology ensures that Azure-stored data is just as secure against threats as privately hosted GreenSQL-protected databases."

GreenSQL protects SQL Azure databases in hybrid and fully hosted environments against external and internal threats, with enhanced SQL injection prevention and a database firewall. In a hybrid environment, GreenSQL becomes the secure gateway between on-premise applications and the SQL Azure cloud. On fully hosted applications, GreenSQL's separation-of-duties features ensure that only authorized personnel can access specified parts of the database.

While Microsoft Windows® and SQL Azure provide their own measures to protect multi-tenancy threats, they do not specifically enforce any database security policies. GreenSQL protects both the database and compute clouds, allowing companies with fully hosted applications to install and run GreenSQL on Azure.

"Businesses want an easy, efficient solution, and GreenSQL lets them run IT more securely and affordably," concluded Sadeh.

About GreenSQL

GreenSQL, the Database Security Company, delivers out-of-the-box database security solutions for small and mid-sized organizations. Started as an open source project back in 2006, GreenSQL became the no. 1 database security solution for MySQL with 100,000 users worldwide. In 2009, in response to market needs, GreenSQL LTD developed a commercial version, bringing a fresh approach to protecting databases of small- and medium-sized businesses.

GreenSQL provides database security solutions that are affordable and easy to install and maintain. GreenSQL supports Microsoft Azure, SQL Server (all versions including SQL Server 2012), MySQL and PostgreSQL.

Apparently GreenSQL didn’t receive the memo about the name-change to Windows Azure SQL Database (WASDB).

Isha Suri reported (belatedly) Windows Azure Mobile Services Launched in a 9/4/2012 post to SiliconANGLE’s DevOpsANGLE blog:

Windows Azure, the open and flexible cloud platform that enables you to quickly build, deploy, and manage applications, has recently announced its mobile services, which are meant to help developers build mobile apps more quickly. They also make it easier to connect a scalable cloud backend to client and mobile applications. With the launch of Windows Azure mobile services, developers can develop Windows 8 apps in little time, and soon apps for Windows Phone, iOS, and Android devices.

It’s quite easy to build a simple Windows 8 app that is cloud-enabled using Windows Azure Mobile Services. All you need to do is to sign up for a no-obligation Free Trial, create an app using instructions, connect your mobile service to an existing Windows 8 client app, store data in cloud, and integrate user authentication/authorization and push notifications within your applications. Developers can create and run up to 10 Mobile Services in a free, shared/multi-tenant hosting environment, which can be scaled up later. The compute capacity can be used on a per-hour basis, which is a flexible model, and allows developers to scale up and down resources to match only what they need.

To summarize, Windows Azure mobile services help developers build mobile app experiences faster, and enable even better user experiences by connecting your client apps to the cloud. This indicates a dual advantage, for developers as well as for end-users/clients.

Earlier this month, Microsoft launched Windows Azure Active Directory with three major highlights: developers can connect to a REST-based Web service to create, read, update and delete identity information in the cloud for use right within their applications; the developer preview allows companies to synchronize their on-premises directory information with WAAD and supports certain identity federation scenarios as well; and the developer preview supports integration of WAAD with consumer identity networks like Facebook and Google, making for one less ID necessary to integrate identity information with apps and services.

Filip W (@filip_woj) described Using Azure Mobile Services in your web apps through ASP.NET Web API in a 9/3/2012 post:

Because ZUMO is not just for Windows 8

Azure Mobile Services is the latest cool thing in town, and if you haven’t checked it out already I really recommend you do, e.g. through this nice introduction post by Scott Gu. In short, it allows you to save/retrieve data in and out of dynamic tables (think no-schema) directly from the cloud. This makes it a perfect data storage solution for mobile apps, but why not use it in other scenarios as well?

For now Azure Mobile Services (a.k.a. ZUMO) is being advertised for Windows 8 only (the SDK is targeted for Windows 8 applications), but there is no reason why you couldn’t use it elsewhere.

Let’s do that and use Web API as a proxy.

Azure Mobile Services

As mentioned, currently, the SDK (Microsoft.WindowsAzure.MobileServices) is Windows 8 only, and while it provides a really rich and fluent API, it’s effectively a wrapper around REST exposed by Azure Mobile Services.

Since that’s the case, we could easily replace that with a RESTful wrapper created with ASP.NET Web API and provide access to our Azure Mobile Services from anywhere – e.g. JavaScript in your web application. You might ask, why not call it directly from JS? Well, we can’t really do that due to cross domain issues. Moreover, saving the data is usually the last step, and in the process you might want to do a whole lot of other stuff, apply all kinds of business logic, to which Web API would have access on the server side.

Setup

The setup is really easy – just go to Azure, and sign up for Azure Mobile Services. It has a 3 month trial.

Then in the Azure Management Portal, choose New > Mobile Service > Create.

You could create a new SQL DB for it, or attach an existing one.

Then all that’s needed is to create a table. You just need a name and permissions, because the schema will be dynamic and dependent on what you save in the DB.

Now you are good to go.

The model

For the sake of the example, I will use a simple model. Really what we want to do is just to make sure we can do CRUD operations in ZUMO from the client side (JavaScript), so there is no need to get too fancy.

The model looks like this:

public class Team
{
    public int? id { get; set; }
    public string Name { get; set; }
    public string League { get; set; }
}

We will also see that I don’t need any schema to save/update this type of model in the cloud.

Building the wrapper with Web API

What we really need to do CRUD on this model is a typical Web API REST-oriented controller.

We will expose the following operations:

1. GET /api/teams/ – get all teams

2. GET /api/teams/1 – get team by ID

3. POST /api/teams/ – add a new team

4. PUT /api/teams/ – update a team

5. DELETE /api/teams/1 – delete a team by ID

and then tunnel the request to Azure Mobile Services. Access to Azure Mobile Services tables is normally protected with a key, so we will have the controller manage the key (we don’t want the clients doing that). The key needs to be sent in the headers of every request to Azure Mobile Services (alternatively, we could open up CRUD on the table to anyone; the service allows it, but it is probably not very smart to do).

The skeleton of the controller looks like this:

public class TeamsController : ApiController
{
    HttpClient client;
    string key = "KEY_HERE";

    public TeamsController()
    {
        client = new HttpClient();
        client.DefaultRequestHeaders.Add("X-ZUMO-APPLICATION", key);
        client.DefaultRequestHeaders.Accept.Add(new MediaTypeWithQualityHeaderValue("application/json"));
    }

    public IEnumerable<Team> Get()
    {}

    public Team Get(int id)
    {}

    public void Post(Team team)
    {
        if (ModelState.IsValid)
        {}

        throw new HttpResponseException(HttpStatusCode.BadRequest);
    }

    public void Put(Team team)
    {
        if (ModelState.IsValid)
        {}

        throw new HttpResponseException(HttpStatusCode.BadRequest);
    }

    public void Delete(int id)
    {}
}

We will use the new HttpClient to communicate with the ZUMO RESTful service. We preset the key needed for authenticating us in the X-ZUMO-APPLICATION header key (you get your key from the Azure Management portal, by clicking on the “Manage keys” at the bottom of the page).

Azure Mobile Services exposes data in the following format:

1. GET https://[your_service].azure-mobile.net/tables/[table_name]/ – get all entries

2. GET https://[your_service].azure-mobile.net/tables/[table_name]?$filter – get single entry by ID (using OData).

3. POST https://[your_service].azure-mobile.net/tables/[table_name]/ – add a new entry.

4. PATCH https://[your_service].azure-mobile.net/tables/[table_name]/1 – update an entry

5. DELETE https://[your_service].azure-mobile.net/tables/[table_name]/1 – delete an entry by ID

This is almost the same as what we have in a standard Web API controller, except that in Web API projects you’d update with PUT by default, and here you update with the PATCH HTTP verb.

Data is submitted and returned via JSON.
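The endpoint list above can be sketched as a small request builder. This is only an illustration and not code from the original post: the `ZumoRequests` class and its method names are hypothetical, the service and table names are passed in by the caller, and nothing here sends a request or adds the `X-ZUMO-APPLICATION` header. The by-ID lookup is left out because it is a filtered GET rather than a distinct URL shape.

```csharp
using System.Net.Http;

// Hypothetical helper (not part of the original post): builds, but does not send,
// the request that matches four of the table operations listed above.
public static class ZumoRequests
{
    // Produces e.g. https://testservice.azure-mobile.net/tables/Teams
    public static string TableUrl(string service, string table)
    {
        return "https://" + service + ".azure-mobile.net/tables/" + table;
    }

    // GET on the table URL returns all entries.
    public static HttpRequestMessage GetAll(string service, string table)
    {
        return new HttpRequestMessage(HttpMethod.Get, TableUrl(service, table));
    }

    // POST on the table URL adds a new entry.
    public static HttpRequestMessage Insert(string service, string table)
    {
        return new HttpRequestMessage(HttpMethod.Post, TableUrl(service, table));
    }

    // Updates use PATCH on the entry URL, not the PUT that the Web API controller exposes.
    public static HttpRequestMessage Update(string service, string table, int id)
    {
        return new HttpRequestMessage(new HttpMethod("PATCH"), TableUrl(service, table) + "/" + id);
    }

    // DELETE on the entry URL removes one entry by ID.
    public static HttpRequestMessage Delete(string service, string table, int id)
    {
        return new HttpRequestMessage(HttpMethod.Delete, TableUrl(service, table) + "/" + id);
    }
}
```

For example, `ZumoRequests.Update("testservice", "Teams", 1)` describes a PATCH to `https://testservice.azure-mobile.net/tables/Teams/1`; the controller would still have to attach the JSON body and the application key before sending it.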

Get All

public IEnumerable<Team> Get()
{
    var data = client.GetStringAsync("https://testservice.azure-mobile.net/tables/Teams").Result;
    var teams = JsonConvert.DeserializeObject<IEnumerable<Team>>(data);
    return teams;
}

Note that here I block on the result of the async operation, which is far from ideal, but it’s fine for a simple demo. I then use JSON.NET to deserialize the results to our POCO.

As expected, we get a nice, content-negotiated (through Web API) list of NCAA teams.

Get by ID

public Team Get(int id)
{
    var data = client.GetStringAsync("https://testservice.azure-mobile.net/tables/Teams?$filter=Id eq "+id).Result;
    var team = JsonConvert.DeserializeObject<IEnumerable<Team>>(data);
    return team.FirstOrDefault();
}

We use OData on the REST query to ZUMO to filter by the Id column. Again, we use JSON.NET to deserialize. A small wrinkle is that an OData filter returns an array of objects, but since we filter by ID we expect just one, so we take the first one.

In this case, just as previously, we get a content-negotiated response, this time with the Washington State Cougars, which we requested.
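As a hedged aside, the filter URL can also be built with the expression percent-encoded, so that the spaces in the OData expression do not travel raw in the query string. The `ZumoFilter` helper below is hypothetical and not part of the original post, and the lower-case `id` column name is an assumption taken from the `id` property of the `Team` model.

```csharp
using System;
using System.Globalization;

// Hypothetical helper (not from the original post): builds the by-ID lookup URL.
public static class ZumoFilter
{
    public static string ByIdUrl(string tableUrl, int id)
    {
        // OData expression such as "id eq 5"; the column name "id" is an assumption
        // that mirrors the lower-case id property on the Team model.
        string filter = "id eq " + id.ToString(CultureInfo.InvariantCulture);

        // Percent-encode the expression so its spaces become %20 in the URL.
        return tableUrl + "?$filter=" + Uri.EscapeDataString(filter);
    }
}
```

Calling `ZumoFilter.ByIdUrl("https://testservice.azure-mobile.net/tables/Teams", 5)` yields `https://testservice.azure-mobile.net/tables/Teams?$filter=id%20eq%205`. The response is still an array, so the caller takes the first element as described above.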

Add an item

public void Post(Team team)
{
    if (ModelState.IsValid)
    {
        // Serialize without null values so that no id property is sent for a new item.
        var obj = JsonConvert.SerializeObject(team, new JsonSerializerSettings() { NullValueHandling = NullValueHandling.Ignore });
        var request = new HttpRequestMessage(HttpMethod.Post, "https://testservice.azure-mobile.net/tables/Teams");
        request.Content = new StringContent(obj, Encoding.UTF8, "application/json");

        var data = client.SendAsync(request).Result;
        if (!data.IsSuccessStatusCode)
            throw new HttpResponseException(data.StatusCode);

        return; // success: do not fall through to the BadRequest below
    }

    // Reached only when model validation fails.
    throw new HttpResponseException(HttpStatusCode.BadRequest);
}

When we add an item, we will build up the HttpRequestMessage manually; we serialize the POCO to JSON, omit the null values (this is important – since this is a new item it will not have an ID, and a JSON item we submit to ZUMO cannot have an ID property in it, as it would throw a Bad Request error). Then we send the request, and in case it’s not successful we forward the exception down to the client.
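The effect of omitting null values can be seen in isolation with a small sketch. To keep it dependency-free it uses `System.Text.Json`, which is newer than the post and stands in here for JSON.NET's `NullValueHandling.Ignore`; the `TeamJson` helper is hypothetical, and the `Team` class simply repeats the model shown earlier.

```csharp
using System.Text.Json;
using System.Text.Json.Serialization;

// Same shape as the Team model used throughout the post.
public class Team
{
    public int? id { get; set; }
    public string Name { get; set; }
    public string League { get; set; }
}

// Hypothetical helper (not from the original post).
public static class TeamJson
{
    // Serializes a team for insertion, leaving out any property whose value is null.
    // A new team has id == null, so no "id" property appears in the payload.
    public static string ForInsert(Team team)
    {
        var options = new JsonSerializerOptions
        {
            // System.Text.Json counterpart (an assumption of this sketch) to
            // JSON.NET's NullValueHandling.Ignore used in the post.
            DefaultIgnoreCondition = JsonIgnoreCondition.WhenWritingNull
        };
        return JsonSerializer.Serialize(team, options);
    }
}
```

Serializing `new Team { Name = "Boise State Broncos", League = "Mountain West" }` this way produces a payload with `Name` and `League` only, which is the shape the insert call needs.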

We add an item by making a simple POST request. Because we use Web API for forwarding the calls, we could also make this request in XML. In this case we are adding Boise State Broncos from the Mountain West Conference.

Update an item

public void Put(Team team)
{
    if (ModelState.IsValid)
    {
        // ZUMO updates with PATCH, so the verb is constructed from a string.
        var request = new HttpRequestMessage(new HttpMethod("PATCH"), "https://testservice.azure-mobile.net/tables/Teams/"+team.id);
        var obj = JsonConvert.SerializeObject(team);
        request.Content = new StringContent(obj, Encoding.UTF8, "application/json");

        var data = client.SendAsync(request).Result;
        if (!data.IsSuccessStatusCode)
            throw new HttpResponseException(data.StatusCode);

        return; // success: do not fall through to the BadRequest below
    }

    // Reached only when model validation fails.
    throw new HttpResponseException(HttpStatusCode.BadRequest);
}

This is very similar to POST, except here we use a new HTTP verb, PATCH. Notice that HttpMethod (a class, not an enum) offers no predefined PATCH member here, so we construct the verb from a string (which is perfectly fine). We then send the object down to ZUMO.

Updating is a simple PUT. Since Boise St is moving to Big East next year, let’s update their league.

And now Boise is in the Big East.

Delete by id

public void Delete(int id)
{
    var request = new HttpRequestMessage(HttpMethod.Delete, "https://testservice.azure-mobile.net/tables/Teams/" + id);

    var data = client.SendAsync(request).Result;
    if (!data.IsSuccessStatusCode)
        throw new HttpResponseException(data.StatusCode);
}

Delete is very simple, just make a DELETE request to the URL with an appropriate ID.

We will delete Kentucky Wildcats, because, well, I don’t like them.

And they are gone.

Summary

We could also view all the data in the Azure management portal – and it’s all as expected (well, not all as expected, since Missouri is in the SEC this year, but I will not make a new screenshot).

By the way, you might think it’s insane to forward the call via Web API like this – and in the process deserialize from JSON (in Web API) and then serialize again to send down to ZUMO. Yeah, it might sound a bit awkward if you do it straight up like this, but please remember this is just a simple demo, intended just to show how you could forward the calls back and forth.

In a normal application, in between, you’d probably want to do some stuff with the Models, apply business logic, call different services, process the data and so on – before saving that in the data storage (Azure Mobile Services). Moreover, if you really wish just the basic call forwarding, you could change these Web API signatures to work with JSON object directly, rather than CLR types.

Ultimately, however, it is really easy and convenient to use Azure Mobile Services as a data storage for your app and Web API as a proxy in between, so I recommend you check it out.

<Return to section navigation list>

Marketplace DataMarket, Cloud Numerics, Big Data and OData

Joseph Fultz described using MongoDB with Windows Azure in his Humongous Windows Azure article for MSDN Magazine’s September 2012 issue. From the introduction:

Personally, I love the way things cycle. It always seems to me that each evolution of an object or a mechanism expresses a duality of purpose that both advances and restates a position of the past. Technology is a great place to see this, because the pace at which changes have been taking place makes it easy to see a lot of evolutions over short periods of time.

For me, the NoSQL movement is just such an evolution. At first we had documents, and we kept them in files and in file cabinets and, eventually, in file shares. It was a natural state of affairs. The problem with nature is that at scale we can’t really wrap our brains around it. So we rationalized the content and opted for rationalized and normalized data models that would help us predictably consume space, store data, index the data and be able to find it. The problem with rational models is that they’re not natural.

Enter NoSQL, a seeming mix of the natural and relational models. NoSQL is a database management system optimized for storing and retrieving large quantities of data. It’s a way for us to keep document-style data and still take advantage of some features found in everyday relational database management systems (RDBMSes).

One of the major tools of NoSQL is MongoDB from 10gen Inc., a document-oriented, open source NoSQL database system, and this month I’m going to focus on some of the design and implementation aspects of using MongoDB in a Windows Azure environment. I’m going to assume you know something about NoSQL and MongoDB. If not, you might want to take a look at Julie Lerman’s November 2011 Data Points column, “What the Heck Are Document Databases?” (msdn.microsoft.com/magazine/hh547103), and Ted Neward’s May 2010 The Working Programmer column, “Going NoSQL with MongoDB” (msdn.microsoft.com/magazine/ee310029).

First Things First

If you’re thinking of trying out MongoDB or considering it as an alternative to Windows Azure SQL Database or Windows Azure Tables, you need to be aware of some issues on the design and planning side, some related to infrastructure and some to development.

Deployment Architecture

Generally, the data back end needs to be available and durable. To do this with MongoDB, you use a replication set. Replication sets provide both failover and replication, using a little bit of artificial intelligence (AI) to resolve any tie in electing the primary node of the set. What this means for your Windows Azure roles is that you’ll need three instances to set up a minimal replication set, plus a storage location you can map to a drive for each of those roles. Be aware that due to differences in virtual machines (VMs), you’ll likely want to have at least midsize VMs for any significant deployment. Otherwise, the memory or CPU could quickly become a bottleneck.
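The three-instance minimum follows from majority voting: a primary can only be elected while a strict majority of the voting members is reachable. The sketch below works through that arithmetic in Python, assuming every member of the set has a vote (arbiters and non-voting members are ignored); the function names are illustrative only.

```python
def votes_needed(members: int) -> int:
    """Strict majority of voting members required to elect a primary."""
    return members // 2 + 1


def failures_tolerated(members: int) -> int:
    """Members that can be lost while a majority can still be formed."""
    return members - votes_needed(members)


for n in (1, 2, 3, 5):
    print(f"{n} members: majority={votes_needed(n)}, "
          f"tolerated failures={failures_tolerated(n)}")
```

A two-member set tolerates no failures at all, which is why three instances is the smallest configuration that survives the loss of one node.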

Figure 1 depicts a typical architecture for deploying a minimal MongoDB ReplicaSet that’s not exposed to the public. You could convert it to expose the data store externally, but it’s better to do that via a service layer. One of the problems that MongoDB can help address via its built-in features is designing and deploying a distributed data architecture. MongoDB has a full feature set to support sharding; combine that feature with ReplicaSets and Windows Azure Compute and you have a data store that’s highly scalable, distributed and reliable. To help get you started, 10gen provides a sample solution that sets up a minimal ReplicaSet. You’ll find the information at bit.ly/NZROWJ and you can grab the files from GitHub at bit.ly/L6cqMF.


Figure 1 Windows Azure MongoDB Deployment

…

Read more.

<Return to section navigation list>

Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

No significant articles today

<Return to section navigation list>

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

Paul Stubbs (@PaulStubbs) described Building a SharePoint 2010 Farm on Windows Azure with PowerShell in a 9/3/2012 post:

With the new Azure Virtual Machines it is now possible to run SharePoint 2010 and 2013 workloads. In my TechEd talks you can learn about the various options to run SharePoint on Azure.

- http://channel9.msdn.com/Events/TechEd/NorthAmerica/2012/AZR327

- http://channel9.msdn.com/Events/TechEd/Europe/2012/OSP334

I won’t rehash those talks again here. What I do want to share is some of the PowerShell code I used to build the farms. First, a caveat: these are just rough scripts that I used to build the demo, and they have lots of opportunities for improvement. The scripts are good at demonstrating the various steps that are involved with building the Farm. Here is a diagram of the farm that we will build.

To get started read Automating Windows Azure Virtual Machines with PowerShell. Also if you are new to Azure Virtual Machines here is a crash course on the features that we will cover in the PowerShell scripts: Learn about Windows Azure Virtual Machines. With that out of the way let’s get started.

Create VNet

The first step is to create the virtual network. In the past the Cloud Service (previously called hosted services) was the container for multiple VMs to talk to each other. Now the Virtual Network (VNet) allows multiple Cloud Services to talk to each other. So in the case of our Farm we want the Active Directory/Domain Controller (AD/DC) to be in one Cloud Service and talk to the rest of our farm in another Cloud Service. By using 2 Cloud Services, you can start the AD/DC first and have the other Cloud Services join the domain, since we do not have static IPs. Here is the script to create the VNet. …

CreateVnet.ps1 script (49 lines) elided for brevity.

Create AD/DC

Once the VNet is in place you can create the Active Directory/Domain Controller. All this script does is create a VM in a Cloud Service. Once the VM is started you will need to manually RDP connect to the machine and run DCPROMO. Once DCPROMO is finished run ipconfig to determine the IP of the machine, which will be used in the Farm setup script. …

CreateDC.ps1 script (51 lines) elided for brevity.

Create Farm

The last step is to create the SharePoint Farm. …

CreateSPFarm.ps1 script (125 lines) elided for brevity.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Himanshu Singh (@himanshuks) posted Real World Windows Azure: Australian Sports Bookmaker Bets on Windows Azure for its Racing Microsite on 9/5/2012:

As part of the Real World Windows Azure series, I connected with Shane Paterson, Network Operations Manager at Centrebet to learn more about how the company used Windows Azure to build a dedicated Spring Racing Carnival microsite to provide its customers with race and betting information during the Carnival’s 2011 racing season. Read Centrebet’s success story here. Read on to find out what he had to say.

Himanshu Kumar Singh: Tell me about Centrebet.

Shane Paterson: Founded in Alice Springs, Northern Territory in 1992, Centrebet is one of the world’s largest online betting and gaming operators. In 1996, we became the first licensed bookmaker in the southern hemisphere to offer online sports betting. With annual turnover of more than A$1 billion a year, we offer up to 6,000 sports and horseracing betting markets worldwide each week, as well as an expanding list of gaming products.

HKS: What is your busiest time of year?

SP: Our busiest time of year is during the Spring Racing Carnival, a month-long series of high-stakes horseracing events held in Melbourne, Victoria. The Melbourne Cup, in particular, sees thousands of once-a-year punters bet on the big race, held on the first Tuesday in November each year. We take around four times as many bets during the Melbourne Cup as we take on an average day. The traffic across our systems on that one day of the year is phenomenal.

HKS: Why did you decide to create a dedicated Spring Carnival microsite?

SP: Our online and mobile customers demand the most up-to-date information about the races and results, especially which horses are attracting the big money. If punters can see where the big bets are being laid, it greatly assists them in their own wagering. We wanted to build a dedicated microsite to provide vital and timely Spring Carnival race and betting information to our customers.

HKS: What challenges did you face as you planned this new microsite?

SP: The challenge was to get a site up and running for online and mobile punters in a short timeframe, without causing any disruption to our core betting operations. We would usually invest heavily in hardware to ensure we had the right infrastructure to remain operational during major sports events. This time we needed a cost-effective solution that wouldn’t be a drain on our existing bandwidth. And given the high number of users, the site also needed to handle large volumes of data quickly and distribute that information efficiently and seamlessly.

HKS: What options did you consider for developing your site?

SP: In canvassing our options, we initially considered building an internal cloud hosting solution, which would have cost us A$50,000 in hardware and been time-consuming to implement. On advice from IT consultancy and Microsoft Gold Certified Partner Breeze, we selected Windows Azure.

HKS: How did the development process go with Windows Azure?

SP: Together with Breeze, we built the new website on Windows Azure in just four months, in time for the start of Melbourne’s 2011 Spring Carnival. Windows Azure gave us the flexibility, scalability and performance reliability we needed to operate the site in the cloud without affecting our core infrastructure. It was also fast and incredibly easy to deploy.

HKS: What new features and services does the new microsite provide to your customers?

SP: By running the site on Windows Azure, we were able to put a lot of information at the fingertips of punters without putting any strain on the main processing engines that operate our core revenue-generating systems. The new site enabled our customers to access comprehensive betting information from the first major race meeting of the spring season in early October. This information included up-to-date prices; tips and big bets being placed; the form guide; and information about which horses to follow and which were leading the market.

Additionally, using BizTalk Server 2010 and SQL Server 2008 R2 as the website’s back end enabled us to populate our database with many different high-volume feeds – for example, the five biggest bets in the past hour – from existing internal systems and public Internet feeds.

HKS: Did the site result in an increase in business for Centrebet?

SP: Our Spring Carnival microsite running on Windows Azure seamlessly provided timely race and betting information to our global customers during our busiest time of year. On Melbourne Cup day, we took more than 400,000 bets with the help of the new site.

HKS: What about the technology benefits you’ve seen with Windows Azure?

SP: By using Windows Azure to run the Spring Carnival microsite, we ensured our network performed consistently well, because data was distributed over a large number of machines rather than a single database. And we always had access to sufficient bandwidth and processing power to ensure the site continued to run smoothly when the numbers of users and transactions spiked dramatically.

Windows Azure was also quick and easy to deploy because we didn’t need to invest in additional hardware, eliminating the time our IT staff would have taken to install, manage and maintain the system. And we saved a considerable amount of money in overhead and staff resources; the hardware we were considering purchasing would have cost around A$50,000; we no longer need to spend this.

And rather than having to continually invest in local infrastructure and bandwidth, we can use Windows Azure to manage and distribute scaled volumes of information cost-efficiently, when and in whichever way we choose. With Windows Azure, we can scale up at 10 minutes’ notice, but also scale back during quiet times.

The beauty is you pay for what you use. Our investment in new hardware and management costs is minimal. IT staff spend less time managing infrastructure. When demand is high, we can bring new equipment into play in hours rather than months, which saves time and money.

HKS: What business benefits have you seen with Windows Azure?

SP: Launching a dedicated Spring Racing Carnival microsite in time for the 2011 season gave our customers continuous access to information they needed to place bets during peak times, which was good for punters and good for our business. Windows Azure made that happen.

The success of the Spring Carnival microsite has laid the groundwork for us to build microsites for other major sporting events, such as the Australian Football League and National Rugby League grand finals and the Autumn Racing Carnival. In time, we may look at moving some of our primary business activities to Windows Azure.

Read how others are using Windows Azure.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

Beth Massi (@bethmassi) posted LightSwitch Community & Content Rollup– August 2012 on 9/5/2012:

Last year I started posting a rollup of interesting community happenings, content, samples and extensions popping up around Visual Studio LightSwitch. If you missed those rollups you can check them all out here: LightSwitch Community & Content Rollups.

Visual Studio 2012 Released!

The big news in August was we finally released Visual Studio 2012 to the public on August 15! LightSwitch includes a variety of improvements in Visual Studio 2012, such as a new theme, the ability to access data from any OData source as well as create OData services, Active Directory integration, new business types, and a whole lot more. We are also working on support for HTML clients, which will be available as an add-on to VS2012. For more details on all the major new features in Visual Studio see Jason’s blog: Visual Studio 2012 and .NET Framework 4.5 released to the web!

Now that LightSwitch is a core part of the Visual Studio 2012 product line, Visual Studio now provides a comprehensive solution for developers of all skill levels to build line-of-business applications and data services quickly and easily for the desktop and cloud. For more information on Visual Studio 2012, head to http://microsoft.com/vstudio

LightSwitch Developer Center is now part of the Visual Studio Developer Center

With this product integration, we have also aligned the LightSwitch and Visual Studio web sites including a new integrated Developer Center and User Voice sites. Your URLs remain the same and will redirect to the new locations. The Developer Center is still your one-stop-shop for learning all about Visual Studio and building business applications with LightSwitch.

LightSwitch on the Visual Studio Developer Center: http://msdn.com/lightswitch

New “How Do I” Videos on LightSwitch Development in Visual Studio 2012

There are a ton of goodies that we’ve been releasing on the Developer Center, including a new series of “How Do I” videos on how to use the new LightSwitch features in Visual Studio 2012. Last week we released three new videos that you can check out on the “How Do I” video page.

- How Do I: Get Started with the LightSwitch Starter Kits?

- How Do I: Consume OData Services in a LightSwitch App?

- How Do I: Create OData Services with LightSwitch?

Updated LightSwitch Starter Kits for Visual Studio 2012

Starter Kits are project templates that help get you started building specific LightSwitch applications. You can download them right from within Visual Studio, and once installed they show up in your “New Project” dialog. Using the Starter Kits can help you learn about LightSwitch as well as get you started off right with a basic data model, queries and screens that you can customize further. (See this video on how to install them.) There are six Starter Kits available in both Visual Basic and C#.

See all LightSwitch Starter Kits here.

Beginning LightSwitch in Visual Studio 2012 Article Series

Back in December I created a sample and series of articles that took a beginner through developing a simple business application. The articles are aimed at the beginner programmer but should also be interesting to anyone just getting started with LightSwitch. In August I updated this series for Visual Studio 2012:

Are you completely new to LightSwitch and software development? Get started building an Address Book application with this Beginning LightSwitch Series.

- Part 1: What’s in a Table? Describing Your Data

- Part 2: Feel the Love. Defining Data Relationships

- Part 3: Screen Templates, Which One Do I Choose?

- Part 4: Too much information! Sorting and Filtering Data with Queries

- Part 5: May I? Controlling Access with User Permissions

- Part 6: Go beyond the box. Customizing LightSwitch Apps with Extensions

- Download the Completed Sample App

Building the LightSwitch Course Manager Sample

Andy also updated his super popular LightSwitch Team blog series on building the Course Manager sample using Visual Studio 2012. This is a great intermediate set of articles that walk through many aspects of building a professional quality business application using LightSwitch in Visual Studio 2012.

LightSwitch Course Manager End-to-End Application (Visual Studio 2012)

- Course Manager VS 2012 Sample Part 1 – Introduction

- Course Manager VS 2012 Sample Part 2 – Setting up Data

- Course Manager VS 2012 Sample Part 3 – User Permissions & Admin Screens

- Course Manager VS 2012 Sample Part 4 – Implementing the Workflow

- Course Manager VS 2012 Sample Part 5 – Detail Screens

- Course Manager VS 2012 Sample Part 6 – Home Screen

More Notable Content this Month

Extensions (see over 100 of them here!):

- With the release of Visual Studio 2012, ComponentOne has updated and re-released their Studio for LightSwitch.

Samples (see all 89 of them here):

- LightSwitch Course Manager End-to-End Application (Visual Studio 2012)

- Beginning LightSwitch in VS 2012 - Address Book Sample

- LightSwitch HTML Preview Sample App - Doctors Office

Team Articles:

- Beginning LightSwitch Series (6-part series & sample)

- Getting Started with LightSwitch in Visual Studio 2012

- Library: Reporting and printing in LightSwitch

- LightSwitch Course Manager End-to-End Application (6-part article series & sample)

- Publishing LightSwitch Apps to Azure with Visual Studio 2012–UPDATED

- Trip Report: St. Louis Day of .NET

- Visual Studio 2012 Released to Web!

Community Articles:

- A Visual Studio LightSwitch Picture File Manager

- A practical approach for intra-table auditing

- August 2012 Southern California LightSwitch User Group Meeting (With Beth Massi)

- Backup a LightSwitch project

- Calling in LightSwitch the SaveChanges method in an async way with an ExecuteCompleted event

- ComponentOne LightSwitch Studio

- Retrieve a record explicitly asynchronous with an ExecuteCompleted event

- Saving Occurrences of Recurring Appointments in Scheduler for LightSwitch

LightSwitch Team Community Sites

Become a fan of Visual Studio LightSwitch on Facebook. Have fun and interact with us on our wall. Check out the cool stories and resources. Here are some other places you can find the LightSwitch team:

LightSwitch MSDN Forums

LightSwitch Developer Center

LightSwitch Team Blog

LightSwitch on Twitter (@VSLightSwitch, #VS2012 #LightSwitch)

Enjoy!

Paul Van Bladel (@paulbladel) described Attaching a debugger to the silverlight client of a deployed LightSwitch app in a 9/4/2012 post:

Introduction

I recently encountered a problem which only pops up in the deployed application (in production, so to speak) and which is not reproducible when running the LightSwitch application in debug. Furthermore, the problem happened client side (in the Silverlight code). As a result, activating tracing server side on the IIS server didn’t make any sense.

I tried, without any result, to attach the debugger to the browser connected to the LightSwitch application. I always got “The breakpoint will not currently be hit”:

The magic solution

The magic solution is to install the Silverlight debug helper, which installs as a Visual Studio Extension and runs in the background until a Silverlight debug session is started. It can be downloaded here: http://visualstudiogallery.msdn.microsoft.com/b5f1634e-1608-4376-8f00-caf93f5f58c8

How to attach a debugger to the browser?

Just for completeness: select from the menu in Visual Studio:

Secondly, make sure to select the correct browser instance.

You will see that the red breakpoint bullets are now nicely solid, and they will be hit:

Beth Massi (@bethmassi) announced LightSwitch in Visual Studio 2012 - New “How Do I” Videos Released! in a 9/4/2012 post:

Check it out! We’ve got three brand new LightSwitch “How Do I” videos available on the LightSwitch Developer Center!

How Do I: Get Started with the LightSwitch Starter Kits?

In this video, see how you can get a jump start building applications using the LightSwitch Starter Kits. Starter Kits are project templates that help get you started building specific LightSwitch applications. You can download them right from within Visual Studio, and once installed they show up in your “New Project” dialog. Using the Starter Kits can help you learn about LightSwitch as well as get you started off right with a basic data model, queries and screens that you can customize further.

How Do I: Consume OData Services in a LightSwitch App?

One of the new features added to LightSwitch in Visual Studio 2012 is the ability to consume OData services. OData stands for "Open Data Protocol" and is a standard protocol for accessing data over the web. Many businesses use OData today as a means of exchanging data easily. In this video, see how you can take advantage of OData services in order to enhance your application's functionality.

How Do I: Create OData Services with LightSwitch?

One of the new features added to LightSwitch in Visual Studio 2012 is the ability to create OData services. OData stands for "Open Data Protocol" and is a standard protocol for accessing data over the web. OData services can then be consumed from a variety of clients from a variety of platforms. In this video, see how you can create and expose smart data services to other clients using LightSwitch.

You can see all of the available “How Do I” videos here. I’ve got more on the way so check back often.

Julie Lerman (@julielerman) wrote Moving Existing Projects to EF 5 for the September 2012 issue of MSDN Magazine. From the introduction:

Among changes to the Microsoft .NET Framework 4.5 are a number of modifications and improvements to the core Entity Framework APIs. Most notable is the new way in which Entity Framework caches your LINQ to Entities queries automatically, removing the performance cost of translating a query into SQL when it’s used repeatedly. This feature is referred to as Auto-Compiled Queries, and you can read more about it and other performance improvements in the team’s blog post, “Sneak Preview: Entity Framework 5 Performance Improvements,” at bit.ly/zlx21L. A bonus of this feature is that it’s controlled by the Entity Framework API within the .NET Framework 4.5, so even .NET 4 applications using Entity Framework will benefit “for free” when run on machines with .NET 4.5 installed.

Other useful new features built into the core API require some coding on your part, including support for enums, spatial data types and table-valued functions. The Visual Studio 2012 Entity Data Model (EDM) designer has some new features as well, including the ability to create different views of the model.

I do most of my EF-related coding these days using the DbContext API, which is provided, along with Code First features, separately from the .NET Framework. These features are Microsoft’s way of more fluidly and frequently enhancing Entity Framework, and they’re contained in a single library named EntityFramework.dll, which you can install into your projects via NuGet.

To take advantage of enum support and other features added to EF in the .NET Framework 4.5, you’ll need the compatible version of EntityFramework.dll, EF 5. The first release of this package has the version number 5.

I have lots of applications that use EF 4.3.1. This version includes the migration support introduced in EF 4.3, plus a few minor tweaks that were added shortly after. In this column I’ll show you how to move an application that’s using EF 4.3.1 to EF 5 to take advantage of the new enum support in .NET 4.5. These steps also apply to projects that are using EF 4.1, 4.2 or 4.3.

I’ll start with a simple demo-ware solution that has a project for the DomainClasses, another for the DataLayer and one that’s a console application, as shown in Figure 1.


Figure 1 The Existing Solution That Uses EF 4.3.1

This solution was built in Visual Studio 2010 using the .NET Framework 4 and the EF 4.3.1 version of EntityFramework.dll.

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

My (@rogerjenn) Uptime Report for my Live OakLeaf Systems Azure Table Services Sample Project: August 2012 = 99.92 % of 9/3/2012 begins:

My (@rogerjenn) live OakLeaf Systems Azure Table Services Sample Project demo runs two small Windows Azure Web role instances from Microsoft’s South Central US (San Antonio, TX) data center. This report now contains more than a full year of uptime data.

Here’s Pingdom’s Monthly Report for August 2012:

Here’s the detailed uptime report from Pingdom.com for August 2012:

Following is detailed Pingdom response time data for the month of August 2012:

This is the fifteenth uptime report for the two-Web role version of the sample project since it was upgraded to two instances. Reports will continue on a monthly basis.
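To put the 99.92% figure in perspective, the following Python sketch converts it into minutes of downtime. It assumes the percentage covers all 31 days of August and takes the rounded value from the report title, so the result is an approximation rather than Pingdom’s own downtime figure.

```python
DAYS_IN_AUGUST = 31
UPTIME_PERCENT = 99.92  # rounded value from the report title

# Total minutes in the month, then the share of them counted as downtime.
total_minutes = DAYS_IN_AUGUST * 24 * 60
downtime_minutes = total_minutes * (100 - UPTIME_PERCENT) / 100

print(total_minutes, round(downtime_minutes, 1))
```

Under those assumptions, the month’s downtime works out to a little under 36 minutes.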

Damon Edwards (@damonedwards) recommended that you Use DevOps to Turn IT into a Strategic Weapon in a 9/5/2012 post to his dev2ops: delivering change blog:

There has been a fairly clear pattern of focus emerging from discussions within the DevOps community. Lots of attention has been paid to the effects of DevOps within the walls of an IT organization. Far less attention has been paid to the effects of DevOps across the broader company. Almost no attention has been paid to the effects of DevOps outside the walls of the company, specifically in relation to other companies and the markets in which you are competing.

This inward emphasis is understandable considering that DevOps is a movement started by technologists and is a relatively easy sell to other technologists -- breaking down silos, smoothing handoffs, baking in quality, rapid feedback, automating the hell out of everything -- all messages that stand on their own merits.

But if we stop our DevOps evangelism at the boundaries of the IT organization, we are also missing the most valuable return on our DevOps investments. Why? Because the lessons and principles of DevOps can unlock something that is increasingly rare for today’s companies. DevOps can turn your IT Operations into a sustainable competitive advantage for your company. This is the DevOps message that needs to be spread throughout the business end of your company, right up to the CEO and the Board of Directors.

To understand how DevOps can transform IT Operations into a competitive advantage, you first have to look at the current context within which businesses are operating.

Business reality #1: Technology alone cannot provide a competitive advantage

"Technology's potential for differentiating one company from the pack - its strategic potential - inexorably declines as it becomes accessible and affordable to all"

Nicholas G. Carr

From 'IT Doesn't Matter'

The idea of technology being less and less of a competitive differentiator isn’t a new one. But by its very nature the big shift to online business has only hastened this decline in potential for differentiation.

Your customers are now coming to you via standard interfaces (browser or mobile app store) that connect to you via a standard protocol (http).

So by definition, your business has aligned itself behind a business channel where the point of transaction with your customers is on a level playing field with any of your competitors. …

Read more: Damon continues with a detailed analysis of the business realities and competitive advantages associated with DevOps expertise.

Mike McKeown (@nwoekcm) posted Best Ways to Optimize Diagnostics Using Windows Azure – Part 1 to the Aditi Technologies blog on 9/4/2012:

Windows Azure Diagnostics enables you to collect diagnostic data from an application running in Windows Azure. This data can be used for debugging and troubleshooting, measuring performance, monitoring resource usage, traffic analysis and capacity planning, and auditing. In this blog post, I will share some optimizations that I have learned while working with Windows Azure Diagnostics during my time at Aditi Technologies.

- Keep separate storage accounts – Keep your Azure diagnostics data in a different storage account than your application data. There is no additional cost to do this. If for some reason you need to work with Microsoft support and have them muck through your diagnostics storage locations, you don’t have to allow them access to potentially sensitive application data.

- Locality of storage and application – Make sure to keep your storage account in the same affinity group (data center) as the application that is writing to it. If for some reason you can’t do so, use a longer transfer interval so data is transferred less frequently but more of it is moved at once.

- Transfer interval - For most applications I have found that a transfer once every 5 minutes is a very useful rate.

- Sample interval – For most applications, setting the sample rate to once every 1-2 minutes provides a good yet frugal sampling of data. Remember that when your sampled data is moved to Azure storage, you pay for all you store. So you want to store enough information to give you a true window into that performance counter, but not so much that you pay unnecessarily for data you won’t need.

- Trace logging level – While using the Verbose logging filter for tracing may give you lots of good information, it is also very chatty and your logs will grow quickly. Since you pay for what you use in Azure, only use the Verbose trace level when you are actively working on a problem. Once it is solved, scale back to the Warning, Error, or Critical levels, which write fewer messages to the logs.
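To see how the intervals above translate into data volume, here is a small Python sketch of the arithmetic. It assumes a single performance counter on a single role instance and evenly spaced samples; the function name is illustrative only.

```python
MINUTES_PER_DAY = 24 * 60


def events_per_day(interval_minutes: int) -> int:
    """Number of evenly spaced events in one day at the given interval."""
    return MINUTES_PER_DAY // interval_minutes


samples_at_1_min = events_per_day(1)    # one sample every minute
samples_at_2_min = events_per_day(2)    # one sample every two minutes
transfers_at_5_min = events_per_day(5)  # one transfer every five minutes

print(samples_at_1_min, samples_at_2_min, transfers_at_5_min)
```

Doubling the sample interval halves the number of stored rows per counter, which is the trade-off between detail and storage cost that the sample interval tip describes.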

Stay tuned for my next post where I will write about the six additional ways to optimize the use of Windows Azure Diagnostics.

Brent Stineman (@BrentCodeMonkey) asserted You don’t really want an SLA! in a 9/4/2012 post:

I don’t often do editorials (and when I do, they tend to ramble), but I felt I’m due and this is a conversation I’ve been having a lot lately. I sit to talk with clients about cloud and one of the first questions I always get is “what is the SLA”? And I hate it.

The fact is that an SLA is an insurance policy. If your vendor doesn’t provide a basic level of service, you get a check. Not unlike my home owners insurance. If something happens, I get a check. The problem is that most of us NEVER want to have to get that check. If my house burns down, the insurance company will replace it. But all those personal mementos, the memories, the “feel” of the house are gone. So that’s a situation I’d rather avoid. What I REALLY want is safety. So [I] install a fire-alarm, I make sure I have an extinguisher in the kitchen, I keep candles away from drapes. I take measures to help reduce the risk that I’ll need to cash my insurance policy.

When building solutions, we don’t want SLA’s. What we REALLY want is availability. So we as the solution owners need to take steps to help us achieve this. We have to weigh the cost vs. the benefit (do I need an extinguisher or a sprinkler system?) and determine how much we’re willing to invest in actively working to achieve our own goals.

This is why when I get asked the question, I usually respond by giving them the answer and immediately jump into a discussion about resiliency. What is a service degradation vs. an outage? How can we leverage redundancy? Can we decouple components and absorb service disruptions? These are the types of things we as architects need to start considering, not just for cloud solutions but for everything we build.

I continue to tell developers that the public cloud is a stepping stone. The patterns we’re using in the public cloud are lessons learned that will eventually get applied back on premises. As the private cloud becomes less vapor and more reality, the ability to think in these new patterns is what will make the next generation of apps truly useful. If a server goes down, how quickly does your load balancer see this and take that server out of rotation? How do the servers shift workloads?

When working towards availability, we need to keep several things in mind.

- Failures will happen – how we deal with them is our choice. We can have the world stop, or we can figure out how to “degrade” our solution to keep anything we can going.

- How are we going to recover – when things return to normal, how does the solution “catch up” with what happened during the disruption?

- [T]he outage is less important than how fast we react – we need to know something has gone wrong before our clients call to tell us.
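The first two points, degrading instead of stopping and catching up afterwards, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class `DegradableSender` and its methods are hypothetical names, the backlog is an in-memory queue rather than a durable one, and a real solution would also need monitoring so that the third point (knowing about the failure first) is covered.

```python
from collections import deque


class DegradableSender:
    """Hypothetical wrapper that queues work while a dependency is down
    and replays it once the dependency is back."""

    def __init__(self, send):
        self._send = send       # callable that raises an exception on failure
        self.backlog = deque()  # messages accepted while running degraded

    def submit(self, message) -> bool:
        """Try to send immediately; on failure keep the message for later.

        Returns True if the message was sent now, False if it was queued.
        """
        try:
            self._send(message)
            return True
        except Exception:
            self.backlog.append(message)
            return False

    def catch_up(self) -> int:
        """Replay queued messages in order, stopping at the first failure.

        Returns the number of messages delivered during this call.
        """
        delivered = 0
        while self.backlog:
            try:
                self._send(self.backlog[0])
            except Exception:
                break  # dependency is still down; try again later
            self.backlog.popleft()
            delivered += 1
        return delivered
```

The caller keeps accepting work while the dependency is unavailable, and `catch_up` can be invoked from a timer or a health-check callback once service returns.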

We (aka solution/application architects) really need to start changing the conversation here. We need to steer away from SLAs entirely and, when we can’t manage that, at least get to more meaningful, scenario-based SLAs. This can mean instead of saying “the email server will be [available] 99% of the time” we switch to “99% of emails will be transmitted within 5 minutes”. This is much more meaningful for the end users and also gives us more flexibility in how we achieve it. And depending on how traffic.
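The difference between the two SLA styles can be made concrete with a small Python sketch. The function names and the sample latencies are hypothetical, chosen only for illustration. The first function converts an availability percentage into permitted downtime (99% over a 365-day year allows roughly 87.6 hours). The other two evaluate a scenario-based target such as “99% of emails transmitted within 5 minutes” against measured delivery times.

```python
def allowed_downtime_hours(availability: float, period_hours: float = 365 * 24) -> float:
    """Hours of downtime an availability target permits over the period."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    return (1.0 - availability) * period_hours


def fraction_within(latencies_seconds, threshold_seconds: float) -> float:
    """Fraction of observed deliveries that finished within the threshold."""
    if not latencies_seconds:
        raise ValueError("need at least one observation")
    on_time = sum(1 for t in latencies_seconds if t <= threshold_seconds)
    return on_time / len(latencies_seconds)


def meets_scenario_sla(latencies_seconds, threshold_seconds: float = 300,
                       target: float = 0.99) -> bool:
    """True if the share of on-time deliveries reaches the target."""
    return fraction_within(latencies_seconds, threshold_seconds) >= target


if __name__ == "__main__":
    # Illustrative data only: 99 fast deliveries and one that took 10 minutes.
    observed = [10] * 99 + [600]
    print("Allowed downtime at 99%:", allowed_downtime_hours(0.99), "hours/year")
    print("Scenario SLA met:", meets_scenario_sla(observed))
```

Note that the scenario-based check says nothing about whether any particular server was up; a brief outage absorbed by a queue can still meet the target, which is the flexibility the post describes.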

Anyway, enough rambling for now. I need to get a deck that discusses this ready for a presentation on Thursday that only about 20 minutes ago I realized I needed to do. Fortunately, I have an earlier draft of the session and definitely have the passion and knowhow to make this happen. So time to get cracking!

Another issue with SLAs is that the value of credits given for SLA violations pales in comparison with the cost of service downtime to the service’s customer. I’m looking forward to seeing Brent’s deck.

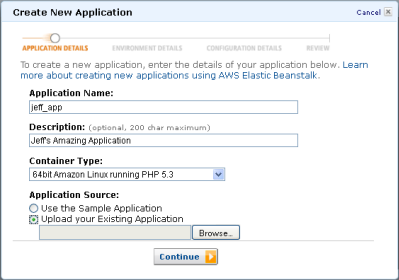

Paul Yuknewicz and Boris Scholl wrote Windows Azure: Developing and Deploying Windows Azure Cloud Services Using Visual Studio, which MSDN Magazine published in its September 2012 issue. From the introduction:

The Windows Azure SDK for .NET has come a long way since its first release. Functionality has been regularly added as the Windows Azure platform and associated tools evolved, such as adding Windows Azure Cloud Service projects to any Web app project, ASP.NET MVC versions 3 and 4 project support, and a dramatically streamlined publishing experience. This aligns with Microsoft’s overall vision to improve the tools used for cloud development and to better integrate with all aspects of the application development lifecycle.

As of June 2012, Windows Azure offers three compute container options to develop and run applications. These options include Windows Azure Web Sites (Preview) for quick and easy Web site and Web application deployment; Windows Azure Virtual Machines (Preview) for durable Windows Server and Linux Infrastructure as a Service (IaaS) virtual machines (VMs) and applications running on them; and Windows Azure Cloud Services, which provide reserved, infinitely scalable, n-tier options running on a Platform as a Service (PaaS). This article focuses on developing cloud services; you can learn more about all of the options at windowsazure.com.

We’ll walk through parts of the cloud service application development lifecycle using Visual Studio and highlight the new features as we progress through that lifecycle. After reading through the article, readers new to Windows Azure should have a basic understanding of cloud development with Visual Studio, and readers who already have experience with Windows Azure development in Visual Studio should have a good understanding of the new features available.

Getting Started

The Windows Azure SDK for .NET June 2012 release includes Visual Studio tools, which work on top of Visual Studio 2010 SP1 and Visual Studio 2012 release candidate (RC) and above. For this article, we’ll use Visual Studio 2012 RC. The best way to install the tools is to start Visual Studio, open the New Project dialog box and select the Cloud node. This will show the Get Windows Azure SDK for .NET project template.

That link will take you to the .NET Developer Center (bit.ly/v5MF7m) for Windows Azure and highlight the all-in-one installer for the SDK. …

Read more.

Zoiner Tejada (@ZoinerTejada) wrote A Quick Guide to the Windows Azure Management Portal for the 9/2012 issue of DevPro magazine:

Reading more requires registration.

David Linthicum (@DavidLinthicum) asked “Cloud technologies are mainly repackaged versions of each other. Who will seize the opportunity to create something new?” in a deck for his Cloud computing's me-too problem: Little boxes all the same article of 9/4/2012 for InfoWorld’s Cloud Computing blog:

I've been a big fan of "Weeds" on Showtime, now in its eighth season. If you're a fan, you know that the show has gone back to its original theme music, "Little Boxes" by Malvina Reynolds: "Little boxes on the hillside/Little boxes made of ticky tacky/Little boxes on the hillside/Little boxes all the same."

Although the song's lyrics are about cookie-cutter suburban developments, they remind me of the cloud computing market, whose technology seems to be becoming "little boxes all the same."

As I go from conference to conference and provider to provider, the technology patterns I'm seeing are clearly starting to repeat. Part of this is a natural phenomenon when a technology market is hot: Everyone jumps in to offer the same new trend. Innovation in a hot market is risky, as it takes time to achieve and by definition means offering something different from what is "hot" (otherwise, it wouldn't be innovation). Offering what everyone else seems to be doing is the safer path.

A benefit of this "little houses all the same" approach is that enterprise customers can compare apples to apples. But what if they need oranges?

I covered a great example of this in a blog post last week on how the use of "open cloud" approaches is becoming the "new black" among cloud technology providers. The same can be said about cloud-based storage, database support, management, and even the reference models the vendors show you.

What's missing is innovation and creativity. There are many problems that still need solving in the cloud computing space, and new approaches should be created to solve them. Service governance comes to mind, as does service management. Also, most provisioning systems I see clearly need new and innovative approaches.

The people charged with running enterprise IT are the real victims here. They won't have access to the technology that can really change the game. Instead, they will have technology that is simply trying to provide what everyone else is doing: little boxes all the same.

Cloud computing is a chance to work with a freshly washed whiteboard and rethink how we approach computing. Although there is some innovation in the cloud today, I don't see the level of creativity we need to truly change the enterprise IT game. The same boxes in different packaging just won't get us there.

This issue appears to me to be related to IaaS; à la carte services for PaaS, such as Windows Azure SQL Database, Mobile Services, Media Services, Service Bus, Access Services, and the like, definitely are distinguishing features.


Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

Himanshu Singh (@himanshuks) reported a Cross-post: Windows Server 2012 is Here…And So is the Cloud OS on 9/4/2012:

Today’s launch of Windows Server 2012 delivers the cornerstone of the Cloud OS, as explained in a blog post today by Satya Nadella, President, Server & Tools Business at Microsoft. “With Windows Server 2012 and Windows Azure at its core,” writes Nadella, “we are delivering the Cloud OS. Based on our unique innovations in the most widely used operating systems, applications and cloud services, only Microsoft is able to offer the consistent platform that the cloud demands. It is that consistency that will enable customers to use common virtualization, application development, systems management, data and identity frameworks across all of their clouds.”

First unveiled at TechEd in June, the Cloud OS delivers a reimagined operating system that enables smart, modern apps across a company’s datacenter, a service provider datacenter, or the Windows Azure public cloud.

According to Nadella, Windows Server 2012 redefines the server operating system and introduces advanced storage, networking, virtualization, automation and end user access capabilities. It also delivers a transformational leap in the speed, scale and power that datacenters and applications can build on.

For more information, read the full blog post, and the press release.

Tim Anderson (@timanderson) asserted Windows Server 2012: a great upgrade in a 9/4/2012 post:

Remember how everyone hated Windows Vista but admins loved Server 2008? The awkward truth: they were built on the same core code. History may be about to repeat with Windows 8 and Server 2012, which also share code.

That said, I actually like Windows 8; but it is controversial because of its dual personality and the demands it places on existing Windows users to learn new ways to navigate the user interface. Server 2012 has the Start screen too, but it boots to the desktop and most tools are available through the new Server Manager in any case, so I doubt it will cause much concern.

That is, if you install the GUI at all. Microsoft is a convert to the “No GUI on a server” idea and you are meant to install Server Core, which has no GUI, where possible, and run Server Manager on a Windows 8 client. There is also an intriguing intermediate option called the Minimal Server Interface, which has the GUI infrastructure but no Explorer shell or Internet Explorer. It sounds odd, but I quite like it; it is a bit like one of those stripped down Linux desktops where nothing gets in the way of the apps.

The Windows Server Evaluation Guide [pdf] does a good job of covering what’s new but runs to 177 pages, posing a challenge for those of us asked to review the new operating system in the usual 1500 words or fewer. I have had a go at this elsewhere. I will say though that from my first encounter with Server 2012, then called Server 8, at a press workshop in September 2011, I was impressed with the extent and significance of the new features. It does seem to me a breakthrough on several levels.

Virtualisation is one of course, with features like Hyper-V Replica doing what Microsoft should be doing: bringing features you would expect in large-scale enterprise setups within reach of small organisations. If you are not ready for public cloud, a couple of substantial servers running Hyper-V VMs with failover via Hyper-V Replica is an excellent setup.

Another is the effort Microsoft has put into modularisation and automation.

For modularity, Server 2012 is not quite at the level of the Debian server which hosts this web site, where I can add and remove packages with a simple apt-get command, but it is getting closer. You can now move between Server Core and full GUI simply by adding and removing features; it sounds easy, but represents an enormous untangling effort from the Windows team.

On the automation side, PowerShell has matured into a comprehensive, scriptable, remotable platform for managing Windows Server. I love the PowerShell History feature in the Active Directory Administrative Center, which shows you the script generated by your actions in the GUI.

Storage is a big feature too. The new Storage Spaces are not aimed at enterprises, but at the rest of us. We are beginning to see an end to the “help, we are running out of space on the C drive” problem, which can cause considerable trouble. You can even mount virtual disks as folders rather than drive letters, another sign that Windows is finally escaping its DOS (or CP/M?) heritage.

Annoyances? Lack of tool compatibility is one, specifically that the new Hyper-V Manager will not manage 2008 R2 VMs, and the new RSAT (Remote Server Administration Tools) require Windows 8.

More seriously, there are times when the beautiful new Server Manager UI gives mysterious errors, and as you drill down, you are back in the world of DCOM activation or some such nightmare from the past; which makes you realise that under the surface there is a ton of legacy still there and that Windows admins are not yet free from the burden of trawling the web for someone else with the same troubling error in Event Viewer. Maybe this is common to all operating systems; but Windows seems to have more than its share.