Windows Azure and Cloud Computing Posts for 9/13/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

•• Updated 9/16/2012 at 8:00 AM PDT with new articles marked ••.

• Updated 9/14/2012 at 4:30 PM PDT with new articles marked •.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, Hadoop, Media and Online Backup Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Access Control, Caching, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue, Hadoop, Media and Online Backup Services

Joe Panattieri (@talkin_cloud) asked Windows Azure Online Backup: Cloud Partner Opportunities? to the TalkinCloud blog on 9/12/2012:

Microsoft’s Windows Azure Online Backup, currently in preview, is a cloud storage service for Windows Server 2012. Plus, the online backup service now supports System Center 2012 SP1 and Windows Server Essentials 2012. But where do cloud integrators and channel partners fit in the conversation?

For pure Microsoft shops, I suspect Windows Azure Online Backup could be a compelling solution. But I think the vast majority of larger businesses now have mixed environments — Windows servers and Linux servers from a range of vendors (Red Hat, Novell, etc.). I don’t know if or how Microsoft will extend Windows Azure Online Backup to support Linux server distributions.

For channel partners plenty of additional questions remain — including:

- Will there be a targeted channel partner program for Windows Azure Online Backup?

- Can partners control end-customer pricing and billing for the backup service?

- How will the Microsoft service compare to other backup platforms hosted in the Windows Azure cloud? For instance, Symantec is planning a disaster recovery service for Azure. And CA Technologies’ ARCserve runs in Azure.

Meanwhile, many MSPs and VARs are embracing upstart cloud backup solutions for Windows Server. Fast-growth companies in the market include Axcient, Datto and Intronis — all three of which recently landed on the Inc. 5000 list of fastest-growing privately held companies in the U.S. And there are larger service providers, like EVault, reaching out to MSPs as well.

While those channel-friendly companies position their cloud services for partners, Talkin’ Cloud continues to wonder how Windows Azure Online Backup will engage VARs and MSPs.

I doubt if many enterprises will trust their cloud backups to an “upstart” provider.

<Return to section navigation list>

Windows Azure SQL Database, Federations and Reporting, Mobile Services

•• My (@rogerjenn) Windows Azure Mobile Services Preview Walkthrough–Part 4: Customizing the Windows Store App’s UI of 9/15/2012 explains how to specify custom logos for splash screens and tiles:

According to the MSDN Library’s Packaging your Windows Store app topic:

By using the Store menu in Visual Studio, you can access the Windows Store and package your Windows Store app for distribution. You will use the Store as the primary way to sell or otherwise make available your apps. For more information, see Previewing the Windows Store and Selling apps.

You must package and prepare your app before you can upload it to the Store. The packaging process gets started when you create a Windows Store project or item based on a template. When you create a Windows Store app, Visual Studio creates a source file for the app package (Package.appxmanifest) and adds that file to your solution. The first time that you build the project, Visual Studio transforms the source file to the manifest file (AppxManifest.xml) and puts it in the output folder for the app. The manifest file describes your app, including its name, description, start page, splash screen, and logos. In addition, you use the manifest file to add capabilities and declarations to your app, such as the ability to access a webcam. You can use the Manifest Designer in Visual Studio to edit the properties in the Package.appxmanifest file. For more information, see Using the Manifest Designer (Windows Store apps). [Emphasis added.]

Emulating a commercial Windows Store app requires customizing the UI to replace default tile and splash-screen images with custom *.png or *.jpg logo images, such as this:

Prerequisites: Completion of the following OakLeaf Systems Walkthroughs:

- Windows Azure Mobile Services Preview Walkthrough–Part 1: Windows 8 ToDo Demo Application (C#)

- Windows Azure Mobile Services Preview Walkthrough–Part 2: Authenticating Windows 8 App Users (C#)

- Windows Azure Mobile Services Preview Walkthrough–Part 3: Pushing Notifications to Windows 8 Users (C#)

You must also create 150 x 150-px and 310 x 150-px logo image files and a 620 x 300-px splash-screen image file, and save resized 24-, 30- and 50-px square logo files for other purposes. Save all of these files in the \Documents\Visual Studio 2012\Projects\ProjectName\SolutionName\Assets folder, which contains the default logo files.

Assigning Images to Tiles and Splash Screens

1-1. Launch Visual Studio 2012 with your version of the OakLeaf ToDo app, and choose Project, Store, Edit App Manifest or double-click the Package.appxmanifest item in Solution Explorer to open the file in the App Manifest Designer and click the Packaging tab:

Note: This capture is from step 1-1 of Windows Azure Mobile Services Preview Walkthrough–Part 2: Authenticating Windows 8 App Users (C#).

1-2. Change the Package Display Name to OakLeaf ToDo, click the … button to open the Select Image form, and select the 50-px square image file:

1-3. Click the Application UI tab, type the Display Name, Short Name and Description, and select the image of the appropriate size for the Logo, Wide Logo, and Splash Screen:

Note: Assigning a 24-px image as the Badge Logo requires adding a Background Task, as described in MSDN’s Background task sample application, which is beyond the scope of this Walkthrough.

1-4. Press F5 to build and run the app, display the Start window and scroll to the location of the former default tile:

1-5. Type oa to search for the OakLeaf ToDo app, which displays the 50-px square tile:

1-6. If you specify the wide tile, the 310 x 150-px image file appears in the Start menu:

1-7. Right-click Solution Explorer’s Package.appxmanifest item and choose Open With, XML (Text) Editor to open the XML file in Visual Studio’s XML Editor:

The VisualElements element contains the name and location of each custom image.
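To make the role of the VisualElements element concrete, here is a minimal C# sketch that lists the image paths it references. The manifest fragment, the file names, and the `ManifestImages` helper are illustrative assumptions rather than the walkthrough's actual files; the namespace URI is the one I would expect for Windows 8 app manifests, so check it against your own Package.appxmanifest.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

public static class ManifestImages
{
    // Assumed Windows 8 app-manifest namespace; verify against your own file.
    static readonly XNamespace Ns = "http://schemas.microsoft.com/appx/2010/manifest";

    // Illustrative fragment only; image file names are hypothetical.
    public const string SampleManifest = @"<Package xmlns=""http://schemas.microsoft.com/appx/2010/manifest"">
  <Applications>
    <Application Id=""App"">
      <VisualElements DisplayName=""OakLeaf ToDo"" Logo=""Assets\Logo150.png"" SmallLogo=""Assets\Logo30.png"">
        <DefaultTile WideLogo=""Assets\Logo310x150.png"" />
        <SplashScreen Image=""Assets\Splash620x300.png"" />
      </VisualElements>
    </Application>
  </Applications>
</Package>";

    // Returns attribute name -> image path for every *.png or *.jpg
    // attribute found on VisualElements and its child elements.
    public static IDictionary<string, string> GetImagePaths(string manifestXml)
    {
        XElement visual = XDocument.Parse(manifestXml)
            .Descendants(Ns + "VisualElements")
            .Single();

        return visual.DescendantsAndSelf()
            .SelectMany(e => e.Attributes())
            .Where(a => a.Value.EndsWith(".png", StringComparison.OrdinalIgnoreCase)
                     || a.Value.EndsWith(".jpg", StringComparison.OrdinalIgnoreCase))
            .ToDictionary(a => a.Name.LocalName, a => a.Value);
    }
}
```

Calling `ManifestImages.GetImagePaths(ManifestImages.SampleManifest)` on the sample fragment yields entries keyed Logo, SmallLogo, WideLogo and Image, which is a quick way to confirm that each custom image was assigned.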

Registering this demo app with the Windows Store resulted in an authentication failure that I’ve been unable to correct after two days of trying. As of 9/17/2012 at 8:00 AM PDT the app was in the Content Compliance evaluation state:

My (@rogerjenn) How Not to Get a Free Windows Store Developer Account with Your MSDN Subscription Benefit post of 9/13/2012 begins:

Antoine LeBlond’s Windows Store now open to all developers in 120 markets post to the Windows Store for Developers blog explains:

All eligible MSDN subscribers receive a free, one-year Windows Store developer account as part of their MSDN benefits. (Eligible subscriptions include Visual Studio Professional, Test Professional, Premium, Ultimate, and BizSpark.)

But doesn’t explain how to take advantage of the benefit. When you sign up directly and receive your Registration Code, there’s no opportunity to specify your MSDN subscription benefit.

The solution to this dilemma would appear to be:

1-1. Sign in to MSDN with the Live ID for your MSDN subscription and navigate to the My Account page:

1-2. Click the Get Code link to return an MSDN-specific registration code:

1-3. Click How to Register to open a help page:

1-4. Click the Copy Code to Clipboard button, click the Windows Dev Center link to open the Help Us Protect Your Account form, and paste the code into the text box.

1-5. Optionally check the I Trust This PC checkbox and type a name for the computer you’re using:

1-6. Click Submit to receive the following message:

Doesn’t the Windows Store team have a QC team or developers who check their code?

And continues with a “How to Reduce the Price from $49.00 to $0.00 for the First Year with the Alphanumeric Code” section.

Robert Green announced Episode 48 of Visual Studio Toolbox: Windows Azure Mobile Services in a 9/12/2012 post:

In this episode, Josh Twist and Nick Harris introduce us to Windows Azure Mobile Services. This makes it incredibly easy to connect a scalable cloud backend to your client and mobile applications. It enables you to easily store structured data in the cloud that can span both devices and users and integrate it with user authentication as well as send out updates to clients via push notifications. Josh, with assistance from Nick, shows how to add these capabilities to any Windows 8 app in minutes.

Wes Yanaga (@wesysvc) reported Windows Store Open to All Developers in 120 Markets in a 9/12/2012 post to the US ISV Evangelism blog:

Yesterday, Microsoft announced that the Windows Store is open to all developers in 120 Markets. You can read more about it at the Windows Store for Developers Blog.

If you’ve already signed up—fantastic. We’re ready for your app. Haven’t signed up yet? Getting started is easy—just go to the Windows Store Dashboard on the Windows Dev Center and sign up. The dev tools are free, the SDK is ready, and we have a ton of great supporting content to help you build your app and submit it for Store certification. Sign up now, reserve your app names—we look forward to seeing your app in the Store in time for the general availability of Windows 8.

To kick start your effort, we’ve got some great events upcoming on the West Coast.

Join us for Hands-On-Labs and Application Competition!

Windows 8 is the single biggest opportunity for developers, EVER! Come to a Windows 8 Unleashed event (happening now, in a city near you). Kick-start your Windows 8 development in one of these events, hosted by developers for developers.

Agenda

Session I – Overview

- Metro Style Apps Overview

- Developing Metro Style Apps

- Working with Controls

- Implementing Navigation

Session II – Location and Data

- Working with Location

- Data Binding

- Roaming

- App Bar

Session III – Metro Principles

- Live Tiles & Toast

- Contracts

- Full, Fill, Snap and Portrait Views

- Advertising

Session IV

- Application Competition!

Register Now - HERE

Required Software and Downloads

Please make sure to get the required software and downloads BEFORE coming to an event. You can find everything you need on the “Windows 8 Unleashed Installs” page.

The current version of Windows Azure Mobile Services is limited to Windows Store (a.k.a., Windows 8 Modern UI or Metro) apps.

<Return to section navigation list>

Marketplace DataMarket, Cloud Numerics, Big Data and OData

• See Beth Massi (@bethmassi) reported Silicon Valley Code Camp October 6th & 7th in a 9/14/2012 post in the Cloud Computing Events section below.

Derrick VanArnam posted Introducing the OData QueryFeed workflow activity to the Customer Feedback SQL Server Samples blog on 9/12/2012:

As a continuation of my previous blog post, I created an OData QueryFeed workflow activity sample. The sample represents an end-to-end data movement application utilizing a number of technologies, including:

- Using LINQ to project OData entity XML to entity classes.

- Creating custom workflow activities.

- Creating custom workflow designers containing OData schema aware expression editors and WorkflowItemsPresenters. A WorkflowItemsPresenter is used to drop OData Filter activities that format a fully qualified OData filter parameter.

- WPF ComboBox items hosting a button and checkbox.

- Hosting the Windows Workflow designer within Microsoft Excel 2010.

- Running a workflow from within a Microsoft Excel 2010 and Microsoft Word 2010 Addin.

- Using a workflow Tracking Participant to subscribe to activity states.

- Creating ModelItem and Office extension methods.

- Open XML 2.0 that renders OData entity properties as a Word 2010 table.

By providing feedback on this blog, or sending me an email, you can help define any part of the sample. My email address is derrickv@microsoft.com.

Source Code

http://msftdbprodsamples.codeplex.com/releases/view/94486.

User Story

The sample addresses the following user story:

As a developer, I want to create an OData feed activity so that an IT Analyst can consume any AdventureWorks OData feed within a business workflow.

The OData QueryFeed sample activity shows how to create a workflow activity that consumes an OData resource, and renders entity properties in a Microsoft Excel 2010 worksheet or Microsoft Word 2010 document. Using the sample QueryFeed activity, you can consume any OData resource. The sample activity uses LINQ to project OData metadata into activity designer expression items. By setting activity expressions, a fully qualified OData query string is constructed consisting of Resource, Filter, OrderBy, and Select parameters. Executing the activity returns OData entity properties.

The sample activity uses LINQ to project OData metadata into activity designer expression items.

Resource

Query string part

/CompanySales

EntitySets LINQ for Resource Items

public IEnumerable<EntitySet> EntitySets
{
    get
    {
        //Arrange: A url to a WCF 5.0 service is given as InArgument_Url
        XNamespace xmlns = "http://schemas.microsoft.com/ado/2008/09/edm";

        //Act: A LINQ to XML query is constructed that projects EntitySet XML to an EntitySet class
        IEnumerable<EntitySet> entitySets = from x in metadata
            .Descendants(xmlns.GetName("EntitySet"))
            select new EntitySet
            {
                Name = x.Attributes("Name").Single().Value,
                Namespace = x.Attributes("EntityType").Single().Value.Split(new char[] { '.' })[0],
                EntityType = x.Attributes("EntityType").Single().Value.Split(new char[] { '.' })[1]
            };

        //Return:
        return entitySets;
    }
}

Filter

Query string part

$filter=OrderYear ne 2006 and Sales lt 10000

EntitySetSchema LINQ for Filter Items

public IEnumerable<EntityPropertySchema> EntitySetSchema(string resource)
{
    IEnumerable<EntityPropertySchema> entrySchema = null;

    if (resource != string.Empty)
    {
        XNamespace xmlns = "http://schemas.microsoft.com/ado/2008/09/edm";

        entrySchema = from p in metadata
            .Descendants(xmlns.GetName("EntityType")).Descendants(xmlns.GetName("Property"))
            where p.Parent.Attribute("Name").Value == (from e in this.EntitySets where e.Name == resource select e.EntityType).Single<string>()
            select new EntityPropertySchema
            {
                Parent = p.Parent,
                Name = p.Attribute("Name").Value,
                Type = p.Attribute("Type").Value,
                MaxLength = p.Attribute("MaxLength") == null ? "NaN" : p.Attribute("MaxLength").Value
            };
    }

    return entrySchema;
}

OrderBy

Query string part

$orderby=ProductSubCategory asc,Sales desc

EntitySetSchema LINQ for Filter Items

See Filter

Select

Query string part

$select=ProductSubCategory,Sales

EntitySetSchema LINQ for Filter Items

See Filter
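The four query-string parts shown above (Resource, Filter, OrderBy and Select) can be combined into one fully qualified OData URL. The following C# sketch illustrates the idea only; it is not the QueryFeed activity's own code, and the `ODataQuery` class and the example.com service root are hypothetical.

```csharp
using System;
using System.Collections.Generic;

public static class ODataQuery
{
    // Builds "<serviceRoot>/<resource>?$filter=...&$orderby=...&$select=...".
    // Options that are null or empty are left out of the query string.
    public static string Build(string serviceRoot, string resource,
        string filter = null, string orderBy = null, string select = null)
    {
        var options = new List<string>();

        // Escape each option value so spaces and commas are URL-safe.
        if (!string.IsNullOrEmpty(filter))
            options.Add("$filter=" + Uri.EscapeDataString(filter));
        if (!string.IsNullOrEmpty(orderBy))
            options.Add("$orderby=" + Uri.EscapeDataString(orderBy));
        if (!string.IsNullOrEmpty(select))
            options.Add("$select=" + Uri.EscapeDataString(select));

        string url = serviceRoot.TrimEnd('/') + "/" + resource.TrimStart('/');
        return options.Count == 0 ? url : url + "?" + string.Join("&", options);
    }
}
```

For example, `ODataQuery.Build("http://example.com/AdventureWorks.svc", "/CompanySales", "OrderYear ne 2006 and Sales lt 10000", "ProductSubCategory asc,Sales desc", "ProductSubCategory,Sales")` returns a URL whose query string carries the three options in percent-encoded form, which an OData service decodes before evaluating them.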

Rendering QueryFeed TableParts

The example QueryFeed workflow renders entity properties in any client that supports .NET Framework 4.0. The sample source code at http://msftdbprodsamples.codeplex.com/releases/view/94486 shows rendering an EntityProperties TablePart in Microsoft Excel 2010 and Microsoft Word 2010. The sample source code shows how to use an extension method to extend a Microsoft Excel or Microsoft Word range.

Excel InsertEntityTable Extension Method

public static void InsertEntityTable(this Range activeCell, IEnumerable<IEnumerable<EntityProperty>> entityProperties, string styleName)
{
    int currentColumn = 0;
    int currentRow = 1;
    Range range;

    List<string> propertyNames = (from item in entityProperties select item).First<IEnumerable<EntityProperty>>().Select(n => n.Name).ToList<string>();
    int columnCount = propertyNames.Count();
    int rowCount = entityProperties.Count();

    Globals.ThisAddIn.Application.ScreenUpdating = false;

    //Data Columns
    foreach (string name in propertyNames)
    {
        range = activeCell.get_Offset(1, currentColumn);
        range.FormulaR1C1 = name;
        currentColumn++;
    }

    currentColumn = 0;

    //Data Values
    foreach (IEnumerable<EntityProperty> items in entityProperties)
    {
        //row = new TableRow();
        currentRow++;

        foreach (EntityProperty item in items)
        {
            range = activeCell.get_Offset(currentRow, currentColumn);
            range.FormulaR1C1 = item.Value;
            currentColumn++;
        }

        currentColumn = 0;
    }

    Worksheet activeSheet = Globals.ThisAddIn.Application.ActiveSheet;
    Range styleRange = activeCell.Range[activeSheet.Cells[2, 1], activeSheet.Cells[rowCount + 2, columnCount]];
    string listObjectName = String.Format("Table{0}", activeSheet.ListObjects.Count);

    try
    {
        activeSheet.ListObjects.AddEx(XlListObjectSourceType.xlSrcRange, styleRange, Type.Missing, XlYesNoGuess.xlYes).Name = listObjectName;
        activeSheet.ListObjects[listObjectName].TableStyle = styleName;
    }
    catch
    {
        //Handle exception in a production application
    }

    styleRange.Columns.AutoFit();

    Globals.ThisAddIn.Application.ScreenUpdating = true;
}

My next post will show how to edit a QueryFeed workflow using a hosted Windows Workflow designer. A custom designer is included in the source code at http://msftdbprodsamples.codeplex.com/releases/view/94486.

You can read more about the QueryFeed workflow in the attached Introducing the OData QueryFeed Activity document.

Doug Mahugh (@dmahugh) interviewed Alessandro Catorcini (@catomaior) for Channel9’s OData Server module for Drupal on 9/12/2012:

The Open Data Protocol (OData) is a web protocol for querying and updating data that provides a way to unlock your data and free it from silos that exist in applications today. OData provides a simple standards-based mechanism for exposing information from relational databases, file systems, content management systems and other websites.

In this video, you'll learn about how the OData Server module can enable OData access to content in Drupal repositories. Doug Mahugh talks to Alessandro Catorcini (Principal Group Program Manager, Microsoft Open Technologies, Inc.) about how OData can open up Drupal sites to access from a wide variety of devices, services, and applications, including the growing OData consumer ecosystem. Then Patrick Wilson of R2 Integrated, the developer of the OData Server module, demonstrates how easy it is to set up the module for access to the nodes, bundles, and properties in a Drupal site.

The OData Server module uses the OData Producer Library for PHP, an open source library from MS Open Tech, to expose Drupal entities. For more information about open source OData libraries from MS Open Tech, see the blog post Open Source OData Tools for MySQL and PHP Developers.

<Return to section navigation list>

Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

No significant articles today

<Return to section navigation list>

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

• Michael Washam (@MWashamMS) reported the availability of a Running SharePoint on Windows Azure Virtual Machines guidance paper on 9/14/2012:

The Microsoft SharePoint engineering team has released a guidance paper on deploying SharePoint 2010 on Windows Azure Virtual Machines.

SharePoint on Windows Azure Virtual Machines

IMHO this will be a huge benefit for quickly spinning up new farms or dev and test environments. For SharePoint trainers it sure beats lugging around giant laptops with massive amounts of storage for all of the VMs.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

•• Steve Peschka described Creating and Using a Certificate for the CSUpload Tool with Azure IaaS Services in a 9/15/2012 post:

In my posting on using SharePoint up in Azure IaaS services (http://blogs.technet.com/b/speschka/archive/2012/06/17/creating-an-azure-persistent-vm-for-an-isolated-sharepoint-farm.aspx), one of my friends – MikeTag, who demands that he be mentioned :-) – noticed that I didn’t have instructions for how to create a certificate and use that with the csupload command line tool. So to help those that may be having the same issue I am going to make a quick run through that process here.

To begin with, really the easiest way to create a certificate that you can use for this purpose is to open the IIS Manager on Windows 7 or Windows Server 2008 or later, and create a self-signed certificate in there. You can find it in IIS Manager by clicking on the server name, then double click on Server Certificates in the middle pane. That shows all of the installed certificates, and in the right task pane you will see an option to Create Self-Signed Certificate…

After you create your certificate you need to export it twice – once with the private key, and once without. The reason you do it twice is because you need to upload the certificate without the private key to Azure. The certificate with the private key needs to be added to your personal certificate store on the computer where you are making your connection to Azure with csupload. When you create the certificate in the IIS Manager it puts the certificate in the machine’s personal store, that’s why you need to export it and add it to your own personal store.

Exporting the certificates is fairly straightforward – just click on it in the IIS Manager then click on the Details tab of the certificate properties and click the Copy to File… button. I’m confident you can use the wizard to figure out how to export it with and without the private key. Once you have the export with the private key (the .pfx file), open the Certificates MMC snap-in and import it into the Personal store for your user account. For the export without the private key, just navigate to the Azure portal and upload it there. You want to click on the Hosted Services, Storage Accounts and CDN link in the bottom left navigation, and then click on the Management Certificates in the top left navigation. Note that if you don’t see these navigation options you are probably viewing the new preview Azure management portal, and you need to switch back to the current Azure management portal. You can do that by hovering over the green PREVIEW button in the top center of the page, then clicking the link to “Take me to the previous portal”.

When you’re in the Management Certificates section you can upload the certificate you exported (the .cer file). What’s nice about doing it this way is that you can also copy the subscription ID and certificate thumbprint right out of the portal. You’ll need both of these when you create the connection string for csupload. If you click on the subscription, or click on the certificate, you’ll see these values in the right info pane in the Azure Management Portal. Once you’ve copied the values out, you can plug them into a connection string for csupload like this:

csupload Set-Connection SubscriptionID=yourSubscriptionID;CertificateThumbprint=yourThumbprint;ServiceManagementEndpoint=https://management.core.windows.net

Once you do that you are good to go and start using csupload. If you get an error message that says: “Cannot access the certificate specified in the connection string. Verify that the certificate is installed and accessible. Cannot find a connection string.” – that means that the certificate cannot be found in your user’s Personal certificate store. Make sure that you have imported the .pfx file into it and try again.
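Before rerunning csupload after that error, it can help to confirm that the certificate really is in the current user's Personal store together with its private key. The C# sketch below is one hedged way to check, using the standard .NET `X509Store` API; the `ManagementCertificateCheck` class and its method names are hypothetical, and csupload itself may perform additional checks.

```csharp
using System;
using System.Security.Cryptography.X509Certificates;

public static class ManagementCertificateCheck
{
    // Thumbprints copied from a UI often contain spaces; strip them and upper-case.
    public static string NormalizeThumbprint(string thumbprint)
    {
        return thumbprint.Replace(" ", string.Empty).ToUpperInvariant();
    }

    // True when the current user's Personal (My) store holds a certificate
    // with this thumbprint that also has a private key, i.e. the .pfx import.
    public static bool IsInstalledWithPrivateKey(string thumbprint)
    {
        var store = new X509Store(StoreName.My, StoreLocation.CurrentUser);
        store.Open(OpenFlags.ReadOnly);
        try
        {
            // validOnly is false because self-signed certificates are not chain-trusted.
            X509Certificate2Collection matches = store.Certificates.Find(
                X509FindType.FindByThumbprint, NormalizeThumbprint(thumbprint), false);

            foreach (X509Certificate2 cert in matches)
            {
                if (cert.HasPrivateKey) return true;
            }
            return false;
        }
        finally
        {
            store.Close();
        }
    }
}
```

If `IsInstalledWithPrivateKey` returns false for the thumbprint copied from the portal, the likely causes are that the .pfx file went into the machine store instead of the user store, or that the .cer export (which has no private key) was imported instead.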

David Pallman described his live HTML5 Calculator with Tape app running under Windows Azure in a 9/13/2012 post:

Here's an HTML5 calculator I created today. It performs the usual core operations and adapts its size for desktop, tablet, or phone using CSS media queries. At desktop or tablet size, it displays a results tape. This is client-side only HTML5, CSS, and JavaScript, so it doesn't require anything more than minimal web serving (I'm hosting these files out of low-cost Windows Azure blob storage).

Here’s a screen capture with IE 9:

Mary Jo Foley (@maryjofoley) asserted “Microsoft's Dynamics GP 2013, the second of its ERP products to move to Windows Azure, is on track for a December 2012 release” in a summary of her Microsoft moves closer to delivering another cloud-hosted ERP product article of 9/13/2012 for ZDNet’s All About Microsoft blog:

Microsoft released a beta of its Dynamics GP 2013 product on September 12, taking the company another step closer to delivering more of its ERP products in a cloud-hosted option.

Microsoft officials have said the company plans over time to make versions of all four of its ERP products available in Windows Azure-hosted options. Dynamics NAV 2013 is expected to be the first of the four to offer this option. Dynamics GP is slated to be the second.

Microsoft officials said this week that Dynamics GP 2013 is on track to be delivered in December 2012.

What else is in the Dynamics GP 2013 beta release besides the new cloud distribution option? According to Microsoft's blog post, the GP web client, "125+ functionality enhancements for our 43,000+ existing customers, and extensions to the integration GP has with the Microsoft stack." (That integration likely involves single sign-on with a common set of credentials across Dynamics and other Microsoft cloud-hosted properties.)

Microsoft officials have said that they believe it is more important for ERP than for CRM to provide users with choices -- on-premises, partner-hosted and Microsoft-hosted ERP options -- because ERP customers often have more qualms about moving to the cloud and/or are likely to stick with partner-hosted Dynamics offerings.

The Redmondians also have said that future releases of Microsoft's ERP products will be "cloud-first," meaning the cloud versions will be available ahead of the on-premises ones. As is the case with Dynamics CRM, Microsoft will be making two major updates a year to its ERP platforms once they're available both on-premises and in the cloud.

Microsoft's Dynamics CRM Online offering runs in Microsoft's datacenters, but is not Windows Azure-hosted. Microsoft officials have said they expect to move CRM Online to run on Azure at some point.

Pre-approved testers can download the GP 2013 beta from this site.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

• See Beth Massi (@bethmassi) reported Silicon Valley Code Camp October 6th & 7th in a 9/14/2012 post in the Cloud Computing Events section below.

Joe Binder posted Visual Studio 2012 Launch: Building Business Apps with LightSwitch to the Visual Studio LightSwitch Team blog on 9/13/2012:

As you probably heard, yesterday was the online launch event for the release of Visual Studio 2012! If you missed the live keynotes you can watch them on demand on the Visual Studio 2012 Launch site. There are also a ton of recordings available on the major new features in Visual Studio 2012, just head to the agenda page to browse them all.

One of those videos shows off some of the new features in LightSwitch in Visual Studio 2012 that I encourage you to watch.

Watch: Building Business Applications with LightSwitch in Visual Studio 2012

LightSwitch for Visual Studio 2012 is the easiest way to build business applications for the desktop and the cloud. We’ll take a look at some of the biggest enhancements in LightSwitch, from producing and consuming OData feeds to creating touch-first HTML clients quickly, in this short video.

For more in-depth information on new LightSwitch features in Visual Studio 2012 see these articles:

OData Services Support

- Creating and Consuming LightSwitch OData Services

- Enhance Your LightSwitch Applications with OData

- LightSwitch Architecture: OData

- Using LightSwitch OData Services in a Windows 8 Metro Style Application

- Creating a LightSwitch Application Using the Commuter OData Service (Part 1, Part 2, Part 3)

- LightSwitch OData Consumption Validation in Visual Studio 2012

- Finding Your LightSwitch Produced OData Services in Visual Studio 2012

New Data Features

- Enhancements in Visual Studio 2012 for Sorting Data across Relationships

- LightSwitch Concurrency Enhancements in Visual Studio 2012

- User Defined Relationships within Attached Database Data Sources

- Filtering Data using Entity Set Filters

Security Enhancements

UI Design Improvements

- New Business Types: Percent & Web Address

- How to Add Images and Text to your LightSwitch Applications

- How to Use the New LightSwitch Group Box

- How to Format Values in LightSwitch

Deployment Enhancements

- LightSwitch IIS Deployment Enhancements in Visual Studio 2012

- Publishing LightSwitch Apps to Azure with Visual Studio 2012

For more information on the HTML client preview see:

Don Kiely reported Entity Framework Goes Open Source in a 9/13/2012 article for the DevPro blog:

One of the best data access technologies to come out of Microsoft in a long time, built on a foundation developed from several attempts over the years, has been Entity Framework. Microsoft positions Entity Framework as the mainstream ADO.NET data access technology, and an increasing number of developers are drinking the object-relational-mapping Kool-Aid by using its tools. That's a very good thing for productivity and application quality. Entity Framework beats writing low-level data access code nearly every time.

It was exciting news when the company announced in July that it would release the upcoming Entity Framework 6.0 as open source under the Apache 2.0 open source license on CodePlex. Microsoft calls this "development transparency," which I suppose it is, but the company is going to let anyone and everyone "engage and provide feedback on code checkins, bug fixes, new feature development and build and test the product on a daily basis using the most up to date version of the source code and tests," as Scott Guthrie said in his blog post that announced the move. Microsoft is nearly becoming an open source company, at least with some of its developer technologies. This is quite a change from a few years ago and will probably be a big factor in keeping the company relevant for far longer than some analysts had recently forecast.

Microsoft is managing its investments in open standards and open source through a new subsidiary, Microsoft Open Technologies, which it created last spring. According to Jean Paoli, the subsidiary's new president, "the subsidiary provides a new way of engaging in a more clearly defined manner. This new structure will help facilitate the interaction between Microsoft's proprietary development processes and the company's open innovation efforts and relationships with open source and open standards communities." Microsoft certainly seems to be making a long-term commitment to releasing strategic products as open source, which, in the case of Entity Framework, is a huge benefit to .NET database developers. It not only provides a way to share the source code but also enables the community to contribute to the core technology.

The best thing about Microsoft's decision is that the company has committed to continue to provide the same resources and investments in Entity Framework as it had when it was originally created. So the move to open source isn't just cutting loose a dying technology so that those who foolishly keep using it can adapt to the technology's changing needs in the face of abandonment. Instead, Entity Framework will remain a core data access technology built in to the .NET Framework for the foreseeable future.

Entity Framework joins ASP.NET MVC 4, ASP.NET Web API, and Razor as open source. If you care about those technologies, you can get the source code, explore it, modify and adapt it to your own uses, and follow the daily builds and check-ins as Microsoft and the community move forward to new releases. These are indeed exciting times for .NET developers! …

Read more.

Paul Van Bladel (@paulbladel) posted (Un)deleting me softly to his LightSwitch for the Enterprise blog on 9/13/2012:

Introduction

There are occasions when you want the deletion of a record (and its underlying object graph) not to result in a physical delete. Instead, the record (and the underlying object graph) is marked as deleted by means of a dedicated field, “IsDeleted”, which is set to true. This mechanism is called a soft delete.

Beth Massi has a great post on this subject (http://blogs.msdn.com/b/bethmassi/archive/2011/11/18/using-the-save-and-query-pipeline-to-archive-deleted-records.aspx). We’ll elaborate further on this post.

The requirements

- we want to be able to apply a soft delete and make sure that the soft delete is propagated automatically to the underlying object graph;

- we want entities to be able to opt in to the soft delete ‘framework’; if they participate, compile-time checking will ensure that the entity has the “IsDeleted” field;

- we want a simple way to activate filtering so that soft-deleted entities are not shown;

- we want to be able to “soft-undelete” an entity and, optionally, the underlying object graph. It makes sense to make this feature permission dependent. Obviously, using this feature goes together with deactivating the filtering on soft-deleted items (in other words: when you want to undelete something, you need to see it).

The demo setting

To be able to test things in a decent manner, we make sure the object graph for our test setting is rich enough.

As you can see, we have both 1 to many structures as well as 1 to 0..1 relations.

How do we use the soft delete base infrastructure?

    public partial class ApplicationDataService
    {
        partial void Customers_Filter(ref Expression<Func<Customer, bool>> filter)
        {
            filter = filter.NoSoftDeletesFilter<Customer>();
        }

        partial void Customers_Deleting(Customer entity)
        {
            entity.ApplySoftDelete();
        }

        partial void Orders_Deleting(Order entity)
        {
            entity.ApplySoftDelete();
        }

        partial void CustomerAddressDatas_Deleting(CustomerAddressData entity)
        {
            entity.ApplySoftDelete();
        }

        partial void OrderLInes_Deleting(OrderLIne entity)
        {
            entity.ApplySoftDelete();
        }
    }

So, server side, soft-delete for the above object graph is introduced with a few extension method calls.

The undelete functionality is triggered client side:

    partial void UndoSoftDelete_Execute()
    {
        this.Customers.SelectedItem.UndoSoftDelete();
    }

Each entity type that you want to participate in the soft delete engine should implement the following interface:

    public interface IIsSoftDeletable
    {
        bool IsDeleted { get; set; }
    }

The base infrastructure

The following three extension methods make up the soft delete engine.

Make sure to include the following class in the common project!

    public static class SoftDeleteExtensions
    {
        // Builds the predicate "item => item.IsDeleted != true".
        // Note that the incoming filter is replaced, not combined with the new predicate.
        public static Expression<Func<T, bool>> NoSoftDeletesFilter<T>(this Expression<Func<T, bool>> filter) where T : IIsSoftDeletable
        {
            ParameterExpression paramExpr = Expression.Parameter(typeof(T), "item");
            PropertyInfo isDeletedPropInfo = typeof(T).GetProperty("IsDeleted", BindingFlags.Public | BindingFlags.GetProperty | BindingFlags.Instance);
            MemberExpression isDeletedPropExpr = Expression.Property(paramExpr, isDeletedPropInfo);
            ConstantExpression isTrue = Expression.Constant(true, typeof(bool));
            BinaryExpression isNotDeleted = Expression.NotEqual(isDeletedPropExpr, isTrue);
            return (Expression.Lambda<Func<T, bool>>(isNotDeleted, paramExpr));
        }

        // Cancels the pending physical delete and flags the entity as deleted instead.
        public static void ApplySoftDelete(this IEntityObject entity)
        {
            if (entity is IIsSoftDeletable)
            {
                entity.Details.DiscardChanges();
                entity.Details.Properties["IsDeleted"].Value = true;
            }
            else
            {
                throw new ArgumentException("You are trying to apply a soft delete, but the entity does not have an 'IsDeleted' field");
            }
        }

        // Clears the IsDeleted flag and, when recurse is true, follows every
        // cascade-delete relation to undelete the related entities as well.
        public static void UndoSoftDelete(this IEntityObject entity, bool recurse = false)
        {
            if (!TrySetIsDeletedProperty(entity))
                return;

            if (recurse)
            {
                IEnumerable<INavigationPropertyDefinition> navigationProperties = entity.Details.GetModel().NavigationProperties;
                foreach (INavigationPropertyDefinition item in navigationProperties)
                {
                    IAssociationEndDefinition associationEnd = item.Association.Ends.Where(e => e.EntityType.Name == entity.Details.EntitySet.Details.EntityType.Name).FirstOrDefault();
                    if (associationEnd != null)
                    {
                        var deleteRuleAttribute = associationEnd.Attributes.OfType<IDeleteRuleAttribute>().FirstOrDefault();
                        if (deleteRuleAttribute != null && deleteRuleAttribute.Action == DeleteAction.Cascade)
                        {
                            string navigationPropName = item.Name;
                            if (item.ToEnd.Multiplicity == AssociationEndMultiplicity.Many)
                            {
                                foreach (var navigationPropValue in (entity.Details.Properties[navigationPropName].Value as IEnumerable<IEntityObject>))
                                {
                                    // Pass recurse along so that deeper levels of the graph are undeleted too.
                                    UndoSoftDelete(navigationPropValue, recurse);
                                }
                            }
                            else
                            {
                                // A 1 to 0..1 relation may have no related entity at all.
                                var relatedEntity = entity.Details.Properties[navigationPropName].Value as IEntityObject;
                                if (relatedEntity != null)
                                {
                                    UndoSoftDelete(relatedEntity, recurse);
                                }
                            }
                        }
                    }
                }
            }
        }

        private static bool TrySetIsDeletedProperty(IEntityObject entity)
        {
            bool result = false;
            if (entity is IIsSoftDeletable)
            {
                entity.Details.Properties["IsDeleted"].Value = false;
                result = true;
            }
            else
            {
                throw new ArgumentException("You are trying to apply a soft delete undo, but the entity does not have an 'IsDeleted' field");
            }
            return result;
        }
    }

As you can see, the soft delete filter is pretty advanced and is a combination of expression tree handling and reflective coding. I got some help from Justin Anderson via the LightSwitch forum (http://social.msdn.microsoft.com/Forums/en-US/lightswitch/thread/ff9c5bf4-001b-4d38-84b7-06af906eff14).

Note also that the undo delete functionality is optionally recursive; if recursion is active, we traverse the complete object graph.
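To make the expression tree part more concrete, here is a minimal, self-contained sketch that builds the same kind of predicate (`item => item.IsDeleted != true`) and applies it to an in-memory list. It runs outside LightSwitch; `DemoCustomer`, `BuildNotDeletedFilter` and `SoftDeleteFilterDemo` are hypothetical names used only for illustration and are not part of the original post.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

// Same contract as the interface shown in the post.
public interface IIsSoftDeletable
{
    bool IsDeleted { get; set; }
}

// Hypothetical stand-in for a LightSwitch entity (assumption for this sketch).
public class DemoCustomer : IIsSoftDeletable
{
    public string Name { get; set; }
    public bool IsDeleted { get; set; }
}

public static class SoftDeleteFilterDemo
{
    // Builds the expression tree for: item => item.IsDeleted != true
    public static Expression<Func<T, bool>> BuildNotDeletedFilter<T>()
        where T : IIsSoftDeletable
    {
        ParameterExpression item = Expression.Parameter(typeof(T), "item");
        MemberExpression isDeleted = Expression.Property(item, "IsDeleted");
        BinaryExpression notDeleted = Expression.NotEqual(isDeleted, Expression.Constant(true));
        return Expression.Lambda<Func<T, bool>>(notDeleted, item);
    }

    public static void Main()
    {
        var customers = new List<DemoCustomer>
        {
            new DemoCustomer { Name = "Alice", IsDeleted = false },
            new DemoCustomer { Name = "Bob", IsDeleted = true },
            new DemoCustomer { Name = "Carol", IsDeleted = false }
        };

        Expression<Func<DemoCustomer, bool>> filter = BuildNotDeletedFilter<DemoCustomer>();

        // AsQueryable lets the expression tree be applied the way a query provider would apply it.
        foreach (DemoCustomer customer in customers.AsQueryable().Where(filter))
        {
            Console.WriteLine(customer.Name); // Alice and Carol; Bob is filtered out
        }
    }
}
```

The generic constraint is what gives the compile-time check mentioned in the requirements: calling `BuildNotDeletedFilter<string>()` does not compile, because `string` does not implement `IIsSoftDeletable`.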


Windows Azure Infrastructure and DevOps

•• Haishi Bai (@HaishiBai2010) posted Windows Azure Cloud Service on One A4 Page on 9/15/2012:

One picture is worth a thousand words. Here is my weekend creation – Tens of Windows Azure Cloud Services concepts, processes and features fit into one A4 page, with margins! Following the blue arrows, you can see how a Cloud Service is created, tested, deployed, and maintained. And along the way you get many details such as how different types of endpoint work, how diagnostic data is managed, where configuration settings come from, and even which OS and IIS choices are available.

There’s no better time to start with Cloud than Right Now, and you can get started with no upfront investment by getting a free 90-day trial from www.windowsazure.com. You can download a PDF version of this poster from the link at the end of this post (link). Feel free to print it or mail it to your friends! And please come back to check out updated versions! Thank you and have a nice weekend!

Best regards,

Haishi

Windows Azure Technical Evangelist, Microsoft

Gaurav Mantri (@gmantri) posted About Windows Azure Publish Settings File and how to create your own Publish Settings File on 9/13/2012:

In this blog post I’m going to talk about the publish settings file for Windows Azure and how you can create your own publish settings file.

Publish Settings File

In this section we’re going to talk about the publish settings file, how it works, and some observations about using this file. If you’re aware of this, please feel free to skip this section.

What Is It?

A publish settings file is an XML file which contains information about your subscription. It contains information about all subscriptions associated with a user’s Live Id (i.e. all subscriptions for which a user is either an administrator or a co-administrator). It also contains a management certificate which can be used to authenticate Windows Azure Service Management API requests. Typically a publish settings file looks something like this:

    <?xml version="1.0" encoding="utf-8"?>
    <PublishData>
      <PublishProfile
        PublishMethod="AzureServiceManagementAPI"
        Url="https://management.core.windows.net/"
        ManagementCertificate="MIIKPAIBAzCCCfwGCSqGSIb3DQEHAaCCCe0EggnpMIIJ5TCCBe4GCSqGSIb3DQEHAaCCBd8EggXbMIIF1zCCBdMGCyqGSIb3DQEMCgECoIIE7jCCBOowHAYKKoZIhvcNAQwBAzAOBAhSgQEdjGWWzgICB9AEggTI7EIY9TKTWkDqxG+j9Bnxw8k4a5OC3hw4lp8r/5Ch7uJ4AuY2cxNf+pt2gqejjcwxdhNn+suzebsrs3cI/7NEHka+hrxBrjZH1e5bUDjUAWYY6nm6iZYveS53nKcuwZHIjTrGBTa0xQSfMcBs5I5WaVfHVEKtVp67pLsInGBy+uExXwVk2/SmZFjKlKenQfSrnexUKvDt8WibWd/O42sqYIwcDPaKccSbbGNylFDal0cEkDLKPvpWBVwmPXsfPVcOuGKX1+LqxLX5+iCOND07+MS5gzD7c2IF+hTkOIk2CtDGV5rXBQWr5pqD5TecuYAPfuB5U6NtQ1Xzd0byiWqMP2zkTW3+KgEvxVHwzjYp24/4gCci5RnzTCINCT6OnZy3vWZLXEatyoys2iiZNTsEicV2J74na+1ChqPrFOErf5FvvHU6fVOsi/VpxQ2hq+crvwYrnM+mgpVl1Xai8ngwgAcruU9oK52z8hJSaRQ1zQNDFasepNRuSAFJzmddjF6w+6j3P04/i+ybTf/vIRuDH9tqujoYW2/LR6aaG+9GEfs1+g0Ld031nE6IbT9pM0HhIX4QfJhdby0G9fvGfvdXAQtWuK5AMHlrAp+G/ktGaGcoX3LyK9LeRp/JttSGCqB54XrVys4Uf6QRm1MB3o1czz8oqFVTxkqYjHocqRuCKIoxS/q13RpYWO1M9XJCKZ3iO+siYtJseAdUdqCjgJ1uD1UPBuZLHTrkPk+GSxFxjsYzh3Za3pAr/V4uArA4oujO1RP7v7a34cNQnhzWHjyvSnrvpYEyEfxg4nZtQMdTh6LY5NzI2QT0iCWgclRm47wlMYNUoSe4mDXPGgYgTntyqm+CHkTQxJjsD6Bb1B5xbns0/mRGegB2XofPjtShsqnsMLofhqVs8jFflYSKu7TpOeWZT55ItW6veTxpZNXtZbk8KAtsaT11p/6iNZ7vj9ptgFWkdFTgZt9EkHh+f68wd7CekBYK2fr5aw8iyxMY0iRdhoFOujnhAO+kfaCqi8i6k9+Yj8RreRvBvSv3V7vuUDZYzCofeucfR/qZAF9jEU8xaYrxj2HFOxFC+oTHJnak2W/rPL/TguTwtivthir/osRV2tvONPEIBOGAKKnoEM7aA3bNCpfeMsa2tyUZYmNWbIIWyKUGWKiRmC7dBLU+WTAHCXqyieWfusaQ+7Aoy5XQKOvYznR8SG6APv0jeoFG8S8NqU1Dlg1sDG5cRMcSdQbbP7ihbZS1BZhD6Z6W/NsBHdFsQ8GFI/4oZDkYEka201uc2zrp1HNMe3veH18t8H0EqLFkaiWa+gTWj1T7+xmE5XMNLhWJyf9+i8ncqPop6ND+mSILdXrkqsQhgLmY2cPxdIBvnzbfICk7e9ZQEe4FXRfQ3Du4eaSTqkj0jaCMbTPgc9WrqSoO7otu5N4UT46s59hJOJNDjs4TE/DaXui4/a4orO82UnEmP1+XyUsaGYW1pSPbFM0FrOo5hQoBRhJeSRFbwWV5v6L+sJDeykh9Lbz3qeaFcyBxuI6TSSW5oTGFKsBh1NaeXRkEFlWwEBwQMYALPwLYExRnt26IGhnhMBE8I+wbvqbv4aoOsSuyo240vi/Kfrjp9aGn2us0fw63cujZhZtAMYHRMBMGCSqGSIb3DQEJFTEGBAQBAAAAMFsGCSqGSIb3DQEJFDFOHkwAewAxADIARQBGAEQAOAA4ADUALQA3AEUARgA3AC0ANAA4ADEANwAtAEIAOQAzADQALQBBAEEARAAyAEUARgBBADAAQQBCADkARAB9MF0GCSsGAQQBgjcRATFQHk4ATQBpAGMAcgBvAHMAbwBmAHQAIABTAG8AZgB0AHcAYQByAGUAIABLAGUAeQAgAFMAdABvAHIAYQBnAGUAIABQAHIAbwB2AGkAZABlAHIwggPvBgkqhkiG9w0BBwagggPgMIID3AIBADCCA9UGCSqGSIb3DQEHATAcBgoqhkiG9w0BDAEGMA4ECIP11AEEFXKrAgIH0ICCA6jZ7y2kaiXQkwoOvHiwTZ1fdt91K9N3g9NgY0VY1ww5g8qMYGSkVWgq7+eWu373+no6Qgwh7xEWi/+FLDXc4FWX7TEJCPlnaZjYrdpAXjJdug3Jxxxhq0Tl9koZ/os6feOqy/zVyG2s9fPByC56n9mFQp0v/OA47bdl5of530zVX7ClejQdwENpCetLV400Pr123bG/TV9rxK4WGIE5V9OA3QBnQftkCjy7/J2BY2eaBvfV2+PMyUK8est/DGaM4WnuPIokMt6kIX6/zoZ6zDLBMYwC1ptI1JbR2J64E6NGElq1rZ/4phSTWz18njt6fuYJs6BckQt5/H/7N+L20gxYUEUyIjkhHpiBsEebIx5Q8FYt1s3gYxgCOn0JSR4AKMNTF9JFdbXJVVpukIy8HIBjoJSReF+D+GYdOlwexSnnajUCX7SIZiryGXSOfeHVffr/8UIfPOD3kdabhG3GkAHVdZmBbDMzTSa7u/g42YZncp85dUA4byOVbcGT6OOdzm+wlJnLgXnsmprXsnFhjk2gD0F7QzYFdRXU5mUgBuhzCnIQBKnR5eyS2LKLun//m1grhHkZAJ7wlm9bF2KxsmalQUu6j3dWa7aj4w7OJ+1eHZNSjfjsAS2IzglHpmpELdL0F9Hp8VYOvat9SkaybyDRDKudl84lUD1QxQvTluE/cDzcNN9f2/cpqrXKn28lGljalG6WhiOjrFH2mlbkblx2WYpvodGYGCnfGILtD+KJQbfs/oKSXj8VexH88MJrs9FJwFd0vLoiYmMAeVYzH/AgSiyXBEoTceUwU/nH8j0janXWzM3MKGso+hdYapcBqemywqpUvWQ49xc59LbEUB4Actr1iw7PHWkxelPjZMqVxRHy25R8uiCc++NTtUeHsDDSRj481a0+VoCy9L14S/qPBedvKX6Rl5FaLvLiPYp48IHFiW0XHDxQCiVKaXIRfR55GjzpuHFp/ofbFwB+nfybcmQq59zZihWezn5znu2ZrMLUqZygkhOdpA8eJeqEROormuwoNNeYWzATFIkrp8KZe+u+2iRNsa6ixLU/q4+R4X83iTcCHbganCl1S9jhTIT7NHZ++TD9pbwgHedSY2y+YxuTAZmFbQCKa8FvIQvRZ6jl7IFeuUkXgxAdtZk7Uirgsr7cN1PlEW5wyrIM3H/35XPVFcdR/ckpkExRlX3KPzQWNjbMy+ygkpGjZXDGwIAAm1iriBJ1NQMbS+b3V9Ag/jcMMDiExiMwNzAfMAcGBSsOAwIaBBTCmV5zt/CoSMkXdfeDuUPQ1HZ7BwQUCQKAaL+Em/bYMSpVzwFHw6REQLk=">
        <Subscription
          Id="d11fff51-e7c1-49ef-b833-f9204c337943"
          Name="My Awesome Subscription" />
      </PublishProfile>
    </PublishData>

What it does is ease your deployment process through Visual Studio or other tools which support it. To explain this, consider how things were done prior to the introduction of this functionality. One would need to go through the following steps:

- Create a self-signed certificate using either IIS or the makecert utility.

- Import that certificate into your local certificate store.

- Export that certificate into .cer file format and upload that certificate into Windows Azure Portal.

- Now use that certificate in Visual Studio or any other tool which consumes Windows Azure Service Management API. You would need to specify your subscription id (meaning another trip to portal) for each subscription you would want to use.

With a publish settings file, one would go through the following steps:

- Visit the link (see below – How To Get It) and download the file. You may need to sign in using your Live Id.

- Use this file in the tools which support it like Visual Studio or Cerebrata’s Azure Management Tools.

- All your subscriptions will be imported successfully.

How To Get It?

To get it, please visit the following link: https://windows.azure.com/download/publishprofile.aspx

You may need to sign in with your Live Id. If the process is successful, you will be asked to save a file with the “publishsettings” extension. You can open it in Notepad or any text editor to see its contents.

How It Works?

So when you request a publish settings file from the link above, Windows Azure creates a new management certificate and attaches that certificate to all of your subscriptions. The publish settings file contains the raw data of that certificate and all your subscriptions. Any tool which supports this functionality simply parses this XML file, reads the certificate data and installs that certificate in your local certificate store (usually Current User/Personal (or My)). Since the Windows Azure Service Management API makes use of certificate-based authentication and the same certificate is present in both the Windows Azure Management Certificates section for your subscription and in your local computer’s certificate store, authentication works like a charm.
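The parse-and-install step described above can be sketched roughly as follows. This is only an illustration of what a consuming tool might do, not the code of Visual Studio or any particular tool; the class name `PublishSettingsImporter` is hypothetical, and the element and attribute names are taken from the sample file shown earlier.

```csharp
using System;
using System.Linq;
using System.Security.Cryptography.X509Certificates;
using System.Xml.Linq;

public static class PublishSettingsImporter
{
    public static void Main(string[] args)
    {
        if (args.Length != 1)
        {
            Console.WriteLine("Usage: PublishSettingsImporter <path to .publishsettings file>");
            return;
        }

        // Parse the XML file and locate the publish profile.
        XDocument document = XDocument.Load(args[0]);
        XElement profile = document.Descendants("PublishProfile").FirstOrDefault();
        XAttribute certificateAttribute = profile == null ? null : profile.Attribute("ManagementCertificate");
        if (certificateAttribute == null)
        {
            Console.WriteLine("No PublishProfile element with a ManagementCertificate attribute was found.");
            return;
        }

        // The attribute holds a Base64-encoded PFX with an empty password.
        byte[] pfxBytes = Convert.FromBase64String(certificateAttribute.Value);
        var certificate = new X509Certificate2(pfxBytes, string.Empty, X509KeyStorageFlags.PersistKeySet);

        // Install the certificate in Current User/Personal (My).
        var store = new X509Store(StoreName.My, StoreLocation.CurrentUser);
        store.Open(OpenFlags.ReadWrite);
        try
        {
            store.Add(certificate);
        }
        finally
        {
            store.Close();
        }

        // List the subscriptions the certificate is meant to manage.
        foreach (XElement subscription in profile.Elements("Subscription"))
        {
            Console.WriteLine("{0} ({1})", (string)subscription.Attribute("Name"), (string)subscription.Attribute("Id"));
        }

        Console.WriteLine("Installed certificate with thumbprint " + certificate.Thumbprint);
    }
}
```

`X509KeyStorageFlags.PersistKeySet` is used so that the private key stays associated with the certificate after it is added to the store.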

Some Comments

Here are some comments about using this file:

- Undoubtedly, this is a great way to get all your subscriptions into the tools which consume the Windows Azure Service Management API.

- Since this is a text file containing not only your subscriptions but also the management certificate required to manage those subscriptions, extreme care must be taken to protect this file. Anybody who has access to this file has complete access to your subscriptions. If you think that this file is compromised, you must delete the certificate from the management certificates section in Windows Azure Portal to prevent any misuse.

- Currently there’s a limit of 10 management certificates per subscription. As mentioned above, the process for creating a publish settings file always creates a new certificate and associates it with all subscriptions associated with your Live Id. If you try to generate a publish settings file once that limit has been reached, you will get an error.

- Currently the process of creating a publish profile file does not allow you to choose for which subscription you wish to generate this file. It automatically creates this file and puts all of your subscriptions in that file. This might pose some problem in certain scenarios:

For example consider the scenario where you’re a consultant working for different clients and are administrator/co-administrator on subscriptions for those clients. When you generate this file, it will not only try and create management certificates in each of your client’s subscriptions but also include all those subscription ids in this file. This would make it difficult for you to share this file with your colleagues at one client location.

In yet another scenario, consider that you’re an administrator for your company’s Windows Azure subscriptions and you want to use this file, but you also want to restrict access to those subscriptions. For example, you may have a subscription just for your QA environments and another one just for your production environments. When you generate the file using this functionality, you can’t specify that you wish to generate it only for your QA environment subscription.

We’ll try and address those issues in the next section where we create a publish setting file for a subscription using an existing management certificate.

Creating Your Own Publish Settings File

We all realize that publish settings file eases the friction of managing your subscriptions considerably. Indeed it has its shortcomings but the ease factor clearly outweighs the shortcomings.

I was actually helping somebody out on MSDN forums where the person posted about these shortcomings. You can read that thread here: http://social.msdn.microsoft.com/Forums/en-US/windowsazuretroubleshooting/thread/5054447c-b04a-4a69-bf89-d17c441b1c73/. This gave me an idea about building a small utility which you can use to create your own publish settings file. In this section I’m going to describe that.

The Code

I built a simple console application which creates this publish setting file. The source code for the application is listed below. Feel free to use the code as is or modify it to suit your need.

    using System;
    using System.Collections.Generic;
    using System.Linq;
    using System.Text;
    using System.Xml;
    using System.Security.Cryptography.X509Certificates;
    using System.IO;

    namespace CreatePublishSettingsFile
    {
        class Program
        {
            private static string subscriptionId = "[your subscription id]";
            private static string subscriptionName = "My Awesome Subscription";
            private static string certificateThumbprint = "[certificate thumbprint. the certificate must have private key]";
            private static StoreLocation certificateStoreLocation = StoreLocation.CurrentUser;
            private static StoreName certificateStoreName = StoreName.My;

            // Template for the file: {0} = certificate data, {1} = subscription id, {2} = subscription name.
            private static string publishFileFormat = @"<?xml version=""1.0"" encoding=""utf-8""?>
    <PublishData>
      <PublishProfile
        PublishMethod=""AzureServiceManagementAPI""
        Url=""https://management.core.windows.net/""
        ManagementCertificate=""{0}"">
        <Subscription
          Id=""{1}""
          Name=""{2}"" />
      </PublishProfile>
    </PublishData>";

            static void Main(string[] args)
            {
                // Look up the certificate by thumbprint in the configured store.
                X509Store certificateStore = new X509Store(certificateStoreName, certificateStoreLocation);
                certificateStore.Open(OpenFlags.ReadOnly);
                X509Certificate2Collection certificates = certificateStore.Certificates;
                var matchingCertificates = certificates.Find(X509FindType.FindByThumbprint, certificateThumbprint, false);
                if (matchingCertificates.Count == 0)
                {
                    Console.WriteLine("No matching certificate found. Please ensure that proper values are specified for Certificate Store Name, Location and Thumbprint");
                }
                else
                {
                    var certificate = matchingCertificates[0];
                    // Export as PKCS #12 (PFX) with an empty password and Base64-encode the result.
                    var certificateData = Convert.ToBase64String(certificate.Export(X509ContentType.Pkcs12, string.Empty));
                    if (string.IsNullOrWhiteSpace(subscriptionName))
                    {
                        subscriptionName = subscriptionId;
                    }
                    string publishSettingsFileData = string.Format(publishFileFormat, certificateData, subscriptionId, subscriptionName);
                    // The file is written to the temp folder and named after the subscription id.
                    string fileName = Path.GetTempPath() + subscriptionId + ".publishsettings";
                    File.WriteAllBytes(fileName, Encoding.UTF8.GetBytes(publishSettingsFileData));
                    Console.WriteLine("Publish settings file written successfully at: " + fileName);
                }
                Console.WriteLine("Press any key to terminate the program.");
                Console.ReadLine();
            }
        }
    }

How It Works?

Basically, this application reads the data for a certificate (you specify the thumbprint and certificate location), converts it into a Base64 string and writes that data to an XML file along with the subscription id. Pretty straightforward!!

Once you have created this file, you can share it with your team members and they can use it with Visual Studio or other tools to manage their subscriptions.

Some Considerations

There’re a few things you would need to keep in mind:

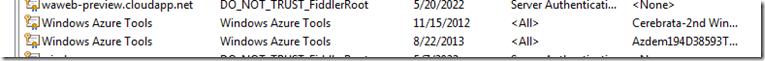

- The certificate you’re using must have a private key associated with it. To check if the certificate you’re using has the private key, just look at the icon beside that certificate. It should show a little “key”, as shown in the picture above. If the certificate lacks a private key, the sample application above will still run and create the file, but you will get a 403 error when you try to authenticate using that certificate.

- The certificate which gets placed in this file is in Pkcs12 (Pfx) format with an empty string as its password. This is similar to the way the certificate is placed in the publish settings file generated by Windows Azure.
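The private key requirement can also be checked in code before the file is generated. The following is a small sketch, assuming the certificate lives in the Current User/Personal store; `PrivateKeyCheck` and `FindCertificateWithPrivateKey` are hypothetical names introduced for this example.

```csharp
using System;
using System.Security.Cryptography.X509Certificates;

public static class PrivateKeyCheck
{
    // Returns the first certificate with the given thumbprint that has a private key, or null if none does.
    public static X509Certificate2 FindCertificateWithPrivateKey(string thumbprint, StoreName storeName, StoreLocation storeLocation)
    {
        var store = new X509Store(storeName, storeLocation);
        store.Open(OpenFlags.ReadOnly);
        try
        {
            X509Certificate2Collection matches = store.Certificates.Find(X509FindType.FindByThumbprint, thumbprint, false);
            foreach (X509Certificate2 candidate in matches)
            {
                if (candidate.HasPrivateKey)
                {
                    return candidate;
                }
            }
            return null;
        }
        finally
        {
            store.Close();
        }
    }

    public static void Main(string[] args)
    {
        if (args.Length != 1)
        {
            Console.WriteLine("Usage: PrivateKeyCheck <certificate thumbprint>");
            return;
        }

        X509Certificate2 certificate = FindCertificateWithPrivateKey(args[0], StoreName.My, StoreLocation.CurrentUser);
        Console.WriteLine(certificate == null
            ? "No certificate with a private key was found for that thumbprint."
            : "Found a certificate with a private key: " + certificate.Subject);
    }
}
```

A check like this could run before the export step, so that a file which would later fail authentication is never written in the first place.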

How Would I Go about Using It?

If I were you, I would make use of this utility this way:

- Create a new certificate either by using IIS or makecert utility.

- Install that certificate into my certificate store.

- Export that certificate from certificate store in cer file format and upload it in Windows Azure portal and associate it with my subscriptions.

- Then I would run this utility to create new publish settings file and distribute it (and test it of course before distributing it).
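For the "test it" part of the last step, one option is to send a simple read-only request to the Service Management API with the certificate attached. The sketch below lists hosted services; the `x-ms-version` value and the `/services/hostedservices` path are assumptions based on the Service Management REST API as documented at the time and are not taken from the original post, and `ManagementCertificateTest` is a hypothetical name.

```csharp
using System;
using System.IO;
using System.Net;
using System.Security.Cryptography.X509Certificates;

public static class ManagementCertificateTest
{
    public static void Main(string[] args)
    {
        if (args.Length != 2)
        {
            Console.WriteLine("Usage: ManagementCertificateTest <subscription id> <certificate thumbprint>");
            return;
        }

        string subscriptionId = args[0];
        string thumbprint = args[1];

        // Find the management certificate in Current User/Personal (My).
        var store = new X509Store(StoreName.My, StoreLocation.CurrentUser);
        store.Open(OpenFlags.ReadOnly);
        X509Certificate2Collection matches = store.Certificates.Find(X509FindType.FindByThumbprint, thumbprint, false);
        store.Close();

        if (matches.Count == 0)
        {
            Console.WriteLine("No certificate with that thumbprint was found.");
            return;
        }

        // Assumed endpoint and API version (see the note above the code).
        string url = "https://management.core.windows.net/" + subscriptionId + "/services/hostedservices";
        var request = (HttpWebRequest)WebRequest.Create(url);
        request.Method = "GET";
        request.Headers.Add("x-ms-version", "2012-03-01");
        request.ClientCertificates.Add(matches[0]);

        try
        {
            using (var response = (HttpWebResponse)request.GetResponse())
            using (var reader = new StreamReader(response.GetResponseStream()))
            {
                Console.WriteLine("Status: " + (int)response.StatusCode);
                Console.WriteLine(reader.ReadToEnd());
            }
        }
        catch (WebException ex)
        {
            // A 403 here typically means the certificate is not accepted for this subscription.
            var errorResponse = ex.Response as HttpWebResponse;
            Console.WriteLine(errorResponse != null
                ? "Request failed with status " + (int)errorResponse.StatusCode
                : "Request failed: " + ex.Message);
        }
    }
}
```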

Summary

In this post we learnt (hopefully) about the publish settings file and saw that, despite its shortcomings, it’s very useful. We also saw how we can create our own publish settings file.

I hope you’ve found this information useful. As always, if you notice anything incorrect please let me know ASAP and I will fix it.

David Linthicum (@DavidLinthicum) asserted “Whether or not taxes are coming to a cloud near you, the uncertainty could slow cloud adoption and blur business cases” in a deck for his Don't tax the cloud! article of 9/13/2012 for InfoWorld’s Cloud Computing blog:

My good friend Joe McKendrick cites a recent survey of tax professionals at KPMG that concludes, frustratingly, that the "cloud is still so new that there still needs to be homework done on what may or may not be taxed." This kind of vagueness drives me nuts. Worse, it fosters a huge sense of financial uncertainty -- one most tech geeks don't even know exists -- around using cloud computing at all.

There are lots of opinions about whether the government has the authority to tax cloud computing. But there are no hard-and-fast laws, regulations, or court precedents to provide definitive answers as to whether cloud services can be taxed and, if so, what kind and for how much. If you're looking to deploy or use cloud computing, you could very well get a surprise tax bill or tax-based price increase at some point -- or not.

The cloud is not new, so why is this issue getting attention now? The reason: In the United States, there's a growing movement to tax services, not just the sale of physical goods, as government coffers at all levels have shrunk. Cloud computing could easily fall into the services category.

The taxation question has a profound effect on the business case for cloud computing. Today, your business plan might show a 20 percent savings over a five-year period by using public cloud computing. But taxes could wipe out that savings and your business justification, in which case you're better off keeping your data and compute cycles on premises.

I say "could" because the potential cloud services tax rate is unknown, as are which services might be taxable. The tax cost could be anywhere from trivial to obscene, if it materializes at all.

Back in the 1990s, the federal government placed a moratorium on taxing the emerging world of Web-based commerce (e-commerce). This let the new industry grow without having government's snout in the transaction trough. This year, states have all but ended that tax exemption, as e-commerce has become simply another form of sales, so its tax exemption created a tilted playing field against merchants with physical stores.

I ask that the feds create a tax moratorium for the cloud so that it can grow more quickly. Let's keep the cloud tax-free for a few years to encourage progress in cloud adoption.

It’s probably safe to say that David is referring to sales taxes. California begins taxing Amazon and other online purchases on 9/15/2012.

Brian Gracely (@bgracely) posted It's DevOps, or it's the Wrong Conversation on 9/11/2012:

As I was watching this thread develop, with various comments from people that live and breathe IT, one thing kept coming to mind. IT people often try and justify new technology with technology reasoning. It's analogous to answering a question with another question.

Far too often, because IT has almost always been looked at as a cost-center and measured for ROI based on cost-reduction or productivity improvements, technologists feel the need to drive the justification for a new project based on cost.

- How will it be cheaper than the last project?

- How will it reduce spending for the business in some way?

- How can this eliminate something that isn't as effective as this new technology?

The right answer is DevOps.

Huh? Why would I use a technology term, "DevOps", as the answer to the question when I just got done saying we shouldn't use technology to explain technology? Because the technology use of "DevOps" (short for the combined model of Development + Operations) has created the wrong way to shorten the appropriate words. To answer the question, "How to Connect IT and Business?", think of "DevOps" as Developing + Opportunities.

While IT often thinks about technology as a challenge, or a learning experience (or a career-path), the business only sees technology as a potential means to an end. An end that is often focused on the top-line of the company's P&L or Balance Sheet. The business is focused on how to develop that next opportunity to grow the business or position it strategically to alter the market dynamics. The means to that development could be through capital (new investments in people, equipment, partnerships, or technology), or new operational models that involve technology. Its success is ultimately measured in revenue or marketshare, but it has interim measurements like "time to market", "share of audience attention", "customer satisfaction levels". Things that technology can have a direct impact upon.

So if you want to be the IT professional that can better connect the technology you deliver to the business, take a step back from focusing on costs or the nuances of the technology, and instead focus on the right kind of DevOps. Be knowledgeable about how to refactor the IT project list to better impact a new business DevOps and you'll find the business beginning to come to you more often with a desire to partner for the overall business success.

ManageEngine reported ManageEngine Monitors Windows Azure with Applications Manager in a 9/11/2012 press release (missed when published):

ManageEngine, the real-time IT management company, today announced its performance monitoring software package, Applications Manager, now supports monitoring of the Microsoft Windows Azure cloud platform and applications running on the platform as well as monitoring of transactions in .NET environments. The company also introduced the Applications Manager Cloud Starter Edition for organizations looking to quickly leverage a proven, cost-effective solution for monitoring their cloud services.

With more revenue-critical applications on PaaS systems such as Windows Azure, organizations need to proactively and cost-effectively monitor the performance of their cloud-based applications to maximize uptime and to optimize capacity planning. Meanwhile, the success of .NET means that more IT departments have growing numbers of .NET applications running in both traditional and cloud environments, requiring the extension of performance monitoring to include the Microsoft framework.

“Traditionally, SaaS companies prefer to build versus buy their monitoring software,” said Gibu Mathew, director of product management at ManageEngine. “But today, those companies have less time to build monitoring solutions that are becoming increasingly complex. We’ve refined Applications Manager to address the complexity of supporting Windows Azure and .NET. We’ve also addressed IT departments’ time — and cash — crunch with a starter edition that lets users monitor public clouds and apps out of the box as well as retain the flexibility they need for monitoring their cloud services.”

Applications Manager Refinements

The Windows Azure monitoring support in Applications Manager helps IT administrators ensure their business-critical applications running on the Windows Azure platform are performing optimally at all times. It helps IT administrators to view the performance of web, worker and VM roles, troubleshoot performance issues proactively and also optimize resource usage. Among its many benefits, Windows Azure monitoring lets IT professionals:

- Discover Windows Azure applications and all role instances

- Monitor the different states of Windows Azure role instances, receive alerts for critical states and troubleshoot before end users are affected

- Monitor CPU usage, memory and network traffic of VMs

- Monitor request execution time, requests rejected and TCP connections of web apps

The latest release of Applications Manager also includes .NET transaction monitoring to help development teams identify slow spots in transactions by showing method level traces and database queries. Similarly, Applications Manager now provides Apdex user experience scores for .NET components, helping IT communicate application performance achievements to the line of business managers in business-friendly language.

Finally, the new Applications Manager Cloud Starter Edition targets organizations looking to launch cloud-based offerings. Like the rest of the Applications Manager editions, the Cloud Starter Edition is easy to deploy and provides a simple, easy-to-use interface for monitoring physical, virtual and cloud entities. The Cloud Starter Edition supports monitoring of private and public clouds as well as Linux servers, virtualization software such as VMware or in-memory databases like Memcached, and messaging software like RabbitMQ. Organizations having a much broader portfolio of applications — including commercial, packaged applications — in their data center can opt for either Professional or Enterprise editions of Applications Manager.

Pricing and Availability

Applications Manager 10.8 is available immediately with prices starting at $795 for up to 25 servers or applications. Applications Manager Cloud Starter Edition is also available immediately with prices starting at $495 for up to 50 servers or applications. A free, fully functional, 30-day trial version is available at http://ow.ly/7evOs.

For more information on Applications Manager, visit http://www.manageengine.com/apm. For more information on ManageEngine, please visit http://www.manageengine.com; follow the company blog at http://blogs.manageengine.com, on Facebook at http://www.facebook.com/ManageEngine and on Twitter at @ManageEngine.


Windows Azure Platform Appliance (WAPA), Hosted, Hyper-V and Private/Hybrid Clouds

My (@rogerjenn) Windows Azure Services allows multi-tenant IaaS cloud article of 9/13/2012 for TechTarget’s Search Cloud Computing blog begins:

Microsoft added another arrow to its cloud computing quiver last month with the announcement of the technical preview of Windows Azure Services for Windows Server. The move enables cloud hosting service providers to offer customers subscriptions to multi-tenant Windows Azure VMs and high-density websites with SQL Server or MySQL databases from a private cloud. Whether enterprise IT can pass down this or similar self-service provisioning features to end users is the question.

The Windows Azure Services for Windows Server (WAS4WS) technical preview (TP) for Hosting Service Providers (HSP) provides only Windows Azure Infrastructure as a Service (IaaS) core services for a private cloud -- stateful virtual machines (VMs) and websites with persistent storage and optional SQL Server or MySQL back ends.

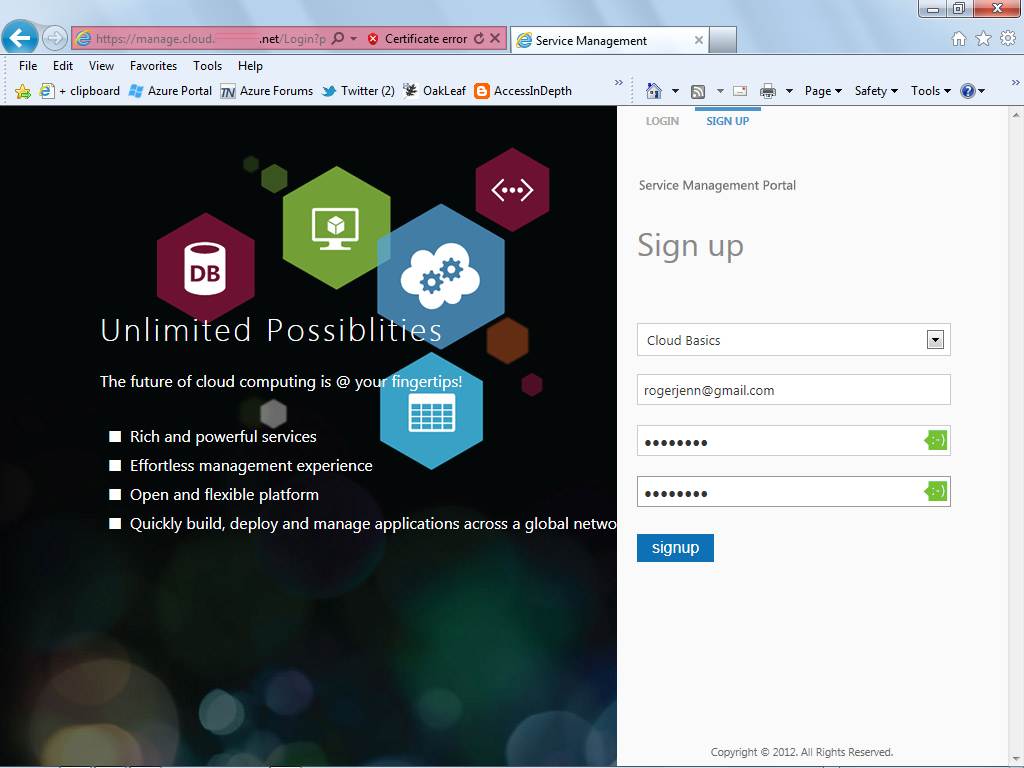

Figure 1. The portal's sign-up form lets potential tenants choose a subscription type, such as Cloud Basics, and provide credentials for managing user accounts.

As a customizable hosting-specific version of the new Modern UI Style (formerly Metro), the Windows Azure Management Portal allows admins to set up multiple subscription plans with varying limits on the number and size of Windows Azure Virtual Machines (WAVMs), Windows Azure Web Sites (WAWS) and SQL Server or MySQL databases (Figure 1). The portal takes advantage of a full-featured, RESTful management API. WAS4WS offers the same choice of Web apps and programming languages as the Microsoft-hosted WAWS.

When trying to understand what WAS4WS is, it's important to first look at what it isn’t. For one, it's not the elusive Windows Azure Platform Appliance (WAPA) that Microsoft announced at its Worldwide Partner Conference in 2010. WAPA was intended to enable selected partners -- initially Dell, HP, eBay and Fujitsu -- to duplicate Windows Azure features in their own or their customers' data centers. Currently, Fujitsu is the only third-party provider running WAPA in a non-Microsoft data center.

WAS4WS doesn't include Windows Azure Storage services, nor does it deploy the Windows Azure fabric to implement high-availability features with data replication. Tenants will need to add Web Worker instances to ensure availability and to scale out for traffic surges.

The closest public cloud equivalent of WAS4WS (other than WAVMs) is the Amazon Virtual Private Cloud (VPC) with Windows Server 2008 R2 images. Neither Amazon nor Google offers a private cloud version of their public cloud services, but Eucalyptus Systems has an agreement with Amazon Web Services (AWS) that enables users to migrate workloads between existing data centers and AWS while using the same management tools and skills across both environments.

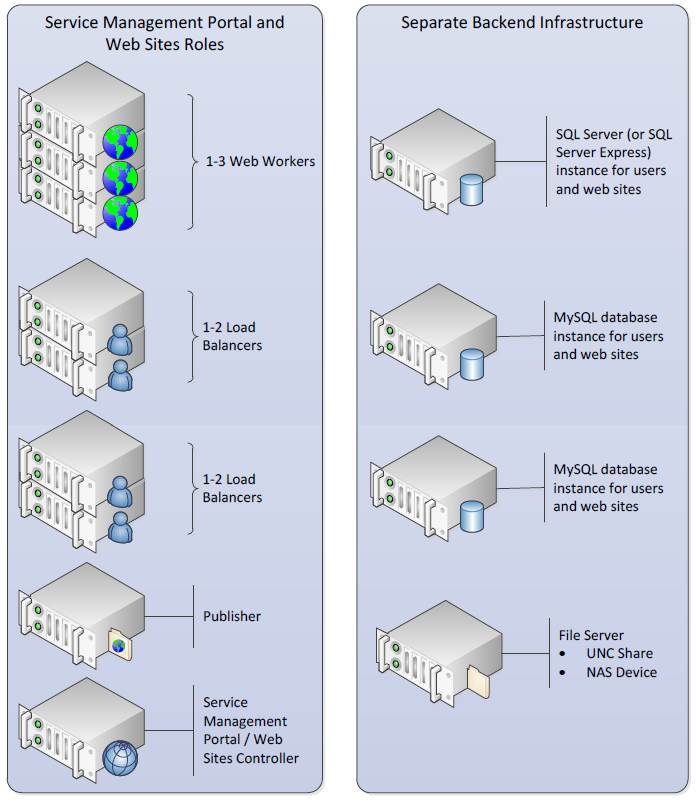

Figure 2. WAS4WS' Service Management Portal and API orchestrate Microsoft System Center 2012.

So, what is WAS4WS really? And what are its main features? The Windows Server team's Getting Started Guide includes a basic system diagram for the Windows Azure Services for Windows Server Technical Preview (Figure 2).

The following are a few important features in the Service Management Portal as well as Web Sites-specific server role descriptions:

- Web workers: A Web Sites-specific version of IIS Web server that processes clients' Web requests.

- Load balancer(s): An IIS Web server with a Web Sites-specific version of application request routing that accepts Web requests from clients, routes requests to Web workers and returns responses to clients.

- Publisher: The public version of WebDeploy and a Web Sites-specific version of an FTP server that provide transparent content publishing for WebMatrix, Visual Studio and FTP clients.

- Service Management Portal/Web Sites Controller: A server that hosts several functions, including the following:

  - Management Service -- Admin Site: Where administrators can create Web Site clouds, author plans and manage user subscriptions.

  - Management Service -- Tenant Site: Where users can sign up and create Web Sites, virtual machines (VMs) and databases.

  - Web Farm Framework to provision and manage server roles.

  - Resource Metering service to monitor Web servers and site resource usage.

According to the guide, a future technical preview will support public DNS mapping; the current TP handles only a single domain with DNS A records. …

And continues with “Private cloud testing requires hefty hardware and software” and “Understanding the Microsoft licensing morass” sections.

Full disclosure: I’m a paid contributor to TechTarget’s SearchCloudComputing.com blog.


Cloud Security and Governance

Richard Santalesa reported the availability of a Whitepaper – Local & State Govt Data Security and Cyber Risks on 9/13/2012:

Senior Attorney Richard Santalesa introduced a whitepaper on legal risks and cyber insurance at this past week’s fall meeting of the New York State Association of Counties, dubbed the think tank for NY’s counties since 1923.

The white paper was released at a breakout session on the meeting agenda addressing “Cyber Security and Cyber Risks in Your County” where Mr. Santalesa’s co-author, Christine Marciano, President of Cyber Data Risk Managers, LLC, a leading cyber risk insurance broker and agent, discussed the landscape of data and information security risks that local and state governments face and how cyber risk insurance can be a tool for ameliorating such sudden and costly potential liabilities.

The white paper entitled Confronting Data Security Risks – Cyber Insurance As a Risk Management Tool For Local and State Government is available now for free download.

Ed Moyle (@securitycurve) posted Cloud security monitoring: Challenges and guidance to TechTarget’s SearchCloudSecurity.com blog on 9/12/2012:

Security monitoring isn't something most organizations have historically done well, for a number of reasons: large and increasing log data volumes, competing priorities from business-facing initiatives, lack of resources and review fatigue, among others. But in the cloud, monitoring becomes even more complicated and difficult because the same forces that make cloud possible can have a negative impact on monitoring controls and erode an organization's ability to take action in response to events.

This means taking a laissez-faire attitude to monitoring after a cloud service is implemented will result in either serious security blind spots or a near-complete inability to monitor at all. To avoid this, organizations need to think strategically about monitoring in a cloud environment; they need to think through how they approach monitoring in new cloud deployments, but they also need to review existing deployments to make sure monitoring capabilities are what they expect. You'd be surprised at how often organizations fail to do this, and how often they get burned as a result.

Cloud security monitoring challenges

Most security practitioners are probably familiar with some of the challenges that impact detective controls when they intersect cloud-enabling technologies like virtualization; for example, the impact a shift to "backplane communications" can have on networking security technologies like intrusion detection systems (IDS) when virtual hosts on the same hypervisor communicate directly without going over the network. But there are other more subtle impacts as well.

Specifically, consider what happens when traditional log management, log correlation or security information and event management (SIEM) tools are used to support a dynamic virtual environment where virtual machines are spawned on the fly to meet spikes in demand and are recycled when no longer required. Will existing monitoring tools provide relevant information in that bursting scenario? Unless you plan specifically for it, they probably won't. Even if you do configure transient or ephemeral hosts to send logs to a central log correlation and archival tool, trying to tie together log data originating from multiple ephemeral hosts (for example hosts using IP addresses that have been recycled multiple times over) can be an exercise in futility. Also, technologies like vMotion that dynamically change where virtual hosts reside on the network can have unintended consequences as well; for example, by relocating an image to a location where log data can no longer reach log collection and forwarding agents.
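The recycled-address problem described above can be illustrated with a minimal Python sketch. It assumes you can obtain address lease records (which instance held which IP address, and when) from your provisioning system. The record layout, the instance names and the `resolve_instance` helper are hypothetical, and the sketch assumes leases for one address never overlap. The idea is to attribute a log entry to a stable instance ID rather than to the IP address alone.

```python
from bisect import bisect_right
from collections import defaultdict
from datetime import datetime

# Hypothetical lease records: (ip, instance_id, lease_start, lease_end).
# In practice these would come from whatever provisioning records you keep.
LEASES = [
    ("10.0.0.5", "vm-a", datetime(2012, 9, 12, 9, 0), datetime(2012, 9, 12, 10, 0)),
    ("10.0.0.5", "vm-b", datetime(2012, 9, 12, 10, 5), datetime(2012, 9, 12, 11, 0)),
]


def build_index(leases):
    """Group leases by IP address and sort each group by lease start time."""
    index = defaultdict(list)
    for ip, instance_id, start, end in leases:
        index[ip].append((start, end, instance_id))
    for entries in index.values():
        entries.sort()
    return index


def resolve_instance(index, ip, timestamp):
    """Return the instance that held `ip` at `timestamp`, or None if unknown."""
    entries = index.get(ip, [])
    starts = [start for start, _, _ in entries]
    # Find the last lease that started at or before the timestamp.
    pos = bisect_right(starts, timestamp) - 1
    if pos < 0:
        return None
    _, end, instance_id = entries[pos]
    # The lease must still have been active when the event was logged.
    return instance_id if timestamp < end else None
```

With an index built by `build_index(LEASES)`, two log lines from 10.0.0.5 that are an hour apart resolve to different instances, which is the distinction an IP-only correlation rule would miss.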

In addition to technical challenges, there are business and process challenges. Aside from the obvious -- for example, shifts in scope where more sensitive applications are moved to an existing environment only evaluated for low-sensitivity usage -- there are also service provider issues. Is your service provider really keeping the types of records you think they are? Have you checked? And what support do you have for visibility into the lower levels of the stack that are (by design) a black box in the cloud model you're using (for example, the operating system logs in a Software as a Service (SaaS) or Platform as a Service (PaaS) deployment)?

Cloud security monitoring guidance

To develop a cloud security monitoring strategy, you'll need two pieces of data: anticipated scope of coverage (i.e. what systems you want to monitor) and a list of the monitoring capabilities you expect to be in place.

Your list of monitoring capabilities should include those implemented by your service provider (if you're using one), those you rolled out along with your cloud deployment, and those you currently rely upon (i.e. existing capabilities). With this list plus the scope, you can determine gaps between what you expect and what's actually in place. Closing these gaps should be included in your intermediate-term planning efforts. Options for how to address the gaps will depend on your cloud strategy; it can involve pushing back on service providers to implement more/better monitoring, deployment of new products, or expansion of the scope of existing controls.
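The gap determination described above amounts to a set difference per in-scope system. The following Python sketch shows one way to express it; the system names, capability labels and the `monitoring_gaps` function are illustrative assumptions, not part of any product or of the article's method.

```python
def monitoring_gaps(scope, expected, in_place):
    """Map each in-scope system to the expected capabilities it lacks.

    scope     -- systems you intend to monitor
    expected  -- capabilities you expect for every system in scope
    in_place  -- dict of system -> capabilities actually present, whether
                 supplied by the provider, rolled out with the cloud
                 deployment, or inherited from existing controls
    """
    gaps = {}
    for system in scope:
        missing = set(expected) - set(in_place.get(system, ()))
        if missing:
            gaps[system] = sorted(missing)
    return gaps


# Hypothetical inventory used only to demonstrate the comparison.
scope = ["web-frontend", "billing-db"]
expected = {"os-logs", "app-logs", "ids"}
in_place = {
    "web-frontend": {"app-logs", "ids"},
    "billing-db": {"os-logs", "app-logs", "ids"},
}

print(monitoring_gaps(scope, expected, in_place))
```

Systems that appear in the result are the ones to carry into intermediate-term planning; a system missing from `in_place` altogether is reported as lacking every expected capability.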

To be most effective, the inventory of monitoring capabilities should also lead to tactical review for each capability. You need to specifically test each one in a routine (periodic) fashion; you'd be surprised how often seemingly subtle changes can directly impact monitoring controls. When that happens, failures can persist indefinitely without someone actually looking for those failures and fixing them. The tactical review should also include drills – simulated investigations that attempt to actively trace events to their source. This should involve requesting log artifacts from your service provider and sifting through application and OS log artifacts. The goal is to discover -- and ideally fix -- any problems before they have an opportunity to impact a real-life security event like a breach.

About the author:

Ed Moyle is a senior security strategist with Savvis as well as a founding partner of Security Curve.

Full disclosure: I’m a paid contributor to TechTarget’s SearchCloudComputing.com.


Cloud Computing Events

• Beth Massi (@bethmassi) reported Silicon Valley Code Camp October 6th & 7th in a 9/14/2012 post:

Wow I can’t believe it’s that time of year again! I’ve been speaking at SVCC since it started 7 years ago and it just keeps getting bigger and bigger. Looks like the list of topics and schedule was just finalized and I’m excited at the great line-up of sessions in so many different technology areas. The one and only Scott Guthrie will be making an appearance on Sunday so be sure to check out his Azure sessions.

Yours truly will be speaking about business application development using LightSwitch in Visual Studio 2012 focusing on new features like OData support and publishing to Azure web sites. I’ll also be doing a session on our HTML client preview to show you how quick and easy we’re making mobile business app development. Hope you can join me!

Building Open Data (OData) Services and Applications using LightSwitch

The Open Data Protocol (OData) is a REST-ful protocol for exposing and consuming data on the web and has become the new standard for data-based services. Many enterprises use OData as a means to exchange data between systems and partners, as well as to provide easy access to their data stores from a variety of clients on a variety of platforms. In this session, see how LightSwitch in Visual Studio 2012 has embraced OData, making it easy to consume as well as create data services in the LightSwitch middle-tier. Learn how the LightSwitch development environment makes it easy to define business rules and user permissions that always run in these services no matter what client calls them. Learn advanced techniques on how to extend the services with custom code. Finally, you will see how to call these OData services from other clients like mobile, Office, and Windows 8 Metro-style applications.
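Because OData is addressed through plain URLs, any client that can issue an HTTP GET can query such a service. As a rough illustration, the Python sketch below builds a query URL from the standard OData system query options `$filter`, `$orderby` and `$top`. The host name, the `ApplicationData.svc` service root and the `Customers` entity set are assumptions made for the example, and `odata_query_url` is a hypothetical helper rather than part of LightSwitch.

```python
from urllib.parse import quote, urlencode


def odata_query_url(service_root, entity_set, filter_expr=None, order_by=None, top=None):
    """Build an OData query URL using standard system query options."""
    options = {}
    if filter_expr:
        options["$filter"] = filter_expr
    if order_by:
        options["$orderby"] = order_by
    if top is not None:
        options["$top"] = str(top)

    url = f"{service_root.rstrip('/')}/{entity_set}"
    if options:
        # Keep the leading "$" readable and encode spaces as %20 rather than "+".
        url += "?" + urlencode(options, quote_via=quote, safe="$")
    return url


# Hypothetical service root and entity set, for illustration only.
url = odata_query_url(
    "https://example.com/ApplicationData.svc/",
    "Customers",
    filter_expr="Country eq 'USA'",
    top=5,
)
print(url)
```

The resulting URL can be passed to any HTTP client; authentication and response format are left out here because they depend on how the service is published.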

Building Business Applications for Tablet Devices

“Consumerization of IT” is a growing trend whereby employees are bringing their personal devices to the workplace for work-related activities. The appeal of tablet devices for both consumer- and business-oriented scenarios has been a key catalyst for this trend; the growing expectation that enterprise apps should run on “my” device and take advantage of the tablet’s form factor and device capabilities has particularly opened up new challenges for custom business app development. In this demo-heavy presentation, we’ll show how LightSwitch in Visual Studio 2012 makes it easy to build HTML5/JS business apps that can be deployed to Azure and run on Windows 8, iOS, and Android tablets and mobile devices.

Hope to see you there!

Himanshu Singh (@himanshuks) posted this week’s Windows Azure Community News Roundup (Edition #36) on 9/14/2012:

Welcome to the latest edition of our weekly roundup of the latest community-driven news, content and conversations about cloud computing and Windows Azure. Here are the highlights for this week.

Articles and Blog Posts

- Windows Azure Mobile Services (VIDEO) Channel 9 (posted Sept 12)

- Disk to Disk to Cloud Protection of Hyper-V Virtual Machines by @hvredevoort

- CI Deployment of (Windows) Azure Web Roles Using TeamCity by @skirkland (posted Sept 11)