Windows Azure and Cloud Computing Posts for 7/28/2010+

A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA)

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock).


Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now freely download by FTP and save the following two online-only PDF chapters of Cloud Computing with the Windows Azure Platform, which have been updated for SQL Azure’s January 4, 2010 commercial release:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available at no charge from the book's Code Download page.

Azure Blob, Drive, Table and Queue Services

Adron Hall posted Aggregated Web Services Pt I on 7/28/2010:

I’ve been working through an architecture scenario recently, and this is what I have so far: multiple external web services, some SOAP and some REST, and some data sources in a SQL Server database, Azure Table Storage, and flat files of some sort. All of these sources need to be accessed by a web site for read-only display. In the diagram below I’ve drawn out the three primary points of reference. [Emphasis added.]

- The services that are external; Contract, Table Store, Document, Search, and Help Desk Services.

- The Website Web Services Facade, which would be an aggregated layer that then provides the various services via an internally controlled services layer.

- On top of that will be the web site, accessing the services from the aggregated layer with jQuery.

After creating this to get some basic idea of how these things should fit together, I moved on to elaborate on the web services aggregation layer. What I’ve sketched in this diagram is the correlation to architectural elements and the physical environments they would prospectively be deployed to. Again, broken out by the three tiers as shown above.

- Website and the respective jQuery, AJAX, and Markup/CSS for display.

- Web Services, which include the actual architecture breakout; Facade Interface, Facade Aggregation Component, Cache & Non-cached DTOs (Data Transfer Objects), Cache Database/Storage, Caching Process, Lower Layer Aggregation Component, and the Poller Process for polling the external services.

- The cache is intended to use SQL Server, thus the red call out to the physical SQL Server cluster.

- The last tier, which isn’t being developed but just provides data, is the External Services, shown primarily to provide a full picture of all the layers.
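The facade, cache, and poller elements described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not Adron's design: the class `AggregatingFacade`, its `poll`/`get`/`aggregate` methods, the `ttl_seconds` parameter, and the in-memory dictionary that stands in for the SQL Server cache database are all hypothetical.

```python
import time
from typing import Any, Callable, Dict, Iterable, Optional, Tuple


class AggregatingFacade:
    """Hypothetical facade that serves cached DTOs gathered from several external services."""

    def __init__(self, sources: Dict[str, Callable[[], Any]], ttl_seconds: float = 60.0):
        self._sources = sources  # name -> function that calls one external service
        self._ttl = ttl_seconds  # how long a cached DTO is considered fresh
        self._cache: Dict[str, Tuple[float, Any]] = {}  # stands in for the SQL Server cache

    def _now(self, now: Optional[float]) -> float:
        return time.monotonic() if now is None else now

    def poll(self, now: Optional[float] = None) -> None:
        """Poller process: refresh every external source into the cache."""
        stamp = self._now(now)
        for name, fetch in self._sources.items():
            self._cache[name] = (stamp, fetch())

    def get(self, name: str, now: Optional[float] = None) -> Any:
        """Return a cached DTO, falling back to the external service when missing or stale."""
        stamp = self._now(now)
        entry = self._cache.get(name)
        if entry is None or stamp - entry[0] > self._ttl:
            data = self._sources[name]()  # non-cached path straight to the service
            self._cache[name] = (stamp, data)
            return data
        return entry[1]

    def aggregate(self, names: Iterable[str], now: Optional[float] = None) -> Dict[str, Any]:
        """Facade interface: one call returns data from several services."""
        return {name: self.get(name, now) for name in names}


calls = {"contracts": 0}


def fetch_contracts():
    calls["contracts"] += 1
    return ["contract-1"]


facade = AggregatingFacade({"contracts": fetch_contracts, "helpdesk": lambda: ["ticket-1"]})
facade.poll(now=0)
print(facade.aggregate(["contracts", "helpdesk"], now=30))  # served from the cache
```

Passing `now` explicitly keeps the example deterministic; in a real poller the timestamps would come from the clock, and the web site's jQuery calls would hit only `aggregate`, never the external services directly.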

Aggregate Web Services

I primarily drew up these diagrams to discuss the architecture and to poke holes in it. Speaking of which, if any readers have input or questions, or are curious, please type up a comment and I’ll answer it ASAP.

As the effort continues there are some other great how-to write-ups I will be putting together: everything from unit testing, mocking (with Moq), and how to set up test services, to other elements of the project. I’ll have all this coming, so keep reading and let me know what you think of the design so far, subscribe via e-mail (look to the metadata section below), or grab the RSS feed for the blog (see below also).

<Return to section navigation list>

SQL Azure Database, Codename “Dallas” and OData

Updated My Linking Microsoft Access 2010 Tables to a SQL Azure Database tutorial on 7/28/2010:

- Corrected author name from Ryan McMinn to Russell Sinclair

- Added more details about SQL Azure’s lack of support for extended properties (required for subdatasheets and lookup fields)

- Added a cumbersome workaround for the lack of persistent subdatasheets

- Added a demo of pass-through queries

Still no workaround for lookup fields with SQL Azure.

Wayne Walter Berry explains the differences between SQL Server and SQL Azure stored procedure caching in his Understanding the Procedure Cache on SQL Azure post of 7/28/2010 to the SQL Azure team blog:

Both SQL Server and SQL Azure have a procedure cache which is used to improve the performance of queries on the server. This blog post will talk about how the procedure cache works on SQL Azure.

SQL Azure has a pool of memory that is used to store both execution plans and data buffers. This pool of memory is used for all databases on the physical machine, regardless of the database’s owner. Even though the pool spans all databases on the machine, no one can see execution plans that they do not own. The percentage of the pool allocated to either execution plans or data buffers fluctuates dynamically, depending on the state of the system. The part of the memory pool that is used to store execution plans is referred to as the procedure cache.

When any SQL statement is executed in SQL Azure, the relational engine first looks through the procedure cache to check whether an execution plan for the same SQL statement already exists. SQL Azure reuses any existing plan it finds, saving the overhead of recompiling the SQL statement. If no execution plan exists, SQL Azure generates a new execution plan for the query.
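The lookup-then-compile behavior can be modeled as a dictionary keyed by statement text. This toy model is an illustration under stated assumptions, not SQL Azure's implementation: `ProcedureCache`, `execute`, and the fake plan strings are hypothetical, and real plan matching involves more than raw text.

```python
import hashlib
from typing import Dict


class ProcedureCache:
    """Toy plan cache keyed by exact statement text (illustrative only)."""

    def __init__(self) -> None:
        self._plans: Dict[str, str] = {}
        self.compilations = 0

    def _compile(self, sql: str) -> str:
        # Stand-in for the optimizer: pretend compilation is expensive and count it.
        self.compilations += 1
        return "plan-" + hashlib.sha1(sql.encode("utf-8")).hexdigest()[:8]

    def execute(self, sql: str) -> str:
        plan = self._plans.get(sql)
        if plan is None:  # no cached plan: compile one and store it
            plan = self._compile(sql)
            self._plans[sql] = plan
        return plan  # cached plan reused without recompiling


cache = ProcedureCache()
first = cache.execute("SELECT * FROM dbo.Orders")
second = cache.execute("SELECT * FROM dbo.Orders")
print(first == second, cache.compilations)  # True 1
```

In this model even a whitespace change produces a new cache key and a second compilation, which is one reason parameterized statements tend to get better plan reuse.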

Replicas, the Procedure Cache, and SQL Azure

An instance of a database running on SQL Azure is called a replica; three replicas of any one database are running at a given time. One replica is considered the primary replica, and all read and write queries go to this replica. The other two replicas are considered secondary; any data written to the primary replica is also written to the secondary replicas. If the primary replica fails, needs to be cycled for updates, or there is a load-balancing operation, a secondary replica is promoted to primary.

Each replica resides on a different physical machine, and those machines are in separate, fully redundant racks. Because the procedure cache is maintained per server, if the primary replica fails or is cycled, the newly promoted secondary has no query plans for that database in its procedure cache.
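To see why a promoted secondary starts with a cold cache, here is a hypothetical model in which each server owns one plan cache and every query goes to the primary. `Server`, `ReplicaSet`, and `fail_over` are invented names, and failover is simplified: the old primary is simply dropped rather than rebuilt.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Server:
    name: str
    plan_cache: Dict[str, str] = field(default_factory=dict)  # one cache per physical server


@dataclass
class ReplicaSet:
    primary: Server
    secondaries: List[Server]

    def run(self, sql: str) -> bool:
        """Run a query on the primary; return True if its plan was already cached."""
        hit = sql in self.primary.plan_cache
        self.primary.plan_cache.setdefault(sql, f"plan for {sql}")
        return hit

    def fail_over(self) -> None:
        # Promote the first secondary. Its server never compiled our queries,
        # so its plan cache holds nothing for this database.
        self.primary = self.secondaries.pop(0)


replicas = ReplicaSet(Server("A"), [Server("B"), Server("C")])
print(replicas.run("SELECT 1"), replicas.run("SELECT 1"))  # False True
replicas.fail_over()
print(replicas.primary.name, replicas.run("SELECT 1"))  # B False
```

The same effect explains why per-plan statistics, which live alongside the cached plans, can look very different seconds after a promotion.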

Procedure Cache Statistics

SQL Azure keeps track of performance statistics for every query plan in the cache. You can view these statistics for your database queries using the dynamic management view (DMV) sys.dm_exec_query_stats like so:

    SELECT * FROM sys.dm_exec_query_stats

For a more detailed example, see this blog post, in which I showed how to find queries with poor I/O performance by querying sys.dm_exec_query_stats.

sys.dm_exec_query_stats reports statistics only for the primary replica of your SQL Azure database. If a secondary is promoted to primary, the results from sys.dm_exec_query_stats might be much different just seconds later: queries might suddenly be missing from the cache, or execution counts could be smaller. Typically you would see them grow over time.

Removing Execution Plans from the Procedure Cache

Execution plans remain in the procedure cache as long as there is enough memory to store them. When memory pressure exists, SQL Azure uses a cost-based approach to determine which execution plans to remove from the procedure cache.

SQL Azure removes plans from the cache regardless of the plan owner; all plans across all databases on the server are evaluated for removal. No portion of the memory pool is set aside for each database. In other words, the procedure cache is optimized for the benefit of the machine, not of any individual database.

SQL Azure currently doesn’t support DBCC FREEPROCCACHE (Transact-SQL), so you cannot manually remove an execution plan from the cache. However, if you make changes to a table or view referenced by the query (ALTER TABLE and ALTER VIEW), the plan will be removed from the cache.
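Both removal paths described above, machine-wide eviction under memory pressure and invalidation after ALTER TABLE or ALTER VIEW, can be sketched as a toy shared cache. Everything here is an assumption for illustration: `SharedPlanCache`, the plan-count `capacity` standing in for memory, and ranking by a single `cost` number, which is a simplification of SQL Server's actual cost-based algorithm.

```python
from typing import Dict, Iterable, Set, Tuple

PlanKey = Tuple[str, str]  # (database, statement text)


class SharedPlanCache:
    """Toy machine-wide plan cache shared by every database on the server."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity  # stand-in for the memory available to plans
        self.plans: Dict[PlanKey, Tuple[float, Set[str]]] = {}

    def add(self, database: str, sql: str, cost: float, objects: Iterable[str]) -> None:
        self.plans[(database, sql)] = (cost, set(objects))
        while len(self.plans) > self.capacity:
            # Memory pressure: evict the cheapest plan to rebuild, whichever database owns it.
            victim = min(self.plans, key=lambda key: self.plans[key][0])
            del self.plans[victim]

    def alter(self, database: str, obj: str) -> None:
        """Model ALTER TABLE / ALTER VIEW: drop this database's plans that reference obj."""
        stale = [key for key, (_, objs) in self.plans.items()
                 if key[0] == database and obj in objs]
        for key in stale:
            del self.plans[key]


cache = SharedPlanCache(capacity=2)
cache.add("db1", "SELECT * FROM Orders", cost=5.0, objects=["Orders"])
cache.add("db2", "SELECT * FROM Items", cost=1.0, objects=["Items"])
cache.add("db1", "SELECT TOP 1 * FROM Orders", cost=3.0, objects=["Orders"])
print(sorted(cache.plans))  # db2's cheap plan was evicted to make room for db1's
cache.alter("db1", "Orders")
print(cache.plans)  # {}
```

Note that db2 lost its plan purely because of db1's workload, which is the "optimized for the machine, not the database" point in miniature.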

Summary

In many ways the procedure cache works much like SQL Server’s; SQL Azure is built on top of SQL Server. However, there are some differences, and I hope you understand them better now. …

Mike Taulty’s OData: The Open Data Protocol post of 7/28/2010 explains OData with a series of hands-on queries against the NetFlix data service:

Mike [Ormond] asked me to write something about OData for this week’s MSDN Flash Newsletter – reproducing that below – mostly just a bit of fun as it’s hard to fit anything into these short articles.

It’s hard to write an article to teach you anything about OData in 500 words. Why set out on that journey when we can do something “less boring” instead:

Start your browser. I recommend IE8 because it seems to offer the most control over viewing raw XML, but you can use “View Source” in Firefox or Chrome.

Remember in IE8 to switch off the lipstick that it applies to ATOM/RSS data by using the following menu options:

Tools | Internet Options | Content | Feeds and Web Slices | Settings | Feed Reading View OFF

Point it at http://odata.netflix.com/Catalog (the Netflix movie catalog in OData format)

Notice that the default XML namespace is the open standard ATOM Publishing Protocol

Query for Movie People: http://odata.netflix.com/Catalog/People.

Notice that the default XML namespace is the open standard ATOM XML format

Double extra bonus points for spotting that it is supplemented with elements from the Open Data Protocol published under Microsoft’s Open Specification Promise

Scroll down to the bottom and notice that the data is being paged by the server; if you’re feeling adventurous, follow the hyperlink to the next page of data (the one with $skiptoken). If you’ve got some time to kill, keep following those “next” links for a few hours.

Try to locate Nicole Kidman:

http://odata.netflix.com/Catalog/People?$filter=endswith(Name,'Kidman')

Too many Kidmans? Choose the top record:

http://odata.netflix.com/Catalog/People?$filter=endswith(Name,'Kidman')&$top=1

Perhaps not the best way of finding Nicole (‘Papa?’); use the primary key instead:

http://odata.netflix.com/Catalog/People(49446)

Which films has Nicole been in? Who could forget the classic “Days of Thunder” with Tom Cruise as “Cole Trickle”:

http://odata.netflix.com/Catalog/People(49446)/TitlesActedIn

What if I wanted Nicole’s info as well as the titles she’s acted in?:

http://odata.netflix.com/Catalog/People(49446)?$expand=TitlesActedIn

Too much data? Sort these titles alphabetically and return the first page of data for a page size of 3:

http://odata.netflix.com/Catalog/People(49446)/TitlesActedIn?$orderby=ShortName&$top=3
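The query URLs above all follow one pattern: a resource path followed by `$`-prefixed system query options. A small Python helper makes the pattern explicit; `odata_url` and its keyword-to-option mapping are a hypothetical convenience, not part of any OData client library, and the function only builds strings without contacting the service.

```python
from urllib.parse import quote

BASE_URL = "http://odata.netflix.com/Catalog"
SAFE_CHARS = "(),'"  # keep OData expression punctuation readable


def odata_url(resource: str, **options: object) -> str:
    """Build a query URL; each keyword becomes a $-prefixed OData system query option."""
    url = f"{BASE_URL}/{resource}"
    if not options:
        return url
    query = "&".join(
        f"${name}={quote(str(value), safe=SAFE_CHARS)}" for name, value in options.items()
    )
    return f"{url}?{query}"


print(odata_url("People", filter="endswith(Name,'Kidman')", top=1))
print(odata_url("People(49446)", expand="TitlesActedIn"))
print(odata_url("People(49446)/TitlesActedIn", orderby="ShortName", top=3))
```

Characters outside `SAFE_CHARS` are percent-encoded, so a filter value containing a space would come out as `%20`. The same helper would emit other standard OData options such as `$skip` for paging.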

But to do paging I’ll be needing the total record count (notice m:count element with value 47):

Next page:

Prefer JSON? Well, there’s no accounting for taste but I know “Cole Trickle” preferred it too:

Getting a feel for it? It’s not just about querying though…

OData is a web protocol based on open standards for RESTful querying and modification of exposed collections of data. It takes the basics of REST and adds mechanisms for addressing, metadata, batching, and data representation using HTTP, AtomPub, Atom and JSON.
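Because the Atom representation is plain XML, a client can read the paging metadata mentioned earlier (the `m:count` element and the “next” link carrying `$skiptoken`) with the standard library. The feed below is a hand-written, abbreviated sample rather than a captured Netflix response, and its `$skiptoken=EXAMPLE` value is a placeholder; `paging_info` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET

NS = {
    "atom": "http://www.w3.org/2005/Atom",
    "m": "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata",
}

SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom"
      xmlns:m="http://schemas.microsoft.com/ado/2007/08/dataservices/metadata">
  <title type="text">TitlesActedIn</title>
  <m:count>47</m:count>
  <entry><title type="text">Days of Thunder</title></entry>
  <link rel="next" href="http://odata.netflix.com/Catalog/People(49446)/TitlesActedIn?$skiptoken=EXAMPLE"/>
</feed>"""


def paging_info(feed_xml: str):
    """Return (total count or None, next-page URL or None, entries on this page)."""
    root = ET.fromstring(feed_xml)
    count_el = root.find("m:count", NS)
    next_el = root.find("atom:link[@rel='next']", NS)
    count = int(count_el.text) if count_el is not None else None
    next_href = next_el.get("href") if next_el is not None else None
    return count, next_href, len(root.findall("atom:entry", NS))


print(paging_info(SAMPLE_FEED))
```

A client can keep requesting `next_href` until it comes back as `None`, which is the loop the “few hours of following next links” joke describes.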

Want to expose OData endpoints? See here. There is support in a number of products, and also in the .NET Framework (v3.5 SP1 onward), for easily exposing OData endpoints from standard data models such as those provided by Entity Framework, and also from custom data models provided by your own code.

Want to consume OData endpoints? See here. There are client libraries for all kinds of clients including AJAX, full .NET, Silverlight and Windows Phone 7.

Either way, go here and watch Pablo explain it more fully (double-click the video once you’ve started it playing, as no one can be expected to watch a video playing in a 1.5-inch square).

Mike’s article is here.

Ron Jacobs posted a “not fully baked” 00:09:35 endpoint.tv - Canonical REST Service screen cast on 7/27/2010:

ca·non·i·cal [ kə nónnik'l ] conforming to general principles: conforming to accepted principles or standard practice

In this episode I'll tell you about my new Canonical REST Service sample code on MSDN Code Gallery, which demonstrates a REST service built with WCF 4 that fully complies with the HTTP specs for use of GET, PUT, POST, and DELETE and includes unit tests to test compliance.

Canonical REST Entity Service (MSDN Code Gallery)

Ron Jacobs

blog http://blogs.msdn.com/rjacobs

twitter @ronljacobs
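The kind of HTTP method semantics Ron's sample tests for can be sketched as an in-memory resource that returns status codes. This is not Ron's WCF code: `EntityResource`, the `/items/{key}` URI shape, and the choice to return 404 when deleting a missing item are assumptions made for illustration.

```python
from http import HTTPStatus
from typing import Any, Dict, Tuple

Response = Tuple[HTTPStatus, Any]


class EntityResource:
    """Hypothetical in-memory entity collection following common HTTP method semantics."""

    def __init__(self) -> None:
        self._items: Dict[int, Any] = {}
        self._next_id = 1

    def get(self, key: int) -> Response:
        if key not in self._items:
            return HTTPStatus.NOT_FOUND, None
        return HTTPStatus.OK, self._items[key]

    def post(self, body: Any) -> Response:
        # POST: the server assigns the key and returns 201 with the new resource's URI.
        key = self._next_id
        self._next_id += 1
        self._items[key] = body
        return HTTPStatus.CREATED, f"/items/{key}"

    def put(self, key: int, body: Any) -> Response:
        # PUT: the client names the resource; 201 if it was created, 200 if replaced.
        created = key not in self._items
        self._items[key] = body
        return (HTTPStatus.CREATED if created else HTTPStatus.OK), body

    def delete(self, key: int) -> Response:
        # DELETE: 204 on success; this sketch returns 404 for a missing resource.
        if key not in self._items:
            return HTTPStatus.NOT_FOUND, None
        del self._items[key]
        return HTTPStatus.NO_CONTENT, None


items = EntityResource()
print(items.post({"name": "widget"}))
print(items.delete(1), items.get(1))
```

Keeping the status-code logic free of any web framework makes it easy to unit-test, which mirrors the compliance-test emphasis of the sample.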

<Return to section navigation list>

AppFabric: Access Control and Service Bus

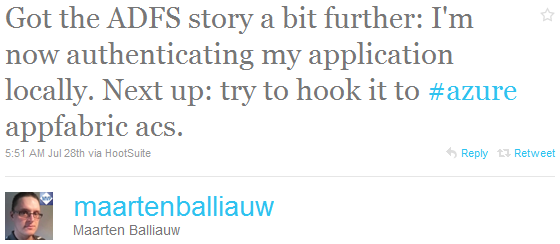

Maarten Balliauw reported about his attempt to hook up Active Directory Federation Services (AD FS) 2.0 with the Windows Azure AppFabric’s Access Control Services in this 7/28/2010 tweet:

As you’ll discover, hooking AD FS to AppFabric’s ACS is not a walk in the park. Stay tuned.

Keith Schultz’s Microsoft Active Directory Federation Services 2.0 article of 7/7/2010 for TechWorld offers this verdict and an overview and review of AD FS 2.0:

Active Directory Federation Services is a great way to extend trusted authenticated access between domains using claims-based authentication. The fact that it works with other open Web standards allows it to extend its reach into non-Microsoft domains, while still allowing trusted access and single-sign-on capabilities. It does require a little work to get set up, but once in place, the benefits really pay off.

Reminder: ARCast.TV - Architecting Solutions with Microsoft Azure AppFabric is a 00:14:07 interview about AppFabric fundamentals:

In this episode, Damon Squires, principal architect at RDA, discusses with Zhiming Xue where in a typical enterprise solution Microsoft Azure AppFabric -- consisting of the Service Bus and the Access Control Service -- may be used, how on-premise data are communicated with these services running in the cloud and what benefits such a hybrid solution may bring to enterprise customers.

Through his first-hand experience of working with the Azure AppFabric, Damon shares a few tips on configuring service endpoints and a workaround for debugging a solution based on the Azure AppFabric.

See John Fontana asks Where do we go from here? Thoughts from the Summit in a 7/28/2010 wrap-up to Ping Identity’s Cloud Identity Summit (#cis2010) in the Cloud Computing Events section.

Ping Identity explains Internet Single Sign-On & Federated Identity in this brief article from their Knowledge Center:

With employees accessing many different resources over the Internet to do their daily jobs, organizations need to maintain a secure working environment while ensuring users remain productive. Single Sign-On (SSO) is ideal for achieving both objectives. However, traditional SSO products were never designed to be used over the Internet with one organization managing users’ identities and different, independent organizations providing the users’ applications.

Internet SSO, as the name implies, is single sign-on that works across the Internet. It allows users with Web browsers to securely and easily access multiple Web applications while only logging in once, even though the applications are most likely managed by different organizations. The key to implementing Internet SSO is a technology and a set of industry standards called “federated identity”. Federated identity allows identities to be shared securely across disparate networks and applications. It is the "glue" that enables Internet SSO to occur at scale.

Federated identity is the glue that enables Internet SSO to occur at scale across numerous identity providers and service providers.

In order for identity federation to work, a few prerequisites must be met. First, users must be authenticated by an organization such as their employer or a hosted authenticator such as Google Apps. The organization that “owns” the users’ identity is known as the Identity Provider or IdP. Identity federation also assumes that another group or organization owns the data and applications that IdP users need to access via Internet SSO. These organizations are known as Service Providers (SPs). Both the IdP and the SP need to be running federated identity software that supports the same federation protocol so the IdP and SP can communicate.

When a user wants to use federated identity to SSO into an application, all they have to do is click on a hyperlink or a shortcut to get directly into their application. If they are not already logged in to their IdP, they are prompted to do so before they are automatically redirected into their application.

The underlying system that makes this secure Internet SSO operation possible is completely invisible to the end user. Under the covers, when the user clicks on the link, the IdP securely communicates a predefined set of facts about the user called attributes to the SP so the SP can set up a Web session for the user.
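The IdP-to-SP hand-off described above can be illustrated with a deliberately simplified signed assertion. This is not SAML or WS-Federation: real deployments use XML signatures, usually with X.509 certificates, whereas this sketch assumes a hypothetical shared HMAC key, and `idp_issue`, `sp_accept`, and the five-minute `max_age` are invented for illustration.

```python
import base64
import hashlib
import hmac
import json

SHARED_KEY = b"hypothetical-idp-sp-secret"  # assumption: a key agreed out of band


def idp_issue(user: str, attributes: dict, now: int) -> str:
    """IdP side: package the user's attributes and sign them."""
    payload = json.dumps({"sub": user, "attrs": attributes, "iat": now}, sort_keys=True).encode()
    sig = hmac.new(SHARED_KEY, payload, hashlib.sha256).digest()
    return base64.urlsafe_b64encode(payload).decode() + "." + base64.urlsafe_b64encode(sig).decode()


def sp_accept(token: str, now: int, max_age: int = 300) -> dict:
    """SP side: verify the signature and freshness before starting a web session."""
    payload_b64, sig_b64 = token.split(".")
    payload = base64.urlsafe_b64decode(payload_b64)
    expected = hmac.new(SHARED_KEY, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, base64.urlsafe_b64decode(sig_b64)):
        raise ValueError("bad signature")
    claims = json.loads(payload)
    if now - claims["iat"] > max_age:
        raise ValueError("assertion expired")
    return claims


token = idp_issue("alice", {"role": "buyer"}, now=1000)
print(sp_accept(token, now=1100)["attrs"])
```

The point the sketch captures is that the SP never sees the user's password; it trusts a set of attributes only because it can verify they came, unmodified and recently, from the IdP.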

Several different federated identity standards have been created over the years to address different use cases. By far the most widely deployed and popular federated identity standard is OASIS Security Assertion Markup Language (SAML). SAML has emerged as the predominant standard for most businesses, government organizations and their service providers.

A similar and related standard, WS-Federation, continues to be found in Microsoft-centric environments. Newer protocols including OpenID, OAuth and CardSpace are attracting some interest for so-called user-centric use cases. Without these federated identity standards, each Internet SSO connection would have to be individually negotiated and engineered—think of the days before TCP/IP became the common protocol for networking.

Chuck Mortimore (@cmort) claims “scale mandates standards” in the slide deck for his Observations on Cloud Identity presentation to Ping Identity’s Cloud Identity Summit 2010 (#cis2010):

Beware Chuck’s special transition effects; they might give you vertigo.

See also John Fontana asks Where do we go from here? Thoughts from the Summit in a 7/28/2010 wrap-up to Ping Identity’s Cloud Identity Summit (#cis2010) in the Cloud Computing Events section.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Atul Verma shows you How to host a service in Azure and expose using WCF and Visual Studio 2010 : Issues Faced and Fixes in this 7/27/2010 post to his MSDN blog:

This post covers my first attempt to explore “Hosting a service in Azure using WCF and Visual Studio 2010”, the issues I faced, and the quick fixes required to get it running. You can explore the Windows Azure Platform at http://www.microsoft.com/windowsazure/

I faced a few issues, so I’m sharing the workarounds. I won’t go into detail in this post; I’ll keep things simple and target the workarounds only.

1. Run Visual Studio 2010 with elevated permissions, e.g. with the “Run as Administrator” option; otherwise you will get a prompt as displayed below when creating or opening the Windows Azure Cloud Service project.

You may need to install Windows Azure Tools for Microsoft Visual Studio to enable the creation, configuration, building, debugging, running and packaging of scalable web applications and services on Windows Azure. The download link for Windows Azure Tools for MS Visual Studio 1.1 is http://www.microsoft.com/downloads/details.aspx?FamilyID=5664019e-6860-4c33-9843-4eb40b297ab6&displaylang=en

2. Create a New Project. Select Cloud from Installed Templates and then select Windows Azure Cloud Service and click OK.

3. Select the WCF Service Web Role, as we are exposing the service using WCF. For the purposes of this post, I’ll go with the default generated code without making any changes. Build and run the application.

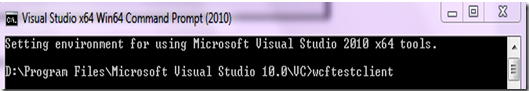

4. In order to test the WCF services we will use Microsoft WCF Test Client. This can be opened as displayed below:

5. We need to add the service to test by right-clicking My Service Projects and then clicking Add Service. Here we need to specify the endpoint address, e.g. in my case it was “http://127.0.0.1:81/Service1.svc?wsdl”

6. If you see the error “Service metadata may not be accessible” as displayed below, we need to add a service behavior to the Web.config file as displayed in the next step:

7. The useRequestHeadersForMetadataAddress section below is required to resolve the error in the above step. This is a known issue and there is a patch available for it at http://code.msdn.microsoft.com/wcfazure/Wiki/View.aspx?title=KnownIssues. The IDE may not recognize this element and may show a warning; you can ignore the warning.

    <serviceBehaviors>
      <behavior name="WCFServiceWebRole1.Service1Behavior">
        <!-- To avoid disclosing metadata information, set the value below to false and remove the metadata endpoint above before deployment -->
        <serviceMetadata httpGetEnabled="true"/>
        <!-- To receive exception details in faults for debugging purposes, set the value below to true. Set to false before deployment to avoid disclosing exception information -->
        <serviceDebug includeExceptionDetailInFaults="false"/>
        <useRequestHeadersForMetadataAddress>
          <defaultPorts>
            <add scheme="http" port="81" />
            <add scheme="https" port="444" />
          </defaultPorts>
        </useRequestHeadersForMetadataAddress>
      </behavior>
    </serviceBehaviors>

8. Now when you run the application you may see the error displayed below in the browser. This is a known issue and there is a patch available for it at http://code.msdn.microsoft.com/wcfazure/Wiki/View.aspx?title=KnownIssues. Install this patch and restart your machine after installation:

9. Now when you run the application it will run fine, so it’s time to test it using the WCF Test Client. If WCF HTTP Activation is turned off, you may now see this error while trying to add the service to test as in Step 5:

10. To resolve the error above, turn on WCF HTTP Activation in “Turn Windows features on or off”; in the image displayed below it is turned off.

11. Now add the service to the WCF Test Client as in Step 5. Voilà, finally everything is working fine. To test your WCF service, choose any of the operations (GetData() in this case), double-click it, specify the value in the Request section (I specified 23), and click Invoke. You will see the results in the Response section:

12. At runtime, WCF services may return the following error: The message with To 'http://127.0.0.1:81/Service.svc' cannot be processed at the receiver, due to an AddressFilter mismatch at the EndpointDispatcher. Check that the sender and receiver's EndpointAddresses agree. The error can be corrected by applying the following attribute to the service class.

    [ServiceBehavior(AddressFilterMode = AddressFilterMode.Any)]

This concludes the article: we now have a service hosted on the Windows Azure platform and exposed using WCF and Visual Studio 2010. Time to explore the advanced features of the Azure platform.
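Why relaxing the address filter helps can be shown with a toy model: the client addresses the public port (81), while the service may be listening on a different internal address, so an exact comparison fails. The mode names echo WCF's `AddressFilterMode` enum, but the comparison logic, the `address_filter_accepts` function, and the internal port 5100 are hypothetical simplifications (WCF's `Prefix` mode is not modeled).

```python
from urllib.parse import urlsplit


def address_filter_accepts(to_header: str, endpoint_address: str, mode: str = "Exact") -> bool:
    """Toy address filter: "Any" accepts everything; "Exact" compares URL components."""
    if mode == "Any":
        return True
    a, b = urlsplit(to_header), urlsplit(endpoint_address)
    return (a.scheme, a.hostname, a.port, a.path) == (b.scheme, b.hostname, b.port, b.path)


public = "http://127.0.0.1:81/Service.svc"      # address the client sends in the To header
internal = "http://127.0.0.1:5100/Service.svc"  # hypothetical internal listening address
print(address_filter_accepts(public, internal))              # mismatch, as in step 12
print(address_filter_accepts(public, internal, mode="Any"))  # accepted
```

`Any` trades away a sanity check on the destination address, which is usually acceptable when a load balancer or port remapping sits in front of the service.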

The Microsoft Case Studies Team posted AXA Group Relies on Cloud Services Solution to Efficiently Manage Insurance Claims on 7/13/2010:

AXA Seguros, part of AXA Group, is an insurance company that strives to deliver superior customer service, which is critical for success in this industry. The company is replacing an inefficient, manual, claims-management system with one based on the Windows Azure platform. AXA Seguros experienced simplified development and deployment, was able to focus its efforts on business logic rather than technology, and avoided capital expenses. …

AXA Seguros wanted a new claims-management system that did not rely on manual processes or infrastructure destined for obsolescence, but instead had the flexibility and scalability to grow with the business. At the same time, the insurance company did not want to make costly financial investments or go through the time-consuming process of procuring new infrastructure hardware—which, in this case, could take months and cost tens of thousands of dollars.

Solution: AXA Seguros decided to implement the Windows Azure platform in a pilot deployment for a new claims-management system. Windows Azure is a cloud services operating system that serves as the development, service hosting, and service management environment for the Windows Azure platform. Windows Azure provides developers with on-demand compute and storage to host, scale, and manage web applications on the Internet through Microsoft data centers. When it came to choosing a cloud services provider, AXA Seguros chose the Windows Azure platform because it is a comprehensive solution. “With other cloud providers, you only get part of the solution, but with Windows Azure, you get the entire infrastructure,” explains Juan Carlos Robles Navarro, Senior Software Engineer at AXA Seguros.

Organization Size: 216,000 employees

Organization Profile: AXA Seguros is part of the AXA Group, which provides insurance products and services to 96 million customers and posted 2009 revenues in excess of €90 billion (approximately U.S.$110 billion).

Software and Services:

- Windows Azure

- Microsoft SQL Azure

- Microsoft ASP.NET

- Microsoft .NET Framework

Vertical Industries: Insurance Industry

Country/Region: Mexico

Partner: EMLink

Read the entire case study here.

<Return to section navigation list>

Windows Azure Infrastructure

Steve Ballmer wrote The Future Is Cloudy (And that suits Microsoft just fine) on 7/29/2010 for Forbes.com’s Commentary blog:

The scientists at NASA’s Jet Propulsion Lab had a problem--they had vast amounts of data from the Mars Rover and Mars Orbiter programs, but no easy way to share it with millions of researchers and space enthusiasts around the world.

The financial analysis firm RiskMetrics had a different problem--their business came in cyclical waves that overwhelmed their ability to deliver during peak times.

The Kentucky Department of Education had yet another problem--it needed a cost-effective way to close the technology gap between rich and poor school districts and provide hundreds of thousands of students, teachers, and staff with access to high-quality communications tools and educational resources.

For a solution, all three organizations turned to the cloud, and to Microsoft.

The cloud is revolutionizing computing by linking the computing devices people have at hand to the processing and storage capacity of massive data centers, transforming computing from a constrained resource into a nearly limitless platform for connecting people to the information they need, no matter where they are or what they are doing.

That’s why the cloud has been a major focus for Microsoft for more than 15 years, dating back to the introduction of Hotmail, our Web-based e-mail service that today has more than 360 million users. And Exchange, our e-mail backbone for millions of businesses around the world, has been available as a hosted service for more than a decade.

It’s also the reason we’ll invest $9.5 billion in R&D this year--more than any other company in the world--with most of that devoted to cloud technologies. Right now, 70% of Microsoft’s 40,000 engineers work on cloud-related products and services. By next year, that number will grow to 90%.

We see five dimensions that shape our work in the cloud--and the impact the cloud will have on people’s lives:

--The cloud creates opportunities and responsibilities--opportunities for anyone with a good idea to reach customers anywhere in the world, combined with the responsibility to respect individual privacy everywhere and at all times.

The Microsoft Financial Analyst Meeting 2010 (#fam2010) press release of 7/29/2010 links to this post.

Sam Johnston (@samj) posted the slide deck for his pro-Cloud Standards BoF session at the 78th meeting of the Internet Engineering Task Force (IETF) in Maastricht, Netherlands:

Sam works for Google in Zurich.

Peter Schoof posted Getting Out of the Server Business and Into the Service Business: Talking Cloud With Jeff Kaplan to ebizQ’s Business Agility Watch blog on 7/29/2010:

Listen to my podcast with Jeff Kaplan, the Founder and Managing Director of ThinkStrategies. In this podcast we discuss government's use of the cloud and the far-reaching impact it will have on businesses in the future.

Listen to or download the 8:35 minute podcast below:

---TRANSCRIPT---

PS: Now, people often think that the government is generally behind the times in terms of technology. Would you say this is also true in terms of cloud computing?

JK: Not at all. In fact, the Obama Administration as well as other segments of the public sector are recognizing that cloud computing may in fact be a timely solution to meet the escalating budgetary challenges that they're facing in this tough economic environment.

PS: What are some of the key government initiatives that industries should pay attention to?

JK: Well, at the federal level, the CIO for the Obama Administration comes out of the technology industry and he announced early on that he's a big proponent of both software-as-a-service as well as the broader cloud computing phenomenon. And he's been working with government agencies as well as the leaders within the cloud computing sector to drive cloud computing alternatives into the federal policymaking process.

So that includes most recently a directive by the Office of Management and Budget to put a freeze on about $3 billion worth of spending that was planned for this year on new financial management systems to help control government spending at the federal level. What they want to do is evaluate those projects to determine whether or not they might be more effectively deployed on a cloud infrastructure and be a software-as-a-service solution model.

In addition, about a year or so ago, the federal government launched its own apps marketplace called Apps.gov and it's a terrific site not only for government agencies but even for private institutions to take a look at. Because in addition to having an assortment of online software-as-a-service solutions available to be procured from the site itself, it also is a terrific resource center for industry best practices and even definitions of what is SaaS and cloud computing, including the National Institute of Standards and Technology definition of cloud computing, which is a working white paper that they provide in Word format, which is terrific.

Then also, just to make the point even more dramatically, when we think about the cloud, you tend to think of the government being a laggard when it comes to technology. Of course, we know that NASA and other segments of the federal government have actually been in the lead in terms of developing new technologies. Well, so is the Department of Defense and it has been a big proponent of SaaSifying, if you will, some of their government processes.

They have recently been deploying a customer relationship management system provided by a company called RightNow to improve the responsiveness of the agency, not only to their own employees' requirements but to third party contractors and U.S. citizens as well. So we're seeing a lot of things happening at the federal level. …

The transcript continues here.

Larry Dignan quotes Forrester Research’s James Staten (see post below) in his Companies can't handle the cloud computing truth; You're not ready post of 7/28/2010 to ZDNet’s Between the Lines blog:

Despite all the chatter about cloud computing, most companies aren’t even close to being ready for it.

In a Forrester Research note, analyst James Staten starts off with a sledgehammer:

Cloud computing — a standardized, self-service, pay-per-use deployment model — provides companies with rapid access to powerful and more flexible IT capabilities and at price points unreachable with traditional IT. Although many companies are benefitting from public cloud computing services today, the vast majority of enterprise infrastructure and operations (I&O) professionals view outside-the-firewall cloud infrastructure, software, and services as too immature and insecure for adoption. Their response: “I’ll bring these technologies in-house and deliver a private solution — an internal cloud.” However, cloud solutions aren’t a thing, they’re a how, and most enterprise I&O shops lack the experience and maturity to manage such an environment.

Why are these IT shops immature? They don’t have standard processes, automation or virtualized infrastructure. If a company has a greenfield to play with, the cloud is an option, argues Staten. Most companies will take years to create cloud computing infrastructure internally.


It’s hard to argue with Forrester’s take. There’s a lot of not-so-sexy grunt work to do before cloud computing is an option. IT services often aren’t standardized and you need that to automate. Meanwhile, various business units inside a company are rarely on the same infrastructure.

Staten argues that cloud computing will be a lot like virtualization adoption.

It took time to virtualize your server environment, and the same will be true with internal cloud computing. It’s been more than 10 years since server virtualization debuted, and only recently did it become mainstream, with more than 60% of enterprises using it on their x86 infrastructure. But we’re not done. As of September 2009, enterprises reported that less than half of their x86 server population was virtualized and that they would, on average, only get to 65% of their x86 servers virtualized by the end of 2010.

Nevertheless, companies have to at least start dabbling with cloud computing on new projects because developers and business unit leaders are already headed there. In any case, the march to cloud computing begins with a hard look at your IT infrastructure. Right now, you’re likely fielding a million cloud computing pitches. Staten notes:

You can’t just turn to your vendor partners to decode the DNA of cloud computing. They are all defining cloud in a way that indicates that their existing solutions fit into the market today — whether they actually do or not. The term “cloud” is thrown around sloppily, with many providers adding the moniker to garner new attention for their products.


Audrey Watters adds detail to James Staten’s You're Not Ready For Internal Cloud report of 7/26/2010 for Forrester Research in her Forget Arguments About Public Clouds, Enterprise Isn't Even Ready for the Private Cloud post of 7/28/2010 to the ReadWriteCloud blog:

The benefits of scalability and flexibility touted by cloud computing are rapidly pushing its adoption forward. And while there are still hesitations in some sectors about the emerging technologies - questions about security, control, and interoperability - the majority of tech experts see moving to the cloud as inevitable.

Nevertheless, it's still fairly common to hear the argument that the public cloud isn't quite ready or quite right for the enterprise. But a recent Forrester report contends that the enterprise might not be ready for the private cloud either. Pointing to a survey that found only about 5% of IT shops have the internal experience to make the move to the cloud, the report, authored by James Staten with Christian Kane and Robert Whiteley, contends that many companies simply aren't ready.

Calling virtualization "the yellow brick road to the cloud," Staten recommends companies assess their own technological maturity and their adoption of virtualization in order to ascertain whether private cloud environments are the right move right now.

According to Staten, you're ready for the private cloud if you meet the following criteria:

- You have standardized most commonly repeated operating procedures.

- You have fully automated deployment and management.

- You provide self-service access for users.

- Your business units are ready to share the same infrastructure.

Staten notes that preparing your IT operations for Stage 4 will take many years, but says that moving to the cloud doesn't have to wait until then. Rather than a whole-scale switch, he recommends moving smaller projects and investments, such as development and testing, into the cloud. He also recommends outsourcing the internal cloud to an Infrastructure-as-a-Service cloud provider - preferably one that will offer some training.

The "reality check" that the Forrester report offers might not be what some IT departments or cloud advocates want to hear. But as Staten concludes, "The economics of cloud computing is too compelling for you to sit on the sidelines waiting for the hype to die down. You need to start investing in IaaS now to understand how best to leverage it. You also need to embrace the fact that your developers and line-of-business leads aren't waiting for you to figure this out."

James Staten claims You're not yet a cloud - get on the path today in this 7/27/2010 post to his Forrester Research blog:

With all the hype and progress happening around cloud computing, we know that our infrastructure and operations professional clients are under pressure to have a cloud answer. This is causing some unproductive behavior and a lot of defensiveness. A growing trend is to declare victory – point to your virtual infrastructure where you can provision a VM in a few seconds and say, “See, I’m a cloud.” But you aren’t, really. And I think you know that.

Being a cloud means more than just using server virtualization. It means you have the people, process, and tools in place to deliver IT on demand via automation, that you are sharing resources so you can maximize the utilization of assets, and that you are enabling your company to act nimbly. In our latest Forrester report we document that to be a cloud you need to have:

- Standardized your most commonly repeated operating procedures.

- Fully automated deployment and most routine management tasks.

- Provided self-service access for internal users via a service catalog or portal.

- Made sure business units are sharing the same infrastructure.

And according to our analysis, very few (5%) of enterprise IT ops teams today meet these requirements. We’ve documented a maturity path for organizations that helps you get to this point, but don’t expect to get there overnight. Our research shows it literally takes years to get cloud-ready.

This means you should begin investing now in cloud knowledge by experimenting with cloud infrastructures and cloud-in-a-box solutions to learn how clouds actually operate and what the delta is between where you are now and where you should aim to get.

Christian Kane contributed to this report.

David Linthicum takes on David Mitchell Smith in his Gartner Gets Cloud Computing Wrong article of 7/28/2010 to ebizQ’s Where SOA and Cloud Meet blog:

I have to thank Joe McKendrick for pointing out some recent comments from Gartner's David Mitchell Smith that toss a bit of "cold water" on the clear links between SOA and cloud computing.

"In the world of SOA we talk of services as software, live components and objects (technical things), but in the real world when you talk about service it is outcome based... People will say 'we are doing SOA so we are ready for the cloud', but the difference between SOA services and the cloud context is huge. With cloud, you pay for the outcome, not the technology. In cloud the service terminology you are focusing on is a relationship between service provider and consumer not technology provider and consumer."

I don't get bent out of shape when analysts say these sorts of things; they even disagree within the firm. However, I wanted to make sure that we're not missing the larger point here around the links between SOA and cloud computing, and perhaps adjust Mr. Smith's thinking a bit. I would first and foremost encourage him to read my book, Cloud Computing and SOA Convergence, for about 300 pages of detail I can't get into here.

If I understand the "analyst speak" here, I think he is getting at some truth in that cloud computing is really a type of solution, or a solution pattern, and that SOA is an architecture, or a way to define those patterns. Clearly, you can't define the solutions using cloud computing without architecture, and that architectural approach is SOA.

The larger issue here is that those moving to cloud typically don't like to talk about SOA as a means to get there, considering that SOA is an architectural approach or dare I say discipline, and cloud computing is new and cool technology that we're once again hopeful will eliminate the need for architecture and planning. It won't.

In order to be successful with cloud computing, we can't lose sight of the fact that most successful cloud computing architectures are SOAs that leverage private, public, and hybrid cloud platforms. If you're considering leveraging cloud services without putting them in the context of SOA, you'll crash and burn pretty quickly, trust me.

That said, I'm happy to challenge Mr. Smith to a public debate on this very interesting topic...virtual glove slap across the face. :-)

Joe McKendrick analyzes Gartner analyst David Mitchell Smith’s take on SOA and cloud computing in a Gartner throws some cold water on SOA-cloud link post of 7/28/2010 to ZDNet’s Service Oriented blog:

There’s been no shortage of discussion about the transition between service-oriented architecture and cloud, particularly among vendors repositioning (or “cloud washing”) their offerings for the next big thing. My friend Dave Linthicum is adamant that many of the same principles and methodologies invoked for SOA — particularly effective governance — need to be applied to emerging cloud formations. He also points out that while SOA is the foundation, cloud is the delivery mechanism for services.

Gartner analyst David Mitchell Smith, however, sees things somewhat differently, saying SOA and cloud address different levels of service delivery. Smith, speaking at Gartner’s recent SOA and software summit in Sydney, was quoted in CIO as describing how SOA is more about the underlying technology, while cloud is about the business relationship:

“In the world of SOA we talk of services as software, live components and objects (technical things), but in the real world when you talk about service it is outcome based… People will say ‘we are doing SOA so we are ready for the cloud’, but the difference between SOA services and the cloud context is huge. With cloud, you pay for the outcome, not the technology. In cloud the service terminology you are focusing on is a relationship between service provider and consumer not technology provider and consumer.”

“There is a huge leap” in assuming SOA equals cloud. However, Smith adds that the concepts are related and undertaking SOA is a good thing to prepare for cloud. Still, he says, cloud is a “style of computing, not a technology or architecture.”

Fair enough, and I don’t think David Mitchell Smith and Dave Linthicum are too far apart on this point.

Still, people in the industry have spent years trying to hammer home the point that SOA is not about technology at all; it’s about building an architecture that effectively provisions business services when and where needed across enterprises.

Another point in which I beg to differ is the nature of the relationship between service providers and service consumers. Smith says cloud differs from SOA in that it “is all about trust and if you don’t trust the provider, you shouldn’t be doing it.”

The success of SOA, too, is built upon trust between service providers and consumers — even when they are part of the same enterprise. Without trust, there will be no service oriented architecture, let alone cloud.

James M. Connolly posted Yankee Group: Sky clears for cloud computing to the Mass[achusetts] High Tech blog on 7/27/2010:

More than half of U.S. enterprises now consider cloud computing a viable technology, with favorable views on cloud jumping by more than 50 percent in just a year, according to the Boston market research firm Yankee Group.

The information technology-oriented research house said in a report that “cloud computing is on the cusp of broad enterprise adoption.”

A year ago, less than 37 percent of enterprises told Yankee Group that they saw cloud computing as an enabler. In the most recent survey of 400 enterprises, those seeing the concept as a business enabler had jumped to 60 percent.

Other findings in the report included only 17 percent of respondents saying that they view Amazon and Google as trusted cloud partners, with more companies favoring IT providers such as IBM Corp., Hewlett-Packard Co.’s EDS, Cisco Systems Inc. and VMware; and most enterprises preferring private clouds. That reflects sentiment expressed at Mass High Tech’s “Health IT and the Cloud” forum held in June.

In April, Yankee Group announced that it had received $10 million in funding from its primary owner, Alta Communications of Boston, and that CEO Emily Nagle Green was searching for a new CEO, with Green remaining as chairman while she focused on evangelism and external market work.

Phil Wainwright explains Choosing the right cloud platform in a 7/26/2010 post to ZDNet’s Software as Service blog:

The emergence of a number of self-proclaimed ‘open’ cloud platforms presents any would-be cloud adopter with a confusing plethora of choice. Rackspace’s OpenStack initiative, unveiled last week, is the latest, but it’s by no means the only one [disclosure: Rackspace hosts my business and personal websites free of charge]. There are also portability initiatives like VMware’s ‘open PaaS’ which, except in its use of the Spring stack, seems to be open in much the same way that Windows Azure is open (it’s a published standard and you have a choice of hosting partners).

For cloud adopters all these offerings, in their various ways, hold out the promise of pursuing a hybrid strategy. They’re attractive because they provide the option of putting some assets in the cloud while keeping others on trusted terra firma — or at the very least, a user can reserve the option of pulling their IT back off the provider’s cloud if they ever need to, avoiding lock-in to a single provider.

I’d like to dig into the multiple reasons why this can be attractive. But first let me pause for a moment to highlight a huge problem in all of this. There’s a very strong risk that all this choice merely serves to obscure the bigger picture of why people should choose a cloud platform in the first place. Too often, people get fixated on technology attributes like virtualization and IT automation. While these are useful, money-saving features, they aren’t the main point. Cloud computing is valuable because it’s in the cloud, where it benefits from all the advantages of running on infrastructure that’s shared by a broad cross-section of users and is ready to connect seamlessly to other cloud resources. See my earlier discussion, Cloud, it’s a Web thing.

Having said all that, there are a number of reasons why you might still want to adopt a cloud platform that, at the same time as having all that cloud goodness, allows you to move your applications somewhere else should you wish to:

That all-important comfort feeling. You may never move off the platform — experience shows that most cloud users stay put, even if they originally planned to go back in-house at a later date — but there’s a huge comfort in knowing that, if you ever need to, you can. Back in the late 1980s, I used to sell Compaq kit. The company had some humungously large disk arrays in its price list. A Compaq product manager once told me the company virtually never sold any, but had found it was essential to have them in the price list because buyers wanted to know they were there if they ever needed them. The existence of an off-cloud option serves the same role for cloud buyers.

Architectural portability. Yes, yes, yes, you know that your cloud provider offers the kind of scalability, availability and performance that you could only dream of. But just occasionally, there are architectural tweaks that would make a specific application run better in certain use cases. When it matters, you want the flexibility to be able to move to a host that offers that architecture.

Operational portability. The trouble with having only one choice of provider is that you’re stuck. If the provider jacks up its prices, starts offering poorer performance or bad customer service, or simply becomes less competitive over time, you have nowhere to go. You may also have needs that vary depending on the time of year, the type of application or even the geographic location of users (e.g., to comply with data privacy laws). An open platform gives you a choice of different hosts, including in-house, that you can move between in line with your operational needs. This is especially important when you’re using a cloud provider for sporadic burst capacity.

Service level flexibility. Although cloud providers may offer more service level granularity in the future, today they mostly offer just a single service level (in many cases, it’s not even specified or guaranteed). So the only way to select variable service levels — for example, to put certain applications on five-nines availability while others do perfectly well on a cheaper three-nines platform — is to use more than one provider. That’s a lot more complex to manage if each one has its own proprietary platform rather than one standardized, open stack.
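The gap between the five-nines and three-nines tiers mentioned above is easy to quantify. The following is a minimal Python sketch, assuming a 365-day year and availability measured as the fraction of all minutes in the year during which a service is up; real SLAs often use monthly windows and exclusions, so the figures are illustrative only:

```python
# Illustrative arithmetic only: assumes a 365-day year and that availability is the
# fraction of all minutes in the year during which the service is up.
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def allowed_downtime_minutes(nines: int) -> float:
    """Maximum yearly downtime for an availability of `nines` nines (3 -> 99.9%)."""
    unavailability = 10.0 ** (-nines)  # e.g. 3 nines -> 0.001 of the year
    return MINUTES_PER_YEAR * unavailability


if __name__ == "__main__":
    for n in (3, 5):
        minutes = allowed_downtime_minutes(n)
        print(f"{n} nines: {minutes:.1f} minutes/year ({minutes / 60:.2f} hours)")
```

Under these assumptions, three nines allows about 525.6 minutes (roughly 8.8 hours) of downtime a year, while five nines allows only about 5.3 minutes, which is why the two tiers are priced so differently.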

Is all this just a matter of APIs? It depends what you need to specify and how sophisticated the APIs are. At present, the APIs don’t offer the granularity to cover a tenth of the needs I’ve outlined above — nor is it in the established providers’ interests to give away too much flexibility to move between platforms any sooner than they need to.

What we’re witnessing here is a struggle for market advantage between three separate contingents; first movers hold the field but there could be an opportunity for others to dislodge them by winning the mantle of most open or, perhaps, most vanilla.

Among first movers, Amazon holds a pre-eminence that some are already arguing is unassailable. Others still fancy Microsoft because of its installed base and its developer community, while some still rate Google or Apple because of their cloud presence in other areas.

The mantle of most open is being fought over by a crowd of contenders. Rackspace turned a neat trick by launching its claim with a useful sprinkling of NASA spacedust. Despite its proprietary core, VMware has made a strong play, aligning itself with Java developers and building on its credibility as a virtualization platform. Some of the lesser known second-tier players could quickly win mindshare by teaming up with a big telco or hardware vendor.

Either camp could claim the mantle of most vanilla. This is an attribute that works best in the cloud environment (think SOAP vs REST). The victor is likely to be the platform that does the best job of evolving to become an elegant, simple and straightforward mechanism that satisfies market demands for interoperability and portability, irrespective of whether it achieves that through open APIs or a more extensive open framework.

Either way, the predominance of vanilla in the cloud environment stacks the odds against anyone who teams up with a telco or big systems vendor, since these are the players with the least skills or confidence in reducing systems to their simplest primitives. Most people who understand the cloud environment instinctively realize that this hands the advantage to Amazon, Google and other cloud-native players as the most likely long-term victors.

Windows Azure Platform Appliance

No significant articles today.


Cloud Security and Governance

W. Scott Blackmer analyzes Mexico's New Data Protection Law in this 7/28/2010 post to the Info Law Group blog:

Mexico has joined the ranks of more than 50 countries that have enacted omnibus data privacy laws covering the private sector. The new Federal Law on the Protection of Personal Data Held by Private Parties (Ley federal de protección de datos personales en posesión de los particulares) (the “Law”) was published on July 5, 2010 and took effect on July 6. IAPP has released an unofficial English translation. The Law will have an impact on the many US-based companies that operate or advertise in Mexico, as well as those that use Spanish-language call centers and other support services located in Mexico.

Like the EU Data Protection Directive and the Canadian federal PIPEDA legislation, Mexico’s data protection statute requires a lawful basis, such as consent or legal obligation, for collecting, processing, using, and disclosing personally identifiable information. There is no requirement to notify processing activities to a government body, as in many European countries, but companies handling personal data must furnish notice to the affected persons. Individuals have rights of access, correction, and objection (on “legitimate grounds”) to processing or disclosure. In the event of a security breach that would significantly affect individuals, those persons must be promptly notified. The Law also addresses data transfers, both within and outside Mexico.

A federal agency, the Institute for Access to Information and Data Protection (IFAI), will provide interpretive guidance and supervise compliance with the new law. IFAI will investigate complaints and inquiries and may launch investigations on its own initiative. In addition to administrative sanctions including warnings and fines, the law contemplates criminal prosecution of violators, with more substantial fines and the possibility of imprisonment for those responsible for a security breach or for fraudulent or deceptive collection and use of personal data.

The Law regulates private parties that “process” personally identified or identifiable data, with exceptions for credit reporting agencies (which are already covered by separate legislation) and individuals recording data exclusively for personal use. Definitions largely track those of the EU Data Protection Directive, including a very broad definition of “processing” that includes any collection, use, storage, or disclosure of data. The Law also uses the concepts of “data controller” and “data processor” as found in the EU Directive, respectively signifying entities that decide to process personal data and entities that carry out processing on their behalf.

The Law departs from the EU Directive, however, in reflecting the habeas data concept found in several Latin American constitutions and statutes: the individual to whom personal data relates is treated as the “data owner.” The individual’s legal rights derive largely from this concept of ownership and the associated right to control whether and how personal data is used.

“Sensitive data” gets some additional protections under the Law, as it does in Europe. As defined in the Law, sensitive data denotes information that touches on the most intimate aspects of a person’s life or involves a serious risk of discrimination. This includes but is not limited to “special categories” of data listed in the EU Directive: race or ethnicity, health, sexual preference, religious or philosophical beliefs, political views, and trade union membership. The Mexican law expressly adds genetic data to this list but does not include special treatment for criminal records as the EU Directive does. …

Scott describes “eight general principles that data controllers must follow in handling personal data: legality, consent, notice, quality, purpose limitation, fidelity, proportionality, and accountability” and concludes:

Impact on US Companies

Many US companies have subsidiaries or distributors in Mexico, and data concerning Mexican employees, customers, and business contacts is often transferred to the US company for recordkeeping, contract fulfillment, business planning, market analysis, and other management purposes. Privacy notices in Mexico should mention these purposes and transfers, and the Mexican company may need to obtain opt-in consent in the case of sensitive and financial information. The US company must then handle data consistently with the privacy notice delivered by the Mexican affiliate or distributor, to avoid creating problems for the Mexican firm. For unrelated companies, data transfers should be covered by contractual terms that specify the relevant restrictions and provide for notice to the individuals unless an exception applies.

US companies also often contract with Mexican firms for Spanish-language call centers, customer support services, or outsourced data processing. Once customer data is processed by the Mexican company, it is subject to the Law, regardless of the location of the customers. US companies using such services in Mexico may expect that their vendors will increasingly refer in contracts to their own obligations under the Law and may require cooperation from the US companies in responding to privacy-related complaints and security breaches in Mexico.

Corporate groups operating in Mexico or using data-centric services in Mexico will need to stay abreast of IFAI decisions and changing business practices resulting from the new Law.

David Worthington (@dcworthington) reported Black Hat conference fields suggestions for software security on 7/28/2010 for SDTimes:

The Software Assurance Forum for Excellence in Code (SAFECode) engaged with developers at the Black Hat Technical Security Conference yesterday in a brainstorming session to clarify a vision for software security over the next decade.

More than 50 Black Hat attendees participated in the session. SAFECode members include Adobe Systems, EMC, Juniper Networks, Microsoft, Nokia, SAP AG and Symantec.

The No. 1 issue was education and training, said Steve Lipner, senior director of security engineering strategy at Microsoft and SAFECode board member. The discussion focused on how to get new graduates and entry-level developers to be prepared to write secure code, or even be aware of the need to write secure code, he observed.

Some specific suggestions included adding software security classes to university curricula; getting universities to "push back" against employers that are more interested in applicants that know about new technologies but might not be able to create secure code; requiring developers to have security certifications to write code; and establishing a mindset for secure development.

"I don’t see this as very realistic," said Rex Black, president of Rex Black Consulting, a security group. "Academics telling practitioners how to do their job is not something that has traditionally gone down well in software engineering. What would be preferable, in my mind, would be for software and systems vendors to have the same kind of legal liability that vendors of other products—e.g., cars, airplanes, microwave ovens—have for the quality and safety of their products."

One attendee said that the security needs are different everywhere, and different companies have different requirements that can’t be covered in a course. Lipner said that most members on the panel have a policy in place that includes security requirements for developers.

"This would be a good thing, as would requiring certifications to test code. What’s important here is that the job market start to see value in such certifications," Black said.

The rest of the discussion had no single point of emphasis, but there was a lot of focus on secure Web (rather than client) application development, he said.

The HPC in the Cloud blog’s NTT and Mitsubishi Electric Develop Advanced Encryption Scheme to Increase Cloud Security post of 7/28/2010 begins:

Nippon Telegraph and Telephone Corporation (NYSE: NTT) ("NTT") and Mitsubishi Electric Corporation (TOKYO: 6503) ("Mitsubishi Electric") today announced that they have developed a new advanced encryption (fine-grained encryption) scheme expected to become a potential solution to the security risks in cloud computing. This new encryption scheme achieves the most advanced logic in the encryption-decryption mechanism, which enables sophisticated and fine-grained data transmission/access control.

The rapid development of information and communication technology has led to the recent spread of cloud computing and other advanced network systems. These networks, however, transmit private or confidential information to the server to process, which demands higher security than current systems that use symmetric *1 and public *2 key encryption to maintain network security. These advanced network systems therefore require a more sophisticated encryption scheme.

NTT and Mitsubishi Electric have successfully developed a new fine-grained encryption scheme with the most advanced logic as an encryption-decryption mechanism. This scheme, developed using a mathematical approach called the "dual pairing vector spaces," *3 will allow network users to maintain highly confidential information encrypted even in cloud computing environments. This achievement will help expand cloud computing applications to fields where they could previously not be applied.

The details of this scheme will be presented at "CRYPTO 2010," the 30th International Cryptology Conference, which is scheduled to be held in Santa Barbara, California, USA from August 15 to 19, 2010.

Main features of the new fine-grained encryption scheme

1. Achieving the most general logic

For the past few years, fine-grained encryption has attracted many researchers in the field of cryptography. The new fine-grained encryption scheme by the two companies achieves the most advanced logic, encompassing those of the existing fine-grained encryption schemes. This logic can be realized by combining AND, OR, NOT and threshold gates.

One of the most significant achievements is that the NOT gate is now available, allowing cloud computing systems to manage databases easily and flexibly in cases of change in user attributes and other information.

2. Available to a variety of applications

In fine-grained encryption, a variety of parameters are added to the ciphertext and decryption key in the encryption-decryption logic. In this logic, attributes and predicates on them become the parameter of the ciphertext or decryption key. The newly developed encryption scheme is available to a variety of applications because it is capable of being used in either of the following forms: (1) attributes as the parameter of the decryption key, predicates as that of the ciphertext, and (2) attributes as the parameter of the ciphertext, predicates as that of the decryption key.
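To make the gate logic concrete, here is a minimal Python sketch of how an access policy built from AND, OR, NOT and threshold gates is evaluated against a user's attributes. It is not the NTT/Mitsubishi Electric scheme and performs no encryption; the class and function names and the attribute strings are hypothetical, chosen only to illustrate the predicate logic that such a scheme enforces cryptographically:

```python
from dataclasses import dataclass
from typing import AbstractSet, Tuple, Union


# Illustrative policy tree only: this evaluates the access logic in the clear and
# performs no encryption. Attribute names such as "dept:finance" are hypothetical.
@dataclass(frozen=True)
class Attr:
    name: str  # satisfied when the user holds this attribute


@dataclass(frozen=True)
class Not:
    child: "Policy"  # satisfied when the child is NOT satisfied


@dataclass(frozen=True)
class Threshold:
    k: int  # satisfied when at least k children are satisfied
    children: Tuple["Policy", ...]


Policy = Union[Attr, Not, Threshold]


def all_of(*children: Policy) -> Threshold:
    """AND gate: an n-of-n threshold."""
    return Threshold(len(children), children)


def any_of(*children: Policy) -> Threshold:
    """OR gate: a 1-of-n threshold."""
    return Threshold(1, children)


def satisfies(policy: Policy, attrs: AbstractSet[str]) -> bool:
    """Return True if the attribute set satisfies the policy tree."""
    if isinstance(policy, Attr):
        return policy.name in attrs
    if isinstance(policy, Not):
        return not satisfies(policy.child, attrs)
    if isinstance(policy, Threshold):
        met = sum(1 for child in policy.children if satisfies(child, attrs))
        return met >= policy.k
    raise TypeError(f"unknown policy node: {policy!r}")


# Finance staff who are managers or auditors, unless their access has been revoked.
POLICY = all_of(
    Attr("dept:finance"),
    any_of(Attr("role:manager"), Attr("role:auditor")),
    Not(Attr("status:revoked")),
)
```

The NOT gate is what lets a change in user attributes, such as a revocation, be expressed by adding one attribute rather than rewriting every policy. In the release's form (1) the predicate travels with the ciphertext and in form (2) with the decryption key; in either case a real scheme makes decryption succeed only when a check like `satisfies` would return True, rather than relying on code that trusts the caller.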

Andrew R. Hickey reported CA Outlines Three-Pronged Cloud Security Strategy in a 7/28/2010 article for ChannelWeb:

CA Technologies on Wednesday detailed its full-fledged cloud computing security onslaught, attacking the issue of cloud security from three sides: to the cloud, for the cloud and from the cloud.

The trifecta leverages CA's Identity and Access Management (IAM) offerings for governing cloud computing applications, as well as on-premise systems, said Bill Mann, senior vice president of security strategy at CA.

"We think it's very important to have a pragmatic approach to solve the issues of cloud security," Mann said. "We view this strategy as complete because it addresses it from all three angles."

CA's approach involves a new product, a new customer case study and a new partner that enables CA to target cloud security on three different levels to boost security, ease compliance efforts and automate processes.

Putting pen to paper on a cloud security strategy continues CA's push into cloud computing. At its CA World event in Las Vegas in May, CA unveiled its Cloud-Connected Management Suite, a combination of several recently acquired cloud computing tools designed to make CA a dominant force in cloud computing management software. The Suite was the result of a nearly year-long acquisition shopping spree that included Cassatt and its software for operating data centers like cloud computing utilities; 3Tera and its platform for building and deploying cloud services; Oblicore and its service-level management applications; and Nimsoft and its line of IT performance and system availability tools.

Central to CA's cloud security strategy is the launch of new CA Identity Manager capabilities that bring identity management tools to cloud applications. Mann said CA Identity Manager now supports user provisioning to Google (NSDQ:GOOG) Apps, Google's widely popular suite of cloud-based productivity applications like Gmail and Google Docs. Mann said users can now automate identity management functions, like role-based user provisioning and de-provisioning or self-service access requests to a single system for managing identities, for Google Apps in the cloud as well as applications that reside in-house.

The addition of Google Apps support "to the cloud" builds on Identity Manager's integration, announced in March, with Salesforce.com's application platform and applications. And Mann said CA won't stop with Google Apps.

"As our customers use more SaaS applications, we'll be supporting more and more," he said.

Second, CA showcased how its existing IAM solutions can control users, their access and how they can use information in public, private or hybrid clouds, achieving the same level of security as on-premise deployments. To highlight its "for the cloud" strategy, Mann said MEDecision, a Wayne, Penn.-based CA customer that offers collaborative health care management, is leveraging a host of CA solutions to manage its healthcare services.

For example, MEDecision is using CA SiteMinder, CA Federation Manager and CA SOA Security Manager to control access to its SaaS-based health management applications. Those systems include Alineo, a collaborative healthcare management platform for delivering outcome-driven case, disease and utilization management, and Nexalign, MEDecision’s collaborative healthcare decision support service that fosters better payer-patient-physician interactions.

Lastly, CA teamed up with a new partner, Acxiom Corporation, to deliver identity and access management as a service "from the cloud." Mann said the two companies will offer a fully managed service to streamline and simplify identifying, authenticating and granting security access to on-premise and cloud applications and services. In the relationship, CA provides the IAM technology while Acxiom adds the cloud platform, Mann said.

"The Acxiom service will be up and running in the summer," he said, adding that the partnership with Acxiom will open the door for more partners and providers to reach smaller clients, which haven't been targeted heavily in the identity management market.

"We built this strategy by spending time with customers," Mann said. "They want to know how they connect to the cloud and how they get access to cloud services."

Patrick Foxhaven wrote a Beating Cloud Lock-In: Our 3 Top Considerations white paper, which InformationWeek::Analytics posted as Informed CIO: Cloud Exit Strategy on 7/28/2010:

“I feel the need... the need for speed.” It’s not just an iconic ’80s movie line anymore. IT groups are flying into the public cloud without escape plans, drawn by rapid provisioning and the ability to get new services up and running in, literally, minutes.

Racing ahead of the pack is fine until you hit turbulence. One CIO we spoke with decided to change providers and asked for his company’s data to be returned. It was—weeks later, on disk, in a proprietary format. Yet in our recent InformationWeek Analytics 2010 Cloud Risk Survey of 518 business technology professionals, when we asked those with no plans to use public cloud services about their primary reasons for holding back, just 5% cited the risk of vendor lock-in.

Now, we’re not trying to discourage adoption of these services. Six in 10 respondents to that survey are doing, or plan to do, business with a cloud provider. The advantages are too great to ignore, and as a result, these vendors are here to stay. And in fact, we’ll highlight some providers that are working to help resolve lock-in concerns. What we are trying to do, however, is ensure that an exit strategy is a standard part of your planning process. In this report, we’ll discuss ways to make informed decisions on adopting cloud services so that changing your mind doesn’t put your business at risk. (C1480710)

Forrester analysts Chris McClean and Khalid Kark wrote Introducing The Forrester Information Security Maturity Model, which carries a 7/27/2010 publication date. From an excerpt:

Executive Summary

Security and risk professionals continue working their way into positions of greater authority and influence in their organizations. However, they still struggle at times to understand the full scope of their responsibilities, prioritize their initiatives, develop a coherent strategy, and articulate their value to the business. In response to these challenges, we have developed the Forrester Information Security Maturity Model. This comprehensive framework will allow you to identify the gaps in your security program and portfolio, evaluate its maturity, and better manage your security strategy. The model consists of four top-level domains, 25 functions, and 123 components, each with detailed assessment criteria; it provides a consistent and objective method to evaluate security programs and articulate their value.

TABLE OF CONTENTS

- You Asked For It — The Forrester Information Security Maturity Model

- Use The Maturity Model To Define, Measure, And Improve Security

- What The Maturity Model Can't And Can Do

RECOMMENDATIONS

- Fit The Model To Your Organization . . . Then Bring Everyone Together

- Related Research Documents


Price: US $499



Cloud Computing Events

John Fontana asks Where do we go from here? Thoughts from the Summit in a 7/28/2010 wrap-up to Ping Identity’s Cloud Identity Summit (#cis2010):

It was a big-brain mixer last week at Ping’s Cloud Identity Summit (CIS). If you were a sponge, you went home soaking wet.

Integration, standards, services, security, identity, trust, implementation, cooperation, engineering.

Google, VeriSign, PayPal, Salesforce.com, Microsoft, SafeNet, Bitkoo, SecureAuth, Conformity, Ping, Intuit, Bechtel and other vendors and end-users all hit around those concepts and filled in some details.

Everyone who needs to play in the cloud identity game seemed to be in the rooms at the Keystone (Colo.) Conference Center for CIS.

Ping CEO Andre Durand started with the present, telling password proliferation that it was time to exit stage left. Google concurred. Microsoft’s keynote focused on the future and a unity message.

Microsoft technical fellow John Shewchuk highlighted the future with his federation demo, which included a relying party hosted on Amazon EC2, an R-STS running on Windows Azure, an identity provider on Google, and all accessed from Safari running on Windows 7.

Alex Balazs (Intuit) and Christian Reilly/Brian Ward (Bechtel Corp.) were among the end-users telling their stories from the trenches, along with Doug Pierce (Momentum), who went on video with me to outline his story.

While some of the standards needed to usher in this cloud identity era are here today, others focused on enterprise identity are still in various stages of development, even though the need for them is becoming widely known and understood.

OAuth 2, OpenID, trust models, audit, compliance and the like are still on the table, in terms of the enterprise.

Technologies such as SAML have been blazing the trail thus far. Burton Group in its May report “Market Profile: Identity Management 2010” calls out XACML and SAML as the important standards for the coming years for federation and the cloud.

Chuck Mortimore, product management director for identity and security for Salesforce.com, characterized SAML during his presentation as “entering the early majority phase and is the standard for peer-to-peer federation.”

He said the emerging standards had better have one thing in common: they must be simple and easy to implement.

What’s working today, he said, includes SAML, static trust, and the OpenID/OAuth 2.0 hybrid. His list of what’s not working was topped by passwords.

So what drove the urgency for nearly 200 people to travel up to the Rocky Mountains for three days of cloud identity dissection? And why is it important for these discussions to be carried into this week’s Burton Group Catalyst conference and another Cloud Identity Summit next year (ED. – mark your calendars for July 2011)?

Gartner lays it out this way.

Global sales of software-as-a-service (SaaS) in the enterprise application segment will hit $8.5 billion this year. That represents a 14% increase over last year’s enterprise spending ($7.5 billion).

Gartner attributes that uptick to the enterprise's growing approval of cloud computing. What Gartner left out is the need to secure it (along with compliance, auditing, etc.), another message that was on the marquee at CIS.

“IT managers are thinking strategically about cloud service deployments; more-progressive enterprises are thinking through what their IT operations will look like in a world of increasing cloud service leverage. This was highly unusual a year ago," Gartner said.

And while there is a lot more work to be done pulling the infrastructure together to secure cloud computing, the time to make the unusual usual seems to be shrinking. Gartner estimates that in the next five years, companies will spend a cumulative $112 billion on SaaS, platform as a service (PaaS), and infrastructure as a service (IaaS) collectively.

John Seely Brown, visiting scholar at the University of Southern California, grabbed the industry's collective brain stem last night to open Burton's Catalyst conference, saying that the old inside/out IT architecture is evolving to outside/in and declaring it the "new normal."

This week, the cloud identity focus will shift to Catalyst where the OpenID Foundation, the Information Card Foundation, the Open Identity Exchange, Kantara Initiative and Identity Commons will demonstrate enterprise uses of open identity as a business-enabler.

Ping will be in that mix with a host of others. Part of the work will showcase examples of using OpenID, Information Card, and SAML identities at different levels of assurance across multiple sites.

If you are in San Diego for the conference, try to duck your head in and take a look.

And don't forget to check out CIS wrap-ups from other conference participants: Anil Saldhana, co-chair of the OASIS IDCloud Technical Committee; Active Directory expert Sean Deuby; and software engineer Travis Spencer.

If you have your own CIS wrap-up, post your URL in the comments section below.

Eric Nelson preannounced Upcoming events/training in 2010 in this 7/28/2010 post:

In Microsoft UK we are working on a series of free/cheap events/training on the Windows Azure Platform and will soon (weeks not days) have the details to share. We hope to offer a broad range to suit different needs - from in-person to online, single day to multi day.

It should be great!

Chris Harding, the leader of the Open Group’s Cloud Computing Workgroup, posted A Peaceful Leap to Cloud Computing to Dana Gardner’s Briefings Direct blog on 7/28/2010:

History has many examples of invaders wielding steel swords, repeating rifles, or whatever the latest weapon may be, driving out people who are less well-equipped. Corporate IT departments are starting to go the same way, at the hands of people equipped with cloud computing.

Last week I was at The Open Group conference in Boston. The Open Group is neutral territory with a good view of the IT landscape: An ideal place to watch the conflict develop. [Link added.]

The Open Group Cloud Computing Work Group has been focused on the business reasons why companies should use cloud computing. The Work Group released three free white papers at the Boston conference, which I think are worth a closer look: "Strengthening your Business Case for Using Cloud," "Cloud Buyers Requirements Questionnaire," and "Cloud Buyers Decision Tree." Three Work Group members, Penelope Gordon of 1Plug, Pam Isom of IBM, and Mark Skilton of Capgemini, presented the ideas from these papers in the conference's Cloud Computing stream.

"Strengthening your Business Case for Using Cloud" features business use cases, based on real-world experience, that exemplify situations where companies are turning to cloud computing to meet their own needs. This is followed by an analysis intended to equip you with the business insights needed to justify your path to using cloud.

My prediction: Over time, cloud will be able to occupy the fertile valleys, and corporate IT will be forced to take to the hills.