Windows Azure and Cloud Computing Posts for 7/2/2010+

| Windows Azure, SQL Azure Database and related cloud computing topics now appear in this daily series. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated in June 2010 for the January 4, 2010 commercial release.

Azure Blob, Drive, Table and Queue Services

See My Archive and Mine Tweets In Azure Blobs with The Archivist Application from MIX Online Labs post in the Live Windows Azure Apps, APIs, Tools and Test Harnesses section below.

Julie Lerman (@julielerman) wrote “Windows Azure Table storage causes a lot of head scratching among developers” as the deck for her July 2010 Windows Azure Table Storage – Not Your Father’s Database “DataPoints” column for MSDN Magazine. It’s repeated here in case you missed it in the 7/1/2010 post:

Most of their experience with data storage is with relational databases that have various tables, each containing a predefined set of columns, one or more of which are typically designated as identity keys. Tables use these keys to define relationships among one another.

Windows Azure stores information a few ways, but the two that focus on persisting structured data are SQL Azure and Windows Azure Table storage. The first is a relational database and aligns fairly closely with SQL Server. It has tables with defined schema, keys, relationships and other constraints, and you connect to it using a connection string just as you do with SQL Server and other databases.

Windows Azure Table storage, on the other hand, seems a bit mysterious to those of us who are so used to working with relational databases. While you’ll find many excellent walk-throughs for creating apps that use Windows Azure Table storage, many developers still find themselves forced to make leaps of faith without truly understanding what it’s all about.

This column will help those stuck in relational mode bridge that leap of faith with solid ground by explaining some core concepts of Windows Azure Table storage from the perspective of relational thinking. Also, I’ll touch on some of the important strategies for designing the tables, depending on how you expect to query and update your data.

Storing Data for Efficient Retrieval and Persistence

By design, Windows Azure Table services provides the potential to store enormous amounts of data, while enabling efficient access and persistence. The services simplify storage, saving you from jumping through all the hoops required to work with a relational database—constraints, views, indices, relationships and stored procedures. You just deal with data, data, data. Windows Azure Tables use keys that enable efficient querying, and you can employ one—the PartitionKey—for load balancing when the table service decides it’s time to spread your table over multiple servers. A table doesn’t have a specified schema. It’s simply a structured container of rows (or entities) that doesn’t care what a row looks like. You can have a table that stores one particular type, but you can also store rows with varying structures in a single table, as shown in Figure 1.

Figure 1 A Single Windows Azure Table Can Contain Rows Representing Similar or Different Entities
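To make the PartitionKey/RowKey idea concrete, here is a minimal in-memory sketch of a schema-less table. It is not the Windows Azure storage client API; the `SketchTable` class, its method names, and the sample entities are hypothetical and only illustrate that rows need nothing in common beyond their two keys.

```javascript
// In-memory sketch of a schema-less table keyed by PartitionKey + RowKey.
// NOT the Windows Azure storage API; all names here are hypothetical.
class SketchTable {
  constructor() {
    // PartitionKey -> Map(RowKey -> entity)
    this.partitions = new Map();
  }

  // Insert or replace an entity; only the two keys are required.
  upsert(entity) {
    const { PartitionKey, RowKey } = entity;
    if (typeof PartitionKey !== "string" || typeof RowKey !== "string") {
      throw new Error("PartitionKey and RowKey must be strings");
    }
    if (!this.partitions.has(PartitionKey)) {
      this.partitions.set(PartitionKey, new Map());
    }
    this.partitions.get(PartitionKey).set(RowKey, { ...entity });
  }

  // Point lookup: both keys are known.
  get(partitionKey, rowKey) {
    const partition = this.partitions.get(partitionKey);
    return partition ? partition.get(rowKey) : undefined;
  }

  // Partition query: only one partition is touched.
  queryPartition(partitionKey) {
    const partition = this.partitions.get(partitionKey);
    return partition ? [...partition.values()] : [];
  }
}

// Rows with different shapes live side by side in the same table.
const table = new SketchTable();
table.upsert({ PartitionKey: "Customers", RowKey: "C001", Name: "Contoso", Country: "US" });
table.upsert({ PartitionKey: "Customers", RowKey: "C002", Name: "Fabrikam" });
table.upsert({ PartitionKey: "Orders", RowKey: "O100", CustomerId: "C001", Total: 42.5 });
```

Grouping rows by PartitionKey in the outer map mirrors, very loosely, why a query that names the partition can avoid looking at the rest of the table.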

Julie continues with these topics:

- It All Begins with Your Domain Classes

- PartitionKeys and RowKeys Drive Performance and Scalability

- Digging Deeper into PartitionKeys and Querying

- Parallel Querying for Full Table Scans

- More Design Considerations for Querying

- Rethinking Relationships

and concludes with:

A Base of Understanding from Which to Learn More

Windows Azure Tables live in the cloud, but for me they began in a fog. I had a lot of trouble getting my head wrapped around them because of my preconceived understanding of relational databases. I did a lot of work (and pestered a lot of people) to enable myself to let go of the RDBMS anchors so I could embrace and truly appreciate the beauty of Windows Azure Tables. I hope my journey will make yours shorter.

There’s so much more to learn about Windows Azure Table services. The team at Microsoft has some great guidance in place on MSDN. In addition to the PDC09 video mentioned earlier, check this resource page on the Windows Azure Storage team blog at blogs.msdn.com/windowsazurestorage/archive/2010/03/28/windows-azure-storage-resources. The team continues to add detailed, informative posts to the blog, and I know that in time, or even by the time this column is published, I’ll find answers to my myriad questions. I’m looking forward to providing some concrete examples in a future Data Points column.

<Return to section navigation list>

SQL Azure Database, Codename “Dallas” and OData

Fabrice Marguerie announced Sesame improved: now signed, with auto-updates on 7/4/2010:

A new version of Sesame has been uploaded. It contains several fixes and adjustments. The fixes concern mainly the filtering row.

This new version also introduces client-side paging, signed bits, and auto-updates.

Client-side paging

A new client-side paging feature has been activated. Sesame will now retrieve 15 items by default for each query, and 15 more each time you press "Load more".

This will result in improved speed.

For example, now that Netflix returns 500 items by default instead of 20 previously, the new client-side paging is even more important. To give you an idea: 500 Netflix titles weigh more than 3MB, while 15 titles is just about 95KB. No need to say that there's a big difference in speed and resource consumption between the two!

15 items is enough in most cases. In the future, the size of the data pages will be customizable.
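The post doesn't say how Sesame requests each page, but a common way to page an OData feed from the client is the `$skip` and `$top` query options. The sketch below assumes that approach; `buildPageUrl` and the example.org feed address are hypothetical names used only for illustration.

```javascript
// Build the URL for one page of an OData feed with $skip and $top.
// Assumption: paging is done with these standard query options;
// the post does not describe Sesame's actual implementation.
function buildPageUrl(feedUrl, pageIndex, pageSize = 15) {
  if (!Number.isInteger(pageIndex) || pageIndex < 0) {
    throw new RangeError("pageIndex must be a non-negative integer");
  }
  if (!Number.isInteger(pageSize) || pageSize < 1) {
    throw new RangeError("pageSize must be a positive integer");
  }
  // Append to an existing query string if the feed URL already has one.
  const separator = feedUrl.includes("?") ? "&" : "?";
  return `${feedUrl}${separator}$skip=${pageIndex * pageSize}&$top=${pageSize}`;
}

// First page, then the page fetched by the second "Load more" click.
const firstPage = buildPageUrl("http://example.org/Catalog/Titles", 0);
const thirdPage = buildPageUrl("http://example.org/Catalog/Titles", 2);
console.log(firstPage);
console.log(thirdPage);
```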

Code signing

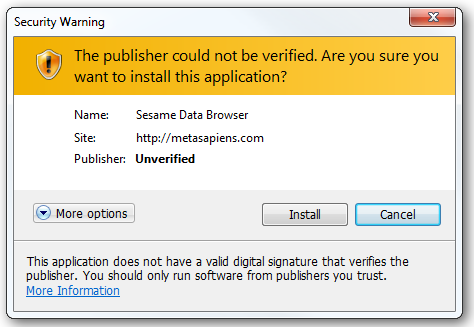

Previously, when you tried to install Sesame on your desktop, you saw a confirmation dialog that looked like the following:

Not very engaging...

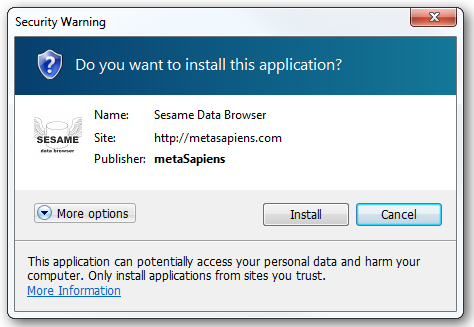

Starting with this new release, Sesame binaries are signed. This results in this new dialog box:

Less frightening than the "unverified" message, isn't it? This proves also that you'll be using genuine software.

Auto-updating

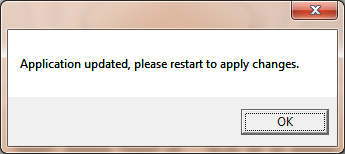

Another advantage of having Sesame signed is that it allows Sesame installed on the desktop to automatically update when a new version becomes available.

Note: To get the current release, however, you'll have to use the "Remove this application" command in the context menu of the desktop app and then "Install on desktop" again in your web browser.

Next time a new version of Sesame is published, you'll see the following dialog box appear:

And next time, for even more exciting features

That's it for today. Next time I'll introduce the provider model on which Sesame relies, and I'll show you how this enables to browse more than just OData.

You'll see that this will enable a whole new set of possibilities!

Gil Fink explains Calling a WCF Data Service From jQuery in this 7/4/2010 post:

I’m working on a lecture about OData which I’ll present next month (stay tuned for more details in the near future).

One of the things that I want to show is how easy and simple it is to consume a WCF Data Service (OData feed) with the jQuery library. In the post I’ll show you exactly how to do that.

jQuery’s getJSON Method

When you want to load JSON data from the server using a GET HTTP request in jQuery you will probably use the getJSON method. That method gets as input the server URL, a map of key-value pairs which holds parameters to the server and a callback function to use when the response arrives back to the client and returns the server response in JSON format.

This method is the best candidate for consuming WCF Data Services endpoints. If I want to use the getJSON method I’ll do something like the example provided in the getJSON documentation:

    $.getJSON('ajax/test.json', function(data) {
        $('.result').html('<p>' + data.foo + '</p>' + '<p>' + data.baz[1] + '</p>');
    });

How to Consume WCF Data Service with jQuery getJSON Method

When we understand that WCF Data Service is just an HTTP endpoint which returns JSON answer if addressed with JSON request the road is very clear. If I want to consume the service all I need to do is to create the relevant URI with the WCF Data Service URI conventions and that is it. The following code is an example for that:

    $.getJSON("/Services/SchoolService.svc/Courses", null, function (data) {
        var sb = new Sys.StringBuilder();
        sb.append("<div>");
        $.each(data.d, function (i, item) {
            CreateDivForOneCourse(sb, item);
        });
        sb.append("</div>");
        $('#divResults').html(sb.toString());
    });

What this code is doing is going to a WCF Data Service endpoint that is called SchoolService.svc and retrieve the courses entity set it holds. I don’t pass any parameters to the request so the key-value pair is null. In the callback function I create a StringBuilder object and append to it the response by iterating the data and creating a div for every course. In the end I put the result as html in a div which has an id of divResults.

Gil continues with the code for the All Examples page and a screen capture:

Summary

Consuming WCF Data Services with jQuery is a very easy task. By using the getJSON method we create the HTTP GET request to the service and get the returning data in JSON format to our disposal.
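As a follow-on to the getJSON callback above, the sketch below shows one way to pull the entity array out of the JSON payload and turn it into markup without depending on jQuery or `Sys.StringBuilder`. It assumes the verbose JSON shape in which the collection arrives either directly in `d` or, in later protocol versions, in `d.results`; the helper names and the `Title` property are hypothetical.

```javascript
// Return the entity array from an OData verbose-JSON payload.
// Handles both { d: [...] } and { d: { results: [...] } }.
function extractEntities(payload) {
  const d = payload && payload.d;
  if (Array.isArray(d)) return d;
  if (d && Array.isArray(d.results)) return d.results;
  return [];
}

// Escape text before placing it inside HTML markup.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Build one <div> per course; "Title" is an assumed property name.
function renderCourses(payload) {
  const items = extractEntities(payload).map(
    (course) => `<div>${escapeHtml(course.Title)}</div>`
  );
  return `<div>${items.join("")}</div>`;
}

console.log(renderCourses({ d: [{ Title: "Calculus" }, { Title: "Physics" }] }));
```

Inside a getJSON callback, the string returned by `renderCourses(data)` could be passed to `$('#divResults').html(...)` in place of the StringBuilder output.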

James Coenen-Eyre (a.k.a Jamesy and Bondigeek) gets carried away in his OData, O How I Love Thee post of 7/4/2010:

But let’s not stop at OData, let’s also give thanks where thanks is due to the likes of WCF Data Services, EntityFramework, jQuery, jqGrid and of course LINQ.

Hallelujah, this is a lethal combination when it comes to web development.

The ease with which a solid solution can be developed using the above technology cannot be underestimated. Not only do you get all the strong typed benefit at the backend but on the front end you can access the WCF Data Services with ease (thanks to OData) and gain all the benefit of asynchronous calls via jQuery.

Read on for more of the gospel…

OData

Freed from the shackles of SQL Injection vulnerabilities we can now access data via urls and even compose them in our client side scripts without fear of introducing SQL Injection vulnerabilities. Why? Because we are not composing SQL on the client side. Sure we are sending a dynamic query using QueryString parameters but on the server side this is all being checked for validity, parsed and executed against the database via LINQ. This gives us the freedom to write client side queries like so:

http://domain/services/Organisations?$filter=startswith(Name,'D') eq true

For a full list of query operations check out the Open Data Protocol URI Conventions over here http://www.odata.org/developers/protocols/uri-conventions#FilterSystemQueryOption
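When a query like the one above is composed in client script, the filter text still needs URL encoding, and a user-supplied value needs its single quotes doubled so that it stays a single OData string literal. The following is a minimal sketch of that; `odataString` and `buildStartsWithUrl` are hypothetical helper names, not part of any library.

```javascript
// Quote a value as an OData string literal:
// wrap it in single quotes and double any embedded single quote.
function odataString(value) {
  return `'${String(value).replace(/'/g, "''")}'`;
}

// Compose a "startswith" $filter URL for an entity set.
// Assumption: `property` is a trusted property name, not user input.
function buildStartsWithUrl(entitySetUrl, property, prefix) {
  const filter = `startswith(${property},${odataString(prefix)}) eq true`;
  return `${entitySetUrl}?$filter=${encodeURIComponent(filter)}`;
}

const url = buildStartsWithUrl("http://domain/services/Organisations", "Name", "D");
console.log(url);
```

The server still parses and validates the expression, as the post says; the escaping here only keeps the request well formed.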

WCF Data Services

But wait a second if I can access the data via a url can’t anyone? Sure if you choose to leave your service wide open but even then we can lock it down to read access only with a tiny piece of configuration code:

    config.SetEntitySetAccessRule("Organisations", EntitySetRights.AllRead);

In fact you have a whole host of EntitySetRights at your disposal, check em out over here.

EntitySets? what the? Don’t worry we will come to that in just a jiffy.

Security still not good enough? Don’t want anyone to be able to even read data unless they are Authenticated? Not a problem. Implementing this level of control and integrating it with your security model is a cinch. Take for example FormsAuthentication. If you want to restrict access to authenticated users then you just need to check the user is authenticated. I do this with a method that returns a custom User object if the User is authenticated.

Jamesy continues with more code examples for the Entity Framework, jQuery, and wraps up:

Hopefully I have inspired somebody out there who is interested in this technology to get their hands dirty with OData, EntityFramework, WCF Data Services and jQuery. If nothing else I hope I have pointed someone in the right direction who wants to get started with any or all of the above.

It really is a beautiful implementation and a nice pattern to use for web development giving you both power and elegance.

Before I go a quick parting word on jqGrid which is an excellent jQuery grid and mighty powerful to boot. I may dedicate a whole post to it shortly.

Chris Webb’s The Excel Web App and its missing API post of 7/2/2010 proposes adding the capability to expose and consume data in OData format:

A few weeks ago I was playing around with the Excel Web App and thinking that, while it’s better than the Google equivalent in some ways, the lack of an API seriously limited its usefulness. So I posted a question on the Excel Web App forum asking what the plans for an API were and got the following answer:

Currently there are no APIs exposed for Excel Web App, and we are not sure if this will be available in the future.

This was more than a little disappointing, to say the least… So I replied with reasons why an API would be a good idea and got Jamie to join in (he and I have very similar views on this type of subject) as well. You can read the whole thread here.

In summary, what I’d like to see is the Excel Web App be able to do the following:

- Consume data from multiple data source types, such as OData, and display that data in a table

- Expose the data in a range or a table as an OData feed

I think it would enable all kinds of interesting scenarios where you need data to be both human-readable and also, at the same time, machine-readable: for example, imagine being able to publish data in a spreadsheet online and then have your business partners consume that data in PowerPivot at the click of a button. The posts on the thread go into a lot more detail so I’d encourage you to read it; also Jon Udell picked up the issue and blogged about it here:

http://blog.jonudell.net/2010/06/30/web-spreadsheets-for-humans-and-machines/

And now I need your help: the Excel Web App dev team asked for specific scenarios where an API would prove useful and both Jamie and I provided some, but I think the more we get (and the more people show that they want an API) the better. So if you’ve got some ideas on how you would use an API for the Excel Web App then please post them on the thread! The more noise we make, the more likely it is we can change the dev team’s mind.

Jim O’Neil recaps recent events in his Azure Storage Updates post of 7/1/2010:

This has been an eventful week in terms of cloud storage, with two significant announcements from the Windows Azure team:

On June 25th, SQL Azure Service Update 3 (SU3) became available, with the following features that had been announced at TechEd 2010 (or before)

- Business database size has increased from 10GB to 50GB, priced in increments of 10GB

- Two tiers of the Web version are now available as well (<= 1GB, and <= 5GB)

- Geometry, Geography, and HierarchyID types (based on the SQL CLR) are now supported

On July 1st, users of the Content Delivery Network (CDN) are now being billed for data transfers ($0.15/GB in the US) and transactions ($0.01/10,000). These charges are for the transfer of data from the edge-point to the consumer. Charges for moving the data from the actual Azure data center to the edge-point follow the normal Azure storage rates, which are actually the same as the CDN rates for the US. [Emphasis added.]
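As a rough worked example of the US rates quoted above, the sketch below estimates a CDN charge from a transfer volume and a transaction count. The function name and the sample figures (100 GB, 2,000,000 transactions) are made up for illustration, and the estimate ignores any charges for moving data from the data center to the edge.

```javascript
// US CDN rates quoted in the post.
const CDN_USD_PER_GB = 0.15;
const CDN_USD_PER_10K_TRANSACTIONS = 0.01;

// Estimate edge-to-consumer CDN charges in US dollars.
function estimateCdnCharge(gigabytes, transactions) {
  const transfer = gigabytes * CDN_USD_PER_GB;
  const transactionCost = (transactions / 10000) * CDN_USD_PER_10K_TRANSACTIONS;
  return { transfer, transactionCost, total: transfer + transactionCost };
}

// Hypothetical month: 100 GB served in 2,000,000 requests.
const estimate = estimateCdnCharge(100, 2000000);
console.log(`Transfer:     $${estimate.transfer.toFixed(2)}`);
console.log(`Transactions: $${estimate.transactionCost.toFixed(2)}`);
console.log(`Total:        $${estimate.total.toFixed(2)}`);
```

For this hypothetical month the transfer charge (about $15) dominates the transaction charge (about $2).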

Don’t forget too that SQL Azure Data Sync (announced at TechEd 2010) is available as a developer preview at SQLAzureLabs.com.

On a slightly related note, I just published a screencast of my Northeast Roadshow presentation from March(!) covering Azure storage, SQL Azure, and WCF Data Services – with some updates (of course) given the announcements since then.

Donovan Follette and Paul Stubbs describe 3 Solutions for Accessing SharePoint Data in Office 2010 and downloadable sample code in MSDN Magazine’s July 2010 issue:

Millions of people use the Microsoft Office client applications in support of their daily work to communicate, scrub data, crunch numbers, author documents, deliver presentations and make business decisions. In ever-increasing numbers, many are interacting with Microsoft SharePoint as a portal for collaboration and as a platform for accessing shared data and services.

Some developers in the enterprise have not yet taken advantage of the opportunity to build custom functionality into Office applications—functionality that can provide a seamless, integrated experience for users to directly access SharePoint data from within familiar productivity applications. For enterprises looking at ways to improve end-user productivity, making SharePoint data available directly within Office applications is a significant option to consider.

With the release of SharePoint 2010, there are a number of new ways available to access SharePoint data and present it to the Office user. These range from virtually no-code solutions made possible via SharePoint Workspace 2010 (formerly known as Groove), direct synchronization between SharePoint and Outlook, the new SharePoint REST API and the new client object model. Just as in Microsoft Office SharePoint Server (MOSS) 2007, a broad array of Web services is available in SharePoint 2010 for use as well.

In this article, we’ll describe a couple of no-code solutions and show you how to build a few more-complex solutions using these new features in SharePoint 2010. …

One of these solutions describes the new SharePoint REST API, which implements OData:

Building a Word Add-In

Once you know how to leverage the REST APIs to acquire access to the data, you can surface the data in the client applications where users have a rich authoring experience. For this example, we’ll build a Word add-in and present this data to the user in a meaningful way. This application will have a dropdown list for the course categories, a listbox that loads with courses corresponding to the category selection and a button to insert text about the course into the Word document.

In Visual Studio 2010, create a new Office 2010 Word add-in project in C#.

Now add a new service data source. On the Add Service Reference panel in the wizard, enter the URL for your SharePoint site and append /_vti_bin/listdata.svc to it. For example:

http://intranet.contoso.com/_vti_bin/listdata.svc

After entering the URL, click Go. This retrieves the metadata for the SharePoint site. When you click OK, WCF Data Services will generate strongly typed classes for you by using the Entity Framework. This completely abstracts away the fact that the data source is SharePoint or an OData producer that provides data via the Open Data Protocol. From this point forward, you simply work with the data as familiar .NET classes. …

ASC Associates announced its MUST+Azure Conversion Service on 7/2/2010. The product and service supplement Access SQL Server Migration Wizard and the :

MUST [Migration Upsizing SQL Tool] is designed to follow the way developers think about upsizing and migrating applications. The product is portable and easy to use, all you need is a copy of Microsoft® Access™ 2000, XP/2003 or 2007 to use the tool, and you can use a different copy of the application for each of your projects. Access 97 database can be upsized, but they must first be converted to 2000 or later format.

The product aims to not only identify, but also automatically FIX common problems, such as adding primary keys to Access tables, excluding duplicate and non-selective indexing, identifying and in some cases purifying data for inconsistencies such as required field issues and illegal date issues. Both unsecured and secured databases can be processed, and the tool will create a security mapping to create database roles and schema security on SQL Server.

Planning

- Specify Schemas to split single or multiple databases into manageable chunks.

- Select to create TIMESTAMP columns, create Table Synonyms, Add Keys to un-keyed tables, Add Auditing Fields to Tables, Translate Allow Zero Length field specifications to checks if required, automatic generation of Trigger Code for any Unique Indexes With Ignore Null Problem, automatic generation of Trigger code for Table Validation Rules.

- Specify data type mappings for SQL Server 2000/2005/2008 and customise for Access specific versions.

- Specify patterns to identify tables for exclusion such as "Paste Errors"; subsequently you can review and exclude tables.

- Select multiple databases, Batch and Single Processing modes available.

After you’ve migrated your Access tables to SQL Server, which I assume also works with Access 2010 databases, you can move them to SQL Azure.

Ryan McMinn’s Access 2010 and SQL Azure post of 6/7/2010 shows you how to link Access 2010 front-ends to SQL Azure with the SQL Server Native Client ODBC driver. Ryan recommends using the SQL Server Migration Assistant (SSMA) for Access for the first step:

When migrating your database to SQL Server 2008 or SQL Azure, we recommend that you use the SQL Server Migration Assistant (SSMA) for Access, available from the SQL Server Migration webpage, instead of using the Upsizing Wizard included with Access. This tool is similar to the Upsizing Wizard but is tailored to the version of SQL Server you are targeting. SSMA will not use deprecated features in the migration and may choose to use some of the new features such as new data types included with SQL Server 2008.

In the summer of 2010, the SQL Server team will release an updated version of SSMA 2008 that will support migration of Access databases directly to SQL Azure. Until that time, migrating your database is a simple, three-step process:

- Migrate your database to a non-Azure SQL Server (preferably 2008) database

- Use SQL Server 2008 R2 Management Studio to generate a SQL Azure compatible database creation script from your database

- Run the scripts created in SSMS on the SQL Azure database

The Upsizing Wizard included with Access is a general-purpose tool to help migrate to SQL Server and does not target a specific version of the server. Some of the features used by the Upsizing Wizard have been deprecated in SQL Server 2008 and are used in order to maintain backwards-compatibility with previous versions. Although SQL Server 2008 does not prevent you from using many deprecated features, SQL Azure directly blocks them.

Details about my Microsoft Access 2010 In Depth book from QUE Publishing are here.

ASC Associates also offers a MUST+SP Conversion Service (Migration Upsizing SharePoint Tool) and partners with AccessHosting.com, a firm that provides hosted SharePoint Server Enterprise Edition instances with Access Services for Access 2010 Web Databases.

The MSDN Library updated its SQL Azure landing page in early July 2010:

Can’t help but notice that the MSDN folks are selling display ads on the site.

<Return to section navigation list>

AppFabric: Access Control and Service Bus

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

My Archive and Mine Tweets In Azure Blobs with The Archivist Application from MIX Online Labs post of 7/3/2010 describes The Archivist application developed by MIX Online’s Karsten Januszewski (@irhetoric) and Tim Aidlen (@Systim) and includes links to public #SQLAzure and #OData Twitter archives:

The pair appeared in a 00:22:41 Channel9 The Archivist: Your friendly neighborhood tweet archiver video segment of 6/28/2010, in which they explained the objectives, structure, and data flow of their Azure-based The Archivist application for MIX Online Labs:

The Archivist is a new lab/website from MIX Online that lets people archive, analyze and export tweets. Here’s a little more about why we built The Archivist and who we built it for.

Sounds good, guys. Now, let's go learn about what this really means and how/why Karsten and Tim built this Twitter information analysis and archival service.

Learn more: http://visitmix.com/LabNotes/Intro-To-The-Archivist

The Archivist demonstrates Windows Azure’s potential for ad-hoc cloud data storage, data mining, mashup, and business intelligence (BI) applications. The project offers an API and you can download the source code from the MSDN Code Gallery, which describes the project as:

A Windows Azure service and ASP.NET MVC website that allows you to monitor Twitter, archive tweets, data mine and export archives.

The Archivist’s Architecture

The documentation says little about the project’s architecture, so here’s a data flow diagram I made from Karsten’s whiteboard drawing and narration:

The post continues with an example of a DateTime problem with a downloaded CSV file imported into Excel and then used as a data source for PowerPivot and …

Sample Public Archives

I created a public archive of my tweets (@rogerjenn), as well as tweets with #Azure and #SQLAzure hashtags starting on 7/3/2010. You can access them from these links:

Note: Twitter provides access only to the last two weeks of tweets (less for trending topics) and The Archivist permits a maximum of three simultaneously active archives.

As you can see, I was the Top User of the #SQLAzure and #OData hashtags on 7/3/2010:

If you’re a Twitter user, give The Archivist a try!

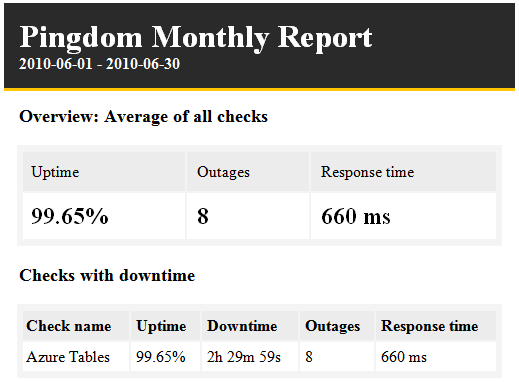

My Azure Single-Instance Uptime Report: OakLeaf Table Test Harness for June 2010 (99.65%) post of 7/3/2010 includes the following Pingdom report:

It appears from six months of testing that uptime percentages of ~99.7% are what you can expect from a single Windows Azure instance running in Microsoft’s South Central US (San Antonio) data center.

Note that the OakLeaf application is not subject to Microsoft’s 99.9% availability Service Level Agreement (SLA), which requires two running instances.
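To put those percentages in perspective, the following sketch converts an uptime percentage into minutes of downtime for a month. The function name is hypothetical; the inputs are the 99.65% June figure and the 99.9% SLA level mentioned above, applied to a 30-day month.

```javascript
// Convert an uptime percentage into minutes of downtime over a period.
function downtimeMinutes(uptimePercent, days) {
  const totalMinutes = days * 24 * 60;
  return (totalMinutes * (100 - uptimePercent)) / 100;
}

// June has 30 days, i.e. 43,200 minutes.
const measuredJune = downtimeMinutes(99.65, 30); // single-instance result
const slaLevel = downtimeMinutes(99.9, 30);      // two-instance SLA level

console.log(`99.65% uptime: about ${measuredJune.toFixed(1)} minutes down`);
console.log(`99.9% uptime:  about ${slaLevel.toFixed(1)} minutes down`);
```

By this arithmetic, 99.65% works out to roughly two and a half hours of downtime in June, against about 43 minutes at the 99.9% SLA level.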

Parse3 announced on 7/3/2010 that it had moved its company Website to Windows Azure:

Windows Azure developer Parse3, the software solutions company based in Warwick, NY is pleased to announce the launch of its own new comprehensive website hosted on the new cloud computing platform from Microsoft. Parse3, well-known as a custom web development company for many national brands with extensive content management needs, has entirely updated its own web presence. The new website is particularly note-worthy for being hosted on the new cloud computing Microsoft Windows Azure platform.

Today’s increased bandwidth has made the shift from company-owned hardware and software to pay-as-you-go cloud services and infrastructure possible. Windows Azure Cloud computing platform is an attractive alternative for improved website performance since it offers virtually unlimited flexibility and scalability. These benefits, already important to many of the industries Parse3 serves, including Retail, Publishing, Media and Entertainment and Financial clients, become immeasurable during times of high volume online usage.

The Parse3 website not only provides a platform for demonstrating the use of the latest Microsoft Windows Azure technology along with other back-end application design capabilities, but it also demonstrates a host of other front-end services now available from Parse3, such as design, web user experience and professional search engine optimization services.

When asked about the use of the Windows Azure environment for the website, Peter Ladka, CEO of Parse3 replies, “We have been working with the Microsoft Windows Azure platform for some leading edge technology companies since it first became available early this year and our comfort with Windows Azure and SQL Azure Database made the decision to cloud-host our own website an easy one.”

Nathesh reported VUE Software Moves Its SaaS Solutions to the Microsoft Windows Azure platform on 7/3/2010:

VUE Software, a provider of industry-specific solutions, stated that they are moving software as a service versions of the VUE Compensation Management and VUE IncentivePoint solutions to the Microsoft Windows Azure platform.

According to the company, VUE IncentivePoint, a Web-based incentive compensation solution with native integration to Microsoft Dynamics CRM, streamlines all aspects of sales performance management for insurance organizations. VUE IncentivePoint is a vastly valuable tool for insurance organizations to manage quotas, territories and incentives for complex distribution channels. Whereas VUE Compensation Management is a commanding, flexible and intuitive tool that makes it simple for insurance organizations to organize and streamline complex commission and incentive programs.

Senior leadership at VUE Software has identified the potential of this solution in promoting cutting-edge practices in health insurance organizations. As a result of which, VUE Software been named a Front Runner on the Microsoft Windows Azure platform. The solution has reduced the upfront software licensing costs and hardware infrastructure, building it simpler carriers and agencies of all sizes to advance their internal processes. …

Vice president at VUE Software, Joseph Westlake, added that the company is excited to meet the requirements of an evolving health insurance market and to play a significant role in their affair growth by as long as VUE Compensation Management on Windows Azure. Their solution, united with the strength of the Microsoft Windows Azure platform, delivers the capability required for organizations to streamline their operations and leverage their broker channels optimally.

Nathesh is a contributing editor for TMCnet.

Jim Nakashima announced Windows Azure Tools Goes International on 7/2/2010:

We’re happy to announce that the June 2010 release of the Windows Azure Tools for Microsoft Visual Studio 2010 is now available in German, French, Japanese, Chinese, Spanish, Italian, Russian and Korean.

On the download page select the language you want to install if it isn’t set to the right default:

A couple of notes:

- In order for the Windows Azure Tools to show up as localized in Visual Studio, the Visual Studio language has to match.

- The localization of the Windows Azure Platform, Developer Portal and SDK is still in the works. Stay tuned.

Cerebrata announced new features in Cloud Storage Studio Version 2010.07.01.00 on 7/1/2010:

Upload data from CSV or delimited flat files into table storage

In this version, we've included a feature which will take a CSV or a delimited flat file and upload it into Azure Table storage. You will be able to define the data types for individual columns in the source file and also be able to specify which columns you want to upload. Click on the link below to watch a small demo video showing this feature. Upload Flat Files in Azure Table Storage DemoGuest OS Information

In this version, we have included a feature where you can view information about the guest operating system in which your roles are running. You can also view a list of available guest operating systems.
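The flat-file upload feature described above amounts to mapping each delimited row onto a typed table entity. The following is a minimal sketch of that mapping in Python, not Cerebrata's implementation: the function name `rows_to_entities`, the type names in `CONVERTERS`, the sample data, and the choice of which columns supply `PartitionKey` and `RowKey` are all assumptions made for illustration. It only builds the entity dictionaries; sending them to Table storage is left out.

```python
import csv
import io
from datetime import datetime

# Hypothetical converters keyed by type names chosen for this sketch.
CONVERTERS = {
    "string": str,
    "int": int,
    "double": float,
    "bool": lambda s: s.strip().lower() in ("true", "1", "yes"),
    "datetime": lambda s: datetime.strptime(s, "%Y-%m-%d"),
}


def rows_to_entities(text, column_types, partition_column, row_column, delimiter=","):
    """Convert delimited text into a list of entity dictionaries.

    Only the columns named in column_types are kept, each converted with
    its declared type. PartitionKey and RowKey are copied as strings from
    the chosen source columns.
    """
    entities = []
    reader = csv.DictReader(io.StringIO(text), delimiter=delimiter)
    for record in reader:
        entity = {
            "PartitionKey": record[partition_column],
            "RowKey": record[row_column],
        }
        for column, type_name in column_types.items():
            entity[column] = CONVERTERS[type_name](record[column])
        entities.append(entity)
    return entities


# Hypothetical pipe-delimited sample file with a header row.
SAMPLE = (
    "Region|OrderId|Amount|Shipped|Notes\n"
    "West|1001|19.95|true|rush\n"
    "East|1002|5.00|false|gift\n"
)

entities = rows_to_entities(
    SAMPLE,
    column_types={"Amount": "double", "Shipped": "bool"},  # Notes is not uploaded
    partition_column="Region",
    row_column="OrderId",
    delimiter="|",
)
for entity in entities:
    print(entity)
```

Declaring the column types separately from the file keeps the parsing step generic: the same function handles comma, tab, or pipe-delimited input, and leaving a column out of `column_types` is how a column is skipped.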

<Return to section navigation list>

Windows Azure Infrastructure

See My Azure Single-Instance Uptime Report: OakLeaf Table Test Harness for June 2010 (99.65%) post of 7/3/2010 in the Live Windows Azure Apps, APIs, Tools and Test Harnesses section above.
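To put an uptime percentage such as the 99.65% reported for June 2010 in concrete terms, it can be converted to downtime. The sketch below is illustrative arithmetic only; the function name is hypothetical, and it assumes the percentage was measured over the whole 30-day month.

```python
def downtime_minutes(uptime_percent, days):
    """Return the minutes of downtime implied by an uptime percentage
    measured over a period of whole days."""
    total_minutes = days * 24 * 60
    return total_minutes * (100.0 - uptime_percent) / 100.0


june_minutes = downtime_minutes(99.65, 30)  # June has 30 days
print(f"{june_minutes:.1f} minutes, or {june_minutes / 60:.2f} hours")
```

Under these assumptions, 99.65% over 30 days corresponds to roughly 151 minutes, or about two and a half hours, of downtime. By the same arithmetic, a 99.9% figure over the same period would allow about 43 minutes.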

Elizabeth White posted The Cloud’s Not the Revolution: Cloud Usage Is on 7/3/2010:

Today it's hard to read any technology news that doesn't include an emerging technology or new products with the cloud moniker attached. We've gone from the vague to absurd, so it's no wonder technology professionals and IT teams are concerned. Coupled with confusing facts about saving time and money, the marketplace has hit a frenetic scale in deciding how to adopt cloud at virtually any level.

In his session at Cloud Expo East, The Planet's Duke Skarda took a contrarian point of view to help his audience discern the differences by previewing the ‘real' adoption curve. He looked at ‘silver bullets' from days past and underscored where cloud adoption makes sense, accompanied by use cases that support his theories.

Click Here to View This Webcast Now!

About the Speaker

Duke Skarda serves as The Planet's vice president of information technology and software development. In this role, he holds responsibilities for the company's entire span of IT activities, including core IT operations, and software development and implementation for customer-facing solutions. Skarda is the resident senior executive in the company's Dallas offices.

Paul Ferrill asserts “Microsoft believes its programming model for the fledgling Azure helps set it apart from cloud competitors” in a preface to his How Microsoft's Azure Stacks up Against Cloud Competitors post of 7/2/2010 to DevX.com:

Understanding what Windows Azure -- Microsoft’s fledgling cloud computing platform -- is all about can be confusing, especially when you compare it to competitive offerings.

In a nutshell, Windows Azure is a cloud-based platform bringing Windows Server and all the underlying support software like the .NET runtime, IIS, and ASP.NET, along with a cloud-based version of SQL Server.

Amazon’s Web Services would be the closest thing to a real competitor if you’re strictly looking at the services each provides. Google’s AppEngine would be another potential competitor, although you are somewhat restricted to a specific set of languages -- Python and Java -- and Google’s BigTable datastore.

David Chappell, principal of Chappell and Associates in San Francisco, defines cloud computing in terms of two categories: cloud applications and cloud platforms. Examples of cloud applications would include Salesforce.com, Microsoft’s Exchange Online, and Google Apps. Cloud platform services include Amazon Web Services (AWS), Google AppEngine, Microsoft’s Windows Azure platform and Salesforce.com’s Force.com. Understanding the differences between the two will help better define the solutions and the problems that each tries to solve.

Microsoft believes its programming model for Azure helps set it apart from cloud competitors.

The fact that you can now begin to develop and deploy enterprise applications with access to a scalable SQL engine is significant for many IT shops firmly entrenched in traditional development processes and tools. Windows Azure delivers a path and the tooling to take those enterprise apps to the cloud.

Moving an existing enterprise application to the cloud seems to be an adventure that more and more companies are willing to try. The kicker comes when you try to move an application with dependencies on a specific database, like Microsoft’s SQL Server or Oracle. Security is another significant issue and presents itself in two different forms -- authentication and authorization. Microsoft’s Windows Azure platform has security support baked in, although it’s tied closely to a Windows Server or Active Directory-based system.

Both Amazon and Microsoft make it possible to pick and choose which services you want to keep on premises and the ones you want to push up to the cloud. If you’re an existing Microsoft shop, you may have a smoother transition going with their services. You’ll still have to do a good bit of architecting from a network and overall system-design perspective.

John R. Rymer, a principal analyst for enterprise development with Forrester, had this perspective:

“Microsoft is trying to create an environment that supports patterns you see in enterprise apps. SQL Azure is just SQL for the cloud or Azure elastic scaling. Most enterprise apps are built with SQL of some kind on the back end. They’re hoping to grab the attention of enterprise developers and business away from Amazon and SalesForce.com,” Rymer said.

Whither VMware

VMware has several product offerings addressing the private cloud and Infrastructure as a Service (IaaS) in vSphere and vCloud. Building your own datacenter for a private cloud is where vSphere comes in. It leverages all of VMware’s virtualization experience along with management tools to deliver computing and storage services able to dynamically scale up or down, depending on demand. That’s essentially what Amazon or Microsoft will do for you with their cloud offerings.

For a public cloud, there’s vCloud. The key to this product is the bridge between public and private resources. vCloud is an IaaS offering providing the connectivity between clouds. It also includes an API providing all the hooks a developer would need to programmatically manage the service. In that sense it’s a lot like Microsoft’s Azure platform from a control perspective. vCloud is still in its infancy but will assuredly be a hit with existing VMware customers.

VMware jumped into the cloud development game last year with the acquisition of SpringSource. Cloud Foundry is another acquisition made by SpringSource, bringing to the table a tool for deploying Java applications based on the Spring Framework into a cloud environment. You can see a strategy starting to develop with Amazon’s EC2 as the primary target for enterprise VMware customers looking to move their Java-based apps up to the cloud.

Which Way to Go?

Choosing a path to take for getting onto the cloud bandwagon depends a lot on what you’re trying to accomplish. Going with Microsoft’s Windows Azure platform can make sense for Microsoft-heavy enterprise IT departments. If you have a smattering of other technologies, such as Linux servers, you’ll find Amazon’s services to be more Linux friendly. You can test all the services out on a small scale before you sink too many corporate resources into the project.

It seems to me that Paul considers virtualization vendors, such as VMware, and Infrastructure as a Service (IaaS) providers (like Amazon Web Services) as competition for the Windows Azure Platform as a Service (PaaS) offering. He is correct when he pits Google App Engine and Force.com as competitors to Azure.

Chris Bowen posted his 00:34:23 Northeast Roadshow: Introduction to Windows Azure video to Channel9 on 6/29/2010:

This screencast with Chris Bowen is a brief introduction to Windows Azure from the developer's perspective. Based on a session given for the Northeast Roadshow ("Windows Azure - What, Why, and a Peek Under the Hood") this walks through the essentials of the Windows Azure platform and how to develop Windows Azure applications.

Outline:

- Introduction

- [3:52] Roles

- [5:42] Instances

- [7:16] Development Overview

- [8:01] Getting the Tools, Web Platform Installer

- [9:57] Demo - First Windows Azure Application

- [14:44] Demo - The Cloud Service Project, Settings

- [16:43] Demo - Running the Windows Azure Application, Developer Fabric UI

- [19:38] Demo - Publishing & Deployment Options

- [21:26] A Look at the Hardware Behind Windows Azure

- [22:38] Windows Azure Architecture, Fabric, Fabric Controller

- [24:06] A Deployment Example

- [27:36] Storage Options

- [29:43] Windows Azure AppFabric

- [31:11] Pricing

- [33:30] Resources & Conclusion

Slides for this session, and other Northeast Roadshow sessions, can be found on the Code Gallery.

The Microsoft Partner Network suggests that you “Partner with Microsoft in Building Cloud Apps” in its Microsoft’s view on IaaS (AWS) and PaaS (Azure) today and tomorrow post of 6/28/2010:

Microsoft’s Sr. Director of Platform Strategy, Tim O’Brien shared a Microsoft vision that describes the benefits of both IaaS and PaaS and how vendors in the future will offer both as a way to best meet customer needs.

A summary was provided by Techworld.

<Return to section navigation list>

Cloud Security and Governance

Charlie Wood claims Backup is even more important in the cloud in this 7/2/2010 post to the Enterprise Irregulars blog:

The cloud is great. Your stuff is stored in only one place. No copies in email attachments, no copies on your laptop, your desktop, and your server. Make a change and there’s no need to send out updates, because it’s always in one place. Right?

Right. But delete it in one place and it’s gone. Gone. You can’t just find a copy in email. Or on your laptop. It was stored in only one place, and now it’s no longer there.

Loosely connected systems are messy, but they have redundancy built in. Highly connected systems are more fragile. They need explicit redundancy, explicit backup systems.

However important backup is in traditional desktop environments, it’s much more important in cloud-based environments.

<Return to section navigation list>

Cloud Computing Events

The Voices of Innovation blog posted Creating Trust for the Government Cloud on 7/2/2010:

Yesterday Scott Charney, Microsoft Corporate VP for Trustworthy Computing, testified at a hearing of the U.S. House Committee on Oversight and Government Reform. The hearing focused on the prospective benefits -- and risks -- of cloud computing. In conjunction with his testimony, Charney posted a blog on Microsoft on the Issues, which is reposted below. What's interesting to note is Charney's emphasis on the roles of both government and the IT sector: Cloud developers and providers must build in security and reliability in their technologies from the get-go. But government needs to provide a framework for handling data issues across borders and for establishing base-level transparency and privacy rules. Now here's the post...

Creating Trust for the Government Cloud by Scott Charney

Corporate Vice President, Trustworthy Computing (originally posted on July 1, 2010):

Today I’m testifying at a hearing of the House Committee on Oversight and Government Reform. The hearing is on the benefits and risks of the federal government’s adoption of cloud computing.

Cloud computing in its many forms creates tremendous new opportunities for cost savings, flexibility, scalability and improved computing performance for government, enterprises and citizens. At the same time, it presents new security, privacy and reliability challenges, which raise questions about functional responsibility (who must maintain controls) and legal accountability (who is legally accountable if those controls fail). Customers, including the government, need to make informed decisions about adoption of the cloud and its various service models because the model that is embraced will entail different allocations of responsibility between the customer and the cloud provider(s).

This shifting responsibility requires that both cloud providers and governments take seriously their distinct and shared responsibilities for addressing the security, privacy and reliability of cloud services. Both customers and cloud providers must understand their respective roles. Customers must be able to communicate their compliance requirements, and cloud providers must be transparent about the controls in place to meet those requirements:

- Build-in Security and Privacy and Reliability: Cloud providers must ensure that they put security, privacy and reliability at the center of the design of their cloud service offerings. For our part, Microsoft uses the Security Development Lifecycle to ensure that both security and privacy are built into the development of our cloud offerings. By building and managing resilient infrastructure with trustworthy people, we can ensure high availability and commit to 99.9 percent uptime and 24x7 support in our service level agreements. The government could – and should – require that its cloud vendors demonstrate secure development practices and transparent response processes for their applications. You can read more about Microsoft’s cloud security efforts here.

- Communicate Clear Requirements: Moving to the cloud does not eliminate federal agencies’ responsibilities for meeting security, privacy and reliability requirements. Agencies must first identify and communicate requirements and expectations prior to transferring the responsibility for these functions to cloud providers. Articulating these requirements is an important step in adapting to the cloud and effectively integrating it into the federal enterprise. The Federal Risk and Authorization Management Program (FedRAMP) is an important initial effort to provide joint security authorization for large outsourced systems.

- Require Privacy Provisions: Government should help improve the transparency of data handling and privacy practices for cloud providers by requiring better notice of how information is collected and used. Congress, the Executive Branch and the Federal Trade Commission should work together to promote transparency around cloud computing providers’ privacy and security practices, empowering users to make informed choices.

- Clarify Jurisdictional Access and Data Handling Rules: The government must help clarify the laws governing data in the cloud. Cloud computing moves data that once lived with the customer to the provider, which creates new questions about how to respond to government and law enforcement requests for information. Congress should clarify and update the Electronic Communications Privacy Act (ECPA) in order to properly account for citizens’ reasonable privacy expectations. In addition, cloud services work best when data is able to flow freely around the globe. Unfortunately, global data flows are not well addressed by current privacy laws in the United States and around the world. The government should work with other countries and with industry to develop a data protection framework that accounts for global data flows created by cloud computing.

In addition to speaking about security, privacy, and reliability, I raised one other issue worthy of note. The mechanisms to provide identity, authentication and attribution in cyberspace do not yet meet the needs of citizens, enterprises or governments in traditional computing environments or for the cloud. This inability to manage online identities well puts computer users at risk and reduces their trust in the IT ecosystem.

The cloud only amplifies the need for more robust identity management to help solve some of the fundamental security and privacy problems inherent in current Internet systems. As people move more and more of their data to the cloud, and share resources across cloud platforms, their credentials are the key to accessing that data. The draft National Strategy for Trusted Identities in Cyberspace, recently released by the White House, represents significant progress to help improve the ability to identify and authenticate the organizations, individuals and underlying infrastructure involved in an online transaction. Government and industry must continue to work together on this initiative, as well as on advancing standards and formats on both a national as well as a global basis, to enable a robust identity ecosystem.

Microsoft is committed to helping the federal government as it looks to adopt cloud computing services. As part of this effort, we recently encouraged industry and policymakers to take action to build confidence in cloud computing, and proposed the Cloud Computing Advancement Act to promote innovation, protect consumers and provide government with new tools to address the critical issues of data privacy and security. In a recent interview on C-SPAN, Microsoft’s general counsel Brad Smith talked about the need for new rules to protect business and consumer information.

I thank Chairman Towns, Ranking Member Issa, Chairwoman Watson, Ranking Member Bilbray and members of the House Committee on Oversight and Government Reform for their leadership on this important issue. I look forward to continuing to work with them, other Members of Congress, the Obama Administration and others in the industry on advancing government adoption of cloud computing. You can read my full testimony here.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Nicole Hemsoth reported EMC Suddenly Cedes the Clouds on 7/4/2010:

There are cool ways to get a certain message across...even when that message is a painful one.

And you know, there are not-so-cool ways to do the exact same thing.

Perhaps EMC, who suddenly announced they were not allowing customers to "leverage" their services--and to get out asap--should have instructed their web designers to remove the banner that proclaims in peaceful hues of lime green and turquoise, “Leverage the POWER of the cloud” considering that it is followed by the announcement:

Dear Atmos Online Customers,

“We are no longer planning to support production usage of Atmos Online. Going forward, Atmos Online will remain available strictly as a development environment to foster adoption of Atmos technology and Atmos cloud services offered by our continuously expanding range of partners who offer production services.”

To summarize the rest: pack up your stuff and go. Because there’s no support for Atmos. We are not providing any SLA or availability guarantees so hurry—migrate anything that matters to one of our partners. Like now. Yes, now.

Oh and…

“You are welcome to continue leveraging Atmos Online for development purposes as needed. These changes also do not affect our commitment to your success”

Well, now that you put it that way, EMC, here just before the fourth of July when your users were all nice and prepared for hot dogs and fireworks and now will instead be engaged in frantic migration attempts with minimal support, that makes it all seem a little better. Really.

Perspective on EMC’s Decision—and the Implications

There is no question that this is fodder for arguments against clouds as reliable and cost-effective paradigm shifts for IT since from here, it certainly looks like there has been no warning. If it was any other company, perhaps one that wasn't as well known, the fear would be that they would disappear altogether--data in tow.

Info-Tech Research Group put EMC in their ranks of Rising Stars in March because, according to Info-Tech’s Research Analyst Laura Hansen-Kohls, at the time they seemed to have great promise. In an interview on Friday with HPC in the Cloud, Hansen-Kohls stated, “when we spoke with them before they were named a rising star, they were a major storage vendor so they had the market share edge that was on par with someone like Amazon would have, but also, at least when we spoke to them, they seemed to be making a significant investment in the cloud even though it was clear they didn’t have a defined strategy. Still, we felt that once they got the marketing push underway and communicated more clearly they could have competed with Amazon but from what we understand now, the competition with their partners was too direct so they decided to exit.”

While this is a perfectly valid and easy to understand reason for EMC’s sudden decision to pull all support and leave customers hanging without notice, it seems like there has to be something else going on here. What could have caused a company that has spent a significant amount of money and effort getting the word out about Atmos to abandon it in a way that leaves me looking for stronger phrases than “rudely abrupt,” if there are any? Some have suggested that the costs suddenly became too heavy to bear, and others have contended that agreements with their partners led to an immediate arrangement for them to stop competing or suffer the consequences. No one from EMC has responded to my queries, and for those who did receive responses, the replies don’t go far beyond the cryptic letter on EMC’s website.

An Important Reminder

Hansen-Kohls suggests that this news does not bode well for the long-term perception of clouds, especially for smaller enterprises. She stated, “When Amazon got into cloud, for example, they did it because they had all this excess capacity and they could rent it out for a price without any data center or other major capital investment—when you’ve got vendors like EMC, they might not have that capacity just sitting around to sell so it could be that their investment was costing more than they were actually making—this is conjecture—it could be the revenue stream in wasn’t enough to offset the cost.”

In other words, it is critical to evaluate the business model of any cloud vendor before taking the plunge—not just what their existing SLAs seem to represent. If it isn’t clear that they have the resources to begin with and those resources are being culled sustainably, then it is not a good idea. Period.

When I asked Laura Hansen-Kohls whether or not there will likely be other companies with similar offerings jumping ship and taking the customer life rafts with them, she paused for quite some time before responding (although to be fair, I did catch her off guard). She replied, “Most of the other vendors we’ve spoken with, GoGrid and Joyent for example, have a clear vision of what they want to achieve and have a plan to get it. Joyent will admit to this readily, but they’ve also suffered from an unclear message. They’re going through a rebranding process and are starting to pick up the pace so I think they’re aware of some of the misconceptions that float around in the cloud and the source of confusion that is caused by the marketing terminology since marketing ran away with the term before the tech was refined. Joyent could have had a similar problem to EMC but they’re picking up fast enough and gaining ground.”

The main message here, to quote Hansen-Kohls, is that “knowing your risk tolerance when you go into the cloud is critical. If you’re putting data in the cloud you can’t live without, such as in a case like this, you have to know what your risk tolerance is for losing that data for a certain amount of time. If there are compliance restrictions, for instance, they can’t tolerate this at all. This is a real kick in the argument against moving into the cloud. EMC is not making any promises how long they’ll keep the data there.”

Like many others who read the news, which was so thoughtlessly timed with the closing bell on a pre-holiday Friday in the United States, Hansen-Kohls’s response to the customer email cited above was, “I read it and I was shocked. No SLA, no production--get your data out because there’s no guarantee it will be here. It was so sudden. There was no forewarning, thus no giving anyone time to transition. The enterprises who move to the cloud need a contingency plan so they can get their data out when something like this happens.”

James Hamilton’s Hadoop Summit 2010 of 7/3/2010 begins:

I didn’t attend the Hadoop Summit this year or last but was at the inaugural event back in 2008 and it was excellent. This year, the Hadoop Summit 2010 was held June 29 again in Santa Clara. The agenda for the 2010 event is at: Hadoop Summit 2010 Agenda. Since I wasn’t able to be there, Adam Gray of the Amazon AWS team was kind enough to pass on his notes and let me use them here:

Key Takeaways

- Yahoo and Facebook operate the world’s largest Hadoop clusters, 4,000/2,300 nodes with 70/40 petabytes respectively. They run full cluster replicas to assure availability and data durability.

- Yahoo released Hadoop security features with Kerberos integration which is most useful for long running multitenant Hadoop clusters.

- Cloudera released a paid enterprise version of Hadoop with cluster management tools and several DB connectors and announced support for Hadoop security.

- Amazon Elastic MapReduce announced expand/shrink cluster functionality and paid support.

- Many Hadoop users use the service in conjunction with NoSQL DBs like HBase or Cassandra.

Keynotes

Yahoo had the opening keynote with talks by Blake Irving, Chief Products Officer, Shelton Shugar, SVP of Cloud Computing, and Eric Baldeschwieler, VP of Hadoop. They talked about Yahoo’s scale, including 38k Hadoop servers, 70 PB of storage, and more than 1 million monthly jobs, with half of those jobs written in Apache Pig. Further their agility is improving significantly despite this massive scale—within 7 minutes of a homepage click they have a completely reconfigured preference model for that user and an updated homepage. This would not be possible without Hadoop. Yahoo believes that Hadoop is ready for enterprise use at massive scale and that their use case proves it. Further, a recent study found that 50% of enterprise companies are strongly considering Hadoop, with the most commonly cited reason being agility. Initiatives over the last year include: further investment and improvement in Hadoop 0.20, integration of Hadoop with Kerberos, and the Oozie workflow engine.

Next, Peter Sirota gave a keynote for Amazon Elastic MapReduce that focused on how the service makes combining the massive scalability of MapReduce with the web-scale infrastructure of AWS more accessible, particularly to enterprise customers. He also announced several new features including expanding and shrinking the cluster size of running job flows, support for spot instances, and premium support for Elastic MapReduce. Further, he discussed Elastic MapReduce’s involvement in the ecosystem including integration with Karmasphere and Datameer. Finally, Scott Capiello, Senior Director of Products at Microstrategy, came on stage to discuss their integration with Elastic MapReduce.

Cloudera followed with talks by Doug Cutting, the creator of Hadoop, and Charles Zedlewski, Senior Director of Product Management. They announced Cloudera Enterprise, a version of their software that includes production support and additional management tools. These tools include improved data integration and authorization management that leverages Hadoop’s security updates. They also demoed a web UI for using these management tools.

The final keynote was given by Mike Schroepfer, VP of Engineering at Facebook. He talked about Facebook’s scale with 36 PB of uncompressed storage, 2,250 machines with 23k processors, and 80-90 TB growth per day. Their biggest challenge is in getting all that data into Hadoop clusters. Once the data is there, 95% of their jobs are Hive-based. In order to ensure reliability they replicate critical clusters in their entirety. As far as traffic, the average user spends more time on Facebook than the next 6 web pages combined. In order to improve user experience Facebook is continually improving the response time of their Hadoop jobs. Currently updates can occur within 5 minutes; however, they see this eventually moving below 1 minute. As this is often an acceptable wait time for changes to occur on a webpage, this will open up a whole new class of applications.

Discussion Tracks

After lunch the conference broke into three distinct discussion tracks: Developers, Applications, and Research. These tracks had several interesting talks including one by Jay Kreps, Principal Engineer at LinkedIn, who discussed LinkedIn’s data applications and infrastructure. He believes that their business data is ideally suited for Hadoop due to its massive scale but relatively static nature. This supports large amounts of computation being done offline. Further, he talked about their use of machine learning to predict relationships between users. This requires scoring 120 billion relationships each day using 82 Hadoop jobs. Lastly, he talked about LinkedIn’s in-house developed workflow management tool, Azkaban, an alternative to Oozie.

Eric Sammer, Solutions Architect at Cloudera, discussed some best practices for dealing with massive data in Hadoop. Particularly, he discussed the value of using workflows for complex jobs, incremental merges to reduce data transfer, and the use of Sqoop (SQL to Hadoop) for bulk relational database imports and exports. Yahoo’s Amit Phadke discussed using Hadoop to optimize online content. His recommendations included leveraging Pig to abstract out the details of MapReduce for complex jobs and taking advantage of the parallelism of HBase for storage. There was also significant interest in the challenges of using Hadoop for graph algorithms including a talk that was so full that they were unable to let additional people in.

Elastic MapReduce Customer Panel

The final session was a customer panel of current Amazon Elastic MapReduce users chaired by Deepak Singh. Participants included: Netflix, Razorfish, Coldlight Solutions, and Spiral Genetics. Highlights include:

- Razorfish discussed a case study in which a combination of Elastic MapReduce and Cascading allowed them to take a customer to market in half the time with a 500% return on ad spend. They discussed how using EMR has given them much better visibility into their costs, allowing them to pass this transparency on to customers.

- Netflix discussed their use of Elastic MapReduce to set up a Hive-based data warehousing infrastructure. They keep a persistent cluster with data backups in S3 to ensure durability. Further, they also reduce the amount of data transfer through pre-aggregation and preprocessing of data.

- Spiral Genetics talked about how they had to leverage AWS to reduce capital expenditures. By using Amazon Elastic MapReduce they were able to set up a running job in 3 hours. They are also excited to see spot instance integration.

- Coldlight Solutions said that buying $1/2M in infrastructure wasn’t even an option when they started. Now it is, but they would rather focus on their strength, machine learning, and Amazon Elastic MapReduce allows them to do this.
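The pre-aggregation that Netflix described can be illustrated with a small sketch. This is not Netflix's code; the event format, the function names, and the sample data are hypothetical. The idea shown is that each source sums its own records per key first, so fewer records need to be transferred before the final merge.

```python
from collections import Counter


def pre_aggregate(events):
    """Collapse one source's (key, count) events into one total per key."""
    totals = Counter()
    for key, count in events:
        totals[key] += count
    return totals


def merge(partials):
    """Combine the per-source totals into the final result."""
    result = Counter()
    for partial in partials:
        result.update(partial)  # Counter.update adds counts per key
    return result


# Hypothetical raw events from two sources: (title, plays)
source_a = [("title-1", 1), ("title-2", 1), ("title-1", 1), ("title-1", 1)]
source_b = [("title-2", 1), ("title-2", 1), ("title-3", 1)]

partials = [pre_aggregate(source_a), pre_aggregate(source_b)]
raw_records = len(source_a) + len(source_b)
sent_records = sum(len(partial) for partial in partials)
final = merge(partials)

print(f"records without pre-aggregation: {raw_records}")
print(f"records after pre-aggregation: {sent_records}")
print(dict(final))
```

In this toy data set, seven raw records shrink to four partial totals before the merge, and the final counts are unchanged. The saving grows with the number of repeated keys per source.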

Liz MacMillan reported “At Cloud Expo East, Terremark VP Chris Drumgoole presented a practical guide to evaluating enterprise clouds and cloud providers” in her Parting the Clouds: Evaluating Clouds & Cloud Providers for the Enterprise post of 7/2/2010:

Go beyond the marketing hype, the brands and the buzzwords, and learn how differences in architecture, infrastructure, security and design can have significant impact on your ability to take advantage of the cost-effectiveness and agility of infrastructure-as-a-service without compromising availability, performance and compliance.

In his general session at the 5th International Cloud Expo, Terremark VP Chris Drumgoole discussed how leading enterprises and government agencies are taking advantage of the power and flexibility offered by enterprise cloud computing architecture and presented a practical guide to evaluating enterprise clouds and cloud providers.

Click Here to View This Session Now!

About the Speaker: Chris Drumgoole is Senior Vice President of Client Services at Terremark where he is responsible for customer service, sales enablement and support. Previously, he served as SVP Product Development and Engineering. …

<Return to section navigation list>
