Windows Azure and Cloud Computing Posts for 7/1/2010+

Windows Azure, SQL Azure Database and related cloud computing topics now appear in this daily series.

- Update: Articles dated 7/1/2010 and earlier added after 7/2/2010 1:00 PM PDT are marked •

- Update 7/3/2010: Missing images replaced. Clicking J. D. Meier’s images no longer returns 404s.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated in June 2010 for the January 4, 2010 commercial release.

Azure Blob, Drive, Table and Queue Services

• Julie Lerman (@julielerman) wrote “Windows Azure Table storage causes a lot of head scratching among developers” as the deck for her July 2010 Windows Azure Table Storage – Not Your Father’s Database “DataPoints” column for MSDN Magazine:

Most of their experience with data storage is with relational databases that have various tables, each containing a predefined set of columns, one or more of which are typically designated as identity keys. Tables use these keys to define relationships among one another.

Windows Azure stores information a few ways, but the two that focus on persisting structured data are SQL Azure and Windows Azure Table storage. The first is a relational database and aligns fairly closely with SQL Server. It has tables with defined schema, keys, relationships and other constraints, and you connect to it using a connection string just as you do with SQL Server and other databases.

Windows Azure Table storage, on the other hand, seems a bit mysterious to those of us who are so used to working with relational databases. While you’ll find many excellent walk-throughs for creating apps that use Windows Azure Table storage, many developers still find themselves forced to make leaps of faith without truly understanding what it’s all about.

This column will help those stuck in relational mode bridge that leap of faith with solid ground by explaining some core concepts of Windows Azure Table storage from the perspective of relational thinking. Also, I’ll touch on some of the important strategies for designing the tables, depending on how you expect to query and update your data.

Storing Data for Efficient Retrieval and Persistence

By design, Windows Azure Table services provides the potential to store enormous amounts of data, while enabling efficient access and persistence. The services simplify storage, saving you from jumping through all the hoops required to work with a relational database—constraints, views, indices, relationships and stored procedures. You just deal with data, data, data. Windows Azure Tables use keys that enable efficient querying, and you can employ one—the PartitionKey—for load balancing when the table service decides it’s time to spread your table over multiple servers. A table doesn’t have a specified schema. It’s simply a structured container of rows (or entities) that doesn’t care what a row looks like. You can have a table that stores one particular type, but you can also store rows with varying structures in a single table, as shown in Figure 1.

Figure 1 A Single Windows Azure Table Can Contain Rows Representing Similar or Different Entities
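To make the schema-less idea concrete, here is a minimal Python sketch that is not from Julie's column and does not call the real table service. The `InMemoryTable` class and the sample property names are hypothetical; the only things borrowed from the text are the PartitionKey and RowKey properties and the point that rows of different shapes can share one table.

```python
from collections import defaultdict


class InMemoryTable:
    """Toy stand-in for a schema-less table keyed by (PartitionKey, RowKey)."""

    def __init__(self):
        # partition key -> {row key -> entity dict}
        self._partitions = defaultdict(dict)

    def insert(self, entity):
        pk, rk = entity["PartitionKey"], entity["RowKey"]
        if rk in self._partitions[pk]:
            raise KeyError(f"entity ({pk}, {rk}) already exists")
        self._partitions[pk][rk] = dict(entity)

    def get(self, partition_key, row_key):
        # Point lookup: both keys are known, so only one partition is touched.
        return self._partitions[partition_key][row_key]

    def query_partition(self, partition_key):
        # Partition scan: every row that shares one PartitionKey.
        return list(self._partitions[partition_key].values())


table = InMemoryTable()
# Two different "shapes" of entity live side by side in the same table.
table.insert({"PartitionKey": "customer-42", "RowKey": "profile", "Name": "Ana", "Country": "PT"})
table.insert({"PartitionKey": "customer-42", "RowKey": "order-0001", "Total": 19.99})
table.insert({"PartitionKey": "customer-7", "RowKey": "profile", "Name": "Bo"})

rows = table.query_partition("customer-42")
print(len(rows), "rows in partition customer-42")
```

Grouping a customer's profile and orders under one PartitionKey is only one possible design; the column discusses how the choice depends on the queries and updates you expect.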

Julie continues with these topics:

- It All Begins with Your Domain Classes

- PartitionKeys and RowKeys Drive Performance and Scalability

- Digging Deeper into PartitionKeys and Querying

- Parallel Querying for Full Table Scans

- More Design Considerations for Querying

- Rethinking Relationships

and concludes with:

A Base of Understanding from Which to Learn More

Windows Azure Tables live in the cloud, but for me they began in a fog. I had a lot of trouble getting my head wrapped around them because of my preconceived understanding of relational databases. I did a lot of work (and pestered a lot of people) to enable myself to let go of the RDBMS anchors so I could embrace and truly appreciate the beauty of Windows Azure Tables. I hope my journey will make yours shorter.

There’s so much more to learn about Windows Azure Table services. The team at Microsoft has some great guidance in place on MSDN. In addition to the PDC09 video mentioned earlier, check this resource page on the Windows Azure Storage team blog at blogs.msdn.com/windowsazurestorage/archive/2010/03/28/windows-azure-storage-resources. The team continues to add detailed, informative posts to the blog, and I know that in time, or even by the time this column is published, I’ll find answers to my myriad questions. I’m looking forward to providing some concrete examples in a future Data Points column.

Steve Marx and Ryan Dunn presented on 7/1/2010 another episode in Channel9’s Cloud Cover series: Cloud Cover Episode 17 - Using Queues (00:56:18):

Join Ryan and Steve each week as they cover the Microsoft cloud. You can follow and interact with the show at @cloudcovershow.

In this episode:

- Learn about the Queue API in Windows Azure.

- Discover some tips and tricks for how to use Queues effectively.

- Listen as we discuss best practices when using Queues.

Show Links:

- Troubleshooting and Optimizing Queries in SQL Azure

- SQL Azure Horizontal Partitioning - Part 2 of 1

- SQL Azure SU3 is Live

- WIF and Windows Azure

- Windows Azure Articles from the Trenches

- Handling Dequeue Errors with Queues

Steve Marx explains Deleting Windows Azure Queue Messages: Handling Exceptions in this 7/1/2010 post that augments the latest Cloud Cover episode:

This week’s episode of Cloud Cover [Queues, live 7/2/2010 AM] is all about Windows Azure queues. It’s a bit of a long episode, but there’s a lot of interesting technical content in there. During the show, a detail came up as Ryan and I were discussing queues and concurrency. At the time, I wasn’t sure exactly what guidance to give, so I committed to following up before the show went live.

To understand the situation, remember that Windows Azure employs reliable queueing, meaning that it guarantees no message is lost without being handled by a consumer. That means that consuming queue messages is a two step process. First, the consumer dequeues the message, specifying a visibility timeout. At this point, the message is invisible and can’t be retrieved by other consumers. When the consumer is finished with the message, it deletes it. If, however, the consumer is unable to finish processing the message, the visibility timeout will expire, and the message will reappear on the queue. This is what guarantees the message will eventually be handled.

This leads us to the situation Ryan and I were discussing on the show. The scenario is as follows:

- Instance #1 dequeues a message and starts working on it.

- The message’s visibility timeout expires, making it visible again on the queue.

- Instance #2 dequeues the message and starts working on it.

- Instance #1 finishes working on the message and tries to delete it.

At that last step, an error will be returned from the queue service. This is because the first instance no longer “owns” the message; it’s already been delivered to another instance. (The underlying mechanism is a pop receipt which is invalidated by the second dequeueing of the message.)
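The scenario above can be replayed with a small in-memory simulation. This is a Python sketch under stated assumptions, not the Windows Azure queue API: `ToyQueue`, its methods and the `MessageNotFound` exception class are hypothetical names, and time is passed in explicitly so the example runs without sleeping. It only illustrates how a second dequeue invalidates the first consumer's pop receipt.

```python
import itertools


class MessageNotFound(Exception):
    """Raised when a delete uses a pop receipt that is no longer valid."""


class ToyQueue:
    def __init__(self):
        self._messages = {}  # message id -> {"body", "visible_at", "pop_receipt"}
        self._ids = itertools.count(1)
        self._receipts = itertools.count(1)

    def put(self, body):
        msg_id = next(self._ids)
        self._messages[msg_id] = {"body": body, "visible_at": 0.0, "pop_receipt": None}
        return msg_id

    def get(self, now, visibility_timeout):
        for msg_id, m in self._messages.items():
            if m["visible_at"] <= now:
                m["visible_at"] = now + visibility_timeout
                # A fresh receipt replaces (and so invalidates) any earlier one.
                m["pop_receipt"] = next(self._receipts)
                return msg_id, m["body"], m["pop_receipt"]
        return None  # nothing visible right now

    def delete(self, msg_id, pop_receipt):
        m = self._messages.get(msg_id)
        if m is None or m["pop_receipt"] != pop_receipt:
            raise MessageNotFound(msg_id)
        del self._messages[msg_id]


q = ToyQueue()
q.put("resize image 17")

first = q.get(now=0.0, visibility_timeout=30.0)    # instance #1 dequeues
hidden = q.get(now=10.0, visibility_timeout=30.0)  # message is still invisible
second = q.get(now=31.0, visibility_timeout=30.0)  # timeout expired, instance #2 dequeues

try:
    q.delete(first[0], first[2])                   # instance #1 finishes too late
    stale_delete_failed = False
except MessageNotFound:
    stale_delete_failed = True

q.delete(second[0], second[2])                     # instance #2 still owns the message
print("stale delete rejected:", stale_delete_failed)
```

In a real worker, hitting this error is mostly a signal that the visibility timeout is shorter than the processing time.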

The question I couldn’t answer on the fly during the show was how to accurately detect this error and handle it in code. After some discussion with the storage team, this is the .NET code I’m recommending people use to identify this error:

    try
    {
        q.DeleteMessage(msg);
    }
    catch (StorageClientException ex)
    {
        if (ex.ExtendedErrorInformation.ErrorCode == "MessageNotFound")
        {
            // pop receipt must be invalid
            // ignore or log (so we can tune the visibility timeout)
        }
        else
        {
            // not the error we were expecting
            throw;
        }
    }

It would be nice if the storage client library included a constant for “MessageNotFound,” as it does for a number of other common error codes, but we can be confident that’s the right string by consulting the documentation on Queue Service Error Codes.

Note that I’m not just checking for the HTTP 404 status code, because that could mean some other things (like an incorrect queue name). Looking for the “MessageNotFound” error code is more specific and thus better to use.

Now go watch the latest Cloud Cover episode!

<Return to section navigation list>

SQL Azure Database, Codename “Dallas” and OData

• Wayne Walter Berry explains Finding Circular Foreign Key References in this 7/1/2010 post to the SQL Azure Team blog:

In the world of relational databases circular references are schema structures where foreign keys relating the tables create a loop. Circular references cause special types of issues when trying to synchronize two relational databases where [referential integrity of] the foreign keys are enforced. Because of this issue, database schemas that contain circular references are restricted in the tools that can be used when synchronizing and replicating the database. This article will explain circular references and demonstrate a Transact-SQL script for determining if your database has a circular reference.

What is a Circular Reference?

Foreign keys create database-enforced integrity constraints. These constraints ensure that a row of data exists in one table before another table can reference it. They also prevent a dependent row from being deleted that another row references. In Figure 1 we see a simple foreign key between Address table and StateProvince table in the Adventure Works database.

Figure 1

A circular reference is one or more tables where the foreign keys create a loop. Figure 2 is an example.

Figure 2

In this case the City table contains a reference to the author; it is the author that wrote the description for the city. The Author table has a reference to the city, because each author lives in a city. So which came first, the city or the author? In all cases with circular references one of the foreign key columns must accept a null value. This allows the data to be inserted in 3 passes:

- An insert into the table referenced by the nullable foreign key with the key set to null.

- An insert into the table with the non-null foreign key.

- An update to modify the nullable foreign key to reference the row inserted in step 2.

A circular reference is not limited to two tables; it might involve many tables, all bound together in one big circle.
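The three passes can be tried out locally. The following Python sketch uses the standard-library sqlite3 module rather than SQL Server or SQL Azure, and the column names (`DescriptionAuthorId`, `CityId` and so on) are assumptions made up for the illustration; only the City and Author tables and the nullable/non-null split come from the article.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite does not enforce foreign keys by default
conn.execute("""
    CREATE TABLE City (
        CityId INTEGER PRIMARY KEY,
        Name TEXT NOT NULL,
        DescriptionAuthorId INTEGER NULL REFERENCES Author(AuthorId)
    )""")
conn.execute("""
    CREATE TABLE Author (
        AuthorId INTEGER PRIMARY KEY,
        Name TEXT NOT NULL,
        CityId INTEGER NOT NULL REFERENCES City(CityId)
    )""")

# Inserting the author first fails: the city it must reference does not exist yet.
try:
    conn.execute("INSERT INTO Author (AuthorId, Name, CityId) VALUES (1, 'Ann', 1)")
    author_first_rejected = False
except sqlite3.IntegrityError:
    author_first_rejected = True
conn.rollback()

# Pass 1: insert the row that holds the nullable foreign key, with the key set to NULL.
conn.execute("INSERT INTO City (CityId, Name, DescriptionAuthorId) VALUES (1, 'Seattle', NULL)")
# Pass 2: insert the row whose foreign key is NOT NULL; the row it references now exists.
conn.execute("INSERT INTO Author (AuthorId, Name, CityId) VALUES (1, 'Ann', 1)")
# Pass 3: close the loop by pointing the nullable key at the row from pass 2.
conn.execute("UPDATE City SET DescriptionAuthorId = 1 WHERE CityId = 1")
conn.commit()

row = conn.execute(
    "SELECT c.Name, a.Name FROM City c JOIN Author a ON a.AuthorId = c.DescriptionAuthorId"
).fetchone()
print(row)
```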

Self-Referencing Tables

A special case circular reference is the self-referencing table. This is a table that has a foreign key column that references its own primary key. An example is a human resource schema that tracks employees and their bosses. In the employee table, there is a foreign key column called boss that references the primary key column in the employee table. Self-referencing tables always have a foreign key column which is nullable and at least one null exists. In the example above it would be the CEO; since he doesn’t have a boss, his boss column is null.

Synchronizing Schemas with Circular References

Tables that are not involved in a circular reference are easy to synchronize: you completely update the table that has no dependencies first, then update the tables with foreign key dependencies on it. In Figure 1 you would update the StateProvince table, then the Address table. This explanation is simplified; for example, the deletes are done in the reverse order. If the tables have no circular references you can synchronize them table by table if you know their dependency order.
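Finding that dependency order is a topological sort. Here is a minimal Python sketch (it needs Python 3.9 or later for the standard-library graphlib module); the `sync_order` helper and the hand-written dependency dictionaries are hypothetical, standing in for foreign key metadata you would read from the database.

```python
from graphlib import CycleError, TopologicalSorter


def sync_order(depends_on):
    """Return tables ordered so every referenced table comes before its dependents."""
    return list(TopologicalSorter(depends_on).static_order())


# Each table maps to the set of tables its foreign keys reference.
acyclic = {"Address": {"StateProvince"}, "StateProvince": set()}
order = sync_order(acyclic)
print(order)  # inserts and updates go in this order, deletes in reverse

# With a circular reference no table-by-table order exists.
cyclic = {"City": {"Author"}, "Author": {"City"}}
try:
    sync_order(cyclic)
    has_table_order = True
except CycleError:
    has_table_order = False
print("table-by-table order exists:", has_table_order)
```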

Synchronizing tables with circular references is much harder, because you have to update the tables row by row, jumping back and forth between the tables, inserting the nullable foreign key with nulls first, then updating them later. Again this is a simplified explanation; the point is that you can’t update the tables in a serial order if there are circular references.

There are really only a few ways to synchronize a database that contains tables with circular references:

- Perform a transaction based replication, much like SQL Server replication, which updates, inserts, and deletes the data in the same serial order as the data was changed in the source database

- Set the database into read-only mode, bulk copy the rows over to the destination database with the same primary keys, without check constraints on. Once you have moved all the tables, the source database can be taken out of read-only mode. I blog about doing this with bcp utility here.

- Deduce the possible orders of inserts, updates, and deletes row by row based on the dependencies and recreate those on the destination database. This is comparable to backwards engineering the transactions it took to update, insert and delete the data.

Detecting Circular References

The Transact-SQL script below uses a recursive cursor to detect if there are any circular references in your database schema. It can be run on your SQL Server database before you try to synchronize it with SQL Azure, or you can run it on your SQL Azure database. You can run it in the Query Window of SQL Server Management Studio; the output will be displayed in the Messages section.

If you have circular references the output will look like this:

    dbo.City -> dbo.Author -> dbo.City
    dbo.Division -> dbo.Author -> dbo.City -> dbo.County -> dbo.Region -> dbo.Image -> dbo.Division
    dbo.State -> dbo.Image -> dbo.Area -> dbo.Author -> dbo.City -> dbo.County -> dbo.Region -> dbo.State
    dbo.County -> dbo.Region -> dbo.Author -> dbo.City -> dbo.County
    dbo.Image -> dbo.Area -> dbo.Author -> dbo.City -> dbo.County -> dbo.Region -> dbo.Image
    dbo.Location -> dbo.Author -> dbo.City -> dbo.County -> dbo.Region -> dbo.Image -> dbo.Location
    dbo.LGroup -> dbo.LGroup
    dbo.Region -> dbo.Author -> dbo.City -> dbo.County -> dbo.Region
    dbo.Author -> dbo.City -> dbo.Author
    dbo.Area -> dbo.Author -> dbo.City -> dbo.County -> dbo.Region -> dbo.Image -> dbo.Area

Each line is a circular reference, with a link list of tables that create the circle. The Transact-SQL script to detect circular references [has not been copied for brevity]. You can … [download it here].
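Since Wayne's script isn't reproduced here, the following Python sketch shows the general idea with a depth-first search over a foreign key graph. It is not his Transact-SQL and makes no claim to match it: the `find_cycles` function and the sample `references` dictionary are hypothetical, and unlike the output above it reports each loop only once, starting from the alphabetically first table in the loop.

```python
def find_cycles(references):
    """Return each simple foreign-key loop once, as a list of table names."""
    cycles = []

    def walk(start, table, path):
        for target in sorted(references.get(table, ())):
            if target == start:
                cycles.append(path + [start])       # the loop closes back on its start
            elif target > start and target not in path:
                # Only visit tables that sort after the start, so a loop is
                # found just once, from its alphabetically first table.
                walk(start, target, path + [target])

    for start in sorted(references):
        walk(start, start, [start])
    return cycles


# Each table maps to the set of tables its foreign keys reference.
references = {
    "dbo.City": {"dbo.Author"},
    "dbo.Author": {"dbo.City"},
    "dbo.LGroup": {"dbo.LGroup"},          # self-referencing table
    "dbo.Address": {"dbo.StateProvince"},  # ordinary, non-circular reference
}

lines = [" -> ".join(cycle) for cycle in find_cycles(references)]
for line in lines:
    print(line)
```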

Wayne Walter Berry begins a new SQL Azure series with PowerPivot for the DBA: Part 1 of 7/1/2010:

In this article I am going to tie some simple terminology and methodology in business intelligence and PowerPivot with Transact-SQL – bring it down to earth for the DBA. You don’t need to know Transact-SQL to build awesome reports with PowerPivot; however, if you already do, these articles will attempt to bridge the learning gap.

History

I have been hearing the term Business Intelligence (BI) thrown around for a while, mostly from executives wanting their companies to start “doing” business intelligence. I have also been avoiding learning about business intelligence assuming it involved complicated mathematics and a whole new set of terminology – putting off what appeared to be a big learning curve until I had more time. I prefer to learn new technology by building on what I already know; I really wanted to relate business intelligence to my other SQL Server skills, including Transact-SQL. I finally took the time to learn the basics of business intelligence and realized that there is a close tie in with my Transact-SQL skills.

PowerPivot

PowerPivot, the Excel 2010 extension, is a great way to get started with business intelligence. It lets you experiment with relationships and report building. With it you can quickly and easily prototype reports and investigate data issues before you commit to an ERD, server setup, etc.

Let’s get going and do a simple example: Connect to SQL Azure and the Adventure Works database, then import these tables:

- Sales.SalesOrderHeader

- Sales.SalesOrderDetail

- Production.Product

- Production.ProductSubcategory

- Production.ProductCategory

I covered how to connect to SQL Azure and import tables using PowerPivot in this blog post. The next step is to create a PivotTable. To do that go to the PowerPivot ribbon bar in Excel and choose PivotTable.

When the PivotTable and the docked PowerPivot Field List window appear, add the LineTotal column from the SalesOrderDetail table to the Values section of the field list. This gives you an uninteresting PivotTable that looks like the one below.

This is the same as running this SELECT statement in Transact-SQL:

    SELECT SUM(LineTotal) FROM Sales.SalesOrderDetail

Now that we have gotten the “Hello World” example out of the way, add the ProductCategory.Name column to the Row Labels. Doing this in PowerPivot is just like creating a SELECT statement in Transact-SQL with a GROUP BY clause.

You would get the same output (without the Grand Total) in the PowerPivot sample above by executing this Transact-SQL statement:

    SELECT ProductCategory.Name, SUM(LineTotal)
    FROM Sales.SalesOrderDetail
    INNER JOIN Production.Product
        ON Product.ProductID = SalesOrderDetail.ProductID
    INNER JOIN Production.ProductSubcategory
        ON Product.ProductSubcategoryID = ProductSubcategory.ProductSubcategoryID
    INNER JOIN Production.ProductCategory
        ON ProductSubcategory.ProductCategoryID = ProductCategory.ProductCategoryID
    GROUP BY ProductCategory.Name

...
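If you don't have Adventure Works handy, the relationship between the grand total and the grouped query can be checked on toy data. This Python sketch uses the standard-library sqlite3 module with made-up rows and cut-down tables (no Sales or Production schemas, and only the columns the joins need), so it is an illustration of the query shape and nothing more.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE ProductCategory (ProductCategoryID INTEGER PRIMARY KEY, Name TEXT);
    CREATE TABLE ProductSubcategory (ProductSubcategoryID INTEGER PRIMARY KEY, ProductCategoryID INTEGER);
    CREATE TABLE Product (ProductID INTEGER PRIMARY KEY, ProductSubcategoryID INTEGER);
    CREATE TABLE SalesOrderDetail (ProductID INTEGER, LineTotal REAL);
    INSERT INTO ProductCategory VALUES (1, 'Bikes'), (2, 'Accessories');
    INSERT INTO ProductSubcategory VALUES (10, 1), (20, 2);
    INSERT INTO Product VALUES (100, 10), (200, 20), (300, 10);
    INSERT INTO SalesOrderDetail VALUES (100, 1000.0), (200, 25.5), (300, 500.0), (200, 4.5);
""")

# The "Hello World" PivotTable: one value, no row labels.
grand_total = conn.execute("SELECT SUM(LineTotal) FROM SalesOrderDetail").fetchone()[0]

# Adding the category name to the row labels corresponds to a GROUP BY.
by_category = conn.execute("""
    SELECT ProductCategory.Name, SUM(LineTotal)
    FROM SalesOrderDetail
    INNER JOIN Product ON Product.ProductID = SalesOrderDetail.ProductID
    INNER JOIN ProductSubcategory
        ON Product.ProductSubcategoryID = ProductSubcategory.ProductSubcategoryID
    INNER JOIN ProductCategory
        ON ProductSubcategory.ProductCategoryID = ProductCategory.ProductCategoryID
    GROUP BY ProductCategory.Name
    ORDER BY ProductCategory.Name
""").fetchall()

print(grand_total)
print(by_category)
```

The per-category sums add up to the grand total, which is the row PowerPivot appends to the PivotTable and the GROUP BY query leaves out.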

Wayne continues his tutorial.

Kevin Kell demonstrates Creating SQL Azure Applications Quickly and Easily on 7/1/2010 with a link to a YouTube video:

I like tools. Tools are cool. Tools help you accomplish more in less time and with less effort. Tools help make hard topics like “cloud computing” accessible to mere mortals like myself.

Of course, to the purist, tools may be considered a bad thing. After all there is kind of a joy, a feeling of accomplishment, pride, or whatever, to be had after hacking out a particularly cool piece of code using only the command line. The same sorts of macho discussions have been the subject of water-cooler conversations for years. Remember when C programmers were considered “high level” by the machine language wonks?

Well, times have changed, and yet strangely remain somehow the same. That, however, is not the subject of this week’s post!

Today we are going to build a Windows Azure application from scratch. This application will use relational data stored in a SQL Azure database. We are going to build, test and deploy this application to the cloud in under 10 minutes. To accomplish this we will be using Microsoft Visual Studio 2010.

http://www.youtube.com/watch?v=r69ZxFgx9EI

So, what does this mean? Is the application a finished, polished deliverable product? Obviously not! However what we do have is a very good starting point from which we can go on to develop our application using the skills and tools that we are already familiar with. Did we need to learn some new things? Yes! But are they things that already fit into our understanding of how .NET applications are developed? Certainly!

In Learning Tree’s Windows Azure Programming course we endeavor to hone your .NET programming skills to enable you to build and deploy applications to the cloud quickly and easily. You will learn how to take the skills you already know and apply them in this new paradigm called cloud computing. And you can do the same things, if you please!

The first OData Mailing List summary with items through 6/30/2010 appeared in my inbox on 7/1/2010. Here’s the description and a list of the first threads:

The OData Mailing List is for anyone interested in OData to ask questions about the protocol and to discuss how it should evolve over time. If you want to contribute, sign up here.

Below is a complete archive of the mailing list. Please read through the archive before posting to the mailing list, since the topic may have already been discussed.

- ODATA features request Wednesday, June 30, 2010 5:00 PM by Matthieu MEZIL

- Introduction and a question Tuesday, June 29, 2010 9:14 PM by Peter Mauger

- OData and W3C Tuesday, June 29, 2010 7:55 AM by Wood, Joe

Rishi explained Creating a Netflix App using nRoute: A Step-by-Step Guide with OData on 6/30/2010:

With the latest release of nRoute we've added a trove of navigation-related features. To explain and demonstrate these features, I've put together an end-to-end application that fronts Netflix's oData Catalog. And so in this post we'll go through using these features in a step-by-step manner to cumulatively compose a rich, extensible, and loosely-coupled application. Here is what we are going after:

View the nRoute's Netflix App (note you can also install the application on your desktop).

Guide Scope

Right off the bat, let me be clear, this post does not show you how to consume oData services or explain how to design iPad'ish UIs or troll on the virtues of MVVM - it purely concentrates on plugging-in nRoute's navigation and related features to compose our demo app. Familiarity with nRoute concepts is not a must, but will be very helpful. Secondly, just as I finalized this post, Netflix switched from a purely server-side paging-model to a hybrid client-server paging-model so I had to revisit the code, hence there might be a bit of mismatch between what's shown here and the actual code.

Rishi continues with his detailed tutorial.

<Return to section navigation list>

AppFabric: Access Control and Service Bus

Zane Adam’s Announcing availability of the Windows Azure AppFabric July Release appeared in the late afternoon of 7/1/2010, after entry of the item below:

The Windows Azure AppFabric July Release is live as of July 1st, 2010. This is our second update since commercial availability for the Windows Azure platform began earlier this year, and we are pleased to deliver on our commitment to provide you with updates and service improvements on a regular basis.

With this release, we are expanding the benefits of Windows Azure AppFabric in two key areas:

1. Extending AppFabric to web browsers and mobile devices.

2. Providing an SDK to support .NET 4.

Support for Rich Internet Applications Just Got Better With Commercial Support for Silverlight and Flash clients:

We have added support for cross-domain policy files, allowing Silverlight and Flash clients to make cross-domain calls to the Service Bus and Access Control Service. This is our first step in addressing the needs we have heard from you around providing first-class support for Rich Internet Applications.

New Azure AppFabric SDK Now Offers Support for .NET 4:

We are also delivering a new version of the Windows Azure AppFabric SDK that supports .NET 4. This allows clients and services written in .NET 4, whether on-premises or hosted in Windows Azure, to connect to the Service Bus and Access Control service. To obtain the latest copy of the AppFabric SDK, visit the AppFabric portal or the Download Center. For a complete list of known issues and changes in this release, please refer to the release notes. More details about AppFabric are available in our FAQ.

Marius Oiaga recommends that you Download the New Windows Azure Platform AppFabric SDK - July Update in his 7/1/2010 post to Softpedia:

Microsoft has updated the software development kit designed to help developers build on top of Windows Azure AppFabric. The July 2010 update of the Windows Azure AppFabric SDK v1.0 is now available for download from the Redmond company, offering the necessary API libraries as well as code samples for developers to put together connected applications leveraging the .NET Framework. Windows Azure AppFabric itself is set up to help customers create connected applications and services both in the cloud and on-premises.

The SDK “spans the entire spectrum of today’s Internet applications – from rich connected applications with advanced connectivity requirements to Web-style applications that use simple protocols such as HTTP to communicate with the broadest possible range of clients,” Microsoft stated.

The Windows Azure AppFabric SDK is obviously tailored to the two AppFabric services offered in conjunction with the Windows Azure platform, namely AppFabric Access Control and AppFabric Service Bus. For both, the software development kit brings to the table not only API libraries, and samples but also the tools and documentation necessary for devs to build their apps.

“The Service Bus and Access Control SDK comes with a number of interesting samples that illustrate how to leverage the various capabilities offered by the Service Bus and Access Control. You’ll find the samples in the SDK installation directory. Most of these samples have been designed to ask the user to specify the service namespace dynamically. Some will ask you to supply your credentials via the console window while others will require you to enter them in the application configuration file ahead of time. The samples make it easy to explore the features offered by these services so be sure to check them out,” the Redmond company added.

As of April 12, 2010, Windows Azure platform AppFabric reached the commercial availability milestone, and Microsoft started charging customers for its usage. The offering is an SLA-supported paid service as of April.

According to the Azure AppFabric Team:

New features include support for Rich Internet Application (RIA) clients, and support for .NET Framework 4. Read more about the newest release here.

From the Download Page:

Brief Description: Windows Azure AppFabric is a key component of the Windows Azure Platform. It includes two services: AppFabric Access Control and AppFabric Service Bus.

Overview: This SDK includes API libraries and samples for building connected applications with the .NET platform. It spans the entire spectrum of today’s Internet applications – from rich connected applications with advanced connectivity requirements to Web-style applications that use simple protocols such as HTTP to communicate with the broadest possible range of clients.

Be sure to read the Instructions before executing WindowsAzureAppFabricSDK.msi.

Eugenio Pace continues his WIF Interop series with Identity Federation Interoperability – WIF + ADFS + CA SiteMinder on 7/1/2010:

How it works:

Bill Zack’s Federated Cloud Secure Collaboration post of 7/1/2010 to the Innovation Showcase blog links to Hong Choing’s GSK Takes Secure Collaboration to the Cloud post of 6/23/2010:

Cloud computing represents a compelling and transformational shift in how the Life Sciences industry conducts business. Projects such as the GlaxoSmithKline (GSK) Federated Secure collaboration prove that the technology is finally catching up to the industry demands. This was discussed at a recent Microsoft Cloud Life Sciences event in Iselin, NJ on June 8th.

The Pharmaceutical industry business has changed dramatically from one that does everything in-house to one that requires close collaboration among peer companies and even among competitors. These industry shifts in work process require new technology solutions to enable better and more secure collaboration across multiple entities. Read more about it here.

• Scott Hanselman described Installing, Configuring and Using Windows Server AppFabric and the "Velocity" Memory Cache in 10 minutes in response to a reader’s comment on 7/1/2010:

A few weeks back I blogged about the Windows Server AppFabric launch (AppFabric is Microsoft's "Application Server") and a number of folks had questions about how to install and configure the "Velocity" memory cache. It used to be kind of confusing during the betas but it's really easy now that it's released.

Here's the comment:

Have you tried to setup a appfabric (velocity) instance ? I suggest you try & even do a blog post, maybe under the scenario of using it like a memcache for dasblog. I would love to know how to setup it up, it's crazy hard for what it is.

No problem, happy to help. I won't do it for dasblog, but I'll give you easy examples that'll take about 10 minutes.

Get and Install AppFabric

You can go to http://msdn.com/appfabric and download it directly or just do it with the Web Platform Installer.

Run the installer and select AppFabric Cache. If you're on Windows 7, you'll want to install the IIS 7 Manager for Remote Administration which is a little plugin that lets you manage remote IIS servers from your Windows 7 machine.

NOTE: You can also do an automated/unattended installation via SETUP /i CACHINGSERVICE to just get caching.

The configuration tool will pop up, and walk you through a small wizard. You can setup AppFabric Hosting Services for Monitoring and Workflow Persistence, but since I'm just doing Caching, I'll skip it.

Scott continues his tutorial with detailed instructions.

• The Windows Azure AppFabric Team posted a New Sample: Consuming ACS and Service Bus from Flash on 6/30/2010, but IE 8’s Feed Reader feature didn’t catch it:

As part of the Windows Azure AppFabric July Release, we have added support for cross-domain policy files to enable cross-domain calls to Service Bus and Access Control Service from Silverlight and Flash clients. This new sample demonstrates how to consume the AppFabric Access Control Service (ACS) and the Service Bus's Message Buffer API from a Flash application.

You will find the sample as an attachment to this post.

WindowsAzurePlatformAppFabric_5F00_FlashSampleForMessageBuffer.zip Enjoy!

If you have any issues/questions/feedback with this sample, please address them in the Windows Azure AppFabric forums

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Matt Davey sees Intelligent Clouds on the Horizon for Financial Services in this 7/1/2010 post to the HPC in the Cloud blog:

HPC has been around in the capital market financial space for a number of years. Is there now a need for a data aware cloud, that offers improved utilization and SLA coupled with lower latency, to move HPC to the next level?

We can begin to answer this question by looking at how HPC is used from a market risk perspective, initially providing some background on how market risk is calculated from a portfolio perspective and then discussing the need for a data aware cloud that leverages the Windows Server HPC 2008 R2 product.

Both the buy-side (advising institutions concerned with buying) and sell-side (a firm that sells investment services to asset management firms - services such as broking/dealing, investment banking, advisory functions, and investment research) have had various HPC grid deployments for many years. Platform and DataSynapse are the two that I believe have the largest install bases within the London and New York financial communities.

Initially these HPC grid installations were not used in the most intelligent way - in some cases they were used to simply run Microsoft Excel sheet calculations in parallel. Unfortunately in certain places this is still the case today, which effectively means that we have non-optimum grid usage often leading to the failure of Service Level Agreements (SLAs) coupled with higher running costs and job/task compute times.

Another issue that has been common in sell-side organizations is asset classes (rates, foreign exchange, commodities, etc) owning their own HPC grids partly due to the inability of the HPC grid to satisfy SLAs appropriately.

One of the main uses of financial HPC grids has been to calculate risk, specifically Market Risk. Market risk is the risk that the value of a portfolio, either an investment portfolio or trade portfolio, will decrease due to the change in value of the market risk factors. The four standard market risk factors are stock prices, interest rates, foreign exchange rates, and commodity prices.

Before we look at how market risk is calculated using HPC grids, let’s look at how an HPC grid is used within the context of the trade life cycle. If we start with the trader, they would submit a buy or sell order to a market (e.g. exchange). On the order being accepted a trade would be created that becomes part of a portfolio (sometimes this is known as the trader’s book). For the lifetime of the trade being held, its market risk needs to be calculated so that the holder of the trade can understand what their profit/loss is overall. Often the market risk can be calculated with a closed form solution (which is what we will discuss for the rest of this article).

An HPC solution is ideal given the number of books that require market risk calculations especially with the ever increasing need to perform a trade market risk calculation when one or more of the market risk factors changes. With certain trades there is no closed form solution, thus forcing a Monte Carlo route which is compute intensive and thus again forcing an HPC solution.
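The contrast Davey draws between a closed form solution and the Monte Carlo route can be made concrete with a small sketch. It is not taken from his article: it assumes a single European call option valued under the Black-Scholes model, and the function names (`call_closed_form`, `call_monte_carlo`, `delta_by_bump`) and all parameter values are hypothetical. The point is only that the closed form is one formula evaluation, while the Monte Carlo estimate needs many simulated paths per trade, which is where the compute demand comes from.

```python
import math
import random


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_closed_form(spot, strike, rate, vol, years):
    """Black-Scholes price of a European call: one formula evaluation."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (
        vol * math.sqrt(years)
    )
    d2 = d1 - vol * math.sqrt(years)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * years) * norm_cdf(d2)


def call_monte_carlo(spot, strike, rate, vol, years, paths=200_000, seed=42):
    """Monte Carlo estimate of the same price from simulated terminal prices."""
    rng = random.Random(seed)
    drift = (rate - 0.5 * vol ** 2) * years
    diffusion = vol * math.sqrt(years)
    total_payoff = 0.0
    for _ in range(paths):
        # Simulate one terminal stock price under the assumed lognormal model.
        terminal = spot * math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
        total_payoff += max(terminal - strike, 0.0)
    # Discount the average payoff back to today.
    return math.exp(-rate * years) * total_payoff / paths


def delta_by_bump(pricer, spot, bump=0.01, **kwargs):
    """Sensitivity to the stock-price risk factor via a central difference."""
    up = pricer(spot * (1 + bump), **kwargs)
    down = pricer(spot * (1 - bump), **kwargs)
    return (up - down) / (2 * spot * bump)


if __name__ == "__main__":
    trade = dict(strike=100.0, rate=0.05, vol=0.2, years=1.0)
    print("closed form :", call_closed_form(100.0, **trade))
    print("monte carlo :", call_monte_carlo(100.0, **trade))
    print("delta (bump):", delta_by_bump(call_closed_form, 100.0, **trade))
```

Re-running a bump-and-reprice such as `delta_by_bump` for every trade in every book whenever a risk factor moves is the kind of workload that is easy to spread across grid nodes, because each trade can be valued independently.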

In the simplest and possibly most common form, market risk is calculated as follows for a portfolio:

1. Snap appropriate market data, yield curves, forecast curves (the model) required for the calculations

The SD Times Editorial Board wrote From the Editors: Cloud? Mobile? We get it on 7/1/2010:

Microsoft talks about Azure and the cloud at its TechEd conference. Apple talks about the iPhone and the App Store at its Worldwide Developers Conference. We have dutifully covered these events and given them prominent play in this newspaper, but pardon us if we can’t get too worked up over them.

To paraphrase the Will Ferrell character Mugatu from the ridiculous 2001 movie "Zoolander:" “Cloud? Mobile? They’re the same story. Doesn't anybody notice this? I feel like I’m taking crazy pills!”

Indeed, if you only listened to Microsoft, Apple and many other companies, it would seem that all developers care about are building cloud applications and building mobile applications. However, to our eyes, cloud computing is only peripherally a developer issue, and mobile is more about a changing platform target (with a different business model and distribution vehicle) than a new programming paradigm.

No question, companies like Microsoft and Apple are businesses. It’s their job to come up with shiny new toys, both literally and figuratively, to keep the money flowing in. That’s why the hype machine, not only from their PR agencies but also from their pet analyst firms, keeps pounding the same message over and over and over again: It’s cheaper to develop applications for the cloud than it is to build out your data center. And you need your applications to behave as well on a mobile device as they will on a desktop.

OK. We get it.

We can’t help but notice, though, that things that are so critical to development organizations, such as the future of Java, leveraging multicore systems, quality assurance, intellectual property rights, and improving development team productivity, get much shorter shrift these days. Could it simply be that Oracle doesn’t yet have a plan for Java? That only hardware vendors are discussing multicore? Nobody knows how to improve software quality? IP and software rights are an open-source issue without major players behind it? And that developer productivity is, well, boring to the big guys?

The editors continue their lament on page 2.

Nicole Hemsoth analyzed Elite HPC and the Cloud Culture Clash for the HPC in the Cloud blog on 7/1/2010:

We have case studies. We have examples of HPC in action in the clouds in one form or another. But for a still vast majority of large supercomputing centers—the terra firma for traditional HPC—cloud discussions are but fluff. They revolve around business models. And for the lofty aims of the supercomputing elite, business models have nothing to do with the world they live in.

That resistance is rooted in solid arguments now--no question about it--but as the technologies that underlie cloud, including the development of low-latency boosts to cloud power evolve, these arguments are bound to weaken, leaving only a culture question. And this will probably coincide with the arrival of a new group of thinkers who were weaned on the cloud in its infancy via everything-imaginable-as-a-service. That means any of us under what, say 25?

To quote an unnamed director of a supercomputing site following an off-the-cuff discussion at International Supercomputing Conference this year, “what everyone’s forgetting is that we [supercomputing centers and large research centers] have no incentive to have anything to do with clouds. They offer no real benefit outside of cost—and that’s not even assured. It’s just a business model. It has no value and we have no reason to consider it since what we have now performs well. Give me a reason to think it is going to revolutionize what we’re doing and I’ll be glad to take a look, but it has nothing to offer, at least not yet.”

Fair enough.

When it comes to clouds and HPC, at least in the scientific computing arena, the key question almost invariably comes down to the matter of performance, which has led to the overarching question, “if cost isn’t the issue, why would I ever bother to experiment with the cloud when standard HPC has been providing the compute resources needed to begin with?” This question most often stems from research and academia where, true enough, the performance is predictable, there are no concerns about virtualization slowdowns and bottlenecks, and the security concerns are essentially the same ones that have been around since the cluster was first unleashed.

In short, it is hard to imagine a bright future for HPC in the cloud when the single greatest concern for HPC is rooted in performance--and the single most-discussed flaw with cloud is performance. The two most important goals are at complete odds with one another. At least in the present.

It’s hard to disagree with the points here when we're putting this in the context of the present--where is the incentive for big HPC users if the performance question still hasn’t been broadly answered? To refine this question a little further, where is the incentive for HPC users who already have invested in their own clusters since the smaller outfits who have ready-made HPC on demand via the cloud are often already in simply because of cost?

Even more importantly, if the incentive comes, who is to say that it will ever mean much considering that so many jobs are dependent on the world just as it is, thank you very much.

What these questions embody is substance, but underlying all of this is the issue of culture. Outside of performance, service-level worries, and the other mess of cloud issues we are all well aware of, all the incentive in the world still might not be enough. This makes me wonder whether the fresh new crop of graduating PhD students trickling forth from Berkeley, MIT and other universities that are looking to clouds in varying ways will change the culture. Actually, I don’t really wonder…do you?

It was interesting that the unnamed source quoted at the beginning did clarify his position by inserting the “not yet” as it might mean that there is a glimmer of interest—that is, of course, if the performance roadblocks are lifted. Or it might have just been wishful thinking that he was actually considering the possibilities somewhere in the back of his mind. But probably not.

Bruce Guptill wrote Collaborative Cloud Solutions Slip Into Core Enterprise Workflow Research Alert for Saugatuck Technology on 6/30/2010 (site registration required):

What Is Happening? This week, Saugatuck published its annual SaaS/Cloud Business Solutions survey report for subscribers to our Continuous Research Service (CRS). In that report, we note that for several years, Collaborative solutions have been the clear leaders in SaaS/Cloud Business Solution adoption and use among user firms worldwide.

But the data from this year’s survey (see Note 1) indicate something slightly different. Cloud-based Collaborative solutions have fallen behind several others in terms of currently-installed (or planned through 2010) solutions, and they rank second in overall deployments-plus-plans to deploy through 2012 - just behind CRM, and even with Customer Service and Support applications.

Fig. 1: Cloud-based Business Solutions In Place and Planned Through 2012

Source: Saugatuck Technology Inc. March 2010 global survey; n = 790

Bruce continues with the usual “Why Is It Happening” and “Market Impact” sections.

The Microsoft Case Study Team released Social Media Service Provider [Sobees] Expands Offering, Attracts Customers with Cloud Services on 6/27/2010:

Sobees develops software that customers can use to manage all of their social media information streams with a single application. The aggregator is popular among consumers who use applications such as Twitter and Facebook, and it recently earned a spot as the leading application on the Yahoo! Mail portal in Europe. With that exposure, and the potential to acquire millions of new customers, Sobees wasn’t confident that the small hosting company it used could provide the reliability and scalability it needed. Sobees migrated its solution to the Windows Azure platform and also developed a new service on Windows Azure, which it plans to make available to other companies through the Microsoft technology code-named “Dallas.” By choosing Windows Azure, Sobees improved the scalability, reliability, and performance of its applications, and maintained costs, even with 10 times more business.

Situation

Based in Switzerland, Sobees develops software that consumers can use to aggregate social media applications into a single view. With the Sobees applications, customers can connect to their favorite social media applications, such as Twitter, Facebook, LinkedIn, and MySpace, to read RSS feeds, see friends’ status, update their own status, and share photos from one window. The small company, with only seven employees, has developed one of the most widely used social media aggregators, with one million users. In addition to its social media aggregator, Sobees also develops a real-time search platform that taps into top search engines, such as Microsoft Bing, and aggregates results into five categories: social media, video, image, web, and news.

[T]hough Sobees initially had customers primarily based in Europe, as the company grew, it attracted customers in the United States and around the world. However, the small hosting company based in Switzerland did not have other data center locations, and customers outside Europe often experienced delays when using the Sobees service. “We started to experience latency, particularly with our customers in the United States,” says Bochatay. “When it became clear that we needed a new solution, it also was clear that we needed one with a worldwide data center presence to ensure the same level of service for our customers no matter where they are located around the globe.”

Solution

In June 2009, Sobees evaluated the Windows Azure platform with the Community Technology Preview release of the technology. Windows Azure is a cloud services operating system that serves as the development, service hosting, and service management environment for the Windows Azure platform. It provides developers with on-demand compute and storage to host, scale, and manage web applications on the Internet through Microsoft data centers.

We have 10 times the number of customers now. To provide the same service to the same number of customers …without Windows Azure would have easily cost three times as much.

Francois Bochatay, CEO and Cofounder, Sobees

Sobees considered other cloud services providers, including Amazon.com, in addition to hosting with an Internet service provider, but decided on Windows Azure for several reasons. With a worldwide Microsoft data center presence, Windows Azure offered the reliability and performance that Sobees sought in a solution. In addition, the on-demand scalability was a key deciding factor for Sobees. Finally, but equally important, was the compatibility of Windows Azure with the Sobees service. “Reliable, scalable hosting is only one part of the equation,” explains Rithner. “But no one can compete with Windows Azure when it comes to compatibility with the .NET Framework. That is very important to us, not only for development, but also for debugging and monitoring. The integration of the platform and tools offered a great and unique experience through development, deployment, and the update processes. Adopting Windows Azure was an easy choice.” …

<Return to section navigation list>

Windows Azure Infrastructure

Bart DeSmet answered Where’s Bart’s Blog Been? in this 7/1/2010 post that exposes a forthcoming “LINQ to Anything” variant:

A quick update to my readers on a few little subjects. First of all, some people have noticed my blog welcomed readers with a not-so-sweet 404 error message the last few days. Turned out my monthly bandwidth was exceeded which was enough reason for my hosting provider to take the thing offline.

Since this is quite inconvenient I’ve started some migration of image content to another domain, which is work in progress and should (hopefully) prevent the issue from occurring again. Other measures will be taken to limit the download volumes.

Secondly, many others have noticed it’s been quite silent on my blog lately. As my colleague Wes warned me, once you start enjoying every day of functional programming hacking on Erik’s team, time for blogging steadily decreases. What we call “hacking” has been applied to many projects we’ve been working on over here in the Cloud Programmability Team, some of which are yet undisclosed. The most visible one today is obviously the Reactive Extensions both for .NET and for JavaScript, which I’ve been evangelizing both within and outside the company. Another one which I can only give the name for is dubbed “LINQ to Anything” that’s – as you can imagine – keeping me busy and inspired on a daily and nightly basis. On top of all of this, I’ve got some other writing projects going on that are nearing completion (finally). [Emphasis added.]

Anyway, the big plan is to break the silence and start blogging again about our established technologies, including Rx in all its glory. Subjects will include continuation passing style, duality between IEnumerable<T> and IObservable<T>, parameterization for concurrency, discussion of the plethora of operators available, a good portion of monads for sure, the IQbservable<T> interface (no, I won’t discuss the color of the bikeshed) and one of its applications (LINQ to WMI Events), etc. Stay tuned for a series on those subjects starting in the hopefully very near future.
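As a rough illustration of the duality Bart mentions, the sketch below contrasts a pull-based consumer with a push-based one. It is written in Python rather than .NET, and `pull_squares`, `SquaringObserver` and `push_from` are hypothetical names, not part of Rx. The method names `on_next`, `on_error` and `on_completed` mirror the three members of .NET's `IObserver<T>`.

```python
from typing import Iterable, List, Optional


def pull_squares(source: Iterable[int]) -> List[int]:
    """Pull model (IEnumerable-like): the consumer asks for each next value."""
    results = []
    iterator = iter(source)
    while True:
        try:
            value = next(iterator)   # the consumer pulls a value
        except StopIteration:        # "no more values" arrives as a signal
            break
        results.append(value * value)
    return results


class SquaringObserver:
    """Push model (IObserver-like): the producer calls in with each value."""

    def __init__(self) -> None:
        self.results: List[int] = []
        self.error: Optional[Exception] = None
        self.completed = False

    def on_next(self, value: int) -> None:
        # Dual of "next() returned a value".
        self.results.append(value * value)

    def on_error(self, error: Exception) -> None:
        # Dual of "next() raised an exception".
        self.error = error

    def on_completed(self) -> None:
        # Dual of "the iterator is exhausted".
        self.completed = True


def push_from(source: Iterable[int], observer: SquaringObserver) -> None:
    """Hypothetical stand-in for subscribing an observer to a producer."""
    try:
        for value in source:
            observer.on_next(value)
    except Exception as error:
        observer.on_error(error)
        return
    observer.on_completed()


if __name__ == "__main__":
    print(pull_squares([1, 2, 3]))
    observer = SquaringObserver()
    push_from([1, 2, 3], observer)
    print(observer.results, observer.completed)
```

Both halves compute the same result; what changes is who drives the loop. In the pull version the consumer blocks until a value is available, while in the push version the producer decides when values arrive, which is what makes the observer shape a natural fit for events and asynchronous sources.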

See you soon!

David Linthicum asserts “With the market heating up for cloud computing talent, many are rushing to learn more and updating their résumés -- and a few are lying” in his The cloud job market: A golden opportunity for IT pros post of 7/1/2010 to InfoWorld’s Cloud Computing blog:

We're seeing a lot of strategic and well-publicized hires in the cloud computing world these days, such as Cisco Systems grabbing up cloud computing mainstay and former Sun Microsystems executive Lew Tucker to oversee its cloud computing initiatives. But most of the brokering of cloud computing talent is done behind the scenes. Most companies are considering or actually moving to cloud computing, and they're seeking their own experts to make the move. Can you cash in?

However, there are far too many positions chasing far too few qualified candidates these days, and that's causing some organizations to hire less-than-qualified people. It's also causing the cost of those people to go way up. I'm interrupted daily by a headhunter looking for "cloud people," offering executive-level comp packages for very technical and tactical gigs. Who says unemployment is still a problem?

So how do you take advantage of this spike in need for cloud computing ninjas?

First, read all you can get your hands on. With the hype raging around cloud computing, there are actually only a handful of books that do a good job teaching about the cloud. Make sure to purchase books that work through the requirements and the architecture first, and then learn specific cloud computing technologies. You need a good context. Also, read all of the better cloud blogs: Having current knowledge is key these days.

Second, learn by doing. One of the great things about cloud computing is that the services are often free for a trial period. Even if there is a cost, it's nominal. So get that Google App Engine account or play around with Amazon Web Services to see how this stuff actually works. Build a few prototypes to understand the skills along with the concepts.

Finally, don't "cloudwash" yourself. Many people seeking to take advantage of the need for cloud computing people are "cloudwashing" their résumés to make themselves seem more relevant. This is pretty transparent to anybody in the know, and it nearly guarantees your stuff will be tossed in the trash. Instead, focus on relevant, real skills and knowledge. Most employers understand this is new stuff, and it shouldn't count against you if you don't have two decades of cloud computing experience. After all, who does?

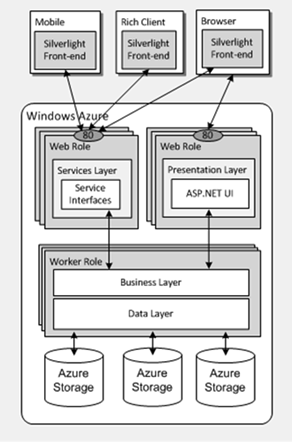

J. D. Meier describes Azure Canonical Application Architecture in this 7/1/2010 post:

While working with a customer last week at our Microsoft Executive Briefing Center (Microsoft EBC), one of the things we need[ed] to do was find a simple way to whiteboard the architecture. We walked through a lot of variations of application patterns, but the customer ultimately wanted to see one code base running on Azure that could support multiple clients (such as desktop, phone, browser, etc.) We found a model that was pretty straightforward and allowed us to easily map the customer’s scenario onto the Azure platform.

Once we had a model, it was a lot easier to drill down on things such as why SQL Azure vs. Azure Storage or how to flow claims or how to partition the database. It’s like a backdrop that you can overlay or drill down on security, performance, manageability, etc. It helped us quickly explore paths without getting into the weeds while keeping the big picture in mind. It also helped us figure out where to target some of the key architectural spikes.

Here are some of the models we walked through on the whiteboard:

Baseline

Azure Storage Example

SQL Azure Example

David Chou answered a blogger’s question about Active Directory and BizTalk in the Cloud? on 7/1/2010:

A colleague pointed me to an interesting blog post – Two products Microsoft should set free into Cloud, which ended with this question:

So Microsoft – here is a market that is begging to be served and yours to lose. While you still have work to do to make your Azure Platform, Business Applications, Office Suite widely adopted in Cloud, BizTalk and Active Directory are the need of the hour and are ready to go. So waste no more time – let them free and watch them soar in Cloud.

Now, if cloud computing is simply outsourced hosting, then Microsoft could just start selling Active Directory and BizTalk as a SaaS offering today. But I tend to think that cloud computing represents a new paradigm (basically, more distributed computing than utility computing), and more value can be gained by leveraging cloud as a new paradigm.

Below is the rather lengthy comment I left on that blog.

Active Directory and BizTalk not being part of the Microsoft cloud platform today (either in SaaS or PaaS model) doesn’t mean Microsoft doesn’t want to “set them free into cloud”. In fact, our long-term roadmap has been to make all of our software products and platforms available in the cloud in some form.

So then why haven’t we? Shouldn’t it be pretty simple to deploy instances of Active Directory and BizTalk in Microsoft data centers and let customers use them, a-la-SaaS-style? The answer lies in the fundamental question – is cloud computing simply server hosting in other people’s data centers, or is it a new paradigm we can leverage to do things differently?

Microsoft’s approach to cloud computing is exactly that – provide the right solutions for cloud computing to effectively support the new paradigm. For example, as you can see today in Microsoft’s SaaS offerings, there are both single-tenant and multi-tenant versions of the Exchange, SharePoint, and Office Communications Online suites; and in the PaaS offerings, SQL Azure is a fully multi-tenant relational database service and not simply hosted SQL Server, and Windows Azure’s native roles are provided via a higher abstraction, container-like model, and not simply hosted Windows Server.

So then the question is, what’s the right cloud model for Active Directory? That is still under consideration, but my personal opinion is that we still need to carefully evaluate a couple of factors:

- Do customers really want to outsource their identity management solution? Is there really a lot of demand for hosted enterprise identity management services?

- What are the true benefits of hosting the identity management solution elsewhere? Just some cost savings from managing your own servers? That might be the case for smaller companies but larger organizations prefer the private cloud approach.

- For example, the identity management solution is essential in managing access control across an IT architecture. Wouldn’t it work better if it’s maintained closer, in terms of proximity, to the assets it’s intended to manage? Keep in mind that most “pure cloud” vendors who advocate otherwise use their own identity management infrastructure hosted in their own data centers.

- And from an external, hybrid cloud, and B2B integration perspective, identity federation works pretty well to enable single sign-on across resources deployed in separate data centers and security domains.

- Lastly, what’s the right model for cloud-based identity management solution? Is it making the online identity metasystem more “enterprise-like”, such as adding some of the fine-grained management capabilities to the Live ID infrastructure, or developing a multi-tenant version of Active Directory that can better address some of the consumer identity scenarios?

Similarly for BizTalk, many of the above points apply as well for its cloud aspirations, plus a few specific ones (again just my personal opinion):

- Process and data integration between organizations (such as traditional B2B scenarios) and different cloud-based services operated by separate organizations is a lot different from traditional enterprise integration scenarios where enterprise service bus type of solutions fit in today. It has a lot more to do with service management, tracking, and orchestration in an increasingly more service-oriented manner, as opposed to having system and application-specific adapters to enable communication.

- Also, EAI and ESB type of integration places the center of gravity in terms of context and entity definition within one enterprise. Cloud-based integration, such as outsourced process management, multi-enterprise integration, etc., shifts the center of gravity into the cloud and in a much more shared/federated manner.

- Question then is, what is the right type of integration-as-a-service solution that would work well for cloud-based integration scenarios? We have many integration hub service offerings today, many grew from their EDI/VAN, managed FTP, B2B, supply chain management, e-commerce, and RosettaNet, ebXML, HL7 roots. The landscape for external integration is vastly more diverse and generic (in each vertical) than any one organization’s way of managing processes.

- Some initial direction can be observed in Windows Azure AppFabric today, with the Service Bus offering. It works as an Internet service bus to help facilitate communication regardless of network topologies. It advocates a federated application model in a distributed environment, where processes and data are integrated in a service-oriented manner. It’s a much more dynamic environment (changes are more frequent and preferred) than a more static environment in an on-premise systems integration scenario.

- Thus is it correct to simply have BizTalk hosted and sell it as a cloud-based integration solution? Will an on-premise systems integration approach effectively handle integration scenarios in a more dynamic environment?

Pure cloud pundits often ask “why not cloud?” But I think it’s also fair to counter that question with “why?” Not all IT functions and workloads are ideally suited for external deployment. A prudent architect should carefully consider what are the right things to move into the cloud, and what are the right things to still keep on-premise, instead of doing external cloud deployment just for the sake of doing so. There’s a big difference between “can” and “should”.

One way of looking at finding the right balance between what should move into the cloud, is where the users are. Applications that are consumed by users on the Web, are excellent candidates to move into public clouds. Internal business applications that support a back-office operation, often are still better maintained on-premise; closer to an organization’s workforce. It’s also a nice general approach of balancing trade-offs between security and control, scalability and availability.

Thus eventually Microsoft will have some form of enterprise-level identity management solution, and multi-enterprise integration solution, available as cloud-based services. But these don’t necessarily have to be hosted Active Directory and BizTalk Server as we know them today. :)

Tim Ferguson quoted Bob Muglia “Developer tools will be the key to Azure's success, says Microsoft chief” in a preface to his Windows Azure: Inside Microsoft's cloud computing strategy (Page 3) post to Silicon.com of 6/28/2010:

ANALYSIS

...saying: "[Azure is] a mature-released product but there's still a lot of work to be done. The list of features to add to Windows Azure is very long."

Microsoft is updating Azure every couple of months to make sure it's compatible with as much technology as possible, is easy to use and can meet the expected increase in demand that will be placed on it, Muglia said.

"We're building all of these services and all of these facilities into the core platform. You don't have to be a high priest of Silicon Valley to write a cloud application on Windows Azure."

Among the requests for additional functionality from users have been services already seen on the on-premise Windows Server operating system, such as the recently introduced identity federation tools.

Other features have been added since February including different sizes of SQL Azure databases and virtual machines (VMs) - ranging from a single processor core with 1.75GB RAM and 250GB hard disk to VMs with eight processor cores, 14GB RAM and 2000GB of hard disk space.

There are also plans to add collaboration application SharePoint and Dynamics CRM to the services available on Azure platform.

New services in the pipeline include reporting functionality for the various applications within Azure. Reporting has already been introduced for SQL Azure but Muglia says this will soon be extended to other applications to allow users to keep track of usage and demand.

A more complex project is focusing on the encryption of data as it is entered, processed and taken out of Azure, while still allowing developers to query the data - the development of which Muglia describes as "not exactly rocket science - but it's close".

The constant demand to develop its Azure and other cloud computing technology, such as the Business Productivity Online Suite, has also prompted Microsoft to change its engineering processes.

"Our engineering processes have to change pretty substantially because instead of moving to deliver a piece of software every two to three years, we have to instead focus on how we can do a much more regular cadence of technology delivery. It's really the difference between when you ship something and you're done, to where you ship something [and] you're just beginning the process because you've got to maintain and run that service," Muglia said.

"It is a very different approach and it's one that we're either transforming or have already transformed our engineering organisation to really focus on," he added.

<Return to section navigation list>

Cloud Security and Governance

• Salvatore Genovese asked “What is the best way to manage my data in a shared computing environment?” as a preface to his Addressing the Top Threats of Cloud Computing post of 7/1/2010:

As you navigate the cloud, security may be one of your primary concerns. Are you wondering:

- What are the security threats I should be concerned about?

- How do I eliminate or minimize the threats?

- How do I secure my data outside of my firewall?

- What is the best way to manage my data in a shared computing environment?

To learn more about the top security threats facing cloud computing, the impact of each threat and the solutions and services available to address them, please download the FREE whitepaper from Unisys.

Salvatore Genovese is a Cloud Computing consultant and an i-technology blogger based in Rome, Italy.

J. Nicholas Hoover’s Informed CIO: Cloud Compliance in Government report is available for download from InformationWeek’s Analytics site:

Compliance can easily become a headache, especially when dealing with new technologies. New paradigms mean new twists on old questions about security, access, privacy and more. Nowhere has the head-scratching on compliance been as heavy of late as in cloud computing. From the Federal Information Security Management Act to the Export Administration Regulations, compliance in the cloud requires a fresh analysis.

Among the key compliance concerns, FISMA likely looms largest: 80% of InformationWeek survey respondents placed assuring security of systems and data among the top three biggest issues their organizations face in moving ahead with cloud computing. Agencies will have to ensure that, among other things, cloud computing deployments meet system authorization requirements and can be continuously monitored. Multi-tenancy, virtualization, transparency by service providers, encryption and identity management all take on new roles in cloud compliance. A new General Services Administration-led process, known as FedRAMP, could soon simplify this process greatly, taking much of the hard work out of agencies' hands.

Records management could also pose new challenges, especially with public clouds. For example, since another agency or third party may be hosting agency data, records retention policies have to be explicitly laid out and followed by that third party, and safeguards have to be put in place to ensure an agency can get its data out when that's required.

There are other compliance implications as well. Export control regulations might require deep analyses of any data agencies allow to be hosted in overseas data centers; the Department of Defense has its own set of compliance requirements; and everything from the Health Insurance Portability and Accountability Act to the Family Educational Rights and Privacy Act might apply, depending on circumstances.

Lori MacVittie posted Agile Operations: A Formula for Just-In-Time Provisioning to F5’s DevCentral blog on 7/1/2010:

One of the ways in which traditional architectures and deployment models are actually superior (yes, I said superior) to cloud computing is in provisioning. Before you label me a cloud heretic, let me explain. In traditional deployment models capacity is generally allocated based on anticipated peaks in demand. Because of the time required to acquire, deploy, and integrate hardware into the network and application infrastructure, this process is planned for and well-understood, and the resources required are in place before they are needed.

In cloud computing, the benefit is that the time required to acquire those resources is contracted to virtually nothing, making capacity planning much more difficult. The goal is just-in-time provisioning – resources are not provisioned until you are sure you’re going to need them because part of the value proposition of cloud and highly virtualized infrastructure is that you don’t pay for resources until you need them. But it’s very hard to provision just-in-time and sometimes the result will end up being almost-but-not-quite-in-time. Here’s a cute [whale | squirrel | furry animal] to look at until service is restored.

While fans of Twitter’s fail whale are loyal and everyone will likely agree its inception and subsequent use bought Twitter more than a bit of patience with its oftentimes unreliable service, not everyone will be as lucky or have customers as understanding as Twitter. We’d all really prefer not to see the Fail Whale, regardless of how endearing he (she? it?) might be.

But we also don’t want to overprovision and potentially end up spending more money than we need to. So how can these two needs be balanced?

THE VARIABLES

The first thing we need to do is know, in a given cloud, how long it will take to provision capacity and put it into the rotation. It would be nice if cloud providers offered a service devops could query to get the “current wait time” (a la customer service queues) but until then this timing will certainly need to be obtained by simply keeping track yourself.

The other “constant” (if there is such a thing in an elastic environment) is the capacity of the instances you are using. We’ll consider this a constant at this point because honestly, we’re not ready to move to the higher levels of enlightenment (and programmability) required to dynamically determine this value – though that will most certainly be the subject of a future, future post. Capacity needs to be in units measurable by the solution aggregating requests (a strategic point of control). This is almost certainly a load balancer or application delivery controller of some kind, as these components are what enable elastic scalability and basically make cloud work. Typical units might be RPS (requests per second), but because different types of requests consume resources differently, it may be easier and more consistent across applications to use connections, as in “concurrent open connections”, since that is one of the limiting factors on the capacity of application services.

The other two variables we need are only available at run-time, dynamically. You need to know the existing load – in the same units as capacity – and the current resource consumption rate. The resource consumption rate should be in the same units as capacity and in the same time unit as time to provision. If that’s minutes, use minutes. If that’s seconds, use seconds, and so on. It should be noted that the resource consumption rate is the harder of the two to obtain, requiring access to the historical performance statistics of the aggregating component (the load balancer).
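As a rough illustration of the run-time variables described above, the following Python sketch estimates a resource consumption rate from timestamped load samples. The sample format, the function name `consumption_rate`, and the simple first-to-last slope are all assumptions made for illustration; a real load balancer would expose its historical statistics through its own interface.

```python
from typing import Sequence, Tuple


def consumption_rate(samples: Sequence[Tuple[float, float]]) -> float:
    """Return the average change in load per minute over the sample window.

    Each sample is (minutes_elapsed, concurrent_connections). The sample
    format and the first-to-last slope are assumptions, not a vendor API.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate a rate")
    (t0, load0), (t1, load1) = samples[0], samples[-1]
    if t1 <= t0:
        raise ValueError("samples must span a positive time interval")
    return (load1 - load0) / (t1 - t0)


# Hypothetical readings: load rose from 400 to 800 connections in 2 minutes.
history = [(0.0, 400.0), (1.0, 590.0), (2.0, 800.0)]
rate = consumption_rate(history)
print(f"consumption rate: {rate} connections per minute")
```

Note that the rate comes out in connections per minute, matching the advice to keep the rate in the same units as capacity and the same time unit as the time to provision.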

But let’s assume you can and do have all these variables. How is that useful?

THE FORMULA

The formula is actually a fairly simple one once you’ve got the variables. You’re trying to figure out how much time you have before capacity is depleted (and hoping that the answer is larger than the time to provision). Just-in-time provisioning, as the term implies, is an attempt to formulaically determine when to start the provisioning process such that capacity always meets demand without over-provisioning. Now, you’re always hedging your bets that a high resource consumption rate will continue in the next “time to provision”. It may be the case that the “spike” is over before the new instance is provisioned, but in this case you’re better safe than sorry, right? Unless your customers like seeing a [whale | squirrel | furry animal] and don’t mind the wait.

Consider the following example:

Total capacity right now is 1000 connections. The existing load is 800 connections. Connections are currently being consumed at a rate of 200 per minute. Provisioning more capacity takes 5 minutes. (1000 – 800) / 200 per minute = 1 minute of capacity left.

Provisioning should have begun at least 4 minutes ago, and optimally 9 minutes ago (too many years developing software for me – fudge factor included) to ensure capacity was available. In this situation, someone is getting a picture of a [whale | squirrel | furry animal].
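The example above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a definitive implementation: the names `CapacitySnapshot`, `minutes_until_depleted` and `should_provision`, and the `safety_margin` parameter standing in for the "fudge factor", are hypothetical and do not come from the original post.

```python
from dataclasses import dataclass


@dataclass
class CapacitySnapshot:
    total_capacity: float     # e.g. concurrent connections
    existing_load: float      # same units as total_capacity
    consumption_rate: float   # capacity units consumed per minute
    time_to_provision: float  # minutes needed to bring new capacity online


def minutes_until_depleted(s: CapacitySnapshot) -> float:
    """(capacity - load) / rate; infinite when load is flat or falling."""
    if s.consumption_rate <= 0:
        return float("inf")
    return max(s.total_capacity - s.existing_load, 0.0) / s.consumption_rate


def should_provision(s: CapacitySnapshot, safety_margin: float = 0.0) -> bool:
    """Start provisioning once the remaining time no longer exceeds the
    time to provision plus an optional (assumed) safety margin in minutes."""
    return minutes_until_depleted(s) <= s.time_to_provision + safety_margin


# The numbers from the example: 1000 capacity, 800 load, 200/min, 5 minutes.
snapshot = CapacitySnapshot(1000, 800, 200, 5)
remaining = minutes_until_depleted(snapshot)
late_by = snapshot.time_to_provision - remaining
print(f"{remaining} minute(s) of capacity left")
print(f"provisioning is {late_by} minute(s) late")
print(f"with a 5-minute margin it is {late_by + 5} minute(s) late")
```

The `safety_margin` argument is one possible way to express the threshold tuning discussed next: a larger margin starts provisioning earlier at the cost of paying for capacity sooner.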

The trick for devops is to tune the threshold at which the provisioning process begins. Too soon and you might be wasting resources (and money), too late and you end up with timeouts and angry users. Devops needs a way to programmatically evaluate the results and decide, based on the application (it may be more sensitive to failure than others) and the business significance of the transaction (purchase processes may need more warning than search or general browsing), when it is appropriate to start provisioning in such a way as to ensure availability without incurring a lot of cost overhead.

DYNAMIC INFRASTRUCTURE CAN ENABLE THIS TODAY

Now I’m sure this sounds like something out of science fiction, but it’s not. The variables can be obtained, if not easily, and the formula can easily be codified into scripts or management applications that enable this entire process to be automated. At a minimum, it should be possible for any skilled devop (developer or operations focused) to create a script/application/widget/gadget that gathers the data required and displays an alert when it’s time to provision – I suggest a nice HTML interface that encloses the entire page in BLINK tags, because nothing says FIX THIS NOW like BLINKING TEXT, right?

Regardless of how it’s actually put to use, just-in-time provisioning is the goal of agile operations. How that happens is by leveraging cloud computing and highly virtualized data centers and combining that flexibility with the agility of a dynamic infrastructure. Remember, Infrastructure 2.0 isn’t just about configuration through automation. That’s nice, but it’s not the whole enchilada. It’s also about dynamism and flexibility at run-time, in providing actionable data and capabilities that allow elastic scalability to be truly elastic.

<Return to section navigation list>

Cloud Computing Events

No significant articles today.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Guy Rosen announced a new entrant in his State of the Cloud – July 2010 report of 7/1/2010:

After a brief intermission, we’re back this month with an action-packed State of the Cloud report. In this month’s analysis of the top cloud providers we’ll be debuting a newcomer into the charts which makes quite an entrance. We’ll also run the analysis with an alternative data set and see if it confirms or refutes our findings.

Snapshot for July 2010

Here are the results for this month. Welcome Linode.

The top pair continue their steady march forward with 13% and 19% growth for Amazon EC2 and Rackspace Cloud Servers respectively, as compared to the last report two months ago. Amazon EC2 is the first of our contenders to smash the 3000-site barrier.

Linode is this month’s surprise, jumping straight into third place. I was deliberating whether Linode should be included in the report. Following some lively discussions on Twitter, the consensus was that Linode looks like a duck and walks like a duck, even if it doesn’t bother quacking. (This, as opposed to some providers that work hard to market themselves as cloud while they don’t really seem to be.)

Linode offer a rich feature set and have an outstanding reputation among their customer community. The last time I saw this picture it was Slicehost, who ended up being acquired by Rackspace to jumpstart their Cloud Servers offering. What does the future hold for Linode?

Trends

Uncovering Linode’s footprint in the historical data collected, we witness remarkable growth. Linode has grown by 270% over the past 11 months, more than any other of the providers tracked. …

Guy continued with a comparative analysis with data from Alexa, which didn’t show any difference in ranking.

James Hamilton’s Velocity 2010 post of 7/1/2010 includes links to a video of his Velocity 2010 Keynote and its slides, as well as a recap of his Facebook facilities and Yahoo Hadoop analyses:

I did a talk at Velocity 2010 last week. The slides are posted at Datacenter Infrastructure Innovation and the video is available at Velocity 2010 Keynote. Urs Hölzle, Google Senior VP of Infrastructure, also did a Velocity keynote. It was an excellent talk and is posted at Urs Holzle at Velocity 2010. Jonathan Heiliger, Facebook VP of Technical Operations, spoke at Velocity as well. A talk summary is up at: Managing Epic Growth in Real Time. Tim O’Reilly did a talk: O’Reilly Radar. Velocity really is a great conference.

Last week I posted two quick notes on Facebook: Facebook Software Use and 60,000 Servers at Facebook. Continuing on that theme, a few other Facebook data points that I have been collecting of late:

From QCon 2010 in Beijing (April 2010): memcached@facebook:

How big is Facebook:

- 400m active users

- 60m status updates per day

- 3b photo uploads per month

- 5b pieces of content shared each week

- 50b friend graph edges

- 130 friends per user on average

- Each user clicks on 9 pieces of content each month

Thousands of servers in two regions [jrh: 60,000]

Memcached scale:

- 400m gets/second

- 28m sets/second

- 2T cached items

- Over 200 TB

Networking scale:

- Peak rx: 530m pkts/second (60GB/s)

- Peak tx: 500m pkts/second (120GB/s)

Each memcached server:

- Rx: 90k pkts/sec (9.7MB/s)

- Tx 94k pkts/sec (19 MB/s)

- 80k gets/second

- 2k sets/s

- 200m items

Phatty Phatty Multiget

- PHP is single threaded and synchronous, so it needs to get multiple objects in a single request to be efficient and fast

- Cache segregation: Different objects have different lifetimes, so separate them out

- Incast problem: The use of multiget increased performance but led to the incast problem.

The talk is full of good data and worth a read.

From Hadoopblog, Facebook has the world’s Largest Hadoop Cluster:

- 21 PB of storage in a single HDFS cluster

- 2000 machines

- 12 TB per machine (a few machines have 24 TB each)

- 1200 machines with 8 cores each + 800 machines with 16 cores each

- 32 GB of RAM per machine

- 15 map-reduce tasks per machine

The Yahoo Hadoop cluster is reported to be twice the node count of the Facebook cluster at 4,000 nodes: Scaling Hadoop to 4000 nodes at Yahoo!. But, it does have less disk:

- 4000 nodes

- 2 quad-core Xeons @ 2.5 GHz per node

- 4 x 1 TB SATA disks per node

- 8 GB RAM per node

- 1 gigabit ethernet on each node

- 40 nodes per rack

- 4 gigabit ethernet uplinks from each rack to the core

- Red Hat Enterprise Linux AS release 4 (Nahant Update 5)

- Sun Java JDK 1.6.0_05-b13

- Over 30,000 cores with nearly 16PB of raw disk!
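The core and disk totals quoted above can be checked with a little arithmetic. The Python sketch below is only a sanity check of the listed figures; it assumes decimal units (1 PB = 1000 TB) and uses variable names invented for illustration.

```python
# Yahoo cluster, from the list above: 4000 nodes, each with two
# quad-core Xeons and four 1 TB SATA disks.
yahoo_nodes = 4000
yahoo_cores = yahoo_nodes * 2 * 4
yahoo_raw_disk_pb = yahoo_nodes * 4 * 1 / 1000  # assumes 1 PB = 1000 TB

# Facebook cluster, from the earlier list: 1200 machines with 8 cores
# each plus 800 machines with 16 cores each.
facebook_cores = 1200 * 8 + 800 * 16

print(f"Yahoo: {yahoo_cores} cores, {yahoo_raw_disk_pb} PB raw disk")
print(f"Facebook: {facebook_cores} cores")
```

Under these assumptions the Yahoo figures agree with the "over 30,000 cores" and "nearly 16PB" summary line.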


Audrey Watters reported Open Source Cloud Software Eucalyptus Systems Closes $20 Million Investment Round for the ReadWriteCloud on 7/1/2010:

Eucalyptus Systems, a Santa Barbara-based startup, announced today it has closed a $20 million round of funding. Led by new investor New Enterprise Associates (NEA) with participation from current investors Benchmark Capital and BV Capital, this is the second round of funding for the open source private cloud software provider, bringing its total capital raised to date to $25.5 million.

Eucalyptus provides software that allows organizations to deploy private and hybrid cloud computing environments within a secure IT infrastructure. Eucalyptus supports the same APIs as public clouds and boasts full compatibility with the Amazon Web Services infrastructure, addressing interoperability and facilitating the movement of data between public and private clouds.

The software is available in two editions: Eucalyptus Open Source and the recently released Enterprise Edition.

The company says that today's VC funding will be used to scale development, marketing and sales, and Eucalyptus hopes to meet the increasing demand for cost-efficient and scalable infrastructure solutions that address enterprise needs for both private and hybrid clouds.

Although Eucalyptus took an early lead in this space, there has been substantial activity in this area lately, with the recent launch of Nimbula for example.

• Jon Brodkin asserted “A company exec notes that other companies fall short of having all the necessary software to build and move into a cloud” in the preface to his Red Hat says only it and Microsoft can build entire clouds post to InfoWorld’s Cloud Computing blog of 6/30/2010:

Red Hat has come up with an interesting sales pitch that seems to benefit rival Microsoft as much as Red Hat.