Windows Azure and Cloud Computing Posts for 11/11/2013+

Top Stories This Week:

- ‡ Brad Anderson (@InTheCloudMSFT) took on Amazon Web Services with his Success with Hybrid Cloud: Why Hybrid? post of 11/14/2013 in the Windows Azure Pack, Hosting, Hyper-V and Private/Hybrid Clouds section.

- S. “Soma” Somasegar posted Visual Studio 2013 Launch: Announcing Visual Studio Online to his blog on 11/13/2013 in the Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses section.

- Dan Plastina (@TheRMSGuy) described Office 365 Information Protection using Azure Rights Management in an 11/11/2013 and two earlier posts to the Microsoft Rights Management Service (RMS) Team blog in the Cloud Security, Compliance and Governance section.

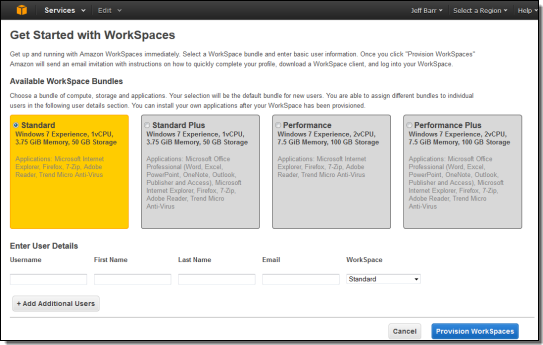

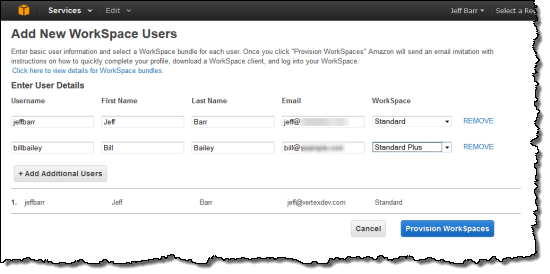

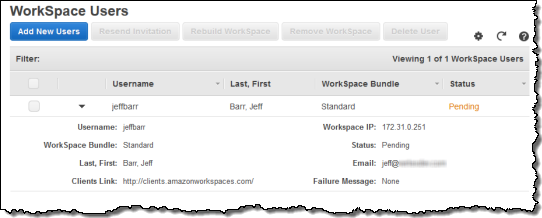

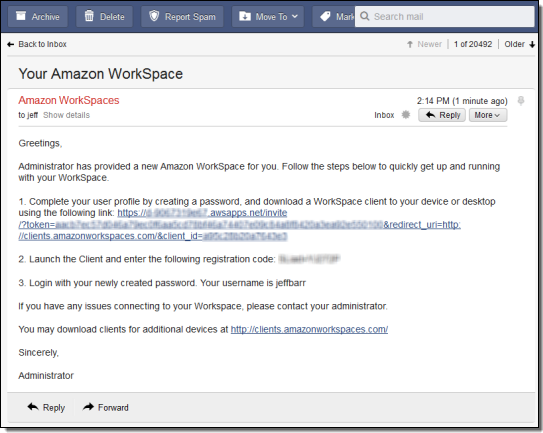

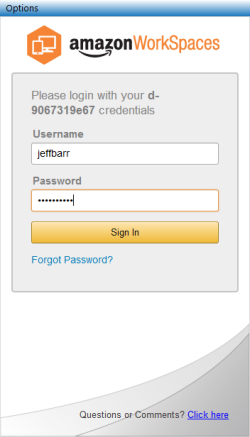

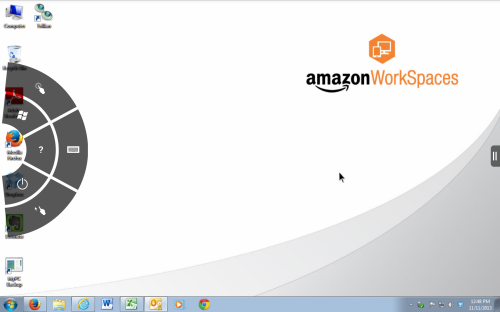

- Jeff Barr (@jeffbarr) announced Amazon WorkSpaces - Desktop Computing in the Cloud in an 11/13/2013 post at the end of the Other Cloud Computing Platforms and Services section.

| A compendium of Windows Azure, Service Bus, BizTalk Services, Access Control, Caching, SQL Azure Database, and other cloud-computing articles. |

‡ Updated 11/18/2013 with new articles marked ‡.

• Updated 11/16/2013 with new articles marked •.

Note: This post is updated weekly or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Windows Azure Marketplace DataMarket, Power BI, Big Data and OData

- Windows Azure Service Bus, BizTalk Services, Scheduler and Workflow

- Windows Azure Access Control, Active Directory, and Identity

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure and DevOps

- Windows Azure Pack, Hosting, Hyper-V and Private/Hybrid Clouds

- Visual Studio LightSwitch and Entity Framework v4+

- Cloud Security, Compliance and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

<Return to section navigation list>

Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

• Neil MacKenzie (@mknz) explained [LINQ] Queries in the Windows Azure [Table] Storage Client Library v2.1 in a 11/3/2013 post (missed when published):

Windows Azure Storage has been a core part of the Windows Azure Platform since the public preview in 2008. It supports three storage features: Blobs, Queues and Tables. The Blob Service provides high-scale file storage – with prominent uses being: the storage of media files for web sites; and the backing store for the VHDs used as the disks attached to Windows Azure VMs. The Queue Service provides a basic and easy-to-use messaging system that simplifies the disconnected communication between VMs in a Windows Azure cloud service. The Table Service is a fully-managed, cost-effective, high-scale, key-value NoSQL datastore.

The definitive way to access Windows Azure Storage is through the Windows Azure Storage REST API. This documentation is the definitive source of what can be done with Windows Azure Tables. All client libraries, regardless of language, use the REST API under the hood. The Storage team has provided a succession of .NET libraries that sit on top of the REST API. The original Storage Client library had a strong dependence on WCF Data Services, which affected some of the design decisions. In 2012, the Storage team released a completely rewritten v2.0 library which removed the dependence and was more performant.

The Storage Client v2.0 library provided a fluent library for query invocation against Wizard Azure Tables. v2.1 of the library added a LINQ interface for query invocation. The LINQ interface is significantly easier to use than the fluent library while supporting equivalent functionality. Consequently, only the LINQ library is considered in this post.

The MSDN documentation for the Windows Azure Storage Library is here. The Windows Azure Storage team has provided several posts documenting the Table Service API in the .NET Storage v2.x library (2.0, 2.1). Gaurav Mantri has also posted on the Table Service API as part of an excellent series of posts on the Storage v2.0 library. I did a post in 2010 that described the query experience for Windows Azure Tables in the Storage Client v1.x library.

Overview of Windows Azure Tables

Windows Azure Tables is a key-value, NoSQL datastore in which entities are stored in tables. It is a schema-less datastore so that each entity in a table can have a different schema for the properties contained in it. The primary key, and only index, for a table is a combination of the PartitionKey and RowKey that must exist in each row. The PartitionKey specifies the partition (or shard) for an entity while the RowKey provides uniqueness within a partition. Different partitions may be stored on different physical nodes, with the Table Service managing this allocation.

The REST API provides limited query capability. It supports filtering as well as the specification of the properties to be returned. A query can be filtered on combinations of any of the properties in the entity. The right side of each filter must be against a constant, and it is not possible to compare values of different properties. It is also possible to specify a limit on the number of entities to be returned by a query. The general rules for queries are documented here with specific rules for filters provided here.

The Table Service uses server-side paging to limit the amount of data that may be returned in a single query. Server-side paging is indicated by the presence of a continuation token in the response to the query. This continuation token can be provided in a subsequent query to indicate where the next “page” of data should start. A single query has a hard limit of 1,000 entities in a single page and can execute for no more than 5 seconds. Furthermore, a page return is also caused by all the entities hosted on a single physical node having been returned. An odd consequence of this is that it is possible to get back zero entities along with a continuation token. This is caused by the Table Service querying a physical node where no entities are currently stored. The only query that is guaranteed never to return a continuation token is one that filters on both PartitionKey and RowKey. Note that any query which does not filter on either PartitionKey or RowKey will result in a table scan.

The Table Service returns queried data as an Atom feed. This is a heavyweight XML protocol that inflates the size of a query response. The Storage team announced at Build 2013 that it would support the use of JSON in the query response which should reduce the size of a query response. To reduce the amount of data returned by a query, the Table Service supports the ability to shape the returned data through the specification of which properties should be returned for an entity.

The various client libraries provide a native interface to the Table Service that hides much of the complexity of filtering and continuation tokens. For example, the .NET library provides both fluent and LINQ APIs allowing a familiar interaction with Windows Azure Tables.

ITableEntity

The ITableEntity class provides the interface implemented by all classes used to represent entities in the Storage Client library v2.x. ITableEntity defines the following properties:

- ETag- entity tag used for optimistic concurrency

- PartitionKey – partition key for the entity

- RowKey – row key for the entity

- Timestamp – timestamp for last update

The library contains two classes implementing the ITableEntity interface:

TableEntity provides the base class for user-defined classes used to represent entities in the Storage Client library. These derived classes expose properties representing the properties of the entity. DynamicTableEntity is a sealed class which stores the entity properties inside an IDictionary<String,EntityProperty> property named Properties.

The use of strongly-typed classes derived from TableEntity is useful when entities are all of the same type. However, DynamicTableEntity is helpful when handling tables which take full advantage of the schema-less nature of Windows Azure Tables and have entities with different schemas in a single table.

Basic LINQ Query

LINQ is a popular method for specifying queries since it provides a natural syntax that makes explicit the nature of the query and the shape of returned entities. The Storage Client library supports LINQ and uses it to expose various query features of the underlying REST interface to .NET.

A LINQ query is created using the CreateQuery<TEntity>() method of the CloudTable class. TEntity is a class derived from ITableEntity. In the query the where keyword specifies the filters and the select keyword specifies the properties to be returned.

With BookEntity being an entity class derived from TableEntity, the following is a simple example using the Storage Client library of a LINQ query against a table named book:

CloudStorageAccount storageAccount = new CloudStorageAccount(

new StorageCredentials(accountName, accountKey), true);

CloudTableClient tableClient = storageAccount.CreateCloudTableClient();

CloudTable bookTable = tableClient.GetTableReference(“book”);var query = from book in bookTable.CreateQuery<BookEntity>()

where book.PartitionKey == “hardback”

select book;foreach (BookEntity entity in query) {

String name = entity.Name;

String author = entity.Author;

}The definition of the query creates an IQueryable<BookEntity> but does not itself invoke an operation against the Table service. The operation actually occurs when the foreach statement is invoked. This example queries the book table and returns all entities where the PartitionKey property takes the value hardback. A table is indexed on PartitionKey and RowKey and data is returned from a query ordered by PartitionKey/RowKey. In this example the data is ordered by RowKey since the PartitionKey is fixed in the query.

The Storage Client library handles server-side paging automatically when the query is invoked. Consequently, if there are many entities satisfying the query a significant amount of data is returned.

Basic queries can be performed using IQueryable, as above. More sophisticated queries – with client-side paging and asynchronous invocation, for example – are handled by converting the IQueryable into a TableQuery.

Server Side Paging

The Storage library supports server-side paging using the Take() method. This allows the specification of the maximum number of entities that should be returned by query invocation. This limit is performed server-side so can significantly limit the amount of data returned and consequently the time taken to return the data.

For example, the above query can be modified to return a maximum of 10 entities:

var query = (from book in bookTable.CreateQuery<BookEntity>()

where book.PartitionKey == “hardback”

select book).Take(10);Note that this simple query can not by itself be used to page through the data from the client side. Multiple invocations always return the same entities. Paging through the data requires the handling of the continuation tokens returned by the server to indicate that there is additional data satisfying the query.

To handle continuation tokens, the IQueryable must be cast into a TableQuery<TElement>. This can be done either through direct cast or using the AsTableQuery() extension method. TableQuery<TElement> exposes an ExecuteSegmented() method which handles continuation tokens:

public TableQuerySegment<TElement>

ExecuteSegmented(TableContinuationToken continuationToken,

TableRequestOptions requestOptions = null,

OperationContext operationContext = null);This method invokes a query in which the result set starts with the entity indicated by an(opaque) continuation token, which should be null for the initial invocation. It also takes optional TableRequestOption (timeouts and retry policies) and OperationContext (log level) parameters. TableQuerySegment<TElement> is an IEnumerable that exposes two properties: ContinuationToken and Results. The former is the continuation token, if any, returned by query invocation while the latter is a List of the returned entities. A null value for the returned ContinuationToken indicates that all the data has been provided and that no more query invocations are needed.

The following example demonstrates the use of continuation tokens:

TableQuery<BookEntity> query =

(from book in bookTable.CreateQuery<BookEntity>()

where book .PartitionKey == “hardback”

select book).AsTableQuery<BookEntity>();TableContinuationToken continuationToken = null;

do {

var queryResult = query.ExecuteSegmented(continuationToken);foreach (BookEntity entity in queryResult)

{

String name = entity.Name;

String genre = entity.Genre;

}

continuationToken = queryResult.ContinuationToken;

} while (continuationToken != null);The TableQuery class also exposes various asynchronous methods. These include traditional APM methods of the form BeginExecuteSegmented()/EndExecuteSegmented() and modern Task-based (async/await) methods of the form ExecuteSegmentedAsync().

Extension Methods

The TableQueryableExtensions class in the Microsoft.WindowsAzure.Storage.Table.Queryable namespace provides various IQueryable<TElement> extension methods:

AsTableQuery() casts a query to a TableQuery<TElement>. Resolve() supports client-side shaping of the entities returned by a query. WithContext() and WithOptions() allow an operation context and request options respectively to be associated with a query.

Entity Shaping

The Resolve() extension method associates an EntityResolver delegate that is invoked when the results are serialized. The resolver can shape the output of the serialization into some desired form. A simple example of this is to perform client-side modification such as creating a fullName property out of firstName and lastName properties.

The EntityResolver delegate is defined as follows:

public delegate T EntityResolver<T>(string partitionKey, string rowKey,

DateTimeOffset timestamp,

IDictionary<string, EntityProperty> properties, string etag);The following example shows a query in which a resolver is used to format the returned data into a String composed of various properties in the returned entity:

var query =

(from book in bookTable.CreateQuery<DynamicTableEntity>()

where book.PartitionKey == “hardback”

select book).Resolve( (pk,rk,ts,props,etag) =>

String.Format(“{0} wrote {1} in {2}”,

props["Author"].StringValue, props["Name"].StringValue,

props["Year"].Int32Value));More sophisticated resolvers can be defined separately. For example, the following example shows the returned entities being shaped into instances of a Writer class:

EntityResolver<Writer> resolver = (pk, rk, ts, props, etag) =>

{

Writer writer = new Writer()

{

Information = String.Format(“{0} wrote {1} in {2}”,

props["Author"].StringValue, props["Name"].StringValue,

props["Year"].Int32Value)

};

return writer;

};var query =

(from book in bookTable.CreateQuery<DynamicTableEntity>()

where book.PartitionKey == “hardback”

select book).Resolve(resolver);Schema-Less Queries

The DynamicTableEntity class is used to invoke queries in a schema-free manner, since the retrieved properties are stored in a Dictionary. For example, the following example performs a filter using the DynamicTableEntity Properties collection and then puts the returned entities into a List of DynamicTableEntity objects:

var query = (

from entity in bookTable.CreateQuery<DynamicTableEntity>()

where entity.Properties["PartitionKey"].StringValue == “hardback” &&

entity.Properties["Year"].Int32Value == 1912

select entity);List<DynamicTableEntity> books = query.ToList();

The individual properties of each entity are accessed through the Properties collection of the DynamicTableEntity.

Summary

The Windows Azure Storage Client v2.1 library supports the use of LINQ to query a table stored in the Windows Azure Table Service. This library exposes, in a performant .NET library, all the functionality provided by the underlying Windows Azure Storage REST API.

• Bruno Terkaly (@brunoterkaly) described How to upload publicly visible photos from a Windows Store Application to Windows Azure Storage version 2.1 in an 11/4/2013 post (missed when published:

Overview

In this module we learn about uploading photos to Windows Azure Storage using the latest version of the Windows Azure Storage SDK (version 2.1).

Download Visual Studio Solution

Using Pre-Release Windows Azure Storage SDK

NuGet will be used to install the necessary assemblies and references. It represents the fastests and easiest way to support Windows Azure Storage from a Windows Store application.

Objectives

In this hands-on lab, you will learn how to:

- Upload images from a Windows Store application into Windows Azure Storage using Version 2 of the Windows Azure Storage Library.

Setup

In order to execute the exercises in this hands-on lab you need to set up your environment.

- Start Visual Studio

- Signed up with a Window Azure Account

…

Bruno continues with details of the six tasks involved.

… Summary

In this post, you learned a few things:

- How to upload files to Windows Azure Storage from a Windows Store application

- How to use the Windows Azure Portal to create a Storage Account

- How to use Server Explorer to view files in storage

- How to use NuGet to add support for Windows Azure Storage

Alexandre Brisebois (@Brisebois) described how to Create Predictable GUIDs for your Windows Azure Table Storage Entities in a 11/14/2013 post:

This week I was face with an odd scenario. I needed to track URIs that are store in Windows Azure Table Storage. Since I didn’t want to use the actual URIs as row keys I tried to find a way to create a consistent hash compatible with Windows Azure Table Storage Row & Primary Keys. This is when I came across an answer on Stack Overflow about converting URIs into GUIDs.

The "correct" way (according to RFC 4122 §4.3) is to create a name-based UUID. The advantage of doing this (over just using a MD5 hash) is that these are guaranteed not to collide with non-named-based UUIDs, and have a very (very) small possibility of collision with other name-based UUIDs. [source]

Using the code referenced in this answer I was able to put together an IdentityProvider who’s job is to generate GUIDs based on strings. In my case I use it to create GUIDs based on URIs.

public class IdentityProvider

{

public Guid MakeGuid(Uri uri)

{

var guid = GuidUtility.Create(GuidUtility.UrlNamespace, uri.AbsoluteUri);

return guid;

}

}Creating predictable GUIDs can come quite in handy with Windows Azure Table Storage, because it allows you to create a GUID based an entities’ content. By doing so, you can find entities without performing full table scans. Using the following code, found on GitHub, I was able to build an efficient URI matching system. Be sure to give credit if you use it.

using System;

using System.Security.Cryptography;

using System.Text;namespace Logos.Utility

{

/// <summary>

/// Helper methods for working with <see cref="Guid"/>.

/// </summary>

public static class GuidUtility

{

/// <summary>

/// Creates a name-based UUID using the algorithm from RFC 4122 4.3.

/// </summary>

/// <param name="namespaceId">The ID of the namespace.</param>

/// <param name="name">The name (within that namespace).</param>

/// <returns>A UUID derived from the namespace and name.</returns>

/// <remarks>

/// See <a href="http://code.logos.com/blog/2011/04/generating_a_deterministic_guid.html">

/// Generating a deterministic GUID</a>.

/// </remarks>

public static Guid Create(Guid namespaceId, string name)

{

return Create(namespaceId, name, 5);

}/// <summary>

/// Creates a name-based UUID using the algorithm from RFC 4122 4.3.

/// </summary>

/// <param name="namespaceId">The ID of the namespace.</param>

/// <param name="name">The name (within that namespace).</param>

/// <param name="version">The version number of the UUID to create; this value must be either

/// 3 (for MD5 hashing) or 5 (for SHA-1 hashing).</param>

/// <returns>A UUID derived from the namespace and name.</returns>

/// <remarks>

/// See <a href="http://code.logos.com/blog/2011/04/generating_a_deterministic_guid.html">

/// Generating a deterministic GUID</a>.

/// </remarks>

public static Guid Create(Guid namespaceId, string name, int version)

{

if (name == null)

throw new ArgumentNullException("name");

if (version != 3 && version != 5)

throw new ArgumentOutOfRangeException("version", "version must be either 3 or 5.");// convert the name to a sequence of octets

// (as defined by the standard or conventions of its namespace) (step 3)

// ASSUME: UTF-8 encoding is always appropriate

byte[] nameBytes = Encoding.UTF8.GetBytes(name);// convert the namespace UUID to network order (step 3)

byte[] namespaceBytes = namespaceId.ToByteArray();

SwapByteOrder(namespaceBytes);// comput the hash of the name space ID concatenated with the name (step 4)

byte[] hash;

using (HashAlgorithm algorithm = version == 3 ? (HashAlgorithm)MD5.Create() : SHA1.Create())

{

algorithm.TransformBlock(namespaceBytes, 0, namespaceBytes.Length, null, 0);

algorithm.TransformFinalBlock(nameBytes, 0, nameBytes.Length);

hash = algorithm.Hash;

}// most bytes from the hash are copied straight to the bytes of

// the new GUID (steps 5-7, 9, 11-12)

byte[] newGuid = new byte[16];

Array.Copy(hash, 0, newGuid, 0, 16);// set the four most significant bits (bits 12 through 15) of the time_hi_and_version field

// to the appropriate 4-bit version number from Section 4.1.3 (step 8)

newGuid[6] = (byte)((newGuid[6] & 0x0F) | (version << 4));// set the two most significant bits (bits 6 and 7) of the clock_seq_hi_and_reserved

// to zero and one, respectively (step 10)

newGuid[8] = (byte)((newGuid[8] & 0x3F) | 0×80);// convert the resulting UUID to local byte order (step 13)

SwapByteOrder(newGuid);

return new Guid(newGuid);

}/// <summary>

/// The namespace for fully-qualified domain names (from RFC 4122, Appendix C).

/// </summary>

public static readonly Guid DnsNamespace = new Guid("6ba7b810-9dad-11d1-80b4-00c04fd430c8");/// <summary>

/// The namespace for URLs (from RFC 4122, Appendix C).

/// </summary>

public static readonly Guid UrlNamespace = new Guid("6ba7b811-9dad-11d1-80b4-00c04fd430c8");/// <summary>

/// The namespace for ISO OIDs (from RFC 4122, Appendix C).

/// </summary>

public static readonly Guid IsoOidNamespace = new Guid("6ba7b812-9dad-11d1-80b4-00c04fd430c8");// Converts a GUID (expressed as a byte array) to/from network order (MSB-first).

internal static void SwapByteOrder(byte[] guid)

{

SwapBytes(guid, 0, 3);

SwapBytes(guid, 1, 2);

SwapBytes(guid, 4, 5);

SwapBytes(guid, 6, 7);

}private static void SwapBytes(byte[] guid, int left, int right)

{

byte temp = guid[left];

guid[left] = guid[right];

guid[right] = temp;

}

}

}

<Return to section navigation list>

Windows Azure SQL Database, Federations and Reporting, Mobile Services

No significant articles so far this week.

<Return to section navigation list>

Windows Azure Marketplace DataMarket, Cloud Numerics, Big Data and OData

• The WCF Data Services (OData) Team announced a New and improved EULA! for the .NET OData Client and ODataLib on 11/13/2013:

TL;DR: You can now (legally) use our .NET OData client and ODataLib on Android and iOS.

Backstory

For a while now we have been working with our legal team to improve the terms you agree to when you use one of our libraries (WCF Data Services, our OData client, or ODataLib). A year and a half ago, we announced that our EULA would include a redistribution clause. With the release of WCF Data Services 5.6.0, we introduced portable libraries for two primary reasons:

- Portable libraries reduce the amount of duplicate code and #ifdefs in our code base.

- Portable libraries increase our reach through third-party tooling like Xamarin (more on that later).

It took some work to get there, and we had to make some sacrifices along the way, but we are now focused exclusively on portable libraries for client-side code. Unfortunately, our EULA still contained a clause that prevented the redistributable code from being legally used on a platform other than Windows.

OData and Xamarin: Extending developer reach to many platforms

We are really excited about Microsoft’s new collaboration with Xamarin. As Soma says, this collaboration will allow .NET developers to broaden the reach of their applications and skills. This has long been the mantra of OData – a standardized ecosystem of services and consumers that enables consumers on any platform to easily consume services developed on any platform. This collaboration will make it much easier to write a shared code base that allows consumption of OData on Windows, Android or iOS.

EULA change

To fully enable this scenario, we needed to update our EULA. We, along with several other teams at Microsoft, are rolling out a new EULA today that has relaxed the distribution requirements. Most importantly, we removed the clause that prevented redistributable code from being used on Android and iOS.

The new EULA is effective immediately for all of our NuGet packages. This means that (even though we already released 5.6.0) you can create a Xamarin project today, take a new dependency on our OData client, and legally run that application on any platform you wish.

Thanks

As always, we really appreciate your feedback. It frequently takes us some time to react, but the credit for this change is due entirely to customer feedback. We hear you. Keep it coming.

<Return to section navigation list>

Windows Azure Service Bus, Scheduler, BizTalk Services and Workflow

• Guarav Mantri (@gmantri) continued his series with Windows Azure Scheduler Service – Part II: Managing Cloud Services on 11/10/2013:

In the previous post about Windows Azure Scheduler Service, we talked about some of the basic concepts. From this post, we will start digging deep into various components of this service and will focus on managing these components using REST API. As promised in the last post, this and subsequent posts in this series will be code heavy.

In this post we will focus on Cloud Service component of this service and we will see how you can manage Cloud Service using REST API.

Cloud Service

Just to recap from our last post, a cloud service is a top level entity in this service. You would need to create a cloud service first before you can create a job. Few things about cloud service:

- Consider a cloud service as an application in which many of your job will reside and execute. A subscription can have many cloud services.

- A cloud service is data center specific i.e. when you create a cloud service, it resides in a particular data center. If you want your jobs to be performed from many data centers, you would need to create separate cloud services in each data center.

The Code!

Now let’s code! What I have done is created a simple console application and tried to consume REST API there. Before we dig into cloud service, let’s do some basic ground work as we don’t want to write same code over and over again.

Subscription Entity

As mentioned in the previous blog post, to authorize your requests you would need subscription id and a management certificate (similar to authorizing Service Management API requests). To encapsulate that, I created a simple class called “Subscription”.

using System; using System.Collections.Generic; using System.Linq; using System.Security.Cryptography.X509Certificates; using System.Text; using System.Threading.Tasks; namespace SchedulingServiceHelper { /// <summary> /// /// </summary> public class Subscription { /// <summary> /// Subscription id. /// </summary> public Guid Id { get; set; } /// <summary> /// Management certificate /// </summary> public X509Certificate2 Certificate { get; set; } } }Helper

Since we are going to make use of REST API, I created some simple helper class which is responsible for doing web requests and handling responses. Here’s the code for that.

using System; using System.Collections.Generic; using System.Linq; using System.Net; using System.Text; using System.Threading.Tasks; namespace SchedulingServiceHelper { public static class RequestResponseHelper { /// <summary> /// x-ms-version request header. /// </summary> private const string X_MS_VERSION = "2012-03-01"; /// <summary> /// XML content type request header. /// </summary> public const string CONTENT_TYPE_XML = "application/xml; charset=utf-8"; /// <summary> /// JSON content type request header. /// </summary> public const string CONTENT_TYPE_JSON = "application/json"; private const string baseApiEndpointFormat = "https://management.core.windows.net/{0}"; public static HttpWebResponse GetResponse(Subscription subscription, string relativeUri, HttpMethod method, string contentType, byte[] requestBody = null) { var baseApiEndpoint = string.Format(baseApiEndpointFormat, subscription.Id); var url = string.Format("{0}/{1}", baseApiEndpoint, relativeUri); var request = (HttpWebRequest) HttpWebRequest.Create(url); request.ClientCertificates.Add(subscription.Certificate); request.Headers.Add("x-ms-version", X_MS_VERSION); if (!string.IsNullOrWhiteSpace(contentType)) { request.ContentType = contentType; } request.Method = method.ToString(); if (requestBody != null && requestBody.Length > 0) { request.ContentLength = requestBody.Length; using (var stream = request.GetRequestStream()) { stream.Write(requestBody, 0, requestBody.Length); } } return (HttpWebResponse)request.GetResponse(); } } public enum HttpMethod { DELETE, GET, HEAD, MERGE, PATCH, POST, PUT, } public static class Constants { public const string Namespace = "http://schemas.microsoft.com/windowsazure"; } }Cloud Service Operations

Now we are ready to consume REST API for managing Cloud Service.

Cloud Service Entity

Let’s first create a class which will encapsulate the properties of a cloud service. It’s a pretty simple class. We will use this class throughout this post.

using System; using System.Collections.Generic; using System.IO; using System.Linq; using System.Net; using System.Text; using System.Threading.Tasks; using System.Xml.Linq; namespace SchedulingServiceHelper { public class CloudService { /// <summary> /// Cloud service name. /// </summary> public string Name { get; set; } /// <summary> /// Cloud service label. /// </summary> public string Label { get; set; } /// <summary> /// Cloud service description. /// </summary> public string Description { get; set; } /// <summary> /// Region where cloud service will be created. /// </summary> public string GeoRegion { get; set; } } }Create

As the name suggests, you use this operation to create a cloud service. To create a cloud service, you would need to specify all of the four properties mentioned above. You can learn more about this operation here: http://msdn.microsoft.com/en-us/library/windowsazure/dn528943.aspx.

Here’s a simple function I wrote to create a cloud service. This function is part of the “CloudService” class I mentioned above:

public void Create(Subscription subscription) { try { string requestPayLoadFormat = @"<CloudService xmlns:i=""http://www.w3.org/2001/XMLSchema-instance"" xmlns=""http://schemas.microsoft.com/windowsazure""><Label>{0}</Label><Description>{1}</Description><GeoRegion>{2}</GeoRegion></CloudService>"; var requestPayLoad = string.Format(requestPayLoadFormat, Label, Description, GeoRegion); var relativePath = string.Format("cloudServices/{0}", Name); using (var response = RequestResponseHelper.GetResponse(subscription, relativePath, HttpMethod.PUT, RequestResponseHelper.CONTENT_TYPE_XML, Encoding.UTF8.GetBytes(requestPayLoad))) { var status = response.StatusCode; } } catch (WebException webEx) { using (var resp = webEx.Response) { using (var streamReader = new StreamReader(resp.GetResponseStream())) { string errorMessage = streamReader.ReadToEnd(); } } throw; } }A few things to keep in mind:

- For “GeoRegion” element, possible values are: “uswest“, “useast“, “usnorth“, “ussouth“, “north europe“, “west europe“, “east asia“, and “southeast asia“. Any other values will result in 400 error. I have tried creating a service with each and every one of them and was able to successfully create them. Update: Based on the feedback I received – even though you could create a cloud service in all regions, at the time of writing of this post, Windows Azure only allows you to create a Job Collection in “ussouth” and “north europe” regions. Thus for trying out this service, you may want to create a cloud service in these regions only.

- The name of your cloud service can’t contain any spaces. It can start with a number and can contain both upper and lower case alphabets and can also contain hyphens.

- Since you send “Label” and “Description” as part of XML payload, I’m guessing that you would need to escape them properly like replacing “<” sign with “<” and so on however documentation does not mention any of that. Furthermore I wish they had kept it consistent with other Service Management API calls where elements like these must be Base64 encoded.

Delete

As the name suggests, you use this operation to delete a cloud service. This operation deletes the cloud service and all resources in it thus you would need to be careful when performing this operation. To delete a cloud service, you would just need the name of the cloud service. You can learn more about this operation here: http://msdn.microsoft.com/en-us/library/windowsazure/dn528936.aspx.

Here’s a simple function I wrote to delete a cloud service. This function is part of the “CloudService” class I mentioned above:

public void Delete(Subscription subscription) { try { var relativePath = string.Format("cloudServices/{0}", Name); using (var response = RequestResponseHelper.GetResponse(subscription, relativePath, HttpMethod.DELETE, RequestResponseHelper.CONTENT_TYPE_XML, null)) { var status = response.StatusCode; } } catch (WebException webEx) { using (var resp = webEx.Response) { using (var streamReader = new StreamReader(resp.GetResponseStream())) { string errorMessage = streamReader.ReadToEnd(); } } throw; } }Get

This is one undocumented operation I stumbled upon accidently. This operation returns the properties of a cloud service. The endpoint you would use for performing this operation would be “https://management.core.windows.net/<subscription-id>/cloudServices/<cloud-service-id>” and the HTTP method you would use is “GET”.

Here’s a simple function I wrote to get information about a cloud service. This function is part of the “CloudService” class I mentioned above:

public static CloudService Get(Subscription subscription, string cloudServiceName) { try { var relativePath = string.Format("cloudServices/{0}", cloudServiceName); using (var response = RequestResponseHelper.GetResponse(subscription, relativePath, HttpMethod.GET, RequestResponseHelper.CONTENT_TYPE_XML, null)) { using (var streamReader = new StreamReader(response.GetResponseStream())) { string responseBody = streamReader.ReadToEnd(); XElement xe = XElement.Parse(responseBody); var name = xe.Element(XName.Get("Name", Constants.Namespace)).Value; var label = xe.Element(XName.Get("Label", Constants.Namespace)).Value; var description = xe.Element(XName.Get("Description", Constants.Namespace)).Value; var geoRegion = xe.Element(XName.Get("GeoRegion", Constants.Namespace)).Value; return new CloudService() { Name = name, Label = label, Description = description, GeoRegion = geoRegion, }; } } } catch (WebException webEx) { using (var resp = webEx.Response) { using (var streamReader = new StreamReader(resp.GetResponseStream())) { string errorMessage = streamReader.ReadToEnd(); } } throw; } }Get operation also returns information about all the resources contained in that service. We’ll get back to this function again when we talk about job collections.

List

This is another undocumented operation I stumbled upon accidently. This operation returns the properties of all cloud services in a subscription. The endpoint you would use for performing this operation would be “https://management.core.windows.net/<subscription-id>/cloudServices” and the HTTP method you would use is “GET”.

Here’s a simple function I wrote to get information about all cloud services in a subscription. This function is part of the “CloudService” class I mentioned above:

public static List<CloudService> GetAll(Subscription subscription) { try { using (var response = RequestResponseHelper.GetResponse(subscription, "cloudServices", HttpMethod.GET, RequestResponseHelper.CONTENT_TYPE_XML, null)) { using (var streamReader = new StreamReader(response.GetResponseStream())) { string responseBody = streamReader.ReadToEnd(); List<CloudService> cloudServices = new List<CloudService>(); XElement xe = XElement.Parse(responseBody); foreach (var elem in xe.Elements(XName.Get("CloudService", Constants.Namespace))) { var name = elem.Element(XName.Get("Name", Constants.Namespace)).Value; var label = elem.Element(XName.Get("Label", Constants.Namespace)).Value; var description = elem.Element(XName.Get("Description", Constants.Namespace)).Value; var geoRegion = elem.Element(XName.Get("GeoRegion", Constants.Namespace)).Value; cloudServices.Add(new CloudService() { Name = name, Label = label, Description = description, GeoRegion = geoRegion, }); } return cloudServices; } } } catch (WebException webEx) { using (var resp = webEx.Response) { using (var streamReader = new StreamReader(resp.GetResponseStream())) { string errorMessage = streamReader.ReadToEnd(); } } throw; } }Complete Code

Here’s the complete code for CloudService class with all the operations. …

[Duplicate code elided for brevity]

… Summary

That’s it for this post. I hope you will find it useful. As always, if you find any issues with the code or anything else in the post please let me know and I will fix it ASAP.

In the next post, we will talk about Job Collections so hang tight

• Guarav Mantri (@gmantri) began a Windows Azure Scheduler Service (WASS) series with Windows Azure Scheduler Service – Part I: Introduction on 11/10/2013:

Recently Windows Azure announced a new Scheduler Service in preview. In this blog post, we will talk about some basic stuff to get you started. In the subsequent posts, we will drill down into more details.

What

Let’s first talk about what exactly is this service. As the name suggests, this service allows you to schedule certain tasks which you wish to do on a repeated basis and let Windows Azure take care of scheduler part of that task execution.

For example, you may want to ping your website every minute to see if it is working properly (like checking for HTTP Status 200). You could use this service for that purpose.

Please do realize that it’s a platform service or in other words it provides a scalable platform for task scheduling. The service will not execute the task itself. That’s something you would need to do on your own.

If we take the above example of pinging website, what you can instruct this service to do is ping the website every minute and it will ping the website at the time/interval specified by you and keep the result in job history.Activating

Currently this service is in “Preview” mode thus you would need to activate this service first before you can use it. To activate this service, go to account management portal (https://account.windowsazure.com) and after logging in, click on “preview features” tab and then clicking on “try it now” button next to “Windows Azure Scheduler” to activate this service in your subscription.

That’s pretty much it! Now you’re ready to use this service.

How to Use?

At the time of writing this blog, Windows Azure Portal does not expose any user interface to manage this service. However there’s a REST API which exposes this functionality. You can write an application which consumes this REST API to interact with this service. If you’re a .Net programmer, there is a .Net SDK over the REST API available for you. This is available as a Nuget package which you can get from here: http://www.nuget.org/packages/Microsoft.WindowsAzure.Management.Scheduler/0.9.0-preview.

Sandrino Di Mattia (http://fabriccontroller.net) has written an excellent blog post on using this .Net SDK. I would strongly recommended reading his post about this: http://fabriccontroller.net/blog/posts/a-complete-overview-to-get-started-with-the-windows-azure-scheduler/.

We will focus on REST API in this series of blog posts.

Concepts

Now let’s talk about some concepts associated with this service. In a nutshell, there are three things you would need to understand:

Cloud Service

A cloud service is a top level entity in this service. You would need to create a cloud service first before you can create a job. Few things about cloud service:

- Consider a cloud service as an application in which many of your job will reside and execute. A subscription can have many cloud services.

- A cloud service is data center specific i.e. when you create a cloud service, it resides in a particular data center. If you want your jobs to be performed from many data centers, you would need to create separate cloud services in each data center.

Job Collection

A job collection is the next level in entity hierarchy. This is where you group similar jobs together in a cloud service. Few things about job collection:

- A job collection is responsible for maintaining settings, quotas, and thresholds which are shared by all jobs in that collection.

- Since a job collection is a child entity of a cloud service, it is again data center specific. For a job to be performed from many data centers, you would need to create separate job collections in each cloud service specific to that particular data center.

Job

A job is the actual unit of execution. This is what gets executed at the time specified by you. Few things about jobs:

- At the time of writing this blog, this service allows two kinds of jobs – 1) Invoking a web endpoint over http/https and 2) post a message to a queue.

- Do realize that the service is simply invoking a web endpoint or posting a message to a queue and returns back a result. What you want to do with the result is entirely up to you. For example, you have a log pruning task which you want to execute once every day. You could create a job which will put a message in a Windows Azure Storage Queue of your choice every day. What you do with that message would be entirely up to you.

Job History

As the name suggests, a job history contains the execution history of a job. It contains success vs. failure, as well as any response details.

Alternatives

Now let’s talk about some of the other alternatives available to you when it comes to executing scheduled jobs:

Aditi Task Scheduler

Aditi has a task scheduler service which is very similar in the functionality offered by this service (http://www.aditicloud.com/). Aditi’s service also allows you to ping web endpoints as well as post messages in a queue. Now the question is which one you should use

.

A few things that go in favor of using Aditi’s service are:

- Aditi’s service has been running in production whereas Windows Azure Scheduler Service is in preview so if you have production workloads where you would need this kind of service, you may want to consider Aditi’s service. Having said this, if you have been using Windows Azure Mobile Service’s Scheduling functionality, it has been backed by this service only. So if you’re somewhat concerned about it being in preview, Don’t [be].

At the time of writing, Windows Azure Scheduler Service has lesser features than Aditi’s Service. For example, Aditi’s service allows you to POST data to web endpoints. Also it supports basic authentication. These two features are not there in Windows Azure Scheduling Service as of today.Please see Update below.A few things that go in favor of Windows Azure Scheduler Service are:

- It is integral part of Windows Azure offering.

- Aditi’s service is only offered through Windows Azure Store. Since Windows Azure Store is not available in all countries, you may not be able to avail this service (I know, I’m not) from Aditi. Windows Azure Scheduler Service does not have this restriction.

UPDATE:

Based on the feedback I received, Windows Azure Scheduler Service supports all HTTP Methods (GET, PUT, POST, DELETE, HEAD). Furthermore it also supports basic authentication as well.

Roll Out Your Own

Other alternative would be roll out your own scheduler. I wrote a blog post sometime back which talked about this. You can read the post here: http://gauravmantri.com/2013/01/23/building-a-simple-task-scheduler-in-windows-azure/. If you’re going down this route, my recommendation would be not to implement your own timers but use something like Quartz.net.

REST API

Let’s talk briefly about the REST API to manage scheduler service. We will deal with in more detail in subsequent posts. You can learn more details about the REST API here: http://msdn.microsoft.com/en-us/library/windowsazure/dn479785.aspx.

Authentication

To authenticate your REST API calls to the manage scheduler service, you would use the same logic as you do today with authenticating Service Management API requests i.e. authenticate your requests using X509 Certificate. To learn more about authenticating Service Management API requests, click here: http://msdn.microsoft.com/en-us/library/windowsazure/ee460782.aspx.

Things you could do

At the time of writing of this blog post, here are the few things you could do with REST API:

- Create/Delete/Get/List Cloud Services

- Create/Update/Delete/Get/List Job Collections

- Create/Update/Delete/Get/List Jobs

We will take a deeper look at these operation in next post.

Summary

I’m pretty excited about the availability of this service. In fact, I could think (actually we’re thinking about) of a lot of scenarios where this service can be put to practical use. I hope you have found this post useful. Are you planning on using this service? If you please provide comments and let me and other readers know how you are planning on using this service.

This was pretty simple post with no code to show. In the next post, it will be all about code. I promise.

See Simon Munro’s comment regarding the cost of WASS versus Chron.

<Return to section navigation list>

Windows Azure Access Control, Active Directory, Identity and Workflow

No significant articles so far this week.

<Return to section navigation list>

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

• Maarten Balliauw (@maartenballiauw) posted Visual Studio Online for Windows Azure Web Sites on 11/13/2013:

Today’s official Visual Studio 2013 launch provides some interesting novelties, especially for Windows Azure Web Sites. There is now the choice of choosing which pipeline to run in (classic or integrated), we can define separate applications in subfolders of our web site, debug a web site right from within Visual Studio. But the most impressive one is this. How about… an in-browser editor for your application?

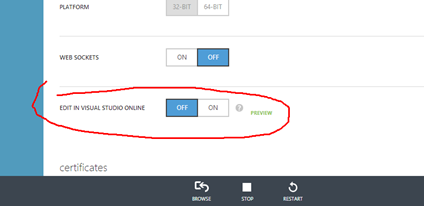

Let’s take a quick tour of it. After creating a web site we can go to the web site’s configuration we can enable the Visual Studio Online preview.

Once enabled, simply navigate to https://<yoursitename>.scm.azurewebsites.net/dev or click the link from the dashboard, provide your site credentials and be greeted with Visual Studio Online.

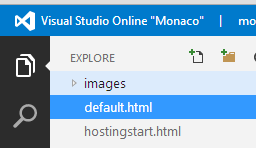

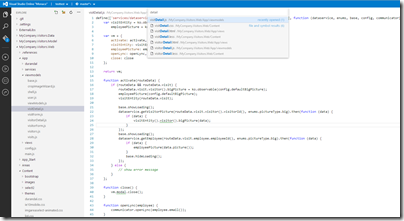

On the left-hand menu, we can select the feature to work with. Explore does as it says: it gives you the possibility to explore the files in your site, open them, save them, delete them and so on. We can enable Git integration, search for files and classes and so on. When working in the editor we get features like autocompletion, FInd References, Peek Definition and so on. Apparently these don’t work for all languages yet, currently JavaScript and node.js seem to work, C# and PHP come with syntax highlighting but nothing more than that.

Most actions in the editor come with keyboard shortcuts, for example Ctrl+, opens navigation towards files in our application.

The console comes with things like npm and autocompletion on most commands as well.

I can see myself using this for some scenarios like on-the-road editing from a Git repository (yes, you can clone any repo you want in this tool) or make live modifications to some simple sites I have running. What would you use this for?

• Edu Lorenzo (@edulorenzo) described Creating and Editing a simple website using Visual Studio Online in an 11/13/2013 post:

Ok. So here we are to try and:

- Create a simple website

- Add a folder for an image

- Add an image to the folder

- Display that image on the page

I start off with a blank azure website that I just created. Then opened it up with VSOnline.

click the Run button.

And VSOnline will give you the result in the Output window

So I click that url and see that the site is up and running.

Now I add a new folder to the site

So I now have…

I’ll try to add an image…

Oh wow! Right clicking gives me..

So I try to upload a screenshot from a recent speedtest :p

And.. try to add that to the site.

And test…

And while I was editing, I was pleased to see some form of IntelliSense.

• Brent Stineman (@BrentCodeMonkey) posted Monitoring Windows Azure VM Disk Performance on 11/13/2013:

Note: The performance data mentioned here is based on individual results during a limited testing period and should NOT be used as an indication of future performance or availability. For more information on Windows Azure storage performance and availability, please refer to the published SLA.

So I’ve had an interesting experience the last few days that I wanted to take a few minutes to share with the interwebs. Namely, monitoring some Windows Azure hosted virtual machines, not at the VM level, but the storage account that held the virtual machine disks.

The scenario I was facing was a customer that was attempting to benchmark the performance and availability of two Linux based virtual machines that were running an Oracle database. Both machines were extra-large VMs with one running 10 disks (1 os, 1 Oracle, 8 data disks) and the other with 16 disks (14 for data). The customer has been running an automated load against both machines and wanted to get a clear idea of how much they may or may not have been saturating the underlying Windows Azure Storage system as well as what could be contributing to the highly variable Oracle IOPS levels they were seeing.

To support this effort, I dug into something I haven’t looked at in depth for quite some time. Windows Azure Storage Analytics (aka Logging and Metrics). Except this time with a focus on what happens at the storage account with regards to the VM disk activity.

Enable Storage Analytics Proactively

Before we go anywhere, I need to stress that if you want to be able to see what’s going on with Azure Storage and your virtual machine, you’ll need to enable this BEFORE a problem occurs. If you haven’t already enabled logging, the only option you have to try and go “back in time” and look at past behavior is to open up a support ticket. So if you plan to do this type of monitoring, please be certain to enable analytics!

For Windows Azure VM disk metrics, we need to enable analytics on the blob storage account. As the link I just shared will let you know, you will need to call the “Set Blob Service Properties” api to set this (or use your favorite Windows Azure storage utility). I happen to use the Azure Management Studio from Redgate and it allows me to set the properties you see in this screen shot:

With this, I tell Azure Storage that I want it to log all blob operations (Read/Write/Delete) and retain that information for up too two days. I also enable metrics and ask it to retain that data for two days as well.

When I enable logging, Azure Storage will log all operations and persist that information into a series of blob files in a special container in the storage account called $logs. The Logging data will be written to blobs in the same storage account I am monitoring in a special container called $logs. Logs will be spread across multiple blob files as discussed in great detail in the MSDN article “About Storage Analytics Logging“. A word of caution, if the storage account is active, logging will produce a LARGE amount of data. In my case, I was seeing a new 150mb log file approximately every 3 minutes. That’s about 70gb per day. In my case, I’ll be storing about 140gb for my 2 days of retention which is only about $6.70 per month. Given the cost of the VM itself, this was inconsequential. But if I had shifted my retention period to a month… this can start to get pricy. Additionally, the storage transactions needed to write the logs to blog storage count against the account limit of 20,000/tps. To help reduce the risk of throttling coming into play to early, the virtual machines I’m monitoring have each been deployed into their own storage account.

The metrics are much more lightweight. These are written to a table and provide a per hour view of the storage account. These are the same values that get surfaced up in the Windows Azure Management portal storage account dashboard. I could easily retain these for a much longer period since it’s only a handful of rows being inserted per hour.

Storage Metrics – hourly summary

Now that we’ve enabled storage analytics and told it to capture the metrics, we can run our test and sit back and look for data to start coming in. After we’ve run testing for several hours, we can then look at the metrics. Metrics get thrown into a series of tables, but since I only care about the blob account, I’m going to look at $MetricsTransactionsBlob. We’ll have multiple rows per hours and can filter based on the type of operation, or get the roll-up across all operations. For general trends, it’s this latter figure I’m most interested in. So I apply a query against the table to get all user operations, “(RowKey eq ‘user;All’)“. The resulting query gives me 1 row per hour that I can look at to help me get a general idea of the performance of the storage account.

You’ll remember that I opted to put my Linux/Oracle virtual machine into its own storage account. So this hour summary gives me a really good, high level overview of the performance of the virtual machine. Key factors I looked at are: Availability (we want to make sure that’s above the storage account 99.9% SLA), Average End to End Latency, and if we have less than 100% availability, what is the count of the number of errors we saw.

I won’t bore you with specific numbers, but over a 24hr period I lowest availability I saw was 99.993% availability and with the most common errors being Server Timeouts, Client Errors, or Network Errors. Seeing these occasionally, as long as the storage account remains above 99.9% availability, should be considered normal ‘noise’. In the transient nature of the cloud, some errors are simply to be expected. We also kept an eye on average end to end latency which during our testing was fairly consistent in the 19-29ms range.

You can learn more about all the data available in these various storage metrics by reviewing ‘Storage Analytics Metrics Table Schema‘ on MSDN.

When we saw numbers that appears “unusual”, we then took the next logical step and inspected the detailed storage logs.

Blob Storage Logs – the devil is in the details

Alright, so things get a bit messier here. First off, the logs are just delimited format files. And while there the metrics can help tell us which period in time we want to look at, depending on the number of storage operations, we may have several logs we need to slog through (In my case, I was getting about 20 150mb log files per hour). So the first step when digging into the logs is to download them. So either write up some code, grab your favorite utility, or perhaps just log into the management portal and download the files for the timeframe you want to take a closer look at. Once that’s done, it’s time for some Excel (yeah, that spreadsheet thing…. Really).

The log files are semi-colon delimited files. As such, the easiest way I found to do ad-hoc inspection of the files is to open them up in a spreadsheet application like Excel. I open up Excel, then do the whole “File -> Open” thing to select the log file I want to look at. I then tell Excel it’s a delimited file with a semi-colon as the delimiter and in a few seconds it will import the file all nice and spreadsheet for me. But before we start doing anything, let’s talk about the log file format. Since the log file doesn’t contain any headers, we either need to know what columns contain the data we want, or add some headers. For the sake of keeping things easy for you (and saving a copy for myself), I created my own Excel file that already has all the log file fields declared in it. So you can just copy and paste from this spreadsheet into your log file once it’s loaded into Excel. For the remainder of this article, I’m going to assume this is what you’ve done.

With our log file headers, we can now start filtering the data. If we’re looking for errors, the first thing we’ll want to do is open up a log file and filter based on “request status”. To do this, select the “Data” tab and click on “filter”. This allows us to click on the various column headings and filter down what we’re looking at. The shot below shows a log that had a couple of errors in it. So I can easily remove the checkbox on “Success” to drill into those specific errors. This is handy if we want to know exactly what happened as the log also contains a “request-id-header” field. With that value, we can open up a support ticket and ask them to dig into the issue more deeply.

Now this is the first real caution I have. Between the metrics and the logs, we can get a really good idea of what types of errors are happening. But this doesn’t mean that every error should be investigated. With cloud computing solutions, there’s a certain amount of “transient” errors that are simply to be expected. It’s only if you see a prolonged, or persistent issue that you’d really want to dig into the errors in any real depth. One key indicator is to look at the logging metrics and keep an eye on the availability. If it falls below 99.9%, that means there may have been an SLA violation for the storage account. In that case, I’d take a look at the logs for that period and see what types of errors we saw. As long as the issue wasn’t caused by a spike in throttling (meaning we overloaded the system), there may be something worth having support look into. But if we’re at 99.999%, with the occasional network failure, timeout, or ‘client other’, we’re likely just seeing the “noise” one would expect from transient errors as the system adjust and compensates for changes to its underlying fabric.

Now since we’re doing benchmarking tests, there’s one other key thing I look at. The number of operations that are occurring on the blobs that are the various disks mounted into our virtual machine. This is another task where Excel can help out, by adding subtotals. Adding subtotals requires column headings so this is the part when you go “thank you Brent for making it so I just need to copy those in”. You’re welcome. J

The field we want to look at in the logs for our subtotal is the “requested-object-key” field. This value is the specific option in the storage account that was being access (aka the blob file or disk). Going again to the Data tab in Excel, we’ll select “subtotal” and complete the dialog box as shown at the left. This will create subtotals by object (disk) and allow us to see the count of operations against that object. So what we have is the operations performed on that disk during the time period covered by the log file. Using that value, we can then get a fairly good approximation of the “transactions per second” that the disk is generating against storage.

So what did we learn?

If you are doing IO benchmarking of the virtual machine (as I was), you may notice something odd. We observed that our Linux/Oracle Vm was reporting IOPS far above what we saw at the Windows Azure Storage level. This is to be expected because Oracle is trying to help buffer requests itself to increase performance. Add in any disk buffering we may have enabled, and the numbers could skew even further. Ultimately though, what we did establish during out testing was that we knew for certain when we were overloading the Windows Azure storage sub-system and contributing to server slowdowns that way. We were also able to observer several small instances where Oracle performance trailed off somewhat and that these were due to isolated incidents where we saw an increase in various errors or in end to end operation latency.

The host result here is that while virtual machine performance is related to the performance of the underlying storage subsystem, there’s no easy 1-to-1 relation between errors in one and issues in the other. Additionally, as you watch these over time, you understand why virtual machine disk performance can vary over time and shouldn’t be compared to the behaviors we’ve come to expect from a physical disk drive. We have also learned what we need to do to help us more affectively monitor Windows Azure storage so that we can proactively take action to address potential customer facing impacts.

I apologize for all the typos and for not going into more depth on this subject. I just wanted to get this all out into before I fell into the fog of my memory. Hopefully you find it useful.

Stefan Shackow wrote an Introduction to WebSockets on Windows Azure Web Sites on 11/14/2013:

Windows Azure Web Sites has recently added support for the WebSocket protocol. Both .NET developers and node.js developers can now enable and use WebSockets in their applications.

There is a new option on a web site’s Configuration tab to enable WebSockets support for an application.

Once WebSockets has been enabled for a website, ASP.NET (v4.5 and above) and node.js developers can use libraries and APIs from their respective frameworks to work with WebSockets.

ASP.NET SignalR Chat Example

SignalR is an open source .NET library for building real-time web apps that require live HTTP connections for transferring data. There is an excellent site with introductory articles and details on the SignalR library.

Since SignalR natively supports WebSockets as a protocol, SignalR is a great choice for running connected web apps on Windows Azure Web Sites. As an example, you can run this sample chat application on Windows Azure Web Sites.

The screen shot below shows the structure of the SignalR chat sample:

After creating a web application in Windows Azure Web Sites, enabling WebSockets for the application, and uploading the SignalR chat sample, you can run your very own mini-chat room on Windows Azure Web Sites!

The raw HTTP trace (shown below) from Fiddler shows how the WebSockets protocol upgrade request that is sent by the client-side portion of SignalR negotiates a WebSockets connection with the web server:

Request snippet:

GET https://sigr-chat-on-waws.xxxx.net/signalr/connect?transport=webSockets snip HTTP/1.1

Origin: https://sigr-chat-on-waws.xxxx.net

Sec-WebSocket-Key: hv2icF/iR1gvF3h+WKBZIw==

Connection: Upgrade

Upgrade: Websocket

Sec-WebSocket-Version: 13

…

Response snippet:

HTTP/1.1 101 Switching Protocols

Upgrade: Websocket

Server: Microsoft-IIS/8.0

X-Content-Type-Options: nosniff

X-Powered-By: ASP.NET

Sec-WebSocket-Accept: Zb4I6w0esmTDHM2nSpndA+noIvc=

Connection: Upgrade

…To learn more about building real-time web applications with SignalR, there is an extensive tutorial available on the SignalR overview website.

ASP.NET Echo Example

ASP.NET has supported WebSockets since .NET Framework v4.5. Developers will usually want to use higher level libraries like SignalR to abstract away the low-level details of managing WebSockets connections. However for the adventurous developer, this section shows a brief example of using the low-level WebSockets support in ASP.NET.

The ASP.NET Echo example project consists of a server-side .ashx handler that listens and responds on a WebSocket, and a simple HTML page that establishes the WebSocket connection and sends text down to the server.

The .ashx handler listens for WebSockets connection requests:

The .ashx handler listens for WebSockets connection requests:

Once a WebSocket connection is established, the handler echoes text back to the browser:

The corresponding HTML page establishes a WebSocket connection when the page loads. Whenever a browser user sends text down the WebSocket connection, ASP.NET will echo it back.

The screenshot below shows a browser session with text being echo’d and then the WebSockets connection being closed.

Node.js Basic Chat Example

Node.js developers are familiar with using the socket.io library to author web pages with long-running HTTP connections. Socket.io supports WebSockets (among other options) as a network protocol, and can be configured to use WebSockets as a transport when it is available.

A Node.js application should include the socket.io module and then configure the socket in code:

The sample code shown below listens for clients to connect with a nickname (e.g. chat handle), and broadcasts chat messages to all clients that are currently connected.

The following small tweak is needed in web.config for node.js applications using WebSockets:

This web.config entry turns off the IIS WebSockets support module (iiswsock.dll) since it isn’t needed by node.js. Nodej.js on IIS includes its own low-level implementation of WebSockets, which is why the IIS support module needs to be explicitly turned off.

Remember though that the WebSockets feature still needs to be enabled for your website using the Configuration portal tab in the UI shown earlier in this post!

After two clients have connected and traded messages using the sample node.js application, this is what the HTML output looks like:

The raw HTTP trace (shown below) from Fiddler shows the WebSockets protocol upgrade request that is sent by the client-side portion of socket.io to negotiate a WebSockets connection with the web server:

Request snippet:

GET https://abc123.azurewebsites.net/socket.io/1/websocket/11757107011524818642 HTTP/1.1

Origin: https://abc123.azurewebsites.net

Sec-WebSocket-Key: rncnx5pFjLGDxytcDkRgZg==

Connection: Upgrade

Upgrade: Websocket

Sec-WebSocket-Version: 13

…

Response snippet:

HTTP/1.1 101 Switching Protocols

Upgrade: Websocket

Server: Microsoft-IIS/8.0

X-Powered-By: ASP.NET

Sec-WebSocket-Accept: jIxAr5XJsk8rxjUZkadPWL9ztWE=

Connection: Upgrade

…WebSockets Connection Limits

Currently Azure Web Sites has implemented throttles on the number of concurrent WebSockets connections supported per running website instance. The number of supported WebSockets connections per website instance for each scale mode is shown below:

- Free: (5) concurrent connections per website instance

- Shared: (35) concurrent connections per website instance

- Standard: (350) concurrent connections per website instance

If your application attempts to open more WebSocket connections than the allowable limit, Windows Azure Web Sites will return a 503 HTTP error status code.

Note: the terminology “website instance” means the following - if your website is scaled to run on (2) instances, that counts as (2) running website instances.

You Might Need to use SSL for WebSockets!

There is one quirk developers should keep in mind when working with WebSockets. Because the WebSockets protocol relies on certain less used HTTP headers, especially the Upgrade header, it is not uncommon for intermediate network devices such as web proxies to strip these headers out. The end result is usually a frustrated developer wondering why their WebSockets application either doesn't work, or doesn't select WebSockets and instead falls back to less efficient alternatives.

The trick to working around this problem is to establish the WebSockets connection over SSL. The two steps to accomplish this are:

- Use the wss:// protocol identifier for your WebSockets endpoints. For example instead of connecting to ws://mytestapp.azurewebsites.net (WebSockets over HTTP), instead connect to wss://mytestapp.azurewebsites.net (WebSockets over HTTPS).

- (optional) Run the containing page over SSL as well. This isn’t always required, but depending on what client-side frameworks you use, the “SSL-ness” of the WebSockets connection might be derived from the SSL setting in effect for the containing HTML page.

Windows Azure Web Sites supports SSL even on free sites by using a default SSL certificate for *.azurewebsites.net. As a result you don’t need to configure your own SSL certificate to use the workaround. For WebSockets endpoints under azurewebsites.net you can just switch to using SSL and the *.azurewebsites.net wildcard SSL certificate will automatically be used.

You also have the ability to register custom domains for your website, and then configure either SNI or IP-based SSL certificates for your site. More details on configuring custom domains and SSL certificates with Windows Azure Web Sites is available on the Windows Azure documentation website.

<Return to section navigation list>

Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses

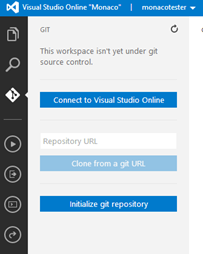

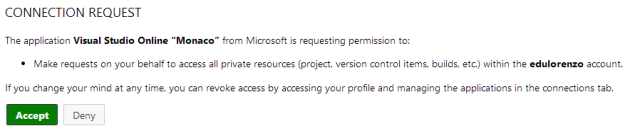

• Edu Lorenzo (@edulorenzo) described connecting Visual Studio Online to Windows Azure in an 11/13/2013 post:

This time.. I’ll connect my VSOnline to an existing TFS (Team Foundation Service) account.

So on Monaco..

Then

Then allow connection

And its done..

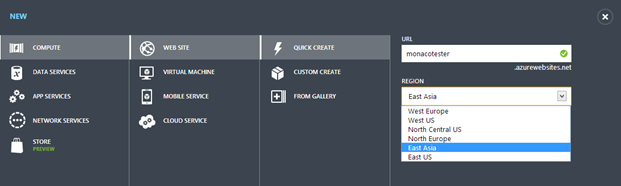

• Edu Lorenzo (@edulorenzo) posted Trying out the Monaco product on 11/13/2013:

So.. I go to my Azure account and create a new WebSite

I click “Configure” on the created site and WHOAH!!!

I hit save for that change then go back to the dashboard. And then again go WHOAH!!!

I click on that.. and Voila! Welcome to Monaco!

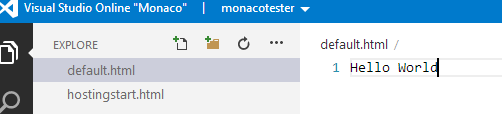

I add a new file “default.html” duh…

Of course.. can’t do away with Hello World

And there we go!

S. “Soma” Somasegar (@SSomasegar) posted Visual Studio 2013 Launch: Announcing Visual Studio Online to his blog on 11/13/2013:

Today, I’m very excited to launch Visual Studio 2013 and .NET 4.5.1. I am also thrilled to announce Visual Studio Online, a collection of developer services that runs on Windows Azure and extends the development experience in the cloud. Visual Studio 2013 and Visual Studio Online represent the beginning of a new era for Visual Studio, combining a powerful desktop IDE with rich developer services in the cloud.

As a part of our Cloud OS vision, Visual Studio 2013 and .NET 4.5.1 enable developers to build modern applications. At a time when the devices and services transformation is changing software development across the industry, the developer is at the center of this transformation. Visual Studio 2013, Visual Studio Online, MSDN and Azure offer the most complete experience for modern application development for the cloud.

To summarize some of the key news we shared today:

- Visual Studio 2013 and .NET 4.5.1 are available globally, and provide the best tools for modern application development on all of Microsoft’s latest platforms

Visual Studio Online is a new offering, providing a rich collection of developer services in the cloud that work hand-in-hand with the Visual Studio IDE on the desktop

- Hosted source control, work item tracking, agile planning, build and load testing services that were part of Team Foundation Service are now available in public preview as part of Visual Studio Online

- Visual Studio Online Application Insights is a new service that provides a 360 degree view of your applications health, based on data about availability, performance and usage

- Visual Studio Online “Monaco” is a coding environment for the cloud, in the cloud, offering light-weight, friction free developer experiences in the browser for targeted Azure development scenarios

- Microsoft and Xamarin are collaborating to help .NET developers broaden the reach of their applications to additional devices, including iOS and Android

- Visual Studio 2012 Update 4 is available today

You can get in-depth coverage of all of the Visual Studio 2013 launch details and announcements on Channel 9.

Visual Studio 2013

Visual Studio 2013 is the best tool for developers and teams to build and deliver modern, connected applications on all of Microsoft’s latest platforms. From Windows Azure and Windows Server 2012 R2 to Windows 8.1 and Office 365, Visual Studio 2013 provides development tools that help developers build great applications. Support for live debugging Azure sites from Visual Studio means you can set a breakpoint in a running service in the cloud, and step through it directly from Visual Studio. The new Cloud Business Application project type lets you build next-generation business applications leveraging Office 365 and Azure. And features like Energy Profiling and UI Responsiveness diagnostics make it easier than ever to build 5-star applications for the Windows Store.

For individual developers, Visual Studio 2013 brings great new developer productivity features. Features like Peek Definition and CodeLens provide key information about your code right where you are working in the Visual Studio editor.

And for teams, Visual Studio 2013 and Team Foundation Server 2013 offer new capabilities from Agile Portfolio Management to support for Git source control to the new Team Room feature. In partnership with Windows Server and Systems Center, we’ve enabled some great DevOps scenarios for on premise development. And we’ve integrated new Release Management features to automate deployment and do continuous delivery on the cloud.

For more on what’s new in Visual Studio 2013, .NET 4.5.1 and Team Foundation Server 2013 see What’s New.

Visual Studio Online

Today marks the beginning of a new era for Visual Studio with the availability of Visual Studio Online.

Visual Studio has evolved over the years. Starting with an integrated developer environment for the desktop, Visual Studio expanded to also include team development capabilities on the server with Team Foundation Server. We are now taking the next step, extending the Visual Studio IDE with a collection of developer services, hosted in Azure, which offers the best integrated end-to-end development experience for modern applications.

Today, we are announcing the availability of a broad range of developer services as part of Visual Studio Online. Several services that have been available in Team Foundation Service are now in public preview as part of Visual Studio Online: hosted source control, work item tracking, collaboration and a build service. The elastic load testing service we released in limited preview earlier this year is also now available in public preview as part of Visual Studio Online. In addition, we are announcing two new Visual Studio Online services: Visual Studio Online Application Insights and Visual Studio Online “Monaco”.

Visual Studio Online is free for teams up to 5 users. The combination of Visual Studio Express and the free plan for Visual Studio Online make it simple for developers to get started in a friction-free way. Visual Studio Online is also included as part of MSDN subscriptions. New Visual Studio Online subscription plans, including access to the Visual Studio Professional IDE, are also available starting today. You can check out the plans, and get started with VS Online at http://visualstudio.com/.

Visual Studio Online is made up of many services, including the following:

Hosted Source Control

Visual Studio Online provides developers with hosted source control, using either Git or Team Foundation Version Control (TFVC). The sources for your Visual Studio Online projects are readily available to sync to your desktop when you are logged into Visual Studio.

Work Items and Agile Planning

Whether you are managing a project’s work item backlog or planning for the next sprint, Visual Studio Online provides tools to support you agile development process.

Hosted Build Service

Visual Studio Online includes a hosted build service, making it easy to move your project’s builds to the cloud. Build results are available in both Visual Studio Online and Visual Studio 2013, providing an smooth integrated development experience.

Every Visual Studio Online account provides 60 minutes of free build time per month, making it friction free to get started with hosted build.

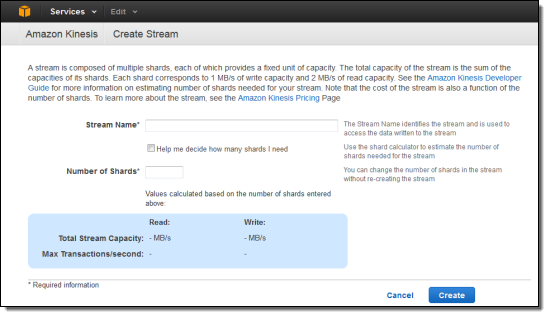

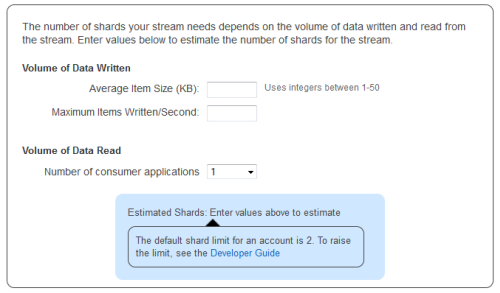

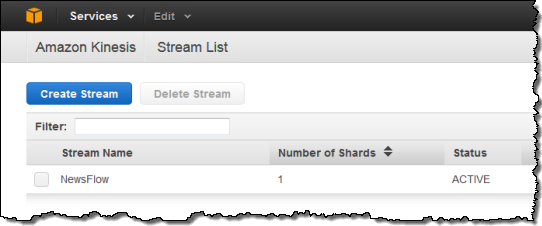

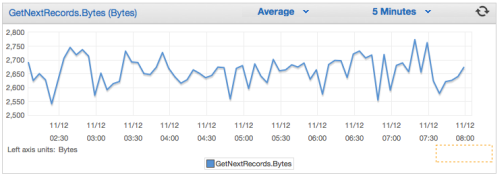

Elastic Load Test Service