Windows Azure and Cloud Computing Posts for 2/6/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue and Hadoop Services, Big Data

- SQL Azure Database, Federations and Reporting

- Marketplace DataMarket, Social Analytics and OData

- Windows Azure Access Control, Service Bus, and Workflow

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Service, Big Data

Barb Darrow (@gigabarb) asked and answered Are small businesses ready for big data? Um, yes in a 2/8/2012 post to GigaOm’s Structure blob:

When SAP announced its HANA big data analytics for midmarket businesses Tuesday, one of the first questions on the call was what smaller businesses do with big data. There is doubt that smaller companies even need big data. They do.

Just as big enterprises look at unstructured data from social networks to gauge consumer sentiment, and relational data from databases, and perhaps machine data to see how well their equipment is working, smaller companies need the same data points. Many small companies are small, after all, because they’re just starting out, and the smart application and analysis of outside data is one key to growth.

“If you’re a sub shop, you probably don’t have to worry about big data, but if you’re a small online business, or a small financial service provider or a medical practice, you probably should,” said Laurie McCabe, cofounder and partner of The SMB Group, which researchers how small and medium businesses use technology. “There’s more and more data out there you can use to make decisions and get better outcomes.”

Even in the sub shop example, there are caveats. A single mom-and-pop store can probably do okay gauging consumer wants and needs in its area, but a small chain of such shops across a small geographic area had better keep its fingers on the pulse — watching its competitors’ special promotions, keeping tabs on consumer comments on Twitter and Facebook

And retail is a cauldron of big data needs. “Retailers need to predict trends, track in-store repeat business, look at pricing dynamics,” McCabe said. If you’re in that business, it’ll be harder for you to compete with companies that can leverage even just the public data if you can’t or don’t.

SAP’s new products take the structured and unstructured data analysis capabilities that were available in its hot-selling HANA in-memory database appliance and make them available as a software add-on to its BusinessOne and All-in-One for smaller companies.

All the reasons big companies have to use big data pertain to smaller companies, said Christian Rodatus, SVP for SAP HANA on the call. Smaller “manufacturing companies need text mining and analysis to provide better quality analysis [of their products] and predict the effect a lack of quality can have on the supply chain, dealing with the impact of production changes,” he said.

That being said, small and medium companies are notoriously cost-conscious. The availability of inexpensive SaaS-based analytics products will be attractive to such budget-constrained shops. Whether or not SAP’s new HANA software, which will ship later this year, takes off will depend on price — something SAP did not provide on Tuesday’s call.

Photo courtesy of Flickr user swanksalot.

Related research and analysis from GigaOM Pro:

Subscriber content. Sign up for a free trial.

Full disclosure: I’m a member of GigaOm Pro’s Analysts network (click R for my listing).

MarketWire asserted “Certified Cloudera Connector for Tableau Now Available” in a deck for a Cloudera and Tableau Empower Business Intelligence With Apache Hadoop press release of 2/7/2012:

Cloudera Inc., the leading provider of Apache Hadoop-based data management software and services, and Tableau Software, the global leader in rapid-fire business intelligence (BI) software, today announced an expanded integration between the two companies that provides enterprises with new capabilities to more easily extract business insights from their Big Data and visualize their data in new ways without needing the specific technical skills typically required to operate Hadoop.

Tableau has developed a Certified Cloudera Connector that is licensed to work with Cloudera's Distribution Including Apache Hadoop (CDH), the preferred and most widely deployed Hadoop platform. With this connector, Tableau users are able to access and analyze data stored in Hadoop through Hive and the new Cloudera connector. Tableau's rapid-fire business intelligence platform combined with Cloudera's expansive Big Data platform enables organizations to quickly and easily build interactive visualizations and dashboards that enable customers to have a conversation with their data.

"The Cloudera Connector for Tableau allows for easy ad-hoc visualization so you can see patterns and outliers in your data stores in Hadoop without needing any special configuration," said Dan Jewett, vice president of Product Management at Tableau. "And because we've partnered with Cloudera, we will continue to stay in the forefront of Hadoop developments and Big Data analytics."

"Data continues to grow at an unprecedented rate and customers need to be able to understand and use their data for all aspects of their business. We're pleased to see companies like Tableau taking advantage of the Cloudera Connect Partner Program to certify their technology and offer their customers the industry standard in Hadoop - CDH," said Ed Albanese, head of Business Development at Cloudera. "We are excited to provide our mutual customers with the Cloudera Connector for Tableau, furthering companies' abilities to visualize and utilize the data in their Hadoop clusters."

The Cloudera Connector for Tableau is one of a number of features in Tableau 7.0 that makes enterprise-wide data sharing and management faster and easier. It is integrated into the Tableau 7.0 release, meaning Customers can quickly create connections to their Hadoop cluster and begin deriving insights immediately. …

Microsoft offers similar, but not identical, features in Codename “Data Explorer” and Excel Cloud Data Analytics. It will be interesting to see how the new Apache Hadoop on Windows Azure’s Excel PowerPivot connection compares with Tableau’s features. Note that Tableau is one of the providers of data visualization for Windows Azure Marketplace DataMarket.

Robert Gelber provides a third-party view of Tableau’s capabilities in his Tableau Sharpens Swiss Knife Approach post to the Datanami blog of 7/8/2012.

Basier Aziz (@basierious) announced Cloudera Connector for Tableau Has Been Released in a 2/7/2012 post:

Earlier today, Cloudera proudly released the Cloudera Connector for Tableau. The availability of this connector serves both Tableau users who are looking to expand the volume of datasets they manipulate and Hadoop users who want to enable analysts like Tableau users to make the data within Hadoop more meaningful. Enterprises can now extract the full value of big data and allow a new class of power users to interact with Hadoop data in ways they priorly could not.

The Cloudera Connector for Tableau is a free ODBC Driver that enables Tableau Desktop 7.0 to connect to Apache Hive. Tableau users can thus leverage Hive, Hadoop’s data warehouse system, as a data source for all the maps, charts, dashboards and other artifacts typically generated within Tableau.

Hive itself is a powerful query engine that is optimized for analytic workloads, and that’s where this Connector is sure to work best. Tableau also, however, lets users ingest result sets from Hive into its in-memory analytical engine so that results returning from Hadoop can be analyzed much more quickly.

Setting up your connection involves only the following steps:

- Download and run the Cloudera Connector for Tableau executable.

- Point the Windows ODBC Data Source Administrator to the Cloudera ODBC Driver for Apache Hive.

- Identify the Hive data source in Tableau Desktop.

And you’re done!

Cloudera is pleased to continue to work with Tableau and with other vendors to enable more users in the enterprise to bring out Hadoop’s fullest value.

Avkash Chauhan (@avkashchauhan) answered Which one to choose between Pig and Hive? in a 2/7/2012 post:

Technically they both will do the job, you are looking from "either hive or Pig" perspective, means you don't know what you are doing yet. However if you first define the data source, scope and the result representation and then look for which one to choose between Hive or Pig, you will find they are different for your job now and choosing one instead of other will have extra benefits. At last both Hive and Pig can be extended with UDFs and UDAFs to make them look again same at the end so now you can think again which one was best.

For a person with roots in database & SQL, Hive is the best however for script kids or programmer, Pig has close resemblance.

Hive provides SQL like interface and relational model to your data, and if your data really unstructured, PIG is better choice. If you look at definition of a proper schema in HIVE which makes it closer in concept to RDBMS. You can also say that In Hive you write SQL, in Pig you execute a sequence of plans. Both Pig and Hive are abstractions on top of MapReduce, so for control and performance you would really need to use MapReduce. You can start with Pig and use MapReduce when you really want to go deeper.

Resources:

If you can ascertain the upshot of the “For a person with roots in database & SQL, Hive is the best however for script kids or programmer, Pig has close resemblance” sentence, please post a comment.

Neil MacKenzie (@mknz) described MongoDB, Windows Azure (and Node.js) in a 2/5/2012 post:

The Windows Azure ecosystem is being extended both by Microsoft and by third-party providers. This post focuses on one of these extensions – the MongoDB document database. It shows how MongoDB can be deployed in a Windows Azure hosted service and accessed from other roles in the hosted service using either traditional .NET or the recently released Node.js support.

NoSQL

During the last 30 years SQL databases have become the dominant data storage systems, with commercial offerings such as Oracle, Microsoft SQL Server and IBM DB2 achieving enormous commercial success. These data systems are characterized by their support for ACID semantics – atomicity, consistency, isolation and durability. These properties impose a certainty that is essential for many business processes dealing with valuable data. However, supporting ACID semantics becomes increasingly expensive as the volume of data increases.

The advent of Web 2.0 has led to an increasing interest in long-tail data whose value comes not from the value of a single piece of data but from the magnitude of the aggregate data. Web logs provide a paradigmatic example of this type of data. Because of the low value of an individual data item this type of data can be managed by data systems which do not need to support full ACID semantics.

Over the last few years a new type of data storage semantics has become fashionable: BASE -basically available, soft state, eventually consistent. While the name may be a bit forced to make the pun, the general idea is that a system adhering to BASE semantics, when implemented as a distributed system, should be able to survive network partitioning of the individual components of the system at the cost of offering a lower level of consistency than available in traditional SQL systems.

In a strongly-consistent system, all reads following a write receive the written data. In an eventually-consistent system, reads immediately following a write are not guaranteed to return the newly written value. However, eventually all writes should return the written value.

NoSQL (not only SQL) is a name used to classify data systems that do not use SQL and which typically implement BASE semantics instead of ACID semantics. NoSQL systems have become very popular and many such systems have been created – most of them distributed in an open source model. Another important feature of NoSQL systems is that, unlike SQL systems, they do not impose a schema on stored entities.

NoSQL systems can be classified by how data is stored as:

- key-value

- document

In a key-value store, an entity comprises a primary key and a set of properties with no associated schema so that each entity in a “table” can have a different set of properties. Apache Cassandra is a popular key-value store. A document store provides for the storage of semantically richer entities with an internal structure. MongoDB is a popular document store.

Windows Azure Tables is a key-value NoSQL store provided in the Windows Azure Platform. Unlike other NoSQL stores, it supports strong consistency with no eventually-consistent option. The Windows Azure Storage team recently published a paper describing the implementation of Windows Azure Tables.

10gen, the company which maintains and supports MongoDB, has worked with Microsoft to make MongoDB available on Windows Azure. This provides Windows Azure with a document store NoSQL system to complement the key-value store provided by Windows Azure Tables.

MongoDB

MongoDB is a NoSQL document store in which individual entities are persisted as documents inside a collection hosted by a database. A single MongoDB installation can comprise many databases. MongoDB is schemaless so each document in a collection can have a different schema. Consistency is tunable from eventual consistency to strong consistency.

MongoDB uses memory-mapped files and performance is optimal when all the data and indexes fit in memory. It supports automated sharding allowing databases to scale past the limits of a single server. Data is stored in BSON format which can be thought of as a binary-encoded version of JSON.

High availability is supported in MongoDB through the concept of a replica set comprising one primary member and one or more secondary members, each of which contains a copy of all the data. Writes are performed against the primary member and are then copied asynchronously to the secondary members. A safe write can be invoked that returns only when the write is copied to a specified number of secondary members – thereby allowing the consistency level to be tuned as needed. Reads can be performed against secondary members to improve performance by reducing the load on the primary member.

MongoDB is open-source software maintained and supported by 10gen, the company which originally developed it. 10gen provides downloads for various platforms and versions. 10gen also provides drivers (or APIs) for many languages including C# and F#. MongoDB comes with a JavaScript shell which is useful for performing ad-hoc queries and testing a MongoDB deployment.

Kyle Banker, of 10gen, has written an excellent book called MongoDB in Action. I highly recommend it to anyone interested in MongoDB.

MongoDB on Windows Azure

David Makogon (@dmakogon) worked out how to deploy MongoDB onto worker role instances on Windows Azure. 10gen then worked with him and the Microsoft Interoperability team to develop an officially supported preview release of the MongoDB on Windows Azure wrapper which simplifies the task of deploying a MongoDB replica set onto worker role instances.

The wrapper deploys each member of the replica set to a separate instance of a worker role. The mongod.exe process for MongoDB is started in the OnStart() role entry point for the instance. The data for each member is persisted as a page blob in Windows Azure Blob storage that is mounted as an Azure Drive on the instance.

The MongoDB on Windows Azure wrapper can be downloaded from github. The download comprises two directory trees: ReplicaSets containing the core software as the MongoDBReplicaSet solution; and SampleApplications containing an MVC Movies sample application named MongoDBReplicaSetMvcMovieSample. The directory trees contain a PowerShell script, solutionsetup.ps1, that must be invoked to download the latest MongoDB binaries.

The MongoDBReplicaSetMvcMovieSample solution contains four projects:

- MongoDBAzureHelper – helper class to retrieve MongoDB configuration

- MongoDBReplicaSetSample – Windows Azure project

- MvcMovie – an ASP.NET MVC 3 application

- ReplicaSetRole – worker role to host the members of a MongoDB replica set.

The MVCMovie project is based on the Intro to ASP.NET MVC 3 sample on asp.net website. It displays movie information retrieved from the MongoDB replica set hosted in the ReplicaSetRole instances. The ReplicaSetRole is launched as 3 medium instances each if which hosts a replica set member. The MongoDBAzureHelper and ReplcaSetRole projects are from the MongoDBReplicaSet solution.

The MongoDBReplicaSetMvcMovieSample solution can be opened in Visual Studio, then built and deployed either to the local compute emulator or a Windows Azure hosted service. The application has two pages: an About page displaying the status of the replica set; and a Movies page allowing movie information to be captured and displayed. It may take a minute or two for the replica set to come fully online and the status to be made available on the About page. When a movie is added to the database via the Movies page, it may occasionally require a refresh for the updated information to become visible on the page. This is because MongoDB is an eventually consistent database and the Movies page may have received data from one of the secondary nodes.

This example provides a general demonstration of how a MongoDB installation with replica sets is added to a Windows Azure project: add the MongoDBAzureHelper and ReplicaSetRole projects from the MongoDBReplicaSet solution and add the appropriate configuration to the ServiceDefinition.csdef and ServiceConfiguration.cscfg files.

The ServiceDefinition.csdef entries for ReplicaSetRole are:

<Endpoints>

<InternalEndpoint name=”MongodPort” protocol=”tcp” port=”27017″ />

</Endpoints>

<ConfigurationSettings>

<Setting name=”MongoDBDataDir” />

<Setting name=”ReplicaSetName” />

<Setting name=”MongoDBDataDirSize” />

<Setting name=”MongoDBLogVerbosity” />

</ConfigurationSettings>

<LocalResources>

<LocalStorage name=”MongoDBLocalDataDir” cleanOnRoleRecycle=”false”

sizeInMB=”1024″ />

<LocalStorage name=”MongodLogDir” cleanOnRoleRecycle=”false”

sizeInMB=”512″ />

</LocalResources>Port 27017 is the standard port for a MongoDB installation. None of the settings need be changed for the sample project.

The ServiceDefinition.csdef entries for MvcMovie are:

<ConfigurationSettings>

<Setting name=”ReplicaSetName” />

</ConfigurationSettings>The ServiceConfiguration.cscfg settings for the ReplicaSetRole are:

<ConfigurationSettings>

<Setting name=”MongoDBDataDir” value=”UseDevelopmentStorage=true” />

<Setting name=”ReplicaSetName” value=”rs” />

<Setting name=”MongoDBDataDirSize” value=”" />

<Setting name=”MongoDBLogVerbosity” value=”-v” />

<Setting name=”Microsoft.WindowsAzure.Plugins.Diagnostics.ConnectionString” value=”UseDevelopmentStorage=true” />

</ConfigurationSettings>The ServiceConfiguration.cscfg settings for the MvcMovie web role are:

<ConfigurationSettings>

<Setting name=”ReplicaSetName” value=”rs” />

<Setting name=”Microsoft.WindowsAzure.Plugins.Diagnostics.ConnectionString” value=”UseDevelopmentStorage=true” />

</ConfigurationSettings>It is critical that the value for the ReplicaSetName be the same in both the MvcMovie web role and the ReplicaSets worker role.

The replica set can also be accessed from the MongoDB shell, mongo, once it is running in the compute emulator. This is a useful way to ensure that everything is working since it provides a convenient way of accessing the data and managing the replica set. The application data is stored in the movies collection in the movies database. For example, the rs.stepDown() command can be invoked on the primary member to demote it to a secondary. MongoDB will automatically select one of the secondary members and promote it to primary. Note that in the compute emulator, the 3 replica set members are hosted at port numbers 27017, 27018 and 27019 respectively.

This sample demonstrates the process of adding support for MongoDB to a Windows Azure solution with a web role.

1) Add the following MongoDB projects to the solution;

- MongoDBAzureHelper

- ReplicaSetRole

2) Add the MongoDB assemblies to the web role:

- Mongo.DB.Bson (copy local)

- Mongo.DB.Driver (copy local)

- MongoDBAzureHelper (from the added project)

3) Add the MongoDB settings described earlier to the Windows Azure service configuration.

MongoDB on Windows Azure with Node.js

Node.js is a popular system for developing web servers using JavaScript and an asynchronous programming model. Microsoft recently released the Windows Azure SDK for Node.js which makes it easy to deploy Node.js web applications to Windows Azure.

The SDK provides an extensive set of PowerShell scripts – such as Add-AzureNodeWebRole, Start-AzureEmulator, and Publish-AzureService – which simplify the lifecycle management of developing and deploying a Node.js web application. It contains several introductory tutorials including a Node.js Web Application with Storage on MongoDB. This tutorial shows how to add a replica set implemented, as described earlier, to a web application developed in Node.js.

Summary

The MongoDB on Windows Azure wrapper and the Windows Azure SDK for Node.js tutorial have made it very easy to try MongoDB out in a Windows Azure hosted service.

Richard Seroter (@rseroter) posted Comparing AWS/Box/Azure for Managed File Transfer Provider on 2/6/2012:

As organizations continue to form fluid partnerships and seek more secure solutions than “give the partner VPN access to our network”, cloud-based managed file transfer (MFT) solutions seem like an important area to investigate. If your company wants to share data with another organization, how do you go about doing it today? Do you leverage existing (aging?) FTP infrastructure? Do you have an internet-facing extranet? Have you used email communication for data transfer?

All of those previous options will work, but an offsite (cloud-based) storage strategy is attractive for many reasons. Business partners never gain direct access to your systems/environment, the storage in cloud environments is quite elastic to meet growing needs, and cloud providers offer web-friendly APIs that can be used to easily integrate with existing applications. There are downsides related to loss of physical control over data, but there are ways to mitigate this risk through server-side encryption.

That said, I took a quick look at three possible options. There are other options besides these, but I’ve got some familiarity with all of these, so it made my life easier to stick to these three. Specifically, I compared the Amazon Web Services S3 service, Box.com (formerly Box.net), and Windows Azure Blob Storage.

Comparison

The criteria along the left of the table are primarily from the Wikipedia definition of MFT capabilities, along with a few additional capabilities that I added.

Feature

Amazon S3

Box.com

Azure Storage

Multiple file transfer protocols HTTP/S (REST, SOAP) HTTP/S (REST, SOAP) HTTP/S (REST) Secure transfer over encrypted protocols HTTPS HTTPS HTTPS Securely storage of files AES-256 provided AES-256 provided (for enterprise users) No out-of-box; up to developer Authenticate users against central factors AWS Identity & Access Management Uses Box.com identities, SSO via SAML and ADFS Through Windows Azure Active Directory (and federation standards like OAuth, SAML) Integrate to existing apps with documented API Rich API Rich API Rich API Generate reports based on user and file transfer activities Can set up data access logs Comprehensive controls Apparently custom; none found. Individual file size limit 5 TB 2 GB (for business and enterprise users) 200GB for block blob, 1TB for page blob Total storage limits Unlimited Unlimited (for enterprise users) 5 PB Pricing scheme Pay monthly for storage, transfer out, requests Per user Pay monthly for storage, transfer out, requests SLA Offered 99.999999999% durability and 99.99% availability of objects ? 99.9% availability Other Key Features Content expiration policies, versioning, structured storage options Polished UI tools or users and administrators; integration with apps like Salesforce.com Access to other Azure services for storage, compute, integration

Overall, there are some nice options out there. Amazon S3 is great for pay-as-you go storage with a very mature foundation and enormous size limits. Windows Azure is new at this, but they provide good identity federation options and good pricing and storage limits. Box.com is clearly the most end-user-friendly option and a serious player in this space. All have good-looking APIs that developers should find easy to work with.

Have any of you used these platforms for data transfer between organizations?

<Return to section navigation list>

SQL Azure Database, Federations and Reporting

Adam Hurwitz (@adam_hurwitz) announced a New Service Update Released for Microsoft Codename "Data Transfer" in a 2/6/2012 post:

I’m happy to announce that we have just released another update for the Microsoft Codename “Data Transfer” lab.

We listened to you. We received feedback from a lot of users on our feature voting site and through email. We took the top voted feature and made it our focus for this release.

Update and Replace data in SQL Azure

Now when you upload a file to SQL Azure, you will have the choice of importing the data into a new or existing table.

If you choose an existing table, then you will have the choice to Update or Replace the data in the table. Update is an upsert (update or insert depending on whether the primary key is found). Replace does a truncate and insert.

And then we’ve created a really easy-to-use UI for choosing the column mappings between your file and the table schema. With a click you can drag-and-drop the columns, either up and down to change position or to the Unmapped list.

Big Data

We have also done a lot of work on the internals of the service so that it can accept a very large volume of data. This is not something that you can see now because it takes a few of our short releases to surface the functionality. But stay tuned!

Enjoy – and please let us know how it goes and what you need.

I need Big Data. I wonder how many “short releases” will be needed “to surface the functionality”?

<Return to section navigation list>

MarketPlace DataMarket, Social Analytics and OData

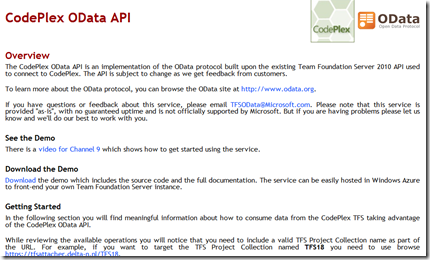

René van Osnabrugge (@renevo) described Installing the TFS OData service on your corporate TFS server in a 2/8/2012 post:

This blog post describes how to install and configure the OData TFS Service on your corporate TFS Server. I made this post because the TFS OData documentation does not really cover this topic very well. It covers the installation on Azure and on CodePlex.

A little background

My company Delta-N, built a nice Windows Phone application. The TFS Attacher. This application allows you to attach images to an existing work item on your TFS Server. For example when you draw pictures on a whiteboard during a stand up meeting, you can take a picture of the whiteboard and directly attach to the work item.

OData Service

The TFS Attacher works with the TFS OData Service. This service provides an elegant way to access and query TFS. Also some modify actions are enabled with this service. For example adding attachments to a work item.

You can find all about the OData Service on the blog of Brian Keller. You can download also from the Microsoft site.

Installing the Service

When you download the service and extract the bits, you’ll notice that it is not really an installable application, but more a set of source, docs and assemblies.

When I read the StartHere.htm or the word document inside the doc folder I got a little confused. I talked about azure and the examples were based on the CodePlex TFS.

I wanted to install the OData service on my TFS production Server. The document states that the Azure Tools for Visual Studio are needed to run the service. I do not want to pollute my production server with development tools.

So I tried some things from the documentation and tested some things and found a good work around to use the service on our corporate TFS.

Here are the steps I performed

Set up my Development environment

As I mentioned earlier, the OData download contains source code. The first thing I did was setting up my Development machine so that the OData service could be built and run. I followed the instructions that were in the document.

Most important thing is to run the setup.cmd. This install all the SDK’s and prerequisites. After installation open the ODataTFS.sln on your local computer and build solution.

Prepare Production Server

Now it’s time to prepare your production server to host the OData Service. It is not necessary that you host the OData Service on the TFS Production server. It can be any other server that can access your TFS Server but for now I chose to install it on the TFS Server.

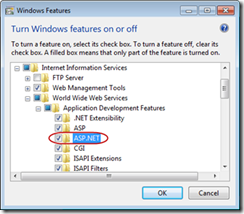

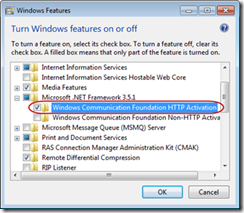

First I made sure that my IIS had the necessary prerequisites.

- Microsoft Visual Studio Team Explorer 2010

- Microsoft .NET Framework 4

- Internet Information Services 7, with the following features:

I created a new directory called ODataTFS and created a new website pointing to this directory. Note that a new application pool is created.

- Grant full access permissions to the IIS_ISUR user to the %programdata%\Microsoft\Team Foundation local folder.

- Grant read access permissions to the IIS_ISUR user to the ODataTFS folder

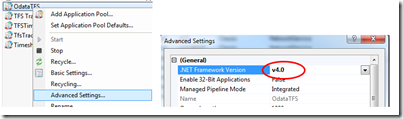

- Open the advanced settings of the application pool and set the .Net framework version to v4.0

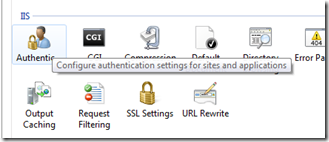

- Navigate to Authentication

- Disable all authentication methods

- Enable Anonymous authentication

- Start your application pool and website

Create deployment

Now it was time to create a deployable package which we can run on our production server. Surely, the nicest way to do this is to create an automated build on TFS to build your solution. However, in this case I will describe the easiest way to achieve this.

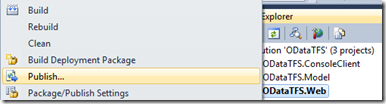

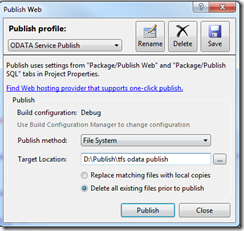

In the first step I opened the ODataTFS.sln and build it. Now I use the Publish Website option to create a deployable website.

The output in the directory is the set that you want to deploy. However, if you deploy this solution you will get an error message stating that some dll’s cannot be found.

This error is related to the fact that the Azure SDK is not and will not be installed on our production server. Luckily we can work around this.

Navigate to C:\Program Files\Windows Azure SDK\v1.5\bin\runtimes\base and copy the following files to the bin directory in your publish folder.

- Microsoft.WindowsAzure.ServiceRuntime.dll

- msshrtmi.dll (in subdir x86 or x64)

Now you need to make some modifications in the web.config. Again, the nice way is to do it in the automated build using Config Transformations but for now manually will do.

Remove the following section

1: <system.diagnostics>2: <trace>3: <listeners>4: <add type="Microsoft.WindowsAzure.Diagnostics.DiagnosticMonitorTraceListener, Microsoft.WindowsAzure.Diagnostics, Version=1.0.0.0, Culture=neutral, PublicKeyToken=31bf3856ad364e35" name="AzureDiagnostics">5: <filter type="" />6: </add>7: </listeners>8: </trace>9: </system.diagnostics>set compilation debug to false

1: <compilation debug="true" targetFramework="4.0" />Change this setting to your own TFS url. (e.g. https://tfs.mycompany.com/tfs)

1: <!-- TFS CODEPLEX SETTINGS -->2: <add key="ODataTFS.TfsServer" value="https://tfs.mycompany.com/tfs" />NOTE: Make sure you run the OData service and your tfs service under https.

Deploy and Test OData Service

Copy the contents of your publish directory to your production server in the directory that you created there. Make sure the website is started.

Navigate to the website. If it is set up correctly, the page will display the following:

Now edit the url so you can access the OData Service. For example to retrieve projects you type.

{odataurl}/{CollectionName}/Projects –> https://odatatfs.mycompany.com/DefaultCollection/Projects

You will be asked for credentials. These are TFS credentials. If it is set up correctly, a list of projects will appear.

More examples are on the start page that shows when you access the url of your service.

Summary and Links

Paul Miller posted Data Market Chat: Flip Kromer discusses Infochimps on 2/8/2012 to his Cloud of Data blog:

I originally recorded a podcast with Infochimps’ Flip Kromer way back in December 2009, when most of today’s data markets were just starting out. We spoke again last week, as part of my current series of Data Market Chats, and it’s interesting to begin exploring some of the ways in which Infochimps and its peers have evolved.

Describing Infochimps variously as a “SourceForge for data” or an “Amazon for data,” Flip argues that the site’s real value lies in bringing data from different sources together in one place. This, he suggests, is part of allowing customers to “bridge the gap from data to insight.”

Despite his impatience with some of the complexities of the Semantic Web ideal, Flip willingly embraces the lightweight semantics emanating from projects such as the search engine-backed schema.org. He also recognises the value of good metadata in making data easy to use, and in introducing a degree of comparability between data sets from different sources.

Towards the end of the conversation, Flip provides his perspective on some of the other players in this space. Microsoft’s Azure Data Market, for example, is Saks Fifth Avenue to Infochimps’ Amazon; one has ‘the best’ socks, whilst the other has all the socks.

Following up on a blog post that I wrote at the start of 2012, this is the fourth in a series of podcasts with key stakeholders in the emerging category of Data Markets.

Like most of my podcasts, this one is audio-only. I am conducting a short survey this week (only one question is mandatory) to gauge interest in alternative forms of podcast, and would be grateful if you could take a moment to record your view. I shall summarise the findings on Friday.

Related articles

- Data Market Chat: Tyler Bell discusses Factual (cloudofdata.com)

- Data Market Chat: Chris Hathaway discusses AggData (cloudofdata.com)

- Data Market Chat: Hjálmar Gíslason discusses DataMarket.com (cloudofdata.com)

- Data-as-a-Service startup Infochimps swaps out CEO (gigaom.com)

- Infochimps Acquires Y Combinator Startup Data Marketplace, Expanding Brand Holdings and Online Presence (prweb.com)

- What does a single day of Flickr uploads look like as real photos? (infochimps.com)

Steve Fox (@redmondhockey) described SharePoint Online and Windows Azure: Developing Secure BCS Connections in the Cloud on 2/6/2012:

Introduction

Over the Christmas vacation, a few of us got together and started down the path of how to not only secure external data connections to SharePoint Online, but more generally discussed a few different patterns that can be used to develop ‘complete cloud’ solutions; that is, solutions that leverage the collaborative platform and features of SharePoint Online and deploy solutions to SharePoint Online with code that lives in Windows Azure.

In this blog post, I’m going to cover how to secure an external data connection with the Business Connectivity Services (BCS) using a secure WCF service deployed to Windows Azure. The discussion will highlight three additional areas you need to consider when securing the connection. The blog post will reference a comprehensive hands-on lab at the end that you can walk through to replicate what is discussed.

Business Connectivity Services and SharePoint Online

Late last year, I wrote a blog post on SharePoint Online’s newest feature addition: BCS. On its own, BCS is a very interesting artifact; it enables you to connect external line-of-business (LOB) systems with SharePoint Online. So, what at first appears to be a normal looking list actually dynamically loads the external data from a cloud-based data source like SQL Azure. This special kind of list is known as an external list. For example, you can see how the below list exposes data from an external data source and provides the user read/write permissions on the data it dynamically loads.

Figure 1: External List Loading External Data

Now it’s not my intention in this post to give you an overview of the BCS; you can get a great introduction to SharePoint Online and BCS by reading this article: http://msdn.microsoft.com/en-us/library/hh412217.aspx. More to the point, my previous blog post(s) focused on how to walk through the creation of an external content type (ECT) using a WCF service deployed to Windows Azure that acts against a SQL Azure database, also hosted in the cloud. You can find a prescriptive walkthrough here: http://blogs.msdn.com/b/steve_fox/archive/2011/11/12/leveraging-wcf-services-to-connect-bcs-with-sharepoint-online.aspx. However, while the aforementioned blog post gave you a walkthrough of how to create the connection between an external data source and SharePoint Online, it didn’t discuss how to secure that connection.

Key Elements in Securing the Connection

While creating an open WCF service (e.g. HTTP-enabled service with no authentication) can be useful in some situations, say exposing bus schedule data or public demographic information, it’s doesn’t enable developers to add a level of security that you will often require. You can control permissions against the ECT, thus provisioning some consumers of the external list with read-only privileges and others with read/write privileges, but that’s only one layer—it doesn’t cover the service layer. To add an additional layer of security for the WCF service, in this pattern, will necessitate three additional steps:

1. Configuring the service to be an HTTPS endpoint rather than an HTTP endpoint.

2. Leveraging the UserNamePasswordValidator class to help authenticate the service.

3. Encrypting the WCF service with a certificate.

I’ll provide some discussion around these three elements and then to close this blog post will point you to a comprehensive hands-on lab that we’ve put together for you to walk through and test this pattern.

Configuring the WCF Service

To create an open or non-secure (HTTP) service, you can use BasicHttpBinding on the service. For example, the serviceModel snippet from my service web.config below illustrates how I used BasicHttpBinding to create a recent service that exposed Grades data in SharePoint Online.

… <system.serviceModel> <services> <service behaviorConfiguration="Grades_LOB_For_SP.Service1Behavior" name="Grades_LOB_For_SP.GradeService"> <endpoint address="" binding="basicHttpBinding" contract="Grades_LOB_For_SP.IGradesService"> <identity> <dns value="localhost" /> </identity> </endpoint> <endpoint address="mex" binding="mexHttpBinding" contract="IMetadataExchange" /> </service> </services> … </system.serviceModel> …However, when redeploying the service for security, you can employ a number of methods to either pass or look up the identity of the SharePoint user (or external system identity) when creating your WCF service. For example, you could amend your service configuration and move beyond BasicHttpBinding and use WSHttpBinding instead. Using WSHttpBinding provides a broader array of security options that you can use to authenticate the service. Thus, when the identity of the client is known, it is authorized to perform the functions that are built within the service—e.g. create, read, update, and delete web methods that execute against the back-end (external) data source.

… <system.serviceModel> … <bindings> <wsHttpBinding> <binding name="Binding"> <security mode="TransportWithMessageCredential"> <transport /> <message clientCredentialType="UserName" /> </security> </binding> </wsHttpBinding> </bindings> <behaviors> <serviceBehaviors> <behavior name="NewBehavior"> <serviceCredentials> <userNameAuthentication userNamePasswordValidationMode="Custom" customUserNamePasswordValidatorType="MyService.Identity.UserAuthentication, MyService" /> </serviceCredentials> <serviceMetadata httpsGetEnabled="true" /> <serviceDebug includeExceptionDetailInFaults="true" /> </behavior> </serviceBehaviors> </behaviors> <services> <service behaviorConfiguration="NewBehavior" name="MyService.Service"> <endpoint address="" binding="wsHttpBinding" bindingConfiguration="Binding" name="MyService.Service" contract="MyService.IContentService" /> <endpoint address="mex" name="MetadataBinding" binding="mexHttpsBinding" contract="IMetadataExchange"></endpoint> </service> </services> <serviceHostingEnvironment multipleSiteBindingsEnabled="true" /> </system.serviceModel> …From the above excerpt from my web.config file, you can see that there are a few more configuration options to consider/add when locking down your service. And debugging these in the cloud can be a challenge, so be sure to turn on errors and debug locally often before deploying into your cloud environment.

For more information on transport security, go here: http://msdn.microsoft.com/en-us/library/ms729700.aspx.

Using the UserNamePasswordValidator Class

When authenticating using WCF, you can create custom user name and password authentication methods and validate a basic claim being passed from the client. The creation and passing of the username and password claim can be accomplished by using the UserNamePasswordValidator class. For example, in the following code snippet, note that the Validate method passes two string parameters and in this case uses comparative logic to ‘authenticate’ the user.

… public override void Validate(string userName, string password) { if (null == userName || null == password) { throw new ArgumentNullException(); } if (!(userName == "SPUser1" && password == "SP_Password") && !(userName == "SPUser2" && password == "SP_Password2")) { throw new FaultException("Unknown Username or Incorrect Password"); } } …Note: The username and password are passed using the Secure Store Service Application ID. So, when you add the service using SharePoint Designer, you would add the username and password that would be validated within the WCF service.

This proved to be an interesting framework to test the authentication of the SharePoint Online user, but the reader should be aware that we still are discussing the pros and cons of implementing this pattern across different types of production environments. Perhaps for a small-scale production services architecture, you might leverage this, but for large, enterprise-wide deployments managing and mapping credentials for authorization would require a more involved process of building and validating the user.

You can find more information on the UserNamePasswordValidator class here: http://msdn.microsoft.com/en-us/library/aa702565.aspx.

Encrypting a WCF Service using Certificates

The final piece of creating the new and secured service was encrypting the WCF service using trusted certificates. The certificate not only enabled an encrypted connection between the WCF service and SharePoint Online, but it also established a trusted connection through the addition of an SSL certificate from a trusted certificate authority. For this service, you must create a trusted certificate authority because SharePoint Online does not trust a self-signed certificate. The username and password are passed in the request header, which is trusted by the WCF service. This provides transport level authentication for the WCF service.

Beyond the process of creating a trusted certificate authority, a couple of things you’ll need to be aware of:

- The Windows Azure VS tools automatically create a certificate and deploy it with your code. You can view it from your Settings in your project.

- You need to both include your trusted certificate authority with your service code and upload it to your Windows Azure management portal.

Note: I mention #1 because we ran into an error when deploying the service, and it turned out to be a mismatched certificate error with the auto-generated certificate in the tools. (Reusing the thumbprint from my trusted certificate authority got it working.)

Adding the trusted certificate authority is straightforward; you use the Cloud project Settings to add certificates, as per the figure below.

Figure 2: Certificates in Windows Azure Project

Also, note that you’ll need to ensure you enable both HTTP and HTTPS on your service endpoint—and then map the SSL Certificate Name to your secure HTTPS endpoint in your Windows Azure project.

Figure 3: HTTP and HTTPS Endpoints in the WCF Service Project

After you load your trusted certificate authority to your Windows Azure subscription, you’ll then note that you have the chained certs as well, as per the figure below.

Figure 4: Uploaded Certificates in Windows Azure

The net result of deploying the now secured service is that you can browse to the service and then click the security icon in the URL field to view the certificate information. You should also be able to browse your WSDL without any issue.

Figure 5: Final Service

At this point, you can then use this service endpoint to create the ECT in much the same way you did in my earlier post.

Summary & Specific Guidance

This blog post was a follow-up to my earlier post on using WCF to connect SQL Azure data to SharePoint Online. It was meant to highlight how the earlier non-secure WCF service that was connected to SharePoint Online could be extended and secured using 1) WCF HTTPS, 2) the UserNamePasswordValidator class, and 3) trusted certificate authority. I typically like to give prescriptive guidance for developers to use to test out these patterns, so to help you get on your way, we’ve put together a comprehensive hands-on lab that walks you through how to create a secure WCF service and connect to SharePoint Online using BCS in a step-by-step fashion.

You can download a lengthy hands-on lab that walks you through the specifics by going here: http://tinyurl.com/7oghxln.

This is an interesting movement forward in the ever-evolving SharePoint Online and Windows Azure development story. We’ll have more for you moving forward. Look for some additional information on general cloud patterns with SharePoint Online and Windows Azure and also some cross posts that will highlight service-bus relay patterns as well as additional information around identity management.

I’d like to thank a couple of the guys on my team (Paul and Matt) and the team from Hyderabad (Aniket, Manish, Raj, and Sharad) on their collaboration to get us this far. Lots more good stuff to come on SharePoint Online and Windows Azure development with quite a bit of interest on this from the community.

<Return to section navigation list>

Windows Azure Access Control, Service Bus and Workflow

Ravi Bollapragada explained how to Solve ‘Complex Data Restructuring’ Using the ‘List’ Operations in the Service Bus EAI/EDI Mapper in a 2/8/2012 post:

Problem at Hand

Anyone who has done serious mapping would have encountered the problem of transforming related data from one set of complex structures to another. This is because of the fact that different applications and systems use different ways to normalize data to best suit their needs. When such systems need to interoperate the data must be mapped from one schema structure to another.

Observed Pattern

One of the most prevalent patterns used to solve this problem, especially when dealing with complex restructuring, is to de-normalize the data into one flat structure and then normalize it to the target schema structure(s). BizTalk users had to deal with this logic using scripts (in the language of their choice). This was because BizTalk had no support storing intermediate de-normalized data, while the scripting languages did (For example, XSLT programmers typically de-normalize the data into one or more hashtables of complex data strings and then parse them out to the destination).

Lack of support for storing intermediate data would force users to develop scripts by themselves or scripting experts. The more such cases in a map the more it becomes a collection of scripts making mapping a programmer’s domain and mostly unmanageable and unreadable for non-programmers (IT pros, SMEs, Business Analysts). In most such cases, the visual mapping tool is typically sacrificed and a programming tool is adopted, resulting in higher costs in development and maintenance.

Good News, You Don’t Have to Know/Use Scripting to Solve the Problem

In the new transforms functionality of the Service Bus EAI & EDI Labs release, we have introduced support for storing intermediate data in Lists, and perform operations on Lists, the same way one would on source message tree. And all this through visual modelling on the mapper surface. Lists can store multiple members making them two-dimensional and almost equivalent to storing data in tables. Let me illustrate the usage of Lists in solving a complex problem of heterogeneous structural mapping.

The Much Dreaded LIN Loop with SDQ Segments in EDI Mapping

LIN Loops commonly help capture the information of items in an inventory tracking application. A nested structure of LIN segments, ZA and SDQ segments full information pivoted on each item type is reflected. The SDQ segment is a bit complicated as it stacks up multiple location and quantity pairs for the different line items. Mapping this structure to a target application structure that is pivoted on locations makes a challenging task; this can be addressed in a two stage procedure.

Stage1: De-normalize LIN,ZA,SDQ data into a list containing all the data. Here are the steps we followed:

This nested set of loop operations within List scope helped us denormalize all the item/location/qty/qty_type data. This data can now be restructured into any relevant target structure.

Stage 2: Use the denormalized data to build the target structure. Here are the steps we followed:

This second stage operations help us complete the target schema structure that is pivoted on locations and items within each location.

SUCCESS!!! Once you get the hang of it, this two-stage procedure supported by the new mapper, is simple, intuitive and readable. You could apply this to more complex HL Loop and other complex nested structures.

Please use Discretion

With great power comes great responsibility; storing data in Lists will add tax to the performance and other SLAs. For not-so-complex structural transformations, one could do without intermediate storage. We recommend that you use Lists only when you don’t have a workable alternative.

We are continuously striving to provide you with more usable and powerful mapping tools. Do optimize their usage against your maintainability and performance needs. And kindly share your feedback and suggestions to help us improve the mapper tools.

Abhishek Lal (@AbhishekRLal) began an Enterprise Integration Patterns with Service Bus (Part 1) series on 2/6/2012:

Last week I presented at TechReady a session titled: Achieving Enterprise Integration Patterns with Service Bus. Folks who are familiar with the work of Gregor Hohpe, and the book / site http://www.eaipatterns.com/ already know the power of these building blocks in solving integration problems. I will not cover all the material in detail here but wanted to share the key concepts that enable the use of Windows Azure Service Bus in implementing these patterns. As the title of this post indicates, this is a first step of many and here I will cover the following:

- Messaging Channel Pattern

- Publish-Subscribe

- Content based routing

- Recipient List

Publish-Subscribe

The scenario here is that a sender broadcasts an event to all interested receivers. A common use cases for this is event notification.

The way to implement this with Service Bus is to have a Topic with several Subscriptions. Each Subscription is for a recipient of the event notification. A single message sent to the Topic will be delivered to each Subscription (each will receive a copy of the message)

Some key features of Service Bus relevant to this pattern:

- A single Topic can have up-to 2000 Subscriptions

- Subscription can be individually managed with permissions

- Once a Subscription is created, it gets all subsequent messages sent to the topic

- Reliable delivery can be achieve thru PeekLock and Transactions

The code sample for this is available here.

Content based Routing

This is when we want to route a message to different recipients based on data contained in the message. The sender is not aware of the specific recipients but just sets appropriate metadata in the message. The recipients decide if a message is relevant to them based on its content.

Any scenario where you need to perform a single logical operation across different systems would need this, say an order processing system that routes orders to different departments.

The Service Bus implementation for this is thru use of SQL Rules on Subscriptions. These Rules contain Filters that are applied on the properties of the message and determine if a particular message is relevant to that Subscription.

Service Bus Features used:

- SQL Filters can specify Rules in SQL 92 syntax

- Typically Subscriptions have one Rule but multiple can be applied

- Rules can contain Actions that may modify the message (in that case a copy of the message is created by each Rule that modifies it)

- Actions can be specified in SQL 92 syntax too.

The code for this sample is available here.

Recipient List

There are scenarios where a sender wants to address a message to specific recipients. Here the sender sets metadata on the message that routes the message to specific subscribers.

Common use cases for this are order processing systems where the order needs to be fulfilled by a specific vendor or department that is selected by the user submitting the order. Email is another such messaging pattern.

The Service Bus implementation for this is thru use of SQL Rules on Subscriptions. We use the LIKE clause in SQL filters to achieve the desired results. The sender can just add the addresses of recipients in a comma-separated manner.

Service Bus Features used:

- SQL Filters with Rules in SQL 92 syntax

- LIKE clause for finding items addressed to Recipient

- Comma separated values to address multiple recipients

The code for this sample is available here.

No significant articles today.

<Return to section navigation list>

Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Bruno Terkaly (@brunoterkaly) described Cloud Applications and Application Lifecycle Management (Includes Azure) in a 2/8/2012 post:

Azure Application Lifecycle Management with Buck Woody http://blogs.msdn.com/b/buckwoody/archive/2012/02/07/application-lifecycle-management-overview-for-windows-azure.aspx ALM with Azure http://msdn.microsoft.com/en-us/library/ff803362.aspx David Chappell http://www.microsoft.com/global/applicationplatform/en/us/RenderingAssets/Whitepapers/What%20is%20Application%20Lifecycle%20Management.pdf Northwest Cadance Can Help http://itevent.net/application-lifecycle-management-alm-from-design-to-development Microsoft MSDN – Application Lifecycle Management http://msdn.microsoft.com/en-us/vstudio/ff625779

Brian Swan (@brian_swan) described Deploying PHP and ASP.NET Sites in a Single Azure Web Role in a 2/7/2012 post:

Last week, a colleague approached me with a question he’d been asked by a customer: How do you deploy both a PHP website and an ASP.NET website on the same Web Role in Windows Azure? Given the mixed environments many people work with today, I thought sharing the answer to his question might be helpful to others. However, unlike many of my other posts, the particular solution I’m going to share requires Visual Studio 2010 with the .NET SDK for Windows Azure installed. It should be possible to deploy both sites without Visual Studio…I just haven’t investigated that approach yet. (I will speed up that investigation if I hear clamoring for it in the comments.)

A couple of things before I get started…

First, I highly recommend that you watch this episode of Cloud Cover from minute 9:00 to minute 28:00: Cloud Cover Episode 37: Mulitple Websites in a Web Role. Even though the video looks at how to deploy multiple .NET websites, it contains just about all the information you need to deploy websites of mixed flavors. The video will provide context for the steps below…all I do is fill in a couple of minor details. (Big thanks to Steve Marx for helping me figure out the details.)

Second, before you build, package and deploy your applications, I suggest you do a little preliminary work. First create an empty Hosted Service on Windows Azure to provision the domain name for your ASP.NET website (e.g. mysite.cloudapp.net). Then, create a CNAME record for the domain name of your PHP site (e.g. www.myphpsite.com) that points to your Azure domain name (mysite.cloudapp.net). (I’m assuming you have a domain name, like www.myphpsite.com, registered already.) You can do these steps later, but doing them in advance makes the process below clearer.

Now you’re ready to get started…

1. Create a Windows Azure project with Visual Studio. You will be prompted to add a Web Role in this process. Choose any ASP.NET Web Role (I chose an ASP.NET MVC 3 Web Role). Click OK to accept default settings through the rest of the process.

2. Open the service definition file (ServiceDefinition.csdef). Add a <Site> element similar to the one below to the <Sites> element. (There will already be one <Site> element that corresponds to your ASP.NET site. This one will correspond to your PHP site.)

<Site name="YourSiteName" physicalDirectory="path\to\your\PHP\app"><Bindings><Binding name="Endpoint1" endpointName="Endpoint1" hostHeader="www.yourdomain.com" /></Bindings></Site>Note that you need to fill in values for the the name, physicalDirectory, and hostHeader attributes. The value for the name attribute is somewhat arbitrary, but the physicalDirectory attribute should point to your local PHP application, and the hostHeader value should be the domain name for your PHP application.

3. While you have the service definition file open, add the following <Startup> element right after the <Sites> element.

<Startup><Task commandLine="add-environment-variables.cmd" executionContext="elevated" taskType="simple" /><Task commandLine="install-php.cmd" executionContext="elevated" taskType="simple" ><Environment><Variable name="EMULATED"><RoleInstanceValue xpath="/RoleEnvironment/Deployment/@emulated" /></Variable></Environment></Task><Task commandLine="monitor-environment.cmd" executionContext="elevated" taskType="background" /></Startup>These startup tasks will install PHP and configure IIS to handle PHP requests when you deploy your applications. (We’ll add the files that are referenced in these tasks to our project next.)

Note: I’m borrowing the startup tasks and the referenced files from the default scaffold that you can create by using the Windows Azure SDK for PHP.

4. Add the files referenced in the startup tasks to your ASP.NET project. (The necessary files are in the .zip file attached to this post). When you are finished, you should have a directory structure that looks like this:

5. If you want to run your application using the Compute and Storage Emulators, you need to edit your hosts file (which you can find by going to Start->Run, and typing drivers - the hosts file will be in the etc directory). Add this line to the hosts file:

127.0.0.1 www.myphpsite.com

Now, press Ctrl+F5 (or select Start Without Debugging) to run your application in the emulator.

You should see your site brought up at http://127.0.0.1:81. If you browse to http://www.myphpsite.com:81, you should see you PHP site.

6. When you are ready to deploy your app, you can use the Visual Studio tools: http://msdn.microsoft.com/en-us/library/windowsazure/ff687127.aspx#Publish. After publishing, you should be able to browse to mysite.cloudapp.net to see your ASP.NET application, and (assuming you have set up a CNAME record for your PHP site) and browse to www.myphpsite.com to see your PHP site.

Bruno Terkaly (@brunoterkaly) listed Outbound ports that have to be opened for Azure development in a 2/7/2012 post.

Brent Stineman (@BrentCodeMonkey) proposed A Custom High-Availability Cache Solution in a 2/7/2012 post:

For a project I’m working on, we need a simple, easy to manage session state service. The solution needs to be highly available, low latency, but not persistent. Our session caches will also be fairly small in size (< 5mb per user). But given that our projected high end user load could be somewhere in the realm of 10,000-25,000 simultaneous users (not overly large by some standards), we have serious concerns about the quota limits that are present in today’s version of the Windows Azure Caching Service.

Now we looked around, Memcached, ehCache, MonboDB, nCache to name some. And while they all did various things we needed, there were also various pros and cons. Memcached didn’t have the high availability we wanted (unless you jumped through some hoops). MongoDB has high availability, but raised issues about deployment and management. ehCache and nCache well…. more of the same. Add to them all that anything that had a open source license would have to be vetted by the client’s legal team before we could use it (a process that can be time-consuming for any organization).

So I spent some time coming up with something I thought we could reasonably implement.

The approach

I started by looking at how I would handle the high availability. Taking a note from Azure Storage, I decided that when a session is started, we would assign that session to a partition. And that partitions would be assigned to nodes by a controller with a single node potentially handling multiple partitions (maybe primary for one and secondary for another, all depending on overall capacity levels).

The cache nodes would be Windows Azure worker roles, running on internal endpoints (to achieve low latency). Within the cache nodes will be three processes, a controller process, the session service process, and finally the replication process.

The important one here is the controller process. Since the controller process will attempt to run in all the cache nodes (aka role instances), we’re going to use a blob to control which one actually acts as the controller. The process will attempt to lock a blob via a lease, and if successful will write its name into that blob container. It will then load the current partition/node mappings from a simple Azure Storage table (I don’t predict us having more then a couple dozen nodes in a single cache) and verify that all the nodes are still alive. It then begins a regular process of polling the nodes via their internal endpoints to check on their capacity.

The controller also then manages the nodes by tracking when they fall in and out of service, and determining which nodes handle which partitions. If a node in a partition fails, it will assign that a new node to that partition, and make sure that the node is in different fault and upgrade domains from the current node. Internally, the two nodes in a partition will then replicate data from the primary to the secondary.

Now there will also be a hook in the role instances so that the RoleEnvironment Changing ad Changed events will alert the controller process that it may need to rescan. This could be a response to the controller being torn down (in which case the other instances will determine a new controller) or some node being torn down so the controller needs to reassign their the partitions that were assigned to those nodes to new nodes.

This approach should allow us to remain online without interruptions for our end users even while we’re in the middle of a service upgrade. Which is exactly what we’re trying to achieve.

Walkthrough of a session lifetime

So here’s how we see this happening…

- The service starts up, and the cache role instances identify the controller.

- The controller attempts to load any saved partition data and validate it (scanning the service topology)

- The consuming tier, checks the blob container to get the instance ID of the controller, and asks if for a new session ID (and its resulting partition and node instance ID)

- The controller determines if there is room in an existing partition or creates a new partition.

- If a new partition needs to be created, it locates two new nodes (in separate domains) and notifies them of the assignment, then returns the primary node to the requestor.

- If a node falls out (crashes, is being rebooted), the session requestor would get a failure message, and goes back to the controller for a new node for that partition.

- The controller provides the name of the previously identified secondary node (which is of course now the primary), and also takes on the process of locating a new node.

- The new secondary node will contact the primary node to begin replicate its state. The new primary will start sending state event change messages to the secondary.

- If the controller drops (crash/recycle), the other nodes will attempt to become the controller by leasing the blob. Once established as a controller, it will start over at step 2.

Limits

So this approach does have some cons. We do have to write our own synchronization process, and session providers. We also have to have our own aging mechanism to get rid of old session data. However, its my belie[f] that these shouldn’t be horrible to create so its something we can easily overcome.

The biggest limitation here is that because we’re going to be managing the in-memory cache ourselves, we might have to get a bit tricky (multi-gigabyte collections in memory) and we’re going to need to pay close attention to maximum session size (which we believe we can do).

Now admittedly, we’re hoping all this is temporary. There’s been mentions publically that there’s more coming to the Windows Azure Cache service. And we hope that we can at that time, swap out our custom session provider for one that’s built to leverage whatever the vNext of Azure Caching becomes.

So while not ideal, I think this will meet our needs and do so in a way that’s not going to require months of development. But if you disagree, I’d encourage you to sound off via the site comments and let me know your thoughts. .

Liam Cavanagh (@liamca) continued his What I Learned Building a Startup on Microsoft Cloud Services: Part 2 – Keeping Startup Costs Low series on 2/6/2012:

I am the founder of a startup called Cotega and also a Microsoft employee within the SQL Azure group where I work as a Program Manager. This is a series of posts where I talk about my experience building a startup outside of Microsoft. I do my best to take my Microsoft hat off and tell both the good parts and the bad parts I experienced using Azure.

One of the biggest differences between building a new service at Microsoft and in building a startup was in the way you look at startup costs. At Microsoft I didn’t worry as much about costs. For example, when you deploy your service to Windows Azure, you specify how many cores (or CPU’s) you want to allocate. At Microsoft we would make a best guess as to how many cores we needed. You might find it interesting to know that even though I work in the SQL Azure group within Microsoft, our groups still pays for our usage of Windows Azure, so it is important to keep costs down as much as possible. But, if our estimates are off by a few cores, it is not that big of a concern.

When I did my startup, this changed significantly. Suddenly I was faced with a potentially large monthly bill for my usage of Windows Azure. A single SQL Azure database to store user information was going to be at least $10 per month, a small VM with a single core for Windows Azure would be about $86.40 per month ($0.12 / hour * 24 hours * 30 days) and I will likely need two VMs to ensure constant availability of the service, plus an additional core for the administrative web site and I don’t even really know yet if this is going to be enough. Yikes, that would be at least $269.20/month and I am a long way from even starting to make some money. I can’t afford that…

So I did a little more research and I learned about a program at Microsoft called BizSpark. With this program I am able to get 1,500 hours (or 2 small VM) of Windows Azure and up to 5 GB (or 5 x 1GB) SQL Azure databases. This also provided me with 2,000,000 storage and queue transactions, but I did not realize that I would need queues at this point. Best of all, this free program runs for 3 years. That should be way more than enough time to get my service profitable.

The only issue I had was that I felt I would need 3 Windows Azure VM’s. Two for the Cotega service to provide a highly available SLA and one for the Web UI that the DBA would use to configure their notifications. In the end, I chose to use a hybrid model of services to accomplish this, but I will talk more about this in my next post.

Bruce Kyle described an MSDN Article: Building a Massively Scalable Platform for Consumer Devices on Windows Azure in a 2/6/2012 post to his US ISV Evangelism blog:

Excellent article shows how you can use web services hosted in Windows Azure and have it communicate across various devices, such as Windows Phone, iPhone, and Android. And have that service scale to terabytes in size.

My colleagues Bruno Terkaly and Ricardo Villalobos wrote the article for MSDN magazine Building a Massively Scalable Platform for Consumer Devices on Windows Azure.

The team describes:

- A RESTful Web services hosted in Windows Azure.

- JSON (and not XML) as the data format because it’s compact and widely supported.

And then they walk you through step-by-step in creating the Azure account, generating the Web service, and showing you code for the RESTful service. They show you how to deploy and then consume the service on each of the devices.

The explain, “Because Windows Azure-hosted RESTful Web services are based on HTTP, any client application that supports this protocol is capable of communicating with them. This opens up a wide spectrum of devices for developers, because the majority of devices fall into this category.”

How ISVs Scale with Consumer Devices on Azure

You can see how Sepia Labs uses these techniques for a real world application, Glassboard, in my Channel 9 video Social Media Goes Mobile with Glassboard on Azure.

Jialiang Ge posted two New Windows Azure Code Samples in a Microsoft All-In-One Code Framework 2012 January Sample Updates post of 2/5/2012:

Configure SSL for specific page(s) while hosting the application in Windows Azure (CSAzureSSLForPage)

Download: http://code.msdn.microsoft.com/CSAzureSSLForPage-e844c9fe

The sample was written by Narahari Dogiparthi – Escalation Engineer from Microsoft.

While hosting the applications in Windows Azure, developers are required to modify IIS settings to suit their application requirements. Many of these IIS settings can be modified only programmatically and developers are required to write code, startup tasks to achieve what they are looking for. One common thing customer does while hosting the applications on-premise is to mix the SSL content with non-SSL content. In Windows Azure, by default you can enable SSL for entire site. There is no provision to enable SSL only for few pages. Hence, Narahari has written sample that customers can use it without investing more time to achieve the task.

Change AppPool identity programmatically (CSAzureChangeAppPoolIdentity)

Download: http://code.msdn.microsoft.com/CSAzureChangeAppPoolIdentit-27099828

The sample was developed by Narahari Dogiparthi – Microsoft Escalation Engineer, too.

Most of customers test their applications to connect to cloud entities like storage, SQL Azure, AppFabric services via compute emulator environment. If the customer's machine is behind proxy that does not allow traffic from non-authenticated users, their connections fail. One of the workaround is to change the application identity. This cannot be done manually for Azure scenario since the app pool is created by Windows Azure when it is actually running the service. Hence, Narahari has written sample customers can use to change the AppPool identity programmatically.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

Paul Patterson described Microsoft LightSwitch – Data First and Breaking Some Rules in a 2/7/2012 post:

This is is part two of a series of articles that walks through the A Little Productivity application and its source. In this article I talk about the data first approach I took in creating the business entities used in the application.As a “seasoned” (salt and peppered hair) professional, I’ve bee exposed to a lot of theory, best practices, and some times subjective methods for creating data architectures. From that massive book knowledge in the Narnia closet of my brain, I have found that the most effective way to build a data architecture is to… use common sense.

Interesting is how I come across people who challenge me on my data designs; which is good because I have never been one to claim to be perfect, and welcome the opportunity to learn from others ideas. However it seems that 98% of those challenges are based on subjective, or favourite, ways of doing things. It is rare that I get someone being objective in their criticism about what I have done. Even more interesting is when I dive deeper into the reasoning behind a person’s challenge, most of the time I get a, “…because that is the way it has always been done…”.

As years go by that conditioning takes over and seems to cloud the decision making process for some. All those rules make sense and should absolutely be considered, however these are only guidelines. Such is the case when I approach data design (data models, ERDs, and that sort of thing). Yes, there are best practices and so called “rules” that are considered when designing and implementing a data architecture, but like I said these are all just guidelines.

Here’s something to chew on… Think of those lines on the roads; those are guidelines. Sure, at peak traffic rush hours you absolutely want to drive your car within the parameters of the lines that are presented on the road. Those lines set the boundaries of usage that, during the busy rush hour, everyone on the road will have a common understanding of. These parameters set the expectations that all drivers can use when driving, which makes for a much safer and efficient movement of traffic.

Well, what about at 3:30 in the morning when you are the only one on the road? The same road with same lines exist, however you are the only vehicle on that road, and you know that taking a different path on that road will make for a much more efficient trip. Would you drive outside the parameters of those lines?

I’ve stopped at plenty of red lights where there is not other car for miles, and then chuckled to myself at how conditioned I have become to not proceed until there was a green light – that “rule” of the road.

What I am trying to say is that, despite being conditioned with all these rules about data architecture, it comes down to what makes the most sense. Following data design rules for the sake of following rules leads to bloated architectures that, in most cases, will make it difficult to use with those unique functional requirements you have to implement.

When I start down the path of designing a data architecture for a LightSwitch application, I generally start with following some basic relational database design concepts (see the section titled What is a Database in my article titled Top 10 Fundamentals for LightSwitch Newbies). With a high-level abstraction of my data, I use some simple guidelines to design the entities for my application. I then take a look at what I have in mind for the functionality of my application, and then refine my data design accordingly.

For example, take a look at how the information has been designed for managing the information about “stakeholders” (company, customers, contacts, addresses, and etc…). I could have easily created a table (entity) for each type of stakeholder, such as customer, employee, contact, and so on. However understanding that I wanted functionality that could maintain all this stakeholder data in a common context, I designed the entities accordingly.

A Customer can have one or more Address entities

A Contact can also have one or more Address entities.

So, both Customer and Contact entities can have Address entities (not the same address though).