Windows Azure and Cloud Computing Posts for 2/13/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

• Updated 2/16/2012 with a few new articles marked •.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue and Hadoop Services

- SQL Azure Database, Federations and Reporting

- Marketplace DataMarket, Social Analytics and OData

- Windows Azure Access Control, Service Bus, and Workflow

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Services, HPC

Avkash Chauhan (@avkashchauhan) posted Keys to understand relationship between MapReduce and HDFS on 2/15/2012:

Map Task (HDFS data localization):

The unit of input for a map task is an HDFS data block of the input file. The map task functions most efficiently if the data block it has to process is available locally on the node on which the task is scheduled. This approach is called HDFS data localization.

An HDFS data locality miss occurs if the data needed by the map task is not available locally. In such a case, the map task will request the data from another node in the cluster: an operation that is expensive and time consuming, leading to inefficiencies and, hence, delay in job completion.
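To make the difference between a local hit and a locality miss concrete, here is a minimal Python sketch. It is not Hadoop's scheduler (which also weighs rack locality and other factors); the function name and the node names are hypothetical and chosen only for illustration.

```python
def schedule_map_task(block_replica_nodes, free_nodes):
    """Return (node, is_local) for a map task that processes one block.

    block_replica_nodes: set of nodes holding a replica of the block.
    free_nodes: nodes with a free task slot, in preference order.
    Both arguments are hypothetical inputs for this sketch.
    """
    if not free_nodes:
        raise ValueError("no free nodes available")
    for node in free_nodes:
        if node in block_replica_nodes:
            return node, True      # data-local: the block is read from local disk
    return free_nodes[0], False    # locality miss: the block is fetched from another node
```

A task placed with `is_local == False` corresponds to the expensive case described above, where the block has to cross the network before the map task can start.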

Clients, Data Nodes, and HDFS Storage:

Input data is uploaded to the HDFS file system in either of the following two ways:

- An HDFS client has a large amount of data to place into HDFS.

- An HDFS client is constantly streaming data into HDFS.

Both these scenarios have the same interaction with HDFS, except that in the streaming case, the client waits for enough data to fill a data block before writing to HDFS. Data is stored in HDFS in large blocks, generally 64 to 128 MB or more in size. This storage approach allows easy parallel processing of data.
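As a rough illustration of how block size drives parallelism, the Python sketch below counts the blocks a file occupies. The function name and the 1 GB example file are assumptions made for this example; since the unit of input for a map task is one block, the block count is also the number of map-task inputs.

```python
MB = 1024 * 1024

def hdfs_block_count(file_size_bytes: int, block_size_bytes: int) -> int:
    """Number of blocks needed to hold a file (the last block may be partial)."""
    if file_size_bytes < 0 or block_size_bytes <= 0:
        raise ValueError("file size must be >= 0 and block size must be > 0")
    # Integer ceiling division avoids floating-point rounding on large sizes.
    return (file_size_bytes + block_size_bytes - 1) // block_size_bytes

one_gb = 1024 * MB
blocks_at_64mb = hdfs_block_count(one_gb, 64 * MB)
blocks_at_128mb = hdfs_block_count(one_gb, 128 * MB)
```

Doubling the block size halves the number of blocks for the same file, which means fewer but larger units of work.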

HDFS-SITE.XML

    <property>
      <name>dfs.block.size</name>
      <value>134217728</value> <!-- 128 MB block size -->
    </property>

OR

    <property>
      <name>dfs.block.size</name>
      <value>67108864</value> <!-- 64 MB block size (the default if this value is not set) -->
    </property>

Block Replication Factor:

During the process of writing to HDFS, the blocks are generally replicated to multiple data nodes for redundancy. The number of copies, or the replication factor, is set to a default of 3 and can be modified by the cluster administrator as below:

HDFS-SITE.XML

    <property>
      <name>dfs.replication</name>
      <value>3</value>
    </property>

When the replication factor is three, HDFS’s placement policy is to:

- Put one replica on one node in the local rack,

- Another on a node in a different (remote) rack,

- Last on a different node in the same remote rack.

When a new data block is stored on a data node, the data node initiates a replication process to replicate the data onto a second data node. The second data node, in turn, replicates the block to a third data node, completing the replication of the block.

With this policy, the replicas of a file do not evenly distribute across the racks. One third of replicas are on one node, two thirds of replicas are on one rack, and the other third are evenly distributed across the remaining racks. This policy improves write performance without compromising data reliability or read performance.
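The three-replica placement policy can be sketched in a few lines of Python. This is an illustration under stated assumptions, not HDFS's implementation: the topology dictionary, the rack and node names, and the choice of the writer's own node as the local-rack replica are all hypothetical.

```python
import random

def rack_of(topology, node):
    """Return the rack that contains `node` in a {rack: [nodes]} topology."""
    for rack, nodes in topology.items():
        if node in nodes:
            return rack
    raise KeyError(node)

def place_replicas(topology, writer_node, rng=random):
    """Pick three nodes: one in the local rack, two distinct nodes in one remote rack."""
    local_rack = rack_of(topology, writer_node)
    # A remote rack needs at least two nodes to hold the second and third replicas.
    remote_racks = [r for r, nodes in topology.items()
                    if r != local_rack and len(nodes) >= 2]
    if not remote_racks:
        raise ValueError("need a remote rack with at least two nodes")
    remote_rack = rng.choice(remote_racks)
    second, third = rng.sample(topology[remote_rack], 2)
    return [writer_node, second, third]
```

Because two of the three replicas share a rack, a write crosses racks only once, which is where the write-performance benefit mentioned above comes from.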

Steve Peschka continued his series with The Azure Custom Claim Provider for SharePoint Project Part 2 on 2/14/2012:

In Part 1 of this series, I briefly outlined the goals for this project, which at a high level is to use Windows Azure table storage as a data store for a SharePoint custom claims provider. The claims provider is going to use the CASI Kit to retrieve the data it needs from Windows Azure in order to provide people picker (i.e. address book) and type-in control name resolution functionality. Now let’s expand on this scenario a little more.

This type of solution plugs in pretty nicely to a fairly common scenario, which is when you want a minimally managed extranet. So for example, you want your partners or customers to be able to hit a website of yours, request an account, and then be able to automatically “provision” that account…where “provision” can mean a lot of different things to different people. We’re going to use that as the baseline scenario here, but of course, let our public cloud resources do some of the work for us.

Let’s start by looking at the cloud components we’re going to develop ourselves:

- A table to keep track of all the claim types we’re going to support

- A table to keep track of all the unique claim values for the people picker

- A queue where we can send data that should be added to the list of unique claim values

- Some data access classes to read and write data from Azure tables, and to write data to the queue

- An Azure worker role that is going to read data out of the queue and populate the unique claim values table

- A WCF application that will be the endpoint through which the SharePoint farm communicates to get the list of claim types, search for claims, resolve a claim, and add data to the queue

Now we’ll look at each one in a little more detail.

Claim Types Table

The claim types table is where we’re going to store all the claim types that our custom claims provider can use. In this scenario we’re only going to use one claim type, which is the identity claim – that will be email address in this case. You could use other claims, but to simplify this scenario we’re just going to use the one. In Azure table storage you add instances of classes to a table, so we need to create a class to describe the claim types. Again, note that you can add instances of different class types to the same table in Azure, but to keep things straightforward we’re not going to do that here. The class this table is going to use looks like this:

    namespace AzureClaimsData
    {
        public class ClaimType : TableServiceEntity
        {
            public string ClaimTypeName { get; set; }
            public string FriendlyName { get; set; }

            public ClaimType() { }

            public ClaimType(string ClaimTypeName, string FriendlyName)
            {
                this.PartitionKey = System.Web.HttpUtility.UrlEncode(ClaimTypeName);
                this.RowKey = FriendlyName;
                this.ClaimTypeName = ClaimTypeName;
                this.FriendlyName = FriendlyName;
            }
        }
    }

I’m not going to cover all the basics of working with Azure table storage because there are lots of resources out there that have already done that. So if you want more details on what a PartitionKey or RowKey is and how you use them, your friendly local Bing search engine can help you out. The one thing that is worth pointing out here is that I am Url encoding the value I’m storing for the PartitionKey. Why is that? Well in this case, my PartitionKey is the claim type, which can take a number of formats: urn:foo:blah, http://www.foo.com/blah, etc. In the case of a claim type that includes forward slashes, Azure cannot store the PartitionKey with those values. So instead we encode them out into a friendly format that Azure likes. As I stated above, in our case we’re using the email claim so the claim type for it is http://schemas.xmlsoap.org/ws/2005/05/identity/claims/emailaddress.
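To show what the encoding step does to the email claim type, here is a small Python sketch. It uses `urllib.parse.quote` as a stand-in for .NET's `HttpUtility.UrlEncode`; the two are not identical (the casing of escape sequences and the handling of spaces can differ), and the helper name `to_partition_key` is hypothetical.

```python
from urllib.parse import quote, unquote

def to_partition_key(claim_type: str) -> str:
    """Percent-encode a claim type so that it contains no forward slashes."""
    # safe="" makes quote() escape "/" too; by default it leaves "/" untouched.
    return quote(claim_type, safe="")

EMAIL_CLAIM = "http://schemas.xmlsoap.org/ws/2005/05/identity/claims/emailaddress"
key = to_partition_key(EMAIL_CLAIM)
original = unquote(key)  # the encoding is reversible
```

The encoded key contains no "/" characters, and decoding it yields the original claim type, so nothing is lost by storing the encoded form.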

Unique Claim Values Table

The Unique Claim Values table is where all the unique claim values we get are stored. In our case, we are only storing one claim type – the identity claim – so by definition all claim values are going to be unique. However I took this approach for extensibility reasons. For example, suppose down the road you wanted to start using Role claims with this solution. Well it wouldn’t make sense to store the Role claim “Employee” or “Customer” or whatever a thousand different times; for the people picker, it just needs to know the value exists so it can make it available in the picker. After that, whoever has it, has it – we just need to let it be used when granting rights in a site. So, based on that, here’s what the class looks like that will store the unique claim values:

    namespace AzureClaimsData
    {
        public class UniqueClaimValue : TableServiceEntity
        {
            public string ClaimType { get; set; }
            public string ClaimValue { get; set; }
            public string DisplayName { get; set; }

            public UniqueClaimValue() { }

            public UniqueClaimValue(string ClaimType, string ClaimValue, string DisplayName)
            {
                this.PartitionKey = System.Web.HttpUtility.UrlEncode(ClaimType);
                this.RowKey = ClaimValue;
                this.ClaimType = ClaimType;
                this.ClaimValue = ClaimValue;
                this.DisplayName = DisplayName;
            }
        }
    }

There are a couple of things worth pointing out here. First, like the previous class, the PartitionKey uses a UrlEncoded value because it will be the claim type, which will have the forward slashes in it. Second, as I frequently see when using Azure table storage, the data is denormalized because there isn’t a JOIN concept like there is in SQL. Technically you can do a JOIN in LINQ, but so many things that are in LINQ have been disallowed when working with Azure data (or perform so badly) that I find it easier to just denormalize. If you folks have other thoughts on this throw them in the comments – I’d be curious to hear what you think. So in our case the display name will be “Email”, because that’s the claim type we’re storing in this class.

The Claims Queue

The claims queue is pretty straightforward – we’re going to store requests for “new users” in that queue, and then an Azure worker process will read them off the queue and move the data into the unique claim values table. The primary reason for doing this is that working with Azure table storage can sometimes have high latency, but sticking an item in a queue is pretty fast. Taking this approach means we can minimize the impact on our SharePoint web site.

Data Access Classes

One of the rather mundane aspects of working with Azure table storage and queues is you always have to write your own data access class. For table storage, you have to write a data context class and a data source class. I’m not going to spend a lot of time on that because you can read reams about it on the web, plus I’m also attaching my source code for the Azure project to this posting so you can [hack] at it all you want.

There is one important thing I would point out here though, which is just a personal style choice. I like to break all my Azure data access code out into a separate project. That way I can compile it into its own assembly, and I can use it even from non-Azure projects. For example, in the sample code I’m uploading you will find a Windows form application that I used to test the different parts of the Azure back end. It knows nothing about Azure, other than it has a reference to some Azure assemblies and to my data access assembly. I can use it in that project and just as easily in my WCF project that I use to front-end the data access for SharePoint.

Here are some of the particulars about the data access classes though:

- I have a separate “container” class for the data I’m going to return – the claim types and the unique claim values. What I mean by a container class is that I have a simple class with a public property of type List<>. I return this class when data is requested, rather than just a List<> of results. The reason I do that is because when I return a List<> from Azure, the client only gets the last item in the list (when you do the same thing from a locally hosted WCF it works just fine). So to work around this issue I return claim types in a class that looks like this:

    namespace AzureClaimsData
    {
        public class ClaimTypeCollection
        {
            public List<ClaimType> ClaimTypes { get; set; }

            public ClaimTypeCollection()
            {
                ClaimTypes = new List<ClaimType>();
            }
        }
    }

And the unique claim values return class looks like this:

    namespace AzureClaimsData
    {
        public class UniqueClaimValueCollection
        {
            public List<UniqueClaimValue> UniqueClaimValues { get; set; }

            public UniqueClaimValueCollection()
            {
                UniqueClaimValues = new List<UniqueClaimValue>();
            }
        }
    }

- The data context classes are pretty straightforward – nothing really brilliant here (as my friend Vesa would say); it looks like this:

    namespace AzureClaimsData
    {
        public class ClaimTypeDataContext : TableServiceContext
        {
            public static string CLAIM_TYPES_TABLE = "ClaimTypes";

            public ClaimTypeDataContext(string baseAddress, StorageCredentials credentials)
                : base(baseAddress, credentials)
            { }

            public IQueryable<ClaimType> ClaimTypes
            {
                get
                {
                    //this is where you configure the name of the table in Azure Table Storage
                    //that you are going to be working with
                    return this.CreateQuery<ClaimType>(CLAIM_TYPES_TABLE);
                }
            }
        }
    }

- In the data source classes I do take a slightly different approach to making the connection to Azure. Most of the examples I see on the web want to read the credentials out with some reg settings class (that’s not the exact name, I just don’t remember what it is). The problem with that approach here is that I have no Azure-specific context because I want my data class to work outside of Azure. So instead I just create a Setting in my project properties and in that I include the account name and key that is needed to connect to my Azure account. So both of my data source classes have code that looks like this to create that connection to Azure storage:

    private static CloudStorageAccount storageAccount;
    private ClaimTypeDataContext context;

    //static constructor so it only fires once
    static ClaimTypesDataSource()
    {
        try
        {
            //get storage account connection info
            string storeCon = Properties.Settings.Default.StorageAccount;

            //extract account info
            string[] conProps = storeCon.Split(";".ToCharArray());
            string accountName = conProps[1].Substring(conProps[1].IndexOf("=") + 1);
            string accountKey = conProps[2].Substring(conProps[2].IndexOf("=") + 1);

            storageAccount = new CloudStorageAccount(
                new StorageCredentialsAccountAndKey(accountName, accountKey), true);
        }
        catch (Exception ex)
        {
            Trace.WriteLine("Error initializing ClaimTypesDataSource class: " + ex.Message);
            throw;
        }
    }

    //new constructor
    public ClaimTypesDataSource()
    {
        try
        {
            this.context = new ClaimTypeDataContext(
                storageAccount.TableEndpoint.AbsoluteUri, storageAccount.Credentials);
            this.context.RetryPolicy = RetryPolicies.Retry(3, TimeSpan.FromSeconds(3));
        }
        catch (Exception ex)
        {
            Trace.WriteLine("Error constructing ClaimTypesDataSource class: " + ex.Message);
            throw;
        }
    }

- The actual implementation of the data source classes includes a method to add a new item for both a claim type as well as unique claim value. It’s very simple code that looks like this:

    //add a new item
    public bool AddClaimType(ClaimType newItem)
    {
        bool ret = true;

        try
        {
            this.context.AddObject(ClaimTypeDataContext.CLAIM_TYPES_TABLE, newItem);
            this.context.SaveChanges();
        }
        catch (Exception ex)
        {
            Trace.WriteLine("Error adding new claim type: " + ex.Message);
            ret = false;
        }

        return ret;
    }

One important difference to note in the Add method for the unique claim values data source is that it doesn’t throw an error or return false when there is an exception saving changes. That’s because I fully expect that people mistakenly or otherwise try and sign up multiple times. Once we have a record of their email claim though any subsequent attempt to add it will throw an exception. Since Azure doesn’t provide us the luxury of strongly typed exceptions, and since I don’t want the trace log filling up with pointless goo, I don’t worry about it when that situation occurs.

- Searching for claims is a little more interesting, only to the extent that it exposes again some things that you can do in LINQ, but not in LINQ with Azure. I’ll add the code here and then explain some of the choices I made:

    public UniqueClaimValueCollection SearchClaimValues(string ClaimType, string Criteria, int MaxResults)
    {
        UniqueClaimValueCollection results = new UniqueClaimValueCollection();
        UniqueClaimValueCollection returnResults = new UniqueClaimValueCollection();

        //cache lifetime, in minutes
        const int CACHE_TTL = 10;

        try
        {
            //look for the current set of claim values in cache
            if (HttpRuntime.Cache[ClaimType] != null)
                results = (UniqueClaimValueCollection)HttpRuntime.Cache[ClaimType];
            else
            {
                //not in cache so query Azure
                //Azure doesn't support starts with, so pull all the data for the claim type
                var values = from UniqueClaimValue cv in this.context.UniqueClaimValues
                             where cv.PartitionKey == System.Web.HttpUtility.UrlEncode(ClaimType)
                             select cv;

                //you have to assign it first to actually execute the query and return the results
                results.UniqueClaimValues = values.ToList();

                //store it in cache; expires CACHE_TTL minutes from now
                HttpRuntime.Cache.Add(ClaimType, results, null,
                    DateTime.Now.AddMinutes(CACHE_TTL), TimeSpan.Zero,
                    System.Web.Caching.CacheItemPriority.Normal, null);
            }

            //now query based on criteria, for the max results
            returnResults.UniqueClaimValues =
                (from UniqueClaimValue cv in results.UniqueClaimValues
                 where cv.ClaimValue.StartsWith(Criteria)
                 select cv).Take(MaxResults).ToList();
        }
        catch (Exception ex)
        {
            Trace.WriteLine("Error searching claim values: " + ex.Message);
        }

        return returnResults;
    }

The first thing to note is that you cannot use StartsWith against Azure data. So that means you need to retrieve all the data locally and then use your StartsWith expression. Since retrieving all that data can be an expensive operation (it’s effectively a table scan to retrieve all rows), I do that once and then cache the data. That way I only have to do a “real” recall every 10 minutes. The downside is that if users are added during that time then we won’t be able to see them in the people picker until the cache expires and we retrieve all the data again. Make sure you remember that when you are looking at the results.

Once I actually have my data set, I can do the StartsWith, and I can also limit the number of records I return. By default SharePoint won’t display more than 200 records in the people picker so that will be the maximum number I plan to ask for when this method is called. But I’m including it as a parameter here so you can do whatever you want.
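The cache-then-filter pattern described above is independent of Azure and can be sketched in Python. This is an illustration only, not the author's code: the class name, the `fetch_all` callable standing in for the table query, and the injectable clock are all assumptions made so the example is self-contained.

```python
import time

class ClaimValueSearch:
    """Caches every value for a claim type, then filters by prefix locally."""

    def __init__(self, fetch_all, ttl_seconds=600, clock=time.monotonic):
        self._fetch_all = fetch_all   # hypothetical callable: claim_type -> iterable of str
        self._ttl = ttl_seconds       # 600 seconds matches the 10-minute cache above
        self._clock = clock
        self._cache = {}              # claim_type -> (expires_at, list of values)

    def search(self, claim_type, prefix, max_results=200):
        now = self._clock()
        entry = self._cache.get(claim_type)
        if entry is None or entry[0] <= now:
            # Cache miss or expired entry: do the expensive full retrieval once.
            values = list(self._fetch_all(claim_type))
            entry = (now + self._ttl, values)
            self._cache[claim_type] = entry
        # The prefix match runs locally because the store cannot do it server-side.
        matches = [v for v in entry[1] if v.startswith(prefix)]
        return matches[:max_results]
```

The trade-off is the same as in the original: values added to the store after the cache is filled stay invisible to `search` until the entry expires.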

The Queue Access Class

Honestly there’s nothing super interesting here. Just some basic methods to add, read and delete messages from the queue.

Azure Worker Role

The worker role is also pretty nondescript. It wakes up every 10 seconds and looks to see if there are any new messages in the queue. It does this by calling the queue access class. If it finds any items in there, it splits the content out (which is semi-colon delimited) into its constituent parts, creates a new instance of the UniqueClaimValue class, and then tries adding that instance to the unique claim values table. Once it does that it deletes the message from the queue and moves to the next item, until it reaches the maximum number of messages that can be read at one time (32), or there are no more messages remaining.
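One pass of that worker loop can be sketched in Python with in-memory stand-ins. The `deque` plays the role of the Azure queue and the `dict` the role of the unique claim values table; the field order inside the semicolon-delimited message is an assumption, since the post does not specify it.

```python
from collections import deque

MAX_BATCH = 32  # maximum number of messages read at one time, per the text above

def drain_queue(queue, table):
    """Process up to MAX_BATCH messages and return how many were handled.

    queue: deque of "claim_type;claim_value;display_name" strings (assumed layout).
    table: dict keyed by (claim_type, claim_value), standing in for the table.
    """
    handled = 0
    while queue and handled < MAX_BATCH:
        message = queue[0]  # peek first; the message is deleted only after processing
        claim_type, claim_value, display_name = message.split(";")
        # setdefault keeps the first record, so repeat sign-ups are ignored quietly.
        table.setdefault((claim_type, claim_value), display_name)
        queue.popleft()
        handled += 1
    return handled
```

In the real worker role this function would be called after each 10-second sleep; any messages beyond the batch limit simply wait for the next pass.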

WCF Application

As described earlier, the WCF application is what the SharePoint code talks to in order to add items to the queue, get the list of claim types, and search for or resolve a claim value. Like a good trusted application, it has a trust established between it and the SharePoint farm that is calling it. This prevents any kind of token spoofing when asking for the data. At this point there isn’t any finer grained security implemented in the WCF itself. For completeness, the WCF was tested first in a local web server, and then moved up to Azure where it was tested again to confirm that everything works.

So that’s the basics of the Azure components of this solution. Hopefully this background explains what all the moving parts are and how they’re used. In the next part I’ll discuss the SharePoint custom claims provider and how we hook all of these pieces together for our “turnkey” extranet solution. The files attached to this posting contain all of the source code for the data access class, the test project, the Azure project, the worker role and WCF projects. It also contains a copy of this posting in a Word document, so you can actually make out my intent for this content before the rendering on this site butchered it.

Attachment: Azure.zip

Avkash Chauhan (@avkashchauhan) described Hadoop Performance: How storage disk types in individual node will impact the job performance? in a 2/14/2012 post:

As you may already know, a Hadoop cluster is network and disk I/O intensive. Recently I ran a test scenario in which I replaced the SATA hard disks with high-performance SSDs while keeping the rest of the cluster hardware the same. I ran the TeraSort test to see whether high-performance SSDs would affect overall performance. I found that using SSDs instead of SATA disks improved test performance by ~20%.

After that, I searched the internet for other tests done in a similar fashion to see what the best practice might be. I found the following recommendations from Intel, based on choosing an appropriate combination of disk throughput, in-memory caching, cluster deployment and multi-CPU boxes:

- We found SSDs to be very effective for both read and write operations.

- In-memory caching resulted in better response when the right amount of “HEAP CACHE” was set to achieve a higher cache hit percentage.

- The cluster environment served requests faster, although “CPU I/O WAIT” spikes were noticed.

- Overall, most of the CPUs remained idle during the test.

In a test demonstrated by Intel, going from two to four disks in a node (doubling the IO) had the following impact:

- The job completed in half the time it took with the previous IO.

- Increasing server cost by 10% increased sort performance by 100%.

If we consider the MapReduce local directory, where mapped files are stored locally, adding multiple identical disks to this mount could improve performance.

Replacing SATA with SSD or PCIe-based flash cards can improve IO for certain jobs. Performance increases vary by workload; strictly speaking, however, this increases the per-server cost while decreasing the cost per job or transaction.
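The cost-per-job argument is simple arithmetic, sketched below in Python using the Intel figures quoted above (10% more server cost, 100% more sort performance). It assumes that server cost is spread evenly over the jobs a server completes and that "performance" means job throughput; the function name is hypothetical.

```python
def relative_cost_per_job(server_cost_increase: float, throughput_increase: float) -> float:
    """Cost per job after an upgrade, relative to before (1.0 means unchanged).

    Both arguments are fractions: 0.10 means +10%, 1.00 means +100%.
    """
    return (1.0 + server_cost_increase) / (1.0 + throughput_increase)

# Intel example from the text: 1.10 times the cost spread over 2.00 times the jobs.
ratio = relative_cost_per_job(0.10, 1.00)
```

Under those assumptions each job costs roughly 55% of what it did before the upgrade, even though each server costs more.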

Barton George (@Barton808) posted Hadoop World, a belated summary on 2/13/2012:

With O’Reilly’s big data conference Strata coming up in just a couple of weeks, I thought I might as well get around to finally writing up my notes from Hadoop World. The event, which was put on by Cloudera, was held last November 8-9 in New York City. There were over 1,400 attendees from 580 companies and 27 countries, with two thirds of the audience being technical.

Growing beyond geek fest

The event itself has picked up significant momentum over the last three years going from 500 attendees, to 900 the second year, to over 1400 this past year. The tone has gone from geek-fest to an event focused also on business problems e.g. one of the keynotes was by Larry Feinsmith, managing director of the office of the CIO at JP Morgan Chase. Besides Dell, other large companies like HP, Oracle and Cisco also participated.

As a platinum sponsor, Dell had both a booth and a technical presentation. At the event we announced that we would be open sourcing the Crowbar barclamp for Hadoop, and at our booth we showed off the Dell | Hadoop Big Data Solution, which is based on Cloudera Enterprise.

Cutting’s observations

Doug Cutting, the father of Hadoop, Cloudera employee and chairman of the Apache Software Foundation, gave a much anticipated keynote. Here are some of the key things I caught:

- Still young: While Cutting felt that Hadoop had made tremendous progress he saw it as still young with lots of missing parts and niches to be filled.

- Big Top: He talked about the Apache “Bigtop” project, which is an open source program to pull together the various pieces of the Hadoop ecosystem. He explained that Bigtop is intended to serve as the basis for the Cloudera Distribution of Hadoop (CDH), much the same way Fedora is the basis for RHEL (Red Hat Enterprise Linux).

- “Hadoop” as “Linux”: Cutting also talked about how Hadoop has become the kernel of the distributed OS for big data. He explained that, much the same way that “Linux” is technically only the kernel of the GNU/Linux operating system, people are using the word Hadoop to mean the entire Hadoop ecosystem including utilities.

Interviews from the event

To get more of the flavor of the event here is a series of interviews I conducted at the show, plus one where I got the camera turned on me:

- Hadoop World: Cloudera CEO reflects on this year’s event

- Hadoop World: Accel’s $100M Big Data fund

- Hadoop World: Battery Ventures EIR Todd P.

- Hadoop World: Talking to Splunk’s Co-founder

- Hadoop World: NoSQL database MongoDB

- Hadoop World: Talking HBase with Facebook’s Jonathan Gray

- Hadoop World: Ubuntu, Hadoop and Juju

- Hadoop World: Karmasphere and big data intelligence

- Hadoop World: Learning about NoSQL database Couchbase

- Hadoop World: O’Reilly Strata conference chair, Ed Dumbill

Extra-credit reading

Blogs regarding Dell’s crowbar announcement

- Dell to open source software to ease Hadoop install & management

- Developers: How to get involved with Crowbar for Hadoop

- Open source Crowbar code now available for Hadoop

- How to create a Basic or Advanced Crowbar build for Hadoop

Hadoop Glossary

- Hadoop ecosystem

- Hadoop: An open source platform, developed at Yahoo that allows for the distributed processing of large data sets across clusters of computers using a simple programming model. It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. It is particularly suited to large volumes of unstructured data such as Facebook comments and Twitter tweets, email and instant messages, and security and application logs.

- MapReduce: a software framework for easily writing applications which process vast amounts of data (multi-terabyte data-sets) in parallel on large clusters of commodity hardware in a reliable, fault-tolerant manner. Hadoop acts as a platform for executing MapReduce. MapReduce came out of Google

- HDFS: Hadoop’s Distributed File system allows large application workloads to be broken into smaller data blocks that are replicated and distributed across a cluster of commodity hardware for faster processing.

- Major Hadoop utilities:

- HBase: The Hadoop database that supports structured data storage for large tables. It provides real time read/write access to your big data.

- Hive: A data warehousing solution built on top of Hadoop. An Apache project

- Pig: A platform for analyzing large data that leverages parallel computation. An Apache project

- ZooKeeper: Allows Hadoop administrators to track and coordinate distributed applications. An Apache project

- Oozie: a workflow engine for Hadoop

- Flume: a service designed to collect data and put it into your Hadoop environment

- Whirr: a set of libraries for running cloud services. It’s ideal for running temporary Hadoop clusters to carry out a proof of concept, or to run a few one-time jobs.

- Sqoop: a tool designed to transfer data between Hadoop and relational databases. An Apache project

- Hue: a browser-based desktop interface for interacting with Hadoop

Wenming Ye delivered a 01:44:43 Building and Running HPC Applications in Windows Azure via Channel9 in February (missed when published)

Windows Azure is an ideal environment for deploying compute-intensive apps that take advantage of the scale-on-demand capability of the cloud. The Windows Azure HPC Job Scheduler provides a resource manager and a set of runtimes for developing and deploying parallel and scale-out apps. This talk presents how to deploy an HPC cluster using both Visual Studio and PowerShell, and shows a sample application that uses the HPC job scheduler in Windows Azure to rapidly create scalable compute- and data-intensive services. Programming models include parallel apps using MPI and scale-out apps using WCF.


SQL Azure Database, Federations and Reporting

Ambrish Mishra described Managing Federations in SQL Azure with the SQL Server Management Studio of SQL Server 2012 RC0 in a 2/15/2012 post to the Windows Azure Team blog:

In this blog post we will cover some new abilities in SQL Server Management Studio 2012 (SSMS) that enhance the ability to work with SQL Azure. Specifically, we will highlight support in SSMS for the new SQL Azure feature known as SQL Azure Federations, which was just introduced this past December. Federations in the SQL Azure database provide the ability to achieve greater scalability and performance from the database tier of the application through horizontal partitioning of data in multiple databases. One or more tables within a database are split by row, and are stored across multiple system-managed databases, called Federation members. This type of horizontal partitioning is often referred to as ‘sharding’. Detailed information about SQL Azure Federation is available on Cihan Biyikoglu’s blog, MSDN Online, and this video demonstration.
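To illustrate the idea of range-based horizontal partitioning, here is a short Python sketch that maps a distribution-key value to the range of the member that would hold it. It is a conceptual model only, not how SQL Azure routes connections; the class name is hypothetical, and the convention that each range includes its low bound and excludes its high bound is stated as an assumption of the sketch.

```python
from bisect import bisect_right

class FederationMap:
    """Maps a distribution-key value to the range of the member that covers it."""

    def __init__(self, split_points):
        # split_points: key values at which the federation has been split
        self._splits = sorted(split_points)

    def member_for(self, key):
        """Return (low, high) for the member holding `key`; None means unbounded.

        Ranges are treated as low-inclusive and high-exclusive in this sketch.
        """
        i = bisect_right(self._splits, key)
        low = self._splits[i - 1] if i > 0 else None
        high = self._splits[i] if i < len(self._splits) else None
        return (low, high)

customers = FederationMap([100, 200])
member_range = customers.member_for(150)
```

Each split adds one boundary, so a federation with n split points has n + 1 members, and every key value falls into exactly one of them.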

The SQL Azure Management Portal and the SQL Server Management Studio (SSMS) of the SQL Server 2012 RC0 release include rich tooling support for managing Federations. One can easily create new Federations, view the Federation meta-data & the Federation members, split Federation members, and work with the database objects in the Federation root and work with the Federation members. The features that were especially built for Federations in SSMS include the following:

Streamlined view in the Object Explorer: If one connects to the SQL Azure virtual server in the SSMS, only the user created databases are listed in the Object Explorer and the GUID-named Federation members are filtered out. This helps in reducing the clutter and makes it easier to work with the databases.

View, create, split and delete Federations: The Federation root database can have more than one Federation and all the Federations are listed under the node titled ‘Federations’ in the object explorer. The right-click context menu on the Federations node and the Federation members have the option to create new Federations, split and delete Federation members, view top 1000 Federation members and connect to a Federation member.

SQL Server Management Studio 2012 Screen Shot: The right-click context menu on a Federation has the options for viewing, splitting and deleting Federation members.

SQL Server Management Studio 2012 Screen Shot: Listing the Top 1000 Federation members in a Federation.

Scripting Federations: The right-click context menu on a Federation provides the option for scripting a Federation. The CREATE To scripting option for a Federation scripts out the layout of the Federation and includes all the splits in the Federation in form of ALTER FEDERATION…SPLIT AT. Running the script will recreate the Federation and all the Federation members in the Federation.

SQL Server Management Studio 2012 Screen Shot: Scripting a Federation.
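The shape of such a script can be approximated with a small Python generator. This is a sketch of the idea only: the exact text SSMS emits may differ (batch separators, options, ordering), the function name is hypothetical, and the federation name, key name and key type passed in are illustrative values.

```python
def script_federation(name, key_name, key_type, split_points):
    """Build T-SQL statements that recreate a federation and its splits."""
    statements = [f"CREATE FEDERATION {name} ({key_name} {key_type} RANGE)"]
    # One SPLIT AT per boundary, lowest first, recreates every member.
    for point in sorted(split_points):
        statements.append(f"ALTER FEDERATION {name} SPLIT AT ({key_name} = {point})")
    return statements

script = script_federation("CustomerFederation", "cid", "BIGINT", [200, 100])
```

Running the generated statements in order against the Federation root would first create the federation and then apply each split, mirroring the recreate behavior described above.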

Connecting to a Federation member: The ‘Connect to a Federation Member’ dialog is used for connecting to a Federation member by specifying a distribution value for the distribution key. The validator in the dialog lists the permissible range for the distribution value based on the data type of the key and also validates that the value is in the correct format and range.

SQL Server Management Studio 2012 Screen Shot: Connect to a Federation member dialog.

Viewing Federation member and the objects in it: After a successful connection to a Federation member, the member is displayed in the Object Explorer in a separate node. The Federation member is listed under a friendly name of the form <Federation Root Database>::<Federation> (Federation Distribution Key = Range_Low..Range_High). The icons for the Federation member and the Federated table are different from the icons for other databases and tables to make it easy to distinguish Federation objects. The column in the Federated table that is linked to the Federation Distribution Key is labeled as ‘federated’ so it can be easily identified. A federated table, when scripted out, is scripted with the ‘FEDERATED ON’ clause that can be used to recreate the table in other Federations.

SQL Server Management Studio 2012 Screen Shot: Viewing Federation member and the objects in it. The T-SQL Editor displays the CREATE To script for the Federated table Customers with the FEDERATED ON clause.

Reconnecting to a Federation member after it splits: A split operation can be in progress for a Federation member while there is a connection to it in the Object Explorer. After the split operation completes, the original Federation member database is deleted and the connection to the member in the Object Explorer becomes invalid. SSMS detects this and pops up a message that the split has completed and provides an option to invoke the ‘Connect to a Federation Member’ dialog to connect to the new Federation member database that now has the data from the original Federation member.

SQL Server Management Studio 2012 Screen Shot: Reconnecting to a Federation member after it splits using the ‘Connect to a Federation Member’ dialog.

Querying the Federation members in the T-SQL Editor: The T-SQL Editor displays the name of the Federation member that it is connected to in the same friendly syntax as used in the Object Explorer. The connection can be easily switched between the Federation root database and the Federation member using the Available Databases dropdown control in the T-SQL Editor toolbar. If the USE FEDERATION query is issued to change the connection to another Federation member or to the root, the T-SQL Editor detects that the connection has changed and updates the friendly name to the Federation member or the root that it is connected to.

SQL Server Management Studio 2012 Screen Shot: The Federation member is displayed in the same friendly syntax as used in the Object Explorer. The Available Databases dropdown control can be used to change connection to the Federation root.

SQL Server Management Studio 2012 Screen Shot: The friendly name is updated to the Federation member database that the T-SQL Editor is connected to when the connection is changed using the T-SQL statement USE FEDERATION CustomerFederation(cid=100) WITH RESET, FILTERING=OFF
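For readers who issue these statements from their own tooling, here is a small Python sketch that builds the two forms of the statement: one that routes the connection to the member holding a given key value, and one that returns to the root. The function name and parameters are hypothetical; only the statement text follows the example in the caption above.

```python
def use_federation(federation=None, key=None, value=None, filtering=False):
    """Build a USE FEDERATION statement.

    With no federation name the statement targets the federation root;
    otherwise it targets the member that contains `value` for `key`.
    """
    if federation is None:
        return "USE FEDERATION ROOT WITH RESET"
    mode = "ON" if filtering else "OFF"
    return f"USE FEDERATION {federation} ({key} = {value}) WITH RESET, FILTERING = {mode}"


if __name__ == "__main__":
    print(use_federation("CustomerFederation", "cid", 100))
    print(use_federation())
```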

Warning messages: Warning messages alert users before they take an action that can have a far-reaching impact. For example, if an attempt is made to delete the Federation root database, a warning message is displayed alerting the user that the database has Federations in it and deleting the database will also delete all the Federations in the database.

SQL Server Management Studio 2012 Screen Shot: Warning message when deleting a Federation root database.

With the native support for Federations in SSMS, administrators and developers can work with Federations through an intuitive interface that makes it easy to navigate and manage at scale. We would like to hear feedback on this experience. You can email your feedback to SAPESupport@microsoft.com.

Steven Martin posted an Announcing Reduced Pricing on SQL Azure and New 100MB Database Option Valentine’s Day article to the Windows Azure blog on 2/14/2012:

To meet evolving customer needs across both ends of the database size spectrum, we are lowering the price of SQL Azure and introducing a 100MB database option.

Customers will realize 48% to 75% savings for databases larger than 1GB. The 100MB DB option enables customers to get started using SQL Azure at half of the previous price, while still providing the full range of features including: high availability, fault tolerance, self-management, elastic scale-out, on-premises connectivity, and full Service Level Agreement. Full details on our new pricing can be found here.

Today’s price reductions and new entry-level database option are the result of both customer feedback and evolving usage patterns. Specifically, two usage patterns have emerged in the last 18 months. First, many projects start small but need to quickly grow in size. To promote this pattern, we are passing along better economies of scale and options for larger deployments. As your database grows, the price per GB will decline significantly. Second, many cloud adopters and customers with smaller workloads want an inexpensive option for modest usage. Just as we made a 150GB option available for customers with large database needs, we are providing the same level of choice at the other end of the spectrum with the 100MB option for smaller database needs. Today’s announcement is another step in our ongoing journey to help customers with a variety of scenarios embrace Cloud Computing.

The chart below provides a side by side comparison of the cost savings as cloud deployments grow and database sizes increase. Be sure to check out our pricing calculator and pricing page for additional details.

*Previous prices 50GB and larger reflect price cap of $499.95 announced December 12, 2011.

Steven is General Manager, Windows Azure Business Planning.

Cihan Biyikoglu wrote the following tweet on 2/15/2012:

Fantastic new pricing with #sqlazure. Time to rewrite my "pricing with #sqlfederations" post #sqlserver #azure http://tinyurl.com/8ycjkq6

and replied as follows to my request for an ETA on the update:

@rogerjenn #sqlazure #sqlfederations I hope this week Roger...

Cihan Biyikoglu (@cihangirb) asked Want to demo or show federations to your boss? Here is the full package: Slides and the AdventureWorks database fully scaled-out with Federations in a 2/14/2012 post:

What a great day! First there is some great news on SQL Azure pricing changes, and now the AdventureWorks database is out with a new version for SQL Azure that includes a flavor scaled out with Federations.

Thanks to Scott we now have a scalable AdventureWorks database fully utilizing federations. Here is Scott’s blog announcing the news:

http://geekswithblogs.net/ScottKlein [See post below.]

Now you guys have the full package. Sample AdventureWorks with Federations and Slides to go tell your boss, your friends, family members and your personal trainer all about federations. Here are the slides for federations;

Cihan updated his Federations: Building Scalable, Elastic, and Multi-tenant Database Solutions with SQL Azure TechNet Wiki article on 1/20/2012.

Scott Klein posted a Full Version of AdventureWorks database for SQL Azure and SQL Azure Federations on 2/14/2012:

Some Background

The AdventureWorks database has been around for over a decade; a staple amongst sample databases. The first version of the AdventureWorks database appeared in time for SQL Server 2000. Microsoft has been good at keeping the AdventureWorks sample database up to date as new versions of SQL Server are released. Case-in-point: SQL Server 2012 is at RC0 and yet you can already find a version of AdventureWorks for it (albeit, it really isn’t that different from the SQL Server 2008 R2 version). They even have multiple versions depending on your needs (Data Warehouse, LT, OLAP, etc).

As a Corporate Technical Evangelist for SQL Azure who is somewhat new to Microsoft, I was glad to see that there was even a version for SQL Azure. Added to CodePlex in late 2009, the current zip file, AdventureWorks2008R2AZ, contains an install for two databases based on the AdventureWorks database: a small data warehouse database and a small light version of the full AdventureWorks database. However, neither of these databases is the full AdventureWorks database that we know and love, so I set out to solve that and make a version for SQL Azure that utilizes the full AdventureWorks database. And, while I was at it, with all of the hype and talk surrounding SQL Azure Federations, I thought it would also be nice to see a Federated version of the AdventureWorks database.

Exciting News

Thus, I am happy to let you know of two new additions to the SQL Azure samples page on CodePlex. Starting today, two new installs are available. The full AdventureWorks database for SQL Azure, and a SQL Azure Federation version of the full AdventureWorks database are now available and can be downloaded from here:

http://msftdbprodsamples.codeplex.com/releases/view/37304

I’ll spend a few minutes and discuss these two databases individually regarding why the efforts were taken to migrate them to SQL Azure and what we hope you will get from them.

Full AdventureWorks for SQL Azure

As far as sample databases go, the AdventureWorks database is the king. It exists simply, yet elegantly, to illustrate the features and functionality of its corresponding version of SQL Server. As such, migrating the full version of the AdventureWorks database to SQL Azure was a must, in part for the following reasons:

- SQL Server as a Service – The primary goal of taking the AdventureWorks database and migrating it to SQL Azure is to show that SQL Azure is SQL Server served up as a PaaS service. Obviously there are some differences in the logical vs. physical administration aspects, but the bottom line is that SQL Azure is a cloud-based relational database service that is built on SQL Server technologies, and what better way to prove that than by taking an existing on-premises database and showing how easy it is to migrate it to SQL Azure.

- Supported Functionality and Migration Strategies – As SQL Azure gains adoption, the question continues to exist as to what it takes to migrate an existing on-premises database to SQL Azure, what functionality is and is not currently supported, and which steps are necessary in the migration process. This example answers those questions.

Everything that needed to be modified, changed, or removed to ensure support for SQL Azure has been documented on the CodePlex page for this database. For example, all ON PRIMARY statements have been removed, and we explain this and the reasons why on the CodePlex page. We list these out so you’ll have an idea of what was needed in order to get the AdventureWorks database into SQL Azure.

Given that this is the first foray of the full AdventureWorks database into SQL Azure, there is much more to come.

Full AdventureWorks with SQL Azure Federations

SQL Azure Federations was launched in December of 2011. There wasn’t a whole lot of fanfare when it was released, but those who have been keeping up with SQL Azure were and certainly are aware of its existence, simply because Microsoft has been talking about it for well over a year. Thus, creating a Federated version of the AdventureWorks database for SQL Azure was also a must with the following thoughts in mind:

- Traction – What better way to keep momentum going for SQL Azure Federations than to take a well-known sample database and Federate it! Developers can now look at a long-existing sample database that has been federated and use that as a starting point to understanding and working with SQL Azure Federations.

- Example – With SQL Azure Federations so new it makes sense to provide a real-life example on how to Federate an existing database.

- Coolness – Honestly, seeing a Federated version of the AdventureWorks database is just cool. Really.

The current Federated version of the AdventureWorks database federates on Customer. We specifically selected to federate on Customer because it provides a great base to build from. There were several tenants we could have federated on, such as Products or People, but for a first cut, and to help the “transition” into understanding Federations, we decided to start somewhat simple.

The installs for both of the databases, the full non-federated and the federated, are quite easy. Once installed you will be able to see all the databases in SQL Server Management Studio Object Explorer, including the Federation Member as shown in the following figure.

Even cooler is that you can manage your Federations via the SQL Azure Management Portal as shown in the Figure below.

What’s Next

We are already in the process of creating additional, more advanced, versions of this database, which you will see in the coming weeks and months.

Conclusion

As features and functionality are added to SQL Azure, these databases will be updated correspondingly.

Long Live the AdventureWorks database! Love it, use it!

<Return to section navigation list>

MarketPlace DataMarket, Social Analytics, Big Data and OData

Joe Kunk (@JoeKunk) asserted “Learn how to publish data in the Windows Azure Marketplace for a monthly income with just a few hours effort” in a deck for his Selling Data in the Windows Azure Marketplace article of 2/15/2012 for Visual Studio Magazine:

Making your database available for sale on the Microsoft Windows Azure Marketplace is straightforward, especially if you are publishing a SQL Azure table or exposing an OData service over HTTPS for exclusive use by the Marketplace. In just a few hours, your data can be submitted and ready to provide a monthly income without billing hassles.

In my November column, “Free Databases in the Windows Azure Marketplace,” I explored 13 low cost or free databases available for consumption via Web services and provided sample Visual Basic .NET code to access those services via an ASP.NET MVC 3 Web site. The next step is to show you how to get your own applications and data into the Windows Azure Marketplace for sale as a content provider.

Data Publishing

If you have data to publish in the DataMarket section of the Marketplace, the Data Publishing Kit is available for download here. Data can be published to the Marketplace using multiple technologies: SQL Azure, a REST-based Web service, a SOAP-based Web service or an OData service.

The communication protocol tabular data stream (TDS) is supported for SQL Azure database tables. HTTP is the only supported transport protocol for REST, SOAP and OData services. The DataMarket, in conjunction with the Xbox and Zune billing system, manages all aspects of the credit card monthly billing including trial offers. Content providers are not involved in billing other than to receive payments. Microsoft retains a 20 percent commission on data subscription sales in the Marketplace.

Publishing data from SQL Azure allows you to expose selected tables and views. Note that stored procedures are not supported. Any column that can be queried needs to be included in one or more indices. Tables require a primary key. Columns can't have the same name as the table that contains them. Views can be used to present data that requires multiple tables to be logically joined. Database size is limited by SQL Azure, which currently supports a maximum of 150 GB.

End users can query the information as if they have access to the SQL Azure database. A query consists of any result set up to 100 rows. A result of more than 100 rows is split into pages, and each page counts as a query for billing purposes. Multiple clones of the database can be created and the Marketplace will load balance among the clones. The IP address range 131.107.* must be whitelisted and “Allow Microsoft Services” set to true in the SQL Azure portal.
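The paging rule translates into simple arithmetic. The Python sketch below counts how many billable queries a result set produces under the 100-row page size described above; treating an empty result as one query is an assumption, since the article does not say how empty results are billed.

```python
PAGE_SIZE = 100  # rows per page, as described in the article


def billable_queries(row_count):
    """Return the number of billable queries for a result of row_count rows."""
    if row_count < 0:
        raise ValueError("row_count must not be negative")
    # Ceiling division; an empty result is assumed to count as one query.
    pages = -(-row_count // PAGE_SIZE)
    return max(1, pages)


if __name__ == "__main__":
    for rows in (0, 100, 101, 250):
        print(rows, "rows ->", billable_queries(rows), "queries")
```

A consumer who pulls 250 rows therefore pays for three queries, which is worth keeping in mind when deciding how narrowly to filter a request.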

Publishing data via an OData-based Web service in ATOM format is the native format provided by the DataMarket. Content providers with an OData source pass their service through the DataMarket and the root of their service domain is replaced with the DataMarket service root to ensure proper billing. Otherwise, the data doesn't need to be present in Windows Azure. You can choose to expose service operations that limit the end user to specific information, or expose entity sets which allow flexible queries. The provider determines the number of rows to be returned and when paging is required.

Publishing data via a REST-based Web service is supported if the DataMarket service maps the REST service to an ATOM-based OData service as described above. To do this, all parameters need to be exposed as HTTP parameters. The Web service response must be in an XML format and have only one repeating element that contains the response set. Flexible query is not supported with a REST-based Web service.
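One way to sanity-check a REST response against the single-repeating-element rule before submitting it is sketched below in Python. The interpretation used here (exactly one element name occurs more than once under the same parent) is an assumption about what the rule means, and the sample document with its Result and ZipCode element names is invented for the illustration.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def repeating_elements(xml_text):
    """Return the set of tag names that occur more than once under one parent."""
    root = ET.fromstring(xml_text)
    repeated = set()
    for parent in root.iter():  # the root itself and every descendant
        counts = Counter(child.tag for child in parent)
        repeated.update(tag for tag, n in counts.items() if n > 1)
    return repeated


def has_single_repeating_element(xml_text):
    """True when exactly one element name repeats anywhere in the document."""
    return len(repeating_elements(xml_text)) == 1


if __name__ == "__main__":
    # Invented sample response; placeholder values only.
    sample = (
        "<Result>"
        "<ZipCode><Zip>00001</Zip></ZipCode>"
        "<ZipCode><Zip>00002</Zip></ZipCode>"
        "</Result>"
    )
    print(repeating_elements(sample), has_single_repeating_element(sample))
```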

Publishing data via a SOAP-based Web service is similar to a REST-based Web service. The only difference: DataMarket service call parameters can be provided in the XML body that's posted to the provider’s service.

For REST or SOAP Web services, a sample result set must be provided with specifications on how to map the data values to the strongly typed values returned by OData. A mapping of all service error codes to HTTP status codes is also required.

OData Service Sample

Providing data to the Windows Azure Marketplace via an OData service is straightforward. Create an OData service in Visual Studio and then make it available on an HTTPS Web site with basic username / password authentication required.

To demonstrate creating a simple OData service, I have created a sample SQL Server 2008 Express database with a single table containing Michigan zip code information as shown in Figure 1. The code download for this article includes a backup of the database and the scripts needed to create the database and populate it with data. Use Microsoft Visual Web Developer 2010 Express or above to create the application. You may also need to install Entity Framework 4.1 or a later version.

Figure 1. Sample data for Windows Azure Marketplace OData provider

Create a Visual Studio ASP.NET Empty Web Application project. Add an ADO.NET Entity Data Model item to the project and follow the wizard prompts to add the USZipCodes table from the USZipInfo database. Next add a WCF Data Service item to the project. Update USZipCodes.svc.vb to initialize your Entity Data Model as shown below. It is acceptable to set the EntitySetRights to AllRead because security will be provided by Internet Information Services and not the application. I recommend that you set your entity PageSize to 100 rows to match the standard return set size in the Windows Azure Marketplace. …

Full disclosure: I’m a contributing editor for Visual Studio Magazine.

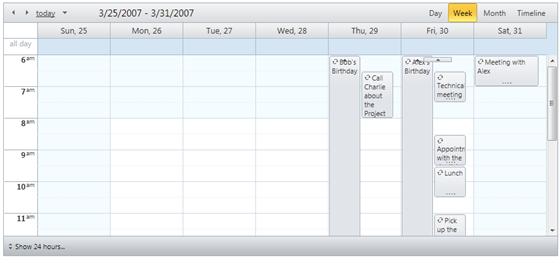

Telerik announced OData Binding for their Scheduler component on 2/15/2012:

RadScheduler supports binding to an OData feed from Q1 2012 onward!

In contrast to the standard WebService binding, the OData binding does not require serialized AppointmentData objects. Instead, the developer is free to expose whatever objects they want as a JSON feed, as long as the data is meaningful to the Scheduler. One can configure the DataKeys in the OData configuration section.

The OData binding configuration section allows you to set the following DataKeys to map the exposed feed to the binding fields:

- DataStartField

- DataEndField

- DataKeyField

- DataDescriptionField

- DataSubjectField

It is necessary to set the InitialContainerName property to the OData Entity that contains your appointment data.

Finally, it is possible to choose whether to request the service via a normal XHR call or using JSONP. Please keep in mind that using JSONP is required if one is binding to a web service hosted in a different domain. This requirement is due to the cross-domain (same-origin policy) restrictions that all browsers conform to.
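Whether a feed counts as being hosted in a different domain comes down to comparing origins, that is, scheme, host and port. The following Python sketch shows that comparison; it is not part of the RadScheduler API, and the URLs are placeholders.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url):
    """Return the (scheme, host, port) triple that defines a URL's origin."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    port = parts.port or DEFAULT_PORTS.get(scheme)
    return (scheme, (parts.hostname or "").lower(), port)


def needs_jsonp(page_url, service_url):
    """True when the service is cross-origin, so a plain XHR call would be blocked."""
    return origin(page_url) != origin(service_url)


if __name__ == "__main__":
    print(needs_jsonp("http://example.com/page", "http://example.com/odata"))
    print(needs_jsonp("http://example.com/page", "http://services.example.org/odata"))
```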

Grouping by resources is not yet possible in the beta release; however, we will add this functionality for the final Q1 release.

<Return to section navigation list>

Windows Azure Access Control, Service Bus and Workflow

Thirumalai Muniswamy continued his Implementing Azure AppFabric Service Bus - Part 5 series on 2/14/2012:

This is a continuation post of the Implementing Azure AppFabric Service Bus series. Look at the end of this post for other parts.

Until now we have seen how to develop a WCF Service application, expose it to the public using Azure Service Bus, and configure Auto Start capability for the service so that it starts automatically when the IIS server restarts.

In this post, I am going to provide a sample client application which consumes the CustomerService built using the WCF Application and exposed in previous posts. This application is a normal ASP.NET application which has the implementation to consume the service exposed on the Service Bus.

Before going to the actual implementation, below is the use case of this example.

- A screen is required to show the list of customers in a GridView.

- The screen should also contain an entry form for adding/modifying customer records.

- The user can add a new customer by entering the customer information in the entry form and pressing the Save button.

- The user can select an existing customer from the GridView using the Select column hyperlink.

- On selection of a record, the customer's information must be populated in the entry form for updating.

- The user can update the customer information and Save again.

- The user can delete a particular customer using the Delete hyperlink in the Delete column of the GridView.

These are the functionalities of the screen. Now let’s start the implementation of the client application for consuming the Service Bus service:

Pre-requisite – As our project is going to be a Windows Azure Project, make sure the latest Windows Azure SDK (v1.6) is installed.

Step 1: Open Visual Studio 2010 and select New Project from the Start Page (or File => New => Project) to create a new Azure Project.

Step 2: Select the preferred language (here C#) and Windows Azure Project (from Cloud) from the Installed Templates. Enter the project name (DotNetTwitterSOPClient) and preferred Location, then press OK to create it.

Step 3: Visual Studio will open the New Windows Azure Project window. Select the ASP.NET Web Role from the .NET Framework 4 roles (left panel) and press the > button to add it to the Windows Azure Solution list. Press OK.

Step 4: Run the project once and verify that it works.

Step 5: Before going for functional implementation, let us first design the UI. So open the Default.aspx script source and add the below UI script in between the BodyContent.

<div>
  <table>
    <tr>
      <td>Customer Id</td>
      <td><asp:TextBox ID="txtCustomerId" runat="server" Width="120px"></asp:TextBox></td>
      <td style="width:100px"></td>
      <td>Company Name</td>
      <td><asp:TextBox ID="txtCompanyName" runat="server" Width="250px"></asp:TextBox></td>
    </tr>
    <tr>
      <td>Contact Name</td>
      <td><asp:TextBox ID="txtContactName" runat="server" Width="300px"></asp:TextBox></td>
      <td></td>
      <td>Contact Title</td>
      <td><asp:TextBox ID="txtContactTitle" runat="server" Width="250px"></asp:TextBox></td>
    </tr>
    <tr>
      <td rowspan="3">Address</td>
      <td rowspan="3"><asp:TextBox ID="txtAddress" runat="server" Width="300px" TextMode="MultiLine" Height="70px"></asp:TextBox></td>
      <td rowspan="3"></td>
      <td>City</td>
      <td><asp:TextBox ID="txtCity" runat="server" Width="200px"></asp:TextBox></td>
    </tr>
    <tr>
      <td>Region</td>
      <td><asp:TextBox ID="txtRegion" runat="server" Width="200px"></asp:TextBox></td>
    </tr>
    <tr>
      <td>Country</td>
      <td><asp:TextBox ID="txtCountry" runat="server" Width="200px"></asp:TextBox></td>
    </tr>
    <tr>
      <td>Phone No</td>
      <td><asp:TextBox ID="txtPhoneNo" runat="server" Width="200px"></asp:TextBox></td>
      <td></td>
      <td>Fax No</td>
      <td><asp:TextBox ID="txtFax" runat="server" Width="200px"></asp:TextBox></td>
    </tr>
    <tr>
      <td colspan="5" style="text-align:center">
        <asp:Button ID="btnSave" runat="server" Width="100px" Text="Save" onclick="btnSave_Click" />
        <asp:Button ID="btnClear" runat="server" Width="100px" Text="Clear" onclick="btnClear_Click" />
      </td>
    </tr>
    <tr>
      <td colspan="5">
        <asp:Label runat="server" ID="lblMessage" Text="" CssClass="Message"></asp:Label>
        <asp:HiddenField ID="hndCustomerId" runat="server" Value="" />
      </td>
    </tr>
  </table>
</div>
<div>
  <asp:GridView ID="grdViewCustomers" runat="server" AllowPaging="True" AutoGenerateColumns="False"
      TabIndex="1" DataKeyNames="CustomerID" Width="100%" BackColor="White" CellPadding="3"
      BorderStyle="Solid" BorderWidth="1px" BorderColor="Black" GridLines="Horizontal"
      OnRowDataBound="grdViewCustomers_RowDataBound"
      OnPageIndexChanging="grdViewCustomers_PageIndexChanging"
      OnSelectedIndexChanging="grdViewCustomers_SelectedIndexChanging"
      OnRowDeleting="grdViewCustomers_RowDeleting">
    <Columns>
      <asp:CommandField ShowSelectButton="True" HeaderText="Select" />
      <asp:CommandField ShowDeleteButton="True" HeaderText="Delete" />
      <asp:BoundField DataField="CustomerID" HeaderText="Customer ID" />
      <asp:BoundField DataField="CompanyName" HeaderText="Company Name" />
      <asp:BoundField DataField="ContactName" HeaderText="Contact Name" />
      <asp:BoundField DataField="ContactTitle" HeaderText="Contact Title" />
      <asp:BoundField DataField="Address" HeaderText="Address" />
      <asp:BoundField DataField="City" HeaderText="City" />
      <asp:BoundField DataField="Region" HeaderText="Region" />
    </Columns>
    <RowStyle BackColor="White" ForeColor="#333333" />
    <FooterStyle BackColor="#5D7B9D" Font-Bold="True" ForeColor="White" />
    <PagerStyle BackColor="#284775" ForeColor="White" HorizontalAlign="Right" />
    <SelectedRowStyle BackColor="#A5D1DE" Font-Bold="true" ForeColor="#333333" />
    <HeaderStyle BackColor="#5D7B9D" Font-Bold="True" ForeColor="White" />
    <AlternatingRowStyle BackColor="#E2DED6" ForeColor="#284775" />
  </asp:GridView>
</div>

Here the HTML script contains the controls for the entry form and a GridView to show the list of customers.

Step 6: The script file references some events that are required for the screen's functionality. For now, add empty event handlers in the code behind so that the screen works without errors.

public partial class _Default : System.Web.UI.Page
{
    protected void Page_Load(object sender, EventArgs e) { }

    protected void grdViewCustomers_RowDataBound(object sender, GridViewRowEventArgs e) { }

    protected void grdViewCustomers_PageIndexChanging(object sender, GridViewPageEventArgs e) { }

    protected void btnClear_Click(object sender, EventArgs e) { }

    protected void grdViewCustomers_SelectedIndexChanging(object sender, GridViewSelectEventArgs e) { }

    protected void grdViewCustomers_RowDeleting(object sender, GridViewDeleteEventArgs e) { }

    protected void btnSave_Click(object sender, EventArgs e) { }
}

Now we are ready for implementation with Service Bus. You can run the project once and verify the screen works fine.

Step 7: We need the Customer entity class that is defined in the WCF Service and exposed on the Service Bus in order to deserialize the messages that come from the service. To get the customer service model code, we have to use the svcutil utility.

So, open the Visual Studio Command Prompt (2010), switch to the required directory and run the command below.

svcutil /language:cs /out:proxy.cs /config:app.config http://localhost/DotNetTwitterSOPService/CustomerService.svc

Note: This command must be executed on the machine where the WCF Service runs, because we are generating the code from the locally published URL. The URL is specified in the Web.Config of the WCF Service project, so you can open the project to find it (or run the WCF project and read it from the address bar).

Step 8: Add the Customer.cs file created using the above command to the project (or create a new Customer.cs file in the project and copy and paste the code from the generated file).

Note:

- Make sure the namespace is changed to the Web Role namespace (WebRole).

- You need to add the System.Runtime.Serialization assembly as a reference to the project.

- Delete all code except the Customer class, such as the ICustomerService and ICustomerServiceChannel interfaces and the CustomerServiceClient class.

Step 9: As we are going to consume CustomerService from the Service Bus, we need the ICustomerService interface to talk to the service. So, add a new class file to the Web Role (using right click -> Add -> Class) and name it ICustomerService.cs.

Add the following code in ICustomerService.cs:

[ServiceContract]
public interface ICustomerService
{
    [OperationContract]
    IList<Customer> GetAll();

    [OperationContract]
    Customer Get(string customerID);

    [OperationContract]
    int Create(Customer customer);

    [OperationContract]
    int Update(Customer customer);

    [OperationContract]
    int Delete(string customerID);

    [OperationContract]
    string GetValue(string value);
}

This is the same interface defined in the WCF Service in Part 3.

Note: You need to add the System.ServiceModel assembly as a reference in the project and add the System.ServiceModel namespace in the namespace section.

Step 10: To connect to the service exposed on the Service Bus, we need the endpoint URL (Service URI). But if we are consuming several services in a particular project, the Service Bus URI will differ for each service. So, we can define some important parameters in the Web.Config and generate the endpoint URL in code when required. I have defined six [five?] important parameters in the appSettings node of Web.Config. Below is the configuration script.

- ServiceNamespace – This is the namespace created in the Management portal under the Service Bus, Access Control & Caching section. This namespace is configured in the WCF Service project and exposed to the Service Bus.

- IssuerName, IssuerSecret – These also come from the Management portal and are used for security purposes.

- CustomerServiceEndPointNameTcp – Defines the service path for the TCP protocol (sb:// endpoint).

- CustomerServiceEndPointNameHttp – Defines the service path for the HTTPS protocol (https:// endpoint).

- ServiceConsumingProtocol – Defines which protocol the connection will use, so we can switch the consuming protocol based on the requirement and network boundaries.

The CustomerServiceEndPointNameTcp and CustomerServiceEndPointNameHttp settings each define a part of the endpoint URL. For example, take the two endpoint URLs exposed to the public for CustomerService:

https://ComDotNetTwitterSOP.servicebus.windows.net/Http/CustomerService/Test/V0100/

sb://ComDotNetTwitterSOP.servicebus.windows.net/NetTcp/CustomerService/Test/V0100/

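The portion after the host name (Http/CustomerService/Test/V0100/ and NetTcp/CustomerService/Test/V0100/, shown in blue in the original post) defines the service path for each endpoint URL.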
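How the settings combine into an endpoint URL can be sketched as follows. This is a Python illustration rather than the article's C# helper; the setting names come from the list above, while the values "Tcp" and "Http" for ServiceConsumingProtocol are assumptions, since the original configuration script is not reproduced here.

```python
# Hypothetical appSettings values; names follow the article, and the
# protocol values ("Tcp" / "Http") are assumed for this sketch.
SETTINGS = {
    "ServiceNamespace": "ComDotNetTwitterSOP",
    "CustomerServiceEndPointNameTcp": "NetTcp/CustomerService/Test/V0100/",
    "CustomerServiceEndPointNameHttp": "Http/CustomerService/Test/V0100/",
    "ServiceConsumingProtocol": "Tcp",
}

# URI scheme and matching service-path setting for each protocol.
PROTOCOLS = {
    "tcp": ("sb", "CustomerServiceEndPointNameTcp"),
    "http": ("https", "CustomerServiceEndPointNameHttp"),
}


def customer_service_uri(settings):
    """Compose the Service Bus endpoint URL from the configured parts."""
    scheme, path_key = PROTOCOLS[settings["ServiceConsumingProtocol"].lower()]
    path = settings[path_key].strip("/")
    return f"{scheme}://{settings['ServiceNamespace']}.servicebus.windows.net/{path}/"


if __name__ == "__main__":
    print(customer_service_uri(SETTINGS))
    print(customer_service_uri({**SETTINGS, "ServiceConsumingProtocol": "Http"}))
```

Keeping the namespace, path and protocol as separate settings means switching from TCP to HTTPS (for example, behind a restrictive firewall) only requires changing one value.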

Step 11: Next, I am going to define a helper class (CustomerHelper) for doing operations with CustomerService deployed on the Service Bus.

The CustomerHelper class has various methods for doing various operations. The list of methods and its usage follows.Step 12: Now we have completed the base work and can start integrating the code with UI. So let’s first start by binding the list of customer records to the GridView.

- Defining a private variable to hold the channel object of ICustomerService at the scope of class.

    private ICustomerService channel = null;

- OpenChannel() – Opens the channel used for all communication with the service, so it must be called before any method is invoked on the service.

- CloseChannel() – Closes the channel once the call to the service has completed.

    /// <summary>
    /// Method to close the Client Channel
    /// </summary>
    public void CloseChannel()
    {
        ((ICommunicationObject)channel).Close();
    }

- GetAll() – Gets all the customers from the database.

    /// <summary>
    /// Method to get all the Customers
    /// </summary>
    /// <returns>List of Customers</returns>
    public IList<Customer> GetAll()
    {
        OpenChannel();
        IList<Customer> customers = channel.GetAll();
        CloseChannel();
        return customers;
    }

As noted earlier, the channel must be open before GetAll() is called on the service, so OpenChannel() opens the channel before the call and CloseChannel() closes it afterwards.

- Get(CustomerID) – Gets the details of a particular customer.

    /// <summary>
    /// Method to get a particular Customer based on Customer Id
    /// </summary>
    /// <param name="customerID">Customer Id</param>
    /// <returns>Customer Object</returns>
    public Customer Get(string customerID)
    {
        OpenChannel();
        Customer customer = channel.Get(customerID);
        CloseChannel();
        return customer;
    }

- Create(Customer) – Creates a new customer record in the database.

    /// <summary>
    /// Method to create a new Customer record in the system
    /// </summary>
    /// <param name="customer">Customer object</param>
    /// <returns>Number of rows affected</returns>
    public int Create(Customer customer)
    {
        OpenChannel();
        int intNOfRows = channel.Create(customer);
        CloseChannel();
        return intNOfRows;
    }

- Update(Customer) – Updates an existing customer in the database.

    /// <summary>
    /// Method to update an existing Customer in the system
    /// </summary>
    /// <param name="customer">Customer object</param>
    /// <returns>Number of rows affected</returns>
    public int Update(Customer customer)
    {
        OpenChannel();
        int intNOfRows = channel.Update(customer);
        CloseChannel();
        return intNOfRows;
    }

- Delete(CustomerID) – Deletes an existing customer from the database.

    /// <summary>
    /// Method to delete an existing Customer from the system
    /// </summary>
    /// <param name="customerID">Customer Id</param>
    /// <returns>Number of rows affected</returns>
    public int Delete(string customerID)
    {
        OpenChannel();
        int intNOfRows = channel.Delete(customerID);
        CloseChannel();
        return intNOfRows;
    }
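The helper methods above only reach CloseChannel() when the service call succeeds, and Close() can itself throw if the channel has faulted. As a minimal sketch (the class name ChannelCloser is hypothetical and not part of the original sample), a more defensive close might look like this:

```csharp
using System;
using System.ServiceModel;

// Hypothetical helper, not part of the original sample.
public static class ChannelCloser
{
    // Closes a WCF channel, falling back to Abort() when Close() cannot complete.
    public static void CloseOrAbort(ICommunicationObject channel)
    {
        if (channel == null)
        {
            return;
        }

        try
        {
            if (channel.State == CommunicationState.Faulted)
            {
                // A faulted channel cannot be closed gracefully.
                channel.Abort();
            }
            else
            {
                channel.Close();
            }
        }
        catch (CommunicationException)
        {
            channel.Abort();
        }
        catch (TimeoutException)
        {
            channel.Abort();
        }
    }
}
```

One way to use it would be to wrap each service call in try/finally and call ChannelCloser.CloseOrAbort((ICommunicationObject)channel) in the finally block, so the channel is released even when the call throws.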

Add the code below to the Page Load event, along with the additional methods, in the code-behind.

    protected void Page_Load(object sender, EventArgs e)
    {
        if (!Page.IsPostBack)
        {
            ClearScreen();
            BindGrid();
        }
    }

    /// <summary>
    /// Clearing the screen
    /// </summary>
    private void ClearScreen()
    {
        txtCustomerId.Text = "";
        txtCompanyName.Text = "";
        txtContactName.Text = "";
        txtContactTitle.Text = "";
        txtAddress.Text = "";
        txtCity.Text = "";
        txtRegion.Text = "";
        txtCountry.Text = "";
        txtPhoneNo.Text = "";
        txtFax.Text = "";
        lblMessage.Text = "";
        hndCustomerId.Value = "";
    }

    /// <summary>
    /// Method which binds the data to the Grid
    /// </summary>
    private void BindGrid()
    {
        try
        {
            CustomerHelper helper = new CustomerHelper();
            grdViewCustomers.DataSource = helper.GetAll();
            grdViewCustomers.DataBind();
        }
        catch (Exception)
        {
            // Any failure is swallowed here; the grid is simply not rebound.
        }
    }

Step 13: Add the code below to get the details of a customer record when the Select hyperlink on a row is clicked.

    protected void grdViewCustomers_SelectedIndexChanging(object sender, GridViewSelectEventArgs e)
    {
        hndCustomerId.Value = grdViewCustomers.Rows[e.NewSelectedIndex].Cells[2].Text.Trim();
        if (hndCustomerId.Value.Length > 0)
        {
            CustomerHelper helper = new CustomerHelper();
            Customer customer = helper.Get(hndCustomerId.Value);
            txtCustomerId.Text = customer.CustomerID;
            txtCompanyName.Text = customer.CompanyName;
            txtContactName.Text = customer.ContactName;
            txtContactTitle.Text = customer.ContactTitle;
            txtAddress.Text = customer.Address;
            txtCity.Text = customer.City;
            txtRegion.Text = customer.Region;
            txtCountry.Text = customer.Country;
            txtPhoneNo.Text = customer.Phone;
            txtFax.Text = customer.Fax;
        }
    }

Step 14: Add the code below to delete a customer record when the Delete hyperlink on a row is clicked.

    protected void grdViewCustomers_RowDataBound(object sender, GridViewRowEventArgs e)
    {
        if (e.Row.RowType == DataControlRowType.DataRow)
        {
            // Ask for confirmation before the delete is posted back.
            e.Row.Cells[1].Attributes.Add("onclick", "return confirm('Are you sure you want to delete?')");
        }
    }

    protected void grdViewCustomers_RowDeleting(object sender, GridViewDeleteEventArgs e)
    {
        try
        {
            string strCustomerID = grdViewCustomers.Rows[e.RowIndex].Cells[2].Text.Trim();
            if (strCustomerID.Length > 0)
            {
                CustomerHelper helper = new CustomerHelper();
                helper.Delete(strCustomerID);
                ClearScreen();
                BindGrid();
                lblMessage.Text = "Record deleted successfully";
            }
        }
        catch (Exception)
        {
            lblMessage.Text = "An error occurred while processing the request. Please verify the code!";
        }
    }

Step 15: Add the code below to save a customer record when the Save button is clicked.

    protected void btnSave_Click(object sender, EventArgs e)
    {
        try
        {
            Customer customer = new Customer();
            customer.CustomerID = txtCustomerId.Text;
            customer.CompanyName = txtCompanyName.Text;
            customer.ContactName = txtContactName.Text;
            customer.ContactTitle = txtContactTitle.Text;
            customer.Address = txtAddress.Text;
            customer.City = txtCity.Text;
            customer.Region = txtRegion.Text;
            customer.Country = txtCountry.Text;
            customer.Phone = txtPhoneNo.Text;
            customer.Fax = txtFax.Text;

            CustomerHelper helper = new CustomerHelper();
            // A populated hidden field means an existing record was selected.
            if (hndCustomerId.Value.Trim().Length > 0)
                helper.Update(customer);
            else
                helper.Create(customer);

            ClearScreen();
            BindGrid();
            lblMessage.Text = "Record saved successfully";
        }
        catch (Exception)
        {
            lblMessage.Text = "An error occurred while processing the request. Please verify the code!";
        }
    }

Step 16: Add the code below to handle a change of the grid's page index.

    protected void grdViewCustomers_PageIndexChanging(object sender, GridViewPageEventArgs e)
    {
        grdViewCustomers.PageIndex = e.NewPageIndex;
        grdViewCustomers.SelectedIndex = -1;
        BindGrid();
    }

Now you can run the project and verify the screen output. Below are the screen output[s] from my client.

Note:

Download the working source code in C# here.

- In some enterprises the firewall blocks direct communication with the public internet, and my own enterprise network does not allow it either. I get the error below when I consume the service from the app.

The token provider was unable to provide a security token while accessing 'https://comdotnettwittersop-sb.accesscontrol.windows.net/WRAPv0.9/'. Token provider returned message: 'Unable to connect to the remote server'.

In that case, get the proxy address, configure it in the Web.config settings as below, and try again.
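A proxy can also be applied in code rather than in Web.config. The sketch below is a programmatic illustration only and is not the author's configuration: the class name ProxySetup and the proxy address are placeholders, and it assumes the Service Bus token provider issues its requests through the default .NET web proxy.

```csharp
using System;
using System.Net;

// Hypothetical helper; the proxy address passed in is a placeholder.
public static class ProxySetup
{
    // Routes outgoing HTTP(S) requests through the given proxy.
    public static void UseCorporateProxy(string proxyAddress)
    {
        var proxy = new WebProxy(proxyAddress)
        {
            // Authenticate to the proxy with the current Windows credentials.
            UseDefaultCredentials = true
        };
        WebRequest.DefaultWebProxy = proxy;
    }
}
```

It would typically be called once at application start, for example ProxySetup.UseCorporateProxy("http://proxy.example.com:8080").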

- Deleting a customer may not work if the record is referenced in other tables.

The other links on Implementing Azure AppFabric Service Bus:

- Implementing Azure AppFabric Service Bus - Part 1

- Implementing Azure AppFabric Service Bus - Part 2

- Implementing Azure AppFabric Service Bus - Part 3

- Implementing Azure AppFabric Service Bus - Part 4

- Implementing Azure AppFabric Service Bus - Part 5

• Maarten Balliauw (@maartenballiauw) reported the availability of his Slides for TechDays Belgium 2012: SignalR presentation on 2/16/2012:

It was the last session on the last day of TechDays 2012 so I was expecting almost nobody to show up. Still, a packed room came to have a look at how to make the web realtime using SignalR. Thanks for joining and for being very cooperative during the demos!

As promised, here are the slides: SignalR. Code, not toothpaste - TechDays Belgium 2012.

You can also find the demo code here: SignalR. Code, not toothpaste - TechDays Belgium 2012.zip (2.74 mb)

PS: The book on NuGet (Pro NuGet) which I mentioned can be (pre)ordered on Amazon.

Clemens Vasters (@clemensv) described SignalR powered by Service Bus in a 2/13/2012 post:

Our friends over in the ASP.NET team are working on a very nice, lightweight web-browser eventing technology called SignalR. SignalR allows server-pushed events into the browser with a variety of transport options and a very simple programming model. You can get it via NuGet and/or watch it grow and get the source on GitHub. There is also a very active community around SignalR chatting on Jabbr.net, a chat system whose user model is derived from IRC, but that runs – surprise – on top of SignalR.

For a primer, check out the piece that Scott Hanselman wrote about SignalR a while back.

At the core, SignalR is a lightweight message bus that allows you to send messages (strings) identified by a key. Ultimately it’s a key/value bus. If you’re interested in messages with one or more particular keys, you walk up and ask for them by putting a (logical) connection into the bus – you create a subscription. And while you are maintaining that logical connection you get a cookie that acts as a cursor into the event stream, keeping track of what you have and have not seen, which is particularly interesting for connectionless transports like long polling.

SignalR implements this simple pub/sub pattern as a framework and that works brilliantly and with great density, meaning that you can pack very many concurrent notification channels on a single box.
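To make the key/value bus and cursor idea concrete, here is a small in-memory sketch. It is purely illustrative and is not SignalR's implementation; the class name KeyedMessageBus and its methods are invented for this example, and the cursor is simply a position in an append-only log.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Illustrative in-memory bus; not SignalR's actual implementation.
public sealed class KeyedMessageBus
{
    private readonly List<KeyValuePair<string, string>> log =
        new List<KeyValuePair<string, string>>();
    private readonly object gate = new object();

    // Appends a message under a key and returns the stream length after the append.
    public long Publish(string key, string value)
    {
        lock (gate)
        {
            log.Add(new KeyValuePair<string, string>(key, value));
            return log.Count;
        }
    }

    // Returns the messages for the given keys that were published after the cursor,
    // together with a new cursor to present on the next poll.
    public Tuple<long, IList<string>> GetSince(long cursor, params string[] keys)
    {
        lock (gate)
        {
            var wanted = new HashSet<string>(keys);
            IList<string> messages = log
                .Skip((int)cursor)
                .Where(m => wanted.Contains(m.Key))
                .Select(m => m.Value)
                .ToList();
            return Tuple.Create((long)log.Count, messages);
        }
    }
}
```

A long-polling client would start with a cursor of 0, call GetSince with the keys it subscribes to, and send back the returned cursor on its next request so that it only receives messages it has not yet seen.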

What SignalR, out-of-the-box, doesn’t (or didn’t) provide yet is a way to stretch its message bus across multiple nodes for even higher scale and for failover safety.

That’s where Service Bus comes in.

Last week, I built a Windows Azure Service Bus backplane for SignalR that allows deploying SignalR solutions to multiple nodes with message distribution across those nodes and ensuring proper ordering on a per-sender basis as well as node-to-node correctness and consistency for the cursor cookies. That code is Apache licensed and now available on github.

You can use this backplane irrespective of where you host solutions that use SignalR, as long as your backend host has access to a Service Bus namespace. That’s obviously best in one of the Windows Azure datacenters, but will work just as well anywhere else, albeit with a few msec more latency.

If you want to try it out, here are the steps (beyond getting the code):

- Make a small SignalR app or take one from the SignalR samples (caveat below)

- Make a Windows Azure account and a Service Bus namespace. For that, follow the same steps as outlined in the Multi-Tier apps tutorial on MSDN.

- Compile the extension project and add it to your SignalR solution

- At initialization time (global.asax, startup, etc.), reference the SignalR.WindowsAzureServiceBus namespace with a using directive and then add the following initialization code: AspNetHost.DependencyResolver.UseWindowsAzureServiceBus("{namespace}", "{account}", "{key}", "{appname}", 2);

- Compile, run

In the above example, {namespace} is the Service Bus namespace you created following the tutorial steps, {account} is likely “owner” (to boot) and {key} is the default key you copied from the portal. {appname} is some string, without spaces, that disambiguates your app from other apps on the same namespace and 2 stands for splitting the Service Bus traffic across 2 topics.
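As a rough sketch, the initialization call could be placed in Application_Start in Global.asax.cs. The SignalR.Hosting.AspNet namespace for AspNetHost is an assumption about the SignalR release in use at the time, and the four string values are the placeholders described above, to be replaced with your own settings.

```csharp
using System;
using System.Web;
using SignalR.Hosting.AspNet;          // assumed namespace of AspNetHost in this SignalR release
using SignalR.WindowsAzureServiceBus;  // backplane extension namespace named in the post

public class Global : HttpApplication
{
    protected void Application_Start(object sender, EventArgs e)
    {
        // Placeholders: Service Bus namespace, issuer account, issuer key, app name.
        // The final argument splits the Service Bus traffic across 2 topics.
        AspNetHost.DependencyResolver.UseWindowsAzureServiceBus(
            "{namespace}", "{account}", "{key}", "{appname}", 2);
    }
}
```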

Most of the SignalR samples don’t quite work yet in a scale-out mode since they hold local, per-node state. That’s getting fixed.

If you want to see SignalR and Service Bus in action right this second, you can hop into the Azure chat room on our test deployment of jabbr that runs across 4 nodes.

Steve Peschka began a series with The Azure Custom Claim Provider for SharePoint Project Part 1 on 2/11/2012:

Hi all, it’s been a while since I’ve added new content about SAML claims, so I decided to come back around and write some more about it in a way that links together some of my favorite topics – SharePoint, SAML, custom claims providers, the CASI Kit and Azure. This is the first part in a series in which I will deliver a proof of concept, complete with source code that you can freely use as you wish, that will demonstrate building a custom claims provider for SharePoint, that uses Windows Azure as the data source. At a high level the implementation will look something like this:

- Users will log into the site using SAML federation with ACS. On the ACS side I’ll configure a few different identity providers – probably Google, Yahoo and Facebook. So users will sign in using their Google email address for example, and then once authenticated will be redirected into the site.

- I’ll use Azure queues to route claim information about users and populate Azure table storage

- I’ll have a WCF application that I use to front-end requests for data in Azure table storage, as well as to drop off new items in the queue. We’ll create a trust between the SharePoint site and this WCF application to control who gets in and what they can see and do.

- On the SharePoint side, I’ll create a custom claims provider. It will get the list of claim types I support, as well as do the people picker searching and name resolution. Under the covers it will use the CASI Kit to communicate with Windows Azure.

When we’re done we’ll have a fully end to end SharePoint-to-Cloud integrated environment. Hope you enjoy the results. Look for Part 2 next, where I’ll describe building out the Azure components.

Francois Lascelles described OAuth Token Management in a 2/10/2012 post (missed when published):

Tokens are at the center of API access control in the Enterprise. Token management, the process through which the lifecycle of these tokens is governed, emerges as an important aspect of Enterprise API Management.

OAuth access tokens, for example, can have a lot of session information associated to them:

- scope;

- client id;

- subscriber id;

- grant type;

- associated refresh token;

- a SAML assertion or other token the OAuth token was mapped from;

- how often it’s been used, from where.

While some of this information is created during OAuth handshakes, some of it continues to evolve throughout the lifespan of the token. Token management is used during handshakes to capture all relevant information pertaining to granting access to an API and makes this information available to other relevant API management components at runtime.

During runtime API access, applications present OAuth access tokens issued during a handshake. The resource server component of your API management infrastructure, the gateway controlling access to your APIs, consults the Token management system to assess whether or not the token is still valid and to retrieve the information associated with it, which is essential to deciding whether or not access should be granted. A valid token in itself is not sufficient: does the scope associated with it grant access to the particular API being invoked? Does the identity (sometimes identities) associated with it also grant access to the particular resource requested? The Token management system also updates the runtime token usage for later reporting and monitoring purposes.
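The runtime check described above can be sketched as a lookup followed by validity and scope tests. This is an illustrative sketch only: the TokenRecord and TokenStore types, their members, and the in-memory dictionary are assumptions made for the example, not part of any particular API management product.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical record of the session information kept for an access token.
public sealed class TokenRecord
{
    public string ClientId { get; set; }
    public string SubscriberId { get; set; }
    public string GrantType { get; set; }
    public HashSet<string> Scopes { get; set; }
    public DateTime ExpiresUtc { get; set; }
    public bool Revoked { get; set; }
    public int UseCount { get; set; }
}

// Hypothetical in-memory token management store.
public sealed class TokenStore
{
    private readonly Dictionary<string, TokenRecord> tokens =
        new Dictionary<string, TokenRecord>();

    // Captures the information gathered during the OAuth handshake.
    public void Issue(string accessToken, TokenRecord record)
    {
        tokens[accessToken] = record;
    }

    // Returns true only when the token is known, still live, and carries the
    // scope required by the API being invoked; records the usage on success.
    public bool Authorize(string accessToken, string requiredScope, DateTime nowUtc)
    {
        TokenRecord record;
        if (!tokens.TryGetValue(accessToken, out record))
        {
            return false;
        }
        if (record.Revoked || nowUtc >= record.ExpiresUtc)
        {
            return false;
        }
        if (record.Scopes == null || !record.Scopes.Contains(requiredScope))
        {
            return false;
        }
        record.UseCount++;
        return true;
    }
}
```

A gateway would call Authorize on each incoming request; the UseCount field stands in for the richer usage statistics (frequency, location) that a real system would feed to reporting and monitoring.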

The ability to consult live tokens is important not only to API providers but also to owners of applications to which they are assigned. A Token management system must be able to deliver live token information such as statistics to external systems. An open API based integration is necessary for maximum flexibility. For example, an application developer may access this information through an API Developer Portal whereas an API publisher may get this information through a BI system or ops type console. Feeding such information into a BI system also opens the possibility of detecting potential threats from unusual token usage (frequency, location-based, etc). Monitoring and BI around tokens therefore relates to token revocation.