Windows Azure and Cloud Computing Posts for 12/26/2011+

A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue and Hadoop Services

- SQL Azure Database and Reporting

- Marketplace DataMarket, Social Analytics and OData

- Windows Azure Access Control, Service Bus, and Workflow

- Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Services

Himanshu Singh posted Cross-post: CTP of Apache Hadoop-based Services for Windows Azure Now Available on 12/27/2011:

The community technology preview (CTP) of Apache Hadoop-based Services for Windows Azure (or Hadoop on Azure) is now available, according to the blog post, “Availability of Community Technology Preview (CTP) of Hadoop based Service on Windows Azure” on the SQL Server Team blog.

And according to the blog post, “Helping make Hadoop Easier By Going Metro” on the Microsoft SQL Server Development Customer Advisory Team blog, “one of the great things about the new Hadoop on Azure service is that Hadoop is now ‘all Metro’ – that is a Metro UI with a Live Tile implementation.”

“What is even cooler,” the post continues, “is that you can interact with Hadoop, Hive, Pig-Latin, Hadoop JavaScript framework, Windows Azure DataMarket, and Excel using the Hadoop on Windows Azure service.” Check out the Channel 9 video for Hadoop on Windows Azure for more information.

If you want an invite code for the CTP, please fill out the Connect survey.

Click here to read the full blog post about the CTP of Apache Hadoop-based Services for Windows Azure. Click here to read the full blog post to learn more about Hadoop and Metro.

Don’t hold your breath for the invite. I requested one more than two weeks ago and have yet to receive it.


SQL Azure Database and Reporting

No significant articles today.


Marketplace DataMarket, Social Analytics and OData

My (@rogerjenn) Mashup Big Data with Microsoft Codename “Data Explorer” - An Illustrated Tutorial of 12/27/2011 begins:

The dataset for this tutorial is a Windows Azure Marketplace DataMarket OData document from Microsoft’s Social Analytics team. I’ve been working with the API for Microsoft Codename “Social Analytics” since its introduction on 10/25/2011. My Microsoft tests Social Analytics experimental cloud article of 12/1/2011 for SearchCloudComputing.com describes how Social Analytics datasets enable discovery of consumer interest in and sentiment about products (Windows 8) or individuals (Bill Gates).

Prerequisites: You need an invitation to Social Analytics’ private beta, which contains an invitation code (Windows Azure Marketplace DataMarket Account Key) to gain access to the Social Analytics OData Windows 8 dataset used in this tutorial. Apply for it here; select the Windows 8 slice for the VancouverWindows8 dataset. (“Vancouver” was Social Analytics’ earlier codename.) The invitation is required to use the Cloud (hosted in Windows Azure) implementation also. You must have Office 2010 SP1 or 2007 installed on the machine running the Data Explorer software.

Tip: If you have a Social Analytics Account Key, you can open and save the completed Cloud version of this tutorial’s sample app. See step 38 near the end of this post.

…

The following sections describe creating a Microsoft Codename “Data Explorer” desktop mashup that emulates most features of my sample WinForms client app.

Obtaining a Social Analytics Account Key, Downloading the “Data Explorer” Desktop Client and Adding the Windows 8 Dataset

1. When you receive the Social Analytics invitation email, follow its instructions to enable access to the VancouverWindows8 dataset.

2. Log into the Windows Azure Marketplace DataMarket (Marketplace), click the My Account button and the navigation pane’s Account Keys link to open the Account Keys page:

3. Copy the Default Account Key’s Base64-encoded value to a safe place for reuse in step 10.

4. When you receive the Data Explorer invitation email, follow its instructions to activate your account for the hosted Cloud Client.

Note: The Cloud Client lets you publish your mashup to share it with others but isn’t required to complete this tutorial. The only difference between the Cloud and Desktop clients is that you save Snapshots to SQL Azure instead of SQL Server databases in step 32 and have the option to publish (host) them for private or public consumption in step 37 and later.

Tip: You can’t publish a populated snapshot with the Cloud version that was current when this post was written.

5. Visit the Microsoft Codename “Data Explorer” landing page, watch a one-minute video, and read about the product’s features:

6. Click the Download "Data Explorer" Desktop Client link to open the SQL Azure Labs Codename "Data Explorer" Client (Labs Release) page, and download the x86 or x64 version of the DataExplorer.msi file, which installs the Data Explorer workspace as well as an Office plugin that integrates Data Explorer into Excel.

Note: The installer version must correspond to the version of Microsoft Office 2010 SP1 or Office 2007 installed.

7. Run it to install the Desktop client, which adds a Microsoft Codename “Data Explorer” node to your All Programs menu with Microsoft Codename “Data Explorer” Home Page and Microsoft Codename “Data Explorer” choices.

8. Click the Microsoft Codename “Data Explorer” choice

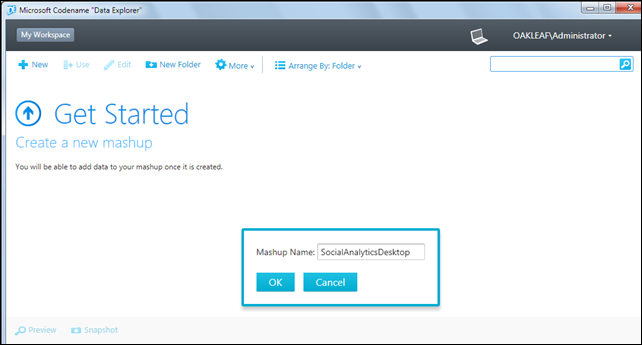

to start the Desktop Client, click New to open a dialog to name your first mashup, and type SocialAnalyticsDesktop or the like:

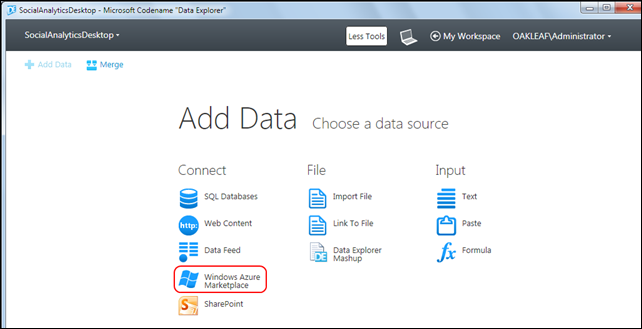

9. Click OK to open the Add Data page:

Tip: Choose Data Explorer Mashup to open a *.import file if you want to start a new mashup from an existing Desktop mashup saved on your machine or accessible on your LAN. The Cloud version disables the Link To File button. …

The tutorial continues with 22 more illustrated steps.
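Data Explorer handles authentication for you once you paste the account key from step 3, but it helps to know what the key is for. A minimal Python sketch, assuming the Marketplace's HTTP Basic authentication scheme in which the account key serves as the password; the helper `datamarket_auth_header`, the user name `accountKey`, and the key value are hypothetical placeholders, and a real request would go to the dataset's service root URL shown on its Marketplace page:

```python
import base64


def datamarket_auth_header(account_key: str, user: str = "accountKey") -> dict:
    """Build an HTTP Basic Authorization header for a DataMarket OData request.

    Assumption: the account key is the Basic-auth password; the user name
    is a hypothetical placeholder.
    """
    credentials = f"{user}:{account_key}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    return {"Authorization": f"Basic {token}"}


# Hypothetical key value; substitute the Default Account Key copied in step 3.
headers = datamarket_auth_header("EXAMPLEKEY")
print(headers["Authorization"])
```

Treat the key like a password: anyone holding it can run queries billed to your Marketplace account.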

My (@rogerjenn) Microsoft Codename “Data Explorer” Cloud Version Fails to Save Snapshots of Codename “Social Analytics” Data of 12/26/2011 begins:

The problem is related to differences in the snapshot database schema between desktop and cloud versions, as shown below.

Update 12/27/2011 1:30 PM PST: See my new Mashup Big Data with Microsoft Codename “Data Explorer” - An Illustrated Tutorial post of 12/27/2011 for detailed instructions for creating and downloading the mashups that underlie this post.

Update 12/26/2011 8:45 AM PST: The “Data Explorer” Team is investigating the problem. See end of post.

Background

Microsoft described Codename “Data Explorer” in its 10/12/2011 product announcement as follows:

“Data Explorer” is a new concept which provides an innovative way to gain new insights from the data you care about. With “Data Explorer” you can discover relevant data sources, enrich your data by combining with other sources, and then publish and share your insights with others.

You can get started with “Data Explorer” by identifying the data you care about from the sources you work with (e.g. Excel spreadsheets, files, SQL Server databases, Windows Azure Marketplace, etc.). [Emphasis added.]

I’ve been working with the API for Microsoft Codename “Social Analytics” since its introduction on 10/25/2011. My Microsoft tests Social Analytics experimental cloud article of 12/1/2011 for SearchCloudComputing.com describes how Social Analytics datasets enable discovery of consumer interest in and sentiment about products (Windows 8) or individuals (Bill Gates).

My downloadable Codename “Social Analytics” WinForms Client Sample App displays individual Tweets, Facebook posts, and occasional Stack Overflow questions in a DataGrid control and summarizes daily counts of items referring to Windows 8 as well as their positive and negative sentiment (tone) in a graph, shown here for 12/25/2011 and the preceding 21 days:
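The daily counts and positive/negative totals in that graph amount to a group-by over item dates. A minimal Python sketch under stated assumptions: the `(date, sentiment)` tuples and the +1/0/-1 sentiment encoding are hypothetical stand-ins rather than the Social Analytics OData schema, and `daily_summary` is not part of the sample app:

```python
from collections import defaultdict
from datetime import date

# Hypothetical item records: (day, sentiment) with +1 positive, -1 negative, 0 neutral.
items = [
    (date(2011, 12, 24), 1),
    (date(2011, 12, 24), -1),
    (date(2011, 12, 24), 0),
    (date(2011, 12, 25), 1),
    (date(2011, 12, 25), 1),
]


def daily_summary(records):
    """Return {day: {"total", "positive", "negative"}} ordered by day."""
    summary = defaultdict(lambda: {"total": 0, "positive": 0, "negative": 0})
    for day, sentiment in records:
        row = summary[day]
        row["total"] += 1
        if sentiment > 0:
            row["positive"] += 1
        elif sentiment < 0:
            row["negative"] += 1
    return dict(sorted(summary.items()))


for day, row in daily_summary(items).items():
    print(day, row)
```

Neutral items count toward the daily total but toward neither sentiment column, which is one reasonable reading of a tone graph; the sample app may treat them differently.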


While testing the capability of Desktop and Cloud implementations of Microsoft Codename “Data Explorer” to emulate the features of my sample client app, I discovered the following:

Saving a Snapshot Containing Codename “Social Analytics” Data from the Desktop Client to a Local SQL Server Instance Works as Expected

…

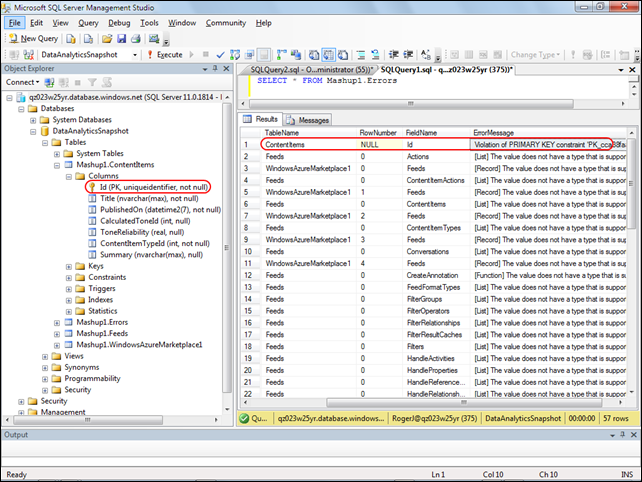

Saving a Snapshot Containing Codename “Social Analytics” Data from the Cloud Implementation to an SQL Azure Instance Fails with Primary Key Constraint Violation

Specifying an SQL Azure server (v11.0.1814) instance to store snapshot data, adding a new database, specifying the schema name, and creating a snapshot results in an empty ContentItems table. The following SSMS screen displays the cause of the problem:

Notice that the cloud implementation doesn’t add the row primary key column. Renaming the Id column to ItemGuid and adding an Index (row number) column named Id doesn’t solve the problem.
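The post doesn't establish why the constraint fails, but the symptom of an empty table fits a familiar pattern: when a bulk insert runs inside one transaction, a single duplicate key aborts it and the rollback discards every row already written. A generic Python sketch of that pattern, with SQLite standing in for SQL Azure and hypothetical rows; it is not Data Explorer's code:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE ContentItems (Id TEXT PRIMARY KEY, Title TEXT)")

# Hypothetical rows; the second and third share the same Id value.
rows = [("a1", "first"), ("b2", "second"), ("b2", "duplicate")]

try:
    with conn:  # commits on success, rolls back if any statement raises
        conn.executemany("INSERT INTO ContentItems VALUES (?, ?)", rows)
except sqlite3.IntegrityError as exc:
    print("insert failed:", exc)

count = conn.execute("SELECT COUNT(*) FROM ContentItems").fetchone()[0]
print("rows after rollback:", count)
```

The first two rows were valid, yet the table ends up empty because they shared a transaction with the violating row.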

The post continues with more details.


Windows Azure Access Control, Service Bus and Workflow

Richard Seroter (@rseroter) described Sending Messages to Azure AppFabric Service Bus Topics From Iron Foundry in a 12/27/2011 post:

I recently took a look at Iron Foundry and liked what I found. Let’s take a bit of a deeper look into how to deploy Iron Foundry .NET solutions that reference additional components. Specifically, I’ll show you how to use the new Windows Azure AppFabric brokered messaging to reliably send messages from Iron Foundry to an on-premises application.

The Azure AppFabric v1.5 release contains useful Service Bus capabilities for durable messaging communication through the use of Queues and Topics. The Service Bus still has the Relay Service which is great for invoking services through a cloud relay, but the asynchronous communication through the Relay Service isn’t durable. Queues and Topics now let you send messages to one or many subscribers and have stronger guarantees of delivery.

An Iron Foundry application is just a standard .NET web application. So, I’ll start with a blank ASP.NET web application and use old-school Web Forms instead of MVC. We need a reference to the Microsoft.ServiceBus.dll that comes with Azure AppFabric v1.5. With that reference added, I added a new Web Form and included the necessary “using” statements.

I then built a very simple UI on the Web Form that takes in a handful of values that will be sent to the on-premises subscriber(s) through the Service Bus. Before creating the code that sends a message to a Topic, I defined an “Order” object that represents the data being sent to the topic. This object sits in a shared assembly used by this application that sends the message, and another application that receives a message.

```csharp
using System.Runtime.Serialization;

[DataContract]
public class Order
{
    [DataMember] public string Id { get; set; }
    [DataMember] public string ProdId { get; set; }
    [DataMember] public string Quantity { get; set; }
    [DataMember] public string Category { get; set; }
    [DataMember] public string CustomerId { get; set; }
}
```

The “submit” button on the Web Form triggers a click event that contains a flurry of activities. At the beginning of that click handler, I defined some variables that will be used throughout.

```csharp
//define my personal namespace
string sbNamespace = "richardseroter";
//issuer name and key
string issuer = "MY ISSUER";
string key = "MY PRIVATE KEY";
//set the name of the Topic to post to
string topicName = "OrderTopic";
//define a variable that holds messages for the user
string outputMessage = "result: ";
```

Next I defined a TokenProvider (to authenticate to my Topic) and a NamespaceManager (which drives most of the activities with the Service Bus).

```csharp
//create namespace manager
//(TokenProvider, ServiceBusEnvironment, and NamespaceManager are in the Microsoft.ServiceBus namespace)
TokenProvider tp = TokenProvider.CreateSharedSecretTokenProvider(issuer, key);
Uri sbUri = ServiceBusEnvironment.CreateServiceUri("sb", sbNamespace, string.Empty);
NamespaceManager nsm = new NamespaceManager(sbUri, tp);
```

Now we’re ready to either create a Topic or reference an existing one. If the Topic does NOT exist, then I went ahead and created it, along with two subscriptions.

```csharp
//create or retrieve topic
bool doesExist = nsm.TopicExists(topicName);
if (doesExist == false)
{
    //topic doesn't exist yet, so create it
    nsm.CreateTopic(topicName);

    //create two subscriptions
    //create subscription for just messages for Electronics
    SqlFilter eFilter = new SqlFilter("ProductCategory = 'Electronics'");
    nsm.CreateSubscription(topicName, "ElecFilter", eFilter);

    //create subscription for just messages for Clothing
    SqlFilter eFilter2 = new SqlFilter("ProductCategory = 'Clothing'");
    nsm.CreateSubscription(topicName, "ClothingFilter", eFilter2);

    outputMessage += "Topic/subscription does not exist and was created; ";
}
```

At this point we either know that a topic exists, or we created one. Next, I created a MessageSender which will actually send a message to the Topic.

```csharp
//create objects needed to send message to topic
MessagingFactory factory = MessagingFactory.Create(sbUri, tp);
MessageSender orderSender = factory.CreateMessageSender(topicName);
```

We’re now ready to create the actual data object that we send to the Topic. Here I referenced the Order object we created earlier. Then I wrapped that Order in the BrokeredMessage object. This object has a property bag that is used for routing. I’ve added a property called “ProductCategory” that our Topic subscription uses to make decisions on whether to deliver the message to the subscriber or not.

```csharp
//create order
Order o = new Order();
o.Id = txtOrderId.Text;
o.ProdId = txtProdId.Text;
o.CustomerId = txtCustomerId.Text;
o.Category = txtCategory.Text;
o.Quantity = txtQuantity.Text;

//create brokered message object
BrokeredMessage msg = new BrokeredMessage(o);
//add properties used for routing
msg.Properties["ProductCategory"] = o.Category;
```

Finally, I send the message and write out the data to the screen for the user.

```csharp
//send it
orderSender.Send(msg);
outputMessage += "Message sent; ";
lblOutput.Text = outputMessage;

//release the sender and the messaging factory once the message is sent
orderSender.Close();
factory.Close();
```

I decided to use the command line (Ruby-based) vmc tool to deploy this app to Iron Foundry. So, I first published my website to a directory on the file system. Then, I manually copied the Microsoft.ServiceBus.dll to the bin directory of the published site. Let’s deploy! After logging into my production Iron Foundry account by targeting the api.gofoundry.net management endpoint, I executed a push command and instantly saw my web application move up to the cloud. It takes about 8 seconds from start to finish.

My site is now online and I can visit it and submit a new order [note that this site isn’t online now, so don’t try and flood my machine with messages!]. When I click the submit button, I can see that a new Topic was created by this application and a message was sent.

Let’s confirm that we really have a new Topic with subscriptions. I can first confirm this through the Windows Azure Management Console.

To see more details, I can use the Service Bus Explorer tool which allows us to browse our Service Bus configuration. When I launch it, I can see that I have a Topic with a pair of subscriptions and even what Filter I applied.

I previously built a WinForm application that pulls data from an Azure AppFabric Service Bus Topic. When I click the “Receive Message” button, I pull a message from the Topic and we can see that it has the same Order ID as the message submitted from the website.

If I submit another message from the website, I see a different result message, because my Topic already exists and I’m simply reusing it rather than creating it again.
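The routing in this walkthrough relies on the broker matching each subscription's SqlFilter against the message's ProductCategory property. A toy in-memory Python model of that fan-out, assuming each filter is a single equality test; the `ToyTopic` class is hypothetical and is not how the Service Bus evaluates SQL filters:

```python
class ToyTopic:
    """In-memory stand-in for a topic with property-filtered subscriptions."""

    def __init__(self):
        # subscription name -> (property name, required value, delivered messages)
        self.subscriptions = {}

    def create_subscription(self, name, prop, value):
        self.subscriptions[name] = (prop, value, [])

    def send(self, body, properties):
        """Copy the message to every subscription whose filter matches."""
        delivered_to = []
        for name, (prop, value, inbox) in self.subscriptions.items():
            if properties.get(prop) == value:
                inbox.append(body)
                delivered_to.append(name)
        return delivered_to


topic = ToyTopic()
topic.create_subscription("ElecFilter", "ProductCategory", "Electronics")
topic.create_subscription("ClothingFilter", "ProductCategory", "Clothing")

print(topic.send({"Id": "100"}, {"ProductCategory": "Electronics"}))  # ['ElecFilter']
print(topic.send({"Id": "101"}, {"ProductCategory": "Toys"}))  # []
```

In this toy, a message whose category matches no filter simply isn't delivered to any subscription, and each matching subscriber gets its own copy.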

Summary

So what did we see here? First, I proved that an ASP.NET web application that you want to deploy to the Iron Foundry (onsite or offsite) cloud looks just like any other ASP.NET web application. I didn’t have to build it differently or do anything special. Secondly, we saw that I can easily use the Windows Azure AppFabric Service Bus to reliably share data between a cloud-hosted application and an on-premises application.


Windows Azure VM Role, Virtual Network, Connect, RDP and CDN

No significant articles today.


Live Windows Azure Apps, APIs, Tools and Test Harnesses

David Pallman published a Mobile & Global in 7 Steps with HTML5, MVC & Windows Azure summary and seven detailed posts on 12/27/2011:

In this series of posts I’ll be walking you through a sample that shows web and cloud working well together. Recently I’ve been writing about responsive web design and a complementary concept, responsive cloud design. This sample and tutorial show them in action. The sample illustrates an application that is truly mobile and global, that can run anywhere and everywhere. It leverages HTML5 and open standards on the web client; works across desktop browsers, tablets, and phones; uses the Microsoft web platform (notably MVC) on the web server; runs on the Windows Azure cloud computing platform; and is globally deployed to data centers on 3 continents with automatic traffic management. That’s a lot of capability, but we’re going to accomplish all this in 7 straightforward steps.

Responsive Tours Sample

The sample is for a fictitious company named Responsive Tours that operates city tours. The source code is on CodePlex at http://responsivetours.codeplex.com and the final result can be accessed online at http://responsive-tours.com.

Workflow

This sample is also intended to show designer <-> developer <-> deployment workflow. The source code is in 7 progressive projects:

1. Design: Design site front end

2. Web Project: Import into web template

3. Integrate: Add dynamic content

4. Cloud-ready: Set up for Windows Azure

5. Secured: Sign-in with web/social identity

6. Deployed: Deployed to the cloud

7. Global: Deployed worldwide with traffic management

Of course, there should be overlap and iteration between designer and developer, but as we're dealing with a relatively simple application here we can proceed serially. In this first post we’ll give you an overview of the sample, and in Parts 2 through 8 we’ll go into each step of this workflow in some detail.

1. Design: Design Site Front End

In this step a responsive web design HTML5 sample from Adobe is used as a starting point. It detects device characteristics and dimensions and adapts to desktop, tablet, or phone size screens automatically. The designer uses Adobe Dreamweaver to customize the sample for a fictitious city tour operator, Responsive Tours. This is Project 1 in the sample. You can run this project locally in a browser by opening index.html.
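In the browser, this adaptation is done with CSS media queries that switch styles at width breakpoints. A Python model of that decision logic, for illustration only; the 480 px and 768 px breakpoints and the `layout_for_width` function are hypothetical, and the Adobe sample's actual values may differ:

```python
# Hypothetical breakpoints in CSS pixels; the Adobe sample may use other values.
PHONE_MAX = 480
TABLET_MAX = 768


def layout_for_width(viewport_width: int) -> str:
    """Pick a layout the way a cascade of max-width media queries would."""
    if viewport_width <= PHONE_MAX:
        return "phone"
    if viewport_width <= TABLET_MAX:
        return "tablet"
    return "desktop"


for width in (320, 768, 1024):
    print(width, layout_for_width(width))
```

Testing widths rather than device names is the core idea of responsive design: a narrow desktop window gets the same layout as a tablet.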

2. Web Project: Import into Web Template

In this step the design assets (HTML, CSS, JavaScript, images) are handed off to a web developer, who inserts them into a web template to create a web project. The developer can now work in Visual Studio. The web project's front end is open standards-based and the back end uses the Microsoft web platform, including MVC3. This is Project 2 in the sample. You can run this project locally by opening the solution in Visual Studio and pressing F5.

3. Integrate: Add Dynamic Content

In this step the developer implements dynamic content for promotional items, querying a database on the server side. MVC-Razor and Knockout.js are used for data binding. Visual integration with Bing Maps is also added on the web client side. This step requires you to set up a database with a table for promotional items, and also to obtain a Bing Maps key.

This is Project 3 in the sample. You can run this project locally by opening the solution in Visual Studio and pressing F5.
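The server-side half of this step boils down to querying the promotional items table and handing plain records to the view for data binding. The sample itself uses MVC and Razor; this is only an illustration in Python with SQLite, and the `PromoItems` table, its columns, and the `active_promotions` helper are hypothetical:

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE PromoItems (Id INTEGER PRIMARY KEY, Title TEXT, ImageUrl TEXT, Active INTEGER)"
)
conn.executemany(
    "INSERT INTO PromoItems (Title, ImageUrl, Active) VALUES (?, ?, ?)",
    [
        ("Harbor Cruise", "images/harbor.jpg", 1),
        ("Old Town Walk", "images/oldtown.jpg", 1),
        ("Winter Special", "images/winter.jpg", 0),
    ],
)


def active_promotions(connection):
    """Return active promotional items as dicts, ready to serialize as JSON."""
    cursor = connection.execute(
        "SELECT Id, Title, ImageUrl FROM PromoItems WHERE Active = 1 ORDER BY Id"
    )
    return [{"id": r[0], "title": r[1], "imageUrl": r[2]} for r in cursor]


print(json.dumps(active_promotions(conn)))
```

Returning plain records keeps the view side simple: a client-side binding library such as Knockout.js can render the JSON without knowing anything about the database.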

4. Cloud-ready: Set up for Windows Azure

In this step the developer adds a Windows Azure project to the solution so that it can be run as a web role in the Windows Azure Simulation Environment on the local machine. This is Project 4 in the sample. You can run this project locally by opening the solution in Visual Studio and pressing F5. It will run in the Windows Azure Simulation Environment.

5. Secured: Sign-in with web identity

In this step the developer sets up authentication using the Access Control Service. The user can sign in with a Windows Live, Yahoo!, or Google social/web identity. This step requires you to configure an Access Control Service namespace in the Windows Azure portal.

This is Project 5 in the sample. You can run this project locally by opening the solution in Visual Studio and pressing F5. It will run in the Windows Azure Simulation Environment.

6. Deployed: Deployed to the cloud

In this step the developer deploys the solution code and data to a Windows Azure data center in the cloud. The promotional item images are moved to blob storage. This step requires you to create, configure, and populate a Hosted Service, Database, Blob Storage, and Access Control Service in a Windows Azure data center.

This is Project 6 in the sample. You can run this project online by directing your browser to the production URL for your hosted service. After signing in with the identity provider of your choice, the web site will run from a Windows Azure data center.
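Moving the images to blob storage means the pages reference public blob URLs of the form `http://<account>.blob.core.windows.net/<container>/<blob>`, and step 7 later swaps in a CDN host. A minimal Python sketch, assuming the CDN endpoint mirrors the container/blob path; the account, container, and CDN host names and the `image_url` helper are hypothetical:

```python
from typing import Optional
from urllib.parse import quote


def image_url(account: str, container: str, blob_name: str,
              cdn_host: Optional[str] = None) -> str:
    """Return a blob's public URL, optionally served through a CDN host.

    All names are hypothetical placeholders; the CDN hostname would be the
    endpoint assigned in the portal.
    """
    host = cdn_host if cdn_host else f"{account}.blob.core.windows.net"
    # quote() percent-encodes characters such as spaces but keeps "/" intact
    return f"http://{host}/{container}/{quote(blob_name)}"


print(image_url("responsivetours", "promos", "harbor cruise.jpg"))
# http://responsivetours.blob.core.windows.net/promos/harbor%20cruise.jpg
```

Centralizing URL construction in one helper makes the later switch to the CDN a one-parameter change instead of an edit to every page.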

7. Global: Deployed worldwide with traffic management

In this step the developer deploys the solution to additional data centers for worldwide presence and sets up automated traffic management. The promotional item images are accessed via the Content Delivery Network for performance based on locale. This step requires you to create, configure, and populate 3 Hosted Services (each in a different data center), a Database, Blob Storage, the Access Control Service, and Traffic Management in Windows Azure.

This is Project 7 in the sample. You can run this project online by directing your browser to the traffic manager URL for your hosted service. After signing in with the identity provider of your choice, the web site will run in a randomly selected datacenter (US, Europe, or Asia).
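The real Traffic Manager works at the DNS level and offers more than one routing policy; the Python toy below illustrates only one simple policy, round-robin over the endpoints that are currently healthy. The `RoundRobinRouter` class and the endpoint names are hypothetical:

```python
from itertools import count


class RoundRobinRouter:
    """Toy round-robin choice among healthy endpoints (not Traffic Manager's code)."""

    def __init__(self, endpoints):
        self.endpoints = list(endpoints)
        self.healthy = set(self.endpoints)
        self._counter = count()

    def mark_down(self, endpoint):
        """Take an endpoint out of rotation, e.g. after failed health probes."""
        self.healthy.discard(endpoint)

    def next_endpoint(self):
        candidates = [e for e in self.endpoints if e in self.healthy]
        if not candidates:
            raise RuntimeError("no healthy endpoints")
        return candidates[next(self._counter) % len(candidates)]


router = RoundRobinRouter(["us.example", "eu.example", "asia.example"])
print([router.next_endpoint() for _ in range(4)])
# ['us.example', 'eu.example', 'asia.example', 'us.example']
router.mark_down("eu.example")
print([router.next_endpoint() for _ in range(2)])
# ['us.example', 'asia.example']
```

Dropping unhealthy endpoints from rotation is what lets a multi-data-center deployment keep serving when one site goes down.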

Next: Mobile & Global with HTML5, MVC, and Windows Azure, Step 1: Design

The remaining seven posts in this series are:

- Mobile & Global with HTML5, MVC, and Windows Azure, Step 1: Design

- Mobile & Global with HTML5, MVC & Windows Azure, Step 2: Web Project

- Mobile & Global with HTML5, MVC & Windows Azure, Step 3: Dynamic Content

- Mobile & Global with HTML5, MVC & Windows Azure, Step 4: Cloud-Ready

- Mobile & Global with HTML5, MVC & Windows Azure, Step 5: Secured

- Mobile & Global with HTML5, MVC & Windows Azure, Step 6: Cloud-Deployed

- Mobile & Global with HTML5, MVC & Windows Azure, Step 7: Globally-Deployed

Bravo, David!

Bruce Kyle reported the availability of an ISV Video: Quark Brand Manager Goes Global in the Cloud on Windows Azure in a 12/27/2011 post to the US ISV Evangelism blog:

Quark Brand Manager provides distributed sales and marketing teams the opportunity to easily create, customize and deliver brand-compliant marketing materials on-demand. Quark chose Windows Azure for this new Software-as-a-Service (SaaS) solution.

Nick Howard, marketing manager for Brand Manager, and engineering manager Amit Nayar talk with ISV Architect Evangelist Bruce Kyle about why they chose Windows Azure.

Video Link: Brand Manager Goes Global in the Cloud on Windows Azure

The team describes several Azure features that are key to their choice.

- Windows Azure helps scale the application to the right size: up when lots of jobs put big demands on the system, and back down when demand lessens.

- It provides a global presence.

- It gets them to market more quickly.

- It is more affordable for clients.

The team describes scaling as being able to meet the demands of multiple types of tenants and workloads. With Azure, they can meet user demands for processing large print jobs, add new customers worldwide, and add users worldwide.
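Scaling "up when lots of jobs put big demands on the system, and back down when demand lessens" needs a rule that turns workload into an instance count. A minimal Python sketch of one such rule; the jobs-per-instance ratio, the bounds, and `instances_needed` are hypothetical, not Quark's logic, and the result would still have to be applied by changing the role's instance count (for example through the portal or the Service Management API):

```python
import math


def instances_needed(pending_jobs: int, jobs_per_instance: int = 10,
                     minimum: int = 2, maximum: int = 20) -> int:
    """Hypothetical rule: enough instances for the backlog, kept within bounds."""
    wanted = math.ceil(pending_jobs / jobs_per_instance)
    return max(minimum, min(maximum, wanted))


for backlog in (0, 45, 500):
    print(backlog, instances_needed(backlog))
```

The minimum keeps at least two instances for availability during quiet periods, and the maximum caps cost when a burst of large print jobs arrives.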

Amit describes how developers expand their current skill set while learning how to build architecturally sound applications for the cloud. He says, "Developers wouldn't want me to take away Azure now. It just fits the way we want to do business."

Developers can deploy quickly, closing the gap between their development and production environments, and it is easy to replicate between the cloud and development computers. In addition, developers can respond quickly to changing needs, which lets them accept business deals that might otherwise have been more difficult to implement. Azure is a familiar place for the developers.

Amit explains how Quark does continuous integration with the cloud.

About Brand Manager

Brand Manager gives marketing managers ways to control how people inside and outside the organization use logos, printed material, and business marketing collateral. It provides a workflow for the approval process.

- Create customized, brand-controlled marketing materials, produced by anyone, anywhere.

- Empower your distributed sales and marketing teams to create personalized and localized sales and marketing materials on-demand, in minutes.

- Collaborate with stakeholders — such as brand managers, agencies, and legal and subject matter experts — in the review and approval process.

- Maintain brand consistency and compliance with controlled elements such as colors, fonts, taglines, and logos.

- Increase efficiency with version control of customized documents so you don't have to waste time searching for the most recent version.

- Print at your convenience using your in-house printer, your preferred vendor or optionally with the Quark Brand Manager local print network for fast, convenient, cost-effective local printing.

Getting Started with Windows Azure

Windows Azure is an open cloud platform that enables you to quickly build, deploy and manage applications across a global network of Microsoft-managed datacenters.

- You can build applications using any language, tool or framework.

- Start with Developing on Windows Azure to get the tools for your particular environment.

- Get the latest Azure Developer Training Kit.

The Microsoft TechNet Case Studies Team posted Using Windows Azure to Build and Expand Microsoft.com Social Media Capabilities on 12/26/2011:

The Social eXperience Platform (SXP), which is powered by Windows Azure® and SQL Azure®, has been providing Microsoft.com with social media features for over eighteen months. The Windows Azure platform has enabled SXP to grow and expand its capabilities on Microsoft.com by delivering performance, scalability, elasticity, and remarkable return on investment (ROI).

Download:

Technical Case Study, 291 KB, Microsoft Word file

Introduction

The Microsoft corporate website, www.microsoft.com, is among the top ten most-visited websites in the world. Microsoft.com averages over 250 million unique visits per month, 70 million page views per day, 15,000 connection requests per second, and an average of 35,000 concurrent connections over 80 web servers worldwide.

SXP was developed to enhance social media features, thereby amplifying the voice of the customer on Microsoft.com. In April 2010, SXP began serving its first tenant, the Video Showcase site on Microsoft.com.

Situation

In its first implementation, on the Video Showcase site, SXP provided several features, including managed comments and ratings, profanity and spam filtering, and the ability to comment on or rate video content.

The Windows Azure platform hosts the primary SXP components, which are distributed across several Windows Azure Compute instances and SQL Azure databases.

SXP Architecture

SXP is implemented on Windows Azure as a number of different components that work together to provide a full set of social media capabilities:

SXP Web Services. Most of the SXP workload exists on SXP Web Services. The SXP Web Services run as a single Web Role across multiple compute instances in Windows Azure. SXP stores tenant data in SQL Azure databases.

SXP Monitor. The SXP Monitor is a small web application, running in a single instance Web Role that runs in the same data center as the Web service. The SXP Monitor provides the following functionality:

Pings the Web service every five seconds to determine service availability and performance

Summarizes the data from the Windows Azure Diagnostics storage tables

Windows Azure Storage. Windows Azure Storage provides diagnostics and log information.

Windows Azure Management Pack for Microsoft® System Center Operations Manager. System Center Operations Manager monitors the SXP key components through the Windows Azure Management Pack. The Windows Azure Management Pack provides insight into the performance and state for important aspects of the SXP environment. Operations Manager also trims the Windows Azure Diagnostics tables.

Keynote. Keynote is a third-party solution that provides global availability information.

Moderation Tool. The Moderation Tool manages comments, ratings, and feeds. The Moderation Tool is authenticated by Active Directory® Federation Services, which allows extending Active Directory Domain Services authentication into the cloud.
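The SXP Monitor's five-second pings translate directly into an availability figure. A minimal Python sketch of that arithmetic, assuming each ping is recorded simply as success or failure; the `availability` function and the sample data are hypothetical, not the SXP Monitor's code:

```python
PINGS_PER_DAY = 24 * 60 * 60 // 5  # one ping every five seconds


def availability(ping_results):
    """Percentage of successful pings; each result is True (up) or False (down)."""
    if not ping_results:
        raise ValueError("no ping results")
    return 100.0 * sum(ping_results) / len(ping_results)


# One minute of hypothetical pings: 12 samples, one failure.
samples = [True] * 11 + [False]
print(PINGS_PER_DAY)                    # 17280
print(f"{availability(samples):.2f}%")  # 91.67%
```

At 17,280 pings per day, a single failed ping costs under 0.006 percent of a day's availability, so a five-second interval gives fine-grained visibility into outages.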

Results

As of November 2011—eighteen months after the Video Showcase implementation—SXP has become an integral part of the social and community-driven aspects of Microsoft.com.

Integrating a Cloud-Based Solution

As a cloud-based platform on Windows Azure, SXP integrates with any portion of Microsoft.com in much the same manner as it did originally with the Video Showcase site. A cloud-based solution has the capability to connect to on-premises components without being reliant on on-premises data centers. This means that integration with on-premises solutions is a modular process that can be reused from site to site, decreasing the time and costs associated with integration. The ease of integration provided by SXP resulted in a steady stream of new tenants, each able to leverage the SXP capabilities alongside their own content. SXP has become more than a Platform as a Service (PaaS); it provides social as a service to website tenants on Microsoft.com, enabling easy-to-implement social media capability.

Leveraging the Windows Azure Platform to Make SXP First and Best

SXP on Windows Azure continues to provide excellent results, thanks in part to its design and partnership with Windows Azure. The following areas of SXP implementation result in tangible and significant benefits for tenants.

Availability

With Windows Azure, SXP service releases are literally push-button activities with zero planned downtime. The SXP team uses the Virtual IP (VIP) Swap feature on the Windows Azure Platform Management Portal to promote its staging environment to a production environment. Service releases have occurred approximately every six weeks since SXP launched in April 2010. The process on Windows Azure is so refined and so simple that the team no longer accounts for service releases when anticipating events that may cause downtime. Since May 2011, SXP has been 100 percent available for over 200 consecutive days. Not one single transaction has been lost.

Performance, Scalability, and Elasticity

There have been no performance issues reported on the Video Showcase site. Resource utilization for the single tenant was insignificant compared to the resources available in a medium Windows Azure compute instance. Because of this headroom, adding more tenants as demand for SXP grew required no scaling of provisioned components, only the addition of tenants to the SXP Web service and the associated data stores. Site requests per day have grown from one tenant averaging 9,000 requests per day in April 2010 to over 2 million requests per day across 71 tenants in October 2011. Even with this increase in customer traffic, additional Windows Azure resources were not needed, with the exception of one week of inordinately high traffic. During that week, the Windows Azure compute instances were doubled to handle the increased traffic; scaling up took less than one hour, as did scaling back down once traffic returned to normal. This high level of elasticity enabled SXP to handle traffic and request peaks of over 1,800 percent of normal.
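Putting the quoted traffic figures side by side shows the scale of the growth and what a daily total means per second. Worked arithmetic in Python using only the case study's numbers (the variable names are illustrative):

```python
SECONDS_PER_DAY = 24 * 60 * 60

requests_apr_2010 = 9_000      # one tenant, requests per day
requests_oct_2011 = 2_000_000  # 71 tenants, "over 2 million" requests per day

growth = requests_oct_2011 / requests_apr_2010
avg_rate = requests_oct_2011 / SECONDS_PER_DAY
peak_multiple = 1_800 / 100    # "peaks of over 1,800 percent of normal"

print(f"daily volume grew about {growth:.0f}x")         # daily volume grew about 222x
print(f"average rate about {avg_rate:.1f} requests/s")  # average rate about 23.1 requests/s
print(f"peak about {peak_multiple:.0f}x normal")        # peak about 18x normal
```

Because the source says "over 2 million," the growth and rate figures are lower bounds.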

The following figure shows request traffic to SXP over a 72-hour period in June 2011. No additional Windows Azure resources were required to handle this traffic.

Figure 1. SXP traffic over a 72-hour period in June 2011

Extensibility

SXP has benefited from the resiliency of the Windows Azure platform and has become a highly extensible application. SXP uses a multi-stage environment with instantaneous, uninterrupted switchover from development to production. This allows SXP modifications and additions to be tested more rigorously and released more frequently, without concern about affecting the production environment. Because SXP is cloud based, integrating it with new sites on Microsoft.com has been a simple, repeatable process that requires no complicated development.

Cost and Pricing

The most measurably impressive numbers for SXP come from pricing and ROI. The previous solution used by the Video Showcase website cost Microsoft Information Technology (IT) $15,000 US per month in hosting. With Windows Azure and SXP, hosting costs for all 71 tenants, including the Video Showcase website, are currently under $900 US per month. If the Video Showcase site were the only tenant, SXP would provide a 91 percent savings over the previous solution. When hosting costs are apportioned among all SXP tenants by traffic consumption, the Video Showcase site's share is less than $46 per month. That is more than a 99 percent reduction in cost for the Video Showcase website. Figure 2 reviews the "Before" and "After" data points used to calculate ROI.

Figure 2. Return on investment comparison
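As a worked check of the "more than 99 percent" figure, the following sketch computes the percentage reduction from the $15,000 monthly baseline to the Video Showcase site's apportioned share. Because the share is quoted as "less than $46," the result is a lower bound on the savings. The class and constant names are illustrative only.

```csharp
// Worked check of the savings figure; the constants come from the paragraph above.
public static class SxpCostMath
{
    public const decimal PreviousMonthlyCost = 15000m;  // previous solution, US$ per month
    public const decimal VideoShowcaseShare = 46m;      // "less than $46" per month, US$

    // Percentage reduction when a monthly cost falls from 'before' to 'after'.
    public static decimal ReductionPercent(decimal before, decimal after) =>
        (before - after) / before * 100m;
}
```

ReductionPercent(PreviousMonthlyCost, VideoShowcaseShare) comes to about 99.69, which is consistent with the "more than 99 percent" claim. The sketch uses decimal rather than double because these are currency amounts.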

Growing Beyond the Platform as a Service Model

As SXP's adoption has continued to grow, so have its opportunities to be more than a PaaS offering, and more than a cost saving that Microsoft IT passes on to its customers. Solutions such as SXP, which can be used across several sites to serve several clients, have created a new business model in IT departments. Significantly lower application costs have evolved into completely recouping those costs and even making a profit from applications. The unique capabilities and infrastructure of Windows Azure allow IT departments to create application environments that let them become a contributing part of the business model, rather than a necessary cost of maintaining business operations.

Benefits

The benefits of SXP on Windows Azure are both quantifiable and compelling:

Reliability and availability. Hosting a solution on the Windows Azure platform removes almost all infrastructure maintenance requirements. By using the built-in Windows Azure failover and backup features, developers can focus on developing and expanding the website itself rather than on the infrastructure required to host it.

Performance consistent across significant usage peaks. The Windows Azure compute instances used for SXP can absorb significantly more load than typical averages. This provides both resilience during traffic spikes and consistent performance during extended periods of high traffic.

Easy-to-provision platform resources. As SXP grows and acquires new tenants, and as demand for resources expands beyond the current allocation, new instances can be provisioned and made part of the production environment in minutes, rather than days or potentially weeks.

Outage-free application maintenance and platform upgrades. The development and staging environments in Windows Azure allow SXP to transition between releases without impact to the production environment. This enables the SXP team to update and improve SXP on a more regular basis, without negatively affecting SXP tenants.

Measurable ROI and cost savings. The ROI for SXP on Windows Azure can easily make the case for Windows Azure on its own. A 99 percent savings on solution costs reduces the cost impact of the solution and allows IT departments to consider leveraging the platform for other solutions that may have been previously cost-prohibitive.

Ability to leverage applications and solutions into profit centers. Because of the flexible nature of SXP and its ability to serve multiple tenants using the same Windows Azure resources, SXP provides a new profit-center business model for Microsoft IT. With Windows Azure-based solutions, organizations can use the platform not only to reduce costs but also to create profit while developing new ways of providing IT application and platform solutions.

Lessons Learned and Best Practices

Through the continued growth and adoption of SXP, Microsoft IT has gained considerable knowledge of the specific Windows Azure qualities that distinguish it from other development environments.

Treat Windows Azure as a "Black Box". Organizations need to learn to treat Windows Azure as its own platform, rather than thinking in terms of integrated, on-premises solutions that have been used historically.

Actively Monitor Operations. Because of their separated nature, Windows Azure-hosted solutions need to be monitored differently from on-premises solutions. Windows Azure-hosted solutions should be monitored using a combination of Azure-based and on-premises monitoring solutions.

Plan for Integration and Refactoring. When migrating an existing solution to Windows Azure or integrating Windows Azure with on-premises components, plan for modification of code and communication between platforms. Windows Azure will require different integration methods than a solution that is hosted on-premises.

Delineate Environments. With Windows Azure, it is easy to provide separation between development, staging, and production environments. Use this separation to create clear borders between the environments, and to test the deployment process with new releases of application code.

Participate in the Windows Azure Community. Windows Azure requires a new way of looking at application and platform development. New ways to utilize Windows Azure for innovative solutions are being created daily, and new tools and integration components are being created by both Microsoft and third-party developers to solve issues they encounter. Organizations can take advantage of the Windows Azure community to develop their own solutions by leveraging the work and development of other Azure customers.

Conclusion

After eighteen months, SXP has grown beyond original expectations and created an entirely new infrastructure type for Microsoft IT. Windows Azure provides SXP with the capability to perform and scale better than any on-premises solution. Its cloud-based architecture has simplified the implementation and maintenance process considerably, while still maintaining a reliable and always available presence. Most significant of all, SXP on Windows Azure costs far less than its predecessor, and has shown the potential to evolve from a cost center into a profit center, thus creating an entirely new business model for applications of its type.

For More Information

Learn more about SXP, Windows Azure, and Cloud Computing with the following links:

MSDN: Learn more about the Windows Azure Platform

http://www.microsoft.com/windowsazure/learn/

Microsoft TechNet: Cloud Computing

http://aka.ms/ITShowcase/Cloud

TechNet Radio: Developing Applications on Windows Azure

http://technet.microsoft.com/en-us/edge/Gg663910.aspx

Microsoft TechNet: Microsoft IT Showcase: How Microsoft Does IT

http://www.microsoft.com/technet/itshowcase

For more information about Microsoft products or services, call the Microsoft Sales Information Center at (800) 426-9400. In Canada, call the Microsoft Canada information Centre at (800) 563-9048. Outside the 50 United States and Canada, please contact your local Microsoft subsidiary.

It’s good to see some firm ROI numbers for Azure deployments.

<Return to section navigation list>

Visual Studio LightSwitch and Entity Framework 4.1+

Paul Patterson explained Microsoft LightSwitch – Maintaining a Primary Child Entity in a 12/27/2011 post:

Capturing and storing data is one thing. Turning that data into useful information is something else. The true value of your LightSwitch application will come from how useful the outputs are to your users.

In this little gem of an article, I demonstrate how to define and maintain a “primary” entity for one-to-many entity relationships.

The Scenario

I have a very intuitive solution that enables me to do a lot of customer relationship management (CRM), as well as a bunch of other stakeholder management stuff (see my Simple Stakeholder Management article as a good reference).

Dealing with all these stakeholders can be a chore, especially when maintaining the contact information about each of these stakeholders. Keeping track of all those addresses, phone numbers, and email addresses can be difficult. Even more of a task is to determine what contact information is the most appropriate, or targeted, to use.

To help me deal with all this information, I created a very effective solution that helps me better manage all that contact information. In a nutshell, I created a process that lets me define which piece of contact information is the “primary” information to use. This way I can quickly see what I should use, instead of sifting through the data to find the appropriate or correct information.

Still confused? Let me scale this scenario down a bit. Let’s say I have a customer, and that customer is a big customer that has a large number of addresses, phone numbers, and email addresses. And that customer has a large number of contacts (people), who also have one or more addresses, phone numbers, and so on. If I need to pick up the phone, or send a letter, what contact information do I use first?

Here is what I did…

Who’s on First, What’s on Second…

This article demonstrates how I implemented this “primary” scenario for customer addresses. The same scenario is easily applied to any kind of one-to-many relationship that you may have in your system.

My Address entity looks like this…

As you can see above, there is a relationship between the Address and a Company. A Company can have one or more Addresses. Also note the IsPrimary property, a Boolean type. This is what defines the address as the primary address for the related entity. There are also other relationships here, such as for Company and Contact entities, which is important to keep in mind for what is explained later in this article.

In the application I have a list-detail screen that lists all my customers. Selecting a customer from the list will display details about the customer, including contact information such as addresses, phone numbers, emails, and people contacts. This is what that screen looks like when it is running…

The Address tab in the screen (shown above) displays a list of addresses for the selected customer. One of those addresses, the Home address, is defined as the primary address for the customer.

To maintain the address entity, and to control the setting of the IsPrimary flag, I created a screen that is used exclusively for adding and editing addresses. The screen was created using the New Data screen template and then crafted so that it could be used both to add a new address entity and to edit an existing one.

Here is what the address editor screen looks like when it is running…

Nothing spectacular really, but there is a lot happening under the hood that is important to understand. Before I move on and show you some cool code, keep the following in mind about the models and their relationships (and constraints) used for a few of the stakeholders in the application:

- A Customer can have one or more Addresses

- A Customer must have one, and only one, Address defined as Primary.

- A Company can have one or more Addresses

- A Company must have one, and only one, Address defined as Primary.

- A Contact can have one or more Addresses (and like the customer above, must have only one primary address).

I don’t cover the Company or Contact entities here, but they follow the same principles, and you’ll see how I crafted the Address screen so that it can be used to edit the addresses for all of those entities.

So, to achieve all those above listed constraints, I did this…

First I created local properties for the screen. These properties define, first, whether the address being edited is a new address or an existing one, and second, which entity (a customer, company, or contact) the address is being edited for.

An additional string property named SourceScreenName was also added. This property is used to refresh the screen that opened the address editor screen (example shown later in the code).

For what it’s worth, here are some of the noteworthy attributes of these screen properties…

- SourceScreenName = String, Is Parameter = True, Is Required = True

- AddressId = Integer, Is Parameter = True, Is Required = False

- CompanyId = Integer, Is Parameter = True, Is Required = False

- CustomerId = Integer, Is Parameter = True, Is Required = False

- ContactId = Integer, Is Parameter = True, Is Required = False

- AddressEntityParentName = String, Is Parameter = False, Is Required = False

Cool Coding Stuff…

First thing to do on this screen is to determine why it is being opened. This is all controlled via the values of the properties being passed as parameters to the screen.

For example, the following code is used when clicking the Edit button on the Addresses grid toolbar in the Customer list-detail screen shown earlier…

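partial void AddressesEditSelected_Execute()
{
    EditAddress();
}

private void EditAddress()
{
    // Nothing to edit if no address is selected in the grid.
    if (this.Addresses.SelectedItem == null)
        return;

    // Pass this screen's name (so the editor can refresh it after saving)
    // and the selected address Id; the owner Ids are not needed to edit.
    int addressId = this.Addresses.SelectedItem.Id;
    Application.ShowCreateOrEditAddress(this.Name, addressId, null, null, null);
}

The above EditAddress method is used to open the address editor screen, using values for the screen parameters.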

Here is how the address editor screen controls this using those same parameters…

partial void CreateOrEditAddress_InitializeDataWorkspace(List<IDataService> saveChangesTo)
{
    // An AddressId means "edit this address"; otherwise start a new one.
    if (AddressId.HasValue)
    {
        Address address = DataWorkspace.ApplicationData.Addresses.Where(a => (a.Id == AddressId)).FirstOrDefault();
        this.AddressProperty = address;
    }
    else
    {
        this.AddressProperty = new Address();
    }

    // Attach the address to whichever owner Id was passed in,
    // and remember the owner's display name for the screen.
    if (CompanyId.HasValue)
    {
        Company company = DataWorkspace.ApplicationData.Companies.Where(c => (c.Id == CompanyId)).FirstOrDefault();
        this.AddressProperty.Company = company;
        if (company != null)
            AddressEntityParentName = company.CompanyName;
    }

    if (CustomerId.HasValue)
    {
        Customer customer = DataWorkspace.ApplicationData.Customers.Where(c => (c.Id == CustomerId)).FirstOrDefault();
        this.AddressProperty.Customer = customer;
        if (customer != null)
            AddressEntityParentName = customer.CustomerName;
    }

    if (ContactId.HasValue)
    {
        Contact contact = DataWorkspace.ApplicationData.Contacts.Where(c => (c.Id == ContactId)).FirstOrDefault();
        this.AddressProperty.Contact = contact;
        if (contact != null)
            AddressEntityParentName = contact.FirstName + " " + contact.LastName;
    }
}

Pretty crafty, eh? Depending on which property I pass to the screen (as a parameter), the screen will add or edit the appropriate address for the appropriate entity.
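The parameter logic above can be isolated from LightSwitch in a short C# sketch. The sketch is hypothetical: the enums and the AddressEditorParameters class are illustrative names, not LightSwitch types. It only mirrors two things: how the nullable Ids choose between adding and editing, and which owner's name ends up in AddressEntityParentName. It ignores whether each lookup actually finds a row.

```csharp
// Hypothetical, LightSwitch-free model of the address editor's parameters.
public enum EditorMode { AddNew, EditExisting }

public enum AddressOwner { None, Company, Customer, Contact }

public static class AddressEditorParameters
{
    // An AddressId means "edit that address"; no AddressId means "add a new one".
    public static EditorMode ModeFor(int? addressId) =>
        addressId.HasValue ? EditorMode.EditExisting : EditorMode.AddNew;

    // Checks the owner Ids in the same order as InitializeDataWorkspace.
    // As with AddressEntityParentName there, a later match overwrites an earlier one.
    public static AddressOwner OwnerFor(int? companyId, int? customerId, int? contactId)
    {
        AddressOwner owner = AddressOwner.None;
        if (companyId.HasValue) owner = AddressOwner.Company;
        if (customerId.HasValue) owner = AddressOwner.Customer;
        if (contactId.HasValue) owner = AddressOwner.Contact;
        return owner;
    }
}
```

Applied to the Edit button call shown earlier, the arguments (addressId, null, null, null) give EditExisting with no owner. Adding an address for customer 7 would instead pass (null, null, 7, null).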

Saving the Primary Address

With the screen properties, I can then control which values get updated, as well as do some magic to define whether or not the address is the “primary” address for the entity.

First, I hook into the Saving event of the screen…

…and the code for that event…

partial void CreateOrEditAddress_Saving(ref bool handled)
{
    if (this.AddressProperty.IsPrimary == false)
    {
        // Not marked as primary: make sure some other address already is.
        if (HasExistingPrimaryAddress() == false)
        {
            string message = "There must be a primary address defined for this entity.";
            message += Environment.NewLine + Environment.NewLine;
            message += "This address will be the primary address, until another address is added or selected as primary.";
            this.ShowMessageBox(message);
            this.AddressProperty.IsPrimary = true;
        }
    }
    else
    {
        // Marked as primary: clear the flag on the owner's addresses, then set it on this one.
        List<Address> addresses = new List<Address>();
        if (this.AddressProperty.Company != null)
        {
            addresses = this.AddressProperty.Company.Addresses.ToList();
        }
        if (this.AddressProperty.Customer != null)
        {
            addresses = this.AddressProperty.Customer.Addresses.ToList();
        }
        if (this.AddressProperty.Contact != null)
        {
            addresses = this.AddressProperty.Contact.Addresses.ToList();
        }
        ResetAllPrimaryAddress(addresses);
        this.AddressProperty.IsPrimary = true;
    }
}

The above code first checks whether the address being edited has its IsPrimary flag set to false. If it does, HasExistingPrimaryAddress is called to check whether the entity already has a primary address. If none is found, the user is told so and this address's IsPrimary flag is set to true. If the flag is true, the logic clears the IsPrimary flag on the entity's addresses (if any), sets it back to true on the address being saved, and the save proceeds.

Here are the HasExistingPrimaryAddress and ResetAllPrimaryAddress methods…

public bool HasExistingPrimaryAddress()
{
    // Collect the addresses of whichever owner this editor was opened for.
    List<Address> addresses = new List<Address>();
    if (CompanyId.HasValue)
    {
        Company company = DataWorkspace.ApplicationData.Companies.Where(c => (c.Id == CompanyId)).FirstOrDefault();
        if (company != null)
            addresses = company.Addresses.ToList();
    }
    if (CustomerId.HasValue)
    {
        Customer customer = DataWorkspace.ApplicationData.Customers.Where(c => (c.Id == CustomerId)).FirstOrDefault();
        if (customer != null)
            addresses = customer.Addresses.ToList();
    }
    if (ContactId.HasValue)
    {
        Contact contact = DataWorkspace.ApplicationData.Contacts.Where(c => (c.Id == ContactId)).FirstOrDefault();
        if (contact != null)
            addresses = contact.Addresses.ToList();
    }

    // True if any of the owner's addresses is already flagged as primary,
    // including when the owner has only one address.
    return addresses.Any(a => a.IsPrimary);
}

private void ResetAllPrimaryAddress(List<Address> addresses)
{
    // Clear the flag everywhere; the caller then marks the saved address as primary.
    foreach (Address address in addresses)
    {
        if (address.IsPrimary)
            address.IsPrimary = false;
    }
}

Finally, I simply hooked into the Saved event to refresh the addresses collection on the screen that originally opened the address editor, and then close the editor…
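Stripped of the LightSwitch data workspace, the Saving handler and its two helpers enforce a simple invariant: every owner ends up with exactly one primary address. The following sketch makes that rule testable in isolation. It assumes a hypothetical AddressRecord class in place of the LightSwitch Address entity, and it passes the owner's other addresses in explicitly instead of querying for them.

```csharp
using System.Collections.Generic;
using System.Linq;

// Hypothetical plain-C# stand-in for the LightSwitch Address entity.
public sealed class AddressRecord
{
    public string Label { get; set; }
    public bool IsPrimary { get; set; }
}

public static class PrimaryAddressRule
{
    // Applies the Saving-handler rule. 'siblings' are the owner's other addresses
    // (excluding 'current'). Returns true when 'current' had to be forced to primary,
    // which is the case where the screen shows its message box.
    public static bool Apply(List<AddressRecord> siblings, AddressRecord current)
    {
        if (current.IsPrimary)
        {
            // The saved address becomes the only primary one.
            foreach (AddressRecord other in siblings)
                other.IsPrimary = false;
            return false;
        }

        if (!siblings.Any(a => a.IsPrimary))
        {
            // No other address is primary, so this one must be.
            current.IsPrimary = true;
            return true;
        }

        return false;
    }

    // The constraint from the list above: exactly one primary address per owner.
    public static bool HasExactlyOnePrimary(IEnumerable<AddressRecord> all) =>
        all.Count(a => a.IsPrimary) == 1;
}
```

Usage: suppose Home is primary and a new Work address is saved unflagged. Nothing changes. Save Work with the flag set, and Home loses it. Save a first address for a new owner, and it is forced to primary.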

partial void CreateOrEditAddress_Saved()
{
    // Find the screen that opened this editor, based on the name it passed in.
    IActiveScreen sourceScreen = null;
    switch (SourceScreenName)
    {
        case "CustomerListDetail":
            sourceScreen = Application.ActiveScreens.Where(a => a.Screen is CustomersListDetail).FirstOrDefault();
            break;
        case "EditCompanyDetail":
            sourceScreen = Application.ActiveScreens.Where(a => a.Screen is EditCompanyDetail).FirstOrDefault();
            break;
    }
    // IActiveScreen sourceScreen = Application.ActiveScreens.Where(a => a.Screen.Name == SourceScreenName).FirstOrDefault();

    if (sourceScreen != null)
    {
        // Refresh the source screen's collections on that screen's own dispatcher.
        sourceScreen.Screen.Details.Dispatcher.BeginInvoke(() =>
        {
            switch (SourceScreenName)
            {
                case "CustomerListDetail":
                    if (this.AddressProperty.Customer != null)
                        ((CustomersListDetail)sourceScreen.Screen).Addresses.Refresh();
                    if (this.AddressProperty.Contact != null)
                        ((CustomersListDetail)sourceScreen.Screen).ContactAddresses.Refresh();
                    break;
                case "EditCompanyDetail":
                    ((EditCompanyDetail)sourceScreen.Screen).Addresses.Refresh();
                    break;
            }
        });
    }

    this.Close(false);
}

Conclusion

I love the use of local screen properties. There is so much that can be done with relative ease using local screen properties.

Using the technique above provides an intuitive way of getting more relevant context from your data. I applied this same technique to addresses, phones, emails, other contacts, and so on. Now when I want only the necessary information about a stakeholder, that information is immediately available, instead of my having to search for it. I’ve also extended this process to include other primary types, such as which address is the main “billing” address.

Anywho, let me know if you get any use out of this method.

Paul Patterson described Microsoft LightSwitch – Simple but Effective Application Defaults in a 12/27/2011 post:

Most of the applications I do in LightSwitch involve some kind of single top-level entity, such as a company. Here is a tip on how to make sure that entity exists when the application first fires up…

When it comes to the outputs of an application, there is almost always going to be some high-level reference information that will be required. A good example of this is in the header and footers of reports where the name, and sometimes address, of the organization needs to be output.

Such is the case with the applications I have been building. An invoice, for example, will require the company name and address information on it. Or maybe there are some high-level defaults, such as a tax rate, that need to be used elsewhere in the system.

Building the tables and screens to manage this information is the easy part. But what if I had a constraint that the information must exist when I first launch the application? Here is a simple, but effective, solution: use the Initialize event of the application.

In the scenario for this example, my “Company” information needs to exist when I first launch the application. This is so I can use the information for setting values on some screens, such as for displaying the name of my company on the top of each screen.

Yes, I already have a Company table as well as the appropriate screen to edit the company information. However, I want to save myself some time and not have to worry about evaluating whether or not the Company entity is null each time I need to reference it.

So, here is what I did…

I opened up the properties dialog for my project, and selected to view the application code via the Screen Navigation tab…

Next, I simply select to add some custom code to the application's Initialize event…

The custom code I add simply checks for an existing entity in my Company table, and creates one if there is not one there…

…here’s the code I used for setting the default…

private void SetDefaultCompany()
{
    DataWorkspace dataWorkspace = Application.Current.CreateDataWorkspace();
    Company company = dataWorkspace.ApplicationData.Companies.FirstOrDefault();

    // If there is no company record yet, create a placeholder one.
    if (company == null)
    {
        company = dataWorkspace.ApplicationData.Companies.AddNew();
        company.CompanyName = "<<Enter your company name...>>";
        dataWorkspace.ApplicationData.SaveChanges();
    }
}

So, with that, I don’t have to do much work later to evaluate whether a company record exists, because I know it will always be there thanks to this simple, but effective, process.
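The same "make sure it exists" pattern can be sketched outside LightSwitch as an idempotent get-or-create over an in-memory list. CompanyRow and DefaultData are hypothetical stand-ins for the Company table and the data workspace. The point is that running the check on every start-up never creates a second row.

```csharp
using System.Collections.Generic;
using System.Linq;

// Hypothetical in-memory stand-in for one row of the Company table.
public sealed class CompanyRow
{
    public string CompanyName { get; set; }
}

public static class DefaultData
{
    // Same placeholder text as SetDefaultCompany above.
    public const string Placeholder = "<<Enter your company name...>>";

    // Get-or-create: returns the first existing company, or adds a placeholder row
    // when the table is empty. Calling it repeatedly never adds a second row.
    public static CompanyRow EnsureDefaultCompany(List<CompanyRow> companies)
    {
        CompanyRow company = companies.FirstOrDefault();
        if (company == null)
        {
            company = new CompanyRow { CompanyName = Placeholder };
            companies.Add(company);
        }
        return company;
    }
}
```

The same shape works for seeding the reference or look-up tables mentioned below. Check first, then insert only what is missing.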

For example, here is the screen where the Company entity can be edited. I don’t have to worry about the “Add” functionality, nor do I need to use up screen real estate with a useless grid, because there is only one company…

I’ve also used this process in the initial deployment of an application. For example, to populate tables that users will not have access to, such as reference or look-up type tables.

Hope you find it useful.

Jan Van der Haegen (@janvanderhaegen) described Dealing with the error: “the following references require LightSwitch package designations” in a 12/26/2011 post:

What did you do to cause this error?

You created a LightSwitch extension. Awesome! On a side note, I hope you know you can use Extensions Made Easy to help you debug!

When you create a new LightSwitch extension, Visual Studio adds seven projects to the solution for you: five projects for the extension's code, one LSPKG project, and one VSIX project. The LSPKG project references the five code projects by default, and you added an extra reference (either an external DLL or a new project that you added to the solution).

What does this error mean?

The LightSwitch framework needs to know what to do with the references that you added to the LSPKG project, once your extension is installed and activated in a LightSwitch application. It already seems to know about the five default projects: MyNewExtension.Client will be added as a reference to your LightSwitch application's Client project, MyNewExtension.Client.Design will be used by Visual Studio at design time, …

It doesn’t know what you want to happen to the reference that you added. It requires a “designation” for your “LightSwitch package”.

How can you fix it?

In the solution explorer, right-click your LSPKG project and select “Unload project”.

It will be grayed out and marked as unavailable. Right-click it again and select “Edit MyNewExtension.Lspkg.csproj”.

You will see an XML file, and the answer to your problem is found at the bottom of that file.

You can see the ProjectReference elements here that match the references of your LSPKG project. Notice the tags, underlined in blue, that your ProjectReference is missing: those are the “LightSwitch package designations”. Add the one you need (LspkgClientContent, LspkgIDEContent or LspkgServerContent), save and close the XML file, and right-click the LSPKG project in Solution Explorer to reload it.

Enjoy your LightSwitch extension!

<Return to section navigation list>

Windows Azure Infrastructure and DevOps

Jack Greenfield described Geographically Distributed High Availability in a 12/26/2011 post:

This is the last post in the series on business continuity. It briefly describes how the service developer can provide geographically distributed high availability on the Azure platform.

The Azure platform currently does not directly support highly available, geographically distributed services. Customers can deploy services to multiple data centers, but the platform does nothing to help them integrate the separate deployments, or to achieve high availability with the resulting composite.

Geographically distributed high availability is essentially continuous disaster recovery. Each tenant’s data is replicated to multiple physical service instances. Load is distributed dynamically across those service instances to provide an optimal user experience. The two primary challenges are:

- integrating physical service instances to create a single virtual service, and

- minimizing data inconsistencies as client requests are routed among the different physical service instances.

Creating a Virtual Service

To create a single virtual service from a collection of physical service instances, clients of the service must use logical URIs that can be bound to different physical URIs. Using a global load balancer (GLB), a variety of policies can then be used to select a physical service instance to service a given client request. For example, many GLBs support the following types of routing:

- ratio, which routes some fraction of client requests to each physical service instance,

- geographical, which routes client requests to physical service instances in a given geography,

- proximity, which routes client requests to the nearest physical service instances, and

- round-robin, which routes client requests to physical service instances in a round-robin fashion.
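The routing policies listed above can be sketched in C# as follows. This is a hypothetical illustration, not the API of any particular GLB product. PhysicalInstance, GlobalLoadBalancer, the example URIs, and the latency figures are all assumptions. Real proximity routing would also measure distance per client rather than store one latency per instance.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical description of one physical deployment behind a logical URI.
public sealed class PhysicalInstance
{
    public string Uri { get; set; }        // physical endpoint (illustrative)
    public string Geography { get; set; }  // e.g. "Europe"
    public double Weight { get; set; }     // share of traffic for ratio routing
    public double LatencyMs { get; set; }  // stand-in for distance to the client
}

public sealed class GlobalLoadBalancer
{
    private readonly List<PhysicalInstance> _instances;
    private int _next; // round-robin cursor

    public GlobalLoadBalancer(IEnumerable<PhysicalInstance> instances)
    {
        _instances = instances.ToList();
        if (_instances.Count == 0)
            throw new ArgumentException("At least one physical instance is required.");
    }

    // Ratio: 'sample' in [0, 1) picks an instance in proportion to its weight.
    public PhysicalInstance ByRatio(double sample)
    {
        double point = sample * _instances.Sum(i => i.Weight);
        foreach (PhysicalInstance instance in _instances)
        {
            if (point < instance.Weight) return instance;
            point -= instance.Weight;
        }
        return _instances[_instances.Count - 1]; // guards against rounding at the upper edge
    }

    // Geographical: the first instance in the client's geography, or null if none.
    public PhysicalInstance ByGeography(string geography) =>
        _instances.FirstOrDefault(i => i.Geography == geography);

    // Proximity: the instance with the lowest latency.
    public PhysicalInstance ByProximity() =>
        _instances.OrderBy(i => i.LatencyMs).First();

    // Round-robin: cycle through the instances in order.
    public PhysicalInstance ByRoundRobin()
    {
        PhysicalInstance instance = _instances[_next];
        _next = (_next + 1) % _instances.Count;
        return instance;
    }
}
```

Clients only ever see the logical URI. Each policy is just a different rule for binding that URI to one of the physical instances on a given request.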

Geographical routing is usually based on the requested domain name, but some services may require routing decisions to be based on other components of the request, such as path segments, query string parameters, or payload contents. In these cases, the service may use proximity routing to route requests to the nearest physical instance, which then proxies them to appropriate locations using a custom routing strategy.
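For the custom-routing case, a proxy on the nearest instance needs a rule that maps part of the request to a target instance. The following sketch assumes, purely for illustration, that the first path segment names a tenant and that each tenant's data lives in one physical instance. PathSegmentRouter and the example.net hosts are hypothetical.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical routing table used by a proxying instance: the first path
// segment names a tenant, and each tenant is assigned a home instance.
public sealed class PathSegmentRouter
{
    private readonly Dictionary<string, string> _homeByTenant =
        new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);

    public void Assign(string tenant, string physicalBaseUri) =>
        _homeByTenant[tenant] = physicalBaseUri;

    // Returns the physical URI to forward to, or null when the tenant is unknown.
    public string Resolve(Uri logicalRequest)
    {
        // For "https://svc.example.net/contoso/orders/7", Segments is
        // { "/", "contoso/", "orders/", "7" }.
        string[] segments = logicalRequest.Segments;
        if (segments.Length < 2) return null;

        string tenant = segments[1].TrimEnd('/');
        if (!_homeByTenant.TryGetValue(tenant, out string home)) return null;

        // Keep the original path and query string when forwarding.
        return home.TrimEnd('/') + logicalRequest.PathAndQuery;
    }
}
```

The same structure works for routing on a query-string parameter or a payload field. Only the extraction step in Resolve changes.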

Minimizing Data Inconsistencies

If the service data changes frequently, clients may see data inconsistencies, such as recently inserted data disappearing and recently deleted data reappearing, when their requests are routed to different service instances. One way to mitigate but not completely eliminate the inconsistencies is to affinitize clients to specific service instances, and to change affinities infrequently. For some data, this level of consistency may be acceptable. For other data, however, the service may have to use synchronous replication, which was described briefly in the previous post in this series, or to block writes for a client until all outstanding changes have propagated before routing their requests to a different service instance.
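Client affinity with infrequent changes can be sketched as a table that pins each client to an instance for a minimum interval. AffinityTable, the client Ids, and the interval used in the usage note are assumptions for illustration. The sketch covers only the affinity option, not synchronous replication or blocking writes.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical sketch: pin each client to one instance for a minimum interval,
// so clients are rerouted (and exposed to replication lag) only rarely.
public sealed class AffinityTable
{
    private sealed class Assignment
    {
        public string Instance;
        public DateTime AssignedAt;
    }

    private readonly Dictionary<string, Assignment> _byClient = new Dictionary<string, Assignment>();
    private readonly TimeSpan _minimumStay;

    public AffinityTable(TimeSpan minimumStay)
    {
        _minimumStay = minimumStay;
    }

    // 'preferred' is the instance the load balancer would pick right now.
    public string Route(string clientId, string preferred, DateTime now)
    {
        if (_byClient.TryGetValue(clientId, out Assignment current) &&
            now - current.AssignedAt < _minimumStay)
        {
            return current.Instance; // keep the existing affinity
        }

        // New client, or the minimum stay has elapsed: pin to the preferred instance.
        _byClient[clientId] = new Assignment { Instance = preferred, AssignedAt = now };
        return preferred;
    }
}
```

Usage: with a 30-minute minimum stay, a client first routed to "us" keeps getting "us" for half an hour, even if the balancer starts preferring "eu". A longer interval means fewer visible inconsistencies, but the service reacts more slowly to load or outages.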

<Return to section navigation list>

Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

No significant articles today.

<Return to section navigation list>

Cloud Security and Governance

No significant articles today.

<Return to section navigation list>

Cloud Computing Events

No significant articles today.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

No significant articles today.

<Return to section navigation list>
