Windows Azure and Cloud Computing Posts for 9/30/2013+

Top Stories This Week:

- Microsoft achieves FedRAMP JAB P-ATO for Windows Azure on 9/30/2013 according to the Wall Street Journal (see the press release in the Cloud Security, Compliance and Governance section below.)

- Windows 8.1 RTM and updated Sample Apps for Visual Studio 2013 RC are available for download (see the Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses section below.)

- Sam Vanhoutte provided a Feature comparison between BizTalk Server and BizTalk Services (see the Windows Azure Service Bus, BizTalk Services and Workflow section below.)

| A compendium of Windows Azure, Service Bus, BizTalk Services, Access Control, Caching, SQL Azure Database, and other cloud-computing articles. |

‡ Updated 10/5/2013 with new articles marked ‡.

• Updated 10/3/2013 with new articles marked •.

Note: This post is updated weekly or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Windows Azure Marketplace DataMarket, Power BI, Big Data and OData

- Windows Azure Service Bus, BizTalk Services and Workflow

- Windows Azure Access Control, Active Directory, and Identity

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure and DevOps

- Windows Azure Pack, Hosting, Hyper-V and Private/Hybrid Clouds

- Visual Studio LightSwitch and Entity Framework v4+

- Cloud Security, Compliance and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

<Return to section navigation list>

Brian O’Donnell began a series with Windows Azure and the future of the personalized web : Intro with a 10/4/2013 post to Perficient’s Microsoft Technologies blog:

The internet is becoming increasingly personalized. It has transitioned from indexing massive wells of information to delivering personalized information, or recommendations based on complex searches. Evidence of this is seen in Google’s Knowledge graph, Amazon, the Bing engine, Facebook friends and twitter recommending people you may be interesting in following. Recommendations are everywhere on the web and with the introduction of HDInsight on Windows Azure the personalized web will grow even larger. HDInsight is an implementation of Apache Hadoop running natively within Windows Server. Hadoop is a very powerful distributed computing solution that can process massive quantities of data.

Incorporating “non-Microsoft” technologies baked into Microsoft based services and products is a newer development. The benefits to the IT professional are infinite. Let us take HDInsight as an example. For those not familiar with Linux and installing Hadoop on a distribution of clustered nodes the process can be frustrating and time consuming (to say the least). There are many guides on line and each guide pertains to its own flavor of Linux (Gentoo vs. Red Hat vs. Ubuntu vs. CentOS etc.). The process has gotten better over the years but is still quite cumbersome. To create a Hadoop cluster within Windows Azure, simply create an HDInsight cluster from the dashboard. In a few minutes you have a fully functional Hadoop cluster ready for processing.

You may be asking yourself; “Hadoop is a distributed computing system, what does it have to do with recommendations?”. Mahout is the answer. Mahout is an open source machine learning engine that is also managed by Apache. It contains many different types of algorithms and features, but one of its most prominent is its recommendation engine. The installation process is trivial so you will have Mahout up and running in an HDInsight cluster in no time. To install Mahout on your cluster download the latest release in zip file format from the Mahout website. Copy the zip file to your one of your cluster nodes and extract the contents to C:\apps\dist. That’s it! Not only have you just installed Mahout, but you have also deployed it to your Hadoop cluster.

Next I will walk through the installation process and use Mahout to process data.

‡ Kevin Kell posted Big Data on Azure – HDInsight to the Learning Tree blog on 10/3/2013:

The HDInsight service on Azure has been in preview for some time. I have been anxious to start working with it as the idea of being able to leverage Hadoop using my favorite .NET programming language has a great appeal. Sadly I had never been able to successfully launch a cluster. Not, that is, until today. Perhaps I had not been patient enough in previous attempts, although on most tries I waited over an hour. Today, however, I was able to launch a cluster in the West US region that was up and running in about 15 minutes.

Once the cluster is running it can be managed through a web-based dashboard. It appears, however, that the dashboard will be eliminated in the future and that management will be done using PowerShell. I do hope that some kind of console interface remains but that may or may not be the case.

Figure 1. HDInsight Web-based dashboard

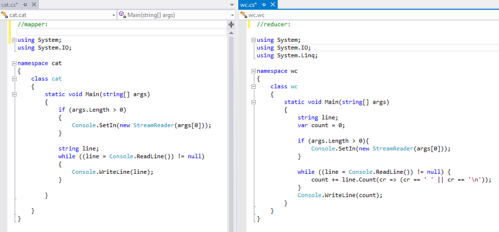

To make it easy to get started Microsoft provides some sample job flows. You can simply deploy any or all of these jobs to the provisioned cluster, execute the job and look at the output. All the necessary files to define the job flow and programming logic are supplied. These can also be downloaded and examined. I wanted to use a familiar language to write my mapper and reducer so I selected the C# sample. This is a simple word count job which is quite commonly used as an easily understood application of Map/Reduce. In this case the mapper and reducer are just simple C# console programs that read and write to stdin and stdout which are redirected to files or Azure Blob storage in the job flow.

Figure 2. Word count mapper and reducer C# code

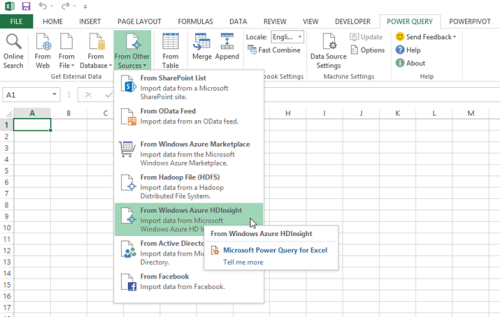

One thing that is pretty cool about the Microsoft BI stack is that it is pretty straightforward to work with HDInsight output using the Microsoft BI Tools. For example the output from the job above can be consumed in Excel using the Power Query add-in.

Figure 3. Consuming HDInsight data in Excel using Power Query

That, however, is a discussion topic for another time!

If you are interested in learning more about Big Data, Cloud Computing or using Excel for Business Intelligence why not consider attending one of the new Learning Tree courses?

Neil MacKenzie (@nmkz) posted an Introduction to Windows Azure Media Services on 9/30/2013:

Windows Azure Media Services (WAMS) is a PaaS offering that makes it easy to ingest media assets, encode them and then perform on-demand streaming or downloads of the resulting videos.

The WAMS team has been actively proselytizing features as they become available. Mingfei Yan (@mingfeiy) has a number of good posts and she also provided the WAMS overview at Build 2013. Nick Drouin has a nice short post with a minimal demonstration of using the WAMS SDK to ingest, process and smooth stream a media asset. John Deutscher (@johndeu) has several WAMS posts on his blog including an introduction to the MPEG DASH preview on WAMS. Daniel Schneider and Anthony Park did a Build 2013 presentation on the MPEG DASH preview.

Windows Azure Media Services is a a multi-tenant service with shared encoding and shared on-demand streaming egress capacity. The basic service queues encoding tasks to ensure fair distribution of compute capacity and imposes a monthly egress limit for streaming. Encoding is billed depending on the quantity of data processed, while streaming is billed at the standard Windows Azure egress rates. It is possible to purchase reserved units for encoding to avoid the queue – with each reserved unit being able to perform a single encoding task at a time (additional simultaneous encoding tasks would be queued). It is also possible to purchase reserved units for on-demand streaming – with each reserved unit providing an additional 200Mbps of egress capacity. Furthermore, the Dynamic Packaging for MPEG-DASH preview is available only to customers which have purchased reserved units for on-demand streaming.

The entry point to the WAMS documentation is here. The Windows Azure Media Services REST API is the definitive way to access WAMS from an application. The Windows Azure Media Services SDK is a .NET library providing a more convenient way to access WAMS. As with most Windows Azure libraries, Microsoft has deployed the source to GitHub. The SDK can be added to a Visual Studio solution using NuGet.

The Windows Azure SDK for Java also provides support for WAMS development. The Developer tools for WAMS page provides links to these libraries as well as to developer support for creating on-demand streaming clients for various environments including Windows 8, Windows Phone, iOS and OSMF.

The Windows Azure Portal hosts a getting started with WAMS sample. The Windows Azure Management Portal provides several samples on the Quick Start page for a WAMS account.

Windows Azure Media Services Account

The Windows Azure Management Portal provides a UI for managing WAMS accounts, content (assets), jobs, on-demand streaming and media processor. A WAMS account is created in a specific Windows Azure datacenter. Each account has an account name and account key, that the WAMS REST API (and .NET API) uses to authenticate requests. The account name also parameterizes the namespace for on-demand streaming (e.g., http://MyMediaServicesAccount.origin.mediaservices.windows.net).

Each WAMS account is associated with one or more Windows Azure Storage accounts, and are used to store the media assets controlled by the WAMS account. The association of a storage account allows the WAMS endpoint to be used as a proxy to generate Windows Azure Storage shared-access signatures that can be used to authenticate asset uploads and downloads from/to a client without the need to expose storage-account credentials to the client.

Workflow for Handling Media

The workflow for using WAMS is:

- Setup – create the context used to access WAMS endpoints.

- Ingestion – upload one or more media files to Windows Azure Blob storage where they are referred to as assets.

- Processing – perform any required process, such as encoding, to create output assets from the input assets.

- Delivery – generate the locators (URLs) for delivery of the output assets as either downloadable files or on-demand streaming assets.

Setup

WAMS exposes a REST endpoint that must be used by all operations accessing the WAMS account. These operations use a WAMS context that manages authenticated access to WAMS capabilities. The context is exposed as an instance of the CloudMediaContext class.

The simplest CloudMediaContext constructor for this class takes an account name and account key. Newing up a CloudMediaContext causes the appropriate OAuth 2 handshake to be performed and the resulting authentication token to be stored in the CloudMediaContext instance. Behind the scenes, the initial connection is against a well-known endpoint (https://media.windows.net/), with the response containing the the actual endpoint to use for this WAMS account. The CloudMediaContext constructor handles with initial authentication provided by the WAMS account name and account key and subsequent authentication provided by an OAuth 2 token.

CloudMediaContext has a number of properties, many of which are IQueryable collections of information about the media services account and its current status including:

- Assets – an asset is a content file managed by WAMS.

- IngestManifests – an ingest manifest associates a list of files to be uploaded with a list of assets.

- Jobs – a job comprises one or more tasks to be performed on an asset.

- Locators – a locator associates an asset with an access policy and so provides the URL with which the asset can be accessed securely.

- MediaProcessors – a media processor specifies the type of configurable task that can be performed on an asset.

These are “expensive” to populate since they require a request against the WAMS REST API so are populated only on request. For example, the following retrieves a list of jobs created in the last 10 days:

The filter is performed on the server, with the filter being passed in the query string to the appropriate REST operation. Documentation on the allowed query strings seems light.

Note that repopulating the collections requires a potentially expensive call against the WAMS REST endpoint. Consequently, the collections are not automatically refreshed. Accessing the current state of a collection – for example, to retrieve the result of a job – may require newing up a new context to access the collection.

Ingestion

WAMS tasks perform some operation that converts an input asset to an output asset. An asset comprises one or more files located in Windows Azure Blob storage along with information about the status of the asset. An instance of an asset is contained in a class implementing the IAsset interface which exposes properties like:

- AssetFiles – the files managed by the asset.

- Id – unique Id for the asset.

- Locators – a locator associates an asset with an access policy and so provides the URL with which the asset can be accessed securely.

- Name – friendly name of the asset.

- State – current state of the asset (initialized, published, deleted).

- StorageAccountName – name of the storage account in which the asset is located.

The ingestion step of the WAMS workflow does the following:

- creates an asset on the WAMS server

- associates files with the asset

- uploads the files to the Windows Azure Blob storage

The asset maintains the association between the asset Id and the location of the asset files in Windows Azure Blob storage.

WAMS provides two file uploading techniques.

- individual file upload

- bulk file ingestion

Individual file upload requires the creation of an asset and then a file upload into the asset. The following example is a basic example of uploading a file to WAMS:

WAMS uses the asset as a logical container for uploaded files. In this example, WAMS creates a blob container with the same name as the asset.Id and then uploads the media file into it as a block blob. The asset provides the association between WAMS and the Windows Azure Storage Service.

This upload uses one of the WAMS methods provided to access the Storage Service. These methods provide additional functionality over that provided in the standard Windows Azure Storage library. For example, they provide the ability to track progress and completion of the upload.

When many files must be ingested an alternative technique is to create an ingestion manifest, using a class implementing the IIngestManifest interface, providing information about the files to be uploaded. The ingest manifest instance then exposes the upload URLs, with a shared access signature, which can be used to upload the files using the Windows Azure Storage API.

Note that the asset Id is in the form: nb:cid:UUID:ceb012ff-7c38-46d5-b58b-434543cd9032. The UUID is the container name which will contain all the media files associated with the asset.

Processing

WAMS supports the following ways of processing a media asset:

- Windows Azure Media Encoder

- Windows Azure Media Packager

- Windows Azure Media Encryptor

- Storage Decryption

The Windows Azure Media Encoder takes an input media asset and performs the specified encoding on it to create an output media asset. The input media asset must have been uploaded previously. WAMS supports various file formats for audio and video, and supports many encoding techniques which are specified using one of the Windows Azure Media Encoder presets. For example, the VC1 Broadband 720P preset creates a single Windows Media file with 720P variable bit rate encoding while the VC1 Smooth Streaming preset produces a Smooth Streaming asset comprising a 1080P video with variable bit rate encoding at 8 bitrates from 6000 kbps to 400kbps. The format for the names of output media assets created by the Windows Azure Media Encoder is documented here.

The Windows Azure Media Packager provides an alternate method to create Smooth Streaming or Apple Http Live Streaming (HLS) asset. The latter cannot be created using the Windows Azure Media Encoder. Rather than use presets, the Windows Azure Media Packager is configured using an XML file.

The Windows Azure Media Encryptor is used to manage the encryption of media assets, which is used in the digital rights management (DRM) of output media assets. The Windows Azure Media Encryptor is configured using an XML file.

Windows Azure Storage Encryption is used to decrypt media assets.

Media assets are processed by the creation of a job comprising one or more tasks. Each task uses one of the WAMS processing techniques described above. For example, a simple job may comprise a single task that performs a VC1 Smooth Streaming encoding task to create the various output media files required for the smooth streaming of an asset.

For example, the following sample demonstrates the creation and submission of a job comprising a single encoding task.

This sample creates a job with some name on the WAMS context. It then identifies an appropriate WAMS encoder and uses that to create a VC1 Broadband 720p encoding task which is added to the job. Then, it identifies an asset already attached to the context, perhaps the result of a prior ingesting on it, and adds it as an input to the task. Finally, it adds a new output asset to the task and submits.

When completed, the output asset files will be stored in the container identified by the asset Id for the output asset of the task. There are two files created in this sample:

- SomeFileName_manifest.xml

- SomeFileName_VC1_4500kbps_WMA_und_ch2_128kbps.wmv

The manifest XML file provides metadata – such as bit rates – for the audio and video tracks in the output file.

Delivery

WAMS supports both on-demand streaming and downloads of output media assets. The files associated with an asset are stored in Windows Azure Blob Storage and require appropriate authentication before they can be accessed. Since the processed files are typically intended for wide distribution some means must be provided whereby they can be accessed without the need to share the highly-privileged account key with the users.

WAMS provides different techniques for accessing the files depending on whether they are intended for download or smooth streaming. It uses and provides API support for the standard Windows Azure Storage shared-access signatures for downloading media files to be downloaded. For streaming media it hosts an endpoint that proxies secured access to the files in the asset.

For both file downloads and on-demand streaming, WAMS uses the an IAccessPolicy to specify the access permissions for a media resource. The IAccessPolicy is then associated with an ILocator for an asset to provide the path with which to access the media files.

The following sample shows how to generate the URL that can be used to download a media file:

The resulting URL can be used to download the media file in code or using a browser. No further authentication is needed since the query string of the URL contains a shared-access signature. A download URL looks like the following:

The following sample shows how to generate the URL for on-demand streaming:

This generates a URL for a on-demand streaming manifest. The following is an example manifest URL:

This manifest file can be used in a media player capable of supporting smooth streaming. A demonstration on-demand streaming player can be accessed here.

Summary

The Windows Azure Media Services team has done a great job in creating a PaaS media ingestion, processing and content-provision service. It is easy to setup and use, and provides both Portal and API support.

<Return to section navigation list>

Windows Azure SQL Database, Federations and Reporting, Mobile Services

‡‡ Alexandre Brisebois (@Brisebois) described Creating NONCLUSTERED INDEXES on Massive Tables in Windows Azure SQL Database in a 9/29/2013 post:

There are times on Windows Azure SQL Database when tables get to a certain size and that trying to create indexes results in timeouts.

A few months ago when I started to get these famous timeouts, I had reached 10 million records and I felt like I was looking for a needle in a hay stack!

This blog post is all about creating NONCLUSTERED INDEXES, I will try to go over best practices and reasons to keep in mind when you use them in Windows Azure SQL Database.

Interesting Facts

- If a CLUSTERED INDEX is present on the table, then NONCLUSTERED INDEXES will use its key instead of the table ROW ID.

- To reduce the size consumed by the NONCLUSTERED INDEXES it’s imperative that the CLUSTERED INDEX KEY is kept as narrow as possible.

- Physical reorganization of the CLUSTERED INDEX does not physically reorder NONCLUSTERED INDEXES.

- SQL Database can JOIN and INTERSECT INDEXES in order to satisfy a query without having to read data directly from the table.

- Favor many narrow NONCLUSTERED INDEXES that can be combined or used independently over wide INDEXES that can be hard to maintain.

- Create Filtered INDEXES to create highly selective sets of keys for columns that may not have a good selectivity otherwise.

- Use Covering INDEXEs to reduce the number of bookmark lookups required to gather data that is not present in the other INDEXES.

- Covering INDEXES can be used to physically maintain the data in the same order as is required by the queries’ result sets reducing the need for SORT operations.

- Covering INDEXES have an increased maintenance cost, therefore you must see if performance gain justifies the extra maintenance cost.

- NONCLUSTERED INDEXES can reduce blocking by having SQL Database read from NONCLUSTERED INDEX data pages instead of the actual tables.

Creating a NONCLUSTERED INDEX

In order to look at a few examples lets start with the following table.

CREATE TABLE [dbo].[TestTable] (

[Id] UNIQUEIDENTIFIER DEFAULT (newid()) NOT NULL,

[FirstName] NVARCHAR (10) NOT NULL,

[LastName] NVARCHAR (10) NOT NULL,

[Type] INT DEFAULT ((0)) NOT NULL,

[City] NVARCHAR (10) NOT NULL,

[Country] NVARCHAR (10) NOT NULL,

[Created] DATETIME2 (7) DEFAULT (getdate()) NOT NULL,

[Timestamp] ROWVERSION NOT NULL,

PRIMARY KEY NONCLUSTERED ([Id] ASC)

);GO

CREATE CLUSTERED INDEX [IX_TestTable]

ON [dbo].[TestTable]([Created] ASC);

The table has a CLUSTERED INDEX on the Created column. The reasons why this might be an interesting choice for the CLUSTERED INDEX were discussed in my previous blog post about building Clustered Indexes on non-primary key columns in Windows Azure SQL Database.

For the sake of this example, lets imagine that this table contains roughly 30 millions records. Creating a NONCLUSTERED INDEX on this table might result in a timeout or an aborted transaction due to restrictions imposed by Windows Azure SQL Database. One of these restrictions, is that a transaction log cannot exceed 1GB in size.

On Windows Azure SQL Database, you must use the ONLINE=ON option in order to reduce locking and the transaction log size. Furthermore, this option will greatly reduce your chances of getting a timeout.

CREATE INDEX [IX_TestTable_Country_City_LastName_FirstName]

ON [dbo].[TestTable]

([Country] ASC,[City] ASC,[LastName] ASC,[FirstName] ASC)

WITH(ONLINE=ON);Creating a Filtered NONCLUSTERED INDEX

A where clause is added to the index. This results in a Filtered index and greatly helps to create smaller and more effective INDEXES. I recommend taking a closer look at this type of index because bytes are precious on Windows Azure SQL Database.

CREATE INDEX [IX_TestTable_Type_LastName_FirstName_where_Type_greater_than_1]

ON [dbo].[TestTable]

([Type] ASC,[LastName] ASC,[FirstName] ASC)

WHERE [Type] > 1

WITH(ONLINE=ON);Creating a Covering NONCLUSTERED INDEX

The include clause can be used to include columns that are selected by the queries but that are not filtered upon through where clauses. The include clause can also be used to include columns that cannot be added to the INDEX key.

CREATE INDEX [IX_TestTable_Covering_Index]

ON [dbo].[TestTable]

([Type] ASC,[LastName] ASC,[FirstName] ASC)

INCLUDE([City], [Country], [Id])

WITH(ONLINE=ON);

Philip Fu posted [Sample Of Sep 30th] How to view SQL Azure Report Services to the Microsoft All-In-One Code Framework blog on 9/30/2013:

Sample Download : http://code.msdn.microsoft.com/CSAzureSQLReportingServices-e3ffff52

The sample code demonstrates how to access SQL Azure Reporting Service.

You can find more code samples that demonstrate the most typical programming scenarios by using Microsoft All-In-One Code Framework Sample Browser or Sample Browser Visual Studio extension. They give you the flexibility to search samples, download samples on demand, manage the downloaded samples in a centralized place, and automatically be notified about sample updates. If it is the first time that you hear about Microsoft All-In-One Code Framework, please watch the introduction video on Microsoft Showcase, or read the introduction on our homepage http://1code.codeplex.com/.

<Return to section navigation list>

Windows Azure Marketplace DataMarket, Cloud Numerics, Big Data and OData

‡‡ Leo Hu explained oData and JSON Format in a 10/4/2013 post:

The Open Data Protocol (OData) is a data access protocol built on core protocols like HTTP and commonly accepted methodologies like REST for the web. OData provides a uniform way to query and manipulate data sets through CRUD operations (create, read, update, and delete).

JSON is a lightweight data-interchange format. It is easy for humans to read and write. It is easy for machines to parse and generate. JSON is a text format that is completely language independent. JSON is built on a collection of name/value pairs realized in most modern languages.

OData’s JSON format extends JSON by defining a set of canonical annotations for control information such as ids, types, and links, and custom annotations MAY be used to add domain-specific information to the payload.

A key feature of OData’s JSON format is to allow omitting predictable parts of the wire format from the actual payload. Expressions are used to compute missing links, type information, and other control data on client side. Annotations are used in JSON to capture control information that cannot be predicted (e.g., the next link of a collection of entities) as well as a mechanism to provide values where a computed value would be wrong (e.g., if the media read link of one particular entity does not follow the standard URL conventions).

Computing values from metadata expressions on client side could be very expense. To accommodate for this the Accept header allows the client to control the amount of control information added to the response.

This doc is aiming to provide a quick overview of what’s the JSON payload would be look like in various typical scenarios.

Data model Used for the Sample

We use the Northwind database as the SQL sample database in all our JSON examples. Northwind is a fictitious company that imports and exports foods globally. The Northwind sample database provides a good database structure for our JSON experiment.

Please see http://msdn.microsoft.com/en-us/library/ms227484(v=vs.80).aspx to get instructions of how to setup the database with your sqlserver

The following database diagram illustrates the Northwind database structure:

Response payload samples

This section provides JSON samples of response payloads for the payload kinds supported in OData. The samples are based on a northwind domain.

Read an EntitySet

http://services.odata.org/V3/OData/OData.svc/Products?$format=json

{

"odata.metadata":"http://services.odata.org/V3/OData/OData.svc/$metadata#Products",

"value":[

{

"ID":0,

"Name":"Bread",

"Description":"Whole grain bread",

"ReleaseDate":"1992-01-01T00:00:00",

"DiscontinuedDate":null,

"Rating":4,

"Price":"2.5"

},

{

"ID":1,

"Name":"Milk",

"Description":"Low fat milk",

"ReleaseDate":"1995-10-01T00:00:00",

"DiscontinuedDate":null,

"Rating":3,

"Price":"3.5"

},

{

"ID":2,

"Name":"Vint soda",

"Description":"Americana Variety - Mix of 6 flavors",

"ReleaseDate":"2000-10-01T00:00:00",

"DiscontinuedDate":null,

"Rating":3,

"Price":"20.9"

},

…

]

}Read an Entity

http://services.odata.org/V3/OData/OData.svc/Products(2)?$format=json

{

"odata.metadata":"http://services.odata.org/V3/OData/OData.svc/$metadata#Products/@Element",

"ID":2,

"Name":"Vint soda",

"Description":"Americana Variety - Mix of 6 flavors",

"ReleaseDate":"2000-10-01T00:00:00",

"DiscontinuedDate":null,

"Rating":3,

"Price":"20.9"

}Read Property

http://services.odata.org/V3/OData/OData.svc/Products(2)/Description?$format=json

{

"odata.metadata":"http://services.odata.org/V3/OData/OData.svc/$metadata#Edm.String",

"value":"Americana Variety - Mix of 6 flavors"

}http://odataservices.azurewebsites.net/OData/OData.svc/Products(0)/Name?$format=application/json

{

"odata.metadata":"http://odataservices.azurewebsites.net/OData/OData.svc/$metadata#Edm.String",

"value":"Bread"

}Remove an Entity

DELETE http://services.odata.org/V3/(S(ettihtez1pypsghekhjamb1u))/OData/OData.svc/Products(200) HTTP/1.1

DataServiceVersion: 1.0;NetFx

MaxDataServiceVersion: 3.0;NetFx

Accept: application/json;odata=minimalmetadata

Accept-Charset: UTF-8

User-Agent: Microsoft ADO.NET Data Services

Host: services.odata.org

Create an Entry

POST http://services.odata.org/v3/(S(ettihtez1pypsghekhjamb1u))/odata/odata.svc/Products HTTP/1.1

DataServiceVersion: 3.0;NetFx

MaxDataServiceVersion: 3.0;NetFx

Content-Type: application/json;odata=minimalmetadata

Accept: application/json;odata=minimalmetadata

Accept-Charset: UTF-8

User-Agent: Microsoft ADO.NET Data Services

Host: services.odata.org

Content-Length: 180

Expect: 100-continue

{

"odata.type":"ODataDemo.Product",

"Description":null,

"DiscontinuedDate":null,

"ID":200,

"Name":"My new product from leo",

"Price":"5.6",

"Rating":0,

"ReleaseDate":"0001-01-01T00:00:00"

}Update an Entry with PATCH and PUT

PATCH

Services SHOULD support PATCH as the preferred means of updating an entity. PATCH provides more resiliency between clients and services by directly modifying only those values specified by the client.

PATCH http://services.odata.org/V3/(S(ettihtez1pypsghekhjamb1u))/OData/OData.svc/Products(200) HTTP/1.1

DataServiceVersion: 3.0;NetFx

MaxDataServiceVersion: 3.0;NetFx

Content-Type: application/json;odata=minimalmetadata

Accept: application/json;odata=minimalmetadata

Accept-Charset: UTF-8

User-Agent: Microsoft ADO.NET Data Services

Host: services.odata.org

Content-Length: 180

Expect: 100-continue

{

"Name":"Update name from leo"

}PUT

Services MAY additionally support PUT, but should be aware of the potential for data-loss in round-tripping properties that the client may not know about in advance, such as open or added properties, or properties not specified in metadata. Services that support PUT MUST replace all values of structural properties with those specified in the request body

PUT http://services.odata.org/V3/(S(ettihtez1pypsghekhjamb1u))/OData/OData.svc/Products(200) HTTP/1.1

DataServiceVersion: 3.0;NetFx

MaxDataServiceVersion: 3.0;NetFx

Content-Type: application/json;odata=minimalmetadata

Accept: application/json;odata=minimalmetadata

Accept-Charset: UTF-8

User-Agent: Microsoft ADO.NET Data Services

Host: services.odata.org

Content-Length: 180

Expect: 100-continue

{

"odata.type":"ODataDemo.Product",

"Description":null,

"DiscontinuedDate":null,

"ID":200,

"Name":"Update name from leo",

"Price":"5.6",

"Rating":0,

"ReleaseDate":"0001-01-01T00:00:00"

}Read Complex Type

http://services.odata.org/V3/OData/OData.svc/Suppliers(1)/Address?$format=json

{

"odata.metadata":"http://services.odata.org/V3/OData/OData.svc/$metadata#ODataDemo.Address",

"Street":"NE 40th",

"City":"Redmond",

"State":"WA",

"ZipCode":"98052",

"Country":"USA"

}Read Entry with expanded navigation links

http://odataservices.azurewebsites.net/OData/OData.svc/Products(0)/Category?$expand=ID&$format=json

{

"odata.metadata":"http://odataservices.azurewebsites.net/OData/OData.svc/$metadata#Categories/@Element",

"ID":0,

"Name":"Food"

}Read Service document

http://odataservices.azurewebsites.net/OData/OData.svc/?$format=json

{

"odata.metadata":"http://odataservices.azurewebsites.net/OData/OData.svc/$metadata",

"value":[

{

"name":"Products",

"url":"Products"

},

{

"name":"Advertisements",

"url":"Advertisements"

},

{

"name":"Categories",

"url":"Categories"

},

{

"name":"Suppliers",

"url":"Suppliers"

}

]Read an EntitySet with full metadata

http://services.odata.org/V3/OData/OData.svc/Products?$format=application/json;odata=fullmetadata

{

"odata.metadata":"http://services.odata.org/V3/OData/OData.svc/$metadata#Products",

"value":[

{

"odata.type":"ODataDemo.Product",

"odata.id":"http://services.odata.org/V3/OData/OData.svc/Products(0)",

"odata.editLink":"Products(0)",

"Category@odata.navigationLinkUrl":"Products(0)/Category",

"Category@odata.associationLinkUrl":"Products(0)/$links/Category",

"Supplier@odata.navigationLinkUrl":"Products(0)/Supplier",

"Supplier@odata.associationLinkUrl":"Products(0)/$links/Supplier",

"ID":0,

"Name":"Bread",

"Description":"Whole grain bread",

"ReleaseDate@odata.type":"Edm.DateTime",

"ReleaseDate":"1992-01-01T00:00:00",

"DiscontinuedDate":null,

"Rating":4,

"Price@odata.type":"Edm.Decimal",

"Price":"2.5"

},

{

"odata.type":"ODataDemo.Product",

"odata.id":"http://services.odata.org/V3/OData/OData.svc/Products(1)",

"odata.editLink":"Products(1)",

"Category@odata.navigationLinkUrl":"Products(1)/Category",

"Category@odata.associationLinkUrl":"Products(1)/$links/Category",

"Supplier@odata.navigationLinkUrl":"Products(1)/Supplier",

"Supplier@odata.associationLinkUrl":"Products(1)/$links/Supplier",

"ID":1,

"Name":"Milk",

"Description":"Low fat milk",

"ReleaseDate@odata.type":"Edm.DateTime",

"ReleaseDate":"1995-10-01T00:00:00",

"DiscontinuedDate":null,

"Rating":3,

"Price@odata.type":"Edm.Decimal",

"Price":"3.5"

},

]

}Read an Entity with full metadata

http://services.odata.org/V3/OData/OData.svc/Products(2)?$format=application/json;odata=fullmetadata

{

"odata.metadata":"http://services.odata.org/V3/OData/OData.svc/$metadata#Products/@Element",

"odata.type":"ODataDemo.Product",

"odata.id":"http://services.odata.org/V3/OData/OData.svc/Products(2)",

"odata.editLink":"Products(2)",

"Category@odata.navigationLinkUrl":"Products(2)/Category",

"Category@odata.associationLinkUrl":"Products(2)/$links/Category",

"Supplier@odata.navigationLinkUrl":"Products(2)/Supplier",

"Supplier@odata.associationLinkUrl":"Products(2)/$links/Supplier",

"ID":2,

"Name":"Vint soda",

"Description":"Americana Variety - Mix of 6 flavors",

"ReleaseDate@odata.type":"Edm.DateTime",

"ReleaseDate":"2000-10-01T00:00:00",

"DiscontinuedDate":null,

"Rating":3,

"Price@odata.type":"Edm.Decimal",

"Price":"20.9"

}Read Entry with expanded navigation links with full metadata

{

"odata.metadata":"http://odataservices.azurewebsites.net/OData/OData.svc/$metadata#Categories/@Element",

"odata.type":"ODataDemo.Category",

"odata.id":"http://odataservices.azurewebsites.net/OData/OData.svc/Categories(0)",

"odata.editLink":"Categories(0)",

"Products@odata.navigationLinkUrl":"Categories(0)/Products",

"Products@odata.associationLinkUrl":"Categories(0)/$links/Products",

"ID":0,

"Name":"Food"

}

<Return to section navigation list>

Windows Azure Service Bus, BizTalk Services and Workflow

• Sam Vanhoutte (@SamVanhoutte) provided a Feature comparison between BizTalk Server and BizTalk Services in a 10/3/2013 post to his Codit.eu blog:

I have given quite some sessions and presentations recently on Windows Azure BizTalk Services. We had the opportunity to work together with the product team on WABS as a strategic Launch Partner. I had a lot of discussions and questions on the technology and a lot of these questions were focused on a comparison with BizTalk Server…

Therefore, I decided to create this blog post, I created a comparison table, much like the comparison tables you can see on consumer web sites (for mobile phones, computers, etc…)

If you need more information or have some feedback, don’t hesitate to contact me.

Connectivity & adapters

Some adapters are not applicable in cloud services (File, for example), where others are. BizTalk Services has a lot of adapters not available. Custom adapters can only be written through the outbound WCF bindings.

Core messaging capabilities

The biggest difference here is the routing pattern that is totally different between both products. More can be read in an earlier post: Windows Azure Bridges & Message Itineraries: an architectural insight. If you want durable messaging, Service Bus queues/topics are the answer, but the biggest problem is that WABS cannot have subscriptions or queues as sources for bridges.

Message processing

We have good feature parity here on these items. The main thing missing would be JSON support. For that, we have written a custom component already that supports JSON.

Management & deployment experience

In my opinion, this is where the biggest challenge lies for WABS. Administration and management is really not what we are used with BizTalk Server. Configuration is very difficult (no binding file concept) and endpoint management is also not that easy to do.

Trading partner management & EDI

The TPM portal of WABS is really very nice and much friendlier than the BizTalk admin console of BizTalk. The biggest issue with EDI is the fact that there is no possibility to extend and customize the EDI bridge…

Extensibility

Luckily the product team did good efforts to add extensibility and the usability of custom bridge components should still be evolved well.

Security

Added value services

This is really where BizTalk Server leads, compared to WABS. And for most real solutions, these services are often needed.

<Return to section navigation list>

Windows Azure Access Control, Active Directory, Identity and Workflow

‡ Pradeep reported Windows Azure Active Directory Has Processed Over 430 Billion User Authentications in a 10/3/2013 post to Microsoft-News.com:

Windows Azure AD is the Microsoft’s Active Directory in the cloud. It offers enterprise class identity services in the cloud with support for multi-factor authentication and more. Microsoft yesterday announced no.of new features and stats about Azure AD yesterday.

As of yesterday, we have processed over 430 Billion user authentications in Azure AD, up 43% from June. And last week was the first time that we processed more than 10 Billion authentications in a seven day period. This is a real testament to the level of scale we can handle! You might also be interested to learn that more than 1.4 million business, schools, government agencies and non-profits are now using Azure AD in conjunction with their Microsoft cloud service subscriptions, an increase of 100% since July.

And maybe even more amazing is that we now have over 240 million user accounts in Azure AD from companies and organizations in 127 countries around the world. It is a good thing we’re up to 14 different data centers – it looks like we’re going to need it.

Also they announced these free enhancements for Windows Azure AD:

- SSO to every SaaS app we integrate with – Users can Single Sign On to any app we are integrated with at no charge. This includes all the top SAAS Apps and every app in our application gallery whether they use federation or password vaulting. Unlike some of our competitors, we aren’t going to charge you per user or per app fees for SSO. And with 227 apps in the gallery and growing, you’ll have a wide variety of applications to choose from.

- Application access assignment and removal – IT Admins can assign access privileges to web applications to the users in their directory assuring that every employee has access to the SAAS Apps they need. And when a user leaves the company or changes jobs, the admin can just as easily remove their access privileges assuring data security and minimizing IP loss

- User provisioning (and de-provisioning) –IT admins will be able to automatically provision users in 3rd party SaaS applications like Box, Salesforce.com, GoToMeeting. DropBox and others. We are working with key partners in the ecosystem to establish these connections, meaning you no longer have to continually update user records in multiple systems.

- Security and auditing reports – Security is always a priority for us. With the free version of these enhancements you’ll get access to our standard set of access reports giving you visibility into which users are using which applications, when they were using and where they are using them from. In addition, we’ll alert you to un-usual usage patterns for instance when a user logs in from multiple locations at the same time. We are doing this because we know security is top of mind for you as well.

Our Application Access Panel – Users are logging in from every type of devices including Windows, iOS, & Android. Not all of these devices handle authentication in the same manner but the user doesn’t care. They need to access their apps from the devices they love. Our Application Access Panel is a single location where each user can easily access and launch their apps.

• Vittorio Bertocci (@vibronet) explained Provisioning a Windows Azure Active Directory Tenant as an Identity Provider in an ACS Namespace–Now Point & Click! in a 10/3/2013 post:

About one year ago I wrote a post about how to provision a Windows Azure AD tenant as an identity provider in an ACS namespace.

Lots of things changed in a year! Since then, we spoke more at length about the relationship between AAD and ACS. If you didn’t read that post, please make a quick jaunt there as it’s super important you internalize its message before reading what I’ll write here. Done? excellent!

In the past year Windows Azure AD made giant steps in term of usability and features set, and hit general availability. That means that many of the artisanal steps I described in the old walkthrough are no longer necessary today. In fact, you can do everything I’ve described there just by filling forms in the Windows Azure portal!

The idea behind the scenario remains the same. You have a web application which trusts an ACS namespace. You want one or more Ad tenants to be available among the identity providers in that namespace. Hence what you need to do is

- Provision in the AAD tenant the ACS namespace in form of a web app (so that it can be a recipient of tokens issued by AAD)

- Provision in the ACS namespace the STS associated to the AAD tenant

The rest is usual ACS: create RPs, add rules, the usual drill (which can be automated by the Identity and Access tool in VS2012. No equivalent capability in VS2013 exists, see the note at the beginning of the post).

Too abstract? Let’s turn this into instructions.

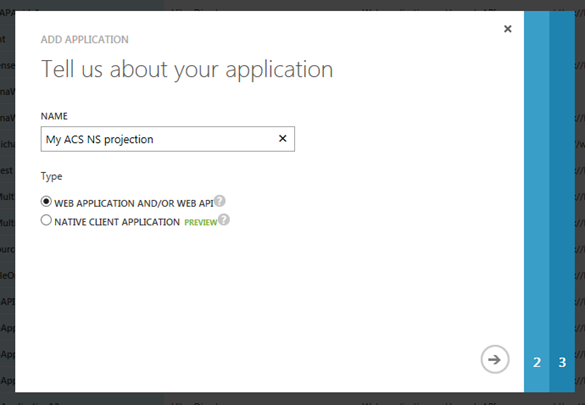

Provision in the AAD tenant the ACS namespace in form of a web app

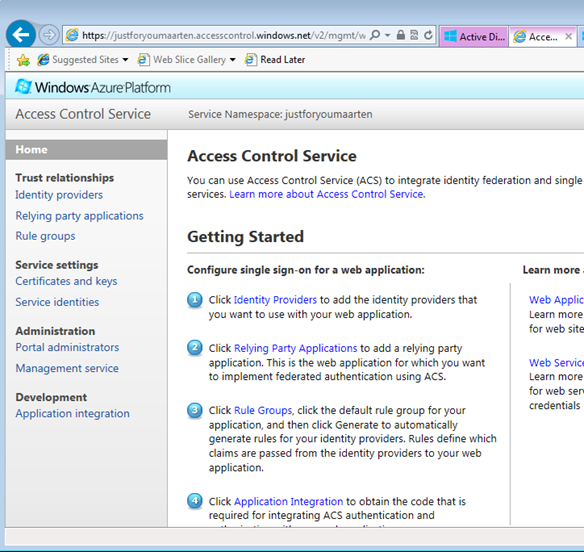

Here I’ll assume you already have a Windows Azure subscription, an ACS namespace and a Windows Azure AD tenant. Let’s say that your namespace is https://justforyoumaarten.accesscontrol.windows.net.

Navigate to https://manage.windowsazure.com/, sign in, head to the AD tab, click on your directory, click on the applications header, and hit the “ADD” button on the bottom center area of the command bar.

Leave the default (web application), assign a name you’ll remember and move to the next screen.

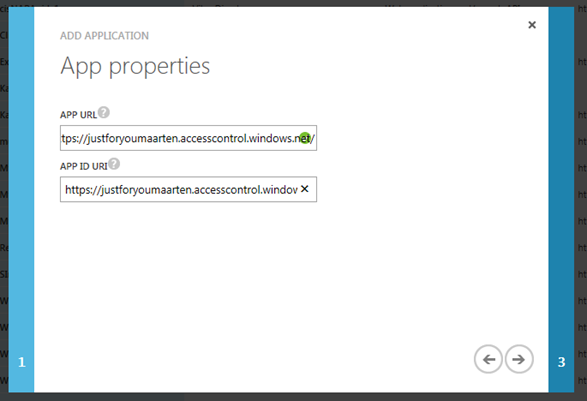

Here, paste the namespace in both fields. Why? Simple. The URL is where the token will be redirected upon successful auth, and you want that to be the ACS namespace. The URI is the audience for which the token will be scoped to, and any value OTHER than the entityID of the ACS namespace (as you find it in the ACS metadata docs) would be interpreted by ACS as a replayed token from some MITM. Sounds like Klingon? Don’t worry! That’s a level of detail you don’t need to deal with, as long as you follow the above instructions exactly.

Move to the next screen.

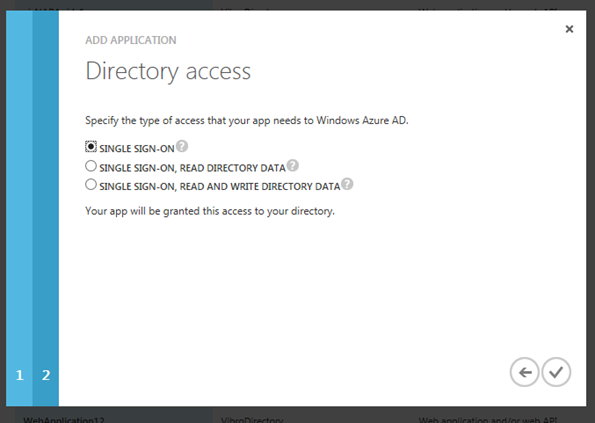

ACS will make no attempts to call the Graph, hence you can leave the default (SSO) and finalize.

Congratulations! Now your AD tenant knows about your ACS namespace and can issue tokens for it!

Provision in the ACS namespace the STS associated to the AAD tenant

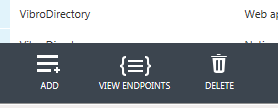

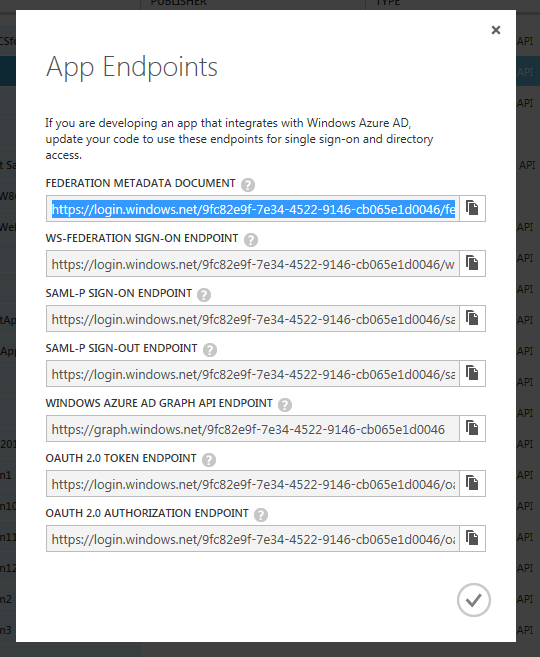

Time to do the same in the opposite direction. Before you leave the app list, there’s a last thing we need to do here: click on the “view endpoints” command on the bottom of the bar.

When you do so, the portal will display the collection of endpoints that you need to know if you want to interact with your AAD tenant at the protocol level:

You’ll want to put in the clipboard the fed metadata document, as it will be what we will use for introducing our AAD tenant to the ACS namespace.

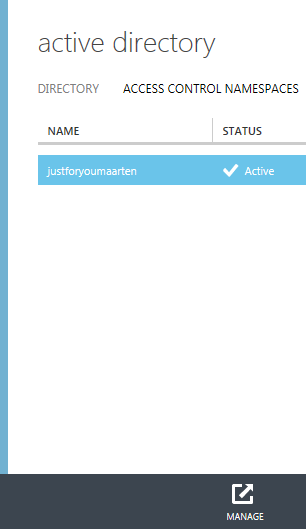

Click the big back arrow on the top left corner of the screen, which will bring you back to the top level active directory screen. This time, click on the header access control namespaces.

Here you’ll find your namespace. Select it, then click on the “manage” button in the bottom command bar. That will lead you to the ACS portal for managing the namespace.

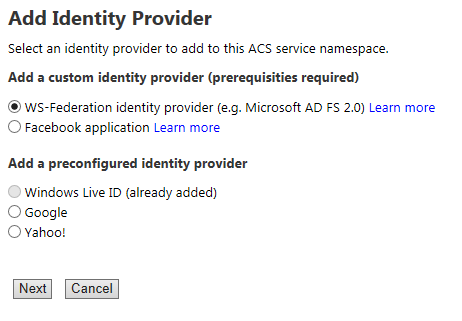

Head to the Identity providers section. Once here, select WS-Federation identity provider and click next:

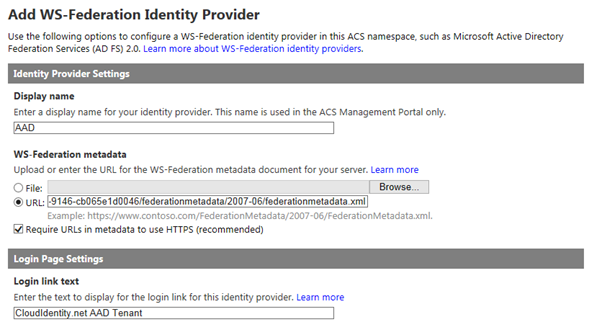

Choose anything you want as display name and login link text. Paste the address of the AAD tenant’s federation metadata in the URL text field of the metadata section.

and you’re done! Hit Save.

App work

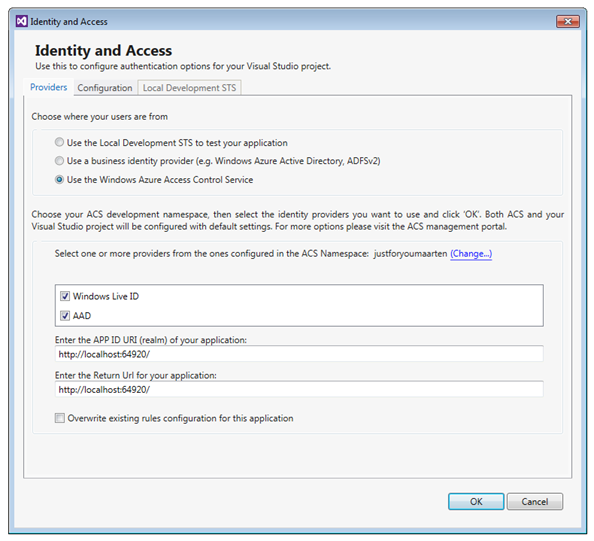

All the service side work for enabling the scenario is done. All that’s left is the work you’d need to do for every RP app you want to create in the ACS namespace. For that, the instructions are pretty much the same as in the old post: use the identity and access tool for VS2012, and that will take care of creating the RP entry, create the associated rules, configure your app to outsource web sign on to ACS, and so on. I won’t repeat the step by step instructions here, but to show my good faith I’ll throw in a couple of screenshots to demonstrate that it works on my machine®.

Here there’s the tool, hooked up to the ACS NS:

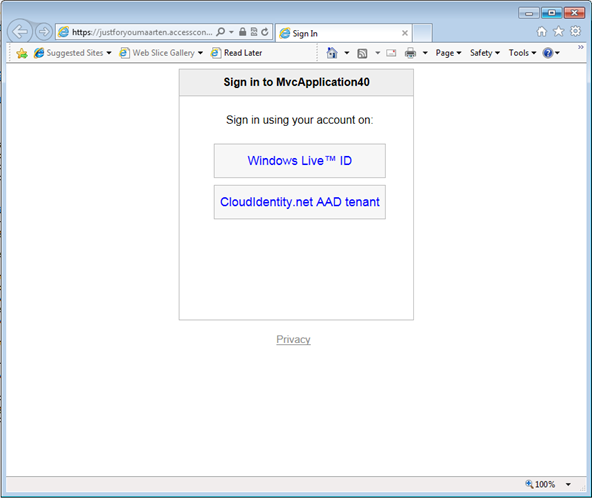

Here there’s the familiar HRD page:

Pick AAD and…

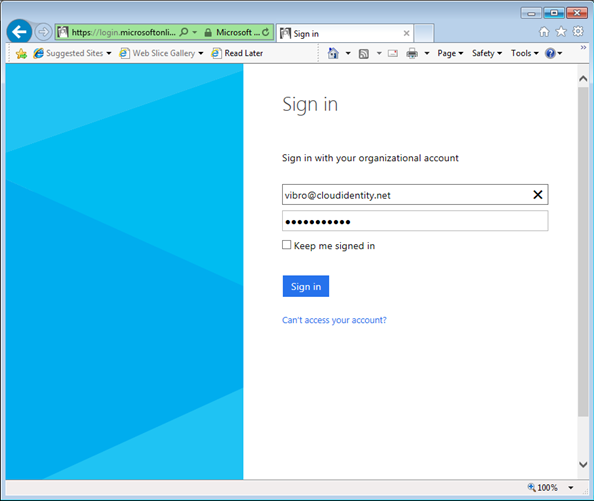

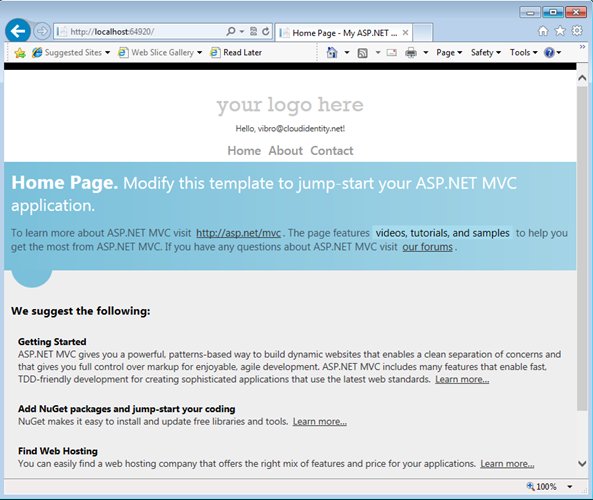

…you get in with your organizational account.

Neat, if I may say so myself!

Wrap

I’ve been wanting to write this post for a while, but of course I never found the time… then, about 30 mins ago somebody on a mail thread asked if there was a point & click solution for this scenario. I sat down, went through the motions while occasionally snapping screenshots and writing my rambling instructions… I was prepared to go to sleep much later, and instead here there’s the finished post already! I love how Windows Azure AD matured as a technology in such a short time.

Vittorio Bertocci (@vibronet) posted Getting Acquainted with ADAL’s Token Cache on 10/1/2013:

A token cache has been one of the top requests from the development community since I have been in the business of securing remote resources. It goes back to the first days of the Web Service Enhancements; it got even more pressing with WCF, where having token instances buried in channels often led to gimmicks and hacks; its lack became obvious when WIF introduced CreatingChannelWithIssuedToken to allow (tease?) you to write your own, without providing one out of the box.

Well, my friends: rejoice! ADAL, our first client-only developer’s library, features a token cache out of the box. Moreover, it offers you a model to plug your own to match whatever storage type and isolation level your app requires.

In this post I am going to discuss concrete aspects of the cache and disregard most of the why’s behind the choices we made. There’s a more abstract discussion to be had about what “session” really means for native clients, and – although in my mind the two are inextricably entangled – I’ll make an effort to keep most theory out, and tackle that in a later post.

Why a Client Side Cache?

Here there’s a quick positioning of ADAL’s cache. “Cache” is a pretty loaded term, and its use here is somewhat special: it’s important to wrpa your head around what we are trying to enable here.

Performance and As Little User Prompting As Possible

Quick, what’s the first purpose of a cache that comes to mind? That’s right: performance. I’ll keep data close to my heart, so that when I need it again it will be right here, FAST.

That is one of the raison d’être of the ADAL cache, of course. Acquiring tokens entails putting stuff in and out of a wire, occasionally doing crypto, and so on: that’s expensive stuff. But it’s not the only reason.

Those issues might not be as pressing for an interactive application as they are for a backend or a middle tier. Think about it: your app only needs to appear snappy to ONE user at a time, and that user’s reaction times are measured in the tenth of seconds it takes for the dominoes of the synapses to fall over each other as neurotransmitters travel back & fro.

In the case of native applications, the performance requirements might be more forgiving; but performance is not the only parameter that can test the user’s patience. For example: there are few things that can annoy a user more than asking him/her to enter and re-enter credentials. Once the user actively participated to an authentication flow, you better hold on to the resulting token for as long as it is viable and ask for the user’s help again only when absolutely necessary. Fail to do so, and your app will be the recipient of their wrath (low ratings, angry reviews, uninstalls, personal attacks).

Well, good news everyone! The ADAL cache helps you to store the tokens you acquire, and retrieves them automatically when they are needed again. It keeps the number user prompts as low as it is “physically” possible.

In fact, it goes much farther than that: if the authority provides mechanisms for silently refreshing access tokens, as Windows Azure AD and Windows Server AD do, ADAL will take advantage of that feature to silently obtain new access tokens. You just keep calling AcquireToken, all of this is completely transparent to you. [Emphasis added.]

If none of those silent method for obtaining tokens work out, only then ADAL resorts to show up the authentication dialog (and even in that case, there might be a cookie which will spare your user from having to do anything. More about that below).

Multiple Users and Application State

That alone would have been enough value for us to implement the feature, but in fact there’s more. Unfortunately this is the part that would benefit from the theoretical discussion I want to postpone, but I don’t think I’ll be able to avoid mentioning it at least a bit here.

In web apps you typically sign in as a given user, and you are that user for the duration of the session. When you sign out, all the artifacts in the session associated to that user are flushed out.

A native app can work that way too, if it is a client for a specific service; but in fact, that might not be the case. Sometimes rich clients will allow you to connect multiple accounts at once, from different providers (think a mail client connecting to many web mail services, or a calendar app aggregating multiple calendar services) or even from the same (thin of an admin sometimes acting as himself, sometimes acting as his boss).If you’d be dealing with a single user, flushing out a session might be implemented by simply clearing up the entire cache; but for multiple users, you need to get finer control. When the end user wants to disconnect a specific account you need to selectively find all the tokens associated to that account only, and get rid of them without disturbing the rest of the cache.

Now, you can repeat the same reasoning for any entity that is relevant when requesting tokens: resources (the app might aggregate multiple services), providers, everything that comes to mind.Fortunately, ADAL can help you with all of the above – thanks to a small shift in perspective: instead of being locked up in some private variable, ADAL’s cache is fully accessible to you. You can query it using your favorite combination of LINQ and lambda notation, and do whatever you’d expect to be able to do with an IDictionary<>.

Important: as long as you don’t need advanced session manipulation, the cache remains fully transparent to you. AcquireToken will consult the cache on your behalf without asking you to know any of the underlying details. The ability of querying the cache is on top of its traditional use.

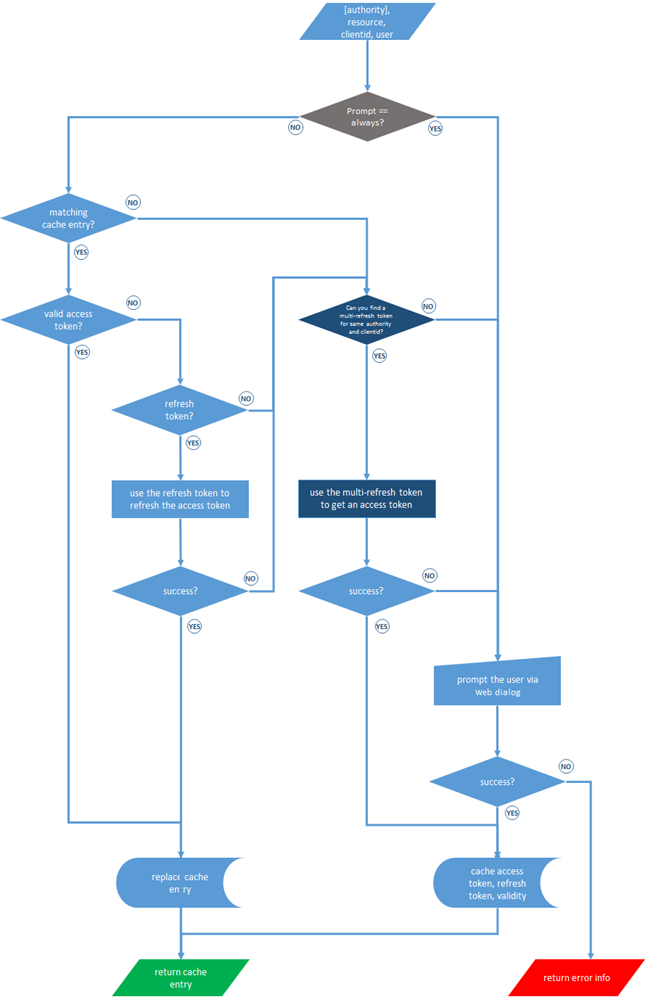

How AcquireToken Works with the Cache

ADAL comes with an in-memory cache out of the box. Unless you explicitly pass at AuthenticationContext construction time an instance of your own cache class (or NULL if you want to opt out from caching) ADAL will use its default implementation.

The default implementation lives in memory, is static (e.g. every AuthenticationContext created in the application shares the store and searches the same collection) and (beware!) is not thread safe. If you want a different isolation model, or to persist data, you can plug your own. This is the only extensibility point in the entire ADAL! I’ll touch on that later.

As you use AcquireToken, you’ll be using the cache without even knowing it’s there. I already went through this in the post about AuthenticationResult, and although it’s tempting to go through this again now that you know a bit more about the cache, that would make the post grow far beyond what I intended. If you didn’t read that post, please head there and sweep through that before reading further. I’ll wait.

[…time passes…]

Welcome back!

Here is a flow chart that might help to get an idea of what AcquireToken actually does with the cache. At the cost of being boring: you don’t need to know any of that in order to use AcquireToken taking advantage of the cache. This is only meant for people who want to know more, and to help you troubleshoot if the behavior you observe is not in line with what you expect. To that end: please remember that Windows Azure AD and Windows Server AD have small differences here.

The Cache Structure

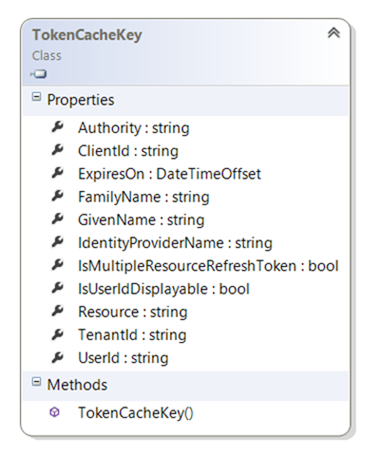

The cache structure is pretty simple; it’s a IDictionary<TokenCacheKey,string>.

You are not supposed to know, given that you would never need to look into that directly, but the Value side of the KeyValuePair contains, in fact, the entire AuthenticationResult for the entry.

The thing that should get your interest, conversely, is TokenCacheKey. Here it is:

That’s mostly a flattened view of the AuthenticationResult info, except for the actual tokens.

In the opening of the post I said that the cache serves two purposes, helping AcquireToken to prompt as little as possible and helping you to assess & manipulate the token collection (hence, the session state) of your client.

AcquireToken uses only a subset of the key members, typically the ones that affect the contract between the client and the target resource (Authority, ClientId, Resource) and the mechanics of the authentication itself (ExpiresOn, UserId). None of the other entries come into play during AcquireToken.

All the other info are there mostly for your benefit: instead of forcing you to remember those extra settings in your own store every time you get back an AuthenticationResult and later join them to the cache, we save them for you directly there. That allows you to use them in your own queries, for display purposes or for whatever other function your scenario might require.And apropos, here there are few examples of queries you might want to do.

AuthenticationContext ac = new AuthenticationContext("hahha"); var allUsersInMyBelly = ac.TokenCacheStore.GroupBy(p => p.Key.UserId).Select(grp => grp.First());The query above returns a cache entry for each unique users – where “user” is used in the sense of UserId, see this post for an explanation of what that really means for ADFS & ACS). You might want to use this query for finding out how many/which unique users are connected to your application, for example to enumerate them in your UX.

var allTokensForAResource = ac.TokenCacheStore.Where(p => p.Key.Resource == https://localhost:9001);The query above is very straightforward, it lists all the tokens scoped for a given resource. You might want to use that to discover which users (and/or which authorities) in your app currently have access to it.

var allUsersInMyBellyThatCanAccessAGivenResource = ac.TokenCacheStore.Where( p => p.Key.Resource == "https://localhost:9001").GroupBy( p => p.Key.UserID).Select( grp => grp.First());I knooow, I am terrible at formatting those things… but I have to do something to get it to fit in this silly blog’s theme! But I digress. This query

combines the first two to return all the users that have access to a specific resource.

foreach (var s in ac.TokenCacheStore.Where (p => p.Key.UserId == "vittorio@cloudidentity.net").ToList()) mac.Cache.Remove(s.Key);The query above deletes all the tokens associated to a specific user.

bool IsItGood = ac.TokenCacheStore.Where( p => p.Key == "https://localhost:9001").First().Key.ExpiresOn > DateTime.Now;Finally, this one tells you if the access token for a given resource is about to expire. This one can come in handy when you know that there are clock skews in your system (ADAL does not take clock skew considerations into account, given that they’re largely a matter between the authority ant the resource).

Dude, Get Your Own

I expect that many, many scenarios will require a persistent cache which can survive app shutdowns and restarts. That will likely means using different persistent store types for different apps, in all likelihood the same persistent storage you already use for your own app data.

Furthermore: different apps will require different isolation levels, perhaps segregating token cache stores per AuthenticationContext instance, tenancy boundaries and whatever else your unique scenario calls for.That’s why we made an exception to the otherwise ADAL’s adamant rule “as little knobs as the main scenarios require”, and made the cache pluggable.

You can easily write your own cache, all you need to do is implementing an IDictionary<TokenCacheKey, string>. Our most excellent SDET extraordinaire Srilatha Inavolu created a good example of custom cache, which saves tokens in CredMan: you can see her implementation here.

Now, I heard from some early adopters that implementing IDictionary requires fleshing out a lot of methods, and it could have been done with a far slimmer API surface. That is true: that said, we believe that there will be far more people querying the cache than people implementing custom cache classes. Furthermore, whereas querying the cache will be most often than not entangled in each app’s unique logic (hence bad candidates for componentization and reuse), custom cache classes are components that might end up being implemented by few gurus in the community and downloaded ad libitum by everybody else. And those gurus can most certainly implement the 15 required methods in their sleep.

Given the above, we choose to use an IDictionary to give you something extremely familiar to work with. Identity is complicated enough, we didn’t want you to have to learn yet another way of querying a collection.

This had other tradeoffs (KeyValuePair is a struct, which makes LINQ materialization problematic; implementing an efficient cache on the middle tier will require extra care) but after much thought we believe this will serve well our mainline scenario, native clients. If you have feedback please let us know, we can always adjust the aim in v2!

Wrap

You asked for a client side token cache: you got it!

ADAL’s cache plays an essential role in keeping complexity out of your native applications, while at the same time taking full advantage of the OAuth2 features (like refresh tokens) and AD features (like multi-resource refresh tokens) to reduce user prompts to a minimum and keep your app as snappy as possible.

I believe that one of the reasons for which we were able to add cache support is that ADAL makes the token acquisition operation very explicit, providing a very natural plug for it. WCF buried the token acquisition in channels and proxies, but in so doing it tied the acquired token lifecycle to the lifecycle of the channel itself and made it hard to aggregate all tokens for the app.

This, coupled to the fact that REST services greatly diminish the need for a structured proxy, makes me hopeful that ADAL’s model is actually an improvement and will make your life easier in that department!

<Return to section navigation list>

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

‡ Kevin Remde (@KevinRemde) continued his series with Why doesn’t remote desktop to my Windows Azure VM work? (So many questions. So little time. Part 48.) on 10/4/2013:

An attendee at our IT Camp in Saint Louis a few weeks ago had an problem that is understandable:

“Thanks for training session, I have a question. Tried to RDP one of my VM’s at work and I can’t connect. Possible firewall port issue? I am going to try and connect from home tonight.”

You're already onto the issue. It’s important to remember that the port that you’re using for RDP is not the traditional 3389.

“It’s not? How does that work?”

Let’s step back for a second and consider what you see when you first create a virtual machine in Windows Azure and you get to the screen where “endpoints” are defined. By default, it looks something like this…

…Notice that, even though the operating system is going to have Remote Desktop enabled and will be listening on the traditional port 3389, the external “public port” value that will be redirected to the “private port” 3389 is going to be something different.

“Why?”

Security. We take the extra precaution of randomizing this port so that tools that are scanning for open 3389 ports out there won’t find those machines and then start attempting to log in.

So the answer to your question: Yes, it’s a firewall issue. And I bet it worked from home later that night.

---

Let’s go one step further here and propose a couple of solutions to this, in case you also run into this problem.

Solution #1: Open up the proper outbound firewall ports

In the properties of your virtual machine, you can find what “public port” was assigned to the VM under the endpoints tab…

So this web server of mine is answering to my RDP requests via my ability to connect to it’s service URL and port 56537. Since I am not restricting outbound ports, this isn’t a problem for me. But knowing what this port is can help you understand what needs to be opened for a particular machine.

“Is there a range of ports that I need to have open outbound?”

The port that will be assigned automatically is going to come from the “ephemeral port range” for dynamic or private ports (as defined by the Internet Assigned Numbers Authority) of 49152 to 65535. So if you simply enable outbound connections through that range, the defaults should work well for you.

Solution #2: Modify the VM End Points

You’ll note on the above picture that there is an “edit” option. You have the ability to edit and assign whatever port you want for the public port value. For example, I could do this…

…and just use port 3389 directly. Of course, this would defeat the purpose for using a random, non-standard port for remote desktop connections. But it could be done.

Solution #3: Use some other remote desktop-esque tool over some other port.

The server you’re running as a VM in Windows Azure is your machine, so there’s no reason you couldn’t install some other tool of choice for doing management or connecting to a remote desktop type of connection. Understand the application, what port needs to be enabled on the firewall of the server, and then add that port as an endpoint; either directly mapped with the same public/private port or using some other public port. It is entirely configurable and flexible. And as long as you’ve enabled the public port value as a port you’re allowing outbound from your workplace, you’re golden.

Solution #4: Use a Remote Desktop Gateway

How about instead of connecting to machines directly, you do something more secured, manageable, and along the same lines of what you would consider for allowing secured access into your own datacenter remote desktop session hosts: Configure one server as the gateway for access to the others. In this way you have the added benefits of just one open port; and that port is SSL (443). You’re very likely already allowing out port 443 for anyone doing secured browsing (HTTPS://…), so the firewall won’t get in the way.

No significant articles so far this week.

<Return to section navigation list>

Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses

‡ Eduard Koller (@eduardk) offered A sneak peek at four new Nagios and Zabbix plugins for Windows Azure in a 9/19/2013 post to the Interoperability @ Microsoft blog (missed when published):

Busy times at MS Open Tech! Today we’d like to share with the Azure community a sneak peek at our work on four new plugins for Nagios and Zabbix. It’s early days, but we care about your feedback and love working in the open, so effective today you can take a look at our github repo and see what we are working on to make monitoring on Azure easy and immediate for users of Nagios and Zabbix.

What you can play with today is:

- A plugin for Windows Azure Storage, that will allow you to monitor ingress, egress, requests, success ratio, availability, latency, and more

- A plugin for Windows Azure SQL Databases, that will allow you to monitor ingress, egress, requests, success ratio, availability, latency, etc

- A plugin for Windows Azure Active directory, that will allow you to monitor changes in user and group information (userdelta, groupdelta)

- A plugin for Windows Azure Platform-as-a-Service (PaaS) Worker Roles, that will allow you to monitor cpu, memory, asp.net stats, web service stats, and other compute stats

Note that all compute plugins can be also used to monitor Windows Azure Infrastructure-as-a-Service (IaaS) Virtual Machines

The steps for installing and running the plugins are documented in this ReadMe.

Nagios and Zabbix have established themselves as popular choices for lightweight enterprise-class IT and infrastructure monitoring and alerting. The vibrant open source community built around Nagios has contributed hundreds of plugins (most of which are also compatible with Zabbix) to enable developers, IT professionals and DevOps pros to monitor a variety of entities, from servers to databases to online services. We love to help our customers that know and use those tools, and we are committed to supporting monitoring on Azure using open source technologies.

This is a work in progress, and we’d love to hear from users to make our implementation of these popular tools the best it can be. The Plugins are available on our github repo, and we welcome your feedback and contributions. Send us a pull request if you’d like to contribute to these projects, or leave a comment/email if you have some feedback for us. See you on github!

• Riccardo Becker (@riccardobecker) posted The Wireframe of Geotopia on 10/3/2013:

Using the Visual Studio 2013 RC, I created a cloud project with an ASP.NET webrole. When you add a webrole to your cloud project, the screen below appears.

I want to create an MVC app and want to use Windows Azure Active Directory authentication. To enable this you need to create a new Directory in your Active Directory in the Windows Azure portal. When you create a new directory, this screen appears.

Add some users to your directory. In this case, I have "riccardo@geotopia.onmicrosoft.com" for now.

Go back to Visual Studio and select the Change Authencation button. Next, select the Organization Acounts and fill in your directory.When you click Ok now you need to login with the credentials of one of your users you created in your directory. I log in with riccardo@geotopia.onmicrosoft.com. Next, select Create Project and your MVC5 app is ready to go. Before this application is able to run locally, in your development fabric, you need to change the return URL in the Windows Azure portal. Go to applications in the designated Directory on the Windows Azure portal.

The newly created MVC project appears there, in my case it's Geotopia.WebRole. In this screen you see the app url which points to https://localhost:44305. This URL is NOT correct when you run the MVC5 app as a cloud project in the development fabric. Click the application in the portal and select Configure. Change the app URL and the return URL to the correct url when your app runs locally in development fabric.

In my case: https://localhost:8080. When you run your app, you get a warning about a problem with the security certificate, but you can ignore this for now. After logging in succesfully, you will be redirected to the correct return URL (configured in the portal) and showing you that you are logged in. I also created a bing map which centers on the beautiful Isola di Dino in Italy with a bird's eye view.

In the next blog I will show how to create a Geotopic on the Bing Map and how to store it in Table Storage and how add your own fotographs to it to show your friends how beautiful the places are you visited. This creates an enriched view, additional to the bing map.

• The Windows Azure CAT Team (@WinAzureCAT) described Cloud Service Fundamentals – Caching Basics in a 10/3/2013 post:

The "Cloud Service Fundamentals" application, referred to as "CSFundamentals," demonstrates how to build database-backed Azure services. In the previous DAL – Sharding of RDBMS blog post, we discussed a technique known as sharding to implement horizontal scalability in the database tier. In this post, we will discuss the need for Caching, the considerations to take into account, and how to configure and implement it in Windows Azure.

The distributed cache architecture is built on scale-out, where several machines (physical or virtual) participate as part of the cluster ring with inherent partitioning capabilities to spread the workload. The cache is a <key, value> lookup paradigm and the value is a serialized object, which could be the result set of a far more complex data store operation, such as a JOIN across several tables in your database. So, instead of performing the operation several times against the data store, a quick key lookup is done against the cache.

Understanding what to cache

You first need to analyze the workload and decide the suitable candidates for caching. Any time data is cached, the tolerance of “staleness” between the cache and the “source of truth” has to be within acceptable limits for the application. Overall, the cache can be used for reference (read only data across all users) such as user profile, user session (single user read-write), or in some cases for resource data (read-write across all users using lock API). And in some cases the particular dataset may not be ideally suited for caching – for example, if a particular data set is changing rapidly, or the application cannot tolerate staleness, or you need to perform transactions.

Capacity Planning

A natural next step is to estimate the caching needs of your application. This involves looking at a set of metrics, beyond just the cache size, to come up with a starting sizing guide.

- Cache Size: Amount of memory needed can be roughly estimated using the average object size and number of objects.

- Access Pattern & Throughput requirements: The read-write mix provides an indication of new objects being created, rewrite of existing objects or reads of objects.

- Policy Settings: Settings for Time-To-Live (TTL), High Availability (HA), Expiration Type, Eviction policy.

- Physical resources: Outside of memory, the Network bandwidth and CPU utilization are also key. Network bandwidth may be estimated based on specific inputs, but mostly this has to be monitored and then used as a basis in re-calculation.

A more detailed capacity planning spreadsheet is available at http://msdn.microsoft.com/en-us/library/hh914129

Azure Caching Topology

The table below lists out the set of PAAS options available on Azure and provides a quick description

Type

Description

In-Role dedicated

In the dedicated topology, you define a worker role that is dedicated to Cache. This means that all of the worker role's available memory is used for the Cache and operating overhead. http://msdn.microsoft.com/en-us/library/windowsazure/hh914140.aspx

In-Role co-located

In a co-located topology, you use a percentage of available memory on application roles for Cache. For example, you could assign 20% of the physical memory for Cache on each web role instance. http://msdn.microsoft.com/en-us/library/windowsazure/hh914128.aspx

Windows Azure Cache Service

The Windows Azure Cache Service, which currently (in Sep 2013) is in Preview. Here are a set of useful links: http://blogs.msdn.com/b/windowsazure/archive/2013/09/03/announcing-new-windows-azure-cache-preview.aspx

http://msdn.microsoft.com/en-us/library/windowsazure/dn386094.aspx

Windows Azure Shared Caching

Multi-tenanted caching (with throttling and quotas) which will be retired no later than September 2014. More details are available at http://www.windowsazure.com/en-us/pricing/details/cache/. It is recommended that customers use one of the above options for leveraging caching.

Implementation details

The CSFundamentals application makes use of In-Role dedicated Azure Caching to streamline reads of frequently accessed information - user profile information, user comments. The In-Role dedicated deployment was preferred, since it isolates the cache-related workload. This can then be monitored via the performance counters (CPU usage, network bandwidth, memory, etc.) and cache role instances scaled appropriately.

NOTE: The New Windows Azure Cache Service was not available during implementation of CSFundamentals. It would have been a preferred choice if there was a requirement for the cached data to be made available outside of the CSFundamentals application.

The ICacheFactory interface defines the GetCache method signature. ICacheClient interface defines the GET<T> and PUT<T> methods signature.

public interface ICacheClient

AzureCacheClient is the implementation of this interface and has the references to the Windows Azure Caching client assemblies, which were added via the Windows Azure Caching NuGet package.

Because the DataCacheFactory object creation establishes a costly connection to the cache role instances, it is defined as static and lazily instantiated using Lazy<T>.

The app.config has auto discovery enabled and the identifier is used to correctly point to the cache worker role:

<autoDiscover isEnabled="true" identifier="CSFundamentalsCaching.WorkerRole" />

NOTE: To modify the solution to use the new Windows Azure Cache Service, replace the identifier attribute with the cache service endpoint created from the Windows Azure Portal. In addition, the API key (retrievable via the Manage Keys option on the portal) must be copied into the ‘messageSecurity authorizationInfo’ field in app.config.

The implementation of the GET<T> and PUT<T> methods uses the BinarySerializer class, which in turn leverages the Protobuf class for serialization and deserialization. protobuf-net is a .NET implementation of protocol buffers, allowing you to serialize your .NET objects efficiently and easily. This was added via the protobuf-net NuGet package.

Serialization produces a byte[] array for the parameter T passed in, which is then stored in Windows Azure Cache cluster. In order to return the object requested for the specific key, the GET method uses the Deserialize method.

This blog provides an overview of Caching Basics. For more details, please refer to ICacheClient.cs, AzureCacheFactory.cs, AzureCacheClient.cs and BinarySerializer.cs in the CloudServiceFundamentals Visual Studio solution.

The Windows Store Team announced Windows 8.1 RTM has arrived and “MSDN and TechNet subscribers can download Windows 8.1 RTM, Visual Studio 2013 RC, and Windows Server 2012 R2 RTM builds today” on 9/30/2013. From MSDN’s Windows 8.1 Enterprise (x64) - DVD (English) Details: