Windows Azure and Cloud Computing Posts for 11/9/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

‡ New: Scott Guthrie’s deployment of an Office App to Windows Azure in Monday’s keynote (scroll down), Windows Azure Tiered (Paid) Support, Windows Azure certified as one of the Top 500 of the world’s largest supercomputers, LightSwitch Tutorial for SharePoint Apps.

• Hot: Reuven Cohen (@ruv) reported The Battle For The Cloud: Amazon Proposes ‘Closed’ Top-Level .CLOUD Domain in an 11/6/2012 article for Forbes.com in the Other Cloud Computing Platforms and Services section below (updated 11/11/2012).

Editor’s note: I finally caught up with articles missed during Alix’s and my vacation at the Ahwahnee Hotel’s Yosemite Vintners’ Holiday Event, Session 1, 11/4 through 11/7/2012. Check out my photos of the trip on SkyDrive.

•• Updated 11/12/2012 with new articles marked ••.

• Updated 11/11/2012 with new articles marked •.

Tip: Copy bullet(s) or dagger, press Ctrl+f, paste it/them to the Find textbox and click Next to locate updated articles:

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, Hadoop and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Caching, Access & Identity Control, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue, Hadoop and Media Services

•• Tomica Kaniski (@tkaniski) described Configuring Online Backup for Windows Server 2012 in an 11/12/2012 post:

As Windows Server 2012 includes many new and improved features, some of them often go unnoticed. The objective of this article is to explain how to set up and use one of them – Online Backup.

The Online Backup feature in Windows Server 2012 lets you store some of your backups in the cloud (Windows Azure). The current offering is a free six-month preview for customers of Windows Server 2012, Windows Server 2012 Essentials and System Center 2012, with up to 300 GB of backup storage per account.

The feature is delivered as a separate download: a software agent that must be installed on every server that will use Online Backup. The agent provides the connection to the Windows Azure Online Backup service.

Installing, configuring and using Online Backup is not complicated. You install the Online Backup agent on the server you want to protect, register the server with the online service, create a schedule that specifies what will be backed up (and when), and then wait for the backup to occur.

In more detail, here are the steps you need to take to make the Windows Online Backup work on a plain Windows Server 2012 installation:

1. Enable the Windows Server Backup feature

Windows Server Backup feature is part of Windows Server installation, but disabled by default. So, the first step is to enable it. You can enable it using the new Server Manager interface - select Manage, Add Roles and Features and, finally, select the Windows Server Backup feature and finish the wizard.

You can also install this feature with the DISM command-line tool: open Command Prompt (Run as administrator) and run dism /online /Enable-Feature /FeatureName:WindowsServerBackup.

2. Register for Windows Azure Online Backup

Next step is to set up an account for the Azure Online Backup at http://www.windowsazure.com/en-us/home/features/online-backup/. The registration process is simple, and at the end of it, you will get account info that will be used in steps that follow.

3. Download the backup agent from Management Portal (Dashboard)

Sign in to the Windows Azure Online Backup dashboard https://portal.onlinebackup.microsoft.com/en-US/Dashboard using the account information from the previous step. In the Overview section of your dashboard, just click on Download and Install button to get the backup agent setup file (download size is about 14 MB).

4. Install the agent

Installation of the downloaded agent software is pretty straightforward – prerequisites are the Windows PowerShell (which is installed by default on Windows Server 2012) and Microsoft Online Services Sign-in Assistant which will be automatically installed during setup. After finishing the installation wizard, you need to open the Windows Server Backup console and verify if Online Backup entry is visible on the left-hand side. If it is visible, the agent installation was successful, and you can proceed to the next step. Leave the console open.

5. Register the server in Windows Azure Online Backup service

If you select Online Backup entry in the Windows Server Backup console, you will get additional options on the right-hand side. Select Register Server option to start a wizard for configuring the online backup.

If you are using proxy to connect to the Internet, insert the required info about it. Next you will configure a passphrase for encrypting the backups in online service. Passphrase can be generated automatically if you click the Generate Passphrase button, or you can enter one by yourself (keep in mind that it needs to be a minimum of 16 characters long).
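If you prefer to supply your own passphrase rather than use the Generate Passphrase button, the length rule above is easy to check in a script. The following is a minimal sketch and not the agent's own generator: the function names and the default length of 24 are assumptions made for illustration, and only the 16-character minimum comes from the article.

```python
import secrets
import string

MIN_PASSPHRASE_LENGTH = 16  # minimum length stated in the article


def generate_passphrase(length: int = 24) -> str:
    """Return a random passphrase made of ASCII letters and digits."""
    if length < MIN_PASSPHRASE_LENGTH:
        raise ValueError(
            f"passphrase must be at least {MIN_PASSPHRASE_LENGTH} characters"
        )
    alphabet = string.ascii_letters + string.digits
    # secrets.choice draws from a cryptographically strong random source
    return "".join(secrets.choice(alphabet) for _ in range(length))


def is_acceptable(passphrase: str) -> bool:
    """Check only the length rule mentioned in the article."""
    return len(passphrase) >= MIN_PASSPHRASE_LENGTH


if __name__ == "__main__":
    print(generate_passphrase())
```

Whatever passphrase you use, store it somewhere safe outside the server being backed up, since it encrypts the backups held in the online service.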

After that, you just need to enter your credentials to access Windows Azure Online Backup (from step 2), and the server will be added to your online account.

6. Create backup schedule

Now you can create the backup schedule by using the Schedule Backup option on the right-hand side of the Windows Server Backup console. The wizard is similar to the one used to create a local backup schedule. You select what will be backed up (only files and folders; System State Backup is not available here, so you still need the Local Backup option for that), when the backup should run (one or more days of the week, up to 3 times per day) and the retention period (7, 15, or 30 days).

7. Run backup now (or wait for scheduled backup to occur)

The final step is to select the Back Up Now option or wait for the scheduled backup to occur. Keep in mind that the backup will consume some of your network (and Internet) bandwidth, so run it outside business hours if possible.

After this final step, your Windows Server 2012 will be backing up “to the cloud”. Restoring the files and folders is also made simple by using the Recover Data wizard in the same (Windows Server Backup) console.

Just to mention – one of the “cool” settings regarding the Online Backup feature is bandwidth throttling which allows you to control the amount of bandwidth that backup is using during work or non-work hours. This is the setting that provides you with the flexibility of doing the backups also during the work hours, which is really nice. The throttling settings are located under the Change Properties option which is visible once you register your server and set up the backup schedule…

•• M Sheik Uduman Ali (@udooz) described WAS StartCopyFromBlob operation and Transaction Compensation in an 11/12/2012 post:

The latest Windows Azure SDKs, v1.7.1 and 1.8, have a nice feature called “StartCopyFromBlob” that lets us instruct the Windows Azure data center to copy a blob across storage accounts. Previously, we had to download the blob content in chunks and then upload it to the destination storage account. Hence, “StartCopyFromBlob” is more efficient in terms of both cost and time.

The notable difference in version 2012-02-12 is that the copy operation is now asynchronous. Once you make a copy request to the Windows Azure Storage service, it returns a copy ID (a GUID string), a copy state and HTTP status code 202 (Accepted). This means that your request has been scheduled. If you check the copy state immediately after this call, it will most probably be “pending”.

StartCopyFromBlob – A Transaction Compensation Operation

Extra care is required when using this API, since it is a real-world example of a service operation that needs transaction compensation. After making the copy request, you need to verify the actual status of the copy operation at a later point in time, which can range from a few seconds to 2 weeks depending on constraints such as source blob size, permissions and connectivity.

The figure below shows a typical sequence of StartCopyFromBlob operation invocation.

The CloudBlockBlob and CloudPageBlob classes in the Windows Azure storage SDK v1.8 provide a StartCopyFromBlob() method, which in turn calls the WAS REST service operation (http://msdn.microsoft.com/en-us/library/windowsazure/dd894037.aspx). According to the Windows Azure Storage Team blog post (http://blogs.msdn.com/b/windowsazurestorage/archive/2012/06/12/introducing-asynchronous-cross-account-copy-blob.aspx), the request is placed on an internal queue and the call returns a copy ID and copy state. The copy ID uniquely identifies the copy operation. It can be used later to verify the copy ID of the destination blob and to abort the copy operation. CopyState gives you the status of the copy operation, the number of bytes copied, and so on.

Note that sequence 3 “PushCopyBlobMessage” in the above figure is my assumption about the operation.

ListBlobs – Way for Compensation

Although you have the copy ID in hand, there is no simple API that accepts an array of copy IDs and returns the corresponding copy states. Instead, you have to call CloudBlobContainer’s ListBlobs() or GetXXXBlobReference() to get the copy state. If the blob was created by a copy operation, it will have a CopyState.

CopyState might be null for blobs that were not created by a copy operation.

The compensation action is what we need to do when a blob copy operation has neither succeeded nor is still pending. In most cases, calling StartCopyFromBlob() again will result in a successful blob copy. Otherwise, further remedial action should be taken.
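The start, check later, and retry flow described above can be sketched without touching a real storage account. The Python below is only an illustration under stated assumptions: FakeBlobService, CopyState and copy_with_compensation are hypothetical names, not part of the Windows Azure SDK, and the fake service simply plays back a list of scripted outcomes in place of the asynchronous copy.

```python
import uuid
from dataclasses import dataclass, replace
from typing import Dict, List, Optional

PENDING, SUCCESS, FAILED = "pending", "success", "failed"


@dataclass(frozen=True)
class CopyState:
    copy_id: str
    status: str


class FakeBlobService:
    """Hypothetical in-memory stand-in for the storage service."""

    def __init__(self, outcomes: List[str]):
        self._outcomes = list(outcomes)  # scripted results, consumed in order
        self._blobs: Dict[str, CopyState] = {}

    def start_copy(self, source: str, dest: str) -> CopyState:
        # The request is only accepted here; the copy itself completes later.
        state = CopyState(copy_id=str(uuid.uuid4()), status=PENDING)
        self._blobs[dest] = state
        return state

    def finish_pending(self) -> None:
        # Simulates time passing while the data center works on queued copies.
        for name, state in list(self._blobs.items()):
            if state.status == PENDING:
                self._blobs[name] = replace(state, status=self._outcomes.pop(0))

    def get_copy_state(self, dest: str) -> Optional[CopyState]:
        return self._blobs.get(dest)


def copy_with_compensation(service, source, dest, max_attempts=3, max_polls=5):
    """Start a copy, check it later, and re-issue it if it ended badly.

    Returns the number of attempts that were needed.
    """
    for attempt in range(1, max_attempts + 1):
        started = service.start_copy(source, dest)
        for _ in range(max_polls):
            service.finish_pending()  # real code would wait before polling
            current = service.get_copy_state(dest)
            if current is None or current.copy_id != started.copy_id:
                break  # destination no longer reflects our copy: compensate
            if current.status == SUCCESS:
                return attempt
            if current.status != PENDING:
                break  # neither succeeded nor pending: compensate by retrying
        else:
            raise TimeoutError(f"copy to {dest} is still pending")
    raise RuntimeError(f"copy to {dest} failed after {max_attempts} attempts")


if __name__ == "__main__":
    service = FakeBlobService([FAILED, SUCCESS])
    print(copy_with_compensation(service, "src/blob", "dst/blob"))
```

Comparing the copy ID returned by the start call with the one found on the destination blob mirrors the verification step the post describes. A production version would also need a delay between polls and a cap on total elapsed time.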

Final Words

StartCopyFromBlob() is a pleasure to use. It would be even more so if the SDK or REST version provided simple operations like the following:

- GetCopyState(string[] copyIDs) : CopyState[]

- RetryCopyFromBlob(string failedCopyId) : void

•• Jim O’Neil (@jimoneil) continued his Channel9 video series with Practical Azure #2: What About Blob? on 11/12/2012:

As I kick off the coverage of the Windows Azure platform in earnest, join me on Channel 9 for this episode focusing on the use of blob storage in Windows Azure.

Download: MP3, MP4 (iPod, Zune HD), High Quality MP4 (iPad, PC), Mid Quality MP4 (WP7, HTML5), High Quality WMV (PC, Xbox, MCE)

And here are some of the additional reference links covered during the presentation:

- Getting your 90-day FREE Azure Trial

- Windows Azure Hands-on Labs Online

- MSDN Virtual Labs: Windows Azure

- Blob Service Concepts

- Managing Access to Containers, Blobs, Tables, and Queues

- Understanding Block Blobs and Page Blobs

- How to use the Windows Azure Blob Storage Service with: .NET

- How to use the Windows Azure Blob Storage Service with: Java

- How to use the Windows Azure Blob Storage Service with: PHP

- How to use the Windows Azure Blob Storage Service with: Node.js

- How to use the Windows Azure Blob Storage Service with: Python

- Azure Code Samples (blob)

- CloudBerry Explorer

•• Mark Kromer (@mssqldude) posted Big Data with SQL Server, part 2: Sqoop in an 11/11/2012 post:

I started off my series on Hadoop on Windows with the new Windows distribution of Hadoop known as Microsoft HDInsight, by talking about installing the local version of Hadoop on Windows. There is also a public cloud version of Hadoop on Azure: http://www.hadooponazure.com.

Here in part 2, I’ll focus on moving data between SQL Server and HDFS using Sqoop.

In this demo, I’m going to move data between Hadoop and a very simple sample SQL Server 2012 database that I’ve created, called “sqoop_test”, with a single table called “customers”. The table has just a customer ID and a customer name. I’ll show you how the Microsoft & Hortonworks Hadoop distribution for Windows (HDInsight) includes Sqoop for moving data between SQL Server and Hadoop.

You can also move data between HDFS and SQL Server with the Linux distributions of Hadoop and Sqoop by using the Microsoft Sqoop adapter available for download here.

First, I’ll start with moving data from SQL Server to Hadoop. When you run this command, you will “import” data into Hadoop from SQL Server. Presumably, this would provide a way for you to perform distributed processing and analysis of your data via MapReduce once you’ve copied the data to HDFS:

sqoop import --connect jdbc:sqlserver://localhost --username sqoop --password password --table customers -m 1

I have 1 record inserted into my customers table and the import command places that into my Hadoop cluster and I can view the data in a text file, which most things in Hadoop resolve to:

> hadoop fs -cat /user/mark/customers/part-m-00000

> 5,Bob Smith
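Because the imported file is plain comma-delimited text, any tool that reads delimited text can consume it. As a small illustration, the Python sketch below parses output like the line above into typed records. The Customer type and its field names are assumptions based on the table description (a customer ID and a customer name), and the sketch assumes names do not themselves contain commas.

```python
import csv
import io
from typing import List, NamedTuple


class Customer(NamedTuple):
    customer_id: int
    name: str


def parse_customers(text: str) -> List[Customer]:
    """Parse comma-delimited rows (one record per line) into Customer tuples."""
    reader = csv.reader(io.StringIO(text))
    # Skip blank lines; the first field is the ID, the second the name.
    return [Customer(int(row[0]), row[1]) for row in reader if row]


if __name__ == "__main__":
    for customer in parse_customers("5,Bob Smith\n"):
        print(customer.customer_id, customer.name)
```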

My SQL Server table has 1 row (see below) so that row was imported into HDFS:

The more common action would likely be to move data into SQL Server from Hadoop, and to do this I will export from HDFS to SQL Server. I have a database schema for my data in Hadoop, created with Hive, that defines a table called Employees. I’m going to transform those rows into Customer records in my SQL Server schema with Sqoop:

> sqoop export --connect jdbc:sqlserver://localhost --username sqoop --password password -m 1 --table customers --export-dir /user/mark/data/employees3

12/11/11 22:19:24 INFO mapreduce.ExportJobBase: Transferred 201 bytes in 32.6364 seconds (6.1588 bytes/sec)

12/11/11 22:19:24 INFO mapreduce.ExportJobBase: Exported 4 records.

Those MapReduce jobs extract my data from HDFS and send it to SQL Server so that now when I query my SQL Server Customers table, I have my original Bob Smith record plus these 4 new records that I transferred from Hadoop:

<Return to section navigation list>

Windows Azure SQL Database, Federations and Reporting, Mobile Services

Herve Roggero (@hroggero) described how to Backup [and Restore] SQL Database Federation[s] in an 11/8/2012 post:

One of the amazing features of Windows Azure SQL Database is the ability to create federations in order to scale your cloud databases. However until now, there were very few options available to backup federated databases. In this post I will show you how Enzo Cloud Backup can help you backup, and restore your federated database easily. You can restore federated databases in SQL Database, or even on SQL Server (as regular databases).

Generally speaking, you will need to perform the following steps to backup and restore the federations of a SQL Database:

- Backup the federation root

- Backup the federation members

- Restore the federation root

- Restore the federation members

These actions can be automated using: the built-in scheduler of Enzo Cloud Backup, the command-line utilities, or the .NET Cloud Backup API provided, giving you complete control on how you want to perform your backup and restore operations.

Backing up federations

Let’s look at the tool to backup federations. You can explore your existing federations by using the Enzo Cloud Backup application as shown below. As you can see, the federation root and the various federations available are shown in separate tabs for convenience. You would first need to backup the federation root (unless you intend to restore the federation member on a local SQL Server database and you don’t need what’s in the federation root). The steps are similar to those for backing up a federation member, so let’s proceed to backing up a federation member.

You can click on a specific federation member to view the database details by clicking at the tab that contains your federation member. You can see the size currently consumed and a summary of its content at the bottom of the screen.

If you right-click on a specific range, you can choose to backup the federation member. This brings up a window with the details of the federation member already filled out for you, including the value of the member that is used to select the federation member. Notice that the list of Federations includes “Federation Root”, which is what you need to select to backup the federation root (you can also do that directly from the root database tab). Once you provide at least one backup destination, you can begin the backup operation. From this window, you can also schedule this operation as a job and perform this operation entirely in the cloud. You can also “filter” the connection, so that only the specific member value is backed up (this will backup all the global tables, and only the records for which the distribution value is the one specified). You can repeat this operation for every federation member in your federation.

Restoring Federations

Once backed up, you can restore your federations easily. Select the backup device using the tool, then select Restore. The following window will appear. From here you can create a new root database. You can also view the backup properties, showing you exactly which federations will be created.

Under the Federations tab, you can select how the federations will be created. I chose to recreate the federations and let the tool perform all the SPLIT operations necessary to recreate the same number of federation members. Other options include to create the first federation member only, or not to create the federation members at all.

Once the root database has been restored and the federation members have been created, you can restore the federation members you previously backed up. The screen below shows you how to restore a backup of a federation member into a specific federation member (the details of the federation member are provided to make it easier to identify).

Conclusion

This post gave you an overview on how to backup and restore federation roots and federation members. The backup operations can be setup once, then scheduled daily.

Jim O’Neil (@jimoneil) completed his series with Windows 8 Notifications: Push Notifications via Windows Azure Web Sites (Part 3) on 11/8/2012:

It’s finally time to get to the “Azure” piece of this three-parter! Those of you who haven't read Part 1 and Part 2 may want to at least peruse those posts for context. Those of you who have know that I created a Windows Store application (Boys of Summer) that features push notifications to inform its end users of news related to their favorite major league teams. The Windows Store application works in concert with a Cloud Service (hosted as a Windows Azure Web Site) to support the notification workflow, and this post tackles that last piece of the puzzle, as highlighted below.

There are two main components to this cloud service: an ASP.NET MVC site that heavily leverages the Web API and backing storage in the form of a MySQL database. The deployment mechanism is a Windows Azure Web Site, with MySQL being selected (over Windows Azure SQL Database) since a 20MB instance of MySQL is included free of charge in the already free-of-charge entry level offering of Web Sites. I don’t anticipate my application needing more horsepower than already included in that free tier of Windows Azure Web Sites, but it’s nice to know I can step up to the shared or reserved model should Boys of Summer become wildly successful!

What follows is a rather lengthy but comprehensive post on how I set this service up inside of Windows Azure. I’ve split it into seven sections so you can navigate to the parts of specific interest to you:

- Step 1. Get your Windows Azure account

- Step 2. Create a new Windows Azure Web Site

- Step 3. Set up the MySQL database

- Step 4. Build the APIs for the cloud service

- Step 5. Implement the ASP.NET MVC page that issues the notifications

- Step 6. Implement interface with the Windows Notification Service (WNS)

- Step 7. Deploy the completed solution to Windows Azure

Step 1: Get Your Windows Azure Account

There are a number of ways to get a Windows Azure account, and one of the easiest for ‘kicking-the-tires’ is the 90-day Free Trial. If you’re an MSDN subscriber, you already have a monthly allotment of hours as well, and all you need to do is activate the benefit.

Likewise, WebsiteSpark and BizSpark participants get monthly benefits by virtue of the MSDN subscriptions that come with those programs. Of course, you can also opt for one of the paid plans as well or upgrade after trying out one of the free tiers.

Step 2: Create a New Windows Azure Web Site

Once you’ve logged into the Windows Azure portal with your Microsoft account, you’ll be able to provision any of the services available, via the NEW option at the lower left.

For Boys of Summer, I needed a Web Site along with a database.

If you don’t see Windows Azure Web Sites listed on the left sidebar, or it’s disabled, you’ll need to visit your account page and select the preview features menu option to enable Web Sites (and other features that are not yet fully released). The activation happens in a matter of minutes, if not faster.

To create the Web Site, only a few bits of information need be entered on the two screens that appear next:

- the URL for the Web Site, which must be a unique URL (in the azurewebsites.net domain). It’s this URL that will be target of the RESTful service calls made from my Windows Store application.

- the location of the data center that will host the service.

- what type of database to create (MySQL here, but SQL Database is also an option).

- a database connection string name.

- the name of the database server.

- the data center location hosting the MySQL database, which should be the same data center housing the Web Site itself; otherwise, you’ll incur unnecessary latency as well as potential bandwidth cost penalties.

The last checkbox on the page confirms agreement with ClearDB’s legal and privacy policy as they are managing the MySQL database instances.

Once the necessary details have been entered, it takes only a minute or two to provision the site and the database, during which the status is reflected in the portal. When the site is available, it can be selected from the list of Web Sites in the Azure portal to access the ‘getting started’ screen below:

The site (with default content) is actually live at this point, which you can confirm by pressing the BROWSE button on the menu bar at the bottom of the screen.

To get the information I need to develop and deploy the service, I next visit the DASHBOARD option, which provides access to a number of configuration settings. I specifically need the first two listed in the quick glance sidebar on the right of the dashboard page:

- I’ll use the MySQL connection string to create the tables needed to store the notification URIs for the various clients, and

- I’ll save the publish profile (as a local file) to later be imported into Visual Studio 2012. That will allow me to deploy my ASP.NET application directly to Windows Azure. Do be aware that this file contains sensitive information enabling deployment to the cloud, so handle the publish settings file with care.

At this point, I won’t even need to revisit the Windows Azure portal, but of course there are a number of great monitoring and scaling features I may want to consult there once my site is up and running.

Step 3. Set up the MySQL Database

In the Web API service implementation, I’m using Entity Framework (EF) Code First along with a basic Repository pattern on a VERY simple data model that abstracts the two tables with the column definitions shown below.

I used the open source MySQL Workbench (with the connection string data from the Windows Azure portal) to create the tables and populate data into the teams table. There’s also a very simple stored procedure that is invoked by one of the service methods that I’ll discuss a bit later in this post:

CREATE PROCEDURE updatechanneluri
(IN olduri VARCHAR(2048), IN newuri VARCHAR(1024))
BEGIN
    UPDATE registrations SET Uri = newuri WHERE Uri = olduri;
END

Since I opted for MySQL, I needed to install a provider, and specifically I installed Connector/Net 6.6.4, which supports Entity Framework and Visual Studio 2012. As of this writing, the version of the Connector/Net available via NuGet did not.
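To see what the updatechanneluri procedure does to the data, the same UPDATE statement can be run against a throwaway in-memory database. The Python sketch below uses SQLite rather than MySQL purely so that it is self-contained. The TeamId column and the sample URIs are assumptions for illustration, since the post shows the real column definitions only in a figure.

```python
import sqlite3


def update_channel_uri(conn, old_uri, new_uri):
    """Run the UPDATE that the stored procedure wraps; return rows changed."""
    cursor = conn.execute(
        "UPDATE registrations SET Uri = ? WHERE Uri = ?", (new_uri, old_uri)
    )
    conn.commit()
    return cursor.rowcount


def demo():
    conn = sqlite3.connect(":memory:")
    # Hypothetical two-column table; only the Uri column name is from the post.
    conn.execute("CREATE TABLE registrations (TeamId TEXT, Uri TEXT)")
    conn.executemany(
        "INSERT INTO registrations VALUES (?, ?)",
        [("redsox", "uri-old"), ("orioles", "uri-old"), ("redsox", "uri-other")],
    )
    changed = update_channel_uri(conn, "uri-old", "uri-new")
    rows = conn.execute(
        "SELECT TeamId, Uri FROM registrations ORDER BY rowid"
    ).fetchall()
    conn.close()
    return changed, rows


if __name__ == "__main__":
    print(demo())
```

One statement rewrites every registration that shares the old channel URI, which is why a set-based update is convenient when a client subscribed to several teams receives a new channel.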

Step 4. Build the APIs for the Cloud Service

There are numerous options for building a cloud service in Windows Azure Web Sites – .NET, PHP, Node.js – and a number of tools as well like Visual Studio and Web Matrix. I opted to use ASP.NET MVC and the Web API in .NET 4.5 within Visual Studio 2012 (as you can see below).

If you’re new to the ASP.NET MVC Web API, I highly recommend checking out Jon Galloway’s short screencast ASP.NET Web API, Part 1: Your First Web API to give you an overview of the project components and overall architectural approach.

My service includes three controllers: the default Home controller, a Registrations controller, and a Teams controller. The Home controller provides a default web page to send out the toast notifications; I’ll look at that one in Step 5.

The other two controllers specifically extend ApiController and are used to implement various RESTful service calls. Not surprisingly, each corresponds directly to one of the two Entity Framework data model classes (and by extension, the MySQL tables). These controllers more-or-less carry out CRUD operations on those two tables.

Step 4.1 Coding TeamsController

The application doesn’t modify any of the team information, so there are only two read operations needed:

public Team Get(String id)

retrieves information about a given team given its id; the Team class is one of the two entity classes in my Entity Framework model.

This method is invoked via an HTTP GET request matching a route of http://boysofsummer.azurewebsites.net/api/teams/{id}, where id is the short name for the team, like redsox or orioles.

public HttpResponseMessage GetLogo(String id, String fmt)

retrieves the raw bytes of the image file for the team logo. Returning an HttpResponseMessage value (versus the actual data) allows for setting response headers (like Content-type to “image/png”). The implementation of this method ultimately reaches in to the teams table in MySQL to get the logo and then writes the raw bytes of that logo as StreamContent in the returned HttpResponseMessage. If a team has no logo file, an HTTP status code of 404 is returned.

This method responds to an HTTP GET request matching the route http://boysofsummer.azurewebsites.net/api/logos/{id}/png, where id is again the short name for a given team.

Why didn't I use a MediaTypeFormatter? If you've worked with the Web API you know it leverages the concept of content negotiation to return different representations of the same resource by using the Accept: header in the request to determine the content type that should be sent in reply. For instance, the resource http://boysofsummer.azurewebsites.net/api/teams/redsox certainly refers to the Red Sox, but does it return the information about that team as XML? as JSON? or something else?

The approach I'd hoped for was to create a MediaTypeFormatter, which works in conjunction with content negotiation to determine how to format the result. So I created a formatter that would return the raw image bytes and a content type of image/png whenever a request for the team came in with an Accept header specifying image/png. Any other requested type would just default to the JSON representation of that team's fields.

For this to work though, the incoming GET request for the URI must set the Accept header appropriately. Unfortunately, when creating the toast template request (in XML) you only get to specify the URI itself, and the request that is issued for the image (by the inner workings of the Windows 8 notification engine) includes a catch-all header of Accept: */*

My chosen path of least resistance was to create a separate GET method (GetLogo above) with an additional path parameter that ostensibly indicates the representation format desired (although in my simplistic case, the format is always PNG).
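The difference between the two approaches can be shown with a deliberately simplified model of content negotiation. The Python sketch below is not how the Web API implements negotiation (it ignores q-values and wildcard subtypes, for instance), and both function names are made up for illustration. It only shows why an Accept: */* header never selects the image formatter, while a format segment in the path does.

```python
SUPPORTED = ("application/json", "image/png")  # the first entry is the default


def pick_by_accept(accept_header: str) -> str:
    """Header-based choice: use a type only if the client names it explicitly."""
    requested = [
        part.split(";")[0].strip().lower() for part in accept_header.split(",")
    ]
    for media_type in requested:
        if media_type in SUPPORTED:
            return media_type
    return SUPPORTED[0]  # "*/*" or unknown types fall back to the default


def pick_by_path(path: str) -> str:
    """Route-based choice: a trailing /png segment selects the image."""
    if path.rstrip("/").endswith("/png"):
        return "image/png"
    return "application/json"


if __name__ == "__main__":
    print(pick_by_accept("image/png"))            # explicit request for PNG
    print(pick_by_accept("*/*"))                  # catch-all header
    print(pick_by_path("/api/logos/redsox/png"))  # format carried in the URI
```

Since the notification engine controls the headers but the service controls the URI placed in the toast template, carrying the format in the path is the one lever left to the service author.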

Step 4.2 Coding RegistrationsController

The Registrations controller includes three methods which manage the channel notification registrations that associate the application users’ devices with the teams they are interested in tracking. As you would probably expect, those methods manipulate the registrations table in MySQL via my EF model and repository implementation.

public HttpResponseMessage Post(Registration newReg)

inserts a new registration record into the database. Each registration record reflects the fact that a client (currently identified by a given notification channel URI) is interested in tracking a given team, identified by the team id (or short name). The combination of channel URI and team should be unique, so if there is an attempt to insert a duplicate, the HTTP code of 409 (Conflict) is returned; a successful insertion yields a 201 (Created).

This POST method is invoked by the Windows Store application whenever the user moves a given team’s notification toggle switch to the On position. The URI pattern it matches is http://boysofsummer.azurewebsites.net/api/registrations, and the request body contains the channel URI and the team name formatted as JSON.

public HttpResponseMessage Delete(String id, String uri)

deletes an existing record from the registrations database, in response to a user turning off notifications for a given team via the Windows Store application interface. A successful deletion results in an HTTP code of 204 (No Content), while an attempt to remove a non-existent record returns a 404 (Not Found).

The DELETE HTTP method here utilizes a URI pattern of http://boysofsummer.azurewebsites.net/api/registrations/{id}/{uri} where id is the team’s short name and uri is the notification channel URI encoded in my modification of Base64.
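The post does not show the author's own Base64 modification, so the details are unknown. The usual motivation for a variant is that standard Base64 output can contain + and / characters, which are awkward inside a URL path segment. As a stand-in, and only as an assumption about the general idea, the Python sketch below uses the standard URL-safe Base64 alphabet with the padding stripped. The sample channel URI is hypothetical.

```python
import base64


def encode_uri(uri: str) -> str:
    """Encode a channel URI so that it is safe to use as one path segment."""
    token = base64.urlsafe_b64encode(uri.encode("utf-8")).decode("ascii")
    return token.rstrip("=")  # padding is dropped and restored when decoding


def decode_uri(token: str) -> str:
    """Reverse encode_uri, restoring the stripped padding first."""
    padding = "=" * (-len(token) % 4)
    return base64.urlsafe_b64decode(token + padding).decode("utf-8")


if __name__ == "__main__":
    sample = "https://example.invalid/?token=AbC/+dEf=="  # hypothetical URI
    token = encode_uri(sample)
    print(token)
    print(decode_uri(token) == sample)
```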

public HttpResponseMessage Put(String id, [FromBody] UriWrapper u)

modifies all existing records matching the notification channel URI recorded in the database with a new channel URI provided via the message body. This is used when a given client’s notification channel URI ‘expires’ and a new one is provided, so that the client can continue to receive notifications for teams to which he previously subscribed. This method, by the way, is the one that leverages the MySQL stored procedure mentioned earlier to more efficiently update potentially multiple rows.

This PUT method matches the URI pattern http://boysofsummer.azurewebsites.net/api/registrations/{id} where id is the previous notification channel URI as recorded in the MySQL database. The replacement URI is passed via the message body as a simple JSON object. Both URIs are Base64(ish) encoded. The update returns a 204 (No Content) regardless of whether any rows in the Registration table are a match.
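The status codes described for the three Registrations methods can be summarized in a small in-memory model. This Python sketch is not the controller code from the post: RegistrationStore and its method names are hypothetical, and a set of (team, channel URI) pairs stands in for the MySQL table.

```python
class RegistrationStore:
    """Hypothetical in-memory model of the registrations table."""

    def __init__(self):
        self._rows = set()  # each row is a (team_id, channel_uri) pair

    def post(self, team_id: str, uri: str) -> int:
        if (team_id, uri) in self._rows:
            return 409  # Conflict: this channel already tracks the team
        self._rows.add((team_id, uri))
        return 201  # Created

    def delete(self, team_id: str, uri: str) -> int:
        if (team_id, uri) not in self._rows:
            return 404  # Not Found
        self._rows.remove((team_id, uri))
        return 204  # No Content

    def put(self, old_uri: str, new_uri: str) -> int:
        # Rewrite every row that carries the old channel URI.
        self._rows = {
            (team, new_uri if uri == old_uri else uri) for team, uri in self._rows
        }
        return 204  # returned whether or not any row matched


if __name__ == "__main__":
    store = RegistrationStore()
    print(store.post("redsox", "channel-1"))   # first registration
    print(store.post("redsox", "channel-1"))   # duplicate
    print(store.put("channel-1", "channel-2"))
    print(store.delete("redsox", "channel-2"))
```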

Step 5. Implement the ASP.NET MVC Page That Issues the Notifications

For the sample I’m presenting here, I adapted the default ASP.NET MVC home page to provide a way to trigger notifications to the end users of the Boys of Summer Windows Store app. At the moment, it’s quite a manual process and assumes that someone is sitting in front of a browser – perhaps scanning sports news outlets for interesting tidbits to send. That’s not an incredibly scalable scenario, so ‘in real life’ there might be a (semi-)automated process that taps into various RSS or other feeds and automatically generates relevant notifications.

The controller here has two methods, a GET and a POST. The GET is a simple one-liner that returns the View with the list of teams populated from the model via my team repository implementation.

The POST, which is fired off when the Send Notification button is pressed, is the more interesting of the two methods, and appears in its entirety below with a line-by-line commentary.

     1: [HttpPost]
     2: public async Task<ActionResult> Index
     3:     (String notificationHeader, String notificationBody, String teamName)
     4: {
     5:     // set XML for toast
     6:     String toast = SetToastTemplate(notificationHeader, notificationBody,
     7:         String.Format("{0}api/logos/{1}", Request.Url, teamName));
     8:
     9:     // send notifications to subscribers for given team
    10:     List<NotificationResult> results = new List<NotificationResult>();
    11:     foreach (Registration reg in RegistrationRepository.GetRegistrations(teamName))
    12:     {
    13:         NotificationResult result =
    14:             await WNSHelper.PushNotificationAsync(reg.Uri, toast);
    15:         results.Add(result);
    16:
    17:         if (result.RequiresChannelUpdate())
    18:             RegistrationRepository.RemoveRegistration(teamName, reg.Uri);
    19:     }
    20:
    21:     // show results of sending 0, 1 or multiple notifications
    22:     ViewBag.Message = FormatNotificationResults(results);
    23:
    24:     return View(TeamRepository.GetTeams().ToList());
    25: }

Lines 2-3

The POST request pulls the three form fields from the view, namely the Header Text, Message, and Team.

Lines 6-7

A helper method is called to format the XML template; it’s simply a string formatting exercise to populate the appropriate parts of the template. This implementation only supports the ToastImageAndText02Template.

Line 11

A list of all the registrations for the desired team is returned from the database.

Lines 13-14

A notification is pushed for each registration retrieved using a helper class that will be explained shortly.

Line 15

A list of NotificationResult instances records the outcome of each notification, including error information if present. Note though that you can never know if a notification has successfully arrived at its destination.

Lines 17-18

If the specific error detected when sending the notification indicates that the targeted notification channel is no longer valid, that registration is removed from the database.

For instance, it may correspond to a user who has uninstalled the application, in which case it doesn’t make sense to continue sending notifications there and, if unchecked, could raise a red flag with the administrators of WNS.

Line 22

A brief summary of the notification results is included on the reloaded web page. If a single notification was sent, a few details are provided; if multiple notifications were attempted, only the number of successes and failures is shown.

For a production application, you may want to record each attempt in a log file or database for review to ensure the application is healthy. Additionally, failed attempts can give some high-level insight into the usage patterns of your applications – who’s online when and perhaps how many have uninstalled your app.

Line 24

As with the GET request, the model (the list of baseball teams) is reloaded.

Step 6. Implement the Interface with the Windows Notification Service (WNS)

This is the fun part: the exchange between my cloud service and WNS, which does all the heavy lifting in terms of actually delivering the toast to the devices - via the implementation behind Line 14 above:

    await WNSHelper.PushNotificationAsync(reg.Uri, toast)

WNSHelper is a class I wrote to abstract the message flow between the service and WNS and as such is a simpler (but less robust) version of the Windows 8 WNS Recipe code available in the Windows Azure Toolkit for Windows 8 (the source for which is located in the /Libraries/WnsRecipe folder of the extracted toolkit). The code for WNSHelper is available as a Gist (if you want to dive deeper), but here it is in pictures:

First, my service (via the RefreshTokenAsync method) initiates a request for an access token via the OAuth 2.0 protocol. It uses Live Services to authenticate the package SID and client secret I obtained from the Windows Store when registering my application. The package SID and client secret do need to be stored securely as part of the Cloud Service, since they are the credentials that enable any agent to send push notifications to the associated application.

The HTTP request looks something like the following. Note the SID and the client secret are part of the URL-encoded form parameters along with grant_type and scope, which are always set to the values you see here.

    POST https://login.live.com/accesstoken.srf HTTP/1.1
    Content-Type: application/x-www-form-urlencoded
    Host: login.live.com
    Content-Length: 210

    grant_type=client_credentials&scope=notify.windows.com&client_id=REDACTED_SID&client_secret=REDACTED_SECRET

Assuming the authentication succeeds, the response message will include an access token, specifically a bearer token, which grants the presenter (or ‘bearer’) of that token the privileges to carry out some operation in future HTTP requests. The HTTP response message providing that token has the form:

    HTTP/1.1 200 OK
    Content-Type: application/json;charset=UTF-8
    Cache-Control: no-store
    Pragma: no-cache

    {
      "access_token" : "mF_9.B5f-4.1JqM",
      "token_type" : "Bearer",
      "expires_in" : 86400
    }

An access token is nothing more than a hard-to-guess string, but note there is also an expires_in parameter, which means that this token can be used only for a day (86400 seconds), after which point additional requests using that token will result in an HTTP status code of 401 (Unauthorized). This is important, and I’ll revisit that shortly.
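As a rough illustration of the token request described above, the following sketch builds the URL-encoded form body with `FormUrlEncodedContent` and works out when a token issued with a given `expires_in` value would lapse. It is not the author's `RefreshTokenAsync` code; the class name, method names and credential values are placeholders assumed for the example, and no network call is made.

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;

// Hypothetical helper for illustration; not the WNSHelper implementation.
public static class WnsTokenRequest
{
    // Build the form body for the client-credentials request shown above.
    public static FormUrlEncodedContent BuildBody(string packageSid, string clientSecret)
    {
        // An array keeps the parameters in a predictable order.
        var fields = new[]
        {
            new KeyValuePair<string, string>("grant_type", "client_credentials"),
            new KeyValuePair<string, string>("scope", "notify.windows.com"),
            new KeyValuePair<string, string>("client_id", packageSid),
            new KeyValuePair<string, string>("client_secret", clientSecret)
        };
        return new FormUrlEncodedContent(fields);
    }

    // Translate the expires_in value (seconds) into an absolute expiry time.
    public static DateTime ExpiresAt(DateTime issuedUtc, int expiresInSeconds)
    {
        return issuedUtc.AddSeconds(expiresInSeconds);
    }
}

public static class Program
{
    public static void Main()
    {
        // Placeholder credentials; the real values come from the Windows Store registration.
        FormUrlEncodedContent body = WnsTokenRequest.BuildBody("EXAMPLE-SID", "EXAMPLE-SECRET");
        Console.WriteLine(body.ReadAsStringAsync().Result);

        DateTime issued = DateTime.UtcNow;
        Console.WriteLine(WnsTokenRequest.ExpiresAt(issued, 86400) - issued);
    }
}
```

To send the request, the content would be posted to https://login.live.com/accesstoken.srf, for example with `HttpClient.PostAsync`. The computed expiry time is informational only: a 401 response remains the trigger for requesting a new token.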

Once the Cloud Service has the bearer token, it can now send multiple push notifications to WNS by presenting that token in the Authorization header of push notification HTTP requests. The actual request is sent to the notification channel URI, and the message body is the XML toast template.

A number of additional HTTP headers are also supported including X-WNS-Type, which is used to indicate the type of notification (toast, tile, badge, or raw) and X-WNS-RequestForStatus, which I employ to get additional information about the disposition of the notification.

Here’s a sample notification request as sent from my cloud service:

    POST https://bn1.notify.windows.com/?token=AgYAAADCM0ruyKK… HTTP/1.1
    Content-Type: application/xml
    Host: bn1.notify.windows.com
    Authorization: Bearer mF_9.B5f-4.1JqM
    X-WNS-Type: wns/toast
    X-WNS-RequestForStatus: true
    Content-Length: 311

    <toast>
      <visual>
        <binding template="ToastImageAndText02">
          <image id="1" src="http://boysofsummer.azurewebsites.net/api/logos/tigers/png" />
          <text id="1">Breaking News!!!</text>
          <text id="2">The Detroit Tigers have won the ALCS!</text>
        </binding>
      </visual>
    </toast>

Assuming all is in order, WNS takes over from there and delivers the toast to the appropriate client device. For toast notifications, if the client is off-line, the notification is dropped and not cached; however, for tiles and badges the last notification is cached for later delivery (and when the notification queue is in play, up to five notifications may be cached).
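A request like the sample above could be assembled along the following lines. This is a sketch under stated assumptions rather than the author's code: `ToastRequestBuilder` and its methods are hypothetical names, and the toast XML is built with `XElement` instead of the string formatting the post describes, so that characters such as `&` in the header or body text are escaped automatically. The channel URI and token are placeholders.

```csharp
using System;
using System.Net.Http;
using System.Net.Http.Headers;
using System.Text;
using System.Xml.Linq;

// Hypothetical helper for illustration; the post's own helpers are SetToastTemplate and WNSHelper.
public static class ToastRequestBuilder
{
    // Build the ToastImageAndText02 payload: one image plus two text lines.
    public static string BuildToastXml(string header, string body, string imageUrl)
    {
        var toast = new XElement("toast",
            new XElement("visual",
                new XElement("binding",
                    new XAttribute("template", "ToastImageAndText02"),
                    new XElement("image", new XAttribute("id", "1"), new XAttribute("src", imageUrl)),
                    new XElement("text", new XAttribute("id", "1"), header),
                    new XElement("text", new XAttribute("id", "2"), body))));
        return toast.ToString(SaveOptions.DisableFormatting);
    }

    // Build the POST to the channel URI, with the bearer token and the X-WNS headers.
    public static HttpRequestMessage BuildRequest(string channelUri, string accessToken, string toastXml)
    {
        var request = new HttpRequestMessage(HttpMethod.Post, channelUri);
        request.Headers.Authorization = new AuthenticationHeaderValue("Bearer", accessToken);
        request.Headers.Add("X-WNS-Type", "wns/toast");
        request.Headers.Add("X-WNS-RequestForStatus", "true");
        // The media type follows the sample request above.
        request.Content = new StringContent(toastXml, Encoding.UTF8, "application/xml");
        return request;
    }
}

public static class Program
{
    public static void Main()
    {
        string xml = ToastRequestBuilder.BuildToastXml(
            "Breaking News!!!",
            "The Detroit Tigers have won the ALCS!",
            "http://boysofsummer.azurewebsites.net/api/logos/tigers/png");

        // Placeholder channel URI and token; nothing is sent over the network here.
        HttpRequestMessage request = ToastRequestBuilder.BuildRequest(
            "https://bn1.notify.windows.com/?token=EXAMPLE", "EXAMPLE-ACCESS-TOKEN", xml);

        Console.WriteLine(request);
        Console.WriteLine(xml);
    }
}
```

Passing the resulting message to `HttpClient.SendAsync` would issue the notification; the status code of the response then determines the follow-up action.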

If WNS returns a success code, it merely means it was able to process the request, not that the request reached the destination. That bit of information is impossible to verify. Depending on the additional X-WNS headers provided in the request, some headers will appear in the reply providing diagnostic and optional status information.

Now if the request fails, there are some specific actions you may need to take. The comprehensive list of HTTP response codes is well documented, but I wanted to reiterate a few of the critical ones that you may see as part of ‘normal’ operations.

200

Success – this is a good thing

401

Unauthorized – this can occur normally when the OAuth bearer token expires. The service sending notifications should continue to reuse the same OAuth token until receiving a 401. That’s the signal to reissue the original OAuth request (with the SID and client secret) to get a new token.

This means, of course, that you should check for a 401 response when POSTing to the notification channel, because you’ll want to retry that operation after a new access token has been secured.

406

Not Acceptable – you’ve been throttled and need to reduce the rate at which you are sending notifications. Unfortunately, there’s no documentation of how far you can push the limits before receiving this response.

410

Gone – the requested notification channel no longer exists, meaning you should purge it from the registry being maintained by the cloud service. It could correspond to a client that has uninstalled your application or one for which the notification channel has expired and a new one has not been secured and recorded.

My implementation checks for all but the throttling scenario. If a 401 response is received, the code automatically reissues the request for an OAuth token (but with limited additional error checking). Similarly, a 410 response (or a 404 for that matter) results in the removal of that particular channel URI/team combination from the MySQL Registrations table.
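The handling described above can be sketched as a small policy: 200 needs no action, 401 means refresh the token and retry once, 406 means slow down, and 410 (or 404) means remove the channel. The types and names below are hypothetical and the send and refresh steps are passed in as delegates, so this illustrates the control flow only and does not reproduce WNSHelper.

```csharp
using System;

// Hypothetical action names for illustration.
public enum PushAction { None, RefreshTokenAndRetry, SlowDown, RemoveChannel, LogFailure }

public static class WnsResponsePolicy
{
    // Map a WNS HTTP status code to the follow-up action discussed above.
    public static PushAction Classify(int statusCode)
    {
        switch (statusCode)
        {
            case 200: return PushAction.None;
            case 401: return PushAction.RefreshTokenAndRetry;
            case 406: return PushAction.SlowDown;
            case 404:
            case 410: return PushAction.RemoveChannel;
            default: return PushAction.LogFailure;
        }
    }

    // Send once; on a 401, obtain a fresh token and retry exactly one more time.
    // 'send' takes the access token and returns the HTTP status code.
    public static int SendWithOneRetry(Func<string, int> send, Func<string> refreshToken, ref string token)
    {
        int status = send(token);
        if (Classify(status) == PushAction.RefreshTokenAndRetry)
        {
            token = refreshToken();
            status = send(token);
        }
        return status;
    }
}

public static class Program
{
    public static void Main()
    {
        // Simulated WNS: only the token "fresh" is accepted.
        Func<string, int> fakeSend = t => t == "fresh" ? 200 : 401;
        string token = "stale";

        int status = WnsResponsePolicy.SendWithOneRetry(fakeSend, () => "fresh", ref token);
        Console.WriteLine(status + " with token " + token);
        Console.WriteLine(WnsResponsePolicy.Classify(410));
    }
}
```

Limiting the retry to a single attempt avoids looping forever if the refreshed token is also rejected, for instance because the package SID or client secret is wrong.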

Step 7. Deploy it all

Only one thing left! SHIP IT! During the development of this sample I was able to do a lot of debugging locally, relying on Fiddler quite a bit to learn and diagnose problems with my RESTful API calls, so you don’t have to deploy to the cloud right away – though with the free Windows Azure Web Site tier there’s no real cost impact for doing so. In fact, my development was somewhat of a hybrid architecture, since I always accessed the MySQL database I’d provisioned in the cloud, even when I was running my ASP.NET site locally.

When it comes time to move to production, it’s incredibly simple due to the ability to use Web Deploy to Windows Azure Web Sites. When I provisioned the site at the beginning of this post, I downloaded the publication settings file from the Windows Azure Portal. Now via the Publish… context menu option of the ASP.NET MVC project, I’m able in Visual Studio 2012 (and 2010 for that matter) to import the settings from that file and simply hit publish to push the site to Windows Azure.

Truth be told, there was one additional step – involving MySQL. Windows Azure doesn’t have the Connector/NET assemblies installed, nor does it know that it’s an available provider. That’s easily remedied by including the provider declaration in the web.config file of the project:

    <system.data>
      <DbProviderFactories>
        <add name="MySQL Data Provider"
             invariant="MySql.Data.MySqlClient"
             description=".Net Framework Data Provider for MySQL"
             type="MySql.Data.MySqlClient.MySqlClientFactory, MySql.Data" />
      </DbProviderFactories>
    </system.data>

and making sure that each of the MySQL referenced assemblies has its Copy Local property set to true. This is to ensure the binaries are copied over along with the rest of the ASP.NET site code and resource assets.

With the service deployed, the only small bit of housekeeping remaining is ensuring that the Windows Store application routes its traffic to the new endpoint, versus localhost or whatever was being used in testing. I just made the service URI an application level resource in my Windows Store C#/XAML app so I could easily switch between servers.

    <x:String x:Key="ServiceUri">http://boysofsummer.azurewebsites.net:12072</x:String>

Finis!

With the service deployed, the workflow I introduced two posts ago with the picture below has been realized! Hopefully, this deep-dive has given you a better understanding of the push notification workflow, as well as a greater appreciation for Windows Azure Mobile Services, which abstracts much of this lower-level code into a Backend-as-a-Service offering that is quite appropriate for a large number of similar scenarios.


Marketplace DataMarket, Cloud Numerics, Big Data and OData

‡ Bill Hilf (@bill_hilf) posted Windows Azure Benchmarks Show Top Performance for Big Compute to the Windows Azure blog on 11/13/2012:

151.3 TFlops on 8,064 cores with 90.2 percent efficiency

Windows Azure now offers customers a cloud platform that can cost-effectively and reliably meet the needs of Big Compute. With a massively powerful and scalable infrastructure, new instance configurations, and a new HPC Pack 2012, Windows Azure is designed to be the best platform for your Big Compute applications. In fact, we tested and validated the power of Windows Azure for Big Compute applications by running the LINPACK benchmark. The network performance was so impressive (151.3 TFlops on 8,064 cores with 90.2 percent efficiency) that we submitted the results and have been certified as one of the Top 500 of the world’s largest supercomputers.

Hardware for Big Compute

As part of our commitment to Big Compute we are announcing hardware offerings designed to meet customers’ needs for high performance computing. We will offer two high performance configurations: The first with 8 cores and 60 GB of RAM, and a second with 16 cores with 120 GB of RAM. Both configurations will also provide an InfiniBand network with RDMA for MPI applications.

The high performance configurations are virtual machines delivered on systems consisting of:

- Dual Intel Sandy Bridge processors at 2.6 GHz

- DDR3 1600 MHz RAM

- 10 GigE network for storage and internet access

- InfiniBand (IB) 40 Gbps network with RDMA

Our InfiniBand network supports remote direct memory access (RDMA) communication between compute nodes. For applications written to use the message passing interface (MPI) library, RDMA allows memory on multiple computers to act as one pool of memory. Our RDMA solution provides near bare metal performance (i.e., performance comparable to that of a physical machine) in the cloud, which is especially important for Big Compute applications.

The new high performance configurations with RDMA capability are ideal for HPC and other compute intensive applications, such as engineering simulations and weather forecasting that need to scale across multiple machines. Faster processors and a low-latency network mean that larger models can be run and simulations will complete faster.

LINPACK Benchmark

To demonstrate the performance capabilities of the Big Compute hardware, we ran the LINPACK benchmark, submitted the results and have been certified as one of the Top 500 of the world’s largest supercomputers. The LINPACK benchmark demonstrates a system’s floating point computing power by measuring how fast it solves a dense n by n system of linear equations Ax = b, which is a common task in engineering. This approximates performance when solving real problems.

We achieved 151.3 TFlops on 8,064 cores with 90.2 percent efficiency. The efficiency number reflects how close a system comes to its maximum theoretical performance, which is calculated as the machine’s frequency in cycles per second times the number of operations it can perform per cycle. One of the factors that influences performance and efficiency in a compute cluster is the capability of the network interconnect. This is why we use InfiniBand with RDMA for Big Compute on Windows Azure.
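As a worked check of the efficiency figure, the sketch below divides the measured result by a theoretical peak. The core count (8,064), clock rate (2.6 GHz) and measured 151.3 TFlops come from the post; the figure of 8 double-precision floating-point operations per cycle per core is an assumption about this processor generation and is not stated in the article. The class and method names are hypothetical.

```csharp
using System;
using System.Globalization;

public static class LinpackEfficiency
{
    // Theoretical peak in TFlops: cores x clock (GHz) x floating-point operations per cycle per core.
    public static double PeakTeraflops(int cores, double clockGhz, int flopsPerCyclePerCore)
    {
        return cores * clockGhz * flopsPerCyclePerCore / 1000.0; // GFlops to TFlops
    }

    // Efficiency is the measured result as a fraction of the theoretical peak.
    public static double Efficiency(double measuredTeraflops, double peakTeraflops)
    {
        return measuredTeraflops / peakTeraflops;
    }
}

public static class Program
{
    public static void Main()
    {
        // Assumption: 8 flops per cycle per core; the other inputs are quoted in the post.
        double peak = LinpackEfficiency.PeakTeraflops(8064, 2.6, 8);
        double efficiency = LinpackEfficiency.Efficiency(151.3, peak);

        // Prints "Peak: 167.7 TFlops, efficiency: 90.2 percent"
        Console.WriteLine(string.Format(CultureInfo.InvariantCulture,
            "Peak: {0:F1} TFlops, efficiency: {1:F1} percent", peak, efficiency * 100));
    }
}
```

Under that assumption the peak works out to about 167.7 TFlops, and 151.3 / 167.7 reproduces the quoted 90.2 percent.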

Here is the output file from the LINPACK test showing our 151.3 teraflop result.

What’s impressive about this result is that it was achieved using Windows Server 2012 running in virtual machines hosted on Windows Azure with Hyper-V. Because of our efficient implementation, you can get the same performance for your high performance application running on Windows Azure as on a dedicated HPC cluster on-premises.

Windows Azure is the first public cloud provider to offer virtualized InfiniBand RDMA network capability for MPI applications. If your code is latency-sensitive, our cluster can send a 4-byte packet across machines in 2.1 microseconds. InfiniBand also delivers high throughput. This means that applications will scale better, with a faster time to result and lower cost.

Application Performance

The chart below shows how the NAMD molecular dynamics simulation program scales across multiple cores running in Windows Azure with the newly announced configurations. We used 16-core instances for running the application, so 32 and more cores require communication across the network. NAMD really shines on our RDMA network, and the solution time reduces impressively as we add more cores.

How well a simulation scales depends on both the application and the specific model or problem being solved.

We are currently testing the high performance hardware with a select group of partners and will make it publicly available in 2013.

Windows Azure Support for Big Compute with Microsoft HPC Pack 2012

We began supporting Big Compute on Windows Azure two years ago. Big Compute applications require large amounts of compute power that typically run for many hours or days. Examples of Big Compute include modeling complex engineering problems, understanding financial risk, researching disease, simulating weather, transcoding media, or analyzing large data sets. Customers doing Big Compute are increasingly turning to the cloud to support a growing need for compute power, because the cloud provides greater flexibility and economy than having all the work done on-premises.

In December 2010, the Microsoft HPC Pack first provided the capability to “burst” (i.e., instantly consume additional resources in the cloud to meet extreme demand in peak usage situations) from on-premises compute clusters to the cloud. This made it easy for customers to use Windows Azure to handle peak demand. HPC Pack took care of provisioning and scheduling jobs, and many customers saw immediate return on their investment by leveraging the always-on cloud compute resources in Windows Azure.

Today, we are pleased to announce the fourth release of our compute cluster solution since 2006. Microsoft HPC Pack 2012 is used to manage compute clusters with dedicated servers, part-time servers, desktop computers, and hybrid deployments with Windows Azure. Clusters can be entirely on-premises, can be extended to the cloud on a schedule or on demand, or can be all in the cloud and active only when needed.

The new release provides support for Windows Server 2012. Features include Windows Azure VPN integration for access to on-premises resources, such as license servers, new job execution control for dependencies, new job scheduling policies for memory and cores, new monitoring tools, and utilities to help manage data staging.

Microsoft HPC Pack 2012 will be available in December 2012.

Big Compute on Windows Azure today

Windows Azure was designed from the beginning to support large-scale computation. With the Microsoft HPC Pack, or with their own applications, customers and partners can quickly bring up Big Compute environments with tens of thousands of cores. Customers are already putting these Windows Azure capabilities to the test, as the following examples of large-scale compute illustrate.

Risk Reporting for Solvency II Regulations

Milliman is one of the world's largest providers of actuarial and related products and services. Their MG-ALFA application is widely used by insurance and financial companies for risk modeling; it integrates with the Microsoft HPC Pack to distribute calculations to HPC clusters or burst work to Windows Azure. To help insurance firms meet risk reporting for Solvency II regulations, Milliman also offers MG-ALFA as a service using Windows Azure. This enables their customers to perform complex risk calculations without any capital investment or management of an on-premises cluster. The solution from Milliman has been in production for over a year with customers running it on up to 8,000 Windows Azure compute cores.

MG-ALFA can reliably scale to tens of thousands of Windows Azure cores. To test new models, Milliman used 45,500 Windows Azure compute cores to compute 5,800 jobs with a 100 percent success rate in just over 24 hours. Because you can run applications at such a large scale, you get faster results and more certainty in the outcomes as a result of not using approximations or proxy modelling methods. For many companies, complex and time-consuming projections have to be done each quarter. Without significant computing power, they either have to compromise on how long they wait for results or reduce the size of the model they are running. Windows Azure changes the equation.

The Cost of Insuring the World

Towers Watson is a global professional services company. Their MoSes financial modeling software applications are widely used by life insurance and annuity companies worldwide to develop new offerings and manage their financial risk. MoSes integrates with the Microsoft HPC Pack to distribute projects across a cluster that can also burst to Windows Azure. Last month, Towers Watson announced they are adopting Windows Azure as their preferred cloud platform.

One of Towers Watson’s initial projects for the partnership was to test the scalability of the Windows Azure compute environment by modeling the cost of insuring the world. The team used MoSes to perform individual policy calculations on the cost of issuing whole life policies to all seven billion individuals on earth. The calculations were repeated 1,000 times across risk-neutral economic scenarios. To finish these calculations in less time, MoSes used the HPC Pack to distribute these calculations in parallel across 50,000 compute cores in Windows Azure.

Towers Watson was impressed with their ability to complete 100,000 hours of computing in a couple of hours of real time. Insurance companies face increasing demands on the frequency and complexity of their financial modeling. This test demonstrated the extraordinary possibilities that Windows Azure brings to insurers. With Windows Azure, insurers can run their financial models with greater precision, speed and accuracy for enhanced management of risk and capital.

Speeding up Genome Analysis

Cloud computing is expanding the horizons of science and helping us better understand the human genome and disease. One example is the genome-wide association study (GWAS), which identifies genetic markers that are associated with human disease.

David Heckerman and the eScience research group at Microsoft Research developed a new algorithm called FaST-LMM that can find new genetic relationships to diseases by analyzing data sets that are several orders of magnitude larger than was previously possible and detecting more subtle signals in the data than before.

The research team turned to Windows Azure to help them test the application. They used the Microsoft HPC Pack with FaST-LMM on 27,000 compute cores on Windows Azure to analyze data from the Wellcome Trust study of the British population. They analyzed 63,524,915,020 pairs of genetic markers, looking for interactions among these markers for bipolar disease, coronary artery disease, hypertension, inflammatory bowel disease (Crohn’s disease), rheumatoid arthritis, and type I and type II diabetes.

Over 1,000,000 tasks were scheduled by the HPC Pack over 72 hours, consuming the equivalent of 1.9 million compute hours. The same computation would have taken 25 years to complete on a single 8-core server. The result: the ability to discover new associations between the genome and these diseases that could help potential breakthroughs in prevention and treatment.
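The core-hour figures quoted in these case studies follow from simple arithmetic: total compute is cores times wall-clock hours, and wall-clock time is total compute divided by cores. The sketch below checks two of the quoted numbers, assuming every core is busy for the whole run (an idealization; real jobs have scheduling gaps). The class and method names are hypothetical.

```csharp
using System;

public static class CoreHours
{
    // Total compute consumed when 'cores' run for 'wallClockHours', assuming full utilization.
    public static long Total(long cores, long wallClockHours)
    {
        return cores * wallClockHours;
    }

    // Wall-clock time needed to spread 'coreHours' of work evenly over 'cores'.
    public static double WallClockHours(double coreHours, long cores)
    {
        return coreHours / cores;
    }
}

public static class Program
{
    public static void Main()
    {
        // Genome study: 27,000 cores for 72 hours, in line with "1.9 million compute hours".
        Console.WriteLine(CoreHours.Total(27000, 72));              // prints 1944000

        // Towers Watson: 100,000 hours of computing on 50,000 cores, "a couple of hours".
        Console.WriteLine(CoreHours.WallClockHours(100000, 50000)); // prints 2
    }
}
```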

Researchers in this field will have free access to these results and can independently validate their own lab’s results. These researchers can calculate the results from individual pairs and the FaST-LMM algorithm on-demand with free access in the Windows Azure Data Marketplace.

Big Compute

With a massively powerful and scalable infrastructure, the new instance configurations, and the Microsoft HPC Pack 2012, Windows Azure is designed to be the best platform for your Big Compute applications.

We invite you to let us know about Big Compute interests and applications by contacting us at bigcompute@microsoft.com.

- Bill Hilf, General Manager, Windows Azure Product Marketing.

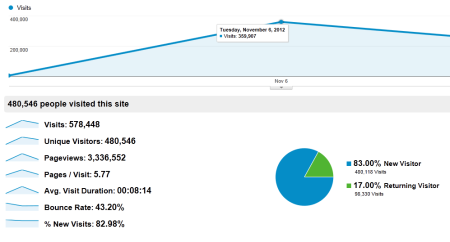

John Furrier (@furrier) posted Opinion: How Big Data Can Change the Game – Big Data Propels Obama to Re-election to the SiliconAngle blog on 11/9/2012:

As the election buzz continues about how Obama won the election in the most horrific economic conditions any incumbent has ever seen, many want to know why. America has been yelling for years, and Barack extracted that signal from the noise using big data to “listen”. He aligned his message to those who were speaking to him.

On election day America spoke and Barack Obama has won re-election. One key element of Obama’s victory that cannot be overlooked: Big Data.

Its influence on this election has been poorly documented but it played a huge role in returning Obama to the White House. CNN, Fox and the networks completely missed the Big Data/Silicon Valley angle. I’ve been saying Big Data can disrupt all industries and here it has disrupted the election. It literally put Obama over the top. It was that close. Romney just got outplayed in the big data listening game. Just ask Nate Silver. Enough said there.

I have been closely following the election. We had two capable candidates. Romney offered a strong fiscal policy. Obama’s social agenda was spot on. The Republicans, however, are “completely out to lunch” on the pulse of America, unable to fully understand the diversity of the country and the demographic make-up of today’s voters.

If Steve Jobs were alive he might have volunteered to help Obama promote his campaign. Well, he was there in spirit, because it was Jobs’ iPhone that did help. Smartphones, social media, big data and predictive analytics all played a key role in Obama’s re-election bid, serving as a parallel “ground game” to the traditional “knocking on doors” ground game.

In the summer of 2011, I met with Rayid Ghani, the chief scientist of Obama’s campaign. Rayid was formerly with Accenture Technology Labs in Chicago. It was Rayid’s job to capture the “data firehose” and work with other “alpha geeks” to develop the algorithms that fully aligned Obama’s messages to shifting voter sentiment. The messages were then shared in real-time across social media, Twitter, text messaging and email. Rayid and his team, including volunteers from Google, LinkedIn and other start-ups in Silicon Valley, collected massive amounts of voter data and were able to respond to concerns almost instantly. Without this big data effort, I’m not sure Obama wins re-election.

America is about hope and growth. This is why many found Romney’s fiscal policies so attractive. But it is Obama that speaks to the heart of the upward mobility aspirations of hard working Americans, including entrepreneurs, wanna-be entrepreneurs, and immigrants who want to start their own business and contribute to society. This is the new middle class that Obama speaks to. They represent new opportunities and new growth – mirroring the country’s new demographics. It is not the old guard.

Today’s tech culture has shifted the game in terms of voting and politics. There’s a whole new generation of people coming into the electorate who are young and have a definite perspective about what the future should look like. It’s more inclusive and globally oriented. They don’t take their cues from Big Media. They use their smartphones, Twitter, Facebook and other social media to help understand what is most important to them. They use these same tools to spread their message even further. The Obama campaign understood this. The Romney campaign did not.

Using Big Data, the Obama campaign could understand real-time sentiment across targeted groups and respond almost instantly with a message that could be quickly received and spread instantly to their friends and family using these same tools. This is not spam. These were well crafted messages that people wanted to receive. Big Data allowed the campaign to clue into sentiment right away, craft the right message and respond.

The Obama campaign was not using social media simply to get out their message but using social media to help create signals, align those signals with the voters, and then mobilize those who received their signals. This is much more than texting a person urging them to vote or asking them for money. Those are important but this new big data “listening” effort was more about synthesizing cultural sentiment. Mobilizing people, connecting with everyone, giving everyone a voice, and helping spread their message.

The days when old media and traditional gatekeepers can define the issues are over. Campaigns now need to look at Twitter, Facebook and crowd behavior to understand the pulse of the electorate. When the book is written on this election it’s going to be written how big data, Internet culture, and mobile phones enabled people to share their opinions, give everyone a voice and connect with one another.

Obama understood big data and this new generation of voter; Romney did not. Silicon Valley, and even the “crazy ones” like Steve Jobs, deserve credit as essential in helping Obama win re-election.

Here is my video of my comments on the subject on SiliconANGLE.TV NewsDesk

SiliconANGLE.tv is beta testing our NewsDesk or CubeDesk as we get ready to go 24/7 as a global tech video network coverage in the SiliconANGLE.TV mission to be the ESPN of Tech.

Stay Tuned

John didn’t mention the Epic [Fail] Whale: Romney volunteers say ‘Orca’ was debacle reported by Natalie Jennings (@ngjennings, no relation) in an 11/9/2012 article for the Washington Post:

Mitt Romney election day volunteers say a buggy and not-properly-tested poll-monitoring program created by the campaign stymied their voter monitoring efforts on Tuesday.

Orca, as the program was called, was designed as a first-of-its-kind tool to employ smartphones to mobilize voters, allowing them to microtarget which of their supporters had gone to the polls.

It was kept under close wraps until just before election day. Romney campaign communications director Gail Gitcho explained it to PBS on Monday as a massive technological undertaking, with 800 people in Boston communicating with 34,000 volunteers across the country.

According to John Ekdahl in a blistering post on the Ace of Spades blog, the deployment of the new program was sloppily executed from the outset, causing confusion among the volunteers.

Ekdahl wrote that instruction packets weren’t sent to volunteers until Monday night, and they arrived missing some crucial bits of information, including how to access the app and material the volunteers would need at the polls.

From what I saw, these problems were widespread. People had been kicked from poll watching for having no certificate. Others never received their pdf packets. Some were sent the wrong packets from a different area. Some received their packet, but their usernames and passwords didn’t work…

By 2 pm, I had completely given up. I finally got a hold of someone at around 1 pm and I never heard back. From what I understand, the entire system crashed at around 4 pm. I’m not sure if that’s true, but it wouldn’t surprise me. I decided to wait for my wife to get home from work to vote, which meant going very late (around 6:15 pm). Here’s the kicker, I never got a call to go out and vote.

A volunteer from Colorado gave a similar account to the Breitbart Web site, and said “this idea would only help if executed extremely well. Otherwise, those 37,000 swing state volunteers should have been working on GOTV.”

The Romney team had seemed confident in its product before election day. Spokeswoman Andrea Saul said on Monday it would give the campaign an “enormous advantage.”

Update: Romney digital director Zac Moffatt’s response to Orca’s critics

See also ArsTechnica’s Inside Team Romney's whale of an IT meltdown article of 11/9/2012 by Sean Gallagher:

Doug Mahugh (@dmahugh) posted a 00:19:46 OData and DB2: open data for the open web video segment to Channel 9’s Interoperability section on 11/9/2012:

OData is a web protocol that unlocks data silos to facilitate data access from browsers, desktop applications, mobile apps, cloud services, and other scenarios. In this screencast, you'll see how easy it is to set up an OData service (deployed on Windows Azure) for an IBM DB2 database (running on IBM's cloud service), with examples of how to use the service from browsers, client apps, phone apps, and Microsoft Excel.

Here's an overview of the screencast, with links to specific sections:

- [00:29] architectural overview. A high-level view of the components that make up the demo service.

- [02:23] connecting to an IBM DB2 database. How to install the DB2 driver and set up a connection to a DB2 database.

- [03:45] creating the entity data model. How to create an entity data model in Visual Studio, including selecting tables from the data source, customizing names, hiding columns, and defining relationships, complex types, and scalar properties.

- [08:44] creating the WCF data service (OData feed). How to create a WCF data service that exposes the entity data model as an OData endpoint.

- [10:43] testing the service locally. Debugging the service in Visual Studio, and querying the service to return the metadata that defines the entity data model.

- [12:34] OData queries in the browser. Examples of the simple and consistent syntax of OData queries, and how to return tables/collections or individual entities, how to filter and sort results, and how to display a stored image in its native mime type.

- [15:05] consuming the OData service from a .NET client application. A simple ASP.NET Dynamic Data Entities web site that can be used to navigate and edit the data exposed by the OData service.

- [16:07] consuming the OData service from a Windows Phone app. Consuming the OData service from a Windows Phone application.

- [17:11] consuming the OData service from Excel with the PowerPivot add-in. With the free PowerPivot add-in, you can use Excel to query, analyze, and report on data from any OData service.
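The query syntax mentioned in the [12:34] segment can be sketched in a few lines of Python. This is a minimal illustration only: the service root, the `Products` entity set, and the `Price` and `Name` properties are hypothetical stand-ins, not the names used in the screencast. The `$filter`, `$orderby`, and `$top` system query options and the `gt` operator are standard OData.

```python
from urllib.parse import quote

# Hypothetical service root; the screencast's actual endpoint is not given here.
SERVICE_ROOT = "https://example.cloudapp.net/DemoService.svc"

# Characters that OData expressions commonly use and that can stay unescaped.
SAFE_CHARS = "'(),"


def odata_url(entity_set, key=None, **options):
    """Build an OData URL for an entity set, an optional entity key,
    and system query options such as filter, orderby, and top."""
    url = f"{SERVICE_ROOT}/{entity_set}"
    if key is not None:
        url += f"({key})"  # address a single entity by its key
    if options:
        # System query options are written with a leading '$'.
        parts = [
            "$" + name + "=" + quote(str(value), safe=SAFE_CHARS)
            for name, value in options.items()
        ]
        url += "?" + "&".join(parts)
    return url


# A whole collection, one entity, and a filtered, sorted, truncated collection.
print(odata_url("Products"))
print(odata_url("Products", key=1))
print(odata_url("Products", filter="Price gt 20", orderby="Name", top=5))
```

Pasting URLs of this shape into a browser is all the "client" that segment of the screencast needs, which is the point about OData's simple and consistent syntax.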

Derrick Harris (@derrickharris) reported Facebook open sources Corona — a better way to do webscale Hadoop in an 11/8/2012 post to GigaOm’s Cloud blog:

Facebook is at it again, building more software to make Hadoop a better way to do big data at web scale. Its latest creation, which the company has also open sourced, is called Corona and aims to make Hadoop more efficient, more scalable and more available by re-inventing how jobs are scheduled.

As with most of its changes to Hadoop over the years — including the recently unveiled AvatarNode — Corona came to be because Hadoop simply wasn’t designed to handle Facebook’s scale or its broad usage of the platform. What kind of scale are we talking about? According to Facebook engineers Avery Ching, Ravi Murthy, Dmytro Molkov, Ramkumar Vadali, and Paul Yang in a blog post detailing Corona on Thursday, the company’s largest cluster is more than 100 petabytes; it runs more than 60,000 Hive queries a day; and its data warehouse has grown 2,500x in four years.

Further, Ching and company note — echoing something Facebook VP of Infrastructure Engineering Jay Parikh told me in September when discussing the future of big data startups — Hadoop is responsible for a lot of how Facebook runs both its platform and its business:

Almost every team at Facebook depends on our custom-built data infrastructure for warehousing and analytics, with roughly 1,000 people across the company — including both technical and non-technical personnel — using these technologies every day. Over half a petabyte of new data arrives in the warehouse every 24 hours, and ad-hoc queries, data pipelines, and custom MapReduce jobs process this raw data around the clock to generate more meaningful features and aggregations.

So, what is Corona?

In a nutshell, Corona represents a new system for scheduling Hadoop jobs that makes better use of a cluster’s resources and also makes it more amenable to multitenant environments like the one Facebook operates. Ching et al explain the problems and the solution in some detail, but the short explanation is that Hadoop’s JobTracker node is responsible for both cluster management and job-scheduling, but has a hard time keeping up with both tasks as clusters grow and the number of jobs sent to them increases.

Further, job-scheduling in Hadoop involves an inherent delay, which is problematic for small jobs that need fast results. And a fixed configuration of “map” and “reduce” slots means Hadoop clusters run inefficiently when jobs don’t fit into the remaining slots or when they’re not MapReduce jobs at all.

Corona resolves some of these problems by creating individual job trackers for each job and a cluster manager focused solely on tracking nodes and the amount of available resources. Thanks to this simplified architecture and a few other changes, the latency to get a job started is reduced and the cluster manager can make fast scheduling decisions because it’s not also responsible for tracking the progress of running jobs. Corona also incorporates a feature that divvies a cluster into resource pools to ensure every group within the company gets its fair share of resources.
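The division of labor described above can be illustrated with a toy model. This is a sketch under stated assumptions, not Facebook's code: the class names, the notion of a single undifferentiated "slot", and the strict equal-share cap per pool are all simplifications invented for illustration (a real fair scheduler would typically lend out idle capacity).

```python
class ClusterManager:
    """Tracks only free capacity and per-pool usage.
    It knows nothing about the progress of individual tasks."""

    def __init__(self, total_slots, pools):
        self.total = total_slots
        self.free = total_slots
        self.used = {pool: 0 for pool in pools}

    def fair_share(self):
        # Assumption: every pool is entitled to an equal share.
        return self.total // len(self.used)

    def grant(self, pool, wanted):
        """Grant up to `wanted` slots, capped by free capacity and the pool's share."""
        allowed = max(0, self.fair_share() - self.used[pool])
        granted = min(wanted, allowed, self.free)
        self.free -= granted
        self.used[pool] += granted
        return granted

    def release(self, pool, count):
        self.free += count
        self.used[pool] -= count


class JobTracker:
    """One instance per job: owns that job's task bookkeeping."""

    def __init__(self, pool, tasks, manager):
        self.pool = pool
        self.manager = manager
        self.pending = tasks
        self.running = 0
        self.done = 0

    def schedule(self):
        got = self.manager.grant(self.pool, self.pending)
        self.pending -= got
        self.running += got
        return got

    def finish_running(self):
        # Progress tracking stays here; the manager only hears about freed slots.
        self.manager.release(self.pool, self.running)
        self.done += self.running
        self.running = 0


manager = ClusterManager(total_slots=10, pools=["ads", "search"])
ads_job = JobTracker("ads", tasks=8, manager=manager)
search_job = JobTracker("search", tasks=3, manager=manager)
print(ads_job.schedule(), search_job.schedule(), manager.free)
```

Because the manager's `grant` call touches only a few counters, it can answer quickly no matter how many tasks each job is tracking, which is the latency argument made in the paragraph above.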

The results have lived up to expectations since Corona went into full production in mid-2012: the average time to refill idle resources improved by 17 percent; resource utilization over regular MapReduce improved to 95 percent from 70 percent (in a simulation cluster); resource unfairness dropped to 3.6 percent with Corona versus 14.3 percent with traditional MapReduce; and latency on a test job Facebook runs every four minutes has been

Despite the hard work put into building and deploying Corona, though, the project still has a way to go. One of the biggest improvements currently being developed is to enable resource management based on CPU, memory and other job requirements rather than just the number of “map” and “reduce” slots needed. This will open Corona up to running non-MapReduce jobs, therefore making a Hadoop cluster more of a general-purpose parallel computing cluster.
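A small worked example shows why fixed slots can strand capacity and why requirement-based admission helps. The node sizes and task requirements below are invented for illustration, and the greedy first-fit admission is just one simple policy, not a description of Corona's scheduler.

```python
def slot_based_running(pending_map, pending_reduce, map_slots, reduce_slots):
    """Tasks that can run when a node has fixed, typed slots."""
    return min(pending_map, map_slots) + min(pending_reduce, reduce_slots)


def resource_based_running(tasks, cpu, memory_gb):
    """Tasks that can run when admission depends on each task's
    (cpu, memory_gb) requirement. Greedy first-fit, in list order."""
    running = 0
    for need_cpu, need_mem in tasks:
        if need_cpu <= cpu and need_mem <= memory_gb:
            cpu -= need_cpu
            memory_gb -= need_mem
            running += 1
    return running


# Six map tasks are waiting and no reduce tasks. With 4 map slots and
# 2 reduce slots, the two reduce slots sit idle.
print(slot_based_running(pending_map=6, pending_reduce=0, map_slots=4, reduce_slots=2))

# The same node described as 6 cores and 12 GB, with each task needing
# 1 core and 2 GB, can run all six tasks.
print(resource_based_running([(1, 2)] * 6, cpu=6, memory_gb=12))
```

The second function never asks whether a task is a "map" or a "reduce", which is what would let non-MapReduce work share the same cluster.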

Facebook is also trying to incorporate online upgrades, which would mean a cluster doesn’t have to come down every time part of the management layer undergoes an update.

Why Facebook sometimes must re-invent the wheel

Anyone deeply familiar with the Hadoop space might be thinking that a lot of what Facebook has done with Corona sounds familiar — and that’s because it kind of is. The Apache YARN project that has been integrated into the latest version of Apache Hadoop similarly splits the JobTracker into separate cluster-management and job-tracking components, and already allows for non-MapReduce workloads. Further, there is a whole class of commercial and open source cluster-management tools that have their own solutions to the problems Corona tries to solve, including Apache Mesos, which is Twitter’s tool of choice.

However, anyone who’s familiar with Facebook knows the company isn’t likely to buy software from anyone. It also has reached a point of customization with its Hadoop environment where even open-source projects from Apache won’t be easy to adapt to Facebook’s unique architecture. From the blog post:

It’s worth noting that we considered Apache YARN as a possible alternative to Corona. However, after investigating the use of YARN on top of our version of HDFS (a strong requirement due to our many petabytes of archived data) we found numerous incompatibilities that would be time-prohibitive and risky to fix. Also, it is unknown when YARN would be ready to work at Facebook-scale workloads.

So, Facebook plods forward, a Hadoop user without equal (save for maybe Yahoo) left building its own tools in isolation. What will be interesting to watch as Hadoop adoption picks up and more companies begin building applications atop it is how many actually utilize the types of tools that companies like Facebook, Twitter and Quantcast have created and open sourced. They might not have commercial backers behind them, but they’re certainly built to work well at scale.

Full disclosure: I’m a registered GigaOm analyst.

<Return to section navigation list>

Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

See Vittorio Bertocci (@vibronet) reported on 11/8/2012 that he will present a session at the patterns & practices Symposium 2013 event in Redmond, WA on 1/15-1/17/2013 in the Cloud Computing Events section below.

<Return to section navigation list>

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

•• Mike Wood (@mikewo) wrote Windows Azure Websites – A New Hosting Model for Windows Azure and Red Gate Software published it in their Simple-Talk newsletter on 10/29/2012 (missed when published):

Whereas Azure works fine for established websites with a healthy visitor-count, it hasn't suited developers who wanted to just try the platform out, or to rapidly develop a number of hosted websites. The deployment process seemed too slow. Microsoft have now found a way of accommodating the latter type of usage with Windows Azure Websites (WAWS).

Since Microsoft released their Windows Azure Platform several years ago, they’ve received a lot of feedback that deployment of hosted websites and applications took too long. Users also pointed out that the platform was a little out of the reach of the hobbyist who just wanted to try things out. These folks just wanted to run a website and quickly iterate changes to that site as they wrote their code.

In response, Microsoft have decided to encourage people who just want to try out the platform, and hobbyists who wish to play with the technology, by creating the 90 Day Free Trial. They have also announced a new high-density website hosting feature that can go a long way in helping out small shops and hobbyists by improving the deployment speed, and allowing fast iterations for simple websites.

Windows Azure Websites

Up until June of this year, Windows Azure had only one way of hosting a web application: their Hosted Services offering, now known as Cloud Services. When you performed a new deployment, the platform would identify a physical server with enough resources based on your configuration, create a virtual machine from scratch, attach a separate VHD that had your code copied to it, boot the machine, and finally configure load balancers, switches and DNS so that your application would come online. This process could take about five to twelve minutes to complete, and sometimes longer. When you consider all those things that are going on behind the scenes I think the wait is quite reasonable given that some enterprise shops have to wait days or weeks to get a new machine configured for them. The point here is that, for Cloud Services, the container that your application or solution runs in is a virtual machine.

In June, Microsoft provided a way to deploy websites faster by introducing Windows Azure Websites (WAWS). WAWS is a high density hosting option which uses an Application Pool Process (W3WP.exe) as the container in which your site runs. A computer process is much faster to start up than a full virtual machine, so deployments and start up times for WAWS are a great deal quicker than for Cloud Services. Since these processes are so fast to start up, any idle sites can be shut down (have their process stopped) to save resources, and then started back up when requests come in. By bringing sites up and down, a higher number of sites can then be distributed across a smaller number of machines. This is why it is termed ‘high density hosting’. You can host not only .NET based websites, but also sites running PHP, Node.js and older ASP.
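The start-on-request, stop-when-idle behavior behind high density hosting can be sketched as a toy model. Everything here is an assumption made for illustration: the `SiteHost` class, the numeric timestamps, and the idle timeout value are hypothetical and do not describe how Windows Azure actually manages W3WP.exe processes.

```python
class SiteHost:
    """Toy host that keeps one worker 'process' per recently used site."""

    def __init__(self, idle_timeout):
        self.idle_timeout = idle_timeout
        self.last_seen = {}  # site name -> time of its most recent request

    def handle_request(self, site, now):
        """Serve a request, starting the site's process if it is not running."""
        was_stopped = site not in self.last_seen
        self.last_seen[site] = now
        return "cold start" if was_stopped else "warm"

    def sweep(self, now):
        """Stop every site that has been idle for at least idle_timeout."""
        idle = [s for s, t in self.last_seen.items() if now - t >= self.idle_timeout]
        for site in idle:
            del self.last_seen[site]
        return sorted(idle)


host = SiteHost(idle_timeout=20)
print(host.handle_request("blog", now=0))   # first request starts the process
print(host.handle_request("blog", now=5))   # process is already running
print(host.handle_request("shop", now=10))
print(host.sweep(now=26))                   # only "blog" has been idle long enough
```

Only sites with recent traffic occupy a process at any moment, so a machine can be registered for many more sites than it could run simultaneously.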

One Website, Please

You can create and deploy a Windows Azure Website in just a few minutes. You can even select an open source application such as DasBlog or Joomla to get a jump start on your website if you like. You first need a Windows Azure account, which you can get through the Free Trial I mentioned or, if you have an MSDN subscription, through your Azure Benefits for MSDN. Once you have a Windows Azure account you can follow the instructions on some of the tutorials provided by Microsoft to get a website deployed. There are several great tutorials out there on getting started so I wanted to focus more on what is happening behind the scenes to make all this work.

Once you have a new, shiny website created in Windows Azure Websites, the site itself isn’t running until a request comes in. That means that, even though the portal shows the website as “running”, it is not yet actually deployed to a machine in the Windows Azure datacenters. The site is registered with the Windows Azure platform and will be deployed once a request comes in. This is known as a “cold request” because the site is not deployed yet.

During a cold request, someone asks for something hosted on your website (an image, the home page, etc.). When the request comes in, it is first passed to the Windows Azure Load Balancer which sees that it is destined for a Windows Azure Website property rather than one of the Cloud Services. The request passes on to a set of servers running the IIS Application Request Routing module (I’ve nicknamed these the pirate servers since they run “ARR”). These ARR servers then look to see if they know where this website is running. Since the site hasn’t actually been started yet, the ARR servers won’t find the site and will look up the site in the Runtime Database that contains metadata for all registered websites in Windows Azure. With the information garnered from the Runtime DB, the platform then looks at the virtual resources available and selects a machine to deploy the site to.
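The lookup sequence for a cold request can be sketched as follows. This is an illustrative model only: the `Router` class, the machine names, and the least-loaded placement rule are assumptions, since the article says only that the platform looks at the available resources and selects a machine.

```python
class Router:
    """Toy stand-in for the ARR tier: routes to running sites, and places
    registered but not yet running sites on a machine."""

    def __init__(self, runtime_db, machines):
        self.runtime_db = runtime_db           # site name -> metadata for registered sites
        self.load = {m: 0 for m in machines}   # machine -> number of running sites
        self.routes = {}                       # site name -> machine, for running sites

    def route(self, site):
        # 1. Does the router already know where the site is running?
        if site in self.routes:
            return self.routes[site], "warm"
        # 2. Otherwise consult the runtime database of registered sites.
        if site not in self.runtime_db:
            raise KeyError(f"{site} is not a registered site")
        # 3. Pick a machine. Assumption: least loaded, first listed wins ties.
        machine = min(self.load, key=self.load.get)
        self.load[machine] += 1
        self.routes[site] = machine
        return machine, "cold"


router = Router(runtime_db={"blog": {}, "shop": {}}, machines=["vm-a", "vm-b"])
print(router.route("blog"))   # cold request: site gets placed
print(router.route("shop"))
print(router.route("blog"))   # later requests find the existing route
```

Only the first request for a site pays for the database lookup and placement; subsequent requests are answered from the router's own table.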