Windows Azure and Cloud Computing Posts for 11/5/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, Hadoop and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Caching, Access & Identity Control, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue, Hadoop and Media Services

Bruno Terkaly (@brunoterkaly) posted Essential Knowledge for Azure Table Storage on 11/8/2012:

- Azure tables are used to store non-relational structured data at massive scale

- There are many types of storage options for the MS cloud. We will focus on Azure tables.

- Here is what we'll cover:

- When to use Azure Tables

- When they are appropriate to consider

- Understanding that Azure Tables are a collection of entities

- Access Azure Tables directly or through a cloud application

- Key Features of Azure Tables

- Relationship between accounts, Tables, and entities

- Efficient Inserts and Updates

- Designing for scale

- Query Design and Performance

- Understanding Partition Keys

- How data is partitioned

- Coding considerations

- Azure Table Query Concepts

- Understanding TableServiceEntity/TableServiceContext

- Additional Resources

When to use Azure tables

- These are some typical use case scenarios for using Azure tables.

- Azure tables are optimized for capacity and performance (scale)

Azure Tables : When Appropriate

- SQL Database is limited currently to 150 GB without federation. Federation can be used to increase the size beyond 150 GB.

- If your code requires strong relational semantics, Azure tables are not appropriate. They don't allow for join statements.

- You can think of Azure tables as nothing more than a collection of objects. Note that each entity (similar to a row in a table) could have different attributes. In the diagram above, the second entity does not have a city property.

- One of the beauties of Azure Tables is that you can replicate across data centers, aiding in disaster recovery.

Tables: A collection of entities

- A table is a collection of entities.

- An entity is like an object. It has name/value pairs.

- An entity is kind of like a row in a relational database table, with the caveat that entities don't need to have the exact same attributes.

Accessing Azure Table Storage From Azure

- Any application that can speak HTTP can communicate with Azure tables, because Azure tables are REST-based. This means a Java or PHP application can directly perform CRUD (create, read, update, delete) operations on an Azure Table.

Accessing Azure Table Storage From Azure

- Azure cloud applications can be hosted in the same data center as the Azure Table Storage. The compelling point here is that the latency from the cloud application is very low and can read and update the data at very high speeds.

Features: Azure Table Storage

- One of the key features of Azure tables is the low cost. You can use the Pricing Calculator to determine your predicted costs at http://www.windowsazure.com/en-us/pricing/calculator/

- It is important to remember that Azure tables are non-relational and therefore joins are not possible.

- Azure tables can automatically span multiple storage nodes, maintaining performance. This is based on the partition key that you define. It is very important to consider the partition key carefully as it determines performance.

- Transactions can occur only within Partition Keys. This is another example of why you must carefully consider Partition Keys.

- The data is replicated 3 times, including alternate data centers.

Relationships among accounts, tables, and entities

- Note that an account can have multiple tables and that each table can have one or more entities.

- Note the URL that is used to access your tables. This is the URL that any client that is http-capable can use.
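As a rough illustration of that addressing scheme, the sketch below builds the URLs an HTTP client would use for a table and for a single entity. The account name `myaccount`, the table name `people` and the keys are hypothetical; the host pattern is the public Table service endpoint. The sketch only builds strings. It does not sign or send requests.

```python
from urllib.parse import quote


def table_url(account: str, table: str) -> str:
    """URL of a table (the target for queries and inserts)."""
    return f"https://{account}.table.core.windows.net/{table}"


def entity_url(account: str, table: str, partition_key: str, row_key: str) -> str:
    """URL addressing exactly one entity by PartitionKey and RowKey."""
    # Single quotes inside a key are doubled, then the value is percent-encoded.
    pk = quote(partition_key.replace("'", "''"), safe="")
    rk = quote(row_key.replace("'", "''"), safe="")
    return f"{table_url(account, table)}(PartitionKey='{pk}',RowKey='{rk}')"


print(entity_url("myaccount", "people", "Smith", "Jeff"))
```

Because the entity URL needs both keys, a client that knows them can address one entity directly instead of searching for it.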

Efficient Inserts and Updates

- Special semantics are available to make inserts and updates efficient. The bottom line is that you can do either an update or insert in just one operation.
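To make the "update or insert in one operation" idea concrete, here is a small in-memory sketch in Python. It models the two flavors that the storage client post later in this roundup names InsertOrReplace and InsertOrMerge. The dictionary-backed table and the function names are assumptions of this sketch, not the service API.

```python
from typing import Dict, Tuple

Key = Tuple[str, str]      # (PartitionKey, RowKey)
Table = Dict[Key, dict]    # toy stand-in for a table


def insert_or_replace(table: Table, pk: str, rk: str, props: dict) -> None:
    """Create the entity, or overwrite all of its properties if it exists."""
    table[(pk, rk)] = dict(props)


def insert_or_merge(table: Table, pk: str, rk: str, props: dict) -> None:
    """Create the entity, or update only the supplied properties if it exists."""
    table.setdefault((pk, rk), {}).update(props)


people: Table = {}
insert_or_merge(people, "Smith", "Jeff", {"Email": "jeff@contoso.com", "City": "Seattle"})
insert_or_merge(people, "Smith", "Jeff", {"City": "Redmond"})      # Email is kept
merged = dict(people[("Smith", "Jeff")])
insert_or_replace(people, "Smith", "Jeff", {"City": "Bellevue"})   # Email is dropped
replaced = dict(people[("Smith", "Jeff")])
print(merged)
print(replaced)
```

In both cases the caller does not need to read the entity first to find out whether it exists, which is where the saving comes from.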

Designing For Scale

- The Partition Key and RowKey are required properties for each entity. They play a key role in how the data is partitioned and scaled. They also determine performance for various queries. As mentioned previously, they also play a role in transactions (transactions cannot span Partition Keys).

- How to issue efficient queries will be addressed later in this post.

Query Design & Performance

- Performance is always an important consideration. The spectrum of speed varies considerably, depending on the type of query you issue. Specific examples are provided later in this post.

Understanding Partition Keys

- This slide illustrates how your entities get distributed across partition nodes. Note that the partition key determines how data is spread across storage nodes.

How Data is Partitioned

- The key point here is that every entity is uniquely identified by the combination of partition key and row key. You can think of the partition key and row key together as being similar to a primary index in a relational table.

How data is partitioned

- Azure will automatically manage both the partitioning and the replication of your entities. I am trying to emphasize how important it is to consider the partition key and row key.

Coding Considerations

- Note that Query 1 is fast because it performs an exact match on partition key and row key. It only returns one entity.

- Query 2 is slower than Query 1 because it does a range-based query.

- Query 3 is slower than Query 2 because it doesn't leverage the row key.

Azure Table Query Concepts

- Queries 4 and 5 are very slow because they don't use the partition key. This is equivalent to a full table scan with SQL Server. You want to avoid this at all costs. You may need to reconsider your partition keys and row keys if you find yourself issuing these types of queries.

- You may even want to keep duplicate copies of your data in other tables that are optimized for certain types of queries.
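The slides with Queries 1 to 5 are not reproduced here, so the following is only a toy model of why the speed differs. It is a Python sketch, assuming a table is a set of partitions whose rows are kept sorted by row key. `ToyTable` and its methods are hypothetical names and say nothing about how the service is implemented.

```python
from bisect import bisect_left
from typing import Callable, Dict, List, Optional, Tuple


class ToyTable:
    """In-memory stand-in: partitions keyed by PartitionKey, rows sorted by RowKey."""

    def __init__(self) -> None:
        self.partitions: Dict[str, List[Tuple[str, dict]]] = {}

    def insert(self, pk: str, rk: str, props: dict) -> None:
        rows = self.partitions.setdefault(pk, [])
        rows.insert(bisect_left(rows, (rk,)), (rk, props))

    def point_query(self, pk: str, rk: str) -> Optional[dict]:
        """Exact PartitionKey and RowKey: one partition, one row (like Query 1)."""
        rows = self.partitions.get(pk, [])
        i = bisect_left(rows, (rk,))
        return rows[i][1] if i < len(rows) and rows[i][0] == rk else None

    def row_range(self, pk: str, rk_from: str, rk_to: str) -> List[dict]:
        """One partition, RowKey in [rk_from, rk_to) (like Query 2)."""
        rows = self.partitions.get(pk, [])
        lo, hi = bisect_left(rows, (rk_from,)), bisect_left(rows, (rk_to,))
        return [props for _, props in rows[lo:hi]]

    def partition_scan(self, pk: str, pred: Callable[[dict], bool]) -> List[dict]:
        """One partition, no RowKey: every row in it is examined (like Query 3)."""
        return [p for _, p in self.partitions.get(pk, []) if pred(p)]

    def table_scan(self, pred: Callable[[dict], bool]) -> List[dict]:
        """No PartitionKey: every row of every partition is examined (Queries 4 and 5)."""
        return [p for rows in self.partitions.values() for _, p in rows if pred(p)]


t = ToyTable()
t.insert("contoso.com", "walter", {"City": "Seattle"})
t.insert("contoso.com", "jeff", {"City": "Redmond"})
t.insert("fabrikam.com", "ben", {"City": "Seattle"})

print(t.point_query("contoso.com", "jeff"))
print(t.row_range("contoso.com", "a", "k"))
print(len(t.table_scan(lambda p: p["City"] == "Seattle")))
```

The amount of data touched grows from one row, to a slice of one partition, to a whole partition, to the whole table. That is the ordering the bullets above describe.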

Understanding TableServiceEntity/TableServiceContext

- The table above stores email addresses. The partition key is the domain part of the email address and the mailname is the row key.

- TableServiceEntity and TableServiceContext are used when programming with C# or Visual Basic. By deriving from TableServiceEntity you can define your own entities that get stored in tables. TableServiceContext is used when you wish to perform CRUD operations on tables and is not illustrated here.
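The key choice in the email table can be sketched in a few lines of Python. The helper name `email_to_keys` is hypothetical, and lower-casing the parts is a choice of this sketch so that addresses differing only in case map to the same entity.

```python
from typing import Tuple


def email_to_keys(address: str) -> Tuple[str, str]:
    """Split 'mailname@domain' into (PartitionKey, RowKey) = (domain, mailname)."""
    mailname, sep, domain = address.rpartition("@")
    if not sep or not mailname or not domain:
        raise ValueError(f"not an email address: {address!r}")
    # Assumption of this sketch: normalise case before using the parts as keys.
    return domain.lower(), mailname.lower()


print(email_to_keys("Walter@contoso.com"))
```

With this layout, all addresses for one domain share a partition, and a lookup of a full address has both keys available.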

Additional Resources

- The Windows Azure Training Kit is the best way to get up and running.

- One of the labs is called Exploring Windows Azure Storage. It provides excellent examples on using storage.

- It can be found here (once you install the training kit) C:\WATK\Labs\ExploringStorage\HOL.htm

Denny Lee (@dennylee) described An easy way to test out Hive Dynamic Partition Insert on HDInsight Azure in an 11/7/2012 post:

Once you get your HadoopOnAzure.com cluster up and running, an easy way to test out Hive Dynamic Partition Insert (the ability to load data into multiple partitions without the need to load each partition individually) on HDInsight Azure is to use the HiveSampleTable already included and the scripts below. You can execute the scripts from the Hive Interactive Console or from the Hive CLI.

1) For starters, create a new partitioned table

    CREATE TABLE hivesampletable_p (
      clientid STRING,
      querytime STRING,
      market STRING,
      devicemake STRING,
      devicemodel STRING,
      state STRING,
      querydwelltime STRING,
      sessionid BIGINT,
      sessionpagevieworder BIGINT
    )
    PARTITIONED BY (deviceplatform STRING, country STRING)
    ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
    LINES TERMINATED BY '\n'
    STORED AS TEXTFILE;

2) Then to insert data into your new partitioned table, run the script below

    set hive.exec.dynamic.partition=true;
    set hive.exec.dynamic.partition.mode=nonstrict;

    FROM hivesampletable h
    INSERT OVERWRITE TABLE hivesampletable_p PARTITION (deviceplatform = 'iPhone OS', country)
    SELECT h.clientid, h.querytime, h.market, h.devicemake, h.devicemodel, h.state,
           h.querydwelltime, h.sessionid, h.sessionpagevieworder, h.country
    WHERE deviceplatform = 'iPhone OS';

Some quick call outs:

- The first two set statements indicate to Hive that you are running a dynamic partition insert

- The HiveQL statement populates the hivesampletable_p (that you just created) from the HiveSampleTable.

- Notice that the PARTITION clause names two columns, noting that we are partitioning by deviceplatform and country

- We have specified deviceplatform = 'iPhone OS', indicating that all of this data should only go into the iPhone OS set of partitions. The WHERE clause ensures that this is being filtered correctly.

- Also specified is country (with no value) meaning that all country values will have their own partitions as well.

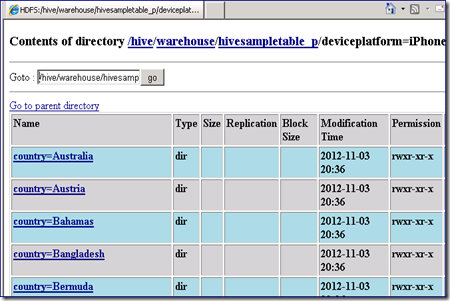

The easiest way to visualize the partitions being created is to Remote Desktop into the name node and open up the Hadoop Name Node Status (the browser link is available on the desktop when you RDP into the name node). Click Browse the File System > hive > warehouse > hivesampletable_p.

You will notice in the hivesampletable_p, there is a folder called deviceplatform=iPhone%20OS representing the deviceplatform partitioning scheme. Clicking on the iPhone OS folder you will see multiple folders – one for each country – as noted with the country partitioning scheme.
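The folder layout described above can be mimicked with a short Python sketch: one static partition value for every row, plus one sub-folder per distinct value of the dynamic column. The function names and sample rows are made up for illustration, and `urllib`'s percent-encoding only stands in for Hive's own escaping of partition values, which is not identical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List
from urllib.parse import quote


def partition_dir(table: str, **partition_cols: str) -> str:
    """Build a warehouse-style path such as table/col1=val1/col2=val2."""
    parts = [f"{col}={quote(str(val))}" for col, val in partition_cols.items()]
    return "/".join([table, *parts])


def dynamic_partition_insert(rows: Iterable[dict], table: str,
                             static: Dict[str, str], dynamic_col: str) -> Dict[str, List[dict]]:
    """Group rows by the dynamic column; the static partition value is the same for all rows."""
    out: Dict[str, List[dict]] = defaultdict(list)
    for row in rows:
        row = dict(row)  # copy so the caller's rows are not modified
        path = partition_dir(table, **static, **{dynamic_col: row.pop(dynamic_col)})
        out[path].append(row)
    return dict(out)


rows = [
    {"clientid": "1", "country": "US"},
    {"clientid": "2", "country": "GB"},
    {"clientid": "3", "country": "US"},
]
result = dynamic_partition_insert(rows, "hivesampletable_p",
                                  {"deviceplatform": "iPhone OS"}, "country")
for path in sorted(result):
    print(path, len(result[path]))
```

Note that the dynamic column is removed from each stored row, just as `country` is carried by the folder name rather than by the data files of the partitioned table.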

Joe Giardino, Serdar Ozler, Veena Udayabhanu and Justin Yu of the Windows Azure Storage Team posted Windows Azure Storage Client Library 2.0 Tables Deep Dive on 11/6/2012:

This blog post serves as an overview to the recently released Windows Azure Storage Client for .Net and the Windows Runtime. In addition to the legacy implementation shipped in versions 1.x that is based on DataServiceContext, we have also provided a more streamlined implementation that is optimized for common NoSQL scenarios.

Note, if you are migrating an existing application from a previous release of the SDK please see the overview and migration guide posts.

New Table implementation

The new table implementation is provided in the Microsoft.WindowsAzure.Storage.Table namespace. There are three key areas we emphasized in the design of the new table implementation: usability, extensibility, and performance. The basic scenarios are simple and “just work”; in addition, we have also provided two distinct extension points to allow developers to customize the client behaviors to their specific scenario. We have also maintained a degree of consistency with the other storage clients (Blob and Queue) so that moving between them feels seamless.

With the addition of this new implementation users have effectively three different patterns to choose from when programming against table storage. A high level summary of each and a brief description of the benefits they offer are provided below.

- Table Service Layer via TableEntity – This approach offers significant performance and latency improvements over the WCF Data Services, but still offers the ability to define POCO objects in a similar fashion without having to write serialization / deserialization logic. Additionally, the optional EntityResolver provides the ability to easily work with heterogeneous entity types returned via queries without any additional client objects or overhead. Additionally, users can optionally customize the serialization behavior of their entities by overriding the ReadEntity or WriteEntity methods. The Table Service layer does not currently expose an IQueryable, meaning that queries need to be manually constructed (helper functions are exposed via static methods on the TableQuery class, see below for more). For an example see the NoSQL scenario below.

- Table Service Layer via DynamicTableEntity – This approach is provided to allow users direct access to a dictionary of key-value pairs. This is particularly useful for more advanced scenarios such as defining entities whose property names are dictated at runtime, entities with a large number of properties, server side projections, and bulk updates of heterogeneous data. Since DynamicTableEntity implements the ITableEntity interface all results, including projections, can be persisted back to the server. For an example see the Heterogeneous Update scenario below.

- WCF Data Services – Similar to the legacy 1.7x implementation, this approach exposes an IQueryable allowing users to construct complex queries via LINQ. This approach is recommended for users with existing code assets as well as non-latency sensitive queries as it utilizes greater system resources. The WCF Data Services based implementation has been migrated to the Microsoft.WindowsAzure.Storage.Table.DataServices namespace. For additional details see the DataServices section below.

Note, a similar table implementation using WCF Data Services is not provided in the recently released Windows 8 library due to limitations when projecting to various supported languages.

Dependencies

The new table implementation utilizes the ODataLib components to provide the over-the-wire protocol implementation. These libraries are available via NuGet (see the resources section below). Additionally, to maintain compatibility with previous versions of the SDK, the client library has a dependency on System.Data.Services.Client.dll, which is part of the .Net platform. Please note that the current WCF Data Services standalone installer contains version 5.0.0 assemblies; referencing these assemblies will result in a runtime failure.

You can resolve these dependencies as shown below

NuGet

To install Windows Azure Storage, run the following command in the Package Manager Console.

PM> Install-Package WindowsAzure.Storage

This will automatically resolve any needed dependencies and add them to your project.

Windows Azure SDK for .NET - October 2012 release

- Install the SDK (http://www.windowsazure.com/en-us/develop/net/ click on the “install the SDK” button)

- Create a project and add a reference to %Program Files%\Microsoft SDKs\Windows Azure\.NET SDK\2012-10\ref\Microsoft.WindowsAzure.Storage.dll

- In Visual Studio go to Tools > Library Package Manager > Package Manager Console and execute the following command.

PM> Install-Package Microsoft.Data.OData -Version 5.0.2

Performance

The new table implementation has shown significant performance improvements over the updated DataServices implementation and the previous versions of the SDK. Depending on the operation latencies have improved by between 25% and 75% while system resource utilization has also decreased significantly. Queries alone are over twice as fast and consume far less memory. We will have more details in a future Performance blog.

Object Model

A diagram of the table object model is provided below. The core flow of the client is that a user defines an action (TableOperation, TableBatchOperation, or TableQuery) over entities in the Table service and executes these actions via the CloudTableClient. For usability, these classes provide static factory methods to assist in the definition of actions.

For example, the code below inserts a single entity:

    CloudTable table = tableClient.GetTableReference([TableName]);
    table.Execute(TableOperation.Insert(entity));

Execution

CloudTableClient

Similar to the other Azure Storage clients, the table client provides a logical service client, CloudTableClient, which is responsible for service wide operations and enables execution of other operations. The CloudTableClient class can update the Storage Analytics settings for the Table service, list all tables in the account, and can create references to client side CloudTable objects, among other operations.

CloudTable

A CloudTable object is used to perform operations directly on a given table (Create, Delete, SetPermissions, etc.), and is also used to execute entity operations against the given table.

TableRequestOptions

The TableRequestOptions class defines additional parameters which govern how a given operation is executed, specifically the timeouts and RetryPolicy that are applied to each request. The CloudTableClient provides default timeouts and RetryPolicy settings; TableRequestOptions can override them for a particular operation.

TableResult

The TableResult class encapsulates the result of a single TableOperation. This object includes the HTTP status code, the ETag and a weakly typed reference to the associated entity. For TableBatchOperations, the CloudTable.ExecuteBatch method will return a collection of TableResults whose order corresponds with the order of the TableBatchOperation. For example, the first element returned in the resulting collection will correspond to the first operation defined in the TableBatchOperation.

Actions

TableOperation

The TableOperation class encapsulates a single operation to be performed against a table. Static factory methods are provided to create a TableOperation that will perform an Insert, Delete, Merge, Replace, Retrieve, InsertOrReplace, and InsertOrMerge operation on the given entity. TableOperations can be reused so long as the associated entity is updated. As an example, a client wishing to use table storage as a heartbeat mechanism could define a merge operation on an entity and execute it to update the entity state to the server periodically.

Sample – Inserting an Entity into a Table

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    // Create the table client.
    CloudTableClient tableClient = storageAccount.CreateCloudTableClient();
    CloudTable peopleTable = tableClient.GetTableReference("people");
    peopleTable.CreateIfNotExists();

    // Create a new customer entity.
    CustomerEntity customer1 = new CustomerEntity("Harp", "Walter");
    customer1.Email = "Walter@contoso.com";
    customer1.PhoneNumber = "425-555-0101";

    // Create an operation to add the new customer to the people table.
    TableOperation insertCustomer1 = TableOperation.Insert(customer1);

    // Submit the operation to the table service.
    peopleTable.Execute(insertCustomer1);

TableBatchOperation

The TableBatchOperation class represents multiple TableOperation objects which are executed as a single atomic action within the table service. There are a few restrictions on batch operations that should be noted:

- You can perform batch updates, deletes, inserts, merge and replace operations.

- A batch operation can have a retrieve operation, if it is the only operation in the batch.

- A single batch operation can include up to 100 table operations.

- All entities in a single batch operation must have the same partition key.

- A batch operation is limited to a 4MB data payload.

The CloudTable.ExecuteBatch which takes as input a TableBatchOperation will return an IList of TableResults which will correspond in order to the entries in the batch itself. For example, the result of a merge operation that is the first in the batch will be the first entry in the returned IList of TableResults. In the case of an error the server may return a numerical id as part of the error message that corresponds to the sequence number of the failed operation in the batch unless the failure is associated with no specific command such as ServerBusy, in which case -1 is returned. TableBatchOperations, or Entity Group Transactions, are executed atomically meaning that either all operations will succeed or if there is an error caused by one of the individual operations the entire batch will fail.
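The restrictions listed above lend themselves to a client-side pre-flight check. The Python sketch below is one way to express them; it is not part of the client library. The `Op` type, the caller-supplied `payload_bytes` estimate and the reading of 4MB as 4 × 1024 × 1024 bytes are all assumptions of this sketch.

```python
from dataclasses import dataclass
from typing import List

MAX_OPERATIONS = 100
MAX_PAYLOAD_BYTES = 4 * 1024 * 1024  # assumption: "4MB" read as 4 MiB


@dataclass
class Op:
    kind: str               # "insert", "merge", "replace", "delete", "retrieve", ...
    partition_key: str
    row_key: str
    payload_bytes: int = 0  # caller-supplied size estimate


def batch_errors(ops: List[Op]) -> List[str]:
    """Return the list of rule violations; an empty list means the batch looks valid."""
    errors = []
    if len(ops) > MAX_OPERATIONS:
        errors.append(f"{len(ops)} operations exceeds the limit of {MAX_OPERATIONS}")
    if len({op.partition_key for op in ops}) > 1:
        errors.append("all entities must share one partition key")
    if len(ops) > 1 and any(op.kind == "retrieve" for op in ops):
        errors.append("a retrieve must be the only operation in its batch")
    if sum(op.payload_bytes for op in ops) > MAX_PAYLOAD_BYTES:
        errors.append("payload exceeds the 4MB limit")
    return errors


ok = [Op("insert", "Smith", "Jeff"), Op("insert", "Smith", "Ben")]
bad = [Op("insert", "Smith", "Jeff"), Op("retrieve", "Harp", "Walter")]
print(batch_errors(ok))
print(batch_errors(bad))
```

Checking before submitting is only a convenience. The service remains the authority, and because the batch is atomic a rejected batch leaves the table unchanged.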

Sample – Insert two entities in a single atomic Batch Operation

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    // Create the table client.
    CloudTableClient tableClient = storageAccount.CreateCloudTableClient();
    CloudTable peopleTable = tableClient.GetTableReference("people");
    peopleTable.CreateIfNotExists();

    // Define a batch operation.
    TableBatchOperation batchOperation = new TableBatchOperation();

    // Create a customer entity and add to the table
    CustomerEntity customer = new CustomerEntity("Smith", "Jeff");
    customer.Email = "Jeff@contoso.com";
    customer.PhoneNumber = "425-555-0104";
    batchOperation.Insert(customer);

    // Create another customer entity and add to the table
    CustomerEntity customer2 = new CustomerEntity("Smith", "Ben");
    customer2.Email = "Ben@contoso.com";
    customer2.PhoneNumber = "425-555-0102";
    batchOperation.Insert(customer2);

    // Submit the operation to the table service.
    peopleTable.ExecuteBatch(batchOperation);

TableQuery

The TableQuery class is a lightweight query mechanism used to define queries to be executed against the table service. See “Querying” below.

Entities

ITableEntity interface

The ITableEntity interface is used to define an object that can be serialized and deserialized with the table client. It contains the PartitionKey, RowKey, Timestamp, and ETag properties, as well as methods to read and write the entity. This interface is implemented by the TableEntity and DynamicTableEntity entity types that are included in the library; a client may implement this interface directly to persist different types of objects or objects from 3rd-party libraries. By overriding the ITableEntity.ReadEntity or ITableEntity.WriteEntity methods a client may customize the serialization logic for a given entity type.

TableEntity

The TableEntity class is an implementation of the ITableEntity interface and contains the RowKey, PartitionKey, and Timestamp properties. The default serialization logic TableEntity uses is based on reflection, where all public properties of a supported type that define both get and set are serialized. This will be discussed in greater detail in the extension points section below. This class is not sealed and may be extended to add additional properties to an entity type.

Sample – Define a POCO that extends TableEntity

    // This class defines one additional property of integer type, since it derives from
    // TableEntity it will be automatically serialized and deserialized.
    public class SampleEntity : TableEntity
    {
        public int SampleProperty { get; set; }
    }

DynamicTableEntity

The DynamicTableEntity class allows clients to update heterogeneous entity types without the need to define base classes or special types. The DynamicTableEntity class defines the required properties for RowKey, PartitionKey, Timestamp, and ETag; all other properties are stored in an IDictionary. Aside from the convenience of not having to define concrete POCO types, this can also provide increased performance by not having to perform serialization or deserialization tasks.

Sample – Retrieve a single property on a collection of heterogeneous entities

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    // Define the query to retrieve the entities, notice in this case we
    // only need to retrieve the Count property.
    TableQuery query = new TableQuery().Select(new string[] { "Count" });

    // Note the TableQuery is actually executed when we iterate over the
    // results. Also, this sample uses the DynamicTableEntity to avoid
    // having to worry about various types, as well as avoiding any
    // serialization processing.
    foreach (DynamicTableEntity entity in myTable.ExecuteQuery(query))
    {
        // Users should always assume property is not there in case another client removed it.
        EntityProperty countProp;
        if (!entity.Properties.TryGetValue("Count", out countProp))
        {
            throw new ArgumentNullException("Invalid entity, Count property not found!");
        }

        // Display Count property, however you could modify it here and persist it back to the service.
        Console.WriteLine(countProp.Int32Value);
    }

Note: an ExecuteQuery equivalent is not provided in the Windows Runtime library in keeping with best practice for the platform. Instead use the ExecuteQuerySegmentedAsync method to execute the query in a segmented fashion.

EntityProperty

The EntityProperty class encapsulates a single property of an entity for the purposes of serialization and deserialization. The only time the client has to work directly with EntityProperties is when using DynamicTableEntity or implementing the TableEntity.ReadEntity and TableEntity.WriteEntity methods.

The samples below show two approaches that can be used to update a player's score property. The first approach uses DynamicTableEntity to avoid having to declare a client side object and updates the property directly, whereas the second will deserialize the entity into a POCO and update that object directly.

Sample – Update of entity property using EntityProperty

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    // Retrieve entity
    TableResult res = gamerTable.Execute(TableOperation.Retrieve("Smith", "Jeff"));
    DynamicTableEntity player = (DynamicTableEntity)res.Result;

    // Retrieve Score property
    EntityProperty scoreProp;

    // Users should always assume property is not there in case another client removed it.
    if (!player.Properties.TryGetValue("Score", out scoreProp))
    {
        throw new ArgumentNullException("Invalid entity, Score property not found!");
    }

    scoreProp.Int32Value += 1;

    // Store the updated score
    gamerTable.Execute(TableOperation.Merge(player));

Sample – Update of entity property using POCO

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    public class GamerEntity : TableEntity
    {
        public int Score { get; set; }
    }

    // Retrieve entity
    TableResult res = gamerTable.Execute(TableOperation.Retrieve<GamerEntity>("Smith", "Jeff"));
    GamerEntity player = (GamerEntity)res.Result;

    // Update Score
    player.Score += 1;

    // Store the updated score
    gamerTable.Execute(TableOperation.Merge(player));

EntityResolver

The EntityResolver delegate allows client-side projection and processing for each entity during serialization and deserialization. This is designed to provide custom client side projections, query-specific filtering, and so forth. This enables key scenarios such as deserializing a collection of heterogeneous entities from a single query.

Sample – Use EntityResolver to perform client side projection

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    // Define the query to retrieve the entities, notice in this case we only need
    // to retrieve the Email property.
    TableQuery<TableEntity> query = new TableQuery<TableEntity>().Select(new string[] { "Email" });

    // Define a Entity resolver to mutate the entity payload upon retrieval.
    // In this case we will simply return a String representing the customers Email address.
    EntityResolver<string> resolver = (pk, rk, ts, props, etag) =>
        props.ContainsKey("Email") ? props["Email"].StringValue : null;

    // Display the results of the query, note that the query now returns
    // strings instead of entity types since this is the type of EntityResolver we created.
    foreach (string projectedString in gamerTable.ExecuteQuery(query, resolver, null /* RequestOptions */, null /* OperationContext */))
    {
        Console.WriteLine(projectedString);
    }

Querying

There are two query constructs in the table client: a retrieve TableOperation which addresses a single unique entity and a TableQuery which is a standard query mechanism used against multiple entities in a table. Both querying constructs need to be used in conjunction with either a class type that implements the ITableEntity interface or with an EntityResolver which will provide custom deserialization logic.

Retrieve

A retrieve operation is a query which addresses a single entity in the table by specifying both its PartitionKey and RowKey. This is exposed via TableOperation.Retrieve and TableBatchOperation.Retrieve and executed like a typical operation via the CloudTable.

Sample – Retrieve a single entity

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    // Create the table client.
    CloudTableClient tableClient = storageAccount.CreateCloudTableClient();
    CloudTable peopleTable = tableClient.GetTableReference("people");

    // Retrieve the entity with partition key of "Smith" and row key of "Jeff"
    TableOperation retrieveJeffSmith = TableOperation.Retrieve<CustomerEntity>("Smith", "Jeff");

    // Retrieve entity
    CustomerEntity specificEntity = (CustomerEntity)peopleTable.Execute(retrieveJeffSmith).Result;

TableQuery

TableQuery is a lightweight object that represents a query for a given set of entities and encapsulates all query operators currently supported by the Windows Azure Table service. Note, for this release we have not provided an IQueryable implementation, so developers who are migrating applications to the 2.0 release and wish to leverage the new table implementation will need to reconstruct their queries using the provided syntax. The code below produces a query to take the top 5 results from the customers table which have a RowKey greater than or equal to 5.

Sample – Query top 5 entities with RowKey greater than or equal to 5

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    TableQuery<TableEntity> query = new TableQuery<TableEntity>()
        .Where(TableQuery.GenerateFilterCondition("RowKey", QueryComparisons.GreaterThanOrEqual, "5"))
        .Take(5);

In order to provide support for JavaScript in the Windows Runtime, the TableQuery can be used via the concrete type TableQuery, or in its generic form TableQuery<EntityType> where the results will be deserialized to the given type. When specifying an entity type to deserialize entities to, the EntityType must implement the ITableEntity interface and provide a parameterless constructor.

The TableQuery object provides methods for take, select, and where. There are static methods provided such as GenerateFilterCondition, GenerateFilterConditionFor*, and CombineFilters which construct other filter strings. Some examples of constructing queries over various types are shown below

    // 1. Filter on String
    TableQuery.GenerateFilterCondition("Prop", QueryComparisons.GreaterThan, "foo");

    // 2. Filter on GUID
    TableQuery.GenerateFilterConditionForGuid("Prop", QueryComparisons.Equal, new Guid());

    // 3. Filter on Long
    TableQuery.GenerateFilterConditionForLong("Prop", QueryComparisons.GreaterThan, 50L);

    // 4. Filter on Double
    TableQuery.GenerateFilterConditionForDouble("Prop", QueryComparisons.GreaterThan, 50.50);

    // 5. Filter on Integer
    TableQuery.GenerateFilterConditionForInt("Prop", QueryComparisons.GreaterThan, 50);

    // 6. Filter on Date
    TableQuery.GenerateFilterConditionForDate("Prop", QueryComparisons.LessThan, DateTime.Now);

    // 7. Filter on Boolean
    TableQuery.GenerateFilterConditionForBool("Prop", QueryComparisons.Equal, true);

    // 8. Filter on Binary
    TableQuery.GenerateFilterConditionForBinary("Prop", QueryComparisons.Equal, new byte[] { 0x01, 0x02, 0x03 });

Sample – Query all entities with a PartitionKey = "samplePK" and RowKey greater than or equal to "5" and less than "10"

    string pkFilter = TableQuery.GenerateFilterCondition("PartitionKey", QueryComparisons.Equal, "samplePK");
    string rkLowerFilter = TableQuery.GenerateFilterCondition("RowKey", QueryComparisons.GreaterThanOrEqual, "5");
    string rkUpperFilter = TableQuery.GenerateFilterCondition("RowKey", QueryComparisons.LessThan, "10");

    // Note CombineFilters has the effect of "([Expression1]) Operator ([Expression2])",
    // as such passing in a complex expression will result in a logical grouping.
    string combinedRowKeyFilter = TableQuery.CombineFilters(rkLowerFilter, TableOperators.And, rkUpperFilter);
    string combinedFilter = TableQuery.CombineFilters(pkFilter, TableOperators.And, combinedRowKeyFilter);

    // OR (alternative to the two CombineFilters calls above)
    // string combinedFilter = string.Format("({0}) {1} ({2}) {3} ({4})", pkFilter, TableOperators.And, rkLowerFilter, TableOperators.And, rkUpperFilter);

    TableQuery<SampleEntity> query = new TableQuery<SampleEntity>().Where(combinedFilter);

Note: There is no logical expression tree provided in the current release, and as a result repeated calls to the fluent methods on TableQuery overwrite the relevant aspect of the query.

Additionally, note that the TableOperators and QueryComparisons classes define string constants for all supported operators and comparisons:

TableOperators

- And

- Or

- Not

QueryComparisons

- Equal

- NotEqual

- GreaterThan

- GreaterThanOrEqual

- LessThan

- LessThanOrEqual
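To make the composition rule concrete, here is a minimal Python sketch that mimics how such helpers might assemble a filter string. It is not the storage client: `generate_filter_condition` and `combine_filters` are hypothetical stand-ins for `TableQuery.GenerateFilterCondition` and `TableQuery.CombineFilters`, and the `eq`/`ge`/`lt`/`and` spellings are assumed OData-style values for the constants listed above. The second half illustrates a general point worth keeping in mind with string keys: comparisons are lexical, so a range from "5" to "10" matches nothing unless the keys are zero-padded.

```python
# Hypothetical stand-ins for the C# helpers; not part of any Azure SDK.
EQUAL, GREATER_OR_EQUAL, LESS_THAN = "eq", "ge", "lt"
AND = "and"


def generate_filter_condition(prop, op, value):
    """Build a single string comparison, e.g. PartitionKey eq 'samplePK'."""
    escaped = value.replace("'", "''")  # OData string literals double a quote
    return f"{prop} {op} '{escaped}'"


def combine_filters(left, operator, right):
    """Wrap both sides in parentheses so each keeps its logical grouping."""
    return f"({left}) {operator} ({right})"


pk = generate_filter_condition("PartitionKey", EQUAL, "samplePK")
rk_low = generate_filter_condition("RowKey", GREATER_OR_EQUAL, "05")
rk_high = generate_filter_condition("RowKey", LESS_THAN, "10")
combined = combine_filters(pk, AND, combine_filters(rk_low, AND, rk_high))
print(combined)

# Row keys are strings, so range checks are lexical rather than numeric.
row_keys = ["3", "5", "7", "10", "12"]
unpadded = [k for k in row_keys if "5" <= k < "10"]
padded = [k.zfill(2) for k in row_keys if "05" <= k.zfill(2) < "10"]
print(unpadded)
print(padded)
```

Zero-padding is only one possible key design; the point of the sketch is that the filter text and the key format need to agree on string ordering.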

Scenarios

NoSQL

A common pattern in a NoSQL datastore is to store related entities with different schemas in the same table. In the sample below, we will persist a group of heterogeneous shapes that make up a given drawing. In our case, the PartitionKey for our entities will be a drawing name, which allows us to retrieve and alter a set of shapes together in an atomic manner. The challenge becomes how to work with these heterogeneous entities on the client side in an efficient and usable manner.

The table client provides an EntityResolver delegate, which allows client-side logic to execute during deserialization. In the scenario detailed above, let’s use a base entity class named ShapeEntity which extends TableEntity. This base shape type will define all properties common to a given shape, such as its color fields and its X and Y coordinates in the drawing.

    public class ShapeEntity : TableEntity
    {
        public virtual string ShapeType { get; set; }
        public double PosX { get; set; }
        public double PosY { get; set; }
        public int ColorA { get; set; }
        public int ColorR { get; set; }
        public int ColorG { get; set; }
        public int ColorB { get; set; }
    }

Now we can define some shape types that derive from the base ShapeEntity class. In the sample below we define a rectangle, which has Width and Height properties. Note that this child class also overrides ShapeType for serialization purposes. For brevity’s sake the Line and Ellipse entities are omitted here; however, you can imagine representing other types of shapes, such as triangles and trapezoids, in different child entity types.

    public class RectangleEntity : ShapeEntity
    {
        public double Width { get; set; }
        public double Height { get; set; }

        public override string ShapeType
        {
            get { return "Rectangle"; }
            set { /* no op */ }
        }
    }

Now we can define a query to load all of the shapes associated with our drawing and an EntityResolver that will resolve each entity to the correct child class. Note that in this example, aside from setting the core properties PartitionKey, RowKey, Timestamp, and ETag, we did not have to write any custom deserialization logic and instead rely on the built-in deserialization logic provided by TableEntity.ReadEntity.

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    TableQuery<ShapeEntity> drawingQuery = new TableQuery<ShapeEntity>().Where(
        TableQuery.GenerateFilterCondition("PartitionKey", QueryComparisons.Equal, "DrawingName"));

    EntityResolver<ShapeEntity> shapeResolver = (pk, rk, ts, props, etag) =>
    {
        ShapeEntity resolvedEntity = null;
        string shapeType = props["ShapeType"].StringValue;

        if (shapeType == "Rectangle") { resolvedEntity = new RectangleEntity(); }
        else if (shapeType == "Ellipse") { resolvedEntity = new EllipseEntity(); }
        else if (shapeType == "Line") { resolvedEntity = new LineEntity(); }
        // Potentially throw here if an unknown shape is detected

        resolvedEntity.PartitionKey = pk;
        resolvedEntity.RowKey = rk;
        resolvedEntity.Timestamp = ts;
        resolvedEntity.ETag = etag;
        resolvedEntity.ReadEntity(props, null);

        return resolvedEntity;
    };

Now we can execute this query in a segmented, asynchronous manner in order to keep our UI fast and fluid. The code below is written using the Async methods exposed by the client library for Windows Runtime.

    List<ShapeEntity> shapeList = new List<ShapeEntity>();
    TableQuerySegment<ShapeEntity> currentSegment = null;
    while (currentSegment == null || currentSegment.ContinuationToken != null)
    {
        currentSegment = await drawingTable.ExecuteQuerySegmentedAsync(
            drawingQuery,
            shapeResolver,
            currentSegment != null ? currentSegment.ContinuationToken : null);

        shapeList.AddRange(currentSegment.Results);
    }

Once we execute this, we can see that the resulting collection of ShapeEntities contains shapes of various entity types.
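The resolver idea itself is independent of the storage library. As a rough, language-neutral illustration, the Python sketch below picks a concrete class from a stored `ShapeType` value and then copies the matching properties onto it. All names here are hypothetical, including the `Ellipse` fields, which the post does not define; the sketch also raises on an unknown type, which the sample above leaves as a comment.

```python
from dataclasses import dataclass


@dataclass
class Shape:
    partition_key: str = ""
    row_key: str = ""
    pos_x: float = 0.0
    pos_y: float = 0.0


@dataclass
class Rectangle(Shape):
    width: float = 0.0
    height: float = 0.0


@dataclass
class Ellipse(Shape):
    # Hypothetical fields; the post omits the Ellipse definition.
    radius_x: float = 0.0
    radius_y: float = 0.0


# Map the stored ShapeType value to the class that should represent it.
SHAPE_TYPES = {"Rectangle": Rectangle, "Ellipse": Ellipse}


def resolve_shape(pk, rk, props):
    """Choose the concrete class from ShapeType, then copy known fields."""
    shape_type = props.get("ShapeType")
    cls = SHAPE_TYPES.get(shape_type)
    if cls is None:
        raise ValueError(f"unknown shape type: {shape_type!r}")
    shape = cls(partition_key=pk, row_key=rk)
    for name, value in props.items():
        if name != "ShapeType" and hasattr(shape, name):
            setattr(shape, name, value)
    return shape


rows = [
    ("drawing1", "a", {"ShapeType": "Rectangle", "pos_x": 1.0, "width": 4.0, "height": 2.0}),
    ("drawing1", "b", {"ShapeType": "Ellipse", "pos_x": 5.0, "radius_x": 3.0}),
]
shapes = [resolve_shape(pk, rk, props) for pk, rk, props in rows]
print([type(s).__name__ for s in shapes])
```

A lookup table keeps the dispatch in one place, so supporting a new shape only means adding a class and one dictionary entry.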

Heterogeneous update

In some cases it may be required to update entities regardless of their type or other properties. Let’s say we have a table named “employees”. This table contains entity types for developers, secretaries, contractors, and so forth. The example below shows how to query all entities in a given partition (in our example the state the employee works in is used as the PartitionKey) and update their salaries regardless of job position. Since we are using merge, the only property that is going to be updated is the Salary property, and all other information regarding the employee will remain unchanged.

    // You will need the following using statements
    using Microsoft.WindowsAzure.Storage;
    using Microsoft.WindowsAzure.Storage.Table;

    TableQuery query = new TableQuery().Where("PartitionKey eq 'Washington'").Select(new string[] { "Salary" });

    // Note: for brevity's sake this sample assumes there are 100 or fewer employees;
    // however, the client should ensure batches are kept to 100 operations or fewer.
    TableBatchOperation mergeBatch = new TableBatchOperation();
    foreach (DynamicTableEntity ent in employeeTable.ExecuteQuery(query))
    {
        EntityProperty salaryProp;

        // Check to see if salary property is present
        if (ent.Properties.TryGetValue("Salary", out salaryProp))
        {
            if (salaryProp.DoubleValue < 50000)
            {
                // Give a 10% raise
                salaryProp.DoubleValue *= 1.1;
            }
            else if (salaryProp.DoubleValue < 100000)
            {
                // Give a 5% raise
                salaryProp.DoubleValue *= 1.05;
            }

            mergeBatch.Merge(ent);
        }
        else
        {
            throw new ArgumentNullException("Entity does not contain Salary!");
        }
    }

    // Execute batch to save changes back to the table service
    employeeTable.ExecuteBatch(mergeBatch);

Persisting 3rd party objects

In some cases we may need to persist objects exposed by 3rd party libraries, or those which do not fit the requirements of a TableEntity and cannot be modified to do so. In such cases, the recommended best practice is to encapsulate the 3rd party object in a new client object that implements the ITableEntity interface, and provide the custom serialization logic needed to persist the object to the table service via ITableEntity.ReadEntity and ITableEntity.WriteEntity.
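The wrapping approach described here can be sketched without the storage library. In the Python illustration below, `VendorPoint` is a made-up stand-in for a third-party type we cannot change, and `PointEntity` is a hypothetical wrapper whose `write_entity` and `read_entity` methods play the role that `ITableEntity.WriteEntity` and `ITableEntity.ReadEntity` play in the C# client. The property names `X` and `Y` are assumptions chosen for the example.

```python
class VendorPoint:
    """Stand-in for a third-party type that we cannot modify."""

    def __init__(self, x, y):
        self.x = x
        self.y = y


class PointEntity:
    """Wrapper that owns the keys and the custom (de)serialization."""

    def __init__(self, partition_key, row_key, point=None):
        self.partition_key = partition_key
        self.row_key = row_key
        self.point = point

    def write_entity(self):
        # Flatten the wrapped object into simple property values.
        return {"X": float(self.point.x), "Y": float(self.point.y)}

    def read_entity(self, props):
        # Rebuild the wrapped object from the stored properties.
        self.point = VendorPoint(props["X"], props["Y"])


original = PointEntity("drawing1", "p1", VendorPoint(3, 4))
stored = original.write_entity()

restored = PointEntity("drawing1", "p1")
restored.read_entity(stored)
print(stored, restored.point.x, restored.point.y)
```

Keeping the keys on the wrapper rather than on the wrapped object means the third-party type stays untouched and the table-specific concerns live in one class.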

Continuation Tokens

Continuation tokens can be returned in the segmented execution of a query. One key improvement to the [Blob|Table|Queue]ContinuationTokens in this release is that all properties are now publicly settable and a public default constructor is provided. This, in addition to the IXmlSerializable implementation, allows clients to easily persist a continuation token.
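Why persisting a token matters is easiest to see in a small simulation. The Python sketch below is not the storage client: `query_segment` is a hypothetical paged query over an in-memory list, the token is a plain dictionary holding an index, and JSON stands in for the XML serialization the library provides. It reads one segment, saves the token as text, then restores it and resumes until no token is returned.

```python
import json


def query_segment(rows, token, page_size=2):
    """Hypothetical segmented query: returns one page plus the next token."""
    start = token["next_index"] if token else 0
    page = rows[start:start + page_size]
    end = start + len(page)
    next_token = {"next_index": end} if end < len(rows) else None
    return page, next_token


rows = ["e1", "e2", "e3", "e4", "e5"]

# First session: read one segment, then persist the token as text.
first_page, token = query_segment(rows, None)
saved = json.dumps(token)

# Later session: restore the token and continue until none is returned.
token = json.loads(saved)
remaining = []
while token is not None:
    page, token = query_segment(rows, token)
    remaining.extend(page)

print(first_page, remaining)
```

The loop condition mirrors the segmented pattern: a missing token, rather than an empty page, signals the end of the result set.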

DataServices

The legacy table service implementation has been migrated to the Microsoft.WindowsAzure.Storage.Table.DataServices namespace and updated to support new features in the 2.0 release such as OperationContext, end-to-end timeouts, and asynchronous cancellation. In addition to the new features, some breaking changes have been introduced in this release. For a full list, please refer to the tables section of the Breaking Changes blog post.

Developing in Windows Runtime

A key driver in this release was expanding platform support, specifically targeting the upcoming releases of Windows 8, Windows RT, and Windows Server 2012. As such, we are releasing the following two Windows Runtime components to support Windows Runtime as Community Technology Preview (CTP):

- Microsoft.WindowsAzure.Storage.winmd - A fully projectable storage client that supports JavaScript, C++, C#, and VB. This library contains all core objects as well as support for Blobs, Queues, and a base Tables Implementation consumable by JavaScript

- Microsoft.WindowsAzure.Storage.Table.dll – A table extension library that provides generic query support and strongly typed entities. This is used by non-JavaScript applications to provide strongly typed entities as well as reflection-based serialization of POCO objects

The images below illustrate the Intellisense experience when defining a TableQuery in an application that references only the core storage component, and when the table extension library is used. EntityResolver and the TableEntity object are also absent in the core storage component; instead, all operations are based on the DynamicTableEntity type.

Intellisense when defining a TableQuery referencing only the core storage component

Intellisense when defining a TableQuery with the table extension library

While most table constructs are the same, you will notice that when developing for the Windows Runtime all synchronous methods are absent which is in keeping with the specified best practice. As such, the equivalent of the desktop method CloudTable.ExecuteQuery which would handle continuation for the user has been removed. Instead developers are supposed to handle this segmented execution in their application and utilize the provided ExecuteQuerySegmentedAsync methods in order to keep their apps fast and fluid.

Summary

This blog post has provided an in depth overview of developing applications that leverage the Windows Azure Table service via the new Storage Client libraries for .Net and the Windows Runtime. Additionally, we have discussed some specific differences when migrating existing applications from a previous 1.x release of the SDK.

Joe Giardino

Serdar Ozler

Veena Udayabhanu

Justin Yu

Windows Azure Storage

Resources

Get the Windows Azure SDK for .Net

We Azure table users are still waiting for secondary indexes, which have been promised for several years.

Mark Kromer (@mssqldude) started a series with Hortonworks on Windows – Microsoft HDInsight & SQL Server – Part 1 on 11/6/2012:

I’m going to start a series here on using Microsoft’s Windows distribution of the Hadoop stack, which Microsoft has released in community preview here together with Hortonworks: http://www.microsoft.com/en-us/download/details.aspx?id=35397.

Currently, I am using Cloudera on Ubuntu and Amazon’s Elastic MapReduce for Hadoop & Hive jobs. I’ve been using Sqoop to import & export data between databases (SQL Server, HBase and Aster Data) and ETL jobs for data warehousing the aggregated data (SSIS) while leaving the detail data in persistent HDFS nodes. Our data scientists are analyzing data from all 3 of those sources: SQL Server, Aster Data and Hadoop through cubes, Excel, SQL interfaces and Hive. We are also using analytical tools: PowerPivot, SAS and Tableau.

That being said, and having spent 5 years previously @ Microsoft, I was very much anticipating getting the Windows distribution of Hadoop. I’ve only had 1 week to play around with it so far and I’ve decided to begin documenting my journey here in my blog. I’ll also talk about my experience so far, along with Aster, Tableau and Hadoop on Linux, on Nov 7 @ 6 PM in Microsoft’s Malvern office, my old stomping grounds: http://www.pssug.org.

As the group’s director, one of the reasons that I like having a Windows distribution of Hadoop is so that we are not locked into an OS and can leverage the broad skill sets that we have on staff & off shore and so that we don’t tie ourselves to hiring on specific backgrounds when we analyze potential employee experience.

When I began experimenting with the Microsoft Windows Hadoop distribution, I downloaded the preview file and then installed it from the Web Installer, which then created a series of Apache Hadoop services, including the most popular in the Hadoop stack that drives the entire framework: jobtracker, tasktracker, namenode and datanode. There are a number of others that you can read about from any good Hadoop tutorial.

The installer created a user “hadoop” and an IIS app pool and site for the Microsoft dashboard for Hadoop. Compared to what you see from Hortonworks and Cloudera, it is quite sparse at this point. But I don’t really make much use of the management consoles from Hadoop vendors at this point. As we expand our use of Hadoop, I’m sure we’ll use them more. Just as I am sure that Microsoft will expand their dashboards, management, etc. and maybe even integrate with System Center.

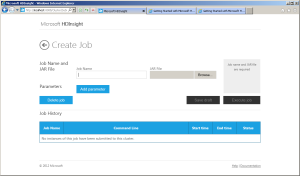

You’ll get the usual Hadoop namenode and MapReduce web pages to view system activity and a command-line interface to issue jobs, manage the HDFS file system, etc. I’ve been using the dashboard to issue jobs, run Hive queries and download the Excel Hive driver, which I LOVE. I’ll blog about Hive next, in part 2. In the meantime, enjoy the screenshots of the portal access into Hadoop from the dashboard below:

This is how you submit a MapReduce JAR file (Java) job:

Here is the Hive interface for submitting SQL-like (HiveQL) queries against Hadoop using Hive’s data warehouse metadata schemas:

Saravanan G described HOW TO: Copy files between Windows Azure storage accounts in an 11/6/2012 post to the Aditi Technologies blog:

This blog will guide you with simple steps on how to copy files between storage accounts (blob to blob). Most often, this may be helpful when you need to copy vhd files between Windows Azure storage accounts.

Prerequisite: You need to download and install the Node.js tool from here.

Then, run the following command from the Node.js command prompt

(On a Windows machine, go to Start | Node.js (x64) | Node.js command prompt.)

Windows Machine: npm install azure -g (Need to run in administrator mode).

Linux Machine: sudo npm install azure -g (Need to run this command with elevated privileges).

To make sure your installation was successful, type azure in the command prompt. This will list all the Azure related commands.

vm is the command that helps you to manage your Azure virtual machine.

Typing azure vm --help in the command prompt will list all the commands related to Azure virtual machines.

azure vm disk upload is the command that copies your files between storage accounts (Blob to Blob).

Syntax: azure vm disk upload <source-path> <blob-url> <storage-account-key>

Example:

azure vm disk upload "http://sourcestorage.blob.core.windows.net/vhds/sample.vhd" "http://destinationstorage.blob.core.windows.net/vhds/sample.vhd" "DESTINATIONSTORAGEACCOUNTKEY"

Note: Your source location container should be public or you can also specify the URL with shared access signature.

Here the copy is made within Windows Azure storage itself. None of the files are copied to your local machine, which makes the transfer much faster.

I tried copying a 1TB file from one blob container to another blob container in the same storage location, and it took less than 5 minutes. Copying files between different storage accounts may take more time (a 30GB file took me one hour when I was copying it between different storage accounts).
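As a rough back-of-the-envelope reading of those two figures, the Python snippet below converts them into average transfer rates. The helper name is made up for illustration, and the conversion assumes 1 GB = 1024 MB and 1 TB = 1024 GB; since the 1TB copy took "less than 5 minutes", its figure is a lower bound.

```python
def throughput_mb_per_s(size_gb, seconds):
    """Average rate in MB/s, assuming 1 GB = 1024 MB."""
    return size_gb * 1024 / seconds


# 30 GB between different storage accounts in one hour.
cross_account = throughput_mb_per_s(30, 60 * 60)

# 1 TB (1024 GB) within the same storage location in under 5 minutes.
same_location_floor = throughput_mb_per_s(1024, 5 * 60)

print(round(cross_account, 1), round(same_location_floor))
```

These are averages derived from a single anecdotal measurement each, so they are useful only for comparing the two scenarios, not as expected rates.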

Avkash Chauhan (@avkashchauhan) described Hadoop adventures with Microsoft HDInsight in an 11/5/2012 post:

What is HDInsight?

HDInsight is the product name for Microsoft’s distribution of Hadoop and for the Hadoop service on Windows Azure. HDInsight is Microsoft’s 100% Apache-compatible Hadoop distribution, supported by Microsoft. HDInsight, available both on Windows Server and as a Windows Azure service, empowers organizations with new insights on previously untouched unstructured data, while connecting to the most widely used Business Intelligence (BI) tools on the planet.

It is available in two modes:

- HDInsight as Cloud Service: Cloud version running on Windows Azure

- HDInsight as Local Cluster: A downloadable version that runs locally on Windows Server and Desktop

In this article we will see how to use HDInsight on a local machine.

Where to get it?

- You can download HDInsight Preview version from the link below:

- http://www.microsoft.com/web/handlers/webpi.ashx?command=GetInstallerRedirect&appid=HDINSIGHT-PREVIEW&mode=new

What does the Windows installer bring to your machine?

After the installation is completed you will see the following applications are installed:

- Microsoft HDInsight Community Technology Preview Version 1.0.0.0

- Hortonworks Data Platform 1.0.1 Developer Preview Version 1.0.1

- If you do not change the installed components, Python 2.7.3150 is also installed

- The Java and C++ runtimes are also installed on the machine, as required

Once installer is completed you will see the following shortcuts are setup in your machine:

Here is the list of shortcuts:

- Hadoop Command Line

- Microsoft HDInsight Dashboard

- Hadoop MapReduce Status

- Hadoop Name Node Status

By default, Hadoop is installed at C:\Hadoop as shown below:

If you launch the “Hadoop command Line” you will see the list of commands as below:

    namenode -format     format the DFS filesystem
    secondarynamenode    run the DFS secondary namenode
    namenode             run the DFS namenode
    datanode             run a DFS datanode
    dfsadmin             run a DFS admin client
    mradmin              run a Map-Reduce admin client
    fsck                 run a DFS filesystem checking utility
    fs                   run a generic filesystem user client
    balancer             run a cluster balancing utility
    fetchdt              fetch a delegation token from the NameNode
    jobtracker           run the MapReduce job Tracker node
    pipes                run a Pipes job
    tasktracker          run a MapReduce task Tracker node
    historyserver        run job history servers as a standalone daemon
    job                  manipulate MapReduce jobs
    queue                get information regarding JobQueues
    version              print the version
    jar <jar>            run a jar file
    distcp <srcurl> <desturl> copy file or directories recursively
    archive -archiveName NAME <src>* <dest> create a hadoop archive
    daemonlog            get/set the log level for each daemon
     or
    CLASSNAME            run the class named CLASSNAME

Most commands print help when invoked w/o parameters.

Try checking the Version as below:

    c:\Hadoop\hadoop-1.1.0-SNAPSHOT>hadoop version
    Hadoop 1.1.0-SNAPSHOT
    Subversion on branch -r
    Compiled by jenkins on Wed Oct 17 22:28:56 PDT 2012
    From source with checksum 80f5614dfb0743b569344f051a07b37d

Now if you launch “Microsoft HDInsight Dashboard” shortcut you will see the dashboard running locally as below:

Launching “Hadoop MapReduce Status” shortcut will give you the following info:

And Launching “Hadoop Name Node Status” shortcut you will see the following:

So as you can see above, you now have a Hadoop cluster running on your local machine.

Play with it a little more; my next article will have more information on this topic.

Have fun with Hadoop!!

Hanu Kommalapati (@hanuk, pictured below) asserted Windows Azure’s Flat Network Storage to Enable Higher Scalability Targets in an 11/4/2012 post:

Brad Calder, a Distinguished Engineer at Microsoft, recently blogged about higher scalability targets for Azure Storage enabled by Flat Network architecture: Windows Azure’s Flat Network Storage and 2012 Scalability Targets. Windows Azure’s implementation of Flat Network topology, referred to as “Quantum 10” (Q10) network architecture, is influenced by the Microsoft Research paper: VL2: A Scalable and Flexible Data Center Network presented at SIGCOMM’09.

Once the software improvements for the flat network implementation are fully rolled out, which per Brad will happen by the end of 2012, each Azure Storage account created after June 7th, 2012 will have the following scalability targets:

Storage Account Scalability Targets

- Capacity – Up to 200 TBs

- Transactions – Up to 20,000 entities/messages/blobs per second

- Bandwidth for a Geo Redundant storage account

  - Ingress - up to 5 gigabits per second

  - Egress - up to 10 gigabits per second

- Bandwidth for a Locally Redundant storage account

  - Ingress - up to 10 gigabits per second

  - Egress - up to 15 gigabits per second

Please keep in mind that any storage account created before June 7th, 2012 needs to be migrated to a newly created storage account to take advantage of the higher scalability targets above.

Since each storage account is composed of many partitions, it is important to understand the scalability targets (achieved with a 1 KB object size) for a single partition, which are given below:

Partition Scalability Targets

- Single Queue (all the messages in a queue are accessed through a single partition)

  - Up to 2,000 messages/second

- Single Table Partition (all the entities in a table with the same partition key)

  - Up to 2,000 entities/second (with an efficient partitioning scheme that spreads load across partitions, one should be able to achieve the account-level target of 20,000 entities/second)

- Single Blob (each blob will be in its own partition, as the partition key for a blob is “container name + blob name”)

  - Up to 60 MBytes/sec
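The relationship between the per-partition and per-account table targets is simple arithmetic, sketched below in Python. The two constants come from the targets listed above; the function names are made up for illustration, and the calculation assumes load is spread evenly across partitions, which real workloads may not achieve.

```python
import math

ACCOUNT_TARGET = 20_000    # entities per second, per storage account
PARTITION_TARGET = 2_000   # entities per second, per table partition


def min_partitions(required_rate, per_partition=PARTITION_TARGET):
    """Fewest partitions that could carry the rate if load is spread evenly."""
    return math.ceil(required_rate / per_partition)


def achievable_rate(partitions, per_partition=PARTITION_TARGET,
                    account_cap=ACCOUNT_TARGET):
    """Upper bound on throughput: partition sum, capped by the account target."""
    return min(partitions * per_partition, account_cap)


print(min_partitions(ACCOUNT_TARGET))  # partitions needed to reach the cap
print(achievable_rate(3))              # bound for three evenly loaded partitions
print(achievable_rate(50))             # the account target caps the total
```

In other words, a single hot partition key limits a table to one partition's target no matter how large the account-level target is.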

The above are the high end numbers and as Brad suggests, your mileage will vary depending on the object sizes and access patterns. So, establishing application specific scalability numbers before production deployment helps with the scale out planning of your Azure infrastructure as application load ramps up.

For the internals of the Azure Storage architecture, read Brad’s excellent paper, Windows Azure Storage: A Highly Available Cloud Storage Service with Strong Consistency, presented at SIGOPS’11.

Windows Azure SQL Database, Federations and Reporting, Mobile Services

Matteo Pagani (@qmatteoq) described Azure Mobile Services for Windows Phone 8 in an 11/8/2012 post:

During the BUILD conference, right after the announcement of the public release of the Windows Phone 8 SDK, Microsoft also announced, as expected, that Azure Mobile Services, which we’ve learned to use in the previous posts, is now compatible with Windows Phone 8.

Do you remember the tricky procedure that we had to implement in this post to manually interact with the server? If you’re going to develop an application for Windows Phone 8 you can forget it, since the same great SDK we used to develop a Windows Store app is now also available for mobile. This means that we can use the exact same APIs and classes we’ve seen in this post, without having to make HTTP requests to the server and manually parse the JSON response.

The approach is exactly the same: create an instance of the MobileServiceClient class (passing your service’s address and the secret key) and start doing operations using the available methods.

The only bad news? If your application is targeting Windows Phone 7, you still have to go with the manual approach, since the new SDK is based on WinRT and it’s compatible just with Windows Phone 8.

You can download the SDK from the following link: https://go.microsoft.com/fwLink/?LinkID=268375&clcid=0x409

Josh Twist (@joshtwist) posted BUILD 2012: The week we discovered ‘kickassium' on 11/3/2012:

It’s been a very long week, but a very good one. Windows Azure Mobile Services got its first large piece of airtime at the BUILD conference and the reaction has been great. Here are just a couple of my favorite quotes so far from the week:

“Mobile Services is the best thing at BUILD, and there’s been a lot of cool stuff at BUILD” – Attendee in person

“I'm tempted to use Windows Azure #mobileservices for the back end of everything from now on. Super super awesome stuff. #windowsazure” – Andy Cross

“Starting #Azure #MobileServices with @joshtwist. I heard that in order to make it they had to locate the rare mineral Kickassium. #bldwin”- James Chambers

Hackathon

The BUILD team also hosted a hackathon and Mobile Services featured prominently. In fact, two of the three winners of the hackathon were built on Mobile Services, and you can watch the teams talk about their experience in their live interview on Channel 9 (link to come when the content goes live). Again, some favorite quotes from the winning teams (some of which were mentored by the incredible Paul Batum):

“I was watching the Mobile services talk on the live stream, and as I was watching it I started hooking it up. By the time he finished his talk, I got the backend for our app done” – Social Squares, winner

“We got together on Monday and we did a lot of work – he did a service layer, I did a web service layer, we did bunch of stuff that would help [our app] to communicate, and then we went to Josh’s session… and we threw everything away and used Mobile Services. What took us roughly 2000 lines of code, we got for free with Mobile Services” – QBranch, winner

Sessions

I had three presentations at BUILD, including a demo at the beginning of the Windows Azure Keynote – check it out. Mobile Services is 10 minutes in: http://channel9.msdn.com/Events/Build/2012/1-002

I also had two breakout sessions and I’m pleased to announce that the code for these is now available (links below each session):

Developing Mobile Solutions on Windows Azure Part I

We take a Windows Phone 8 application that has no connectivity and uses no cloud services, and build out a whole connected scenario in 60 minutes. There’s a lot of live coding and risk, and we even get (entirely by coincidence) James Chambers up on stage for some audience interaction that doesn’t quite go to plan! The code for this is up on GitHub here (download zip).

Also, be sure to check out my colleagues Nick and Chris’ awesome session, which follows on from this one: Developing Mobile Solutions on Windows Azure Part II.

Windows 8 Connectathon with Windows Azure Mobile Services

In this session, I build a Windows 8 application starting from the Mobile Services quickstart, going into some detail on authentication, scripts and push notifications, including managing channels. The code for this is up on GitHub here (download zip) and, due to popular demand, I created a C# version of the Windows 8 client. The Windows Phone client was pretty easy, so I’ll leave that as an exercise for the reader.

Channel 9 Live

Paul and I were also interviewed by Scott Hanselman on Channel 9 Live – right after the keynote. We had a blast talking to Scott about Mobile Services and got to answer some questions coming in from the audience.

One of the outcomes of the Channel 9 interview was that we promised to set up a Mobile Services UserVoice. We never want to break a promise on Mobile Services, so here you go: http://mobileservices.uservoice.com. Please log your requests and get voting! Don’t forget about our forums, and always feel free to reach out to me on Twitter @joshtwist.

Glenn Gailey (@ggailey777) described Mobile Services: request new features, //build// demos, and a new poster in an 11/2/2012 post:

Some interesting things today for fans of Windows Azure Mobile Services, Microsoft’s cloud-based backend solution for mobile device apps…

Mobile Services Feedback Site is Open

I wanted to let you know that the Windows Azure Mobile Services team has opened up their feedback request site for you to provide feedback on and propose new ideas for new Mobile Services features. I’ve already added mine, go add yours!

http://mobileservices.uservoice.com/forums/182281-feature-requests

New Windows Azure Platform Poster

Also, there is a cool new Windows Azure platform poster that we made for the //build/ conference, which features Mobile Services prominently.

Check it out: www.microsoft.com/en-us/download/details.aspx?id=35473

I hope it shows up soon in the Server Posterpedia app.

Check out Josh’s Demo at //build/

I watched Satya (President of MS Server and Tools) give his keynote this past Wednesday morning at the //build/ conference. The first thing that he talked about was Mobile Services—featuring a smokin’ hot demo by Josh Twist. Satya also called Mobile Services his “favorite” Azure service.

In this demo, Josh creates a new mobile service and a new Windows Store app, hooks-up push notifications, integrates the app with SkyDrive, and triggers a notification from a second Windows Phone 8 app, all in about 5 min. You have to check it out.

http://channel9.msdn.com/Events/Build/2012/1-002

I’ve seen a bunch of these demos from Josh, and they are getting better every time. Great job Josh (his demo starts about 11min into Satya’s talk).

Here are two other talks on Mobile Services given at //BUILD/:

Marketplace DataMarket, Cloud Numerics, Big Data and OData

Doug Mahugh (@dmahugh) reported on OData at Information on Demand 2012 in an 11/8/2012 post to the Interoperability @ Microsoft blog:

I attended IBM’s Information on Demand conference two weeks ago, where I had the opportunity to talk to people about OData (Open Data Protocol). Microsoft and IBM are collaborating on the development of the OData standard in the OASIS OData Technical Committee, and for this conference we were demonstrating a simple OData feed on a DB2 database, consumed by a variety of client applications.

Here’s a high-level view of the architecture of the demo app:

For this demo, we deployed an OData service on Windows Azure that exposes a few entities from a DB2 database running on IBM’s cloud platform. By leveraging WCF Data Services in Visual Studio, we were able to create this OData feed in a matter of minutes.

Here’s a screencast that shows the steps involved in creating the demo service and consuming it from various client devices and applications:

For more information about using OData with DB2 or Informix, see “Use OData with IBM DB2 and Informix” on the IBM DeveloperWorks site.

The growing OData ecosystem is enabling a variety of new scenarios to deliver open data for the open web, and it was great to have the opportunity to learn from so many perspectives this week! Standardizing a URI query syntax and semantics means that data providers and data consumers can focus on innovative ways to add value by combining disparate data sources, and assures interoperability between a wide variety of data producers and consumers. To learn more about OData, including the ecosystem, developer tools, and how you can get involved, see this blog post.
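To give a sense of what a standardized URI query syntax looks like in practice, the Python sketch below assembles an OData-style request URL using the `$filter`, `$select` and `$top` system query options. The service root, the `Customers` entity set and the property names are all hypothetical; they are not taken from the demo described above, and the helper is an illustration rather than part of any OData library.

```python
from urllib.parse import quote, urlencode


def build_odata_url(service_root, entity_set, filter_expr=None,
                    select=None, top=None):
    """Assemble a query URL from common OData system query options."""
    options = {}
    if filter_expr:
        options["$filter"] = filter_expr
    if select:
        options["$select"] = ",".join(select)
    if top is not None:
        options["$top"] = str(top)

    url = f"{service_root.rstrip('/')}/{entity_set}"
    if options:
        # Keep "$" and "," readable; spaces and quotes are percent-encoded.
        url += "?" + urlencode(options, quote_via=quote, safe="$,")
    return url


url = build_odata_url(
    "https://example.invalid/odata.svc",  # hypothetical service root
    "Customers",                          # hypothetical entity set
    filter_expr="City eq 'Rome'",
    select=["Name", "City"],
    top=5,
)
print(url)
```

Because the query options are part of the protocol rather than of any one product, the same URL shape would apply whichever database sits behind the feed.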

Special thanks to Susan Malaika, Brent Gross, and John Gera of IBM for all of their help with putting together the demo and their support at the booth throughout the conference. We’re looking forward to continued collaboration with our colleagues at IBM and the many other organizations involved in the ongoing standardization of OData!

Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

Alex Simon described Enhancements to Windows Azure Active Directory Preview in an 11/7/2012 post:

I’m happy to have the opportunity to share with you another set of enhancements we’ve recently added to our Windows Azure Active Directory Preview. We were excited by the response we got from developers when we launched the Preview in May of this year and over the last few months we’ve worked hard to make it even better based on your feedback and your use of the platform.

The latest enhancements we’ve added are:

- Added graphical user interfaces for registering your application in a directory tenant.

- Support for the SAML 2.0 protocol for Web SSO.

- Sign out support for the WS-Federation protocol in Web SSO.

- Differential query in the Graph API.

- An updated version of the Windows Azure Authentication Library which is now 100% managed code.

- The ability to federate Directory Tenants with Access Control Namespaces.

Making It Easier to Connect Your Application to Windows Azure Active Directory

When we first launched the Windows Azure Active Directory Preview, we used the Microsoft Online Services Module for Windows PowerShell as the tool administrators used to grant applications access to a company’s Windows Azure AD tenant. While PowerShell is a great approach for some admins, we knew that we also needed to provide a graphical user interface that made it easy for admins to grant your applications access with a few simple clicks.

For developers building applications that need single-sign-on with a single directory tenant, such as an enterprise developer building a web-based line of business application, we’ve developed an extension to Visual Studio that makes it easy to register an application in a directory tenant as part of the development process. This extension is part of the ASP.NET Fall 2012 Update. You can read more about the Windows Azure AD authentication extension part of the update here.

For developers building multi-tenant software-as-a-service applications, we’ve built a web-based experience for your customers to register your application in their directory tenant. You can now give us details about your application, including the access your application requires to the customer’s directory tenant, and your customers can set up single-sign-on and grant your app access to their directory as part of an experience that is integrated with your application’s sign-up process. You can optionally publish your application to the Windows Azure Marketplace so that customers can discover your application and its benefits.

To help you take advantage of this opportunity, we’ve developed some resources to help get you started:

- The Windows Azure AD Developer Portal to walk you through building apps.

- A walk-through with sample application that demonstrates how to build and register an app.

We would love to get your feedback on this experience, so please provide feedback and questions on our Windows Azure AD forums.

Updates to Web SSO with Windows Azure Active Directory

Addition of SAML 2.0 protocol support for Web SSO

Many of the developers who are participating in the Windows Azure AD developer preview have requested we add support for the SAML 2.0 federation protocol. Many SaaS applications already support SAML 2.0 as a way to sign-in to their applications, and having this support would make it even simpler for these developers to integrate with Windows Azure AD.

We are excited to announce that we now provide this capability and have tested it with a variety of application providers. We’ll be adding integration documentation as we go forward, but a good place to learn more is this MSDN documentation on Windows Azure AD support for SAML 2.0.

Sign out Support in WS-Federation

We have included the ability for applications to support the single sign-out capability for those using the WS-Federation protocol. We will also be adding sign-out support for SAML 2.0 in a future update.

You can now use a URI as an Application Identifier

When we originally launched the Developer Preview we noted that the Audience field in tokens sent by Windows Azure AD was in SPN format and included both the identifier of the application and the identifier of the tenant, while most other federation systems allow applications to be identified by a URI. We have updated the Preview to enable the use of URIs as application identifiers. For example, instead of receiving a token with Audience value of “spn:<appID_GUID>@<tenantID_GUID>”, it can now simply be “https://www.example.com”.

NOTE: Applications that were registered with Windows Azure AD before we deployed this update will no longer accept sign-in responses and must be updated to continue to function properly. We discuss this in detail in this forum post.
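To make the two identifier styles concrete, here is a minimal Python sketch that classifies an Audience value as SPN-style or URI-style. The function name, the returned dictionary shape, and the assumption that both SPN components are standard hyphenated GUIDs are illustrative choices, not part of Windows Azure AD.

```python
import re
from urllib.parse import urlparse

# Assumption: both parts of the SPN-style audience are standard hyphenated GUIDs,
# matching the spn:<appID_GUID>@<tenantID_GUID> shape quoted in the post.
_GUID = r"[0-9a-fA-F]{8}(?:-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}"
_SPN_PATTERN = re.compile(rf"^spn:(?P<app_id>{_GUID})@(?P<tenant_id>{_GUID})$")


def classify_audience(audience: str) -> dict:
    """Classify a token Audience value as 'spn', 'uri', or 'unknown'."""
    match = _SPN_PATTERN.match(audience)
    if match:
        return {
            "kind": "spn",
            "app_id": match.group("app_id"),
            "tenant_id": match.group("tenant_id"),
        }
    parsed = urlparse(audience)
    if parsed.scheme in ("http", "https") and parsed.netloc:
        return {"kind": "uri", "uri": audience}
    return {"kind": "unknown"}
```

An application that still has the SPN form in its configuration could use a check like this to spot values that need to be re-registered with a URI.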

Differential Query: Get Delta Changes to Objects You Care About in the Graph

Part of the power of a Graph API is taking action based on the information in the directory that your application is targeting. With differential query, we’ve given you the ability to listen in to only the information you care about so that your application can be both faster and easier to develop.

A differential query request returns all changes made to specified entities during the time between two consecutive requests. For example, if you make a differential query request an hour after the previous differential query request, only the changes made to objects in the scope of the query during that hour will be returned. This functionality is especially useful when you need to cache directory data in your local application store and keep it in sync with the contents of the directory.
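The caching scenario can be sketched in Python as follows. This is not the Graph API: `FakeDirectory`, `Change`, and the integer token are hypothetical stand-ins that only illustrate the pattern of asking for "everything since my last request" and applying the result to a local store.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Change:
    object_id: str
    attributes: Optional[Dict[str, str]]  # None means the object was deleted


@dataclass
class FakeDirectory:
    """Hypothetical in-memory directory that records every change in order."""
    log: List[Change] = field(default_factory=list)

    def differential_query(self, token: int = 0) -> Tuple[List[Change], int]:
        """Return the changes recorded since `token`, plus the token for the next call."""
        return self.log[token:], len(self.log)


def sync(cache: Dict[str, Dict[str, str]], directory: FakeDirectory, token: int) -> int:
    """Apply only the changes made since the previous request to the local cache."""
    changes, next_token = directory.differential_query(token)
    for change in changes:
        if change.attributes is None:
            cache.pop(change.object_id, None)  # deletion
        else:
            cache.setdefault(change.object_id, {}).update(change.attributes)
    return next_token
```

The caller keeps the returned token between runs and passes it to the next `sync` call, so each request only carries the changes made since the previous one. How the real service represents that continuation state is not shown in the post.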

We believe this is a great addition to the platform for application developers, and we have some resources to get you started using this in your application.

Update to the Windows Azure Authentication Library Preview

We continue to work to make rich apps and REST development easier and more secure. In this update we are re-releasing part of the Windows Azure Authentication Library (WAAL) with some important improvements:

- The library is now 100% managed. This eliminates the constraints that resulted from the mixed mode nature of the first release. You can now use the library regardless of the “bitness” of your target environment, and the VC runtime is no longer required.

- The first WAAL preview combined both client and protected resource features in a single package. That led to a number of constraints, such as the dependency on the WIF 1.0 runtime and the impossibility of developing with the .NET 4.0 Client Profile. In this refresh we refactored WAAL to work exclusively in the client role, eliminating those restrictions. We will provide the functionality to address the protected resource role in separate components: more about that soon.

The new WAAL bits are already at work in other products! The Windows Azure Active Directory integration features in the ASP.NET Fall 2012 Update use WAAL as part of the setup and publication experience. WAAL is also used in our new sample app to secure calls to the Graph API.

We will soon update the WAAL samples to take advantage of the new managed-only package. At that time, we will retire the current x86- and x64-specific packages.

Ability to Federate Directory Tenants with Windows Azure Access Control Namespaces

With the changes in the way in which Windows Azure AD handles application identifiers, you are now able to use directory tenants as identity providers in Windows Azure Access Control service namespaces. This new capability opens up a number of interesting scenarios such as enabling your web application to accept both organizational identities from directory tenants and consumer identities from social providers such as Facebook, Google, Yahoo!, Microsoft accounts or any other OpenID provider. You can find detailed instructions on how to implement the scenario in this post.

Breaking Changes to the Developer Preview

As we respond to your feedback and add or modify features, sometimes we have to make breaking changes to the behavior of the Preview. If you’ve already developed applications using Windows Azure Active Directory or plan to build applications now, please make sure you periodically check the Windows Azure Active Directory Forums for notification of upcoming changes.

We Look Forward to Hearing from You!

We’re excited to hear from you about our latest round of enhancements. If you’d like to see them in action now, you can check out Vittorio Bertocci’s presentation from the BUILD 2012 conference.

And here’s one final note about how to get Windows Azure Active Directory. We’ve had a number of people surprised to find out that every Office 365 customer already has a Windows Azure Active Directory tenant! Windows Azure Active Directory is used by a number of Microsoft cloud services today, and through the developer preview it is possible to extend the usage of these directory tenants to other applications. It’s also possible to obtain a “standalone” directory tenant through our provisioning system.

Vittorio Bertocci (@vibronet) described Provisioning a Windows Azure Active Directory Tenant as an Identity Provider in an ACS Namespace in an 11/7/2012 post:

Thanks to the improvements introduced in the latest refresh of the developer preview of Windows Azure Active Directory, we are finally able to support a scenario you often asked for: provisioning a Windows Azure Active Directory tenant as an identity provider in an ACS namespace.

In this post I am going to describe how to combine the various parts to achieve the desired effect, from the high level architecture to some very concrete advice on putting together a working example demonstrating the scenario.

Overview

Let’s say that we want to develop one MVC application accepting users coming from both Facebook and one customer’s Windows Azure AD, say from Trey Research, which uses a Windows Azure AD directory tenant for its cloud-based workloads.

One way in which you could decide to implement the solution would be to outsource most of the authentication work to one ACS namespace (let’s call it LeFederateur, in honor of my French-speaking friends) and configure that namespace to take care of the details of handling authentication requests to Facebook or to Trey Research. Your setup would look like the diagram below.

From right to left, bottom to top:

- Your application is configured (via WIF) to redirect unauthenticated requests to the ws-federation endpoint of the ACS namespace

- The ACS namespace contains a representation of your application, in the form of a relying party

- The ACS namespace contains a set of rules which describe what claims should be passed on (as-is, or somehow processed) from the trusted identity providers to your application

- The ACS namespace contains the coordinates of the identity providers it trusts; in this case

- There is an entry for a Facebook application IdP type, created according to the usual ACS provisioning flow for Facebook apps

- There is an entry for a WS-Federation IdP type, pointing to Trey Research’s directory tenant. To be exact, pointing to the metadata document hosted at https://accounts.accesscontrol.windows.net/treyresearch1.onmicrosoft.com/FederationMetadata/2007-06/FederationMetadata.xml

- Facebook contains the definition of the app you want to use for handling Facebook authentication via ACS

- Trey Research’s directory tenant contains a service principal which describes the ACS namespace’s WS-Federation issuing endpoint as a relying party
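The metadata address in the list above follows a visible pattern: host, tenant domain, then a fixed path. A small Python sketch, assuming that pattern holds for other tenants as well (the helper name and the validation are illustrative, not an official API):

```python
from urllib.parse import quote

# Host and path copied from the Trey Research example above; treating them as
# fixed for every tenant is an assumption of this sketch.
METADATA_HOST = "https://accounts.accesscontrol.windows.net"
METADATA_PATH = "FederationMetadata/2007-06/FederationMetadata.xml"


def federation_metadata_url(tenant_domain: str) -> str:
    """Build the WS-Federation metadata URL for a directory tenant domain."""
    tenant = tenant_domain.strip().lower()
    if not tenant or "/" in tenant:
        raise ValueError("tenant_domain must be a bare domain name")
    return f"{METADATA_HOST}/{quote(tenant)}/{METADATA_PATH}"
```

Calling `federation_metadata_url("treyresearch1.onmicrosoft.com")` reproduces the address quoted for the Trey Research tenant.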

I am sure that those of you who are already familiar with the concept of federation provider are not especially surprised by the above. This is a canonical topology, where the application outsources authentication to a federation provider which in turn handles relationships with the actual identity sources, in the form of identity providers. The ACS namespace plays the role of the federation provider, and the Windows Azure AD tenant plays the role of the identity provider.

Rather, the question I often got in the last few months was: what prevented this from working before the latest update? That’s pretty simple. In the first preview, ServicePrincipals could only be used to describe applications with realms (in the ws-federation sense) following a fixed format, namely spn:<AppId>@<TenantId> (as described here), which ended up being used in the AudienceRestriction element of the issued SAML token. That didn’t work well with most of the existing technologies supporting claims-based identity, including ACS namespaces: in this specific case, LeFederateur would process incoming tokens only if their AudienceRestriction element contained “https://lefederateur.accesscontrol.windows.net/”, and that was simply inexpressible. With the improvements we made to naming in this update, service principals can use arbitrary URIs as names, resulting in arbitrary realms & audience restriction clauses: that restored the ability of directory tenants to engage as identity providers through classic ws-federation flows, making ACS namespace integration (or any other federation provider's implementation) possible.
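The audience check described above can be illustrated with a short Python sketch. It assumes a SAML 2.0 assertion namespace and uses a hand-made, unsigned fragment from the hypothetical `sample_assertion` helper. A real federation provider also validates signatures, issuers, and validity periods, none of which is shown here.

```python
import xml.etree.ElementTree as ET

# Assumption: the token is a SAML 2.0 assertion; the post only says "SAML token".
SAML2_NS = {"saml": "urn:oasis:names:tc:SAML:2.0:assertion"}


def audiences(assertion_xml: str) -> list:
    """Return every Audience value found under AudienceRestriction."""
    root = ET.fromstring(assertion_xml)
    found = root.findall(".//saml:AudienceRestriction/saml:Audience", SAML2_NS)
    return [el.text.strip() for el in found if el.text]


def accepts(assertion_xml: str, expected_realm: str) -> bool:
    """True only if the token was issued for the realm the recipient expects."""
    return expected_realm in audiences(assertion_xml)


def sample_assertion(audience: str) -> str:
    """Hypothetical, unsigned assertion fragment used only for illustration."""
    return (
        '<Assertion xmlns="urn:oasis:names:tc:SAML:2.0:assertion">'
        "<Conditions><AudienceRestriction>"
        f"<Audience>{audience}</Audience>"
        "</AudienceRestriction></Conditions></Assertion>"
    )
```

With the expected realm set to the LeFederateur address, a fragment carrying a spn:-style audience is rejected while one carrying the namespace URI is accepted, which is the mismatch the naming update removed.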

Walkthrough

Enough with the philosophical blabber! Ready to get your hands dirty? Here’s a breakdown into individual tasks:

- create (or reuse) an ACS namespace

- create a Facebook app and provision it as IdP in the ACS namespace

- create (or reuse) a directory tenant

- provision a service principal in the directory tenant for the ws-federation endpoint of the ACS namespace

- provision the directory tenant’s ws-federation endpoint as an IdP in the ACS namespace

- create an MVC app

- use the VS2012 Identity and Access tools for connecting the application to the ACS namespace; select both Facebook and the directory tenant as IdPs

- Optional: modify the rule group in ACS so that the Name claim in the incoming identity will have a consistent value to be shown in the MVC app UI

Many of those steps are well-known ACS tasks, which I won’t re-explain in detail here; rather, I’ll focus on the directory-related tasks.

1. Create (or reuse) an ACS namespace

This is the usual ACS namespace creation that you know and love… but wait, there is a twist!