Windows Azure and Cloud Computing Posts for 4/23/2010+

| Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series. |

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database, Codename “Dallas” and OData

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated for the January 4, 2010 commercial release in April 2010.

Azure Blob, Table and Queue Services

Jason Farrell’s Using Blobs with Windows Azure post of 4/23/2010 describes managing images as Windows Azure Blobs:

So before I left for Japan I went to the Azure Bootcamp in Southfield, MI. My goal was to understand how I could potentially use Azure to deliver better solutions for customers. To that end, upon my return I have started to play with what I learned and see where it takes me.

To that end, among the many facets of Azure that intrigue me, I decided to play with storage first. Coming back from Japan, I have about 600 pictures that I wanted to manage somehow. I figured I would try to see how I could work with images. To this end, I created a very basic ASP.NET Web Application with a couple pages, one to add containers and then add blobs to those containers, then another to view those blobs and delete them.

He continues with sample code, which is available for download.

The Windows Azure Storage Team describes how to work around a bug in the SaveChangesWithRetries and Batch Option with OData in this 4/22/2010 post:

We recently found that there is a bug in our SaveChangesWithRetries method that takes in the SaveChangesOptions in our Storage Client Library.

public DataServiceResponse SaveChangesWithRetries(SaveChangesOptions options)

The problem is that SaveChangesWithRetries does not propagate the SaveChangesOptions to OData client’s SaveChanges method which leads to each entity being sent over a separate request in a serial fashion. This clearly can cause problems to clients since it does not give transaction semantics. This will be fixed in the next release of our SDK but until then we wanted to provide a workaround.

The goal of this post is to provide a workaround for the above mentioned bug so as to allow “Entity Group Transactions” (EGT) to be used with retries. If your application has always handled retries in a custom manner, you can always use the OData client library directly to issue the EGT operation, and this works as expected with no known issues as shown here:

context.SaveChanges(SaveChangesOptions.Batch);

We will now describe a BatchWithRetries method that uses the OData API “SaveChanges” and provides the required Batch option to it. As you can see below, the majority of the code is to handle the exceptions and retry logic. But before we delve into more details of BatchWithRetries, let us quickly see how it can be used.
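The Storage Team's BatchWithRetries listing is not reproduced in this excerpt. As a rough, language-neutral illustration of the idea (submit the whole batch in one call and retry that single call on transient failures), here is a minimal Python sketch. Everything in it is hypothetical: `batch_with_retries`, `TransientError`, and the `save_batch` callable merely stand in for a call such as `context.SaveChanges(SaveChangesOptions.Batch)`; none of it is the Storage Client Library API or the team's actual code.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, server busy)."""


def batch_with_retries(save_batch, max_attempts=3, backoff_seconds=0.5, sleep=time.sleep):
    """Call save_batch() as one unit, retrying the whole batch on transient errors.

    save_batch stands in for a single call that sends every pending change
    in one request, so the group succeeds or fails together.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return save_batch()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            # Simple exponential backoff before the next attempt.
            sleep(backoff_seconds * 2 ** (attempt - 1))
```

The point of the design is that the retry loop wraps one batch call rather than one call per entity, so a retry never leaves the group half-applied. Which errors count as transient is left to the caller in this sketch.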

MSDN offers an updated Differences Between Development Storage and Windows Azure Storage Services online help topic:

There are several key differences between the local development storage services and the Windows Azure storage services.

General Differences

- Development storage supports only a single fixed account and a well-known authentication key. This account and key are the only credentials permitted for use with development storage. They are:

Account name: devstoreaccount1

Account key: Eby8vdM02xNOcqFlqUwJPLlmEtlCDXJ1OUzFT50uSRZ6IFsuFq2UVErCz4I6tq/K1SZFPTOtr/KBHBeksoGMGw==

Important: The authentication key supported by development storage is intended only for testing the functionality of your client authentication code. It does not serve any security purpose. You cannot use your production storage account and key with development storage. You should not use the development storage account with production data.

- Development storage is not a scalable storage service and will not support a large number of concurrent clients.

- The URI scheme supported by development storage differs from the URI scheme supported by the cloud storage services. The development URI scheme specifies the account name as part of the hierarchical path part of the URI, rather than as part of the domain name. This difference is due to the fact that domain name resolution is available in the cloud but not on the local computer. For more information about URI differences in the development and production environments, see Understanding Storage Service URIs.
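To make the URI difference concrete, here is a small Python sketch that builds both forms. The helper name `storage_uri`, the sample container and blob names, and the account name `myaccount` are hypothetical; the loopback ports and the `core.windows.net` host suffix are the commonly documented defaults rather than values stated in the excerpt above, so treat them as assumptions.

```python
# Assumed default local ports for the development storage services.
DEV_PORTS = {"blob": 10000, "queue": 10001, "table": 10002}
DEV_ACCOUNT = "devstoreaccount1"  # the single fixed development account


def storage_uri(service, resource_path, account=None):
    """Build a storage URI; with no account given, target development storage."""
    path = resource_path.lstrip("/")
    if account is None:
        # Development storage: the account name is the first path segment.
        return f"http://127.0.0.1:{DEV_PORTS[service]}/{DEV_ACCOUNT}/{path}"
    # Cloud: the account name is part of the host name.
    return f"http://{account}.{service}.core.windows.net/{path}"


print(storage_uri("blob", "photos/tokyo.jpg"))
# http://127.0.0.1:10000/devstoreaccount1/photos/tokyo.jpg
print(storage_uri("blob", "photos/tokyo.jpg", account="myaccount"))
# http://myaccount.blob.core.windows.net/photos/tokyo.jpg
```

Code that parses container or blob names out of a URI has to account for the extra leading path segment when running against development storage.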

Differences for Blob Service

- The local Blob service only supports blob sizes up to 2 GB.

- If two requests attempt to upload a block to a blob that does not yet exist, one request will create the blob, and the other may return status code 409 (Conflict), with storage services error code BlobAlreadyExists. This is a known issue.

Differences for Table Service

- Date properties in the Table service on development storage support only the range supported by SQL Server 2005 (i.e., they are required to be later than January 1, 1753). All dates before January 1, 1753 are changed to this value. The precision of dates is limited to the precision of SQL Server 2005, meaning that dates are precise to 1/300th of a second.

- Development storage supports partition key and row key property values of less than 900 bytes. The total size of the account name, table name, and key property names together cannot exceed 900 bytes.

- The total size of a row in a table in development storage is limited to less than 1 MB.

- Development storage does not validate that the size of a batch in an entity group transaction is less than 4 MB. Batches are limited to 4 MB in Windows Azure, so the developer must ensure that a batch does not exceed this size before transitioning to the Windows Azure storage services in the cloud.

- In development storage, querying on a property that does not exist in the table returns an error. Such a query does not return an error in the cloud.

- In development storage, properties of data type Edm.Guid or Edm.Binary support only the Equal (eq) and NotEqual (ne) comparison operators in query filter strings.
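Several of the Table service limits above can be checked before data ever reaches either environment. The following Python sketch is one way to do that, under stated assumptions: the function name `portability_warnings` and the dictionary-shaped entity are hypothetical, key sizes are approximated by UTF-8 byte length, dates are assumed to be naive `datetime` values, and the caller supplies the batch payload size because the excerpt does not say how it is measured.

```python
from datetime import datetime

MIN_DEV_DATE = datetime(1753, 1, 1)   # lower bound inherited from SQL Server 2005
MAX_KEY_BYTES = 900                   # development storage keys must be smaller than this
MAX_BATCH_BYTES = 4 * 1024 * 1024     # batch limit enforced only in the cloud


def portability_warnings(entity, batch_payload_bytes=0):
    """Return warnings for values that behave differently in dev storage and the cloud."""
    warnings = []
    for key_name in ("PartitionKey", "RowKey"):
        # Approximation: measure the key as UTF-8 bytes.
        key_bytes = len(str(entity.get(key_name, "")).encode("utf-8"))
        if key_bytes >= MAX_KEY_BYTES:
            warnings.append(f"{key_name} is {key_bytes} bytes; development storage needs fewer than {MAX_KEY_BYTES}")
    for name, value in entity.items():
        if isinstance(value, datetime) and value < MIN_DEV_DATE:
            warnings.append(f"{name} is before 1753-01-01; development storage would change it")
    if batch_payload_bytes > MAX_BATCH_BYTES:
        warnings.append("batch exceeds 4 MB; the cloud service would reject it")
    return warnings
```

A check like this is most useful in a test suite, since development storage will not flag an oversized batch and the cloud will not alter an early date.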

<Return to section navigation list>

SQL Azure Database, Codename “Dallas” and OData

My Eight OData Sessions Scheduled for TechEd North America 2010 lists six breakout sessions, one Birds of a Feather session, and one Hands-On Lab (HOL) session scheduled as of 4/23/2010 for TechEd North America 2010.

Thomas LaRock provides links to recent SQL Azure Videos in this 4/22/2010 post:

Since I have been railing on SQL Azure lately, I thought I could share with you some of the videos you can watch for free over at MSDN. In case you were not aware, there is a LOT of free content over at MSDN and I am trying to utilize it more and more these days. Right now I am using it as a way to understand more about SQL Azure and I thought I could share three videos that you may also find useful.

- MSDN Video: Microsoft SQL Azure Security Model (Level 200) This session will cover the following topics: • Authentication • Authorization

- MSDN Video: Microsoft SQL Azure RDBMS Support (Level 200) This session will cover the following topics: • Creating, accessing and manipulating tables, views, indexes, roles, procedures, triggers, and functions • Insert, Update, and Delete • Constraints • Transactions • Temp tables • Query Support

- MSDN Webcast: geekSpeak: SQL Azure Under the Hood with Chris Rolon (Level 200) In this episode of geekSpeak, Chris Rolon gives us a look under the hood of Microsoft SQL Azure to see how it was constructed. Chris discusses the issues involving high availability, failure detection, automatic failover, and the distributed data fabric.

You also might check out his Related Posts to other SQL Azure topics.

See the Windows Azure Storage Team’s description of how to work around a bug in the SaveChangesWithRetries and Batch Option with OData in the Azure Blob, Table and Queue Services section.

Beth Massi shows you how to Add Spark to Your OData: Consuming Data Services in Excel 2010 Part 2 in this 4/22/2010 tutorial post to her Beth Massi - Sharing the goodness that is VB blog:

Last post I talked about how you can create your own WCF data services (OData) and use PowerPivot to do some powerful analysis inside Excel 2010 as well as how to use the new sparklines feature. If you missed it:

Add Spark to Your OData: Consuming Data Services in Excel 2010 Part 1

In this post I want to show how you can create your own Excel client to consume and analyze data via an OData service exposed by SharePoint 2010. I’ll show you how to write code to call the service, perform a LINQ query, data bind the data to an Excel worksheet and generate charts. I’ll also show you how you can add these cool sparklines in code.

Consuming SharePoint 2010 Data Services

You can build your own document customizations and add-ins for a variety of Microsoft Office products using Visual Studio. You can provide data updating capabilities, integration with external systems or processes, write your own productivity tools, or extend Office applications with anything else that you can imagine with .NET. For more ideas and tutorials, check out the VSTO Developer Center and the VSTO Team blog.

Let’s create a customization to Excel that analyzes some data from SharePoint 2010. SharePoint 2010 exposes its list data and content types via an OData service that you can consume from any client. You can also use the service to edit data in SharePoint as well (as long as you have rights to do so). If you have SharePoint 2010 installed, you can navigate to the data service for the site that contains the data you want to consume. To access the data service of the root site you would navigate to http://<servername>/_vti_bin/ListData.svc.

For this example, I created a sub-site called Contoso that has a custom list called Incidents for tracking the status of insurance claims. Items in the list just have a Title and a Status field. When I navigate my browser to the Contoso data service http://<servername>/Contoso/_vti_bin/ListData.svc, you can see the custom lists and content types get exposed as well.

However, there is a better way to explore the types that OData services expose using a Visual Studio 2010 extension called the Open Data Protocol Visualizer. You can install this extension directly from Visual Studio 2010. On the Tools menu select Extension Manager, then select the Online Gallery tab, choose the Tools node and from there you can install the visualizer. Once you restart Visual Studio you can add a service reference to the data service, right-click on it and select View in Diagram. Then you can select the types you want to explore:

Beth continues with the fully illustrated details for “Creating a Document Customization for Excel 2010 using Visual Studio 2010.” It’s refreshing to see VB.Net in posts by a Microsoft employee other than Paul Vick.
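The ListData.svc addresses Beth describes can be queried with the standard OData system query options. As a minimal Python sketch (not Beth's VB code), the helper below composes such a URL. The function name `list_query_url`, the server name `myserver`, and the `Open` status value are hypothetical, and it assumes the Incidents list is exposed as an entity set of the same name.

```python
from urllib.parse import quote


def list_query_url(server, list_name, site="", filter_expr=None, top=None):
    """Compose an OData query URL against a SharePoint 2010 ListData.svc endpoint."""
    site = site.strip("/")
    site_part = f"/{site}" if site else ""  # an empty site means the root site
    base = f"http://{server}{site_part}/_vti_bin/ListData.svc/{list_name}"
    options = []
    if filter_expr:
        # Percent-encode spaces but keep quotes and parentheses readable.
        options.append("$filter=" + quote(filter_expr, safe="'()"))
    if top is not None:
        options.append(f"$top={int(top)}")
    return base + ("?" + "&".join(options) if options else "")


print(list_query_url("myserver", "Incidents", site="Contoso",
                     filter_expr="Status eq 'Open'", top=10))
# http://myserver/Contoso/_vti_bin/ListData.svc/Incidents?$filter=Status%20eq%20'Open'&$top=10
```

Pasting a URL like this into a browser returns an Atom feed, which is a quick way to confirm what the service exposes before writing client code.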

The Microsoft Codename “Dallas” Team published a 17-page, downloadable Microsoft Codename "Dallas" Whitepaper on 4/22/2010. From the Summary:

Microsoft Codename "Dallas" is a new cloud service that provides a global marketplace for information including data, web services, and analytics. Dallas makes it easy for potential subscribers to locate a dataset that addresses their needs through rich discovery. When they have selected the dataset, Dallas enables information workers to begin analyzing the data and integrating it into their documents, spreadsheets, and databases. Similarly, developers can write code to consume the datasets on any platform or simply include the automatically created proxy classes. Applications from simple mash-ups to complex data-driven analysis tools are possible with the rich data and services provided. Applications can run on any platform including mobile phones and Web pages. When users begin regularly using data, managers can view usage at any time to predict costs. Dallas also provides a complete billing infrastructure that scales smoothly from occasional queries to heavy traffic.

For subscribers, Dallas becomes even more valuable when there are multiple subscriptions to different datasets: although there may be multiple content providers involved, data access methods, reporting and billing remains consistent.

For content providers, Dallas represents an ideal way to market valuable data and a ready-made solution to e-commerce, billing, and scaling challenges in a multi-tenant environment – providing a global marketplace and integration points into Microsoft’s information worker assets.

The download page’s Brief Description section begins with “HIMSSManagingTheRevolutionEPMWhitePaper,” which indicates that the white paper might have been intended for presentation at the most recent Healthcare Information and Management Systems Society (HIMSS) Annual Conference & Exhibition, held on 2/20 through 2/24/2010 in Orlando, Florida, or at the colocated meeting of the Microsoft Health Users Group. EPM is an abbreviation for [medical] Enterprise Practice Management.

Sajid Qayyum describes Restrictions of Stored Procedures in SQL Azure and offers workarounds in this 4/22/2010 post:

While migrating my stored procedures to SQL Azure, I received errors in some because of lack of support of some of the functionality. I had to find a workaround to successfully export them to SQL Azure.

Working with XML

sp_xml_preparedocument

It reads the XML string within the SP, parses it using MSXML parser and provides a handle to the parsed document, which is stored locally by the SQL Server. This parsed document is a tree representation of the various nodes in the XML document. It is not supported by SQL Azure.

sp_xml_removedocument

It removes the parsed xml document created as a result of sp_xml_preparedocument. It is not supported by SQL Azure.

OpenXML

It provides a rowset view over an xml document. It is not supported by SQL Azure. …

Sajid continues with an example of the sp_xml_removedocument problem and provides a workaround.

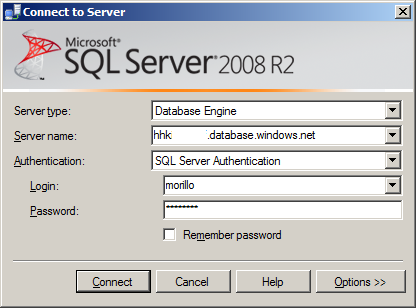

Alberto Morillo reminds us from the Dominican Republic on 4/22/2010 about the infamous Cannot Connect to SQL Azure error:

Applies to: Microsoft SQL Server 2008, Microsoft SQL Server 2008 R2, SQL Azure.

Problem Description.

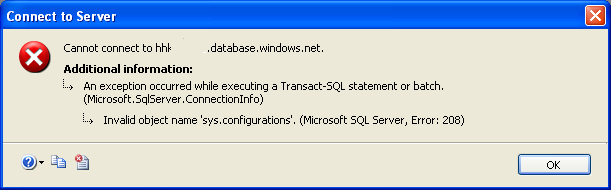

While trying to connect to my SQL Azure server using Microsoft SQL Server Management Studio 2008, I received the following error:

Connect to Server

Cannot connect to hhkxxxxxxx.database.windows.net

Additional information:

An exception occurred while executing a Transact-SQL statement or batch.

(Microsoft.SqlServer.ConnectionInfo)

Invalid object name ‘sys.configurations’. (Microsoft SQL Server, Error: 208)

Cause.

As David Robinson documented here, the Object Browser of Microsoft SQL Server Management Studio 2008 does not work with SQL Azure instances.

Solution.

David posted a workaround for this issue here. However, if you use Microsoft SQL Server Management Studio 2008 R2 to get connected to SQL Azure you won't have any inconvenience. This version of Microsoft SQL Server Management Studio is fully compatible with SQL Azure.

You might find Alberto’s Getting Started with Windows Azure Platform post of interest.

<Return to section navigation list>

AppFabric: Access Control and Service Bus

No articles of significance on this topic today.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

The onCloudComputing blog reported VIDIZMO announces the success of Live Webcast conducted for BRTF symposium Windows Azure Platform on 4/23/2010:

VIDIZMO announces the success of live Webcast conducted for Blue Ribbon Task Force (BRTF) on Economics of Sustaining Digital Information, introduced by Shaun O’Connor, Solution Specialist at Microsoft US Federal Government. The symposium addressed pressing issues in today’s information age, and proposed solutions to preserve ever-growing amount of digital data and information over the Web. VIDIZMO accomplished successful live Webcast of the complete event and the presentations organized during the event, using its Microsoft Silverlight built application, Live Presenter & Recorder, hosted and served via Windows Azure.

The event observed overwhelming response, and received the attention of diverse, worldwide audience as the launch of the event was pronounced, “The response to the symposium has been extraordinary – we filled all the seats in just a few days,” stated Francine Berman, co-chair of the Blue Ribbon Task Force.

“Microsoft is excited VIDIZMO has invested in supporting Silverlight and the next-generation application development platform,” said Brian Goldfarb, director of the developer platform group at Microsoft Corp. “VIDIZMO truly provides its users with the tools to create unique experiences that run across many and varied devices.”

Symposium presented seven streamlined sessions and panel discussions with renowned professionals and economists from the industry including Hal R. Varian - Chief Economist at Google, Chris Lacinak - Founder and President at Audiovisual Preservation Solutions, Derek Law – Board Chair at JISC Advance, and Keynote, Thomas Kalil – Deputy Director for Policy in the Office of Science and Technology Policy at Executive Office of the President of the United States.

Hosted by Microsoft Corp., the event required an end-to-end solution that could provide live video Webcast of the symposium directly to an unanticipated number of audiences, the deadline was short and BRTF required quick to implement, cost effective solution. Vital features requested by BRTF included interactive chat between the presenter and the audience, ability to synchronize content such as PowerPoint slides, images, sounds, and caption to the live video stream, closed captioning to meet 508 (c) compliance, simple playback, and additionally, BRTF wanted to make symposium recordings available for those unable to attend or wanted to revisit the knowledge stream, “We wanted to both webcast the Conversations and provide video after the symposium on the Task Force website to share the information with the widest possible audience,” said Francine Berman.

Bob Familiar’s @HOME WITH WINDOWS AZURE post to the InnovateShowcase blog of 4/23/2010 describes a two-hour Webcast by Brian Hitney, Jim O’Neil and John McClelland:

Brian Hitney, Jim O’Neil and John McClelland are running a WebCast program that will guide you through the process of building and deploying a large scale Azure application.

In less than two hours they will build and deploy a real cloud app that leverages the Azure data center and helps make a difference in the world. Yes, in addition to building an application that will leave you with a rock-solid understanding of the Azure platform, the solution you deploy will contribute back to Stanford’s Folding@home distributed computing project.

There’s no cost to you to participate in this session and each attendee will receive a temporary, self-expiring, full-access account to work with Azure for a period of 2-weeks.

For this briefing you will:

Receive a temporary, self-expiring full-access account to work with Azure for a period of 2-weeks at no cost - accounts will be emailed to all registered attendees 24-48 hours in advance of each event.

Build and deploy a real cloud app that leverages the Azure data center

Sweet!

Ronin Research analyzes Microsoft TownHall’s “real deal engagement” in a Microsoft’s Releases TownHall To Improve Engagement post of 4/23/2010:

When it comes to government and politics, there is what is called engagement and then there is the real deal engagement.

There are agencies that launch twitter streams, maybe have an RSS feed for their blog, they may have a Facebook fan page, and they think or at least say that they are engaging the public. There are politicians that successfully use the same tools to build a groundswell of volunteers and to educate the public about their political platform. They both think that they are engaging the public.

But if you look at these closely what you will see in the comments, in the tweets, on the wall, and in most any other social media channel that they use is the agency posting one thing, some citizens responding, and then nothing more from the agency.

Last time I checked that does not constitute engagement. A one-way conversation is not engagement. Sure, you might see a few responses back from the agency but that seems to be as rare as an honest politician. That is at its best marketing and at its worst appeasement. …

Ronin concludes:

The Bottom Line: Microsoft has provided a good tool that will probably be of the most use for political campaigns and sitting politicians, though government agencies looking to gather general input will also find it useful. It will not immediately replace the formal comment and response mechanisms that are already in place for public comments on agency policies, but it will encourage government agencies to turn to the social media space to gather public comments. What do you think?

Bruce Kyle reports Epson Brings Paper to the Cloud on Windows Azure in this 4/22/2010 post to the US ISV Developer Community blog:

The team at Epson Imaging Technology Center (EITC) has created an application called Marksheets that converts marks on paper forms into user data on Windows Azure Platform. Mark sheets are forms that can now be printed on standard printers and marked similar to the optically scanned standardized tests that we've all used.

Epson software developer Kent Sisco talks with me about how he created this sample application in the video on Channel 9 titled From Paper to the Cloud -- Epson's Cloud App for Printer, Scanner and Windows Azure.

The demo shows an admin configuring a Marksheet form. The form could be part of an application at a kiosk at a golf course. Next, the video shows a golfer printing out a score sheet and then marking the scorecard for each hole of golf. Once the golf day is completed, the user scans the sheet even if the score sheet is crumpled or torn.

The golfer's marks are then sent to a Windows Azure worker role where the image is translated to data and stored in SQL Azure. Users can then access their golf scores from a Windows Azure ASP.NET application.

The demo is a prototype for printing, scanning, and data storage applications for education, medicine, government, and line-of-business. You can apply this technology to create your own data input form or mark sheet. Users can print the form on demand then mark, scan, access their data in the cloud.

If you are interested in working with Epson on making paper forms for inputs to your software, send me a message to the USISVDE Contact Page. It won’t be recorded as a comment to this post. Be sure to include your email address and I’ll put you in touch with the key people at Epson so you can be nominated into their early adopter program to try out Marksheets and help form future product plans.

A unique and interesting application for PaaS clouds.

LinkedIn reported a Dynamics Cloud Services Channel Development Mgr at Microsoft Corporation job opening on 4/21/2010:

- Location: Bellevue, Wa (Greater Seattle Area)

- Type: Full-time

- Experience: Mid-Senior level

- Functions: Sales

- Industries: Computer Software

- Posted: April 21, 2010

- Compensation: salary plus bonus

- Employer Job ID: 717754

Job Description

Dynamics Cloud Services Channel Development Manager

Are you interested in building a world-wide Software plus Services (S+S) partner community and channel development strategy? Are you knowledgeable about business applications on the web, industry transformation to services, and enabling Microsoft field and partners to make the shift to this exciting, emerging business model? Do you want to be part of the team defining our Dynamics S+S go to market strategy across thousands of partners? Do you enjoy leading key workstreams across product teams, readiness, marketing, sales, operations, and support? …As Cloud Services Channel Development Manager, this role will operationalize S+S channel and field partner strategy and execution for Dynamics ERP Partner Hosted and Attached Services and CRM Online as well as establish alignment for programmatic partner initiatives with BPOS and Azure while driving the future field and channel adoption of S+S for Dynamics. [Emphasis added.] …

Brian Boruff reports “CSC’s Legal Solutions Suite software is now offered on the Windows Azure Platform” in his How CSC And Microsoft Azure Put Lawyers In The Cloud post of 4/19/2010:

In the [embedded video] clip, Brian explains how CSC is the 6th largest software company in America, according to Software Magazine, serving almost every industry across the globe. That begins to paint the picture of how important these technology and business developments in cloud computing are to CSC and its customers and partners.

“We’re strong believers in and committed to Azure and cloud, and the innovations it will drive for our customers,” Brian explains.

How strong? CSC has thousands of developers engineering solutions on the Azure platform. CSC is actually moving some of its own applications and services to the Azure platform.

This is where the lawyers come in (but in a good way). CSC’s Legal Solutions Suite software is now offered on the Windows Azure Platform. It’s a collaborative digital workspace for the applications and business process management firms need but can easily get bogged down in if carried out through antiquated means. You can read about how 6,000 firms have adopted the CSC LSS service offering on the linked MSDN blog.

Jim Nakashima explains Viewing GAC’d Assemblies in the Windows Azure OS in this detailed 3/22/2010 tutorial that I missed when posted:

One of the main issues you can run into when deploying is that one of your dependent assemblies is not available in the cloud.

This will result in your cloud service never hitting the running state, instead it will cycle between the initializing, busy and stopping states.

(the other main reason is that a storage account, especially the one included in the default template, is pointing to development storage – see this post about running the app on the devfabric with devstorage turned off to weed that issue out)

To help you understand what assemblies are available in the cloud and which are not, this post goes through the steps of building a cloud service that will output the assemblies in the GAC – a view into the cloud, similar to my certificate viewing app. (Download the Source Code)

Here’s the final product showing the canonical example of how System.Web.Mvc is not a part of the .NET Framework 3.5 install and needs to be XCOPY deployed to Windows Azure as part of the service package:

Since the GAC APIs are all native, I figured the easiest way to do what I want and leverage a bunch of existing functionality is to simply include gacutil as part my cloud service, run it and show the output in a web page. …

Jim continues with source-code examples and additional screen captures.

<Return to section navigation list>

Windows Azure Infrastructure

Lydia Leong’s Getting real on colocation post analyzes why her U.S. colocation forecasts are so much lower than those of other analyst firms:

Of late, I’ve had a lot of people ask me why my near-term forecast for the colocation market in the United States is so much lower (in many cases, half the growth rate) when compared with those produced by competing analyst firms, Wall Street, and so forth.

Without giving too much information (as you’ll recall, Gartner likes its bloggers to preserve client value by not delving too far into details for things like this), the answer to that comes down to:

- Gartner’s integrated forecasting approach

- Direct insight into end-user buying behavior

- Tracking the entire market, not just the traditional “hot” colo markets …

If I’m going to believe in gigantic growth rates in colocation, I have to believe that one or more of the following things is true:

- IT stuff is growing very quickly, driving space and/or power needs

- Substantially more companies are choosing colo over building or leasing

- Prices are escalating rapidly

- Renewals will be at substantially higher prices than the original contracts …

I don’t think, in the general case, that these things are true. (There are places where they can be true, such as with dot-com growth, specific markets where space is tight, and so on.) They’re sufficiently true to drive a colo growth rate that is substantially higher than the general “stuff that goes into data centers” growth rate, but not enough to drive the stratospheric growth rates that other analysts have been talking about.

Note, though, that this is market growth rate. Individual companies may have growth rates far in excess or far below that of the market.

I could be wrong, but pessimism plus the comprehensive approach to forecasting has served me well in the past. I came through the dot-com boom-and-bust with forecasts that turned out to be pretty much on the money, despite the fact that every other analyst firm on the planet was predicting rates of growth enormously higher than mine.

(Also, to my retroactive amusement: Back then, I estimated revenue figures for WorldCom that were a fraction of what they reported, due to my simple inability to make sense of their reported numbers. If you push network traffic, you need carrier equipment, as do the traffic recipients. And traffic goes to desktops and servers, which can be counted, and you can arrive at reasonable estimates of how much bandwidth each uses. And so on. Everything has to add up to a coherent picture, and it simply didn’t. It didn’t help that the folks at WorldCom couldn’t explain the logical discrepancies, either. It just took a lot of years to find out why.) [Emphasis added.]

Rob Preston wrote “Give it some credit: Early signs show a company committed to transforming the way it builds products and serves customers (and generates profits)” as the preface to his Down To Business: Microsoft The Cloud Innovator post of 4/23/2010 to InformationWeek:

Microsoft, always the butt of criticism because of its enormous size and influence, is taking more jabs than usual about its perceived inability to innovate. One former executive, writing in The New York Times, called the company "a clumsy, uncompetitive innovator" because, he argued, entrenched Microsoft product teams regularly undermine up-and-coming ones. My colleague Bob Evans worries that Microsoft is "drifting toward fat and complacent, prone to bold talk but tepid action." The message boards are teeming with unfavorable comparisons to Apple, Google, Amazon, and other competitors that are rattling the high-tech rafters with their exciting products and novel delivery models.

I pretty much agreed with that thinking before heading to Redmond last Thursday for a day of meetings with Microsoft's top executives, but I came away more confident (or less skeptical) about the company's ability to shake things up, especially in the cloud. Sure, all the execs, including CEO Steve Ballmer, were on message, reiterating Ballmer's March manifesto that Microsoft is now "all in" when it comes to cloud computing. But as with Bill Gates' "Internet tidal wave" missive in 1995, the proof is in the execution, and most early signs show a company committed to transforming the way it builds products and serves customers (and generates revenue and profit), rather than one just trying to capitalize on the latest hype.

Take the Windows Azure Services Platform. Released in February, this cloud-based application development environment isn't just something Microsoft plucked out of the vapor. The company has been working on it for more than four years, with the goal of providing developers on-demand compute, storage, and networking to host, scale, and manage apps in Microsoft data centers via the Web. Windows Azure is mainly for .Net apps, but it also supports PHP, Java, Ruby, and Python. Later this year, Active Directory will be able to issue a federated identity to authenticate users of Azure services.

"Interest is off the charts" was the predictable assessment of Bob Muglia, president of Microsoft's Server and Tools business, though he and other company execs note that mainstream adoption of Azure is still years away and that Microsoft is now absorbing prodigious customer feedback to iterate the platform. As for everyday apps such as Exchange, SharePoint, and Dynamics CRM, Microsoft sees half of its revenue from those products coming from cloud-based versions within four years. "We need to be (and are) willing to change our business models to take advantage of the cloud," Ballmer, the self-described "old PC salesman," wrote in his March memo to employees. Compare those words and deeds to the equivocation about software as a service from SAP and Oracle, and then tell me who the laggards are.

“Cloud's ability to make IT more agile is undeniable, says CIO.com's Bernard Golden” in his 4/23/2010 Cloud Computing: It's the Economics Stupid post to CIO.com. “But once that benchmark is met, the cost effectiveness of the internal cloud versus public providers will be a key measure of success”:

The question of costs associated with cloud computing continues to be controversial. You may recognize in this blog's title an homage to the motto of Bill Clinton's 1992 Presidential campaign: "It's the Economy, Stupid." The motto referred to the decision by the Clinton campaign to focus relentlessly on how the U.S. economy was doing in 1992, sidestepping other issues and always, always circling back to the economic outlook for the US. I was reminded of this by some recent discussions on Twitter that discussed the importance of economics in terms of cloud adoption.

This question of cloud economics arises especially in the context of the endless discussions about private vs. public clouds (private usually being thought of as referring to a cloud environment inside a company's own data center). Some people assert that private clouds obviously must be less expensive, because one owns the equipment and is not paying what is, in effect, a rental fee. The obvious analogy is buying a car vs. renting a car. If one uses a car every day, it's clearly less expensive to own rather than pay a daily rental fee to, say, Hertz. Sometimes this argument is made stronger by noting that public cloud providers also are profit-seeking enterprises, so an extra tranche of end-user cost is present, representing the profit margin of the public offering.

The proponents of public cloud computing cost advantages point to the economies of scale large providers realize. At a recent "AWS in the Enterprise" event, Werner Vogels, CTO of Amazon, noted that Amazon buys "10s of racks of servers at a time" and gets big discounts because of this. Also, AWS buys custom-designed equipment that leaves out unneeded, power-using features like USB ports. Moreover, the public cloud providers implement operations automation to an extreme degree and thereby drop the labor cost factor in their clouds.

There is yet a third approach about cloud economics that calls for a blend of private and public (sometimes referred to as hybrid), which marries the putative financial advantages of self-owned private clouds and the resource availability of highly elastic public clouds; this can be summarized as "own the base and rent the peak."

What is interesting about the Twitter discussion around this topic is that people point to surveys about private cloud interest indicating the real motive behind the move to cloud computing is agility, i.e., the ability to obtain computing resources very quickly and in an on-demand fashion. Cost savings of cloud computing were considered secondary to rapid resource availability.
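To make the "own the base and rent the peak" argument concrete, here is a minimal Python sketch that compares three strategies for a single day of demand. The demand profile (100 servers for 20 hours, 1,000 servers for 4 hours) and the prices (5 cents per owned server-hour, 10 cents per rented server-hour) are invented for illustration; they don't come from Golden's article or from any provider's price list.

```python
def total_cost_cents(demand_by_hour, owned_servers, own_cents, rent_cents):
    """Cost of serving hourly demand with a fixed owned base plus rented overflow.

    Owned servers are paid for every hour whether or not they are busy;
    demand above the owned base is rented by the server-hour.
    All prices are hypothetical and expressed in whole cents.
    """
    total = 0
    for demand in demand_by_hour:
        total += owned_servers * own_cents
        total += max(0, demand - owned_servers) * rent_cents
    return total


# Hypothetical day: a steady base of 100 servers with a 4-hour peak of 1,000.
DEMAND = [100] * 20 + [1000] * 4
OWN_CENTS = 5    # assumed cost of one owned server-hour
RENT_CENTS = 10  # assumed cost of one rented server-hour

own_the_peak = total_cost_cents(DEMAND, 1000, OWN_CENTS, RENT_CENTS)
rent_everything = total_cost_cents(DEMAND, 0, OWN_CENTS, RENT_CENTS)
own_base_rent_peak = total_cost_cents(DEMAND, 100, OWN_CENTS, RENT_CENTS)

for label, cents in [
    ("own the peak", own_the_peak),
    ("rent everything", rent_everything),
    ("own the base, rent the peak", own_base_rent_peak),
]:
    print(f"{label}: ${cents / 100:,.2f}")
```

With these made-up numbers, owning enough capacity for the peak costs $1,200 for the day, renting everything costs $600, and the hybrid costs $480. A flatter demand curve or a smaller price gap can change the ranking, which is why the debate Golden describes doesn't have a single answer.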

InformationWeek Analytics: Cloud Computing’s Informed CIO: Government Clouds offers on 4/23/2010 Michael Biddick’s “The Business Case for Government Clouds” report, the first of a four-part series. Here’s the abstract:

Federal IT pros are being inundated with information on the benefits of cloud computing, and 2010 will indeed be a year when some of the barriers to cloud computing in the federal market are lowered. It's time for CIOs in government agencies to begin developing business cases for cloud environments.

This report is the first in a four-part series intended to help government IT pros get started on the path to elastic, on-demand computing. In this report, we assess variables that factor into the decision of whether, and how, to implement cloud computing in federal environments. We explore usage scenarios where the business case for clouds is strongest, as well as some of the barriers.

The business case for cloud computing is related to an agency’s commitment to driving down costs: 64% of federal IT pros responding to an InformationWeek Analytics survey say that lowering the cost of IT operations is a key business driver of cloud computing. Applications that are utilities are prime candidates to be offered from a cloud environment. Federal agencies stand to see considerable savings in getting their e-mail, collaboration, CRM, and other utility apps from a cloud.

One of the major benefits of cloud computing is the ability to procure what you need based on current requirements and scale up or down on demand. Cloud services can be accounted for as operational expenses, reducing the need for capital investment in the data center.

Security is the number one barrier to cloud adoption in the federal market. Look for incremental ways to move services into a cloud that are low in risk. Guard against putting critical data in the cloud until there's been a bit more advancement of the security framework.

Jeffrey Schwartz goes Inside Microsoft's Private Cloud in this 4/22/2010 post to the Redmond Channel Partner Online blog:

I had the opportunity this week to see Microsoft's portable data centers, which the company showcased here in New York.

In honor of Earth Day, I thought it would be fitting to describe what Microsoft is showcasing because it does portend its vision for the next generation data centers that have self-cooling systems and servers that don't require fans.

Microsoft first demonstrated the portable data centers at its Professional Developers Conference back in November in concert with the launch of Windows Azure. It gained further prominence last month when Microsoft CEO Steve Ballmer made his "we're all in the cloud" proclamation at the University of Washington with these huge units in tow. The one I saw in New York was 20 feet long by seven feet wide, but Microsoft also has one that stretches 40 feet.

These portable data centers, which are designed to be housed outdoors, are packed with loads of racks, blade servers, load balancers, controllers, switches and storage all riding on top of Windows Azure and Microsoft's latest systems management and virtualization technology. …

It is also a reasonable bet that while they are not on Microsoft's official product roadmap, customers will ultimately be able to buy their own Azure powered containers that, in some way, emulate this model, most likely from large systems vendors such as Cisco, Dell, EMC, Hewlett-Packard, IBM and custom system builders.

Speaking at the Microsoft Management Summit in Las Vegas Tuesday, Bob Muglia, president of the company's systems and tools business was the latest to suggest as much. "The work that we're doing to build our massive-scale datacenters we'll apply to what you're going to be running in your datacenter in the future because Microsoft and the industry will deliver that together," Muglia said, according to a transcript of his speech.

Microsoft will buy 100,000 computers this year for its own data centers, Muglia said. They will be housed in these containers weighing roughly 600,000 pounds, equipped on average with 2,000 servers and up to a petabyte of data. …

David Makogon offers a screen capture of Bill Lodin’s Windows Azure: How Do I Store Data in Tables? presentation with a Windows Azure CDN caption:

Very cool! msdev.com (@realmsdev) is using #Azure CDN edge-caching for video delivery.

<Return to section navigation list>

Cloud Security and Governance

David Linthicum claims “A recent survey shows that business folks are doing an end run around corporate IT by adopting cloud services” in his Sneaking around IT to get to the cloud 4/23/2010 post to InfoWorld’s Cloud Computing blog:

A Ponemon Institute survey recently piqued my interest. In the 2010 Access Governance Trends Survey, 87 percent of respondents said too many employees were able to access information that should have been out of reach. And guess what? Cloud computing was a factor -- 73 percent of respondents said that cloud-based applications were enabling business users to skirt organizational controls.

The core issue is IT's loss of control over its assets, including data. Let's face it -- departments are sick of waiting for development and deployment of core business applications or infrastructure services, and they're going directly to a cloud computing provider to get what they need. Think of it as a kind of technological infidelity.

Going around IT and straight to the cloud has become common practice in the last few years. Salesforce.com built its business selling directly to the sales staff rather than to IT; eventually, IT was forced to accept SaaS (software as a service) after the fact. I've watched those battles firsthand.

Today, things are even worse. Now you can get storage as a service, database as a service, and even complete application servers and app dev platforms that are delivered on-demand. With such endless resources available, corporate fiefdoms are creating so-called rogue clouds -- their own array of cloud computing services, including data repositories, that they alone control. IT may not have a clue about what's going on.

The trouble with the rogue approach is that there's no way to ensure that data is handled in accordance with corporate policies. Worse, that data may come with compliance issues, including personal medical or financial information where the law dictates how the data is handled and where it can reside. …

Gorka Sadowski asks “How transitive is Trust?” as he continues his Logs for Better Clouds series with Logs for Better Clouds - Part 5: Daisy Chaining Clouds of 4/23/2010:

So we talked about some of the challenges - and hence opportunities - faced by Cloud Providers. Last time we talked about Trust, and how important Trust is for business relationships.

Trust is already difficult in pretty straightforward environments, but in the context of Clouds, it can become very fuzzy... Read on.

Clouds: Providers, Clients, Partners and Competitors... all at the same time!

We could imagine a world where there are so many cloud providers, so many interconnections between them and so many trust relationships that end-client duties are performed by different cloud providers based on the time of the day, the type and complexity of the task or any other criteria.

This means that a Cloud Provider can be both provider and client.

We could even envision that some Cloud Providers can be both partners as well as competitors. In fact, it is not uncommon for business entities to collaborate on some projects and compete on others. So are these Cloud Providers friendly partners or are they enemies?

In the following diagram, Client A1 uses B1 and B2 as a SaaS Software as a Service provider. B1 uses C1 and C2 as PaaS Platform as a Service provider. And C1 uses D1 and D2 as IaaS Infrastructure as a Service provider.

Client A1 and Client A2 are competitors. Cloud Providers B1 and C2 also are competitors, although C2 provides service to B1.

How would it be possible for all of these entities to collaborate despite requirements for secrecy in a climate of suspicion?

Figure 4 - The layered structure of subcontracting within Cloud Providers. …
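The relationships Gorka describes can be written down as a small directed graph, which makes the trust question easier to reason about. The Python sketch below is only an illustration of that idea, not anything from his series: the dictionary layout and the two helper functions are my own assumptions, while the names A1 through D2 and the competitor pairs come from the quoted text.

```python
from collections import deque

# Direct "uses" relationships as stated in the quoted description.
USES = {
    "A1": ["B1", "B2"],  # SaaS providers
    "B1": ["C1", "C2"],  # PaaS providers
    "C1": ["D1", "D2"],  # IaaS providers
}

# Competitor pairs as stated in the quoted description (order doesn't matter).
COMPETITORS = {frozenset(("A1", "A2")), frozenset(("B1", "C2"))}


def transitive_providers(client, uses):
    """Return every provider the client relies on, directly or indirectly."""
    seen = set()
    queue = deque(uses.get(client, []))
    while queue:
        provider = queue.popleft()
        if provider in seen:
            continue
        seen.add(provider)
        queue.extend(uses.get(provider, []))
    return seen


def competitor_dependencies(uses, competitors):
    """Return (client, provider) pairs where a client directly uses a competitor."""
    return sorted(
        (client, provider)
        for client, providers in uses.items()
        for provider in providers
        if frozenset((client, provider)) in competitors
    )


print(sorted(transitive_providers("A1", USES)))
print(competitor_dependencies(USES, COMPETITORS))
```

Client A1 ends up depending on all six providers even though it has a contract with only two of them, and the B1-to-C2 link is the one place where a provider directly buys service from a competitor. Those are the relationships where log evidence would have to carry the trust that a direct contract can't.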

John Kindervag’s Dialoging about Tokenization and Transaction Encryption post of 22 Apr 2010 to his Forrester Research Security and Risk blog discusses PCI security requirements:

Last week I published two research reports on the hottest topic in PCI: Tokenization and Transaction Encryption. Part 1 was an introduction into the topic and Part 2 provided some action items for companies to consider during their evolution of these technologies. Respected security blogger Martin McKeay commented on Part 1. Serendipitously, Martin was also in Dallas (where I live) last week and we got an opportunity to chat in person about the report and other security topics.

Martin’s post highlighted several issues that deserve some response. He felt that I “glossed over several important points people who are considering either technology need to be aware of.” Let[‘s] review those items:

Comment: “This is one form of tokenization, but it completely ignores another form of tokenization that’s been on the rise for several years; internal tokenization by the merchant with a (hopefully) highly secure database that acts as a central repository for the merchant’s cardholder data, while the remainder of the card flow stays the same as it is now.”

Response: Tokenization and Trans-E are huge topics that could command an entire book. The purpose of the reports was to provide an introduction into a very complicated issue. Our immediate goal was to provide a definition of the terms and issues and not to answer every potential question that our clients might have. Since Forrester clients are primarily interested in outsourcing their credit card processing in a manner which reduces their PCI scope, we, therefore, focused upon that type of tokenization. Clearly there will be multiple use cases for this type of technology and we will address the expansion of this research as needed.

Comment: “Another criticism I have of the paper is that while it does a good job of explaining that true end to end encryption is from the POS to the acquiring bank, it doesn’t do as good a job in explaining the complexities and pitfalls of point-to-point encryption (P2P).”

Response: The debate on just what to call the type of encryption used in these solutions is both volatile and complex. For the purposes of this research we wanted to extract ourselves from the semantics of the debate and focus on the core concepts. This is why we used the term “Transaction Encryption.” All of the potential issues involving how encryption will be done – end-to-end or point-to-point – is a lively topic that was not particularly useful for this particular research. The report does spend a fair bit of time introducing several of the transaction issues including the specific nomenclatures and the current status of various encryption standards bodies. The important thing we wanted to emphasize is that tokenization and transaction encryption are interrelated technologies that together can form a solution that increases security and eases the compliance burden.

Thanks Martin for engaging in this dialog about this important topic. This type of discussion is important to all of the participants in payment card security and I hope others will jump into the debate.

<Return to section navigation list>

Cloud Computing Events

Bill McNee is the author of Saugatuck Research’s Fresh From Paris: Cloud Strategic Planning Positions (SPPs) – And New Channel Research Research Alert of 4/21/2010 (site registration required):

What Is Happening? Earlier this week, Saugatuck Technology was scheduled to speak at the EuroCloud France conference in Paris (April 20, 2010). Unfortunately, clouds of volcanic ash kept Saugatuck CEO Bill McNee from flying across the Atlantic to deliver his Cloud Computing keynote. However, as the saying goes – the show must go on – and Louis Naugès of Paris-based Revevol quickly stepped in and volunteered to deliver the presentation (in this case, in his native French).

Luckily, Saugatuck and Naugès share a similar worldview as it concerns many of the key issues associated with the growth of, and shift to, the Cloud – by McNee’s reckoning, 80 percent or more. In fact, we value Louis’ ongoing and well respected blog (http://nauges.typepad.com/my_weblog/), which we regularly read with keen interest.

With the understanding that Louis would represent Saugatuck, and NOT speak as the President of Revevol, nor as the un-abashed advocate for Google that he normally is (as Revevol is a significant Google VAR) – we quickly agreed to this proposition, with the further understanding (in good humor) that Pierre-José Billotte, President of EuroCloud France would be authorized on our behalf to throw a shoe at Louis should he begin bashing the competition too much!

Why Is It Happening? Since I haven’t heard of any shoe throwing (and have only received several positive emails), we assume that the nearly 150 attendees (mostly IT vendors, ISVs, telcos and consultants) gained good value from our new research. Thank you, Louis!

Our new main tent presentation, a variant of which will be delivered in a few weeks at the upcoming SIIA / OpSource All About the Cloud conference (May 10-12, San Francisco), highlighted some of the key research findings and Strategic Planning Positions (SPPs) from our recently published Research Report SSR-706, entitled Lead, Follow, and Get Out of the Way: A Working Model for Cloud IT through 2014 (25Feb2010) – see Note 1.

In addition, the presentation also introduced a number of new industry frameworks and models – as well as additional SPPs – based on several concurrent research threads that Saugatuck is deeply in the middle of, including finishing up our fifth annual SaaS / Cloud Business Solutions research program (and recently completed global survey) that will culminate in a number of new premium research deliverables that we will publish in the coming months.

Bill continues with “some of the additional SPPs [Strategic Planning Positions] that the presentation highlighted.”

Revevol is an authorized reseller of Google Apps. A reasonably good Google translation of Louis Naugès’ latest blog post, which I believe is longer than any of mine, is here. Louis’ version of the EuroCloud France presentation with a couple of graphs of Saugatuck’s key findings is here. You can download a PDF with all slides from the EuroCloud France presentation here. The presentation is an extraordinarily comprehensive and perceptive business and technical analysis of cloud computing’s near future.

David Makogon announced he’ll be presenting on May 12 – Launching and Monitoring your First Azure App to the Azure User Group:

Join me online May 12 at 4pm Eastern, when I present at the Azure User Group, hosted in LiveMeeting. During this talk, we’ll go over the basic building blocks of an Azure application. We’ll then create our first Azure app and see how to deploy it into the cloud. We’ll also see how to monitor an app once it’s deployed.

Please register here if you’d like to attend.

For more information about the Azure User Group, visit the LinkedIn group page or follow AzureUG on twitter.

PlugAndPlayTechCenter.com will present its CloudPlay conference at 440 N. Wolfe Rd., Sunnyvale, CA 94085 on 4/29/2010:

Though cloud computing is not new, the consumer driven need for high volume, scalable storage and platform solutions is quickly becoming a market phenomenon and everyone is scrambling to be on top. Who will win the race?

Calling all entrepreneurs, developers, and investors! Please join us as cloud industry leaders gather to discuss leading trends in cloud computing, including a comparison of Cloud Platform providers, VC interest in the industry, and workshops/panels examining costs, management, security, and development. The conference will include a demo session featuring the hottest startups leveraging various cloud computing platforms.

Microsoft BizSpark is the Platinum Sponsor and Matt Thompson, Microsoft’s General Manager, Developer & Platform Evangelism, will present the opening keynote at 9:30 to 10:00 AM PDT:

Anatomy of Clouds: Now and in the Future

Cloud computing is being positioned as the solution for most of the hard web/internet problems today (scale, large data set consistency, semantic web, identity/privacy, monetizing the social graph). Cloud computing is many things, and there are many flavors of Clouds - but at their core, Clouds share a number of characteristics (and challenges). This talk will detail a number of these characteristics; the business models these engender; some of the challenges they share; and where the technology is headed. In addition this talk will delve into how development for the Cloud is opening up new and interesting models including emerging aspects like "data as a service."

Microsoft’s David Chou will follow Matt with:

Tech Track: Building on Windows Azure

Microsoft's Windows Azure platform is a cloud-based application platform hosted in Microsoft data centers, which provides a comprehensive set of services that can be used individually or together. Windows Azure can be used to build new applications to run from the cloud or enhance existing applications with cloud-based capabilities. In this session, we will provide a technical overview of the Windows Azure platform, and show how applications can be developed and deployed into the cloud.

David’s CloudPlay – Cloud Computing Event in Mountain View on April 29, 2010 post of 4/23/2010 includes a link to a discount code for a 20% savings in the registration fee:

Special 20% Savings (Discount code: svcloud)

Join us for CloudPlay! We will feature a keynote from Jeff Nick, CTO of EMC, Steve Lucas, SVP of Business User Sales, SAP, Susie Wee, CTO of Cloud Services at HP, and panels/workshops tackling the hottest trends in Cloud Computing today from leaders both big and small.

Cloud industry leaders will gather to discuss leading trends in cloud computing, including a comparison of Cloud Platform providers, VC Cloud interest, and workshops examining costs, management, security, and development. The conference will include a demo session featuring the hottest companies leveraging various cloud computing platforms; presenting startups will be enterprise-oriented.

The Windows Azure Bootcamp Team announced a Bootcamp in Nashville, TN on 6/23 to 6/24/2010:

- Nashville, TN

- Dates : June 23-24, 2010, 8:00am-5:00pm

Register Here

Address

Microsoft Office

2555 Meridian Blvd, Suite 300

Franklin, Tennessee 37067

Trainers

Special Notes

- Snacks will be provided. Attendees must provide their own lunch.

- See our What to Bring page to see what you need to have on your computer when you bring it.

The page footer contains the following comment that’s unusual for a Microsoft-sponsored site: “This is the footer. No one looks down here. Hello from Columbus Design.”

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Alex Williams posits Yahoo!'s Smart Investment: The Hadoop Community in this 4/23/2010 post to the ReadWriteCloud blog:

More than 250 people attended a Hadoop developer event at Yahoo! this week, demonstrating again the level of interest the company has in open-source big data initiatives.

Yahoo! says it is the world's biggest Hadoop supporter. We say that's undoubtedly correct. Yahoo! supports community developer events throughout the world. In February it supported the first Hadoop! event in India. In June, it will host the Hadoop Summit.

Yahoo! is not always recognized for its cloud computing efforts but its deep commitment to Hadoop shows how the company views the ways that big data can be used to solve major technology issues such as spam.

Hadoop, according to Wikipedia, "is a Java software framework that supports data-intensive distributed applications under a free license. It enables applications to work with thousands of nodes and petabytes of data."

The developer conference featured discussions from the Hadoop community, including a presentation about using it to fight spam and a discussion led by a lead engineer from Facebook.

Vishwanath Ramarao is director of anti-spam engineering for Yahoo! Mail. According to the Yahoo! developer blog, Vish described the intricate cat-and-mouse games played with spammers, and how Yahoo! uses Hadoop to abstract away the complexity of large scale data analysis and provide deep insight into spammer campaigns. …

Alex continues with links to additional Hadoop resources.

Dana Gardner claims “You want the benefits of the Internet, you give up some data about yourself along the way” in the preface to his With Jigsaw buy, Salesforce.com Shows That Lead Generation Is the New Advertising post of 4/23/2010 to his Briefings Direct blog:

Salesforce.com's buy of Jigsaw is the latest, most indicative market mover in the transition to a lead generation economy.

Twitter's forays into a sponsored tweets business model announced last week at Chirp is another. Yahoo selling its soul to Microsoft for Bing is another. And just about everything that Google does is but another. And everything that Facebook does? Ditto. Apple loves the idea, one download at a time. Amazon? One purchase at a time.

These players are poised to grease the skids leading to a lead generation economy, one that makes conventional and current online advertising no more relevant than rabbit ear antennas for the top of your black and white television.

Only a year into the data-driven decade, and the ways in which user-, buyer- and social-interactions are rapidly being brought to bear on B2C and B2B commerce are piling up -- as never before. The model makes especially good sense for B2B, as these decisions are more often data- and information-driven, not emotionally charged as the advertising-juiced B2C domain so often is. And more and more B2B purchases start and end with an online search. …

Lori MacVittie writes “There have been many significant events over the past decade, but looking back these are still having a significant impact on the industry” as the preface to her Hindsight is Always Twenty-Twenty post of 4/23/2010 about network load balancing, Interop and other virtualization-related topics:

Next week is Interop. Again. This year it’s significant in that it’s my tenth anniversary attending Interop. It’s also the end of a decade’s worth of technological change in the application delivery industry, the repercussions and impact of which in some cases are just beginning to be felt. We called it load balancing back in the day, but it’s grown considerably since then and now encompasses a wide variety of application-focused concerns: security, optimization, acceleration, and instrumentation to name a few. And its importance to cloud computing and dynamic infrastructure is only now beginning to be understood, which means the next ten years ought to be one heck of a blast.

Over these past ten years there’s been a lot of changes and movement and events that have caused quite the stir. But reflecting on those ten years and all those events and changes brings to the fore a very small subset of events that, in hindsight, have shaped application delivery and set the stage for the next ten years. I’m going to list these events in order of appearance, and to do that we’re going to have to go all the way back to the turn of the century (doesn’t that sound awful?).

Lori continues with “THE BIRTH of INFRASTRUCTURE 2.0”, “THE DEATH of NAUTICUS NETWORKS” and “THE NETWORK AS A SERVICE” topics.

Werner Vogels wrote I am looking for new application and platform services on 4/23/2010:

The ecosystem of new application and platform services in the cloud is the future of application development. It will drive rapid innovation and we'll see a wealth of mobile, web and desktop applications arrive that we couldn't dream about a few years ago, and these building blocks are the enablers of that. These services will be delivered not only by new startups but also by enterprises looking to capitalize on their IP.

As examples of such services I always use Twilio (voice & SMS) and SimpleGeo (location), but it is time to start building out my knowledge of all the different services that are in the ecosystem. If you run such a service or know of one that I should be checking out, please leave the info in the comments below. I'll be using that information in presentations and some future writings on this topic.

Werner’s post proves that it’s difficult to demonstrate the features of IaaS clouds, even one as popular as Amazon EC2.

Joab Jackson asserts Microsoft, Oracle Differ on Cloud Visions in this 4/22/2010 article for PCWorld magazine:

At the Cloud Computing Expo held this week in New York, executives from Microsoft and Oracle shared how they see cloud computing working its way into the enterprise.

The companies offered disparate visions, however, with Microsoft emphasizing its public cloud offerings and Oracle touting tools for building out internal clouds.

Both software giants agreed, however, that enterprise use of clouds is best done on an as-needed basis, in what their executives called "a hybrid model."

"I'd argue that if you'd run today's applications in the cloud with exactly the same utilization as you would in your own data center ... [it] will probably cost you more," said Hal Stern, Oracle president and former Sun Microsystems chief technology officer for services, during one talk.

The advantage of the cloud, Stern argued, is elasticity. It is those "impulse functions of demand, where you want to go from 100 CPUs to 1,000 CPUs, but give them back," he said.

"If you look at every one of the cases that has been held up as a great case of public cloud, they ran for a period of time and then put the resources back," Stern said. "That's what made them cost effective." …

Joab continues with an analysis of Oracle and Microsoft cloud offerings.

… One choice Khalidi did not discuss much was that of running a private cloud, or running services in a cloud model for internal consumption, from within an organization's firewalls.

During the question-and-answer session, one audience member asked if Microsoft would release the Azure tools so they could be used for private clouds. Khalidi said that Microsoft would release these tools, but that "they are not there yet," in terms of being packaged for private cloud use.

Later, in a chat with IDG News Service, Khalidi said that Microsoft plans not only to offer most of its software as cloud offerings, but also offer the technologies it uses for enabling cloud services as stand-alone products. The two will go hand-in-hand, he said.

<Return to section navigation list>