Windows Azure and Cloud Computing Posts for 2/25/2010+

Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database (SADB)

- AppFabric: Access Control, Service Bus and Workflow

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated for the January 4, 2010 commercial release in February 2010.

Azure Blob, Table and Queue Services

See articles by Jeff Barr, Werner Vogels and James Hamilton about new “strong consistency” features for Amazon SimpleDB in the Other Cloud Computing Platforms and Services section.

<Return to section navigation list>

SQL Azure Database (SADB, formerly SDS and SSDS)

No significant articles so far today.

<Return to section navigation list>

AppFabric: Access Control, Service Bus and Workflow

See Vittorio Bertocci’s Identity @ MIX10 post of 2/24/2010 in the Cloud Computing Events section for details on two WIF sessions.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

Eric Nelson reports a deployment change in his Windows Azure Portal surfaces the relationship between billing and deployed post of 2/25/2010:

I previously posted on when you get charged with Windows Azure as it is contrary to many folks’ expectations. Today (25th Feb 2010) I was pleased to see a new warning on the portal.

Note that you only need to delete the deployment not the service. And to delete you must first stop it.

Well done team.

Milly Shaw describes on 2/25/2010 Southampton University’s use of Windows Azure in its Cloud computing in space program:

Dr Steven Johnston from Southampton University explains how his team has used cloud computing to predict satellite collisions in space.

10,000 space objects are currently being tracked. These objects are satellites, typically space debris. Johnston’s team are using Azure to project the orbits to predict collisions.

Cloud computing is elastic - it can be scaled up or down, which can be useful for processor-intensive work such as Southampton’s Space Situational Awareness System Tech[nology].

There is a scale of cloud computing, from Amazon Web Services - where you are responsible for managing a virtual machine - to Google Docs, in which you have no control at all over the machines you are working with. In the middle lies Windows Azure. You can’t configure Azure yourself, but it does have a more managed infrastructure.

While Southampton’s space-tracking system relies on Azure, Steven freely admits that both cloud computing in general and Azure in particular have limitations: there’s no standard API for moving a VM, which essentially locks in a user to a particular system; there are bandwidth problems with moving data; and the inherent access limitations make it more difficult to create clusters.

Microsoft Research supports the Microsoft Institute for High Performance Computing at Southampton University; here’s a link to its Cloud Computing for Planetary Defense presentation, which includes “Space Situational Awareness” and “Azure Demo” topics.

Kristin Bockius reports U.S. Public Sector CIO Summit 2010 Day 2: Windows Azure Platform Apps Meeting Real Government Needs in this 2/25/2010 post to the new Bright Side of Government blog:

We recently launched the Microsoft Windows Azure Development Contest for state and local government partners to provide an opportunity for all of our partners to showcase their development skills by creating a Windows Azure-based application that meets real needs of government customers. Voting closed last week, the judges have made their final decisions, and the results of the contest will be announced later today here at the CIO Summit. In the meantime, we would like to highlight a handful of the innovative applications that partners have already developed for government use:

- COMAND – Developed by Trafrec Corp, this Windows Mobile application allows law enforcement officers to create or capture accident, citation, field interview, tow and DUI reports from the field. The data is synced to servers in the cloud in real time, and the sharing of captured data (which can also be accessed from the cloud back at the station) eliminates duplication and reduces reporting errors. Trafrec provides the software licensing at no cost to law enforcement agencies including police departments, DMVs, and DOTs. To view a demonstration of the application, check out this online presentation.

- iLink GIS Framework – State and local government agencies are always looking for more efficient ways to deliver current contextual information online to their citizens, so iLink Systems created its “GIS Mapping Applications Framework” to meet this need. This reusable framework allows easy creation and deployment of intuitive, map-based solutions that can visualize social services, health services, public safety, schools, arts, and recreation. To try out this application, take a look at the demo script here.

- LiveBallot – The Democracy Live system was deployed in 19 elections last year to help state and local governments reduce the costs associated with paper sample ballots, voter pamphlets, and other paper-based voter information. Democracy Live’s LiveBallot is capable of delivering voter information to over 200 million eligible U.S. voters and provides on-demand display of a voter’s specific balloting information. For more information, visit www.liveballot.com.

- FullArmor AppPortal – AppPortal is a software + services solution that enables government organizations to deploy and manage App-V virtual applications through a cloud-based service or through internal infrastructure. Built on the Windows Azure platform, AppPortal can be implemented without any capital expenditures in datacenters, servers, or traditional system management software, and the deployment can be centrally managed, self-service enabled or a combination of both. For more information, visit FullArmor’s AppPortal Web site.

- Miami 311 – Miami 311 is a public-facing, open government transparency solution that allows citizens to monitor and analyze non-emergency requests, like a pothole repair or sidewalk damage, which are mapped out visually based on calls to 3-1-1. Miami 311 also serves as a dashboard for City Commissioners to see and monitor citizen requests in their district. For more information, see yesterday’s Bright side blog post on Miami’s Windows Azure application. [See details in interview below.]

As the Windows Azure Platform evolves and new tools are developed, our state and local government customers will benefit from even greater flexibility, functionality, and reliability in the cloud, and citizens will benefit from greater transparency and more open government at the state and local level. We will post the results of Microsoft Windows Azure Platform Development Contest later today, so be sure to follow additional coverage here on Bright Side and look for our tweets @Microsoft_Gov with the hashtag #USPSCIO.

The U.S. Public Sector CIO Summit 2010 site is here.

Eric Nelson’s Q&A: How do I find out the status of the Windows Azure Platform services? of 2/25/2010 describes the Windows Azure status dashboard and service history:

The Windows Azure Platform includes a status dashboard as well as RSS feeds for individual services and regions.

You get to see the current status:

As well as the history of an individual service:

As I’m based in the UK, I subscribe to the North Europe feeds for each of the services:
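Feeds like these can be read by any RSS client or by a short script. The following is a minimal sketch only: it assumes the status feeds are ordinary RSS 2.0, and the sample XML, item wording and the `parse_status_items` helper are hypothetical illustrations, not taken from the dashboard itself.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample of an RSS 2.0 status feed. The real feed URLs and
# item wording are not given in the post, so this content is made up.
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example service status (North Europe)</title>
    <item>
      <title>Service degradation</title>
      <pubDate>Thu, 25 Feb 2010 10:00:00 GMT</pubDate>
      <description>Example incident text.</description>
    </item>
    <item>
      <title>Service is running normally</title>
      <pubDate>Thu, 25 Feb 2010 12:00:00 GMT</pubDate>
      <description>Example resolution text.</description>
    </item>
  </channel>
</rss>"""


def parse_status_items(feed_xml):
    """Return (title, pubDate, description) tuples for each <item> in an RSS 2.0 feed."""
    root = ET.fromstring(feed_xml)
    items = []
    # In RSS 2.0 the items sit under <rss><channel>.
    for item in root.findall("./channel/item"):
        items.append((
            item.findtext("title", default=""),
            item.findtext("pubDate", default=""),
            item.findtext("description", default=""),
        ))
    return items


if __name__ == "__main__":
    for title, published, description in parse_status_items(SAMPLE_FEED):
        print(f"{published}: {title} - {description}")
```

To poll a live feed, the XML text would be fetched first (for example with `urllib.request.urlopen`) and passed to the same function.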

The Windows Azure Team presented Real World Windows Azure: Interview with James Osteen, Assistant Director of Information Technology for the City of Miami on 2/24/2010:

As part of the Real World Windows Azure series, we talked to James Osteen, Assistant Director of Information Technology for the City of Miami, about using the Windows Azure platform to deliver the city's 311 citizen response application and the benefits that Windows Azure provides. Here's what he had to say:

MSDN: Tell us about the City of Miami and the role that IT plays in its management.

Osteen: The City of Miami is in southeastern Florida and serves more than 425,000 citizens. With a team of 80 employees, the IT department plays a critical role because we continuously strive to improve existing services and offer new services to the residents of Miami.

MSDN: What was the biggest challenge the City of Miami faced prior to implementing Windows Azure?

Osteen: The 311 application that we wanted to develop, which would give residents the ability to submit and track nonemergency incidents, used mapping technology that required significant computing resources. Unfortunately, we have limited budget and resources, including a five-year procurement cycle for server hardware. We needed a cost-effective, scalable solution that would maximize what resources we do have.

MSDN: Can you describe the solution you built with Windows Azure to help maximize your limited resources?

Osteen: This month we're launching our 311 application. We're using MapDotNet UX, an off-the-shelf product built for Windows Azure that integrates with Windows Azure storage services and Bing maps for enterprise, and also has an interface based on Microsoft Silverlight 3.0 browser plug-in. It gives us the powerful mapping technology that we need to enable residents to track nonemergency service requests and view other open requests in a particular area. We're using Blob Storage to store spatial data, which we previously had in shapefile and KML formats.

Figure 1: Miami 311 Application. Built on Windows Azure, the Miami 311 application enables citizens to report and track nonemergency incidents. …

MSDN: What makes your solution unique?

Osteen: Windows Azure is going to radically reshape how we develop new services for our citizens. For instance, the development fabric enables our developers to run and test an application on their local computer before deploying it, so we do not have to use multiple testing, debugging, and production environments before deploying new applications or updates. This means that we're going to significantly increase how fast we can go to market with new features or applications. Windows Azure is the future for the City of Miami.

MSDN: What are some of the key benefits the City of Miami has seen since implementing Windows Azure?

Osteen: In addition to the fast development, we're able to reduce costs. With Windows Azure, we can scale horizontally and vertically very quickly as demand requires, without worrying about trying to predict our server needs five years in advance. Also, by relying on Microsoft-hosted data centers, we've improved our disaster-recovery strategy, which is important in our hurricane-prone region.

Read the full story here.

The Silverlight Team chimes in with a Visit the McNuggets® Village to see how McDonald’s® uses Silverlight in their latest campaign post on 2/24/2010 which includes a link to the Silverlight/Azure app:

McDonald’s has used Silverlight and a host of other exciting Microsoft technologies to build a Vancouver 2010 Winter Olympics-themed campaign for McNuggets called “Dip it. Dunk it. Let the games begin”. The site, which runs only during the Winter Olympic games, features a mini game where you can give Gold, Silver or Bronze awards to either the all-new, Sweet Chili Sauce® (only available during the games), or to one of your favorite classic dipping sauces. Explore venues within the village while gaining sports information.

Make a stop at the Athletes Dorm to watch video profiles of Olympic athletes. At the Future Gold Training Center, browse through photos, videos and read blogs from “McDonald’s Champion Kids®”.

The campaign was created by Tribal DDB Worldwide, a leading creative agency based in the U.S. Midwest. Tribal DDB chose to develop the McNuggets Village using Silverlight 3 for two primary reasons: cost and speed to market.

The Silverlight application calls out to WCF services running on Windows Azure. A Silverlight-based IE8 web slice tracks game scores using Windows Azure for hosting. Amazingly, the entire Windows Azure infrastructure was built in only 2 days! Data is stored in SQL Azure, and the WCF services/content (images, video, etc.) are running in Windows Azure. [Emphasis added.]

John Moore reports a new partnership between Microsoft and electronic health record [EHR] vendor Eclipsys in his The Migration to Modular HIT Apps post of 2/24/2010:

Chilmark has been seeing a progressive movement by a number of HIT providers, especially among HIE vendors (Axolotl, Covisint and Medicity) to open up their HIE platform (publish APIs) to potentially support a multitude of modular apps to meet various provider needs. Basically, these vendors are moving to a Platform as a Service (PaaS) model, each taking a slightly different spin on a PaaS that will likely require Chilmark to produce a separate report to explore further. What is important though for this industry is that this is a fairly nascent trend that will likely accelerate in the future.

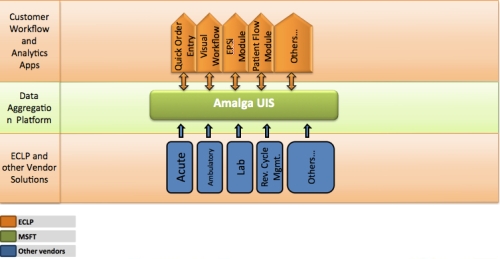

And today, we can add one more vendor to the PaaS mix, Microsoft, who announced a partnership with EHR vendor, Eclipsys who has built several modular apps (Data Connectivity, Quick Order Entry and Visual Workflow) on top of Microsoft’s Amalga UIS. Eclipsys will be demonstrating these apps next week at HIMSS.

What’s in it for all Stakeholders:

Microsoft is taking Amalga UIS from simply being a data aggregator/reporting engine to becoming a platform similar to HealthVault thereby making the data that it aggregates actionable by the apps that ride on top of it. This creates a higher value proposition for Amalga UIS in future deals with large hospitals and IDNs.

Eclipsys & other HIT vendors now have an opportunity to enter accounts that may have been dominated by large, monolithic solutions from such companies as Cerner and Epic. It may also provide smaller HIT vendors an ability to rise above the noise and gain some traction in the market.

Hospital CIOs & end users will no longer be strictly tied to only those apps provided by their core HIT vendor(s), but may now be able to “flex-in” certain “best-of-breed” apps as needed to meet specific internal needs/requirements. In our briefing call with Microsoft yesterday, Microsoft stated that the Amalga UIS APIs will also be made available to customers allowing them to build their own apps, further increasing the utility of Amalga UIS.

There’s no direct tie to Windows Azure in John’s post, but it’s a good bet that an Amalga PaaS offering would offer the option of hosting in Microsoft’s data centers.

Gaurav Mantri announces the availability of Cerebrata’s Azure Diagnostics Manager - A WPF based rich client to manage Windows Azure Diagnostics as of 2/21/2010:

I am pleased to announce the availability of the Azure Diagnostics Manager utility in private beta. At PDC, the Windows Azure team announced the new Diagnostics API and Management; however, we felt that there was a gap (and thus a great opportunity) regarding the tooling to surface this data. Thus we built this utility so that developers can get access to the diagnostics data in a user-friendly way.

What is Azure Diagnostics Manager:

Azure Diagnostics Manager (ADM) is a WPF based rich client application using which you can manage diagnostics data logged by your applications using Diagnostics API made available by Windows Azure. Here are the features available in ADM in its current release:

- Event Viewer: View/download events logged by your applications in a “Windows Events Viewer” like user interface.

- Performance Counters Viewer: View/download performance counters data logged by your applications in a “Perfmon” like user interface.

- Trace Logs Viewer: View/download trace data logged by your applications.

- Infrastructure Logs Viewer: View/download infrastructure logs data.

- IIS Logs: View/Download IIS logs.

- IIS Failed Request Logs: View/Download IIS Failed Request logs

In the next few versions we’ll be adding these features:

- Support for Crash Dump Logs

- Support for On Demand Transfer

- Support for Remote Diagnostics Management

<Return to section navigation list>

Windows Azure Infrastructure

Lori MacVittie claims “There’s a reason for the angst elicited by inaccurate definitions of cloud computing and it may lead to rethinking a laissez-faire view of such definitions” in her May I Mambo Dogface to the Banana Patch? post of 2/25/2010:

Language impacts our perception and can dramatically change the way we understand – or don’t understand – ideas. Because one of the primary uses of language is to present arguments or assert propositions such as “We need to allocate X percent of our budget to a cloud computing initiative” it makes it important that everyone involved in the conversation agrees on basic meanings and definitions. This is one of the reasons I, at least, have a conniption whenever someone who is attempting to educate people on a particular technological concept completely misses the ball. If we don’t clearly articulate what is and is not cloud computing the danger is that business-stakeholders and end-users will see cloud computing as nothing all that difficult, or nothing spectacular. We are in danger of the folks who often fund such initiatives not “getting it.” Language is our common ground, or at least it’s supposed to be.

The following grossly inaccurate definition is brought to you by Reading Eagle. Its “What is cloud computing?” article asserts that “84% of Americans use cloud computing in some form.” Then goes on to explain in more depth (and I use that phrase loosely and intentionally to prove a point).

Lori continues with an indictment of the Reading Eagle definition she quotes as being for the Internet, not cloud computing and asks:

WHO is in CONTROL of CLOUD COMPUTING INITIATIVES?

It’s important to remember that IT does not exist in a vacuum; other constituents have a stake in IT and exert their influence over the direction IT takes in many ways, including the budget. Many cloud computing surveys and studies have asked about budget size and type, but very few take the extra step to ask who – or what group – actually has control and influence over those budgets, which is at least as interesting – if not more so – than the actual budget details itself.

Saugatuck Technology claims “Core research on Cloud use drives effective strategies of ‘Lead, Follow, and Get Out of the Way’” in its Saugatuck Releases First Cloud Leadership Study press release of 2/25/2010:

Traditional IT and business leadership strategies and tactics will be of limited effectiveness in a Cloud-based environment where anyone can do practically anything they want or need to. Thus, how user organizations plan for and manage Cloud IT will have to improve upon established practices and policies. IT, Finance, and line-of-business executives must learn and use new, combined management tactics when it comes to Cloud IT.

This core finding is the foundation of the latest research study from Saugatuck Technology Inc., titled “Lead, Follow, and Get Out of the Way: A Working Model for Cloud IT through 2014.” Released today via Saugatuck’s website, the study uses interviews with experienced user organization executives, analysis of global survey data, and insights from leading Cloud providers, to build a realistic, working model of Cloud evolution, adoption, and most importantly, cost-effective management.

“Cloud adoption right now is a point-solution phenomenon, and we know from every previous instance of IT that point solutions cost more in the long run,” according to Saugatuck Managing Director Bruce Guptill, the study’s lead author. “If IT, Finance, and business leaders can see and understand how Cloud adoption is growing and changing, they can manage it, and take advantage of tremendous cost efficiencies. In this report, we have developed a simple, evolutionary model of Cloud IT adoption and its impact over time. And we deliver guidance to user executives as well as to providers of Cloud IT and traditional IT as to how best to manage the Cloud’s present and future.”

The press release continues with more “key findings.” As I recall, a more common form of the title is Thomas Paine’s “Lead, follow or get out of the way.”

Dilip Krishnan’s Book Excerpt and Interview: Cloud Computing and SOA Convergence in Your Enterprise: A Step-by-Step Guide begins:

A new book by David Linthicum, Cloud Computing and SOA Convergence in Your Enterprise: A Step-by-Step Guide, describes how to get the enterprise ready for cloud computing by carefully modeling enterprise data, information services and processes in a service oriented manner to make the transition to providing and consuming cloud services easier.

The book [offers] high level prescriptive guidance on the approaches to moving enterprise services and business processes to the cloud. David starts the discussion of his book with the “why?” of moving to the cloud and he goes on to define cloud computing and the various components that make up cloud solutions, i.e. Storage-as-a-Service, Database-as-a-Service, Information-as-a-Service, Application-as-a-Service etc. and examines the need and business drivers for moving such services to the cloud.

In his book, David suggests a bottom-up approach starting from modeling the data, then the services that move the information to achieve business activities and finally the process that tie these activities together. He advocates modeling the governance of these services and processes and a plan to test these processes in the cloud.

Addison-Wesley / Prentice Hall provided InfoQ readers with the excerpt describing techniques for modeling the data and services for the cloud. The excerpt is a condensed version of the following chapters from the book.

- Chapter 5: Working from Your Data to the Clouds

- Chapter 6: Working from Your Services to the Clouds

Dilip continues with a lengthy Q&A session with David. I purchased the book and recommend it highly.

TechNet Flash editor Mitch Irsfeld offers a Clearing the Fog Around Cloud Computing post, which begins:

Describing the general concept of cloud computing, its promise and benefits, is fairly straightforward. But the varied approaches and emerging product offerings from a growing list of vendors create confusion, especially as you look at it from the standpoint of your existing infrastructure. Microsoft's approach to cloud services allows you to deploy cloud workloads and integrate them back into your existing on-premises systems.

I've collected a few resources to get you going:

- Clearing the Fog Around Cloud Computing

TechNet Magazine takes a closer look at the Windows Azure Platform and the Business Productivity Online Suite.

- Microsoft Cloud Services: a New Model for Business Computing

This online brochure provides a summary of Microsoft Cloud Services benefits via covering the following topics: Definition of cloud computing, Microsoft Cloud Services, the three principles of the Microsoft cloud computing strategy, Microsoft offers, and proof points.

- Security in Cloud Computing - a Microsoft Perspective (Level 100)

An introduction to the security benefits and challenges that cloud computing will bring; discusses some important considerations that organizations should address before embracing the cloud.

- Wrapping Up the TechNet 2.0 Preview Series

By the time you read this, Keith Combs will have nearly completed his blog series on the new TechNet experience. That can only mean one thing: yes, we're getting close. …

<Return to section navigation list>

Cloud Security and Governance

GovInfoSecurity.com posted on 2/15/2010 an Infosec Guru Ron Ross on NIST's Revolutionary Guidance podcast:

NIST senior computer scientist Ron Ross heads a National Institute of Standards and Technology-Defense Department team that created the just-released information security guidance for federal agencies: Special Publication 800-37, Revision 1, Guide for Applying the Risk Management Framework to Federal Information Systems: A Security Life Cycle Approach.

In an interview with GovInfoSecurity.com, Ross discusses the:

- Importance of the new guidance that provides for real-time monitoring of IT systems.

- Challenges federal agencies face in adopting NIST IT security guidance.

- State of cybersecurity in the federal government.

Ross was interviewed by GovInfoSecurity.com's Eric Chabrow.

The highly regarded NIST senior computer scientist and information security researcher serves as the institute's FISMA implementation project leader. He also supports the State Department in the international outreach program for information security and critical infrastructure protection. Ross previously served as the director of the National Information Assurance Partnership, a joint activity of NIST and the National Security Agency.

Robert Westervelt’s Cloud security issues, targeted attacks to be hot-button topics at RSA post of 2/25/2010 to SearchSecurity.com covers the upcoming RSA conference in San Francisco:

… Many companies are moving slowly with cloud computing adoption, Crawford said, despite an increasing number of vendors refitting their technologies and their distribution methods to provide cloud-based services. The integration between servers, storage networks and management software is adding more complexity, and the complexity and lack of visibility into cloud environments breeds insecurity. All of the challenges being cited could be causing companies to be more cautious in adopting cloud-based services. In a recent Enterprise Management Associates survey of 850 IT executives, only 11% indicated they planned to implement cloud in the next 12 months, Crawford said.

Some of the challenges include the lack of visibility in cloud environments and the loss of control over company data and how it's being secured by service providers. Crawford said too many vendor proprietary systems are complicating cloud adoption. New standards could change all that, he added, citing the MashSSL Alliance, which focuses on establishing secure channels during browser sessions, as a good start.

"There's considerable attention being paid to the potential risks of cloud computing, but the number of organizations planning on adopting cloud computing models is small compared to a lot of the hype in the market," Crawford said. "A lot of the hype about cloud services out there is out of proportion to what companies are really doing."

Click here for more details on the RSA Conference 2010.

Angela Moscaritolo asks “What should IT personnel and executives at enterprises know before adopting a cloud computing model? How are CISOs dealing with the trend of consumerization? How will mobile app stores affect the threat environment?” in her Crime, cloud & consumerization on tap at RSA Conference article of 2/24/2010 for SC Magazine:

… Individuals from Verizon Business, SaaS web security vendor Zscaler, and web-based DNS management software provider OpenDNS also plan to discuss outsourcing and cloud computing in a session called “SaaS-based security solutions,” scheduled for March 3.

Cloud computing is one of the most hyped trends in IT security – and will also be another major theme of the show, said Scott Crawford, managing research director at Enterprise Management Associates, during Wednesday's call. He will moderate the session on SaaS.

“The number of organizations planning to adopt a cloud computing model is small compared to the hype and expectations in the market,” Crawford said.

A top priority and one of the most difficult challenges of cloud computing is ensuring data is protected, he said. Manageability and performance are other concerns.

During the March 3 session, panelists plan to discuss what organizations need to know before jumping into a service-based model for security.

David Linthicum asserts “Legal issues such as data privacy and compliance regs may have you considering where the clouds actually reside” in his When clouds need to stop at the border post of 2/25/2010 to the InfoWorld Cloud Computing blog:

Clouds are everywhere and can be used from anywhere, right? Wrong. The fact is that when considering national laws, you may find that your data is legally not able to leave the border.

That's the case in many parts of Europe that forbid some data from being transmitted or stored outside of the country. Canada also has some rules that prohibit some data being stored in the United States due to the U.S. Patriot Act's provisions that let the federal government examine corporate records.

To get around this issue, several cloud computing providers, such as Amazon.com and Salesforce.com, have points of presence in many developed countries. There's a performance argument for this distribution of systems, but another reason is to adhere to many laws directing where some data can legally reside.

It's important to note that the legal issues are local to where your customer resides. You have to understand the laws and make sure that personally identifiable data and some financial records are kept local if required by the law. …

Jay Heiser asks Do We Need Cloud Computing Laws? in this 2/24/2010 post to the Gartner Blog:

… Let me go on the record and say that I’m not aware of any modern society that has thrived without the rule of law. Law is needed, yet it is impossible to ever get it exactly right. Bad rules can be counterproductive, encouraging the negative behavior they are meant to reduce. The Basel II Accord, which promulgated the quantification of operational risk, arguably encouraged financial service firms to take greater risks by giving them a justification mechanism.

2010 is looking like ‘The Year of Privacy’ in the US Federal Government. H.R. 2221, the Data Accountability and Trust Act (DATA), was voted on by the House and has been read at the Senate, where it is currently under committee review. A parallel bill in the Senate, S. 1490: Personal Data Privacy and Security Act of 2009, was approved by committee, but appears to have been superseded by the House’s somewhat eponymous DATA bill. Up to a dozen other proposed bills nibble away at identity theft, social security number conventions, and the use of PII, so clearly the legislative branch has an appetite for this issue.

DATA and S. 1490 require more than just breach notification. Organizations with PII must take proactive efforts to control private information, expecting “the Federal Trade Commission (FTC) to promulgate regulations requiring each person engaged in interstate commerce that owns or possesses electronic data containing personal information to establish security policies and procedures.” It is no coincidence that the FTC has begun a series of roundtable discussions on the privacy implications of new IT practices and technologies, including social networking and cloud computing. …

The Brookings Institute held a governance studies event in January on Cloud Computing for Business and Society. In his keynote speech, Microsoft’s Brad Smith strongly suggested the need for regulations on cloud computing. This is not a new theme for Microsoft. Ray Ozzie suggested similar ideas last year during a Gartner interview. I don’t want to second guess Microsoft’s motivations in lobbying so consistently for a larger Federal presence in their new business area, but one interpretation could be that they expect regulations to function as a barrier to market entry, reducing competitive pressures in the cloud.

<Return to section navigation list>

Cloud Computing Events

Brian Gorbett recommends attending a two-day Azure Boot Camp on 6/1 and 6/2/2010 at the Microsoft Chicago office: 200 E Randolph St, Suite 200, Chicago, Illinois 60601, US:

Event Overview: Join us for this two-day deep dive program to help prepare you to deliver solutions on the Windows Azure Platform. We have worked to bring the region’s best Azure experts together to teach you how to work in the cloud. Each day will be filled with training, discussion, reviewing real scenarios, and hands-on labs. Snacks and drinks will be provided; we advise you to make your own lunch arrangements prior to the event.

You will also need to bring a computer loaded with the software listed below. For more information please visit www.azurebootcamp.com, or email us at info@azurebootcamp.com.

See Event ID: 1032444477 to register, learn what software you need to preload on the PC you bring, and read a detailed course-contents list.

Bill Wilder announces an update to the agenda for the upcoming Boston Azure User Group meeting in his Curt Devlin to Speak about Identity in the Cloud at Boston Azure Meeting post of 2/25/2010:

Thursday February 25, 2010 at NERD in Cambridge, MA

The following is an update to the agenda for the upcoming Boston Azure User Group meeting this coming Thursday.

To RSVP for the meeting (helps you breeze through security and helps us have enough pizza on hand), for directions, and more details about the group, please check out http://BostonAzure.org.

To get on the Boston Azure email list, please visit http://bostonazure.org/Announcements/Subscribe.

[6:00-6:30 PM] Boston Azure Theater

The meeting activities begin at 6:00 PM with Boston Azure Theater, which is an informal viewing of some Azure-related video. This month will feature the first half of Matthew Kerner’s talk on Windows Azure Monitoring, Logging, and Management APIs from the November 2009 Microsoft PDC conference.

[6:30-7:00 PM] Upcoming Boston Azure Events and Firestarter

Around 6:30, Bill Wilder (that’s me) will first show off an interesting CodeProject contest, then will lead a discussion about the future of the Boston Azure user group and the upcoming All-Day-Saturday-May-8th event.

Curt Devlin will take the stage at 7:00 PM.

Vittorio Bertocci’s Identity @ MIX10 post of 2/24/2010 asks:

Have you booked your trip to Vegas yet? Amidst all the excitement for Windows Phone 7 Series, Silverlight & the cloud, if you know how to search the MIX content you’ll find two identity pearls:

Caleb Baker: Using Windows Identity Foundation For Creating Identity-Driven Experiences in Silverlight

Come learn how you can leverage Windows Identity Foundation to simplify access to your Silverlight applications and delight your users with custom-tailored experiences. Discover how you can enable single sign on for your Silverlight applications no matter where they are hosted, explore how you can use claims-based identity to adapt the user experience for customers, learn how to take advantage of web services protected by federated security… all with the same consistent developer APIs, that Windows Identity Foundation already offers for ASP.NET and WCF projects.

That’s right, an entire session about one of the questions you ask the most about WIF: how to use it with Silverlight? Caleb is going to go into the details of how you can use those two technologies together. Really looking forward to that one!

Mike Jones: Improving the Usability and Security of OpenID

OpenID is gaining popularity as an Internet identity system. Nonetheless, it is widely recognized that both usability and security issues are limiting the adoption and applicability of OpenID as it exists today; both kinds of issues can be improved by the introduction of an active client for OpenID. This session will describe a community collaboration to explore these issues through working code. We will demonstrate an experimental multi-protocol version of Windows CardSpace that enables end users to bring their OpenIDs with them to sites, while mitigating phishing attacks, including its use at production OpenID sites.

Mike is going to explore another combination, CardSpace and OpenID. Among other things, that’s precisely what I mentioned in the Mordor post… another session I would definitely not miss.

Besides the sessions themselves, this will be a chance to speak with them and others from the WIF PG. You are still on time for registering to the conference! If you are in Vegas that week, don’t forget to swing by & say hi… as usual, I am still very recognizable :-)

<Return to section navigation list>

Other Cloud Computing Platforms and Services

James Urquhart offers his commentary about CA to acquire cloud platform provider 3Tera in this 2/24/2010 post:

… The technology assets and key personnel of utility computing management software provider Cassatt were acquired in June, and service level management software provider Oblicore was acquired earlier this year.

CA also plans to expand 3Tera's virtualization support beyond the Xen virtualization platform, to include VMware ESX and Microsoft Hyper-V. [Emphasis added.]

Jay Fry, vice president of Business Unit Strategy for CA's Cloud Products and Solutions Business Line, said in a phone interview that there were three key ways that 3Tera enhances CA's cloud story.

First, they bring customers. According to 3Tera's Web site, there are around 30 service provider partners running their cloud services on AppLogic, and Fry noted that they have a handful of enterprises who have standardized on the platform as well. Second, their user interface and application delivery technologies are a strong complement to CA's portfolio of infrastructure management tools.

Finally, 3Tera will allow CA to simplify the process of implementing and using private cloud computing services using CA's product portfolio, according to Fry. "Enterprises are not even sure how to get started with private cloud, and [AppLogic] makes it easier to talk about those first couple steps," noted Fry. …

Jeff Barr’s Amazon SimpleDB Consistency Enhancements post of 2/24/2010 announces:

We've added two new features to Amazon SimpleDB to make it even easier for you to implement several different data storage and retrieval scenarios.

The first new feature allows you to do a consistent read. Up until now, SimpleDB implemented eventually consistent reads. You now have the option to choose the type of read which best meets the needs of each part of your application. Before I dive into the specifics, here's a quick guide to the two types of reads:

- Eventually consistent reads offer the lowest read latency and the highest read throughput. However, the reads can return data that has been made obsolete or overwritten by a very recent write. In general, the time window where this can occur is smaller than one second.

- Consistent reads always return the most recent data, with the potential for slightly higher read latency and a small reduction in read throughput.

SimpleDB's Select and GetAttributes functions now accept an optional ConsistentRead flag. This flag has a default value of false, so existing applications will continue to use eventually consistent reads. If the flag is set to true, SimpleDB will return a consistent read.
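The difference between the two read types can be illustrated with a toy in-memory model. This is only a sketch: the `ToyDomain` class, its `replicate()` method and the primary/replica split are hypothetical stand-ins invented for illustration, not SimpleDB's API or internals. Only the idea of an optional consistent-read flag that defaults to false comes from the announcement.

```python
class ToyDomain:
    """Toy model: writes land on a primary; a replica catches up later."""

    def __init__(self):
        self._primary = {}   # always holds the latest write
        self._replica = {}   # may lag behind the primary

    def put_attributes(self, item, attrs):
        # A write is acknowledged as soon as the primary has it.
        self._primary.setdefault(item, {}).update(attrs)

    def replicate(self):
        # Hypothetical helper standing in for background replication.
        self._replica = {k: dict(v) for k, v in self._primary.items()}

    def get_attributes(self, item, consistent_read=False):
        # The flag defaults to False, mirroring the announced default.
        source = self._primary if consistent_read else self._replica
        return dict(source.get(item, {}))


domain = ToyDomain()
domain.put_attributes("order-1", {"status": "new"})
domain.replicate()
domain.put_attributes("order-1", {"status": "shipped"})  # not yet replicated

stale = domain.get_attributes("order-1")                        # {'status': 'new'}
fresh = domain.get_attributes("order-1", consistent_read=True)  # {'status': 'shipped'}
```

In this model the stale read costs nothing extra, while the consistent read must go to the one copy that is guaranteed current, which is a simplified picture of the latency and throughput trade-off described above.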

The second new feature allows you to issue SimpleDB PutAttributes and DeleteAttributes operations on a conditional basis. In other words, you can tell SimpleDB to perform the indicated operation if and only if a given single-valued attribute has the value specified in the PutAttributes or Delete call. You can easily implement counters (the value itself is effectively the version number), delete accounts only if the current balance is zero, and insert an item only if it does not exist.

Jeff continues with a discussion about implementing optimistic concurrency control with these new features. Amazon also offers an Amazon SimpleDB Consistency Enhancements tutorial of 2/24/2010.
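The three scenarios Jeff lists (counters, delete only at zero balance, insert only if absent) can be sketched against a small in-memory stand-in. Everything named here is an assumption made for illustration: `ToyDomain`, `ConditionFailed`, the `expected=(name, value)` argument and the `increment` helper are hypothetical and do not reproduce SimpleDB's request format. Values are also kept as Python integers for brevity.

```python
class ConditionFailed(Exception):
    """Raised when the expected attribute value does not match."""


class ToyDomain:
    """In-memory stand-in for a domain with conditional put and delete."""

    def __init__(self):
        self._items = {}

    def get(self, item, name):
        return self._items.get(item, {}).get(name)

    def _check(self, item, expected):
        # expected is None (unconditional) or a (name, value) pair;
        # a value of None means "the attribute must not exist".
        if expected is not None:
            name, value = expected
            if self.get(item, name) != value:
                raise ConditionFailed(f"{item}.{name} is not {value!r}")

    def put(self, item, attrs, expected=None):
        self._check(item, expected)
        self._items.setdefault(item, {}).update(attrs)

    def delete(self, item, expected=None):
        self._check(item, expected)
        self._items.pop(item, None)


def increment(domain, item, name="count", max_retries=10):
    """Optimistic counter: the current value doubles as the version number."""
    for _ in range(max_retries):
        current = domain.get(item, name)
        new_value = (current or 0) + 1
        try:
            domain.put(item, {name: new_value}, expected=(name, current))
            return new_value
        except ConditionFailed:
            continue  # another writer won the race; re-read and retry
    raise RuntimeError("too much contention")


domain = ToyDomain()
increment(domain, "page-hits")
increment(domain, "page-hits")                                   # count is now 2
domain.put("user-1", {"name": "Ann"}, expected=("name", None))   # insert only if absent
domain.put("acct-7", {"balance": 0})
domain.delete("acct-7", expected=("balance", 0))                 # delete only at zero balance
```

The retry loop is the core of the optimistic approach: no lock is held between the read and the write, and a failed condition simply means the caller must re-read and try again.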

Werner Vogels compares eventual consistency with optimistic consistency based on SimpleDB’s new ConsistentRead flag, and PutAttributes and DeleteAttributes functions in his Choosing Consistency post of 2/24/2010:

Amazon SimpleDB has launched today with a new set of features giving the customer more control over which consistency and concurrency models to use in their database operations. There are now conditional put and delete operations as well as a new "consistent read" option. These new features will make it easier to transition those applications to SimpleDB that are designed with traditional database tools in mind.

Revisiting the Consistency Challenges

Architecting distributed systems that need to reliably operate at world-wide scale is not a simple task. There are many factors that come into play when you need to meet stringent availability and performance requirements under ultra-scalable conditions. I laid out some of these challenges in an article explaining the concept of eventual consistency. If you need to achieve high-availability and scalable performance, you will need to resort to data replication techniques. But replication in itself brings a whole set of challenges that need to be addressed. For example, updates to data now need to happen in several locations, so what do you do if one or more of those locations is (temporarily) not accessible?

A whole field of computer science is dedicated to finding solutions for the hard problems of building reliable distributed systems. Eric Brewer of UC Berkeley summarized these challenges in what has been called the CAP Theorem, which states that of the three properties of shared-data systems--data consistency, system availability, and tolerance to network partitions--only two can be achieved at any given time. In practice the possibility of network partitions is a given, so the real trade-off is between availability and consistency: In a system that needs to be highly available there are a number of scenarios under which consistency cannot be guaranteed and for a system that needs to be strongly consistent, there are a number of scenarios under which availability may need to be sacrificed. These trade-off scenarios generally involve edge conditions that almost never happen, but in a system that needs to operate at massive scale serving trillions and trillions of requests on a daily basis, one needs to be prepared to handle these cases. …
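The availability-versus-consistency choice under a partition can be shown with a deliberately tiny two-node model. It is a sketch under stated assumptions, not a description of any real system: the `Node` class, the "CP"/"AP" mode labels, and the `make_pair` and `set_partition` helpers are all hypothetical, replication is synchronous, and no reconciliation happens when a partition heals.

```python
class Unavailable(Exception):
    """Raised when a node refuses a request to preserve consistency."""


class Node:
    def __init__(self, mode):
        self.mode = mode          # "CP" favors consistency, "AP" availability
        self.value = None
        self.peer = None
        self.partitioned = False  # True while the peer is unreachable

    def write(self, value):
        if self.partitioned:
            if self.mode == "CP":
                raise Unavailable("cannot reach peer; refusing write")
            self.value = value    # AP: accept locally, so replicas diverge
            return
        self.value = value
        self.peer.value = value   # synchronous replication while connected


def make_pair(mode):
    a, b = Node(mode), Node(mode)
    a.peer, b.peer = b, a
    return a, b


def set_partition(a, b, on):
    a.partitioned = b.partitioned = on


a, b = make_pair("AP")
a.write(1)
set_partition(a, b, True)
a.write(2)                # accepted; b still holds 1

c, d = make_pair("CP")
c.write(1)
set_partition(c, d, True)
try:
    c.write(2)
    refused = False
except Unavailable:
    refused = True        # consistency kept, availability lost
```

The "AP" pair keeps answering but its two copies disagree, while the "CP" pair stays in agreement only by turning the write away.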

James Hamilton adds his I love eventual consistency but... discussion of new SimpleDB features on 2/24/2010:

I love eventual consistency but there are some applications that are much easier to implement with strong consistency. Many like eventual consistency because it allows us to scale-out nearly without bound but it does come with a cost in programming model complexity. For example, assume your program needs to assign work order numbers uniquely and without gaps in the sequence. Eventual consistency makes this type of application difficult to write.

Applications built upon eventually consistent stores have to be prepared to deal with update anomalies like lost updates. For example, assume there is an update at time T1 where a given attribute is set to 2. Later, at time T2, the same attribute is set to a value of 3. What will the value of this attribute be at a subsequent time T3? Unfortunately, the answer is we’re not sure. If T1 and T2 are well separated in time, it will almost certainly be 3. But it might be 2. And it is conceivable that it could be some value other than 2 or 3 even if there have been no subsequent updates. Coding to eventual consistency is not the easiest thing in the world. For many applications it’s fine and, with care, most applications can be written correctly on an eventually consistent model. But it is often more difficult. …
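Both of James's examples, the T1/T2/T3 read and the work order numbers, can be reproduced with a toy store whose reads lag behind its writes. The `LaggingStore` class, its `sync()` method and the `next_work_order` function are hypothetical names made up for this sketch, and the single lagging snapshot is a simplifying assumption about how staleness arises.

```python
class LaggingStore:
    """Toy store whose reads come from a snapshot that may lag behind writes."""

    def __init__(self):
        self._latest = {}    # what has been written
        self._visible = {}   # what readers currently see

    def write(self, key, value):
        self._latest[key] = value

    def read(self, key):
        return self._visible.get(key)

    def sync(self):
        # Hypothetical helper standing in for replication catching up.
        self._visible = dict(self._latest)


def next_work_order(store):
    """Naive read-then-write allocation; unsafe when reads can be stale."""
    number = (store.read("last_order") or 0) + 1
    store.write("last_order", number)
    return number


store = LaggingStore()
store.write("last_order", 2)             # T1
store.sync()
store.write("last_order", 3)             # T2, not yet visible to readers
value_at_t3 = store.read("last_order")   # 2, although 3 was written

first = next_work_order(store)           # reads 2, hands out 3
second = next_work_order(store)          # still reads 2, hands out 3 again
```

Two callers receive the same work order number because each bases its write on a stale read, which is the kind of anomaly that a consistent read combined with a conditional write is meant to rule out.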

James continues with an analysis of how data stores that implement strong consistency can be scaled.

<Return to section navigation list>