Windows Azure and Cloud Computing Posts for 2/10/2010+

Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database (SADB)

- AppFabric: Access Control, Service Bus and Workflow

- Live Windows Azure Apps, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated for the November CTP in February 2010.

Azure Blob, Table and Queue Services

Julian Lai announced a new Microsoft Connect site for WCF Data Services (formerly ADO.NET Data Services and Project “Astoria”) in his Vote for future Data Services Features post of 2/9/2010:

Now that our current releases are winding down we’ve begun shifting some of our focus to planning for the next round. To help us prioritize our future work we’d like to hear from you. To make it easy to submit feedback directly to our teams (Entity Framework, WCF Data Services, etc) we’ve created a Connect site. The site enables you to easily file bugs, request a feature or vote up/down features or bugs previously entered by other users. Anyone can browse the bugs and feature requests already submitted, but to file a new bug report, feature request or to vote up/down a bug/feature you must login with your Live ID account. This is done such that each user is limited to 1 vote per feature/bug.

Get your voice heard! Visit our Connect site now to file your favorite feature request and vote on the ones already there. We look forward to your feedback.

<Return to section navigation list>

SQL Azure Database (SADB, formerly SDS and SSDS)

Bruce Kyle observes in his SQL Azure Q&A post of 2/9/2010:

The SQL Azure team has put together a set of questions and answers in their blog post, SQL Azure Database Now Generally Available – SLAs in effect.

Following are the questions answered:

- What is Microsoft SQL Azure Database?

- What key features are included in SQL Azure Database?

- How does Microsoft differentiate SQL Azure Database from Microsoft SQL Server?

- How is SQL Azure Database different from working with any hosted database?

<Return to section navigation list>

AppFabric: Access Control, Service Bus and Workflow

Alin Irimie’s Service Bus Connection Pricing post of 2/9/2010 provides a formula for calculating the monthly cost of service bus connections, but its use isn’t clear to me. Here’s what the Windows Azure Platform Pricing page has to say:

Service Bus connections

- $3.99 per connection on a “pay-as-you-go” basis

- $9.95 for a pack of 5 connections

- $49.75 for a pack of 25 connections

- $199 for a pack of 100 connections

- $995 for a pack of 500 connections

Data transfers

- Data transfers = $0.10 in / $0.15 out / GB - ($0.30 in / $0.45 out / GB in Asia)*

* No charge for inbound data transfers during off-peak times through June 30, 2010

These rates for AppFabric are effective for usage beginning in April, 2010. All AppFabric usage prior to April, 2010 will not be charged.

AppFabric Service Bus connections can be provisioned individually on a “pay-as-you-go” basis or in a pack of 5, 25, 100 or 500 connections. For individually provisioned connections, you will be charged based on the maximum number of connections you use for each day. For connection packs, you will be charged daily for a pro rata amount of the connections in that pack (i.e., the number of connections in the pack divided by the number of days in the month). You can only update the connections you provision as a pack once every seven days. You can modify the number of connections you provision individually at any time.

Service Bus connection pricing still remains a mystery to me, but it appears expensive on the surface.
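One possible reading of the quoted terms can be sketched in Python. The proration rule used here (each day is billed at 1/N of the monthly list price, where N is the number of days in the month) is an assumption, not something the pricing page states outright, and the function names are hypothetical.

```python
from decimal import Decimal

# Monthly list prices quoted from the pricing page (USD).
PAYG_PER_CONNECTION = Decimal("3.99")
PACK_PRICES = {
    5: Decimal("9.95"),
    25: Decimal("49.75"),
    100: Decimal("199"),
    500: Decimal("995"),
}


def payg_cost(daily_peak_connections, days_in_month):
    """Pay-as-you-go cost for one month.

    Assumption: each day is billed for that day's peak connection
    count at 1/days_in_month of the $3.99 monthly price.
    """
    daily_rate = PAYG_PER_CONNECTION / days_in_month
    return sum(daily_rate * peak for peak in daily_peak_connections)


def pack_cost(pack_size, days_held, days_in_month):
    """Cost of holding one pack for days_held days.

    Assumption: a pack is billed at 1/days_in_month of its monthly
    price for every day it is provisioned.
    """
    return PACK_PRICES[pack_size] * days_held / days_in_month


def cheapest_pack(connections):
    """Smallest listed pack that covers `connections`, or None if none does."""
    for size in sorted(PACK_PRICES):
        if size >= connections:
            return size
    return None
```

Under those assumptions, three connections held for every day of a 30-day month come to $11.97 pay-as-you-go versus $9.95 for a 5-pack, so a pack is the cheaper option from the third steady connection on.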

<Return to section navigation list>

Live Windows Azure Apps, Tools and Test Harnesses

Ramaprasanna’s Configuring VM size in Windows Azure post of 2/10/2010 begins:

Post PDC November 09 Microsoft introduced the options for the developers to have custom VM sizes in Windows Azure cloud.

In this article let’s see how one can go about configuring the VMsize in Windows Azure application.

and continues with an illustrated tutorial.

His Error when compiling Azure app in Visual Studio 2010 Beta post of 2/9/2010 explains an issue when you use the Feb 2009 build of the Azure SDK with VS 2010 Beta 2. Most folks are probably running VS 2010 RC by now.

Eric Nelson reports MSDN Flash Podcast 020 - David Gristwood and AWS [Active Web Solutions] talk Azure in this 2/9/2010 post:

On January 1st I switched focus to 100% Azure. My friend and colleague David Gristwood has been firmly focused on Azure through 2009 working with early adopters. We decided to record a podcast talking about what we are up to and how Microsoft UK has been helping early adopters using deep dive labs, workshops and training. We also have a stab at describing the Windows Azure Platform in 1 minute (I hopelessly overrun by 100%) and we finish with an interview between David and Active Web Solutions (AWS). AWS are an early adopter of Azure and give a great insight into the benefits they have seen.

Split roughly as:

- 15 minutes David and I “having a chat” :-)

- 15 minutes on the AWS interview.

We suspect this will be the start of a regular series of Azure focused podcasts. Hey, maybe even a spin off podcast. Time will tell :)

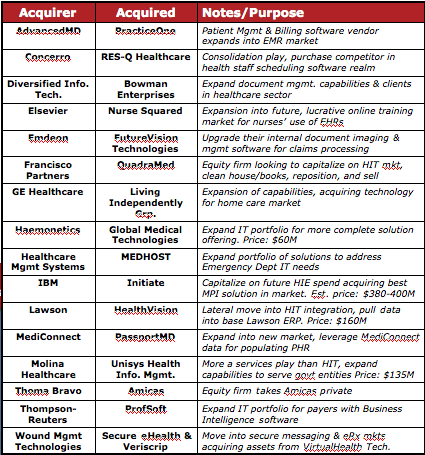

John Moore asserts Acquisitions Creating White Hot Market for Healthcare IT in this 2/9/2010 post to the Chilmark Research blog:

Since the beginning of 2010 there has been a series of acquisitions in the healthcare IT (HIT) market, which recently culminated in one of the largest, IBM’s acquisition of Initiate. Triggering this activity is the massive amount of federal spending on HIT (stimulus funding via ARRA which, depending on how you count it, adds up to some $40B) that will be spent over the next several years to finally get the healthcare sector up to some semblance of the 21st century in its use of IT. But one of the key issues with ARRA is that this money needs to be spent within a given time frame, thus requiring software vendors to quickly build out their solution portfolio, partner with others or simply acquire another firm.

And it is not just traditional HIT vendors doing the acquiring (AdvancedMD, Emdeon, Healthcare Mgmt Systems, MediConnect, etc.). As the table below shows, many of these acquisitions are being driven by those who wish to get into this market (Thoma Bravo, Wound Mgmt Technologies, etc.) and capitalize upon future investments that will be made by those in the healthcare sector. …

Jeff Beeler blogged “Going live” with the Visual Studio 2010 Release Candidate on 2/8/2010, the day that the VS 2010 RC became available to MSDN subscribers:

We are pleased to declare that the Release Candidate of Visual Studio 2010 and the .NET Framework 4 will be supported as a “go live” release. This means that VS2010 RC has met high standards of quality for pre-release software, and we are licensing it for developing and deploying production applications.

We hope that you will consider using VS2010 RC for “go live” production purposes. As you use the release, we want to hear from you at our Connect site if you find any bugs.

In order to decide whether or not using VS2010 in a “go live” scenario is right for you, you should carefully understand the terms and limitations of the “go live” license. Start by reading the license terms for VS2010 RC, which includes the official “go live” licensing terms.

VS2010 RC is licensed for use through June 30, 2010 at which point you’ll need to upgrade to the final release to continue using the product. …

As of 2/10/2010, the RC is available to all comers here.

Michael Coté’s The return of good, old fashioned application development [to a cloud-y world] essay of 2/8/2010 asks:

How do you start to think about software development in a cloud-y world?

- What are the new features delivering across the cloud enables? What does it mean to: deliver a new feature whenever you like (whether your customer likes it or not); deliver different features to different groups of users and test which one is “best”; open up APIs that anyone can integrate with; give your customers “unlimited” processing and storage; allow your users to aggregate configuration and usage patterns and “learn” from each other rather than reinvent the wheel; etc.

- How does your software development process change to match your new delivery platform? How do you manage: delivering 52 features piecemeal, one a week, instead of in one big “release” a year; feed that user tracking into your product management and UX decisions; treat operations staff as part of your team (or take on their job in delivering over the cloud); manage the community that hangs off the open (or not so open) APIs that your customers come to rely on; track the usage and value (to you and your customers) of features over the course of years; keep a separate “portfolio” of products when your customer sees them all coming from the same URL; what would it mean if you delivered an “app” that existed for just 4 weeks; etc.

Above even these questions, is a more basic one: are you a software vendor, or a developer working on in-house software? Vendors, whose job is to make money from software, have much different ways of dealing with these questions than in-house developers whose job is to service their organization making money with software.

If you’re charged with software development, whichever side of the fence you’re on, as we start to finish hammering out the operations concerns for all this cloud computing hoopla, it’s a good time to start figuring out the new features you can start delivering to customers and how your iteration-to-iteration process has to start changing.

<Return to section navigation list>

Windows Azure Infrastructure

William Vambenepe predicts Azure’s AppFabric will “soon support a BizTalk-like process engine” in his Is Business Process Execution the killer app for PaaS? post of 2/10/2010:

Have you noticed the slow build-up of business process engines available “as a service”? Force.com recently introduced a “Visual Process Manager”. Amazon is looking for product managers to help customers “securely compos[e] processes using capabilities from all parts of their organization as well as those outside their organization, including existing legacy applications, long-running activities, human interactions, cloud services, or even complex processes provided by business partners”. I’ve read somewhere (can’t find a link right now) that WSO2 was planning to make its Business Process Server available as a Cloud service. I haven’t tracked Azure very closely, but I expect AppFabric to soon support a BizTalk-like process engine. And I wouldn’t be surprised if VMWare decided to make an acquisition in the area of business process execution.

Attacking PaaS from the business process angle is counter-intuitive. Rather, isn’t the obvious low-hanging fruit for PaaS a simple synchronous HTTP request handler (e.g. a servlet or its Python, Ruby, etc equivalent)? Which is what Google App Engine (GAE) and Heroku mainly provide. GAE almost defined PaaS as a category in the same way that Amazon EC2 defined IaaS. The expectation that a CGI or servlet-like container naturally precedes a business process engine is also reinforced by the history of middleware stacks. Simple HTTP request-response is the first thing that gets defined (the first version of the servlet package was java.servlet.* since it even predates javax), the first thing that gets standardized (JSR 53: servlet 2.3 and JSP 1.2) and the first thing that gets widely commoditized (e.g. Apache Tomcat). Rather than a core part of the middleware stack, business process engines (BPEL and the like) are typically thought of as a more “advanced” or “enterprise” capability, one that comes later, as part of the extended middleware stack.

But nothing says it has to be that way. If you think about it a bit longer, there are some reasons why business process execution might actually be a more logical beach head for PaaS than simple HTTP request handlers.

Lori MacVittie asserts “Agreed that cloud vendors need to differentiate on services. Disagreed that cloud standards will not forward that cause and that virtualization platform makes a difference” in her That Whole Concept is Broken post of 2/10/2010:

The battle for virtualization platform dominance rages on, but it will not be virtualization that makes or breaks a cloud computing offering; it will be the diversity – or lack thereof - of the services it offers. We need to stop focusing on virtualization as the be-all and end-all of cloud computing and start bending our efforts toward what really matters: the ability of providers to efficiently offer a broad set of differentiating services and of customers to take advantage of them to architect a cloud-based solution that delivers their applications efficiently, securely, and as fast with as little manual interference as possible. Citrix CTO Simon Crosby touches on this point in a recent interview with John Furrier, “VMWare Had Nothing To Do With The Cloud Trend. Their Strategy is Flawed”, on the topic of cloud computing and virtualization.

I don’t see any viable opportunities for cloud vendors if all of them are offering a homogeneous set of services designed by one company called VMWare. That whole concept is broken.

I’m going to agree with Simon with the caveat that he could have ended his statement at “homogeneous set of services” and the concept would still be broken. Cloud computing isn’t about any one virtualization platform, it’s about the services that it can provide – from the network to storage to application network to ease of provisioning and management. Those services must eventually encompass the whole of a traditional IT infrastructure and they must be accessible and manageable by the customer. And they need to be portable across cloud implementations lest customers continue to balk at the prospect of locking themselves in to any one cloud computing provider or architecture. Crosby questioned the need for Cloud APIs and standardization a little later in his interview saying, “Should Cloud APIs be standardized? If there was a standard then all the clouds would look the same.” …

Lori then explains why she disagrees with Simon Crosby.

Ellen Rubin’s Legacy Apps: The Next Frontier in the Cloud post of 2/10/2010 to the Kendall Square Cloud blog asserts:

Although cloud computing momentum continues to build and scarcely a day goes by without a new cloud announcement or study, there's been little real enterprise adoption and almost no meaningful case studies. In part, that's because early cloud providers and vendors were focused on developers and technology start-ups when they designed their offerings, and larger, more established organizations were rarely on their radar screen. While start-ups can easily embrace new technologies and architectures, enterprises have far more constraints and have been largely limited to 'tire kicking' the cloud with small applications that aren't particularly meaningful for the business.

Cloud computing is now entering a new stage as CIOs and IT managers recognize that cloud computing is going to become an integral part of the enterprise computing environment. For it to be strategic as opposed to experimental, they need to know that the cloud can integrate with their existing data center infrastructure and incorporate the legacy applications that reside there. That's where the major pain points, complexity, and costs have always been, and where the cloud can potentially offer its greatest returns. In our discussions with CIOs, we hear this theme over and over.

Legacy apps cover the entire installed base of applications running on a company's internal infrastructure. They include everything from highly-used apps that are optimized for particular hardware to older versions of apps that must be maintained for specific customers as well as test and development environments, and apps used for internal purposes such as training. The true enterprise payoff for cloud computing will come from the ability to offload a wide range of legacy apps that don't need to run in the data center to a cloud environment where they can be managed more cost-effectively. Not all legacy apps will make sense to move to the cloud initially (or perhaps ever), so the trick will be to select the right ones. …

Ellen is a co-founder of CloudSwitch.

Tom Bittman asks and answers “Do cloud computing providers understand customer requirements? Do customers understand their requirements? No and no, and this is a problem” as an introduction to his Cloud Computing: Through a Glass, Darkly post of 1/10/2010 to the Gartner blog:

Today, most cloud computing providers offer one-size-fits-all services – with few options or service level alternatives. As the market matures, there will be thousands of providers, each trying to differentiate by focusing on specific market needs, and offering service level alternatives and options to attract specific types of customers.

This sounds great, except service providers don’t really know what options the market needs, and perhaps more importantly, potential customers don’t always understand their service level requirements.

Service providers will figure this out by experimenting, and making adjustments as they go. Some of these adjustments will be hard and expensive to make (and retrofit). Some providers will simply fail. The customers will figure this all out by getting burned.

No doubt, there are many service needs that can be fulfilled just fine by one-size-fits-all services – go for it. But the next stage of cloud computing use – more varied, business-critical services – will require something more.

A key to success for cloud computing is getting the interface right, and getting the service requirements right. The interface defines the service offering in detail for the customer, and the service options link directly to automation behind the interface. Building this automation isn’t easy – and many providers will focus on specific market needs rather than create a huge array of options. If success requires new options, that means new automation, and that might require fundamental architecture changes. Providers who guess right on market requirements before they build their service offering will have a definite advantage over those who need to make a (perhaps costly) mid-course correction. [Emphasis Tom’s.] …

Tom is a Gartner analyst. Cartoon by Doug Savage.

Eric Nelson summarizes Brad Smith’s presentation last week in Brussels in a Brad Smith on the Cloud in the 21st century post of 2/10/2010:

Something a little different. No mentions of web roles, nodes or queues – but interesting nevertheless.

Brad Smith gave Microsoft’s vision of cloud computing devoted to the economic success of Europe. The event was organized by E!Sharp in the Auditorium of the Museum of Art and History, in Brussels, on January 26th. Brad Smith is Senior Vice President and General Counsel of Microsoft Corporation.

Brad gives a strong steer on our commitment to (amongst other things):

- First class Interop

- Supporting Open Source in the Cloud – he includes a discussion of the work by Domino’s Pizza to use Azure

Plus plenty of reality checks on Cloud and why it is something governments, organisations and individuals need to embrace. Ultimately a lot of it is about confidence.

The four challenges the industry needs to address with the help of government are:

- Connectivity – we need high speed reliable connectivity. Governments need to focus on enabling this for their citizens

- Single Market – harmonisation across geographies. We need to recognise that data is now regularly moving across geographies. Need to recognise a “consumer in Italy, stores email in Ireland, managed by a US company”

- Privacy - “Do people still care?”. Brad - “Absolutely”. The “right to choose” who sees your information.

- Security – laws and enforcement against digital crimes. Cooperation across geographies. Principles that companies must adhere to.

A lot of his focus was on the need for the European Union to look at the above rather than member states if Cloud is to be adopted widely.

Brad Smith is Microsoft’s General Counsel and Senior Vice President, Legal and Corporate Affairs.

Mary Jo Foley quotes Lokad’s Joannes Vermorel in her Microsoft's weakest cloud link: The Windows Azure Console? article of 2/9/2010 for ZDNet’s All About Microsoft blog:

It’s just one early user’s opinion, but it sounds like Microsoft needs to beef up the integrated console component of Windows Azure to keep its cloud-computing customers happy.

Microsoft began charging users for Azure compute and storage as of February 2, 2010. One of the showcase customers the company has trotted out as an early adopter is Lokad, a forecasting software/services vendor.

On February 8, Lokad founder Joannes Vermorel blogged his “big wish list” for Windows Azure. He itemized his suggestions for new features and tweaks that “would turn Azure into a killer product, deserving a lion-sized market share in the cloud computing marketplace.”

Having used the Azure service in beta/pilot form for a year, Vermorel knows well the ins and outs of Microsoft’s offering. He had a number of suggestions for Microsoft about the core Windows Azure cloud operating system, ranging from smaller virtual machines to per-minute (as opposed to the current per-hour) pricing. (There have been other calls for Microsoft to offer cheaper pricing for smaller Azure customers.) Vermorel also made a case for some new features for SQL Azure.

But there’s one piece of Azure that Vermorel said he doesn’t like at all: The Windows Azure Console. From his post:

“The Windows Azure Console is probably the weakest component of Windows Azure. In many ways, it’s a real shame to see such a good piece of technology so much dragged down by the abysmal usability of its administrative web client.” …

Mary Jo ends her story with the following item about Erik Meijer and the Volta project:

Speaking of LINQ, cloud expert and Oakleaf Systems blogger Roger Jennings recently had an interesting update on the “father of LINQ” Erik Meijer. Connecting some dots, Jennings came to the realization that Microsoft’s discontinued Volta project has been reborn as the Reactive Extensions for .Net (and is still being championed by Meijer). Meijer is running the Cloud Programmability Team at Microsoft these days. Those Reactive Extensions are currently not on a path to commercialization; they’re a project in Microsoft’s DevLabs. But who knows when and how they’ll surface as elements of future releases of .Net and Silverlight?

Judith Hurwitz explains Why hardware still matters– at least for a couple of years in this 2/9/2010 post:

It is easy to assume that the excitement around cloud computing would put a damper on the hardware market. But I have news for you. I am predicting that over the next few years hardware will be front and center. Why would I make such a wild prediction? Here are my three reasons [greatly abbreviated]:

- Hardware is front and center in almost all aspects of the computer industry. …

- The cloud looms. …

- The clash between cloud and on premise environments. …

So, in my view there will be an incredible focus on hardware over the next two years. This will actually be good for customers because the level of sophistication, cost/performance metrics will be impressive. This hardware renaissance will not last. In the long run, hardware will be commoditized. The end game will be interesting because of the cloud. It will not be a zero-sum game. No, the data center doesn’t go away. But the difference is that purpose built hardware will be optimized for workloads to support the massively scaled environments that will be the heart of the future of computing. And then, it will be all about the software, the data, and the integration.

David Linthicum asserts “Microsoft may find that it has but one shot to make it in the cloud” in his Microsoft Azure is available, but does anyone care? post of 2/9/2010 to InfoWorld’s Cloud Computing blog:

I'm not sure if anybody noticed, but the long-awaited Microsoft Azure cloud computing platform went into billable production last week after a pretty short beta period by Microsoft standards. (I plan on digging a bit deeper into Azure this year.) However, I was taken slightly aback by the lack of fanfare.

As you may remember, one of my predictions for 2010 was Microsoft finally being relevant in the cloud, and I'm still sticking to that. However, with Azure's late entry into the platform and infrastructure services marketplace, will Microsoft be able to catch up with other infrastructure and platform cloud offerings? Or will its success in the cloud be limited to the forthcoming cloud version of its popular Office platform?

I found David’s answer to the last question to be lacking in substance. Remembering that Lotus 1-2-3 and WordPerfect preceded Microsoft Excel and Word in the productivity apps market might help with finding the answer.

Dave also asks on 2/10/2010 Can Microsoft Catch Up by Giving Away Azure? in InformationWeek’s Intelligent Enterprise blog:

Microsoft Azure finally went into production amidst much fanfare. The long-awaited Azure platform is Microsoft's big bet in the cloud computing space, but can they catch up to AWS, Salesforce.com, Google, and other existing cloud players?

That said, it is no coincidence that Microsoft is basically giving away Azure to deserving government scientists for three years in a program between Microsoft Corp. and the National Science Foundation. …

Once again Microsoft is late to the party. However, they continue to hold a special space in the hearts of many enterprises, a brand loyalty that most cloud computing providers just don't have. The concept here is to get as many users on the platform as possible, in the shortest amount of time. However, is that a good strategy for Microsoft?

If you look at the history of Microsoft they seem to get into games late, and still win. Their entrance into the emerging Web in the '90s was almost kicking and screaming after the Microsoft Network was released. However, once they set their sights on the Web, they owned the browser market after only a year.

The cloud is a bit different. Cloud computing providers have already established their presence in the market. It's going to be difficult to attack users who are already loyal to one or two of the larger players, that is... unless you're willing to give it away for free. …

I wouldn’t call ~$100/month for a single small Windows Azure instance “Giving Away Azure.”

Jim Nakashima’s Where to Find your Windows Azure Billable Usage Info offers a guided tour through the Microsoft Online Services’ Customers Portal maze:

This weekend I had someone email me asking me to blog about where to find the usage info used for billing because they were having a hard time finding it and figured others were having difficulty as well.

Coincidentally, I saw an internal thread this morning where someone was asking the very same question so... Erik, I believe you are correct, a post will probably be helpful for folks.

To find your usage info, go to:

https://mocp.microsoftonline.com (From the Developer Portal, you can click on the "Billing" link in the upper right hand corner.)

I'm going to walk through getting to the actual bill because I know some people get this far but get lost in some of these pages. …

My version of the trip is Determining Your Azure Services Platform Usage Charges of 1/28/2010.

The Innov8showcase team’s IT Architecture in 2010: Editor's Choice post of 2/8/2010 reports:

Diego Dagum, editor-in-chief at the Architecture Journal, has posted links to a set of articles that lay out the future for cloud computing:

- [ZDNet] The decade in tech: Top 5 stories of the 00s (video) From the Google IPO to the rise of social networking, it's been an important decade for tech innovation. Two IT content editors talk about the five most important tech events of the decade and what they mean for the technology industry going forward.

- [The Economist] Cloud Computing in the Times Ahead An interview with Brian Boruff, CSC's vice president of Cloud Computing and Software Services. CSC is a Gold Certified MS Partner.

- [Redmond Magazine] Microsoft, Google Debate Cloud Future Google Vice President of Research Alfred Spector and Microsoft Architect Evangelist Bill Zack debated their views on how data will be stored and shared in the future.

- Finally Released: Windows Azure platform, Microsoft's Own Cloud Windows Azure is now commercially available. Usage during the month of January 2010 will be at no charge. Customers to be charged from February 1st, 2010.

- [Information Week] The Top 10 CIO Issues For 2010 For CIOs, 2010 will require new emphases on customers, revenue, external information, and a passion for rapid change.

- [Datamation] It's Time for IT Managers to Adopt Cloud Computing The author of this opinion column considers that those who don't make the move in 2010, will not only be left behind, but will risk losing their jobs as well.

- [IT Business Edge] Fully Clouded by 2012? The author predicts that virtualization and cloud computing will bring an end to IT infrastructure at small and mid-sized organizations, who would outsource these resources to regional dedicated data centers.

- [Ars Technica] Microsoft and HP pump $250 million into cloud computing Microsoft and Hewlett-Packard announced a three-year partnership to simplify IT environments through a wide range of converged hardware, software, and professional services solutions. The goal is to deliver the "next generation computing platform" by leading the adoption of cloud computing.

<Return to section navigation list>

Cloud Security and Governance

Carl Brooks (@eekygeeky) interviews K. Scott Morrison in this Typical network security policies useless in the cloud, warns security pro post of 2/10/2010 to SearchCloudComputing.com:

K. Scott Morrison, CTO and chief architect for Layer 7 Technologies, has a pedigree that includes IBM and medical imaging at the University of British Columbia. He's a prolific author and expert on compliance, governance and standards-based application architecture and security. Morrison says that security should be the primary concern for cloud users and that they may be bringing more assumptions about security into the cloud than they actually realize.

Morrison recently presented SOA Federation Across the Extended Enterprise and Cloud with Forrester Research. He says that security has to be designed with an understanding as to what you're getting -- and what you're giving up.

Where are the concerns for building out into the cloud? It's easy and relatively cheap: all the virtual machines are out there, waiting to be used.

K. Scott Morrison: It's kind of a double whammy. Right now, certainly, you can take your app and build it on your own version of Red Hat or whatever. There's lots of that kicking around, but you've really got to know how to secure that app. When you think about it, the problem with moving out into the cloud is that everything you put out there has to be secured basically to the same level that you would secure an application in your DMZ.

In other words, you're out there in the Wild West and everything has to be hardened for potential attack from literally any angle, and that's hard stuff to do. It's rocket science stuff. …

Morrison also answers these questions in detail:

- Are people bringing assumptions about their own data centers, about what's inside and what's outside and where the edge is, out to the cloud and making mistakes this way? …

- A vastly higher level of risk than people assume even for accepted transmissions over the internet or email? …

- It's not all that bad, is it? Cloud providers do provide some basic protections, right? …

- Boundary of control is easy right now -- it stops at the edge of my network, which is where I start with security. If not the network, where do I set my boundary lines? …

- That's not trivial. Some applications don't play nice with others, not all services are designed to cough up all of the auditing that you want and so on? …

Jay Heiser asserts “[T]here ARE no best practices” for managing cloud security and risk in his If you can’t stand the heat, get your cloud out of the kitchen post of 2/9/2010 to the Gartner blogs:

… I receive several calls a week from people looking for the best practices on managing cloud computing security and risks. My quick response is that there ARE no best practices. That always leads to the question “well, what is everyone else doing?” My answer to this is lengthier and more complex, but it still boils down to the fact that nobody knows what the best practices are going to be. We have some ideas, but the only defensible statement is that this is a period of experimentation and it is too early for definitive conclusions.

Technology vendors, users groups, and governmental agencies are all anxious to provide some leadership in this area. Once the cloud dust clears, it’s possible that some technique or technology that is new today will be considered the ‘best practice’ for ensuring low risk use of cloud computing for the processing of sensitive data. Until then, temper your expectations.

The various forms of cloud computing are all new. It is a computing model with many hypothetical vulnerabilities, and when used as a multi-tenanted externally-provisioned service, it is undoubtedly the case that entire classes of failure have not yet been considered. …

Steve Clayton points to new Cloud security whitepapers in this 2/9/2010 post:

I just noticed Roger Halbheer has posted a couple of new whitepapers on Cloud Security that are not the usual techno megafest – these are more targeted at the executive summary level.

The first paper is written by Microsoft’s Trustworthy Computing organization and is a high-level overview of the Cloud and its security opportunities and challenges. It’s aptly titled Security in Cloud Computing Overview.

Cloud Computing Security Considerations is a CIO or CEO level paper and Roger is looking for feedback on this one – you can drop him a line at roger.halbheer@microsoft.com. I think it does a good job of covering compliance and risk management; identity and access management; service integrity; endpoint integrity; and information protection.

Joab Jackson reports “Get ready for client-side SQL injection attacks, one researcher warns” in his ShmooCon: Web app storage open to attack article of 2/8/2010 for Network World:

New forms of off-line client-side storage, such as those specified by the emerging HTML 5 set of standards, could open entirely new kinds of attacks to Web application users, said Michael Sutton, vice president of security research for cloud security firm ZScaler.

"As sites start to adopt Google Gears and HTML 5, this whole concept of stealing data from client-side relational databases will become a much, much bigger issue," said Sutton, speaking Sunday at the ShmooCon hacker conference in Washington. "In my opinion [they are] a lot easier to attack."

As ever more applications are being developed that run entirely over the Web, a number of new technologies have been introduced to put small relational databases on users' machines. A database on the client machine can store user data, allowing applications to be used while not on the Internet.

While such off-line storage extends the flexibility of Web applications, it also opens up an entirely new type of vulnerability for users, one that allows snoopers to copy and change the content of these databases, Sutton said.

Joab continues with Sutton’s description of a specific vulnerability of HTML 5 client-side RDBMSs to SQL injection attacks.
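The excerpt does not reproduce Sutton's specific attack, so the following is only a generic sketch of the underlying problem: a query against a local relational store that is built by concatenating untrusted text. It uses Python's built-in `sqlite3` module as a stand-in for a client-side database; the `notes` table, its contents, and both helper functions are hypothetical names chosen for illustration.

```python
import sqlite3


def make_db():
    """Create an in-memory database standing in for a client-side store."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE notes (owner TEXT, body TEXT)")
    conn.executemany(
        "INSERT INTO notes VALUES (?, ?)",
        [("alice", "alice's draft"), ("bob", "bob's private note")],
    )
    return conn


def find_notes_unsafe(conn, owner):
    # Vulnerable: untrusted input is spliced directly into the SQL text,
    # so a crafted value can change the meaning of the WHERE clause.
    query = "SELECT body FROM notes WHERE owner = '" + owner + "'"
    return [row[0] for row in conn.execute(query)]


def find_notes_safe(conn, owner):
    # Safer: the value is bound as a parameter and never parsed as SQL.
    query = "SELECT body FROM notes WHERE owner = ?"
    return [row[0] for row in conn.execute(query, (owner,))]


conn = make_db()
payload = "alice' OR '1'='1"  # hypothetical attacker-controlled string

unsafe_rows = find_notes_unsafe(conn, payload)
safe_rows = find_notes_safe(conn, payload)

print("unsafe query returned", len(unsafe_rows), "rows")
print("safe query returned", len(safe_rows), "rows")
```

With the concatenated query, the payload turns the filter into a condition that is always true, so every owner's rows come back; the parameterized version treats the whole payload as a literal owner name and matches nothing.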


Cloud Computing Events

Cloud Slam Event Announces the Second Annual, 5-Day, Global Virtual Conference, Covering All Aspects of Cloud, Taking Place April 19-23, 2010 in this 2/10/2010 press release:

Cloud Slam Event announces that Cloud Slam '10, a virtual conference with the mission of promoting Cloud Computing topics via conferences, news, and blogs, will commence on April 19.

Organized by leading experts and authorities in the field, Cloud Slam '10 will highlight research, developments and accomplishments by industry leaders whose contributions are helping to shape Cloud Computing. …

The five-day virtual conference will feature keynote speakers such as Matt Bross, Global CTO, Huawei Technology; Chris C. Kemp, CIO, NASA Ames Research Center; Parker Harris, EVP Technology, salesforce.com; Hal Stern, VP, Sun Microsystems, a subsidiary of Oracle Corporation; Mark de Simone, Chief Business Development Officer, Cordys; Simon Crosby, CTO Data Center & Cloud, Citrix; and Andy Brown, Managing Director, Global Technology Strategy, Architecture and Optimization, Bank of America. Cloud Slam '10 provides industry leaders and professionals an opportunity to learn about published research, unique or emerging ideas, and industry best practices, and to network with peers.

Mario Szpuszta reviewed his Azure presentation in a Cloud Computing & Windows Azure – MSDN Briefing in Austria from January 26th post of 2/10/2010:

On January 26th I presented at our MSDN-Briefing on Cloud Computing and Windows Azure. During the session I introduced our (and my) point-of-view on Cloud Computing. Within the demos I migrated my good old MicroBlog sample I created for a presentation at the technical university of Vienna last year to run on Windows Azure.

Downloads: presentation here, demo here

Codefest.at posting incl. links to recordings (German, only)

Here are the small challenges I ran into during the migration (please note that this is just a simple sample – for bigger apps you will definitely run into more issues – Rainer Stropek and I will talk about these in our session at Big>Days 2010 in March):

- MicroBlog was a Web Site project – Azure requires Web Application Projects. So I had to convert the project. For doing so, follow these steps:

  - Create a new Web Application Project in the same solution as you have your Web Site Project.

  - Copy all Contents from the Web Site Project to the Web Application Project.

  - Right-click the web application project and select “Convert to Web Application Project” from the context menu.

  - Fix remaining compile errors or other errors (namespace changes etc.)

- The ASP.NET Membership API and Roles API scripts and aspnet_regsql both do not work on SQL Azure because they’re using features not supported by SQL Azure. Fortunately Microsoft released a fixed set of scripts and a tool called aspnet_regsqlazure to solve this problem and make Membership API and Roles API available for SQL Azure: http://code.msdn.microsoft.com/KB2006191.

The remaining parts of the application worked pretty well; of course, I extended it during the briefing to use Blob and Table storage. But more on that, hopefully, in later posts.

Randy Bias reminds us on 2/9/2010 that CloudConnect 2010 is slightly over a month away:

If you’re not aware of it, CloudConnect 2010 is coming up on March 15-18th in Santa Clara, CA. Our team is running the Cloud Migrations track and a special breakout session on building Private Clouds. We managed to get some really great panelists for the Migrations track and we’re going to move from talking about the high level business issues like ‘how do I move to cloud?’ to in-depth technical discussions. In between, we’ll showcase some real world cloud migration stories (two great use cases teed up right now!). For the final panel we’ll discuss how internal and external clouds are built if you’re trying to understand “build vs. buy.” So, true to form with the rest of this blog there will be a good mix of both business and technical.

Hope you can join us. You can use the code “CNJRCC06” to get a free expo pass or 40% off the entire event. You can register here. We look forward to seeing you there.

The Cloud Computing Congress announces free Mobile Cloud Computing Workshops: Applications, Networks, Handsets & Social Media Apps in the Cloud at the Mobile Social Media forum to be held on 3/15/2010 at Olympia, London UK:

The workshops examine why mobile and cloud computing are the perfect fit, and how this can be used to generate the next generation mobile experience. The workshops also cover a practical demo of hybrid and mobile cloud computing, and how core functionality can be expanded to mobile devices for the cloud. View the free-to-attend workshops here.

Additional [exhibition] workshops at the event include cloud application development, Microsoft Azure overview and more. To register for the free-to-attend workshops, please go here. [Emphasis added.]

Co-located conference streams include: Social Media World Forum, Enterprise Social Media, and Social TV


Other Cloud Computing Platforms and Services

IBM announced the launch of its Academic Cloud to Speed Delivery of Technology Skills to College Students in a 2/10/2010 press release:

Today at a conference in New York, IBM (NYSE: IBM) announced it will make key parts of its software portfolio available in a cloud computing environment to more easily allow professors around the world to incorporate technology into their curricula. IBM brought together more than 200 academic and industry leaders at the conference to explore how best to integrate technology into all aspects of a college education so the next generation of global entrepreneurs will be differentiated by an education that applies technology to areas such as information management and business analytics, digitized patient records, and clean technologies.

IBM is working initially with 20 colleges and universities across the United States to help them use a new Academic Skills Cloud and will add additional schools over time. The new cloud will provide academia an opportunity to use IBM software at no charge without having to install and maintain it themselves. Cloud computing is a new consumption and delivery model inspired by consumer Internet services. Businesses are rapidly adopting cloud computing to use information technology and services over a secure network.

“The ability to apply technology will be essential to differentiate our graduates as they prepare to enter the work force,” says Jeff Rice, Executive Director of Career Management at Ohio State’s Fisher College of Business. “Fisher College has established multiple research centers where faculty, students, and business leaders can collaborate on contemporary business issues. Several of these centers focus on implementing technologies to improve business processes or commercializing new technologies. The convergence of faculty, students, and business in such educational environments is a winning formula for both higher education and the global economy.” …

(l. to r.) Dean Roger Norton, Marist College, with student Carol Hagedorn, and Jim Corgel, general manager of IBM's academic initiative. IBM today announced an academic cloud that will help colleges integrate technology into their curricula.

Oracle EMEA announced the Oracle Cloud Computing Forum on 2/23/2010 8:30 AM GMT at the Royal Opera House, 7 Bow Street, London WC2E 7AH, UK:

Take Advantage of Cloud Computing Today

Ready to break through the haze around cloud computing? In this full-day event for IT professionals, Oracle experts clarify how organisations can take advantage of enterprise cloud computing. You’ll learn the what, why, and how of cloud computing, so you can develop your organisation’s own cloud strategy and roadmap.

You’ll see real-world solutions in action and learn how Oracle is helping enterprises achieve breakthrough agility, quality of service, and efficiency, while controlling security, compliance, and cost.

Attend the Cloud Computing Forum to learn:

- How IT can become a private cloud service provider for your users

- How to evolve existing enterprise architectures to a cloud model

- How to leverage public clouds from providers like Amazon Web Services

Don’t miss this opportunity to learn how your organisation can break through with cloud computing.

I wouldn’t count on seeing Larry Ellison at the Forum. Amazon Web Services is a sponsor.

Michael Weinberg reports and asks Google Launches Developer Blog; Software Partner Program to Follow? in this 2/10/2010 post to the VAR Guy blog:

The VAR Guy speculated months ago that Google might launch a partner program for independent software vendors (ISVs). The fact that they just launched a Google Apps Developer Blog is just fuel for the fire. Here’s why.

Back in November 2009, Google Apps channel manager Jeff Ragusa pointed out that their APIs were open to any VAR or MSP who could figure out a way to leverage them to add value to their software as a service (SaaS) offerings. Well, now that Google also offers cloud storage, that ball is rolling even faster.

All the same, what sense does it make to launch a blog just for those developers building products around Google Apps unless they’re trying to reach out to them? And why reach out to ISVs now, unless you’re testing the waters to partner with them sooner rather than later?

To me, the question isn’t “will Google partner with software developers.” The question is “when will Google release a full-fledged cloud computing platform to compete with Amazon Web Services and Windows Azure?” They have the infrastructure, the know-how, and the business acumen. They’re cultivating a community of Google-loyal developers, as evidenced by this blog launch.

Why doesn’t Google give ISVs the whole sandbox, rather than a few SaaS toys?

Michael Sheehan’s Feature Release: GoGrid Dedicated Servers, List View, Edit Load Balancer & Others post of 2/10/2010 reports:

Today, the team at GoGrid is pleased to announce several new enhancements and features to our Cloud Infrastructure Hosting service. With us, it is all about trying to make our Cloud offering as powerful as possible. To that end, we have released our latest version of GoGrid, available now! Some highlights include (each of which I will go into further details later on in this post):

- GoGrid Dedicated Servers

- List View of GoGrid Objects

- Edit f5 Load Balancers

- New Login Page

- Self-Service Support Links

- Other Items …

GoGrid’s dedicated server monthly pricing is roughly competitive with Windows Azure hourly pricing, but doesn’t include an operating system or most Azure services:

| Server | Cores | RAM (GB) | Hard Drives | Setup | Month | Annual | Term |
|---|---|---|---|---|---|---|---|
| Standard | 4 | 8 | 2 x 320GB SATA RAID1 | $0 | $200 | $2,000 | 1 Month |
| Advanced | 8 | 12 | 2 x 500GB SATA RAID1 | $0 | $350 | $3,500 | 1 Month |
| Ultra | 8 | 24 | 5 x 147GB SAS RAID5 | $0 | $600 | $6,000 | 1 Month |
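To put the monthly prices above on the same footing as hourly cloud pricing, a rough conversion helps. The sketch below uses only the figures from the table; the 730-hour month (8,760 hours divided by 12) is an assumption, and the helper names are hypothetical.

```python
# Assumption: an average month has 8,760 / 12 = 730 billable hours.
HOURS_PER_MONTH = 730

# Prices in US dollars, copied from the GoGrid table above.
servers = {
    "Standard": {"cores": 4, "monthly": 200, "annual": 2000},
    "Advanced": {"cores": 8, "monthly": 350, "annual": 3500},
    "Ultra": {"cores": 8, "monthly": 600, "annual": 6000},
}


def hourly_rate(monthly):
    """Effective price per hour for a server billed monthly."""
    return monthly / HOURS_PER_MONTH


def per_core_hourly(monthly, cores):
    """Effective price per core-hour."""
    return hourly_rate(monthly) / cores


def annual_savings(monthly, annual):
    """Dollars saved by paying annually instead of twelve monthly bills."""
    return 12 * monthly - annual


for name, s in servers.items():
    print(
        f"{name}: ${hourly_rate(s['monthly']):.3f}/hour, "
        f"${per_core_hourly(s['monthly'], s['cores']):.4f}/core-hour, "
        f"${annual_savings(s['monthly'], s['annual'])} saved on annual billing"
    )
```

Under that assumption the Standard server works out to roughly $0.27 per hour, or about $0.07 per core-hour, and each annual price equals ten monthly payments.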

Amazon Web Services’ 2/10/2010 Newsletter announces:

- Consolidated Billing for AWS Accounts

- Versioning Support for Amazon S3 Now Available Across all Regions

- Lower Pricing for Outbound Data Transfer

- Amazon Elastic MapReduce Now Supports Job Flow Debugging via the AWS Management Console

Om Malik reports about an interview of Amazon CTO Werner Vogels on Amazon’s Web Services, Startups and Innovation post of 2/1/2010, which I missed when posted:

Over the past four years, I have watched with amazement the rise of Amazon Web Services. What started out as a basic S3 storage service and now includes a content delivery network and virtual private clouds has disrupted — and transformed — the entire technology landscape. Wall Street doesn’t know what to make of AWS, but here in startupville, its utility is pretty clear.

While there is a lot of talk about lean startups and agile startups, had it not been for Amazon’s web services, Internet startups would still be stuck spending on infrastructure without knowing if their service was ever going to be a hit. What if San Francisco-based online storage service Dropbox had been forced to start by building its own infrastructure, like back in the old days? It never would have been able to grow and scale as quickly as it has. More importantly, it would have had to spend hundreds of thousands of dollars to just hang its proverbial shingle. Instead, it used Amazon’s S3 storage service.

At the DLD Conference in Munich last week, over a cup of tea, I sat down for a casual chat with Werner Vogels, Amazon’s chief technology officer. I asked him about the company’s role as a catalyst for innovation. In classic Amazon style, he dismissed the notion, instead choosing to focus entirely on the “cloud.” …