Windows Azure and Cloud Computing Posts for 1/27/2010+

Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database (SADB)

- AppFabric: Access Control, Service Bus and Workflow

- Live Windows Azure Apps, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display it as a single article, then navigate to the section you want.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock).

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts, Databases, and DataHubs*”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated for the November CTP in January 2010.

* Content for managing DataHubs will be added as Microsoft releases more details on data synchronization services for SQL Azure and Windows Azure.

Off-Topic: OakLeaf Blog Joins Technorati’s “Top 100 InfoTech” List on 10/24/2009.

Azure Blob, Table and Queue Services

The ADO.NET Data Services Team (a.k.a. the OData Team) announced Data Services Update for .NET 3.5 SP1 – Now Available for Download on 1/27/2010:

We’re very excited to announce that the “Data Services Update for .NET Framework 3.5 SP1” (formerly known as “ADO Data Services v1.5”) has been re-released and is available for download; the issue with the previous update has been resolved. If your target is Windows7 or Windows 2008 R2 you can pick it up here. For all other OS versions you can get the release from here. This release targets the .NET Framework 3.5 SP1 platform, provides new client and server side features for data service developers and will enable a number of new integration scenarios such as programming against SharePoint Lists.

As noted in the release plan update post, this release is a redistributable, in-place update to the data services assemblies (System.Data.Services.*.dll) which shipped as part of the .NET Framework 3.5 SP1. Since this is a .NET Framework update, this release does not include an updated Silverlight client library, however, we are actively working on an updated Silverlight client to enable creating SL apps that take full advantage of the new server features shipped in this release. We hope to have the updated SL client available shortly into the new year. …

This final release includes all the features that were in the prior CTP1 release and CTP2 releases. …

The team goes on to detail a long list of features and delivers a brief FAQ. The question is “When will Storage Client support all these new features?”

Jerry Huang claims If you know C#, you know Windows Azure Storage in this 1/26/2010 post to his Gladinet blog:

I have been using Gladinet Cloud Desktop to manage files on the Azure Blob Storage for a while now. It has a drive letter, accessible from Windows Explorer and it works just like another network drive on my PC.

However, just like when you are driving a car on a daily basis but sometimes still curious about what is under the hood and check the oil level on weekends, I am curious about how Azure Storage works.

To dive into the Azure Storage, you will need the Azure SDK (Nov 2009 Release) to work with Visual Studio 2005 or VS08. VS 2010 will have Azure SDK built in.

First, you will need to have some basic knowledge about the Azure Blob Storage. As shown in the following picture, each Windows Live ID (Master Azure Account) can have multiple projects (Accounts). Each Account has multiple containers. Each container may have multiple Blobs. Each Blob may have multiple blocks. After you know this, the rest will be just C# and .NET. …
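The account, container, blob and block hierarchy Jerry describes can be modeled in a few lines. The following is only an in-memory Python sketch for illustration; it does not call the Azure SDK, and the class names (StorageAccount, Container, Blob) and the put_block helper are hypothetical names chosen here, not part of any Microsoft API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Blob:
    # A blob is assembled from an ordered list of blocks (raw byte chunks).
    blocks: List[bytes] = field(default_factory=list)

    def content(self) -> bytes:
        """Concatenate the blocks in order to produce the blob's bytes."""
        return b"".join(self.blocks)


@dataclass
class Container:
    # A container maps blob names to blobs.
    blobs: Dict[str, Blob] = field(default_factory=dict)


@dataclass
class StorageAccount:
    # An account maps container names to containers.
    containers: Dict[str, Container] = field(default_factory=dict)

    def put_block(self, container: str, blob: str, block: bytes) -> None:
        """Hypothetical helper: append a block, creating parents as needed."""
        c = self.containers.setdefault(container, Container())
        b = c.blobs.setdefault(blob, Blob())
        b.blocks.append(block)


account = StorageAccount()
account.put_block("photos", "cat.jpg", b"first-")
account.put_block("photos", "cat.jpg", b"second")
print(account.containers["photos"].blobs["cat.jpg"].content())
```

The real service adds authentication, block IDs and a commit step, none of which this sketch attempts to reproduce.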

Pablo Castro breaks a long silence about Astoria topics in his personal blog with an Implementing only certain aspects of OData post of 1/26/2010:

While we focus on keeping things simple, the whole OData protocol does have a bunch of functionality in it, and you don't always need the whole thing. If you're implementing a client or a server, how much of OData do you need to handle?

OData is designed to be modular and grow as you need more features. We don't want to dictate exactly everything a service needs to do. Instead we want to make sure that if you choose to do something, you do it in a well-known way so everybody else can rely on that. …

If you’re not up to date on Microsoft’s latest technology name metamorphoses, OData (O[pen]Data) is the new name for ADO.NET Data Services (formerly code-named Astoria). Pablo’s last Astoria-related post was in October 2009.

SQL Azure Database (SADB, formerly SDS and SSDS)

Liam Cavanagh reviews my new Data Sync post (see below) in his Sending Email Notifications Using SQL Azure Data Sync post of 1/28/2010 to the Microsoft Sync Framework blog:

I love to see when users take the products we have created and add extensions to them to suit their specific needs. Earlier this week I talked about how Hilton Giesenow extended SQL Azure Data Sync to create a custom synchronization application using VB.NET to allow him to do custom conflict resolution and get better control of events in his webcast series "How Do I: Integrate an Existing Application with SQL Azure?". Today, Roger Jennings of Oakleaf Blog pointed me to his overview of SQL Azure Data Sync in one of his latest posts: "Synchronizing On-Premises and SQL Azure Northwind Sample Databases with SQL Azure Data Sync".

I really like this latest blog post by Roger because not only does he walk through the capabilities of the tool from start to finish, but he also spends some time talking about one of the common issues users have had using the tool (I call it the "Dreaded ReadCred Issue"). But most of all I enjoyed reading how Roger took what had been done in Hilton's webcast and expanded it even further. At the end of the post Roger explains how you can add the ability to send email notifications when the synchronization process fails, succeeds or both. Definitely a very useful capability for a DBA. …

Many thanks, Liam.

My Synchronizing On-Premises and SQL Azure Northwind Sample Databases with SQL Azure Data Sync article of 1/28/2010 begins:

SQL Azure Data Sync[hronization] is an alternative to using SQL Server 2008 R2 Management Studio (SSMS) [Express] or the SQL Azure Migration Wizard (SQLAzureMW) v3.1.4+ for replicating schemas of on-premises and SQL Azure databases and bulk-loading a snapshot of data after creating the schema.

The most obvious application for SQL Azure Data Sync is automatically maintaining an on-premises backup of an SQL Azure database. Another common use is synchronizing databases stored in multiple data centers for disaster protection.

Note: This post is an addendum to the updated version of Chapter 13, “Exploiting SQL Azure Database’s Relational Features” of my Cloud Computing with the Windows Azure Platform book.

Contents:

- Committing to SQL Azure Data Sync

- Sync Framework Background and Prerequisites

- The Computing Environment Used for this Example

- Setting Up SQL Server Agent for Data Synchronization

- Adding the SMTP Server Feature and Enabling Database Mail

- Creating the On-Premises Northwind Sample Database

- Creating and Populating the NorthwindDS SQL Azure Database

- Running the SyncToSQLAzure-Sync_Northwind SQL Server Agent Job Manually

- Sending E-Mail Notifications when Data Sync Jobs Fail, Succeed or Both

- Working Around “ReadCred Failed” Errors when Running the SQL Agent Job

The post is lengthy and “lavishly illustrated.”

Rob Sanders describes building A Dynamic Data Website with SQL Azure and the Entity Framework in this 1/26/2010 post:

This is part of a series of entries written about Microsoft’s new SQL Azure database service and the Entity Framework v4.

Following on from my previous posts (check them out before continuing) – this article assumes you have followed steps outlined in the previous posts to create various models and accounts etc.

- Part 1 - Working with Entity Framework v4 and SQL Azure

- Part 2 - Next Steps with SQL Azure

- Part 3 - Putting it all together – SQL Azure

- Part 4 - (this post)

- Addendum - Getting a SQL Azure Database

… Our next step is to create a Dynamic Data website. If you haven’t come across this yet, it’s most likely because you haven’t been using Visual Studio 2010 or the .Net Framework 4.0. Recently introduced and compatible with both LINQ-to-SQL and the Entity Framework, this nice site template makes use of the dynamic nature of both LINQ-to-SQL [.dbml] (SqlMetal) and Entity Framework [.edmx] data models. …

AppFabric: Access Control, Service Bus and Workflow

Brian Loesgen continues his series with Azure Integration Part 3 - Sending a Message from an ESB off-ramp to Azure’s AppFabric ServiceBus of 1/25/2010:

This is the third post in this series. So far, we have seen:

- Post #1: Creating an ESB on-ramp that receives from Azure’s AppFabric Service Bus

- Post #2: Sending a Message from BizTalk to Azure’s AppFabric ServiceBus with a Dynamic Send Port

In this third post, we will see how to use an ESB off-ramp to send a message to the Windows Azure AppFabric ServiceBus. We will actually be doing the same thing as we did in the second post, however, we’ll be doing it in a different way.

There is an accompanying video for this post (as I did with the others too), which you can find here.

The sequence used here is:

- Message is picked up from a file drop (because that’s how most BizTalk demos start:))

- An itinerary is retrieved from the itinerary repository and applied to the message

- The itinerary processing steps are performed, and the message is sent to the ServiceBus

- The message is retrieved by the receive location I wrote about in my previous post

- A send port has a filter set to pick up messages received by that receive port, and persists the file to disk

The last two steps are not covered here, but are shown in the video.

Live Windows Azure Apps, Tools and Test Harnesses

My Determining Your Azure Services Platform Usage Charges post of 1/28/2010 is an illustrated tutorial for determining usage patterns before Microsoft begins charging for the platform services on 2/1/2010:

I see many questions from Azure Services Platform users about determining usage of Windows Azure compute, storage and AppFabric resources, as well as SQL Azure Databases, but seldom see an answer.

Here’s how to determine your daily usage of these resources:

1. Sign into Microsoft Online Service’s Customer Portal and log in with the Windows Live ID for your Windows Azure and SQL Azure services (click reduced-size images to display 1024x768 captures):

2. Click the View my Bills link (highlighted above) to open the Profile Orders page:

…

The post continues with the details of how to load the daily usage details into an Excel workbook.

Ben Riga’s Windows Azure Lessons Learned: RiskMetrics of 1/28/2010 continues his “Lessons Learned” series:

In this episode of “Azure Lessons Learned” Rob Fraser from RiskMetrics talks about the work they’ve done with Windows Azure to scale some of their heavy computational workloads out to thousands of nodes on Windows Azure.

RiskMetrics specializes in helping to manage risk for financial institutions and government services. The solution they built on Windows Azure is primarily for calculating financial risk for their clients. Calculating the risk on portfolios of financial assets is an incredibly compute-intensive problem to solve (Monte Carlo simulations on top of Monte Carlo simulations). There is an ongoing and increasing demand for this type of computation. RiskMetrics calculations require enormous computational power but the need for that power tends to come in peaks. That means the required hardware is idle for much of the time. Windows Azure solves this problem by allowing RiskMetrics to quickly acquire the very large number of required processors, use them for a short time and then release them.
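To show why this kind of workload spreads so easily across many nodes, here's a minimal Python sketch of a Monte Carlo risk estimate split into independent batches. It is not RiskMetrics' method: the normally distributed one-day returns, the 2% volatility, the eight batches and the function names simulate_batch and value_at_risk are all assumptions made for illustration.

```python
import random


def simulate_batch(seed: int, trials: int,
                   mu: float = 0.0, sigma: float = 0.02) -> list:
    """One independent work unit: simulate `trials` one-day portfolio returns.

    Assumes normally distributed returns, purely for illustration.
    """
    rng = random.Random(seed)  # a per-batch seed keeps batches independent
    return [rng.gauss(mu, sigma) for _ in range(trials)]


def value_at_risk(returns: list, confidence: float = 0.95) -> float:
    """Loss threshold exceeded in roughly (1 - confidence) of the trials."""
    ordered = sorted(returns)
    index = int((1.0 - confidence) * len(ordered))
    return -ordered[index]


# Each batch could run on a separate worker; here they simply run in a loop.
batches = [simulate_batch(seed, trials=10_000) for seed in range(8)]
all_returns = [r for batch in batches for r in batch]
var_95 = value_at_risk(all_returns)
print(f"95% one-day VaR: {var_95:.4f} of portfolio value")
```

Because no batch depends on another, the number of workers can grow during a peak and shrink afterward, which is the acquire-and-release pattern the excerpt describes.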

Channel 9: Windows Azure Lessons Learned: RiskMetrics

To give you a sense of the scale RiskMetrics is talking about, the initial target is to use 10,000 worker roles on Windows Azure. And that’s just a beginning as Rob thinks they could eventually be using as many as 30,000.

John Moore delivers an Update: Siemens Brings HealthVault to Europe in this 1/28/2010 analysis of the Siemens/Microsoft agreement:

Today, Siemens announced that it has struck a deal with Microsoft to create a German instance of the HealthVault platform to serve the citizens of Germany. In a deal similar to the one that Microsoft struck with Canadian telecom, Telus, Siemens IT Solutions and Services (SIS) will re-purpose the base HealthVault platform to meet Germany’s legal framework for Personal Health Information (PHI) and seek German partners to create a rich ecosystem of data providers (insurers, providers) and apps/services to serve this market.

After a joint briefing with Microsoft and Siemens as well as interviews with three German software firms, SAP (one of the world’s largest enterprise software companies), ICW (healthcare IT infrastructure & PHR solutions) and careon (case/disease mgmt & PHR solutions), here is the scoop:

The Skinny:

Siemens SIS is 38,000 employees strong operating in 20 countries with about 10% of those employees dedicated to the healthcare market. In addition to a deep presence in Germany, Siemens SIS supports CareNet in Belgium – leading one to conclude that Belgium may be the next extension of this agreement with Microsoft.

This is an exclusive license between Microsoft and Siemens to serve the German market and both companies stated that this is a very long-term contract as it will take years to develop, deploy and gain traction. Terms of agreement were not disclosed, but both companies will share in revenue generated.

Target market/business model is to sell the HealthVault service to potential sponsors that have a desire to improve care and disease management. Likely candidates include payers and employers. Hospitals are also a potential target market.

Service will go live in second half of 2010 and include the entire HealthVault platform, including Connection Center for biometric devices. Existing HealthVault ecosystem partners with solutions pertinent to the German market will be included and Siemens is currently in discussions with many eHealth companies in Germany to on-board them as well upon formal launch of the platform later this year. …

John continues with detailed “Impressions, Prospects, Challenges” and “The Wrap” sections.

David Linthicum writes “There are three models of cloud computing, and the one you use determines the kind of performance you get” as a preface to his How to gauge cloud computing performance post of 12/28/2009:

Does cloud computing perform well? That depends on whom you ask. Those using SaaS systems and dealing with standard Web latency can't tell you much about performance. However, those using advanced "big data" systems have a much different story to relate.

You need to consider the performance models, which you can break into three very basic categories:

- Client-oriented (performance trade-off)

- Cloud-oriented (performance advantage)

- Hybrid (depends on the implementation)

Dave continues with analyses of the three models.

Vijay Rajgopalan reports New version of Zend Framework adds support for Microsoft Windows Azure in this 1/28/2010 post to the Interoperability@Microsoft blog:

Zend Technologies Inc. has announced the availability of Zend Framework 1.10, which among other new features includes support for Microsoft Windows Azure cloud services. We’re very excited about this key milestone, which is the result of a fruitful collaboration! This particular project started last year when we announced the Windows Azure SDK for PHP CTP release and upcoming support in Zend Framework. I also want to thank again Maarten Balliauw who has been a key contributor to the initial project.

With the new Zend Framework 1.10, by simply using the new Zend_Service_WindowsAzure component, developers can easily call Windows Azure APIs from their PHP applications and leverage the storage services, including Blob Storage, Table Storage and Queue Service, offering them a way to accelerate web application development and scale up on demand.

With this announcement, PHP Developers now have a great choice when it comes to writing web applications targeting Windows Azure. Besides the Windows Azure SDK included in Zend Framework, there is Windows Azure SDK for PHP which is already prepackaged in Windows Azure tools for Eclipse and the simpler Simple Cloud API. …

The Windows Azure Team reports Jordan Brand Social Mosaic Goes Live with Windows Azure Cloud Services on 1/27/2010:

We love sharing stories about the interesting and creative ways that customers are using Windows Azure Platform. One great new story about a cool new implementation is the Mosaic 23/25 built by Wirestone for Jordan Brand, a division of Nike, Inc., to celebrate their 25-year anniversary during the NBA 2010 All-Star Weekend. Jordan Brand is leveraging Microsoft solutions to engage consumers to creatively market their new "Air Jordan 2010" collection and enable enthusiasts to personally connect with the Jordan Brand. …

Some of the underlying capabilities of the Windows Azure platform used to support the Social Mosaic include:

- Windows Azure Blob Storage - The natural place to store the uploaded photos and resultant deep zoom imagery.

- Windows Azure Queue Storage - Used to provide an asynchronous mediation point between Azure Blob Storage and backend Worker Roles; also used to queue email notifications.

- Windows Azure Table Storage - Used to quickly and efficiently store data around Worker Role scale out coordination, leveraging atomic change guarantee patterns.

- SQL Azure - Used to relationally store other important schema governed data, thus providing a more rich functionality to manage select data.
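The queue-as-mediator pattern in the list above can be sketched with Python's standard library. This is an in-process illustration only, assuming a dict as a stand-in for Blob Storage, queue.Queue as a stand-in for Queue Storage and threads as stand-ins for Worker Roles; the names upload_photo and worker are hypothetical, and none of this is the Social Mosaic's actual code.

```python
import queue
import threading

blob_store = {}              # stand-in for Blob Storage: name -> bytes
work_queue = queue.Queue()   # stand-in for Queue Storage
results = {}                 # processed output, keyed by blob name
results_lock = threading.Lock()


def upload_photo(name: str, data: bytes) -> None:
    """Front end: store the photo, then enqueue a small message naming it."""
    blob_store[name] = data
    work_queue.put(name)


def worker() -> None:
    """Back end: pull messages until a None sentinel arrives."""
    while True:
        name = work_queue.get()
        if name is None:
            work_queue.task_done()
            break
        processed = blob_store[name].upper()  # placeholder for image work
        with results_lock:
            results[name] = processed
        work_queue.task_done()


workers = [threading.Thread(target=worker) for _ in range(3)]
for t in workers:
    t.start()

for i in range(5):
    upload_photo(f"photo-{i}", f"pixels-{i}".encode())

work_queue.join()            # wait until every message has been processed
for _ in workers:
    work_queue.put(None)     # tell each worker to stop
for t in workers:
    t.join()

print(sorted(results))
```

The point of the design is that the front end never waits on image processing; it only writes a blob and a short message, and the number of workers can change without touching the upload path.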

Head to the Social Mosaic and see for yourself how these great technologies are working to help create a global groundswell of brand advocacy for Jordan Brand, and how it brings the Jordan Brand experience to life.

Nancy Gohring reports “The company is working on moving its child exploitation app to the cloud” in her Ballmer: The cloud will bring new apps to law enforcement post of 1/27/2010 to NetworkWorld:

A move to the cloud will enable new kinds of applications that public safety and law enforcement agencies can use to do their jobs better, Microsoft’s CEO said during its annual Worldwide Public Safety Symposium on Wednesday.

“It’s fantastically important,” Microsoft CEO Steve Ballmer said at the event at the company’s Redmond, Washington, headquarters. “The cloud isn’t just about cost and efficiency but building a whole new generation of applications that will be far more able to get the job done than anything that would have been able to be built in yesterday’s model.”

Microsoft’s Child Exploitation Tracking System is an example of how moving applications to the cloud can advance an application. CETS is a software product that law enforcement officers use to search, share and analyze evidence in child exploitation cases across police agencies.

CETS is deployed in 10 countries including Canada and Spain. However, data in CETS is not shared across borders.

Microsoft is now working with Interpol to explore moving CETS to the cloud so that agencies in different countries can share data, Ballmer said. “If ever there was an application that would benefit from coming to the cloud so data could be shared by law enforcement in multiple jurisdictions, this is the app,” he said. Criminals involved in child exploitation typically work across borders, meaning law enforcement often loses track of perpetrators as they cross borders. “This highlights why a move to the cloud makes a difference,” Ballmer said. …

Eric Nelson reports the Results of Cloud Computing Survey - Part 1: Is Cloud relevant? in this 1/27/2010 post:

On January 7th 2010 I kicked off a survey on Cloud Computing and the Windows Azure Platform (Now closed). A big thanks to the 100 folks who completed the survey. I have been through the results and removed a few where folks clearly dropped out after page 1 (which is fine – but I felt it wasn’t helping the results).

I promised to share the results which I will do over four posts. This is the first of those four.

- Part 1: Is Cloud relevant?

- Part 2: How well do you know the technologies of Microsoft, Amazon, Google and SalesForce?

- Part 3: What Plans around the Windows Azure Platform?

- Part 4: My analysis

Some observations:

- I am a .NET developer (well, I try to be), therefore my expectation is that most folks replying would in the main be .NET developers. This obviously means the results will end up favouring MS technologies.

- I am UK based, hence most of the respondents are from the UK.

- At the time of creating the survey I had only just switched to Azure. Hence I think my “readership” at that point were not “Azure fans” or “Cloud fans” – instead they were likely a cross section of the development landscape. Which I think makes the answer to question 2 very interesting. …

Eric continues with screen captures of replies to the questionnaire.

Jim Nakashima’s Windows Azure Debugging: Matching an Instance in the DevFabric to its Process tutorial of 1/26/2010 begins:

One of the things I run into from time to time is the need/desire to match an instance I’m watching in the Development Fabric UI back to its process.

This may be because I am sitting on a breakpoint in Visual Studio and want to look at the corresponding logs for that process in the DevFabric UI or because I want to use some non Windows Azure aware tool that requires a process ID.

For example, given a Web and a Worker role with 3 instances each, I can watch the instances in the development fabric UI by right clicking on the Windows Azure task tray icon and selecting “Show Development Fabric UI”:

This is useful for a couple of reasons, sometimes I want to double check the status of my roles and instances but more commonly I want to watch the logs on a per instance basis. …

Hovhannes Avoyan asks “But who’s actually got some form of cloud solution working for them?” as the introduction to his Companies Recognize Importance of Cloud, But Minority Act post of 1/26/2010:

Symantec’s cloud survey was conducted by Applied Research and polled 1,780 respondents globally at companies with at least 1,000 employees.

Some interesting points about cloud computing were made in Symantec’s recent 2010 State of the Data Center survey. Basically, the report offers a lot of statistics that say companies think cloud computing is an important priority but that actual deployments and activity remain pretty low.

For example, more than half surveyed said cloud computing was an important priority for this year, according to a report I read about the survey. Among them, 57% said private cloud computing is either somewhat or absolutely important; 54% had the same assessment about hybrid cloud computing; and 53% said the same about public cloud computing.

But who’s actually got some form of cloud solution working for them? Only about 20%, apparently – using it as a cost-containment strategy. Of those, just under one-quarter used private cloud computing last year to cut or tighten costs, while 22% relied on a hybrid cloud solution and only one in five used public cloud computing. …

Howard D. Smith’s Dynamax digital signage on Azure post of 1/26/2010 reports:

Dynamax Technologies is to launch a new digital signage application using the Windows Azure platform. digitalsignage.NET will enable customers to securely access their digital signage system from anywhere in the world. It also supports easier administration, whilst removing from the user the arduous task of maintaining servers and technical infrastructure.

"The Windows Azure platform provides greater choice and flexibility in how we develop and deploy scalable, but easy-to-use digital signage solutions, to our world wide partners and customers,” said Howard D. Smith, Director and CTO at Dynamax. “There has always been the issue of scalability and resilience with traditional hosting technologies for SaaS solutions; Windows Azure helps us to address that.

“digitalsignage.NET by dynamax automates and simplifies critical processes such as managing your own servers and data centers, managing security and scalability planning. It is designed for all levels of users; from the single screen deployment right through to enterprise level deployments, all on a simple low cost subscription pricing model. Built on ASP.NET technologies and designed from the ground up to be an easy-to-use digital signage cloud application, digitalsignage.NET by dynamax, we feel, will herald a new benchmark in the digital signage market.”

“Through the technical and marketing support provided by the Front Runner program, we are excited to see the innovative solutions built on the Windows Azure platform by the ISV community,” said Doug Hauger, general manager for Windows Azure Microsoft. “The companies who choose to be a part of the Front Runner program show initiative and technological advancement in their respective industries.”

Wikipedia says: “Digital signage is a form of electronic display that shows information, advertising and other messages.”

Lydia Leong analyzes the Microsoft/Intuit Windows Azure/QuickBooks partnership in her Cloud ecosystems for small businesses post of 1/26/2010:

As I’ve been predicting for a while, Microsoft and Intuit have joined forces around Quickbooks and Azure: Microsoft and Intuit announced that Intuit would name Microsoft’s Windows Azure as the preferred platform for cloud app development on its Intuit Partner Platform. This is an eminently logical partnership. MSDN developers are a critical channel for reaching the small business with applications, Azure is evolving to be well-suited to that community, and Intuit’s Quickbooks is a key anchor application for the small business. Think of this partnership as the equivalent of Force.com for the small business; arguably, Quickbooks is an even more compelling anchor application for a PaaS ecosystem than CRM is. …

Whatever your business is, if you want to create a cloud ecosystem, you need an anchor service. Take something that you do today, and leverage cloud precepts. Consider doing something like creating a data service around it, opening up an API, and the like. (Gartner clients: My colleague Eric Knipp has written a useful research note on this topic entitled Open RESTful APIs are Big Business.) Use that as the centerpiece for an ecosystem of related services from partners, and the community of users.

Michael Coté’s Using the Intuit Partner Platform, Alterity’s story – RIA Weekly #69 post of 1/26/2010 to the Enterprise Irregulars blog delivers his latest podcast:

In this episode, I talk with Alterity’s Brian Sweat about launching their new application, Easy Analytics for Inventory, on the Intuit Partner Platform. We talk about IPP, Flex, cloud, analytics, and the ready to go QuickBooks customer-base of 4.5 million users – a pretty exciting setup for one development team’s story on using RIAs and the cloud. [Emphasis added.] …

Michael adds:

If you’re interested in an overview of IPP, I suggest last week’s episode with Intuit architect Jeff Collins.

Adam Bird explains Automating Azure deployment with Windows PowerShell in this 1/22/2010 post:

One of the pre-requisites I had for using Azure was that it could be deployed automatically as part of an integration and deployment process.

A quick scan through the Labs in the Windows Azure Platform Kit, which by the way have been an excellent resource so far, gave me Windows PowerShell as the option.

It proved pretty easy to get up and running. Here's my quick start guide. …

Windows Azure Infrastructure

James Urquhart reports about feedback on his original proposal in his Payload descriptor for cloud computing: An update post of 1/28/2010 to C|Net News’ The Wisdom of Clouds blog:

Recently, I outlined my thoughts around simplifying application delivery into cloud-computing environments. At the time, I thought what was needed was a way to package applications in a universal format, whether targeted for infrastructure or platform services, Java or Ubuntu, VMs or disk drives.

The core concept was to define this format so that it combines the actual bits being delivered with the deployment logic and run-time service level parameters required to successfully make the application work in a cloud. …

Thankfully, I received tremendous feedback on the application packaging post, both in the comments on CNET and from a large number of followers on Twitter. The feedback was amazing, and it forced me to reconsider my original proposal.

…

Brenda Michelson reports Enterprise Management Associates: CapEx reduction is largest, but not sole, cloud computing benefit in this 1/28/2010 post:

Each time I think I have my cloud computing survey list set, another is released. The latest is from Enterprise Management Associates, in a report entitled The Responsible Cloud. The report price is outside of my price range, but Data Center Knowledge provides a good summary.

The survey sample:

“Enterprise Management Associates (EMA) interviewed 159 enterprises with active, or immediately planned cloud deployments, and reports that 75 percent said private cloud is the preferred model. Fifty two percent are implementing both on-premises and off-premises clouds…”

The key findings, according to Data Center Knowledge:

“Of the enterprises already running cloud computing, lowered IT capital costs (hardware, facilities, licenses, etc.) was cited by 61% of respondents. One quarter of all respondents reported that they had reduced both capital expenditure and operational expenditures such as staff, power, rent and maintenance costs.”

Her article continues with more findings from the Data Center Knowledge post.

Lori MacVittie writes “I haven’t heard the term “graceful degradation” in a long time, but as we continue to push the limits of data centers and our budgets to provide capacity it’s a concept we need to revisit” as an introduction to her How to Gracefully Degrade Web 2.0 Applications To Maintain Availability post of 1/27/2010:

You might have heard that Twitter was down (again) last week. What you might not have heard (or read) is some interesting crunchy bits about how Twitter attempts to maintain availability by degrading capabilities gracefully when services are over capacity.

“Twitter Down, Overwhelmed by Whales” from Data Center Knowledge offered up the juicy details:

The “whales” comment refers to the “Fail Whale” – the downtime mascot that appears whenever Twitter is unavailable. The appearance of the Fail Whale indicates a server error known as a 503, which then triggers a “Whale Watcher” script that prompts a review of the last 100,000 lines of server logs to sort out what has happened.

When at all possible, Twitter tries to adapt by slowing the site performance as an alternative to a 503. In some cases, this means disabling features like custom searches. In recent weeks Twitter.com users have periodically encountered messages that the service was over capacity, but the condition was usually temporary. For more on how Twitter manages its capacity challenges at times of heavy load, see Using Metrics to Vanquish the Fail Whale.

I found this interesting and refreshing at a time when the answer to capacity problems is to just “go cloud”, primarily because even if (and that’s a big if) “the cloud” was truly capable of “infinite scale” (it is not) it is almost certainly a fact that most organizations’ budgets are not capable of “infinite payments” and cloud computing isn’t free. …
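The degrade-before-failing approach described in the excerpt can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the thresholds, the feature names other than the custom-search example from the quote, and the `plan_response` function are hypothetical and do not describe Twitter's actual implementation.

```python
from dataclasses import dataclass

# Hypothetical thresholds, expressed as the fraction of capacity in use.
DISABLE_OPTIONAL_AT = 0.80   # start shedding non-essential features
REJECT_AT = 1.00             # nothing left to shed: answer with a 503

# Hypothetical feature names; "custom_search" echoes the example in the quote.
CORE_FEATURES = ("timeline", "post_update")
OPTIONAL_FEATURES = ("custom_search", "trending_topics")


@dataclass
class Response:
    status: int
    features: tuple


def plan_response(load: float) -> Response:
    """Choose what to serve for a given load (0.0 = idle, 1.0 = saturated)."""
    if load >= REJECT_AT:
        # Last resort: the "Fail Whale" case.
        return Response(status=503, features=())
    if load >= DISABLE_OPTIONAL_AT:
        # Degrade: keep the core service up and drop the expensive extras.
        return Response(status=200, features=CORE_FEATURES)
    return Response(status=200, features=CORE_FEATURES + OPTIONAL_FEATURES)
```

The point of the design is that the 503 branch is reached only after the cheaper option, turning off optional features, has been exhausted.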

Kevin Jackson writes “Available now for pre-order on Amazon, this guide is a crystal ball into the future of business” as an introduction to his Review: Executive's Guide to Cloud Computing by Eric Marks and Bob Lozano of 1/27/2010:

Recently, I had the privilege of reviewing an advance copy of Executive's Guide to Cloud Computing by Eric Marks and Bob Lozano.

Available now for pre-order on Amazon, this guide is a crystal ball into the future of business.

Not a technical treatise, this excellent book is an insightful description of how cloud computing can quickly sharpen the focus of information technology and line executives onto the delivery of real value.

Using clear prose, Eric and Bob explain how cloud computing elevates IT from its traditional support role into a new and prominent business position.

The Windows Azure Support Team announced Dallas Feature Voting and reminded folks to vote for Windows Azure and SQL Azure features in We would love to hear your Azure Ideas of 1/26/2010:

Do you want to have a say in the future of Azure? Do you have a great idea that would really make one of the Azure products really great? Well we would love to hear from you. You can submit your ideas on http://www.mygreatwindowsazureidea.com.

You can also vote on any of the existing suggestions to help bring awareness to the great idea. The Azure teams will be monitoring this site and looking to add additional features into future versions of the products.

There is also a place for SQL Azure Feature Voting and Dallas Feature Voting.

John Treadway asserts in his Private (external) Clouds in 2010 post of 1/26/2010:

At the enterprise level, the interest in private clouds still exceeds serious interest in public clouds. Gartner and others predict that private cloud investments in the enterprise will exceed public cloud through 2012. In my conversations with people, there appears to be some confusion as to just what is a private cloud, where you might find them, and how they can be used.

My definition of what distinguishes a private cloud from a public cloud is very simple — tenancy at the host level. If more than one organization is sharing the physical infrastructure, it’s Public. If it’s just you on the box – it’s Private. It’s not about where it runs, because some of my smarter cloud colleagues in the industry believe that the hottest deployment model for private clouds this year will be external — in someone else’s data center. That means that “most” private cloud deployments in 2010 could very well NOT be inside the corporate firewall. [Emphasis added.] …

Dustin Arnheim is “Defining elastic application environments” in his What Does Elastic Really Mean? article of 1/26/2010:

In terms of cloud computing in application environments, elasticity is perhaps one of the more alluring and potentially beneficial aspects of this new delivery model. I’m sure that to many of those responsible for the operational and administrative aspects of these application environments, the idea that applications and associated infrastructure grow and shrink based purely on demand, without human intervention mind you, sounds close to a utopia. While I would never dispute that such capability can make life easier and your environments much more responsive and efficient, it’s important to define what elasticity means for you before you embark down this path. In this way, you can balance your expectations against any proposed solutions. …

Steve Clayton reported on a speech Microsoft’s Brad Smith gave in Brussels on 1/26/2010 in The cloud in Europe – challenges and opportunities of the same date:

Brad Smith, Senior Vice President and General Counsel at Microsoft gave a speech titled “Technology leadership in the 21st century: How cloud computing will change our world”. It follows a similar speech he gave last week in the US about building confidence in the cloud. In that speech, he made some direct requests of US Congress and US industry to act together. He called for both bodies to act to provide a “safe and open cloud” and for the US Congress to deliver a Cloud Computing Advancement Act. Big, bold words and frankly it’s what customers should be demanding of all cloud vendors, not just Microsoft. There is silver in the cloud for sure, but wherever there are riches, there are inevitably those looking to profit illegally. While many consumers put blind faith in the cloud, Government and industry can’t afford to do so. There are other challenges regarding policy that I’ll touch on below.

In today’s speech in Brussels, Brad focused on a number of areas – some very similar, but some new themes too. He talked about the huge potential the cloud holds for small and medium businesses in terms of economic growth and job creation. Put simply, the cloud enables small guys to have IT operations just like big guys and pay only for what they use.

The Etro Study, “The Economic Impact of Cloud Computing on Business Creation, Employment and Output in Europe” concluded that the adoption of cloud computing solutions could create a few hundred thousand new small-and medium-sized businesses in Europe, which in turn could have a substantial impact on unemployment rates (reduced by 0.3/0.6 %) and GDP growth (increased by 0.1/0.3%). The study also concluded that these positive benefits will be “positively related to the speed of adoption” of cloud computing. …

David F. Carr’s 5 Things You Need to Know about Platform as a Service of 1/25/2010 expands on the following points:

- It's like glue. …

- You can test it now. …

- Start small. …

- Scalability isn't guaranteed. …

- Platforms aren't Portable. …

What’s more interesting about this CIO.com post is that the San Francisco Chronicle picked it up for its “Business” section. Unlike many other authors of these short summaries, Carr includes Windows Azure in his analysis.

Joel York analyzes Cloud Computing vs. SaaS – Mass Customization in the Cloud in this 1/25/2010 post:

SaaS Do #8 Enable Mass Customization is a core principle for building SaaS applications. Salesforce.com, for example, has taken it to new heights with offerings such as the Force.com platform. However, do SaaS-based development platforms such as Force.com represent a fundamental shift in application development, or are they simply the SaaS equivalent of Microsoft Visual Basic for Access? How do they stack up against cloud computing platforms like Amazon Web services? This post examines the potential for competitive advantage through mass customization in cloud computing vs. SaaS.

The short answer is this…

Mass customization in cloud computing is more natural, more flexible, and offers more potential for competitive advantage than in the wildest dreams of SaaS, because cloud computing is built on Web services that are a) inherently abstracted, b) independent components and c) accessible at every layer of the technology stack. …

<Return to section navigation list>

Cloud Security and Governance

Ellen Rubin writes “To codify data security and privacy protection, the industry turns to auditable standards” as a preface to her Security vs. Compliance in the Cloud post of 1/28/2010:

Security is always top of mind for CIOs and CSOs when considering a cloud deployment. An earlier post described the main security challenges companies face in moving applications to the cloud and how CloudSwitch technology simplifies the process. In this post, I’d like to dig a little deeper into cloud security and the standards used to determine compliance.

To codify data security and privacy protection, the industry turns to auditable standards, most notably SAS 70 as well as PCI, HIPAA and ISO 27002. Each one comes with controls in a variety of categories that govern operation of a cloud provider’s data center as well as the applications you want to put there. But what does compliance really mean? For example, is SAS 70 type II good enough for your requirements, or do you need PCI? How can your company evaluate the different security claims and make a sound decision?

Ellen continues with detailed discussions about:

- SAS 70 (Types I and II)

- PCI (and Its HIPAA Component)

- Compliance Building Blocks

- Deploying to the Cloud

Lori MacVittie asserts “Using HTTP headers and default browser protocol handlers provides an opportunity to rediscover the usability and simplicity of the mailto protocol” in her How to Make mailto Safe Again post of 1/28/2010:

Over the last decade it's become unsafe to use the mailto protocol on a website due to e-mail harvesters and web scraping. No one wants to put their e-mail address out on teh Internets because two minutes after doing so you end up on a trillion SPAM lists and the next thing you know you're changing your e-mail address.

But people still wanted to share contact information, so it became common practice to spell out your e-mail address, such as l.macvittie AT F5 dot com. But e-mail harvesters quickly figured out how to circumvent that practice so people got even more inventive, describing how to type the @ sign instead. For example, you can send me an e-mail at l.macvittie SHIFT 2 f5.com. But that's inconvenient and isn't easily automated, and eventually the e-mail harvesters figure that one out, too. …

Lori goes on to explain three solutions.
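To see why the spelled-out addresses quoted above offer little protection, consider a minimal sketch of the kind of pattern matching a harvester might apply. The regular expression and the `deobfuscate` function are illustrative assumptions; they are not taken from Lori's post and do not describe any particular harvesting tool.

```python
import re

# Illustrative pattern: a user name, the word "AT", then domain labels
# joined either by the word "dot" or by a literal period.
OBFUSCATED = re.compile(
    r"(?P<user>[\w.+-]+)\s+AT\s+(?P<domain>\w+(?:\s+(?:dot|DOT)\s+\w+|\.\w+)+)"
)


def deobfuscate(text: str) -> list[str]:
    """Return plain addresses recovered from 'user AT host dot tld' spellings."""
    found = []
    for match in OBFUSCATED.finditer(text):
        # Turn each spelled-out "dot" back into a period.
        domain = re.sub(r"\s+(?:dot|DOT)\s+", ".", match.group("domain"))
        found.append(f"{match.group('user')}@{domain}")
    return found
```

A handful of lines is enough to undo the "AT ... dot" convention, which is consistent with the excerpt's observation that harvesters caught up with it quickly.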

David Linthicum explains Why design-time service governance makes less sense in the cloud in this 1/26/2010 post:

I seem to have struck a nerve with my post "Cloud computing will kill these 3 technologies," including my assertion that design-time service governance would fall by the wayside when considering the larger cloud computing picture. Although this is unwelcome news for some, the end result will still be the same and a prediction I stand by.

<Return to section navigation list>

Cloud Computing Events

Andrew Coates reports on 1/28/2010 that “The one and only Dave Lemphers is coming back to Australia for a week at the end of February” for Windows Azure User Group Briefings:

The one and only Dave Lemphers is coming back to Australia for a week at the end of February for a whirlwind tour of the country to coincide with the launch in Australia of Windows Azure. He'll be doing public technical briefings hosted by the user groups in 5 capital cities.

Register Here for the 2/22/2010 session in Adelaide.

If/when there are registration links for the events in the other 4 cities, I'll update them here. Otherwise, just rock up at the date/time above.

For more details about Windows Azure in Australia, see Greg Willis' post from last month.

John McClelland announced Live Meeting: Is Azure Right For My Business? Webcast for 2/25/2010 in this 1/28/2010 post:

Did you miss the Azure roadshow for Microsoft Partners in December? Are you still pondering the business opportunity behind Azure? Well block off 2-hours on your calendar today to attend our Live Meeting presentation of the roadshow content on Thursday, February 25th. Gather up your team for an extended lunch to learn from leading edge partners on what the Windows Azure opportunity can mean for your business. For more information and to register for the event go to https://swrt.worktankseattle.com/webcast/3936/preview.aspx. …

John is a Principal Partner Evangelist with Microsoft's U.S. Developer and Platform Evangelism team.

Matt Deacon reported Microsoft Architect Insight Conference 2010 – registration open! in this 1/28/2010 post:

The 5th annual Microsoft Architect Insight Conference is now open for registration!

This year we’re moving back to the full 2-day, 3-track format of previous years but with the flexibility to choose whether to attend one or both days.

Day 1 will focus on Architecting Today: From Cost to Innovation

Day 2 will focus on Architecting Tomorrow: Implications of Cloud

There are a pile of cracking key notes (see some of our headliners below) and the breakouts will focus on the needs of the Enterprise, Solution and Infrastructure architect, on top we are planning a series of interactive tracks so we can delve deeper into the topics addressed in the main break outs!

- Iain Mortimer, Chief Architect, Merrill Lynch Bank of America

- Andy Hopkirk, Head of Projects and Programmes and Director e-GIF Programme, NCC

- David Sprott, CEO, Everware-CBDi International and Founder CBDi Forum

- Ivar Jacobson, Ivar Jacobson International

- Kim Cameron, Chief Architect of Identity and Distinguished Engineer, Microsoft Corp

- Steve Cook, Software Architect, Visual Studio Team System, Microsoft Corp

The conference will be held at Microsoft London (Cardinal Place), 100 Victoria Street, London SW1E 5JL on 3/31/2010 and 4/1/2010.

David Terrar reported in EuroCloud UK members making sense of Cloud standards and security of 1/28/2010:

The newly formed EuroCloud UK group held their first member meeting a week ago at the Thistle City Barbican Hotel – a panel led group discussion on Cloud standards and security. Chaired by Phil Wainewright, the panel experts were Dr. Guy Bunker, independent consultant and blogger, formerly Symantec’s chief scientist and co-author of ENISA’s cloud security assessment document, Ian Moyse, Channel Director of SaaS provider Webroot, and Adrian Wright, MD, Secoda Risk Management, formerly global head of information security at Reuters.

In the spirit of cooperation we had invited Lloyd Adams from Intellect and Jairo Rojas from BASDA because we want to ensure that the three UK Cloud and SaaS vendor groups keep in close contact and try to coordinate their various deliverables and activities as much as is practical. In addition we invited Richard Anning who heads the ICAEW’s IT Faculty. As I’ve reported before, Phil, Jairo, Richard and I have been in discussions, triggered by Dennis Howlett, about trying to achieve some form of pragmatic standard or quality mark on security and best practice. We decided to use this discussion to identify if there are any sensible, existing standards or initiatives that we could adopt or incorporate in to our thinking. …

SugarCRM announced SugarCon 2010 Registration and Call for Papers Now Open for Global Customer and Developer Conference in San Francisco, Calif., April 12-14 in this 1/26/2010 post:

SugarCRM, the world's leading provider of open source customer relationship management (CRM) software, today announced that it will host SugarCon 2010, its global customer, partner and developer conference, April 12-14, at The Palace Hotel in San Francisco, Calif.

“Whether you are a customer, partner, developer or just interested in learning about the future of business applications, cloud computing and open source, we invite you to spend three exciting days with us.”

The theme for SugarCon 2010 is “Evolve Your CRM.” Conference keynotes, tracks and sessions will offer practical advice on how companies can take advantage of the big trends – cloud computing, social networking and open source – impacting how companies attract and retain customers. To learn more, please visit: http://www.sugarcon.com.

Attendance cost is $499 per person. Early-bird registrants will receive a 40 percent discount if they register before Saturday, February 13. Please visit: http://www.sugarcrm.com/crm/events/sugarcon/register.html to register.

The conference will include a Business Apps in the Cloud track covering Microsoft Azure, Amazon EC2, GoGrid, Rackspace:

This track will help developers decide where to spend their time and how to start innovating today.

Geva Perry reports about the Under the Radar: Commercializing the Cloud conference in this 1/26/2010 post:

I'm happy to say that this year I'll be working with the organizers of the excellent Under The Radar (UTR) conference as a Content Advisor and on the start-up selection committee. The conference takes place on April 16, 2010 at the Microsoft campus in Mountain View, CA.

If you haven't had a chance to attend past events, it is a unique and kind of fun format where startups get to present to a panel of judges (typically VCs, Fortune 500 execs, analysts, etc.) and receive a critique on the spot.

It's a great place for companies to get exposed to and network with venture capitalists, journalists, potential customers and partners. A lot of great startups presented at UTR and went on to do great things, including Zimbra, 3Tera, Elastra, Heroku, Flickr, LinkedIn, Twilio, Sauce Labs and many others.

If you'd like your company to present you can apply here or just leave me a comment or contact me via Twitter.

Bruce Kyle delivers the details of Free Windows Azure Training Events Nationwide in this 1/26/2010 post to the US ISV Developer Community blog:

Come join in a 15-city tour of Windows Azure events for developers and IT Pros.

Join your local MSDN Events team as we take a deep dive into cloud computing and the Windows Azure Platform.

For more details and for other events in your area, see MSDN Live Events for Developers.

tbtechnet announced the Windows Azure Platform Application Development Contest! on 1/25/2010:

It’s always great to see contests and challenges that get the creative thinking into top gear around new technologies.

This page just went live: http://www.msdev.com/promotions/default.aspx

Windows Azure Platform Application Development Contest!

January 22-February 5, 2010

Attention Partners! Give your applications visibility in the USA Public Sector community. Participate in the State and Local Government Azure Applications Development Contest! Created for Microsoft Partners who focus on the State and Local Government market. Promote new applications and innovative ideas that you are creating for cloud computing on the Azure Services Platform.

Don't Miss Out! The deadline for submissions is February 5, 2010, and winners will be announced at the CIO Summit on February 25, 2010.

Register and view rules - Visit the contest website: http://www.microsoft.com/government/azure

David Leibowitz explains his start-up’s SQL Scrubs application’s use of Windows Azure in Partner Interview: SummitCloud of 1/25/2010 on Channel9:

SummitCloud is a BizSpark startup that produces analysis and compliance software used by suppliers and retailers. Microsoft Partner Evangelist John McClelland and I sat down with SummitCloud CEO David Leibowitz to talk about SummitCloud, BizSpark, and the Microsoft Partner Network.

This is an audio interview and I've just added a few slides to it so that it would be easier to view online.

You can find out more about SummitCloud by visiting their web site at http://summitcloud.com.

You can find out more about the community edition of SQL Scrubs here.

Paul Tremblett asserts “Simpler solutions are often better than their more complex counterparts” in his Amazon SimpleDB: A Simple Way to Store Complex Data article of 1/22/2010 for Dr. Dobb's:

The presence of the last two letters in the name "Amazon SimpleDB" is perhaps unfortunate; it immediately invokes images of everything we have learned about databases; unless, like me, you cut your teeth on a hierarchical database like IMS, that means relational databases and all of the baggage that comes with them: strictly defined fields, constraints, referential integrity and having most of what you are allowed to do defined and controlled by a DBA -- hardly deserving of being described as simple. To allay any apprehensions even thinking of such things might arouse, let me state that Amazon SimpleDB is not just another relational database. So just what is SimpleDB? The most effective way I have found to understand SimpleDB is to think about it in terms of something else we all use and understand -- a spreadsheet.
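One way to picture the spreadsheet analogy is to treat rows as items, column headings as attribute names, and cells as values. The sketch below models that with plain Python dictionaries. It is not the SimpleDB API: the sample data and the `select` helper are made up for illustration. It assumes, as SimpleDB allows, that items need not share the same attributes and that one attribute can hold several values.

```python
# A "domain" pictured as a sheet: item name -> {attribute name -> set of values}.
domain = {
    "item_001": {
        "category": {"book"},
        "title": {"Cloud Basics"},
        "keyword": {"cloud", "azure"},   # one cell holding two values
    },
    "item_002": {
        "category": {"dvd"},
        "title": {"Intro to Queues"},    # this row has no "keyword" column at all
    },
}


def select(domain, attribute, value):
    """Return the sorted names of items whose attribute includes the value."""
    return sorted(
        name
        for name, attrs in domain.items()
        if value in attrs.get(attribute, set())
    )
```

Unlike a relational table, nothing here declares the columns in advance; each row simply carries whatever attributes it has.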

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Geva Perry asks “Is someone out there working on an open source implementation of the Rackspace Cloud API or Microsoft Azure?” in his Does AppScale Have a Commercial Future? post of 1/28/2010:

About 15 months ago I asked the question in the title of this blog post about an open source project from UC Santa Barbara called Eucalyptus (Does Eucalyptus Have a Commercial Future?). Eucalyptus did indeed go on to become a commercial company funded by top-tier VC Benchmark Capital and BV Capital.

A couple of weeks ago, I received an email from Jonathan Kupferman, a master's student at that same university -- UCSB -- who is one of the team members on a project called AppScale. As Jonathan eloquently explains:

“The main idea behind AppScale is to create an open source version of Google AppEngine to allow people to deploy their AppEngine applications on their own hardware or in other clouds. It is of course all open source and runs on top of Xen/KVM and both EC2 and Eucalyptus. Perhaps the simplest way to explain AppScale is: AppScale is to Google AppEngine what Eucalyptus is to Amazon EC2.”

Eric Nelson continues his survey series with Part 2: How well do you know the technologies of Microsoft, Amazon, Google and SalesForce? of 1/28/2010:

On January 7th 2010 I kicked off a survey on Cloud Computing and the Windows Azure Platform (Now closed).

I promised to share the results which I will do over four posts. This is the second of those four.

- Part 1: Is Cloud relevant?

- Part 2: How well do you know the technologies of Microsoft, Amazon, Google and SalesForce?

- Part 3: What are your plans for using the Windows Azure Platform?

- Part 4: My analysis

Eric’s post details answers to a pair of questions about familiarity with Windows Azure’s competitors.

Brenda Michelson’s Gojko Adzic: Tips for keeping your sanity (and apps) in the cloud post of 1/28/2010 begins:

Although Software Design is further down my enterprise considerations list, when I read Gojko Adzic’s post on lessons he has learned developing in Amazon’s AWS environment, I knew I had to pass it along. The post describes new challenges for developers who have previously worked in a purpose-built, directly controlled, infrastructure environment. These challenges range from server reliability to storage speed. After articulating the challenges, Adzic offers advice on “How to keep your sanity”:

“It took me a while to understand that just deploying the same old applications in the way I was used to isn’t going to work that well on the cloud. To get the most out of cloud deployments, applications have to be designed up-front for massive networks and running on cheap unstable web boxes. But I think that is actually a good thing. Designing to work around those constraints makes applications much better – faster, easier to scale, cheaper to operate. Asynchronous persistence can significantly improve performance but I never thought about that before deploying to the cloud and running into IO issues. Data partitioning and replication make applications scale better and work faster. Sections of the system that can work even if they can’t see other sections help provide a better service to customers. This also makes the systems easier to deploy, because you can do one section at a time. …

In the post, Adzic maintains that he is a cloud computing advocate; his goal for the post, and the presentation it came from, was to “expose some of the things that you won’t necessarily find in marketing materials.”

Read Adzic’s post. Remember the 4th Enduring Aspect of Cloud Computing.
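Adzic's remark about asynchronous persistence can be illustrated with a small write-behind sketch in Python. The `WriteBehindStore` class and its method names are hypothetical and are not taken from his post; the sketch only shows the general idea of letting callers return before slow IO completes.

```python
import queue
import threading


class WriteBehindStore:
    """Accept writes immediately and persist them on a background thread."""

    def __init__(self, persist):
        self._persist = persist          # slow function, e.g. a disk or network write
        self._pending = queue.Queue()
        self._worker = threading.Thread(target=self._drain, daemon=True)
        self._worker.start()

    def save(self, key, value):
        # Returns as soon as the write is queued; the caller never waits on IO.
        self._pending.put((key, value))

    def flush(self):
        # Block until every queued write has been handed to persist().
        self._pending.join()

    def _drain(self):
        # Sketch only: no retries or error handling for a failing persist().
        while True:
            key, value = self._pending.get()
            try:
                self._persist(key, value)
            finally:
                self._pending.task_done()
```

The trade-off is durability: anything still in the queue is lost if the process dies, so a design like this suits data that can tolerate a short window of loss.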

Paul Krill reports Oracle hails Java but kills Sun Cloud in this 1/27/2010 article for InfoWorld (published by ITWorld):

… No interest in cloud utilities. The prognosis was not so positive for Sun Cloud, the public computing platform announced by Sun in March 2009 that was due to be deployed last summer. "We're not going to be offering the Sun Cloud service," said Edward Screven, Oracle's chief corporate architect. [Paul’s emphasis.]

Oracle CEO Larry Ellison has questioned just how new or important the cloud computing concept actually is. But, even though Oracle will not sell compute cycles through the Sun Cloud similar to what Amazon.com does, the company will offer products to serve as building blocks for public and private clouds, company officials said. …

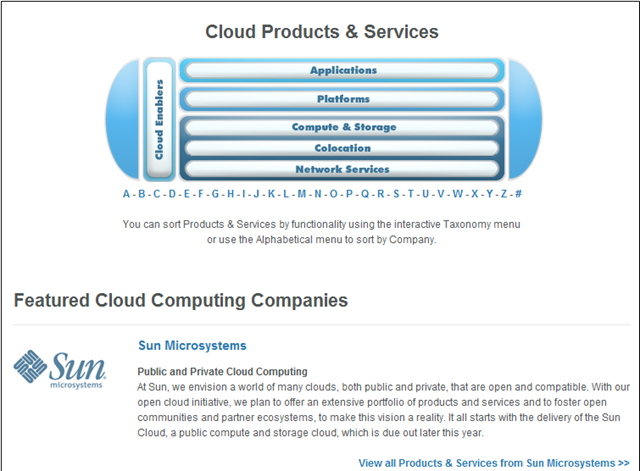

CloudBook’s Cloud Products & Services directory featured the Sun Cloud on 1/28/2010, despite the fact that Oracle announced the demise of that product two days earlier:

Michael Coté analyzes the future of the Sun/Oracle (Snor[a]cle) combine in his Oracle and Sun – Quick Analysis post of 1/27/2010 to the Enterprise Irregulars blog:

Oracle had its big, 5 hour event today explaining what they’ll be doing with Sun and the new strategies and company that will result. As immense as the topic is, below is an equally sprawling selection of my commentary. While folks like myself relish this kind of thing, I expect most others will have what they call a “meh” reaction, not too sure about what’s new and different. Well, Oracle trying to be your single source for all enterprise IT is the main thing, Oracle trying to take over IBM’s market-spot as a second. …

Michael continues with detailed coverage of the following topics:

- Summary a la Twitter

- Summary: Portfolio Rollerball

- The Single Stack

- Why Oracle thinks it’ll work this time

- The single-sourced stack

- The Cloud

He begins “The Cloud” with:

There wasn’t much mention of “cloud computing,” with words like “clusters” and “grids” being used as synonyms. Larry Ellison reserved most of the cloud zingers for himself, and they amounted to one of his kinder statements “everything’s called cloud now, there’s nothing but cloud computing.” Which is another way of saying, if everything is a cloud, “cloud” is meaningless.

And, of course, they took what I call the LL Cool J stance on cloud computing: don’t call it an innovation, we been doin’ it for years! With virtualization and cloud computing, this is what every elder company has said, so it’s expected.

Ellison said Solaris would be the best at running clusters, “or I guess the [popular] word is ‘cloud,’” he tacked on. So, there you go: cloud for Oracle is about all that existing cluster and grid stuff we’ve had for years. Presumably, with new innovation laced on-top. And really, with “cloud computing” potentially having the same specter as “open source” (cheap), who can blame that attitude?

Stephen O’Grady joins his RedMonk partner with Sunset: The Oracle Acquisition Q&A of 1/28/2010:

If yesterday’s epic five hour webcast discussing Oracle’s plans for its finally acquired Sun assets was a long time coming for the analysts listening in, you can imagine how much of a wait it’s been for those on both sides of the transaction. It’s been roughly nine months, remember, since the database giant announced its intention to acquire the one time dot com darling.

Between Ellison, Kurian, Phillips and the rest, we got our share of answers yesterday. But as is almost always the case in such situations, there was as much left unsaid as said. Meaning that significant questions remain; some which can be answered, some which we’ll only be able to answer in future. To tackle a few of these, let’s turn to the Q&A. …

Stephen continues with a very long Q&A section.

Judith Hurwitz tackles the same task as Michael Coté in her Oracle + Sun: Five questions to ponder analysis of 1/27/2010. Here are the five questions (abbreviated):

- Issue One: Can Oracle recreate the mainframe world?

- Issue Two: Can you package everything together and still be an open platform?

- Issue Three: Can you manage a complex computing environment?

- Issue Four: Can you teach an old dog new tricks? Can Oracle really be a hardware vendor?

- Issue Five: Are customers ready to embrace Oracle’s brave new world?

As expected, Judith provides a well thought out answer to each.

Reuven Cohen’s Calculating Cloud Service Provider ROI of 1/27/2010 analyzes the new Cisco IaaS ROI and Configuration Guidance Tool:

… Cisco's ROI tool does a good job of shedding light on the complexities in defining cloud infrastructure focused business models. It outlines components such as operational costs like Labor, Power, Maintenance as well as capital costs including Data Center Build out (Construction), system integration, storage and compute. For me by far the most interesting part of the calculator is the compute related options. They've broken them down into 3 basic VM categories (Power, Average, and Light)

I also found the proposed scale (number of servers, customers etc) in which the ROI tool was built quite telling about the market Cisco is going after with minimum capital expenditures in the 12 - 15 million dollar range for a Cisco based IaaS deployment with smaller deployments actually returning negative ROI results. …

Be sure to read the comments from Cisco’s Chris Hoff (@Beaker) and Sunil Chhab.

Dan Morrill explains How HP could give IBM a run for its money in Cloud Computing Security in this 1/26/2010 analysis of the recent HP/Microsoft cloud-computing partnership:

… With HP’s new playground on Azure, as well as a tool set that helps them work out complex policies and support mechanisms for information security inside the cloud it might be possible to start seeing virtual private clouds that are certifiable against many of the security processes that need to be in place to meet audit and regulatory requirements as I talked about yesterday in my “Can regulators keep up with Cloud Computing?” article. This is what makes cloud computing security interesting, companies may not have a choice anymore – they will have to start outsourcing their information security practices until they can hire smart professionals that have deep skills in this. HP is providing a direct avenue along with Microsoft to ensure that compliance, along with standards and practices can be met – albeit only with an outsourced company as support.

HP has a play ground, a deep understanding of the Azure network, tools to help support and define the security standards and practices that might meet audit standards, this is going to be a tough bit of competition. While I would not count anyone out, especially IBM, HP seems to be working in a very smart direction that can truly help support cloud computing security.

<Return to section navigation list>