Microsoft Codename “Data Transfer” and “Data Hub” Previews Don’t Appear Ready for BigData

Or even MediumData, for that matter:

- Background

- Uploading with Microsoft Codename “Data Hub”

- Uploading with Microsoft Codename “Data Transfer”

- Conclusion

- Subsequent Events (New)

Neither the Codename “Data Transfer” utility nor Codename “Data Hub” application CTPs would load 500,000 rows of a simple Excel worksheet saved as a 17-MB *.csv file to an SQL Azure table.

The “Data Transfer” utility’s Choose a File to Transfer page states: “We support uploading of files smaller than 200 MB, right now,” but neither preview publishes a row count limit that I can find. “Data Hub” uses “Data Transfer” to upload data, so the maximum file size would apply to it, too.

Both Windows Azure SaaS offerings handled a 100-row subset with aplomb, so the issue appears to be row count, not data structure.

••• Update 5/15/2012 7:45 AM PDT to the 4/24/2012 update below: A member of the Microsoft Data Hub/Transfer team advised that the known erroneous row count and random upload failure issues have been fixed. I will retest uploads later this week and report my results here and to the team.

.csv upload in DataMarket is preview only. We will disable it in a mean time in order to reduce confusion. …

I found no indication that *.csv file uploads were “preview only” during my tests. IMO, eliminating *.csv uploads is a bad idea because it will discourage ordinary contributors from participating in the DataMarket with freely download content. In this case, ordinary contributors would need to provide their own SQL Azure Web database at $9.95 per month. Other remaining content-provision options, such as Web services and OData feeds, aren’t practical for such contributors.

I assume that Windows Azure DataMarket is using Codename “Data Hub”/”Data Transfer” to upload these files. If so, fixing the problem with Codename “Data Hub” should solve the problem with uploading *.csv files to DataMarket.

Disabling a feature rather than fixing it isn’t my idea of a good policy.

Note: The Codename “Data Transfer” team implemented “My Great Windows Azure Idea” to Enable Append or Replace Option for SQL Azure Uploads of 11/20/2011 on 2/6/2012.

Links to related posts:

- Two Months of U.S. Air Carrier Flight Delay Data Available on the Windows Azure Marketplace DataMarket (5/2/2012)

- Creating a Private Data Marketplace with Microsoft Codename “Data Hub” (4/27/2012)

- Test-Drive SQL Azure Labs’ New Codename “Data Transfer” Web UI for Copying *.csv Files to SQL Azure Tables or Azure Blobs 11/30/2011

• Update 5/4/2012 10:30 AM PDT: See details about the forcible disconnect from Fiddler2 at the end of this post. Also added five more On_Time_Performance_2011_MM.csv files to my SkyDrive account to complete the series for the year 2011.

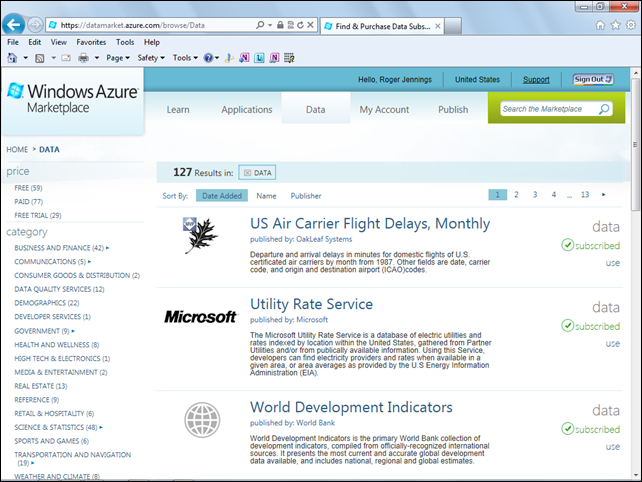

Update 5/2/2012 3:00 PM PDT: I was unable to upload additional ~500,000-row monthly On_Time_Performance_YYYY_MM.csv files to my free US Air Carrier Flight Delays, Monthly dataset on the public Windows Azure Marketplace Data market today. Forced disconnects similar to those I reported for Codename “Data Hub” occurred. Here’s a screen capture of the free public offer:

To subscribe to the data set, go the the Windows Azure Marketplace DataMarket landing page, create an account if you don’t have one, log in, and type OakLeaf in the Search the Marketplace text box to display the data and app offers:

Click the US Air Carrier Flight Delays, Monthly link to open the Offer page, and click the Sign Up button to open the eponymous page:

Mark the I have read and agree to … check box and click the Sign Up button to open the Thank You page:

This process adds a link to your Data list:

Update 4/24/2012 8:15 AM PDT: A member of the Microsoft Data Hub/Transfer team advised that the erroneous row count and random upload failure problems I reported for Codename “Data Transfer” were known issues and the team was working on them. I was unable to upload the ~500,000-row files with Codename “Data Hub”; see the added “Results with Codename “Data Hub” Uploads” section at the end of the post.

Update 4/23/2012 10:00 AM PDT: Two members of Microsoft Data Hub/Transfer team reported that they could upload the large test file successfully. Added “Computer/Internet Connection Details” section below. Completed tests to determine maximum file size I can upload. The My Data page showed anomalous results but only the 200k row test actually failed on 4/23. See the Subsequent Events section.

Background

The Creating the Azure Blob Source Data section of my Using Data from Windows Azure Blobs with Apache Hadoop on Windows Azure CTP post of 4/6/2012 described the data set I wanted to distribute via a publicly accessible, free Windows Azure DataMarket dataset. The only differences between it and the tab-delimited *.txt files uploaded to blobs that served as the data source for an Apache Hive table were

- Inclusion of column names in the first row

- Addition of a formatted date field (Hive tables don’t have a native date or datetime datatype)

- Field delimiter character (comma instead of tab)

Following is a screen capture of the first 20 data rows of the 500,000-row On_Time_Performance_2012_1.csv table:

You can download sample On_Time_Performance_YYYY_MM.csv files from the OnTimePerformanceCSV folder of my Windows Live SkyDrive account. On_Time_Performance_2012_0.csv is the 100-row sample file described in the preceding section; On_Time_Performance_2012_1.csv has 486,133 data rows.

Tab-delimited sample On_Time_Performance_YYYY_MM.txt files (without the first row of column names and formatted date) for use in creating blobs to serve as the data source for Hive databases are available from my Flight Data Files for Hadoop on Azure SkyDrive folder.

Provision of the files through a private Azure DataMarket service was intended to supplement the SkyDrive downloads.

Computer/Internet Connection Details:

Intel 64-bit DQ45CB motherboard with Core 2 Quad CPU Q9950 2.83 GHz, 8 GB RAM, 750 GB RAID 1 discs, Windows 7 Premium SP1, IE 9.0.8112.16421.

AT&T commercial DSL copper connection, Cayman router, 2.60 Mbps download, 0.42 Mbps upload after router reboot, 100-Mbps wired connection from Windows 2003 Server R&RA NAT.

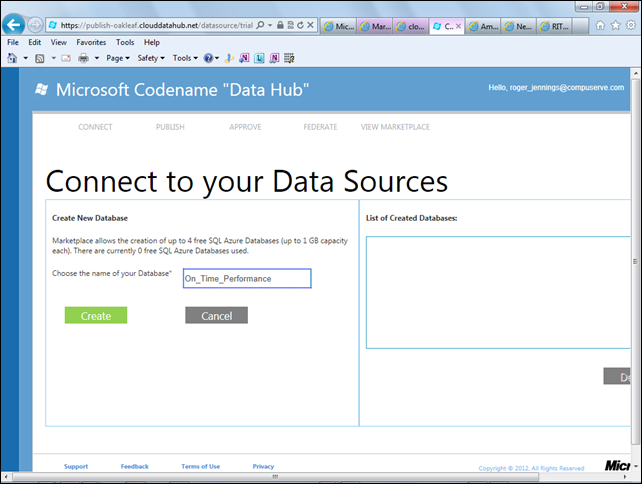

Uploading with Microsoft Codename “Data Hub”

Codename “Data Hub” provides testers with up to four free SQL Azure 1-GB Web databases, so I created a connection to a new On_Time_Performance database:

I then specified the ~500,000-row On_Time_Performance2012_1.csv file for January 2012 as the data source and clicked Upload:

The site provided no indication of any activity, although my DSL router indicated data was being uploaded. After a few minutes, the server disconnected. Reloading the page showed no change in status.

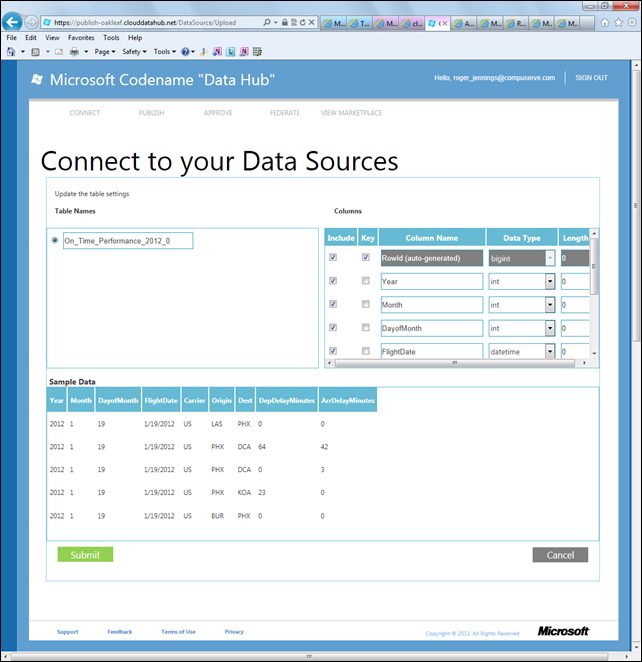

I then tried uploading the 100-row On_Time_Performance_2012_0.csv, which opened the following page after about 10 seconds:

I accepted the suggested data types and clicked Submit, which added the data to the table.

Uploading with Microsoft Codename “Data Transfer”

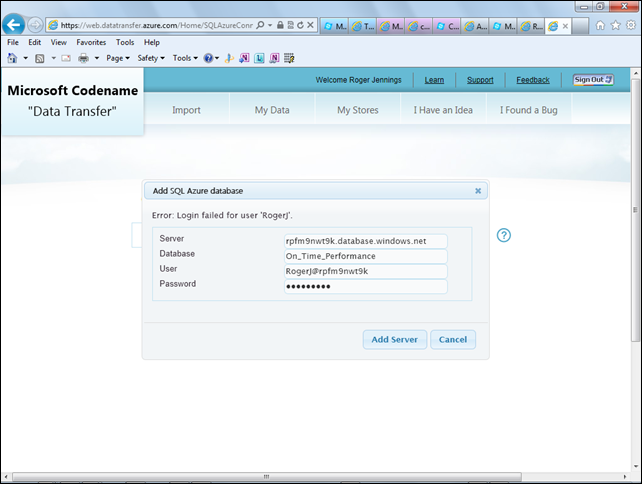

I created a new database in an existing OakLeaf SQL Azure instance because “Data Transfer” doesn’t provide free 1-GB Web databases. I repeated the above process with Codename “Data Transfer” but encountered a bug which prevented use of the # (and presumably other symbols) in the existing database access password:

Update 4/23/2012: A member of Microsoft’s Data Transfer team was able to reproduce the # symbol problem.

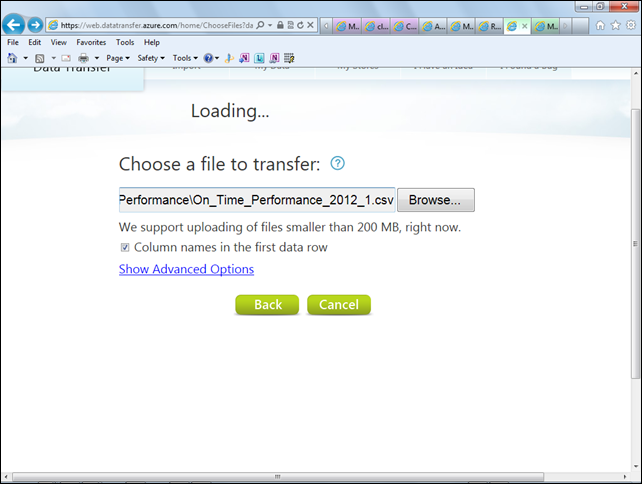

Selecting On_Time_Performance_2012_1.csv to upload by clicking Analyze caused the app to hang in the Loading … condition:

Canceling the process and selecting 100-row On_Time_Performance_2012_0.csv resulted in the expected Update the Table Settings page appearing in about 10 seconds:

Clicking Save resulted in a Submit Succeeded message.

Conclusion

Neither Codename “Data Hub” nor Codename “Data Transfer” appears to be ready for prime time. Hopefully, a fast refresh will solve the problem because users’ Codename “Data Hub” preview invitations are valid only for three weeks.

Subsequent Events

Members of the Microsoft Data Transfer/Data Hub team weren’t able to reproduce my problem on 4/22 and 4/23/2012. They could process the 486,133-row On_Time_Performance_2012_1.csv file without difficulty. To determine at what file size uploading problems occurred for me, I created files of 1,000, 10,000, 100,000, 150,000, and 200,000 data rows from On_Time_Performance_2012_1.csv. I’ve uploaded these files to the public OnTimePerformanceCSV folder of my Windows Live SkyDrive account.

Results with Codename “Data Transfer” Uploads

All files appeared to upload on Monday morning, 4/23/2012, but My Data showed incorrect Last Job Status data for all but the 10,000-row set. I used Codename “Data Transfer” instead of “Data Hub” to obtain Job Status data. Data below was refreshed about 15 minutes after completion of the the 2012_1.csv file; I failed to save the 1,000-row set:

The 100k file created 100,000 rows, as expected, 200k added no rows to the table, and a rerun of 2012_1 created the expected 486,133 rows:

Microsoft’s South Central US (San Antonio) data center hosts the e3895m7bbt database. It’s possible that problems affecting Windows Compute there on 4/19 and 4/20 (see my Flurry of Outages on 4/19/2012 for my Windows Azure Tables Demo in the South Central US Data Center post) spilled over to SQL Azure on 4/22/2012, but that’s unlikely. However, the unexpected results with the 200k table and anomalous Last Job Status data indicates an underlying problem. I’ll update this post as I obtain more information on the problem from Microsoft.

Results with Codename “Data Hub” Uploads

I was able to upload all test files (100, 1,000, 10,000, 100,000, 150,000 and 200,000 rows) but unable to upload the On_Time_Performance_2012_1.csv file to one of the four free databases with Codename “Data Hub” after three tries. The service forcibly disconnects after data upload completes, so data doesn’t transfer from the blob to the database table.

So I used the data source I created with Codename “Data Transfer” as an external data source to publish the data. None of the data fields were indexed, which displayed the following error (in bold red type) in the “My Offerings” page’s Status/Review section:

All queryable columns must be indexed: Not all queryable columns in table "On_Time_Performance_2012_1" are indexed. The columns that are not indexed are: "ArrDelayMinutes", "Carrier", "DayofMonth", "DepDelayMinutes", "Dest", "FlightDate", "Month", "Origin", "Year".

Codename “Data Transfer” doesn’t offer an option to index specific columns so I added indexes on all fields except RowId, DayofMonth, Month and Year with SQL Server Management Studio and cleared the Queryable checkboxes for these fields on the My Offerings - Data Source page.

Here’s Data Explorer’s Table View of part of the first few rows:

Check the Codename “Data Transfer” feedback page for my improvement suggestions:

- Enable grouping and aggregate functions for vizualization in data explorer

- Preseve original field order, don't sort alphabetically (or provide user choice)

- Enable local saving of the My Offerings page

- Widen text boxes in the Links to Documentation pane of the My Offerings page

- Add a save, edit and continue buttons at the bottom of each My Offerings page

- Provide status bars to display upload and transfer to SQL Azure progress

- Allow gzip uploads of *.csv files

“Data Hub” doesn’t appear to have its own feedback page.

• Update 5/4/2012: Fiddler2 returns the following message when the Windows Azure Marketplace DataMarket forcibly closes the connection:

[Fiddler] ResendRequest() failed: Unable to write data to the transport connection: An existing connection was forcibly closed by the remote host. < An existing connection was forcibly closed by the remote host

Here’s Fiddler’s Tunnel to HTTPS request:

CONNECT publish.marketplace.windowsazure.com:443 HTTP/1.0

User-Agent: Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; BOIE9;ENUS)

Host: publish.marketplace.windowsazure.com:443

Content-Length: 0

Connection: Keep-Alive

Pragma: no-cacheA SSLv3-compatible ClientHello handshake was found. Fiddler extracted the parameters below.

Major Version: 3

Minor Version: 1

Random: 4F A4 0D C2 93 73 E8 BF B8 1B 72 0C F9 18 9F 10 20 DB DC 69 CC 10 EA 23 03 52 EB D7 CC DE 9B A5

SessionID: 39 40 00 00 43 4D 4A 4A C8 09 67 51 4A D9 C1 0C 36 7E 2D CB 12 DB B0 F5 49 03 81 57 86 B2 4D F9

Ciphers:

[002F] TLS_RSA_AES_128_SHA

[0035] TLS_RSA_AES_256_SHA

[0005] SSL_RSA_WITH_RC4_128_SHA

[000A] SSL_RSA_WITH_3DES_EDE_SHA

[C013] TLS1_CK_ECDHE_RSA_WITH_AES_128_CBC_SHA

[C014] TLS1_CK_ECDHE_RSA_WITH_AES_256_CBC_SHA

[C009] TLS1_CK_ECDHE_ECDSA_WITH_AES_128_CBC_SHA

[C00A] TLS1_CK_ECDHE_ECDSA_WITH_AES_256_CBC_SHA

[0032] TLS_DHE_DSS_WITH_AES_128_SHA

[0038] TLS_DHE_DSS_WITH_AES_256_SHA

[0013] SSL_DHE_DSS_WITH_3DES_EDE_SHA

[0004] SSL_RSA_WITH_RC4_128_MD5Compression:

[00] NO_COMPRESSIONExtensions:

renegotiation_info 00

server_name publish.marketplace.windowsazure.com

status_request 01 00 00 00 00

elliptic_curves 00 04 00 17 00 18

ec_point_formats 01 00

and the HTTPS response:

HTTP/1.0 200 Connection Established

FiddlerGateway: Direct

StartTime: 10:11:30.902

Connection: closeEncrypted HTTPS traffic flows through this CONNECT tunnel. HTTPS Decryption is enabled in Fiddler, so decrypted sessions running in this tunnel will be shown in the Web Sessions list.

Secure Protocol: Tls

Cipher: Aes128 128bits

Hash Algorithm: Sha1 160bitsKey Exchange: RsaKeyX 2048bits== Server Certificate ==========

[Subject]

CN=publish.marketplace.windowsazure.com[Issuer]

CN=Microsoft Secure Server Authority, DC=redmond, DC=corp, DC=microsoft, DC=com[Serial Number]

7EDE070F0008000251F2[Not Before]

11/15/2011 2:43:11 PM[Not After]

11/14/2013 2:43:11 PM[Thumbprint]

C7FC0219C14C8D274B6630BC1DEE0C3AFF757602

The ResendRequest()’s HTTP GET request is as follows:

GET https://publish.marketplace.windowsazure.com/favicon.ico HTTP/1.1

Accept: */*

Accept-Encoding: gzip, deflate

User-Agent: Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; BOIE9;ENUS)

Host: publish.marketplace.windowsazure.com

Connection: Keep-Alive

Cookie: l=en-US; _vis_opt_s=9%7C; _vis_opt_exp_79_combi=2; _vis_opt_exp_82_combi=2; _vis_opt_exp_90_combi=1%2C1; _vis_opt_exp_89_combi=5; _vis_opt_exp_107_combi=2; _vis_opt_exp_107_goal_1=1; _vis_opt_exp_108_combi=2; _vis_opt_exp_108_goal_1=1; _vis_opt_exp_114_combi=1; FedAuth=

The authentication token is elided for brevity. Here’s the response without the document:

HTTP/1.1 404 Not Found

Content-Type: text/html

Server: Microsoft-IIS/7.5

X-Powered-By: ASP.NET

Date: Fri, 04 May 2012 17:11:31 GMT

Content-Length: 1245

0 comments:

Post a Comment