Windows Azure and Cloud Computing Posts for 4/1/2013+

| A compendium of Windows Azure, Service Bus, EAI & EDI, Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

•• Updated 4/6/2013 with new articles marked ••.

• Updated 4/5/2013 with new articles marked •.

Note: This post is updated weekly or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Caching, Access Control, Active Directory, Identity and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security, Compliance and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue, HDInsight and Media Services

The Windows Azure Storage Team described AzCopy – Using Cross Account Copy Blob on 3/31/2013:

Please download AzCopy CTP2 here; a previous blog post on AzCopy CTP1 is available here as a reference.

New features have been added in this release

- Support of Cross-Account Copy Blob: AzCopy allows you to copy blobs within the same storage account or between different storage accounts (visit this blog post for more details on cross-account blob copy). This enables you to move blobs from one account to another efficiently with respect to cost and time. The data transfer is done by the storage service, thus eliminating the need for you to download each blob from the source and then upload it to the destination. You can also use /Z to execute the blob copy in restartable mode.

- Added /MOV: This option allows you to move files and delete them from the source after copying. Assuming you have delete permissions on the source, this option applies regardless of whether the source is Windows Azure Storage or the local file system.

- Added /NC: This option allows you to specify the number of concurrent network calls. By default, when you upload files from a local computer to Windows Azure Storage, AzCopy will initiate network calls up to eight times the number of cores the local computer has to execute concurrent tasks. For example, if your local computer has four cores, then AzCopy will initiate up to 32 (eight times 4) network calls at one time. However, if you want to limit the concurrency to throttle local CPU and bandwidth usage, you can specify the maximum number of concurrent network calls by using /NC. The value specified here is an absolute count and will not be multiplied by the core count. So in the above example, to reduce the concurrent network calls by half, you would specify /NC:16.

- Added /SNAPSHOT: This option allows you to transfer a blob with its snapshots. This is a semantic change: AzCopy CTP 1 (released in October 2012) transferred a blob’s snapshots by default. Starting with this version, AzCopy won’t transfer any snapshots by default while copying a blob. Only when /SNAPSHOT is specified will AzCopy transfer all the snapshots of a blob to the destination. However, these snapshots will become separate blobs in the destination instead of snapshots of the original base blob, so each of the blobs will be charged in full (there is no block reuse between them). The transferred blob snapshots will be renamed in this format: [blob-name] (snapshot-time)[extension].

For example, if readme.txt is a source blob and 3 snapshots were created for it in the source container, then after using /SNAPSHOT there will be 3 more separate blobs in the destination container, with names like these:

readme (2013-02-25 080757).txt

readme (2012-12-23 120657).txt

readme (2012-09-12 090521).txt

For the billing impact of blob snapshots compared to separate blobs, please refer to this blog post: Understanding Windows Azure Storage Billing.

- Added /@:response-file: This allows you to store parameters in a file, and they will be processed by AzCopy just as if they had been specified on the command line. Parameters in the response file can be spread over several lines, but each single parameter must be on one line (breaking one parameter across two lines is not supported). AzCopy parses each line as if it were a single command line into a list of parameters and concatenates all the parameter lists into one list, which AzCopy treats as if it came from a single command line. Multiple response files can be specified, but nested response files are not supported; a nested reference will instead be parsed as a location parameter or file pattern. Escape characters are not supported, except that "" in a quoted string will be parsed as a single quotation mark. Note that /@: after /- does not mean a response file; it is treated as a location parameter or file pattern like other parameters.
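The default concurrency rule described for /NC above can be worked through with a few lines of JavaScript. This is only an illustration of the arithmetic; the function name and parameters are made up for the example and are not part of AzCopy.

```javascript
// Illustrative helper (not an AzCopy API): how many concurrent network
// calls would be used for a given core count and optional /NC value.
function effectiveNetworkCalls(coreCount, ncOption) {
  // An explicit /NC value is an absolute count; it is not multiplied by cores.
  if (ncOption !== undefined) {
    return ncOption;
  }
  // Default described in the post: up to eight calls per core.
  return 8 * coreCount;
}

console.log(effectiveNetworkCalls(4));     // 32
console.log(effectiveNetworkCalls(4, 16)); // 16
```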

Examples

Here are some examples that illustrate the new features in this release.

Copy all blobs from one container to another container in a different storage account

AzCopy https://<sourceaccount>.blob.core.windows.net/<sourcecontainer>/ https://<destaccount>.blob.core.windows.net/<destcontainer>/ /sourcekey:<key> /destkey:<key> /S

The above command will copy all blobs from the container named “sourcecontainer” in storage account “sourceaccount” to another container named “destcontainer” in storage account “destaccount”

If you have a base blob with snapshots, add /SNAPSHOT to transfer all snapshots with the base blob to the destination. Note that each blob snapshot will be renamed to this format in the destination: [blob-name] (snapshot-time)[extension]

AzCopy https://<sourceaccount>.blob.core.windows.net/<sourcecontainer>/ https://<destaccount>.blob.core.windows.net/<destcontainer>/ /sourcekey:<key> /destkey:<key> /S /SNAPSHOT
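The renaming format for transferred snapshots can be sketched as a small function. This is a hypothetical reconstruction based only on the example names in this post; in particular, rendering the timestamp in UTC as "YYYY-MM-DD HHmmss" is an assumption, and the function name is invented for illustration.

```javascript
// Builds "[blob-name] (snapshot-time)[extension]" as in the post's examples.
// Assumption: the timestamp is rendered in UTC as "YYYY-MM-DD HHmmss".
function snapshotBlobName(blobName, snapshotTime) {
  const pad = (n) => String(n).padStart(2, "0");
  const slash = blobName.lastIndexOf("/");
  const dot = blobName.lastIndexOf(".");
  // Only a dot inside the last path segment (and not leading it) marks an extension.
  const hasExt = dot > slash + 1;
  const base = hasExt ? blobName.slice(0, dot) : blobName;
  const ext = hasExt ? blobName.slice(dot) : "";
  const stamp =
    snapshotTime.getUTCFullYear() + "-" +
    pad(snapshotTime.getUTCMonth() + 1) + "-" +
    pad(snapshotTime.getUTCDate()) + " " +
    pad(snapshotTime.getUTCHours()) +
    pad(snapshotTime.getUTCMinutes()) +
    pad(snapshotTime.getUTCSeconds());
  return base + " (" + stamp + ")" + ext;
}

console.log(snapshotBlobName("readme.txt", new Date(Date.UTC(2013, 1, 25, 8, 7, 57))));
// readme (2013-02-25 080757).txt
```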

For example, if you have readme.txt with 3 snapshots in the source container, the destination container will contain the following:

readme.txt

readme (2013-02-25 080757).txt

readme (2012-12-23 120657).txt

readme (2012-09-12 090521).txt

If you’d like to delete those blobs from the source container when the copy is complete, just add /MOV as below:

AzCopy https://<sourceaccount>.blob.core.windows.net/<sourcecontainer>/ https://<destaccount>.blob.core.windows.net/<destcontainer>/ /sourcekey:<key> /destkey:<key> /MOV /S

You can also create a response file to make it easier to run the same command again and again. Create a text file called “myAzCopy.txt” with the content below:

#URI of Source Container

https://<sourceaccount>.blob.core.windows.net/<sourcecontainer>/

#URI of Destination Container

https://<destaccount>.blob.core.windows.net/<destcontainer>/

Then you can run the command below to transfer files from the source container to the destination container:

AzCopy /@:C:\myAzCopy.txt /sourcekey:<key> /destkey:<key> /MOV /S
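The response-file rules described earlier (one or more parameters per line, parameter lists concatenated, and "" inside a quoted string standing for a literal quotation mark) can be sketched as follows. This is not AzCopy's actual parser; the function names are invented, and skipping lines that start with # is an assumption inferred from the sample file above.

```javascript
// Split one line into parameters: whitespace separates parameters, double
// quotes group text, and "" inside a quoted string yields a literal quote.
function parseLine(line) {
  const params = [];
  let current = "";
  let inQuotes = false;
  let started = false;
  for (let i = 0; i < line.length; i++) {
    const ch = line[i];
    if (inQuotes) {
      if (ch === '"' && line[i + 1] === '"') {
        current += '"'; // doubled quote inside quotes
        i++;
      } else if (ch === '"') {
        inQuotes = false; // closing quote
      } else {
        current += ch;
      }
    } else if (ch === '"') {
      inQuotes = true;
      started = true;
    } else if (/\s/.test(ch)) {
      if (started) {
        params.push(current);
        current = "";
        started = false;
      }
    } else {
      current += ch;
      started = true;
    }
  }
  if (started) params.push(current);
  return params;
}

// Parse every line and concatenate the per-line lists into one list.
// Assumption: blank lines and lines starting with # are ignored.
function parseResponseFile(text) {
  return text
    .split(/\r?\n/)
    .filter((line) => line.trim() !== "" && !line.trim().startsWith("#"))
    .flatMap(parseLine);
}

console.log(parseResponseFile(
  "#URI of Source Container\nhttps://src.example/container/\n/S /MOV"
));
```

Because the per-line lists are simply concatenated, it makes no difference whether parameters share a line or each sit on their own line.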

WenMing Ye (@wenmingye, a.k.a. HPC Trekker) recommended big data developers Make another small step, with the JavaScript Console Pig in HDInsight in a 3/31/2013 post:

Our previous blog, MapReduce on 27,000 books using multiple storage accounts and HDInsight [see post below], showed you how to run the Java version of the MapReduce code against the Gutenberg dataset we uploaded to blob storage. We also explained how you can add multiple storage accounts and access them from your HDInsight cluster. In this blog, we’ll take a smaller step and show you how this works with the JavaScript example, and see if it can operate on a real dataset.

The JavaScript Console gives you simpler syntax and a convenient web interface. You can do quick tasks, such as running a query and checking on your data, without having to RDP into your HDInsight cluster head node. It is for convenience only and is not meant for complex workflows. The JavaScript Console has a few features built in, including HDFS commands such as ls, mkdir, and file copy. Moreover, it allows you to invoke Pig commands.

Let's go through the process of running the Pig script with the entire Gutenberg collection. We first uploaded the MapReduce word count file, WordCount.js [link], by typing fs.put(), which brings up a dialog box for you to upload the WordCount.js file.

Next, you can verify that the WordCount.js file has been uploaded properly by typing #cat /user/admin/WordCount.js. As you may have noticed, HDFS commands that normally look like hdfs dfs -ls have been abstracted to #ls.

We then ran a Pig command to kick off a set of map reduce operations. The JavaScript below is compiled into Pig Latin Java and then executed.

pig.from("asv://textfiles@gutenbergstore.blob.core.windows.net/").mapReduce("/user/admin/WordCount.js", "word, count:long").orderBy("count DESC").take(10).to("DaVinciTop10")

- Load files from ASV storage; notice the format, asv://container@storageAccountURL.

- Run MapReduce on the dataset using WordCount.js; the results are word and count key-value pairs.

- Sort the key value dictionary by descending count value.

- Copy top 10 of the values to the DaVinciTop10 directory in the default HDFS.
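The last two steps of the pipeline (sort by descending count, keep the top entries) can be mimicked on an in-memory array. This is a plain JavaScript stand-in, not the HDInsight console API; the function name and the sample counts are invented for illustration.

```javascript
// In-memory stand-in for .orderBy("count DESC").take(n).
function topWords(pairs, n) {
  return pairs
    .slice()                           // copy so the input is left untouched
    .sort((a, b) => b.count - a.count) // descending by count
    .slice(0, n);                      // keep the first n entries
}

// Illustrative numbers only, not results from the Gutenberg run.
const sample = [
  { word: "of", count: 40 },
  { word: "the", count: 90 },
  { word: "and", count: 55 }
];
console.log(topWords(sample, 2));
```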

This process may take tens of minutes to complete, since the dataset is rather large.

The View Log link provides detailed progress logs.

You can check the progress by using RDP to connect to the head node, which gives you more detailed progress than the “View Log” link on the JavaScript Console.

Click on the Reduce link in the table above to check on the Reduce job, and notice the shuffle and sort processes. Shuffle is the process in which each reducer is fed all of the mapper output that it needs to process.

Click the Counters link; a significant amount of data is read and written in this process. The nice thing about MapReduce jobs is that you can speed up the process by adding more compute resources. The mapping phase can be sped up significantly by running more processes in parallel.

When everything finishes, the summary page tells us that the Pig script was really about 5 different jobs, 07 – 11. For learning purposes, I’ve posted my results at: https://github.com/wenming/BigDataSamples/blob/master/gutenberg/results.txt

The JavaScript Console also provides you with simple graph functions.

file = fs.read("DaVinciTop10")

data = parse(file.data, "word, count:long")

graph.bar(data)

graph.pie(data)

When we compare the entire Gutenberg collection with just the Davinci.txt file, there’s a significant difference. With our new data, we can certainly estimate the occurrences of these top words in the English language more accurately than by looking through just 1 book.

Conclusion

More data always gives us more confidence; that’s why big data processing is so important. When it comes to processing large amounts of data, parallel big data processing tools such as HDInsight (Hadoop) can deliver results faster than running them on single workstations. MapReduce is like the assembly language of Big Data; higher-level languages such as Pig Latin can be decomposed into a series of MapReduce jobs for us.

WenMing Ye (@wenmingye, a.k.a. HPC Trekker) described MapReduce on 27,000 books using multiple storage accounts and HDInsight in a 3/30/2013 post:

In our previous blog, Preparing and uploading datasets for HDInsight, we showed you some of the important utilities that are used on the Unix platform for data processing. That includes Gnu Parallel, Find, Split, and AzCopy for uploading large amounts of data reliably. In this blog, we’ll use an HDInsight cluster to operate on the Data we have uploaded. Just to review, here’s what we have done so far:

- Downloaded the ISO image from Gutenberg and copied the content to a local directory.

- Crawled the INDEXES pages and copied English only books (zips) using a custom Python script.

- Unzipped all the zip files using GNU Parallel, then combined all the text files and split them into 256 MB chunks using find and split.

- Uploaded the files in parallel using the AzCopy Utility.

Map Reduce

MapReduce is the programming pattern for HDInsight, or Hadoop. It has two functions, Map and Reduce. Map takes the code to each of the nodes that contain the data to run computation in parallel, while Reduce summarizes results from the map functions to do a global reduction.

In this word count example in JavaScript, the map function below simply splits a text document into an array of words, then writes each word to a global context. The map function takes three parameters: key, value, and a global context object. Keys in this case are individual files, while the value is the actual content of the documents. The map function is called on every compute node in parallel. As you may have noticed, it writes a key-value pair out to the global context: the word being the key and the value being 1, since it counted 1. Obviously the output from the mapper could contain many duplicate keys (words).

// Map Reduce function in JavaScript

var map = function (key, value, context) {
    var words = value.split(/[^a-zA-Z]/);
    for (var i = 0; i < words.length; i++) {
        if (words[i] !== "")
            context.write(words[i].toLowerCase(), 1);
    }
};

The reduce function also takes key, values, and context parameters and is called when the map function completes. In this case, it takes output from all the mappers and sums up all the values for a particular key. In the end you get a word:count key-value pair. This gives you a good feel for how MapReduce works.

var reduce = function (key, values, context) {
    var sum = 0;
    while (values.hasNext()) {
        sum += parseInt(values.next());
    }
    context.write(key, sum);
};

To run MapReduce against the dataset we have uploaded, we have to add the blob container in the cluster’s configuration page. If you are trying to learn how to create a new cluster, please take a look at this video: Creating your first HDInsight cluster and run samples
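To see how the map and reduce functions fit together without a cluster, they can be exercised with a small local driver. The sketch below repeats the two functions from the post (with the stray closing brace removed) and adds a hypothetical runLocally helper that imitates the shuffle by grouping mapper output by key. The helper, its fake context objects, and the hasNext()/next() iterator are assumptions made for illustration and say nothing about how HDInsight actually schedules the work.

```javascript
// Word-count functions from the post.
var map = function (key, value, context) {
  var words = value.split(/[^a-zA-Z]/);
  for (var i = 0; i < words.length; i++) {
    if (words[i] !== "") {
      context.write(words[i].toLowerCase(), 1);
    }
  }
};

var reduce = function (key, values, context) {
  var sum = 0;
  while (values.hasNext()) {
    sum += parseInt(values.next());
  }
  context.write(key, sum);
};

// Hypothetical local driver: runs map over every document, groups the
// emitted values by key (the "shuffle"), then calls reduce once per key.
function runLocally(documents) {
  var grouped = new Map();
  Object.keys(documents).forEach(function (name) {
    map(name, documents[name], {
      write: function (k, v) {
        if (!grouped.has(k)) grouped.set(k, []);
        grouped.get(k).push(v);
      }
    });
  });

  var results = Object.create(null); // no inherited keys such as "constructor"
  Array.from(grouped.keys()).sort().forEach(function (k) {
    var vals = grouped.get(k);
    var i = 0;
    var iterator = {
      hasNext: function () { return i < vals.length; },
      next: function () { return vals[i++]; }
    };
    reduce(k, iterator, {
      write: function (key, sum) { results[key] = sum; }
    });
  });
  return results;
}

var counts = runLocally({ "a.txt": "The cat and the hat", "b.txt": "the end" });
console.log(JSON.stringify(counts));
// {"and":1,"cat":1,"end":1,"hat":1,"the":3}
```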

HDInsight’s Default Storage Account: Windows Azure Blob Storage

The diagram below explains the difference between HDFS, the distributed file system native to Hadoop, and Azure blob storage. Our engineering team had to do extra work to make the Azure blob storage system work with Hadoop.

The original HDFS makes use of many local disks on the cluster, while Azure blob storage is a remote storage system for all the compute nodes in the cluster. For beginners, all you have to know is that the HDInsight team has abstracted both systems for you through the HDFS tool. You should use Azure blob storage as the default, since when you tear down the cluster, all your files will still persist in the remote storage system.

On the other hand, when you tear down a cluster, the content you store in HDFS on the cluster will disappear with it. So, only store temp data that you don’t mind losing in HDFS, or copy it to your blob storage account before you tear down the cluster.

You can explicitly reference HDFS (local) by using hdfs://, and use asv:/// to reference files in the blob storage system (the default).
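The asv:// addressing used in these posts (asv://container@storageAccountURL/path, with asv:/// pointing at the default container) can be pulled apart with a short function. This is an illustrative sketch based only on the URI shapes shown here; the function name and the returned field names are assumptions.

```javascript
// Splits an asv:// URI into container, account host, and path.
// asv:///path (empty authority) refers to the cluster's default container.
function parseAsvUri(uri) {
  var match = /^asv:\/\/(?:([^@\/]+)@([^\/]+))?(\/.*)?$/.exec(uri);
  if (!match) {
    throw new Error("Not an asv:// URI: " + uri);
  }
  return {
    container: match[1] || null, // null means the default container
    account: match[2] || null,   // e.g. gutenbergstore.blob.core.windows.net
    path: match[3] || "/"
  };
}

console.log(parseAsvUri("asv://textfiles@gutenbergstore.blob.core.windows.net/"));
console.log(parseAsvUri("asv:///DaVinciAllTopWords"));
```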

Adding Additional Azure Blob Storage container to your HDInsight Cluster

On the head node of the HDInsight cluster, in C:\apps\dist\hadoop-1.1.0-SNAPSHOT\conf\core-site.xml, you need to add:

<property>
  <name>fs.azure.account.key.[account_name].blob.core.windows.net</name>
  <value>[account-key]</value>
</property>

For example, in my account, I simply copied the default property and added the new name/key pair.

In the RDP session, using the Hadoop command-line console, we can verify that the new storage can be accessed.

In the JavaScript Console, it works just the same.

Deploy and Run word count against the second storage

On the Samples page in the HDInsight Console, deploy the Word Count sample.

Modify Parameter 1 to: asv://textfiles@gutenbergstore.blob.core.windows.net/ asv:///DaVinciAllTopWords

Navigate all the way back to the main page and click on Job History to find the job that you just started running.

You may also check more detailed progress in the RDP session. Recall that we have 40 files, and there are 16 mappers total (16 cores) running in parallel. The current status is: 16 complete, 16 running, 8 pending.
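The status figures above follow from simple arithmetic, sketched below. The sketch assumes one map task per input file and that tasks finish in whole "waves" of 16, which is a simplification; the function name is invented for illustration.

```javascript
// Assumes one map task per input file and that tasks complete in whole waves.
function mapperStatus(totalTasks, slots, wavesCompleted) {
  var complete = Math.min(totalTasks, slots * wavesCompleted);
  var running = Math.min(slots, totalTasks - complete);
  var pending = totalTasks - complete - running;
  return { complete: complete, running: running, pending: pending };
}

console.log(mapperStatus(40, 16, 1)); // { complete: 16, running: 16, pending: 8 }
console.log(Math.ceil(40 / 16));      // 3 waves in total
```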

The job completed within about 10 minutes, and the results are stored in the DaVinciAllTopWords directory.

The results are about 256 MB.

Conclusion

We showed you how to configure additional ASV storage on your HDInsight cluster to run MapReduce jobs against. This concludes our 3-part blog set.

The Microsoft Enterprise Team (@MSFTenterprise) posted Changing the Game: Halo 4 Team Gets New User Insights from Big Data in the Cloud on 3/27/2013 (missed when published):

In late 2012, Halo 4 gamers took to their Xbox 360 consoles en masse for a five-week online battle. They all had the same goal: to see whose Spartan could climb to the top of the global leaderboards in the largest free-to-play Halo online tournament in history.

Using the game’s multiplayer modes, players participating in the tournament—the Halo 4 “Infinity Challenge”—earned powerful new weapons and armor for their Spartan-IV and fought their way from one level to the next. And with 2,800 available prizes, there was plenty of incentive to play.

Behind the scenes, a powerful new Microsoft technology platform called HDInsight was capturing data from the cloud and feeding daily game statistics to the tournament’s operator, Virgin Gaming. Virgin not only used the data to update online leaderboards each day; it also relied on the data to detect cheaters, removing them from the boards to ensure that the right gamers got the chance to win.

But this new technology didn’t just support the Infinity Challenge. From day one, the Xbox 360 game has been using the Hadoop open source framework to gain deep insights into players. The Halo 4 development team at 343 Industries is taking these insights and updating the game almost weekly, using direct player feedback to tweak the game. In the process, the game’s multiplayer ecosystem continues to evolve with the community as the title matures in the marketplace.

Tapping into the Power of the Cloud

Using the latest technology has always been important to the Halo 4 development team. Since the award-winning game launched in November 2012, the team has used the Windows Azure cloud development platform to power the game’s back-end supporting services. These services run the game’s key multiplayer features, including leaderboards and avatar rendering. Hosting the multiplayer parts of the game in Windows Azure also gives the Halo 4 team a way to quickly and inexpensively increase or decrease server loads as needed.

As Microsoft prepared to officially release the game, 343 Industries wanted to find a solution to mine user data with the hope of gaining insight into player behavior and gauging the overall health of the game after its release. Additionally, the Halo 4 development team was tasked with feeding daily data about the five-week online Infinity Challenge tournament to Virgin Gaming, a Halo 4 partner.

To meet these business requirements, the Halo 4 team knew it needed to find business intelligence (BI) technology that would work well with Azure. “One of the great things about the Halo team is how they use cutting-edge technology like Azure,” says Alex Gregorio, a program manager for Microsoft Studios, which developed Halo 4. “So we wanted to find the best BI environment out there, and we needed to make sure it integrated with Azure.”

Because all game data is housed in Azure, the team wanted to find a BI solution that could effectively produce BI information from that data. The team also needed to process this data in the same data center, minimizing storage costs and avoiding charges for data transfers across two data centers. The team also wanted full control over job priorities, so that the performance and delivery of analytical queries would not be affected by other processing jobs run at the same time. “We had to have a flexible solution that was not on-premises,” states Gregorio.

Microsoft HDInsight: Big Data Analytics in Azure

Although it considered building its own custom BI solution, the Halo 4 team decided to use the Windows Azure HDInsight Service, which is based on Apache Hadoop, an open-source software framework whose early development was driven largely by Yahoo!. Hadoop can analyze huge amounts of unstructured data in a distributed manner. Designed for large groups of machines that do not share memory, Hadoop can operate on commodity servers and is ideal for running complex analytics.

HDInsight empowers users to gain new insights from unstructured data, while connecting that data to familiar BI tools. “Even though we knew we would be one of the earliest customers of HDInsight, it met all our requirements,” says Tamir Melamed, a development manager on the Halo 4 team. “It can run any possible queries, and it is the best format for integration with Azure. And because we owned the services that produce the data and the BI system, we knew we would be using resources in the best, most cost-effective way.”

The Halo 4 team wrote Azure-based services that convert raw game data collected in Azure into the Avro format, which is supported by Hadoop. This data is then pushed from the Azure services in the Avro format into Windows Azure binary large object (BLOB) storage, which HDInsight is able to utilize with the ASV protocol. The data can then be accessed by anyone with the right permissions from Windows Azure.

Every day, Hadoop handles millions of data-rich objects related to Halo 4, including preferred game modes, game length, and many other items. With Microsoft SQL Server PowerPivot for SharePoint as a front-end presentation layer, Azure BLOBs are created based on queries from the Halo 4 team.

PowerPivot for Excel loads data from HDInsight using the Hive ODBC driver software library for the Hive data warehouse framework in Hadoop. A PowerPivot workbook is then uploaded to PowerPivot for SharePoint and refreshed nightly within SharePoint, using the connection string stored in the workbook via the Hive ODBC driver to HDInsight. The Halo 4 team uses the workbooks to generate reports and facilitate their viewing of interactive data dashboards.

Using the Flexibility and Agility of Hadoop on Azure

For the Halo 4 team, a key benefit of using HDInsight was its flexibility, which allowed for separating the amount of the raw data from the processing size needed to consume that data. “With previous systems, we never had the separation between production and raw data, so there was always the question of how running analytics would affect production,” says Mark Vayman, lead program manager for the Halo services group. “Hadoop running on Azure BLOBs solved that problem.”

With Hadoop, the team was able to build a configuration system that can be used to turn various Azure data feeds on or off as needed. “That really helps us get optimal performance, and it’s a big advantage because we can use the same Azure data source to run compute for HDInsight on multiple clusters,” says Vayman. “It made it easy for us to drive business requests for analysis through an ad-hoc Hadoop cluster without affecting the jobs being run. So developers outside the immediate BI team can actually go in and run their own queries without being hindered by the development load our team has. Ultimately, the unique way in which Hadoop is implemented on Azure gives us these capabilities.”

Halo 4 developers have also benefited from the agility of Hadoop on Azure. “If we get a business request for analytics on Azure data, it’s very easy for us to find a specific data point in Azure and get analytics on that data with HDInsight,” says Melamed. “We can easily launch a new Hadoop cluster in minutes, run a query, and get back to the business in a few hours or less. Azure is very agile by nature, and Hadoop on Azure is more powerful as a result.”

Shifting the Focus from Storage to Analysis

HDInsight was also instrumental in changing the Halo 4 team’s focus from data storage to useful data analysis. That’s because Hadoop applies structure to data when it’s consumed, as opposed to traditional data warehouse applications that structure data before it’s placed into a BI system. “In Windows Azure, Hadoop is essentially a place where all the raw data can be dumped,” says Brad Sarsfield, a Microsoft SQL Server developer. “Then we can decide to apply structure to that data at the point where it’s consumed.”

Once the Halo 4 team became aware of this capability, it shifted its mindset. “That realization had a subtle but profound effect on the team,” Sarsfield says. “At a certain point, they flipped from worrying about how to store and structure the data to concentrating on the types of questions they could ask from the data—for example, what game modes users were playing in, or how many players were playing at a given time. The team saw that it could much more readily respond to the initial requests for business insight about the game itself.”
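The idea of applying structure only when data is consumed can be illustrated with a toy example. Everything in it is invented for illustration: the record layout, the field names, and the use of JSON lines as a stand-in for the Avro files the team actually stored. It is not a description of the Halo 4 pipeline.

```javascript
// Raw events are kept as untyped text lines; nothing is validated on write.
// The record layout and field names are invented for illustration.
var rawLines = [
  '{"player":"p1","mode":"Slayer","minutes":12}',
  '{"player":"p2","mode":"Capture the Flag","minutes":9}',
  'corrupted line',
  '{"player":"p3","mode":"Slayer","minutes":15}'
];

// Structure is applied only at the point where a question is asked:
// here, "how many games were played in each mode?"
function countByMode(lines) {
  var counts = new Map();
  lines.forEach(function (line) {
    var event;
    try {
      event = JSON.parse(line);
    } catch (e) {
      return; // skip rows that cannot be read with this schema
    }
    if (event && typeof event.mode === "string") {
      counts.set(event.mode, (counts.get(event.mode) || 0) + 1);
    }
  });
  return counts;
}

console.log(countByMode(rawLines));
```

A different question, such as total minutes per player, would read the same raw lines with a different projection, without reloading or restructuring the stored data.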

Gaining New Insights from the Halo 4 “Infinity Challenge”

With an ability to focus more tightly on analysis, the Halo 4 team turned its attention to the Infinity Challenge. “Using Microsoft HDInsight, we were able to analyze the data during the five weeks of the Infinity Challenge,” says Vayman. “With the fast performance we got from the solution, we could feed that data to Virgin Gaming so they could update the leaderboards on the tournament website every day.”

In addition, because of the way the team set up Hadoop to work within Azure, the Halo team was able to perform analysis during the Infinity Challenge to detect cheaters and other abnormal player behavior. “HDInsight gives us the ability to easily read the data,” says Vayman. “In this case, there are many ways in which players try to gain extra points in games, and we were able to look back at previous data stored in Azure and identify user patterns that fit certain cheating characteristics, which was unexpected.”

After receiving this data from the Halo 4 team, Virgin Gaming sent out a notification that any player found or suspected of cheating would be immediately removed from the leaderboards and the tournament in general. “That was a great example of Hadoop on Azure giving us powerful analytical capabilities,” says Vayman.

Making Weekly Updates Based on User Trends

HDInsight gives the Halo 4 team daily updated BI data pulled from the game, which provides visibility into user trends. For example, the team can view how many users play every day, as well as the average length of a game and the specific game features that players use the most. Vayman says, “Having this kind of insight helps us gauge the overall health of the game and allows us to correlate the game’s sales numbers with the number of people that actually end up playing.”

Getting insights from Hadoop, in addition to Halo 4 user forums, also helps the Halo 4 team make frequent updates to the game. “Based on the user preference data we’re getting from Hadoop, we’re able to update game maps and game modes on a week-to-week basis,” says Vayman. “And the suggestions we get in the forums often find their way into the next week’s update. We can actually use this feedback to make changes and see if we attract new players. Hadoop and the forums are great tuning mechanisms for us.”

The team is also taking user feedback and giving it to the game’s designers, who can take it into consideration when thinking about creating future editions of Halo.

Targeting Players Through Email

The flexibility of the HDInsight BI solution also gives the Halo 4 team a way to reach out to players through customized campaigns, such as the series of email blasts the team sent to gamers in the initial weeks after the launch. During that campaign, the team set up Hadoop queries to identify users who started playing on a certain date. The team then wrote a file and placed it into a storage account on Windows Azure, where it was sent through SQL Server 2008 R2 Integration Services into a database owned by the Xbox marketing team.

The marketing team then used this data to send new players two emails: a generic “Welcome to Halo 4” email the day after a player began playing, and another custom email seven days later. This second email was actually one of five different emails, tailored to each user. Based on player preferences demonstrated during the week of play, this email suggested different game modes to players. The choice of which email each player received was determined by the HDInsight system. “That gave marketing a new way to possibly retain users and keep them interested in trying new aspects of the game,” Gregorio says. The Halo 4 marketing team plans to run similar email campaigns for the game until a new edition is released. “Basing an email campaign on HDInsight and Hadoop was a big win for the marketing team, and also for us,” adds Vayman. “It showed us that we were able to use data from HDInsight to customize emails, and to actually use BI to improve the player experience and affect game sales.”

Expanding the Use of Hadoop

Based on the success of HDInsight as a powerful BI tool, Microsoft has started to expand the solution to other internal groups. One group, Microsoft IT, is using HDInsight to improve its customer-facing website. “Microsoft IT is using some of the internal Azure service logs in Hadoop to mine data for use in identifying error patterns, in addition to creating reports on the site’s availability,” says Vayman. Another internal team that processes very large data volumes is also using Hadoop on Azure for analytics. “Halo 4 really helped lead the way for both projects,” Vayman says.

One reason Hadoop is becoming more widely used is that the technology continues to evolve into an increasingly powerful BI tool. “The traditional role of BI within Hadoop is expanding because of the raw capabilities of the platform,” says Sarsfield. “In addition to just BI reporting, we’ve been able to add predictive analytics, semantic indexing, and pattern classification, which can all be leveraged by the teams using Hadoop.”

Adoption is also growing because users do not have to be Hadoop experts to take advantage of the technology’s data insights. “By hooking Hadoop into a set of tools that are already familiar, such as Microsoft Excel or Microsoft SharePoint, people can take advantage of the power of Hadoop without needing to know the technical ins and outs. It’s really geared to the masses,” says Vayman. “A good example of that is the data about Infinity Challenge cheaters that we gave to Virgin Gaming. The people receiving that data are not Hadoop experts, but they can still easily use the data to make business decisions.”

No matter what new capabilities are added to it, there’s no doubt that HDInsight will continue to affect business. “With Hadoop on Windows Azure, we can mine data and understand our audience in a way we never could before,” says Vayman. “It’s really the BI solution for the future.”

WenMing Ye (@wenmingye, a.k.a. HPC Trekker) posted an 00:11:00 Introduction To Windows Azure HDInsight Service video to Channel9 on 3/22/2013 (missed when published):

This is a general Introduction to Big Data, Hadoop, and Microsoft's new Hadoop-based Service called Windows Azure HDInsight. This presentation is divided into two videos, this is Part 1. We cover Big Data and Hadoop in this part of the presentation. The relevant blog post is: Let there be Windows Azure HDInsight. The up-to-date presentation is on github at: http://bit.ly/ZY6DzN


Windows Azure SQL Database, Federations and Reporting, Mobile Services

• Dhananjay Kumar (@Debug_Mode) described Step by Step working with Windows Azure Mobile Service Data in JavaScript based Windows Store Apps in a 4/5/2013 post:

In my last post I discussed Step by Step working with Windows Azure Mobile Service Data in XAML based Windows Store Apps [see article below]. In this post we will look at working with JavaScript-based Windows Store apps. The last post was divided into two parts; the first part was Configure Windows Azure Mobile Service in Portal. You need to follow Step 1 from the last post to configure Windows Azure Mobile Service Data. To work with this, proceed with the following steps:

- Configure Windows Azure Mobile Service in Portal. For reference follow Step 1 of this Blog

- Download Windows Azure SDK and install.

Now follow [this] post to work with Windows Azure Mobile Service Data from JavaScript based Windows Store Application.

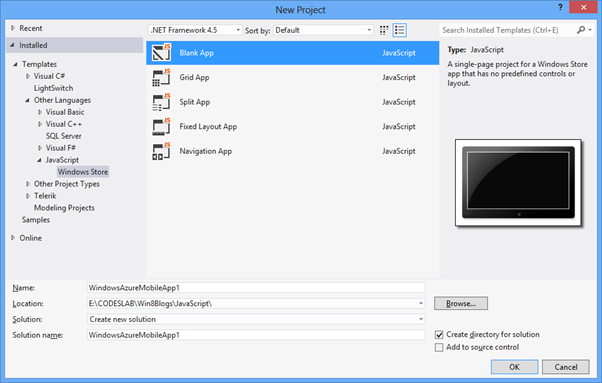

Create [a] Windows Store Application in JavaScript

Create [a] Blank App by choosing the Blank App template from the JavaScript Windows Store App project tab.

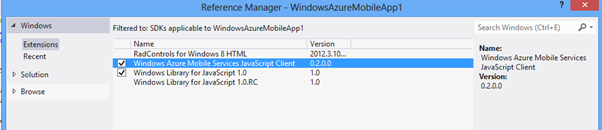

After creating the project, add the Windows Azure Mobile Services JavaScript Client reference to the project.

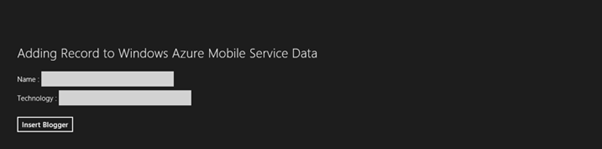

After adding the reference, let us go ahead and design the app page to add a blogger to the table. We are going to put two text boxes and one button on the page. On the click event of the button, the blogger will be inserted as a row in the data table of Windows Azure Mobile Services.

    <body>
        <h2>Adding Record to Windows Azure Mobile Service Data</h2> <br />
        Name : <input id="txtname" type="text" /> <br />
        Technology : <input id="txttechnology" type="text" /> <br /> <br />
        <button id="btnInsert" >Insert Blogger</button>
    </body>

The application will look like the following:

We need to add a reference to Windows Azure Mobile Services in the HTML as follows:

    <script src="//Microsoft.WinJS.1.0/js/ui.js"></script>
    <script type="text/javascript" src="/MobileServicesJavaScriptClient/MobileServices.js"></script>

Next, let us create a client for Windows Azure Mobile Services. To create it, you need to pass the application URL and application key. A client in a JavaScript-based application can be created as follows:

    args.setPromise(WinJS.UI.processAll());
    var client = new Microsoft.WindowsAzure.MobileServices.MobileServiceClient(
        "https://appurl",
        "appkey"
    );

Now let us create a proxy table. After creating the proxy table, we can add a record to the table as follows:

    var bloggerTable = client.getTable('techbloggers');
    var insertBloggers = function (bloggeritem) {
        bloggerTable.insert(bloggeritem).done(function (item) {
            // Item added
        });
    };

On the click event of the button, we need to call the insertBloggers JavaScript function.

    btnInsert.addEventListener("click", function () {
        insertBloggers({
            name: txtname.value,
            technology: txttechnology.value
        });
    });

On the click event of the button, you should be able to insert a blogger into the Windows Azure Mobile Service data table. In further posts we will learn to update, delete and fetch data.

• Dhananjay Kumar (@Debug_Mode) posted Step by Step working with Windows Azure Mobile Service Data in XAML based Windows Store Apps on 4/4/2013:

In this post we will take a look at working with Windows Azure Mobile Services in a XAML-based Windows Store application. We will follow a step-by-step approach to learn the goodness of Windows Azure Mobile Services. In the first part of the post we will configure Windows Azure Mobile Services in the Azure portal. In the second part we will create a simple XAML-based Windows Store application that inserts records into a data table. This is the first post of this series; in further posts we will learn other features of Windows Azure Mobile Services.

Configure Windows Azure Mobile Service on Portal

Step 1

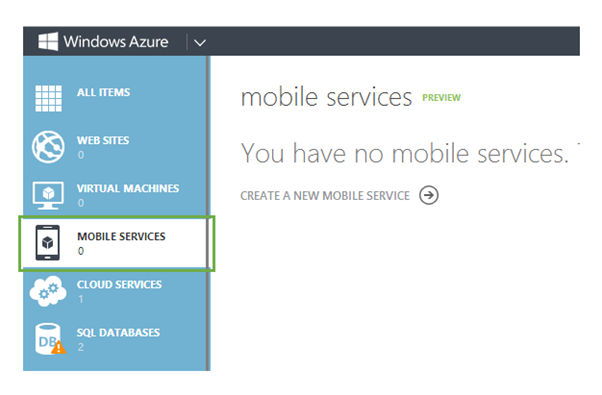

Login to Windows Azure Management Portal here

Step 2

Select Mobile Services from the tabs on the left and click on CREATE NEW MOBILE SERVICE.

Step 3

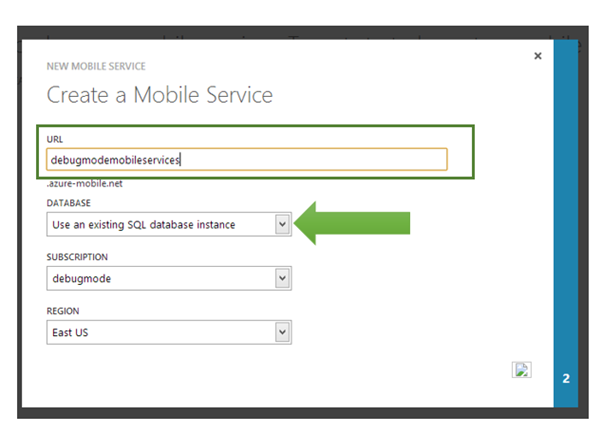

In this step, provide the URL of the mobile service. You have two choices: create the mobile service in an existing database or create a new database. Let us go ahead and create a new database. In the DATABASE drop-down, select the Create New SQL database instance option. Select SUBSCRIPTION and REGION from their drop-downs as well.

Step 4

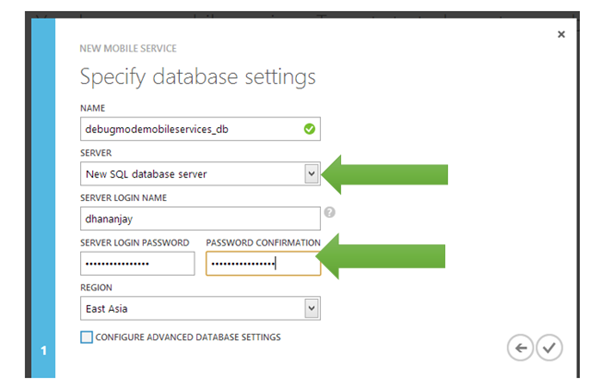

On the next screen you need to create the database. Choose either an existing database server or create a new one. You need to provide credentials to connect to the database server.

Step 5

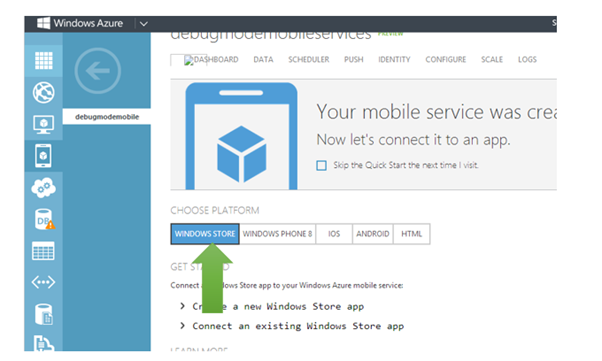

After successful creation of the mobile service, you need to select a platform. Let us go ahead and choose Windows Store as the platform.

Step 6

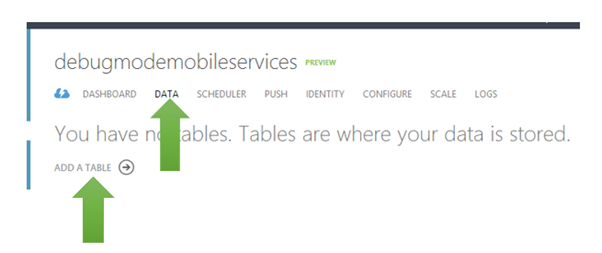

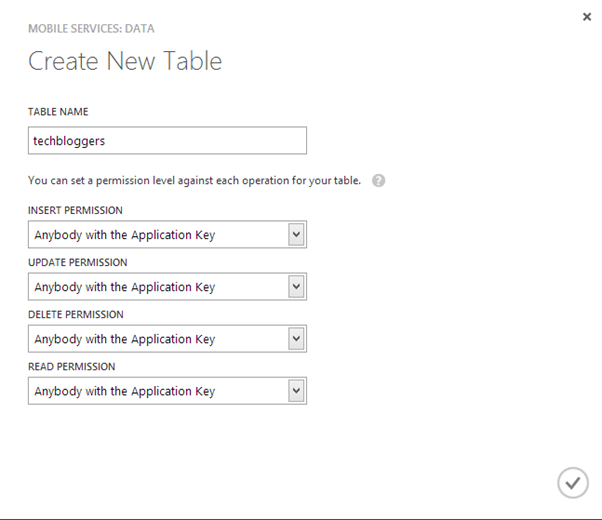

After selecting the platform, click on Data in the menu, and then click on ADD A TABLE.

Next you need to provide the table name. You can also set permissions on the table. There are three options available:

- Anybody with Application Key

- Only Authenticated Users

- Only Scripts and Admins

Let us leave the default permission level for the table.

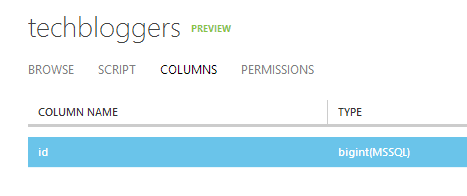

Step 7

Next click on the table. You will be navigated to the table dashboard. When you click on Columns you will find one column, id, that was created by default. This column is created automatically and must be present in every Windows Azure Mobile Service table.

With dynamic schema enabled, columns are added automatically when the client application inserts JSON objects that contain new properties.
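To make the dynamic schema behavior concrete, here is a minimal in-memory sketch. It does not call Windows Azure Mobile Services at all; `createTable` and `insert` are hypothetical helpers, and the sample values are made up. It only illustrates the idea that a table starts with an `id` column and gains a column for each new property found on an inserted JSON object.

```javascript
// Hypothetical in-memory model of a table with dynamic schema enabled.
// This is NOT the Mobile Services API; it only mimics the column behavior.
function createTable() {
  // Only the automatically created "id" column exists at first.
  return { columns: ["id"], rows: [], nextId: 1 };
}

function insert(table, item) {
  // Add a column for every property the table has not seen before.
  for (const key of Object.keys(item)) {
    if (!table.columns.includes(key)) {
      table.columns.push(key);
    }
  }
  // Store a copy of the item with a generated id.
  const row = Object.assign({}, item, { id: table.nextId++ });
  table.rows.push(row);
  return row;
}

const techbloggers = createTable();
const firstRow = insert(techbloggers, { name: "Sample Blogger", technology: "Windows Azure" });
console.log(techbloggers.columns); // [ 'id', 'name', 'technology' ]
```

Inserting a later object with an extra property, for example `country`, would append one more column in the same way.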

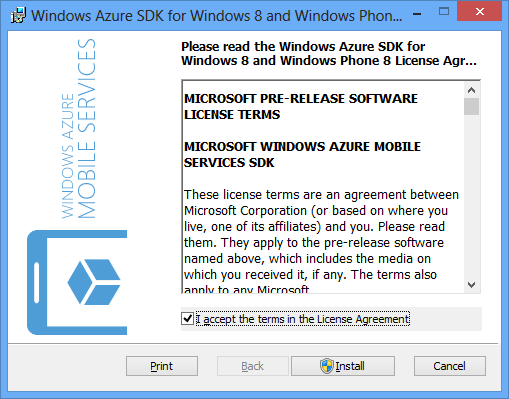

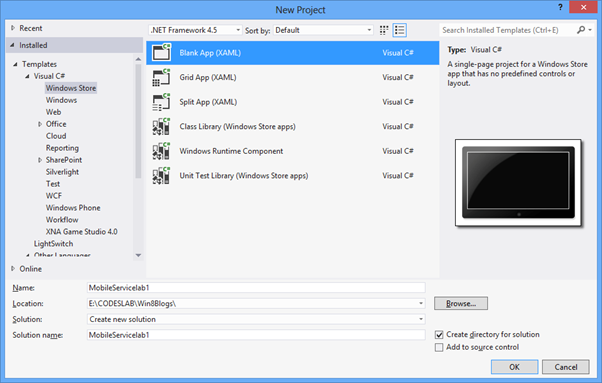

Create Windows Store Application in XAML

First, you need to install the Windows Azure SDK for Windows Phone and Windows 8.

After installing it, create a Windows Store application by choosing the Blank App template.

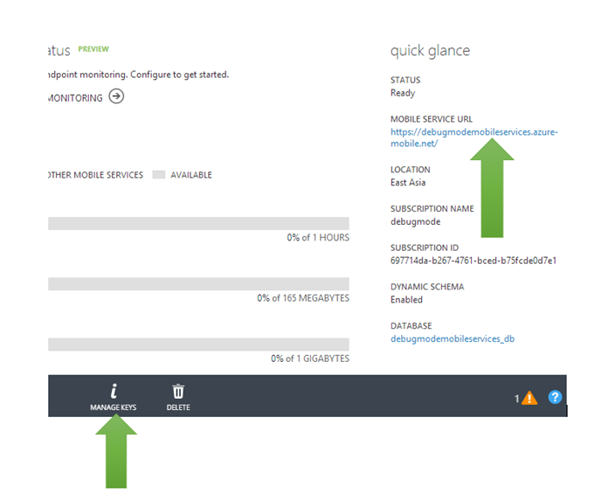

Before we move ahead to create the Windows Store app, let us go back to the portal and manage the app URL and key. You need the key and the application URL to work with Windows Azure Mobile Services from a Windows Store application. You will find both at the portal.

Now go ahead and add the following namespaces in MainPage.xaml.cs:

    using Microsoft.WindowsAzure.MobileServices;
    using System.Runtime.Serialization;

Next you need to create an entity class representing the table from the Windows Azure Mobile Service. Let us create the entity class TechBloggers as follows:

    public class TechBloggers
    {
        public int id { get; set; }

        [DataMember(Name = "name")]
        public string Name { get; set; }

        [DataMember(Name = "technology")]
        public string Technology { get; set; }
    }

After creating the entity class, go ahead and declare global variables.

    MobileServiceClient client;
    IMobileServiceTable<TechBloggers> bloggerstable;

Once the global variables are defined, you need to create instances of MobileServiceClient and MobileServiceTable. Let us go ahead and create them in the constructor of the page.

    public MainPage()
    {
        this.InitializeComponent();
        // Assign the class-level field instead of declaring a new local variable.
        client = new MobileServiceClient("https://youappurl", "appkey");
        bloggerstable = client.GetTable<TechBloggers>();
    }

Now let us go back and design the app page. In the XAML, let us put two text boxes and one button. On the click event of the button we will insert bloggers into the table.

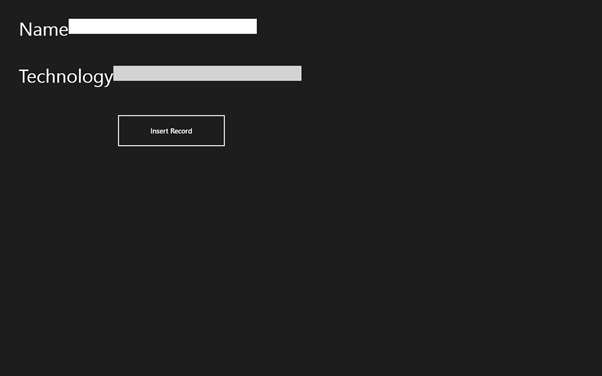

    <Grid Background="{StaticResource ApplicationPageBackgroundThemeBrush}">
        <Grid.RowDefinitions>
            <RowDefinition Height="100" />
            <RowDefinition Height="100" />
            <RowDefinition Height="100" />
        </Grid.RowDefinitions>
        <StackPanel Grid.Row="0" Orientation="Horizontal" Margin="40,40,0,0">
            <TextBlock Text="Name" FontSize="40" />
            <TextBox x:Name="txtName" VerticalAlignment="Top" Width="400" />
        </StackPanel>
        <StackPanel Orientation="Horizontal" Grid.Row="1" Margin="40,40,0,0">
            <TextBlock Text="Technology" FontSize="40" />
            <TextBox x:Name="txtTechnology" VerticalAlignment="Top" Width="400" />
        </StackPanel>
        <Button Grid.Row="2" x:Name="btnInsert" Click="btnInsert_Click_1" Content="Insert Record" Height="72" Width="233" Margin="248,42,0,-14" />
    </Grid>

The application will look like the image below. I know this is not the best UI; anyway, creating the best UI is not the purpose of this post.

On click event of button we can insert a record to table using Windows Azure Mobile Services using following code

private void btnInsert_Click_1(object sender, RoutedEventArgs e) { TechBloggers itemtoinsert = new TechBloggers { Name = txtName.Text, Technology = txtTechnology.Text }; InserItem(itemtoinsert); }InsertItem function is written like following,

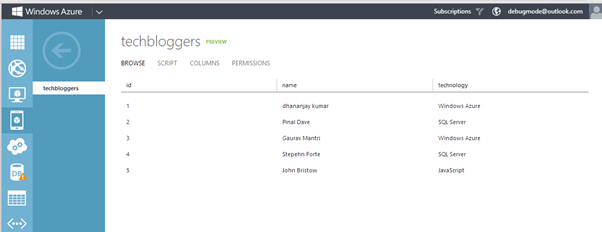

private async void InserItem(TechBloggers itemtoinsert) { await bloggerstable.InsertAsync(itemtoinsert); }On click event of button you can insert records in Windows Azure Mobile Service data table. To verify inserted records browse to portal and click on table.

In further posts we will learn update, delete and view of the records.

Clemens Vasters (@clemensv) produced a 00:25:34 Windows Azure Mobile Services - for Organizations and the Enterprise video for Channel9 on 3/25/2013 (missed when published):

Last week in Redmond I had a chat with coworker Josh Twist from our joint Azure Mobile team (owning Service Bus and Mobile Services) about the relevance of Mobile Services for organizations and businesses.

As the app stores grow, there's increasing competitive pressure on organizations of all sizes to increase the direct consumer engagement through apps on mobile devices and tablets, and doing so is often quite a bit of a scalability leap from hundreds or thousands of concurrent internal clients to millions of direct consumer clients.

Mobile Services is there to help and can, also in conjunction with Service Bus and other services from Microsoft and partners, act as a new kind of gateway to enterprise data and compute assets.

Other Windows Azure Mobile Services videos:

- Introduction to Windows Azure Mobile Services

- iOS Support in Windows Azure Mobile Services

- Windows Store app - Creating your first app using the Windows Azure Mobile Services Quickstart

<Return to section navigation list>

Marketplace DataMarket, Cloud Numerics, Big Data and OData

•• Julie Lerman (@julielerman) described Getting Started with WCF Data Services 5.4, OData v3 and JSON Light in a 4/6/2013 post to her Don’t Be Iffy blog:

TLDR: Setup steps to get WCFDS 5.4 up and running

JULBS (Julie’s Usual Lengthy Back Story): WCF Data Services (aka WCFDS) updates are being released at a rapid pace. I think they are on a hoped-for 6-week release schedule and they just released 5.4 a few days ago.

One of the reasons is that they are adding in more and more support for features of OData v3. But rather than waiting until ALL of the features are in (by which time OData v4 may already be out! :)) they are pushing out updates as they get another chunk of features in.

Be sure to watch the http://blogs.msdn.com/astoriateam blog for announcements about release candidates (where you can provide feedback) and then the releases. Here for example is the blog post announcing the newest version: WCF Data Services 5.4.0 Release.

I wanted to move to this version so I could play with some of the new features and some of the features we’ve gotten starting with v 5.1 – for example the support for the streamlined JSON output (aka “JSON light”) and Actions & Functions.

As there are a number of steps involved for getting your WCFDS to use v 5.4, I somehow performed them in an order that left me in the cold. I couldn’t even get JSON light output.

So after scratching my head for way too long, I finally started the service from scratch and this time it worked.

- Create a new project to host your service. I recommend a WCF Services Application project

- Delete the IService1.cs and Service1.cs files created in the new project.

- Add a new WCF Data Service item

- Remove the references to the following WCFDS 5.0 assemblies:

  - Microsoft.Data.Edm

  - Microsoft.Data.OData

  - Microsoft.Data.Services

  - Microsoft.Data.Services.Client

  - System.Spatial

- Using Nuget, install WCF Data Services Server. The current version available on Nuget is 5.4. When you install this, Nuget will also install the other 4 assemblies (Edm, OData, Services.Client and System.Spatial).

- UPDATE! Mark Stafford (from the WCFDS team) let me in on a “secret”. The WCFDS template uses Nuget to pull in WCFDS5 APIs, so you can just use Nuget to UPDATE those assemblies! (This is for VS2012. For VS2010, use the crossed out steps 4 & 5 :))

- Install Entity Framework 5 from Nuget

That should be everything you need to do to have the proper version of WCFDS.

Now you can follow a normal path for creating a data service.

In case you’re not familiar with that, here are the basic steps (just getting a service running, not doing anything fancy here):

- Add reference(s) to any projects that contain your data layer (in my case a data layer with EF’s DbContext) and domain classes.

- Modify the WCFDataService class that the Add New Item wizard created so that it uses your context. My context is called CoursesContext, so here I’ve specified that context and simply exposed all of my entity sets to be available as read-only data using the SetEntitySetAccessRule. That is not a best practice for production apps but good enough to get me started.

    public class MyDataService : DataService<CoursesContext>
    {
        public MyDataService()
        {
            Database.SetInitializer(new CoursesInitializer());
        }

        public static void InitializeService(DataServiceConfiguration config)
        {
            config.SetEntitySetAccessRule("*", EntitySetRights.AllRead);
            config.DataServiceBehavior.MaxProtocolVersion = DataServiceProtocolVersion.V3;
            config.UseVerboseErrors = true;
        }
    }

My data layer includes a custom initializer with a seed method so in the class constructor, I’ve told EF to use that initializer. My database will be created with some seed data.

Notice the MaxProtocolVersion. The WCFDS item template inserted that. It’s just saying that it’s okay to use OData v3.

I added the UseVerboseErrors because it helps me debug problems with my service.

Now to ensure that I’m truly getting access to OData v3 behavior, I can open up a browser or fiddler and see if I can get JSON light. JSON light is the default JSON output now.

WCFDS now lets me leverage OData’s format parameter to request JSON directly in the URL. I can even do this in a browser.

Chrome displays it directly in the browser:

Internet Explorer’s default behavior for handling a JSON response is to download the response as text file and open it in notepad.

In Fiddler, I have to specify the Accept header when composing the request. Since JSON light is the default, I only have to tell it I want JSON (application/json).

Fiddler outputs the format nicely for us to view:

If I want the old JSON with more detail, I can tell it to spit out the odata as “verbose” (odata=verbose). Note that it’s a semi-colon in between, not a comma.

(I’m only showing the JSON for the first course object, the second is collapsed)

Checking for the existence of the JSON Light format and that it’s even the new JSON default, helps me verify that I’m definitely using the new WCFDS APIs and getting access to the OData v3 features that it supports. Now I can go play with some of the other new features! :)
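The two ways of asking for JSON described above, the `$format` option in the URL and the Accept header, can be sketched as a pair of small helpers. This is only an illustration: the service root and the `Courses` entity set name are assumptions, not taken from the post, and the helpers build strings rather than send a request.

```javascript
// Hypothetical service root; substitute the address of your own data service.
const serviceRoot = "http://localhost:1234/MyDataService.svc";

// Option 1: ask for JSON in the URL with the $format query option.
function jsonUrl(root, entitySet) {
  return root + "/" + entitySet + "?$format=json";
}

// Option 2: ask for JSON with the Accept header.
// Plain application/json yields JSON light; adding odata=verbose
// (after a semicolon, not a comma) yields the older, more detailed JSON.
function acceptHeader(verbose) {
  return verbose ? "application/json;odata=verbose" : "application/json";
}

console.log(jsonUrl(serviceRoot, "Courses")); // "Courses" is an assumed entity set name
console.log(acceptHeader(false));
console.log(acceptHeader(true));
```

The header value is what you would type into Fiddler's request composer; the URL form is the one that also works from a browser address bar.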

• Max Uritsky (@max_data) broke a two-year blog silence with his Windows Azure Marketplace – new end user experiences, new features, new data and app services, but still the same old excitement and added value! post of 4/5/2013:

Hello Windows Azure Marketplace users,

We’re back with another exciting set of announcements! In the past 4 months, we’ve not only supported the launch of a new storefront, but also made great strides in making our service more resilient, added a feature to help users guard against interruptions to service, added a huge portfolio of data services and app services, while continuing to improve the user experience on the portal and from Office products.

If you are a Developer, you will be very excited to know that we launched the Azure Store, which is a marketplace for data services and app services on the Windows Azure Portal, in October 2012. Want to learn more? Check out the keynote from Build 2012!

If you are an Analyst or an Information Worker, you will be interested in knowing that we improved the experience of using Windows Azure Marketplace from Excel PowerPivot, and we helped with the launch of Data Explorer, which is a data aggregation and shaping tool from SQL Information Services. Download Data Explorer and start playing with it right away!

Here are a few snapshots from Data Explorer:

Here are a few snapshots with the improved experience from Excel PowerPivot:

We have also continued to improve the user experience on the Windows Azure Marketplace Portal. We added a sitemap, which is a one-stop shop access point to all the important resources on the websites. With this feature, discoverability of resources on the website was greatly improved. We also made an improvement to the data visualization tool on our portal, Service Explorer, by replacing it with the more metro-looking Query Builder, to unify the data exploration experience found in Excel and PowerPivot. With this release, we also added support for download to PowerPivot 2010 and PowerPivot 2013, to target a broader set of customers.

We also added a feature that lets users opt in to having Windows Azure Marketplace automatically refill their subscriptions when the subscription balances are low. We got a lot of feedback from our users that there ought to be a way to automatically re-subscribe when the subscription balance runs out; we heard the feedback and released a feature called Auto Refill that does exactly that. Hmm, too good to be true? Read more about Auto Refill.

Here is a snapshot of the sitemap:

Here is a snapshot of the new data visualization tool on the Marketplace Portal:

Here are a few snapshots of Auto-Refill:

We have also released a good list of content in the past 5 months, and one of the interesting offerings was the Synonyms API that we made available through the Windows Azure Marketplace. The Synonyms API returns alternate references to real world entities like products, people, locations, and more.

For instance, the API intelligently matches the following real world entities to commonly used synonyms:

- Product synonyms: “Canon 600D” is synonymous with “canon rebel t3i”, etc

- People synonyms: “Jennifer Lopez” is synonymous with “jlo”, etc

- Place synonyms: “Seattle Tacoma International Airport” is synonymous with “sea tac”, etc.

Try out this cool API for yourself right here.

We also released a ton of great content by Dun & Bradstreet, Digital Folio and our other key publishers, and here’s a short list:

- Delta One - Rates and yields derived from real market data

- 300.000 Economic Indicators for 196 Countries - 300.000 economic indicators, exchange rates, stock market indexes, government bond yields and commodity prices for 196 countries

- Derivative Data - Derivative price information on the Euro Stoxx 50 equity index

- Calendar Mapping Table for Data Warehouses - Full set of hierarchies for the standard calendar and the ISO week structure

- Drug Prescribing by GP Practices in England, Published by: Custom Web Apps, Ltd

- LetMobile Secure Mobile Email, Published by: LetMobile

- True False WebUpload, Published by: True False S.r.l.

- Retail Intelligence API - Event History: All Product Elements, Published by: Digital Folio

- Retail Intelligence API - Summaries: All Product Elements, Published by: Digital Folio

- Retail Intelligence API - Event History: Product Price Only, Published by: Digital Folio

- Retail Intelligence API - Summaries: Product Price Only, Published by: Digital Folio

- The City of Barcelona’s Barcelona Facilities

- The City of Barcelona’s Barcelona Car Registrations in 2009

- The City of Barcelona’s Barcelona City Blocks

- The City of Barcelona’s Barcelona Basic Addresses

- MacroTrenz’s U.S. Bank Financial Condition & Performance Data Service

- Custom Web Apps’ Crime Statistics for England & Wales

- Dun & Bradstreet’s Business Insight

- Dun & Bradstreet’s Bustling Manufacturers & Business Services List

- EUHealth’s European Health Care Data

- ScientiaMobile’s WURFL Cloud Service

- Profity’s Timesplitter

- Active Cloud Monitoring - Active Cloud Monitoring by MetricsHub monitors your cloud applications and automatically scales your service up or down based on rules you set. Within minutes, you'll improve up-time and decrease cost.

- Blitline - Stop spending time managing image infrastructure. Use Blitline to handle all your image processing, from cropping, to rotations, or filters. We even offer screenshots of websites! Blitline leverages cloud based services and provides reliable, scalable, enterprise ready performance.

- EZDRM Hosted PlayReady DRM - EZDRM, Hosted PlayReady DRM provider, offers Microsoft PlayReady DRM for protection and content access control of your digital media. Our solution supports Microsoft Smooth Streaming (.piff/.ismv), PlayReady Video (.pyv), PlayReady Audio (.pya) and Windows Media (.wmv, .wma) file formats.

- Cloudinary - Cloudinary streamlines your web application’s image manipulation needs. Cloudinary’s cloud-based servers automate image uploading, resizing, cropping, optimizing, sprite generation and more.

- Embarke Email Analytics - Embarke is next-generation analytics for email. Learn behaviors about your users that will help you increase engagement and revenue. Integration takes less than 15 minutes!

- Scheduler - Time-based eventing made simple. Schedule CRON jobs with ease.

- Pusher - Pusher makes it easy to push any type of content to browsers and devices in real time.

- VS Anywhere - Real-Time Collaboration Platform for Visual Studio 2010/2012. Share Solutions, Projects, Code and Visual Studio Designers worldwide in Real-Time

To get a full list of data services, please click here and to get a full list of all the applications available through the Windows Azure Marketplace, please click here.

• S. D. Oliver described Getting data from Windows Azure Marketplace into your Office application in a 4/3/2013 post to the Apps for Office and SharePoint blog:

This post walks through a published app for Office, along the way showing you everything you need to get started building your own app for Office that uses a data service from the Windows Azure Marketplace. Today’s post is brought to you by Moinak Bandyopadhyay. Moinak is a Program Manager on the Windows Azure Marketplace team, a part of the Windows Azure Commerce Division.

Ever wondered how to get premium, curated data from Windows Azure Marketplace, into your Office applications, to create a rich and powerful experience for your users? If you have, you are in luck.

Introducing the first ever app for Office that builds this integration with the Windows Azure Marketplace – US Crime Stats. This app enables users to insert crime statistics, provided by DATA.GOV, right into an Excel spreadsheet, without ever having to leave the Office client.

One challenge faced by Excel users is finding the right set of data, and apps for Office provides a great opportunity to create rich, immersive experiences by connecting to premium data sources from the Windows Azure Marketplace.

What is the Windows Azure Marketplace?

The Windows Azure Marketplace (also called Windows Azure Marketplace DataMarket or just DataMarket) is a marketplace for datasets, data services and complete applications. Learn more about Windows Azure Marketplace.

This blog article is organized into two sections:

- The U.S. Crime Stats Experience

- Writing your own Office Application that gets data from the Windows Azure Marketplace

The US Crime Stats Experience

You can find the app on the Office Store. Once you add the US Crime Stats app to your collection, you can go to Excel 2013, and add the US Crime Stats app to your spreadsheet.

Figure 1. Open Excel 2013 spreadsheet

Once you choose US Crime Stats, the application is shown in the right pane. You can search for crime statistics based on City, State, and Year.

Figure 2. US Crime Stats app is shown in the right task pane

Once you enter the city, state, and year, click ‘Insert Crime Data’ and the data will be inserted into your spreadsheet.

Figure 3. Data is inserted into an Excel 2013 spreadsheet

What is going on under the hood?

In short, when the ‘Insert Crime Data’ button is chosen, the application takes the input (city, state, and year) and makes a request to the DataMarket services endpoint for DATA.GOV in the form of an OData Call. When the response is received, it is then parsed, and inserted into the spreadsheet using the JavaScript API for Office.
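As a rough sketch of the first half of that flow, the snippet below turns the three inputs into an OData query URL. The service root, the `CityCrime` entity set, and the `City`, `State` and `Year` property names are all assumptions made for illustration; the real DATA.GOV endpoint and schema should be taken from the dataset's page on the Marketplace. Authentication, the HTTP call, and the write into the worksheet are left out.

```javascript
// Escape a value as an OData string literal: wrap in single quotes
// and double any single quote inside the value.
function odataString(value) {
  return "'" + String(value).replace(/'/g, "''") + "'";
}

// Build a query URL from the task pane inputs.
// Entity set and property names here are hypothetical.
function buildCrimeQuery(serviceRoot, city, state, year) {
  const y = Number(year);
  if (!Number.isInteger(y)) {
    throw new TypeError("year must be an integer");
  }
  const filter =
    "City eq " + odataString(city) +
    " and State eq " + odataString(state) +
    " and Year eq " + y;
  return serviceRoot + "/CityCrime?$filter=" + encodeURIComponent(filter);
}

// Placeholder root, not the real DataMarket endpoint.
const crimeUrl = buildCrimeQuery("https://example.invalid/Crimes", "Seattle", "Washington", 2008);
console.log(crimeUrl);
```

Escaping the quote characters matters for city names such as O'Fallon, which would otherwise end the string literal early and break the filter.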

Writing your own Office application that gets data from the Windows Azure Marketplace

Prerequisites for writing Office applications that get data from Windows Azure Marketplace

- Visual Studio 2012

- Office 2013

- Office Developer Tools for Visual Studio 2012

- Basic Familiarity with the OData protocol

- Basic familiarity with the JavaScript API for Office

- To develop your application, you will need a Windows Azure Marketplace Developer Account and a Client ID (learn more)

- Web server URL where you will be redirected to after application consent. This can be of the form ‘http://localhost:port_number/marketplace/consent’. If you are hosting the page in IIS on your development computer, supply this URL to the application Register page when you create your Marketplace app.

How to write Office applications using data from Windows Azure Marketplace

The MSDN article, Create a Marketplace application, covers everything necessary for creating a Marketplace application, but below are the steps in order.

- Register with the Windows Azure Marketplace:

- You need to register your application first on the Windows Azure Marketplace Application Registration page. Instructions on how to register your application for the Windows Azure Marketplace are found in the MSDN topic, Register your Marketplace Application.

- Authentication:

- Receiving Data from the Windows Azure Marketplace DataMarket service

To get a real feel of the code that powers the US Crime Stats, be on the lookout for the release of the code sample on the Office 2013 Samples website.

• The WCF Data Services Team reported the WCF Data Services 5.4.0 Release on 4/2/2013:

Today we are releasing version 5.4.0 of WCF Data Services. As mentioned in the prerelease post, this release will be NuGet packages only. That means that we are not releasing an updated executable to the download center. If you create a new WCF Data Service or add a reference to an OData service, you should follow the standard procedure for making sure your NuGet packages are up-to-date. (Note that this is standard usage of NuGet, but it may be new to some WCF Data Services developers.)

Samples

If you haven’t noticed, we’ve been releasing a lot more frequently than we used to. As we adopted this rapid cadence, our documentation has fallen somewhat behind and we recognize that makes it hard for you to try out the new features. We do intend to release some samples demonstrating how to use the features below but we need a few more days to pull those samples together and did not want to delay the release. Once we get some samples together we will update this blog post (or perhaps add another blog post if we need more commentary than a gist can convey).

What is in the release:

Client deserialization/serialization hooks

We have a number of investments planned in the “request pipeline” area. In 5.4.0 we have a very big set of hooks for reaching into and modifying data as it is being read from or written to the wire format. These hooks provide extensibility points that enable a number of different scenarios such as modifying wire types, property names, and more.

Instance annotations on atom payloads

As promised in the 5.3.0 release notes, we now support instance annotations on Atom payloads. Instance annotations are an extensibility feature in OData feeds that allow OData requests and responses to be marked up with annotations that target feeds, single entities (entries), properties, etc. We do still have some more work to do in this area, such as the ability to annotate properties.

Client consumption of instance annotations

Also in this release, we have added APIs to the client to enable the reading of instance annotations on the wire. These APIs make use of the new deserialization/serialization pipelines on the client (see above). This API surface includes the ability to indicate which instance annotations the client cares about via the Prefer header. This will streamline the responses from OData services that honor the odata.include-annotations preference.

Simplified transition between Atom and JSON formats

In this release we have bundled a few less-noticeable features that should simplify the transition between the Atom and (the new) JSON format. (See also the bug fixes below on type resolver fixes.)

Bug fixes

In addition to the features above, we have included fixes for the following notable bugs:

- Fixes an issue where reading a collection of complex values would fail if the new JSON format was used and a type resolver was not provided

- Fixes an issue where ODataLib was not escaping literal values in IDs and edit links

- Fixes an issue where requesting the service document with application/json;odata=nometadata would fail

- Fixes an issue where using the new JSON format without a type resolver would create issues with derived types

- (Usability bug) Makes it easier to track the current item in ODataLib in many situations

- Fixes an issue where the LINQ provider on the client would produce $filter instead of a key expression for derived types with composite keys

- (Usability bug) Fixes an issue where the inability to set EntityState and ETag values forced people to detach and attach entities for some operations

- Fixes an issue where some headers required a case-sensitive match on the WCF DS client

- Fixes an issue where 304 responses were sending back more headers than appropriate per the HTTP spec

- Fixes an issue where a request for the new JSON format could result in an error that used the Atom format

- Fixes an issue where it was possible to write an annotation value that was invalid according to the term

- Fixes an issue where PATCH requests for OData v1/v2 payloads would return a 500 error rather than 405
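One of the fixes above concerns the client LINQ provider emitting a `$filter` where a key expression was expected. For readers unfamiliar with the distinction, here is a small sketch of the two URL shapes for a composite key. The entity set and key property names are hypothetical, and this is not the WCF Data Services client's code; it only shows what each form looks like.

```javascript
// Format a key value as an OData literal (strings quoted, numbers as-is).
function formatKeyValue(v) {
  return typeof v === "string" ? "'" + v.replace(/'/g, "''") + "'" : String(v);
}

// Key expression: addresses exactly one entity by its (composite) key.
function keyExpressionUrl(entitySet, key) {
  const parts = Object.keys(key).map(function (k) {
    return k + "=" + formatKeyValue(key[k]);
  });
  return entitySet + "(" + parts.join(",") + ")";
}

// $filter: queries the whole set and returns a feed of matches.
function filterUrl(entitySet, key) {
  const parts = Object.keys(key).map(function (k) {
    return k + " eq " + formatKeyValue(key[k]);
  });
  return entitySet + "?$filter=" + parts.join(" and ");
}

const sampleKey = { OrderId: 1, LineNumber: 2 }; // hypothetical composite key
console.log(keyExpressionUrl("OrderLines", sampleKey)); // OrderLines(OrderId=1,LineNumber=2)
console.log(filterUrl("OrderLines", sampleKey));        // OrderLines?$filter=OrderId eq 1 and LineNumber eq 2
```

Both forms can select the same row, but the key expression returns a single entry while the filter returns a feed that happens to contain one entry.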

We want your feedback

We always appreciate your comments on the blog posts, forums, Twitterverse and e-mail (mastaffo@microsoft.com). We do take your feedback seriously and prioritize accordingly. We are still early in the planning stages for 5.5.0 and 6.0.0, so feedback now will help us shape those releases.

<Return to section navigation list>

Windows Azure Service Bus, Caching, Access Control, Active Directory, Identity and Workflow

• Glen Block (@gblock) reported “we just pushed our first release of socket.io-servicebus to npm!” in a 4/4/2013 e-mail message and tweet. From NPM:

socket.io-servicebus - socket.io store using Windows Azure Service Bus

This project provides a Node.js package that lets you use Windows Azure Service Bus as a back-end communications channel for socket.io applications.

Library Features

- Service Bus Store

- Easily connect multiple socket.io server instances over Service Bus

Getting Started

Download Source Code

To get the source code of the SDK via git just type:

    git clone https://github.com/WindowsAzure/socket.io-servicebus
    cd ./socket.io-servicebus

Install the npm package

You can install the azure npm package directly.

    npm install socket.io-servicebus

Usage

First, set up your Service Bus namespace. Create a topic to use for communications, and one subscription per socket.io server instance. These can be created either via the Windows Azure portal or programmatically using the Windows Azure SDK for Node.

Then, configure socket.io to use the Service Bus Store:

    var sio = require('socket.io');
    var SbStore = require('socket.io-servicebus');

    var io = sio.listen(server);
    io.configure(function () {
      io.set('store', new SbStore({
        topic: topicName,
        subscription: subscriptionName,
        connectionString: connectionString
      }));
    });

The connection string can either be retrieved from the portal, or using our PowerShell / x-plat CLI tools. From here, communications to and from the server will get routed over Service Bus.

Current Issues

The current version (0.0.1) only routes messages; client connection state is stored in memory in the server instance. Clients need to consistently connect to the same server instance to avoid losing their session state.

Need Help?

Be sure to check out the Windows Azure Developer Forums on Stack Overflow if you have trouble with the provided code.

Contribute Code or Provide Feedback

If you would like to become an active contributor to this project please follow the instructions provided in Windows Azure Projects Contribution Guidelines.

If you encounter any bugs with the library please file an issue in the Issues section of the project.

Learn More

For documentation on how to host Node.js applications on Windows Azure, please see the Windows Azure Node.js Developer Center.

For documentation on the Azure cross platform CLI tool for Mac and Linux, please see our readme at http://github.com/windowsazure/azure-sdk-tools-xplat

Check out our new IRC channel on freenode, node-azure.

Vittorio Bertocci (@vibronet) described Auto-Update of the Signing Keys via Metadata on 4/2/2013:

TL;DR version: we just released an update to the ValidatingIssuerNameRegistry which makes it easy to write applications that automatically keep the WIF settings up to date with the keys that should be used to validate incoming tokens.

The Identity and Access Tools for Visual Studio 2012 will pick up the new version automatically, no action required for you.

The Validation Mechanism in WIF’s Config

When you run the Identity and Access Tool for VS2012 (or the ASP.NET Tools for Windows Azure AD) you can take advantage of the metadata document describing the authority you want to trust to automatically configure your application to connect to it. In practice, the tool adds various WIF-related sections in your web.config; those are used for driving the authentication flow.

One of those elements, the <issuerNameRegistry>, is used to keep track of the validation coordinates that must be used to verify incoming tokens; below there’s an example.

    <issuerNameRegistry type="System.IdentityModel.Tokens.ValidatingIssuerNameRegistry,System.IdentityModel.Tokens.ValidatingIssuerNameRegistry">
      <authority name="https://lefederateur.accesscontrol.windows.net/">
        <keys>
          <add thumbprint="C1677FBE7BDD6B131745E900E3B6764B4895A226" />
        </keys>
        <validIssuers>
          <add name="https://lefederateur.accesscontrol.windows.net/" />
        </validIssuers>
      </authority>
    </issuerNameRegistry>

The key elements here are the keys (a collection of thumbprints indicating which certificates should be used to check the signature of incoming tokens) and the validIssuers (a list of names that are considered acceptable values for the Issuer element of the incoming tokens, or its equivalent in non-SAML tokens).
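To make the role of the two lists concrete, here is a small conceptual model in JavaScript. It is not WIF's implementation; the function names and the token shape (signingThumbprint, issuer) are assumptions chosen for illustration. It shows that a token is accepted only when both its signing key thumbprint and its issuer name are listed for the authority.

```javascript
// Conceptual model of the <issuerNameRegistry> check; not WIF's actual code.
var crypto = require('crypto');

// A certificate thumbprint is the SHA-1 hash of the DER-encoded certificate,
// written as uppercase hex.
function thumbprintOf(derBytes) {
  return crypto.createHash('sha1').update(derBytes).digest('hex').toUpperCase();
}

// `authority` mirrors the config: { keys: [thumbprints], validIssuers: [names] }.
// `token` is a hypothetical shape: { signingThumbprint: string, issuer: string }.
function isTokenAccepted(authority, token) {
  var keyKnown =
    authority.keys.indexOf(token.signingThumbprint.toUpperCase()) !== -1;
  var issuerKnown = authority.validIssuers.indexOf(token.issuer) !== -1;
  return keyKnown && issuerKnown;
}

// Values taken from the configuration example above.
var authority = {
  keys: ['C1677FBE7BDD6B131745E900E3B6764B4895A226'],
  validIssuers: ['https://lefederateur.accesscontrol.windows.net/']
};
```

Under this model, a token signed with a key whose thumbprint is not in the keys list is rejected even when the issuer name matches, which is the situation an application ends up in after the authority rolls its key and the configuration has not been refreshed.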

That’s extremely handy (have you ever copied thumbprints by hand? I did, back in the day) however that’s not a license for forgetting about the issuer’s settings. What gets captured in config is just a snapshot of the current state of the authority, but there’s no guarantee that things won’t change in the future. In fact, it is actually good practice for an authority to occasionally roll keys over.

If a key rolls, and you don’t re-run the tool to update your config accordingly, your app will now actively refuse the tokens signed with the new key; your users will be locked out. Not good.

Dynamic Update of the Issuer Coordinates in Web.Config

WIF includes fairly comprehensive metadata manipulation API support, which you can use for setting up your own config auto-refresh; however it defaults to the in-box implementation of IssuerNameRegistry, ConfigBasedIssuerNameRegistry, and in this post I made clear that ValidatingIssuerNameRegistry has clear advantages over the in-box class. We didn’t want you to have to choose between easy config refresh and correct validation logic, hence we updated ValidatingIssuerNameRegistry to give you both.

In a nutshell, we added a new static method (WriteToConfig) which reads a metadata document and, if it detects changes, updates an <issuerNameRegistry> in the web.config to reflect what’s published in metadata. Super-easy!

I would suggest invoking that method every time your application starts: that happens pretty often if you use the IIS defaults, and it is a safe time to update the web.config without triggering unwanted recycles. For example, here is how your global.asax might look:

    using System;
    using System.Web.Http;
    using System.Web.Mvc;
    using System.Web.Optimization;
    using System.Web.Routing;
    using System.Configuration;
    using System.IdentityModel.Tokens;

    namespace MvcNewVINR
    {
        public class MvcApplication : System.Web.HttpApplication
        {
            protected void RefreshValidationSettings()
            {
                string configPath = AppDomain.CurrentDomain.BaseDirectory + "\\" + "Web.config";
                string metadataAddress = ConfigurationManager.AppSettings["ida:FederationMetadataLocation"];
                ValidatingIssuerNameRegistry.WriteToConfig(metadataAddress, configPath);
            }

            protected void Application_Start()
            {
                AreaRegistration.RegisterAllAreas();
                WebApiConfig.Register(GlobalConfiguration.Configuration);
                FilterConfig.RegisterGlobalFilters(GlobalFilters.Filters);
                RouteConfig.RegisterRoutes(RouteTable.Routes);
                BundleConfig.RegisterBundles(BundleTable.Bundles);
                RefreshValidationSettings();
            }
        }
    }

...and that’s it.
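The behavior described for WriteToConfig (read the published metadata, compare it with what is in config, write only when something changed) can be modeled with a short JavaScript sketch. This is an illustration of the idea under assumed data shapes, not the library's implementation; the function names and the { keys, validIssuers } objects are hypothetical.

```javascript
// Conceptual model of "update config only when the metadata changed".
// Not the WriteToConfig implementation.

// Order-insensitive comparison of two lists of strings.
function sameSet(a, b) {
  if (a.length !== b.length) { return false; }
  var sortedA = a.slice().sort();
  var sortedB = b.slice().sort();
  return sortedA.every(function (value, i) { return value === sortedB[i]; });
}

// `current` is what the config holds; `published` is what the metadata says.
// Both are shaped as { keys: [thumbprints], validIssuers: [names] }.
// Returns the authority to keep and whether a config write is needed.
function refreshAuthority(current, published) {
  var changed = !sameSet(current.keys, published.keys) ||
                !sameSet(current.validIssuers, published.validIssuers);
  return { changed: changed, authority: changed ? published : current };
}
```

Skipping the write when nothing changed matters in this setting because touching web.config is what triggers an application recycle.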

We also added another method, GetIssuingAuthority, which returns the authority coordinates it read without committing them to the config: this comes in handy when you have overridden ValidatingIssuerNameRegistry to use your custom logic, issuers repository, etc., and you want to write down the info in your own custom schema.

Self-healing of the issuer coordinates is a great feature, and I would recommend you consider it for all your apps: especially now that it’s really easy to set up.

This post is short by Vittorio’s (and my) standards!

Alan Smith continued his series with Website Authentication with Social Identity Providers and ACS Part 3 - Deploying the Relying Party Application to Windows Azure Websites on 4/1/2013:

Originally posted on: http://geekswithblogs.net/asmith/archive/2013/04/01/152568.aspx

In the third of the series looking at website authentication with social identity providers I’ll focus on deploying the relying party application to a Windows Azure Website. This will require making some configuration changes in the ACS management console and in the web.config file, and also changing the way that session cookies are created.

The other parts of this series are here:

- Website Authentication with Social Identity Providers and ACS Part 1

- Website Authentication with Social Identity Providers and ACS Part 2 – Integrating ACS with the Universal Profile Provider

The relying party website has now been developed and tested in a development environment. The next stage is to deploy the application to Windows Azure Websites so that users can access it over the internet. The use of the universal profile provider and a Windows Azure SQL Database as a store for the profile information means that no changes in the database or configuration will be required when migrating the application.

Creating a Windows Azure Website

The first step is to create a Windows Azure Website in the Azure management portal. The following screenshot shows the creation of a new website with the URL of relyingpartyapp.azurewebsites.net in the West Europe region.

Note that the URL of the website must be unique globally, so if you are working through this solution, you will probably have to choose a different URL.

Configuring a Relying Party Application

With the website created, the relying party information will have to be configured for Windows Azure Active Directory Access Control, formerly known as Windows Azure Access Control Service (ACS). This is because the relying party configuration is specific to the URL of the website.

One option here is to create a new relying party with the URL of the Windows Azure Website, which will allow the testing of the on-premises application as well as the Azure hosted website. This will require the existing identity providers and rules to be recreated and edited for the new application. A quicker option is to modify the existing configuration, which is what I will do here.

The existing relying party application is present in the relying party applications section of the ACS portal.

To change the configuration for the hosted application, the name, realm and return URL values will be changed to the URL of the Windows Azure Website (http://relyingpartyapp.azurewebsites.net/). The screenshot below shows these changes.

For consistency, the name of the rule group will also be changed appropriately.

With these changes made, ACS will now function for the application when it is hosted in Windows Azure Websites.

Configuring the Relying Party Application

For the relying party application website to integrate correctly with ACS, the URL for the website will need to be updated in two places in the web.config file. The following code highlights where the changes are made.

    <system.identityModel>
      <identityConfiguration>
        <claimsAuthenticationManager type="RelyingPartyApp.Code.CustomCam, RelyingPartyApp" />
        <certificateValidation certificateValidationMode="None" />
        <audienceUris>
          <add value="http://relyingpartyapp.azurewebsites.net/" />
        </audienceUris>
        <issuerNameRegistry type="System.IdentityModel.Tokens.ConfigurationBasedIssuerNameRegistry, System.IdentityModel, Version=4.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089">
          <trustedIssuers>
            <add thumbprint="B52D78084A4DF22E0215FE82113370023F7FCAC4" name="https://acsscenario.accesscontrol.windows.net/" />
          </trustedIssuers>
        </issuerNameRegistry>
      </identityConfiguration>
    </system.identityModel>
    <system.identityModel.services>
      <federationConfiguration>
        <cookieHandler requireSsl="false" />
        <wsFederation
          passiveRedirectEnabled="true"
          issuer="https://acsscenario.accesscontrol.windows.net/v2/wsfederation"
          realm="http://relyingpartyapp.azurewebsites.net/"
          requireHttps="false" />
      </federationConfiguration>
    </system.identityModel.services>
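Because the site URL has to match in both places (the audienceUris entry and the wsFederation realm), a small pre-deployment check can catch a value that was left pointing at the development URL. The following JavaScript is only a sketch: the function names are hypothetical, and the regular expressions assume a web.config laid out like the one above rather than handling arbitrary XML.

```javascript
// Rough pre-deployment check. Regular expressions keep the sketch free of
// dependencies; a real tool would use a proper XML parser.
function findSiteUrls(webConfigText) {
  // First <add value="..."> that follows the opening <audienceUris> tag.
  var audience =
    /<audienceUris>[\s\S]*?<add\s+value="([^"]*)"/.exec(webConfigText);
  // realm="..." attribute inside the <wsFederation ...> element.
  var realm = /<wsFederation\b[^>]*?\brealm="([^"]*)"/.exec(webConfigText);
  return {
    audienceUri: audience ? audience[1] : null,
    realm: realm ? realm[1] : null
  };
}

// Returns a list of settings that do not match the expected site URL.
function checkSiteUrls(webConfigText, expectedUrl) {
  var found = findSiteUrls(webConfigText);
  var problems = [];
  if (found.audienceUri !== expectedUrl) {
    problems.push('audienceUris: ' + found.audienceUri);
  }
  if (found.realm !== expectedUrl) {
    problems.push('realm: ' + found.realm);
  }
  return problems;
}
```

Calling checkSiteUrls with the file contents and http://relyingpartyapp.azurewebsites.net/ returns an empty list when both values have been updated.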

Deploying the Relying Party Application to Windows Azure Websites

The next stage is to deploy the relying party application to Windows Azure Websites. Clicking the download publish profile link will allow a publish profile for the website to be saved locally; this can then be used by Visual Studio to deploy the website to Windows Azure Websites.

Be aware that the publish profile contains the credential information required to deploy the website. This information is sensitive, so adequate precautions must be taken to ensure it stays confidential.

To publish the relying party application from Visual Studio, right-click on the RelyingPartyApp project, and select Publish.

Clicking the Import button will allow the publish profile that was downloaded from the Azure management portal to be selected.

When the publish profile is imported, the details will be shown in the dialog, and the website can be published.

After publication the browser will open, and the default page of the relying party application will be displayed.

Testing the Application

In order to verify that the application integrates correctly with ACS, the login functionality will be tested by clicking on the member’s page link and logging on with a Yahoo account. When this is done, the authentication process takes place successfully; however, when ACS routes the browser back to the relying party application with the security token, the following error is displayed.

Note that I have configured the website to turn off custom errors.

    <system.web>
      <customErrors mode="Off"/>
      <authorization>
        <!--<deny users="?" />-->
      </authorization>
      <authentication mode="None" />

The next section will explain why the error is occurring, and how the relying party application can be configured to resolve the error.

Configuring the Machine Key Session Security Token Handler

The default SessionSecurityTokenHandler used by WIF to create a secure cookie is not supported in Windows Azure Websites. The resolution for this is to configure the MachineKeySessionSecurityTokenHandler to be used instead. This is configured in the identityConfiguration section of the system.identityModel configuration for the website as shown below.

    <system.identityModel>
      <identityConfiguration>
        <securityTokenHandlers>
          <remove type="System.IdentityModel.Tokens.SessionSecurityTokenHandler, System.IdentityModel, Version=4.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089" />
          <add type="System.IdentityModel.Services.Tokens.MachineKeySessionSecurityTokenHandler, System.IdentityModel.Services, Version=4.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089" />
        </securityTokenHandlers>
        <claimsAuthenticationManager type="RelyingPartyApp.Code.CustomCam, RelyingPartyApp" />
        <certificateValidation certificateValidationMode="None" />
        <audienceUris>
          <add value="http://relyingpartyapp.azurewebsites.net/" />
        </audienceUris>
        <issuerNameRegistry type="System.IdentityModel.Tokens.ConfigurationBasedIssuerNameRegistry, System.IdentityModel, Version=4.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089">
          <trustedIssuers>
            <add thumbprint="B52D78084A4DF22E0215FE82113370023F7FCAC4" name="https://acsscenario.accesscontrol.windows.net/" />
          </trustedIssuers>
        </issuerNameRegistry>
      </identityConfiguration>
    </system.identityModel>

With those changes made, the website can be deployed, and the authentication functionality tested again.
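The general idea behind a machine-key based handler is that the session cookie is protected with key material taken from the application's configuration rather than from the individual server, so any instance holding the same key can validate it. The JavaScript sketch below illustrates only that idea with an HMAC signature. It is not how MachineKeySessionSecurityTokenHandler is implemented (the real handler also encrypts the cookie), and the function names and cookie format are assumptions.

```javascript
// Conceptual illustration of shared-key cookie protection (signing only).
var crypto = require('crypto');

// Appends an HMAC-SHA256 signature, computed with the shared key, to the payload.
function sign(payload, key) {
  var mac = crypto.createHmac('sha256', key).update(payload).digest('hex');
  return payload + '.' + mac;
}

// Returns the payload when the signature matches the key, otherwise null.
function verify(cookie, key) {
  var split = cookie.lastIndexOf('.');
  if (split === -1) { return null; }
  var payload = cookie.slice(0, split);
  var given = Buffer.from(cookie.slice(split + 1), 'hex');
  var expected = crypto.createHmac('sha256', key).update(payload).digest();
  if (given.length !== expected.length) { return null; }
  // Constant-time comparison avoids leaking how many bytes matched.
  return crypto.timingSafeEqual(given, expected) ? payload : null;
}
```

A cookie signed by one instance verifies on any other instance configured with the same key, while an instance holding a different key rejects it.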