Windows Azure and Cloud Computing Posts for 3/20/2010+

Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series.

• Updated 3/21/2010: A few Sunday articles.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database (SADB)

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock.)

Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated for the January 4, 2010 commercial release in February 2010.

Azure Blob, Table and Queue Services

Sreenivasa Ragavan explains Windows Azure – How to Use Table Storage in this illustrated tutorial of 3/19/2010:

I am going to create a Windows Azure application that uses Table Storage to store my to-do lists or tasks. Windows Azure Table Storage is semi-structured, queryable storage made up of collections of entities. Each entity has a primary key and a set of predefined properties.

Windows Azure Table service has two key properties: PartitionKey and the RowKey. These properties together form the table's primary key and uniquely identify each entity in the table. Every entity in the Table service also has a Timestamp system property, which allows the service to keep track of when an entity was last modified. This Timestamp field is intended for system use and should not be accessed by the application.

Table service does not enforce any schema for tables, which makes it possible for two entities in the same table to have different sets of properties.

Sreeni continues with step-by-step instructions for creating and deploying a to-do list to Windows Azure.
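The key structure described in the excerpt above can be sketched in a few lines of Python. This is only an in-memory illustration, not the Windows Azure storage client API: the `Entity` and `InMemoryTable` names, the sample keys and the property names are all assumptions made for the example. It shows the two points the excerpt makes: the PartitionKey and RowKey together identify an entity, and two entities in the same table may carry different sets of properties.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional, Tuple


@dataclass
class Entity:
    # PartitionKey + RowKey together act as the primary key.
    partition_key: str
    row_key: str
    # No schema is enforced: each entity carries its own property bag.
    properties: Dict[str, Any] = field(default_factory=dict)
    # Maintained by the table (hypothetical stand-in for the system Timestamp).
    timestamp: Optional[datetime] = None


class InMemoryTable:
    """Toy stand-in for a table; NOT the real storage service."""

    def __init__(self) -> None:
        self._rows: Dict[Tuple[str, str], Entity] = {}

    def insert(self, entity: Entity) -> None:
        key = (entity.partition_key, entity.row_key)
        if key in self._rows:
            raise KeyError(f"entity {key} already exists")
        entity.timestamp = datetime.now(timezone.utc)
        self._rows[key] = entity

    def get(self, partition_key: str, row_key: str) -> Entity:
        return self._rows[(partition_key, row_key)]

    def query_partition(self, partition_key: str) -> List[Entity]:
        return [e for (pk, _), e in self._rows.items() if pk == partition_key]


# Two tasks in the same partition with different property sets.
tasks = InMemoryTable()
tasks.insert(Entity("sreeni", "001", {"Title": "Write tutorial", "Done": False}))
tasks.insert(Entity("sreeni", "002", {"Title": "Deploy", "DueDate": "2010-03-31"}))
```

Using the user name as the partition key is just one plausible choice for a to-do list; it keeps each user's tasks together so they can be fetched with a single partition query.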

<Return to section navigation list>

SQL Azure Database (SADB, formerly SDS and SSDS)

Jas Sandhu reports Microsoft on Open Source, OData, the Web and the Cloud at OSBC on 3/19/2010:

Microsoft was once again at the Open Source Business Conference (OSBC) held at the Palace Hotel in San Francisco on March 17-18, 2010. As Platinum Sponsors, there was good presence by quite a few softies at the event, as attendees and delivering sessions.

Stuart McKee, Microsoft's National Technology Officer for the United States, delivered a keynote address to attendees titled "Open Source at Microsoft: Meeting customer, developer and partner needs through a diversified ecosystem". McKee talked about the opportunities for open source applications interoperating with Microsoft platforms. From Windows, to SharePoint to Azure, and how increased flexibility and choice for the consumers of these technologies is good for everyone involved. McKee shared how internally Microsoft is changing and responding to a call from customers who demand a diverse ecosystem that includes open source. McKee gave examples of software from Apache, the MySQL database, and PHP all running on Microsoft's Windows Azure cloud platform. [Emphasis added.]

Microsoft in recent years has been endorsing open source via efforts such as sponsoring the Apache Foundation. The Microsoft-backed CodePlex Foundation, meanwhile, was set up last year as an effort to enable collaboration between open source communities and software companies. “More than ever, we are continuing to improve interoperability with open source products and platforms in addition to working with customers looking to optimize their mixed IT environments. Interoperability is important not only for the business world, but also for state and local governments. That's because the business of government is really about outcomes, regardless of how solutions are created," McKee said.

Brian Goldfarb, the Director of Developer Platforms at Microsoft, participated on a panel titled "The Web Is the Platform," along with Dion Almaer from Palm and Dave Mcallister at Adobe. Mark Driver from Gartner moderated. It was an interesting discussion with most parties agreeing on the web as a platform that provides opportunity for companies to build business models, use different approaches and how open source plays a very strong part in that vision. Goldfarb shared how the Microsoft/web site for the Microsoft Web platform features 23 open source applications out of a total of 25 applications. They include software from popular open source companies such as Acquia Drupal, WordPress, Joomla, Umbraco and DotNetNuke. You can find them and more listed in the gallery.

It was also great to see the folks at Geeknet at the Bird-of-a-Feather (BOF) talking about how Open Source on Windows is steadily climbing. 82%, about 350,000 projects, are Windows compatible; that is not a small number, and it is fabulous news for those of us working with diverse languages and in mixed environments. These guys know something about the community considering they run sites like SourceForge, Slashdot, ThinkGeek, Ohloh, and freshmeat with over 40 million geeks visiting them. …

Strongly held positions do change at Microsoft.

Brian Prince claims NoSQL Database Movement Gains Ground as Alternative in this 3/18/2010 article for eWeek’s Database blog:

Recent announcements from Twitter and Digg.com underscore the growing awareness of NoSQL databases as an alternative to relational database management systems. But just what the future holds for NoSQL is an open question.

The buzz around the NoSQL movement in the past year has grown considerably, to the point where advocates organized a one-day conference in Boston just last week to discuss its future.

Recent announcements from Twitter and Digg.com supporting a NoSQL approach added fuel to this buzz, and while its ultimate growth among enterprises remains a subject of discussion, one thing is clear: the idea of a NoSQL database is starting to get a new level of consideration in the enterprise.

Made up of a variety of nonrelational data stores, the NoSQL movement spans open-source projects such as Cassandra, CouchDB and MongoDB, as well as products from companies like Mark Logic, which makes a native XML database. While the hype around the subject is relatively new, various NoSQL alternatives such as data grids, Google BigTable, Amazon Dynamo and other solutions are not, and have actually been around for years, noted Nati Shalom, CTO of GigaSpaces.

“As with many trends, it's probably a convergence of trends rather than something particular [that led to the rise of NoSQL],” Shalom said. “On the business side, social networking has changed quite significantly our Web experience, from read mostly Websites we're turning into heavy read/write sites like Twitter and Facebook with lots of content driven by users rather than the site provider. … Under that condition, lots of common practices such as static provisioning based on peak load and read mostly clusters started to break completely and forced a completely different way of doing things.” …

<Return to section navigation list>

AppFabric: Access Control and Service Bus

• Jason Follas continues his Azure AppFabric Access Control series with Windows Azure platform AppFabric Access Control: Obtaining Tokens of 3/22/2010:

In this, the third part of a series of articles examining Windows Azure platform AppFabric Access Control (Part 1, Part 2), I will continue developing the [somewhat contrived] nightclub web service scenario and demonstrate how to obtain a token from ACS that can be used to call into a Bartender web service.

A Quick Review

Our distributed nightclub system has four major components: A Customer application coupled with some external identity management system, a Bartender web service, and ACS, which acts as our nightclub’s Bouncer.

A Customer application ultimately represents a user that wishes to order a drink from the Bartender web service. The Bartender web service must not serve drinks to underage patrons, so it needs to know the user’s age (this is known as a “claim”). The nightclub has no interest in maintaining a comprehensive list of customers and their ages, so instead, a Bouncer ensures that the user’s claimed age comes from a trusted source (this is known as an “issuer”). If the claim’s source can be verified, the Bouncer will give the customer a token containing its own set of claims that the Bartender web service will recognize. In the end, the Bartender doesn’t have to concern itself with all of the possible issuers – it only needs to recognize a single token generated by the Bouncer (ACS).
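The Bouncer/Bartender flow in this review can be sketched as follows. This is a simplified illustration rather than the actual ACS protocol or token format: the issuer name, the shared key, the claim names and the function names are all hypothetical, and the HMAC-signed query string is merely one plausible way to let the Bartender recognize a token issued by the Bouncer.

```python
import hashlib
import hmac
from urllib.parse import parse_qsl, urlencode

# Hypothetical values, chosen only for this illustration.
TRUSTED_ISSUERS = {"state-dmv"}
BOUNCER_KEY = b"secret-shared-by-bouncer-and-bartender"


def _sign(payload: str) -> str:
    """Return a hex HMAC-SHA256 signature over the claim payload."""
    return hmac.new(BOUNCER_KEY, payload.encode("utf-8"), hashlib.sha256).hexdigest()


def bouncer_issue_token(issuer: str, age: int) -> str:
    """Check that the claim comes from a trusted issuer, then issue a signed token."""
    if issuer not in TRUSTED_ISSUERS:
        raise PermissionError(f"untrusted issuer: {issuer}")
    payload = urlencode({"Issuer": "bouncer", "Age": str(age)})
    return f"{payload}&Signature={_sign(payload)}"


def bartender_serve(token: str, minimum_age: int = 21) -> bool:
    """Serve only if the token was signed by the Bouncer and the age claim is high enough."""
    payload, sep, signature = token.rpartition("&Signature=")
    if not sep:
        return False  # no signature present
    expected = _sign(payload)
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return False  # token was altered or not issued by the Bouncer
    claims = dict(parse_qsl(payload))
    return int(claims.get("Age", "0")) >= minimum_age
```

In this sketch the Bartender never sees the original issuer; it only needs the key it shares with the Bouncer, which mirrors the point that it has to recognize a single token source.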

Jason’s previous articles in this series are:

- Windows Azure platform AppFabric Access Control: Introduction (3/8/2010)

- Windows Azure platform AppFabric Access Control: Service Namespace (3/17/2010)

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

• Jim Zimmerman, one of the developers of the Facebook Azure Toolkit, offers Deploying an Azure Project using TFS 2010:

… I am writing this on the plane now on the way to MIX 2010 where I am speaking on this topic. I am very excited to be able to speak at MIX. I am also tired since i am leaving SXSW. Awesome conference by the way! Anyway on with the post. :)

So, one of the biggest pains in any development is deployment. And when it comes to Azure, it is easy to deploy, but still is a very manual process. Also you need to deploy from your local Visual Studio which can be very problematic. For instance, let's say you publish your package locally, deploy to staging only to find out that another developer had just checked in a fix and you forgot to get latest before you deployed. Well since it can take sometimes 15 minutes to deploy to staging because of firing up multiple instances, this can become very painful to have to deploy again right after you just did because of one simple CSS change or any other change.

So after going through this pain more than once, actually more like 30+ times, I got off of my butt and figured out how to deploy to azure from TFS 2010. This was a lot of work actually to get to work. First thing you have to be able to do is build the cloud project on the server. After trying to change the registry, etc to get the Azure targets file to work, I decided just to install visual studio 2010 on the server along with the Azure SDK. This is the only way I knew that it would actually build.

So compiling the solution on the server is the first step. The second step is to actually package the solution so that it can be deployed to Azure. You have to do this by command line using cspack. This was also a little tricky as I had to figure out where the path was for the web and worker roles for the cspack to know what to package. Basically there is a folder called ProjectName.csx where your compiled app is placed when it is built. …

Jim continues with code and additional details.

You can download these scripts on our new Project on Codeplex that I worked with Microsoft on called the Facebook Azure Toolkit. Scott Densmore helped me create this awesome framework that makes it much easier to write apps for Azure. There are a lot of useful libraries and scripts in that project that have nothing to do with Facebook, but more for Azure. We are probably going to create another project on Codeplex that is just an Azure Toolkit. This build script will be more refactored in the future, but wanted to get it out now for those looking to do this now. More to come…

John McClelland interviews David Makogon in this 3/18/2010 Channel9 video:

With a couple of projects now under his belt, David Makogon, Senior Consultant at RDA and a Microsoft Solutions Advocate and Virtual Technical Specialist for Azure has the experience needed to help customers navigate the uncharted waters of cloud computing. In an interview with John McClelland, Partner Evangelist at Microsoft, David outlines the top 3 questions he hears from customers pondering a move to Microsoft’s Azure Services Platform. David shares his perspective regarding costs, security and readiness; building trust and mitigating risk in the cloud; and the benefits, pre- and post-deployment of a cloud solution.

MindTree’s PR group asserts “MindTree to Demonstrate a Success Story at the Forrester Infrastructure and Operations Forum in Dallas” in its MindTree to Showcase Benefits of Managing Infrastructure for the Cloud Utilizing the Windows Azure Platform press release of 3/17/2010:

MindTree Limited, a global IT Solutions Company, today announced that it will demonstrate the benefits of running and managing an enterprise infrastructure on the Windows Azure platform at the Forrester Infrastructure and Operations (I&O) Forum. The demonstration will specifically present on how to use cloud as the post-recession strategy for improving infrastructure efficiencies, driving down costs and increasing savings.

MindTree is a silver sponsor of the Forrester Infrastructure and Operations (I & O) Forum, to be held in Dallas, Texas, on March 17 and 18, 2010. MindTree, at booth # 206, will present its success in improving efficiencies in infrastructure management by using the Windows Azure platform.

"MindTree strongly believes that cloud computing will play a key role in the post-recession strategies of many companies. Cloud computing can help companies improve efficiencies, reduce significant upfront and maintenance costs, improve savings, enhance security and contribute to eco-friendly initiatives," said MindTree General Manager and Head of Managed Services, Raju Chellaton. "While cloud computing delivers what it promises, there are still a few questions that CIOs need to answer before following their peers. MindTree has leveraged its expertise in infrastructure management with Microsoft's leadership in cloud services to help IT leaders make informed decisions for their business."

<Return to section navigation list>

Windows Azure Infrastructure

Liam Eagle reports The New Face of Hosting, According to Microsoft’s John Zanni from Germany on 3/17/2010:

Wednesday’s third keynote session at WebhostingDay was “The New Face of Hosting,” presented by John Zanni, general manager for the worldwide software plus services industry at Microsoft’s communications sector.

He began by echoing the sentiment first presented a little earlier in the day by Serguei Beloussov of Parallels, that there is a big opportunity for SMBs and hosting providers in the cloud, with similar numbers, stating that small businesses make up about 95 percent of the businesses in any given country.

He also says that 52 percent of small businesses report that revenue has increased in the last 12 months, up from 39 percent last year. And 59 percent saw IT as critical to their success, up 20 points from 12 months ago. And 65 percent of the companies they interviewed used some type of hosted service, while 73 percent of those that don’t are considering buying one.

This all illustrates, he says, that small and medium sized companies are starting to grow again, and the ones that are growing faster see IT as critical, and those companies are looking at purchasing IT as a hosted service.

Zanni says SMBs looking to buy hosted IT services are looking for agility, reliability, accountability and security, all of which are components that together provide peace of mind, which means that the service provider becomes the “trusted advisor.” This sort of relationship, he says, is very sticky. A customer tends to stay very loyal to a service provider they view as a trusted advisor.

He shows a slide with data from Tier 1 research showing a range of hosted services arranged according to profit margin, revealing that the more complex the service the higher it tends to rank on the profit margin chart. …

Liam signs off with:

Concluding, he recommends that you take a look at how IT is sold, versus how hosting is sold. It’s not transactional. It is a solution.

He also emphasized the sea change at Microsoft, toward the cloud, a movement you can gain some understanding of by visiting the cloud section of the company’s website.

<Return to section navigation list>

Cloud Security and Governance

No significant articles today.

<Return to section navigation list>

Cloud Computing Events

• Eric Nelson has posted his Slides and links for Entity Framework 4 and Azure from Devweek 2010, according to this 3/21/2010 post:

Last week (March 2010) I presented on Entity Framework 4 and the Windows Azure Platform at www.devweek.com. As usual, it was a great conference and I caught up with lots of old friends and made some new ones along the way.

Entity Framework 4: Entity Framework 4 In Microsoft Visual Studio 2010

View more presentations from Eric Nelson.

Windows Azure and SQL Azure: Building An Application For Windows Azure And Sql Azure

View more presentations from Eric Nelson. …

• Jim O’Neill writes in his Win with WebsiteSpark – April 1st (No foolin’) post of 3/21/2010:

Microsoft and its hosting partner, Navisite, are sponsoring a free event on April 1st at Microsoft’s New England Research and Development (NERD) Center in Cambridge MA.

The event focuses on the WebsiteSpark program, through which development and design firms of 10 employees or less can get free development and design tools as well as Windows Web Server and SQL Server for a three-year period to get their business up and running [details here].

Chris and I will be among those presenting at the event, which includes training sessions on

- Benefits of utilizing hosting service

- Search Engine Optimization

- Web development and design tools

Register for this free event at http://navisiteandmicrosoft.eventbrite.com/

Pete Silva’s Lori MacVittie Interview at Cloud Connect post of 3/20/2010 describes his interview of a fellow F5 technical teamer:

I got a chance to sit down with another member of the Technical Marketing Team at F5, Lori MacVittie at the Cloud Connect conference in Santa Clara this week. We chat about Web 2.0, Infrastructure 2.0, dynamic networks, cloud interoperability standards, what 3.0 looks like and a few other things. Thanks Lori!

Pete’s post includes an embedded YouTube viewer for the interview.

Brenda Michelson’s David Linthicum’s Cloud Computing Podcast: Dave and Brenda talk Cloud Connect post of 3/20/2010 states:

On Friday, David Linthicum invited me on his cloud computing podcast to chat about what we heard, and didn’t hear, at the Cloud Connect conference. Naturally, our discussion wound its way to the connections of cloud computing, enterprise architecture, service-oriented architecture and data architecture.

Our podcast is Picking Apart Cloud Connect. Check it out.

I had the pleasure of meeting Brenda during the San Francisco Cloud Computing Club event on 3/16/2010.

Erik Meijer, Wes Dyer, Jeffrey van Gogh, and Bart De Smet delivered a Reactive Extensions for JavaScript presentation at MIX10:

Come hear how the Reactive Extensions ("Rx") framework takes care of the difficult parts of asynchronous programming by viewing asynchronous computations as push-based collections. Instead of focusing on the hard parts, developers now can start dreaming about the endless possibilities of orchestrating and synchronizing computations at a high-level of abstraction. In this session we cover the design philosophy of the new Reactive Extensions for JavaScript, rooted on the deep duality between the well-known iterator and the observer design patterns. From this core understanding, we start looking at various combinators and operators defined over observable collections, as provided by Rx, driving concepts home by a bunch of samples. Democratizing asynchronous programming starts today. Don't miss out on it!
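The idea of treating asynchronous computations as push-based collections can be sketched in a few lines. The example below is written in Python for illustration and is not the Reactive Extensions for JavaScript API: the `Observable` class, `from_iterable` helper and the `map`/`filter` combinators are minimal assumptions that only show the shape of the pattern, in which the source pushes values to an observer instead of the consumer pulling them from an iterator.

```python
from typing import Callable, Generic, Iterable, List, TypeVar

T = TypeVar("T")
U = TypeVar("U")


class Observable(Generic[T]):
    """Minimal push-based collection: the source calls the observer with each value."""

    def __init__(self, subscribe: Callable[[Callable[[T], None]], None]) -> None:
        self._subscribe = subscribe

    def subscribe(self, on_next: Callable[[T], None]) -> None:
        self._subscribe(on_next)

    def map(self, fn: Callable[[T], U]) -> "Observable[U]":
        # Each pushed value is transformed before being passed downstream.
        return Observable(lambda on_next: self.subscribe(lambda v: on_next(fn(v))))

    def filter(self, pred: Callable[[T], bool]) -> "Observable[T]":
        # Only values satisfying the predicate are passed downstream.
        def subscribe(on_next: Callable[[T], None]) -> None:
            self.subscribe(lambda v: on_next(v) if pred(v) else None)

        return Observable(subscribe)


def from_iterable(items: Iterable[T]) -> Observable[T]:
    """Turn a pull-based iterable into a push-based observable."""

    def subscribe(on_next: Callable[[T], None]) -> None:
        for item in items:
            on_next(item)

    return Observable(subscribe)


# Compose combinators, then subscribe an observer that collects the results.
received: List[int] = []
(
    from_iterable(range(6))
    .filter(lambda n: n % 2 == 0)
    .map(lambda n: n * 10)
    .subscribe(received.append)
)
print(received)  # [0, 20, 40]
```

A synchronous iterable is used as the source here to keep the sketch self-contained; the same combinators would apply unchanged to a source that pushes values later, such as a timer or event handler.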

Mike Swanson’s MIX10 Wrap Up post of 3/20/2010 does what its title claims, with an emphasis on problems caching archived videos:

Wow! What a week. The MIX10 event is over, and I hope that everyone made it home safely. It was great to meet everyone in-person, and I love that we referred to each other by our Twitter handles (I heard “Hi, Anyware” many times this week). I thought I’d provide some early data on the event that you might find interesting.

- The all-you-can-eat buffet of keynote and session video recordings were online for awhile, but the download demand was so high that our global CDN couldn’t cache the files. Unfortunately, we had to shut off the downloads to give the almost 70GB (!) of content time to make it to the edge of the network. When we re-enable downloads later today, the download experience should be much, much better. Sessions are provided as a high-quality WMV, a regular quality WMV, and as a MP4 file for mobile devices. For most of the sessions, the PowerPoint slides are also available.

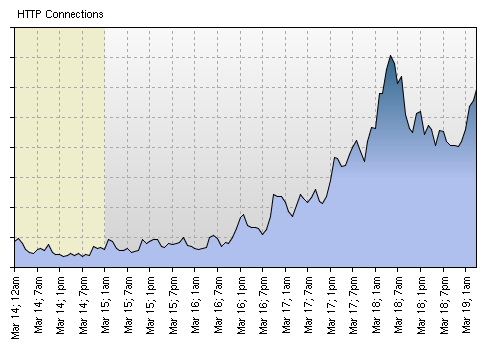

Here’s a graph that shows the spike in connections right up until we turned off the downloads. Realize that each of these connections was trying to grab 100s of megabytes of video data. …

Mike, who’s a Microsoft technical evangelist, continues with a list of downloading options and a list of the top 20 sessions ranked by “overall satisfaction.”

<Return to section navigation list>

Other Cloud Computing Platforms and Services

• Dana Gardner provides excerpts from his interview with two HP execs in a Burn the Silos and Jump Into the Data Pool article of 3/21/2010 for E-Commerce Times:

There's a realignment going on in data centers. Traditional technology silos are giving way to adaptive pools, shareable by any application. Let's put the benefits of converged infrastructure under the microscope and see how they can provide stepping stones to private cloud initiatives. Careful -- there are a lot of moving parts.

Improved data center productivity now appears to be a natural progression from converged infrastructure. Many enterprise data centers have embraced a shared service management model to some degree, and now converged infrastructure applies the shared service model more broadly to leverage modular system design and open standards, as well as to advance proven architectural frameworks.

The result is a realignment of traditional technology silos into adaptive pools that can be shared by any application, as well as optimized and managed as ongoing services. Under this model, resources are dynamically provisioned efficiently and automatically, gaining more business results productivity. This also helps rebalance IT spending away from a majority of spend on operations and more toward investments, innovations and business improvements.

This latest BriefingsDirect discussion explores the benefits of a converged infrastructure approach, and now how to better understand attaining a transformed data center environment. We'll see how converged infrastructure provides a stepping stone to private cloud initiatives. But as with any convergence, there are a lot of moving parts, including people, skills, processes, services, outsourcing options, and partner ecosystems.

We're here with two executives from HP (NYSE: HPQ) to delve deeply into converged infrastructure and to learn more about how to get started and deal with some of the complexity, as well as to know what to expect as payoff. Please welcome Doug Oathout, vice president, converged infrastructure at HP Storage, Servers and Networking; and John Bennett, worldwide director, Data Center Transformation Solutions at HP.

Dana Gardner is principal analyst at Interarbor Solutions.

Alex Williams analyzes The Virtualization Wars: Microsoft and Citrix v. VMware in this 3/20/2010 post:

Watch this battle unfold. The virtualization wars are just getting started.

On one side we have Microsoft, which announced changes in its licensing structures this week. The change reflects an understanding that the customer wants full access to its virtualization platform and not be charged a tax for that right to access it on a PC, no matter if it is at work or in their home.

And in true fashion, Microsoft is on the attack, Citrix at its side, in a full on fight with VMware for the virtualization market.

On the VMware side, we see a company ready to move into Microsoft's customer base by offering more than virtualization as witnessed with its recent acquisition of Zimbra. VMWare is gearing up to tap into the Microsoft Exchange market by combining its virtualization technology with the Zimbra email platform. …

Alex continues with a “Microsoft Offers Some Flexibility” section on licensing and related topics.

Mark Smith asserts HP Takes Technology Portfolio to the Clouds with New Growth Strategy in this 3/19/2010 post to the Ventana Research blog:

HP is a technology giant with annual revenue over $115 billion and an expanding technology portfolio that includes servers, storage, printers, computers and software; it also offers a broad range of outsourcing and consulting services. I attended the company’s 2010 global analyst summit in Boston to get an update from executives on its enterprise business portfolio of technology and services. The event was kicked off by Ann Livermore, executive vice president for enterprise business, a long-time leader in HP. HP has amassed a sizable portfolio of acquisitions, including EDS in 2008, and its pending deal to acquire 3Com will pair it up competitively against Cisco Systems. Now HP has intentions of being a player in cloud computing by providing technology that can easily be utilized virtually across the planet. It appears well poised for this task unless the likes of IBM can challenge HP directly.

HP’s multiyear strategy to lead enterprise computing in products, software and services is based on the convergence of technology infrastructure. HP is positioned to meet the next generation of corporate computing through what I call “computing as a service,” in which organizations can rely on HP to meet a range of needs without having to purchase and maintain in-house technology in their data centers. For this new generation of cloud computing solutions and services, HP has found customers in Verizon and government agencies such as the Defense Information Systems Agency, among others. This approach enables HP to provide its own technology to virtualize computing resources, and it has all the necessary technology, including management and automation software to support the process. HP also sees this as a unique opportunity to leverage its services organizations to develop and deploy new applications and services to operate within the cloud. It is still working out the mechanics of provisioning its computing power for its clients so they can scale their operations efficiently. HP also finds a significant business opportunity in application transformation and migration services when companies want to hand off hosting and management to service providers. …

Mark, who’s CEO & EVP Research for Ventana Research, continues his analysis and concludes:

The 2010 HP analyst summit was full of great insights about its current and future business. We sometimes underrate its role in the realm of technology innovation, which is a misperception, because it has taken steps to monetize every aspect of its technology from printers to services to software. There are some areas where it is leaving the field to IBM, Oracle and SAP to compete, for instance enterprise applications and middleware, but instead seeks to be the provider of the underlying computing platform such software requires. I would have liked to hear from CEO Mark Hurd, who is always up front and personal in his appearances, but I guess he does have a lot to do in managing a $100 billion plus business with over 300k employees. As this technology giant continues to march forward, I hope to see someone take its software business to the next level and be more evidently competitive against the other major providers in the market. HP definitely has plenty of upside as it strives to dominate its markets around the world.

Not to mention the recent HP-Microsoft agreement: Private Azure Clouds Float the $250 Million HP/Microsoft Agreement of 1/13/2010.

Sam Ramji presented Building Apigee for Multiple Clouds to Shlomo Swidler's panel "Writing Code for Many Clouds" at CloudConnect 2010:

At Sonoa, we have an enterprise product which we turned into a service called Apigee. From the first, we needed to move beyond just being packaged as a VM and “deployable anywhere” to really living in the cloud.

This is what we’ve learned so far – some of which we anticipated and some of which we reacted to. Build, deploy, and manage – of the three basic parts of running a service only deploy and manage really change. The big difference is in operationalization of the system.

Most recently we realized that we needed to be HA across providers and get total control of our latency, so we are building a new datacenter on Rackspace as well. This is a work in progress so I’ll be reporting from the front lines.

Finally, we’ve helped implement a multi-cloud architecture for ING which has taught us something about where multi-cloud services may be headed.

Sam discusses issues managing “The first cloud: EC2” and “The second cloud: Rackspace” and then asks “Is there an easier way?” …

For an infrastructure play like Apigee, we don’t think so. Given our customer promise of near-zero and predictable latency we need as much control as possible. For an application-level service play though, we think some parts can be easier. We’re built on Sonoa technology that manages all of our cloud API traffic processing, as is ING, a financial service company that’s moving to cloud. Their challenge is elasticity in financial modeling – specifically the Monte Carlo simulation workload which is compute-intensive and highly intermittent in use of resource. When you’re running the simulation, you need all the compute resource you can get. When you’re not running a simulation, you need almost none. …

Rajkumar Buyya, Rajiv Ranjan and Rodrigo N. Calheiros are co-authors of an InterCloud: Utility-Oriented Federation of Cloud Computing Environments for Scaling of Application Services whitepaper, which offers the following abstract:

Cloud computing providers have setup several data centers at different geographical locations over the Internet in order to optimally serve needs of their customers around the world. However, existing systems do not support mechanisms and policies for dynamically coordinating load distribution among different Cloud-based data centers in order to determine optimal location for hosting application services to achieve reasonable QoS levels. Further, the Cloud computing providers are unable to predict geographic distribution of users consuming their services, hence the load coordination must happen automatically, and distribution of services must change in response to changes in the load. To counter this problem, we advocate creation of federated Cloud computing environment (InterCloud) that facilitates just-in-time, opportunistic, and scalable provisioning of application services, consistently achieving QoS targets under variable workload, resource and network conditions. The overall goal is to create a computing environment that supports dynamic expansion or contraction of capabilities (VMs, services, storage, and database) for handling sudden variations in service demands.

This paper presents vision, challenges, and architectural elements of InterCloud for utility-oriented federation of Cloud computing environments. The proposed InterCloud environment supports scaling of applications across multiple vendor clouds. We have validated our approach by conducting a set of rigorous performance evaluation study using the CloudSim toolkit. The results demonstrate that federated Cloud computing model has immense potential as it offers significant performance gains as regards to response time and cost saving under dynamic workload scenarios. …

<Return to section navigation list>