Windows Azure and Cloud Computing Posts for 3/3/2010+

Windows Azure, SQL Azure Database and related cloud computing topics now appear in this weekly series.

Update 3/4/2010: Added a link to Jay Fry’s Two cloud computing Rorschach tests: 'legacy clouds' and the lock-in lesson post of 11/11/2009 about a November, 2009 San Francisco Cloud Computing Club meeting to the Windows Azure Infrastructure section.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database (SADB)

- AppFabric: Access Control and Service Bus

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services


Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon or Barnes & Noble (in stock).


Read the detailed TOC here (PDF) and download the sample code here.

Discuss the book on its WROX P2P Forum.

See a short-form TOC, get links to live Azure sample projects, and read a detailed TOC of electronic-only chapters 12 and 13 here.

Wrox’s Web site manager posted on 9/29/2009 a lengthy excerpt from Chapter 4, “Scaling Azure Table and Blob Storage” here.

You can now download and save the following two online-only chapters in Microsoft Office Word 2003 *.doc format by FTP:

- Chapter 12: “Managing SQL Azure Accounts and Databases”

- Chapter 13: “Exploiting SQL Azure Database's Relational Features”

HTTP downloads of the two chapters are available from the book's Code Download page; these chapters will be updated in February 2010 to reflect the January 4, 2010 commercial release.

Azure Blob, Table and Queue Services

Learning Tree International offers Windows Azure Storage – Part 1, a brief tutorial about Azure blob storage in this 3/3/2010 post:

Software applications often need to store data, and applications written for Windows Azure are no exception. Windows Azure provides three types of storage: queues, blobs and tables. As we have already seen something about queues in a prior post, we now turn our attention to blobs and tables. Part 1 will cover blobs and Part 2 will cover tables.

Azure storage is based on REST. All storage objects are available through standard HTTP calls to a named URI. In practice, for .NET developers, though, it is probably easiest to just use the StorageClient assembly included as of version 1.1 of the Azure SDK.
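To make the REST point concrete, here is a minimal Python sketch, using only the standard library, that builds a blob's URI and prepares an anonymous HTTP GET for it. It assumes the public `<account>.blob.core.windows.net` endpoint; the account, container and blob names are hypothetical placeholders. An anonymous GET succeeds only for a container with public read access; private containers require a signed Shared Key `Authorization` header, which this sketch doesn't attempt.

```python
from urllib.parse import quote
from urllib.request import Request

BLOB_HOST = "blob.core.windows.net"  # public blob endpoint suffix


def blob_uri(account: str, container: str, blob: str, use_https: bool = True) -> str:
    """Build <scheme>://<account>.blob.core.windows.net/<container>/<blob>."""
    scheme = "https" if use_https else "http"
    # Percent-encode the blob name but keep '/' so "virtual directory" names survive.
    return f"{scheme}://{account}.{BLOB_HOST}/{container}/{quote(blob, safe='/')}"


def get_blob_request(account: str, container: str, blob: str) -> Request:
    """Prepare (but do not send) an anonymous GET for a blob in a public container."""
    return Request(blob_uri(account, container, blob), method="GET")


# Hypothetical account, container and blob names.
req = get_blob_request("myaccount", "photos", "mars/crater 01.jpg")
print(req.get_method(), req.full_url)
```

Passing the prepared request to `urllib.request.urlopen` would actually fetch the blob; the StorageClient assembly wraps the same HTTP calls (plus request signing) for .NET code.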

Learning Tree also has posted the following introductory tutorials to promote their Windows Azure Platform Introduction: Programming Cloud-Based Applications. Other recent Azure-related posts are:

Saurabh Vartak shows you how to Create a Windows Azure Storage service to store the blobs in this 3/3/2010 post:

This article describes what Windows Azure Blobs are, how to create and display the same on our local environment and lastly how to create a Windows Azure Storage service to store the blobs on the cloud.

The article explains all of the above using a very simple web application, which will be uploading the files (blobs) to the storage. The web application will also be retrieving the URIs of the blobs which are already uploaded to the storage.
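Retrieving the URIs of blobs that have already been uploaded corresponds to the Blob service's List Blobs operation, a GET on the container URI with the query string `restype=container&comp=list` (this assumes the 2009-09-19 or later version of the REST API; earlier versions used a slightly different query string). The Python sketch below builds that request URI and turns the `<Name>` elements of a listing into blob URIs. The sample XML is a hypothetical, trimmed-down response body: real responses also carry blob properties and a `NextMarker` element for paging, and listing a private container requires an authenticated request.

```python
import xml.etree.ElementTree as ET
from typing import List
from urllib.parse import quote


def list_blobs_url(account: str, container: str) -> str:
    """URI for the List Blobs operation on one container."""
    return f"https://{account}.blob.core.windows.net/{container}?restype=container&comp=list"


def blob_uris_from_listing(account: str, container: str, listing_xml: str) -> List[str]:
    """Build a blob URI from each <Name> element in a List Blobs response body."""
    root = ET.fromstring(listing_xml)  # root element is <EnumerationResults>
    base = f"https://{account}.blob.core.windows.net/{container}/"
    return [base + quote(name.text, safe="/") for name in root.iterfind("./Blobs/Blob/Name")]


# Hypothetical, trimmed-down response body for a container named "uploads".
SAMPLE_LISTING = """<EnumerationResults ContainerName="https://myaccount.blob.core.windows.net/uploads">
  <Blobs>
    <Blob><Name>report.pdf</Name></Blob>
    <Blob><Name>images/logo.png</Name></Blob>
  </Blobs>
  <NextMarker />
</EnumerationResults>"""

print(list_blobs_url("myaccount", "uploads"))
for uri in blob_uris_from_listing("myaccount", "uploads", SAMPLE_LISTING):
    print(uri)
```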


SQL Azure Database (SADB, formerly SDS and SSDS)

No significant articles today.


AppFabric: Access Control and Service Bus

Warwick Ashford reports RSA 2010: identity management key to cloud security, says Microsoft’s Scott Charney in this 3/3/2010 story for ComputerWeekly.com:

Identity is important on the internet, but this is amplified in the cloud, says Scott Charney, corporate vice-president of Microsoft's Trustworthy Computing Group.

"Identity and privacy will be key to cloud computing," he told the RSA Conference 2010 in San Francisco.

It is critical to produce technologies that better allow organisations to manage identity, he said, to provide more secure and private access to both onsite and cloud applications.

As part of efforts to enable a more trusted internet, Charney announced Microsoft's release of a preview of Microsoft's U-Prove technology.

U-Prove is designed to enable online providers to better protect privacy and enhance security through the minimal disclosure of information in online transactions.

"To encourage broad community evaluation and input, Microsoft is providing core portions of the U-Prove intellectual property under the Open Specification Promise," said Charney.

Announcing that Microsoft has made available open source software development kits in C# and Java, he challenged developers to create software to help protect individual privacy.

Charney said Microsoft is working with the Fraunhofer Institute to integrate U-Prove and other Microsoft technologies with the German Government's planned electronic identity cards.

According to Charney, the project envisages enabling [German] citizens to use their electronic identity cards as the basis for accessing online services with minimal disclosure of information. …


Live Windows Azure Apps, APIs, Tools and Test Harnesses

My February 2010 Uptime Reports for OakLeaf Azure Test Harness Running in the South Central US Data Center post of 3/3/2010 reports:

For the first time, Mon.itor.us reported 100% uptime for the live OakLeaf Systems Azure Table Services Sample Project during an entire month, albeit a short month.

The post includes screen captures of Mon.itor.us and Pingdom uptime summaries for the month of February and links to three earlier monthly uptime reports.
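Monthly uptime percentages like these are simple arithmetic over the minutes in the reporting month. The following Python sketch shows the calculation; the 10-minute outage is a hypothetical figure, not one taken from the February report. It also shows why the "short month" caveat matters: February 2010's 28 days contain 40,320 minutes, versus 44,640 in a 31-day month, so the same outage costs slightly more uptime percentage in February.

```python
import calendar


def minutes_in_month(year: int, month: int) -> int:
    """Total minutes in a calendar month; monthrange returns (first weekday, days)."""
    _, days = calendar.monthrange(year, month)
    return days * 24 * 60


def uptime_percent(downtime_minutes: float, year: int, month: int) -> float:
    """Percentage of the month during which the monitored site was reachable."""
    total = minutes_in_month(year, month)
    if not 0 <= downtime_minutes <= total:
        raise ValueError("downtime must be between 0 and the minutes in the month")
    return 100.0 * (total - downtime_minutes) / total


print(minutes_in_month(2010, 2))               # 40320
print(uptime_percent(0, 2010, 2))              # 100.0
print(round(uptime_percent(10, 2010, 2), 3))   # 99.975 (hypothetical 10-minute outage)
```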

The Windows Azure Team reports NASA and Microsoft Issue Pathfinder Innovation Challenge to Help Classify Mars Images in this 3/2/2010 post:

At PDC last fall, NASA and Microsoft made a number of announcements, including new data APIs in Codename Dallas and a Silverlight+ASP.NET MVC 2 site on the Windows Azure platform. Did you know that we also launched a programming competition with NASA to help classify Mars images?

Named after the first mission from Earth to send a rover to the surface of another planet, the goal of the Pathfinder Innovation Challenge is to find .NET developers who can help NASA classify the hundreds of thousands of images collected from the Spirit and Opportunity Mars Rover expeditions. This challenge provides developers with a unique opportunity to leverage their coding skills to help NASA, while also offering a chance to win some great prizes ranging from NASA swag and ZuneHDs to a trip to see the next Mars Rover launch.

The competition consists of four different Leagues; two of them, the Global Cooperation League and the Intelligence League, are most relevant to .NET developers. The Global Cooperation League uses Silverlight and NASA's Windows Azure-hosted APIs in Codename Dallas to build casual games designed to allow regular citizens to help classify images. Check out the counting craters application, Help Map Mars, to see an example. The Intelligence League uses the power of the Windows Azure platform to create automated and efficient image-processing applications that can rapidly detect and label interesting objects within the Mars Expedition Rover images.

Full details about the competition can be found on the Pathfinder Innovation Challenge website. Be sure to check out the Learn How-Videos and Key Downloads page for additional information, official entry form and directions to acquire a token for accessing NASA APIs in Codename Dallas.


Windows Azure Infrastructure

Lori MacVittie claims “Microsoft Dynamic Infrastructure Toolkit for System Center (DIT-SC) is hopping forward, literally, into the network. With or without established standards, this dog is going to hunt” in her Microsoft Hops Into Infrastructure 2.0 post of 3/3/2010:

It takes time to develop standards, something we often overlook. When the foundational standards upon which the Internet was built were being developed, there were (almost) no users, no broadband, and no real urgency to get something available. The adoption of disruptive, highly volatile technologies such as virtualization and cloud computing results in an environment in which today’s standards groups are not afforded the luxury of time. Organizations want, nay, need standards now, and if they aren’t forthcoming, vendors and customers alike will move steadily forward with their own implementations.

The myriad “cloud APIs” submitted to various standards organizations indicate this pattern of behavior has already begun and will continue until the dust settles and one (and hopefully only one) API comes out on top. Microsoft may have come “late” to the cloud computing table, but it’s certainly making up time by moving forward with its Dynamic Infrastructure Toolkit for System Center.

The Dynamic Infrastructure Toolkit for System Center is a free, partner-extensible toolkit that will enable datacenters to dynamically pool, allocate, and manage resources to enable IT as a service. Whether you’re an enterprise customer, a systems integrator, or an independent software vendor, the toolkit will help you create agile, virtualized IT infrastructures.

What’s a bit different about Microsoft’s Dynamic Infrastructure Toolkit for System Center (DIT-SC) is that it’s not focusing on standardizing the interface to the cloud, a la Yet Another Cloud API, but rather it’s focused inward, on operations, much in the same way the cloud API of Yahoo! is highly focused on internal rather than external operations.

Bill Zack reviews A Pragmatic Hybrid Approach to Cloud Computing white paper in this 3/3/2010 post to the Innov8Showcase blog:

The paper on Cloud Computing that is referenced in this article (“Outlook: Partly Cloudy with Sunny Spells to Follow”) is one of the best treatments of the subject of Public, Private and Hybrid clouds that I have ever read.

It was written [for] and delivered at a Cloud Computing event in the UK.

“Last week over 120 people came to the UK's most important cloud computing event. Attendees were eager to find out about the realities of cloud computing and how to get the most from this latest IT focus, and key contributors Microsoft and Dot Net Solutions presented a new white paper.

With constant pressure to manage IT costs, cloud solutions offer a new way to provide cost effective IT solutions, but without having to transfer all of your technology over to the cloud in one major leap.

Matt Deacon, Chief Architectural Advisor at Microsoft, and Dan Scarfe, CEO of Dot Net Solutions, outlined a pragmatic hybrid approach to cloud computing. They explained how customers can combine cloud solutions with their existing applications and infrastructure to achieve IT cost savings, flexibility and the ability to bring new ideas to market rapidly and effectively.”

IM[NS]HO, everyone should read it.

Matthew Broersma’s Cray and Microsoft join forces on cloud datacentres post of 3/3/2010 reports:

Supercomputer maker Cray's custom engineering group has teamed up with Microsoft Research to look into lowering the costs of running cloud-computing datacentres.

The initiative will make use of the HPC specialist's intellectual property in system design, including high-density packaging, Cray said in its announcement on Tuesday. The partners will also work on efficient power delivery and cooling innovations, with the aim of reducing facility, power and hardware costs.

"The results of the project have the potential to deliver significant cost savings for operating a cloud-computing datacentre," said Cray vice president of custom engineering, Chuck Morreale, in a statement.

The alliance is the first step into the commercial market for Cray's custom engineering group, which was formed in 2008. The group builds special-purpose supercomputers for individual customers, applying Cray technologies such as the EcoPhlex liquid-cooling system and providing custom data-management and consulting systems. Last summer, Cray said the custom engineering division was growing more quickly than the rest of the company …

See the University of Washington’s Computer Science & Engineering School Microsoft’s Steve Ballmer at UW, Thursday March 4 post of 3/2/2010 in the Cloud Computing Events section.

Juniper Research reports More than 130 million Enterprise Users in the Mobile Cloud by 2014 in this 3/3/2010 post:

Juniper Research, a Media Partner of the Cloud Computing Congress, reports on new mobile cloud computing findings.

As collaborative applications become ever more important for enterprise, a new report from Juniper Research has found that the number of enterprise customers using mobile cloud-based applications will rise to more than 130 million by 2014, facilitated by platform as a service (PaaS) deployments from key players such as Google and Microsoft.

The report found that the market for connected enterprise apps had benefitted from the success of Apple’s iPhone and App Store. It said that this was reflected both by a marked increase in the number of enterprise apps available to end-users and by the fact that such apps were themselves becoming far more attractive, given the wide-ranging enhancements to smartphone user interfaces in the wake of the iPhone launch.

Furthermore, Juniper’s Mobile Cloud Applications research found that as cloud providers were increasingly opening up their APIs for application providers seeking to develop browser-based or thin client applications, this provided greater opportunity and incentive for developers seeking to reduce the costs associated with porting apps across multiple platforms.

According to report author Dr Windsor Holden, “A cloud-based ecosystem for enterprise apps will be attractive both for developers and enterprises alike. For developers, cloud opens up a far wider potential audience for their products; for enterprise customers, outsourcing application management to a remote third-party, costed on a scalable, pay-per-use basis, offers far more flexibility combined with a significant reduction in capital expenditure.” …

Alex Williams analyzes the Juniper cloud research in his How Many Enterprise Workers Will Work in the Mobile Cloud? Try 130 Million post of 3/3/2010:

Juniper Research has come out with more analysis that shows just how big the mobile enterprise market will be for cloud computing.

According to Juniper, the advent of collaboration tools will mean that 130 million enterprise workers will use the mobile cloud by 2014.

The steep climb will be in part due to the Platform-as-a-Service (PaaS) offerings from companies like Google and Microsoft. [Emphasis added.]

The rise is already evident as more people adopt smartphones. In particular, Juniper points to the iPhone and the App Store as catalysts for the growth. People are using their iPhones to run a variety of applications for the work they do. The report also cites the iPhone user interface and how it has spurred adoption and led to a higher level of quality in smartphones across the wider market.

The report also cites how cloud computing providers have opened up their APIs, which in turn has attracted waves of developers. The benefits to developers and customers are cited as reasons why we will see the surge. Developers can easily deploy applications to the cloud, and the greater variety of offerings drives a larger customer base.

Jay Fry recommends Going rogue, cloud computing-style: what you can learn by going around IT, as well as the San Francisco Cloud Computing Club in this 3/2/2010 post:

I have to say, the San Francisco Cloud [Computing] Club (#sfcloudclub on Twitter) is a great place to hear good ideas get batted around.

For the uninitiated, the group is a bunch of cloud computing experts, thinkers, and doers from around the Bay Area who occasionally give up an evening for a good discussion/argument or two about what’s happening in this market. (I wrote up one of the previous lively, cloud-filled conversations this group hosted in an earlier post: “Two cloud computing Rorschach tests: ‘legacy clouds’ and the lock-in lesson” for those who want to get a taste for the group’s content.)

As the group is getting ready for an expanded meeting of the minds connected with the upcoming Cloud Connect conference in Santa Clara (March 15-18), I realized that I’m still mulling over an idea or two about the psychology of cloud computing adoption brought up during the group’s MLK Day get-together in January.

Big companies will adopt cloud computing very conservatively, right?

Back at that meeting, we spent a bit of time on how companies are actually adopting cloud computing. The difficulty that big companies currently have with the public cloud has been covered extensively. It’s worrisome for them, thanks to things we’ve all heard about, like security, performance and reliability, and lock-in worries. At Cloud Club, we discussed how many orgs, as a result, are (officially, anyway) saying that they want to work on private clouds instead.

But most interestingly, we also discussed the truly amazing tendency that people in even the most conservative IT organizations have to, well, “go rogue.”

In some of the most locked-down IT environments, folks try innovative stuff on their own, even when it doesn’t meet all of their strict requirements. Why? To help them get their job done in a much better or faster way. …

Jay continues with analysis of the benefits of “going rogue” with cloud computing.

Update 3/4/2010: Jay Fry’s Two cloud computing Rorschach tests: 'legacy clouds' and the lock-in lesson post of 11/11/2009 chronicles a November, 2009 San Francisco Cloud Computing Club meeting:

This week's San Francisco Cloud Computing Club gathering was a great place to meet some of the movers and shakers in the cloud computing market (or at least the ones within a short drive of San Francisco). The event's concept was to spend some quality time talking through cloud computing issues with a crowd of people who spend all day thinking about the cloud and working on making it a reality.

As James Urquhart (CNET blogger from Cisco) guided the conversation, I heard two interesting points of contention that divided the room pretty dramatically. It struck me that each comment could actually serve as a Rorschach test of a person's view of how powerful and important the concept of cloud computing actually is.

One question was about where IT has been; the other helps define where we might be able to go. Both say a lot about the approach to cloud computing that you're likely to take.

- Is cloud computing compelling enough to change how IT runs existing apps? …

- Has the industry learned its lesson about lock-in? …

So, my point in highlighting this subset of the Cloud Club conversations was this: keep an eye on these questions. They are some of the more interesting things playing out in the cloud computing space.

And, for as much fun as it was to spend several hours in good-natured arguments over these and other topics in a penthouse in San Francisco, none of the answers will appear magically, nor overnight. We leave that to time, actual customers, and the market to decide.

David F. Carr asserts “Cloud computing sets some businesses free. But it also locks them in” in his Happily Locked Into The Cloud article of 3/2/2010 for Forbes Magazine:

As CEO of Vetrazzo, a Richmond, Calif., firm that makes chic high-end building materials out of recycled glass, James Sheppard has embraced cloud computing. The applications he uses to run his business are accessed--and even programmed and designed--over the Internet.

Vetrazzo built a custom application that makes use of the servers and software provided by Salesforce.com. These programs provide many of the services familiar to the thousands of other Salesforce.com customers. But Vetrazzo went beyond customization of what Salesforce.com provides. Instead of purchasing an enterprise resource planning system from an SAP or an Oracle, Vetrazzo essentially created its own, built with Salesforce.com's programming tools.

Sheppard, a former enterprise software salesman, began running his business on the application a year ago and says it has set him free. The system administrators at Salesforce.com are the ones who have to worry about most of the technical details of keeping the system reliable, secure and available.

But that freedom has come with a trade-off, in the form of vendor lock-in. Sheppard's core business application is running on a proprietary platform created by Salesforce.com and available only to its customers. That means, for example, that Sheppard would not be able to transfer his application to another data center. …

David continues with his analysis of the cloud’s vendor lock-in threat.

Joe Weinman promises “At the upcoming Cloud Connect conference, we will evaluate a variety of scenarios that determine where and when the move to cloud computing makes financial sense” in his Time To Do The Math On Cloud Computing article of 3/2/2010 for InformationWeek:

In two weeks, I'll be in Santa Clara at the industry's newest event, Cloud Connect. I'm chairing a track focused on Cloudonomics, a term I coined a couple of years ago to connote the complex economics of using cloud infrastructure, platform, and software services. There are a number of great technical tracks planned, but mine is focused on what I consider to be the most important question: "Why do cloud?"

This is the question that any business-focused CIO must ask. After all, CIOs have a small number of projects that they can really focus on in any given year, and major initiatives must have a compelling rationale or they won't get supported by senior leadership, including the board. The technology will only be important if the business value is clear and compelling.


In practice, there are a number of reasons to leverage the cloud. One is agility: Resources and services that are immediately available for on-demand use clearly enhance agility over months-long engineering, procurement, and installation efforts. This can help to introduce new services, test new code, enter new markets, or meet unexpected sales spikes. Another is user experience. Larger cloud providers' globally dispersed footprint can bring highly interactive processing closer to the end user.

But perhaps topping the list is total cost reduction. According to a recent Yankee Group report, 43% of enterprises cite cost control as a rationale for interest in the cloud. So, how exactly should we think about cloud costs? That's the topic of my track. …
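One way to see why pay-per-use can cut total cost even when each unit of cloud capacity costs more is a deliberately simplified model, sketched below in Python. It is an illustration under stated assumptions, not the analysis from Joe's track: owned capacity must be sized for peak demand and is paid for in every period whether used or not, while cloud capacity is billed only for actual usage at a `premium` multiple of the owned unit cost. Under those assumptions, the cloud is cheaper exactly when the premium is less than the peak-to-average demand ratio. The demand figures are hypothetical.

```python
from typing import Sequence


def owned_cost(demand: Sequence[float], unit_cost: float) -> float:
    """Dedicated capacity sized for peak demand, paid for in every period."""
    return max(demand) * unit_cost * len(demand)


def cloud_cost(demand: Sequence[float], unit_cost: float, premium: float) -> float:
    """Pay-per-use: pay only for capacity actually used, at premium x unit cost."""
    return sum(demand) * unit_cost * premium


def cloud_is_cheaper(demand: Sequence[float], premium: float) -> bool:
    """Equivalent, under this model, to premium < peak-to-average ratio."""
    peak = max(demand)
    average = sum(demand) / len(demand)
    return premium < peak / average


# Hypothetical hourly server demand with a short spike (peak 10, average 4).
hourly_demand = [2, 2, 2, 10, 10, 2, 2, 2]
print(owned_cost(hourly_demand, 1.0))        # 80.0
print(cloud_cost(hourly_demand, 1.0, 2.0))   # 64.0
print(cloud_is_cheaper(hourly_demand, 2.0))  # True, since 2.0 < 10 / 4
```

The model ignores data-transfer charges, migration effort and the value of agility, so it captures only the utilization side of the question.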

Phil Wainwright makes the case for private clouds in his Cloud, it’s a web thing post of 3/2/2010 to the Enterprise Irregulars blog, which replies to Randy Bias’ Debunking the “No Such Thing as a Private Cloud” Myth essay:

Having read (hat-tip Dennis Howlett) Randy Bias’ article at Kendallsquare on Debunking the “No Such Thing as a Private Cloud” Myth I have to say — rather like the apocryphal Irish direction-giver — if I’d wanted to make a case for private cloud, I wouldn’t have started from there. Randy and I joined a civilized conversation a few weeks back as a follow-up to my earlier post on this topic, and I fear he’s already forgotten every dam’ thing I said. So I guess I’ll have to reiterate it.

But first, let me (shock, horror!) make the case, such as it is, for private cloud. It looks like we’re stuck with the term, along with all the ugly implementations that are going to be classed under it and which I fear will ultimately lead to its becoming discredited — unfairly dragging the reputation of true cloud computing through the same mud as it does so. As you can see, I still distrust the term, mainly because it is so open to misinterpretation, and I shan’t be using it myself without heavy qualification and many caveats — which, by the way, should make for an interesting panel discussion with Verizon, IBM and others that I’ll be moderating at the All About Cloud event in San Francisco this May [see disclosure]. But I do see circumstances where it’s possible to make a case for implementing cloud-like infrastructure in a private environment, and Randy, despite starting his exposition from completely the wrong starting point, does end up making a statement about private cloud with which I can heartily agree:

“The private cloud model is a critical transitional step. It is an essential component to help larger organizations move their compute capacity to the public cloud.” …

You can read the complete article @ Software as Services.

Mark Little’s Cloud as the death of middleware? post of 2/13/2010 is an essay, which begins:

Over the last few months I've been hearing and reading people suggesting that the Cloud ([fill in your own definition]) is either the death of middleware, or the death of "traditional" middleware. What this tells me is that those individuals don't understand the concepts behind middleware ("traditional" or not). In some ways that's not too hard to understand given the relatively loose way in which we use the term 'middleware'. Often within the industry middleware is something we all understand when we're talking about it, but it's not something that we tend to be able to identify clearly: what one person considers application may be another's middleware component. In my world, middleware is basically anything that exists above the operating system and below the application (I think the fact that these days we tend to ignore the old ISO 7 Layer stack is a real shame because that can only help such a definition.) …

He concludes:

Of course there'll be new things that we'll need to add to the infrastructure for supporting Cloud applications, just as JEE doesn't match CORBA exactly, or CORBA doesn't match DCE etc. There may be new frameworks and languages involved too. But this new Cloud wave (hmmm, mixing metaphors there I think) needs to build on what we've learned and developed rather than being an excuse to reimplement or remake the software world in "our" own image. That would be far too costly in time and effort, and I have yet to be convinced that it would result in anything substantially better than the alternative approach. If I were to try to sum up what I'm saying here it would be: Evolution Rather Than Revolution!

Mark is the CTO for JBoss at Red Hat.


Cloud Security and Governance

Dana Gardner writes “Cloud Security Alliance defines top threats to secure cloud computing” as a lead-in to his Cloud Computing Services Are the Next Generation of IT Briefings Direct post of 3/3/2010:

It's one of the major issues that keeps cloud computing from working its way deeper and more quickly into the enterprise IT mainstream.

But what are the potential threats around using cloud services? How can companies make sure business processes and data remain secured in the cloud? And how can CIOs accurately assess the risks and benefits of cloud adoption strategies?

Hewlett-Packard (HP) and the Cloud Security Alliance (CSA) answer these and other questions in a new research report entitled, "Top Threats to Cloud Computing Report."

The report, which was highlighted during the Cloud Security Summit at the RSA conference this week, taps the knowledge of information security experts at 29 enterprises, solutions providers and consulting firms that deal with demanding and complex cloud environments. [Disclosure: HP is a sponsor of BriefingsDirect podcasts.] …

Dana concludes:

I'll be moderating a panel in San Francisco in conjunction with RSA later this week on the very subject of cloud security with Jeremiah Grossman, founder and Chief Technology Officer of WhiteHat Security; Chris Hoff, Director of Cloud & Virtualization Solutions at Cisco Systems and a Founding Member of the CSA, and Andy Ellis, Chief Security Architect at Akamai Technologies.

We'll be rebroadcasting the panel "live" with call-in for questions and answers at noon ET on March 31. More details to come.

Alex Williams reports Cloud Computing Security: What The Big Guns Have In Store in this 3/2/2010 post from the RSA 2010 conference to the ReadWriteCloud blog:

The RSA Conference is the event for news to spill over with talk about cloud computing security.

Let's take a look at some of the announcements from the major players in the market, including Intel, Novell and Cisco.

Intel, RSA, VMware and the Trusted Server

According to Dark Reading, the companies demonstrated a proof-of-concept for "building security into the cloud computing infrastructure."

The companies are playing on the whitelisting concept, which has gained wider acceptance in the security world. The idea is that if a server is trusted, or whitelisted, then it will be far less vulnerable to malware attacks …
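The whitelisting idea itself is easy to illustrate in software. The Intel/RSA/VMware proof-of-concept works at the hardware and hypervisor level, which the following Python sketch makes no attempt to reproduce; it only shows the general default-deny principle: compute a cryptographic digest (SHA-256 here, an assumption) of each binary and trust it only if that exact digest appears on an approved list. The file names and allowlist are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Iterable, List, Set


def sha256_of(path: Path, chunk_size: int = 65536) -> str:
    """Hex SHA-256 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_whitelisted(path: Path, approved: Set[str]) -> bool:
    """A file is trusted only if its exact digest is on the approved list."""
    return sha256_of(path) in approved


def unapproved(paths: Iterable[Path], approved: Set[str]) -> List[Path]:
    """Default deny: return every file that is not explicitly approved."""
    return [p for p in paths if not is_whitelisted(p, approved)]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        good = Path(d, "agent.bin")
        good.write_bytes(b"known-good build")
        bad = Path(d, "dropper.bin")
        bad.write_bytes(b"unknown payload")
        approved = {sha256_of(good)}  # hypothetical allowlist
        print([p.name for p in unapproved([good, bad], approved)])  # ['dropper.bin']
```

Because any change to a binary changes its digest, malware that modifies an approved file is blocked too; the trade-off is that every legitimate update must be added to the list.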

Novell and the Cloud Security Alliance

Novell is teaming up with the Cloud Security Alliance to push standards for cloud computing. The effort is called the "Trusted Cloud Initiative." According to InformationWeek, Novell will develop standards for cloud security, compliance, identity management and other related issues.

Cloud computing is essentially a world with no standards whatsoever. Everyone has different meanings for the fundamental aspects that are a part of cloud computing. That leaves the customer with little means of fully understanding what they are committing to with a cloud vendor.

In its second year, the Cloud Security Alliance consists of technology companies seeking to develop use cases about the processes and practices around cloud computing security.

Cisco: Developing Mobile Phone Security

Cisco is developing an "always on" security system for mobile devices. According to Computerworld, the service would give enterprise managers the ability to control cloud-based applications on an employee's mobile device.

See Warwick Ashford’s RSA 2010: identity management key to cloud security, says Microsoft’s Scott Charney post of 3/3/2010 in the AppFabric: Access Control and Service Bus section.

Jon Oltsik claims “Cisco, EMC, Intel and VMware are all over cloud security” in his RSA 2010: Cloud security announcements dominate already post of 3/2/2010 to NetworkWorld’s Back to Cisco Subnet:

It's pouring in San Francisco but ironically the RSA Conference is already pointed toward clouds -- in this case cloud computing security.

There were two announcements yesterday around securing private clouds. New initiative king Cisco announced its "Secure Borderless Network Architecture," which is actually pretty interesting. Cisco wants to unite applications and mobile devices through an "always-on" VPN. In other words, Cisco software will enforce security policies for mobile devices about which applications they can use and when -- without user intervention. Pretty cool but you would need a whole bunch of new Cisco stuff to make this happen.

On another front, industry big-wigs EMC, Intel, and VMware are pushing for a "hardware root of trust" for cloud computing. The goal here is to create technology that lets cloud providers share system state, event, and configuration data with customers in real-time. In this way, customers can integrate cloud security with their own security operations processes and management. This is extremely important for regulatory compliance. (Note: Another reason why EMC/RSA bought Archer Technologies).

These interesting announcements probably presage a 2010 RSA Conference trend: "all cloud all of the time." Since ESG Research indicates that only 12% of midsized (i.e., fewer than 1,000 employees) and enterprise (i.e., more than 1,000 employees) organizations will prioritize cloud spending in 2010, all of this cloud yackety yak may be a bit over the top.

Two other announcements worth noting here:

1. An actual leading voice on cloud computing security, the Cloud Security Alliance (CSA), teamed up with IEEE to survey users about cloud computing security. Users overwhelmingly want to see industry standards, and soon. Bravo CSA and IEEE, I couldn't agree more.

2. I like the F5 Networks/Infoblox announcement around DNSSEC. The two companies will offer integration technology between F5 load balancers and Infoblox DNSSEC. This partnership blends the security of DNSSEC with the reality of distributed web-based apps and infrastructure. Kudos to the companies; the Federal government will be especially pleased.

Jon also finds “Lots of cloud hype -- a little bit of real wisdom” at RSA, as he reports in his RSA Day #1: A Whirlwind of Activity post of 3/3/2010 to NetworkWorld:

I'm in San Francisco at the RSA Conference 2010. Attendance is up over last year's recession-era show, and there's a more upbeat vibe all around.

Of course, the smell of industry hype is also in the air in the form of cloud computing. RSA's Art Coviello (amongst others) crowed about how the cloud was the ultimate destiny of Internet computing. Cisco announced an "always-on" cloud computing VPN. EMC, Intel, and VMware want "roots of trust" for cloud hardware.

The good news is that we are talking about security as we develop cloud computing, so in theory, security may be "baked" into the cloud. The bad news is that we are talking about future and extremely hyped technology when we have serious security problems today. Will talking about Cloud Computing circa 2012 address today's cybercrime problems? The industry needs to focus on addressing today's threats or cloud computing will never arrive.

On a more positive note, Microsoft's Scott Charney stole the show yesterday. Unlike other speakers, Scott was more pragmatic, addressing things like quarantining infected PCs, government cybersecurity responsibilities and tactics, and public-private partnerships. Charney did mention cloud by declaring that identity and privacy are critical for cloud security (I wholeheartedly agree) and then announced that Microsoft has developed a new identity software framework called U-Prove and that it will be offering the code as open source for all to examine and build upon. Kudos to Charney and Microsoft for introducing real action into the marketing buzz. …

<Return to section navigation list>

Cloud Computing Events

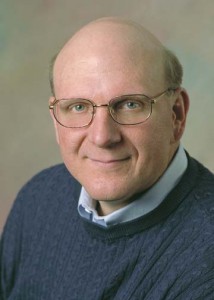

The University of Washington’s Computer Science & Engineering School reports in its Microsoft’s Steve Ballmer at UW, Thursday March 4 post of 3/2/2010:

Steve Ballmer, Microsoft CEO, will discuss what’s ahead for computing, with a particular focus on how cloud computing will change the way people and businesses use technology. The event will take place on Thursday March 4 at 10 a.m. in the Microsoft Atrium of the Paul G. Allen Center for Computer Science & Engineering. Plentiful open seating and standing room will be available. Please join us for what’s sure to be an interesting talk about the world of tech.

A portable Microsoft datacenter, housed in a cargo container, will be available for tours all day long. Microsoft’s IT Pre-Assembled-Component (ITPAC) is one example of the company’s strategy to modularize the entire datacenter. During the tour, learn about the design decisions and functionality that will power the next generation of technology.

See Microsoft information on the talk, plus webcast information, here. Dan Reed blog post here. Microsoft “Azure cloud science” TechFest web page, including a UW oceanography collaboration, here. TechFlash post here.

Maps and directions here. UW webcast information here. Web-archived videos of the event will be linked here and from the Microsoft site.

The Microsoft press release is Microsoft on Cloud Computing.

Abhishek Baxi’s March Architect Innovation Cafe Webcasts post of 3/3/2010 announced a Windows Azure Design Patterns Webcast on 3/26/2010 at 10:00 AM PST:

Abstract: One of the challenges in adopting a new platform is finding usable design patterns that work for developing effective solutions. The Catch-22 is that design patterns are discovered and not invented. Nevertheless it is important to have some guidance on what design patterns make sense early in the game.

This webcast attacks the problem through a set of application scenario contexts, Azure features and solution examples. It is unique in its approach and the fact that it includes the use of features from all components of the Windows Azure Platform including the Windows Azure OS, Windows Azure AppFabric and SQL Azure. In this webcast you will learn about the components of the Windows Azure Platform that can be used to solve specific business problems.

Register here.

Geva Perry will moderate an Open APIs Panel at OSBC, according to this 3/1/2010 post:

I was kindly invited by Matt Asay to moderate a panel at the Open Source Business Conference (OSBC) on March 18 in San Francisco. Below are the details of the panel.

I did not write the description below (it was already there when I got on board), but I think it makes some interesting points. That said, I'd love to get any comments, suggestions, or ideas on what you think the panel should be talking about. What's your take on the topic? What do you think are the interesting drivers of and barriers to open API adoption -- and how do open APIs affect cloud computing and business in general?

Please share your thoughts in the comments.

Open Cloud – Open APIs and Economic Growth

Moderator: Geva Perry

Panelists:

Scott Metzger, CTO, TransUnion

Dion Hinchcliffe, CTO, Hinchcliffe & Company

Peter Coffee, Director Platform Research, Salesforce.com

Mike Olson, CEO, Cloudera

Sam Ramji, VP Strategy, Sonoa

Openness has been used as an index of economic growth in international markets. With the rapid expansion of cloud computing, we have seen a wave of business expansion based on open APIs, which has enabled innovation by 3rd parties, much as open source has enabled this trend in software. Openness is unevenly distributed, however, and in some areas open APIs do not promise open data, which threatens the expansion of the cloud economy. What are the key concerns for technologists in the openness of cloud computing? How can we collectively drive the standards of the industry towards an open cloud? Come to this session to learn about the state of the art and challenge the panelists on the value and requirements of openness in the cloud.

<Return to section navigation list>

Other Cloud Computing Platforms and Services

Mikael Ricknäs claims Amazon Web Services CTO out to Prove Enterprise Chops in a 3/2/2010 article for PC World:

There is still a misconception that Amazon Web Services exists to sell the company's excess server capacity, but that is not the case, CTO Werner Vogels said during a keynote at Cebit.

"There is a myth out there that when Christmas comes, suddenly, all of the foundations under your building will be gone ... that is obviously not the case," said Vogels, referring to Amazon's high-volume sales during the holidays.

During his speech, he showcased the customers that are using Amazon Web Services, with the aim of persuading skeptics that his company offers a viable option.

For example, also at Cebit, Software AG announced ARISalign, which offers business process management as a service using Amazon Web Services' cloud computing. Time-to-market and tight control over costs were among the reasons Software AG picked Amazon, Vogels said.

Also, the growth of Amazon's cloud can be seen in the number of objects stored on S3 (Simple Storage Service). The number grew from 54 billion at this time last year to more than 100 billion, according to Vogels.

Bigger scale lets Amazon Web Services offer lower prices than competitors, according to Vogels. The latest price cut was Feb. 1 for outbound data transfer. …