Windows Azure and Cloud Computing Posts for 8/6/2012+

| A compendium of Windows Azure, Service Bus, EAI & EDI,Access Control, Connect, SQL Azure Database, and other cloud-computing articles. |

•• Updated 8/12/2012 with new articles marked ••.

• Updated 8/11/2012 with new articles marked •.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue and Hadoop Services

- SQL Azure Database, Federations and Reporting

- Marketplace DataMarket, Cloud Numerics, Big Data and OData

- Windows Azure Service Bus, Access Control, Caching, Active Directory, and Workflow

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch and Entity Framework v4+

- Windows Azure Infrastructure and DevOps

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private/Hybrid Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Azure Blob, Drive, Table, Queue and Hadoop Services

• Richard Seroter (@rseroter) described Combining Clouds: Accessing Azure Storage from Node.js Application in Cloud Foundry in an 8/9/2012 post:

I recently did a presentation (link here) on the topic of platform-as-a-service (PaaS) for my previous employer and thought that I’d share the application I built for the demonstration. While I’ve played with Node.js a bit before, I thought I’d keep digging in and see why @adron won’t shut up about it. I also figured that it’d be fun to put my application’s data in an entirely different cloud than my web application. So, let’s use Windows Azure for data storage and Cloud Foundry for application hosting. This simple application is a registry (i.e. CMDB) that an organization could use to track their active systems. This app (code) borrows heavily from the well-written tutorial on the Windows Azure Node.js Dev Center.

First, I made sure that I had a Windows Azure storage account ready to go.

Then it was time to build my Node.js application. After confirming that I had the latest version of Node (for Windows) and npm installed, I went ahead and installed the Express module with the following command:

This retrieved the necessary libraries, but I now wanted to create the web application scaffolding that Express provides.

I then updated the package.json file added references to the helpful azure module that makes it easy for Node apps to interact with many parts of the Azure platform.

{ "name": "application-name", "version": "0.0.1", "private": true, "scripts": { "start": "node app" }, "dependencies":{ "express": "3.0.0rc2" , "jade": "*" , "azure": ">= 0.5.3", "node-uuid": ">= 1.3.3", "async": ">= 0.1.18" } }Then, simply issuing an npm install command will fetch those modules and make them available.

Express works in an MVC fashion, so I next created a “models” directory to define my “system” object. Within this directory I added a system.js file that had both a constructor and pair of prototypes for finding and adding items to Azure storage.

var azure = require('azure'), uuid = require('node-uuid') module.exports = System; function System(storageClient, tableName, partitionKey) { this.storageClient = storageClient; this.tableName = tableName; this.partitionKey = partitionKey; this.storageClient.createTableIfNotExists(tableName, function tableCreated(err){ if(err) { throw error; } }); }; System.prototype = { find: function(query, callback) { self = this; self.storageClient.queryEntities(query, function entitiesQueried(err, entities) { if(err) { callback(err); } else { callback(null, entities); } }); }, addItem: function(item, callback) { self = this; item.RowKey = uuid(); item.PartitionKey = self.partitionKey; self.storageClient.insertEntity(self.tableName, item, function entityInserted(error) { if(error) { callback(error); } else { callback(null); } }); } }I next added a controller named systemlist.js to the Routes directory within the Express project. This controller used the model to query for systems that match the required criteria, or added entirely new records.

var azure = require('azure') , async = require('async'); module.exports = SystemList; function SystemList(system) { this.system = system; } SystemList.prototype = { showSystems: function(req, res) { self = this; var query = azure.TableQuery .select() .from(self.system.tableName) .where('active eq ?', 'Yes'); self.system.find(query, function itemsFound(err, items) { res.render('index',{title: 'Active Enterprise Systems ', systems: items}); }); }, addSystem: function(req,res) { var self = this var item = req.body.item; self.system.addItem(item, function itemAdded(err) { if(err) { throw err; } res.redirect('/'); }); } }I then went and updated the app.js which is the main (startup) file for the application. This is what starts the Node web server and gets it ready to process requests. There are variables that hold the Windows Azure Storage credentials, and references to my custom model and controller.

/** * Module dependencies. */ var azure = require('azure'); var tableName = 'systems' , partitionKey = 'partition' , accountName = 'ACCOUNT' , accountKey = 'KEY'; var express = require('express') , routes = require('./routes') , http = require('http') , path = require('path'); var app = express(); app.configure(function(){ app.set('port', process.env.PORT || 3000); app.set('views', __dirname + '/views'); app.set('view engine', 'jade'); app.use(express.favicon()); app.use(express.logger('dev')); app.use(express.bodyParser()); app.use(express.methodOverride()); app.use(app.router); app.use(express.static(path.join(__dirname, 'public'))); }); app.configure('development', function(){ app.use(express.errorHandler()); }); var SystemList = require('./routes/systemlist'); var System = require('./models/system.js'); var system = new System( azure.createTableService(accountName, accountKey) , tableName , partitionKey); var systemList = new SystemList(system); app.get('/', systemList.showSystems.bind(systemList)); app.post('/addsystem', systemList.addSystem.bind(systemList)); app.listen(process.env.port || 1337);To make sure the application didn’t look like a complete train wreck, I styled the index.jade file (which uses the Jade module and framework) and corresponding CSS. When I executed node app.js in the command prompt, the web server started up and I could then browse the application.

I added a new system record, and it immediately showed up in the UI.

I confirmed that this record was added to my Windows Azure Storage table by using the handy Azure Storage Explorer tool. Sure enough, the table was created (since it didn’t exist before) and a single row was entered.

Now this app is ready for the cloud. I had a little bit of a challenge deploying this app to a Cloud Foundry environment until Glenn Block helpfully pointed out that the Azure module for Node required a relatively recent version of Node. So, I made sure to explicitly choose the Node version upon deployment. But I’m getting ahead of myself. First, I had to make a tiny change to my Node app to make sure that it would run correctly. Specifically, I changed the app.js file so that the “listen” command used a Cloud Foundry environment variable (VCAP_APP_PORT) for the server port.

app.listen(process.env.VCAP_APP_PORT || 3000);To deploy the application, I used vmc to target the CloudFoundry.com environment. Note that vmc works for any Cloud Foundry environment, including my company’s instance, called Web Fabric.

After targeting this environment, I authenticated using the vmc login command. After logging in, I confirmed that Cloud Foundry supported Node.

I also wanted to see which versions of Node were supported. The vmc runtimes command confirmed that CloudFoundry.com is running a recent Node version.

To push my app, all I had to do was execute the vmc push command from the directory holding the Node app. I kept all the default options (e.g. single instance, 64 MB of RAM) and named my app SeroterNode. Within 15 seconds, I had my app deployed and publicly available.

With that, I had a Node.js app running in Cloud Foundry but getting its data from a Windows Azure storage table.

And because it’s Cloud Foundry, changing the resource profile of a given app is simple. With one command, I added a new instance of this application and the system took care of any load balancing, etc.

Node has an amazing ecosystem and its many modules make application mashups easy. I could choose to use the robust storage options of something AWS or Windows Azure while getting the powerful application hosting and portability offered by Cloud Foundry. Combining application services is a great way to build cool apps and Node makes that a pretty easy to do.

Karl Ots (@fincooper) described Using Azure Storage Explorer in an 8/9/2012 post:

Creating a Storage account

Go to Windows Azure Portal.

Select New -> Storage -> Quick create and fill in your storage name. If you have multiple subscriptions (trial, paid, MSDN etc), be careful about which one you select.

After a few minutes, the storage is created and you can view its details. The storage account keys can be viewed and regenerated at Manage keys section.

Azure Storage Explorer

Download and install Azure Storage Explorer from their Codeplex project.

Add an account. The storage account name is the one you selected in storage creation.

Here’s a screenshot of Azure Storage Explorer displaying data about an Azure Table storage. You can noe easily view and edit eny type of Azure storage you want (table, blob, queue). Pair this with the excellent WCF Test Client and you are good to go!

Stefan Groschupf (@SefanGroschupf, pictured below) casted a baleful eye on Hadoop and Hive in a Bursting the Big Data Bubble guest post of 8/8/2012 to Andrew Brust’s (@andrewbrust) Big Data blog for ZDNet: A Big Data analytics company CEO exposes some common, and potentially harmful, misunderstandings about Big Data:

This guest post comes from Stefan Groschupf, CEO of Datameer. The opinions expressed here are his, not mine. That said, I think Stefan makes some excellent points and provides a valuable, sober critique of today’s Big Data “goldrush.”

We’re in the middle of a Big Data and Hadoop hype cycle, and it's time for the Big Data bubble to burst.

Yes, moving through a hype cycle enables a technology to cross the chasm from the early adopters to a broader audience. And, at the very least, it indicates a technology’s advancement beyond academic conversations and pilot projects. But the broader audience adopting the technology may just following the herd, and missing some important cautionary points along the way. I’d like to point out a few of those here.

Riding the Bandwagon

Hype cycles often come with a "me too" crowd of vendors who hastily rush to implement a hyped technology, in an effort to stay relevant and not get lost in the shuffle. But offerings from such companies may confuse the market, as they sometimes end up implementing technologies in inappropriate use cases.Projects using these products run the risk of failure, yielding virtually no ROI, even when customers pony up significant resources and effort. Customers may then begin to question the hyped technology. The Hadoop stack is beginning to find itself receiving such criticism right now.

Bursting the Big Data bubble starts with appreciating certain nuances about its products and patterns. Following are some important factors, broken into three focus areas, that you should understand before considering a Hadoop-related technology.

Hadoop is not an RDBMS killer

Hadoop runs on commodity hardware and storage, making it much cheaper than traditional Relational Database Management Systems (RDBMSes), but it is not a database replacement. Hadoop was built to take advantage of sequential data access, where data is written once then read many times, in large chunks, rather than single records. Because of this, Hadoop is optimized for analytical workloads, not the transaction processing work at which RDBMSes excel.

Low-latency reads and writes won’t, quite frankly, work on Hadoop’s Distributed File System (HDFS). Mere coordination of writing or reading single bytes data requires multiple TCP/IP connections to HDFS and this creates very high latency for transactional operations.

However, the throughput for reading and writing larger chunks of data in a well-optimized Hadoop cluster is very fast. It's good technology, when well-understood and appropriately applied.

Hives and Hive-nots

Hive allows developers to query data within Hadoop using a familiar Structured Query Language (SQL)-like language. A lot more people know SQL than can write Hadoop’s native MapReduce code, which makes use of Hive an attractive/cheaper alternative to hiring new talent, or making developers learn Java and MapReduce programming patterns.

There are, however, some very important tradeoffs to note before making any decision on Hive as your big data solution:

- HiveQL (Hive’s dialect of SQL) allows you to query structured data only. If you need to work with both structured and unstructured data, Hive simply won’t work without certain preprocessing of the unstructured data.

- Hive doesn’t have an Extract/Transform/Load (ETL) tool, per se. So while you may save money using Hadoop and Hive as your data warehouse, along with in-house developers sporting SQL skill sets, you might quickly burn through those savings maintaining custom ETL scripts and prepping data as requirements change.

- Hive uses HDFS and Hadoop’s MapReduce computational approach under the covers. This means, for reasons already discussed, that end users accustomed to normal SQL response times from traditional RDBMSes are likely to be disappointed with Hive’s somewhat clunky batch approach to “querying”.

Real-time Hadoop? Not really.

At Datameer, we’ve written a bit about this in our blog, but let’s explore some of the technical factors that make Hadoop ill-suited to real-time applications.Hadoop’s MapReduce computational approach employs a Map pre-processing step and a Reduce data aggregation/distillation step. While it is possible to apply the Map step on real-time streaming data, you can’t do so with the Reduce step. That’s because the Reduce step requires all input data for each unique data key to be mapped and collated first. While there is a hack for this process involving buffers, even the hack doesn’t operate in real-time, and buffers can only hold smaller amounts data.

NoSQL products like Cassandra and HBase also use MapReduce for analytics workloads. So while those data stores can perform near real-time data look-ups, they are not tools for real-time analytics.

Three blind mice

While there are certainly other Big Data myths that need busting out there, Hadoop’s inability to act as an RDBMS replacement, Hive’s various shortcomings and MapReduce’s ill-suited-ness to real-time streaming data applications present the biggest stumbling blocks, in our observation.In the end, realizing the promise of Big Data will require getting past the hype and understanding appropriate application of the technology. IT organizations must burst the Big Data bubble and focus their Hadoop efforts in areas where it provides true, differentiated value.

Mike Washam (@MWashamMS) described Copying VHDs (and other blobs) between storage accounts in an 8/7/2012 post:

The Windows Azure cross platform command line tools expose an extremely useful new feature in Windows Azure Storage which is the ability to asynchronously copy blobs into storage accounts. The source blobs do not HAVE to be in a Windows Azure storage account. They could be anywhere that is publicly accessible. For more details on this new feature see Gaurav’s post on new Windows Azure storage features.

In this post I’m going to show how you can use this feature to copy VHDs between storage accounts which is a very commonly requested activity.

To get started you will need to download and configure the command line tools:

http://www.windowsazure.com/en-us/develop/nodejs/how-to-guides/command-line-tools/Once downloaded and configured the syntax for moving the blobs is simple:

azure vm disk upload <source-path> <target-blob-url> <target-storage-account-key>The source path could be any publicly available source location such as an http server:

azure vm disk upload http://sourcewebsite.cloudapp.net/copyme.zip http://targetstorageaccount.blob.core.windows.net/testcopy/copyme.zip TARGETSTORAGEACCOUNTKEYThe copy is made by the Windows Azure storage service itself so the bits are never copied to your local machine which makes the transfer MUCH faster than doing this using storage tools.

To copy VHDs you can use the same syntax:

azure vm disk upload http://sourcestorageaccount.blob.core.windows.net/migratedvhds/myvm.vhd http://targetstorageaccount.blob.core.windows.net/migratedvhds/myvm.vhd TARGETSTORAGEACCOUNTKEYKeep in mind that the source storage container either needs to be marked public or you can also specify the URL with a shared access signature which is the recommended approach for security reasons (put it in quotes). The target storage account doesn’t need the signature because you are required to specify the storage account key.

Before you ask – yes we definitely will have this functionality in the Windows Azure PowerShell cmdlets in the near future.

<Return to section navigation list>

SQL Azure Database, Federations and Reporting

• Nuno Filipe Godihno (@nunogodinho) posted Tips & Tricks to Build Multi-Tenant Databases with SQL Databases on 7/11/2012:

Last Wednesday I delivered another session at the Visual Studio Live @ Redmond conference this time about “Tips & Tricks to Build Multi-Tenant Databases with SQL Databases”. The feedback from the session attendees was very good and this is a quick summary of the most important aspects.

First thing in order to be successful is understanding exactly what is Windows Azure SQL Databases (formally SQL Azure) and how it works. If we look at it from a high level, SQL Databases are actually:

- SQL Server database technology delivered as a service on Windows Azure

- This is actually a Shared Environment where we have SQL Server capabilities and features available in a pay as you go, and scalable mode. This of course doesn’t mean we have all the existing features and capabilities from SQL Server, since some of them would probably create some issues since this is a Shared Environment and also because the goal is High Availability and Scalability.

- Ideal for both simple and complex applications

- It’s a way for us to have a Relational Database as a Service quickly and powerful which can be used in all types of solutions, but in order to get the best out of it we really need to understand and adjust how it works and avoid things like being throttled, for example.

- Enterprise-ready with automatic support for HA

- True since it provide a higher level of management since we don’t deal with physical machines anymore but with the actual storing of data this way focusing more on what’s more important for our solution and our business.

- Also this provides us an automatic support that enables us to have High Availability without ‘a lot of work’.

- Designed to scale out elastically with demand

- SQL Databases were created to scale and of course that’s part of it’s DNA, since without the ability to scale elastically it wouldn’t be fit for the Cloud. This will be allowed with SQL Federations.

After a high-level understanding of what is a SQL Database in Windows Azure, it’s important to understand also what are the scaling strategies that we can use, since this way we can better use them whenever needed. And so the strategies are:

- Horizontal Partitioning or Sharding

- Spreads data across similar nodes

- All nodes have the same schema

- Allows us to achieve massive scale out both in terms of Data Size and Load

- We need to understand that while doing that we aren’t going to be able to get the complete list of data within a single query, and so it’s something we need to understand and consider.

- Vertical Partitioning

- Spreads data across Dis-Similar nodes

- Each node has it’s own schema where the data is stored. Eg. SQL Database, Table Storage, Blob Storage, …

- Allows us to place the data where it makes more sense by slitting the data we have for a solution and understanding how it’s used and how it should be stored so we can take the best out of it.

- In this case we need to understand that by doing that we a splitting at the row level, so if we want a complete row (if we were to be thinking about a regular database) we won’t be able to get that in 1 only query, since one part can be in a DB, another in Table Storage and another in Blob Storage.

- Hybrid Partitioning

- This is when we spread our data both in Vertically and Horizontally.

Now let’s have a quick look at SQL Federations:

- Integrated database sharding that can scale to hundreds of nodes

- Provides the ability to do Horizontal Partitioning or Sharding to data inside SQL Databases in a quick and ‘easy’ way.

- Multi-tenancy via flexible repartitioning

- Provide the ability to achieve multi-tenancy inside the same Database by providing the ability to split data horizontally.

Online split operations to minimize downtime

- Automatic data discovery regardless of changes in how data is partitioned

Finally, before we get to the actual Tips & Tricks we need to understand the multi-tenancy strategies that are typically used, and they are:

- Separate Servers

- This provides the best isolation possible and it’s regularly done On-Premises, but it’s also the one that doesn’t enable cutting costs, since each tenant has it’s own server, sql, license and so on.

- Separate Databases

- Very used in order to provide isolation for customer, because we can associate different logins, permissions and so on to each DB. Considered by many the only way to provide isolation for tenants.

- Separate Schema

- Also a very good way to achieve multi-tenancy but at the same time share some resources since everything it’s inside the same DB but the schemas used are different, one for each Tenant. That allows us to even customize a specific tenant without affecting others.

- Row Isolation

- Everything is shared in this option, Server, Database and even Schema. The only way they are differentiated is based on a TenantId or some other column that exists on the table level.

So now that we had a high-level view of all this let’s take a look at some of the Tips & Tricks for it, and they are:

- Choose the right Multi-Tenancy Strategy

- One of the most important steps for delivering a Multi-Tenant solution is understand exactly what should be the approach we should use, and normally the simplest one isn’t actually the best. For example, if we think about Isolation the best might seem Separate Server or Separate Database, but that means that from an economics standpoint we aren’t going to be very competitive, and so for this we need to understand that is we go further for a more shared approach, like Row Isolation, the impact in terms of development might be that at the beginning the investment is bigger, but in the long run that will pay off.

- Also important is that if we want Multi-Tenancy and Isolation the only solution is not separating everything, since that is something we can enforce programmatically, through security permissions and so on. It’s just might take a bit more effort but the end result should be that we achieve some other customers that we usual didn’t because of the prices.

- Don’t forget Security Patterns

- Some time when we start creating Multi-Tenant Databases we start thinking about sharing everything and forget about the Security part of that. This is actually a common mistake that can cost a lot, and in order to make things work we should never forget to:

- Filter

- Use an intermediate layer that will receive all the requests to the DB and infer the Tenant filter, so that nobody has access to something that shouldn’t. Of course this also means that no one should have direct access to the DB.

- Permissions

- Very important when we consider Multi-Tenant Databases is the Permissions and how we can affect them. When we use Separate Servers, Databases or either Schemas, we can actually associate different logins, roles and so on to the different Tenants, but when we are in a Row Isolation model that isn’t possible, and that’s why the intermediate layer, that is actually your Data Layer will be very important since not only provides access to the data inferring the filter by Tenant, but also allows us to introduce permissions to access certain parts of the data. For example by leveraging Windows Azure Active Directory Access Control Service.

- Encryption

- This is of a huge importance. IF YOUR DATA IS SENSITIVE JUST ENCRYPT IT. SQL Databases don’t have the ‘With Encryption’ for Columns but this doesn’t mean I can’t really insert encrypted data in the database, I just need to do it on another layer, again in an intermediate layer.

- Also very important when we encrypt our data is to understand the method we’re going to use. Normally one of the best methods, if we don’t want anyone that isn’t part of a Tenant to access the data and have different encryptions per Tenant so that even if someone gets the full DB it won’t access the full data, is to use X.509 Client Certificates. This is a very good approach since the Client is actually the one that has the Certificate that is used, but it also means that we cannot count of doing background calculation with that data in the Cloud since we don’t have the certificate. So it’s a balance.

- A quick reminder is that IF YOU SHARE A SECRET IT STOPS BEING A SECRET, it’s just like telling a secret to a child. So for this reason, if you use X.509 Client Certificates to encrypt the data, and then register all those certificates in a Windows Azure Role, that isn’t the best approach because if someone get access to that role it will have access to the KEYS OF THE KINGDOM.

- Choose your partitions wisely

- When you choose the partitions it should be based on the ‘hotness’ of the data and not on the ‘# of records’. This is a very important premise since we normally see partitions being made in a way that they all have the same amount of records and data, and this doesn’t mean the solution will perform, because if we have one partition that has all the most commonly used data and another one with less common we won’t have any benefit with the partition. So the important part is to partition your data based on how the data is used and how commonly it is used, since in order to get good partitioning sometimes we can have a partition with very few records and another one with thousands.

- So it’s also important to before partitioning the data understand how that data will be consumed and used, since that will allow us to better understand what is the most used data or not.

- Choose your Partition Keys correctly

- We can have several ways of defining partition keys and the most common are:

- Natural Keys

- Choosing a Natural key is usually one of the most used ways in order to select a partition key, and some samples are:

- Tenant

- Country

- Region

- Date

- …

- But sometimes this isn’t the best approach since if we go for the Tenant that doesn’t partition based on the ‘hotness’ just based on the Tenant and if a tenant is small has less information and so it will be faster than a tenant that is larger because it has more data and all in the same partition. This is exactly what we want to avoid. The same thing happens with Country, since really isn’t the best way since if we use this we might have more customers from a specific customer than from another, and the same with region. When we partition by data, it will mean that everybody would be affected by everyone else data, since everything would be in the same partition.

- What this mean is that while Natural Keys are one of the most used partition keys they aren’t actually the best choice because it’s very difficult to find something that allows us to partition it optimally.

- Mathematical

- This is another option for partition key since what we can do is used things like Hash or Modulo operator and other options to generate a mathematical calculation in order to find the ‘hotness’ point.

- Being this a very interesting approach is actually really difficult also since you need to understand your data very well as well as built mathematical formulas which isn’t everyone best hobby and capability.

- Lookup Based

- Another option is this one, Lookup based, this is actually the best since it really looks at how the data is used and consumed in order to find out the best partition key. In some cases this will mean a concatenation of something like ‘TenantId+Date’ or something like that, because in this case we’ll be saying that every tenant is partitioned independently and even at the same time is partitioning its own data making it faster.

- Leverage SQL Federations to handle Multi-Tenancy

- SQL Federations is a very good way to leverage Horizontal Partitioning (Sharding) of data for you solutions since allows us a ‘quick and easy’ way to partition our data and provide at the same time Isolation, since each Federation Member is actually a separate DB that is generated, but when we look at it we only see the main DB.

- Currently the limitations with this is actually the fact the SQL Federations only allow the partition key to be of types BigInt, Int, UniqueIdentifier and VarBinary. It would be great if it would support also Varchar but we can’t have all, unfortunately, but if we go for a partition key like ‘TenantId+Date’ this can be actually a number and so fall inside the BigInt possibility.

- Don’t forget that you’ll continue to need to perform backups

- Sometimes when we are in a multi-tenant environment we forget we still need to provide backups and not only for us, but most of the times the customer wants also to have a copy of the data and that is more challenging.

- In order to do that backups we can use the Export capability from SQL Databases as well as some third-party tools like RedGate’s SQL Azure Backup.

- To leverage a backup of the data for the customer we can leverage SQL Data Sync since it allows us to create filters in the data sets and so we can filter by tenant and get one Data Sync per Tenant this way making everyone happy.

So those are some of the Tips & Tricks you can use in order to be successful building Multi-Tenant Databases in Windows Azure SQL Databases. I hope that helps and would love to have your thoughts about it.

I believe the name change from SQL Azure to Windows Azure SQL Database (WASD) is as bad as Metro to Modern UI Style; both are train wrecks. Click here for a Mac user’s view on the Metro name change.

Andrew Stegmeier of the Microsoft Access team posted a described of the relationship between Access 2013 and SQL Server/SQL Azure on 8/8/2012:

This post was written by Russell Sinclair, a Program Manager on the Access Team.

Access 2013 web apps feature a new, deep integration with SQL Server and SQL Azure. In Access 2010, when you created a web application on SharePoint, the tables in your database were stored as SharePoint lists on the site that housed the application. When you use Access 2013 to create a web app on SharePoint, Access Services will create a SQL Server or SQL Azure database that houses all of your Access objects. This new architecture increases performance and scalability; it also opens up new opportunities for SQL developers to extend and work with the data in Access apps.

How it Works

When you create a web app in Access 2013, you'll choose a SharePoint site where you want it to live. Your app can be accessed, managed, or uninstalled from this site just like any other SharePoint app. In the process of creating your app in SharePoint, we provision a SQL Server database that will house all of the objects and data that your application requires. The tables, queries, macros, and forms are all stored in this database. Whenever anyone visits the app, enters data, or modifies the design, he'll be interacting with this database behind the scenes. If you create an app in Office 365, the database is created in SQL Azure. If you create an app on a SharePoint server that your company hosts, Access will create the database in the SQL Server 2012 installation that was selected by your SharePoint administrator. In either case, the database created is specific to your app and is not shared with other apps.

As you build your app, you can add tables, queries, views, and macros to deliver the functionality you and your users need. Here's what happens in the database when you create each of these objects:

Tables

When you add table to your Access app, a SQL Server table is created in the database. This table has the same name you gave it in Access, as do the fields you create in the client. The data types that are used in the SQL Server database match the types you would expect: text fields use nvarchar; number fields use decimal, int or float; and image fields are stored as varbinary(MAX).

Consider the following table in Access:

The resulting table in SQL Server looks like this:

Queries

When you add a query to your app, Access creates a SQL Server view (or a table-valued function (TVF), if your query takes parameters). The name of the view or TVF matches the name you used in Access. We even use formatting rules when generating the T-SQL, so if you view the definition directly in SQL Server, it will be easy to understand.

This is a query designed in Access:

It is stored as a formatted statement in SQL Server:

CREATE VIEW [Access].[MyQuery]

AS

SELECT

[MyTable].[ID],

[MyTable].[String Field],

[MyTable].[Date Field]

FROM

[Access].[MyTable]

WHERE

[MyTable].[Date Field] > DATEFROMPARTS(2012, 7, 16)Data Macros

Data macros come in two flavors: event data macros and standalone macros.

You can create event data macros by opening a table in design view and clicking on any of the Events buttons in the Table ribbon.

Event data macros are implemented on SQL Server as AFTER triggers on the table to which they belong.

You can create a standalone macro from the Home ribbon by clicking the Advanced button in the Create section and choosing Data Macro from the list of items. This type of macro can take parameters and is persisted as a stored procedure in SQL Server.

Views

Views in Access 2013 are the parts of your app that display your data in the browser—database experts might call them forms. They are also stored in the database. Since they are HTML and JavaScript rather than SQL objects, they are stored as text in the Access system tables.

SQL Server Schemas

Within the database, Access makes use of three separate SQL Server schemas: Access; AccessSystem; and AccessRuntime.

The AccessSystem schema contains system tables that store the definitions of each object in a format that Access Services understands, as well as bits and pieces of information that are necessary in order for the item to work well in the runtime or design time surface.

The Access schema contains all of the tables, queries, and macros created by you, the app designer. Everything in this schema is the implementation of the objects you designed in SQL Server.

The AccessRuntime schema contains a number of items that we use in Access Services to optimize the runtime behavior of your application.

So What?

You might be wondering why these details are important. For some users, the only visible effect of the new SQL Server back-end will be increased speed and reliability. They don't need to worry about the technical details. More advanced users, though, can directly connect to the SQL Server or SQL Azure database from outside of their Access app, which enables a whole new frontier of possibilities for advanced integration and extensions. This is big!

To enable external connections, simply click on the File menu to go to the Backstage. Under the Connections section, you'll find the SQL Server login credentials that you can use to connect to your database in SQL Server Management Studio, ASP.NET, or any other application that supports SQL Server.

The Manage connections button contains a number of commands that allow you to manage connections to the SQL Server database. You'll find that you can generate a read-only login and a read-write login. Use the read-only login when you want to connect to the SQL Server database from a program or app that doesn't need to modify the data, such as a reporting tool. Use the read-write login when you want to connect to the database and modify or enter new data. For example, you could create a public website in ASP.NET that allowed internet users to submit applications that get stored in your Access database.

Please note, however, that this functionality is not currently available in the Office 365 Preview. If you'd like to try it out, though, you can download the Microsoft SharePoint Server 2013 Preview and set it up on your own servers.

SQL Server Rocks

We are really excited about these changes to Access 2013 and we hope you are as well. SQL Azure and SQL Server give Access 2013 a powerful data engine to house your data. They also enable many new scenarios for advanced integration and extension. We can't wait to hear about the great new apps that you'll build with Access.

What’s not clear from Russ’ article is how do SQL Server and Windows Azure SQL Database (formerly SQL Azure) handle multi-valued list boxes, which require backing by SharePoint lists in Access 2010.

<Return to section navigation list>

MarketPlace DataMarket, Cloud Numerics, Big Data and OData

•• Amit Choudhary described Creating OData Service Using WCF DataService in a 8/7/2012 post to the C# corner (missed when published):

What is OData

This should be the first question if you're new to this term. Here is a brief introduction to it.

"The Open Data Protocol (OData) is a Web protocol for querying and updating data that provides a way to unlock your data and free it from silos that exist in applications today. OData does this by applying and building upon Web technologies such as HTTP, Atom Publishing Protocol (AtomPub) and JSONto provide access to information from a variety of applications, services, and stores. The protocol emerged from experiences implementing AtomPub clients and servers in a variety of products over the past several years. OData is being used to expose and access information from a variety of sources including, but not limited to, relational databases, file systems, content management systems and traditional Web sites." Reference - http://www.odata.org

Want to know more, like What, Why and How? Visit: http://www.odata.org/introduction

Now after having gone through the specification of OData you'll can learn where it can fit your requirements when designing service-architecture aplications.

Let's avoid digging too deeply into these consideartions and return to the subject of how to create an OData service using a WCF DataService.

So go ahead and launch your VisualStudio 2010 (or 2012 if you have that one installed; it's available as a RC version at the time of writing this article).Now create a web project or rather add a class library project. (Let's maintain a little layering and separation of business.)

The following are a simple step-by-step walkthrough, keeping in mind that someday some beginner (new to VS) might be having trouble with written instructions. The following shows creation of a Class Library:

Name it "Demo.Model" as we're creating a sample service and this will work as Model (Data) for our service.Now go ahead and add a new item -> "ADO.Net Entity Data Model":

Name it "DemoModel.edmx". When you click Add, a popup will be waiting for your input. So either you can generate your entities from the database or you can just create an empty model which further can be used to generate your database. Make your choice.

But we're simply going to use the existing database as I already have a nice test database from Stackoverflow dumps.

Click "Next >" and get your connection string using "New Connection" and you're ready to click "Next >" again:

Select your database object to include into your Entities.

For creating the OData service we only need the Tables. So after clicking "Finish" your Entities diagram should look like this. Here's the important note: the relationship between the entities should be well-defined because the OData will be searching/locating your related entities based on your query (learn about URI Queries).

We're close enough to finish the job more than 50% work is done to create the service. Forget the time when we used to define/create/use the client proxy and other configuration settings including creating the methods for every type of data required.

(Note:- This article will only show you how to get the data from the OData service. I can in the future write about Create, Delete and Edit using HTTP requests PUT, DELETE and POST.)

Now proceed to adding a new Web project (you should choose ASP.Net Empty website) from the templates; see:

Add the project reference Demo. Model to the newly added website.

Now add a new item in the web project i.e. WCF Data service.

Now open the code behind file of your service file and write this code:1. [JSONPSupportBehavior]

2. //[System.ServiceModel.ServiceBehavior(IncludeExceptionDetailInFaults = true)] //- for debugging

3. public class Service : DataService<StackOverflow_DumpEntities>

4. {

5. // This method is called only once to initialize service-wide policies.

6. public static void InitializeService(DataServiceConfiguration config)

7. {

8. config.SetEntitySetAccessRule("*", EntitySetRights.AllRead);

9. //Set a reasonable paging site

10. config.SetEntitySetPageSize("*", 25);

11. //config.UseVerboseErrors = true; //- for debugging

12. config.DataServiceBehavior.MaxProtocolVersion = DataServiceProtocolVersion.V2;

13. }

14.

15. /// <summary>

16. /// Called when [start processing request]. Just to add some caching

17. /// </summary>

18. /// <param name="args">The args.</param>

19. protected override void OnStartProcessingRequest(ProcessRequestArgs args)

20. {

21. base.OnStartProcessingRequest(args);

22. //Cache for a minute based on querystring

23. HttpContext context = HttpContext.Current;

24. HttpCachePolicy c = HttpContext.Current.Response.Cache;

25. c.SetCacheability(HttpCacheability.ServerAndPrivate);

26. c.SetExpires(HttpContext.Current.Timestamp.AddSeconds(60));

27. c.VaryByHeaders["Accept"] = true;

28. c.VaryByHeaders["Accept-Charset"] = true;

29. c.VaryByHeaders["Accept-Encoding"] = true;

30. c.VaryByParams["*"] = true;

31. }

32.

33. /// <summary>

34. /// Sample custom method that you OData also supports

35. /// </summary>

36. /// <returns></returns>

37. [WebGet]

38. public IQueryable<Post> GetPopularPosts()

39. {

40. var popularPosts =

41. (from p in this.CurrentDataSource.Posts orderby p.ViewCount select p).Take(20);

42.

43. return popularPosts;

44. }

45. }Here we added some default configuration settings in the InitializeService() method. Note that I'm using the DataServiceProtocolVersion.V3 version. This is the latest version for the OData protocol in .Net, you can get it by installing the SP for WCF 5.0 (WCF Data Services 5.0 for OData V3) and replacing the references of System.Data.Services, System.Data.Services.Client to Microsoft.Data.Services, Microsoft.Data.Services.Client.

Otherwise it's not a big deal, you can still have your DataServiceProtocolVersion.V2 version and It works just fine.

Next in the above snippet I've added some cache support by overriding the method OnStartProcessingRequest().

Also I have not forgotten about the JSONPSupportBehavior attribute on the class. This is an open source library that enables your data service to have JSONP support.

First of all you should know why and what JSONP does over JSON. Keeping it simple and brief:

- Cross domain support

- Embedding the JSON inside the callback function call.

Hence.. We're done creating the service. Wondered!!? Don't be. Now let's run this project and see what we've got. If everything goes well then you'll get it running like this:

Now here's the interesting part.

Play with this service and see the OData power. Now you've seen that we didn't have any custom method implementation here and we'll be directly working with our entities using the URL and getting the returned format as Atom/JSON. :) .. Sounds cool?

If you have LINQPAD installed then launch it otherwise download and install it from here.

Do you know that LINQPAD supports the ODataServices. So we'll add a new connection and select WCF Data service as our datasource.

Now click next and enter your service URL. (Make sure the service that we just created is up and running)

The moment you click OK you'll see your all entities in the Connection panel.

Select your service in the Editor and start typing your LINQ queries:

I need the top 20 posts ordered by View count.. blah blah. So here is my LINQ query.

- (from p in Posts

- orderby p.ViewCount

- select p).Take(20)

Now run this with LINQPAD and see the results:

Similarly you can write any nested, complex LINQ queries on your ODataService.

Now here is the key of this. The root of the whole article:

This is the OData protocol URI conventions that helped you filter and order your data from entities. At the end of this article you've just created and API like Netflix, Twitter or another public API has.

Moreover, we used JSONPSupportBehavior so you can just add one more parameter to the above URL and enter it in a browser and go, as in:

In the next article we'll see how to consume this service in a simple plain HTML.

Roope Astala posted Cloud Numerics and Latent Semantic Indexing, Now with Distributed Sparse Data on 8/8/2012:

You may recall our earlier blog post about Latent Semantic Indexing and Analysis, where we analyzed a few hundred documents. With new features of “Cloud Numerics” Wave 2 we can repeat the analysis: not just for few hundred but for many thousands of documents. The key new features we will use are distributed sparse matrices and singular value decomposition for dimensionality reductions of sparse data. We’ll then use DataParallel.Apply to compute the cosine similarities between documents.

The dataset we use is derived from SEC-10K filings of companies for the year of 2010. There are 8944 documents in total; the number of terms is over 100,000. We have computed the tfidf term-document matrix and stored it as triplets (term number, document number, tfidf frequency). This matrix was computed using Hadoop and Mahout from pre-parsed filings, and then saved into a regular CSV file. We will use the “Cloud Numerics” CSV reader to read in this data. For those interested, “Cloud Numerics” can also read the triplets from the HDFS (Hadoop Distributed File System) format directly. This is a topic for another blog post.

Step 1: Preparation

To run the example, we recommend you deploy a Windows Azure cluster with two compute nodes, which you can do easily with the “Cloud Numerics” Deployment Utility. Alternatively, if you have a local computer with enough memory –-more than 8 GB free-– you can download the files (see next step) and attempt the example on that local computer.

We’ll be creating the application --method by method-- in the steps below.

1. Open Visual Studio, create a new Microsoft “Cloud Numerics” Application, and call it SparseLSI.

2. In the Solution Explorer frame, find the MSCloudNumericsApp project and add a reference to Microsoft.WindowsAzure.StorageClient assembly. We’ll need this assembly to read and write data to and from Azure storage.

3. Next, copy and paste the following piece of code to the Program.cs file as the skeleton of the application.

using System;

using System.Collections.Generic;

using System.Linq;

using System.Text;

using Microsoft.WindowsAzure;

using Microsoft.WindowsAzure.StorageClient;

using Microsoft.Numerics;

using msnl = Microsoft.Numerics.Local;

using msnd = Microsoft.Numerics.Distributed;

using Microsoft.Numerics.Mathematics;

using Microsoft.Numerics.Statistics;

using Microsoft.Numerics.LinearAlgebra;

using Microsoft.Numerics.DataParallelOps;

namespace MSCloudNumericsApp

{

public class Program

{

}

}Step 2: Copy Data to Your Own Storage Account

The input data for this application is stored at cloudnumericslab storage account:

http://cloudnumericslab.blob.core.windows.net/lsidata/SEC_10K_2010.csv

and:

http://cloudnumericslab.blob.core.windows.net/lsidata/Docmapping_2010.csv

The methods we will use to read in data to distributed arrays do not support public access to blobs, so you must copy the data to your own Windows Azure storage account.

To do this, let’s add following methods to the “Cloud Numerics” application:

This routine instantiates a CloudBlobClient instance, given an account name and key:

static CloudBlobClient GetBlobClient(string accountName, string accountKey)

{

var storageAccountCredential = new StorageCredentialsAccountAndKey(accountName, accountKey);

var storageAccount = new CloudStorageAccount(storageAccountCredential, true);

return storageAccount.CreateCloudBlobClient();

}This method enables your application to download and upload blobs between storage accounts:

static void CopyDataToMyBlob(CloudBlobClient blobClient, string originUri, string destContainerName, string destBlobName)

{

var originBlob = new Microsoft.WindowsAzure.StorageClient.CloudBlob(originUri);

var destContainer = blobClient.GetContainerReference(destContainerName);

destContainer.CreateIfNotExist();

var destBlob = destContainer.GetBlobReference(destBlobName);

destBlob.UploadText(originBlob.DownloadText());

}The third method copies two blobs: the CSV file that holds the term-document matrix, and a list of document names that maps term-document matrix columns to specific companies.

static void CopySecData(CloudBlobClient blobClient, string myContainerName, string secBlobName, string mapBlobName)

{

var secUri = @"http://cloudnumericslab.blob.core.windows.net/lsidata/SEC_10K_2010.csv";

var mapUri = @"http://cloudnumericslab.blob.core.windows.net/lsidata/Docmapping_2010.csv";

CopyDataToMyBlob(blobClient, secUri, myContainerName, secBlobName);

CopyDataToMyBlob(blobClient, mapUri, myContainerName, mapBlobName);

}Step 3: Read Data from the CSV File into a Sparse Matrix

Create a method that reads data in “triplet” format (row, column, value), slices it into three vectors, and then assembles it into a sparse matrix. As an input argument, our reader will take a class that implements the IParallelReader interface, because this will give us flexibility to use the same method for data stored locally or on Azure (this point becomes more clear in Step 9). The triplet data is shaped n-by-3 where n is total number of unique words in each document of the corpus. The slicing operation yields first, second, and third column as separate vectors, where the first two correspond to indices of terms and documents, respectively. To convert them into row and column indices, we cast the first two into long integers and then call the distributed sparse matrix constructor. Add the following method to the skeleton program from previous step.

static msnd.SparseMatrix<double> ReadTermDocMatrix(msnd.IO.IParallelReader<double> reader)

{

var triplet = msnd.IO.Loader.LoadData<double>(reader);

var rowIndices = triplet.GetSlice(new Slice(), 0).ConvertTo<long>();

var columnIndices = triplet.GetSlice(new Slice(), 1).ConvertTo<long>();

var nonzeroValues = triplet.GetSlice(new Slice(), 2).ConvertTo<double>();

return new msnd.SparseMatrix<double>(rowIndices, columnIndices, nonzeroValues);

}Step 4: Read List of Documents

From the sparse matrix alone we cannot tell how columns map to filings by specific companies. To provide this mapping, we read in an indexed list of documents. This list was originally produced during the pre-processing of documents.

The following method reads the data file from Windows Azure to a string:

static List<string> ReadDocumentList(CloudBlobClient blobClient, string inputContainerName, string inputBlobName)

{

var resultContainer = blobClient.GetContainerReference(inputContainerName);

var resultBlob = resultContainer.GetBlobReference(inputBlobName);

return GetColumnToDocumentMapping(resultBlob.DownloadText());

}This method converts the string into list of strings, one for each document:

static List<string> GetColumnToDocumentMapping(string documentList)

{

var rows = documentList.Split('\n');

List<string> columnToDocumentMap = new List<string>();

foreach (var row in rows)

{

var namenum = row.Split(new char[] { ',' });

columnToDocumentMap.Add(namenum[1].Substring(0,namenum[1].Length-1));

}

return columnToDocumentMap;

}Step 5: Compute Sparse Singular Value Decomposition

The dimensionality of the term-document matrix is huge. In a sense, each document can be considered as a vector in t-dimensional space, where t is the number of words --over 100,000-- in the corpus. Also, the term-document matrix is very sparse, each document uses only a small subset of all available words. We, therefore, can reduce the dimensionality of the problem by applying singular value decomposition.

This SVD operation has three major effects that facilitate subsequent analysis by:

- Reducing noise.

- Grouping together features that explain most of the variance between documents.

- Reducing the dimensionality of the feature space and raw memory footprint of the data.

static msnd.NumericDenseArray<double> ReduceTermDocumentMatrix(msnd.SparseMatrix<double> termDocumentMatrix, long svdComponents)

{

// Compute sparse SVD

var svd = Decompositions.Svd(termDocumentMatrix, svdComponents);

msnd.NumericDenseArray<double> sMatrix = new msnd.NumericDenseArray<double>(svd.S.Shape[0], svd.S.Shape[0]);

sMatrix.Fill(0);

for (long i = 0; i < svd.S.Shape[0]; i++)

{

sMatrix[i, i] = svd.S[i];

}

// Return (V*S). Note that after this step, rows correspond to documents

return Operations.MatrixMultiply(svd.V, sMatrix);

}The number of SVD components determines the reduced dimensionality. In this example we’ll specify a value of 100; you can experiment with different values. The output is a V*S matrix in reduced-dimensional subspace, which we will use for further analysis.

Step 6: Compute Cosine Similarities Between Documents

At this stage, each document is represented as a row vector in V*S matrix. Now, we’d like to find which documents are most similar to a specific document of interest.

To compare the documents we’ll use a cosine similarity measure. Let’s first add a class with a method that computes cosine similarity between a pair of documents. Note that the first vector is supplied as an argument to the constructor. The reason for this design is that we can then pass the cosine similarity method as an argument to the DataParallel.Apply(), to compute the similarity between a single document of interest and all other documents in parallel. We also mark the class as serializable so it can be passed to DataParallel.Apply().

// Method for computing cosine similarities between documents

[Serializable]

public class SimilarityComparer

{

msnl.NumericDenseArray<double> _documentVector1;

public SimilarityComparer(msnl.NumericDenseArray<double> documentVector1)

{

_documentVector1 = documentVector1;

}

public double CompareSimilarity(msnl.NumericDenseArray<double> documentVector2)

{

double sqnorm1 = ArrayMath.Sum(_documentVector1*_documentVector1);

double sqnorm2 = ArrayMath.Sum(documentVector2 * documentVector2);

return Operations.DotProduct(_documentVector1,documentVector2) / BasicMath.Sqrt(sqnorm1 * sqnorm2);

}

}Then, we’ll add a method that first looks up the row index of document of interest and gets the slice (row vector) corresponding to that document. It then instantiates SimilarityComparer using that vector, and calls DataParallel.Apply with SimilarityComparer.CompareSimilarity as the method to execute in parallel against rows of the V*S matrix. We sort the results by their similarity comparison score, select the top 10, and write the result to a string.

static string GetSimilarDocuments(List<string> columnToDocumentMap, msnd.NumericDenseArray<double> sv, string interestingCompany)

{

int interestingDocIndex = columnToDocumentMap.IndexOf(interestingCompany);

if (interestingDocIndex == -1) { throw new System.ArgumentException("Company not found!"); }

StringBuilder output = new StringBuilder();

// Compute cosine similarity

var documentVector1 = sv.GetSlice( interestingDocIndex,new Slice());

var similarityComparer = new SimilarityComparer(documentVector1.ToLocalArray());

var similarityResult = DataParallel.Apply(similarityComparer.CompareSimilarity, sv.DimensionSplit(0));

var sortedSimilarity = ArrayMath.ArgSort(similarityResult.Flatten(), false, 0);

long selectedDocuments = 10;

for (long i = 0; i < selectedDocuments; i++)

{

output.Append(columnToDocumentMap[(int)sortedSimilarity.Index[i]] + ", " + sortedSimilarity.Value[i] + "\n");

}

return output.ToString();

}Step 7: Write out Result

We write the results to a Windows Azure blob as a text file. The blob is made public, so you can view the results using URI http:\\<myaccount>.cloudapp.net\lsioutput\lisresult.

static void CreateBlobAndUploadText(string text, CloudBlobClient blobClient, string containerName, string blobName)

{

var resultContainer = blobClient.GetContainerReference(containerName);

resultContainer.CreateIfNotExist();

var resultBlob = resultContainer.GetBlobReference(blobName);

// Make result blob publicly readable so it can be accessed using URI

// https://<accountName>.blob.core.windows.net/lsiresult/lsiresult

var resultPermissions = new BlobContainerPermissions();

resultPermissions.PublicAccess = BlobContainerPublicAccessType.Blob;

resultContainer.SetPermissions(resultPermissions);

resultBlob.UploadText(text);

}Step 8: Put it All Together

Finally, we put together the pieces in a Main method of the application: we specify the input locations, load in data, reduce the term-doc matrix, select Microsoft Corp. as a company of interest, and find similar documents. We also added a Boolean “useAzure” to provide for the optional scenario where local input and output of data is needed for cases where you want to run the application on your workstation, not on Windows Azure.

Note that the strings accountName, accountKey need to be changed to hold the name and key of your own Windows Azure storage account. In case you intend to run on local workstation, ensure that localPath points to the folder that holds the input files.

public static void Main()

{

NumericsRuntime.Initialize();

// Update these to correct Azure storage account information and

// file locations on your system

string accountName = "myAccountName";

string accountKey = "myAccountKey";

string inputContainerName = "lsiinput";

string outputContainerName = "lsioutput";

string outputBlobName = "lsiresult";

// Update this path if running locally

string localPath = @"C:\Users\Public\Documents\";

// Not necessary to update file names

string secFileName = "SEC_10K_2010.csv";

string mapFileName = "Docmapping_2010.csv";

CloudBlobClient blobClient;

// Change to false to run locally

bool useAzure = true;

msnd.SparseMatrix<double> termDoc;

List<string> columnToDocumentMap;

// Read inputs

if (useAzure)

{

// Set up CloudBlobClient

blobClient = GetBlobClient(accountName, accountKey);

// Copy data from Cloud Numerics Lab public blob to your local account

CopySecData(blobClient, inputContainerName, secFileName, mapFileName);

columnToDocumentMap = ReadDocumentList(blobClient, inputContainerName, mapFileName);

var azureCsvReader = new msnd.IO.CsvSingleFromBlobReader<double>(accountName, accountKey, inputContainerName, secFileName, false);

termDoc = ReadTermDocMatrix(azureCsvReader);

}

else

{

blobClient = null;

var dataPath = localPath + secFileName;

string documentList = System.IO.File.ReadAllText(localPath + mapFileName);

columnToDocumentMap = GetColumnToDocumentMapping(documentList);

var localCsvReader = new msnd.IO.CsvFromDiskReader<double>(dataPath, 0, 0, false);

termDoc = ReadTermDocMatrix(localCsvReader);

}

// Perform analysis

long svdComponents = 100;

var sv = ReduceTermDocumentMatrix(termDoc, svdComponents);

string interestingCompany = "MICROSOFT_CORP_2010";

string output = GetSimilarDocuments(columnToDocumentMap, sv, interestingCompany);

if (useAzure)

{

CreateBlobAndUploadText(output, blobClient, outputContainerName, outputBlobName);

}

else

{

System.IO.File.WriteAllText(localPath + "lsiresult.csv", output);

}

Console.WriteLine("Done!");

NumericsRuntime.Shutdown();

}Once all pieces are together, you should be ready to build and deploy the application. In Visual Studio’s Solution Explorer, Set AppConfigure Project as the StartUp Project, build and run the application, and use the “Cloud Numerics” Deployment Utility to deploy to Windows Azure. After a successful run, the resulting cosine similarities should look like the following:

The algorithm has produced a list of technology companies as one would expect. As a sanity check, note that MICROSOFT_CORP_2010 has similarity score of 1 with itself.

This concludes the example; the full example code is available as a Visual Studio 2010 solution at Microsoft Codename “Cloud Numerics” download site.

I find it interesting that Codename “Cloud Numerics” for .NET developers survived SQL Azure Labs’ first cut, but consumer-oriented Codename “Social Analytics” didn’t.

See Stefan Groschupf (@SefanGroschupf, pictured below) casts a baleful eye on Hadoop and Hive in a Bursting the Big Data Bubble guest post to Andrew Brust’s (@andrewbrust) Big Data blog for ZDNet in the Windows Azure Blob, Drive, Table, Queue and Hadoop Services section above.

No significant articles today.

<Return to section navigation list>

Windows Azure Service Bus, Access Control Services, Caching, Active Directory and Workflow

• Maarten Balliauw (@maartenballiauw) described ASP.NET Web API OAuth2 delegation with Windows Azure Access Control Service in a 8/7/2012 post:

If you are familiar with OAuth2’s protocol flow, you know there’s a lot of things you should implement if you want to protect your ASP.NET Web API using OAuth2. To refresh your mind, here’s what’s required (at least):

OAuth authorization server

- Keep track of consuming applications

- Keep track of user consent (yes, I allow application X to act on my behalf)

- OAuth token expiration & refresh token handling

- Oh, and your API

That’s a lot to build there. Wouldn’t it be great to outsource part of that list to a third party? A little-known feature of the Windows Azure Access Control Service is that you can use it to keep track of applications, user consent and token expiration & refresh token handling. That leaves you with implementing:

- OAuth authorization server

- Your API

Let’s do it!

On a side note: I’m aware of the road-to-hell post released last week on OAuth2. I still think that whoever offers OAuth2 should be responsible enough to implement the protocol in a secure fashion. The protocol gives you the options to do so, and, as with regular web page logins, you as the implementer should think about security.

Building a simple API

I’ve been doing some demos lately using www.brewbuddy.net, a sample application (sources here) which enables hobby beer brewers to keep track of their recipes and current brews. There are a lot of applications out there that may benefit from being able to consume my recipes. I love the smell of a fresh API in the morning!

Here’s an API which would enable access to my BrewBuddy recipes:

1 [Authorize] 2 public class RecipesController 3 : ApiController 4 { 5 protected IRecipeService RecipeService { get; private set; } 6 7 public RecipesController(IRecipeService recipeService) 8 { 9 RecipeService = recipeService; 10 } 11 12 public IQueryable<RecipeViewModel> Get() 13 { 14 var recipes = RecipeService.GetRecipes(User.Identity.Name); 15 var model = AutoMapper.Mapper.Map(recipes, new List<RecipeViewModel>()); 16 17 return model.AsQueryable(); 18 } 19 }

Nothing special, right? We’re just querying our RecipeService for the current user’s recipes. And the current user should be logged in as specified using the [Authorize] attribute. Wait a minute! The current user?

I’ve built this API on the standard ASP.NET Web API features such as the [Authorize] attribute and the expectation that the User.Identity.Name property is populated. The reason for that is simple: my API requires a user and should not care how that user is populated. If someone wants to consume my API by authenticating over Forms authentication, fine by me. If someone configures IIS to use Windows authentication or even hacks in basic authentication, fine by me. My API shouldn’t care about that.

OAuth2 is a different state of mind

OAuth2 adds a layer of complexity. Mental complexity that is. Your API consumer is not your end user. Your API consumer is acting on behalf of your end user. That’s a huge difference! Here’s what really happens:

The end user loads a consuming application (a mobile app or a web app that doesn’t really matter). That application requests a token from an authorization server trusted by your application. The user has to login, and usually accept the fact that the app can perform actions on the user’s behalf (think of Twitter’s “Allow/Deny” screen). If successful, the authorization server returns a code to the app which the app can then exchange for an access token containing the user’s username and potentially other claims.

Now remember what we started this post with? We want to get rid of part of the OAuth2 implementation. We don’t want to be bothered by too much of this. Let’s try to accomplish the following:

Let’s introduce you to…

WindowsAzure.Acs.Oauth2

“That looks like an assembly name. Heck, even like a NuGet package identifier!” You’re right about that. I’ve done a lot of the integration work for you (sources / NuGet package).

WindowsAzure.Acs.Oauth2 is currently in alpha status, so you’ll will have to register this package in your ASP.NET MVC Web API project using the package manager console, issuing the following command:

Install-Package WindowsAzure.Acs.Oauth2 -IncludePrereleaseThis command will bring some dependencies to your project and installs the following source files:

App_Start/AppStart_OAuth2API.cs - Makes sure that OAuth2-signed SWT tokens are transformed into a ClaimsIdentity for use in your API. Remember where I used User.Identity.Name in my API? Populating that is performed by this guy.

Controllers/AuthorizeController.cs - A standard authorization server implementation which is configured by the Web.config settings. You can override certain methods here, for example if you want to show additional application information on the consent page.

Views/Shared/_AuthorizationServer.cshtml - A default consent page. This can be customized at will.

Next to these files, the following entries are added to your Web.config file:

1 <?xml version="1.0" encoding="utf-8" ?> 2 <configuration> 3 <appSettings> 4 <add key="WindowsAzure.OAuth.SwtSigningKey" value="[your 256-bit symmetric key configured in the ACS]" /> 5 <add key="WindowsAzure.OAuth.RelyingPartyName" value="[your relying party name configured in the ACS]" /> 6 <add key="WindowsAzure.OAuth.RelyingPartyRealm" value="[your relying party realm configured in the ACS]" /> 7 <add key="WindowsAzure.OAuth.ServiceNamespace" value="[your ACS service namespace]" /> 8 <add key="WindowsAzure.OAuth.ServiceNamespaceManagementUserName" value="ManagementClient" /> 9 <add key="WindowsAzure.OAuth.ServiceNamespaceManagementUserKey" value="[your ACS service management key]" /> 10 </appSettings> 11 </configuration>

These settings should be configured based on the Windows Azure Access Control settings. Details on this can be found on the Github page.

Consuming the API

After populating Windows Azure Access Control Service with a client_id and client_secret for my consuming app (which you can do using the excellent FluentACS package or manually, as shown in the following screenshot), you’re good to go.

The WindowsAzure.Acs.Oauth2 package adds additional functionality to your application: it provides your ASP.NET Web API with the current user’s details (after a successful OAuth2 authorization flow took place) and it adds a controller and view to your app which provides a simple consent page (that can be customized):

After granting access, WindowsAzure.Acs.Oauth2 will store the choice of the user in Windows Azure ACS and redirect you back to the application. From there on, the application can ask Windows Azure ACS for an access token and refresh the access token once it expires. Without your application having to interfere with that process ever again. WindowsAzure.Acs.Oauth2 transforms the incoming OAuth2 token into a ClaimsIdentity which your API can use to determine which user is accessing your API. Focus on your API, not on OAuth.

• Haishi Bai (@haishibai2010) posted the Zen of implementing claim-based authentication with Windows Azure ACS on 8/10/2012:

I’ve been working with digital identities on-and-off for the past 4 to 5 years, and I’ve witnessed amazing efforts made by Microsoft (and others) to make claim-based architecture more approachable by the developer communities. I’m thrilled to see how Microsoft, especially the WIF team, strives to make security easier and easier over the years for .Net developers. On the other hand, because of the inherit complexity of security protocols and topologies, implementing claim-based authentication requires careful execution, regardless of the tooling supports you get. In this pot I’ll list several “zen shorts” that are distilled from my experiences on such projects, and I hope they can provide some useful guidance that leads to successful implementations.

It is better to start weaving your fishing nets than merely coveting fish at the water – Bangu

Very few people are security experts. Actually this is the fact that has fueled claim-based architecture. Instead of attempting to handle the nitty-gritty security details, you can focus on your application logics while utilizing tens of years of collective experiences from field experts to protect your applications and services. Over the years there have been many standards have been introduced and widely adopted, such as SAML, ws-Trust, ws-Federation, OAuth, OpenID – just to name a few. Do you need to download the specifications and read on those standards? No. However, it’s very important to gain a high-level understanding of these standards. Especially, you need to know what roles they play and how they are involved in different processes.

Clear mind is like the full moon in the sky – Seung Sahn

Claim-based authentication involves a number of participants. At minimum we have the user, the client, the relying party, and the identity provider. And as your circle of trust expands, more and more players are looped in. If you don’t keep a clear mind of the overall topology and the relationships among various parties, it’s very easy to get confused. What makes claim-based authentication implementation challenging is that EVERYTHING has to be configured correctly. Even if you’ve got 99% correct, nothing works until you figure out the remaining 1%. Without a clear understanding of responsibilities of each participant, it gets harder and harder to identify places you need to work on. Making all the parts to work together will appear to be as daunting as trying to align all the starts in the sky. On the other hand, if you remain a clear mind of how things are supposed to work, fitting different pieces together is as easy as resolving a 4-piece jigsaw puzzle. The pieces will fit together naturally – you are certain the fit is correct when you make one, and you can easily spot what went wrong.

A journey of a thousand miles begins with a single step – Laozi

Don’t try everything at the same time. Instead, start simple and build on prior successes. What I often like to do is to start with no security – make sure your web application and service works so that you are not thrown off by 500 errors. Then, implement security piece-by-piece. For example, start with simple trust relationship with ACS or a STS with HTTP, without encryption, certificate validation, request validation, or any custom handlers. Get that working first, and then add other pieces one by one, and verify each step as you go along. This strategy is crucial to a smooth implementation. Otherwise there will be too many moving pieces at hand and it’s extremely hard to get them to fit together in on shot.

What I just mentioned may sound really simple – that’s good! Keep these simple points in mind when you start your journey with claim-based authentication. I’m sure you’ll find them useful. At last, here are some specific tips and reminders:

- Trusts are mutual. A trust is a two-way relationship. It can be established only when two parties trust each other. For example, for a Relying Party to work with an Identity Provider, the Relying Party needs to trust the Identity Provider as a trustworthy authority, and the Identity Party needs to trust the Relying Party as a legit audience.

- Every detail matters. It should be quite obvious that “http” is not “https” and “microsoft” is not “microsoft:8080”. And in many cases “http://somehost” is not the same as “http://somehost/” either. You may be surprised that a great portion of problems I’ve seen over the years are caused by simple mismatches among addresses and identifiers.

- Certificates play key roles in establishing trust relationships, providing identifications, signing tokens, and encrypting/decrypting messages. Very often you’ll need to work with multiple certificates. Knowing their purposes and rightful places is very important to avoid confusions.

- Utilize federation metadata whenever possible. And keep your own metadata accurate. Many utilities rely on federation metadata and they can greatly simply many configuration steps. Utilize metadata whenever possible, and keep your metadata up-to-date to make interoperating with your partners easier.

• Nathan Totter (@ntotter) and Nick Harris (@cloudnick) produced CloudCover Episode 86 - Windows Azure Active Directory with Vittorio Bertocci on 8/10/2012:

Join Nate and Nick each week as they cover Windows Azure. You can follow and interact with the show at @CloudCoverShow.

In this episode Nick and Nate are joined by Vittorio Bertocci (@vibronet) who tells us all about Windows Azure Active Directory, demonstrates the Graph API and the new Windows Azure Authentication Library.

For additional details please see:

- Windows Azure AD announcement

- Windows Azure AD deep dive

- Windows Azure Authentication Library announcement

- Windows Azure Authentication Library deep dive

In the News:

The //BUILD/ conference sold out a few minutes after registration opened, I hear.

Haishi Bai (@haishibai2010) described Windows Azure Web Site (targeting .Net Framework 4.0) and ACS on 8/10/2012:

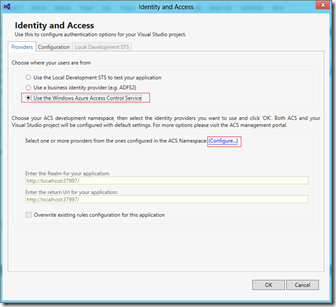

ACS is a natural choice for securing not only Windows Azure Cloud Services but also Windows Azure Web Sites. In both cases, WIF enables you to quickly configure your web applications to work with ACS, which in turn allows you to federate with many identity providers such as Windows Live and Google. This post provides a step-by-step tutorial of how to create a simple web site and to enable ACS for authentication. NOTE: This post is written in early August 2012, when Windows Azure Web Site hosting environment doesn’t support .Net Framework 4.5, while the new Identity and Access Tool I’m using is designed for 4.5 object models. Things are supposed to be much easier once 4.5 is enabled. Especially, you should be able to skip Update web.config file (.Net Framework 4.0) section altogether. For the sake of simplicity, some corners are cut in this tutorial, but the overall process should stand valid.

- Visual Studio 2012.

- Identity and Access Tool extension (you can download from msdn).

- Windows Azure subscription with Web Site enabled.

- You know how ACS works, and you’ve created your ACS namespace.

Create a simple ASP.NET MVC 4 Web Application that uses ACS for authentication

First, let’s create a simple ASP.NET web application and enable ACS authentication.

- Launch Visual Studio 2012 as an administrator.

- Create a new ASP.NET MVC 4 Web application.