Windows Azure and Cloud Computing Posts for 7/8/2013+

Top news this week:

- Jeff Barr described an EC2 Dedicated Instance Price Reduction in a 7/10/2013 post to his Amazon Web Services blog. See the Other Cloud Computing Platforms and Services section below for details.

- Steven Martin announced a new Premium offer for Windows Azure SQL Database in a 7/8/2013 post to the Windows Azure Team blog. See the Windows Azure Access Control, Active Directory, and Identity section below for details.

- The Microsoft Business Intelligence Team reported Power BI for Office 365 Revealed at Microsoft Worldwide Partner Conference (WPC). See the Windows Azure Marketplace DataMarket, Power BI, Big Data and OData section below for details.

- Steven Martin reported that new application access enhancements for Windows Azure Active Directory are now available in Preview. See the Windows Azure Access Control, Active Directory, and Identity section below for details.

A compendium of Windows Azure, Service Bus, BizTalk Services, Access Control, Connect, SQL Azure Database, and other cloud-computing articles.

•• Updated 7/13/2013 with new articles marked ••.

‡ Updated 7/12/2013 with new articles marked ‡.

• Updated 7/11/2013 with new articles marked •.

Note: This post is updated weekly or more frequently, depending on the availability of new articles in the following sections:

- Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

- Windows Azure SQL Database, Federations and Reporting, Mobile Services

- Windows Azure Marketplace DataMarket, Power BI, Big Data and OData

- Windows Azure Service Bus, BizTalk Services and Workflow

- Windows Azure Access Control, Active Directory, and Identity

- Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

- Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses

- Windows Azure Infrastructure and DevOps

- Windows Azure Pack, Hosting, Hyper-V and Private/Hybrid Clouds

- Visual Studio LightSwitch and Entity Framework v4+

- Cloud Security, Compliance and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

Windows Azure Blob, Drive, Table, Queue, HDInsight and Media Services

•• Gaurav Mantri (@gmantri) explained Uploading Large File By Splitting Into Blocks In Windows Azure Blob Storage Using Windows Azure SDK For PHP in a 7/13/2013 post:

In this blog post, we will see how you can upload a large blob to blob storage using the Windows Azure SDK for PHP. I must state that I don’t know anything about PHP and did this exercise to help somebody out on StackOverflow. What helped me in the process was the excellent documentation on PHP’s website and my knowledge of how the Windows Azure Blob Storage Service REST API works.

What I realized during this process is that it’s fun to get out of one’s comfort zone (.Net for me) once in a while. It’s extremely rewarding when you accomplish something.

Since I’m infamous for writing really long blog posts, if you’re only interested in the final code, either scroll down to the bottom of this post or head over to StackOverflow. Otherwise, please read on.

Since we’re uploading really large files, the procedure is to split the file into chunks (blocks), upload these chunks, and then commit them.
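To make the split step concrete, here is a minimal sketch in JavaScript (Node.js) rather than PHP: it cuts an in-memory Buffer into fixed-size blocks and rejects input that would need more than the 50,000 blocks a blob may contain. The name `splitIntoBlocks` is hypothetical, and holding the whole file in memory is a simplification; the PHP code in this post reads the file chunk by chunk instead.

```javascript
// Hypothetical helper: split a Buffer into fixed-size blocks for a block blob upload.
const MAX_BLOCKS_PER_BLOB = 50000;

function splitIntoBlocks(data, blockSize) {
  if (!Buffer.isBuffer(data)) throw new TypeError("data must be a Buffer");
  if (!Number.isInteger(blockSize) || blockSize <= 0) {
    throw new RangeError("blockSize must be a positive integer");
  }
  const blockCount = Math.ceil(data.length / blockSize);
  if (blockCount > MAX_BLOCKS_PER_BLOB) {
    throw new RangeError(`needs ${blockCount} blocks; the limit is ${MAX_BLOCKS_PER_BLOB}`);
  }
  const blocks = [];
  for (let offset = 0; offset < data.length; offset += blockSize) {
    // subarray() returns a view over the same memory, so no bytes are copied here.
    blocks.push(data.subarray(offset, Math.min(offset + blockSize, data.length)));
  }
  return blocks;
}

// A 2.5 MB buffer split into 1 MB blocks gives two full blocks and one half block.
const blocks = splitIntoBlocks(Buffer.alloc(2.5 * 1024 * 1024), 1024 * 1024);
console.log(blocks.map((b) => b.length)); // [ 1048576, 1048576, 524288 ]
```

With 1 MB blocks, the 50,000-block limit caps a blob uploaded this way at 50,000 MB; a larger block size raises that ceiling.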

Getting Started

I’m assuming you have installed the Windows Azure SDK for PHP. If not, you can download it from here: http://www.windowsazure.com/en-us/downloads/?sdk=php. This SDK depends on some external packages. For this blog post, the only thing we need is the HTTP_Request2 PEAR package, which you can download from here: http://pear.php.net/package/HTTP_Request2.

Add Proper Classes

We just have to ensure that we have referenced all the classes we need in our code:

```php
<?php
require_once 'WindowsAzure/WindowsAzure.php';

use WindowsAzure\Common\ServicesBuilder;
use WindowsAzure\Common\ServiceException;
use WindowsAzure\Blob\Models\Block;
use WindowsAzure\Blob\Models\BlobBlockType;
?>
```

Get Azure Things in place

This means creating an instance of the “BlobRestProxy” class in our code. For the purpose of this blog post, I’m uploading the file to the storage emulator.

```php
$connectionString = "UseDevelopmentStorage=true";
$instance = ServicesBuilder::getInstance();
$blobRestProxy = $instance->createBlobService($connectionString);
$containerName = "[mycontainer]";
$blobName = "[myblobname]";
```

Here are the operations we need to perform:

Read file in chunks

To read the file in chunks, we first define the chunk size:

```php
define('CHUNK_SIZE', 1024 * 1024); // Block size = 1 MB
```

Then we get a file handle by opening the file:

```php
// The explicit "b" flag opens the file in binary mode on every platform,
// so no newline translation can alter the bytes we upload.
$handler = fopen("[full file path]", "rb");
```

and now we read the file in chunks:

```php
while (!feof($handler)) {
    $data = fread($handler, CHUNK_SIZE);
    // Each $data chunk becomes one block.
}
fclose($handler);
```

Prepare blocks

Before we prepare the blocks, there are a few things I want to mention about them:

- A blob can be made up of at most fifty thousand (50,000) blocks.

- Each block must be assigned a unique id (block id).

- All block ids must have the same length. I would encourage you to read my previous blog post for more details regarding this.

- When sending to Windows Azure, each block id must be Base64 encoded.
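A minimal JavaScript sketch of an id scheme that satisfies these rules, assuming the zero-padded counter used in this post (the helper names `makeBlockId` and `blockIdsAreValid` are hypothetical): because every raw id has exactly the same number of characters, every Base64-encoded id has the same length too.

```javascript
// Hypothetical helpers: fixed-width block ids, Base64 encoded, plus a rule check.
function makeBlockId(counter, width = 6) {
  const raw = String(counter).padStart(width, "0");
  if (raw.length !== width) {
    // More digits than `width` would break the "same length" rule.
    throw new RangeError(`counter ${counter} does not fit in ${width} digits`);
  }
  return Buffer.from(raw, "utf8").toString("base64");
}

function blockIdsAreValid(ids) {
  const unique = new Set(ids).size === ids.length;
  const sameLength = ids.every((id) => id.length === ids[0].length);
  return unique && sameLength && ids.length <= 50000;
}

console.log(makeBlockId(1)); // MDAwMDAx (the Base64 form of "000001")
```

Six digits comfortably cover the 50,000-block limit, and each six-byte id always encodes to eight Base64 characters.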

Based on these rules, we’re going to assign each block an incrementing integer value and, to keep the block id length the same, pad it with zeros so that each block id is 6 characters long.

```php
$counter = 1;
$blockId = str_pad($counter, 6, "0", STR_PAD_LEFT); // e.g. "000001"
```

Then we create an instance of the “Block” class, set its block id, and set its type to “Uncommitted”.

```php
$block = new Block();
$block->setBlockId(base64_encode($blockId));
$block->setType("Uncommitted");
```

Then we add this block to an array. This array will be used in the final step for committing the blocks (chunks).

```php
$blockIds = array();
array_push($blockIds, $block);
```

Upload Blocks

Now that we have the chunk content ready, we just need to upload it. We will use the “createBlobBlock” function of the “BlobRestProxy” class to upload the block.

```php
$blobRestProxy->createBlobBlock($containerName, $blobName, base64_encode($blockId), $data);
```

We need to do this for each and every block we wish to upload.
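createBlobBlock ultimately maps to the Blob service’s Put Block REST operation, which identifies the block through a `blockid` query parameter. A hedged JavaScript sketch of assembling that URL follows; its main point is that Base64 ids may contain `+`, `/` and `=` and therefore need percent-encoding. The endpoint in the example is the local storage emulator’s default, the helper name `putBlockUrl` is hypothetical, and the authentication headers and request body are omitted.

```javascript
// Sketch: URL of a Put Block request (authentication headers and request body omitted).
function putBlockUrl(blobEndpoint, container, blobName, base64BlockId) {
  const base = blobEndpoint.replace(/\/+$/, "");
  // Encode each path segment but keep "/" so virtual-directory blob names still work.
  const blobPath = blobName.split("/").map(encodeURIComponent).join("/");
  // Base64 can contain "+", "/" and "=", which must be percent-encoded in a query string.
  return `${base}/${encodeURIComponent(container)}/${blobPath}?comp=block&blockid=${encodeURIComponent(base64BlockId)}`;
}

console.log(putBlockUrl("http://127.0.0.1:10000/devstoreaccount1", "mycontainer", "myblob", "ab+/cd=="));
// http://127.0.0.1:10000/devstoreaccount1/mycontainer/myblob?comp=block&blockid=ab%2B%2Fcd%3D%3D
```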

Committing Blocks

This is the last step. Once all the blocks have been uploaded, we need to tell Windows Azure Blob Storage to create a blob from all the blocks we’ve uploaded so far. We will use the “commitBlobBlocks” function, again from the “BlobRestProxy” class, to commit the blocks.

```php
$blobRestProxy->commitBlobBlocks($containerName, $blobName, $blockIds);
```

That’s it! You should now be able to see the blob in your blob storage. It’s that easy.
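For reference, commitBlobBlocks corresponds to the Put Block List REST operation, whose request body is an XML `<BlockList>` naming each block as `Committed`, `Uncommitted` or `Latest`. The JavaScript sketch below builds such a body from Base64 ids; the helper name `blockListXml` is hypothetical and the request itself is not sent.

```javascript
// Sketch: XML body of a Put Block List request, built from Base64 block ids.
const BASE64 = /^[A-Za-z0-9+\/]*={0,2}$/;

function blockListXml(blockIds, listType = "Uncommitted") {
  if (!["Committed", "Uncommitted", "Latest"].includes(listType)) {
    throw new RangeError(`unknown block list type: ${listType}`);
  }
  const entries = blockIds.map((id) => {
    // Validated Base64 text contains no XML special characters, so no escaping is needed.
    if (!BASE64.test(id)) throw new Error(`not a Base64 block id: ${id}`);
    return `  <${listType}>${id}</${listType}>`;
  });
  // The order of the entries is the order in which the blocks appear in the final blob.
  return ['<?xml version="1.0" encoding="utf-8"?>', "<BlockList>", ...entries, "</BlockList>"].join("\n");
}

console.log(blockListXml(["MDAwMDAx", "MDAwMDAy"]));
```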

Complete Code

Here’s the complete code:

```php
<?php
require_once 'WindowsAzure/WindowsAzure.php';

use WindowsAzure\Common\ServicesBuilder;
use WindowsAzure\Common\ServiceException;
use WindowsAzure\Blob\Models\Block;
use WindowsAzure\Blob\Models\BlobBlockType;

define('CHUNK_SIZE', 1024 * 1024); // Block size = 1 MB

try {
    $connectionString = "UseDevelopmentStorage=true";
    $instance = ServicesBuilder::getInstance();
    $blobRestProxy = $instance->createBlobService($connectionString);
    $containerName = "[mycontainer]";
    $blobName = "[myblobname]";

    // Open in binary mode so the bytes are uploaded unchanged.
    $handler = fopen("[full file path]", "rb");
    if ($handler === false) {
        throw new Exception("Unable to open the file for reading.");
    }
    $counter = 1;
    $blockIds = array();

    while (!feof($handler)) {
        $data = fread($handler, CHUNK_SIZE);
        // feof() only reports end-of-file after a read reaches it, so the last read can be
        // empty (e.g. when the file size is an exact multiple of CHUNK_SIZE); skip it.
        if ($data === false || strlen($data) === 0) {
            break;
        }

        // Same-length, zero-padded ids ("000001", "000002", ...), Base64 encoded.
        $blockId = str_pad($counter, 6, "0", STR_PAD_LEFT);
        $block = new Block();
        $block->setBlockId(base64_encode($blockId));
        $block->setType("Uncommitted");
        array_push($blockIds, $block);

        echo "Read " . strlen($data) . " bytes of data from file\n";
        echo "Uploading block #" . $blockId . " into blob storage. Please wait.\n";
        $blobRestProxy->createBlobBlock($containerName, $blobName, base64_encode($blockId), $data);
        echo "Uploaded block " . $blockId . " into blob storage.\n";
        echo "-----------------------------------------\n";

        $counter = $counter + 1;
    }
    fclose($handler);

    echo "Now committing block list. Please wait.\n";
    $blobRestProxy->commitBlobBlocks($containerName, $blobName, $blockIds);
    echo "Blob created successfully.\n";
} catch (Exception $e) {
    // Handle exception based on error codes and messages.
    // Error codes and messages are here:
    // http://msdn.microsoft.com/en-us/library/windowsazure/dd179439.aspx
    $code = $e->getCode();
    $error_message = $e->getMessage();
    echo $code . ": " . $error_message . "<br />";
}
?>
```

Summary

As you saw, it’s insanely easy to upload a large file to blob storage using the PHP SDK. I didn’t (and still don’t) know anything about PHP, but I was able to put this code together in a matter of hours. If you’re a PHP developer, I think you should be able to do so in minutes. I hope you’ve found this information useful. As always, if you find any issues with the post, please let me know and I’ll fix them ASAP.

‡ Joe Giardino of the Windows Azure Storage Team posted Introducing Storage Client Library 2.1 RC for .NET and Windows Phone 8 on 7/11/2013:

We are pleased to announce the public availability of the 2.1 Release Candidate (RC) build of the storage client library for .NET and Windows Phone 8. The 2.1 release includes expanded feature support, which this blog post details.

Why RC?

We have spent significant effort in releasing the storage clients on a more frequent cadence as well as becoming more responsive to client feedback. As we continue that effort, we wanted to provide an RC of our next release, so that you can provide us feedback that we might be able to address prior to the “official” release. Getting your feedback is the goal of this release candidate, so please let us know what you think.

What’s New?

This release includes a number of new features, many of which have come directly from client feedback (so please keep it coming), which are detailed below.

Async Task Methods

Each public API now exposes an Async method that returns a task for a given operation. Additionally, these methods support pre-emptive cancellation via an overload which accepts a CancellationToken. If you are running under .NET 4.5, or using the Async Targeting Pack for .NET 4.0, you can easily leverage the async / await pattern when writing your applications against storage.

Table IQueryable

In 2.1 we are adding IQueryable support for the Table Service layer on desktop and phone. This allows users to construct and execute queries via LINQ, similar to WCF Data Services; however, this implementation has been specifically optimized for Windows Azure Tables and NoSQL concepts. The snippet below illustrates constructing a query via the new IQueryable implementation:

```csharp
var query = from ent in currentTable.CreateQuery<CustomerEntity>()
            where ent.PartitionKey == "users" && ent.RowKey == "joe"
            select ent;
```

The IQueryable implementation transparently handles continuations and supports adding RequestOptions, OperationContext, and client-side EntityResolvers directly into the expression tree. To begin using this, add a using directive for the Microsoft.WindowsAzure.Storage.Table.Queryable namespace and construct a query via the CloudTable.CreateQuery<T>() method. Additionally, since this makes use of the existing infrastructure, optimizations such as IBufferManager, Compiled Serializers, and Logging are fully supported.
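Conceptually, "transparently handles continuations" means the caller never sees paging: the query keeps fetching segments while the service returns a continuation token. The JavaScript sketch below illustrates that loop with a generator; it is not the .NET library’s implementation, and `fetchPage` with its `{ entities, continuation }` result is a hypothetical stand-in for one Table service request.

```javascript
// Sketch: iterate over every entity of a paged query by following continuation tokens.
function* allEntities(fetchPage) {
  let continuation = null;
  do {
    const page = fetchPage(continuation); // hypothetical: returns { entities, continuation }
    yield* page.entities;
    continuation = page.continuation;
  } while (continuation != null);
}

// A fake service that returns at most two entities per page.
const data = ["a", "b", "c", "d", "e"];
const fakeFetch = (token) => {
  const start = token ?? 0;
  const end = Math.min(start + 2, data.length);
  return { entities: data.slice(start, end), continuation: end < data.length ? end : null };
};

console.log([...allEntities(fakeFetch)]); // [ 'a', 'b', 'c', 'd', 'e' ]
```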

Buffer Pooling

For high scale applications, Buffer Pooling is a great strategy to allow clients to re-use existing buffers across many operations. In a managed environment such as .NET, this can dramatically reduce the number of cycles spent allocating and subsequently garbage collecting semi-long lived buffers.

To address this scenario, each Service Client now exposes a BufferManager property of type IBufferManager. This property allows clients to leverage a given buffer pool with any objects associated with that service client instance. For example, all CloudTable objects created via CloudTableClient.GetTableReference() would make use of the associated service client’s BufferManager. The IBufferManager is patterned after the BufferManager in System.ServiceModel.dll to allow desktop clients to easily leverage an existing implementation provided by the framework. (Clients running on other platforms, such as WinRT or Windows Phone, may implement a pool against the IBufferManager interface.)
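The take/return pattern behind buffer pooling can be sketched in a few lines. The JavaScript class below is a hypothetical illustration, not IBufferManager itself (in .NET, System.ServiceModel’s BufferManager exposes the equivalent TakeBuffer and ReturnBuffer methods): buffers handed back to the pool are reused instead of being left for the garbage collector.

```javascript
// Hypothetical fixed-size buffer pool illustrating the take/return pattern.
class BufferPool {
  constructor(bufferSize, maxPooled) {
    this.bufferSize = bufferSize;
    this.maxPooled = maxPooled;
    this.free = [];
  }

  take() {
    // Reuse a pooled buffer if one is available; otherwise allocate a new one.
    // Contents are not cleared, so callers must track how many bytes they actually wrote.
    return this.free.pop() ?? Buffer.allocUnsafe(this.bufferSize);
  }

  release(buffer) {
    // Keep only buffers of the pooled size, and cap the pool so it cannot grow unbounded.
    if (buffer.length === this.bufferSize && this.free.length < this.maxPooled) {
      this.free.push(buffer);
    }
  }
}

const pool = new BufferPool(64 * 1024, 16);
const first = pool.take();
pool.release(first);
console.log(pool.take() === first); // true: the same buffer was handed out again
```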

Multi-Buffer Memory Stream

During the course of our performance investigations, we uncovered a few performance issues with the MemoryStream class provided in the BCL (specifically regarding async operations, dynamic length behavior, and single-byte operations). To address these issues, we have implemented a new Multi-Buffer memory stream that provides consistent performance even when the length of the data is unknown. This class leverages the IBufferManager, if one is provided by the client, to use the buffer pool when allocating additional buffers. As a result, any operation on any service that potentially buffers data (Blob Streams, Table Operations, etc.) now consumes less CPU and optimally uses a shared memory pool.
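One way to picture a multi-buffer stream is as a list of fixed-size chunks rather than a single contiguous array, so appending data of unknown total length never requires reallocating and copying what has already been written. The JavaScript sketch below is a hypothetical, append-only illustration of that idea, not the library’s class; its optional `allocate` callback stands in for a buffer manager.

```javascript
// Hypothetical append-only "multi-buffer" store: bytes live in a list of fixed-size
// chunks, so growing it never reallocates or copies bytes that were already written.
class MultiBufferStream {
  constructor(chunkSize = 64 * 1024, allocate = (n) => Buffer.alloc(n)) {
    this.chunkSize = chunkSize;
    this.allocate = allocate; // could hand out buffers from a pool instead
    this.chunks = [];
    this.length = 0;
  }

  write(data) {
    let offset = 0;
    while (offset < data.length) {
      if (this.length === this.chunks.length * this.chunkSize) {
        this.chunks.push(this.allocate(this.chunkSize)); // no chunk yet, or the last one is full
      }
      const chunk = this.chunks[this.chunks.length - 1];
      const pos = this.length - (this.chunks.length - 1) * this.chunkSize;
      const n = Math.min(this.chunkSize - pos, data.length - offset);
      data.copy(chunk, pos, offset, offset + n);
      offset += n;
      this.length += n;
    }
  }

  toBuffer() {
    // Concatenate the chunks, truncated to the bytes actually written.
    return Buffer.concat(this.chunks, this.length);
  }
}

const stream = new MultiBufferStream(4);
stream.write(Buffer.from("hello, "));
stream.write(Buffer.from("world"));
console.log(stream.toBuffer().toString()); // hello, world
```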

.NET MD5 is now default

Our performance testing highlighted a slight performance degradation when using the FISMA-compliant native MD5 implementation compared to the built-in .NET implementation. As such, the .NET MD5 is now used by default in this release; any clients requiring FISMA compliance can re-enable the native implementation as shown below:

```csharp
CloudStorageAccount.UseV1MD5 = false;
```

Client Tracing

The 2.1 release implements .NET tracing, allowing users to log information about request execution and REST requests (see below for a table of what information is logged). Additionally, Windows Azure Diagnostics provides a trace listener that can redirect client trace messages to the WADLogsTable if users wish to persist these traces to the cloud.

To enable tracing in .NET, you must add a trace source for the storage client to the app.config file and set the verbosity:

```xml
<system.diagnostics>
  <sources>
    <source name="Microsoft.WindowsAzure.Storage">
      <listeners>
        <add name="myListener"/>
      </listeners>
    </source>
  </sources>
  <switches>
    <add name="Microsoft.WindowsAzure.Storage" value="Verbose" />
  </switches>
  …
```

The application is now set to log all trace messages created by the storage client up to the Verbose level. However, if a client wishes to enable logging only for specific clients or requests, it can further configure the default logging level in the application by setting OperationContext.DefaultLogLevel and then opt in any specific requests via the OperationContext object:

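```csharp
// Disable default logging
OperationContext.DefaultLogLevel = LogLevel.Off;

// Configure a context to track my upload and set the logging level to verbose
OperationContext myContext = new OperationContext() { LogLevel = LogLevel.Verbose };

// The OperationContext is passed after the AccessCondition and BlobRequestOptions arguments
blobRef.UploadFromStream(stream, null /* accessCondition */, null /* options */, myContext);
```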

New Blob APIs

In 2.1 we have added Blob Text, File, and Byte Array APIs based on feedback from clients. Additionally, Blob Streams can now be opened, flushed, and committed asynchronously via new Blob Stream APIs.

New Range Based Overloads

In 2.1, the Blob upload APIs include an overload that allows clients to upload only a given range of the byte array or stream to the blob. This feature lets clients avoid pre-buffering data before uploading it to the storage service. Additionally, there are new download range APIs for both streams and byte arrays that allow efficient, fault-tolerant range downloads without the need to buffer any data on the client side.

IgnorePropertyAttribute

When persisting POCO objects to Windows Azure Tables, clients may in some cases wish to omit certain client-only properties. In this release we are introducing the IgnorePropertyAttribute to give clients an easy way to ignore a given property during serialization and de-serialization of an entity. The following snippet illustrates how to ignore the FirstName property of an entity via the IgnorePropertyAttribute:

```csharp
public class Customer : TableEntity
{
    [IgnoreProperty]
    public string FirstName { get; set; }
}
```

Compiled Serializers

When working with POCO types, previous releases of the SDK relied on reflection to discover all applicable properties for serialization / de-serialization at runtime. This process was both repetitive and computationally expensive. In 2.1 we are introducing support for Compiled Expressions, which allow the client to dynamically generate a LINQ expression at runtime for a given type. The client does the reflection once and then compiles a lambda at runtime that handles all future reads and writes of a given entity type. In performance micro-benchmarks, this approach is roughly 40x faster computationally than the reflection-based approach.

All compiled expressions for read and write are held in static concurrent dictionaries on TableEntity. If you wish to disable this feature, simply set TableEntity.DisableCompiledSerializers = true;
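The "reflect once, then reuse a generated function" idea can be sketched in JavaScript with a per-class cache and `new Function`, which plays roughly the role that compiled LINQ expressions play in .NET. This is a hypothetical analogy, not the storage library’s code; it assumes entity classes have a no-argument constructor that initializes every persisted property.

```javascript
// Hypothetical sketch: the first call for a class discovers its data properties and
// generates a specialized writer with new Function(); later calls reuse the cached writer.
const compiledWriters = new Map();

function getCompiledWriter(EntityClass) {
  let writer = compiledWriters.get(EntityClass);
  if (writer === undefined) {
    // "Reflection" step: inspect a sample instance (assumes a no-argument constructor).
    const sample = new EntityClass();
    const props = Object.keys(sample).filter((key) => typeof sample[key] !== "function");
    // "Compile" step: emit straight-line code such as return { "RowKey": e["RowKey"] };
    const body = `return { ${props.map((p) => `${JSON.stringify(p)}: e[${JSON.stringify(p)}]`).join(", ")} };`;
    writer = new Function("e", body);
    compiledWriters.set(EntityClass, writer);
  }
  return writer;
}

class Customer {
  constructor() {
    this.PartitionKey = "";
    this.RowKey = "";
    this.FirstName = "";
  }
}

const joe = Object.assign(new Customer(), { PartitionKey: "users", RowKey: "joe", FirstName: "Joe" });
console.log(getCompiledWriter(Customer)(joe)); // { PartitionKey: 'users', RowKey: 'joe', FirstName: 'Joe' }
```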

Easily Serialize 3rd Party Objects

In some cases clients wish to serialize objects whose source they do not control, for example framework objects or objects from 3rd party libraries. In previous releases, clients were required to write custom serialization logic for each type they wished to serialize. In the 2.1 release we are exposing the core serialization and de-serialization logic for any CLR type via the static TableEntity.[Read|Write]UserObject methods. This allows clients to easily persist and read back entity objects for types that do not derive from TableEntity or implement the ITableEntity interface. This pattern can also be especially useful when exposing DTO types via a service, as the client will no longer be required to maintain two entity types and marshal between them.

Numerous Performance Improvements

As part of our ongoing focus on performance we have included numerous performance improvements across the APIs including parallel blob upload, table service layer, blob write streams, and more. We will provide more detailed analysis of the performance improvements in an upcoming blog post.

Windows Phone

The Windows Phone client is based on the same source code as the desktop client, however there are 2 key differences due to platform limitations. The first is that the Windows Phone library does not expose synchronous methods in order to keep applications fast and fluid. Additionally, the Windows Phone library does not provide MD5 support as the platform does not expose an implementation of MD5. As such, if your scenario requires it, you must validate the MD5 at the application layer. The Windows Phone library is currently in testing and will be published in the coming weeks. Please note that it will only be compatible with Windows Phone 8, not 7.x.
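As a platform-neutral illustration of an application-layer MD5 check (Node.js `crypto`, not Windows Phone code), the sketch below compares the MD5 of downloaded bytes with a Base64 Content-MD5 value such as the one the Blob service can report for a blob. The function names are hypothetical.

```javascript
const { createHash } = require("crypto");

// Content-MD5 values are the Base64 encoding of the 16-byte MD5 digest.
function contentMd5(data) {
  return createHash("md5").update(data).digest("base64");
}

// Sketch: compare locally computed MD5 with the expected Content-MD5 value.
function isMd5Valid(data, expectedContentMd5) {
  return contentMd5(data) === expectedContentMd5;
}

console.log(contentMd5(Buffer.from("hello"))); // XUFAKrxLKna5cZ2REBfFkg==
```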

Summary

We have spent considerable effort in improving the storage client libraries in this release. We welcome any feedback you may have in the comments section below, the forums, or GitHub.

• Eric D. Boyd (@EricDBoyd) described My Windows Azure Data Services Session at WPC 2013 in a 7/8/2013 post:

This afternoon, I have the privilege of joining Scott Klein and Joanne Marone on stage at the 2013 Microsoft Worldwide Partner Conference. If you want to learn more about key Windows Azure scenarios that we see with our customers and how Windows Azure Data Services help you drive more opportunities in these key scenarios, you will not want to miss this interactive session, where you will have the chance to get involved and ask questions. The when, where and what for this session are below.

Drive Opportunities with Windows Azure Data Services

When: Monday, July 8th @ 4:30 PM

Session Code: SC27i

Room: GRBCC: 372 A

Create new business opportunities with Windows Azure, which enables partners to mix-and-match cloud-based data management services to reimagine application design and IT solutions. In this session, you will be exposed to a variety of real-world scenarios that can be used to solve today’s real-world challenges and, based on Microsoft experience, you’ll see where the hidden revenue potential lies.


Windows Azure SQL Database, Federations and Reporting, Mobile Services

‡ Mike Taulty (@mtaulty) described Azure Mobile Services–Custom APIs and URI Paths in a 7/12/2013 post:

In the previous post where I was taking a look at Custom URIs in Azure Mobile Services I’d got to the point where I was defining server-side handlers in JavaScript for specific HTTP verbs.

For instance, I might have a service at http://whatever.azure-mobile.net/myService (I don’t have this service, by the way) and I might want to accept actions like:

- GET /myService

- GET /myService/1

- POST /myService

- PATCH /myService/2

- DELETE /myService/3

In my previous post I didn’t know how to do this – that is, I didn’t know how I could enable Mobile Services such that I could add something like a resource identifier as the final part of the path and so I ended up using a scheme something like:

- GET /myService?id=1

- DELETE /myService?id=2

and so on.

Since that last post, I’ve been educated and I learned a little bit more by asking the Mobile Services team. I suspect that my ignorance largely comes from not having a real understanding of node.js and also of the express.js library that seems to underpin Mobile Services. Without that understanding of the surrounding context I feel I’m poking around in the dark a little when it comes to figuring out how to tackle things that aren’t immediately obvious.

Since asking the Mobile Services team, I’ve realised that the answer was already “out there” in the form of this blog post, which covers similar ground to mine but comes from a more trusted source, so you should definitely take a look at it. The section called “Routing” is the piece that I was missing (along with this link to the routing feature from express.js).

Even with that already “out there”, I wanted to play with this myself so I set up a service called whatever.azure-mobile.net and added a custom API to it called myService.

When you do that via the Azure management portal, some nice bit of code somewhere throws in a little template example for you, which looks like this:

```javascript
exports.post = function(request, response) {
    // Use "request.service" to access features of your mobile service, e.g.:
    //   var tables = request.service.tables;
    //   var push = request.service.push;
    response.send(200, "Hello World");
};
```

What I hadn’t appreciated previously is that this is, in some ways, a shorthand for using the register function from express.js. A longer-hand version might be:

```javascript
exports.register = function(api) {
    console.log("Hello");
    api.get('*', function(request, response) {
        response.send(200, "Hello World");
    });
};
```

Now, when I first set about trying to use that, I crafted a request in Fiddler and got a 404 until I realised that I needed to append the trailing slash (I’m unsure of exactly how that works).

But this does now mean that I can pick up additional paths from the URI and do something with them, and there’s even some pattern matching built in for me, which is nice. For instance:

```javascript
exports.register = function(app) {
    app.get('/:id', function(request, response) {
        response.send(200, "Getting record id " + request.params.id);
    });
    app.get('*', function(request, response) {
        response.send(200, "Getting a bunch of data");
    });
    app.delete('/:id', function(request, response) {
        console.log("Deleting record " + request.params.id);
        response.send(204);
    });
};
```

and that’s the sort of thing that I was looking for in the original post. From trying this out empirically, it does seem to be important to add the more specific routes before the less specific routes; that seemed to make a difference to handling /myService/ versus /myService/1.
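The ordering effect is consistent with first-match routing, where express.js tries routes in the order they were registered. The JavaScript sketch below is a deliberately simplified, self-contained router (not express.js or Mobile Services code; `createRouter` is hypothetical) that shows why a catch-all `*` registered first would swallow `/1` before the `/:id` route ever sees it.

```javascript
// Simplified first-match router: routes are tried in registration order and the first
// matching pattern wins. Supports only literal segments, ":name" parameters and "*".
function createRouter() {
  const routes = [];

  function compile(pattern) {
    const names = [];
    const source = pattern
      .split("/")
      .map((segment) => {
        if (segment === "*") return ".*";
        if (segment.startsWith(":")) {
          names.push(segment.slice(1));
          return "([^/]+)";
        }
        return segment.replace(/[.*+?^${}()|[\]\\]/g, "\\$&"); // escape literal text
      })
      .join("/");
    return { regex: new RegExp(`^${source}$`), names };
  }

  return {
    get(pattern, handler) {
      routes.push({ ...compile(pattern), handler });
    },
    handle(path) {
      for (const { regex, names, handler } of routes) {
        const match = regex.exec(path);
        if (match) {
          const params = Object.fromEntries(names.map((name, i) => [name, match[i + 1]]));
          return handler(params);
        }
      }
      return "404 Not Found";
    },
  };
}

// Specific route first: "/1" reaches the ":id" handler, "/" falls through to "*".
const specificFirst = createRouter();
specificFirst.get("/:id", (params) => `Getting record id ${params.id}`);
specificFirst.get("*", () => "Getting a bunch of data");

// Catch-all first: "*" also matches "/1", so the ":id" handler is never reached.
const catchAllFirst = createRouter();
catchAllFirst.get("*", () => "Getting a bunch of data");
catchAllFirst.get("/:id", (params) => `Getting record id ${params.id}`);

console.log(specificFirst.handle("/1")); // Getting record id 1
console.log(catchAllFirst.handle("/1")); // Getting a bunch of data
```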

• Glenn Gailey (@ggailey777) described Great new samples and tutorials for Mobile Services integration scenarios in a 7/11/2013 post:

I just wanted to let everyone know that Paolo Salvatori, one of the rock stars on the Customer Advisory Team (CAT), has just published a suite of integration tutorials for Windows Azure Mobile Services, including sample project code, covering the following key Windows Azure enterprise and LOB scenarios:

- How to integrate a Mobile Service with a SOAP Service Bus Relay Service: This sample demonstrates how to integrate a mobile service with a line of business application running on-premises via Service Bus Relayed Messaging. The Access Control Service is used to authenticate the mobile service against the underlying application.

- How to integrate a Mobile Service with a REST Service Bus Relay Service: This sample demonstrates how to integrate a mobile service with a line of business application running on-premises via Service Bus Relayed Messaging. The Access Control Service is used to authenticate the mobile service against the underlying application.

- How to use Windows Azure Table Storage in Windows Azure Mobile Services: This sample demonstrates how to use Windows Azure Table Storage with a mobile service to store data instead of the default SQL Database tables.

- How to integrate Mobile Services with Windows Azure BizTalk Services: This sample demonstrates how to integrate a mobile service with line of business applications, running on-premises or in the cloud, by using Windows Azure BizTalk Services and Service Bus Relayed Messaging. The Access Control Service is used to handle security.

- How to integrate Mobile Services with BizTalk Server via Service Bus: This sample demonstrates how to integrate a mobile service with line of business applications, running on-premises or in the cloud, via BizTalk Server 2013, Service Bus Brokered Messaging and Service Bus Relayed Messaging. The Access Control Service is used to handle security.

This is just super content that Mobile Services has been needing for a while. Great job, Salvatori!

See Paolo’s article at the end of this section.

• Jim O’Neil (@jimoneil) produced a Practical Azure #24: Windows Azure Mobile Services video on 7/8/2013:

Well, this one went a bit longer than most, but only because Windows Azure Mobile Services has so many cool features that are relevant to just about anyone building mobile applications today, regardless of platform. Join me for this pre-Build 2013 session, in which I build a Windows Phone 8 application complete with data, authentication, and push notifications hosted on the Windows Azure Mobile Services Backend-as-a-Service offering.

Note too that there were some significant announcements at Build 2013 regarding Windows Azure Mobile Services, so be sure to check out these sessions as well:

- Mobile Services - Soup to Nuts

- Building Cross-Platform Apps with Windows Azure Mobile Services

- Protips for Windows Azure Mobile Services

- Connected Windows Phone Apps Made Easy with Azure Mobile Services

- Securing Windows Store Applications and REST Services with Active Directory

- Going Live and Beyond with Windows Azure Mobile Services


Steven Martin (@stevemar_msft) announced a new Premium offer for Windows Azure SQL Database in a 7/8/2013 post to the Windows Azure Team blog:

… To further advance Windows Azure’s platform services for business-critical applications in the cloud, we are excited to announce a new Premium offer for Windows Azure SQL Database. Available via a limited preview in a few weeks, the Premium offer will help deliver greater performance for cloud applications by dedicating a fixed amount of reserved capacity for a database including its built-in secondary replicas. [Emphasis added.] …

See the Windows Azure Access Control, Active Directory, and Identity section below for details.

The SQL Server Team (@SQLServer) posted A Closer Look at the Premium Offer for Windows Azure SQL Database on 7/9/2013:

As part of the main keynote yesterday at the Worldwide Partner Conference (WPC) in Houston, Texas, Satya Nadella, Server and Tools President, discussed partner and customer innovations around modern business applications built with the Windows Azure platform. As part of this cloud momentum, Satya announced a new Premium offer for Windows Azure SQL Database that delivers more predictable performance. With the limited preview for this new Premium database offer coming in a few weeks, we wanted to take a closer look at the additional value SQL Database will deliver.

This new capability enables both customers and Service Integrator (SI), ISV, and CSV partners to raise the bar on the types of modern business application services and products they can build and offer to customers. The Premium offer for Windows Azure SQL Database will help deliver more powerful and predictable performance for cloud applications by dedicating a fixed amount of reserved capacity for a database including its built-in secondary replicas. Reserved capacity is ideal for cloud-based applications with the following requirements:

- Sustained resource demand

- Many concurrent requests

- Predictable latency

As part of our product testing, customers with business-critical cloud applications are already experiencing tremendous value from using the Premium offer with reserved capacity:

- MYOB: “The Premium offer from Windows Azure SQL Database plays a valuable role in MYOB’s cloud solutions offering. We moved across only a few weeks ago and are already enjoying the benefits of a more insulated infrastructure environment. Our many AccountRight Live clients rely on Windows Azure, where the reservation service sits, as the first step in accessing the cloud file that stores their critical business financial data. It supports significant traffic – our clients log in thousands of times each day.” James Scollay - MYOB GM, Business Division

- easyJet: “We use Windows Azure SQL Database for our seating selection tool online at easyJet. The reserved capacity with the new SQL Database Premium offer has enabled us to add an extra facet of performance predictability during important periods of peak workload where customer demands are high. This reliable, business-grade solution has also allowed us to gather telemetry against a known, fixed resource so we can better benchmark and capacity plan our solutions moving forward.” Bert Craven, Enterprise Architect Manager

Initially, we will offer two reservation sizes: P1 and P2. P1 offers better performance predictability than the SQL Database Web and Business editions, and P2 offers roughly twice the performance of P1 and is suitable for applications with greater peaks and sustained workload demands. Premium databases are billed based on the Premium database reservation size and the storage volume of the database.

Premium Database:

Storage: $0.095 per GB per month (prorated daily)

If you are as excited as we are and want a heads up when the preview is available, sign up for Microsoft Cloud OS Bits Alert—we’ll send you an email when signups can begin! Preview is initially limited and subscriptions on a free trial are not eligible for a Premium database.

Paolo Salvatori (@babosbird) explained How to integrate a Mobile Service with a SOAP Service Bus Relay Service in a 7/8/2013 post:

Introduction

This sample demonstrates how to integrate Windows Azure Mobile Service with a line of business application running on-premises via Service Bus Relayed Messaging. The Access Control Service is used to authenticate Mobile Services against the underlying application.

Scenario

A mobile service receives CRUD operations from an HTML5/JavaScript and Windows Store app, but instead of accessing data from a SQL Azure database, it invokes a LOB application running in a corporate data center. The LOB system uses a WCF service layer to expose its functionality via SOAP to external applications. In particular, the WCF service uses a BasicHttpRelayBinding endpoint to expose its functionality via a Service Bus Relay Service. The endpoint is configured to authenticate incoming calls using a relay access token issued by ACS. The WCF service accesses data from the ProductDb database hosted by a local instance of SQL Server 2012. In particular, it uses the new asynchronous programming feature provided by ADO.NET 4.5 to access data from the underlying database.
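The relay access token is obtained with an OAuth WRAP form POST to ACS carrying wrap_name, wrap_password and wrap_scope, as the message flow in this post describes. The JavaScript sketch below covers only the string handling around that exchange, and it assumes two details not stated in this post: that ACS returns a form-encoded body containing wrap_access_token, and that the token is presented to the relay in an `Authorization: WRAP access_token="..."` header. The helper names are hypothetical, and the HTTP call itself is left out.

```javascript
// Sketch (assumptions noted above): build the WRAP request body, read the token from
// the response, and format the header value presented to the Service Bus relay.
function wrapTokenRequestBody(name, password, scope) {
  // application/x-www-form-urlencoded body for the HTTPS form POST to ACS.
  return new URLSearchParams({ wrap_name: name, wrap_password: password, wrap_scope: scope }).toString();
}

function extractWrapToken(responseBody) {
  // Assumed: the response is form-encoded; get() also URL-decodes the token.
  const token = new URLSearchParams(responseBody).get("wrap_access_token");
  if (!token) throw new Error("response does not contain wrap_access_token");
  return token;
}

function relayAuthorizationHeader(token) {
  // Assumed header format for presenting the token to the relay endpoint.
  return `WRAP access_token="${token}"`;
}

console.log(wrapTokenRequestBody("owner", "[password]", "http://paolosalvatori.servicebus.windows.net/"));
```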

Architecture

The following diagram shows the architecture of the solution.

Message Flow

- The HTML5/JavaScript site or Windows Store app sends a request to a user-defined custom API of a Windows Azure Mobile Service via HTTPS. The HTML5/JS application uses the invokeApi method exposed by the HTML5/JavaScript client for Windows Azure Mobile Services to call the mobile service. Likewise, the Windows Store app uses the InvokeApiAsync method exposed by the MobileServiceClient class. The custom API implements CRUD methods to create, read, update and delete data. The HTTP method used by the client application to invoke the user-defined custom API depends on the invoked operation:

- Read: GET method

- Add: POST method

- Update: PUT (or PATCH) method

- Delete: DELETE method

- The custom API sends a request to the Access Control Service to acquire the security token it needs to be authenticated by the underlying Service Bus Relay Service. The client uses the OAuth WRAP Protocol to acquire a security token from ACS. In particular, the server script sends a request to ACS using an HTTPS form POST. The request contains the following information:

- wrap_name: the name of a service identity within the Access Control namespace of the Service Bus Relay Service (e.g. owner)

- wrap_password: the password of the service identity specified by the wrap_name parameter.

- wrap_scope: this parameter contains the relying party application realm. In our case, it contains the HTTP base address of the Service Bus Relay Service (e.g. http://paolosalvatori.servicebus.windows.net/)

- ACS issues and returns a security token. For more information on the OAuth WRAP Protocol, see How to: Request a Token from ACS via the OAuth WRAP Protocol.

- The mobile service user-defined custom API extracts the wrap_access_token from the security token issued by ACS. The custom API uses a different function to serve the request depending on the HTTP method and parameters sent by the client application:

- getProduct: this function is invoked when the HTTP method is GET and the query string contains a productid or id parameter. It calls the GetProduct operation exposed by the underlying WCF service.

- getProducts: this function is invoked when the HTTP method is GET and the query string does not contain any parameter. It calls the GetProducts operation exposed by the underlying WCF service.

- getProductsByCategory: this function is invoked when the HTTP method is GET and the query string contains a category parameter. It calls the GetProductsByCategory operation exposed by the underlying WCF service.

- addProduct: this function is invoked when the HTTP method is POST and the request body contains a new product in JSON format. It calls the AddProduct operation exposed by the underlying WCF service.

- updateProduct: this function is invoked when the HTTP method is PUT or PATCH and the request body contains an existing product in JSON format. It calls the UpdateProduct operation exposed by the underlying WCF service.

- deleteProduct: this function is invoked when the HTTP method is DELETE and the query string contains a productid or id parameter. It calls the DeleteProduct operation exposed by the underlying WCF service.

Each of the above functions performs the following actions:

- Creates a SOAP envelope to invoke the underlying WCF service. In particular, the Header contains a RelayAccessToken element, which in turn contains the wrap_access_token returned by ACS in base64 format. The Body contains the payload for the call. The node-uuid Node.js module is used to generate a unique ID for the security token at each call. This module is downloaded using NPM (Node Package Manager) and then uploaded to the mobile service using Git. See below for more on how to accomplish this task.

- Uses the https Node.js module to send the SOAP envelope to the Service Bus Relay Service.

- The Service Bus Relay Service validates and removes the security token, then forwards the request to one of the listeners hosting the WCF service.

- The WCF service uses the new asynchronous programming feature provided by ADO.NET 4.5 to access data from the ProductDb database. For demo purposes, the WCF service runs in a console application, but the sample can easily be modified to host the service in IIS.

- The WCF service returns a response message to the Service Bus Relay Service.

- The Service Bus Relay Service forwards the message to the mobile service. The custom API performs the following actions:

- Uses the xml2js Node.js module to change the format of the response SOAP message from XML to JSON.

- Flattens the resulting JSON object to eliminate unnecessary arrays.

- Extracts data from the flattened representation of the SOAP envelope and creates a response message in JSON format.

- The mobile service returns data in JSON format to the client application.

NOTE: the mobile service communicates with client applications using a REST interface and messages in JSON format, while it communicates with the Service Bus Relay Service using the SOAP protocol.
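The routing rules listed above for the products custom API amount to a small dispatch table. The following Python sketch only illustrates that logic; Paolo's actual server script is Node.js JavaScript and is not reproduced here. The `query` argument is assumed to be a dict of query-string parameters, and request bodies are not inspected.

```python
def select_handler(method, query):
    """Return the name of the custom API function that should serve a request.

    Mirrors the rules described in the post: GET with an id reads one product,
    GET with a category filters, bare GET lists all, POST adds, PUT/PATCH
    updates, and DELETE with an id removes a product.
    """
    method = method.upper()
    has_id = "productid" in query or "id" in query
    if method == "GET":
        if has_id:
            return "getProduct"
        if "category" in query:
            return "getProductsByCategory"
        if not query:
            return "getProducts"
    elif method == "POST":
        return "addProduct"
    elif method in ("PUT", "PATCH"):
        return "updateProduct"
    elif method == "DELETE" and has_id:
        return "deleteProduct"
    raise ValueError(f"unsupported request: {method} {sorted(query)}")


print(select_handler("GET", {"category": "Bikes"}))  # prints getProductsByCategory
```

Raising an error for unmatched combinations (for example, DELETE without an id) keeps a malformed request from silently falling through to the wrong WCF operation.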
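The flattening step in the message flow above is needed because xml2js, by default (its `explicitArray` option is `true`), wraps every child element in an array even when it occurs only once. This Python sketch of the same idea is not Paolo's code, and the sample dictionary is a hypothetical, simplified shape of a converted SOAP response. Note that it also collapses repeated elements that happen to occur once, so a result containing a single product becomes an object rather than a one-item list.

```python
def flatten(node):
    """Recursively unwrap single-element lists in an XML-to-JSON conversion."""
    if isinstance(node, list):
        if len(node) == 1:
            return flatten(node[0])
        return [flatten(item) for item in node]
    if isinstance(node, dict):
        return {key: flatten(value) for key, value in node.items()}
    return node


# Hypothetical converted response: every child element is wrapped in a list.
converted = {
    "s:Envelope": {
        "s:Body": [{
            "GetProductResponse": [{
                "GetProductResult": [{
                    "ProductId": ["1"],
                    "Name": ["Bike"],
                }],
            }],
        }],
    },
}

print(flatten(converted))
```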

Access Control Service

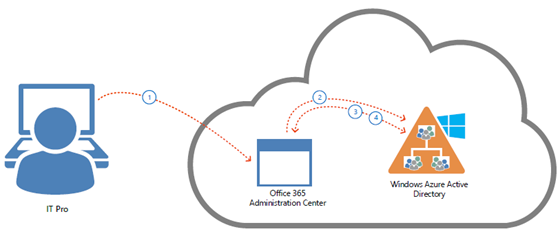

The diagram above shows the steps performed by a WCF service and client to communicate via a Service Bus Relay Service. The Service Bus uses a claims-based security model implemented using the Access Control Service (ACS). The service needs to authenticate against ACS and acquire a token with the Listen claim in order to be able to open an endpoint on the Service Bus. Likewise, when both the service and client are configured to use the RelayAccessToken authentication type, the client needs to acquire a security token from ACS containing the Send claim. When sending a request to the Service Bus Relay Service, the client needs to include the token in the RelayAccessToken element in the Header section of the request SOAP envelope. The Service Bus Relay Service validates the security token and then sends the message to the underlying WCF service. For more information on this topic, see How to: Configure a Service Bus Service Using a Configuration File.
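The OAuth WRAP exchange that gives the client its Send token can be sketched in Python as two pure functions: one that builds the form POST carrying wrap_name, wrap_password and wrap_scope, and one that extracts wrap_access_token from the form-encoded reply. No network call is made here. The `https://<namespace>-sb.accesscontrol.windows.net/WRAPv0.9/` endpoint pattern is an assumption based on the ACS conventions of the time, and the secret shown is a placeholder.

```python
from urllib.parse import parse_qs, urlencode


def build_wrap_request(namespace, issuer_name, issuer_secret, scope):
    """Return (url, body, headers) for an OAuth WRAP token request to ACS.

    Assumption: the '<namespace>-sb.accesscontrol.windows.net/WRAPv0.9/'
    endpoint pattern used by Service Bus namespaces.
    """
    url = f"https://{namespace}-sb.accesscontrol.windows.net/WRAPv0.9/"
    body = urlencode({
        "wrap_name": issuer_name,        # service identity, e.g. owner
        "wrap_password": issuer_secret,  # that identity's password/key
        "wrap_scope": scope,             # relying party realm (Service Bus base address)
    })
    headers = {"Content-Type": "application/x-www-form-urlencoded"}
    return url, body, headers


def extract_wrap_access_token(response_body):
    """Return the URL-decoded wrap_access_token from ACS's form-encoded reply."""
    fields = parse_qs(response_body)
    if "wrap_access_token" not in fields:
        raise ValueError("ACS response does not contain wrap_access_token")
    return fields["wrap_access_token"][0]


url, body, headers = build_wrap_request(
    "paolosalvatori", "owner", "<issuer-secret>",
    "http://paolosalvatori.servicebus.windows.net/")
# POST `body` to `url` with `headers` (for example with urllib.request), then:
# token = extract_wrap_access_token(response_text)
```

`parse_qs` undoes the percent-encoding ACS applies to the token, which is the form the token must be in before it is base64-encoded into the SOAP header.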
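The RelayAccessToken header described in this paragraph and in the message flow can be assembled with Python's standard ElementTree module. This is a sketch under assumptions: the Service Bus connect namespace, the WS-Security BinarySecurityToken wrapper, and the SWT value-type URI reflect how WCF relay bindings are commonly serialized, and should be checked against a captured message before use. The GetProduct payload and the tempuri.org namespace are hypothetical.

```python
import base64
import uuid
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"
RELAY_NS = "http://schemas.microsoft.com/netservices/2009/05/servicebus/connect"
WSSE_NS = ("http://docs.oasis-open.org/wss/2004/01/"
           "oasis-200401-wss-wssecurity-secext-1.0.xsd")
WSU_NS = ("http://docs.oasis-open.org/wss/2004/01/"
          "oasis-200401-wss-wssecurity-utility-1.0.xsd")
# Assumed token profile and encoding URIs for a Simple Web Token from ACS.
SWT_VALUE_TYPE = "http://schemas.xmlsoap.org/ws/2009/11/swt-token-profile-1.0"
BASE64_ENCODING = ("http://docs.oasis-open.org/wss/2004/01/"
                   "oasis-200401-wss-soap-message-security-1.0#Base64Binary")

# Friendlier prefixes in the serialized XML (purely cosmetic).
for prefix, uri in (("s", SOAP_NS), ("wsse", WSSE_NS), ("wsu", WSU_NS)):
    ET.register_namespace(prefix, uri)


def build_relay_envelope(wrap_access_token, body_element):
    """Wrap a SOAP 1.1 body in an envelope whose header carries the ACS token."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    header = ET.SubElement(envelope, f"{{{SOAP_NS}}}Header")
    relay = ET.SubElement(header, f"{{{RELAY_NS}}}RelayAccessToken")
    token = ET.SubElement(relay, f"{{{WSSE_NS}}}BinarySecurityToken", {
        # A fresh ID per call, the role node-uuid plays in the original script.
        f"{{{WSU_NS}}}Id": f"uuid:{uuid.uuid4()}",
        "ValueType": SWT_VALUE_TYPE,
        "EncodingType": BASE64_ENCODING,
    })
    token.text = base64.b64encode(wrap_access_token.encode("utf-8")).decode("ascii")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    body.append(body_element)
    return ET.tostring(envelope, encoding="unicode")


# Hypothetical operation payload; the real contract namespace will differ.
SERVICE_NS = "http://tempuri.org/"
get_product = ET.Element(f"{{{SERVICE_NS}}}GetProduct")
ET.SubElement(get_product, f"{{{SERVICE_NS}}}productId").text = "1"
envelope_xml = build_relay_envelope("token-from-acs", get_product)
```

Building the envelope with ElementTree instead of string concatenation guarantees that any special characters in the payload are escaped correctly.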

Prerequisites

- Visual Studio 2012 Express for Windows 8

- Windows Azure Mobile Services SDK for Windows 8

- A Windows Azure account (get the Free Trial)

Building the Sample

Proceed as follows to set up the solution.

Create the Mobile Service

Follow the steps in the tutorial to create the mobile service.

- Log into the Management Portal.

- At the bottom of the navigation pane, click +NEW.

- Expand Mobile Service, then click Create.

This displays the New Mobile Service dialog.

- In the Create a mobile service page, type a subdomain name for the new mobile service in the URL textbox and wait for name verification. Once name verification completes, click the right arrow button to go to the next page.

This displays the Specify database settings page. NOTE: as part of this tutorial, you create a new SQL Database instance and server. However, the database is not used by the present solution. Hence, if you already have a database in the same region as the new mobile service, you can instead choose Use existing Database and then select that database. The use of a database in a different region is not recommended because of additional bandwidth costs and higher latencies.

- In Name, type the name of the new database, then type Login name, which is the administrator login name for the new SQL Database server, type and confirm the password, and click the check button to complete the process.

Define the custom API

- Log into the Windows Azure Management Portal, click Mobile Services, and then click your app.

- Click the API tab, and then click Create a custom API.

This displays the Create a new custom API dialog.

- Enter products in the API NAME field. Select Anybody with the Application Key permission for all the HTTP methods and then click the check button.

This creates the new custom API.

- Click the new products entry in the API table.

- Click the Scripts tab and replace the existing code with the following: …

Paolo continues with hundreds of lines of source code.
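Once the products API exists, any HTTP client can call it, not only the HTML5 and Windows Store apps. The Python sketch below only builds the request description; it assumes the Mobile Services conventions of the time, namely a `https://<service>.azure-mobile.net/api/<name>` URL and an `X-ZUMO-APPLICATION` header carrying the application key (required because the API permission is Anybody with the Application Key). The service name and key are placeholders.

```python
import json
from urllib.parse import urlencode


def build_custom_api_request(service, api, app_key, method="GET", query=None, body=None):
    """Describe an HTTPS call to a Mobile Services custom API (not sent here)."""
    url = f"https://{service}.azure-mobile.net/api/{api}"
    if query:
        url += "?" + urlencode(query)
    # Assumed header name for the application key.
    headers = {"X-ZUMO-APPLICATION": app_key, "Accept": "application/json"}
    data = None
    if body is not None:
        data = json.dumps(body)
        headers["Content-Type"] = "application/json"
    return {"method": method.upper(), "url": url, "headers": headers, "data": data}


# Read the products in one category.
request = build_custom_api_request(
    "mymobileservice", "products", "<application-key>", query={"category": "Bikes"})
```

The resulting dictionary maps directly onto `urllib.request.Request(url, data=..., headers=..., method=...)` when you want to send it.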

<Return to section navigation list>

Windows Azure Marketplace DataMarket, Power BI, Big Data and OData

•• Max Uritsky (@max_data) reported Windows Azure Marketplace is available in 50 additional countries and features new exciting content including our own Bing Optical Character Recognition service in a 7/11/2013 post:

Hello Windows Azure Marketplace users,

We have some very exciting news to share with you – we are now commercially available in 50 additional countries, bringing our total to 88 countries! We also added some new and exciting content to the Marketplace, including Microsoft’s Optical Character Recognition service recently announced at the //build conference, new data services from D&B, French post office locations directly from La Poste, and great UK location services from MapMechanics.

1) Customers from the following countries can now purchase and consume qualified Marketplace offers:

Algeria, Argentina, Bahrain, Belarus, Bulgaria, Croatia, Cyprus, Dominican Republic, Ecuador, Egypt, El Salvador, Estonia, Guatemala, Iceland, India, Indonesia, Jordan, Kazakhstan, Kenya, Kuwait, Latvia, Liechtenstein, Lithuania, Macedonia, Malta, Montenegro, Morocco, Nigeria, Oman, Pakistan, Panama, Paraguay, Philippines, Puerto Rico, Qatar, Russia, Saudi Arabia, Serbia, Slovakia, Slovenia, South Africa, Sri Lanka, Taiwan, Thailand, Tunisia, Turkey, Ukraine, United Arab Emirates, Uruguay, Venezuela.

Already registered in a different market?

You can migrate your account to your native market in a few simple steps:

- Go to https://datamarket.azure.com/account

- Cancel any existing application or data subscriptions

- Click “Edit” on “Account Information” page

- Select the region associated with your Microsoft Account

- Click “Save”

- Subscribe to all your favorite offers again and enjoy new features like local currency pricing and billing

2) Bing OCR (Optical Character Recognition) Control is now available on the Windows Azure Marketplace – you can now leverage Microsoft’s cloud-based optical character recognition capabilities and integrate it into your Windows 8 and Windows 8.1 apps. Click here to check out the offer and here to learn more about the OCR service.

3) We are pretty pumped to have a dataset from the prestigious French Postal Office, La Poste. Please check out the offer here.

Here is a snapshot of the offer in our Service Explorer:

4) D&B (Dun & Bradstreet), the company known as the leading source of commercial data and insight on businesses, has added six new APIs to the growing list of data offers on the Windows Azure Marketplace. Check out the new offerings from D&B:

Identification:

- Company Cleanse & Match for Microsoft SQL Server Data Quality Services – uses SQL Server 2012 Data Quality Services with the D&B dataset as reference data for data cleansing projects

- Global Address Validation – lets you verify and clean global company addresses.

Enrichment:

- Company Demographics – Address and Phone – can be explored directly in the Marketplace, or by using Excel or via API programming.

- Company Firmographics – Industry and Sales – same access methods as above

- Company Family Hierarchy – Related Companies – same access methods as above

Discovery:

- D&B Business Insight (AKA Company Prospect Builder) – same access methods as above.

To get a full list of data services, please click here and to get a full list of all the applications available through the Windows Azure Marketplace, please click here.

The Microsoft Business Intelligence Team (@MicrosoftBI) reported Power BI for Office 365 Revealed at Microsoft Worldwide Partner Conference (WPC) on 7/8/2013:

Today, we are pleased to announce a new offering that builds on our self-service BI story: Power BI for Office 365. Unveiled this morning by Satya Nadella, President of the Server and Tools Business, during his keynote at our annual Worldwide Partner Conference, Power BI for Office 365 is a complete self-service BI solution delivered as part of Excel and as an offer for Office 365.

Power BI provides everyone with powerful new ways to work with data in Excel and Office 365. Search, discover, and access data from public and internal sources, and then transform and shape that data from within Excel. Analyze data and create bold interactive visualizations to uncover insights, then share and collaborate through new BI experiences for Office 365.

For more information, see the Power BI team blog post, “Announcing Power BI for Office 365”. This post will give you more information on the features and benefits, some great examples and screenshots, and links to additional resources. Sound interesting? The public preview of Power BI for Office 365 will be available this summer. You can sign up now at http://www.office.com/powerbi to be notified when the preview is available.

For more information, see the new Power BI blog at http://blogs.msdn.com/b/powerbi/.

The Microsoft “Data Explorer” Preview for Excel Team (@DataExplorer) reported "Data Explorer" is now Microsoft Power Query for Excel in a 7/6/2013 post:

Along with the Power BI related announcements at the Worldwide Partner Conference on July 8, 2013, we are pleased to announce that Microsoft Power Query for Excel (previously known as codename “Data Explorer”) has reached General Availability and is now available for download. Please follow the Power BI blog for future updates and how-to articles on Power Query. We also announced the upcoming preview of Power BI for Office 365; to learn more, visit us at office.com/powerbi.

<Return to section navigation list>

Windows Azure Service Bus, BizTalk Services and Workflow

<Return to section navigation list>

Windows Azure Access Control, Active Directory, and Identity

Francois Lascelles (@flascelles) explained Federation Evolved: How Cloud, Mobile & APIs Change the Way We Broker Identity in a 7/9/2013 post:

The adoption of cloud by organizations looking for more efficient ways to deploy their own IT assets or as a means to offset the burden of data management drives the need for identity federation in the enterprise. Compounding this is the mobile effect from which there is no turning back. Data must be available any time, from anywhere and the identities accessing it must be asserted on mobile devices, in cloud zones, always under the stewardship of the enterprise.

APIs serve federation by enabling lightweight delegated authentication schemes based on OAuth handshakes, using the same patterns as social login. The standard specifying such patterns is OpenID Connect, where a relying party subjects a user to an OAuth handshake and then calls an API on the identity provider to discover information about the user, thus avoiding having to set up a shared secret with that user – no identity silo. This new type of federation using APIs is easier for relying parties to implement because it avoids parsing and interpreting complex SAML messages with XML digital signatures, both of which tend to suffer from interoperability challenges.
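The two relying-party calls in the OpenID Connect pattern described above can be sketched in Python. The first builds the redirect that starts the OAuth authorization-code handshake with the `openid` scope; the second builds the bearer-token header used to call the identity provider's UserInfo API. The endpoint URL, client ID, and redirect URI are hypothetical, and the intermediate step of exchanging the returned code for tokens at the provider's token endpoint is omitted.

```python
import secrets
from urllib.parse import urlencode


def build_oidc_authorization_url(authorize_endpoint, client_id, redirect_uri, state=None):
    """Return (url, state) for an OpenID Connect authorization-code request."""
    # The state value ties the provider's callback to this browser session.
    state = state or secrets.token_urlsafe(16)
    params = {
        "response_type": "code",
        "scope": "openid profile",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "state": state,
    }
    return f"{authorize_endpoint}?{urlencode(params)}", state


def userinfo_request_headers(access_token):
    """Headers for calling the provider's UserInfo API with the access token."""
    return {"Authorization": f"Bearer {access_token}"}


url, state = build_oidc_authorization_url(
    "https://idp.example.com/authorize", "my-app", "https://app.example.com/callback")
```

No password or shared secret for the user ever reaches the relying party; it only sees an authorization code, an access token, and the claims the UserInfo API returns.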

Now, let’s turn this around: sometimes what needs to be federated is the API itself, not just the identities that consume it. For example, consider the common case of a cloud API consumed by a social media team on behalf of an organization. When the social media service is consumed from mobile apps, the cloud API is consumed directly and the enterprise has no ability to control or monitor information being posted on its behalf.

In addition to this lack of control, this simplistic consumption of a cloud API by a group of users on behalf of an organization requires that they share the organization account itself, including the password associated with it. The security implications of shared passwords are often overlooked. Shared service accounts multiply the risk of a password being compromised. There are numerous recent examples of enterprise social media accounts being hacked with disastrous PR consequences. Famous examples from earlier this year include the Twitter hack of the Associated Press, which led to a false report of explosions at the White House, and the hack of Burger King’s account, which promoted competitor McDonald’s.

Federating such cloud API calls involves the applications sending the API calls through an API broker under the control of the organization. Each of these API calls is made through an enterprise identity context; that is, each user signs in with their own enterprise identity. The API broker then ‘converts’ these API calls into API calls to the cloud provider using the identity context of the organization.

In this case, federating the cloud API calls means that the enterprise controls the organization’s account. Its password is not shared with or known by anybody other than an administrator responsible for maintaining a session used by the API broker. Users responsible for acting on that cloud service on behalf of the organization can do so while mobile, but are authenticated using their enterprise credentials. The ability of a specific user to act on behalf of an organization is controlled in real time. This can, for example, be based on attributes read from a user directory or on a predefined whitelist in the broker itself.

By configuring policies in this broker, the organization has the ability to filter the information sent to and received from the cloud provider. The use of the cloud provider is also monitored and the enterprise can generate its own metrics and analytics relating to this cloud provider.
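The brokering pattern described in the last three paragraphs can be sketched in a few lines of Python. Everything here is hypothetical: `ApiBroker`, its whitelist, the keyword filter, and the bearer-token header are illustrative stand-ins, not Layer 7's product or any cloud provider's API. The point is the division of responsibility: users authenticate with their own enterprise identity, only the broker holds the organization's credential, and the broker applies outbound policy and records usage for the enterprise's own metrics.

```python
from dataclasses import dataclass, field


@dataclass
class ApiBroker:
    """Hypothetical enterprise API broker in front of a cloud API."""
    org_token: str                 # organization credential, held only by the broker
    allowed_users: set             # e.g. loaded from a directory group or whitelist
    blocked_terms: tuple = ()      # lowercase terms rejected by outbound policy
    usage: dict = field(default_factory=dict)

    def forward(self, enterprise_user, payload):
        """Check policy, record usage, and return the outbound cloud API call."""
        if enterprise_user not in self.allowed_users:
            raise PermissionError(f"{enterprise_user} may not act for the organization")
        if any(term in payload.lower() for term in self.blocked_terms):
            raise ValueError("payload rejected by outbound content policy")
        self.usage[enterprise_user] = self.usage.get(enterprise_user, 0) + 1
        # The call goes out under the organization's identity, not the user's.
        return {"headers": {"Authorization": f"Bearer {self.org_token}"}, "body": payload}


broker = ApiBroker(org_token="<org-token>", allowed_users={"alice"},
                   blocked_terms=("confidential",))
outbound = broker.forward("alice", "Our new product ships today!")
```

Because revoking a user only means removing them from `allowed_users`, nobody ever has to rotate the organization's shared password when a team member leaves.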

On July 23, I will be co-presenting a Layer 7 webinar with CA’s Ehud Amiri titled Federation Evolved: How Cloud, Mobile & APIs Change the Way We Broker Identity. In this webinar, we will examine the differences between identity federation across Web, cloud and mobile, look at API specific use cases and explore the impact of emerging federation standards.

Steven Martin (@stevemar_msft) posted Going ‘Cloud First’ with Windows Azure from Microsoft’s Worldwide Partners Conference on 7/8/2013:

There’s no doubt that the cloud is providing tremendous opportunity to our partners, with businesses forecast to spend $98 billion on public cloud services by 2016 worldwide, according to IDC. Today at the Worldwide Partner Conference in Houston, we’re excited to connect with our partners to talk about new ways they can seize this opportunity to help their customers thrive. We are committed to helping our partners and customers embrace cloud computing, using a “cloud-first” approach to all we do, from our partner programs to our engineering principles to our product innovations.

Windows Azure is a key ingredient of this approach, and today we’re highlighting a number of ways for partners to integrate with the cloud through new offerings for both platform and infrastructure services.

First, we’re pleased to announce that new application access enhancements for Windows Azure Active Directory are now available in Preview at no additional cost. These new enhancements enable integration of identities across both Microsoft and third-party SaaS applications, making it super easy for users to get their work done without having to remember individual user IDs and passwords for each online app. You don’t need to recreate all of your corporate identities; you can easily sync the on-premises Active Directory with Windows Azure Active Directory and be up and running in no time. If you are running Office 365 you are already running an Azure cloud directory! (Emphasis added.)

Here are some of the key capabilities you’ll find in the Preview:

- Seamlessly enable single sign-on for many popular pre-integrated cloud apps

- Add and remove identities from the top SaaS apps such as Office 365, Box, Salesforce and others using the Azure Management Portal

- Report on unusual application access patterns using predefined security reports

- Launch assigned apps from a single web page called the Access Panel.

Windows Azure Active Directory provides unmatched integration and global scale. There isn’t another cloud provider in the market that delivers the level of identity integration across platform and infrastructure services, third-party SaaS apps, and on-premises directories via a single identity store. We’ll continue to innovate in this space in the coming months, including more pre-integrated apps, so keep an eye out for future updates. For a deeper dive on the new enhancements read Alex’s blog post.

To further advance Windows Azure’s platform services for business-critical applications in the cloud, we are excited to announce a new Premium offer for Windows Azure SQL Database. Available via a limited preview in a few weeks, the Premium offer will help deliver greater performance for cloud applications by dedicating a fixed amount of reserved capacity for a database including its built-in secondary replicas. [Emphasis added.]

At our Build developer conference just a few weeks ago we introduced new investments in monitoring, alerting and autoscale. Starting today, you can also use autoscale with Windows Azure Mobile Services Standard and Premium tiers, enabling you to automatically scale your mobile applications based on demand. There is no additional cost for this feature while in preview. Check it out on the Mobile Services page.

Also at Build we shared that SQL Server 2014 and Windows Server 2012 R2 preview images are ready for use with Windows Azure Infrastructure Services. Today we are also adding the Windows HPC Pack to this list of supported workloads. Whether you are testing the latest parallel algorithm you have written for that risk modeling app or need infrastructure to offload your periodic batch processing jobs, you can simply deploy Windows HPC Pack in Azure and let Windows Azure do the heavy lifting for you.

In addition to building great products and services, just about everything that we do at Microsoft involves our deep partner ecosystem, and we’re thankful for the many great companies that we’ve had the opportunity to integrate with over the years across the many products we produce, both on premises and in the cloud. Let’s take a closer look at the opportunities that partners have to integrate with Windows Azure.

If you are a solution integrator, the cloud opens many new opportunities to help customers. Customers everywhere are evaluating how the cloud can help them to get ahead of their competition, innovate more quickly, and scale globally, all while benefiting from cloud economics. Here are a few ways that you can work with your customers right now.

- Assist customers with their strategy for integrating their existing on-premises identities with the new opportunities that the cloud brings for accessing SaaS apps. Help them to design, integrate and operationalize the new world of identity and access management.

- Assist customers with their strategy to integrate Windows Azure Infrastructure Services for development and test scenarios, migrating apps to take advantage of cloud scale and to integrate the cloud with on-premises infrastructure.

For example, Microsoft Partner Skyline Technologies is moving their business forward with new service offerings based on Microsoft’s cloud services and they recently helped Trek to take their business to the next level using Azure.

“Microsoft’s Azure and Cloud offerings have resulted in two new practice areas for Skyline – Azure Services and Office 365. These new services have created better alignment with our customers by allowing us to develop cloud strategies that provide holistic solutions while reducing cost and time to market.” – Kenny Young - Director, Cloud Computing & Development at Skyline Technologies

If you’re an ISV, Windows Azure provides you with a global cloud platform for developing your apps and it’s available at your fingertips. You can take your app from local to global in no time. CaptiveLogix is taking advantage of cloud innovation to expand their business and help their customers develop new applications.

“Windows Azure has allowed CaptiveLogix to grow our business by providing us a platform to incubate customers and deliver proof of concepts on Azure Infrastructure Services, while also offering the full elasticity of Windows Azure platform services for new applications and systems integrations. We are in a place where cloud has quickly become a viable option for most organizations and Azure has positioned CaptiveLogix well to offer custom solutions that meet the needs of customers today and into the foreseeable future. With a clear roadmap and access to pre-release technology we are able to continually offer our customers growth opportunities.” Tim Fernandes, CaptiveLogix

Partners also have a great opportunity to integrate with the Windows Azure Store to raise the visibility of the solutions you’ve built on the platform and to gain an additional channel for monetizing your offerings.

These partners see the opportunity, innovation and new capabilities the cloud can offer. They are working to evolve their businesses, are helping customers on the journey to the cloud and are seeing tremendous opportunity ahead of them. But don’t take it from us, try Windows Azure for yourself and see how you can help to move your business and customers forward.

The Office 365 Team offers an Identity and Authentication in the cloud: Office 2013 and Office 365 (Poster) in *.pdf format. Sample illustration:

Windows Azure Virtual Machines, Virtual Networks, Web Sites, Connect, RDP and CDN

• Craig Kitterman (@craigkitterman) posted Byron Tardif’s Scaling Up and Scaling Out in Windows Azure Web Sites article on 7/11/2013:

When you are starting on a new web project, or even just beginning to develop web sites and applications in general, you might want to start small. But you probably won’t stay that way. You might not want to expend resources on a new web farm when you are entering the proof-of-concept phase, but when things get going, you also wouldn’t launch a major marketing campaign on a server under your desk. Developing and deploying in the cloud on Windows Azure Web Sites is no different.

In this blog post, I’ll take you through the ways that you can develop, test, and go live, all while staying within budgeted time and costs.

Standard, Free, and Shared modes in Windows Azure Web Sites

One of the most important considerations when deploying your web site is choosing the right pricing tier for your site. Once you exit the development and test cycle, this will be your corporate web presence site, an important new digital marketing campaign, or a line-of-business app. So you want to make sure that your site is as available and responsive as your business needs demand, while staying comfortably within budget.

Your choice depends on a number of factors like:

- How many individual sites are you planning to host? For example, a digital marketing campaign might include a social-media page for each service you are using, and a different landing page for each target segment.

- How popular do you expect the sites to be? When should traffic levels change? Your estimate might be based on the number of employees for a line-of-business application, or the number of Twitter followers and Facebook Likes for a campaign site. You may also expect differences in traffic due to seasonality and demand-generation activities like social media campaigns and ads.

- How many resources (CPU, memory, and bandwidth) will the sites consume?

One of the best things about Windows Azure Web Sites is that you do not need to be able to answer these questions at the time you launch your web apps and web site into production. Using the scale options provided in the Management Portal, you can scale your site on the fly according to user demand and your business goals.

Site Modes in Windows Azure Web Sites

Windows Azure Web Sites (WAWS) offers three modes: Standard, Free, and Shared.

Each of these modes (Standard, Shared, and Free) offers a different set of quotas that control how many resources your site can consume, and provides different scaling capabilities. These quotas are summarized in the chart below.

Standard mode & Service Level Agreement (SLA)

Standard mode runs on dedicated instances, making it different from the other ways to buy Windows Azure Web Sites. It also comes with no limits on CPU usage and the largest amount of included storage of the three modes. See the table above for details.

Standard also has some important capabilities worth highlighting:

- No data egress bandwidth limit – The first 5 GB of data served by the site is included; additional bandwidth is priced at the “pay-as-you-go” rate.

- Custom DNS Names – Free mode does not allow custom DNS. Standard allows CNAME and A Records.

Standard mode carries an enterprise-grade SLA (Service Level Agreement) of 99.9% monthly, even for sites with just one instance. Windows Azure Web Sites can provide this SLA on a single-instance site because of our design, which includes on-the-fly site provisioning functionality. Provisioning happens behind the scenes without the need to change your site, and happens transparently to any site visitor. By doing this we eliminate availability concerns as part of the scale equation.

Shared and Free modes

Simply put, Shared and Free modes do not offer the scaling flexibility of Standard, and they have some important limits.

Free mode runs on shared compute resources with other sites in Free or Shared mode, and has an upper limit capping the amount of CPU time that the site (and the other Free sites under the subscription) can use per quota interval. Once that limit is reached, the site, along with the other Free sites under the subscription, will stop serving content/data until the next quota interval. Free mode also caps the amount of data the site can serve to clients, also called “data egress”.
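The shared CPU quota described above behaves roughly like the following Python model. The limit and interval values are placeholders, since the actual quotas were in the table that accompanied the original post; the model only shows the mechanism: CPU time used by any Free site in the subscription counts against one budget, and once the budget is spent, every Free site stops serving until the next interval begins.

```python
class SharedCpuQuota:
    """Hypothetical CPU-time budget shared by all Free sites in a subscription."""

    def __init__(self, limit_seconds, interval_seconds):
        self.limit = limit_seconds        # placeholder, not the real quota
        self.interval = interval_seconds  # placeholder quota interval
        self.window_start = 0.0
        self.used = 0.0

    def _roll(self, now):
        # Start a fresh quota interval once the current one has elapsed.
        if now - self.window_start >= self.interval:
            elapsed = int((now - self.window_start) // self.interval)
            self.window_start += elapsed * self.interval
            self.used = 0.0

    def can_serve(self, now):
        """True while the subscription's Free sites still have CPU budget."""
        self._roll(now)
        return self.used < self.limit

    def record(self, now, cpu_seconds):
        """Charge CPU time consumed by any Free site to the shared budget."""
        self._roll(now)
        self.used += cpu_seconds


quota = SharedCpuQuota(limit_seconds=60, interval_seconds=3600)
quota.record(10, 40)   # site A uses 40 s of CPU
quota.record(20, 25)   # site B, same subscription, uses 25 s
print(quota.can_serve(30))    # prints False: both sites are now blocked
print(quota.can_serve(3600))  # prints True: a new interval has started
```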

Shared mode, just as the name states, also uses shared compute resources, and also has a CPU limit, albeit a higher one than Free, as noted in the table above. Shared mode also includes 5 GB of data egress, with “pay-as-you-go” rates beyond that.

So, while neither Free nor Shared is likely to be the best choice for your production environment due to the limits above, they are useful. Free is fine for limited scenarios like trying and learning Windows Azure Web Sites, such as learning how to set up a publish config, connect to Visual Studio, or deploy with TFS, Git, or other deployment tools. Shared has additional capabilities vs. Free that make it great for development and for testing your site under limited, controlled load. For more serious production environments, Standard has way more to offer.

Scale operations, your code, and user sessions/experience

Scale operations have no impact on existing user sessions, beyond improving the user experience when the operation scales the site up or out.

Additionally, each scale operation happens quickly – typically within seconds – and does not require changes to your site’s code, nor a redeployment of your site.

Next we’ll discuss what it means to “scale up” and to “scale out.”

Windows Azure Web Sites Scaling Dynamics

Windows Azure Web Sites offers multiple ways to scale your web site using the Management Portal. These operations are also available if you are managing your site via Microsoft Visual Studio 2012, as detailed in our service documentation.

Scale Up

A scale up operation is the Azure Web Sites cloud equivalent of moving your non-cloud web site to a bigger physical server. So, scale up operations are useful to consider when your site is hitting a quota, signaling that you are outgrowing your existing mode or options. In addition, scaling up can be done on virtually any site without worrying about the implications of multi-instance data consistency.

Two examples of scale up operations in Windows Azure Web Sites are:

- Changing the site mode: If you choose Standard mode, for example, your web site will have no quotas imposed on CPU usage, and data egress will be limited only by the cost of egress beyond the included 5 GB/month.

- Instance Size in Standard mode: In Standard mode, Windows Azure Web Sites offers a choice of instance sizes: Small, Medium, and Large. This is also analogous to moving to a bigger physical server with more cores and more memory:

- Small: 1 core, 1.75 GB memory

- Medium: 2 cores, 3.5 GB memory

- Large: 4 cores, 7 GB memory
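Choosing a size amounts to picking the smallest instance that covers your core and memory needs. A minimal Python sketch using the three sizes listed above; the function name `smallest_size_for` and the requirement figures in the usage lines are hypothetical.

```python
# Standard-mode instance sizes from the list above: (name, cores, memory in GB),
# ordered smallest first.
INSTANCE_SIZES = [
    ("Small", 1, 1.75),
    ("Medium", 2, 3.5),
    ("Large", 4, 7.0),
]


def smallest_size_for(cores_needed: int, memory_gb_needed: float):
    """Return the smallest size meeting both requirements, or None if even
    Large is too small (a hint that scaling out is the next step)."""
    for name, cores, memory_gb in INSTANCE_SIZES:
        if cores >= cores_needed and memory_gb >= memory_gb_needed:
            return name
    return None


print(smallest_size_for(1, 2.0))  # Medium: Small has only 1.75 GB
print(smallest_size_for(4, 8.0))  # None
```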

Scale Out

A scale out operation is the equivalent of creating multiple copies of your web site and adding a load balancer to distribute the demand between them. When you scale out a web site in Windows Azure Web Sites there is no need to configure load balancing separately since this is already provided by the platform.

To scale out your site in Windows Azure Web Sites, use the Instance Count slider to set the instance count between 1 and 6 in Shared mode, or between 1 and 10 in Standard (formerly Reserved) mode. This creates multiple running copies of your website and handles the load-balancing configuration necessary to distribute incoming requests across all instances.
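The sketch below illustrates the instance-count ranges described above and what "distributing incoming requests" means. The platform's actual load-balancing algorithm is not described here, so round-robin is purely an illustrative assumption, as are the helper names `validate_instance_count` and `distribute`.

```python
from itertools import cycle

# Instance Count slider ranges from the text: Shared 1-6, Standard (reserved) 1-10.
MAX_INSTANCES = {"Shared": 6, "Standard": 10}


def validate_instance_count(mode: str, count: int) -> int:
    if mode not in MAX_INSTANCES:
        raise ValueError(f"no instance range given for {mode} mode")
    if not 1 <= count <= MAX_INSTANCES[mode]:
        raise ValueError(f"{mode} mode allows 1-{MAX_INSTANCES[mode]} instances")
    return count


def distribute(requests, instance_count: int):
    """Assign requests to instances round-robin (an assumed strategy)."""
    next_instance = cycle(range(instance_count))
    assignment = {i: [] for i in range(instance_count)}
    for request in requests:
        assignment[next(next_instance)].append(request)
    return assignment


n = validate_instance_count("Shared", 3)
print(distribute(["r1", "r2", "r3", "r4"], n))
# {0: ['r1', 'r4'], 1: ['r2'], 2: ['r3']}
```

Note that consecutive requests from the same user can land on different instances, which is why the next point about multi-instance safety matters.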

To benefit from scale out operations, your site must be multi-instance safe. Writing a multi-instance safe site is beyond the scope of this post, but please refer to MSDN resources for .NET languages, such as http://msdn.microsoft.com/en-us/library/3e8s7xdd.aspx
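One common way a site fails to be multi-instance safe is by keeping per-user state in the memory of a single instance. This language-neutral Python sketch (the `WebInstance` class and the shopping-cart example are hypothetical) shows the failure and the usual fix: moving that state to a store shared by all instances.

```python
class WebInstance:
    """One running copy of a site; `session_store` holds its session data."""

    def __init__(self, session_store: dict) -> None:
        self.session_store = session_store

    def add_to_cart(self, session_id: str, item: str) -> None:
        self.session_store.setdefault(session_id, []).append(item)

    def cart(self, session_id: str) -> list:
        return self.session_store.get(session_id, [])


# Not multi-instance safe: each instance keeps sessions in its own memory.
unsafe = [WebInstance({}), WebInstance({})]
unsafe[0].add_to_cart("user1", "book")  # first request lands on instance 0
print(unsafe[1].cart("user1"))          # [] : next request hits instance 1

# Multi-instance safe: every instance uses one shared store
# (standing in for a database, cache, or out-of-process session state).
shared = {}
safe = [WebInstance(shared), WebInstance(shared)]
safe[0].add_to_cart("user1", "book")
print(safe[1].cart("user1"))            # ['book']
```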

Scale up and scale out operations can be combined for a website to provide hybrid scaling. The same multi-instance considerations apply to this scenario.
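With hybrid scaling, aggregate capacity is simply the per-instance size multiplied by the instance count. A small Python sketch, using the Standard sizes listed earlier; the function name is hypothetical. Keep in mind that the total is spread across instances: a single request still runs on one instance of the chosen size.

```python
# Standard-mode sizes from the text: name -> (cores, memory in GB).
SIZES = {"Small": (1, 1.75), "Medium": (2, 3.5), "Large": (4, 7.0)}


def aggregate_capacity(size: str, instance_count: int) -> tuple:
    """Total cores and memory across all instances of one size."""
    cores, memory_gb = SIZES[size]
    return cores * instance_count, memory_gb * instance_count


print(aggregate_capacity("Medium", 3))  # (6, 10.5)
```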

Autoscaling and Scaling in Windows Azure PowerShell

In this blog post, I discussed the concepts involved in scaling up and scaling out in Windows Azure Web Sites, focusing on doing these tasks manually via the Management Portal; similar manual settings are also available in Visual Studio.

We have also added Autoscaling to Windows Azure Web Sites, allowing unattended changes to the scale up/scale out settings on your web site in response to demand.
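Conceptually, autoscaling evaluates a metric against a target range and adjusts the instance count within limits. The Python sketch below is a hypothetical rule of that kind: the CPU thresholds, the one-instance step, and the name `autoscale_decision` are assumptions for illustration, not the settings or algorithm Windows Azure Web Sites actually uses.

```python
def autoscale_decision(avg_cpu_percent: float, current: int,
                       target_low: float = 60.0, target_high: float = 80.0,
                       min_instances: int = 1, max_instances: int = 10) -> int:
    """Return a new instance count under a hypothetical CPU target-range rule."""
    if avg_cpu_percent > target_high and current < max_instances:
        return current + 1  # under load: scale out by one instance
    if avg_cpu_percent < target_low and current > min_instances:
        return current - 1  # demand dropped: scale back in by one
    return current          # within the target range or at a limit


print(autoscale_decision(92, 2))   # 3
print(autoscale_decision(30, 2))   # 1
print(autoscale_decision(70, 2))   # 2
print(autoscale_decision(95, 10))  # 10: already at the maximum
```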

In addition, Windows Azure PowerShell supports some scaling operations, as well as versatile control of your site and subscription.

Final Thoughts

Windows Azure Web Sites allows you to develop, deploy, and test a web site or web app at low, or even no cost, and seamlessly scale that site all the way up to a more production-ready configuration, and then to scale further in a cost-effective way.

In this blog post I focused on scaling up and scaling out your web site, but keep in mind that your site is potentially only one part of a more complex application that uses other components, such as databases, data feeds, storage, or third-party Web APIs. Each of these components has its own scale operations and should be taken into consideration when evaluating your scaling options.

Scaling your web sites will have cost implications, of course. An easy way to estimate your costs, and the impact a given scale operation will have on your wallet, is to use the Azure Pricing Calculator.
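For a back-of-the-envelope check before opening the calculator, the structure of the estimate is compute (instances × hourly rate × hours) plus egress beyond the included 5 GB. In this Python sketch the hourly and per-GB rates are placeholders, not actual prices; take real rates from the Pricing Calculator.

```python
def estimate_monthly_cost(instances: int, hourly_rate: float, hours: float,
                          egress_gb: float, egress_rate_per_gb: float,
                          included_egress_gb: float = 5.0) -> float:
    """Rough monthly estimate; all rates are caller-supplied placeholders."""
    compute = instances * hourly_rate * hours
    billable_egress = max(0.0, egress_gb - included_egress_gb)
    return compute + billable_egress * egress_rate_per_gb


# Hypothetical rates: $0.10/instance-hour, $0.12/GB egress, 720 hours, 25 GB out.
print(round(estimate_monthly_cost(2, 0.10, 720, 25, 0.12), 2))  # 146.4
```

Doubling the instance count doubles the compute term, while egress cost depends only on traffic, so scale-out and traffic growth show up as separate line items.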

• Dave Bost (@davebost) continued his series with Moving a WordPress Blog to Windows Azure – Part 5: Moving From a Subfolder to the Root on 7/11/2013:

In Part 1, I created a new WordPress site hosted on Windows Azure. In Part 2, I transferred all of the relevant content from my old WordPress site to my new one hosted on Windows Azure. In Part 3, we made the necessary configuration changes at my domain registrar and in Windows Azure to have my custom domain (http://davebost.com) direct people to my new blog site on Windows Azure. In Part 4, I defined some URL Rewrite rules in a web.config file to handle the custom permalinks in my WordPress blog.

In four (somewhat) short steps, I’ve completely moved my WordPress blog over to Windows Azure! However, I’m not done yet. As part of this transition, I decided to finally move my blog out of a subfolder of my site (http://www.davebost.com/blog) to the root of my domain (http://www.davebost.com).

This made me nervous as I imagined all of the links scattered across the Interwebs that I’ve built up for the past 10 years suddenly blowing up. However, with a little plug-in magic and a new URL Rewrite redirection rule I was able to finally check off this task that’s been on my list for several years!

The Database Search/Replace Magic

WARNING! Before you do anything, make sure you have a backup of your WordPress site and WordPress database. See WordPress Backups for steps on how to protect yourself. I am not responsible for any catastrophic events that may take place.

The good news is you are migrating from an existing site, so you should be covered. Plus, short of completely deleting your database without a backup, any changes made should be easily remedied. But…you’ve been warned!

My WordPress content database is littered with relics of the past, namely various references to my old blog address (http://davebost.com/blog), from WordPress configuration settings to my post content. The recommended approach in the Moving WordPress documentation is to search for all references to the old address and replace them with the new one. According to this document, there are no fewer than 15 (!) steps to accomplish this task. Thankfully, the great folks over at interconnect/it have created a Search and Replace for WordPress Databases Script to accomplish this feat for us.
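One reason a purpose-built script beats a plain SQL REPLACE is that WordPress stores some options and widget settings as PHP-serialized values, where an `s:N:` prefix records the byte length of each string. Shortening a URL without updating N corrupts the value. The Python sketch below illustrates the idea only; it is not the interconnect/it script's code, the function name is hypothetical, and it rewrites string values only rather than fully parsing PHP serialization.

```python
import re

# Header of a PHP-serialized string: s:<byte length>:"
_STR_HEADER = re.compile(rb's:(\d+):"')


def replace_in_serialized(data: bytes, old: bytes, new: bytes) -> bytes:
    """Replace `old` with `new` inside serialized string values, rewriting
    each s:N: prefix to the new byte length. Other bytes are copied as-is."""
    out = bytearray()
    i = 0
    while i < len(data):
        m = _STR_HEADER.match(data, i)
        if m is None:
            out.append(data[i])  # not a string header: copy one byte
            i += 1
            continue
        length = int(m.group(1))
        start = m.end()
        end = start + length
        # A serialized string's declared length must be followed by '";'.
        if data[end:end + 2] != b'";':
            raise ValueError(f"malformed serialized string at offset {i}")
        value = data[start:end].replace(old, new)
        out += b's:%d:"' % len(value) + value + b'";'
        i = end + 2
    return bytes(out)


option = b'a:1:{s:7:"siteurl";s:24:"http://davebost.com/blog";}'
old, new = b"http://davebost.com/blog", b"http://davebost.com"

print(option.replace(old, new))  # naive: still claims s:24 for a 19-byte string
print(replace_in_serialized(option, old, new))
# b'a:1:{s:7:"siteurl";s:19:"http://davebost.com";}'
```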

If you’re running Windows, I recommend ‘unblocking’ the zip file after it’s downloaded and before you unzip it. Open up Windows Explorer and navigate to the folder containing the downloaded zip file. Right-click the zip file name and select Properties. On the ‘General’ tab, click the ‘Unblock’ button and click ‘OK’.

Unzip the file and upload the ‘searchreplacedb2.php’ script file to the root of your WordPress site.

Some helpful steps on how to upload files to your WordPress site using FTP can be found in Part 2 and Part 4.
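If you prefer scripting the upload to using an FTP client, Python's standard `ftplib` can do it. In this sketch the host name, user name, and password are placeholders for the values shown on your site's dashboard, and `/site/wwwroot` is assumed to be the web root on Windows Azure Web Sites; verify the folder in your FTP client first.

```python
from ftplib import FTP


def upload_file(ftp: FTP, local_path: str, remote_name: str) -> str:
    """Upload one file in binary mode; returns the server's reply line."""
    with open(local_path, "rb") as f:
        return ftp.storbinary(f"STOR {remote_name}", f)


# Placeholder values: copy the real ones from the Management Portal dashboard.
# with FTP("FTP-HOST-NAME-FROM-DASHBOARD") as ftp:
#     ftp.login(user="DEPLOYMENT-FTP-USER", passwd="YOUR-PASSWORD")
#     ftp.cwd("/site/wwwroot")  # assumed web root
#     print(upload_file(ftp, "searchreplacedb2.php", "searchreplacedb2.php"))
```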

Run the script by opening your favorite web browser and navigating to the location of the script file (i.e., http://davebost.com/searchreplacedb2.php).

Click the ‘Submit’ button to have the script retrieve your database connection settings as defined in your wp-config.php file.

On the ‘Tables’ step, I kept the defaults and selected Continue.

For my purposes, I chose to replace the following on the ‘What to replace?’ step:

Thankfully, I didn’t encounter any errors:

Once the script has finished running, don’t forget to DELETE THE SCRIPT FILE FROM YOUR SITE!

After a cursory scan of my blog content, everything seems to be in working order!

Handling 301 Redirects with a URL Rewrite Rule

Now that my site content has been updated with the new permalinks, what about all of those links to my content scattered across the Internet? Are they forever broken? Thankfully, with a little URL Rewrite magic, they’re not. The recommended approach for notifying search engines of this change and redirecting existing links is to use a 301 Redirect.

To handle this for my purposes, I added a Redirect rule to my <system.webServer> configuration section in my web.config file:

<system.webServer>
  <rewrite>
    <rules>
      <rule name="RedirectRule" stopProcessing="true">
        <match url="^blog/?(.*)$" ignoreCase="true" />
        <action type="Redirect" url="http://www.davebost.com/{R:1}"
                redirectType="Permanent" />
      </rule>
      …
    </rules>
  </rewrite>
</system.webServer>

Setting ‘redirectType’ to ‘Permanent’ identifies this as a 301 Permanent Redirect. In short, the match regular expression looks for request paths that begin with ‘blog’, captures everything following ‘blog/’ as {R:1}, and substitutes that captured text for {R:1} in the <action> element’s target URL. My rule defined in Part 4 for handling the custom permalinks in my WordPress site follows this RedirectRule definition.
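Before deploying a redirect rule, it can help to preview where old URLs will land. This Python sketch reuses the RedirectRule pattern, assuming (as IIS URL Rewrite does for `match url`) that the pattern is tested against the URL path without its leading slash or query string. Python's `re` and the IIS regex engine agree on this simple pattern; the function name `redirect_target` is hypothetical.

```python
import re

# Same pattern and flag as the RedirectRule above.
REDIRECT_PATTERN = re.compile(r"^blog/?(.*)$", re.IGNORECASE)


def redirect_target(path: str):
    """Return the 301 Location for a path (no leading slash), or None."""
    match = REDIRECT_PATTERN.match(path)
    if match is None:
        return None
    return "http://www.davebost.com/" + match.group(1)  # {R:1} is group 1


print(redirect_target("blog/2013/07/10/my-blog-post-entry/"))
# http://www.davebost.com/2013/07/10/my-blog-post-entry/
print(redirect_target("Blog"))      # http://www.davebost.com/
print(redirect_target("about"))     # None
print(redirect_target("blogroll"))  # http://www.davebost.com/roll
```

The last case shows a side effect worth checking: because the slash after ‘blog’ is optional, any top-level path that merely starts with ‘blog’ is redirected too.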

WE DID IT! In a few short steps we migrated a WordPress site over to Windows Azure with a couple of additional tweaks required for my particular purposes to move from a subfolder to the root of my domain. FINALLY!

What I’ve learned is that within a matter of a minute or so, I can stand up a WordPress site on Azure. And within an hour or two, I can migrate all of my data over from my old WordPress site to my new site hosted on Windows Azure.

I hope these instructions were valuable in your pursuit. Let me know how everything turns out in the comments.

GOOD LUCK!

Dave Bost (@davebost) continued his series with Moving a WordPress Blog to Windows Azure – Part 4: Pretty Permalinks and URL Rewrite rules on 7/11/2013:

We’re down to the last few, albeit most important, steps in this little project.

In Part 1, we created a new WordPress site hosted on Windows Azure. In Part 2, we transferred the content from our old site to the new one. In Part 3, we set up our custom domain with some DNS wizardry.

In this fourth step, I’m going to walk through the steps to make sure my custom permalinks are handled correctly in Windows Azure.

As with most blogs, I want to have “pretty permalinks”. I’m opting for something like http://mysite.com/2013/07/10/my-blog-post-entry over the less friendly http://mysite.com/?p=123. Chances are your permalinks are configured just the way you want them. After all, your entire existing WordPress site options and configurations were moved over as part of the data content transfer in Part 2.
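WordPress itself resolves pretty permalinks in PHP. As a simplified Python illustration only (not WordPress's WP_Rewrite code), here is how a path in the %year%/%monthnum%/%day%/%postname% structure breaks down into the year, monthnum, day, and name query variables. The web server has to hand these paths to WordPress's index.php for that parsing to happen, which is what the URL Rewrite rules in this post arrange.

```python
import re

# Assumed permalink structure: /%year%/%monthnum%/%day%/%postname%/
PRETTY = re.compile(r"^/(\d{4})/(\d{2})/(\d{2})/([^/]+)/?$")


def permalink_query(path: str):
    """Map a pretty permalink path to WordPress-style query variables."""
    m = PRETTY.match(path)
    if m is None:
        return None
    year, month, day, name = m.groups()
    return {"year": year, "monthnum": month, "day": day, "name": name}


print(permalink_query("/2013/07/10/my-blog-post-entry"))
# {'year': '2013', 'monthnum': '07', 'day': '10', 'name': 'my-blog-post-entry'}
print(permalink_query("/about/"))  # None
```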

However, if you’ve elected to go with any custom permalink format in WordPress other than the default, you’re going to need to set up some URL Rewrite rules.

Although your WordPress site hosted on Windows Azure is running PHP, it’s running PHP on an instance of Internet Information Services (IIS). Setting up URL Rewrite rules on an IIS server is slightly different from modifying an .htaccess file on an Apache instance.

URL Rewrite rules are defined in the web.config file in IIS.

That’s right. Even though we’re running a PHP website, web.config is still used for any server configuration items, including URL Rewrite rules.

Open up your favorite text editor and create a ‘web.config’ file with the following contents:

<?xml version="1.0" encoding="utf-8" ?>
<configuration>
  <system.webServer>
    <rewrite>
      <rules>
        <rule name="Main Rule" stopProcessing="true">
          <match url=".*" />
          <conditions logicalGrouping="MatchAll">
            <add input="{REQUEST_FILENAME}" matchType="IsFile" negate="true" />
            <add input="{REQUEST_FILENAME}" matchType="IsDirectory" negate="true" />
          </conditions>
          <action type="Rewrite" url="index.php" />
        </rule>
      </rules>
    </rewrite>
  </system.webServer>
</configuration>

Save the ‘web.config’ file and upload it to the root folder of your WordPress application. For my purposes, this is also the root of my website. As stated in Part 2, an easy way to upload files to your website is through FTP. The FTP HOST NAME and DEPLOYMENT / FTP USER information is available on the website’s dashboard in the Windows Azure Management Portal. You may have to reset your FTP login credentials if you haven’t already done so; you can do this by clicking the ‘Reset your deployment credentials’ link on your website’s Dashboard page.
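The ‘Main Rule’ boils down to one decision: if the requested path maps to a real file or directory, serve it; otherwise rewrite the request to index.php so WordPress can interpret the pretty URL. A minimal Python sketch of that decision, assuming `docroot` is the site's root folder on disk; the function name `route` is hypothetical.

```python
import os


def route(docroot: str, url_path: str) -> str:
    """Mimic the Main Rule: real files and directories are served as-is,
    anything else is rewritten (server-side) to index.php."""
    requested = os.path.join(docroot, url_path.lstrip("/"))
    if os.path.isfile(requested) or os.path.isdir(requested):
        return url_path   # a negated IsFile/IsDirectory condition fails
    return "index.php"    # both conditions hold (MatchAll): rewrite
```

Because this is a Rewrite rather than a Redirect, the browser keeps showing the original pretty URL while WordPress handles the request.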

More information on creating this URL Rewrite rule is available on the IIS Product site.

Assuming you have custom permalinks for your website, you should be up and running with your WordPress on Windows Azure now. CONGRATULATIONS!

However, that’s not the end of this article series. I chose to take a big leap during this transition to running my WordPress blog on Windows Azure and finally move my blog out of a subfolder (http://davebost.com/blog vs. http://davebost.com). We’ll cover that adventure next.

My (@rogerjenn) Uptime Report for My Live Windows Azure Web Site: June 2013 = 99.34% post of 7/9/2013 begins:

My Android MiniPCs and TVBoxes blog runs WordPress on WebMatrix with Super Cache on Windows Azure Web Site (WAWS) Preview and ClearDB’s MySQL database (Venus plan) in Microsoft’s West U.S. (Bay Area) data center. Service Level Agreements aren’t applicable to the Web Sites Preview; only sites with two or more Reserved Web Site instances qualify for the usual 99.95% uptime SLA.
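To put the percentages in perspective, an uptime figure converts to downtime minutes by multiplying the unavailable fraction by the minutes in the period. A small Python sketch, assuming the percentage covers all 30 days of June; the function name is hypothetical.

```python
def downtime_minutes(uptime_percent: float, days: int = 30) -> float:
    """Minutes of downtime implied by an uptime percentage over `days` days."""
    return (100.0 - uptime_percent) / 100.0 * days * 24 * 60


print(round(downtime_minutes(99.95), 1))  # 21.6  (what a 99.95% SLA allows)
print(round(downtime_minutes(99.34), 1))  # 285.1 (roughly 4.75 hours)
```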

I use Windows Live Writer to author posts that provide technical details of low-cost MiniPCs with HDMI outputs running Android Jelly Bean 4.1+. The site emphasizes high-definition 1080p video recording and rendition.

The site commenced operation on 4/25/2013. To improve response time, I implemented WordPress Super Cache on May 15, 2013.

Here’s Pingdom’s summary report for June 2013:

And continues with detailed downtime and response time reports.


Windows Azure Cloud Services, Caching, APIs, Tools and Test Harnesses