Windows Azure and Cloud Computing Posts for 9/24/2009+

Windows Azure, Azure Data Services, SQL Azure Database and related cloud computing topics now appear in this weekly series.

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Table and Queue Services

- SQL Azure Database (SADB)

- .NET Services: Access Control, Service Bus and Workflow

- Live Windows Azure Apps, Tools and Test Harnesses

- Windows Azure Infrastructure

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use these links, first click the post title to display the single article, then navigate within it.

Tip: Copy •, •• or ••• to the clipboard, press Ctrl+F and paste into the search text box to find updated articles.

••• Update 9/27/2009: Added links to live Azure demo projects to my “Cloud Computing with the Windows Azure Platform” Short-Form Table of Contents and SQL Azure Chapters post of 9/26/2009, David Linthicum on MDM for cloud-based data, Krishnan Subramanian on EC2 growth, Andrea Di Maio on social sites and government record-keeping and Simon Wardley muses If Sauron was the Microsoft Cloud Strategist.

•• Update 9/26/2009: New Azure TechNet Events 9/29/2009, Chris Hoff (@Beaker) strikes a blow against the Operating System, Neil Ward-Dutton’s cloud research report and slide deck, and more. My “Cloud Computing with the Windows Azure Platform” Short-Form Table of Contents and SQL Azure Chapters post provides details about the book’s SQL Azure content.

• Update 9/25/2009: Steve Marx says in a comment that BizSpark includes Azure also, Bruno Terkaly interviews Juval Lowy about EnergyNet, Jim Nakashima uses the Web Platform Installer to set up Azure tools, Michael Arrington interviews Steve Ballmer and elicits an explanation of “three screens and a cloud”, James Hamilton delivers props to Microsoft for its Dublin data center, and more.

Cloud Computing with the Windows Azure Platform published 9/21/2009. Order today from Amazon.

Read the detailed TOC here (PDF). Download the sample code here. Discuss the book on its WROX P2P Forum.

Azure Blob, Table and Queue Services

••• My “Cloud Computing with the Windows Azure Platform” Short-Form Table of Contents and SQL Azure Chapters post of 9/26/2009 now has links to six live Azure demo projects from five chapters:

Instructions and sample code for creating and running the following live demonstration projects are contained in the chapter indicated:

- Chapter 4: OakLeaf Systems Azure Table Services Sample Project

- Chapter 4: OakLeaf Systems Azure Blob Services Test Harness

- Chapter 5: OakLeaf Systems Azure Table Test Harness (Secure Sockets Layer, Personally Identifiable Data Encrypted with AES-128)

- Chapter 6: OakLeaf Systems Azure Table Test Harness: HTTPS, Encrypted, with ASP.NET Membership Provider

- Chapter 7: OakLeaf Systems Azure Test Harness with Windows Live ID Authentication

- Chapter 8: OakLeaf Systems: Photo Gallery Azure Queue Test Harness

These sample projects will remain live as long as Microsoft continues to subsidize Windows Azure demonstration accounts. See my Lobbying Microsoft for Azure Compute, Storage and Bandwidth Billing Thresholds for Developers post of 9/8/2009.

Eric Nelson has posted a preliminary slide deck for his Design considerations for storing data in the cloud with Windows Azure session, to be presented at the Software Architect 2009 conference on 9/30 at 2:00 PM in the Royal College of Physicians, London:

The Microsoft Azure Services Platform includes not one but two (arguably three) ways of storing your data. In this session we will look at the implications of Windows Azure Storage and SQL Data Services on how we will store data when we build applications deployed in the Cloud. We will cover “code near” vs “code far”, relational vs non-relational, blobs, queues and more

<Return to section navigation list>

SQL Azure Database (SADB, formerly SDS and SSDS)

Amit Piplani’s Multi Tenant Database comparison post of 9/23/2009 distinguishes Separate Databases; Shared Database, Separate Schema; and Same Database, Same Schema models by the following characteristics:

- Security

- Extension

- Data Recovery

- Costs

- When to use

Amit’s Multi-Tenancy Security Approach post of the same date goes into more detail about security for multi-tenant SaaS projects.
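The three models Amit compares differ mainly in where tenant isolation lives. The following is a minimal sketch of that difference, not code from Amit's posts. It assumes Python's built-in sqlite3 module as a stand-in for SQL Azure, uses ATTACH DATABASE to approximate per-tenant schemas, and invents the `orders` table and the tenant names purely for illustration.

```python
import sqlite3

ORDERS_DDL = "CREATE TABLE {prefix}orders (id INTEGER PRIMARY KEY, item TEXT)"

def separate_databases(tenants):
    """Separate Databases: one database per tenant (one in-memory DB each)."""
    conns = {}
    for tenant in tenants:
        conn = sqlite3.connect(":memory:")
        conn.execute(ORDERS_DDL.format(prefix=""))
        conns[tenant] = conn
    return conns

def separate_schemas(tenants):
    """Shared Database, Separate Schema: ATTACH stands in for a schema here."""
    conn = sqlite3.connect(":memory:")
    for tenant in tenants:
        # Schema names cannot be bound as parameters, so validate them first.
        if not tenant.isidentifier():
            raise ValueError(f"unsafe tenant name: {tenant!r}")
        conn.execute(f"ATTACH DATABASE ':memory:' AS {tenant}")
        conn.execute(ORDERS_DDL.format(prefix=f"{tenant}."))
    return conn

def shared_schema():
    """Same Database, Same Schema: a tenant_id column separates the rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders ("
        "id INTEGER PRIMARY KEY, tenant_id TEXT NOT NULL, item TEXT)"
    )
    return conn

def shared_orders_for(conn, tenant_id):
    """In the shared-schema model every query must filter on tenant_id."""
    rows = conn.execute(
        "SELECT item FROM orders WHERE tenant_id = ? ORDER BY id", (tenant_id,)
    )
    return [row[0] for row in rows]
```

The trade-off shows up in the last function: the shared-schema model is the cheapest to host, but isolation depends on every query carrying the tenant filter, whereas the other two models enforce it through the connection or the schema name.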

<Return to section navigation list>

.NET Services: Access Control, Service Bus and Workflow

• Bruno Terkaly interviews Juval Lowy – The EnergyNet and the next Killer App in this 00:09:23 Channel 9 video posted on 9/23/2009:

Many people love to speculate on what the next Killer App might be. Juval Lowy will use his ideal killer app as the basis of his upcoming workshop at PDC09 on November 16th. He’d like to think that the EnergyNet … might provide such a cohesive blending of commercial and residential energy use, the devices which consume that energy and the resources that provide it, that this will become an important piece of our daily lives. It will save money, save energy, and perhaps even save the planet.

In his workshop, he is going to illustrate how the notion of an EnergyNet is made possible through technologies such as Windows Communication Foundation and the .NET Service Bus. [Emphasis added]

The Channel 9 description page includes links for more information on the workshop and more details on EnergyNet.

It’s not all skittles and beer for the SmartMeters that Pacific Gas & Electric Co. (PG&E), our Northern California utility, is installing to measure watt-hours on an hourly basis, as Lois Henry reports in her 'SmartMeters' leave us all smarting article of 9/12/2009 in The Bakersfield Californian:

… Hundreds of people in Bakersfield and around the state reported major problems since Pacific Gas & Electric started installing so-called smart meters two years ago. Complaints have spiked as the utility began upgrading local meters with even "smarter" versions.

It's not just the bills, many of which have jumped 100, 200 -- even 400 percent year to year after the install. It's also problems with the online monitoring function and the meters themselves, which have been blowing out appliances, something I was initially told they absolutely could not do. …

<Return to section navigation list>

Live Windows Azure Apps, Tools and Test Harnesses

••• Jayaram Krishnaswamy’s Two great tools to work with SQL Azure post of 9/24/2009 gives props to SQL Azure Manager and SQL Azure Migration Wizard as “two great tools to work with SQL Azure.” Jayaram continues:

SQL Azure Migration Wizard is a nice tool. It can connect to (local)Server as well as it supports running scripts. I tried running a script to create 'pubs' on SQL Azure. It did manage to bring in some tables and not all. It does not like 'USE' in SQL statements (to know what is allowed and what is not you must go to MSDN). For running the script I need to be in Master(but how?, I could not fathom). I went through lots of "encountered some problem, searching for a solution" messages. On the whole it is very easy to use tool.

George Huey applied some fixes to his MigWiz as described in my Using the SQL Azure Migration Wizard with the AdventureWorksLT2008 Sample Database post (updated on 9/21/2009). With these corrections and a few tweaks, MigWiz imported the schema and data for the AdventureWorksLT2008 database into SQL Azure with no significant problems.
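Jayaram's observation that a script containing 'USE' fails suggests pre-filtering scripts before running them against SQL Azure. The sketch below is one way to do that, assuming a line-oriented T-SQL script; `strip_use_statements` is a hypothetical helper of my own, not part of MigWiz or any Microsoft tool, and it handles only USE statements that occupy a line by themselves.

```python
import re

# Matches a line consisting solely of "USE name" or "USE [name]",
# with an optional trailing semicolon, in any letter case.
USE_STATEMENT = re.compile(r"^\s*USE\s+(\[[^\]]+\]|\w+)\s*;?\s*$", re.IGNORECASE)

def strip_use_statements(script: str) -> str:
    """Return the script with stand-alone USE statements removed."""
    kept = [line for line in script.splitlines() if not USE_STATEMENT.match(line)]
    return "\n".join(kept)
```

With the USE lines gone, the target database has to be selected in the connection string instead.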

• Jim Nakashima describes Installing the Windows Azure Tools using the Web Platform Installer in this 9/24/2009 post:

Today, the IIS team released the Web Platform Installer 2.0 RTW. Among the many cool new things (more tools, new applications, and localization to 9 languages) is the inclusion of the Windows Azure Tools for Microsoft Visual Studio 2008.

Install the Windows Azure Tools for Microsoft Visual Studio 2008 using the Web Platform Installer.

Why should you care? As many of you know, before using the Windows Azure Tools, you need to install and configure IIS, which requires figuring out how to do that and following multiple steps. The Web Platform Installer (we call it the WebPI) makes installing the Tools, SDK and IIS as simple as clicking a few buttons.

• Charles Babcock claims the Simple API Is Part Of A Rising And Open Tide To The Cloud in his 9/24/2009 post to InformationWeek’s Cloud Computing Weblog:

What's notable about the open source project announced yesterday, Simple API for cloud computing, are the names that are present, IBM, Microsoft and Rackspace, and the names that are not: Amazon, for one, is not a backer, and let's just stop right there.

The Simple API for Cloud Applications is an interface that gives enterprise developers and independent software vendors a target to shoot for if they want an application to work with different cloud environments. It is not literally a cross cloud API, enabling an application to work in any cloud. Such a thing does not exist, yet.

You can read more about Zend’s Simple Cloud API and Zend Cloud here.

Jim Nakashima’s Using WCF on Windows Azure post of 9/23/2009 announces:

Today, the WCF team released a patch that will help you if you are using WCF on Windows Azure.

Essentially, if you use the "Add Service Reference..." feature in Visual Studio or svcutil to generate WSDL for a service that is hosted on Windows Azure either locally or in the cloud, the WSDL would contain incorrect URIs.

The problem has to do with the fact that in a load balanced scenario like Windows Azure, there are ports that are used internally (behind the load balancer) and ports that are used externally (i.e. internet facing). The internal ports were showing up in the URIs.

Also note that this patch is not yet in the cloud, but will be soon. i.e. it will only help you in the Development Fabric scenario for now. (Will post when the patch is available in the cloud.)

While we're on the topic of patches, please see the list of patches related to Windows Azure.

The latter link is to the Windows Azure Platform Downloads page, which also offers an Azure training kit, tools, hotfixes, and SDKs.
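To make the internal-versus-external port problem concrete, here is a minimal sketch of the kind of URI correction involved. It is not how the WCF patch works internally; the function name, the addresses and the port numbers are all assumptions chosen for illustration.

```python
from urllib.parse import urlsplit, urlunsplit

DEFAULT_PORTS = {"http": 80, "https": 443}

def externalize(uri: str, external_host: str, external_port: int) -> str:
    """Replace the internal host and port in a URI with the public-facing pair."""
    parts = urlsplit(uri)
    # Omit the port when it is the default for the scheme.
    if external_port == DEFAULT_PORTS.get(parts.scheme):
        netloc = external_host
    else:
        netloc = f"{external_host}:{external_port}"
    return urlunsplit((parts.scheme, netloc, parts.path, parts.query, parts.fragment))
```

A client that reads the uncorrected address from the WSDL would try to reach a port that exists only behind the load balancer, which is why generated proxies failed before the patch.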

<Return to section navigation list>

Windows Azure Infrastructure

••• Simon Wardley imagines If Sauron was the Microsoft Cloud Strategist in this 9/27/2009 scenario:

Back in March 2008, I wrote a post which hypothesised that a company, such as Microsoft, could conceivably create a cloud environment that meshes together many ISPs / ISVs and end consumers into a "proprietary" yet "open" cloud marketplace and consequently supplant the neutrality of the web.

This is only a hypothesis and the strategy would have to be straight out of the "Art of War" and attempt to misdirect all parties whilst the ground work is laid. Now, I have no idea what Microsoft is planning but let's pretend that Sauron was running their cloud strategy. …

With the growth of the Azure marketplace and applications built in this platform, a range of communication protocols will be introduced to enhance productivity in both the office platform (which will increasingly be tied into the network effect aspects of Azure) and Silverlight (which will be introduced to every device to create a rich interface). Whilst the protocols will be open, many of the benefits will only come into effect through aggregated & meta data (i.e. within the confines of the Azure market). The purpose of this approach, is to reduce the importance of the browser as a neutral interface to the web and to start the process of undermining the W3C technologies. …

Following such a strategy, then it could be Game, Set and Match to MSFT for the next twenty years and the open source movement will find itself crushed by this juggernaut. Furthermore, companies such as Google, that depend upon the neutrality of the interface to the web will find themselves seriously disadvantaged. …

Seems to me to be a more likely strategy for SharePoint Server, especially when you consider MOSS is already a US$1 billion business.

••• James Hamilton’s Web Search Using Small Cores post of 9/27/2009 begins:

I recently came across an interesting paper that is currently under review for ASPLOS. I liked it for two unrelated reasons: 1) the paper covers the Microsoft Bing Search engine architecture in more detail than I’ve seen previously released, and 2) it covers the problems with scaling workloads down to low-powered commodity cores clearly. I particularly like the combination of using important, real production workloads rather than workload models or simulations and using that base to investigate an important problem: when can we scale workloads down to low power processors and what are the limiting factors?

and continues with an in-depth analysis of the capability of a Fast Array of Wimpy Nodes (FAWN) and the like to compete on a power/performance basis with high-end server CPUs.

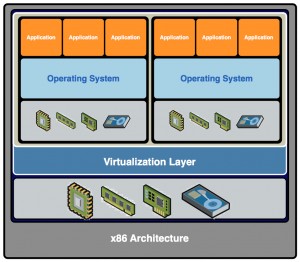

•• Chris Hoff (@Beaker) strikes a blow against the Operating System in his Incomplete Thought: Virtual Machines Are the Problem, Not the Solution… post of 9/25/2009:

Virtual machines (VMs) represent the symptoms of a set of legacy problems packaged up to provide a placebo effect as an answer that in some cases we have, until lately, appeared disinclined and not technologically empowered to solve.

If I had a wish, it would be that VM’s end up being the short-term gap-filler they deserve to be and ultimately become a legacy technology so we can solve some of our real architectural issues the way they ought to be solved.

That said, please don’t get me wrong, VMs have allowed us to take the first steps toward defining, compartmentalizing, and isolating some pretty nasty problems anchored on the sins of our fathers, but they don’t do a damned thing to fix them.

VMs have certainly allowed us to (literally) “think outside the box” about how we characterize “workloads” and have enabled us to begin talking about how we make them somewhat mobile, portable, interoperable, easy to describe, inventory and in some cases more secure. Cool.

There’s still a pile of crap inside ‘em.

What do I mean?

There’s a bloated, parasitic resource-gobbling cancer inside every VM. For the most part, it’s the real reason we even have mass market virtualization today.

It’s called the operating system:

•• Neil Ward-Dutton’s Exploring the business value of Cloud Computing “Strategic Insight” is a 15-page research report of 9/23/2009 for MWD Advisors that carries this abstract:

2009 has been the year in which Cloud Computing entered mainstream industry consciousness. Cloud computing – a model of technology provision where capacity on remotely hosted, managed computing platforms is made publicly available and rented to multiple customers on a self-service basis – is on every IT vendor’s agenda, as well as entering the research agendas of many CIOs.

But how does Cloud Computing really deliver business value to your organisation, and what kinds of scenarios are best suited to it? What’s the real relationship between “Public” and “Private” Cloud offerings in value terms? This report answers these questions.

See also Neil’s 13 slides from his Articulating the value of Cloud Computing presentation of 9/25/2009 at Microsoft’s Cloud Architect Forum, London.

• Michael Arrington interviews Steve Ballmer and elicits an explanation of “three screens and a cloud” in this lengthy Exclusive Interview With Steve Ballmer: Products, Competition, The Road Ahead post of 9/24/2009, which includes a full transcript:

On Microsoft’s “three screens and the cloud” strategy: Ballmer says it’s a “fundamental shift in the computing paradigm.” He added “We used to talk about mainframe computer, mini computer, PC computing, client server computing, graphical computing, the internet; I think this notion of three screens and a cloud, multiple devices that are all important, the cloud not just as a point of delivery of individual applications, but really as a new platform, a scale-out, very manageable platform that has services that span security contacts, I think it’s a big deal.”

• Steve Marx said “[T]he Windows Azure Platform is part of BizSpark as well” [as WebsiteSpark] in a comment to this post. Paul Krill wrote a “Microsoft launches BizSpark to boost Azure” article on 11/5/2008 for InfoWorld:

Looking to boost Web-based ventures and its new Windows Azure cloud services platform, Microsoft on Wednesday is announcing Microsoft BizSpark, a program providing software and services to startups.

"The cornerstone [of the program] is to get into the hands of the startup community all of our development tools and servers required to build Web-based solutions," said Dan'l Lewin, corporate vice president of Strategic and Emerging Business Development at Microsoft. Participants around the globe also gain visibility and marketing, Lewin said.

BizSpark will be leveraged as an opportunity to boost the Azure platform, with participants having access to the Azure Services Platform CTP (Community Technology Preview) introduced last week.

"We expect many of them will be taking advantage of cloud services," as part of their company creation, Lewin said.

Steve observed in his message to me: … “You don’t see it in the offering now because Windows Azure hasn’t launched yet (and is free for everyone).” But the Azure Services Platform CTP included with BizSpark hadn’t launched in November 2008 and was “free for everyone” also.

The question, of course, is “How many free instances of Windows Azure and SQL Azure will WebsiteSpark (and BizSpark) participants receive?”

Scott Guthrie’s Announcing the WebsiteSpark Program post of 9/24/2009 and the WebsiteSpark site list lotsa swag “for independent web developers and web development companies that build web applications and web sites on behalf of others”:

- 3 licenses of Visual Studio 2008 Professional Edition

- 1 license of Expression Studio 3 (which includes Expression Blend, Sketchflow, and Web)

- 2 licenses of Expression Web 3

- 4 processor licenses of Windows Web Server 2008 R2

- 4 processor licenses of SQL Server 2008 Web Edition

- DotNetPanel control panel (enabling easy remote/hosted management of your servers)

However, Scott’s ASP.NET marketing team didn’t mention:

- Several instances of the Windows Azure Platform (including .NET Services)

- A couple instances of SQL Azure

I’ve been Lobbying Microsoft for Azure Compute, Storage and Bandwidth Billing Thresholds for Developers since early September but haven’t received any support from the Azure team.

Steve Marx told me in a message this morning (Thursday):

I believe Windows Azure and SQL Azure will be included in WebsiteSpark. You don’t see it in the offering now because Windows Azure hasn’t launched yet (and is free for everyone).

It seems to me that being upfront about Windows Azure and SQL Azure swag, including the number of instances, would “incentivize” a large number of Web developers and designers. (Would you believe the Windows Live Writer spell checker likes “incentivize?”)

How about including some Azure swag with BizSpark signups, too?

Mary Jo Foley’s Microsoft makes Web development tools available for free and Brier Dudley’s Microsoft giving free tools -- lots of them -- to woo Web designers posts of the same date throw more light on the WebsiteSpark program.

• James Hamilton delivers props to Microsoft for its Dublin data center in his Chillerless Data Center at 95F post of 9/24/2009:

This is 100% the right answer: Microsoft’s Chiller-less Data Center. The Microsoft Dublin data center has three design features I love: 1) they are running evaporative cooling, 2) they are using free-air cooling (air-side economization), and 3) they run up to 95F and avoid the use of chillers entirely. All three of these techniques were covered in the best practices talk I gave at the Google Data Center Efficiency Conference (presentation, video). …

Microsoft General Manager of Infrastructure Services Arne Josefsberg blog entry on the Dublin facility: http://blogs.technet.com/msdatacenters/archive/2009/09/24/dublin-data-center-celebrates-grand-opening.aspx.

In a secretive industry like ours, it’s good to see a public example of a high-scale data center running hot and without chillers. Good work Microsoft.

Microsoft EMEA PR announced Microsoft Expands Cloud Computing Capabilities & Services in Europe on 9/24/2009:

Microsoft today announced the opening of its first ‘mega data centre’ in Europe to meet continued growth in demand for its Online, Live and Cloud services. The $500 million total investment is part of Microsoft’s long-term commitment in the region, and is a major step in realising Microsoft’s Software plus Services strategy.

The data centre is the next evolutionary step in Microsoft’s commitment to thoughtfully building its cloud computing capacity and network infrastructure throughout the region to meet the demand generated from its Online, Live Services and Cloud Services, such as Bing, Microsoft Business Productivity Online Suite, Windows Live, and the Azure Services Platform. [Emphasis added.]

UK, Irish and European Windows Azure and, presumably, SQL Azure users should find a substantial latency reduction as they move their projects’ location to the new Dublin data center.

Steve Clayton reported from Dublin at the data center’s opening in his I’ve seen the cloud…it lives in Dublin of 9/24/2009:

I’m in sunny Dublin today (yep, it’s sunny here) for the grand opening of Microsoft’s first “mega datacenter” outside of the US. What you may ask is a mega datacenter? Well basically it’s an enormous facility from which we’ll deliver our cloud services to customers in Europe and beyond.

I had the chance to check the place out last month and have a full tour and it’s incredible. Okay there isn’t much to see but that’s sort of the point. It’s this big information factory that is on a scale that you’ll not see in many other places in the world and run with an astonishing level of attention to detail.

It’s also quite revolutionary and turns out to be our most efficient data center thus far. Efficiency is measured by something called PUE that essentially looks at how much power you use vs the power you consume. The ultimate PUE of course is 1.0 though the industry average is from 2-2.4. Microsoft’s data centers on average run at 1.6 PUE but this facility takes that down to 1.25 through use of some smart technology called “air”. Most datacenters rely on chillers and a lot of water to keep the facility cool – because of the climate in Dublin, we can use fine, fresh, Irish air to do the job which has significant benefits from an environmental point of view. Put simply, it saves 18 million litres of water each month.
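Steve's description of PUE is loose: Power Usage Effectiveness is total facility power divided by the power delivered to the IT equipment, so 1.0 would mean no cooling or distribution overhead at all. The sketch below works through the figures he quotes; the 1,000 kW IT load is my own assumption, chosen only to make the arithmetic easy to follow.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw

def overhead_kw(it_equipment_kw: float, pue_value: float) -> float:
    """Power spent on cooling, distribution losses, etc. for a given IT load."""
    return it_equipment_kw * (pue_value - 1.0)

# Assumed IT load of 1,000 kW, compared at the two PUE values quoted above.
IT_LOAD_KW = 1000.0
microsoft_average = overhead_kw(IT_LOAD_KW, 1.6)   # roughly 600 kW of overhead
dublin = overhead_kw(IT_LOAD_KW, 1.25)             # 250 kW of overhead
```

On those assumptions the Dublin design spends less than half as much power on overhead as a facility running at Microsoft's quoted average of 1.6.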

David Chou claims Infrastructure-as-a-Service Will Mature in 2010 in this brief interview of 9/24/2009 with the Azure Cloud OS Journal’s Jeremy Geelan: “Chou speaks out on where he thinks Cloud Computing will make its impact most noticeably looking forwards”:

While acknowledging that lots of work is currently being done to differentiate and integrate private and public cloud solutions, Microsoft Architect David Chou believes that Infrastructure-as-a-service (IaaS) is the area of Cloud Computing that will make its impact most noticeably in 2010 - especially for startups, and small-medium sized businesses.

David Chou is a technical architect at Microsoft, focused on collaborating with enterprises and organizations in areas such as cloud computing, SOA, Web, distributed systems, security, etc., and supporting decision makers on defining evolutionary strategies in architecture.

What about Platform as a Service, Azure’s strong suit? When does PaaS mature, David?

Microsoft bloggers Jeff Barnes, Zhiming Xue, Bill Zack and Bob Familiar bring you the new Innovation Showcase Web site that Bob says is “devoted to keeping you informed of the great solutions being created by our customers and partners using Windows 7, Windows Azure, Silverlight 3 and Internet Explorer 8.”

So far, I haven’t seen any examples of showcased Windows Azure solutions, but maybe I didn’t look closely enough.

<Return to section navigation list>

Cloud Security and Governance

••• Tony Bishop starts a new series with his Enterprise-class clouds, part 1: Security and performance post of 9/23/2009 for InfoWorld:

The intent of the blogs is to provide the thought leadership for readers seeking to create a sound strategy for exploiting cloud computing for the enterprise.

••• Dave Kearns recommends checking out Forefront Identity Manager 2010 if you’re considering Azure in his Microsoft to release identity product of 9/22/2009 to NetworkWorld:

My primary interest at last week's European version of The Experts Conference was Microsoft's upcoming Forefront Identity Manager 2010. If you haven't been following closely, you might know this soon to release product as Identity Lifecycle Manager (ILM) "2" (I don't know why there are quote marks around the number 2, but it is always written that way).

Thus, FIM is the successor to ILM, which was the successor to Microsoft Identity Integration Server (MIIS), which was the successor to the Microsoft Metadirectory Service (MMS) and on and on. None of these, if memory serves, ever reached version 2. I used to complain about how Sun Microsystems would constantly tinker with the name of their directory product, but even they occasionally got out a version 2 (or higher). …

All in all, this is the best identity product I've seen from Microsoft since Cardspace. If you're heavily invested in Microsoft technology, if you're looking at Microsoft Azure for cloud computing possibilities or if you feel that Active Directory should be the basis of your organization's identity stack, then you should definitely look at Forefront Identity Manager 2010, and even download the release candidate to take it for a test drive. [Emphasis added.]

A quick review of the technical materials available on Microsoft’s FIM Web site doesn’t expose any references to its use with Windows Azure specifically.

••• Glenn Laffel, MD, PhD’s three-part series of Medical Data in the Internet “cloud” articles for Practice Fusion is now complete and well worth a read by anyone who plans to process Electronic Medical Records (EMR) or Personal Health Records (PHR) in the cloud.

••• Andrea Di Maio’s Governments on Facebook Are Off The Records post of 9/26/2009 discusses conflicts between the Watergate-inspired Presidential Records Act of 1978 and posts by administration officials to social networking sites:

On 19 September Macon Phillips, the White House Director of New Media, posted on the White House blog about Reality Check: The Presidential Records Act of 1978 meets web-based social media of 2009, addressing the important topic of how to interpret in social media terms a law passed after the Watergate issue to make sure that any record created or received by the President or his staff is preserved, archived by NARA (the National Archives and Records Administration), which in turn releases them to the public in compliance with the relevant privacy act.

There is one very important passage in Phillips’ post:

“The White House is not archiving all content or activity across social networks where we have a page – nor do we want to. The only content archived is what is voluntarily published on the White House’s official pages on these sites or what is voluntarily sent to a White House account.” …

••• David Linthicum posits MDM Becoming More Critical in Light of Cloud Computing in this 9/27/2009 post to the eBizQ blog:

Master data management (MDM) is one of those topics that everyone considers important, but few know exactly what it is or have an MDM program. "MDM has the objective of providing processes for collecting, aggregating, matching, consolidating, quality-assuring, persisting and distributing such data throughout an organization to ensure consistency and control in the ongoing maintenance and application use of this information." So says Wikipedia.

I think that the lack of MDM will become more of an issue as cloud computing rises. We're moving from complex federated on-premise systems, to complex federated on-premise and cloud-delivered systems. Typically, we're moving in these new directions without regard for an underlying strategy around MDM, or other data management issues for that matter. …

•• Hilton Collins summarizes governmental issues in his lengthy Cloud Computing Gains Momentum but Security and Privacy Issues Persist article of 9/25/2009 for the Government Technology site:

… "What tends to worry people [about cloud computing] are issues like security and privacy of data -- that's definitely what we often hear from our customers," said Chris Willey, interim chief technology officer of Washington, D.C.

Willey's office provides an internal, government-held, private cloud service to other city agencies, which allows them to rent processing, storage and other computing resources. The city government also uses applications hosted by Google in an external, public cloud model for e-mail and document creation capabilities. …

"Google has had to spend more money and time on security than D.C. government will ever be able to do," Willey said. "They have such a robust infrastructure, and they're one of the biggest targets on the Internet in terms of hacks and denial-of-service attacks." …

"If I have personally identifiable information -- credit cards, Social Security numbers -- I wouldn't use cloud computing," said Dan Lohrmann, Michigan's chief technology officer. "But if it's publicly available data anyway -- [like] pictures of buildings in downtown Lansing we're storing -- I might feel like the risk is less to use cloud computing for storage." …

• Jon Oltsik’s white paper, A Prudent Approach for Storage Encryption and Key Management, according to the GovInfoSecurity Web site, “cuts through the hype and provides recommendations to protect your organization's data, with today's budget. Oltsik shows you where to start, how to focus on the real threats to data, and what actions you can take today to make a meaningful contribution to stopping data breaches.” (Free site registration required.)

The white paper covers:

- What are the real threats to data today

- Where do you really need to encrypt data first

- How does key management fit into your encryption plans

- What shifts in the industry and vendor developments will mean to your storage environment and strategy

Jon is a Principal Analyst at ESG.

• John Pescatore’s Benchmarking Security – Are We Safe Yet? post of 9/24/2009 begins:

I still cringe at that scene in Marathon Man where Laurence Olivier puts Dustin Hoffman in the dentist chair and tortures him while asking “Is it safe??” In fact, now I cringe even more because it reminds me of so many conversations between CEOs/CIOs and CISOs: “OK, we gave you the budget increase. Is it safe now???”

Of course, safety is a relative thing. As the old saw says about what one hunter said to the other when they ran into the angry bear in the woods: “I don’t have to outrun the bear, I only have to outrun you.” Animals use “herd behavior” as a basic safety mechanism – humans call it “due diligence.” …

John is a Gartner analyst.

Amit Piplani’s Multi-Tenancy Security Approach post of 9/23/2009 goes into detail about security for multi-tenant SaaS projects. See the SQL Azure Database (SADB) section.

<Return to section navigation list>

Cloud Computing Events

•• TechNet Events presents Real World Azure – For IT Professionals (Part I) on 9/29/2009:

Cloud computing and, more specifically, Microsoft Azure are questions on the minds of IT professionals everywhere. What is it? When should I use it? How does it apply to my job? Join us as we review some of the lessons Microsoft IT has learned through Project Austin, an incubation project dogfooding the use of Microsoft Azure as a platform for supporting internal line-of-business applications.

In this event we will discuss why an IT operations team would want to pursue Azure as an extension to the data center as we review the Azure architecture from the IT professional’s point of view; discuss configuration, deployment and scaling Azure-based applications; review how Azure-based applications can be integrated with on-premise applications; and how operations teams can manage and monitor Azure-based applications.

We will additionally explore several specific Azure capabilities:

- The Azure roles (web, web service and worker)

- Azure storage options

- Azure security and identity options

If you are interested, we would like to invite you to our afternoon session for architects and developers. (See Below)

Project Austin is new to me.

When: 9/29/2009 6:30 AM to 10:00 AM PT (8:00 AM to 12:00 N CT)

Where: The Internet (Live Meeting) Click here to register.

•• TechNet Events presents Real World Azure – For Developers and Architects (Part II) on 9/29/2009:

In this event we will start by reviewing cloud computing architectures in general and the Azure architecture in particular. We will then dive deeper into several aspects of Azure from the developer’s and architect’s perspective. We will review the Azure roles (web, web service and worker); discuss several Azure storage options; review Azure security and identity options; review how Azure-based applications can be integrated with on-premise applications; discuss configuration, deployment and scaling Azure-based applications; and highlight how development teams can optimize their applications for better management and monitoring.

If you are interested, we would like to invite you to our morning session for IT Professionals (see above).

When: 9/29/2009 11:00 AM to 3:00 PM PT (1:00 PM to 5:00 PM CT)

Where: The Internet (Live Meeting) Click here to register.

•• Neil Ward-Dutton’s 13 slides from his Articulating the value of Cloud Computing presentation of 9/25/2009 at Microsoft’s Cloud Architect Forum, Cardinal Place, London provide an incisive overview of cloud computing services and architecture.

Neil is Research Director for MWD Advisors, which specializes in cloud-computing research. His The seven elements of Cloud Computing's value of 8/20/2009 provides more background on the session’s approach.

MWD offers an extensive range of research reports free-of-charge to Guest Pass subscribers. The research available as part of this free service provides subscribers with a solid foundation for IT-business alignment based on our unique perspective on key IT management competencies.

I’ve subscribed. See also his Exploring the business value of Cloud Computing “Strategic Insight” research report.

•• John Pironti will present a 1.5-hour Key Considerations for Business Resiliency webinar on 10/21/2009 and 11/9/2009 sponsored by the GovInfoSecurity site:

Organizations understand the need for Business Continuity and Disaster Recovery in the face of natural, man-made and pandemic disasters. But what about Business Resiliency, which brings together multiple disciplines to ensure minimal disruption in the wake of a disaster?

Register for this webinar to learn:

- How to assemble the Business Resiliency basics;

- How to craft a proactive plan;

- How to account for the most overlooked threats to sustaining your organization - and how to then test your plan effectively.

When: 10/21/2009 3:30 PM ET, 12:30 PM PT; 11/9/2009 10:00 AM ET, 7:00 AM PT

Where: Internet (Webinar.) Register here.

• Kevin Jackson’s Dataline, Lockheed Martin, SAIC, Unisys on Tactical Cloud Computing post of 9/25/2009 reports “that representatives from Lockheed Martin, SAIC, and Unisys will join me in a Tactical Cloud Computing "Power Panel" at SYS-CON's 1st Annual Government IT Conference & Expo in Washington DC on October 6, 2009”:

Tactical Cloud Computing refers to the use of cloud computing technology and techniques for the support of localized and short-lived information access and processing requirements.

When: 10/6/2009

Where: Hyatt Regency on Capitol Hill hotel, Washington DC, USA

Eric Nelson has posted a preliminary slide deck for his Design considerations for storing data in the cloud with Windows Azure session, to be presented at the Software Architect 2009 conference on 9/30/2009 at 2:00 PM in the Royal College of Physicians, London. For details, see the Azure Blob, Table and Queue Services section.

When: 9/30/2009 to 10/1/2009

Where: Royal College of Physicians, London, England, UK

Tech in the Middle will deliver a Day of Cloud conference on 10/16/2009:

Calling all software developers, leads, and architects. Join us for the day on Friday October 16, 2009 as we discuss the 'Cloud'. The day is focused on developers and includes talks on all the major cloud platforms: Google, Amazon, Sales Force & Microsoft.

Each talk will cover the basics for that platform. We will then delve into code, seeing how a solution is constructed. We cap off the day with a panel discussion. When we are done, you should have enough information to start your own experimentation. In 2010, you will be deploying at least one pilot project to a cloud platform. Kick off that investigation at Day of Cloud!

Agenda

- 7:30-8:30 AM Registration/Breakfast

- 8:30-10:00 AM Jonathan Sapir/Michael Topalovich: Salesforce.com

- 10:15-11:45 AM Wade Wegner: Azure

- 11:45-12:30 PM Lunch

- 12:30-2:00 PM Chris McAvoy: Amazon Web Services

- 2:15-3:45 PM Don Schwarz: Google App Engine

- 4:00-5:00 PM Panel

Early-bird tickets are $19.99; regular admission is $30.00. As of 9/24/2009, there were 28 tickets remaining.

When: 10/16/2009 8:00 AM to 5:00 PM CT

Where: Illinois Technology Association, 200 S. Wacker Drive, 15th Floor, Chicago, IL 60606 USA

Gartner Announces 28th Annual Data Center Conference December 1-4, 2009 at Caesars Palace, Las Vegas:

Providing comprehensive research, along with the opportunity to connect with the analysts who’ve developed it, the Gartner Data Center Conference is the premier source for forward-looking insight and analysis across the broad spectrum of disciplines within the data center. Our team of 40 seasoned analysts and guest experts provide an integrated view of the trends and technologies impacting the traditional Data Center.

The 7-track agenda drills-down to the level you need when it comes to next stage virtualization, cloud computing, power & cooling, servers and storage, cost optimization, TCO and IT operations excellence, aging infrastructures and the 21st-century data center, consolidation, workload management, procurement, and major platforms. Key takeaways include how to:

- Keep pace with future-focused trends like Green IT and Cloud Computing

- Increase agility and service quality while reducing costs

- Manage and operate a world-class data center operation with hyper-efficiency

When: 12/1/2009 to 12/4/2009

Where: Caesars Palace hotel, Las Vegas, NV, USA

<Return to section navigation list>

Other Cloud Computing Platforms and Services

••• Dina Bass wrote EMC chairman detects sea change in tech market for Bloomberg News but her detailed analysis ended up in the Worcester Telegram on 9/27/2009:

Joseph M. Tucci pulled EMC Corp. out of a two-year sales slump after the dot-com bust. Now he’s gearing up for round two: an industry shakeup that he expects to be even more punishing.

Tucci, 62, says the global economic crisis and a shift to a model where companies get computing power over the Internet will drown at least some of the biggest names in computing. …

… Tucci says, EMC, the world’s largest maker of storage computers, will hold on to its roughly 84 percent stake in VMWare Inc., the top maker of so-called virtualization software, which helps run data centers more efficiently. He plans to work more closely with Cisco Systems Inc. and said he will continue to make acquisitions. …

EMC is headquartered in Hopkinton, which is close to Worcester.

••• Michael Hickins explains How Cloud Computing Is Slowing Cloud Adoption in this 9/24/2009 post to the BNet.com blog:

There’s the cloud, and then there’s the cloud. The first cloud everyone talked about was really software-as-a-service (SaaS), a method for delivering applications over the Internet (the cloud) more effectively and cheaply than traditional implementations installed behind corporate firewalls, as exemplified by the likes of Salesforce.com, Successfactors, NetSuite and many others.

Then along came this other cloud, the infrastructure that you could rent by the processor, by the gigabyte of storage, and by the month, and which would expand and contract dynamically according to your needs, which Amazon, Microsoft, IBM and many other vendors offer. …

Now, however, there’s another option for enterprise IT, which is to run applications in the cloud but continuing to use the applications that have already been customized for your purposes and enjoy widespread adoption within the organization. As Lewis put it, cloud infrastructure “allows you to take your custom applications that are sticky within your organization and put them into a cloud environment. …

That might not have been a primary motivation for Microsoft to start offering Azure, its cloud infrastructure play, but I’m sure that staving off threats to its enterprise applications business went into its thinking.

••• Krishnan Subramanian analyzes Guy Rosen’s 9/21/2009 Anatomy of an Amazon EC2 Resource ID post in his Amazon EC2 Shows Amazing Growth post of 9/27/2009:

Amazon EC2, the public cloud service offered by Amazon, has been growing at an amazing rate. From their early days of catering to startups, they have grown to have diverse clients from individuals to enterprises. Guy Rosen, the cloud entrepreneur who tracks the state of the cloud, has done some research on the resource identifier used by Amazon EC2 and come up with some interesting stats. I thought I will add it here at Cloud Ave for the benefit of our readers.

- During one 24 hour period in the US East - 1 region, 50,242 EC2 instances were requested

- During the same period, 12,840 EBS volumes were requested

- And, 30,925 EBS snapshots were requested

However, the most interesting aspect of Guy Rosen's analysis is his calculation that 8.4 million EC2 instances have been launched since the launch of Amazon EC2. These are pretty big numbers showing success for cloud based computing. Kudos to Amazon for the success. …

Krishnan goes on to criticize Amazon’s EC2 pricing in a similar vein to my complaints about Windows Azure not being priced competitively with the Google App Engine for light traffic.

•• Charles Babcock’s Develop Once, Then Deploy To Your Cloud Of Choice post of 9/25/2009 to InformationWeek’s Cloud Computing blog begins:

IBM's CTO of Cloud Computing, Kristof Kloeckman, says IBM has demonstrated software engineering as a cloud process. At the end of the process, a developer deploys his application to the cloud of choice. As of today, that cloud better be running VMware virtual machines. In the future, the choice may be broader.

One of the obstacles to cloud computing is the difficulty of deploying a new application to the cloud. If that process could be automated, it would remove a significant barrier for IT managers who want to deploy workloads in the cloud.

IBM, with years of experience in deploying virtualized workloads, is attacking the problem from the perspective of cloud computing. In China, it now has two locations where software development in the cloud is being offered as a cloud service, one in a computing center in the city of Wuxi and another in the city of Dongying.

•• Maureen O’Gara reports IBM’s Linux-Based ‘Cloud-in-a-Box’ Makes its First Sale to Dongying city and analyzes the apparent half-million US$ sale of two racks in this 9/26/2009 post:

… Taking a page out of Cisco’s book – from the chapter on so-called smart cities – IBM late Thursday announced that the city of Dongying near China’s second-largest oil field in the midst of the Yellow River Delta is going to build a cloud to promote e-government and support its transition from a manufacturing center to a more eco-friendly services-based economy.

Dongying, which can turn the widgetry into a revenue generator, means to use the cloud to jumpstart new economic development in the region.

IBM has sold the Dongying government on its scalable, redundant, pre-packaged CloudBurst 1.1 solution – effectively an instant “cloud-in-a-box” – that IBM is peddling as the basis of its Smart City blueprint around China and elsewhere. …

CloudBurst is priced to start at $220,000. Dongying is starting with two racks.

• Eric Engleman reports Amazon explores partnership with Apptis on federal cloud in this 9/25/2009 post to his Amazon Blog for the Puget Sound Business Journal (PSBJ):

Amazon.com is clearly interested in finding government customers for its cloud computing services. The ecommerce giant has been quietly building an operation in the Washington, D.C. area and Amazon Chief Technology Officer Werner Vogels is making a big sales pitch to federal agencies. Now we're hearing that Amazon is exploring a partnership with Apptis -- a Virginia-based government IT services company -- to provide the federal government with a variety of cloud services.

Amazon and Apptis together responded to an RFQ (request for quotes) put out by the U.S. General Services Administration, Apptis spokeswoman Piper Conrad said. Conrad said the two companies are also "finalizing a general partnership." She gave no further details, and said Apptis executives would not be able to comment.

The General Services Administration (GSA) put out an RFQ seeking "Infrastructure-as-a-Service" offerings, including cloud storage, virtual machines, and cloud web hosting. The deadline for submissions was Sept. 16. …

Still no word about Microsoft’s proposal, if they made one for the Windows Azure Platform.

• Alin Irimie describes Shared Snapshots for EC2 Elastic Block Store Volumes in this 9/25/2009 post:

Amazon is adding a new feature which significantly improves the flexibility of EC2’s Elastic Block Store (EBS) snapshot facility. You now have the ability to share your snapshots with other EC2 customers using a new set of fine-grained access controls. You can keep the snapshot to yourself (the default), share it with a list of EC2 customers, or share it publicly.

The Amazon Elastic Block Store lets you create block storage volumes in sizes ranging from 1 GB to 1 TB. You can create empty volumes or you can pre-populate them using one of our Public Data Sets. Once created, you attach each volume to an EC2 instance and then reference it like any other file system. The new volumes are ready in seconds. Last week I created a 180 GB volume from a Public Data Set, attached it to my instance, and started examining it, all in about 15 seconds.
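The three sharing modes described above (private by default, shared with a list of customers, or public) can be modeled in a few lines. The following is only a minimal in-memory sketch, not Amazon's API: the `Snapshot` class, its method names and the account IDs are all hypothetical and exist purely for illustration. In EC2 itself, sharing appears to be governed by the snapshot's create-volume permission, which this sketch does not call.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class Snapshot:
    """Hypothetical stand-in for an EBS snapshot; it makes no AWS calls."""
    snapshot_id: str
    owner: str
    public: bool = False                                  # private is the default
    shared_with: Set[str] = field(default_factory=set)    # explicit account IDs

    def share_with(self, *account_ids: str) -> None:
        """Grant access to a specific list of accounts."""
        self.shared_with.update(account_ids)

    def make_public(self) -> None:
        """Grant access to every account."""
        self.public = True

    def make_private(self) -> None:
        """Revoke all sharing, returning to the default state."""
        self.public = False
        self.shared_with.clear()

    def can_create_volume(self, account_id: str) -> bool:
        """True if the account may create a volume from this snapshot."""
        return (
            account_id == self.owner
            or self.public
            or account_id in self.shared_with
        )


# Made-up account IDs, for illustration only.
snap = Snapshot("snap-example", owner="111111111111")
print(snap.can_create_volume("222222222222"))   # False: private by default
snap.share_with("222222222222")
print(snap.can_create_volume("222222222222"))   # True: explicitly shared
print(snap.can_create_volume("333333333333"))   # False: not on the list
snap.make_public()
print(snap.can_create_volume("333333333333"))   # True: shared publicly
```

Keeping the public flag separate from the explicit account list means that revoking public access would not silently discard the partner list.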

Here’s a visual overview of the data flow (in this diagram, the word Partner refers to anyone that you choose to share your data with):

B. Guptill and R. McNeil present Dell Buys Perot: Return of the Full-Line IT Vendor?, a Saugatuck Research Alert dated 9/23/2009, which explains, inter alia, “Why is it happening”:

First and foremost, Perot enables and accelerates Dell’s expansion into large enterprise data centers. In many regards, Dell’s hardware business has been totally commoditized, and it has had limited success moving upstream into larger accounts, which typically treat PCs, x86 servers, and low-end storage as adjuncts to larger data center-focused IT operations. In fact, Dell hopes that Perot will help Dell become more involved with conversations at the CIO level, related to standardization, consolidation, and other key initiatives, which Dell’s HW sales executives may not have had access to.

The alert goes on to analyze the market impact of the acquisition. (Free site registration required.)

•• Pascal Matzke adds Forrester’s insight in his Dell's acquisition of Perot Systems is a good move but won't be enough post of 9/21/2009 to the Forrester Blog for Vendor Strategy Professionals:

Together with some of my Forrester analyst colleagues earlier today I listened into the conference call hosted by executives of both - Dell and Perot Systems - to explain the rationale behind Dell's announcement to buy Perot for US$ 3.9 billion cash. There has been some speculation lately about Dell possibly making such a move, but the timing and the target they finally picked came as a bit of a surprise to everyone. The speculation was rooted in some of the statements made by Steve Schuckenbrock, President of Large Enterprise and Services at Dell, earlier this year where he pronounced that Dell would get much more serious around the services business. Now, you would of course expect nothing less from someone like Steve - after all he has spend much of his professional career prior to Dell as a top executive in the services industry (with EDS and The Feld Group). To this end Steve and his team finally delivered on the expectation, even more so as this had not been the first time that Dell promised a stronger emphasis on services. …

Pascal concludes:

… But the big challenge pertains to changing the overall value proposition and brand perception of Dell. Dell’s current positioning is still that of a product company that provides limited business value beyond the production and delivery of cost efficient computing hardware and resources. While there is a lot to gain from Perot’s existing positioning the challenge will be to do so by creating a truly consistent and integrated image of the new Dell. The question then quickly becomes whether this is about following in the shoes of IBM and/ or HP or whether Dell has something really new to offer here. That is something Dell has failed to articulate so far and so the jury is still out on this one.

US Department of Energy awards the Lawrence Berkeley National Laboratory (run by the University of California) $7,384,000.00 in American Recovery and Reinvestment Act (ARRA) 2009 operations funding for the Laboratory’s Magellan Distributed Computing and Data Initiative to:

[E]nhance the Phase I Cloud Computing by installing additional storage to support data intensive applications. The cluster will be instrumented to characterize the concurrency, communication patterns, and Input/Output of individual applications. The testbed and workload will be made available to the community for determining the effectiveness of commercial cloud computing models for DOE. The Phase I research will be expanded to address multi-site issues. The Laboratory will be managing the project and will initiate any subcontracting opportunities.

Made available to what community? My house is about three (air) miles from LBNL (known to locals as the “Cyclotron.”) Does that mean I’ll get a low-latency connection?

184-inch Cyclotron building above UC Berkeley during WWII or the late 1940s.

Credit: Lawrence Berkeley National Laboratory.

Luiz André Barroso and Urs Hölzle wrote The Datacenter as a Computer: An Introduction to the Design of Warehouse-Scale Machines, a 120-page e-book that’s a publication in the Morgan & Claypool Publishers series, Synthesis Lectures on Computer Architecture. Here’s the Abstract:

As computation continues to move into the cloud, the computing platform of interest no longer resembles a pizza box or a refrigerator, but a warehouse full of computers. These new large datacenters are quite different from traditional hosting facilities of earlier times and cannot be viewed simply as a collection of co-located servers. Large portions of the hardware and software resources in these facilities must work in concert to efficiently deliver good levels of Internet service performance, something that can only be achieved by a holistic approach to their design and deployment. In other words, we must treat the datacenter itself as one massive warehouse-scale computer (WSC).

We describe the architecture of WSCs, the main factors influencing their design, operation, and cost structure, and the characteristics of their software base. We hope it will be useful to architects and programmers of today’s WSCs, as well as those of future many-core platforms which may one day implement the equivalent of today’s WSCs on a single board.

The acknowledgment begins:

While we draw from our direct involvement in Google’s infrastructure design and operation over the past several years, most of what we have learned and now report here is the result of the hard work, the insights, and the creativity of our colleagues at Google.

The work of our Platforms Engineering, Hardware Operations, Facilities, Site Reliability and Software Infrastructure teams is most directly related to the topics we cover here, and therefore, we are particularly grateful to them for allowing us to benefit from their experience.

James Hamilton reviewed the book(let) favorably in his The Datacenter as a Computer post of 5/16/2009.

Jim Liddle’s Security Best Practices for the Amazon Elastic Cloud of 9/24/2009 begins:

Following on from my last post, Securing Applications on the Amazon Elastic Cloud, One of the biggest questions I often see asked is “Is Amazon EC2 as a platform secure”? This is like saying is my vanilla network secure? As you do to your internal network you can take some steps to make the environment as secure as you can …

and continues with a list of the steps.

Jim also authors the Cloudiquity blog, which primarily covers Amazon Web Services.

Business Software Buzz reports Salesforce.com Updating to Service Cloud 2 in this post of 9/23/2009, which claims:

Salesforce.com has had a pretty strong showing in 2009, due in part to the company’s introduction of Cloud Service (a SaaS application) at the beginning of this year. Early this month, Salesforce announced an upgrade to this application, Service Cloud 2, which consists of three phases to be launched from now until early 2011.

One of the Service Cloud 2’s web-based options is already available to Salesforce.com customers: Salesforce for Twitter. The company integrated Twitter into their platforms in March 2009—and was one of the first enterprise software developers to do so—and now the integration functions within the Service Cloud. This update allows users to track and monitor conversations in Twitter, as well as tweet from the Service Cloud.

Hot damn! Salesforce for Twitter! I can hardly wait to run the ROI on that one.

<Return to section navigation list>