Windows Azure and Cloud Computing Posts for 1/15/2011+

| A compendium of Windows Azure, Windows Azure Platform Appliance, SQL Azure Database, AppFabric and other cloud-computing articles. |

•• Updated 1/17/2011: Added sharding links to the SQL Azure Database and Reporting section.

• Updated 1/16/2011 with later articles marked •

Note: This post is updated daily or more frequently, depending on the availability of new articles in the following sections:

- Azure Blob, Drive, Table and Queue Services

- SQL Azure Database and Reporting

- Marketplace DataMarket and OData

- Windows Azure AppFabric: Access Control and Service Bus

- Windows Azure Virtual Network, Connect, RDP and CDN

- Live Windows Azure Apps, APIs, Tools and Test Harnesses

- Visual Studio LightSwitch

- Windows Azure Infrastructure

- Windows Azure Platform Appliance (WAPA), Hyper-V and Private Clouds

- Cloud Security and Governance

- Cloud Computing Events

- Other Cloud Computing Platforms and Services

To use the above links, first click the post’s title to display the single article you want to navigate.

Azure Blob, Drive, Table and Queue Services

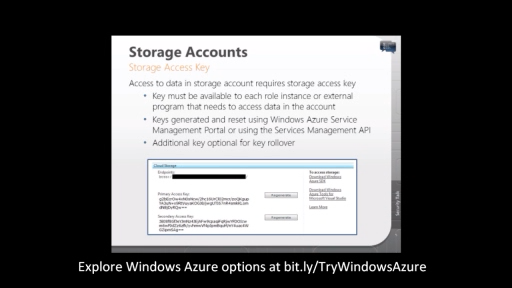

See how Graham Calladine explained Windows Azure Platform Security Essentials: Module 3 – [Blob, Queue and Table] Storage Access in a 00:22:35 Channel9 video segment posted on 1/15/2011 in the Cloud Security and Governance section below.

No significant articles today.


SQL Azure Database and Reporting

•• Cihan Biyikoglu published a list of blog posts and white papers about SQL Azure Federations on 12/16/2010 in a SQL Azure Federation answer to a question posted in the Windows Azure Platform - SQL Azure, Windows Azure Storage & Data forum:

We have talked about SQL Azure Federations technology at PDC and PASS this year, and since then we have pushed out a number of posts and papers. To help bring all these together, here is the list:

- Introduction to App design with Sharding and Horizontal Partitioning Technique [Title: “Sharding with SQL Azure”]: This whitepaper summarizes the technique and highlights the new application modeling best practices for best scalability and economics.

- Evaluation of Scale-up vs Scale-out: how to evaluate the scalability options?

- Intro to SQL Azure Federations: SQL Azure Federations Overview

- Perfect scenarios and typical applications that highlight the power of SQL Azure Federations technology

- How to scale out an app with SQL Azure Federations – a quick walkthrough of building an app with SQL Azure Federations.

We will announce any news about partner programs to get a preview of the technology. Please stay tuned. In the meantime, if you have any questions about the technology, I am happy to help. You can reach me through the forums or through the blog http://blogs.msdn.com/b/cbiyikoglu/.

Here are a few additional sharding-related articles in reverse date order:

- Shard (database architecture) Wikipedia article last modified 1/3/2011

- Know Your Data 12/27/2010 blog post by Wayne Berry

- Scale Out Your SQL Azure Database 12/24/2010 blog post by Wayne Berry

- Sharding With SQL Azure 12/23/2010 blog post by Steve Yi, related to the TechNet wiki article below

- Sharding with SQL Azure 12/14/2010 detailed TechNet wiki article by Michael Heydt (first member of Cihan’s links)

- SQL Azure Backup and Restore Strategy 11/18/2010 TechNet wiki article by Wayne Berry

- SQL PASS 2010, Nov 8th to 12th in Seattle 11/5/2010 post by Cihan Biyikoglu describing his two PASS presentations about SQL Azure

- Building Scalable Database Solution with SQL Azure - Introducing Federation in SQL Azure, 10/29/2010 blog post by Cihan Biyikoglu

- Building Scale-Out Database Solutions with SQL Azure, video of 10/29/2010 PDC 2010 presentation by Lev Novik

- Windows Azure: Cost Architecting for Windows Azure 10/2010 TechNet Magazine article by Maarten Balliauw

- The Real Cost of Indexes 8/19/2010 blog post by Wayne Berry

- SQL Azure Horizontal Partitioning: Part 2 6/24/2010 blog post by Wayne Berry

- Vertical Partitioning in SQL Azure: Part 1 5/17/2010 blog post by Wayne Berry

- Connections and SQL Azure 5/11/2010 blog post by David Robinson

- Uniqueidentifier and Clustered Indexes 5/5/2010 blog post by David Robinson

- Exporting Data from SQL Azure: Import/Export Wizard 5/4/2010 blog post by Wayne Berry

See also Understanding Federated Database Servers: SQL Server 2008 R2 in the MSDN Library. SQL Server federation uses the Enterprise edition’s Distributed Partitioned Views. Related topics include:

- Designing Federated Database Servers

- Implementing Federated Database Servers

- Creating Partitioned Views

Sharding is a complex topic, and SQL Azure Federation technology will automate much of the administrative work and programming it requires. This article will be updated periodically as new posts and other publications become available.
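The routing idea behind range partitioning, where each member table of a distributed partitioned view carries a CHECK constraint covering one non-overlapping range of the partitioning column, can be sketched as a small shard map. This is a minimal, hypothetical Python sketch, not the SQL Azure Federations or SQL Server API; the class name, the integer key, and the member database names are assumptions for illustration.

```python
import bisect
from dataclasses import dataclass, field


@dataclass
class RangeShardMap:
    """Route an integer key to the member database that owns its range.

    Each member owns the half-open range [its low bound, next member's low bound).
    Member names are hypothetical placeholders.
    """
    lows: list = field(default_factory=list)     # sorted lower bounds
    members: list = field(default_factory=list)  # member names, parallel to lows

    def add_member(self, low: int, member: str) -> None:
        # Keep the lower bounds sorted so lookups can use binary search.
        i = bisect.bisect_left(self.lows, low)
        if i < len(self.lows) and self.lows[i] == low:
            raise ValueError(f"a member already starts at {low}")
        self.lows.insert(i, low)
        self.members.insert(i, member)

    def member_for(self, key: int) -> str:
        # The owner is the last member whose lower bound is <= key.
        i = bisect.bisect_right(self.lows, key) - 1
        if i < 0:
            raise KeyError(f"no member covers key {key}")
        return self.members[i]

    def split(self, at: int, new_member: str) -> None:
        # Splitting adds one boundary; only keys >= at in that range change owner.
        self.add_member(at, new_member)
```

For example, after add_member(0, "CustomerDb0") and add_member(10000, "CustomerDb1"), key 42 routes to CustomerDb0 and key 10000 to CustomerDb1. A split touches only the keys in the range being split, which is one reason range schemes lend themselves to growing a database incrementally.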

Andrew Glover wrote Java development 2.0: Sharding with Hibernate Shards, Horizontal scalability for relational databases, on 8/31/2010 for IBM’s developerWorks site. From the abstract:

Sharding isn't for everyone, but it's one way that relational systems can meet the demands of big data. For some shops, sharding means being able to keep a trusted RDBMS in place without sacrificing data scalability or system performance. In this installment of the Java development 2.0 series, find out when sharding works, and when it doesn't, and then get your hands busy sharding a simple application capable of handling terabytes of data.

Following are some of the Hibernate Shard resources for Java, which Andy listed in his article:

- Java development 2.0: This developerWorks series explores technologies and tools that are redefining the Java development landscape, including NoSQL (May 2010), SimpleDB (June 2010), and CouchDB (November 2009).

- "Sharding with Max Ross: Hibernate Shards" (JavaWorld, July 2008): Andy Glover talks with the founder of Hibernate Shards about the motivations behind the project and how to leverage shards effectively.

- "Think twice before sharding" (Andrew Glover, thediscoblog.com, June 2008): Sharding doesn't come without risks, so find out more about them before you commit.

- "An Unorthodox Approach to Database Design : The Coming of the Shard" (Todd Hoff, HighScalability.com, August 2009): A nice overview of sharding from a site that specializes in architectures for managing big data.

- "Building Scalable Databases: Pros and Cons of Various Database Sharding Schemes" (Dare Obasanjo, 25HoursADay.com, January 2009): Uses Facebook as a real-world example to explore sharding strategies.

- "Rethinking the traditional DAO pattern" (Andrew Glover, thediscoblog.com, June 2008): Learn more about folding DAOs into normal domain objects, a la Grails and Rails.
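A commonly cited drawback of naive hash- or modulo-based sharding schemes is easy to demonstrate: with placement shard = key mod N, changing N remaps most keys, so adding a shard means moving most of the data. The Python below is an illustrative sketch using integer keys and hypothetical function names; it is not how Hibernate Shards or SQL Azure Federations assign rows.

```python
def shard_for(key: int, shard_count: int) -> int:
    """Naive modulo placement: shard index = key mod shard_count."""
    if shard_count <= 0:
        raise ValueError("shard_count must be positive")
    return key % shard_count


def keys_moved(keys, old_count: int, new_count: int) -> int:
    """Count keys whose shard changes when the shard count changes."""
    return sum(1 for k in keys if shard_for(k, old_count) != shard_for(k, new_count))


if __name__ == "__main__":
    keys = range(1000)
    moved = keys_moved(keys, 4, 5)
    # Prints: 800 of 1000 keys move when going from 4 to 5 shards
    print(f"{moved} of {len(keys)} keys move when going from 4 to 5 shards")
```

A key stays put only when key mod 4 equals key mod 5, which happens for 4 of every 20 consecutive keys, so 80 percent of the keys have to be relocated.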

Ayende Rahien issued SQL Azure, Sharding and NHibernate: A call for volunteers, which has many interesting comments about SQL Azure and sharding, on 9/6/2009. His NHibernate Shards: Progress Report appeared on 10/18/2009. Stay tuned for more resources related to [N]Hibernate Shards.

• Matt Masson answered Why does my package run slower in BIDS than DTEXEC? on 1/15/2011:

Jamie Thomson recently suggested that you should run your packages using DTEXEC when doing performance testing, as it (typically) performs much better than when the same package is run in BIDS. If you haven’t already read it, go do that now. It has a pretty picture in it and everything. I’ll wait.

The purpose of this post is to 1) acknowledge the truthiness of Jamie’s post, 2) call attention to some of the great comments on the post, and 3) shed some light on why this is.

The Comments

- John Welch reminds people that running DTEXECUI is not the same as running DTEXEC. As we’ll see, it has some of the same overhead as BIDS does, and does not perform as well as running DTEXEC directly.

- Chris Randall called out that pressing Ctrl-F5 within BIDS invokes DTEXEC. There is a bit more startup cost, but actual package performance should be the same.

Why is BIDS slower than DTEXEC?

There are a number of factors, most of which are purely theoretical for me – I haven’t done deep analysis to determine the actual cost of each of these items, but they should give you the general idea….

- Startup – When starting the debug process, Visual Studio will save all open files, synchronize the project files, and switch to the debugging mode/view.

- Events – BIDS listens for a number of events thrown by the package so it can update the row counts, change the box colors, and populate the Execution Results tab.

- Debugging – SSIS needs to interact with the Visual Studio debugging interfaces to allow for breakpoints, script debugging, and data viewers.

- Child Packages – When running packages with Execute Package Tasks, the child packages will be opened and displayed in BIDS. When this happens, you’re paying the cost of de-serializing the package, determining the layout, drawing the shapes, hooking up additional listeners, etc.

- COM Interop – The SSIS runtime is COM based (native), while the BIDS designer code is .NET (managed). There’s overhead cost anytime the process needs to cross native/managed boundaries. There’s also an inter-process communication overhead for Visual Studio (devenv.exe) and the SSIS debug host (DtsDebugHost.exe).

- Memory – In my experience, this has been the biggest factor for slow BIDS performance, especially when dealing with large packages. More on this in the next section.

Is BIDS always slower than DTEXEC?

No. For smaller packages (single data flow, source –> destination), there might not be a difference. In some cases, BIDS might even be a little faster – which I assume is because the package object is reused, and doesn’t need to be loaded fresh from disk. Generally, larger packages will perform better with DTEXEC, because they give BIDS more work to do (more objects to draw, more events to filter, etc.). Memory (RAM) can become a factor with large packages as well. Since Visual Studio is a 32-bit process, it has a 2 GB memory limit. You can easily hit the point where BIDS will start swapping to disk if you have multiple large packages open, are using a number of IDE extensions, or have multiple project types loaded.

Conclusion

If something performs slowly in BIDS, chances are it will perform the same or faster with DTEXEC. Perf testing different designs, like seeing whether using an OLE DB Command is slower than doing things in a batch, is just fine to do in BIDS. If you’re trying to get an idea of whether your package execution time fits into your ETL batch window, make sure you measure your results using DTEXEC.
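Measuring with DTEXEC is easy to script so that you get several comparable runs instead of one. The Python sketch below is an assumption, not part of Matt's post: it builds a dtexec command line using the /F (file system package) option and records wall-clock time per run. It assumes dtexec.exe is on your PATH, and the helper names are hypothetical.

```python
import subprocess
import sys
import time


def dtexec_command(package_path: str) -> list:
    """Build a DTEXEC command line that runs a package stored in the file system (/F)."""
    return ["dtexec", "/F", package_path]


def time_command(args: list, runs: int = 3) -> list:
    """Run a command several times and return the wall-clock seconds of each run.

    check=True raises CalledProcessError on a non-zero exit code, so a package
    that fails quickly is not mistaken for a fast one.
    """
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run(args, check=True)
        timings.append(time.perf_counter() - start)
    return timings
```

For example, time_command(dtexec_command(r"C:\ETL\LoadCustomers.dtsx")) would time three runs of a hypothetical package; taking the best or median run smooths out one-off effects such as a cold disk cache.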

Matt’s advice is likely to apply if you use SSIS to migrate data to SQL Azure.

See how Graham Calladine explained Windows Azure Platform Security Essentials: Module 3 – [SQL Azure] Storage Access in a 00:22:35 Channel9 video segment posted on 1/15/2011 in the Cloud Security and Governance section below.


Marketplace DataMarket and OData

• The Programming4Us site published Programming with SQL Azure : Record Navigation in WCF Data Services on 1/16/2011:

Let's talk a few more minutes about the WCF Data Service you built and how you can use that to navigate through records. Record navigation is one of the things that really gets me excited about WCF Data Services. Let's dive right in.

If your project is still running, stop it, open Solution Explorer, and navigate to the data service. For simplicity, you'll do this right from the solution. Right-click the data service and select View in Browser. The service will start, and what you see is a REST (Representational State Transfer) based service on top of your relational database: an XML representation of the service and the entities it exposes, shown in Figure 1. You see the entities listed because you set the entity set rights to ALL. If you were to go back to the code a few pages back where you set the entity set rights and comment those lines out, you would not see the entities listed (Docs, TechGeoInfoes, and Users).

Figure 1. Viewing the WCF Data Service via REST

The question, though, is how you view the data that you really want to see. The answer is simple: specify an entity at the end of your URI, and you'll see data for that entity. For example, specify the following URI to see data on the Users entity:

http://localhost:51176/TechBioDataService.svc/Users

Take care to get the case correct. Entity names are case sensitive. Specify users instead of Users, and you'll get a "The webpage cannot be found" error message.

Take time out now to mistype an entity name and see the resulting message. That way you'll more readily recognize the problem when you make the same mistake inadvertently. Sooner or later, we all make that mistake.

1. Disabling Internet Explorer's Feed Reading View

At this point you get either an XML representation of the data in the Users table or the web page shown in Figure 2. If it's the latter, you need to turn off the feed reading view in Internet Explorer (IE), because IE thinks the data coming back is the type you would get in an RSS feed. You can see in the message that the browser thinks you're trying to view an RSS feed.

To fix the RSS feed issue, you need to turn off this feature in Internet Explorer. With IE open, select Internet Options from the Tools menu to open the Internet Options dialog. This dialog has a number of tabs along the top that you might be familiar with. Select the Content tab and, on that tab, click the Settings button under Feeds and Web Slices.

Clicking the Settings button will display the Settings dialog, and on this dialog you need to uncheck the Turn on feed reading view checkbox, shown in Figure 3. Click OK on this dialog and the Internet Options dialog.

Figure 3. Disabling Feed Viewing

2. Viewing the Final Results

Back on your web page, press F5 to refresh the page. What you should get back now is a collection of Users, shown in Figure 4, by querying the underlying database for the Users.
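Reading the feed from code sidesteps the browser's feed view entirely. The Python sketch below is an illustration, not part of the original walkthrough. It flattens each Atom entry returned by a WCF Data Service into a dictionary of property values, using the Atom, OData data and OData metadata XML namespaces that WCF Data Services emits. The function names are hypothetical, and the property names depend on your model.

```python
import urllib.request
import xml.etree.ElementTree as ET

# XML namespaces used in Atom payloads from WCF Data Services (OData v1/v2).
NS = {
    "atom": "http://www.w3.org/2005/Atom",
    "m": "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata",
    "d": "http://schemas.microsoft.com/ado/2007/08/dataservices",
}


def parse_entries(atom_xml) -> list:
    """Return one {property name: text value} dict per <entry> in an Atom feed."""
    root = ET.fromstring(atom_xml)
    rows = []
    for entry in root.findall("atom:entry", NS):
        props = entry.find("atom:content/m:properties", NS)
        if props is None:
            # Media link entries carry m:properties directly under <entry>.
            props = entry.find("m:properties", NS)
        if props is None:
            continue
        # ElementTree tags look like "{namespace}Name"; keep only "Name".
        rows.append({p.tag.split("}", 1)[1]: p.text for p in props})
    return rows


def fetch_entries(url: str) -> list:
    """GET an entity set URI and parse the Atom feed it returns."""
    request = urllib.request.Request(url, headers={"Accept": "application/atom+xml"})
    with urllib.request.urlopen(request) as response:
        return parse_entries(response.read())
```

With the service from this article running, fetch_entries("http://localhost:51176/TechBioDataService.svc/Users") should return one dictionary per user; null values come back as None.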

However, you aren't done yet because there is still so much more you can do here. For example, the page you're currently looking at displays all the Users, but what if you want to return a specific user?

Looking at the data, you can see that each record contains the ID of the specific row, and you can use that to your advantage by including it in your URI. For this example, let's use ID 113. Modify the URI by appending the number 113, enclosed in parentheses, to the end of the URI, as shown in Figure 5.

By loading the URI that includes the ID of a specific record, I can drill down further and return just the record I am looking for. This is just like applying a WHERE clause to a T-SQL query, in this case WHERE ID = 113: I have queried the underlying store for a specific user by passing the appropriate ID in the URI.

Additionally I can return a specific field by adding the field I want to the URI, such as:

http://localhost:51176/TechBioDataService.svc/Users(113)/Name

Specifying the field along with the ID returns just the field you request. In the code snippet above, the value in the Name column for User ID 113 is returned, as shown in Figure 6.

You can also use this same methodology to navigate between tables. For example, you could do the following to return documents for a specific User ID:

http://localhost:51176/TechBioDataService.svc/Users(113)/Docs

Figure 4. Viewing All Users

Figure 5. Viewing a Specific User

Figure 6. Viewing the Name of a Specific User
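The addressing pattern in this section is regular: the entity set, then an optional key in parentheses, then property or navigation segments. This small Python helper, a hypothetical sketch that handles only integer keys like the ID 113 used here, composes such URIs. Segment names are passed through unchanged because, as noted earlier, they are case sensitive.

```python
def odata_uri(service_root: str, entity_set: str, key=None, *segments: str) -> str:
    """Compose an OData resource path: EntitySet[(key)][/segment...].

    A segment can be a property (e.g. "Name") or a navigation property
    (e.g. "Docs"). Only integer keys are supported in this sketch.
    """
    uri = f"{service_root.rstrip('/')}/{entity_set}"
    if key is not None:
        uri += f"({int(key)})"
    for segment in segments:
        uri += f"/{segment}"
    return uri


root = "http://localhost:51176/TechBioDataService.svc"
print(odata_uri(root, "Users"))               # .../Users
print(odata_uri(root, "Users", 113, "Name"))  # .../Users(113)/Name
print(odata_uri(root, "Users", 113, "Docs"))  # .../Users(113)/Docs
```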

While this information isn't critical to connecting to SQL Azure, it's good to have so you know how REST services work and can benefit from their functionality in your application. While this article did not go deep into the Entity Framework or REST technology, there are plenty of good books by Apress and plenty of information on MSDN about these technologies. I highly recommend that you explore them further to enhance your SQL Azure applications.

• Ron Jacobs explained WCF FaultContract and FaultException best practices in his WCF Spike FaultContract, FaultException<TDetail> and Validation post of 1/14/2011:

Ready to have some fun? Today I spent the day investigating WCF Faul[t]Contracts and FaultException and some best practices for argument validation. I’m going to do the same in a future post on Workflow Services but I felt it best to really understand the topic from a WCF point of view first.

Investigation Questions

- What happens when a service throws an exception?

- What happens if a service throws a FaultException?

- What happens if the service operation includes a FaultContract and it throws a FaultException<TDetail>?

- How can I centralize validation of DataContracts?

Scenario 1: WCF Service throws an exception

Given

- A service that throws an ArgumentOutOfRangeException

- There is no <serviceDebug> or <serviceDebug includeExceptionDetailInFaults="false" /> in web.config

When

- The service is invoked with data that will cause the exception

Then

- The client proxy will catch a System.ServiceModel.FaultException

- The FaultCode name will be “InternalServiceFault”

- The Fault.Reason will be

"The server was unable to process the request due to an internal error. For more information about the error, either turn on IncludeExceptionDetailInFaults (either from ServiceBehaviorAttribute or from the <serviceDebug> configuration behavior) on the server in order to send the exception information back to the client, or turn on tracing as per the Microsoft .NET Framework 3.0 SDK documentation and inspect the server trace logs."

Conclusions

No surprises here; probably anyone who has done WCF for more than 5 minutes has run into this. For more information, see the WCF documentation topic Sending and Receiving Faults. You might be tempted to just turn on IncludeExceptionDetailInFaults, but don’t do it, because it can lead to security vulnerabilities. Instead, you need a better strategy for dealing with exceptions, and that means you need to understand FaultException.

Scenario 2: WCF Service throws a FaultException

As we saw in the previous example, WCF already throws a FaultException for you when it encounters an unhandled exception. The problem in this case is that we want to let the caller know they sent an invalid argument, even when they are not debugging.

Given

- A service that throws a FaultException

```csharp
// FaultCodeFactory, ContractFaultCodes, ContractConstants and Resources
// come from the sample project accompanying this post.
public string GetDataFaultException(int data)
{
    if (data < 0)
    {
        // Let the sender know it is their problem
        var faultCode = FaultCodeFactory.CreateVersionAwareSenderFaultCode(
            ContractFaultCodes.InvalidArgument.ToString(),
            ContractConstants.Namespace);
        var faultReason = string.Format(
            Resources.ValueOutOfRange, "Data", Resources.ValueGreaterThanZero);
        throw new FaultException(faultReason, faultCode);
    }

    return "Data: " + data;
}
```

When

- The service is invoked with a data value that will cause an exception

Then

- The client proxy will catch a System.ServiceModel.FaultException

- The FaultCode.Name will be “Client” (for SOAP 1.1) or “Sender” (for SOAP 1.2)

- The Fault.Reason will be Argument Data is out of range value must be greater than zero

Conclusions

The main thing is that the client now gets a message saying that things didn’t work and it’s their fault. They can tell by checking the FaultException.Code.IsSenderFault property. In my code you’ll notice a helper I created, FaultCodeFactory.CreateVersionAwareSenderFaultCode, to deal with the differences between SOAP 1.1 and SOAP 1.2. You will find it in the sample.

Some interesting things I learned while testing this

- You should specify a Sender fault if you determine that there is a problem with the message and it should not be resubmitted

- BasicHttpBinding uses SOAP 1.1, while the other bindings use SOAP 1.2, so if you are working with both you need a version-aware sender fault code.

- FaultException.Action never shows up on the client side so don’t bother with it.

- FaultException.HelpLink and FaultException.Data (and other properties inherited from Exception) do not get serialized and will show up empty on the client side so you can’t use them for anything.
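To make the SOAP 1.1/1.2 difference concrete, here is a language-neutral sketch in Python of what a version-aware sender fault code has to produce. It is not Ron's FaultCodeFactory, whose source isn't reproduced here. FaultCode, sender_fault_code, and is_sender_fault are hypothetical names. The envelope namespaces and the Client/Sender code names come from the SOAP 1.1 note and the SOAP 1.2 recommendation.

```python
from typing import NamedTuple, Optional, Tuple

# Envelope namespaces from the SOAP 1.1 note and SOAP 1.2 recommendation.
SOAP11_ENV = "http://schemas.xmlsoap.org/soap/envelope/"
SOAP12_ENV = "http://www.w3.org/2003/05/soap-envelope"


class FaultCode(NamedTuple):
    namespace: str
    name: str
    # Application-specific subcode as (namespace, name); SOAP 1.2 only.
    subcode: Optional[Tuple[str, str]] = None


def sender_fault_code(soap_version: str, sub_name: str, sub_ns: str) -> FaultCode:
    """Return the 'the caller is at fault' code for the given SOAP version."""
    if soap_version == "1.1":
        # SOAP 1.1 calls the sender "Client" and has no Subcode element.
        return FaultCode(SOAP11_ENV, "Client")
    if soap_version == "1.2":
        return FaultCode(SOAP12_ENV, "Sender", (sub_ns, sub_name))
    raise ValueError(f"unsupported SOAP version: {soap_version!r}")


def is_sender_fault(code: FaultCode) -> bool:
    """Client-side check that treats both versions' sender codes alike."""
    return (code.namespace, code.name) in {(SOAP11_ENV, "Client"), (SOAP12_ENV, "Sender")}
```

A caller that checks is_sender_fault, much as the post describes checking IsSenderFault, doesn't need to care which binding produced the fault.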

While this is better than throwing unhandled exceptions, there is an even better way: FaultContracts.

Scenario 3: WCF Service with a FaultContract

Given

- A service operation with a FaultContract

- and a service that throws a FaultException<TDetail>

```csharp
// faultCode is created as in Scenario 2 (a version-aware sender fault code).
var argValidationFault = new ArgumentValidationFault
{
    ArgumentName = "Data",
    Message = string.Format(
        Resources.ValueOutOfRange, "Data", Resources.ValueGreaterThanZero),
    HelpLink = GetAbsoluteUriHelpLink("DataHelp.aspx"),
};

throw new FaultException<ArgumentValidationFault>(
    argValidationFault, argValidationFault.Message, faultCode);
```

When

- The service is invoked with a data value that will cause an exception

Then

- The client proxy will catch a System.ServiceModel.FaultException

- The FaultCode.Name will be “Client” (for SOAP 1.1) or “Sender” (for SOAP 1.2)

- The Fault.Reason will be Argument Data is out of range value must be greater than zero

- The fault detail will be of type TDetail, which is included in the generated service reference

Conclusions

This is the best choice. It allows you to pass all kinds of information to clients, and it makes your error handling capability truly first class. In the sample code, one thing I wanted to do was use the FaultException.HelpLink property to pass a URL to a help page. Unfortunately, I learned that none of System.Exception’s properties are propagated to the client. No problem: I just added a HelpLink property to my ArgumentValidationFault type and used FaultException<ArgumentValidationFault>.Detail.HelpLink instead.

Some interesting things I learned while testing this

- You can specify an interface in the [FaultContract] attribute but it didn’t seem to work on the caller side for catching a FaultException<T>

Recommendation

Use [FaultContract] on your service operations. You should probably create a base type for your details and perhaps have a few subclasses for special categories of things. Remember that whatever you expose in the FaultContract is part of your public API and that versioning considerations that apply to other DataContracts apply to your faults as well.
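The "base type plus a few subclasses" recommendation can be sketched independently of WCF. In this Python illustration, every class except the ArgumentValidationFault shape is hypothetical, and the wire format is a plain dictionary rather than a DataContract. A shared base carries the message and help link, subclasses add category-specific fields, and a type discriminator lets callers tell the categories apart.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class FaultDetailBase:
    """Hypothetical base type shared by every fault detail a service exposes."""
    message: str
    help_link: Optional[str] = None


@dataclass
class ArgumentValidationFault(FaultDetailBase):
    """Detail for a rejected argument, modeled on the ArgumentValidationFault above."""
    argument_name: str = ""


@dataclass
class ResourceNotFoundFault(FaultDetailBase):
    """Hypothetical second category that reuses the base fields."""
    resource_id: str = ""


def to_wire(fault: FaultDetailBase) -> dict:
    # Include the concrete type name so a caller can tell fault categories apart.
    return {"type": type(fault).__name__, **asdict(fault)}
```

Because every field after message has a default in this sketch, an optional field added to the base later doesn't break existing constructors; the actual compatibility rules for DataContracts in WCF still apply to the real types.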

• The Microsoft Web Camps Training Kit site made available an OData Introduction: Demo Demo Overview in DOCX format in January 2011:

This demo introduces you to the OData standard and how to query OData, and also shows you the Dallas initiative.

The demo is a script for Web Camp presenters and is a bit dated, as shown by its use of the term “Dallas initiative” for what is now Windows Azure Marketplace DataMarket. If you’re making an OData presentation, you might find it useful.

The Training Kit includes similar materials for ASP.NET MVC, IE9 & HTML5, jQuery, WebApps and WebMatrix.


Windows Azure AppFabric: Access Control and Service Bus

• Alik Levin explained Windows Azure AppFabric Access Control Service (ACS) v2– Returning Friendly Error Messages Using Error URL Feature in a 1/15/2011 post:

Windows Azure AppFabric Access Control Service (ACS) v2 has a feature called Error URL that allows a web site to display a friendly message when an error occurs during the authentication/federation process. For example, during authentication with Facebook or Google, the user is asked for consent after successful authentication. If the user denies consent, ACS generates an error that could be presented to the user in a friendly manner. Another case is a misconfiguration at the ACS level, for example, no rules generated for a specific identity provider, which results in an error generated by ACS.

How can I show a friendly error message for these cases, branded with the same look and feel as the rest of my website?

The solution is the Error URL feature, available through the ACS management portal. ACS generates a JSON-encoded error message and passes it to your error page. You specify the URL of the error page in the ACS management portal so that ACS knows where to pass the information. Your error page should parse the JSON-encoded error message and render appropriate HTML for the end user. Here is a sample JSON-encoded error message:

```json
{
  "context": null,
  "httpReturnCode": 401,
  "identityProvider": "Google",
  "timeStamp": "2010-12-17 21:01:36Z",
  "traceId": "16bba464-03b9-48c6-a248-9d16747b1515",
  "errors": [
    {
      "errorCode": "ACS30000",
      "errorMessage": "There was an error processing an OpenID sign-in response."
    },
    {
      "errorCode": "ACS50019",
      "errorMessage": "Sign-in was cancelled by the user."
    }
  ]
}
```

Step 1 – Enable Error URL Feature

To enable Error URL feature for your relying party:

- Log in to http://portal.appfabriclabs.com.

- On the My Projects page click on your project.

- On the Project:<<YourProject>> page click on Access Control link for desired namespace.

- On the Access Control Settings: <<YourNamespace>> page click on Manage Access Control link.

- On the Access Control Service page click on Relying Party Applications link.

- On the Relying Party Applications page click on your relying party application.

- On the Edit Relying Party application page notice Error URL field in Relying Party Application Details section.

- Enter your error page URL. This is the page that will receive the URL-encoded JSON parameters that include the error details.

Step 2 – Process JSON Encoded Error Message

To process JSON encoded error message generated by ACS:

- Add an .aspx web page to your ASP.NET application and give it a name, for example, ErrorPage.aspx. It will serve as the error page that processes the JSON-encoded error message sent from ACS.

- Add the following Label controls to the ASP.NET markup:

```html
<asp:Label ID="lblIdentityProvider" runat="server"></asp:Label>
<asp:Label ID="lblErrorMessage" runat="server"></asp:Label>
```

- Switch to the page’s code-behind file, ErrorPage.aspx.cs.

- Add the following declaration to the top:

```csharp
using System.Web.Script.Serialization;
```

- Add the following code to the Page_Load method:

```csharp
// ErrorDetails is a class you define whose public members mirror the JSON
// shown above (identityProvider, errors[].errorCode, errors[].errorMessage, ...).
JavaScriptSerializer serializer = new JavaScriptSerializer();
ErrorDetails error = serializer.Deserialize<ErrorDetails>(Request["ErrorDetails"]);

lblIdentityProvider.Text = error.identityProvider;
// Shows only the first error; see the note below about looping through all of them.
lblErrorMessage.Text = string.Format("Error Code {0}: {1}",
    error.errors[0].errorCode,
    error.errors[0].errorMessage);
```

- The above code processes the JSON-encoded error message received from ACS. You might want to loop through the errors array, because it can contain additional, finer-grained error information.
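Outside ASP.NET, the same decoding takes only a few lines. This Python sketch is illustrative and its helper names are hypothetical. It assumes, as the code-behind above does, that the JSON arrives URL-encoded in a parameter named ErrorDetails. It formats every entry in the errors array rather than just the first.

```python
import json
from urllib.parse import parse_qs


def parse_acs_error(query_string: str) -> dict:
    """Decode the URL-encoded JSON in the ErrorDetails parameter."""
    values = parse_qs(query_string).get("ErrorDetails")
    if not values:
        raise KeyError("ErrorDetails parameter not found")
    return json.loads(values[0])


def describe_errors(details: dict) -> list:
    """Format every entry in the errors array, not just the first one."""
    return [
        f"Error Code {e['errorCode']}: {e['errorMessage']}"
        for e in details.get("errors", [])
    ]
```

Applied to the sample message above, describe_errors would return two lines, one for ACS30000 and one for ACS50019, which you could render as a list on the error page.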

Step 3 – Configure Anonymous Access To The Error Page

To configure anonymous access to the error page:

- Open the web.config file of your application and add the following entry:

```xml
<!-- Place this element directly under <configuration>. -->
<location path="ErrorPage.aspx">
  <system.web>
    <authorization>
      <allow users="*" />
    </authorization>
  </system.web>
</location>
```

- This lets unauthenticated users reach the error page, so you don’t get into an infinite redirection loop.

Step 4 – Test Your Work

To test Error URL feature:

- Log in to http://portal.appfabriclabs.com.

- On the My Projects page click on your project.

- On the Project:<<YourProject>> page click on Access Control link for desired namespace.

- On the Access Control Settings: <<YourNamespace>> page click on Manage Access Control link.

- On the Access Control Service page click on Rule Groups link.

- On the Rule Groups page click on the rule group related to your relying party.

- WARNING: This step cannot be undone, although generated rules can easily be generated again. On the Edit Rule Group page, delete all rules: check all rules in the Rules section and click the Delete Selected Rules link.

- Click Save button.

- Return to your web site and navigate to one of its pages using a browser.

- You should be redirected to your identity provider for authentication – Windows Live ID, Google, Facebook, Yahoo!, or ADFS 2.0 – whichever is configured as the identity provider for your relying party.

- After successful authentication, you should be redirected back to ACS, which should generate an error because no rules are defined.

- This error should be displayed on your error page that you created in step 2, similar to the following:

uri:WindowsLiveID

Error Code ACS50000: There was an error issuing a token.

Another way to test it is to deny user consent, which you are asked for when you log in using Facebook or Google.

Related Books

- Programming Windows Identity Foundation (Dev - Pro)

- A Guide to Claims-Based Identity and Access Control (Patterns & Practices) – free online version

- Developing More-Secure Microsoft ASP.NET 2.0 Applications (Pro Developer)

- Ultra-Fast ASP.NET: Build Ultra-Fast and Ultra-Scalable web sites using ASP.NET and SQL Server

- Advanced .NET Debugging

- Debugging Microsoft .NET 2.0 Applications

Related Info

- ACS Error Codes

- Windows Identity Foundation (WIF) and Azure AppFabric Access Control (ACS) Service Survival Guide

- Videos: Windows Azure Security Essentials For Decision Makers, Security Architecture, Access, and Secure Development

- Video: What’s Windows Azure AppFabric Access Control Service (ACS) v2?

- Video: What Windows Azure AppFabric Access Control Service (ACS) v2 Can Do For Me?

- Video: Windows Azure AppFabric Access Control Service (ACS) v2 Key Components and Architecture

- Video: Windows Azure AppFabric Access Control Service (ACS) v2 Prerequisites

• Alik Levin updated the How To: Create My First Claims Aware ASP.NET application Integrated with ACS v2 wiki page of the Access Control Service Samples and Documentation (Labs) project on CodePlex on 1/14/2011 (missed when published):

Applies To

Microsoft® Windows Azure® AppFabric Access Control Service version 2.0 (ACS v2.0)

Overview

This topic describes the simple scenario of integrating ACS v2.0 with an ASP.NET Relying Party application. By integrating your web application with ACS v2.0, you factor the features of authentication and authorization out of your code. In other words, ACS v2.0 provides the mechanism for authenticating and authorizing users to your web application. In this practice scenario, ACS v2.0 authenticates users with a Google identity into a test ASP.NET Relying Party application.

Summary of Steps

Important

Before performing the following steps, make sure that your system meets all of the .NET Framework and platform requirements that are summarized in Prerequisites for Windows Azure AppFabric Access Control Service.

To integrate ACS v2.0 with an ASP.NET Relying Party application, complete the following steps:

- Step 1 - Create a Windows Azure AppFabric Project

- Step 2 - Add a Service Namespace to a Windows Azure AppFabric Project

- Step 3 – Launch the ACS v2.0 Management Portal

- Step 4 – Add Identity Providers

- Step 5 - Setup the Relying Party Application

- Step 6 - Create Rules

- Step 7 - View Application Integration Section

- Step 8 - Create an ASP.NET Relying Party Application

- Step 9 - Configure trust between the ASP.NET Relying Party Application and ACS v2.0

- Step 10 – Test the integration between the ASP.NET Relying Party application and ACS v2.0

Step 1 - Create a Windows Azure AppFabric Project

For detailed instructions on how to create a Windows Azure AppFabric Project, see How to: Create a New Windows Azure AppFabric Project.

Step 2 - Add a Service Namespace to a Windows Azure AppFabric Project

For detailed instructions on how to Add a Service Namespace to a Windows Azure AppFabric Project, see How to: Add a Service Namespace to a Windows Azure AppFabric Project.

Step 3 – Launch the ACS v2.0 Management Portal

This section describes how to launch the ACS v2.0 Management Portal. The ACS v2.0 Management Portal allows you to configure your ACS Service Namespace by adding identity providers, configuring relying party applications, defining rules and groups of rules, and establishing the credentials that your relying party trusts.

To launch the ACS v2.0 Management Portal

On the Project page, once the service namespace you created in Step 2 is active, click Access Control.

You are redirected to a page that displays your project ID and lets you either delete the service namespace or launch the ACS v2.0 Management Portal.

To launch the ACS v2.0 Management Portal, click Manage Access Control.

Step 4 – Add Identity Providers

This section describes how to add identity providers to use with your relying party application for authentication. For more information about identity providers, see Relying party, Client, Identity Provider, ACS.

How to add identity providers

On the ACS v2.0 Management Portal, click Identity Providers.

On the Identity Providers page, click Add Identity Provider, and then click the Add button next to Google.

The Add Google Identity Provider page prompts you to enter login link text (the default is Google) and an image URL. The URL points to an image file that can be used as the login link for this identity provider (in this case, Google). Editing these fields is optional. For this demo, leave them unchanged and click Save.

On the Identity Providers page, click Return to Access Control Service to go back to the ACS v2.0 Management Portal main page.

Step 5 - Setup the Relying Party Application

This section describes how to set up a Relying Party Application. In ACS, a Relying Party Application is a projection of your web application into the system. It defines the URLs for your application, token format preference, token timeout, token signing options, and token encryption options. For more information about relying party applications, see Relying party, Client, Identity Provider, ACS.

How to set up a relying party application

On the ACS v2.0 Management Portal, click Relying Party Applications.

On the Relying Party Applications page, click Add Relying Party Application.

On the Add Relying Party Application page, do the following:

- In Name, type the name of the relying party application. For this demo, type TestApp.

- In Mode, select either Enter settings manually (if you want to configure your relying party application settings manually) or Import WS-Federation metadata (if you want to upload a WS-Federation metadata document with the settings for your relying party application).

- In Realm, type the URI that the security token issued by ACS v2.0 applies to. For this demo, type http://localhost:7777/.

- In Return URL, type the URL to which ACS v2.0 returns the security token. This field is optional. For this demo, you can type http://localhost:7777/.

- In Token format, select a token format for ACS v2.0 to use when issuing security tokens to this relying party application. For this demo, select SAML 2.0. For more information about SAML tokens, see Tokens SAML, SWT and all about tokens.

- In Token encryption policy, select an encryption policy for tokens issued by ACS v2.0 for this relying party application. For this demo, accept the default value of None. For more information about token encryption policy, see Tokens SAML, SWT and all about tokens.

- In Token lifetime (secs), specify how long a security token issued by ACS v2.0 remains valid. For this demo, accept the default value of 600. For more information about tokens, see Tokens SAML, SWT and all about tokens.

- In Identity providers, select the identity providers to use with this relying party application. For this demo, accept the checked defaults (Google and Windows Live ID). For more information about relying party applications, see Relying party, Client, Identity Provider, ACS.

- In Rule groups, select the rule groups that this relying party application uses when processing claims. For this demo, accept Create New Rule Group, which is checked by default. For more information about rule groups, see Relying party, Client, Identity Provider, ACS.

- In Token signing, select whether to sign SAML tokens using the default service namespace certificate, or using a custom certificate specific to this application. For this demo, accept the default value of Use service namespace certificate (standard). For more information about token signing, see Tokens SAML, SWT and all about tokens.

Click Save.

On the Relying Party Applications page, click Return to Access Control Service to go back to the ACS v2.0 Management Portal main page.
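The Token lifetime setting in Step 5 defines a simple expiry window: a token issued at time T stays valid until T plus the configured number of seconds (600 in this demo). The Python sketch below illustrates only that arithmetic. It is not how ACS v2.0 or Windows Identity Foundation implement token validation, real validators typically also allow some clock skew (not modeled here), and the function name `is_token_valid` is hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Demo value from the Token lifetime (secs) field in Step 5.
TOKEN_LIFETIME_SECS = 600


def is_token_valid(issued_at: datetime, now: datetime,
                   lifetime_secs: int = TOKEN_LIFETIME_SECS) -> bool:
    """Hypothetical check: valid from the issue time up to (not including) expiry."""
    expires_at = issued_at + timedelta(seconds=lifetime_secs)
    return issued_at <= now < expires_at


if __name__ == "__main__":
    issued = datetime(2011, 1, 15, 12, 0, 0, tzinfo=timezone.utc)
    print(is_token_valid(issued, issued + timedelta(minutes=9)))   # True
    print(is_token_valid(issued, issued + timedelta(minutes=11)))  # False
```

With the default of 600 seconds, a user who signs in and then idles for more than ten minutes would, in this model, need a fresh token.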

Step 6 - Create Rules

This section describes how to define rules that drive how claims are passed from identity providers to your relying party application. For more information about rules and rule groups, see Rules and rule groups.

How to create rules

On the ACS v2.0 Management Portal main page, click Rule Groups.

On the Rule Groups page, click Default Rule Group for TestApp (the name reflects the TestApp name you gave your relying party application).

On the Edit Rule Group page, click Generate Rules.

On the Generate Rules: Default Rule Group for TestApp page, accept the identity providers selected by default (in this demo, Google and Windows Live ID), and then click Generate.

On the Edit Rule Group page, click Save.

On the Rule Groups page, click Return to Access Control Service to return to the main page of the ACS v2.0 Management Portal.
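Conceptually, Generate Rules creates pass-through rules: for each claim type that a selected identity provider supplies, a rule copies the incoming claim into the token that ACS v2.0 issues to TestApp. The Python sketch below models that idea with plain data classes. The `Claim` and `PassThroughRule` types, the short claim-type labels, and the sample values are all hypothetical; they do not reflect the ACS v2.0 management API or its actual rule schema.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Claim:
    issuer: str      # who asserted the claim, e.g. "Google" or "ACS"
    claim_type: str  # short hypothetical label such as "name", not a real claim-type URI
    value: str


@dataclass(frozen=True)
class PassThroughRule:
    """Hypothetical rule: copy claims of one type from one identity provider."""
    issuer: str
    claim_type: str

    def matches(self, claim: Claim) -> bool:
        return claim.issuer == self.issuer and claim.claim_type == self.claim_type


def apply_rule_group(rules: List[PassThroughRule], incoming: List[Claim],
                     output_issuer: str = "ACS") -> List[Claim]:
    """Return the claims the relying party would receive in this model.

    Matching claims keep their type and value but are re-issued by ACS;
    claims that no rule matches are dropped.
    """
    return [Claim(output_issuer, c.claim_type, c.value)
            for c in incoming
            if any(rule.matches(c) for rule in rules)]


if __name__ == "__main__":
    rules = [PassThroughRule("Google", "name"), PassThroughRule("Google", "emailaddress")]
    incoming = [Claim("Google", "name", "Test User"),
                Claim("Google", "emailaddress", "test@example.com"),
                Claim("Google", "locale", "en-US")]
    for claim in apply_rule_group(rules, incoming):
        print(claim)
```

Modeling rules as data rather than code mirrors the portal's approach of configuring claim handling instead of writing it into the application.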

Step 7 - View Application Integration Section

You can find all the information and code necessary to modify your relying party application to work with ACS v2.0 on the Application Integration page.

How to view the Application Integration page

On the ACS v2.0 Management portal main page, click Application Integration.

The ACS URIs that are displayed on the Application Integration page are unique to your service namespace.

For this demo, keep this page open so that you can perform the later steps quickly.

Step 8 - Create an ASP.NET Relying Party Application

This section describes how to create the ASP.NET Relying Party application that you will later integrate with ACS v2.0.

How to create an ASP.NET Relying Party application

To run Visual Studio 2010, click Start, click Run, type devenv.exe and press Enter.

In Visual Studio, click File, and then click New Project.

In the New Project window, select either the Visual Basic or the Visual C# templates, and then select ASP.NET MVC 2 Web Application.

In Name, type TestApp, and then click OK.

In Create Unit Test Project, select No, do not create a unit test project and then click OK.

In Solution Explorer, right-click TestApp and then select Properties.

In the TestApp properties window, select the Web tab, and under Use Visual Studio Development Server, click Specific port, and then change the value to 7777.

To run and debug the application you just created, press F5. If there are no errors, your browser displays the default MVC application.

Keep Visual Studio 2010 open in order to complete the next step described in the section below.

Step 9 - Configure trust between the ASP.NET Relying Party Application and ACS v2.0

This section describes how to integrate ACS v2.0 with the ASP.NET Relying Party application that you created in the previous step.

How to configure trust between the ASP.NET Relying Party Application and ACS v2.0

In Visual Studio 2010, in Solution Explorer for TestApp, right-click TestApp and select Add STS Reference.

In the Federation Utility wizard, do the following:

- On the Welcome to the Federation Utility Wizard page, in Application URI, enter the application URI and then click Next. In this demo, the application URI is http://localhost:7777/.

Note

The trailing slash is important because it matches the value you entered in the ACS v2.0 Management Portal for your relying party application. For more information, see Step 5 - Setup the Relying Party Application.

- A warning appears: “ID 1007: The Application is not hosted on a secure https connection. Do you wish to continue?” For this demo, click Yes.

Note

In a production environment, this warning about using SSL is valid and should not be dismissed.

- On the Security Token Service page, select Use Existing STS, enter the WS-Federation Metadata URL published by ACS v2.0, and then click Next.

Note

You can find the value of the WS-Federation Metadata URL on the Application Integration page of the ACS v2.0 Management Portal. For more information, see Step 7 - View Application Integration Section.

- On the STS signing certificate chain validation error page, click Next.

- On the Security token encryption page, click Next.

- On the Offered claims page, click Next.

- On the Summary page, click Finish.
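The note about the trailing slash matters because, in this walkthrough, the realm entered in Step 5 and the application URI entered in the wizard are expected to be the same string. The Python sketch below assumes exact, case-sensitive string comparison purely for illustration; it is not the comparison logic used by ACS v2.0 or Windows Identity Foundation, and `check_application_uri` is a hypothetical helper.

```python
from typing import Optional

# Realm typed in Step 5 of this walkthrough.
ACS_REALM = "http://localhost:7777/"


def check_application_uri(realm: str, application_uri: str) -> Optional[str]:
    """Return None if the two values match exactly, else a short diagnostic.

    Assumes exact string comparison (illustrative only).
    """
    if realm == application_uri:
        return None
    if realm.rstrip("/") == application_uri.rstrip("/"):
        return "URIs differ only by a trailing slash"
    return "URIs differ"


if __name__ == "__main__":
    print(check_application_uri(ACS_REALM, "http://localhost:7777/"))  # None
    print(check_application_uri(ACS_REALM, "http://localhost:7777"))   # URIs differ only by a trailing slash
```

Under exact matching, http://localhost:7777 and http://localhost:7777/ are different values, which is the mistake the note warns against.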

When the Federation Utility wizard finishes successfully, it adds a reference to the Microsoft.IdentityModel.dll assembly and writes settings to your Web.config file that configure Windows Identity Foundation in your ASP.NET MVC 2 Web Application (TestApp).
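The manual Web.config change that follows (adding an httpRuntime element with requestValidationMode="2.0" under the main system.web element) can also be scripted. The Python sketch below uses the standard library's xml.etree.ElementTree on a trimmed, hypothetical Web.config string; it is not part of the original walkthrough. Because ElementTree discards XML comments and does not preserve the original formatting, treat it as a sketch rather than a safe tool for editing a real project file.

```python
import xml.etree.ElementTree as ET

# Trimmed, hypothetical Web.config. The <location> section is included only to
# show that nested <system.web> elements are left alone.
SAMPLE_WEB_CONFIG = """<configuration>
  <location path="FederationMetadata">
    <system.web>
      <authorization><allow users="*" /></authorization>
    </system.web>
  </location>
  <system.web>
    <authorization><deny users="?" /></authorization>
  </system.web>
</configuration>"""


def set_request_validation_mode(config_xml: str, mode: str = "2.0") -> str:
    """Add or update <httpRuntime requestValidationMode="..."/> under the
    top-level <system.web> element and return the modified XML text."""
    root = ET.fromstring(config_xml)
    # The "main" system.web is a direct child of <configuration>.
    main = next((child for child in root if child.tag == "system.web"), None)
    if main is None:
        raise ValueError("no top-level <system.web> element found")
    runtime = main.find("httpRuntime")
    if runtime is None:
        runtime = ET.Element("httpRuntime")
        main.insert(0, runtime)  # ahead of <authorization>, as in the walkthrough
    runtime.set("requestValidationMode", mode)  # idempotent on repeated runs
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(set_request_validation_mode(SAMPLE_WEB_CONFIG))
```

Updating an existing httpRuntime element instead of always appending one keeps the script safe to run more than once.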

Open Web.config and locate the main system.web element. It might look like the following:

<system.web>
  <authorization>
    <deny users="?" />
  </authorization>

Modify Web.config to switch ASP.NET request validation to 2.0 mode (so that the token ACS v2.0 posts back to the application is not rejected as potentially dangerous input) by adding the following code under the main system.web element:

<!--set this value-->
<httpRuntime requestValidationMode="2.0"/>

After you perform the update, the above code fragment should look like this:

<system.web>
  <!--set this value-->
  <httpRuntime requestValidationMode="2.0"/>
  <authorization>
    <deny users="?" />
  </authorization>

Step 10 – Test the integration between the ASP.NET Relying Party application and ACS v2.0

This section describes how you can test the integration between your relying party application and ACS v2.0.

How to test the integration between the ASP.NET Relying Party application and ACS v2.0

Keeping Visual Studio 2010 open, press F5 to start debugging your ASP.NET relying party application.

If there are no errors, instead of opening the default MVC application, your browser is redirected to a Home Realm Discovery page hosted by ACS v2.0 that asks you to choose an identity provider.

Select Google.

The browser loads the Google login page.

Sign in with test Google credentials, and accept the consent prompt shown on the Google website.

The browser posts back to ACS, and ACS issues a token and posts that token to your MVC site. Once the MVC site loads, notice that the user name is automatically populated with data from Google and ACS.

See Graham Calladine explained Windows Azure Platform Security Essentials: Module 3 Storage Access in a 00:22:35 Channel9 video segment posted on 1/15/2011, which includes a link to Windows Azure Platform Security Essentials: Module 2 – Identity Access Management of 12/26/2010.

<Return to section navigation list>

Windows Azure Virtual Network, Connect, RDP and CDN

Neudesic offered on 1/7/2011 a What's New From PDC: Windows Azure AppFabric and Windows Azure Connect Webcast (missed when published):

[By] David Pallmann, General Manager of Custom Application Development, Neudesic

At PDC 2010, Microsoft announced new capabilities for Windows Azure. In this webcast you'll learn about new AppFabric features, including the distributed cache service and the future AppFabric Composition Service. You'll also learn about the new Windows Azure Connect virtual networking capability, which allows you to link your IT assets in the cloud to your on-premise IT assets.

Requires site registration to view the Webcast.

<Return to section navigation list>

Live Windows Azure Apps, APIs, Tools and Test Harnesses

• Torsten Langner explained how to Run Batch Workload on a Mixed Infrastructure (Windows Azure Worker Nodes & On-Premise HPC Server 2008 R2 Compute Nodes) in a post to the Windows HPC Team Blog of 1/15/2011:

With the introduction of SP1 for HPC Server 2008 R2, it is possible to run workloads on Windows Azure. If you want to off-burst your batch application (that is, extend the infrastructure from classic on-premises servers to cloud-based Azure worker nodes), you need to set specific environment variables within the on-premises server infrastructure.

Here is a sample of how to upload a batch-based application (azuretestbatch.exe) to Azure worker nodes and run a parametric sweep workload across the full cluster (on-premises compute nodes + Azure worker nodes):

Create the Windows Azure Package & Upload It

Use the hpcpack create command to create a *.zip file. Once the package is created, upload it to Windows Azure storage by using the hpcpack upload command. Specify the node template of your Windows Azure worker nodes. Additionally, specify a relative path; in our sample it’s named test. This forces the hpcsync command, which we call later, to unzip the package content to the folder named test:

C:\Users\tlangner>hpcpack create AzureTestPackage.zip C:\temp\folder1
C:\Users\tlangner>hpcpack upload AzureTestPackage.zip /nodetemplate:AzureNodeTemplate /relativepath:test

You can test your upload by calling the hpcpack view command. As you can see in the lines below, the target physical directory on the Windows Azure worker node disks is %CCP_PACKAGE_ROOT%\test. The environment variable %CCP_PACKAGE_ROOT% exists only on Windows Azure worker nodes.

C:\Users\tlangner>hpcpack view azuretestpackage.zip /nodetemplate:AzureNodeTemplate
Connecting to head node: head
Querying for Windows Azure Worker Role node template "AzureNodeTemplate"
Windows Azure Worker Role node template found.
Retrieving Azure account name and key.
Found account: hpc*** and key: ********
Package Name: azuretestpackage.zip
Uploaded: 06.01.2011 10:43:44
Description:
Node Template: AzureNodeTemplate
Target Azure Dir: %CCP_PACKAGE_ROOT%\test

After the hpcpack upload command has finished, it is time to deploy the package content to the Windows Azure worker nodes (i.e., the local disks of the cloud machines). This is done by calling the hpcsync command:

C:\Users\tlangner>hpcsync /nodetemplate:AzureNodeTemplate

Deploy the Package Content Locally

In order to run the batch workload on the “classic” compute nodes, too, it is helpful to deploy the package using the same environment variables that are available in Windows Azure. In this sample, we want the CCP_PACKAGE_ROOT variable to point to the local C:\TEMP folder of every machine in the cluster:

C:\Users\tlangner>cluscfg setenvs "CCP_PACKAGE_ROOT=C:\TEMP"

Then, let’s copy the zip package content to the target directory of every “classic” compute node in the cluster. The content will be deployed to the C:\TEMP\test directory:

C:\Users\tlangner>clusrun /nodegroup:computenodes xcopy \\head\temp\AzureTestBatch\AzureTestBatch\bin\Debug\*.* ^%CCP_PACKAGE_ROOT%^\test

Run the Mixed Workload

After deploying the package content to the whole cluster it’s time to run the batch job:

C:\Users\tlangner>job submit /nodegroup:X /parametric:1-1000 /workdir:^%CCP_PACKAGE_ROOT%^\test azuretestbatch.exe 5000
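The point of setting CCP_PACKAGE_ROOT on the on-premises nodes is that a single working directory, %CCP_PACKAGE_ROOT%\test, resolves correctly on every node type. The Python sketch below models that resolution with a per-node environment dictionary and expands the 1-1000 parametric sweep into individual task command lines. The Azure-side root path is a hypothetical placeholder, and the sweep helper assumes the usual HPC convention that `*` in the command line is replaced by the sweep index; the job above contains no `*`, so in this model all 1,000 tasks run `azuretestbatch.exe 5000`.

```python
import ntpath
import re
from typing import Dict, List

WORKDIR = r"%CCP_PACKAGE_ROOT%\test"  # /workdir value from the job submit command

# Hypothetical per-node environments. On-premises nodes get the value set with
# cluscfg setenvs; the Azure value below is a placeholder, not a real path.
ON_PREMISES_ENV = {"CCP_PACKAGE_ROOT": r"C:\TEMP"}
AZURE_ENV = {"CCP_PACKAGE_ROOT": r"E:\hypothetical\package\root"}


def expand_env(path: str, env: Dict[str, str]) -> str:
    """Expand %NAME% references cmd-style; unknown names are left untouched."""
    return re.sub(r"%([^%]+)%", lambda m: env.get(m.group(1), m.group(0)), path)


def sweep_commands(template: str, start: int, end: int) -> List[str]:
    """Expand a parametric sweep: one command per index, '*' replaced by the index."""
    return [template.replace("*", str(i)) for i in range(start, end + 1)]


if __name__ == "__main__":
    print(ntpath.normpath(expand_env(WORKDIR, ON_PREMISES_ENV)))  # C:\TEMP\test
    print(ntpath.normpath(expand_env(WORKDIR, AZURE_ENV)))
    tasks = sweep_commands("azuretestbatch.exe 5000", 1, 1000)
    print(len(tasks), tasks[0])  # 1000 azuretestbatch.exe 5000
```

Leaving an undefined %NAME% untouched matches how an unset variable is left literal at the cmd prompt, which is also why the job can reference %CCP_PACKAGE_ROOT% from the head node.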

• Erik Oppendijk explained Adding assemblies to the GAC in Windows Azure with Startup Tasks in a 1/14/2011 post to the InfoSupport Blog Community:

Startup tasks are the new way to run a script or file in Windows Azure, and we can run them in an elevated (administrator) context. For this to work, add the assembly that you want to install in the GAC to your project and set Copy Local to True.

Edit the .CSDEF file and add a Startup task with executionContext elevated:

<ServiceDefinition name="MyProject" xmlns="http://schemas.microsoft.com/ServiceHosting/2008/10/ServiceDefinition">
  <WebRole name="MvcWebRole1">
    <!-- other WebRole elements omitted -->
    <Startup>
      <Task commandLine="RegisterGAC.cmd" executionContext="elevated" taskType="simple" />
    </Startup>
  </WebRole>
</ServiceDefinition>

Now add RegisterGAC.cmd and gacutil.exe to your project, and for both files set Build Action to None and Copy to Output Directory to Copy Always!

In RegisterGAC.cmd, enter the following two lines:

gacutil /nologo /i .\Microsoft.IdentityModel.dll
exit /b 0

Remember that the startup task runs from the /BIN folder. You can also set the taskType attribute to one of the following values:

- Simple - needs to complete before the role continues

- Background - runs in parallel with the role (startup)

- Foreground - runs in parallel with the role, but needs to finish before the role can shut down

Also remember that the correct .NET 4.0 gacutil.exe is in this directory: C:\Program Files\Microsoft SDKs\Windows\v7.0A\bin\NETFX 4.0 Tools
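The taskType list above comes down to two questions: must the task finish before the role starts, and must it finish before the role can shut down? The Python sketch below records those answers in a lookup table as a hypothetical model, not code that runs in Windows Azure. It also assumes that a simple task has to exit with code 0 for the role to start, which would explain why RegisterGAC.cmd ends with exit /b 0.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskTypeRules:
    blocks_role_start: bool     # must finish before the role starts
    blocks_role_shutdown: bool  # must finish before the role can shut down


# Semantics as described in the taskType list (illustrative model only).
TASK_TYPES = {
    "simple": TaskTypeRules(blocks_role_start=True, blocks_role_shutdown=False),
    "background": TaskTypeRules(blocks_role_start=False, blocks_role_shutdown=False),
    "foreground": TaskTypeRules(blocks_role_start=False, blocks_role_shutdown=True),
}


def role_can_start(task_type: str, task_finished: bool, exit_code: int = 0) -> bool:
    """Model: a simple task must have finished, and (assumed) with exit code 0."""
    if not TASK_TYPES[task_type].blocks_role_start:
        return True
    return task_finished and exit_code == 0


def role_can_shut_down(task_type: str, task_finished: bool) -> bool:
    """Model: only a still-running foreground task holds up shutdown."""
    return task_finished or not TASK_TYPES[task_type].blocks_role_shutdown


if __name__ == "__main__":
    for name in TASK_TYPES:
        print(name, role_can_start(name, task_finished=False),
              role_can_shut_down(name, task_finished=False))
```

Registering an assembly in the GAC is a natural fit for simple, because the role code that depends on the assembly should not start until registration is done.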

• Bill Zack explained How to Survive with Windows PowerShell in a 1/14/2011 post to the Ignition Showcase blog:

Whether you are a developer or an IT pro working at an ISV, you need to know the basics of PowerShell, Microsoft’s premier command shell and scripting language. If you need to automate Windows Azure deployments, make the most effective use of the Azure PowerShell cmdlets, or customize a VMRole at startup time, then this tool is for you.

This PowerShell Survival Guide will give you those basics. It includes links to Essential Windows PowerShell Resources, Sample PowerShell Scripts and modules, Forums, User Groups, videos and more.

Alex Williams asked (and partially answered) Is Windows Azure Suitable for Startups? in a 1/14/2011 post to the ReadWriteCloud blog:

Last week we wrote about the services that Y Combinator startups use. For hosting, Amazon Web Services and Rackspace were the most popular services.

Windows Azure was noticeably absent. But why? A topic turned up on Quora, citing our post and wondering why the lack of adoption.

The topic has sparked a bit of discussion. The consensus: Azure is better suited for larger enterprise operations.

That does make sense to some degree. We'll just have to see by how much. The people who make up Azure are a smart group. Under Bob Muglia, a real push started to attract more developers. That became apparent at the Professional Developers Conference, which was not a huge event but did have a core group there developing on numerous platforms such as Windows 7, Windows Phone 7 and the Azure marketplaces. Muglia is leaving Microsoft so questions are out there but the structure is in place for his successor.

Further, the tablet market will continue to drive a startup community to Azure. And BizSpark is no wilting effort. The official Microsoft blog states some of the results for the program since the effort began in 2008:

Since then, about 35,000 startups from all over the world have enrolled in the program, which provides early-stage businesses with fast and easy access to software development tools and platforms as well as a network of 2,500 network partners - including venture capital firms, university incubators, consultants and angel investors - interested in helping startups grow.

Microsoft will also focus on synchronizing connected devices this year, which will be a driving feature as devices are optimized for users. That alone will help drive development. Our bet is that synchronization will be a driving factor in the sale of Windows Phone 7 and tablet devices.

Commenters on Quora have a different perspective. They state that Azure is just not the right choice for startups, citing better suitability for large enterprises, the costs and productivity differences between it and platforms such as Heroku, which actually runs on Amazon Web Services.

What Commenters Say About Why Azure is Better for the Fortune 500

Startups are disruptive. Microsoft caters to Fortune 500 companies. Using Azure means startups adopting one vendor as opposed to an open source community with large pools of available software engineers.

The costs are higher compared to platforms such as Heroku. It can cost at least $40 per month for each site a user sets up. To set up an app on Heroku is free.

It can take longer. One developer commented that for typical Web apps they are ten times more productive with Ruby on Rails than with the Microsoft stack. Rails was built for the Web. Microsoft originally built its stack for the desktop[*].

Azure is a platform, which is the same reason that Google App Engine is not represented either. Amazon and Rackspace are infrastructure providers that let you build your own stack, which gives far more flexibility than a platform, which at some point will impose limits on the developer.

On a final note, there are some praises for Windows Azure. Martin Wawrusch critiqued Azure but ended by saying that it is a great platform and he wholeheartedly recommends it. He even said that the SQL Azure engine is years ahead of the competition, serving as a good fit for backend processing for a startup. He does say, however, that he would not use it for any front-end work in a startup environment.

There are still open questions about Azure. It's not ruled out as a platform. It has potential for considerable success. The question is its comparison to other offerings on Amazon or Rackspace. For now, those offerings are more popular and should continue to be so for at least the year ahead.

* Here’s what I had to say in answer to the aggregated comments as of 1/15/2011:

The Microsoft Partner Network offers 750 free hours/month of an Extra Small Compute instance, free blob, queue and table storage with a moderate amount of free bandwidth, a free SQL Azure Web database instance (1 GB max size), and other freebies in its Cloud Essentials Pack. Details are at http://oakleafblog.blogspot.com/.... Pricing for Azure commercial usage levels is very similar to that of Amazon Web Services.

Windows Azure can emulate continuous deployment with its Azure Fabric Emulator, which runs on the developer's PC.

Productivity for ASP.NET or ASP.NET MVC web development is very close to that for on-premises or hosted IIS. Windows Azure is a platform as a service (PaaS) offering, which handles availability issues with automatic replication, supports automating horizontal scaling up and down, and eliminates the need for Windows developers to upgrade or patch the operating system.

Microsoft's Case Studies group has many examples of startups using the Windows Azure platform at http://www.microsoft.com/Windows....
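The 750-hour allowance is easiest to judge against the length of a month: one instance running around the clock uses 24 hours per day, so even a 31-day month needs only 744 hours. The short Python calculation below checks this for each month of 2011; it assumes a single Extra Small instance running continuously and ignores storage, bandwidth and any other charges.

```python
import calendar

FREE_HOURS_PER_MONTH = 750  # Cloud Essentials Pack allowance quoted above
YEAR = 2011


def hours_in_month(year: int, month: int) -> int:
    """Hours used by one instance running around the clock for a whole month."""
    days = calendar.monthrange(year, month)[1]
    return days * 24


if __name__ == "__main__":
    for month in range(1, 13):
        used = hours_in_month(YEAR, month)
        spare = FREE_HOURS_PER_MONTH - used
        print(f"{calendar.month_abbr[month]}: {used} h used, {spare} h spare")
```

Under these assumptions the allowance covers one always-on instance every month, with 6 hours to spare in 31-day months.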

For more comparisons of Windows Azure with its competitors, see David Pallmann answered the What Platform to Choose? question in Picking a Lane in Cloud Computing on 1/14/2011 in the Windows Azure Infrastructure section below.

<Return to section navigation list>

Visual Studio LightSwitch

<Return to section navigation list>

Windows Azure Infrastructure

• Brian Loesgen posted Debunking a couple of Azure Myths on 1/15/2011:

After about three months in the field talking with ISV partners, enterprise developers and developers in general, I’ve noticed a couple of Azure misconceptions that seem to be recurring, so I thought I’d post something here for people to find to try to correct that.

Myth #1: “Azure is from Microsoft so it’s something that only .NET developers can use”

Actually, anything that runs on Windows will run on Azure. Microsoft’s goal is to provide the best cloud platform, PERIOD, regardless of your choice of languages or development tools. It was announced at PDC that the JRE would be present in Azure worker/web roles and that Java would be a first-class citizen. Sure, the Visual Studio integration is great, but we also just released Eclipse integration for the latest V1.3 SDK.

In fact, my very first project when I started my Azure Architect Evangelist role was running Tomcat and Google Web Toolkit, migrating Postgres to SQL Azure. That’s pretty far from the typical .NET stack! We support Java, PHP, Python, Ruby, Tomcat, Zend and more! So, if you use any of those, you can take advantage of our nearly $3 billion (so far) investment in 6 Azure datacenters worldwide, as well as other capabilities like our on-prem/off-prem bridging and federated security model (supporting OAuth 2, Google ID, Facebook, LiveID and more).

Microsoft is making significant investments in interoperability. If you want to see more about Azure interoperability (as well as other interoperability initiatives), I suggest you check out the Interoperability Bridges site.

Myth #2: “Azure is not done because they keep releasing new stuff”

Frankly, I was surprised the first time I heard this. Then I heard it a few more times. At PDC 2010 we released/announced probably more than a dozen new Azure capabilities. However, the reality is that we (Microsoft) are investing BILLIONS into our cloud initiatives, which includes Azure. The strong stream of announcements and releases is the realization of our vision, and the fruits of our investments.

If you look back, the only REALLY fundamental shift that happened was SQL Azure (where the team responded incredibly quickly to customer feedback). All other announcements and releases have been aligned with the vision and path that we have been on for many years now. Azure is absolutely done and in use by thousands. Will there be more pieces to come in the future? Absolutely! We embarked on the Platform-as-a-Service path 4 years ago, we have not and will not deviate. On a related theme, we are also being very open about our plans, and actively soliciting customer feedback. Got an idea for something you’d like added, or would you like to provide feedback on proposed features? Go to http://www.mygreatwindowsazureidea.com.

• Frank Gartland asked Your Weekend Look Cloudy? Free Windows Azure Training Just Released! and reminded developers about Windows Azure training in a 1/14/2011 post to Microsoft Learning’s Born to Learn Blogs:

Just before the Holidays, Microsoft hosted another new and exclusive Jump Start virtual training event, this time covering the Windows Azure Platform. What a success! “Building Cloud Applications using the Windows Azure Platform” was tailored for application architects and developers interested in leveraging the cloud.

While many of the attendees were already building a pilot project or planning to migrate an application, around 35% were searching for real-world answers as they consider whether or not the Windows Azure Platform fits their needs. They were in for a treat since all 12 hours of this training was led by two of the most respected authorities on Microsoft development technologies, David S. Platt and Manu Cohen-Yashar. Learn strategies for your cloud application lifecycle, know your options for storage in the cloud, understand the realities of Security using Azure and find out all your team should consider to truly design for scale and elasticity.

The feedback has been great, but due to the Holidays many of you weren’t able to join us back in December. So whether you were with us and want to refresh and reinforce what you learned, or you want to check it out for the first time, all 12 hours of the HD-quality videos were just released and are ready to go! Just browse this list and get started!

Session 01: Windows Azure Overview

Session 02: Introduction to Compute

Session 03: Windows Azure Lifecycle, Part 1

Session 04: Windows Azure Lifecycle, Part 2

Session 05: Windows Azure Storage, Part 1

Session 06: Windows Azure Storage, Part 2

Session 07: Introduction to SQL Azure

Session 08: Windows Azure Diagnostics

Session 09: Windows Azure Security, Part 1

Session 10: Windows Azure Security, Part 2

Session 11: Scalability, Caching & Elasticity, Part 1

Session 12: Scalability, Caching & Elasticity, Part 2, and Q&A

By the way, feel free to check out the course materials and code samples while you’re watching.

Get Access to the Windows Azure Platform for the Labs and learn about training & certification options by visiting the Windows Azure Online Portal.

• Pat Romanski described a “Forecast for Cloud Computing Across Key U.S. Cities Calls for New Lines Of Business, More IT Services, and Job Growth” in her Microsoft Survey Highlights the US Top "Cloud-Friendly" Cities article of 1/14/2011:

Microsoft on Wednesday named some of the country’s top “cloud-friendly” U.S. cities. The rankings are based on the results of an extensive survey in which 2,000 IT decision makers nationwide discussed how they are adopting and using cloud computing.

The forecast for cloud computing across key U.S. cities calls for new lines of business, more need for IT services, and potential job growth, according to a new survey released on Wednesday by Microsoft.

Microsoft released the results of the study this week after interviewing more than 2,000 IT decision-makers in 10 U.S. cities.

The cities are ranked based on how local businesses are adopting and using cloud computing solutions – including hiring vendors to migrate to the cloud, seeking IT professionals with cloud computing experience, and creating new lines of business based on cloud platforms. The survey indicates that cloud computing is not only a growing sector of the IT services community, but helping to create new businesses and jobs locally.

Atlanta, Ga. Atlanta ranks in the middle of the pack of “cloud friendly” cities. The majority (62 percent) of IT decision makers at large companies in Atlanta currently employ, or plan to implement, cloud-based e-mail and communications tools, like IM and voice, compared with 36 percent of those at small businesses.

Boston, Mass. Ranked as the most “cloud-friendly” city for large companies, Boston boasts a high percentage of companies that view cloud services as an opportunity to be more innovative and strategic. Nearly half (46 percent) of large businesses have one or more cloud projects planned and underway, and more than half are already using the cloud for e-mail, communication and collaboration.

Chicago, Ill. The Windy City ranks 9 out of 10 in the survey of “cloud-friendly” cities. The tide may be turning because half of IT decision makers at large companies there say cloud computing is an opportunity to be more strategic. Small businesses are also beginning to see the benefits, with 39 percent stating they are encouraged to deploy cloud services because they are cost-effective.

Dallas, Texas. Dallas ranks third among the most cloud-ready cities for large companies. Of IT decision makers, 46 percent believe the cloud is an engine of innovation, where only 37 percent of those surveyed nationally believe so. Of the local small companies, 46 percent say they are encouraged to buy cloud services for reliable security, almost double the response of enterprise companies (29 percent).

Detroit, Mich. The Motor City ranks near the bottom of our rankings for “cloud-friendly” cities, but nearly half (47 percent) of IT decision makers in Detroit see the cloud as an avenue for creating business advancements, saying the cloud is an engine of innovation. The majority (51 percent) of respondents agree that investing in IT during the next five years will increase profitability.

Los Angeles/Orange County, Calif. Los Angeles and Orange County rank fourth among the most cloud-ready cities for small companies. Of the IT decision makers surveyed, nearly half (46 percent) are investing in cloud services. Small businesses agree that the use of cloud services helps to ensure they always have the latest upgrades available to them, and that focusing more on strategic initiatives will reduce IT workload.

New York, N.Y. A city of great contrast in cloud adoption between small and large businesses, New York businesses boast the highest number of enterprises nationwide using cloud-based applications. While nearly half (46 percent) of large companies have cloud projects actively underway, only a small percentage (14 percent) of New York’s small businesses say the same.

Philadelphia, Pa. Philadelphia ranks among the top three “cloud-friendly” cities for small businesses. A majority (87 percent) of IT decision makers at large companies have at least some knowledge of the cloud compared with only half (50 percent) of small businesses. Regardless of company size, a high percentage cites low total cost of ownership as a reason to transition to the cloud.

San Francisco, Calif. San Francisco ranks among the top “cloud-friendly” cities. More than half (51 percent) of IT decision makers at large companies in San Francisco know a fair amount about cloud computing, with 49 percent having at least one cloud project planned or underway. Also, 40 percent of IT decision makers at local small companies believe cloud computing is an engine of innovation.

Washington, D.C. Washington, D.C., ranks as the most “cloud-friendly” city for small businesses. Of those businesses that have adopted cloud services, enabling a remote workforce and lower total cost of ownership are cited as the top reasons for the move. In fact, almost half (46 percent) of IT decision makers at local businesses report cost savings of at least $1,000 through their use of cloud services.

“I think the study is incredibly interesting, and it shows business and IT growth is a key output of the cloud,” said Scott Woodgate, a director in Microsoft’s corporate account segment, which serves mid-market businesses. “For IT professionals, it’s clear that becoming skilled in the cloud is an important call to action. For businesses, the cloud really empowers growth. Because of the nature of the cloud, you can take more risks and innovate at a much lower cost.”

IT Decision Makers: Cloud Is Creating New Business Opportunities

IT decision makers in financial services, manufacturing, professional services, and retail and hospitality see cloud computing as an opportunity to grow their business, drive innovation and strategy, and efficiently collaborate across geographies, according to Microsoft’s new cloud computing survey.

Among IT decision makers surveyed:

- 24 percent used the cloud to help start a new line of business.

- 68 percent in financial services said they have been asked to find ways for their companies to save money on the IT side.

- 34 percent in professional services, and 33 percent in retail and hospitality, believe cloud computing is an opportunity for the IT department to be more strategic.

- 71 percent in manufacturing said their IT departments must address the business requirement to work anywhere at any time in the next year.

- 33 percent in professional services said their IT departments must find new ways to enable and support their company’s growing workforce.

The survey, funded by Microsoft, was conducted online and targeted IT decision makers from various industries in 10 U.S. cities. Microsoft ranked the cities according to their “cloud-friendliness” based on a number of results, including opinions and attitudes about cloud computing.

The study shows that one of two things is happening in business – either companies are turning to outside experts to understand and implement the cloud, or they’re looking within their existing IT departments for help, Woodgate said.

From Microsoft’s perspective, Woodgate said, cloud computing has two other advantages: it lets small businesses act like big businesses, and it lets big businesses move quickly and cheaply like small businesses by scaling up and down as their IT needs shift.

“It works for small-business adopters because they have limited IT staff but have desires similar to those of large businesses in terms of productivity, running the business and satisfying customers,” he said. “For big businesses, there is an opportunity to innovate at a lower cost with multiple options rather than having to sink all of their chips into a single, big capital cost option.”

Some businesses still believe that cloud computing will mean job losses, based on the efficiencies gained by moving some IT services to the cloud. This belief, coupled with a shaky economy that left little room for new IT projects, made some businesses slow to adopt cloud computing. However, the Microsoft survey shows that the tide is turning.

The survey also showed that some businesses still hold misconceptions about cloud computing, such as that it’s just a trend or a fad.

“People often compare cloud computing to outsourcing. I don’t think it compares well,” Woodgate said. “The skill set of IT workers is changing, and there is plenty of opportunity for IT directly in the context of the cloud. Also, the value proposition of IT is changing. They currently spend a lot of time keeping the lights on. I think with the cloud, they’ll be able to spend less time on that and more time on moving the overall business forward.”

“For example, almost two-thirds of enterprise IT decision makers have hired or are planning on hiring vendors to help understand and deploy the cloud,” Woodgate said. “And 21 percent of IT decision makers are looking to hire new staff with cloud experience.”

RDA Corporation in Baltimore is one of the many cloud consultants investing heavily in the technology – and it’s paying off.

CEO Tom Cole said his company, which does IT consulting, planning, strategy and integration, spent all of last year introducing the concept of cloud computing to its customers. In just one year there has been a surge in interest in the cloud, he said. Last year his company decided to invest in the cloud and started talking to customers about it in a major way, and now companies are approaching RDA on their own asking for help moving to the cloud.

“Adoption is a two-pronged effort,” Cole said. “No. 1, understand what the technology is and its viability in the marketplace and, once you determine it is in fact viable, ensure that you’ve invested in the people and tools to learn the technology and be able to apply it. Secondly, you’ve got to invest in a field sales team and customers to be able to understand who the early adopters are, how they can take advantage of the cloud, and what the market will bear.”

Cole said his company is heavily invested in cloud computing, adding that it’s not hard to pitch Windows Azure, Microsoft’s cloud computing platform, as a solution. He said it’s quick and affordable to deploy; it’s easy to build, maintain, manage, and add devices; and it’s easy to build customized solutions that scale up and down when the need shifts.

Cole said any talk of cloud computing contributing to job loss is “totally fictitious.” “It does not drive people out of work. If anything, it creates business opportunities to add different value and lowers the cost of optimizing your infrastructure,” Cole said. “The planning, deployment, migration and support opportunities in the area of new venture startup are a tremendous way – at a low risk – to start something new, which means new jobs.”

Whenever there is a seismic shift in an industry, as cloud computing is for the IT industry, the changes may mean job shifting, Woodgate said. But in the long term, it will mean more jobs and higher-value IT jobs, such as creating new services for end users.

“With these changes, it takes time to get people’s skills up, it takes time to understand the cloud and evaluate how it can help you, execute your first project and then build on that success,” Woodgate said. “Certainly the infrastructure for cloud computing exists today, so it’s great to see this level of interest. Microsoft began our journey to the cloud more than 10 years ago and we have some very strong offerings across productivity, management and software as a service.”

David Pallman gave a detailed answer to the What Platform to Choose? question in Picking a Lane in Cloud Computing on 1/14/2011:

Because cloud computing is big and varied, there are lots of ways to apply it—which requires you to ultimately make some important decisions. In this post we’ll explore what some of those decisions are, first with cloud computing generally, then specifically with the Windows Azure platform. This is an excerpt from my upcoming book, The Azure Handbook.

Choice is good, right? Yes and no: it’s good to have options but it also raises the specter that a wrong choice will take you to some place you don’t want to go. You might even be unaware that you have a choice in some area or that a decision needs to be made. While there’s some value in experimenting, you eventually need to make some rather binding decisions. Failure to get those decisions right early on could cost you wasted time, effort, and expense.

The first choice to make is the one that’s most talked about (talked about to death, perhaps): whether you’re going to run Software-as-a-Service, Platform-as-a-Service, or Infrastructure-as-a-Service. What’s at issue here is the level at which you use cloud computing.

SaaS: Someone else’s software in the cloud. If you’re simply going to use someone else’s cloud-hosted application (such as Salesforce.com or Microsoft Exchange in the cloud), decision made: you’ll be using SaaS. If that’s you, read no further. The rest of this article is for those who want to run their own software applications in the cloud. (To be sure, your SaaS provider is using IaaS or PaaS itself, but that’s its worry, not yours.)

PaaS: Your Cloud Applications. This means running applications in the cloud that conform to your cloud provider’s platform model. In other words, they do things the cloud’s way (which is often different from in the enterprise). There are many benefits to running at this level, among them superb scale, availability, elasticity, and management. There’s a spectrum here that ranges from minimal conformance all the way to applications designed from the ground up to strongly leverage the cloud.

IaaS: Traditional Applications in the Cloud. This means running traditional applications in the cloud. Not all applications can run in the cloud, and you’re not leveraging the cloud very strongly by running at this level. If your application and data aren’t protected with redundancy, there is a real danger that you could lose availability or data (in PaaS, the platform has these protections built into its services). IaaS appeals to some people because it’s more similar to traditional hosting and thus somewhat more familiar, or because they prefer to take control themselves.

Not sure which way to go? For running your own applications in the cloud, PaaS is the best choice for nearly everybody.

Public, Private, or Hybrid Cloud?

Public Cloud: Full Cloud Computing. Cloud computing in its fullest sense is provided by large technology providers such as Amazon, Google, and Microsoft who have both the infrastructure and the experience to support large communities well with dynamic scale and high reliability. We call this “public cloud”. When you use public cloud, you get the most benefits: no up-front costs, consumption-based pricing, capacity on tap, high availability, elasticity, and no requirement to make commitments.

Private Cloud: Under Your Control. And then there’s private cloud, not quite as firmly defined yet but very much on everyone's mind. Ever since cloud computing became a category there’s been ongoing demand in the market for “private clouds”. There’s more than one interpretation of just what this means or how it can be delivered; the general idea is to benefit from the cloud computing way of doing things but with a strong degree of privacy and direct control as compared to public clouds, where you are in a shared environment. Here are some of the ways private cloud is interpreted:

- Hardware private cloud: a local cloud computing hardware appliance for your data center.

- Software private cloud: a software emulation you run locally.

- Dedicated private cloud: leasing a dedicated area of a cloud computing data center not shared with other tenants.

- Network private cloud: ability to exercise network control over assets in the cloud such as joining them to your domain and making them subject to your policies. This last idea more properly belongs in our next category, Hybrid Cloud.

Hybrid Cloud. If you’re making use of public cloud, it often makes sense to connect your cloud and on-premises assets. Using VPN technology, some cloud platforms allow you to link your virtual machines running in the cloud with local on-premises machines. You might do this, for example, if you had a cloud-hosted web site that needed to talk to an on-premises database server. If you want to be on more intimate terms, your cloud assets can become members of your domain.

In the Windows Azure platform, all three forms of cloud are available: public cloud, hardware private cloud, and hybrid cloud.

Not sure what you need? Public cloud is the best starting point for most organizations because it doesn’t commit you. Whether and when you look into private cloud or hybrid cloud is best decided once you’ve tested the public cloud waters.

Which Cloud Computing Platform to Use?

If you’ve decided to go with PaaS or IaaS, you need to choose a vendor and platform. The big players are Amazon, Microsoft, and Google.

Amazon Web Services offers an extensive set of cloud services. I think of them as mostly focused on IaaS but they also provide a growing set of PaaS services.

Microsoft’s Windows Azure Platform also offers an extensive set of cloud services. Windows Azure is very focused on PaaS but also offers some IaaS capability. One distinguishing feature of Windows Azure is the symmetry Microsoft offers between its enterprise technology stack and its cloud services.

Google provides some interesting cloud services, such as App Engine, that scale very automatically, but they limit you to a smaller set of languages and application scenarios.

Here’s a comparison I recently put together on the services offered by these vendors. Keep in mind that the platforms advance rapidly and I’m only an authority on Windows Azure, so you should definitely research this decision carefully and make sure you’re using up-to-date information.